Abstract

Body-part-centered response fields are pervasive in single neurons, functional magnetic resonance imaging, electroencephalography and behavior, but there is no unifying formal explanation of their origins and role. In the present study, we used reinforcement learning and artificial neural networks to demonstrate that body-part-centered fields do not simply reflect stimulus configuration, but rather action value: they naturally arise from the basic assumption that agents often experience positive or negative reward after contacting environmental objects. This perspective successfully reproduces experimental findings that are foundational in the peripersonal space literature. It also suggests that peripersonal fields provide building blocks that create a modular model of the world near the agent: an egocentric value map. This concept is strongly supported by the emergent modularity that we observed in our artificial networks. The short-term, close-range, egocentric map is analogous to the long-term, long-range, allocentric hippocampal map. This perspective fits empirical data from multiple experiments, provides testable predictions and accommodates existing explanations of peripersonal fields.

Data availability

All empirical data used were extracted from publicly available figures (refs. 11, 14, 25–27, 46–48). Generated data can be recreated using the code at https://github.com/rorybufacchi/EgocentricValueMaps. Source data are provided with this paper.

Code availability

All analyses were performed in MATLAB (2020a and 2022b). All code is available at https://github.com/rorybufacchi/EgocentricValueMaps.

References

1. Moser, E. I., Kropff, E. & Moser, M.-B. Place cells, grid cells, and the brain’s spatial representation system. Annu. Rev. Neurosci. 31, 69–89 (2008).

2. Banino, A. et al. Vector-based navigation using grid-like representations in artificial agents. Nature 557, 429–433 (2018).

3. Stachenfeld, K. L., Botvinick, M. M. & Gershman, S. J. The hippocampus as a predictive map. Nat. Neurosci. 20, 1643–1653 (2017).

4. Russek, E. M., Momennejad, I., Botvinick, M. M., Gershman, S. J. & Daw, N. D. Predictive representations can link model-based reinforcement learning to model-free mechanisms. PLoS Comput. Biol. 13, e1005768 (2017).

5. Behrens, T. E. J. et al. What is a cognitive map? Organizing knowledge for flexible behavior. Neuron 100, 490–509 (2018).

6. Bufacchi, R. J. & Iannetti, G. D. An action field theory of peripersonal space. Trends Cogn. Sci. 22, 1076–1090 (2018).

7. Graziano, M. S. & Cooke, D. F. Parieto-frontal interactions, personal space, and defensive behavior. Neuropsychologia 44, 845–859 (2006).

8. Noel, J.-P., Blanke, O. & Serino, A. From multisensory integration in peripersonal space to bodily self-consciousness: from statistical regularities to statistical inference. Ann. N. Y. Acad. Sci. 1426, 146–165 (2018).

9. Cléry, J., Guipponi, O., Wardak, C. & Ben Hamed, S. Neuronal bases of peripersonal and extrapersonal spaces, their plasticity and their dynamics: knowns and unknowns. Neuropsychologia 70, 313–326 (2015).

10. Makin, T. R., Holmes, N. P. & Zohary, E. Is that near my hand? Multisensory representation of peripersonal space in human intraparietal sulcus. J. Neurosci. 27, 731–740 (2007).

11. Wamain, Y., Gabrielli, F. & Coello, Y. EEG μ rhythm in virtual reality reveals that motor coding of visual objects in peripersonal space is task dependent. Cortex 74, 20–30 (2016).

12. Sambo, C. F. & Forster, B. An ERP investigation on visuotactile interactions in peripersonal and extrapersonal space: evidence for the spatial rule. J. Cogn. Neurosci. 21, 1550–1559 (2009).

13. de Haan, A. M., Smit, M., Van der Stigchel, S. & Dijkerman, H. C. Approaching threat modulates visuotactile interactions in peripersonal space. Exp. Brain Res. 234, 1875–1884 (2016).

14. Serino, A. et al. Body part-centered and full body-centered peripersonal space representations. Sci. Rep. 5, 18603 (2015).

15. Hunley, S. B. & Lourenco, S. F. What is peripersonal space? An examination of unresolved empirical issues and emerging findings. Cogn. Sci. 9, e1472 (2018).

16. Cléry, J. et al. The prediction of impact of a looming stimulus onto the body is subserved by multisensory integration mechanisms. J. Neurosci. 37, 10656–10670 (2017).

17. Bertoni, T., Magosso, E. & Serino, A. From statistical regularities in multisensory inputs to peripersonal space representation and body ownership: insights from a neural network model. Eur. J. Neurosci. 53, 611–636 (2021).

18. Roncone, A., Hoffmann, M., Pattacini, U., Fadiga, L. & Metta, G. Peripersonal space and margin of safety around the body: learning visuo-tactile associations in a humanoid robot with artificial skin. PLoS ONE 11, e0163713 (2016).

19. Magosso, E., Zavaglia, M., Serino, A., di Pellegrino, G. & Ursino, M. Visuotactile representation of peripersonal space: a neural network study. Neural Comput. 22, 190–243 (2010).

20. Straka, Z., Noel, J.-P. & Hoffmann, M. A normative model of peripersonal space encoding as performing impact prediction. PLoS Comput. Biol. 18, e1010464 (2022).

21. Bufacchi, R. J., Liang, M., Griffin, L. D. & Iannetti, G. D. A geometric model of defensive peripersonal space. J. Neurophysiol. 115, 218–225 (2016).

22. Pezzulo, G. & Cisek, P. Navigating the affordance landscape: feedback control as a process model of behavior and cognition. Trends Cogn. Sci. 20, 414–424 (2016).

23. Rizzolatti, G., Fadiga, L., Fogassi, L. & Gallese, V. The space around us. Science 277, 190–191 (1997).

24. Fogassi, L. et al. Coding of peripersonal space in inferior premotor cortex (area F4). J. Neurophysiol. 76, 141–157 (1996).

25. Huijsmans, M., Haan de, M. A., Müller, B. C. N., Dijkerman, H. C. & Schie van, H. T. Knowledge of collision modulates defensive multisensory responses to looming insects in Arachnophobes. J. Exp. Psychol. Hum. Percept. Perform. https://doi.org/10.1037/xhp0000974.supp (2022).

26. Graziano, M. S., Hu, X. T. & Gross, C. G. Visuospatial properties of ventral premotor cortex. J. Neurophysiol. 77, 2268–2292 (1997).

27. Holmes, N. P. Does tool use extend peripersonal space? A review and re-analysis. Exp. Brain Res. 218, 273–282 (2012).

28. Iriki, A., Tanaka, M. & Iwamura, Y. Coding of modified body schema during tool use by macaque postcentral neurones. Neuroreport 7, 2325–2330 (1996).

29. Graziano, M. The Spaces between Us: A Story of Neuroscience, Evolution, and Human Nature (Oxford Univ. Press, 2017).

30. Mountcastle, V. B., Lynch, J. C., Georgopoulos, A., Sakata, H. & Acuna, C. Posterior parietal association cortex of the monkey: command functions for operations within extrapersonal space. J. Neurophysiol. 38, 871–908 (1975).

31. Barreto, A. et al. Transfer in deep reinforcement learning using successor features and generalised policy improvement. In 35th International Conference on Machine Learning, Vol. 2, 844–853 (ICML, 2018).

32. Dolan, R. J. & Dayan, P. Goals and habits in the brain. Neuron 80, 312–325 (2013).

33. Cisek, P. & Kalaska, J. F. Neural mechanisms for interacting with a world full of action choices. Annu. Rev. Neurosci. 33, 269–298 (2010).

34. Gigliotti, M. F., Soares Coelho, P., Coutinho, J. & Coello, Y. Peripersonal space in social context is modulated by action reward, but differently in males and females. Psychol. Res. 85, 181–194 (2021).

35. Bertoni, T. et al. The self and the Bayesian brain: testing probabilistic models of body ownership through a self-localization task. Cortex 167, 247–272 (2023).

36. Friston, K., Sajid, N., Heins, C., Pavliotis, G. A. & Parr, T. The free energy principle made simpler but not too simple. Phys. Rep. 1024, 1–29 (2022).

37. Wolpert, D. M., Goodbody, S. J. & Husain, M. Maintaining internal representations: the role of the human superior parietal lobe. Nat. Neurosci. 1, 529–533 (1998).

38. Medendorp, W. P. & Heed, T. State estimation in posterior parietal cortex: distinct poles of environmental and bodily states. Prog. Neurobiol. https://doi.org/10.1016/j.pneurobio.2019.101691 (2019).

39. Bufacchi, R. J., Battaglia-Mayer, A., Iannetti, G. D. & Caminiti, R. Cortico-spinal modularity in the parieto-frontal system: a new perspective on action control. Prog. Neurobiol. 231, 102537 (2023).

40. Arber, S. & Costa, R. M. Networking brainstem and basal ganglia circuits for movement. Nat. Rev. Neurosci. 23, 342–360 (2022).

41. Burgess, N. Spatial memory: how egocentric and allocentric combine. Trends Cogn. Sci. 10, 551–557 (2006).

42. Melzack, R. & Wall, P. D. The Challenge of Pain (Penguin, 1988).

43. Seymour, B. et al. Temporal difference models describe higher-order learning in humans. Nature 429, 664–667 (2004).

44. Baliki, M. N. et al. Corticostriatal functional connectivity predicts transition to chronic back pain. Nat. Neurosci. 15, 1117–1119 (2012).

45. Dong, W. K., Chudler, E. H., Sugiyama, K., Roberts, V. J. & Hayashi, T. Somatosensory, multisensory, and task-related neurons in cortical area 7b (PF) of unanesthetized monkeys. J. Neurophysiol. 72, 542–564 (1994).

46. Ronga, I. et al. Seeming confines: electrophysiological evidence of peripersonal space remapping following tool-use in humans. Cortex 144, 133–150 (2021).

47. Quinlan, D. J. & Culham, J. C. fMRI reveals a preference for near viewing in the human parieto-occipital cortex. Neuroimage 36, 167–187 (2007).

48. Holt, D. J. et al. Neural correlates of personal space intrusion. J. Neurosci. 34, 4123–4134 (2014).

49. Ferri, F., Tajadura-Jiménez, A., Väljamäe, A., Vastano, R. & Costantini, M. Emotion-inducing approaching sounds shape the boundaries of multisensory peripersonal space. Neuropsychologia 70, 468–475 (2015).

50. Taffou, M. & Viaud-Delmon, I. Cynophobic fear adaptively extends peri-personal space. Front. Psychiatry 5, 3–9 (2014).

Acknowledgements

We thank L. Bonini, N. Burgess, F. Cacucci, P. Neri and G. Vallortigara for their comments on earlier versions of the manuscript, and K. Shao and S. Perovic for discussions and contributions to the figures. The present study was supported by the European Research Council (ERC Consolidator Grant PAINSTRAT to G.D.I.), a fellowship from the Shanghai Municipal Human Resources and Social Security Bureau (no. E35CN31A21 to R.J.B.), a fellowship from the Italian Academy for Advanced Studies of Columbia University (to G.D.I.) and the Shanghai Municipal Science and Technology Major Project (grant no. 2019SHZDZX02 to N.L.).

Author information

Contributions

R.J.B. and G.D.I. conceived the project. R.J.B. did the modeling and analysis. R.J.B. and G.D.I. created the figures. R.J.B., R.S., A.M.F., R.C. and G.D.I. planned the analysis. R.J.B. and G.D.I. wrote the original draft. R.J.B., R.S., A.M.F., Y.M., N.L., R.C. and G.D.I. reviewed and edited the manuscript. Y.M., N.L. and G.D.I. acquired the funds.

Ethics declarations

Competing interests

The authors declare no competing interests.

Peer review

Peer review information

Nature Neuroscience thanks Andrea Serino and the other, anonymous, reviewer(s) for their contribution to the peer review of this work.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Extended data

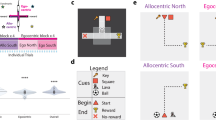

Extended Data Fig. 1 Bodypart-centred value fields arise even if touch is not directly rewarded.

a) In the main text we assumed that touch resulted in immediate reward, for the sake of simplicity. However, bodypart-centred fields can also arise if touch itself is not directly rewarded, but is a prerequisite to obtain reward at a later stage. To demonstrate this, consider the situation in which an agent must first catch an object (here, an apple) with its limb (step 1) before bringing it to its mouth (step 2) and finally obtaining the reward by ‘eating’ it (step 3). b) Value fields as a function of object and mouth position. Each column shows Q-values for a specific mouth position. Right-most column shows the average Q-values across all mouth positions. Line plots to the bottom and left of each heatmap are average Q-values along the y and x axes, respectively. In this environment, value fields still emerge around the limb, because contact is a step along the path to reward. The field shape and magnitude also depend on the position of the mouth, because intercepting the ‘apple’ when the mouth is near the hand leads to more immediate reward, and is therefore more valuable.
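The catch-then-eat logic of this figure can be illustrated with a minimal Python sketch. It is not the authors' implementation (their code is in MATLAB at the repository listed under Code availability), and every specific here is an assumption made for illustration: a deterministic toy world in which the apple falls one row per time step, the limb can shift one column per step, contact itself pays nothing, and a reward of 1 arrives only after the apple has been carried `mouth_dist` steps to the mouth. The names `q_field`, `GAMMA`, `MAX_DX` and `MAX_H` are hypothetical.

```python
import numpy as np

GAMMA = 0.9            # discount factor (assumed value)
MAX_DX, MAX_H = 3, 4   # largest sideways offset and starting height (assumed sizes)
ACTIONS = (-1, 0, 1)   # limb moves left, stays, or moves right

def q_field(mouth_dist):
    """Return Q[h, dx_index, action_index] for an apple h rows above the limb
    and dx columns to its side, computed by backward induction."""
    n_dx = 2 * MAX_DX + 1
    v = np.zeros((MAX_H + 1, n_dx))
    q = np.zeros((MAX_H + 1, n_dx, len(ACTIONS)))
    # Row 0 is the contact row: the apple is caught only if dx == 0.
    # Contact pays no reward; its value is the discounted reward for eating
    # the apple after carrying it mouth_dist steps.
    v[0, MAX_DX] = GAMMA ** mouth_dist
    for h in range(1, MAX_H + 1):
        for i in range(n_dx):
            dx = i - MAX_DX
            for k, a in enumerate(ACTIONS):
                # Moving the limb by a changes the relative offset by -a.
                new_dx = int(np.clip(dx - a, -MAX_DX, MAX_DX))
                q[h, i, k] = GAMMA * v[h - 1, new_dx + MAX_DX]
            v[h, i] = q[h, i].max()
    return q  # row 0 stays at zero because no action is chosen there

near_mouth = q_field(mouth_dist=0).max(axis=2)  # mouth right next to the hand
far_mouth = q_field(mouth_dist=3).max(axis=2)   # mouth three steps away
print(np.round(near_mouth, 2))
print(np.round(far_mouth, 2))
```

In this toy setting the value is non-zero only for apple positions from which the limb can still reach the contact point, so a limb-centred field appears even though touch is never rewarded directly, and the whole field shrinks as the mouth moves further from the hand.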

Extended Data Fig. 2 Individual neural response fields in all architectures used for ANN model 1.

a) Each colour map shows the activity of a single network unit as a function of the stimulus spatial position relative to the body part (body-part location is indicated as white circles). The three main rows indicate different network architectures. Full-colour plots show units classified as bodypart-centred; greyed-out plots show units not classified as bodypart-centred. Within each ANN, the proportion of bodypart-centred units per layer increased as a function of layer depth. b) Same as (a), but for ANNs that controlled two body parts instead of one.

Extended Data Fig. 3 Modularity emerges only when multiple tasks are learned successfully, regardless of artificial neuron type.

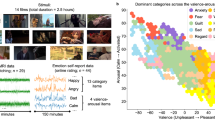

a) An ANN trained on simultaneous interception and avoidance tasks naturally adopts a modular, task-specialised structure. This example ANN consists of fewer neurons than the modular ANNs reported in the main text (see Fig. 5 and Supplementary Methods 3.2.6). Individual units are classified as threat- or goal-preferring (red and blue, respectively). b) Structural modularity in ANNs, split by the neuron types that compose the ANNs (see Supplementary Methods 3.2.6 and Supplementary Results 4.1.2). Rows indicate neuron type, while columns indicate regularization method. The histograms above each line plot show the amount of sub-network structure (indexed by a t-statistic; see Supplementary Methods 3.2.6). Histograms to the left of each line plot show network performance (indexed by expected reward per unit time). Light blue shaded area indicates above-chance sub-network structure. Network types with above-chance performance also had above-chance sub-network structure, regardless of regularization method. c) Across neuron types and regularization methods, the amount of sub-network structure (x-axis) predicted the performance of the network (y-axis). Colours indicate neuron types. Dashed line indicates zero. Light blue shaded area indicates above-chance sub-network structure. Rho and p-value result from a Pearson correlation test. d) The same ANN as in (a) also naturally encodes task-specific variables in orthogonal spaces. Scatter plots show activity from the first 3 principal components. Information related to goal-proximity (black-to-blue scatter plot) is orthogonal to information about threat-proximity (black-to-red scatter plot). e) Orthogonality of goal and threat proximity coding in the same networks described in (b). Coloured histograms show the angle between goal- and threat-coding in the first 3 principal components. Light blue areas indicate angles within 15° of 90°. Grey histograms indicate null distributions (1000 permutations). f) The degree of task-encoding orthogonality in the first 3 principal components (x-axis) predicted the performance of the network (y-axis). Dashed line indicates the absolute dot product equivalent to a 75° angle difference. Light blue shaded area indicates angles within 15° of 90°. Rho and p-value result from a Pearson correlation test. g) Across neuron types and regularization methods, the amount of sub-network structure (x-axis) is predictive of the ability of the network to reconstruct Q-values for novel tasks (y-axis; correlation coefficient between original and reconstructed Q-values; see Supplementary Methods 3.2.6). The novel tasks were the same as those described in Fig. 4a of the main text. Rho and p-value result from a partial correlation test, which factors out the effects of performance (reward per unit time) and task-encoding orthogonality. h) Across neuron types and regularization methods, the amount of task-encoding orthogonality (x-axis) is also predictive of the ability of the network to reconstruct Q-values for novel tasks (y-axis). Colours indicate neuron types. Rho and p-value result from a partial correlation test, which factors out the effects of performance (reward per unit time) and network structure.
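As a rough illustration of the orthogonality measure in panels d–f, the Python sketch below estimates the angle between goal-coding and threat-coding directions within the first 3 principal components of simulated unit activity. The caption does not specify how the coding directions were obtained, so this sketch assumes they are the least-squares regression slopes of the principal-component scores on goal and threat proximity; the function names `coding_angle_deg` and `demo_activity` and all simulated data are hypothetical and do not come from the authors' code.

```python
import numpy as np

def coding_angle_deg(activity, goal_prox, threat_prox, n_pcs=3):
    """Angle (0-90 degrees) between goal and threat coding axes in PC space.

    activity: (samples, units) array; goal_prox, threat_prox: (samples,) arrays.
    Coding axes are regression slopes of PC scores on each variable (an assumption).
    """
    centred = activity - activity.mean(axis=0)
    # PCA via SVD: rows of vt are the principal axes, ordered by variance.
    _, _, vt = np.linalg.svd(centred, full_matrices=False)
    scores = centred @ vt[:n_pcs].T
    design = np.column_stack([goal_prox, threat_prox, np.ones(len(goal_prox))])
    coefs, *_ = np.linalg.lstsq(design, scores, rcond=None)
    goal_axis, threat_axis = coefs[0], coefs[1]
    cos = abs(goal_axis @ threat_axis) / (
        np.linalg.norm(goal_axis) * np.linalg.norm(threat_axis))
    return float(np.degrees(np.arccos(np.clip(cos, 0.0, 1.0))))

def demo_activity(aligned, seed=0, n_samples=500, n_units=20):
    """Simulate units whose goal and threat weights are orthogonal or identical."""
    rng = np.random.default_rng(seed)
    goal = rng.uniform(0.0, 1.0, n_samples)
    threat = rng.uniform(0.0, 1.0, n_samples)
    w_goal = np.zeros(n_units)
    w_goal[: n_units // 2] = 1.0          # first half of units codes goal proximity
    w_threat = w_goal.copy() if aligned else 1.0 - w_goal
    noise = 0.05 * rng.normal(size=(n_samples, n_units))
    activity = np.outer(goal, w_goal) + np.outer(threat, w_threat) + noise
    return activity, goal, threat

angle_orthogonal = coding_angle_deg(*demo_activity(aligned=False))
angle_aligned = coding_angle_deg(*demo_activity(aligned=True))
print(angle_orthogonal, angle_aligned)
```

A null distribution like the grey histograms in panel e could be approximated by shuffling one proximity variable across samples and recomputing the angle many times, although the exact permutation scheme used in the paper is not described in this caption.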

Extended Data Fig. 4 Fitting egocentric maps to empirical data, part 2: setup and behavioural measures.

a) To create a model egocentric map around the upper body, we created a default set of voxels on the surface of the hand, head, and trunk, which offered reward upon contact (coloured cubes). Experiment-dependent variations of these rewarded voxels are shown in b-e. For extended data fitting methodology, see Methods 'Empirical data fitting' and Supplementary Methods 3.4.1. b) To model arm-centred peripersonal neurons in macaques (Fig. 7a), we created two sets of voxels, each simulating one of the two arm positions during the experiment. c) In a subset of human experiments (i, j, l, m, n, o, p; Fig. 7e–h), the hand was held in front of the chest, instead of to the side. d) To model experiments with variable stimulus valence (n, o, p), we additionally optimised the negative reward offered by contact (Methods, 'Empirical data fitting' & Supplementary Methods 3.4.1). The optimised reward values implied by the stimuli of differing valence are shown in q. e) To model tool use (m; Fig. 7h), we also rewarded contact with the tip of a tool. The two tool-related experiments used a stick and a rake, which we respectively modelled with 1 (green) or 5 (yellow) voxels. f-p) Empirical data (right in each panel) and model fits (left in each panel) from experiments in which behavioural peripersonal measures were collected. For data and fits of neural measures, see Fig. 7c–h. Colours indicate experimental conditions. Shaded surfaces on figurines show body parts included in the egocentric map. Error bars show SEM. For a detailed description of each fitted experiment, see Supplementary Methods 3.4.1. f–l) See Supplementary Methods 3.4.1.6 for details. m) See Supplementary Methods 3.4.1.7 for details. n) See Supplementary Methods 3.4.1.8 for details. o) See Supplementary Methods 3.4.1.9 for details. p) See Supplementary Methods 3.4.1.10 for details. q) Best-fitting negative reward magnitudes for the stimuli of differing valence from experiments displayed in n, o and p.
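The idea of building a map from a set of rewarded voxels, and of extending it by adding a tool-tip voxel, can be sketched as follows. This Python example deliberately swaps the paper's learned Q-values for a much simpler stand-in: each voxel's value is the contact reward discounted by the number of steps (Chebyshev distance, in voxels) to the rewarded voxel with the strongest influence. The discount factor, grid size, voxel positions and the function name `contact_field` are all assumptions chosen for illustration.

```python
import numpy as np

GAMMA = 0.8  # discount per voxel step (assumed value)

def contact_field(shape, rewarded, gamma=GAMMA):
    """Discounted distance-to-contact field over a voxel grid.

    shape: grid size (nx, ny, nz).
    rewarded: dict mapping a voxel index (x, y, z) to its reward on contact;
              negative rewards stand in for threatening stimuli.
    """
    coords = np.indices(shape).reshape(3, -1).T   # every voxel index, shape (N, 3)
    field = np.zeros(len(coords))
    for voxel, reward in rewarded.items():
        steps = np.abs(coords - np.array(voxel)).max(axis=1)  # Chebyshev distance
        candidate = reward * gamma ** steps
        # Keep whichever rewarded voxel has the larger-magnitude influence.
        field = np.where(np.abs(candidate) > np.abs(field), candidate, field)
    return field.reshape(shape)

grid = (10, 5, 5)
hand = {(2, 2, 2): 1.0}                       # one rewarded voxel on the hand
hand_and_tool = {**hand, (7, 2, 2): 1.0}      # plus one voxel at a tool tip
hand_only = contact_field(grid, hand)
with_tool = contact_field(grid, hand_and_tool)
print(hand_only[8, 2, 2], with_tool[8, 2, 2])
```

Under these assumptions, adding the tool-tip voxel raises the value of far locations without changing the value near the hand, which is the qualitative pattern that the tool-use fits in panel m are meant to capture.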

Extended Data Fig. 5 Comparison to alternative models.

a) We fitted three model families to an empirical dataset combined from 23 published experiments across 10 different research groups. The ‘Egocentric maps’ family (top three models) is the main topic of this paper, and the ‘Q-fields (Q-learning)’ model is the specific model described in the main text. The ‘Monotonous decay’ family (middle three models) contains purely empirical models that attempt to describe the data, but without having a theoretical a priori reason for being appropriate models. The ‘Perceptual models’ (bottom two models) have previously been used to fit individual datasets, and are largely based on the notion that peripersonal fields arise due to uncertainty in visual and auditory input, while estimating the probability that the source of the visual input makes contact with the body. We calculated all quantities for each 5 × 5 × 5 cm voxel around the upper body, and fitted them to the data with at least the same number of parameters as we used for Q-value fitting. The exponential and linear falloffs required two additional parameters, to fit the size and slope of the receptive fields. We parametrised the uncertainty necessary for the perceptual models by taking the same values as reported in refs. 17 and 20. b) Mathematical description of each model. For the ‘Egocentric maps’ models family, we display the update equation for the Q values, and underline the part of the equation that is unique to each of the three models. c) Summed error when each model is fitted to the empirical data (red line). The error of all models other than egocentric maps is larger than the error expected from a model that appropriately describes the generative mechanism behind the data (blue distribution). Models with a summed error corresponding to p < 0.05 (using the variant of chi-squared goodness of fit testing described in Methods 'Empirical data fitting') can be confidently rejected as explanations of the data. d) Metrics of fit quality relative to the ‘Q-fields (Q-learning)’ model. The normalised error (left y axis, red) is the summed error from (c) scaled by the variability of the data. Purple bars show the difference between the AIC and BIC (Akaike and Bayes Information Criterion, respectively) of each model vs the ‘Q-fields (Q-learning)’ model (right y axis, purple). A difference of >10 for AIC and BIC (indicated by dashed black lines) is commonly taken to indicate that the considered model can be rejected in favour of the reference model.
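The AIC and BIC comparison in panel d can be illustrated with a small Python sketch. It assumes independent Gaussian residuals with a variance estimated from the fit, which is a common textbook setting and not necessarily the error model used in the paper; the simulated data, the two candidate falloff models and the function name `aic_bic` are hypothetical.

```python
import numpy as np

def aic_bic(residuals, n_params):
    """AIC and BIC for a least-squares fit, assuming Gaussian residuals whose
    variance is set to its maximum-likelihood estimate RSS / n."""
    n = residuals.size
    rss = float(np.sum(residuals ** 2))
    log_lik = -0.5 * n * (np.log(2.0 * np.pi * rss / n) + 1.0)
    aic = 2.0 * n_params - 2.0 * log_lik
    bic = n_params * np.log(n) - 2.0 * log_lik
    return aic, bic

# Simulated response that decays exponentially with distance from the body.
rng = np.random.default_rng(0)
dist = np.linspace(0.0, 1.0, 40)
response = np.exp(-4.0 * dist) + rng.normal(0.0, 0.02, dist.size)

# Candidate 1: linear falloff (slope and intercept, 2 parameters).
slope, intercept = np.polyfit(dist, response, 1)
res_linear = response - (slope * dist + intercept)

# Candidate 2: exponential falloff (amplitude and rate, 2 parameters).
# The rate is scanned on a grid; the amplitude is solved by least squares.
res_exp = None
for rate in np.linspace(0.5, 10.0, 96):
    basis = np.exp(-rate * dist)
    amplitude = (basis @ response) / (basis @ basis)
    res = response - amplitude * basis
    if res_exp is None or res @ res < res_exp @ res_exp:
        res_exp = res

aic_lin, bic_lin = aic_bic(res_linear, n_params=2)
aic_exp, bic_exp = aic_bic(res_exp, n_params=2)
delta_aic = aic_lin - aic_exp   # positive values favour the exponential model
delta_bic = bic_lin - bic_exp
print(delta_aic, delta_bic)
```

Because both candidates here have the same number of parameters, the AIC and BIC differences coincide; with unequal parameter counts, BIC penalises the larger model more heavily once the number of data points exceeds about 7.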

Supplementary information

Supplementary Information

Supplementary Methods, Results, Discussion, Figs. 1–5, Videos and References.

Source data

Source Data Fig. 2

Statistical source data.

Source Data Fig. 5

Statistical source data.

Source Data Fig. 6

Statistical source data.

Source Data Fig. 7

Statistical source data.

Source Data Extended Data Fig. 4

Statistical source data.

Rights and permissions

Springer Nature or its licensor (e.g. a society or other partner) holds exclusive rights to this article under a publishing agreement with the author(s) or other rightsholder(s); author self-archiving of the accepted manuscript version of this article is solely governed by the terms of such publishing agreement and applicable law.

About this article

Cite this article

Bufacchi, R.J., Somervail, R., Fitzpatrick, A.M. et al. Egocentric value maps of the near-body environment. Nat Neurosci 28, 1336–1347 (2025). https://doi.org/10.1038/s41593-025-01958-7

DOI: https://doi.org/10.1038/s41593-025-01958-7