Abstract

Defects in popular two-dimensional (2D) transition metal dichalcogenides (TMDs) seriously lower the efficiency of field-effect transistors (FETs) and hinder the development of 2D materials. These atomic defects are mainly identified and studied by scanning tunneling microscopy (STM) because it provides precise measurements without harming the samples. The long analysis time needed to locate defects in STM images has been addressed by combining feature detection with convolutional neural networks (CNNs). However, the low signal-to-noise ratio, insufficient data, and the large number of TMD family members make automatic defect detection systems hard to apply. In this study, we propose a deep learning-based atomic defect detection framework (DL-ADD) to efficiently detect atomic defects in molybdenum disulfide (MoS2) and to generalize the model to defect detection in other TMD materials. We design DL-ADD with data augmentation, color preprocessing, noise filtering, and a detection model to improve detection quality. DL-ADD provides precise detection in MoS2 (average F2-score of 0.86) and good generality to WS2 (average F2-score of 0.89).

Background & Summary

Transition metal dichalcogenides (TMDs), as two-dimensional (2D) materials, have been predicted to have huge application potential in the solar energy and semiconductor industries1,2,3,4,5. Nevertheless, TMDs usually contain many defects because of the low formation energy of chalcogen vacancies6. In a high-performance field-effect transistor, defects can affect contact resistance7, cause Fermi-level pinning7,8, and reduce carrier mobility9,10.

To prevent defects from affecting the performance of TMD devices, researchers usually use optical methods11,12, probing techniques13,14,15,16, and transmission electron techniques17,18 to measure the defect distribution on each TMD surface. Optical methods offer advantages such as large-area and element-specific detection, with X-ray photoelectron spectroscopy being especially strong in this respect19. However, the spatial resolution of optical methods cannot exceed the diffraction limit of approximately 0.1 µm, and the heat generated by the pump light can sometimes harm the sample. These problems can be overcome by probing techniques, particularly scanning tunneling microscopy (STM), which provides ultra-high-resolution images without harming the sample. Because of this extremely high resolution, STM can estimate defect density with essentially no deviation and can recognize the elements involved. Yet STM measurements require scanning hundreds of images and counting thousands of defects one image at a time, so estimating the defect density of a sample can take dozens of hours of counting. Artificial intelligence techniques are used to solve this problem.

Deep learning-based STM analysis provides substantial advantages but also presents problems when applied to sensing defect variation in TMD-based field-effect transistors (FETs). Most studies have achieved high accuracy in detecting defects by relying on many training images20,21,22, using 3500 to 7500 images depending on the signal-to-noise ratio. Collecting this amount of data with STM takes months. Furthermore, while scanning FETs, the STM tip is frequently exposed to residual chemicals and poorly conducting areas, resulting in images with highly unstable quality and a low signal-to-noise ratio. Density functional theory (DFT) calculations have been used to generate large amounts of simulated data to address this problem23. However, inadequate and low-quality experimental data can still cause the model to overfit and show low accuracy.

Furthermore, an increasing number of materials that can be applied to FETs will require defect detection. A well-trained model must be retrained when applied to a different material22 because the appearance of the defects changes, which incurs a large data collection cost and long model training time. The combination of these problems makes detecting defects in TMD-based FETs difficult.

In this study, we propose a deep learning-based atomic defect detection framework (DL-ADD) for diagnosing defects in TMD-based FETs, to address the problem of insufficient and low-quality data and to improve model generality. In DL-ADD, we design a data augmentation module, a color preprocessing module, a noise filtering module, and a detection model to reduce analysis time and improve detection accuracy. The data augmentation module generates additional pseudo data for training, which suppresses overfitting. The color preprocessing and noise filtering modules improve the quality of the data. We implement U-Net24 as the detection model to accurately locate and identify atomic defects because it segments images quickly and precisely. Furthermore, we conduct experiments on molybdenum disulfide (MoS2) and tungsten disulfide (WS2) images to evaluate the accuracy and generality of DL-ADD. The defects are defined as impurities8,25 and voids13,26, since these two defect types frequently occur in MoS2 and WS2. The proposed framework achieves an F1-score of 0.89 for impurities and 0.80 for voids in MoS2 with an extremely small amount of data (approximately 70 images). We then use the MoS2 model to detect WS2 defects without retraining. The F1-score for voids in WS2 is 0.94; that is, the proposed DL-ADD can be used effectively for TMD-based FETs with limited and low-quality data and can be widely applied to other 2D materials.

Methods

As shown in Fig. 1, we propose a deep learning-based atomic defect detection framework (DL-ADD) for 2D materials. It comprises three data preprocessing modules and a detection model. The code of DL-ADD is accessible on GitHub: https://github.com/MeatYuan/MOS2.

DL-ADD Framework.

Experimental detail

This research uses room-temperature STM (RT-STM) to scan MoS2 and WS2 surfaces at a pressure of 1 × 10⁻¹⁰ torr. The single-crystal WS2 and MoS2 bulk samples were both purchased from Structure Probe Inc. The WS2 and MoS2 crystals were processed differently before scanning to increase the number of impurities and voids. The MoS2 sample was cleaved by mechanical exfoliation, kept at normal pressure, and then transferred to make a field-effect transistor. The WS2 sample was cleaved in an ultra-high vacuum chamber and heated at 200 for 12 hours to increase only the number of voids. All images were scanned in constant-current mode with a −1 V sample bias and a 1 nA tunneling current. The statistical properties of the defect density were previously studied with this method16.

Data augmentation

Training an accurate model requires a certain amount of data. However, collecting 2D material images is labor-intensive and slow. Therefore, we designed a data augmentation process to increase the amount of training data, as shown in Fig. 2. To augment the data while maintaining its diversity, we first create defect data manually and then generate more data using traditional augmentation methods. We cut the voids and impurities out of the training data, select a clean background of the MoS2 surface, and paste the cut defects onto the background images. The proportions of impurities and voids are randomized to simulate real images. We apply a Gaussian blur to the images to smooth the boundary between the pasted defects and the background. Finally, we randomly choose among horizontal flip, vertical flip, rotation, and shift to augment the data.
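
The cut-and-paste procedure described above can be sketched in a few lines of NumPy. This is a minimal illustration, not the authors' implementation: the function names (`gaussian_blur`, `paste_defects`, `random_geometric`), the synthetic background and patches, the blur width, the restriction to 90-degree rotations, and the circular shift are all assumptions made for the example.

```python
import numpy as np

rng = np.random.default_rng(0)

def gaussian_blur(img, sigma=1.0):
    """Separable Gaussian blur written with NumPy only (sigma is an assumed value)."""
    radius = max(1, int(3 * sigma))
    x = np.arange(-radius, radius + 1)
    kernel = np.exp(-(x ** 2) / (2 * sigma ** 2))
    kernel /= kernel.sum()
    padded = np.pad(img, radius, mode="reflect")
    # Blur along rows first, then along columns.
    rows = np.apply_along_axis(lambda r: np.convolve(r, kernel, mode="valid"), 1, padded)
    return np.apply_along_axis(lambda c: np.convolve(c, kernel, mode="valid"), 0, rows)

def paste_defects(background, patches, n_defects):
    """Paste randomly chosen (label, patch) pairs at random positions on a clean background."""
    img = background.astype(float).copy()
    mask = np.zeros(img.shape, dtype=np.uint8)  # 0 = background, otherwise the defect label
    for _ in range(n_defects):
        label, patch = patches[int(rng.integers(len(patches)))]
        h, w = patch.shape
        top = int(rng.integers(0, img.shape[0] - h + 1))
        left = int(rng.integers(0, img.shape[1] - w + 1))
        img[top:top + h, left:left + w] = patch
        mask[top:top + h, left:left + w] = label
    return img, mask

def random_geometric(img, mask):
    """Apply one randomly chosen flip, rotation, or shift to image and mask together."""
    choice = int(rng.integers(4))
    if choice == 0:
        return np.fliplr(img), np.fliplr(mask)
    if choice == 1:
        return np.flipud(img), np.flipud(mask)
    if choice == 2:
        k = int(rng.integers(1, 4))  # rotate by 90, 180, or 270 degrees
        return np.rot90(img, k), np.rot90(mask, k)
    dy, dx = (int(v) for v in rng.integers(-16, 17, size=2))
    # Circular shift: pixels leaving one edge re-enter at the opposite edge (an assumption).
    return np.roll(img, (dy, dx), axis=(0, 1)), np.roll(mask, (dy, dx), axis=(0, 1))

# Synthetic stand-ins: a flat noisy background, a bright impurity patch, a dark void patch.
background = rng.normal(0.5, 0.02, size=(256, 256))
patches = [(1, np.full((6, 6), 0.9)), (2, np.full((5, 5), 0.1))]
img, mask = paste_defects(background, patches, n_defects=int(rng.integers(3, 10)))
img = gaussian_blur(img, sigma=1.0)  # soften the seams around pasted patches
aug_img, aug_mask = random_geometric(img, mask)
```

Transforming the image and its label mask together keeps the segmentation ground truth aligned with the augmented image, which matters because the detection model is trained on pixel-wise labels.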

Data Augmentation Process.

Color preprocessing

Compared with color images, grayscale images are easier to denoise and show less variation between samples. Therefore, in this module we resize the images to 256 × 256 pixels and convert them to grayscale.
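
As a rough illustration of this module, the sketch below converts an RGB array to grayscale and resizes it to 256 × 256 pixels. The luma weights (ITU-R BT.601) and the nearest-neighbour resampling are assumptions chosen to keep the example dependency-free; the source does not state which conversion or interpolation was used, and `to_gray` and `resize_nearest` are hypothetical helper names.

```python
import numpy as np

def to_gray(rgb):
    """Convert an H x W x 3 RGB array to grayscale using BT.601 luma weights (assumed)."""
    weights = np.array([0.299, 0.587, 0.114])
    return rgb[..., :3].astype(float) @ weights

def resize_nearest(img, size=256):
    """Resize a 2D array to size x size by nearest-neighbour sampling (assumed method)."""
    rows = (np.arange(size) * img.shape[0] / size).astype(int)
    cols = (np.arange(size) * img.shape[1] / size).astype(int)
    return img[np.ix_(rows, cols)]

# Synthetic stand-in for a color-mapped STM image of arbitrary size.
rgb = np.random.default_rng(0).integers(0, 256, size=(512, 400, 3))
gray = resize_nearest(to_gray(rgb), size=256)
```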

Noise filtering

In scanning probe microscopy research, the quality of experimental data strongly depends on the tip condition. When dealing with a rough sample (root mean square roughness above 1 nm), the tip can easily hit the sample, so a large amount of data is scanned under bad conditions. Under these conditions, high-frequency noise appears with stronger intensity than the signal in the image, making it more difficult for the model to detect defects. We first apply a Fast Fourier Transform (FFT) to the images, then apply a low-pass filter to remove high-frequency signals before performing the inverse FFT. Finally, we increase the image contrast to make impurities and voids more visible.
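
The FFT, low-pass, inverse-FFT sequence described above might look like the following sketch. The circular cutoff of 0.15 cycles per pixel and the percentile-based contrast stretch are illustrative assumptions; the source does not specify the filter shape, the cutoff, or how the contrast was adjusted.

```python
import numpy as np

def lowpass_fft(img, cutoff=0.15):
    """Zero all spatial frequencies above `cutoff` (cycles per pixel; assumed value)."""
    spectrum = np.fft.fftshift(np.fft.fft2(img))
    fy = np.fft.fftshift(np.fft.fftfreq(img.shape[0]))
    fx = np.fft.fftshift(np.fft.fftfreq(img.shape[1]))
    radius = np.sqrt(fy[:, None] ** 2 + fx[None, :] ** 2)
    spectrum[radius > cutoff] = 0  # ideal (hard-edged) low-pass mask
    return np.real(np.fft.ifft2(np.fft.ifftshift(spectrum)))

def stretch_contrast(img, low=1, high=99):
    """Rescale intensities between two percentiles to the range [0, 1] (assumed method)."""
    lo, hi = np.percentile(img, [low, high])
    return np.clip((img - lo) / (hi - lo + 1e-12), 0, 1)

# Synthetic test image: a slow sinusoid (signal) plus a pixel-scale checkerboard (noise).
y, x = np.mgrid[0:256, 0:256]
clean = np.sin(2 * np.pi * 4 * x / 256)
noisy = clean + 0.5 * (-1.0) ** (x + y)
filtered = lowpass_fft(noisy)
enhanced = stretch_contrast(filtered)
```

A hard-edged mask is the simplest choice but can introduce ringing near sharp features; a Gaussian or Butterworth roll-off is a common alternative when that becomes visible.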

Detection model

We choose U-Net as the defect detection model since it can be trained with few images and achieves precise segmentation. U-Net24 is a convolutional network architecture that consists of a contracting path for downsampling and an expansive path for upsampling, as shown in Fig. 3. Six identical parts are used in the downsampling path, each comprising two convolutional layers with a 3 × 3 kernel and one max pooling layer. Six identical parts are likewise used in the upsampling path, each comprising two convolutional layers with a 3 × 3 kernel, one transposed convolution layer, and a concatenation with the same-sized feature map from the contracting path. Finally, we use three convolutional layers to output a 256 × 256 image.
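
To see how six downsampling and six upsampling stages fit a 256 × 256 input, the sketch below traces the feature-map side length through both paths. It assumes that the 3 × 3 convolutions preserve spatial size (same padding) and that each pooling or transposed-convolution layer changes the size by a factor of two; neither detail is stated in the text, and `trace_unet_sizes` is a hypothetical helper.

```python
def trace_unet_sizes(input_size=256, depth=6):
    """Return feature-map side lengths along the contracting and expansive paths.

    Assumes size-preserving 3x3 convolutions, 2x2 max pooling, and
    stride-2 transposed convolutions.
    """
    down = [input_size]
    for _ in range(depth):
        down.append(down[-1] // 2)  # each max-pooling layer halves the side length
    up = [down[-1]]
    for _ in range(depth):
        up.append(up[-1] * 2)       # each transposed convolution doubles it
    return down, up

down, up = trace_unet_sizes()
print(down)  # [256, 128, 64, 32, 16, 8, 4]
print(up)    # [4, 8, 16, 32, 64, 128, 256]
```

Under these assumptions the bottleneck is a 4 × 4 map, and every upsampling stage has a contracting-path feature map of matching size to concatenate with.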

Model architecture.

Data Records

The MoS2 and WS2 images cover areas of 50 × 50 nm2 and 60 × 60 nm2, respectively, and are stored in bitmap (BMP) format at 256 × 256 pixels, which is the most suitable size for distinguishing and detecting these defects. All data are available from the Open Science Framework (OSF)27: https://doi.org/10.17605/OSF.IO/ZXGTJ

Technical Validation

In this section, we evaluate DL-ADD. We first compare DL-ADD with YOLOv428 and then conduct ablation studies on the image preprocessing and data augmentation modules of DL-ADD. Furthermore, to demonstrate the generality of DL-ADD, we apply it to WS2, which also contains voids and impurities. A void is labeled as a dark dip on the surface, while an impurity is labeled as a bright protrusion. We scanned the entire scannable area of one MoS2 FET sample and obtained 90 images to evaluate DL-ADD in the following experiments. Seventy images are used as the training dataset and twenty as the testing dataset.

The evaluation metrics are recall, precision, F1-score, and F2-score. A false positive (FP) is a pseudo defect, that is, a detection where no defect exists. A false negative (FN) is a defect that the model fails to detect. A true positive (TP) is a true defect that is correctly classified. The F1-score is the harmonic mean of precision and recall. Since finding every defect matters more than avoiding false alarms, we also report the F2-score, which weights recall more heavily than precision.
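
These metrics follow the standard definitions, which can be written out as below. The function name `precision_recall_fbeta` and the example counts are hypothetical and chosen only to show the arithmetic; they are not results from this study.

```python
def precision_recall_fbeta(tp, fp, fn, beta=1.0):
    """Compute precision, recall, and the F-beta score from detection counts."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    if precision == 0.0 and recall == 0.0:
        return precision, recall, 0.0
    b2 = beta ** 2
    # beta = 1 gives the harmonic mean; beta = 2 weights recall more than precision.
    fbeta = (1 + b2) * precision * recall / (b2 * precision + recall)
    return precision, recall, fbeta

# Made-up counts for illustration: 80 detected defects, 10 false alarms, 20 missed defects.
precision, recall, f1 = precision_recall_fbeta(80, 10, 20, beta=1.0)
_, _, f2 = precision_recall_fbeta(80, 10, 20, beta=2.0)
print(round(f1, 3), round(f2, 3))  # 0.842 0.816
```

In this example recall (0.80) is lower than precision (about 0.89), so the F2-score falls below the F1-score, reflecting the heavier penalty on missed defects.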

Model evaluation

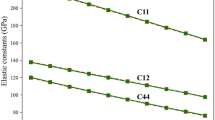

Table 1 compares DL-ADD with YOLOv4 and shows that DL-ADD achieves a higher F1-score than the YOLO model for both voids and impurities. As shown in Fig. 4, the loss of DL-ADD drops faster and further than that of YOLOv4. This difference in loss indicates that YOLOv4 converges with more difficulty, possibly because its greater complexity requires more data. In contrast, the simple U-Net structure of DL-ADD achieves better performance with little data. Some DL-ADD and YOLO results are shown in Fig. 5.

Training and validation loss of models. DL-ADD ends training at 1600 batches.

Result of MoS2 defect detection. STM MoS2 image (left), Ground Truth (middle left), DL-ADD detection (middle right), YOLOv4 detection (right). The size of the scanning frame for each image is 50 × 50 nm2.

Data augmentation validation

In DL-ADD, we augment the data by manually imitating defect images. Table 2 validates the effect of data augmentation: both defect types are detected more accurately when the process is used. The augmentation process mainly balances model precision and recall and relieves the miss-alarm problem.

Noise filtering validation

The noise signals mainly lie in the high-frequency region, while the defect signals are mostly found in the low-frequency region. Thus, we design a low-pass filter in DL-ADD to improve the quality of the images. Table 3 shows the effect of the noise filtering methods. The results show that our procedure improves the signal-to-noise ratio and significantly improves both recall and precision.

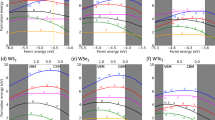

Generality validation

Some 2D materials are similar. If DL-ADD generalizes to these materials, much model training time can be saved. We apply DL-ADD trained on MoS2 to detect defects in WS2. The results for 15 WS2 images are shown in Fig. 6 and Table 4. The F1-score and F2-score of DL-ADD are significantly higher than those of YOLO. In the MoS2 results, the two models differ little on impurities, but the difference on voids is more obvious. Across the two materials, the accuracy of DL-ADD does not decrease much, whereas the F1-score of YOLO falls below 0.6, suggesting that YOLO is overfitted to MoS2. That is, DL-ADD generalizes better than YOLO.

Result of WS2 defect detection. STM WS2 image (left), Ground Truth (middle left), DL-ADD detection (middle right), YOLOv4 detection (right). The size of the scanning frame for each image is 60 × 60 nm2.

Training and inference time

In addition to accuracy, detection time is essential to defect detection. We compare the training and inference times of the two models in Table 5. Both models are trained on an RTX 3090. DL-ADD takes 29 minutes to finish training, while YOLOv4 takes 91 minutes, more than three times as long. For inference, DL-ADD takes 0.17 seconds per image and YOLOv4 takes 0.15 seconds per image, so there is little difference between the two.

Usage Notes

In this study, we proposed the DL-ADD framework to efficiently detect atomic defects in TMD-based FETs. In DL-ADD, we designed an image preprocessing module, a data augmentation module, and a detection model. With low-quality data in small amounts, DL-ADD performed well in detecting defects (F-score > 0.85 on average) and in resisting intense noise, significantly lowering the barrier to applying this model in FET research. The good generality of DL-ADD makes the model even more convenient, allowing it to be used as a simple labeling tool when switching to other materials in the TMD family. Overall, DL-ADD demonstrated strong noise resistance, high accuracy, and good generality. This study lowered the threshold for using CNN models with these properties and significantly improved the efficiency of the atomic defect detection process.

Code availability

All the code used to produce the results of this paper is accessible at https://github.com/MeatYuan/MOS2. All of it uses Python and Jupyter Notebook.

References

Balis, N., Stratakis, E. & Kymakis, E. Graphene and transition metal dichalcogenide nanosheets as charge transport layers for solution processed solar cells. Materials Today 19, 580–594 (2016).

Singh, E., Kim, K. S., Yeom, G. Y. & Nalwa, H. S. Two-dimensional transition metal dichalcogenide-based counter electrodes for dye-sensitized solar cells. RSC Adv. 7, 28234–28290 (2017).

Wu, K., Ma, H., Gao, Y., Hu, W. & Yang, J. Highly-efficient heterojunction solar cells based on two-dimensional tellurene and transition metal dichalcogenides. Journal of Materials Chemistry A 7, 7430–7436 (2019).

Rawat, B., Vinaya, M. & Paily, R. Transition metal dichalcogenide-based field-effect transistors for analog/mixed-signal applications. IEEE Trans Electron Devices 66, 2424–2430 (2019).

Liu, X. et al. High performance field-effect transistor based on multilayer tungsten disulfide. ACS nano 8, 10396–10402 (2014).

Guo, Y., Liu, D. & Robertson, J. Chalcogen vacancies in monolayer transition metal dichalcogenides and fermi level pinning at contacts. Applied Physics Letters 106, 173106 (2015).

McDonnell, S., Addou, R., Buie, C., Wallace, R. M. & Hinkle, C. L. Defect-dominated doping and contact resistance in MoS2. ACS nano 8, 2880–2888 (2014).

Bampoulis, P. et al. Defect dominated charge transport and fermi level pinning in MoS2/metal contacts. ACS Appl. Mater. Interfaces 9, 19278–19286 (2017).

Wu, Z. et al. Defects as a factor limiting carrier mobility in WSe2: A spectroscopic investigation. Nano Research 9, 3622–3631 (2016).

Lin, Z. et al. Defect engineering of two-dimensional transition metal dichalcogenides. 2D Materials 3, 022002 (2016).

Verhagen, T., Guerra, V. L., Haider, G., Kalbac, M. & Vejpravova, J. Towards the evaluation of defects in MoS2 using cryogenic photoluminescence spectroscopy. Nanoscale 12, 3019–3028 (2020).

Mignuzzi, S. et al. Effect of disorder on Raman scattering of single-layer MoS2. Physical Review B 91, 195411 (2015).

Vancsó, P. et al. The intrinsic defect structure of exfoliated MoS2 single layers revealed by scanning tunneling microscopy. Sci. Rep. 6, 1–7 (2016).

Barja, S. et al. Identifying substitutional oxygen as a prolific point defect in monolayer transition metal dichalcogenides. Nature Commun. 10, 1–8 (2019).

Tumino, F., Casari, C. S., Li Bassi, A. & Tosoni, S. Nature of point defects in single-layer MoS2 supported on Au (111). J. Phys. Chem. C 124, 12424–12431 (2020).

Chen, F.-X. R. et al. Visualizing correlation between carrier mobility and defect density in MoS2 FET. Applied Physics Letters 121, 151601 (2022).

Yang, S.-H. et al. Deep learning-assisted quantification of atomic dopants and defects in 2D materials. Advanced Science 8, 2101099 (2021).

Lee, K. et al. Stem image analysis based on deep learning: Identification of vacancy defects and polymorphs of MoS2. Nano Letters (2022).

Gali, S. M., Pershin, A., Lherbier, A., Charlier, J.-C. & Beljonne, D. Electronic and transport properties in defective MoS2: impact of sulfur vacancies. J. Phys. Chem. C 124, 15076–15084 (2020).

Rashidi, M. & Wolkow, R. A. Autonomous scanning probe microscopy in situ tip conditioning through machine learning. ACS nano 12, 5185–5189 (2018).

Krull, A., Hirsch, P., Rother, C., Schiffrin, A. & Krull, C. Artificial-intelligence-driven scanning probe microscopy. Communications Physics 3, 1–8 (2020).

Gordon, O. et al. Scanning tunneling state recognition with multi-class neural network ensembles. Rev. Sci. Instrum. 90, 103704 (2019).

Choudhary, K. et al. Computational scanning tunneling microscope image database. Scientific data 8, 1–9 (2021).

Ronneberger, O., Fischer, P. & Brox, T. U-net: Convolutional networks for biomedical image segmentation. In International Conference on Medical image computing and computer-assisted intervention, 234–241 (Springer, 2015).

Schuler, B. et al. Electrically driven photon emission from individual atomic defects in monolayer WS2. Sci. Adv. 6, eabb5988 (2020).

Schuler, B. et al. How substitutional point defects in two-dimensional WS2 induce charge localization, spin–orbit splitting, and strain. ACS nano 13, 10520–10534 (2019).

Chen, F.-X. R. et al. TMD-FET defect autodetection at STM images. OSF https://doi.org/10.17605/OSF.IO/ZXGTJ (2022).

Jiang, P., Ergu, D., Liu, F., Cai, Y. & Ma, B. A review of yolo algorithm developments. Procedia Computer Science 199, 1066–1073 (2022).

Acknowledgements

This work is jointly sponsored by the National Yang Ming Chiao Tung University, National Central University, Yuan Ze University, Ministry of Education (MOE), National Science and Technology Council (NSTC), and Center for the Semiconductor Technology Research from The Featured Areas Research Center Program under the projects “Higher Education Sprout”, NSTC 110-2222-E-008-008-MY3, NSTC 109-2634-F-009-029 and NSTC 110-2112-M-A49-013-MY3, respectively.

Author information

Contributions

Under the supervision of Chia-Yu Lin (C.-Y. Lin) and Chun-Liang Lin (C.-L. Lin), Fu-Xiang Rikudo Chen (F.-X. R. Chen) and Hui-Ying Siao (H.-Y. Siao) conceived the idea of the DL-ADD framework. The STM data were collected by Yong-Cheng Yang (Y.-C. Yang) and F.-X. R. Chen. DL-ADD was first developed by H.-Y. Siao and updated by Cheng-Yuan Jian (C.-Y. Jian). The U-Net model was trained by H.-Y. Siao and C.-Y. Jian, and the YOLO model was trained by F.-X. R. Chen. The manuscript was written by C.-Y. Lin, C.-L. Lin, F.-X. R. Chen and C.-Y. Jian.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons license, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons license and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this license, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Chen, FX.R., Lin, CY., Siao, HY. et al. Deep learning based atomic defect detection framework for two-dimensional materials. Sci Data 10, 91 (2023). https://doi.org/10.1038/s41597-023-02004-6

DOI: https://doi.org/10.1038/s41597-023-02004-6