Abstract

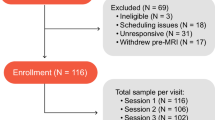

Traditional laboratory tasks offer tight experimental control but lack the richness of our everyday human experience. As a result, many cognitive neuroscientists have been motivated to adopt experimental paradigms that are more natural, such as stories and movies. Here we describe data collected from 58 healthy adult participants (aged 18–76 years) who viewed 10 minutes of a movie (The Good, the Bad, and the Ugly, 1966). Most (36) participants viewed the clip more than once, resulting in 106 sessions of data. Cortical responses were mapped using high-density diffuse optical tomography (first- through fourth nearest neighbor separations of 1.3, 3.0, 3.9, and 4.7 cm), covering large portions of superficial occipital, temporal, parietal, and frontal lobes. Consistency of measured activity across subjects was quantified using intersubject correlation analysis. Data are provided in both channel format (SNIRF) and projected to standard space (NIfTI) using an atlas-based light model. These data are suitable for methods exploration as well as investigating a wide variety of cognitive phenomena.

Background & Summary

Most cognitive neuroscientists are interested in how human brains interact with the real world. To study this, we frequently create tightly controlled laboratory paradigms intended to isolate one or more aspects of sensory or cognitive processing. We hope that the findings from these purposefully artificial experiments will generalize to real-world scenarios. However, is such an assumption justified? To answer this question, and bolstered by methodological advances, cognitive neuroscience has been increasingly moving towards the use of naturalistic stimuli1,2,3,4.

For over 20 years, researchers using fMRI have explored the use of movies to provide rich stimulation for research participants including children5,6,7, healthy adults8,9,10,11,12,13, and numerous other populations14,15,16,17. Although not completely naturalistic, movies convey complex auditory and visual information covering a range of sensory, cognitive, and linguistic domains. Movies therefore provide researchers the opportunity to study processing that more closely mimics everyday experience than standard laboratory tasks. Additionally, the ability to concurrently address multiple domains of sensory and cognitive processing may make movies a more efficient way to collect data than traditional cognitive psychology paradigms. For example, 10 minutes of movie data collection might replace 30 minutes of domain-specific data collection (e.g., 10 minutes of an auditory task, 10 minutes of a visual task, 10 minutes of a language task). Movies also facilitate functional connectivity analysis which can identify brain networks based on temporal correspondence.

Optical neuroimaging, particularly functional near infrared spectroscopy (fNIRS), offers many advantages for studying human brain function. First, it is acoustically silent, avoiding the auditory confounds present in fMRI18. Second, implanted medical devices are not a contraindication, so optical imaging can be used with people who have cochlear implants19,20 or implanted electrodes for deep brain stimulation21. Finally, fNIRS facilitates real-world applications including imaging during face-to-face interaction22. These advantages make fNIRS well suited for studying cognition in context23, including social interaction24.

Traditionally, drawbacks associated with fNIRS include limited coverage of the cortex, uneven sensitivity over the field of view, and lack of the depth information necessary for removing superficial (i.e., non-brain) hemodynamic components. These challenges motivated the development of high-density diffuse optical tomography (HD-DOT), in which a lattice of closely spaced sources and detectors provides homogeneous sensitivity and spatial resolution comparable to that obtained in fMRI (Fig. 1)21.

Fig. 1. (a) Illustration of HD-DOT cap and optode arrangement (see ref. 21 for details). (b) Illustration of field of view in the reconstructed images.

Here, we present data collected using custom HD-DOT during movie-viewing, which complements existing open fMRI data sets of movie viewing13,25,26,27. Although the use of movies in HD-DOT has been previously explored28, our hope is that by making the current data available we will facilitate methodological and theoretical advances related to naturalistic stimulation. These include identification of quality control metrics, how to best handle estimated motion, and optimal methods for modeling quasi-continuous variables.

Method

Participants

Participants were recruited from the Washington University in Saint Louis community. Data from 58 participants (23 male, 35 female) are included in the data set, ranging in age from 18–76 years (mean age = 31 years). Self-reported handedness was right for 55 participants, left for 2, and ambidextrous for 1. Forty-nine of the participants reported English as the first language they learned. In addition, participants reported having normal vision and hearing and no known history of neurological disorders. All participants gave written informed consent prior to the experiment session, which was approved by and carried out in accordance with the Human Research Protection Office at Washington University (protocol numbers 201101896, 201908154, and 202108047).

Stimulus

The stimulus was a clip of approximately 10 minutes taken from The Good, the Bad, and the Ugly (1966), a movie previously used in fMRI8 and HD-DOT28. All participants viewed the portion of the movie between 16:48 and 27:30. A subset of the participants viewed a longer clip; in this case, their data were truncated so that all participants have the same amount of data covering an identical portion of the movie. Copyright restrictions preclude openly sharing the stimulus, but guidance is available on request from the corresponding author. We did not systematically document whether participants had previously viewed the selected clip; anecdotally, most participants reported being unfamiliar with the movie.

The same movie clip was used in several projects from 2012 to 2022 and thus was presented along with various other tasks, including auditory, visual, motor, and language tasks, and the collection of resting state data. Analyses including 7 of these participants have been published previously28 but none of the data is included in a public data set.

Procedure

Participants were seated on a comfortable chair in an acoustically isolated room facing an LCD screen located 76 cm from them, at approximately eye level, which displayed the movie. The soundtrack was presented through two speakers located approximately 150 cm away at about ±21° from the subjects’ ears. The sound level was approximately 65 dBA but not calibrated. The HD-DOT cap was fitted to the subject’s head to maximize optode-scalp coupling, assessed via real-time coupling coefficient readouts using in-house software. The movie was presented using Psychophysics Toolbox 329 (RRID SCR_002881) in MATLAB.

Data acquisition

Data were acquired using two different continuous wave HD-DOT caps. The first cap had 96 sources and 92 detectors (LED; 750 nm and 850 nm)21 and was used for 9 participants. This device has been previously described in detail, and has been validated against fMRI for mapping localizer tasks, movie viewing, and resting state functional connectivity21. The second cap was built by attaching an additional pad on top of the previous cap and had 128 sources and 125 detectors (laser diode; 685 nm and 830 nm); it was used for the remaining 49 participants. Source-detector pairs were arranged on both caps to enable first- through fourth nearest neighbor separations of 1.3, 3.0, 3.9, and 4.7 cm, respectively. Our in-house software controlled temporal, frequency, and spatial encoding patterns, which achieved an overall framerate of 10 Hz.

Full measurement sets are available in the raw data formats (NeuroDOT-compatible .mat files and SNIRF). To keep the analyses in this paper consistent, for the 49 participants scanned using the larger cap we removed all measurements from the additional motor pad. As a result, the preprocessed data (NIfTI) and all analyses presented in this paper use the same 96 sources and 92 detectors for every participant.

Data processing

Data processing is schematically illustrated in Fig. 2. Data preprocessing was done using the NeuroDOT toolbox (https://www.nitrc.org/projects/neurodot) based on the principles of modeling light emission, diffusion, and detection through the head20,30. First, we took the log-mean ratio of the light levels, that is, the logarithm of the ratio between the light level at each time point and the temporal mean across the run (used as a baseline value). We then excluded any source-detector measurement with a temporal standard deviation of 7.5% or higher in the least-noisy 60 s of each run (the window with the lowest mean global variance of temporal derivatives, GVTD)31, in order to remove noisy measurements due to poor optode-scalp coupling or movement. The percentage of measurements retained at each source-detector separation in this dataset was (mean ± SD): 99 ± 1% of 644 first nearest-neighbor pairs and 95 ± 5% of 1068 second nearest-neighbor pairs. Due to low SNR, we did not use measurements beyond second nearest neighbors. The measurements were then high-pass filtered (0.02 Hz cutoff) to remove low-frequency drift. We then estimated the global superficial signal as the average of all first nearest neighbor measurements (1.3 cm source-detector pair separation) and regressed it from the data32. Following that, data were low-pass filtered (0.5 Hz cutoff) to remove cardiac oscillations. These cutoff frequencies match those used in all previously published studies using the existing HD-DOT devices and are consistent with best practices33. Data were downsampled from 10 Hz to 1 Hz and then used for image reconstruction.
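As an illustration only, the channel-level steps described above can be sketched in Python with NumPy and SciPy. This is not the NeuroDOT implementation: the function names, the sign convention of the log-mean ratio, the second-order Butterworth filters, and the way the quietest 60 s window is located are all assumptions made for this sketch. Only the 7.5% threshold, the 0.02 Hz and 0.5 Hz cutoffs, and the 10 Hz to 1 Hz downsampling come from the text.

```python
import numpy as np
from scipy.signal import butter, sosfiltfilt, resample_poly


def log_mean_ratio(light):
    """Log-ratio of each sample to the run's temporal mean, per measurement.

    light: array (n_measurements, n_timepoints) of positive light levels.
    The leading minus sign is an assumed convention.
    """
    return -np.log(light / light.mean(axis=1, keepdims=True))


def quietest_window(y, fs, seconds=60.0):
    """Slice covering the window with the lowest mean GVTD (RMS form assumed)."""
    gvtd = np.sqrt(np.mean(np.diff(y, axis=1) ** 2, axis=0))
    n = int(round(seconds * fs))
    if gvtd.size <= n:
        return slice(0, y.shape[1])
    running_mean = np.convolve(gvtd, np.ones(n) / n, mode="valid")
    start = int(np.argmin(running_mean))
    return slice(start, start + n)


def preprocess(light, is_nn1, fs=10.0, std_max=0.075):
    """Hypothetical helper: returns (preprocessed data at 1 Hz, kept-measurement mask).

    is_nn1: boolean array marking first nearest-neighbor measurements.
    """
    y = log_mean_ratio(light)

    # Drop measurements whose std reaches 7.5% in the least-noisy 60 s.
    good = y[:, quietest_window(y, fs)].std(axis=1) < std_max
    y, nn1 = y[good], is_nn1[good]

    # High-pass filter (0.02 Hz) to remove slow drift.
    y = sosfiltfilt(butter(2, 0.02, btype="highpass", fs=fs, output="sos"), y, axis=1)

    # Superficial signal regression: mean of first nearest-neighbor measurements.
    s = y[nn1].mean(axis=0)
    y = y - np.outer(y @ s / (s @ s), s)

    # Low-pass filter (0.5 Hz) to remove cardiac oscillations.
    y = sosfiltfilt(butter(2, 0.5, btype="lowpass", fs=fs, output="sos"), y, axis=1)

    # Downsample to 1 Hz.
    return resample_poly(y, 1, int(fs), axis=1), good
```

For a 300 s run sampled at 10 Hz, `preprocess` returns 300 samples per retained measurement. Users who need results that match the shared NIfTI files should rely on NeuroDOT itself rather than this sketch.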

Fig. 2. Schematic of data processing and file formats. The data are provided in three different formats: SNIRF, NeuroDOT, and NIfTI.

We then computed a forward model of light propagation based on the two wavelengths used for each device on an anatomical atlas including the non-uniform tissue structures: scalp, skull, CSF, gray matter, and white matter34. The resulting sensitivity matrix was then inverted to calculate the relative changes in absorption at the two wavelengths, using Tikhonov regularization and spatially variant regularization21. Relative changes in oxygenated, deoxygenated, and total hemoglobin (ΔHbO, ΔHbR, ΔHbT) were then computed using the absorption and extinction coefficients of oxygenated and deoxygenated hemoglobin at the two wavelengths. We resampled all data to a 3 × 3 × 3 mm standard atlas using a linear affine transformation.
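To make the two linear steps concrete, the following Python sketch shows a plain Tikhonov-regularized inversion of a sensitivity matrix and the two-wavelength spectroscopy step. It is a simplified illustration under stated assumptions: the spatially variant regularization used in the actual pipeline is omitted, the function names are hypothetical, and the extinction matrix holds placeholder numbers chosen only so that the example runs. They are not physical extinction coefficients.

```python
import numpy as np


def tikhonov_reconstruct(A, y, alpha):
    """Solve y = A x for x with Tikhonov regularization.

    A: sensitivity matrix (n_measurements, n_voxels)
    y: measurements (n_measurements,) or (n_measurements, n_timepoints)
    alpha: regularization strength (illustrative; not the published value)
    Uses the form x = A^T (A A^T + alpha I)^-1 y.
    """
    gram = A @ A.T
    return A.T @ np.linalg.solve(gram + alpha * np.eye(gram.shape[0]), y)


# Rows: the two wavelengths; columns: [HbO, HbR].
# PLACEHOLDER values for illustration only.
E_PLACEHOLDER = np.array([[0.6, 1.4],
                          [1.1, 0.7]])


def hemoglobin_changes(dmua, E):
    """Convert absorption changes at two wavelengths to hemoglobin changes.

    dmua: array with first axis of length 2 (one entry per wavelength).
    Solves dmua = E @ [dHbO, dHbR] and returns (dHbO, dHbR, dHbT).
    """
    conc = np.linalg.solve(E, dmua.reshape(2, -1)).reshape(dmua.shape)
    d_hbo, d_hbr = conc
    return d_hbo, d_hbr, d_hbo + d_hbr
```

Total hemoglobin is simply the sum of the oxygenated and deoxygenated changes, so only the 2 × 2 system needs to be solved per voxel and time point.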

The preprocessed data were then converted to the NIfTI file format for analysis and sharing purposes. Intersubject correlation analysis was performed using the automatic analysis (aa) environment35, version 5.8. Additionally, the ndot2snirf function in NeuroDOT was used to convert the raw data to the SNIRF36 file format, followed by the snirf2bids function to generate the other metadata files needed to satisfy the BIDS specification for NIRS36,37. In addition to using these standard functions, we cropped the NIfTI files to align with the actual start and end points of the movie stimulus.

Technical Validation

Data quality

To quantify data quality across sessions we focused on two measures intended to capture effects of participant motion and light levels (Fig. 3). Because we do not have objective measures of motion (e.g., photometry or accelerometers), we rely on a signal-based proxy for motion: global variance of temporal derivatives (GVTD)31. GVTD sums the temporal derivatives of light levels over voxels as a parsimonious way of summarizing fluctuations in signal intensity, conceptually similar to the DVARS measure sometimes used in fMRI40,41. Previous studies have shown that GVTD is highly correlated with accelerometer-based measures of motion31.
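A minimal Python sketch of a GVTD-style time course is given below. It assumes the root-mean-square form (the RMS across measurements or voxels of the frame-to-frame difference), which is our reading of ref. 31; the exact normalization in NeuroDOT may differ, and the function name is hypothetical.

```python
import numpy as np


def gvtd(y):
    """GVTD-style motion proxy (RMS form assumed).

    y: array (n_measurements_or_voxels, n_timepoints).
    Returns one value per time point; the first value is set to 0
    because no backward difference exists for the first frame.
    """
    d = np.diff(y, axis=1)                 # temporal derivative
    g = np.sqrt(np.mean(d ** 2, axis=0))   # RMS across rows
    return np.concatenate([[0.0], g])
```

A single abrupt jump shared by many measurements produces a sharp peak in this time course, which is why it can serve as a proxy for head motion.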

Fig. 3. Quality control measures. (a) Mean light levels over the cap for a sample participant (850 nm light). (b) Top: example temporal derivative time traces from source-detector distances between 1–19 mm, 20–29 mm, and 30–40 mm respectively, for 850 nm light in the same sample participant; Bottom: GVTD over the same time period. (c) Scatter plot of GVTD (proxy for motion) and pulse SNR levels for all sessions.

We used the heartbeat (pulse) signal-to-noise ratio (SNR) as an indicator of how well physiological signals are detected in the data. Data with low pulse SNR are more likely to also have low SNR at hemodynamic frequencies, mainly because of poor optode-scalp coupling. The pulse SNR was calculated using the NeuroDOT function PlotCapPhysiologyPower as the ratio of the pulse power (based on the peak FFT magnitudes in the 0.5–2 Hz window) of the optical density signal to a noise floor (based on the FFT magnitudes in the 0.5–2 Hz window)20.
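The idea behind a pulse SNR measure can be sketched as follows. This is not the code of PlotCapPhysiologyPower. In particular, taking the noise floor as the median FFT magnitude within the 0.5–2 Hz band is an assumption made for this example, as is the function name.

```python
import numpy as np


def pulse_snr(signal, fs, band=(0.5, 2.0)):
    """Ratio of the peak FFT magnitude in the pulse band to a noise floor.

    signal: 1-D optical density time course for one measurement.
    fs: sampling rate in Hz.
    The noise floor is ASSUMED to be the median magnitude in the same band.
    """
    x = signal - signal.mean()
    magnitude = np.abs(np.fft.rfft(x))
    freqs = np.fft.rfftfreq(x.size, d=1.0 / fs)
    in_band = (freqs >= band[0]) & (freqs <= band[1])
    peak = magnitude[in_band].max()
    floor = np.median(magnitude[in_band])
    return peak / floor
```

A measurement with a clear cardiac peak yields a large ratio, whereas a measurement dominated by broadband noise yields a ratio only slightly above 1.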

High quality data will contain low levels of GVTD and high levels of SNR. This is because GVTD is correlated with motion level (which is not desirable) and pulse SNR is correlated with hemodynamic signal SNR (which is desirable).

Intersubject correlation analyses

In an intersubject correlation analysis, the similarity of time courses is computed for the same voxel in each pair of participants; averaging these values provides an average intersubject correlation value for every voxel of the brain42. Numerous studies have used intersubject correlation analysis to identify regions of the brain which show similar patterns of activity across a group of participants43,44,45. For movie viewing, these values are typically higher in sensory regions (e.g., visual cortex and auditory cortex) than regions associated with complex linguistic or executive processing8,25. One benefit of intersubject correlation analysis is that it is sensitive to shifts in the timing of activity across participants. Thus, demonstrating reasonable intersubject correlation values in sensory regions suggests the time courses across participants are correctly temporally aligned.

As part of our technical validation, we therefore performed an intersubject correlation analysis (Fig. 4a). Because not all participants had multiple sessions of movie data, we restricted our analysis to the session with the highest pulse SNR from each participant. The average signal was subtracted from each frame and a Pearson correlation coefficient was then computed voxelwise using the corrected data for all pairs of subjects. We see the highest values in auditory and visual cortices, broadly consistent with prior studies in both fMRI8 and HD-DOT28.
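For readers who want to reproduce this type of analysis outside the aa environment, a minimal Python sketch of average pairwise intersubject correlation follows. The function name and array layout are assumptions, and the optional frame-wise demeaning reflects our reading of "the average signal was subtracted from each frame" as removing the mean across voxels at each time point.

```python
from itertools import combinations

import numpy as np


def intersubject_correlation(data, demean_frames=True):
    """Average pairwise Pearson correlation per voxel.

    data: array (n_subjects, n_voxels, n_timepoints), temporally aligned.
    demean_frames: if True, subtract the across-voxel mean from each frame
        (an assumed interpretation of the correction described in the text).
    Returns an array (n_voxels,) of mean pairwise r values.
    Voxels with a constant time course would give NaN (division by zero).
    """
    x = np.asarray(data, dtype=float)
    if demean_frames:
        x = x - x.mean(axis=1, keepdims=True)

    # Standardize each time course so that a dot product equals Pearson r.
    z = x - x.mean(axis=2, keepdims=True)
    z = z / np.linalg.norm(z, axis=2, keepdims=True)

    pair_maps = [np.sum(z[i] * z[j], axis=1)
                 for i, j in combinations(range(z.shape[0]), 2)]
    return np.mean(pair_maps, axis=0)
```

With 58 participants this loop evaluates 1,653 subject pairs, which remains fast for 1 Hz data of roughly 10 minutes.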

Fig. 4. Intersubject correlation analysis. (a) Correlation map showing the average pairwise correlation across all subjects. Maximum r values are observed in sensory areas. The SNR in these subjects, as quantified by pulse amplitude, ranged from approximately 3 to 25. (b) A histogram of pulse values, used to separate subjects into low (red), medium (blue), and high (green) SNR groups. (c) Intersubject correlation maps obtained for subjects grouped by SNR. High correlations extend beyond sensory areas in the highest SNR group.

We then placed participants into one of three groups based on pulse amplitude measured in the optical signal (low group: 3.0 < pulse ≤ 6.0; med: 6.0 < pulse ≤ 14.5; high: 14.5 < pulse < 25.5) (Fig. 4b). As shown in Fig. 4c, we observed that r values were notably higher in subjects in the high pulse amplitude group compared to those in the medium and low groups, supporting our use of pulse amplitude as a quality control measure.
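The grouping rule above can be written compactly in Python. The boundaries of 6.0 and 14.5 come from the text; the function and variable names are hypothetical.

```python
import numpy as np

GROUP_NAMES = np.array(["low", "medium", "high"])


def snr_group(pulse_values, edges=(6.0, 14.5)):
    """Assign each pulse value to the low, medium, or high group.

    Upper boundaries are inclusive, matching the intervals
    low <= 6.0 < medium <= 14.5 < high.
    """
    idx = np.digitize(pulse_values, edges, right=True)
    return GROUP_NAMES[idx]
```

For example, a pulse value of exactly 6.0 falls in the low group and a value of 14.5 falls in the medium group.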

Finally, we wanted to make sure that the spatial pattern of our intersubject correlation maps was not a result of artifacts in the data. We adopted a censoring approach using GVTD to exclude frames with high variance (likely driven by motion).

We used a GVTD threshold of 5E-04 to identify outliers. If the GVTD of a given frame exceeded this threshold in either subject during pairwise correlations, the frame was excluded when computing the correlation. Results from this analysis are shown in Fig. 5. Although the absolute correlation values are reduced, we see a similar spatial pattern to the intersubject correlation maps, consistent with the data being correctly temporally aligned across participants.
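The censoring rule for a single pair of participants can be sketched as follows. The threshold of 5E-04 comes from the text; the function name, the array layout, and the choice to treat values exactly at the threshold as retained are assumptions.

```python
import numpy as np


def censored_correlation(x, y, gvtd_x, gvtd_y, threshold=5e-4):
    """Voxelwise Pearson r between two participants after GVTD censoring.

    x, y: arrays (n_voxels, n_timepoints), temporally aligned.
    gvtd_x, gvtd_y: arrays (n_timepoints,) of GVTD for each participant.
    A frame is dropped if EITHER participant exceeds the threshold.
    Returns (r per voxel, number of frames retained).
    """
    keep = (gvtd_x <= threshold) & (gvtd_y <= threshold)
    xk = x[:, keep] - x[:, keep].mean(axis=1, keepdims=True)
    yk = y[:, keep] - y[:, keep].mean(axis=1, keepdims=True)
    numerator = np.sum(xk * yk, axis=1)
    denominator = np.linalg.norm(xk, axis=1) * np.linalg.norm(yk, axis=1)
    return numerator / denominator, int(keep.sum())
```

Because frames are removed jointly for both members of a pair, the number of retained frames differs from pair to pair, which is one reason absolute correlation values can change after censoring.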

Fig. 5. Results of intersubject correlation after adjusting for motion artifacts. Layout mirrors the organization of Fig. 4. (a) Correlation map showing the average pairwise Pearson correlation across all subjects. Maximum r values are observed in sensory areas. (b) A histogram showing the number of participants who had different numbers of frames excluded for exceeding the GVTD threshold. (c) Intersubject correlation maps for participants grouped by pulse SNR. (Pulse SNR was calculated prior to censoring, so these groups of participants are the same as those shown in Fig. 4.) (d) Histograms of voxel values in the three SNR groups. Mean and standard deviation shown at upper right. An average correlation value was calculated for all pairwise maps in each group and analyzed using Welch’s ANOVA, which showed a significant difference among the group means (F = 13.4, p = 4.2E-06).

Data availability

The dataset has been deposited to OpenNeuro (accession number ds004569) and is available from https://openneuro.org/datasets/ds004569/.

Code availability

Code used for technical validation is available from http://github.com/jpeelle/GBUDOT.

References

Sonkusare, S., Breakspear, M. & Guo, C. Naturalistic Stimuli in Neuroscience: Critically Acclaimed. Trends Cogn. Sci. 23, 699–714 (2019).

Zaki, J. & Ochsner, K. The need for a cognitive neuroscience of naturalistic social cognition. Ann. N. Y. Acad. Sci. 1167, 16–30 (2009).

Nastase, S. A., Goldstein, A. & Hasson, U. Keep it real: rethinking the primacy of experimental control in cognitive neuroscience. Neuroimage 222, 117254 (2020).

Finn, E. S., Glerean, E., Hasson, U. & Vanderwal, T. Naturalistic imaging: The use of ecologically valid conditions to study brain function. Neuroimage 247, 118776 (2022).

Richardson, H., Lisandrelli, G., Riobueno-Naylor, A. & Saxe, R. Development of the social brain from age three to twelve years. Nat. Commun. 9, 1027 (2018).

Yates, T. S., Ellis, C. T. & Turk-Browne, N. B. Emergence and organization of adult brain function throughout child development. Neuroimage 226, 117606 (2021).

Tansey, R. et al. Functional MRI responses to naturalistic stimuli are increasingly typical across early childhood. Dev. Cogn. Neurosci. 62, 101268 (2023).

Hasson, U., Nir, Y., Levy, I., Fuhrmann, G. & Malach, R. Intersubject synchronization of cortical activity during natural vision. Science 303, 1634–1640 (2004).

Naci, L., Cusack, R., Anello, M. & Owen, A. M. A common neural code for similar conscious experiences in different individuals. Proc. Natl. Acad. Sci. USA. 111, 14277–14282 (2014).

Caldinelli, C. & Cusack, R. The fronto-parietal network is not a flexible hub during naturalistic cognition. Hum. Brain Mapp. 43, 750–759 (2022).

Chen, J. et al. Shared memories reveal shared structure in neural activity across individuals. Nat. Neurosci. 20, 115–125 (2017).

Zacks, J. M. et al. Human brain activity time-locked to perceptual event boundaries. Nat. Neurosci. 4, 651–655 (2001).

Aliko, S., Huang, J., Gheorghiu, F., Meliss, S. & Skipper, J. I. A naturalistic neuroimaging database for understanding the brain using ecological stimuli. Sci. Data 7, 347 (2020).

Musz, E., Loiotile, R., Chen, J., Cusack, R. & Bedny, M. Naturalistic stimuli reveal a sensitive period in cross modal responses of visual cortex: Evidence from adult-onset blindness. Neuropsychologia 172, 108277 (2022).

Loiotile, R. E., Cusack, R. & Bedny, M. Naturalistic Audio-Movies and Narrative Synchronize “Visual” Cortices across Congenitally Blind But Not Sighted Individuals. J. Neurosci. 39, 8940–8948 (2019).

Yao, S. et al. Movie-watching fMRI for presurgical language mapping in patients with brain tumour. J. Neurol. Neurosurg. Psychiatry 93, 220–221 (2022).

Lyons, K. M., Stevenson, R. A., Owen, A. M. & Stojanoski, B. Examining the relationship between measures of autistic traits and neural synchrony during movies in children with and without autism. Neuroimage Clin 28, 102477 (2020).

Peelle, J. E. Methodological challenges and solutions in auditory functional magnetic resonance imaging. Front. Neurosci. 8, 253 (2014).

Saliba, J., Bortfeld, H., Levitin, D. J. & Oghalai, J. S. Functional near-infrared spectroscopy for neuroimaging in cochlear implant recipients. Hear. Res. 338, 64–75 (2016).

Sherafati, A. et al. Prefrontal cortex supports speech perception in listeners with cochlear implants. Elife 11 (2022).

Eggebrecht, A. T. et al. Mapping distributed brain function and networks with diffuse optical tomography. Nat. Photonics 8, 448–454 (2014).

Hirsch, J. et al. Interpersonal Agreement and Disagreement During Face-to-Face Dialogue: An fNIRS Investigation. Front. Hum. Neurosci. 14, 606397 (2020).

Thomas, A. K. Studying cognition in context to identify universal principles. Nature Reviews Psychology 2, 453–454 (2023).

Dingemanse, M. et al. Beyond Single-Mindedness: A Figure-Ground Reversal for the Cognitive Sciences. Cogn. Sci. 47, e13230 (2023).

Visconti di Oleggio Castello, M., Chauhan, V., Jiahui, G. & Gobbini, M. I. An fMRI dataset in response to “The Grand Budapest Hotel”, a socially-rich, naturalistic movie. Sci. Data 7, 1–9 (2020).

Liu, X., Zhen, Z., Yang, A., Bai, H. & Liu, J. A manually denoised audio-visual movie watching fMRI dataset for the studyforrest project. Sci. Data 6 (2019).

Hanke, M. et al. A studyforrest extension, simultaneous fMRI and eye gaze recordings during prolonged natural stimulation. Sci. Data 3, 160092 (2016).

Fishell, A. K., Burns-Yocum, T. M., Bergonzi, K. M., Eggebrecht, A. T. & Culver, J. P. Mapping brain function during naturalistic viewing using high-density diffuse optical tomography. Sci. Rep. 9, 11115 (2019).

Brainard, D. H. The Psychophysics Toolbox. Spat. Vis. 10, 433–436 (1997).

Eggebrecht, A. T. & Culver, J. P. NeuroDOT: an extensible Matlab toolbox for streamlined optical functional mapping. in Diffuse Optical Spectroscopy and Imaging VII vol. 11074 110740M (International Society for Optics and Photonics, 2019).

Sherafati, A. et al. Global motion detection and censoring in high-density diffuse optical tomography. Hum. Brain Mapp., https://doi.org/10.1002/hbm.25111 (2020).

Gregg, N. M., White, B. R., Zeff, B. W., Berger, A. J. & Culver, J. P. Brain specificity of diffuse optical imaging: improvements from superficial signal regression and tomography. Front. Neuroenergetics 2 (2010).

Yücel, M. A. et al. Best practices for fNIRS publications. Neurophotonics 8, 012101 (2021).

Ferradal, S. L., Eggebrecht, A. T., Hassanpour, M. S., Snyder, A. Z. & Culver, J. P. Atlas-based head modeling and spatial normalization for high-density diffuse optical tomography: In vivo validation against fMRI. Neuroimage 85, 117–126 (2014).

Cusack, R. et al. Automatic analysis (aa): Efficient neuroimaging workflows and parallel processing using Matlab and XML. Front. Neuroinform. 8, 90 (2015).

Tucker, S. et al. Introduction to the shared near infrared spectroscopy format. Neurophotonics 10, 013507 (2023).

Gorgolewski, K. J. et al. The brain imaging data structure, a format for organizing and describing outputs of neuroimaging experiments. Sci. Data 3, 160044 (2016).

Sherafati, A. et al. A high-density diffuse optical tomography dataset of naturalistic viewing. OpenNeuro https://doi.org/10.18112/openneuro.ds004569.v1.1.0 (2025).

Markiewicz, C. J. et al. The OpenNeuro resource for sharing of neuroscience data. Elife 10, e71774 (2021).

Smyser, C. D., Snyder, A. Z. & Neil, J. J. Functional connectivity MRI in infants: exploration of the functional organization of the developing brain. Neuroimage 56, 1437–1452 (2011).

Power, J. D., Barnes, K. A., Snyder, A. Z., Schlaggar, B. L. & Petersen, S. E. Spurious but systematic correlations in functional connectivity MRI networks arise from subject motion. Neuroimage 59, 2142–2154 (2012).

Nastase, S. A., Gazzola, V., Hasson, U. & Keysers, C. Measuring shared responses across subjects using intersubject correlation. Soc. Cogn. Affect. Neurosci. 14, 667–685 (2019).

Jangraw, D. C. et al. Inter-subject correlation during long narratives reveals widespread neural correlates of reading ability. Neuroimage 282, 120390 (2023).

Rowland, S. C., Hartley, D. E. H. & Wiggins, I. M. Listening in naturalistic scenes: What can functional near-infrared spectroscopy and intersubject correlation analysis tell us about the underlying brain activity? Trends Hear. 22, 2331216518804116 (2018).

Dikker, S. et al. Brain-to-Brain Synchrony Tracks Real-World Dynamic Group Interactions in the Classroom. Curr. Biol. 27, 1375–1380 (2017).

Acknowledgements

This work was supported by R01 DC019507, R21 DC016086, R21 DC015884, R01 MH122751, K01 MH103594, R21 NS098020, and T32 EB014855 from the US National Institutes of Health. We thank Tessa G. George, Kelsey T. King, Karla M. Bergonzi, and Tracy M. Burns-Yocum for assistance with data collection.

Author information

Contributions

J.E.P. conceived of the data sharing effort. A.F., A.S., A.B., M.M., and C.H.L. collected the data. E.S., A.B., and A.S. oversaw data conversion and organization. A.B., A.S., M.S.J. completed preprocessing and quality control analyses. M.S.J. conducted the intersubject correlation analyses. The manuscript was drafted by J.E.P., A.S., A.B., and M.S.J., and critically reviewed by all authors. J.E.P., J.P.C., A.T.E., and T.H. obtained funding.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Sherafati, A., Bajracharya, A., Jones, M.S. et al. A high-density diffuse optical tomography dataset of naturalistic viewing. Sci Data 12, 1762 (2025). https://doi.org/10.1038/s41597-025-06041-1

DOI: https://doi.org/10.1038/s41597-025-06041-1