Abstract

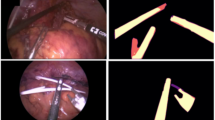

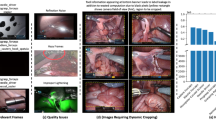

Localisation of surgical tools constitutes a foundational building block for computer-assisted interventional technologies. Works in this field typically focus on training deep learning models to perform segmentation tasks. Performance of learning-based approaches is limited by the availability of diverse annotated data. We argue that skeletal pose annotations are a more efficient annotation approach for surgical tools, striking a balance between richness of semantic information and ease of annotation, thus allowing for accelerated growth of available annotated data. To encourage adoption of this annotation style, we present ROBUST-MIPS, a combined tool pose and tool instance segmentation dataset derived from the existing ROBUST-MIS dataset. Our enriched dataset facilitates the joint study of these two annotation styles and allows head-to-head comparison on various downstream tasks. To demonstrate the adequacy of pose annotations for surgical tool localisation, we set up a simple benchmark using popular pose estimation methods and observe high-quality results. To ease adoption, we release our benchmark models and custom tool pose annotation software together with the dataset.

Data availability

The ROBUST-MIPS dataset generated and analyzed in this study is publicly available at https://doi.org/10.7303/syn64023381 (ref. 21). The imaging data used to construct this dataset were obtained from the publicly available ROBUST-MIS dataset, accessible via https://doi.org/10.7303/syn18779624 (ref. 22).

Code availability

The annotation software is publicly available at https://github.com/cai4cai/tool-pose-annotation-gui. We also release the code for benchmark training at https://github.com/cai4cai/ROBUST_MIPS_toolpose. The latter repository also contains scripts for converting the data to the COCO format.
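For readers unfamiliar with the COCO keypoint layout that such conversion scripts target, the following is a minimal sketch of how one tool's skeletal annotation could be packed into a COCO-style record. It is not taken from the released repository: the keypoint names, the skeleton edges, the category name, and the helper functions `to_coco_annotation` and `build_coco` are all hypothetical, and the bounding box is derived here from the labelled keypoints purely for illustration (the released scripts may compute it differently, for example from the instance masks).

```python
import json

# Hypothetical keypoint names and skeleton edges, for illustration only.
# The actual ROBUST-MIPS skeleton definition may differ.
KEYPOINT_NAMES = ["shaft_start", "shaft_end", "tip_a", "tip_b"]
SKELETON = [[1, 2], [2, 3], [2, 4]]  # 1-indexed keypoint pairs, as in COCO


def to_coco_annotation(ann_id, image_id, keypoints, category_id=1):
    """Pack one tool's keypoints into a COCO-style annotation dict.

    keypoints: list of (x, y, v) tuples, where v follows the COCO
    visibility flag: 0 = not labelled, 1 = labelled but occluded,
    2 = labelled and visible.
    """
    flat = []
    for x, y, v in keypoints:
        if v > 0:
            flat.extend([float(x), float(y), int(v)])
        else:
            # COCO stores unlabelled keypoints as (0, 0, 0).
            flat.extend([0.0, 0.0, 0])

    labelled = [(float(x), float(y)) for x, y, v in keypoints if v > 0]
    if labelled:
        xs = [p[0] for p in labelled]
        ys = [p[1] for p in labelled]
        x0, y0 = min(xs), min(ys)
        w, h = max(xs) - x0, max(ys) - y0
    else:
        x0 = y0 = w = h = 0.0

    return {
        "id": ann_id,
        "image_id": image_id,
        "category_id": category_id,
        "keypoints": flat,                # [x1, y1, v1, x2, y2, v2, ...]
        "num_keypoints": len(labelled),   # count of keypoints with v > 0
        "bbox": [x0, y0, w, h],           # assumption: box spans the keypoints
        "area": w * h,
        "iscrowd": 0,
    }


def build_coco(images, annotations):
    """Assemble the top-level COCO dictionary with a single tool category."""
    return {
        "images": images,
        "annotations": annotations,
        "categories": [{
            "id": 1,
            "name": "surgical_tool",      # hypothetical category name
            "keypoints": KEYPOINT_NAMES,
            "skeleton": SKELETON,
        }],
    }


if __name__ == "__main__":
    ann = to_coco_annotation(
        ann_id=1,
        image_id=10,
        keypoints=[(100, 200, 2), (150, 260, 2), (0, 0, 0), (170, 300, 1)],
    )
    images = [{"id": 10, "file_name": "frame_0010.png", "width": 960, "height": 540}]
    print(json.dumps(build_coco(images, [ann]), indent=2))
```

A dictionary in this shape can be written to a JSON file and loaded by tools that read COCO keypoint annotations, which is one reason the format is a convenient interchange target for pose benchmarks.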

References

Ríos, M. S. et al. Cholec80-CVS: An open dataset with an evaluation of Strasberg’s critical view of safety for AI. Scientific Data 10, 194 (2023).

Gruijthuijsen, C. et al. Robotic endoscope control via autonomous instrument tracking. Frontiers in Robotics and AI 9, 832208 (2022).

Ronneberger, O., Fischer, P. & Brox, T. U-net: Convolutional networks for biomedical image segmentation. In Medical image computing and computer-assisted intervention–MICCAI 2015: 18th international conference, Munich, Germany, October 5-9, 2015, proceedings, part III 18, 234–241 (Springer, 2015).

García-Peraza-Herrera, L. C. et al. ToolNet: Holistically-nested real-time segmentation of robotic surgical tools. In 2017 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), 5717–5722 (2017).

Alabi, O. et al. CholecInstanceSeg: A tool instance segmentation dataset for laparoscopic surgery. Scientific Data 12, 1–12 (2025).

Twinanda, A. P. et al. EndoNet: a deep architecture for recognition tasks on laparoscopic videos. IEEE Transactions on Medical Imaging 36, 86–97 (2016).

Hong, W.-Y. et al. CholecSeg8k: a semantic segmentation dataset for laparoscopic cholecystectomy based on Cholec80. arXiv preprint arXiv:2012.12453 (2020).

Roß, T. et al. Comparative validation of multi-instance instrument segmentation in endoscopy: Results of the ROBUST-MIS 2019 challenge. Medical Image Analysis 70, 101920 (2021).

Cao, Z., Simon, T., Wei, S.-E. & Sheikh, Y. Realtime multi-person 2d pose estimation using part affinity fields. In Proceedings of the IEEE conference on computer vision and pattern recognition, 7291–7299 (2017).

Peng, J., Chen, Q., Kang, L., Jie, H. & Han, Y. Autonomous recognition of multiple surgical instruments tips based on arrow OBB-YOLO network. IEEE Transactions on Instrumentation and Measurement 71, 1–13 (2022).

De Backer, P. et al. Multicentric exploration of tool annotation in robotic surgery: lessons learned when starting a surgical artificial intelligence project. Surgical Endoscopy 36, 8533–8548 (2022).

Du, X. et al. Articulated multi-instrument 2-d pose estimation using fully convolutional networks. IEEE Transactions on Medical Imaging 37, 1276–1287 (2018).

Sznitman, R. et al. Data-driven visual tracking in retinal microsurgery. In Ayache, N., Delingette, H., Golland, P. & Mori, K. (eds.) Medical Image Computing and Computer-Assisted Intervention – MICCAI 2012, 568–575 (Springer Berlin Heidelberg, Berlin, Heidelberg, 2012).

Reiter, A., Allen, P. K. & Zhao, T. Feature classification for tracking articulated surgical tools. In Medical Image Computing and Computer-Assisted Intervention–MICCAI 2012: 15th International Conference, Nice, France, October 1-5, 2012, Proceedings, Part II 15, 592–600 (Springer, 2012).

Ghanekar, B., Johnson, L. R., Laughlin, J. L., O’Malley, M. K. & Veeraraghavan, A. Video-based surgical tool-tip and keypoint tracking using multi-frame context-driven deep learning models. In 2025 IEEE 22nd International Symposium on Biomedical Imaging (ISBI), 1–5 (IEEE, 2025).

Gao, Y. et al. JHU-ISI gesture and skill assessment working set (JIGSAWS): A surgical activity dataset for human motion modeling. In MICCAI workshop: M2CAI, vol. 3, 3 (2014).

Wu, Z. et al. SurgPose: a dataset for articulated robotic surgical tool pose estimation and tracking. In 2025 IEEE International Conference on Robotics and Automation (ICRA), 10507–10514 (2025).

Rueckert, T. et al. Comparative validation of surgical phase recognition, instrument keypoint estimation, and instrument instance segmentation in endoscopy: Results of the PhaKIR 2024 challenge. arXiv preprint arXiv:2507.16559 (2025).

Maier-Hein, L. et al. Heidelberg colorectal data set for surgical data science in the sensor operating room. Scientific Data 8, 101 (2021).

Budd, C., Garcia-Peraza Herrera, L. C., Huber, M., Ourselin, S. & Vercauteren, T. Rapid and robust endoscopic content area estimation: A lean GPU-based pipeline and curated benchmark dataset. Computer Methods in Biomechanics and Biomedical Engineering: Imaging & Visualization 11, 1215–1224 (2023).

Han, Z. et al. ROBUST-MIPS: A combined segmentation and skeletal representation dataset for surgical instruments in laparoscopic surgery. https://doi.org/10.7303/SYN64023381 (2025).

Roß, T. et al. Robust medical instrument segmentation (ROBUST-MIS) challenge 2019. https://doi.org/10.7303/SYN18779624 (2019).

Lin, T.-Y. et al. Microsoft COCO: Common objects in context. In Computer Vision–ECCV 2014: 13th European Conference, Zurich, Switzerland, September 6-12, 2014, Proceedings, Part V 13, 740–755 (Springer, 2014).

Zheng, C. et al. Deep learning-based human pose estimation: A survey. ACM Computing Surveys 56, 1–37 (2023).

Jiang, T. et al. RTMPose: Real-time multi-person pose estimation based on MMPose. arXiv preprint arXiv:2303.07399 (2023).

Xiao, B., Wu, H. & Wei, Y. Simple baselines for human pose estimation and tracking. In European Conference on Computer Vision (ECCV) (2018).

Xu, Y., Zhang, J., Zhang, Q. & Tao, D. ViTPose: Simple vision transformer baselines for human pose estimation. Advances in Neural Information Processing Systems 35, 38571–38584 (2022).

MMPose Contributors. OpenMMLab pose estimation toolbox and benchmark. https://github.com/open-mmlab/mmpose (2020).

Li, Y. et al. SimCC: A simple coordinate classification perspective for human pose estimation. In European Conference on Computer Vision, 89–106 (Springer, 2022).

Acknowledgements

Data Sources: We would like to thank the authors of the ROBUST-MIS dataset for making their data publicly available, which served as the foundation for this work. Funding Sources: This work was supported by core funding from Wellcome/EPSRC [WT203148/Z/16/Z; NS/A000049/1]. Additional support was received from the European Union’s Horizon 2020 research and innovation programme under grant agreement No. 101016985 (FAROS project), and from Wellcome [WT223880/Z/21/Z]. For the purpose of open access, the authors have applied a CC BY public copyright licence to any Author Accepted Manuscript version arising from this submission.

Author information

Contributions

Zhe Han: Data curation, Methodology, Validation, Writing (original draft preparation). Charlie Budd: Software, Data curation, Writing (reviewing and editing). Gongyu Zhang: Writing (reviewing and editing). Huanyu Tian: Data curation, Writing (reviewing and editing). Christos Bergeles: Supervision. Tom Vercauteren: Conceptualisation, Supervision.

Ethics declarations

Competing interests

T.V. is a co-founder and shareholder of Hypervision Surgical Ltd, London, UK. The authors declare that they have no other conflict of interest.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Han, Z., Budd, C., Zhang, G. et al. ROBUST-MIPS: A Combined Skeletal Pose and Instance Segmentation Dataset for Laparoscopic Surgical Instruments. Sci Data (2026). https://doi.org/10.1038/s41597-026-06938-5

DOI: https://doi.org/10.1038/s41597-026-06938-5