Abstract

Age-related macular degeneration (AMD) and diabetic macular edema (DME) are significant causes of blindness worldwide. The prevalence of these diseases is steadily increasing due to population aging. Therefore, early diagnosis and prevention are crucial for effective treatment. Classification of Macular Degeneration OCT Images is a widely used method for assessing retinal lesions. However, there are two main challenges in OCT image classification: incomplete image feature extraction and lack of prominence in important positional features. To address these challenges, we proposed a deep learning neural network model called MSA-Net, which incorporates our proposed multi-scale architecture and spatial attention mechanism. Our multi-scale architecture is based on depthwise separable convolution, which ensures comprehensive feature extraction from multiple scales while minimizing the growth of model parameters. The spatial attention mechanism is aim to highlight the important positional features in the images, which emphasizes the representation of macular region features in OCT images. We test MSA-NET on the NEH dataset and the UCSD dataset, performing three-class (CNV, DURSEN, and NORMAL) and four-class (CNV, DURSEN, DME, and NORMAL) classification tasks. On the NEH dataset, the accuracy, sensitivity, and specificity are 98.1%, 97.9%, and 98.0%, respectively. After fine-tuning on the UCSD dataset, the accuracy, sensitivity, and specificity are 96.7%, 96.7%, and 98.9%, respectively. Experimental results demonstrate the excellent classification performance and generalization ability of our model compared to previous models and recent well-known OCT classification models, establishing it as a highly competitive intelligence classification approach in the field of macular degeneration.

Similar content being viewed by others

Introduction

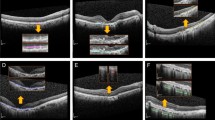

Age-related macular degeneration (AMD) is leading causes of blindness worldwide, accounting for 8.7% of the total global blindness. It is particularly prevalent among individuals aged 60 and above in developed countries1,2. Additionally, AMD significantly affects the visual function and quality of life of elderly individuals worldwide. Diabetic retinopathy (DR) is another major cause of visual impairment and blindness among adults globally, with diabetic macular edema (DME) being the primary cause of vision impairment in diabetic patients3. AMD is commonly classified as either dry AMD or wet AMD4. Dry AMD represents approximately 80% to 85% of all cases5. It is characterized by the accumulation of retinal deposits, known as drusen, which is the most prevalent morphological type. Dry AMD has the potential to progress to wet AMD6. Wet AMD, on the other hand, accounts for the remaining 15% to 20%5 and is characterized by the growth of choroidal neovascularization (CNV) membrane, leading to retinal hemorrhage and exudation that severely affects vision6. DME occurs when there is an abnormal accumulation of fluid in the macular area under diabetic conditions, resulting in retinal thickening near or involving the fovea. The prevalence of DME varies significantly, ranging from 4.2 to 14.3% in type 1 diabetes mellitus (T1DM) population and from 1.4 to 5.57% in the type 2 diabetes mellitus (T2DM) population7,8. Optical coherence tomography (OCT) is a widely used non-invasive tool that provides detailed imaging of each layer of the retina9. Therefore, Ophthalmologists rely on OCT for accurate assessment of retinal diseases such as AMD and DME3,10. Figure 1 illustrate OCT images of Drusen, CNV, DME, and Normal retina.

Illustrates OCT examples from the University of California San Diego dataset. (a) Example of CNV; (b) example of DME; (c) example of DRUSEN; (d) example of NORMAL. The red boxes indicate the lesion areas in the OCT example images.

Currently, there is no effective cure for dry AMD, and research is being conducted to develop interventions that can be implemented during the optimal period. The primary treatment for wet AMD involves the direct injection of anti-VEGF drugs into the vitreous humor of the eye, known as intravitreal injection11. However, the use of anti-VEGF drugs is associated with high costs1, significantly increasing the economic burden on patients. Meanwhile, research indicates that smokers are at a higher risk of further progression of AMD compared to non-smokers12, highlighting the importance of early diagnosis in mitigating the impact of smoking on AMD. Similarly, the pathogenesis of DME involves various factors and pathways, making it crucial to determine treatment strategies through early diagnosis3. Therefore, it is beneficial to perform classification of OCT images for these types of ocular diseases. However, ophthalmologists face a significant burden due to a shortage of ophthalmologists and a large number of patients. To address this issue, we proposed an automated classification method for screening retinal OCT images, aiming to achieve timely prevention and intervention.

Automated classification and diagnosis of OCT images for AMD and DME can be accomplished using deep learning methods. Currently, there are many research approaches for image classification, with convolutional neural network (CNN) methods are the most commonly employed. For example, AlexNet13 classifies images by extracting their features and is one of the earliest and most famous neural networks. Subsequently, excellent neural network architectures such as VGG14, GoogLeNet15, ResNet16, DenseNet17, and EfficientNet18 have emerged, all of which perform well in image classification tasks. By comprehending these well-known network structures, we have discovered that the EfficientNetB0 network framework possesses relatively fewer parameters while delivering exceptional performance in image classification tasks. Therefore, we have made improvements to the attention mechanism module based on the EfficientNetB0 network framework. The features of the macular region in retinal OCT images are concentrated in a specific area. In order to enhance the representation of this feature in the image, we have added a spatial attention mechanism (SA) on top of the existing Squeeze-and-Excitation (SE) channel attention mechanism. In addition, compared to traditional multi-scale feature structures, we have proposed a lightweight multi-scale feature extraction and fusion module that complements the improved attention mechanism. This enables the neural network to capture more features from OCT images and pay closer attention to important pathological features in the images, thereby enabling precise classification screening of OCT images for AMD and DME. In this study, we conducted experiments on publicly available datasets from Noor Eye Hospital (NEH)19 and the University of California San Diego (UCSD)20. The objective of the experiments was to classify four types of OCT images: DRUSEN, CNV, DME, and NORMAL. The experimental results demonstrate that our improved model outperforms several other advanced frameworks for retinal OCT image classification.

Related works

OCT is the current imaging modality to be used to detect and diagnose various eye diseases non-invasively, such as glaucoma, macular degeneration, diabetic retinopathy, etc. In recent years, with the rapid development of deep learning technology, automated classification and diagnosis based on retinal OCT images have become a research hotspot. Deep learning models can learn rich feature representations from large-scale retinal image data, thereby achieving accurate classification and diagnosis of eye diseases. In this section, we will provide a detailed explanation of the main research methods in OCT image classification in recent years and explain the motivation behind our proposed method.

In 2013, Albarrak et al.21 proposed a method for feature extraction of 140 3D OCT images based on volume decomposition combined with local feature extraction. They then used a Bayesian classifier for classification. This method enhances the accuracy of feature extraction and facilitates classification. In 2014, Srinivasan et al.22 introduced an algorithm that utilized multi-scale histograms with directional gradient descriptors as the feature extraction module and employed support vector machines as the classifier. This approach was applied to classify OCT images of NORMAL, AMD, and DME subjects. While this method achieved satisfactory results in the classification of AMD and DME, its performance in classifying NORMAL images was inadequate, which could potentially lead to misjudgments. In 2016, Deng et al.23 proposed a method for extracting texture features using Gabor filter banks and nonlinear energy transformations. They employed random forests, support vector machines, and neural networks as classifiers to categorize Healthy, dry AMD, and wet AMD OCT images. However, due to insufficient data, the discrimination of early-stage AMD was not clear. In the same year, Wang et al.24 presented a feature extraction method based on the linear configuration pattern (LCP) using the Correlation-based Feature Subset Selection (CFS) algorithm. They utilized several classifiers including Sequential Minimal Optimization (SMO), Backpropagation (BP) neural networks, Multi-layer Perceptrons, Support Vector Machines (SVM), and Logistic Regression (LR) to classify AMD, DME, and NORMAL OCT images. Their experiments concluded that the SMO algorithm exhibited the best performance among the selected classification algorithms. In 2018, Kermany et al.25 employed the InceptionV3 architecture for classifying CNV, DME, DRUSEN, and NORMAL data. They pre-trained the model on the ImageNet dataset and then conducted transfer learning on the UCSD dataset. This study utilized transfer learning to reduce the model's dependence on large datasets. That same year, Kaymak et al.26 utilized AlexNet for training on a large-scale OCT dataset. They achieved superior results compared to Kermany et al.'s25 transfer learning method. This superiority was attributed to the better training of the model with the large-scale OCT dataset compared to transfer learning. In 2019, Fang et al. (JVCIR)27 proposed the Iterative Fusion Convolutional Neural Network (IFCNN) for automated classification of retinal OCT images. This method utilized an iterative fusion strategy to combine features from current convolutional layers with those from previous layers, thereby improved classification accuracy. Additionally, Fang et al. (TMI)28 introduced a novel Lesion-aware Convolutional Neural Network (LACNN) integrated with a Lesion Detection Network (LDN) to generate attention maps for classifying retinal OCT images. This approach enhanced the classification network's focus on lesion-relevant regions, thereby speeding up the training process and improving classification accuracy. However, using two independent networks (LDN + LACNN) increased computational complexity. In 2021, Thomas et al.29 proposed a multi-scale CNN architecture with seven convolutional layers for classification experiments on the UCSD dataset. Their binary classification between NORMAL and AMD cases achieved a weighted average accuracy of 99.73%. In 2022, Saman et al.30 introduced a multi-scale structure based on the Feature Pyramid Network (FPN), which was combined with several networks including VGG16, ResNet50, DenseNet121, and EfficientNetB0. They conducted experiments on NEH and UCSD retinal OCT image datasets, demonstrating that the integration of the multi-scale module with VGG16 yielded the best performance. Their multi-scale structure differed from Thomas et al.'s in that it was designed to capture scale variations and utilized feature fusion strategies across convolutional blocks to improve performance. In 2023, Moradi et al.31 proposed an ensemble learning model for the classification and segmentation of 2D SD-OCT and 3D SD-OCT images. They integrated three neural network models (VGG16, EfficientNetB3, and DenseNet121) for 2D SD-OCT image classification and three networks (Residual Attention U-Net, Regular U-Net, and ResNet34) for retinal OCT image edge segmentation.

Our study builds on the research mentioned above and falls within the scope of deep learning. Currently, deep learning has shown remarkable performance in image classification, leading to a growing interest in developing lightweight solutions within neural network. With this in mind, we have chosen the renowned lightweight neural network EfficientNetB018 as the foundational framework. Based on the majority of research findings, it has been proven that multi-scale feature extraction and fusion are effective methods for enhancing a model's ability to capture features. However, indiscriminately adjusting receptive field sizes to obtain features of different scales can result in excessive model parameters and computational complexity, which impeding training speed. To address this issue, we have opted to utilize depth wise separable convolution to achieve multi-scale feature extraction. This approach ensures that our proposed multi-scale structure enhances model performance while also maintaining a manageable number of parameters. Furthermore, considering the characteristics of retinal OCT images, where the crucial feature regions are concentrated in the macular area at the center of the image, it is crucial for the model to focus its attention on spatial positions accordingly. To achieve this, we introduce a spatial attention mechanism, which is combined with the model's existing SE channel attention mechanism. This integration enables the model to enhance feature representation in both channel and spatial dimensions simultaneously, resulting in more accurate classification of OCT images. Detailed descriptions of the model and experiments will be provided in the following subsections.

Methods

MSA-Net model

The main challenges in OCT image classification tasks lie in the variation of regions of interest (ROIs) at different scales and the spatial concentration of important features. To address these challenges, we propose a multi-scale feature extraction and fusion structure along with a spatial attention mechanism, integrated into the EfficientNet-B0 model. The combined model is referred to as the multi-scale attention network (MSA-NET), as illustrated in Fig. 2. This model aims to automatically classify OCT images of macular degeneration. This section describes the main architecture of MSA-Net.

Illustrates the structure of the MSA-Net model. The model consists primarily of three parts: (a) encoder, (b) inverted residual modules, and (c) classifier. The input encoder transforms the input image into feature maps through convolution, the inverted residual modules enhance the representations of the feature maps, and finally, the classifier computes the probability of each input image belonging to a particular class.

The MSA-NET structure mainly consists of three parts: (a) the encoder, (b) the inverted residual modules, and (c) the classifier. The encoder is responsible for converting the input image into feature maps. Our input image has dimensions of \({x}_{0}({x}_{0}=224\times 224\times 3)\). After the initial convolution operation \({x}_{in}=Conv\left({x}_{0},k=3\times 3\right)\), we obtain feature maps of dimensions \(112\times 112\times 32\), denoted as \({x}_{in}\). These feature maps serve as input to the reverse residual module. Within the reverse residual module, \({x}_{in}\) undergoes a series of enhanced feature representation operations, including the multi-scale structure and attention mechanism proposed by us, which will be elaborated on in subsequent sections. The inverted residual modules aim to maintain network depth while maximizing the enhancement of feature maps. This process can be expressed using the following formulas.

In Formula (1), \(F\) represents the final output feature map of the inverted residual modules, and \({R}_{n}\) denotes the calculation of the inverted residual, where \(n\) indicates the nth \(n(n\in \{\text{1,2},3,\dots ,7\})\) inverted residual modules calculation. Each inverted residual modules are composed of stacked reverse residual structures with different numbers of layers, as shown in Table 1. Formula (2) represents the specific algorithm for the inverted residual calculation. Here, \(x\) represents the original input feature map for the inverted residual calculation, and \(f\left(x\right)\) represents the auxiliary feature extraction and enhancement computation within the inverted residual modules. This includes operations such as convolution, multi-scale feature extraction and fusion, and attention mechanism, the specific computations of which will be provided in subsequent sections. The third part is the classifier, responsible for processing the output feature map F of the inverted residual modules into a one-dimensional feature vector suitable for classification comparison, and outputting the final classification result. The specific operations are as follows in the following formula.

Formula (3) represents the top convolution operation with a \(1\times 1\) kernel size. The purpose of this convolutional layer is to adjust the channel count of the feature map \(F\). In this approach, we adjust the channel count to 1280, making its dimensions suitable for subsequent classification tasks. Then, adaptive average pooling is used to pool the convolutional feature vector \({x}_{n}\) to the specified size, calculated as shown in Formula (4). Here, \({y}_{n,c,\text{1,1}}\) denotes the value of the output tensor y for the nth sample in the cth channel (a single value within a \(1\times 1\) spatial grid), while \({x}_{n,c,i,j}\) represents the value at position \((i,j)\) of the input feature map \(x\) for the nth sample in the cth channel. \(H\) and \(W\) represent the height and width of the input feature map, respectively. Thus, the feature vector \({x}_{n}\) is resized to a tensor y of size \((\text{32,1280,1},1)\). Formulas (5) and (6) then flatten and perform fully connected computation on the tensor \(y\). In this process, the \(Flatten\) operation flattens the tensor \(y\) into a one-dimensional vector of size \((\text{32,1280}\times 1\times 1)\), which is then fed through a fully connected layer to obtain the vector \(z\). The values in vector z represent the raw scores for each class. In these two formulas, \({\theta }_{N}\) and \(N\) respectively denote the dimensions and batch size in the \(Flatten\) operation, where \({\theta }_{N}\) is set to 1, indicating flattening along the channel dimension, and \(N\) is set to 32. Before the fully connected layer, a dropout layer with a dropout rate of 0.5 is applied to reduce overfitting. Finally, class probabilities \(P\) for each sample are obtained through softmax computation as shown in Formulas (7) and (8). The softmax computation is specifically defined in Formula (8), where \({z}_{i}\) represents the ith element of the input vector \(z\), \(e\) is the base of the natural logarithm, and \(k\) is the length of vector \(z\), which corresponds to the number of classes. The number of classes depends on the number of classes in the input dataset, such as three classes for the NEH dataset (CNV, DRUSEN, and NORMAL)19 and four classes for the UCSD dataset (CNV, DME, DRUSEN, and NORMAL)20. The resulting values in the final computation of P range between 0 and 1, representing the probability distribution for each class.

Inverted residual module

This section provides a detailed description of the proposed structure, namely the inverted residual modules within the MSA-NET architecture, as depicted in Fig. 3. It primarily encompasses our proposed multi-scale structure and attention mechanism. The workflow of the inverted residual modules is illustrated in Algorithm1.

Inverted residual structure. The core of this structure consists of multi-scale layers and attention mechanism layers, which enhance the feature representation of the feature maps.

The inverted residual module is roughly divided into five parts, with the first two parts being convolution and depthwise separable convolution operations. Convolution operation is utilized to expand the input channels by a factor of 1 or 6. The expansion rate varies for different numbers of inverted residual layers, as indicated in Table 1. The purpose of employing depthwise separable convolution is to reduce the parameter count and computational complexity of the model while extracting features from both channel and spatial dimensions of the feature map. The specific calculation formula for depthwise separable convolution can be found in line 9 of Algorithm 1, where \(Z\) and \(Y\) represent the output feature maps of depthwise and pointwise convolutions respectively, \(i\) denotes the channel index of the output feature map, \(p\) and \(q\) denote the spatial positions of the input feature map, \({c}_{in}\) and \({c}_{out}\) represent the numbers of input and output feature map channels respectively. The kernel sizes for depthwise separable convolution are also shown in Table 1. We place the proposed multi-scale feature extraction and fusion structure, as well as the SE-Driven Spatial Attention structure, after the depthwise separable convolution layers. Through these two structures, features are adequately extracted from multiple scales, and feature weighting is applied in both channel and spatial dimensions to highlight important features. The specific calculation methods for these two structures are provided in Algorithm1, and detailed explanations will be given in the following two subsections. The last part of the inverted residual structure adjusts the channel count of the feature map through convolution, followed by a dropout layer with a dropout rate of 0.2 to reduce overfitting and improve the generalization ability of the structure.

Multi-scale feature extraction and fusion structure

For fundus OCT images, the features displayed at a single scale are limited, and the classification results obtained solely from a single scale may not be satisfactory. Therefore, it is necessary to analyze the images comprehensively using feature maps from multiple scales. To address this issue, we propose a multi-scale feature extraction and fusion structure based on depthwise separable convolution design. In traditional multi-scale structures, the kernel size of regular convolution is typically altered to obtain different receptive fields. However, regular convolution entails a large number of parameters and computational load, and increasing multiple scales with regular convolution significantly inflates the model's parameter count and computational load. To tackle this problem, we opted for the more lightweight depthwise separable convolution. Depthwise separable convolution breaks down standard convolution into two steps: depthwise convolution and pointwise convolution. This decomposition significantly reduces the computational load by breaking down a large-scale convolution operation into two smaller-scale operations. In formulas (9), (10), (11), and (12), we demonstrate the calculation methods for parameters and computational complexity of both standard and depthwise separable convolutions. This reduction in computational complexity is particularly beneficial for high-resolution OCT images, which contain extensive details and high-dimensional data. Additionally, depthwise separable convolution significantly decreases the number of model parameters, making the model more lightweight and suitable for resource-limited environments. It effectively extracts and integrates features across different scales and spatial locations, making it especially suitable for complex medical images like macular degeneration OCT images. The experimental results also confirm these advantages.

Algorithm 1 Inverted residual module

Here, \(k\) represents the kernel size, \({c}_{in}\) and \({c}_{out}\) represent the number of input and output channels, and \(H\) and \(W\) represent the height and width of the feature map. For a feature map with dimensions of \(224\times 224\times 3\), both regular convolution with a kernel size of \(3\times 3\times 3\) and depthwise separable convolution are applied. According to the formulas, the parameter count and computational load for regular convolution are 81 and 4,064,256, respectively, while for depthwise separable convolution, they are 36 and 1,806,336, respectively. The parameter count and computational load of regular convolution are 2.25 times those of depthwise separable convolution. This ratio can be calculated using \(r=1/{c}_{out}+1/(k\times k)\), where \(r\) represents the multiplier relationship between the parameter count and computational load of depthwise separable convolution relative to regular convolution. From the formulas, it can be observed that as the kernel size and output channels increase, the difference in parameter count and computational load between the two becomes more significant. Therefore, utilizing depthwise separable convolution to implement a multi-scale structure can significantly reduce the model's parameter count and computational load. Figure 4 illustrates the specific structure of the multi-scale feature extraction and fusion module.

Depicts the multi-scale feature extraction and fusion structure. This structure extracts and integrates features from the feature map across three different scales.

Combining Fig. 4 with the specific calculation of multi-scale feature extraction and fusion in line 10 of Algorithm 1, we can see that our proposed multi-scale structure is designed with three scales. Feature extraction for each scale is achieved using depthwise separable convolutions with kernel sizes of \(3\times 3\), \(5\times 5\), and \(7\times 7\). Subsequently, features from the three scales are fused along the channel dimension. The fused feature map has three times the original number of channels. Therefore, we use a \(1\times 1\) convolution to adjust the channel count of the fused feature map for input to subsequent layers. Compared to a single scale, the fusion of features from three scales is more enriched and advantageous for the model in identifying and extracting important features. In theory, by increasing the number of scales using this approach, we can obtain more image features. However, adding too many scales significantly increases model complexity, consumes computational resources, and may lead to overfitting, thereby increasing training difficulty. Therefore, it is advisable to choose different scale combinations based on specific conditions.

SE-driven spatial attention module

In OCT fundus images, important features are spatially concentrated, yet most models focus on enhancing feature maps in the channel dimension only, neglecting important features in spatial positions. This results in insufficient attention to spatial features by the models. To address this issue, we propose a spatial attention mechanism driven by the Squeeze-and-Excitation (SE) attention mechanism. This attention mechanism can weight important features in the feature map in both channel and spatial dimensions simultaneously, thereby improving the model's ability to recognize important features. Figure 5 illustrates the specific structure of the SE-driven spatial attention module.

Attention mechanism structure. This structure mainly consists of (a) SE channel attention mechanism and (b) spatial attention mechanism.

From Fig. 5, it can be observed that the structure of this attention mechanism mainly consists of two parts: the SE channel attention mechanism and the spatial attention mechanism. The specific calculation formulas and steps are demonstrated in line 11 of Algorithm 1. The SE channel attention part primarily comprises an average pooling layer and two fully connected layers. Initially, the feature map is compressed into a global vector through adaptive average pooling. Subsequently, channel attention weights are obtained through two fully connected layers. Multiplying these channel attention weights with the input feature map yields the weighted feature map. The second part, the spatial attention mechanism, operates under the guidance of the SE channel attention mechanism, using the feature map weighted by channel attention as input. Firstly, the feature map's maximum and average values at each spatial position are obtained through max pooling and average pooling. Then, these two pooled feature maps are fused along the channel dimension, and convolution is utilized to obtain spatial attention weights. Finally, the input feature map is multiplied by the spatial attention weights to obtain the final weighted feature map.

Experiments

Dataset

Our proposed method was evaluated on two publicly available databases. The first database was collected from the Heidelberg SD-OCT imaging system at Noor Eye Hospital in Tehran, Iran19. This database, comprises over 16,000 retinal OCT images from 441 cases, including 120 normal cases, 160 cases with DRUSEN, and 161 cases with CNV. To facilitate training and comparison, we algorithmically selected the OCT images with the poorest results from each case in the database. For instance, if a patient was detected with DRUSEN, only the images identified as DRUSEN in that patient's OCT images were retained. Ultimately, 12,649 images were selected for training and testing, as outlined in Table 2. We divided the selected dataset into training and testing sets in a 9:1 ratio, result in 11,353 images in the training set and 1,288 images in the testing set. The second database, known as the University of California San Diego (UCSD) database20, consists of both training set and testing set. These sets are further categorized into four distinct classes: DRUSEN, CNV, DME, and NORMAL. The training set comprises a total 108,312 retinal OCT images, as outlined in Table 2. On the other hand, the testing set consists of 1,000 retinal OCT images, with 250 images allocated to each class.

Experimental evaluation metrics

In this study's experiments, we primarily calculate the accuracy, sensitivity, and specificity for each class based on the confusion matrices obtained from image classification. Then, we aggregate the results for each class and compute their averages. The confusion matrix is a matrix used to summarize the classification performance of the model, including the counts of true positives (TP), false positives (FP), true negatives (TN), and false negatives (FN). Accuracy represents the overall performance of the model in correctly classifying samples, with higher accuracy indicating better overall performance. Sensitivity indicates the model's ability to correctly predict positive samples among the actual positive class, reducing the risk of false negatives. On the other hand, specificity represents the model's ability to correctly predict negative samples among the actual negative class, reducing the risk of false positives. The formulas for accuracy, sensitivity, and specificity are as follows.

Here, \(i\) represents the corresponding value for class \(i\) when it is treated as the positive sample.

Experimental settings

In this study, we resized all images to 224 × 224 × 3. Moreover, we employed a five-fold cross-validation method to ensure the rationality of model training, enhance the model's generalization ability, and reduce overfitting. Furthermore, to increase the diversity of the data and improve the generality of the proposed model, we applied data augmentation techniques. These techniques encompassed random rotations, translations, changes in brightness, scaling, and horizontal flipping to the four subsets used for training in each training iteration. However, the validation set was not subjected to augmentation. Table 3 provides detailed specifications of our data augmentation approach.

All networks were trained using stochastic gradient descent (SGD) optimizer with cosine annealing to adjust the learning rate. The momentum for SGD optimizer was set to 0.9, weight decay to 1e−4, initial learning rate to 0.001, and the minimum learning rate for scheduling was set to 0.0005 to prevent the learning rate from decreasing too low and causing the model to fall into local minima or early stagnation. The number of epochs was set to 100 to ensure full convergence of the model, with a batch size of 32. The cross-entropy loss function was used to measure the training status and adjust the model’s parameters. The entire training process was end-to-end.

Results and analysis

We train the model on the NEH19 and UCSD20 datasets and evaluate the model's classification performance using 3-class and 4-class confusion matrices, respectively. The performance evaluation metrics include accuracy, sensitivity, specificity, and the model's parameter count as mentioned above.

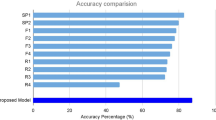

First, on the NEH dataset, we compared our model with several state-of-the-art networks known for their excellent classification performance, such as VGG14, ResNet16, DenseNet17, EfficientNet18, EfficientNetV232 and recently proposed models for retinal OCT image classification. Among these models, two also have multi-scale architectures, and there are models based on improvements to EfficientNet18. Table 4 shows the average performance of all models on the NEH dataset by using five-fold cross-validation.

The results from Table 4 indicate that our model (MSA-NET) outperforms other models in terms of classification accuracy, sensitivity, and specificity. MSA-NET achieves a classification accuracy of 98.1%, sensitivity of 97.9%, and specificity of 98.0%. Notably, MSA-NET exhibits superior performance in accuracy, sensitivity, and specificity compared to well-known neural networks such as VGG1614, ResNet5016, DenseNet12117, and EfficientNetB018. Particularly, when compared to the EfficientNetB0 network, the enhanced MSA-NET demonstrates a significant improvement in accuracy by 12.7%, sensitivity by 13.4%, and specificity by 5.9%. The comparison between the two datasets clearly highlights the substantial enhancement in the performance of MSA-Net for retinal OCT image classification within the EfficientNetB0 framework. Furthermore, MSA-NET exhibits superior performance compared to the advanced version of EfficientNet, EfficientNetV2, as well as the AlexNet model implemented by Kaymak et al. In addition, when compared to the multi-scale structures proposed by Thomas et al.29 and Saman et al.30 respectively, the multi-scale structure in MSA-NET clearly demonstrates a more significant improvement in model performance. Furthermore, the utilization of depthwise separable convolutions as the foundation of the multi-scale structure ensures that MSA-NET has a lesser increase in parameter count. While the ensemble network model constructed by Moradi et al.31, integrating VGG16, EfficientNetB3, and DenseNet121, exhibits a significant improvement in model performance, this approach also makes the model more complex and greatly increases computational demands. Moreover, according to the comparison of parameter counts in Table 4, it can be seen that our method maintains a relatively small parameter count while ensuring high accuracy. Particularly, compared to the high-performing ensemble methods proposed by Moradi31, our method significantly outperforms theirs in terms of parameter control while also showing a noticeable performance advantage. Therefore, it is evident that the proposed multi-scale structure and attention mechanism have a significant impact on model improvement. The incorporation of these two structures enables the model to more accurately classify OCT images.

Another comparative study that was conducted on the UCSD20 dataset not only reflect the classification performance of our model but also demonstrate its generalization ability. In the case of MSA-Net, we directly fine-tuned the parameters trained on the NEH dataset directly using the UCSD dataset. The performance evaluation of the model was also represented by the results of five-fold cross-validation.

For comparison, we selected the FPN-VGG16 model proposed by Saman et al.30 and the ensemble network model proposed by Moradi et al.31, both of which demonstrated excellent performance in the initial experiment. Similar to our model, these two methods also utilized parameters that trained on the NEH dataset to train on the UCSD dataset in order to assess the models' generalization ability. Additionally, we compared two studies conducted by Fang et al.27,28 In one study, they introduced a feature fusion strategy for iteratively combining features within convolutional neural network layers. In the other study, they utilized a Lesion Detection Network (LDN) to generate attention maps, which were then integrated into the classification framework. Both of these methods were trained directly on the UCSD dataset20. Table 5 presents the average performance of all models on the UCSD dataset under five-fold cross-validation.

Table 5 shows that MSA-NET still demonstrates superior performance on the UCSD dataset. With an accuracy of 96.7%, sensitivity of 96.7%, and specificity of 98.9%, these metrics highlight the excellent classification performance of MSA-NET on the UCSD dataset, showcasing its strong generalization ability. Compared to the methods proposed by Saman et al.30 and Moradi et al.31, MSA-NET also exhibits advantages in performance. However, it is noteworthy that the ensemble network model proposed by Moradi et al.31 performs comparably well. Nevertheless, due to its lightweight nature, Moradi et al.'s ensemble network model possesses a more complex structure and relatively more parameters. Whereas MSA-NET, with the addition of the multi-scale structure and spatial attention mechanism, has fewer parameters, making the model more concise. Compared to the two methods proposed by Fang et al.27,28, MSA-NET demonstrates superior performance.

The experimental data from both experiments validate the multi-scale structure and attention mechanism in MSA-NET, not only in improving model performance but also in ensuring exceptional generalization capabilities.

To further demonstrate the effectiveness of our proposed structure, we incorporated the proposed MSA (Multi-scale Structure and Attention Mechanism) into DenseNet121 and ResNet50 models and conducted three-class classification experiments on the NEH dataset using five-fold cross-validation. The results are shown in Table 6.

The results in Table 6 show that incorporating our proposed MSA into other model architectures also improves their classification performance, which demonstrates the effectiveness of our MSA. Particularly, it performs best when combined with the EfficientNetB0 network, as EfficientNetB0 has excellent classification performance. Inserting the MSA module into the inverse residual modules of EfficientNetB0, which are repeated seven times in the network, maximizes the feature extraction capabilities of the MSA module, leading to better results compared to other network combinations.

Ablation experiment

In order to investigate the specific impact of the two proposed structures on model performance, we conducted ablation experiments on the NEH dataset. These experiments involved combining the structures with the EfficientNetB0 model in various configurations. The evaluation metrics utilized were accuracy, sensitivity, and specificity. It is important to note that none of the combinations use pre-trained parameters.

The first combination involves training the EfficientNetB018 model alone. In the second combination, we add the spatial attention mechanism to EfficientNetB0, denoted as EfficientNetB0 + SA. The third combination combines the multi-scale module with EfficientNetB0, placing the multi-scale module before the SE attention mechanism, denoted as EfficientNetB0 + Multi-Scale. The final combination integrates both the spatial attention mechanism and the multi-scale module with the EfficientNetB0 network, denoted as EfficientNetB0 + SA + Multi-Scale. Four experimental tasks were conducted to explore the effects of the different structural combinations. The results of these ablation experiments, carried out under five-fold cross-validation, are presented in Table 7.

The results presented in Table 7 indicate that both our proposed multi-scale structure and attention mechanism contribute to improving the model's classification performance. Notably, the multi-scale structure exhibits the most substantial improvement in performance. By incorporating both of these structures into the model, we achieve the highest level of performance, albeit with a longer training time. However, the enhancement in performance is significantly more pronounced. These findings suggest that placing the multi-scale structure before the attention mechanism optimizes the extraction of features from multi-scale feature maps. By enhancing feature representation in both the channel and spatial dimensions, the model is able to prioritize crucial image features such as the macular region.

To investigate the impact of each convolution kernel on the multi-scale structure, we divided the three different kernel sizes into three combinations: (3, 5), (3, 7), and (5, 7). We combined each of these with the EfficientNetB0 model and conducted three-class classification experiments on the NEH dataset using five-fold cross-validation. Each experiment incorporated our proposed attention mechanism. The results are shown in Table 8.

The results in Table 8 show that each of these three combinations improves the model's classification performance to some extent. When combining all three different kernel sizes, the model achieves the best results in terms of accuracy and sensitivity among all comparisons, and the performance improvement is the greatest. This is because the inclusion of features from different-sized kernels allows the model to extract more diverse and richer features, leading to a more significant performance enhancement.

Visualization experiment

The pathological features identified by the model hold immense significance for both doctors and patients, making it a crucial aspect diagnosis. Therefore, in this section, we will use the Grad-CAM33 technique to generate Class Activation Maps (CAM) for visualizing the model and discuss its in identifying image category features. Figures 6 and 7 show examples of CAM generated by MSA-NET for the four classes of images in the UCSD dataset and the three classes of images in the NEH dataset at the feature extraction layer.

Illustrates examples of class activation maps (CAM) generated by MSA-NET on the UCSD dataset using Grad-CAM. In particular, (a) CNV case, (b) DME case, (c) DRUSEN case, and (d) NORMAL case.

Illustrates examples of CAM generated by MSA-NET on the NEH dataset using Grad-CAM. The left column shows the original images and the right column shows the CAM images: (a) CNV case, (b) DRUSEN case, and (c) NORMAL case.

Based on the heatmap examples in Figs. 6 and 7, it can be observed that MSA-NET's feature extraction layer predominantly focuses on the macular region. For the CNV, DME, and DRUSEN categories, MSA-NET accurately highlights the macular degeneration lesions. These CAM examples visually present the model's focal points, demonstrating that our model has a certain level of interpretability and indicating that our proposed multi-scale structure and attention mechanism are highly effective in the feature extraction process. For both doctors and patients, CAM images can be used to showcase the specific performance and application value of the model. Of course, to enhance the model's focus on image detail features, we will place greater emphasis on improving the model's attention to detail in future research.

Conclusion

In this paper, we propose a model incorporating an improved multi-scale structure and attention mechanism to address the classification task of AMD-related retinal OCT images. Our research offers two main contributions: Firstly, we employ depthwise separable convolution to achieve multi-scale feature extraction, which reduces the number of parameters compared to regular convolution. This approach accelerates training and enhance the model’s feature acquisition by obtaining multiple Feature information at different scales. Secondly, by integrating multi-scale information and spatial attention mechanism, we improve the model's ability to focus on important features, resulting in significantly enhanced classification performance. Experimental results show that our method outperforms mainstream classification neural networks and several well-known retinal OCT image classification models. Moreover, our model exhibits substantial performance improvement compared to the pre-improved network model, validating the effectiveness of our structure in enhancing model performance. Additionally, our model demonstrates strong generalization capability when trained on OCT datasets from different sources. We also explore the impact of different structures on model performance through different combinations, ultimately identifying the optimal model structure. Finally, we use Grad-CAM technology to generate CAM diagrams of the model feature extraction layer, visually presenting the feature extraction process and providing compelling evidence for the model's classification basis.

Of course, the model still has certain limitations. Based on the experimental results, we found that the classification accuracy for the DURSEN category is relatively lower compared to the CNV, DME, and NORMAL categories. Analysis of the OCT images in the UCSD dataset reveals that the pathological features of early-stage macular degeneration, such as DURSEN, are less distinct compared to other categories, which results in a slightly lower classification accuracy for this category. Additionally, as shown in Table 2, there is a class imbalance issue in the dataset, which is more pronounced in the UCSD dataset. Experiments also indicate that our model performs relatively poorly in classifying the DURSEN category on this dataset. In future research, we will adjust the model structure and training strategies to focus more on image detail features and mitigate the impact of class imbalance on classification performance. This aims to improve performance across all pathological stages and enhance the model's diagnostic capability and practical application effectiveness.

Currently, the primary diagnostic and therapeutic approach for macular degeneration involves ophthalmologists examining retinal images with fundus cameras to check for degeneration or bleeding. MSA-Net, on the other hand, can provide preliminary diagnostic suggestions through automated image data analysis. This can significantly reduce the workload for doctors, especially when dealing with large volumes of patient data. By providing efficient and accurate preliminary analysis results, MSA-Net allows doctors to allocate more time to in-depth analysis and decision-making for complex cases. Additionally, MSA-Net can serve as an auxiliary diagnostic tool to enhance diagnostic efficiency and accuracy. It also supports long-term health management and early screening through electronic health records and real-time monitoring systems, enabling doctors to intervene earlier, thereby reducing the risk of disease progression and improving the overall patient healthcare experience.

Data availability

The Noor Eye Hospital (NEH) dataset can be obtained at https://data.mendeley.com/datasets/8kt969dhx6/1, and the University of California San Diego (UCSD) dataset is available at https://data.mendeley.com/datasets/rscbjbr9sj.

References

Wong, W. L. et al. Global prevalence of age-related macular degeneration and disease burden projection for 2020 and 2040: A systematic review and meta-analysis. Lancet Glob. Health 2(2), e106–e116. https://doi.org/10.1016/S2214-109X(13)70145-1 (2014).

Klein, R. et al. Fifteen-year cumulative incidence of age-related macular degeneration: The Beaver Dam Eye Study. Ophthalmology 114(2), 253–262. https://doi.org/10.1016/j.ophtha.2006.10.040 (2007).

Zhang, J. et al. Diabetic macular edema: Current understanding, molecular mechanisms and therapeutic implications. Cells 11(21), 3362. https://doi.org/10.3390/cells11213362 (2022).

Rasti, R., Rabbani, H., Mehridehnavi, A. & Hajizadeh, F. Macular OCT classification using a multi-scale convolutional neural network ensemble. IEEE Trans. Med. Imaging 37(4), 1024–1034. https://doi.org/10.1109/TMI.2017.2780115 (2017).

Ferris, F. L., Fine, S. L. & Hyman, L. Age-related macular degeneration and blindness due to neovascular maculopathy. Arch. Ophthalmol. 102(11), 1640–1642. https://doi.org/10.1001/archopht.1984.01040031330019 (1984).

Thomas, C. J., Mirza, R. G. & Gill, M. K. Age-related macular degeneration. Med. Clin. 105(3), 473–491. https://doi.org/10.1016/j.mcna.2021.01.003 (2021).

Thomas, R. L. et al. Prevalence of diabetic retinopathy within a national diabetic retinopathy screening service. Br. J. Ophthalmol. 99(1), 64–68. https://doi.org/10.1136/bjophthalmol-2013-304017 (2015).

Yau, J. W. et al. Global prevalence and major risk factors of diabetic retinopathy. Diabetes Care 35(3), 556–564. https://doi.org/10.2337/dc11-1909 (2012).

Gess, A. J., Fung, A. E. & Rodriguez, J. G. Imaging in neovascular age-related macular degeneration. Semin. Ophthalmol. 26(3), 225–233. https://doi.org/10.3109/08820538.2011.582533 (2011).

Das, V., Dandapat, S. & Bora, P. K. Multi-scale deep feature fusion for automated classification of macular pathologies from OCT images. Biomed. Signal Process. Control 54, 101605. https://doi.org/10.1016/j.bspc.2019.101605 (2019).

Stahl, A. The diagnosis and treatment of age-related macular degeneration. Dtsch. Ärztebl. Int. 117(29–30), 513. https://doi.org/10.3238/arztebl.2020.0513 (2020).

Velilla, S. et al. Smoking and age-related macular degeneration: Review and update. J. Ophthalmol. 1, 1. https://doi.org/10.1155/2013/895147 (2013).

Krizhevsky, A., Sutskever, I. & Hinton, G. E. Imagenet classification with deep convolutional neural networks. Adv. Neural Inf. Process. Syst. 25, 1. https://doi.org/10.1145/3065386 (2012).

Simonyan, K., & Zisserman, A. Very deep convolutional networks for large-scale image recognition. Apr. 10. arXiv:1409.1556. https://doi.org/10.48550/arXiv.1409.1556 (2015).

Szegedy, C., et al. Going deeper with convolutions. In Proceedings of the IEEE conference on computer vision and pattern recognition, pp. 1–9. https://doi.org/10.48550/arXiv.1409.4842 (2015).

He, K., Zhang, Z., Ren, S., & Sun, J. Deep residual learning for image recognition. In Proceedings of the IEEE conference on computer vision and pattern recognition, pp. 770–778. https://doi.org/10.48550/arXiv.1512.03385 (2016).

Huang, G., Liu, Z., Van Der Maaten, L., & Weinberger, K. Q. Densely connected convolutional networks. In Proceedings of the IEEE conference on computer vision and pattern recognition, pp. 4700–4708. https://doi.org/10.48550/arXiv.1608.06993 (2017).

Tan, M., & Le, Q. Efficientnet: Rethinking model scaling for convolutional neural networks. In International conference on machine learning, PMLR, pp. 6105–6114. https://doi.org/10.48550/arXiv.1905.11946 (2019).

Sotoudeh-Paima, S., Hajizadeh, F., & Soltanian-Zadeh, H. Labeled retinal optical coherence tomography dataset for classification of Normal, Drusen, and CNV cases, vol. 1. https://doi.org/10.17632/8kt969dhx6.1 (2021).

Kermany, D., Zhang, K., & Goldbaum, M. Large dataset of labeled optical coherence tomography (OCT) and chest X-ray images. vol. 3. https://doi.org/10.17632/rscbjbr9sj.3 (2018).

Albarrak, A., Coenen, F., & Zheng, Y. Age-related macular degeneration identification in volumetric optical coherence tomography using decomposition and local feature extraction. In Proceedings of 2013 international conference on medical image, understanding and analysis, pp. 59–64. Accessed: Apr. 17, 2024. [Online]. Available: https://www.researchgate.net/profile/Abdulrahman-Albarrak-2/publication/260814094_Age-related_Macular_Degeneration_Identification_In_Volumetric_Optical_Coherence_Tomography_Using_Decomposition_and_Local_Feature_Extraction/links/53e0dcc00cf24f90ff60bd92/Age-related-Macular-Degeneration-Identification-In-Volumetric-Optical-Coherence-Tomography-Using-Decomposition-and-Local-Feature-Extraction.pdf (2013).

Srinivasan, P. P. et al. Fully automated detection of diabetic macular edema and dry age-related macular degeneration from optical coherence tomography images. Biomed. Opt. Express 5(10), 3568–3577. https://doi.org/10.1364/BOE.5.003568 (2014).

Deng, J., et al. Age-related macular degeneration detection and stage classification using choroidal OCT images. In Campilho, A., & Karray, F., Eds. Image analysis and recognition, Lecture Notes in Computer Science, vol. 9730, pp. 707–715 (Springer International Publishing, Cham, 2016). https://doi.org/10.1007/978-3-319-41501-7_79.

Wang, Y., Zhang, Y., Yao, Z., Zhao, R. & Zhou, F. Machine learning based detection of age-related macular degeneration (AMD) and diabetic macular edema (DME) from optical coherence tomography (OCT) images. Biomed. Opt. Express 7(12), 4928–4940. https://doi.org/10.1364/BOE.7.004928 (2016).

Kermany, D. S. et al. Identifying medical diagnoses and treatable diseases by image-based deep learning. Cell 172(5), 1122–1131. https://doi.org/10.1016/j.cell.2018.02.010 (2018).

Kaymak, S., & Serener, A. Automated age-related macular degeneration and diabetic macular edema detection on oct images using deep learning. In 2018 IEEE 14th international conference on intelligent computer communication and processing (ICCP), IEEE, pp. 265–269. https://doi.org/10.1109/ICCP.2018.8516635 (2018).

Fang, L. et al. Iterative fusion convolutional neural networks for classification of optical coherence tomography images. J. Vis. Commun. Image Represent. 59, 327–333. https://doi.org/10.1016/j.jvcir.2019.01.022 (2019).

Fang, L. et al. Attention to lesion: Lesion-aware convolutional neural network for retinal optical coherence tomography image classification. IEEE Trans. Med. Imaging 38(8), 1959–1970. https://doi.org/10.1109/TMI.2019.2898414 (2019).

Thomas, A., Harikrishnan, P. M., Krishna, A. K., Palanisamy, P. & Gopi, V. P. A novel multiscale convolutional neural network based age-related macular degeneration detection using OCT images. Biomed. Signal Process. Control 67, 102538. https://doi.org/10.1016/j.bspc.2021.102538 (2021).

Sotoudeh-Paima, S., Jodeiri, A., Hajizadeh, F. & Soltanian-Zadeh, H. Multi-scale convolutional neural network for automated AMD classification using retinal OCT images. Comput. Biol. Med. 144, 105368. https://doi.org/10.1016/j.compbiomed.2022.105368 (2022).

Moradi, M., Chen, Y., Du, X. & Seddon, J. M. Deep ensemble learning for automated non-advanced AMD classification using optimized retinal layer segmentation and SD-OCT scans. Comput. Biol. Med. 154, 106512. https://doi.org/10.1016/j.compbiomed.2022.106512 (2023).

Tan, M., & Le, Q. Efficientnetv2: Smaller models and faster training. In International conference on machine learning, PMLR, pp. 10096–10106. https://doi.org/10.48550/arXiv.2104.00298 (2021).

Selvaraju, R. R., Cogswell, M., Das, A., Vedantam, R., Parikh, D., & Batra, D. Grad-cam: Visual explanations from deep networks via gradient-based localization. In Proceedings of the IEEE international conference on computer vision, pp. 618–626. https://doi.org/10.48550/arXiv.1610.02391 (2017).

Funding

This study was funded by the Hunan Provincial Natural Science Foundation of China (grant No. 2021JJ30173), the 2023 Hunan Traditional Chinese Medicine Scientific Research Project (grant No. A2023048), Research Foundation of Education Bureau of Hunan Province, China (grant NO. 23A0273).

Author information

Authors and Affiliations

Contributions

Shilong Pang: writing—review and editing, writing—original draft, visualization, methodology, funding acquisition, formal analysis, conceptualization. Beiji Zou: writing—review and editing, writing—original draft, validation, supervision, methodology, funding acquisition, conceptualization. Xiaoxia Xiao: writing—review and editing, writing—original draft, validation, supervision, methodology, funding acquisition, conceptualization. Qinghua Peng: writing—review and editing, Validation, Formal analysis, Conceptualization. Junfeng Yan: writing—review and editing, supervision, investigation, conceptualization. Wensheng Zhang: validation, supervision, formal analysis, conceptualization. Kejuan Yue: supervision, formal analysis, conceptualization.

Corresponding author

Ethics declarations

Competing interests

The authors declare the following financial interests/personal relationships which may be considered as potential competing interests: Beiji Zou reports financial support was provided by Department of Science and Technology of Hunan Province. XIaoxia Xiao reports financial support was provided by Hunan Provincial Administration of Traditional Chinese Medicine.

Additional information

Publisher's note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Pang, S., Zou, B., Xiao, X. et al. A novel approach for automatic classification of macular degeneration OCT images. Sci Rep 14, 19285 (2024). https://doi.org/10.1038/s41598-024-70175-2

Received:

Accepted:

Published:

Version of record:

DOI: https://doi.org/10.1038/s41598-024-70175-2

Keywords

This article is cited by

-

Research on deep learning-based lesion identification in optical coherence tomography

BMC Ophthalmology (2026)

-

MultiModal craniocerebral diagnose based on 3D CT and image reports

Scientific Reports (2025)

-

Context-aware diagnosis of age-related macular degeneration (AMD) stages: a unified approach using self-supervised U-Net and graph neural networks on OCT imaging

Iran Journal of Computer Science (2025)