Abstract

Unmanned aerial vehicle (UAV)-assisted communication based on automatic modulation classification (AMC) technology is considered an effective solution to improve the transmission efficiency of wireless communication systems, as it can adaptively select the most suitable modulation method according to the current communication environment. However, many existing deep learning (DL)-based AMC methods cannot be directly applied to UAV platform with limited computing power and storage space, because of the contradiction between accuracy and efficiency. This paper mainly studies the lightweight of DL-based AMC networks to improve adaptability in resource-constrained scenarios. To address this challenge, we propose an ultra-lightweight neural network (ULNN). This network incorporates a lightweight convolutional structure, attention mechanism, and cross-channel feature fusion technique. Additionally, we introduce data augmentation (DA) based on signal phase offsets during the model training process, aimed at improving the model’s generalization ability and preventing overfitting. Through experimental validation on the public dataset RML2016.10 A, the ULNN we proposed achieves an average precision of 62.83% with only 8815 parameters and reaches a peak classification accuracy of 92.11% at SNR = 10 dB. The experimental results show that ULNN can achieve high recognition accuracy while keeping the model lightweight, and is suitable for UAV platform with limited resources.

Similar content being viewed by others

Introduction

UAVs, commonly known as drones, are flying machines that operate without a human pilot onboard. They have been extensively utilized across various domains for many years. For instance, UAVs are employed in aerial photography and mapping1, 3D modeling of buildings combined with MR techniques2, as well as in assisting communication3 and target detection4. Due to the rapid growth of UAV applications, they have attracted significant attention from both the industrial and academic sectors5. Specifically, UAVs are regarded as a potentially solution for resolving challenges associated with the design of wireless communication networks. This is due to their highly flexible deployment and dynamic mobility characteristics. However, the scarcity of spectrum resources has hindered substantial rate improvements for many emerging applications6. A potential solution involves the use of AMC, which enables UAVs to select the most suitable modulation method adaptively based on current channel conditions and transmission distance. This approach effectively enhances data transmission rates7.

In this paper, the UVAs are constrained to consumer-grade specifications, with a focus on limited power consumption and size. The wireless communication network with a fog computing communication system architecture8 is formed based on such UAVs, allowing UAVs to process data locally without transmitting it to remote cloud servers. Due to the dynamic mobility characteristics of UAVs, UAV communication networks have intermittent links and fluid topology9. AMC10 is one technique for automatically identifying the modulation scheme of signal, with no or some prior knowledge. This process acts as an intermediate step between signal detection and demodulation. In UAV communication systems, the application of AMC enables UAV to adaptively select the most suitable modulation method based on current channel conditions and transmission distance, thereby effectively enhancing data transmission7. Furthermore, as a key technology11 for dynamic spectrum access (DSA), AMC plays a significant role in enhancing spectrum efficiency and expanding communication capacity12. In complex electromagnetic environments13, AMC technology further demonstrates its potential in monitoring unauthorized devices or signals, particularly in applications such as UAV identification14, interference recognition15, and electronic countermeasures16. In conclusion, AMC serve an important function in optimizing the UAV communication performance and ensuring communication security.

After decades of development, AMC methods have diverged into two main categories: traditional recognition methods and DL-based recognition methods. And the traditional AMC approaches can be also broadly divided into two types: likelihood-based (LB)17 hypothesis testing and feature-based (FB)18,19 methods. LB is rooted in Bayesian theory and aims to achieve the optimal estimation of modulation schemes by minimizing the probability of misclassification. Although these methods are theoretically optimal, their practical application is not ideal due to the lack of necessary prior knowledge in noncooperative communication scenarios. On the other hand, FB methods involve extracting features and constructing a classifier. The common features include constellation features20, statistical parameter features21, and wavelet transform features22 etc. These methods usually rely on expert experience and have limitations in automation. Therefore, with the increasing complexity of communication systems and the demand for rapid response in UAV systems. Traditional AMC methods no longer meet current application requirements. Against this backdrop, DL-based AMC technology has emerged.

DL possesses the ability to automatically extract complex features from training samples and build classifiers, making it highly suitable for AMC tasks. O’Shea et al.23 were the first to validate the effectiveness of DL in AMC and released the RML2016.10 A dataset, which attracted a large number of researchers to participate. The most popular model architectures for DL in AMC include CNN24, long short-term memory (LSTM)25, and transformers 26. In 2017, O’Shea et al.27 proposed a CLDNN network that combines CNN and LSTM to explore the relationship between signal modulation recognition and network depth. F. Zhang et al. 28 introduced a robust structure called MCNet, based on residual connections and asymmetric convolution kernels, which achieved significant performance improvements. J. Xu et al. 29 proposed a hybrid structure called MCLDNN, featuring 1D convolution, 2D convolution and LSTM, which can extract and fuse spatiotemporal features from the In-phase (I) components, Quadrature (Q) components, and IQ of the signal. Cui30 developed an IQCLNet network that uses convolution kernels capable of extracting IQ-related features, achieving an average recognition accuracy of 81.7% when identifying 11 types of modulation methods at SNR > 0dB. The above networks designed corresponding complex structures starting from the phase features, temporal features, or fused features of the signal. However, these methods did not fully consider the model’s adaptability to edge devices.

F. Wang et al.31 proposed a lightweight radio transformer (MobileRaT) method based on deep learning, which reduces model weights through iterative training combined with information entropy-based weight pruning. A. Gon et al.32 introduced an AMC approach using hybrid data augmentation and a lightweight neural network. The network structure was designed with depth-wise separable convolutions to lower computational complexity. Q. Zheng et al.33 presented a lightweight deep neural network framework that integrates residual modules, LSTM modules, and attention mechanisms, along with the use of hybrid data augmentation techniques. These methods have contributed to the realization of lightweight AMC technology and edge computing. While lightweight models can reduce computational complexity and energy consumption, maintaining high accuracy remains a challenge.

In light of this, we focus on the design of light weight and low complexity models for resource constrained scenarios while maintaining high accuracy. The main contributions is summarized as follows:

-

Development of a lightweight neural network model: for the AMC task, we have developed a novel ultra lightweight neural network model. This model contains only 8,815 parameters but simultaneously demonstrates high performance, making it highly suitable for deployment in resource constrained applications.

-

Design of a DSECA module: we have designed a dual-stream efficient channel attention (DSECA) module that can effectively capture the correlations between different channels, thereby enhancing the representational power of features. Moreover, the DSECA module is highly versatile and can be easily integrated into any existing CNN architecture.

-

Design of a DSFFM module: we have designed an innovative dual-stream feature fusion module (DSFFM) that enhances the model’s ability to capture features at different granularities by fusing output feature maps from different layers. This strategy contributes to improving model performance.

Theoretical model

Drone communication model

In the UAV communication system, the ground control station is responsible for real-time monitoring of the UAV’s status and sending control instructions. The UAV navigates autonomously according to a predetermined flight plan and conducts reconnaissance on the communication links in space in real-time. Equipped with an embedded microprocessor, the UAV can immediately demodulate and analyze the received signals. During the UAV communication process, the received signal can be expressed as

where \(\rho\) denotes the channel amplitude gain following a Rayleigh distribution within the range (0,1]. \({\Delta _{fo}}(t)\) and \({\Delta _{co}}(t)\) are the carrier frequency offset and the sampling rate offset respectively. \({\tau _0}\) is the maximum delay spread. \(s(t)\) represents the noise-free complex transmitted signal. And \(\tilde {n}(t)\) represents the additive white Gaussian noise (AWGN) with zero mean and variance \({\sigma ^2}\).

Signal representation and data augmentation

The signal received by the UAV is typically recorded in IQ mode34. In this mode, the continuous signal \(r(t)\) is internally sampled by the UAV and converted into a discrete time sequence \(r(n)\) for storage. The discrete signal \(r(n)\) consists of two data vectors, the in-phase component \({r_I}\) and the quadrature component \({r_Q}\). Therefore, the received signal \(r(n)\) can be rewritten as

Phase shift33 is an effective means to achieve data augmentation in radio signal processing. By rotating the radio signal, we can generate data samples with different phase angles without altering the signal amplitude, thereby increasing the diversity of the dataset. Specifically, by performing a phase rotation on the received signal \(({r_I},{r_Q})\) around its origin, we can obtain the following augmented signal35 sample \((r_{I}^{\prime },r_{Q}^{\prime })\).

where \(\theta\) is the rotation angle. By rotating the received signal by 0, π/2, π, and 3π/2 respectively, the original sample data will be augmented fourfold, yielding the augmented signal set \(X=(r,{r_{\pi /2}},{r_\pi },{r_{\pi /4}})\). Figure 1 demonstrates the constellation diagram of CPFSK signal samples, which can visually observe the distribution of sample points obtained after different phase rotations.

Constellation diagram of the GFSK signal with phase-shift data augmentation.

Problem formulation

The objective of this study is to adopt a DL-based approach to solve the AMC task. The aim is to construct a neural network model f that is both highly accurate and low in complexity, thereby enabling it to accurately identify the modulation scheme of the input sample signal x. The model f maps the input signal x to the modulation class output y through parameters w. The objective is to find a set of weights w such that the predicted modulation class \(\hat {y}\) is as consistent as possible with the actual modulation class y when given the input signal x, maximizing the probability of correct prediction. The process can be formulated as follows.

Our proposed AMC method

Model structure

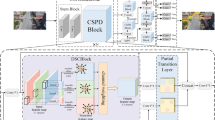

We proposed an ultra-lightweight network model ULNN for AMC, as shown in Fig. 2. The network consists of three key components: the lightweight convolution module (LCM), the dual-stream efficient channel attention module (DSECA), and the dual-stream feature fusion module (DSFFM). The DSECA module is included within the structure of the LCM module. In the following subsections, we will introduce the three modules in detail.

The overall architecture of the ULNN network.

Lightweight convolution module

The sample signal x is size of 128 × 2, where the real component \({{\mathbf{x}}_0}\) is in the first column and the imaginary component \({{\mathbf{x}}_1}\) is in the second column. Before the signal enters the LCM module, it is necessary to perform a complex convolution operation on the sample to exploit the correlation between the I and Q channels of the IQ signal36. The complex convolution operation37 is shown in Eq. (5). Here, w and k represent the weight and size of the complex convolution kernel, respectively. Complex numbers inherently include phase information, and complex convolution can process both magnitude and phase simultaneously. Therefore, despite being more complicated than real convolution, complex convolution is a more appropriate choice for data samples that have been augmented by phase rotation. It should be noted that after the complex convolution layer, there is also a complex batch normalization (BN) layer and a complex activation function ReLU connected. The output feature map after complex convolution is shown in Eq. (6).

The primary role of the LCM module is to extract deep features and reduce feature dimensions. As shown in Fig. 3, the LCM module mainly consists of split convolution, channel shuffle, and attention mechanism operations. Split convolution is an efficient convolution operation used for extracting feature maps. It includes two main parts. The first is depth-wise convolution (DW): convolution is performed on each input channel separately, rather than mixing all channels together, which can significantly reduce computational costs and model complexity. The second is pointwise convolution (PW): after DW, 1 × 1 convolution kernels are used to combine channel information, enhancing the network’s expressive ability while maintaining the depth of the feature map. After split convolution, since it is difficult for different groups of feature maps to exchange information, this may lead to reduced learning efficiency of the network. Therefore, we introduce a channel shuffle operation to increase the information flow between the output feature maps. Since channel shuffle simply achieves information flow by rearranging channels, it avoids using additional convolution or fully connected layers, thereby reducing the number of model parameters and computational complexity. After obtaining the output feature map F through shuffling, we apply an attention mechanism operation to further realize local cross channel interaction.

The architecture of the lightweight convolution module.

Dual-stream efficient channel attention module

The DSECA module is an improvement based on the ECA38 module. As shown in Fig. 4, the ECA module extracts aggregated features \({M_1}\) from the feature map F by using Global Average Pooling (GAP) and a one-dimensional convolution (Conv1D). Building on the ECA module, the DSECA adds a parallel path of Global Max Pooling (GMP) and Conv1D to extract salient features \({M_2}\) from the feature map F. Subsequently, \({M_1}\) and \({M_2}\) are vertically concatenated along the channel dimension to form the comprehensive channel weight M. Finally, the comprehensive channel weight M is multiplied with the original feature map F, achieving a reweighting of the features across different channels. This design allows the DSECA module to more effectively highlight useful features and suppress less important ones, enhancing the model’s ability to capture key information. The overall attention process can be summarized as

In the formulas mentioned above, k represents the size of the convolution kernel, which is adaptively matched to the number of channels C by constants b and r. w denotes the weight of the convolution kernel. \({g_1}\) and \({g_2}\) respectively represent GAP and GMP. σ is the sigmoid function. In summary, DSECA can be easily integrated into any CNN architecture, contributing to the construction of more efficient deep learning models with improved generalization capabilities.

The architecture of the dual-stream ECA module.

Dual-stream feature fusion module

Upon returning to the initial phase where the sample x enters the network, after undergoing complex convolution, it cascades through six LCM modules. The DSFFM module is applied to the last three LCM modules with the aim of fusing the features of these deeper layers. As depicted in Fig. 5, the input to the DSFFM module is the feature map \(F_{3}^{\prime }\), which is the output of the third LCM module. Similar to the strategy of the DSECA module, the fourth, fifth, and sixth LCM modules are processed through parallel GAP and GMP branches, resulting in the feature maps \({F_{Ave}}\) and \({F_{Max}}\). Within each branch, an ‘Add’ operation is used for feature fusion, and Conv1D is employed for dimensionality reduction. Finally, \({F_{Ave}}\) and \({F_{Max}}\) are vertically concatenated along the channel dimension to obtain the final output feature map \({F_{End}}\). The overall process can be summarized as

Here w represents the weights of the Conv1D kernels, and \(F_{1}^{\prime }\) is the output feature map of the first LCM module. The activation function used is the ReLU function, denoted by σ. After passing through the DSFFM module, the output feature map \({F_{End}}\) is concatenated to a fully connected layer. A cross-entropy loss function SoftMax is employed to map the output of the fully connected layer to probabilities for each modulation category, with the label corresponding to the highest probability considered as the predicted modulation type of the input signal. The DSFFM module allows the network to integrate feature maps from different layers, thereby capturing features at varying levels of granularity. This strategy contributes to enhancing the model’s performance because it is capable of capturing both local detailed information and more global contextual information.

The architecture of the dual-stream feature fusion module.

Experimental methodology

To gain a deeper understanding of the characteristics of the ULNN network, we designed a series of experiments to address the following three research questions: (1) Ablation experiments: We evaluated the specific impact of data augmentation, DSECA and DSFFM modules, as well as the dual-stream structure on model performance. (2) Model performance analysis: We compared the ULNN network with five other benchmark models to analyze its relative performance on the RML2016.10 A dataset. (3) Model complexity analysis: We compared the ULNN network with the other five benchmark models in terms of the number of parameters and other aspects. These experiments aim to comprehensively assess the effectiveness and efficiency of the ULNN network in the task of AMC.

Datasets

All experiments will be conducted on the public dataset RML2016.10 A, which is generated using Python scripts with the GNU Radio processing library. The signals consist of ASCII text and audio, with channel impairments such as sampling frequency offset, Rayleigh fading, and time delay. The specific details of the dataset are shown in Table 1.

Baseline models

In the evaluation of network model performance, we compared model with five existing bench mark. These models include: (1) CLDNN27: CLDNN is a classic structure in speech recognition that has been successfully transferred to the field of electromagnetic signal recognition. CLDNN effectively extracts temporal features of signals by combining CNN and LSTM. (2) MCNet28: MCNet effectively captures temporal features and prevents overfitting by arranging nonsymmetric kernels and residual modules in parallel. (3) MCLDNN29: MCLDNN is a space-time multi-stream architecture that integrates the temporal feature information of the I, Q, and both IQ streams. (4) IQCLNet30: IQCLNet is also based on the CNN and LSTM architecture, but unlike CLDNN, IQCLNet places more emphasis on extracting the correlation features between the I and Q streams. (5) FastMLDNN39: FastMLDNN introduces a novel lightweight single-stream neural network composed of group convolution layers and transformer encoding layers. These networks have all been validated on the RML2016.10 A datasets. Therefore, they serve as reasonable benchmarks for assessing the performance of the proposed ULNN model.

Evaluation metrics

In our experimental evaluation, we primarily conducted classification tasks for modulated signals, hence we used accuracy as the metric to assess the model’s classification capability. The formula for accuracy is as follows. Where PAcc represents the classification accuracy. Ncorrect denotes the number of the correctly identified samples and Ntest denotes the number of test samples.

Experiment results and analysis

In this section, we delve into the research questions posed in Sect. 4 and present the experimental results. To begin with, we should state the model training environment and parameter settings. All experiments were conducted on a GTX1080Ti GPU using Python 3.6, Tensorflow-gpu 1.14, and Keras 2.2.4. The number of training, validation, and testing samples was 308,000 (after DA), 33,000, and 110,000, respectively. For the optimization algorithm, we chose the Adam algorithm. And we use a dynamic learning rate adjustment strategy that the learning rate started at 0.001 and decreased by 80% if there was no improvement in accuracy on the validation set for 10 consecutive iterations. Additionally, we set the batch size to 128 and the number of epochs to 150 to guide the network training.

Ablation studies

To verify the impact of DA on model performance, we conducted comparative experiments on all models using both the original dataset and the augmented dataset. The experimental results are shown in Fig. 6. After using the augmented dataset, ULNN, IQCLNet, and CLDNN and achieved performance improvements of 7.01%, 4.62%, and 4.17% respectively. Among them, the proposed ULNN model showed the most significant performance gain. This indicates that the ability of ULNN to capture features is far greater than the feature expression of the original dataset. In contrast, the performance improvements of MCNet, MCLDNN and FastMLDNN were relatively limited, at 1.25%, 0.72% and 0.71% respectively. This suggests that the generalization capabilities of these three networks on the original dataset were already close to optimal, and DA had a smaller effect on their performance improvement. Overall, the use of rotational phase augmentation can enhance model performance to a certain extent, which is mainly attributed to two factors: one is that DA provides richer feature expressions, helping the model to capture more subtle features. The other is that the data samples themselves have phase offsets37. Rotational phase augmentation helps to correct phase offsets, thereby improving the model’s processing ability for data.

The classification performance of different networks with DA and without DA.

In the ablation study, we first validated the effectiveness of the dual-branch structure in both the DSECA and DSFFM module. In these two modules, we employed the same dual-branch structure strategy, which involves extracting the average features from the feature maps through the GAP branch and extracting the salient features through the GMP branch, followed by concatenation to obtain fused features. To verify the efficacy of the dual-branch structure, we conducted experiments with single-branch and dual-stream structures in each module and calculated the average recognition accuracy for 11 classes of modulated signals. It is important to note that when conducting an ablation experiment on one module, the other module maintained the dual-branch structure. As shown in Table 2, the dual-branch structure achieved performance improvements over the single-branch structure in both the DSECA module and the DSFFM module. This is because the dual-branch structure extracts features from different perspectives, providing richer information and enhancing the model’s expressive power.

Next, we evaluated the impact of the DSECA module and the DSFFM module on network performance. The experiment involved multiple network variants, detailed as follows (1) Base Model (BM): The base model is structured with a complex convolution layer and followed by six LCM modules in cascade. A SoftMax layer is employed at the final stage to perform classification. Compared to the complete model, the base model does not include the DSECA and DSFFM modules; however, all other structural features and parameters remain consistent. (2) BM + DSECA: The network variant with the DSECA module added to the base model. (3) BM + DSFFM: The network variant with the DSFFM module added to the base model. (4) BM + Both: The complete network model that includes both the DSECA and DSFFM modules.

In Fig. 7, we compared the average recognition accuracy of these four network variants. The results show that the average recognition accuracy of the BM, BM + DSECA, BM + DSFFM, and BM + Both networks were 60.84%, 61.94%, 61.27%, and 62.83% respectively. The experimental results indicate that each module in the ULNN has a positive gain effect on network performance, with the greatest gain achieved when both modules are present, reaching an increase of 1.99%.

Ablation experiments on different modules of the ULNN network.

Comparison of model performance

As shown in Fig. 8, we tested all networks on the dataset RML2016.10 A and arranged their average recognition accuracy in descending order of performance. The specific results are as follows: FastMLDNN:63.01%, ULNN: 62.83%, MCLDNN: 62.22%, IQCLNet: 61.17%, CLDNN: 60.18% and MCNet: 58.43%. The average recognition accuracy of the six networks is 61.30%. From these data, it can be seen that our proposed ULNN network exceeding the average network recognition accuracy by 1.53%, and outperforming the MCNet, which has the lowest recognition accuracy, by 4.4%. But there is still a 0.18% gap from the best performing FastMLDNN. From Fig. 8, it is evident that FastMLDNN significantly outperforms other networks in low SNR ranging from − 20 dB to − 8 dB. This superior performance can be attributed to the use of a novel central distance expansion loss function incorporated with the cross entropy loss during the training process.

The classification performance of different networks on RML2016.10 A.

Additionally, in Fig. 9, we present the validation set loss rates for all models over 150 epochs. From the figure, it can be observed that MCLDNN reached the minimum loss at around 20 epochs, but the loss rate showed a gradual increasing trend in subsequent training rounds. This indicates that MCLDNN suffers from overfitting, which also explains why the performance improvement was almost negligible in the ablation experiments regarding DA for MCLDNN. The other networks did not exhibit overfitting issues, with their loss rates converging steadily as training rounds increased.

The validation loss of different networks in 150 epochs.

To better analyze the network’s performance, we divided the SNR range of the dataset into two intervals: low SNR ([-20dB, -2dB]) and high SNR ([0dB, 18dB]). In Table 3, we present the average recognition accuracy of all networks within these two SNR ranges. In the low SNR range, FastMLDNN achieved the highest recognition accuracy at 34.78%, ULNN network achieved the second highest recognition accuracy at 33.77%. The remaining networks did not exceed 33%. As mentioned earlier, FastMLDNN use of an improved loss function resulted in superior anti-interference performance at low SNR.

In the high SNR range, there was a significant difference in the performance of the networks. MCLDNN achieved the highest recognition accuracy at 91.97%. Our network, ULNN, followed closely behind, also showing excellent performance at 91.89%. In contrast, MCNet had an accuracy of only 84.63%. Combining this with the results from the previous ablation experiments regarding DA, it can be inferred that the MCNet network may have underfitting issues. Overall, whether under low SNR or high SNR conditions, our ULNN network demonstrated superior performance, giving it a greater advantage in complex electromagnetic environments.

Complexity analysis

In the task of AMC, considering the application requirements for resource constrained scenarios, we conducted a detailed comparison of the model’s number of parameters, weight size, single instance inference time and floating point operations (FLOPs). As shown in Table 4, The number of parameters in ULNN we proposed is the lowest among all networks, with a total of 8,825. The storage space required only 34.48 KB, representing a mere 2.17% of the largest network, MCLDNN. Furthermore, ULNN achieves a higher recognition accuracy than MCLDNN. While FastMLDNN reached the highest average recognition accuracy, its weight size, inference time, and FLOPs are significantly larger than those of ULNN.

However, in terms of single instance inference time, our network is not the fastest. The MCNet performs better in this aspect. Additionally, we can observe that the inference time does not strictly correlate positively with the number of parameters. This is because the model’s inference time is not only related to the number of parameters but also to the model’s computational volume, memory access volume, hardware platform, and other factors. Therefore, even though our model has the fewest parameters, due to the operational characteristics of the depth-wise separable convolution within the model, the inference time may be constrained by memory bandwidth and data input/output (IO) limitations. Moreover, since the MCLDNN, IQCLNet, and CLDNN networks all contain LSTM structures, the LSTM requires processing each element in the sequence, and the longer the sequence, the greater the computational volume needed. Also, an LSTM model includes multiple gating structures and cell states, all of which require multistep computations. Hence, the inference times for these three networks are generally longer.

In terms of FLOPs, the computational load of ULNN is only 0.0007, which is at least two orders of magnitude lower than that of other networks. Consequently, ULNN necessitates a mere fraction of the computational resources typically required by other networks during both training and inference processes. This not only serves to reduce the hardware overhead but also has the potential to decrease energy consumption. In summary, ULNN achieves a good balance between accuracy and efficiency in addressing the contradiction existing in AMC lightweight. Moreover, ULNN has low requirements for storage space and computing power. These advantages make it more competitive in practical applications, especially in resource-constrained environments.

It should be noted that, due to the limited onboard storage and computational resources of drones. When implementing a modulation signal identification scheme for UAVs based on fog computing communication architecture, it may encounter challenges such as energy constraints, communication delays, network stability, and security issues faced by UAVs acting as fog nodes.

Conclusion

This paper proposes a lightweight and efficient ULNN for AMC tasks. Firstly, we verify the validity of DA, DSFFM and DSECA modules by ablation experiments. Then in the comparison experiment with other benchmark networks, the proposed ULNN network shows excellent performance at both low SNR and high SNR. In addition, our network can achieve high recognition accuracy while remaining lightweight. The experimental results show that ULNN is an efficient neural network with strong anti-jamming ability and suitable for resource constrained environment. In addition, in the ablation experiments of DA, we noticed that data enhancement had different effects on different complexity networks. Therefore, how to balance the model complexity and sample size is also a worthy research direction. This helps us to design more accurate and efficient models.

Data availability

The experimental datasets RadioML2016.10 A can be found at https://www.deepsig.ai/datasets. And The experiment code can be contacting the corresponding author@13373422473@163.com.

References

Cheng, W. & Xia, X. The practical application of mapping yangtze river waterway by UAV. In 2022 Fourth International Conference on Emerging Research in Electronics, Computer Science and Technology (ICERECT) 1–5. https://doi.org/10.1109/ICERECT56837.2022.10060676 (2022).

Zachos, A. & Anagnostopoulos, C.-N. Using TLS, UAV and MR methodologies for 3d modelling and historical recreation of religious heritage monuments. J. Comput. Cult. Herit.1, 1. https://doi.org/10.1145/3679021 (2024).

Nomikos, N., Gkonis, P. K., Bithas, P. S. & Trakadas, P. A survey on UAV-aided maritime communications: Deployment considerations, applications, and future challenges. IEEE Open J. Commun. Soc.4, 56–78. https://doi.org/10.1109/OJCOMS.2022.3225590 (2023).

Zhu, X., Vanegas, F., Gonzalez, F. & Sanderson, C. A Multi-UAV system for exploration and target finding in cluttered and GPS-Denied environments. In 2021 International Conference on Unmanned Aircraft Systems (ICUAS) 721–729. https://doi.org/10.1109/ICUAS51884.2021.9476820 (2021).

Alabadi, M., Habbal, A. & Wei, X. Industrial internet of things: Requirements, architecture, challenges, and future research directions. IEEE Access10, 66374–66400. https://doi.org/10.1109/ACCESS.2022.3185049 (2022).

Zhang, L. et al. A survey on 5 g millimeter wave communications for UAV-assisted wireless networks. IEEE Access7, 117460–117504. https://doi.org/10.1109/ACCESS.2019.2929241 (2019).

Zhang, H., Zhou, F., Wu, Q., Wu, W. & Hu, R. Q. A novel automatic modulation classification scheme based on multi-scale networks. IEEE Trans. Cogn. Commun. Netw.8, 97–110. https://doi.org/10.1109/TCCN.2021.3091730 (2022).

Tarekegn, G. B. et al. Deep-reinforcement-learning-based drone base station deployment for wireless communication services. IEEE Internet Things J.9, 21899–21915. https://doi.org/10.1109/JIOT.2022.3182633 (2022).

Gupta, L., Jain, R. & Vaszkun, G. Survey of important issues in UAV communication networks. IEEE Commun. Surv. Tutor.18, 1123–1152. https://doi.org/10.1109/COMST.2015.2495297 (2016).

Ma, M., Xu, Y., Wang, Z., Fu, X. & Gui, G. Decentralized learning and model averaging based automatic modulation classification in drone communication systems. Drones7, 391 (2023).

Liu, X., Sun, C., Yu, W. & Zhou, M. Reinforcement-learning-based dynamic spectrum access for software-defined cognitive industrial internet of things. IEEE Trans. Ind. Inform.18, 4244–4253. https://doi.org/10.1109/TII.2021.3113949 (2021).

Peng, Y. et al. Automatic modulation classification using deep residual neural network with masked modeling for wireless communications. Drones7, 390. https://doi.org/10.3390/drones7060390 (2023).

Zheng, Q. et al. A real-time transformer discharge pattern recognition method based on cnn-lstm driven by few-shot learning. Electr. Power Syst. Res.219, 109241 (2023).

Zhou, Q., Wu, S., Jiang, C., Zhang, R. & Jing, X. Over-the-air federated transfer learning over UAV swarm for automatic modulation recognition in V2X radio monitoring. IEEE Trans. Veh. Technol.73, 3597–3607. https://doi.org/10.1109/TVT.2023.3324505 (2024).

Li, X., Ran, J. & Zhang, H. Isrnet: An effective network for SAR interference suppression and recognition. In 2022 IEEE 9th International Symposium on Microwave, Antenna, Propagation and EMC Technologies for Wireless Communications (MAPE) 428–431. https://doi.org/10.1109/MAPE53743.2022.9935209 (2022).

Gupta, A. & Krishnamurthy, V. Principal–agent problem as a principled approach to electronic counter-countermeasures in radar. IEEE Trans. Aerosp. Electron. Syst.58, 3223–3235. https://doi.org/10.1109/TAES.2022.3147739 (2022).

Maroto, J., Bovet, G. & Frossard, P. Maximum likelihood distillation for robust modulation classification. In ICASSP 2023–2023 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP) 1–5. https://doi.org/10.1109/ICASSP49357.2023.10096156 (2023).

Shuli, D., Zhipeng, L. & Linfeng, Z. A modulation recognition algorithm based on cyclic spectrum and svm classification. In 2020 IEEE 4th Information Technology, Networking, Electronic and Automation Control Conference (ITNEC), vol. 1, 2123–2127. https://doi.org/10.1109/ITNEC48623.2020.9085022 (IEEE, 2020).

Zhang, Z., Luo, H., Wang, C., Gan, C. & Xiang, Y. Automatic modulation classification using cnn-lstm based dual-stream structure. IEEE Trans. Veh. Technol.69, 13521–13531. https://doi.org/10.1109/TVT.2020.3030018 (2020).

Abdel-Moneim, M. A. et al. Efficient cnn-based automatic modulation classification in uwa communication systems using constellation diagrams and gabor filtering. In 2023 3rd International Conference on Electronic Engineering (ICEEM) 1–6. https://doi.org/10.1109/ICEEM58740.2023.10319475 (2023).

Li, T., Li, Y. & Dobre, O. A. Modulation classification based on fourth-order cumulants of superposed signal in NOMA systems. IEEE Trans. Inf. Forens. Secur.16, 2885–2897. https://doi.org/10.1109/TIFS.2021.3068006 (2021).

Peng, C., Cheng, W., Song, Z. & Dong, R. A noise-robust modulation signal classification method based on continuous wavelet transform. In 2020 IEEE 5th Information Technology and Mechatronics Engineering Conference (ITOEC) 745–750. https://doi.org/10.1109/ITOEC49072.2020.9141879 (2020).

O’Shea, T. J., Corgan, J. & Clancy, T. C. Convolutional radio modulation recognition networks. In Engineering Applications of Neural Networks: 17th International Conference, EANN 2016, Aberdeen, UK, September 2–5, 2016, Proceedings 17 213–226. https://doi.org/10.1007/978-3-319-44188-7_16 (Springer, 2016).

Zheng, Q., Zhao, P., Li, Y., Wang, H. & Yang, Y. Spectrum interference-based two-level data augmentation method in deep learning for automatic modulation classification. Neural Comput. Appl.33, 7723–7745. https://doi.org/10.1007/s00521-020-05514-1 (2021).

Zhou, Q. et al. Lstm-based automatic modulation classification. In 2020 IEEE International Symposium on Broadband Multimedia Systems and Broadcasting (BMSB) 1–4. https://doi.org/10.1109/BMSB49480.2020.9379677 (2020).

Zheng, Q., Zhao, P., Wang, H., Elhanashi, A. & Saponara, S. Fine-grained modulation classification using multi-scale radio transformer with dual-channel representation. IEEE Commun. Lett.26, 1298–1302. https://doi.org/10.1109/LCOMM.2022.3145647 (2022).

27. West, N. E. & O’shea, T. Deep architectures for modulation recognition. In 2017 IEEE International Symposium on Dynamic Spectrum Access Networks (DySPAN) 1–6. https://doi.org/10.1109/DySPAN.2017.7920754 (IEEE, 2017).

Huynh-The, T., Hua, C.-H., Pham, Q.-V. & Kim, D.-S. Mcnet: An efficient cnn architecture for robust automatic modulation classification. IEEE Commun. Lett.24, 811–815. https://doi.org/10.1109/LCOMM.2020.2968030 (2020).

Xu, J., Luo, C., Parr, G. & Luo, Y. A spatiotemporal multi-channel learning framework for automatic modulation recognition. IEEE Wirel. Commun. Lett.9, 1629–1632. https://doi.org/10.1109/LWC.2020.2999453 (2020).

Cui, T., Wang, D., Ji, L., Han, J. & Huang, Z. Time and phase features network model for automatic modulation classification. Comput. Electr. Eng.111, 108948 (2023).

Zheng, Q. et al. Mobilerat: A lightweight radio transformer method for automatic modulation classification in drone communication systems. Drones7, 596. https://doi.org/10.3390/drones7100596 (2023).

Wang, F., Shang, T., Hu, C. & Liu, Q. Automatic modulation classification using hybrid data augmentation and lightweight neural network. Sensors23, 4187. https://doi.org/10.3390/s23094187 (2023).

Gong, A., Zhang, X., Wang, Y., Zhang, Y. & Li, M. Hybrid data augmentation and dual-stream spatiotemporal fusion neural network for automatic modulation classification in drone communications. Drones7, 346. https://doi.org/10.3390/drones7060346 (2023).

Ren, B., Teh, K. C., An, H. & Gunawan, E. Ofdm modulation classification using cross-sknet with blind iq imbalance and carrier frequency offset compensation. IEEE Trans. Veh. Technol.73, 8389–8403. https://doi.org/10.1109/TVT.20243356606 (2024).

Huang, L. et al. Data augmentation for deep learning-based radio modulation classification. IEEE Access8, 1498–1506. https://doi.org/10.1109/ACCESS.2019.2960775 (2019).

Guo, L., Wang, Y., Lin, Y., Zhao, H. & Gui, G. Ultra lite convolutional neural network for fast automatic modulation classification in low-resource scenarios. Preprint at http://arXiv.org/2208.04659 (2022).

O’Shea, T. J., Pemula, L., Batra, D. & Clancy, T. C. Radio transformer networks: Attention models for learning to synchronize in wireless systems. In 2016 50th Asilomar Conference on Signals, Systems and Computers 662–666. https://doi.org/10.1109/ACSSC.2016.7869126 (IEEE, 2016).

Wang, Q. et al. Eca-net: Efficient channel attention for deep convolutional neural networks. In Proc. IEEE/CVF Conference on Computer Vision and Pattern Recognition 11534–11542. https://doi.org/10.1109/CVPR42600.2020.01155 (2020).

Chang, S. et al. A fast multi-loss learning deep neural network for automatic modulation classification. IEEE Trans. Cogn. Commun. Netw.9, 10. https://doi.org/10.1109/TCCN.2023.3309010 (2023).

Author information

Authors and Affiliations

Contributions

Conceptualization, M.W. and Y.F.; methodology, J.L and Y.Z.; software, M.W. and Y.W.; writing—original draft preparation, J.L. and Y.Z.; writing—review and editing, M.W. and Y.F.; visualization, Y.Z. and Y.W.; funding acquisition, S.F. All authors reviewed the manuscript.

Corresponding author

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Wang, M., Fang, S., Fan, Y. et al. An ultra lightweight neural network for automatic modulation classification in drone communications. Sci Rep 14, 21540 (2024). https://doi.org/10.1038/s41598-024-72867-1

Received:

Accepted:

Published:

Version of record:

DOI: https://doi.org/10.1038/s41598-024-72867-1

This article is cited by

-

SCALNet: A lightweight attention-enhanced convolutional network for robust modulation classification using constellation diagrams

Signal, Image and Video Processing (2026)

-

A lightweight LSTM-based open-set RF fingerprinting identification for edge deployment

Scientific Reports (2025)