Abstract

Investigators in neuroscience have turned to Big Data to address replication and reliability issues by increasing sample size. These efforts raise new questions about how to integrate data across distinct sources and instruments. The goal of this study was to link scores across common auditory verbal learning tasks (AVLTs). This international secondary analysis aggregated multisite raw data for AVLTs across 53 studies totaling 10,505 individuals. Using the ComBat-GAM algorithm, we isolated and removed the component of memory scores associated with site effects while preserving instrumental effects. After adjustment, a continuous item response theory model used multiple memory items of varying difficulty to estimate each individual’s latent verbal learning ability on a single scale. Equivalent raw scores across AVLTs were then found by linking individuals through the ability scale. Harmonization reduced total cross-site score variance by 37% while preserving meaningful memory effects. Age had the largest impact on scores overall (−11.4%), while race/ethnicity variables were not significant (p > 0.05). The resulting tools were validated on dually administered tests. The conversion tool is available online so researchers and clinicians can convert memory scores across instruments. This work demonstrates that global harmonization initiatives can address reproducibility challenges across the behavioral sciences.

Introduction

Data sharing consortia aim to increase the robustness and statistical power of results by aggregating large and diverse samples1,2. While analyses of large datasets can provide unparalleled statistical power, data aggregation without robust harmonization can mask and even introduce flaws and biases3. This is a critical consideration in the behavioral sciences, where multi-site collaboration often requires the synthesis of non-identical cognitive measures4,5,6. For example, verbal memory/recall is a core cognitive function, and deficits in learning and memory are one of the most common and widely assessed patient complaints7. However, a wide variety of auditory verbal learning tasks (AVLTs) exist that can be administered to assess verbal memory and recall, and these differ across a range of qualitative and quantitative features7,8,9. Such differences in assessment instruments can contribute to inconsistencies in the measurement of neurocognitive performance10. Therefore, what is needed is a means to accurately convert scores across common AVLTs. This paper focuses on AVLTs as an example of how harmonization procedures previously applied to other assessments4 can be applied to harmonize instruments commonly used to assess cognitive effects following traumatic brain injury (TBI).

Methods to accurately relate scores across AVLTs could facilitate highly powered studies of verbal memory and recall, offering opportunities for new clinical insights11,12. However, data from single sites are typically biased by the specific attributes, demographics, and inclusion criteria of the study which can increase variance/error and confound reproducibility13,14,15. To address these limitations, emerging data harmonization approaches offer new ways to perform data transformations that remove unwanted influences in aggregated data, such as site-specific differences in test administration, while preserving meaningful effects. Data harmonization of large and heterogeneous AVLT data sources represents an appropriate framework for the development of cross-AVLT score conversion tools, but such efforts come with analytical and organizational challenges1,4,16,17.

In particular, the Enhancing NeuroImaging Genetics through Meta-Analysis (ENIGMA) Brain Injury working group brings together researchers from around the world to study brain structure, function, and cognitive endpoints after brain injury by aggregating data from multiple large-sample studies. However, studies that aim to evaluate subtle differences in cognitive endpoints of brain injury must overcome three primary challenges: (1) a large, international sample is required that includes both controls and brain-injured individuals; (2) multisite raw data aggregation requires methods that can isolate and remove unwanted site effects while explicitly preserving meaningful relationships; and (3) appropriate psychometric methods are needed to measure and account for different instrumental effects across multiple item scales.

To overcome these challenges, we report a retrospective multisite (n = 53 datasets) mega-analysis of three common AVLTs: the California Verbal Learning Test (CVLT)7, the Rey Auditory Verbal Learning Test (RAVLT)18, and the Hopkins Verbal Learning Test-Revised (HVLT)19, drawing from international healthy and brain-injured populations across 13 countries and 8 languages. In contrast to meta-analyses, which combine summary statistics from several sites, we conducted a mega-analysis that centralizes and pools individual raw data from many sites. This allows for a richer range of experimental designs that can consider subtle single-item differences in detail1,2.

Our primary hypothesis was that conversion performance would be significantly improved by a mega-analytic pipeline combining harmonization and item response theory (IRT) models. IRT is an appropriate method for this purpose because it can make use of multiple memory items of varying properties and difficulty in order to place all individuals on the same ability scale, regardless of the memory instrument used for assessment. Similarly, batch harmonization algorithms are appropriate when there may be spurious or nonbiological effects attributed to a large number of underlying sites that must be isolated and removed. The goal of this study was to establish crosswalks between common memory measures, and address long standing data compatibility issues for AVLTs through the dissemination of freely available instrument conversion tools: enigma-tools.shinyapps.io/verbal-learning-calculator/

Methods

Data sources and inclusion criteria

A range of international studies of head injury and comparator groups and controls for a variety of conditions were included. Comprehensive details and references for these studies are provided in the supplement, alongside exhaustive study-level definitions of what constituted brain injury, controls, groups, and inclusion/exclusion criteria (see Supplementary Table S1 and S2)20,21,22,23,24. This secondary multisite (N = 53 datasets) mega-analysis focused on three AVLTs: the CVLT7, HVLT19, and RAVLT18. To mitigate balance issues, we included only comparator controls and groups with TBI. As described in prior work4, we aggregated data contributed by collaborators in the Psychiatric Genomics Consortium (PGC), the Enhancing NeuroImaging Genetics through Meta-Analysis Consortium (ENIGMA) working groups25, the ENIGMA Brain Injury working group15, and the Long-term Impact of Military-relevant Brain Injury Consortium—Chronic Effects of Neurotrauma Consortium, LIMBIC-CENC17. The University of Utah provided overall Institutional Review Board (IRB) study approval and each contributed study was approved by the IRBs of their respective institutions. Each contributed study was conducted in accordance with the Declaration of Helsinki, including obtaining informed consent from each participant.

To limit sources of variability, we excluded anyone with a known clinically diagnosed mental health or neurological condition other than traumatic brain injury (TBI). Consistent with standard AVLT administration practices, we included only participants aged 16 years or over. In the case of longitudinal or serial measurement designs, only the first AVLT measurement per person was included; repeated measurements were dropped.

Verbal learning task contents and scoring

Table 1 provides a summary overview of the key features of the AVLTs assessed. AVLT scores on each trial denote the number of correct words that are recalled. The maximum score reflects the number of memory items per list. The sum of the total words recalled across all immediate free recall (learning) trials is the immediate free recall summary score (Sum of Learning Trials). These raw scores are often subsequently normed so that the performance of the individual can be contextualized relative to a population of interest. However, in this work we exclusively assess raw scores, and not t-scores or normative scores. We focused on raw scores because normative values are occasionally updated over time and are based upon instrumentally-distinct normative samples.
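The scoring rule described above can be sketched in a few lines. This is a minimal illustration only: the function name and the per-trial values are hypothetical and are not data from this study. It sums the immediate free recall trials and also expresses the sum as a proportion of the maximum possible score, which depends on list length and number of trials.

```python
def sum_of_learning_trials(trial_scores, list_length):
    """Return (raw sum, proportion of maximum) for the immediate free recall trials."""
    if any(s < 0 or s > list_length for s in trial_scores):
        raise ValueError("each trial score must lie between 0 and the list length")
    raw_sum = sum(trial_scores)
    max_sum = list_length * len(trial_scores)
    return raw_sum, raw_sum / max_sum


# Hypothetical CVLT-style record: 5 learning trials on a 16-word list.
cvlt_raw, cvlt_prop = sum_of_learning_trials([6, 9, 11, 12, 13], list_length=16)

# Hypothetical HVLT-style record: 3 learning trials on a 12-word list.
hvlt_raw, hvlt_prop = sum_of_learning_trials([5, 8, 10], list_length=12)

print(cvlt_raw, round(cvlt_prop, 4))
print(hvlt_raw, round(hvlt_prop, 4))
```

Two raw sums of 51 and 23 look very different, yet both correspond to roughly 64% of the available words, which is why raw sums cannot be compared across instruments without a conversion.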

The California verbal learning test

The CVLT7 refers to a family of instruments that assess verbal learning and memory deficits. The CVLT has been revised twice, and three iterations exist (CVLT-I, CVLT-II, and CVLT-3). Additionally, the CVLT comes in standard, short, and alternate forms. In this work, we estimated crosswalks for the more recent CVLT-II and the CVLT-3. While the CVLT-3 is nominally a revision of the CVLT-II, in practice the target words, their order, and their number are the same for both the CVLT-3 and CVLT-II. Thus, we refer to both CVLT-3 and CVLT-II standard and alternate forms together as ‘CVLT’. Table 1 provides a numerical overview of the key features of the CVLT. The CVLT uses M = 16-word list lengths, which are drawn from 4 semantic categories, and 5 consecutive learning trials. The CVLT is a comprehensive test that includes a distractor list, cued and free recall assessments, short and long delay trials, and a recognition trial with 48 words.

The Hopkins verbal learning test–revised

The HVLT-R19 is a relatively short measure of verbal learning and memory deficits. The HVLT exists in two primary forms (original and revised) denoted together as ‘HVLT’. Table 1 outlines the key features of the HVLT. The HVLT does not use a distractor list for immediate recall and does not assess cued recall or short delay recall performance. The HVLT uses M = 12-word list lengths, which are drawn from 3 semantic categories, and uses a small (N = 24) total pool of words for scoring. The HVLT has three consecutive learning trials.

The Rey auditory verbal learning test

The RAVLT18 is a measure of verbal learning and memory deficits. Table 1 provides an overview of the key features of the RAVLT. The RAVLT draws from random, semantically unrelated words, and employs an M = 15-word list length, a distractor list, and a large (N = 50) total pool of words for scoring recognition hits. Alternate forms also exist for the RAVLT.

Covariates

Language, country of origin, age at testing, sex/gender, race/ethnicity, site/study, military/civilian status, TBI history, and education level were included and adjusted for in this study. The exclusion criteria were used to rule out the presence of any other clinically relevant variables, including epilepsy, dementia, and mild or early onset cognitive impairment26. While some of the studies recorded gender, others recorded biological sex, and these were aggregated into a single variable. Ethnicity was binarized to Hispanic/Latino, or Not Hispanic/Latino. Perspectives on race/ethnicity differ widely according to cultural context27, and we elected to use broad categories of Black, White, Asian, and Other. Covariate coefficients per AVLT model were converted to percentages, averaged, and then applied back to adjust the full cohort. This means each covariate had the same effect on scores regardless of the instrument used.
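The averaging of covariate effects across instruments can be illustrated as follows. This is a sketch under stated assumptions rather than the analysis code: it assumes that "percentage" means percent of the maximum sum-of-learning-trials score, the coefficient values are hypothetical, and the RAVLT maximum assumes five learning trials on its 15-word list.

```python
def percent_of_max(coef, max_score):
    """Express a raw-score regression coefficient as a percent of the maximum score."""
    return 100.0 * coef / max_score


def shared_percent_effect(coefs, max_scores):
    """Average the per-instrument percent effects into one shared effect."""
    percents = [percent_of_max(coefs[name], max_scores[name]) for name in coefs]
    return sum(percents) / len(percents)


def adjust_score(score, max_score, shared_percent, indicator):
    """Remove the shared effect from one raw score (indicator is 0 or 1)."""
    return score - indicator * (shared_percent / 100.0) * max_score


# Maximum sum scores: CVLT 16 x 5 and HVLT 12 x 3 (from the text); RAVLT 15 x 5 is assumed.
max_scores = {"CVLT": 80, "RAVLT": 75, "HVLT": 36}

# Hypothetical per-instrument coefficients for one binary covariate, in raw score units.
tbi_coefs = {"CVLT": -8.0, "RAVLT": -6.0, "HVLT": -4.32}

shared = shared_percent_effect(tbi_coefs, max_scores)
adjusted = adjust_score(40.0, max_scores["CVLT"], shared, indicator=1)
print(round(shared, 2), round(adjusted, 2))
```

Because the same percent effect is rescaled by each instrument's maximum score, the adjustment is identical in relative terms for every instrument, as described above.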

Statistical analysis

Analysis was performed in Python 3 and in R. Kruskal–Wallis H tests (omnibus) were used to test for overall significance across groups. Where normality was confirmed, t tests were used for post-hoc pairwise comparisons with additional correction for multiple comparisons. Overall missing values were low (< 5%) and any missing data points were imputed with nearest neighbor imputation. After data cleaning and imputation, Empirical Bayes harmonization using the ComBat-GAM algorithm13 was used to remove unwanted site effects while preserving instrumental effects for further analysis. The ComBat-GAM algorithm is a recent, popular approach for removing complex batch effects while preserving other important data properties.

Correcting for site effects

Modeling was conducted in three stages: first, the overall dataset was divided into three subsets, one per AVLT instrument. The ComBat-GAM algorithm13 was applied to each of these three subsets separately to remove site effects within each AVLT. ComBat-GAM explicitly preserves complex covariate effects while isolating and removing site effects13, and the effectiveness of ComBat in reducing site and batch effects is independent of data type. ComBat-GAM was selected as the harmonization method because it can preserve nonlinear covariate effects, such as age effects on cognition, through generalized additive modeling. After site correction, covariate adjustment was performed as follows: the overall dataset was again divided into three distinct subsets by instrument, and ordinary least squares (OLS) linear models were used to estimate and remove covariate effects within each AVLT.
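To make the idea of a site effect concrete, the sketch below applies per-site mean centering within one instrument. This is a deliberately simplified stand-in and is not ComBat-GAM: it has no empirical Bayes shrinkage, no variance adjustment, and no covariate terms, so it would also remove genuine between-site differences in age or education that ComBat-GAM is designed to preserve. The function name and data are hypothetical.

```python
from collections import defaultdict
from statistics import mean


def center_sites(scores, sites):
    """Shift each site's scores so that every site mean equals the grand mean."""
    grand_mean = mean(scores)
    by_site = defaultdict(list)
    for score, site in zip(scores, sites):
        by_site[site].append(score)
    site_means = {site: mean(values) for site, values in by_site.items()}
    # Subtract the site's own mean, then restore the overall level.
    return [score - site_means[site] + grand_mean for score, site in zip(scores, sites)]


# Hypothetical sum scores from two sites that administered the same instrument.
raw_scores = [50.0, 54.0, 40.0, 44.0]
site_labels = ["A", "A", "B", "B"]

adjusted_scores = center_sites(raw_scores, site_labels)
print(adjusted_scores)
```

Within-site differences between individuals are unchanged by this adjustment; only the offset between sites is removed.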

Sampling structure

Cross-validation is constrained for use in harmonisation studies where the data are altered by a procedure using information from other samples that are not part of each validation set. This raises concern for data leakage, where information about the harmonisation or site effect parameters might influence the validation of the crosswalk after harmonisation. To ensure robust validation, we elected to have an entirely separate test set of scores for individuals who were dually administered two AVLTs. If the crosswalk model (fit between measure scores) agreed with the empirical data, then this would provide evidence of crosswalk accuracy.

Conversion process

Our goal was to obtain equivalent scores across tests. If two people have the same underlying verbal learning ability, then on average they will obtain equivalent (although not necessarily equal) scores on two tests of the same construct, regardless of their difficulty. Therefore, what is needed is to place individuals on a single construct ability scale. We estimated relative item parameters (including difficulties) for all but false positive items from the available data. We then used maximum likelihood estimation to calculate ability scores per person using both their raw item scores and the estimated item parameters (including difficulties). The estimated item difficulties served as weights for the (log) odds of observing a proportion correct of scores, with more discriminating and/or more difficult items weighted more heavily. Weighted item scores were then summed to estimate individual abilities. With all individuals placed on the same ability scale, item scores were equatable.

Item response theory

A Continuous Response Model (CRM) in the IRT family28,29,30,31 was used to estimate each subject’s verbal learning ability because item score ranges were large (≥ 12) and different across tests. CRM is an extension of the Graded Response Model (Samejima, 1969) for continuous response formats. Graded response formats with a large number of score points (e.g., ≥ 9) are often considered continuous and CRM is appropriate in this condition32. To calibrate parameters, we used Shojima’s29 simplified expectation maximization (EM) method by assuming non-informative priors for item parameters as implemented in the EstCRM (Continuous Response Model) R package28. After all data adjustments, samples taking different AVLTs were assumed to be randomly equivalent (see Limitations), such that verbal learning ability estimates were placed on the same scale using a ‘randomly equivalent groups’ linking design. The relative difficulty of all items across tests was taken into account to create a single ability scale, and tables of equivalent AVLT scores were linked through the ability scale.
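The ability estimation step can be sketched as follows. The code assumes a three-parameter CRM parametrization in which a bounded item score x with maximum k is transformed to z = ln(x / (k − x)) and each item has a discrimination a, a difficulty b, and a scaling parameter alpha; under that assumption, the maximum likelihood ability for known item parameters has a closed form as a weighted average with weights a². The item parameter values are hypothetical, and this is an illustration of the principle rather than the EstCRM implementation.

```python
import math


def transform_response(x, max_score):
    """Map a bounded score x in (0, max_score) to the real line: z = ln(x / (k - x))."""
    if not 0 < x < max_score:
        raise ValueError("score must lie strictly between 0 and max_score")
    return math.log(x / (max_score - x))


def ml_ability(scores, items):
    """Closed-form maximum likelihood ability under the assumed CRM parametrization.

    items: list of dicts with keys "a" (discrimination), "b" (difficulty),
    "alpha" (scaling) and "k" (maximum score), one per item.
    """
    numerator = 0.0
    denominator = 0.0
    for x, item in zip(scores, items):
        z = transform_response(x, item["k"])
        weight = item["a"] ** 2  # more discriminating items count more
        numerator += weight * (item["b"] + z / item["alpha"])
        denominator += weight
    return numerator / denominator


# Hypothetical item parameters for two 16-point items.
items = [
    {"a": 1.0, "b": 0.0, "alpha": 1.0, "k": 16},
    {"a": 2.0, "b": 0.5, "alpha": 1.0, "k": 16},
]

theta_hat = ml_ability([8, 8], items)
print(round(theta_hat, 3))
```

Scores at the boundaries (0 or the maximum) have no finite transform and would need to be adjusted before estimation; that step is omitted here.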

Anchor items and ability measures

Anchor items similar in format and nature were identified for each of the three required crosswalks (1. RAVLT ↔ CVLT; 2. RAVLT ↔ HVLT; and 3. CVLT ↔ HVLT). After expert consensus and trials of different anchor combinations, we elected to use immediate free recall learning trials, short delay, and long delay free recall as anchor items, where available. Short delay was used as an anchor item between CVLT and RAVLT only (short delay is not assessed in HVLT). False positive measures were not recorded consistently across sites and were not used. Recognition hits showed inconsistent behavior and were excluded from conversions (see Limitations). Since all site effects and measured covariate effects had been removed prior to IRT analysis, we assumed scores were randomly equivalent across measures and ability scores did not require further scaling.

Results

Data summary

An overview of the key features differentiating the CVLT, RAVLT, and HVLT assessments is provided in Table 1. Supplementary Table S1 shows summary characteristics itemized for each of the 53 aggregated datasets after applying exclusion criteria. Overall, the sample size was N = 10,505 (31.8% female), which included both controls and TBI groups. The median age was 42 years with an interquartile range of 30–55 years. Different studies showed significant differences in total sum of trial scores (Fig. 1), and site-related variation in scores was reduced by harmonization. Table 2 shows the summary statistics of the full cohort after aggregation. Each instrument was represented by > 1000 subjects across > 10 studies, indicating good representation of AVLTs. Significant differences in demographic characteristics were evident across measures, indicating that covariate adjustment was required.

Comparing multisite data for total of trials scores before and after ComBat harmonization and adjustment for (a) CVLT, (b) RAVLT, and (c) HVLT. Results are sorted by median score per study. Variation in site medians was reduced after harmonization. Full details for all sites are available in Supplementary Fig. S1.

Harmonization

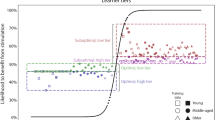

The ComBat-GAM algorithm was implemented to correct for site-specific variations such as differences in inclusion/exclusion criteria (Supplementary Table S2) while preserving real covariate effects. Figure 2 shows a comparison of single site mean scores, before (gray dots) and after (colored dots) site harmonization. A line is drawn to connect each site from its pre- to post-harmonized value. Gray distributions portray the variation in mean scores across sites; colored distributions portray site mean score distributions after harmonization (CVLT: Blue, RAVLT: Orange, HVLT: Red). The unadjusted distributions of scores (gray areas) exhibit much higher variance than their post-harmonized equivalents, and overall harmonization reduced total variance by 37% across all items. As covariate effects were preserved, variations owing to unwanted site effect were reduced. In a secondary analysis of latent dimensions, a principal component analysis (PCA) reduction of all verbal learning memory items identified a 3.8% mean reduction in interquartile spread of latent factors after harmonisation across all measures (Supplemental Fig. S1). Therefore, the majority of the latent factor was associated with verbal learning ability, and largely preserved by harmonization.
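One plausible way to quantify a reduction in cross-site variance is to compare the variance of site mean scores before and after harmonization. The exact computation behind the reported figure is not specified here, so the sketch below is an assumption, and the site means are hypothetical.

```python
from statistics import pvariance


def percent_variance_reduction(before, after):
    """Percent drop in the variance of site means after harmonization."""
    variance_before = pvariance(before)
    variance_after = pvariance(after)
    return 100.0 * (variance_before - variance_after) / variance_before


# Hypothetical site means (percent of words recalled) for three sites.
site_means_before = [40.0, 50.0, 60.0]
site_means_after = [46.0, 50.0, 54.0]

reduction = percent_variance_reduction(site_means_before, site_means_after)
print(round(reduction, 1))
```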

Comparing proportions of memory items recalled before and after harmonization. Mean scores for each site (dots) are shown broken out by instrument (color) and item (Top: Trial 1 immediate free recall, Middle: Total sum of all Trials, Bottom: Long-delay free recall scores).

Covariate adjustment

Figure 3a shows boxplots of unadjusted sum of learning trial scores as percentages stratified by group (TBI vs. control) and sex/gender. TBI history and male sex/gender were both associated with lower sum scores across all three tests. Age-related declines were well-fit by quadratics; years of education were well-fit by a straight line (Fig. 3b,c). The effects of both age and education were significant and consistent across all tests. Linear models within each measure were used to assess covariates (Table 3) after language and country of origin effects were removed separately prior to harmonization.

Visualizing covariate effects on harmonized scores before covariate adjustment. (a) Boxplots of scores stratified by group (TBI vs. control) and sex/gender indicated that males and those with history of TBI had significantly lower scores on average. Age-related declines (b) and the beneficial effects of education (c) on scores were consistent across all AVLTs.

Score conversion

A continuous IRT model (Fig. 4) was used to estimate the latent trait of all individuals while accounting for different item difficulties and discriminations across multiple test items. Third degree polynomials estimated the relationship between observed scores and ability scores for each. Cubic polynomial fits of ability vs. score are shown in Fig. 4a for immediate, short, and long delay items. Horizontal lines of equivalent ability connect equivalent scores. Items with longer delay show larger differences in difficulty than shorter delay items. A secondary sensitivity analysis conducted using only the control population and no individuals with TBI resulted in similar conversions.
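The crosswalk logic, in which a score on one test is mapped to an ability and that ability is then mapped to the equivalent score on another test, can be sketched as below. The cubic coefficients are hypothetical placeholders rather than the fitted values from this study, and the inversion assumes each cubic is monotonically increasing over the ability range considered.

```python
def cubic(coefs, theta):
    """Evaluate c0 + c1*theta + c2*theta**2 + c3*theta**3."""
    c0, c1, c2, c3 = coefs
    return c0 + theta * (c1 + theta * (c2 + theta * c3))


def ability_for_score(coefs, score, lo=-4.0, hi=4.0, tol=1e-9):
    """Invert a monotonically increasing cubic on [lo, hi] by bisection."""
    if not cubic(coefs, lo) <= score <= cubic(coefs, hi):
        raise ValueError("score is outside the range covered by the fit")
    while hi - lo > tol:
        mid = (lo + hi) / 2.0
        if cubic(coefs, mid) < score:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2.0


def convert(score, coefs_from, coefs_to):
    """Convert a score between tests by passing through the shared ability scale."""
    theta = ability_for_score(coefs_from, score)
    return cubic(coefs_to, theta)


# Hypothetical score-versus-ability fits for the same item type on two tests.
test_a = (50.0, 10.0, 0.0, -0.1)
test_b = (45.0, 9.0, 0.0, -0.1)

equivalent = convert(50.0, test_a, test_b)
print(round(equivalent, 3))
```

Tabulating `convert` over every attainable raw score on the source test yields a crosswalk table of the kind described above.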

Visualizing and Validating Conversions. (a) Average scores as a function of individual ability are shown approximated as cubic polynomial fits for immediate, short, and long delay trials. Scores shown are not normed or T-scored. Horizontal lines of equivalent ability connect equivalent scores across tests, which facilitates the construction of crosswalks. (b) Scatter plot and fit to the sum of learning Trial scores for a subset of cases who were administered both the CVLT and RAVLT (n = 36). The confidence area of the dually assessed data is shown in blue and agrees with the derived crosswalk for CVLT → RAVLT (n = 9362, black dotted line).

Validation with dually administered tests

We validated the derived conversions on held-out data not used in other analyses. Validation was conducted by comparing the conversion estimates to real data where two verbal learning tests were administered to the same set of individuals (Fig. 4b; n = 36). How well conversion lines fit the dually administered test scores is a measure of conversion accuracy. Although this sample size was small compared to the total aggregated data, it still independently suggests agreement between the derived conversion models and dually administered test scores (Fig. 4b, blue shaded area). These data are fitted against the IRT-derived conversion scores (black dotted line) for RAVLT to CVLT. The line falls within the 95% confidence bound for the dually administered tests, indicating agreement. Compared to the same conversion model constructed using unadjusted data, the harmonized conversion exhibited a 9.5% lower root mean squared error against the held-out data, indicating that harmonization moderately improved conversion. Figure 4b indicated plausible, modest model agreement within the constraints of the sample size. As a further validation, cross-item correlations for each AVLT were compared before and after harmonization. As shown in Supplemental Fig. S2, harmonization had only a small effect on the item cross-correlations (a reduction of 0.016 in average cross-correlation).

Table 3. Blocked linear regressions predicting sum of learning scores per instrument. The average percentage effect across all AVLTs is shown, indicating that Age > 65 had the largest impact on scores overall (−11.4%). * indicates significance after correction for multiple comparisons.
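Returning to the held-out comparison in the validation above, the relative improvement in root mean squared error (RMSE) between two conversion models can be computed as in this sketch. The observed and predicted values are hypothetical and are not the dually administered test data.

```python
import math


def rmse(predicted, observed):
    """Root mean squared error between predicted and observed scores."""
    squared_errors = [(p - o) ** 2 for p, o in zip(predicted, observed)]
    return math.sqrt(sum(squared_errors) / len(squared_errors))


def percent_reduction(baseline, improved):
    """Percent decrease of the improved error relative to the baseline error."""
    return 100.0 * (baseline - improved) / baseline


# Hypothetical observed scores on the target test for three dually tested people.
observed = [50.0, 55.0, 60.0]

# Hypothetical predictions from conversions built on unadjusted and harmonized data.
predicted_unadjusted = [54.0, 51.0, 64.0]
predicted_harmonized = [53.0, 52.0, 63.0]

rmse_unadjusted = rmse(predicted_unadjusted, observed)
rmse_harmonized = rmse(predicted_harmonized, observed)
improvement = percent_reduction(rmse_unadjusted, rmse_harmonized)
print(rmse_unadjusted, rmse_harmonized, improvement)
```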

Ability scale properties

The properties of the derived verbal learning ability scale were evaluated as a function of sex and TBI status for all participants (Fig. S2). Consistent with prior findings for the total of trials scores (Fig. 3a), females exhibited significantly higher verbal learning ability than males across all instruments. Comparing TBI and control groups, history of TBI was associated with significant declines in ability, ranging from 0.12 to 0.79 standard deviations across groups. In Fig. 5, the distribution of unadjusted ability scores is shown for each site ranked by ability and color-coded by median age per site. Unadjusted median verbal learning ability varied across sites, and these differences were strongly associated with the median age per site, and other covariates.

The distribution of unadjusted ability scores is shown for each site, ranked by ability and color-coded by median age per site.

Application

Details for converting scores using the online tool available at enigma-tools.shinyapps.io/verbal-learning-calculator/ are provided as Supplementary Note 1: Procedure for data conversion.

Discussion

There have never been more studies published annually in the history of the neurosciences33. This intensive rate of research offers unparalleled opportunity for data combination and nuanced examination of cognitive and behavioral changes associated with neurological diagnoses. However, “high volume science” lacks coordination between studies, which poses critical challenges for the integration of findings and data harmonization. For example, AVLTs are the most common method for learning and memory assessment, but they were developed independently and without explicit quantitative reference to pre-existing instrumentation. Over the last 70 years, this has led to a scenario where clinicians and researchers routinely use distinct AVLTs with incomparable results7,8,9. This is not only a technical inconvenience but is problematic for the interpretation and reproducibility of results and findings.

Constructing reliable standards for converting scores across common AVLTs is challenging, because conversions should be made independent of factors such as language, study group, and instrumental details. For example, given more words to recall, it is more likely that more words will be recalled. Naim et al. found the average number of memory items recalled (R) scales with the root of M items presented34, and there are other subtle differences between seemingly similar assessments. Large-sample mega-analysis and harmonization present a promising solution to address these concerns and examine interesting clinical features.
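As a rough worked illustration of the list-length effect, assume that "root" denotes the square root and that the same proportionality constant applies to every list. The expected number of words recalled from the 16-word and 15-word lists, relative to the 12-word list, would then be:

```latex
R \propto \sqrt{M}
\quad\Longrightarrow\quad
\frac{R_{16}}{R_{12}} = \sqrt{\tfrac{16}{12}} \approx 1.15,
\qquad
\frac{R_{15}}{R_{12}} = \sqrt{\tfrac{15}{12}} \approx 1.12 .
```

Under these assumptions, list length alone would account for roughly 12 to 15% more words recalled on the longer lists, before any differences in word content, semantic structure, or administration are considered.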

Beyond conversions, comparing the difficulty of tests on the same ability scale may assist with the selection of AVLT across different research and clinical contexts. For example, the HVLT was the easiest test overall, while the CVLT was the most challenging test. The HVLT may be most appropriate for the assessment of individuals who are at risk for significant impairment. Conversely, the CVLT has sufficient dynamic range to discriminate within high ability groups, while the RAVLT may be well suited for studies involving a wide range of abilities. However, these are relatively coarse recommendations which may only be suitable in specific scenarios35.

In the process of converting across AVLTs, site effects such as different settings, inclusions, and procedures were found to have an appreciable impact on verbal learning scores. This may also be attributable to underlying differences in inclusion/exclusion criteria across studies (Table S2). However, a detailed list of all the ways our sources differed was not necessary to remove these effects in aggregate with a harmonization algorithm. We confirmed our primary hypothesis that conversion error would be reduced by implementing a mega-analytic pipeline combining harmonization and IRT. In time, these conversions may be found to be suitable for clinical utilization at the individual level, although verifying this will require further independent scrutiny.

Appropriate harmonization transforms data in ways that preserve its core relationships. For this study, these relationships include the associations between scores and ability, between scores and covariates (e.g., age-related memory decline), and the measurement of the underlying cognitive construct. After harmonization, the higher scores associated with younger age, female sex/gender, more education, and controls persisted for all AVLTs, despite a large drop in cross-site variance, indicating unwanted effects were removed, while important covariate effects were preserved. Interestingly, despite the large sample size, no Race/Ethnicity variable was consistently associated with higher or lower scores.

Strengths and limitations

Strengths of this study include a comprehensive dataset of more than ten thousand participants drawn from 53 international datasets that recorded performance on verbal learning tasks. This work suggests the specific choice of AVLT has a pronounced effect; averaged across items, the CVLT was the most challenging test, although it was similar to the RAVLT in difficulty, while the HVLT was the least difficult, as expected due to its lower complexity36. Free conversion tables and tools can assist clinicians to track and compare patient scores against large reference groups, regardless of differences in AVLT administration practices. For example, the derived crosswalks operate independent of whether adjustment has or has not been implemented in the scores input into the online calculator. More broadly, this work demonstrates that data harmonization of large data sharing initiatives can offer new tools to address long standing data challenges.

Our study has several limitations. This study considered only a limited binary interpretation of lifetime history of TBI, which exists along a spectrum of severity and has distinct phenotypes37. However, a secondary sensitivity analysis conducted using only the control population and no individuals with TBI resulted in similar conversions. The data were drawn primarily from English-speaking and Western Hemisphere countries. Our IRT conversions were validated against held-out data of dually administered tests (Fig. 4b), which found that the harmonization pipeline reduced conversion error by 9.5% compared to unadjusted conversions. However, the sample size was small, and we did not have data to independently assess the other two conversions (RAVLT ↔ HVLT and CVLT ↔ HVLT). We attempted to construct a crosswalk for recognition memory trials, but unlike the other items, we could not establish low error IRT results for the recognition item.

Conclusion

Investigators in neuroscience are increasingly turning to Big Data to address replication and reliability issues. However, the aggregation of data from distinct instruments raises new questions about how to integrate data in ways that preserve meaning. This study aggregated data from 53 sites to link scores across common auditory verbal learning tasks (AVLTs). A conversion tool is made freely available online for researchers and clinicians who wish to directly compare memory scores across different instruments. Harmonized AVLT data offer opportunities for new, highly powered mega-analytic investigations of verbal learning and memory. This may be particularly beneficial as a means to functionally characterize the imaging findings from large-scale global open neuroscience initiatives, where interesting imaging features are emerging that are not seen in smaller samples31.

Data availability

Raw data are available upon reasonable request pending appropriate study approvals and data transfer agreements between participating institutions. Interested researchers should contact Emily Dennis (Emily.dennis@hsc.utah.edu). Code used for analysis and online tool creation is available upon request.

References

Thompson, P. M. et al. ENIGMA and global neuroscience: A decade of large-scale studies of the brain in health and disease across more than 40 countries. Transl. Psychiatry 10, 100 (2020).

Nagaraj, A., Shears, E. & de Vaan, M. Improving data access democratizes and diversifies science. Proc. Natl. Acad. Sci. U. S. A. 117, 23490–23498 (2020).

Rajtmajer, S. M., Errington, T. M. & Hillary, F. G. How failure to falsify in high-volume science contributes to the replication crisis. Elife 11, e78830 (2022).

Kennedy, E. et al. Harmonizing PTSD severity scales across instruments and sites. Neuropsychology Accepted.

Pan, F.-F., Huang, L., Chen, K.-L., Zhao, Q.-H. & Guo, Q.-H. A comparative study on the validations of three cognitive screening tests in identifying subtle cognitive decline. BMC Neurol. 20, 78 (2020).

Roalf, D. R. et al. Bridging cognitive screening tests in neurologic disorders: A crosswalk between the short Montreal cognitive assessment and mini-mental state examination. Alzheimers. Dement. 13, 947–952 (2017).

Woods, S., Delis, D., Scott, J., Kramer, J. & Holdnack, J. The California Verbal Learning Test—second edition: Test-retest reliability, practice effects, and reliable change indices for the standard and alternate forms. Arch. Clin. Neuropsychol. 21, 413–420. https://doi.org/10.1016/j.acn.2006.06.002 (2006).

Guilmette, T. J. & Rasile, D. Sensitivity, specificity, and diagnostic accuracy of three verbal memory measures in the assessment of mild brain injury. Neuropsychology 9, 338–344 (1995).

Spreen, O. & Strauss, E. A Compendium of neuropsychological tests: Administration, norms, and commentary (Oxford University Press, 1998).

Cardenas, S. A., Kassem, L., Brotman, M. A., Leibenluft, E. & McMahon, F. J. Neurocognitive functioning in euthymic patients with bipolar disorder and unaffected relatives: A review of the literature. Neurosci. Biobehav. Rev. 69, 193–215 (2016).

Jennett, B. & Teasdale, G. Wechsler D. A standardized memory scale for clinical use. J. Psychol.

Thiruselvam, I. & Hoelzle, J. B. Refined measurement of verbal learning and memory: Application of item response theory to California Verbal Learning Test—Second Edition (CVLT-II) learning trials. Arch. Clin. Neuropsychol. 35, 90–104 (2020).

Pomponio, R. et al. Harmonization of large MRI datasets for the analysis of brain imaging patterns throughout the lifespan. Neuroimage 208, 116450 (2020).

Radua, J. et al. Increased power by harmonizing structural MRI site differences with the ComBat batch adjustment method in ENIGMA. Neuroimage 218, 116956 (2020).

Wilde, E. A., Dennis, E. L. & Tate, D. F. The ENIGMA brain injury working group: Approach, challenges, and potential benefits. Brain Imaging Behav. Under Review, (2019).

Coryn, C., Hobson, K. & McCowen, R. Meta-analysis as a method of multi-site evaluation: An example from international development. Evaluat. J. Austral. 15, 4–14 (2015).

Cifu, D. X. & Dixon, K. J. Chronic effects of neurotrauma consortium. Brain Inj. 30, 1397–1398 (2016).

Schoenberg, M. R. et al. Test performance and classification statistics for the Rey Auditory Verbal Learning Test in selected clinical samples. Arch. Clin. Neuropsychol. 21, 693–703 (2006).

Benedict, R. H. B., Schretlen, D., Groninger, L. & Brandt, J. Hopkins verbal learning test—revised: Normative data and analysis of inter-form and test-retest reliability. Clin. Neuropsychol. 12, 43–55. https://doi.org/10.1076/clin.12.1.43.1726 (1998).

Toga, A. W. & Crawford, K. L. The Alzheimer’s Disease Neuroimaging Initiative informatics core: A decade in review. Alzheimers. Dement. 11, 832–839 (2015).

Schmidt, A. et al. Structural network disorganization in subjects at clinical high risk for psychosis. Schizophrenia Bull. https://doi.org/10.1093/schbul/sbw110 (2016).

Lundervold, A. J., Halleland, H. B., Brevik, E. J., Haavik, J. & Sørensen, L. Verbal memory function in intellectually well-functioning adults with ADHD: Relations to working memory and response inhibition. J. Atten. Disorders 23, 1188–1198. https://doi.org/10.1177/1087054715580842 (2019).

Petrovsky, N. et al. Sensorimotor gating is associated with CHRNA3 polymorphisms in schizophrenia and healthy volunteers. Neuropsychopharmacology 35, 1429–1439. https://doi.org/10.1038/npp.2010.12 (2010).

Broglio, S. P. et al. The natural history of sport-related concussion in collegiate athletes: Findings from the NCAA-DoD CARE consortium. Sports Med. 52, 403–415 (2022).

Logue, M. W. et al. Smaller hippocampal volume in posttraumatic stress disorder: A multisite ENIGMA-PGC study: Subcortical volumetry results from posttraumatic stress disorder consortia. Biol. Psychiatry 83, 244–253 (2018).

Kennedy, E. et al. Traumatic brain injury and early onset dementia in post 9–11 veterans. Brain Inj. 36, 620–627 (2022).

Nasir, N. S. & Hand, V. M. Exploring sociocultural perspectives on race, culture, and learning. Rev. Educ. Res. 76, 449–475 (2006).

Zopluoglu, C. EstCRM: An R package for Samejima’s continuous IRT model. Appl. Psychol. Meas. 36, 149 (2012).

Shojima, K. A noniterative item parameter solution in each EM cycle of the continuous response model. Educ. Technol. Res. Dev. 28, 11–22 (2005).

Samejima, F. Homogeneous case of the continuous response model. Psychometrika 38, 203–219 (1973).

Wang, T. & Zeng, L. Item parameter estimation for a continuous response model using an EM algorithm. Appl. Psychol. Meas. 22, 333–344 (1998).

Ferrando, P. J. Theoretical and empirical comparisons between two models for continuous item response. Multivar. Behav. Res. 37, 521–542 (2002).

Priestley, D. R. et al. Establishing ground truth in the traumatic brain injury literature: If replication is the answer, then what are the questions? Brain Commun. 5, fcac322 (2023).

Naim, M., Katkov, M., Romani, S. & Tsodyks, M. Fundamental law of memory recall. Phys. Rev. Lett. 124, 018101 (2020).

Barwegen, K., Resch, Z. J., Ovsiew, G. P., Jennette, K. J. & Soble, J. R. A-232 head-to-head comparison of the rey auditory verbal learning test effort score and forced choice embedded performance validity indicators among patients with and without verbal memory impairment. Arch. Clin. Neuropsychol. 37, 1388–1388 (2022).

Shapiro, A. M., Benedict, R. H., Schretlen, D. & Brandt, J. Construct and concurrent validity of the Hopkins Verbal Learning Test-revised. Clin. Neuropsychol. 13, 348–358 (1999).

Pugh, M. J. et al. Phenotyping the spectrum of traumatic brain injury: A review and pathway to standardization. J. Neurotrauma 38, 3222–3234 (2021).

Acknowledgements

Spanish Ministry of Science and Innovation, Instituto de Salud Carlos III: PI15-00852, PI18-00945, JR19-00024, PI17-00481, PI20-00721, Sara Borrell contract (CD19-00149); European Union: NextGenerationEU (PMP21/00051), PI19/01024, Structural Funds, Seventh Framework Program, H2020 Program under the Innovative Medicines Initiative 2 Joint Undertaking: Project PRISM-2 (Grant agreement No.101034377), Project AIMS-2-TRIALS (Grant agreement No 777394), Horizon Europe; National Institutes of Health: U01MH124639, P50MH115846, R01MH113827, R25MH080663, K08MH068540, R01NS100973, R01EB006841, P20GM103472, RO1MH083553, T32MH019535, R01 HD061504, RO1MH083553, R01AG050595, R01AG076838, R01AG060470, R01AG064955, P01AG055367, K23MH095661, R01MH094524, R01MH121246, T32MH019535, R01NS124585, R01NS122827, R61NS120249, R01NS122184, U54EB020403, R01MH116147, R56AG058854, P41EB015922, R01MH111671, P41RR14075, M01RR01066, R01EB006841, R01EB005846, R01 EB000840, RC1MH089257, U24 RR021992, NCRR 5 month-RR001066 (MGH General Clinical Research Center); NSF: 2112455; Madrid Regional Government: B2017/BMD-3740 AGES-CM-2; Dalhousie Medical Research Foundation; Research Nova Scotia, RNS-NHIG-2021-1931; US Department of Defense: Award # AZ150145; US Department of Veterans Affairs: 1I01RX003444; NJ Commission on TBI Research Grants: CBIR11PJT020, CBIR13IRG026: Department of Psychology, University of Oslo; Sunnaas Rehabilitation Hospital: HF F32NS119285; Canadian Institutes of Health Research: Grant 166098; Neurological Foundation of New Zealand; Canterbury Medical Research Foundation, University of Otago. Biogen US, Investigator-initiated grant; Italian Ministry of Health: RF-2019-12370182, Ricerca Corrente RC 23; National Institute on Aging: National Health and Medical Research Council, Investigator Grant APP1176426; PA Health Research: Grant SAP #4100077082 to Dr. Hillary; La Caixa Foundation, ID: 100010434, fellowship code: LCF/BQ/PR22/11920017; Research Council of Norway: 248238; Health Research Council of New Zealand: Sir Charles Hercus Early Career Development (17/039), 14-440; South-Eastern Norway Regional Health Authority, 2018076; Norwegian ExtraFoundation for Health and Rehabilitation: 2015/FO5146, 2015044; Stiftelsen K.G. Jebsen, SKGJ MED-02; German Research Foundation: DFG grant FOR2107 to Andreas Jansen, JA 1890/7-1, JA 1890/7-2, DFG grant FOR2107 to Igor Nenadić, NE2254/1-2, NE2254/3-1, NE2254/4-1, DFG grant FOR2107, KI588/14-1 and FOR2107, KI588/14-2, DFG, grant FOR2107 DA1151/5-1 and DA1151/5-2, SFB-TRR58, Projects C09 and Z02; Central Norway Regional Health Authority (RHA) and the Norwegian University of Science and Technology (NTNU); National Health and Medical Research Council: APP1020526; Brain Foundation, Wicking Trust, Collie Trust, Sidney and Fiona Myer Family Foundation. U.S. Army Medical Research and Materiel Command (USAMRMC): Award #13129004; Department of Energy: DE-FG02-99ER62764: Mind Research Network, National Association for Research in Schizophrenia and Affective Disorders: Young Investigator Award, Blowitz Ridgeway and Essel Foundations, NOW ZonMw TOP 91211021, UCLA Easton Clinic for Brain Health, UCLA Brain Injury Research Center, Stan and Patty Silver, Clinical and Translational Research Center: UL1RR033176, UL1TR000124; Mount Sinai Institute for NeuroAIDS Disparities, VA Rehab SPIRE, CDMRP PRAP, VA RR&D IK2RX002922, Veski Fellowship, Femino Foundation grant, Fundación Familia Alonso, Fundación Alicia Koplowitz, CIBERSAM, Madrid Regional Government (B2017/BMD-3740 AGES-CM-2), 2019R1C1C1002457, 21-BR-03-01, 2020M3E5D9079910, 21-BR-03-01, Interdisciplinary Center for Clinical Research (IZKF) of the medical faculty of Münster.

Author information

Author notes

A comprehensive list of consortium members appears at the end of the paper.

Contributions

Original data were collected by the members of the ENIGMA Clinical Endpoints Working Group. E.K., H.M.L., P.W.L., M.J.P., P.M.T., F.G.H., D.F.T., E.A.W., and E.L.D. conceived of the project. E.L.D. and H.M.L. compiled and cleaned the data. E.K. and S.V. completed the processing and analysis. E.K., H.M.L., P.W.L., and E.L.D. wrote the manuscript and all authors reviewed, edited, and approved the manuscript.

Ethics declarations

Competing interests

The authors declare no competing interests.

Ethical standards

The overall study was approved by the institutional review board of the University of Utah. This study was limited to secondary deidentified data analysis, and was designated as non-human subjects research.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Kennedy, E., Vadlamani, S., Lindsey, H.M. et al. Bridging big data in the ENIGMA consortium to combine non-equivalent cognitive measures. Sci Rep 14, 24289 (2024). https://doi.org/10.1038/s41598-024-72968-x

DOI: https://doi.org/10.1038/s41598-024-72968-x