Abstract

In recent years, social media has become an important topic in the field of public opinion analysis. Among social media, Microblog is one of the most important platforms because its posts are short, convenient, mobile and instantaneous. Social media microblog recognition reflects the attitudes, either positive or negative, of a very large population towards a specific incident, and it can be used for deriving competitive intelligence, designing marketing strategies, detecting depression and so on. However, existing methods usually use only text or only images from the internet and do not take advantage of their complementary information during recognition, which limits the performance and robustness of the algorithms. In this paper, we present a collaborate decision network (CDN) based on cross-modal attention that exploits the discriminative attributes of multiple modalities through a joint data- and knowledge-driven strategy, and thereby improves recognition performance. In addition, we collect and construct a visual-text microblog recognition dataset with 2854 samples to support subsequent research in related fields. Finally, experimental results on the collected dataset show the effectiveness and superiority of the proposed CDN.


Introduction

With the development of internet technology and social media platforms, the ways people communicate in their daily lives have changed1. As a new platform, Microblog has attracted great attention for sharing, disseminating, and obtaining information; the amount of content posted by its users is huge, and that content varies considerably2,3,4. Microblog recognition can help publishers adjust the content and type of their posts, and can help users quickly identify interesting and valuable content, yielding both economic benefits (deriving competitive intelligence and marketing strategies) and emotional benefits. Moreover, based on the content, social media microblog recognition can be used to detect whether the publisher suffers from depression5.

In practice, most social media microblog posts contain data of two modalities, visual and text, and the social media microblog recognition task aims to encode each image-text pair as a discriminative representation in order to classify its attributes. Even though single-modal and multimodal large language models have achieved significant performance on natural language processing and related tasks, their computational load and resource consumption are too heavy for processing massive and highly concurrent social media data. In addition, the lack of high-quality annotated social media microblog data has also hampered the development of related fields to a certain extent.

In this paper, we construct a lightweight collaborate decision network based on cross-modal attention and dynamical constraints for social media tweet recognition, in order to improve recognition performance and robustness. First, we collect microblog posts consisting of text and video by downloading them from the internet. Second, we preprocess the microblog content, including text cleaning, word segmentation, and stop-word removal. Third, we randomly choose different images from each video as the visual data. Finally, we design a feature representation network and a classifier to assign the microblogs to different categories.

In summary, the contributions of this paper are as follows:

1. We propose a multiscale channel shuffle block and use it to construct a lightweight visual representation network, which significantly reduces the parameter scale of the model and decreases the computational load.

2. We design a cross-modal attention fusion mechanism to exploit the complementary information between different modalities, and present an auxiliary constraint to improve the representation ability of the model.

3. We construct a large-scale social media tweet recognition dataset, which can provide effective support for the development of social media research and related fields.

The remainder of the paper is organized as follows: Section “Related works” briefly reviews existing methods for visual representation, text representation, and joint decision. Section “Proposed method” details the proposed method. Section “Experiment” reports the experiments. Section “Conclusion” concludes the paper.

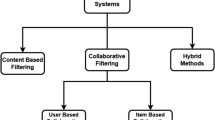

Related works

Visual representation

Visual representation learning aims to obtain a semantic visual embedding space, and approaches usually fall into two families: generative and discriminative. Generative methods assume that a model capable of capturing the image distribution will learn semantically relevant features6. In contrast, discriminative methods assume that better features can be obtained by distinguishing between images. This idea dates back to early work on metric learning7 and dimensionality reduction8, and is also explicit in supervised classification models9. Recently, Wu et al.10 proposed treating each image as a separate class and using augmented images as class instances to alleviate the need for human annotations. Subsequently, several papers simplified the method11 and proposed non-contrastive variants12. Even though these approaches have achieved significant performance, the efficacy of augmentation-based self-supervised learning has been questioned13. In response, many researchers began to use objectness14 and saliency15 to alleviate these concerns. Fang et al.16 proposed a vision-centric method to explore the limits of visual representation using publicly accessible data. To learn structural information in representations of objects and scenes, Ge et al.17 introduced a contrastive learning framework with different losses that encourage the model to learn different representations. Inspired by the way humans perceive an image, Song et al.18 proposed a semantic-aware autoregressive image modeling framework for visual representation learning. To balance high accuracy and high efficiency, Su et al.19 designed two types of convolutions that extract higher-order local differential information.

Text representation

Text representation is a key research topic in multi-modal research. It aims to transform natural language text into a vector representation that machines can understand and process. Common approaches include the following. Bag-of-words models treat text as an unordered collection of words and represent it by counting the frequency of each word or by using a weighting method. The term frequency-inverse document frequency (TF-IDF) method measures the importance of words in a text by combining their term frequency and document frequency. Word embedding maps a word to a continuous vector space, and common embedding models include Word2Vec, GloVe, and FastText. Embeddings from language models (ELMo)20 is a classical pre-trained language model proposed by the Allen Institute for Artificial Intelligence (AI2). In contrast to traditional fixed word vectors, ELMo introduces a contextual representation in which the word vector is dynamically adjusted based on contextual information. Unlike traditional language models such as ELMo, BERT21 is a bidirectional model that considers both the left and right context of words in sentences. This means that in the pre-training phase, BERT can better understand the context of words in a sentence, resulting in a richer semantic representation. Avrahami et al.22 presented a model for text-to-image generation with open-vocabulary scene control. To learn joint representations of unlabeled video and text, Zhu et al.23 introduced ActBERT, which uses global action information and an entangled transformer block.

Multi-modal joint decision

At present, decision making based on single-modal information is hindered by the incompleteness of that information. As a result, multi-modal joint decision strategies have become considerably more popular in recent years. Song et al.24 proposed a video-audio emotion recognition system that uses VGG16-Net and Mel frequency cepstral coefficients (MFCC) to extract video and audio features, taking advantage of the wealth of information in both video and audio to improve the successful classification rate. The study25 developed a short video recommendation method based on multi-modal data fusion that makes full use of the similarities and differences between modalities; it increases the model's understanding of user behaviour and improves short video recommendation. Ruan et al.26 proposed a framework for joint audio and video generation using a multimodal diffusion model; it uses a sequential multi-modal U-Net for the joint denoising process and delivers an engaging viewing and listening experience with high-quality realistic video. To bridge the cross-modal gap between text and image, Wang et al.27 presented a dual-path rare content enhancement network to address the long-tail problem. Xue et al.28 used multimodal information (images, language, and 3D point clouds) to learn a unified representation that alleviates the limitation of having only a small amount of annotated 3D data. In text-image person re-identification, Yan et al.29 introduced a fine-grained information excavation framework driven by contrastive language-image pretraining. For multimodal sentiment analysis, Wang et al.30 proposed a text enhanced transformer fusion network that achieves effective unified multimodal representations.

Proposed method

The overall framework of the proposed method is shown in Fig. 1. It contains three main components: a visual representation module, a text representation module, and a feature fusion module. Specifically, the visual representation module is a lightweight network based on the multiscale channel shuffle block; it adopts group convolution to reduce the number of model parameters and uses a channel shuffle strategy to share information among different groups. The text representation module is the bidirectional encoder representations from transformers (BERT) model, which can capture language information at different levels, from shallow syntactic features to deep semantic features. The feature fusion module is a cross attention module consisting of a cross attention layer and a self-attention layer, which can thoroughly mine the complementary information of the different modalities.

Fig. 1. The overall framework of the proposed method.

Visual representation module

Backbone network

To improve the efficiency of the model and reduce the risk of overfitting, we design a lightweight network named the multiscale shuffle convolution network (MSCN), based on the multiscale channel shuffle block (MSCB), to encode the visual data. Its inner architecture is shown in Table 1.

In Table 1, \(S_{no}\) denotes the serial number of the processing stage. \(M_{name}\) denotes the module name. \(R_{in}\) denotes the input resolution while \(R_{out}\) denotes the output resolution. \(n_M\) denotes the number of modules. \(n_F\) denotes the number of filters. K denotes the kernel size while S denotes the convolution stride. Conv and ReLU denote the regular convolution block and the ReLU activation function respectively. MSCB denotes the multiscale channel shuffle block, which is detailed in the next section.

Multiscale channel shuffle block

The inner architecture of the proposed multiscale channel shuffle block (MSCB) is shown in Fig. 2. The block can effectively extract information at different receptive fields while using significantly fewer parameters than a classical convolution.

Fig. 2. The inner architecture of the multiscale channel shuffle block.

Specifically, inspired by ShuffleNet31, we first partition the feature cube into n groups to reduce the parameter scale and computational load of the network, and apply convolution kernels of different sizes (\(k_{(1)}\) to \(k_{(n)}\)) to them to obtain features with various receptive fields, where \(k_{(i)}\) is defined as Eq. (1).

where n denotes the number of groups and \(\left\lfloor \cdot \right\rfloor\) denotes the round-down (floor) operator. Then we perform a channel shuffle operation on the feature cubes from the different groups to enhance the interactions among feature maps and improve the representation ability of the module. In addition, we use a \(1\times 1\) convolution layer to fuse the shuffled features into a common space. Finally, a residual connection is used to transmit information from one MSCB to the next.
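The grouping and shuffling steps described above can be illustrated with a small, framework-free sketch. It is not the authors' implementation: the channel count (64) and the kernel sizes `[1, 3, 5, 7]` are hypothetical stand-ins, since the actual kernel sizes come from Eq. (1), and the shuffle follows the standard ShuffleNet reshape-transpose-flatten pattern.

```python
from typing import List, Sequence


def channel_shuffle(channels: Sequence, groups: int) -> List:
    """ShuffleNet-style shuffle: view channels as (groups, per_group), transpose, flatten."""
    if len(channels) % groups != 0:
        raise ValueError("channel count must be divisible by groups")
    per_group = len(channels) // groups
    # Output position j * groups + g holds the j-th channel of group g.
    return [channels[g * per_group + j] for j in range(per_group) for g in range(groups)]


def conv_params(c_in: int, c_out: int, kernel: int) -> int:
    """Weight count of a bias-free 2D convolution with a square kernel."""
    return c_in * c_out * kernel * kernel


def multiscale_group_params(c_in: int, c_out: int, kernels: Sequence[int]) -> int:
    """Weight count when channels are split evenly into len(kernels) groups,
    each group using its own kernel size (hypothetical sizes, see lead-in)."""
    n = len(kernels)
    if c_in % n != 0 or c_out % n != 0:
        raise ValueError("channels must be divisible by the number of groups")
    return sum(conv_params(c_in // n, c_out // n, k) for k in kernels)


# Eight channels in four groups: neighbours after the shuffle come from different groups.
shuffled = channel_shuffle(list(range(8)), groups=4)

# Parameter comparison for 64 -> 64 channels (assumed sizes, for illustration only).
dense = conv_params(64, 64, 7)                                   # one dense 7x7 convolution
grouped = multiscale_group_params(64, 64, kernels=[1, 3, 5, 7])  # four groups, mixed kernels
fuse = conv_params(64, 64, 1)                                    # 1x1 fusion convolution
print(shuffled, dense, grouped + fuse)
```

Under these assumed sizes, the grouped multiscale convolution plus the \(1\times 1\) fusion layer needs far fewer weights than a single dense convolution with the largest kernel, which is the kind of saving the block is designed for.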

Text representation module

Because the pre-trained bidirectional encoder representations from transformers (BERT) model has achieved competitive performance on many natural language processing tasks, we use it to extract the features of the text modality. The inner architecture of the BERT model is shown in Fig. 3. It contains two layers of deep transformer modules and can effectively model the relationships and dependencies among the words in a sentence, which further improves its ability to capture semantic information.

Fig. 3. The inner architecture of the BERT model.

Specifically, the inner architecture of the transformer is shown in Fig. 4; it uses multi-head attention to improve its parallel processing ability and structural representation ability.

Fig. 4. The inner architecture of the transformer.

Feature fusion module

After obtaining the visual representation \({\mathbf{{f}}_v}\) and the text embedding \({\mathbf{{f}}_t}\), we design a novel cross attention module, consisting of a cross attention layer and a self-attention layer, to fuse them and obtain more complete information. In the cross attention layer, each modality can dynamically mask the other modality according to the confidence of its own input. The masked features of the two modalities are then fed forward to the self-attention layer, which determines what information should be transmitted to the next layer. The self-attention operator uses a fully connected layer to project \({\mathbf{{f}}_v}\) to a K-dimensional space, and \({\mathbf{{f}}_t}\) is likewise projected to a K-dimensional space; the definitions are formulated as Eq. (2).

where \(\mathrm{{ReLU}} \left( \cdot \right)\) denotes the ReLU activation operator. When one modality contains misleading information, \(\varvec{{\hat{f}}}_v\) and \({\varvec{\hat{f}}}_t\) cannot simply be combined without degrading performance unless an attention mechanism, such as common attention, is used. We therefore design a cross attention strategy, in which the attention map \({\alpha _v}\) of the visual modality depends entirely on the text feature \(\mathbf{{f}}_t\), while the attention map \({\alpha _t}\) of the text modality depends entirely on the visual feature \(\mathbf{{f}}_v\). The definitions of \({\alpha _v}\) and \({\alpha _t}\) are formulated as Eq. (3).

where \(\sigma \left( \cdot \right)\) denotes the sigmoid activation function, which is defined as Eq. (4).

After obtaining the visual mask \(\alpha _v\) and the text mask \(\alpha _t\), we enhance the visual and text representations as defined in Eq. (5).

Then we concatenate \({\varvec{\tilde{f}}}_v\) and \({\varvec{\tilde{f}}}_t\) into a new embedding and feed it to a two-layer fully connected neural network to obtain the final recognition score.
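The fusion steps described in this section can be sketched in plain Python as follows. This is a minimal illustration under stated assumptions rather than the authors' code: the projections are assumed to be ReLU-activated fully connected layers, each mask is assumed to be a sigmoid of a fully connected layer applied to the other modality's feature, and the enhancement is assumed to be an element-wise product. All weights, dimensions, and function names are hypothetical.

```python
import math
from typing import List, Tuple

Vector = List[float]
Matrix = List[List[float]]
Layer = Tuple[Matrix, Vector]  # (weight, bias) of a fully connected layer


def linear(weight: Matrix, bias: Vector, x: Vector) -> Vector:
    """Fully connected layer: weight @ x + bias."""
    return [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(weight, bias)]


def relu(x: Vector) -> Vector:
    return [max(0.0, v) for v in x]


def sigmoid(x: Vector) -> Vector:
    return [1.0 / (1.0 + math.exp(-v)) for v in x]


def cross_modal_fuse(f_v: Vector, f_t: Vector, proj_v: Layer, proj_t: Layer,
                     gate_v: Layer, gate_t: Layer) -> Tuple[Vector, Vector, Vector]:
    """Return (fused embedding, visual mask, text mask)."""
    f_v_hat = relu(linear(*proj_v, f_v))     # project visual feature to K dims
    f_t_hat = relu(linear(*proj_t, f_t))     # project text feature to K dims
    alpha_v = sigmoid(linear(*gate_v, f_t))  # visual mask depends only on the text feature
    alpha_t = sigmoid(linear(*gate_t, f_v))  # text mask depends only on the visual feature
    f_v_tilde = [a * h for a, h in zip(alpha_v, f_v_hat)]  # assumed element-wise masking
    f_t_tilde = [a * h for a, h in zip(alpha_t, f_t_hat)]
    return f_v_tilde + f_t_tilde, alpha_v, alpha_t         # list concatenation


# Toy inputs: a 3-d visual feature, a 2-d text feature, and K = 2 (all hypothetical).
f_v, f_t = [1.0, -2.0, 0.5], [0.3, 0.8]
proj_v = ([[0.2, 0.1, 0.0], [0.0, -0.3, 0.4]], [0.0, 0.1])
proj_t = ([[1.0, 0.0], [0.5, -0.5]], [0.0, 0.0])
gate_v = ([[1.0, -1.0], [0.0, 2.0]], [0.0, 0.0])
gate_t = ([[0.5, 0.0, 0.0], [0.0, 0.5, 0.0]], [0.0, 0.0])

fused, alpha_v, alpha_t = cross_modal_fuse(f_v, f_t, proj_v, proj_t, gate_v, gate_t)
print(len(fused), alpha_v, alpha_t)
```

Because each mask lies strictly between 0 and 1, a modality whose counterpart signals low confidence is attenuated rather than removed, and the concatenated vector is what a downstream classifier would consume.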

Collaborate decision

Optimization in training phase

To ensure the discriminability of each modality and emphasize the semantic attributes of the final representation, we construct a cost function guided by auxiliary constraints to optimize the proposed network. The loss function of the \(n_{th}\) training epoch is defined as Eq. (6).

where \(o_v\), \(o_t\), and \(o_f\) denote the recognition scores of the visual representation, text representation, and fused representation respectively. y denotes the label (positive or negative) of the corresponding visual and text data. \(\ell \left( \cdot \right)\) denotes the binary cross entropy function, which is defined as Eq. (7),

\(\xi _v\), \(\xi _t\), and \(\xi _f\) denote three dynamical weight coefficients used to balance the relative importance of the different loss terms, and they are defined in Eq. (8).
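As a rough illustration of how such an objective can be evaluated, the sketch below combines the standard binary cross entropy of the three branch scores with three weights. It assumes the overall loss is a weighted sum of the per-branch terms; the dynamical schedule of the weights from Eq. (8) is not reproduced, so the weights are plain arguments and the values used here are hypothetical.

```python
import math


def bce(o: float, y: int, eps: float = 1e-7) -> float:
    """Standard binary cross entropy for one score o in (0, 1) and a label y in {0, 1}."""
    o = min(max(o, eps), 1.0 - eps)  # clamp to avoid log(0)
    return -(y * math.log(o) + (1 - y) * math.log(1.0 - o))


def auxiliary_loss(o_v: float, o_t: float, o_f: float, y: int,
                   xi_v: float, xi_t: float, xi_f: float) -> float:
    """Assumed form: weighted sum of the visual, text, and fusion loss terms."""
    return xi_v * bce(o_v, y) + xi_t * bce(o_t, y) + xi_f * bce(o_f, y)


# Hypothetical scores for one positive sample and hypothetical fixed weights.
loss_good = auxiliary_loss(0.9, 0.8, 0.95, y=1, xi_v=0.25, xi_t=0.25, xi_f=0.5)
loss_bad = auxiliary_loss(0.4, 0.3, 0.45, y=1, xi_v=0.25, xi_t=0.25, xi_f=0.5)
print(loss_good, loss_bad)
```

Keeping a separate term for each branch means the visual and text encoders receive a direct training signal even when the fused score is already correct.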

Collaborate decision in testing phase

In the testing phase, the proposed network yields three recognition scores. We design a collaborate decision mechanism to select the most discriminative one as the final result, and the selection strategy is defined as Eq. (9).

where \(s_v\), \(s_t\), and \(s_f\) denote the scores from the visual branch, text branch, and fusion branch respectively.
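One possible reading of "most discriminative" is the score that lies farthest from the decision threshold. The sketch below implements that reading only; it is an assumption made for illustration and may differ from the exact rule in Eq. (9). The function name and the threshold of 0.5 are hypothetical.

```python
from typing import Tuple


def collaborative_decision(s_v: float, s_t: float, s_f: float,
                           threshold: float = 0.5) -> Tuple[str, float, int]:
    """Pick the branch whose score is farthest from the threshold (assumed rule).

    Returns (branch name, selected score, predicted label).
    """
    scores = {"visual": s_v, "text": s_t, "fusion": s_f}
    branch = max(scores, key=lambda name: abs(scores[name] - threshold))
    score = scores[branch]
    return branch, score, int(score >= threshold)


# The text branch is the most confident here, so its score decides the label.
result = collaborative_decision(0.55, 0.10, 0.60)
print(result)
```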

Experiments

This section reports the experiments, including dataset, evaluation metrics, and experimental results & analysis.

Dataset

As this is a new field, to the best of our knowledge there is no public social media microblog recognition dataset. We therefore construct a new dataset called the Chinese Microblog Recognition (CMR) dataset, in which all samples are collected from blog posts. Specifically, CMR contains 2854 video-text pairs, including many reports on hot social issues. In addition, the label of each sample is defined as Eq. (10).

where \(\textrm{I}\left\{ \cdot \right\}\) denotes the indicator function, which equals one when the condition is satisfied and zero otherwise. T denotes the total number of samples in the dataset. \({n^{like}}\), \(n^{respond}\) and \(n^{review}\) denote the number of likes, the number of responses, and the number of reviews respectively.

Evaluation metrics

We use precision (P), recall (R), and \(F_\beta\) to measure the performance of the different methods; they are defined in Eq. (11).

where \(n_{tt}\) denotes the number of samples whose true label and predicted label are both positive. \(n_p\) denotes the number of samples whose predicted label is positive, while \(n_t\) denotes the number of samples whose true label is positive. \(\beta\) is a hyperparameter that balances the relative importance of P and R, and \({\beta }^2\) is set to 0.3 in this paper.
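For concreteness, the metrics can be computed as in the following sketch, which uses the standard definitions \(P = n_{tt}/n_p\), \(R = n_{tt}/n_t\), and \(F_\beta = (1+\beta^2)PR/(\beta^2 P + R)\) with \(\beta^2 = 0.3\). The function name and the toy labels are hypothetical.

```python
from typing import Sequence, Tuple


def precision_recall_fbeta(y_true: Sequence[int], y_pred: Sequence[int],
                           beta_sq: float = 0.3) -> Tuple[float, float, float]:
    """Precision, recall, and F-beta for binary labels (1 = positive)."""
    n_tt = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    n_p = sum(1 for p in y_pred if p == 1)  # predicted positives
    n_t = sum(1 for t in y_true if t == 1)  # true positives in the ground truth
    precision = n_tt / n_p if n_p else 0.0
    recall = n_tt / n_t if n_t else 0.0
    denom = beta_sq * precision + recall
    f_beta = (1.0 + beta_sq) * precision * recall / denom if denom else 0.0
    return precision, recall, f_beta


# Toy example: 4 positives in the ground truth, 3 predicted positives, 2 of them correct.
p, r, f = precision_recall_fbeta([1, 1, 1, 1, 0, 0], [1, 1, 0, 0, 1, 0])
print(p, r, f)
```

With \(\beta^2 < 1\), the score weights precision more heavily than recall, so a method that produces few false positives is favoured.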

Experimental settings

The proposed method is implemented in the PyTorch framework, and input images are resized to 336\(\times\)336. The batch size is set to 8. The Adam optimizer with a weight decay of \(10^{-4}\) is used to train the model. The poly scheduler is used to adjust the learning rate, defined as Eq. (12).

where \(lr_{init}\) is set to \(5\times 10^{-4}\), power is set to 0.9, \(N_{epoch}\) is set to 50, and n denotes the epoch index in the training phase.
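The poly schedule is commonly written as \(lr = lr_{init} \times (1 - n/N_{epoch})^{power}\). A minimal sketch with the settings above follows; it assumes this common form and a zero-based epoch index, neither of which is confirmed by the text.

```python
def poly_lr(epoch: int, lr_init: float = 5e-4, n_epoch: int = 50,
            power: float = 0.9) -> float:
    """Poly learning-rate schedule (assumed common form, zero-based epoch index)."""
    if not 0 <= epoch <= n_epoch:
        raise ValueError("epoch must lie in [0, n_epoch]")
    return lr_init * (1.0 - epoch / n_epoch) ** power


# Learning rate at the start of every epoch of a 50-epoch run.
schedule = [poly_lr(n) for n in range(50)]
print(schedule[0], schedule[25], schedule[-1])
```

The rate starts at \(lr_{init}\) and decays smoothly towards zero, slightly faster than linearly at the end because power is below 1.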

Experimental results and analysis

Contrasting experimental results

In order to demonstrate the effectiveness and superiority of the proposed method, we conduct a series of experiments on the constructed CMR dataset, and quantitative results are shown in Table 2.

From Table 2 we can see that, in general, methods based on multiple modalities perform better than those based on a single modality. The reason is that neither the visual nor the text modality alone sufficiently reflects the true intentions of microblog publishers, and the complementary relationship between the two modalities contributes substantially to recognition performance. In addition, the proposed CDN surpasses all of the compared multi-modal methods. The main reasons are as follows: (1) the proposed network based on the multiscale channel shuffle block can thoroughly extract the discriminative information of the image, because it can be trained directly on the task-specific dataset and hence avoids the semantic gap between different datasets. (2) The cost function guided by auxiliary constraints in the training phase preserves the discriminability of the different modalities, and the decision strategy based on score selection obtains the most representative recognition result. It is noteworthy that the proposed CDN surpasses the state-of-the-art large multi-modal language model TinyLLaVA. This is because TinyLLaVA has a large number of parameters and the constructed dataset is not sufficient to fine-tune it, so the large model cannot adapt well to this specific task.

Scalability and computational efficiency illustrations

Scalability: In the proposed CDN, the image encoder (MSCN) is pretrained on ImageNet while the text encoder (BERT) is pretrained on a large-scale dataset, so the basic feature extraction ability of the model is guaranteed even though the constructed dataset contains only 2854 samples. When facing a larger or more diverse dataset, we can fine-tune the multi-modal classification network on that dataset to improve its performance.

Computational efficiency: In addition to its strong recognition performance, the proposed CDN achieves an inference speed of 285 FPS on an RTX3090 and 79 FPS on the RK3588 edge terminal. The proposed method is therefore highly efficient and suitable for processing large-scale microblog data on both central servers and edge terminals.

Conclusion

In this paper, we proposed a lightweight collaborate decision network based on cross-modal attention for social media microblog recognition. Specifically, we present a novel multiscale channel shuffle block to build the lightweight visual representation network, which offers both stronger feature extraction ability and lower computational load. Besides, we design a cross-modal attention mechanism to fuse the features from the visual and text branches, which can mine the complementary information of the different modalities, and use an auxiliary constraint to ensure the transparency of the different branches. In addition, we construct a large-scale social media tweet recognition dataset, which can effectively promote the development of related fields.

Data availability

The datasets generated and/or analysed during the current study are not publicly available due to the rules of our lab but are available from the corresponding author on reasonable request.

References

Islam, J., Akhand, M., Habib, M. A., Kamal, M. A. S. & Siddique, N. Recognition of emotion from emoticon with text in microblog using lstm. Adv. Sci. Technol. Eng. Syst. J. 6, 347–354 (2021).

Zhao, S., Gao, Y., Ding, G. & Chua, T.-S. Real-time multimedia social event detection in microblog. IEEE Trans. Cybern. 48, 3218–3231 (2017).

Arrigo, E. Deriving competitive intelligence from social media: Microblog challenges and opportunities. Int. J. Online Market. (IJOM) 6, 49–61 (2016).

Jia, Y., Liu, L., Chen, H. & Sun, Y. A chinese unknown word recognition method for micro-blog short text based on improved fp-growth. Pattern Anal. Appl. 23, 1011–1020 (2020).

Bi, Y., Li, B. & Wang, H. Detecting depression on sina microblog using depressing domain lexicon. In 2021 IEEE Intl Conf on Dependable, Autonomic and Secure Computing, Intl Conf on Pervasive Intelligence and Computing, Intl Conf on Cloud and Big Data Computing, Intl Conf on Cyber Science and Technology Congress (DASC/PiCom/CBDCom/CyberSciTech) 965–970 (IEEE, 2021).

Doersch, C., Gupta, A. & Efros, A. A. Unsupervised visual representation learning by context prediction. In Proceedings of the IEEE International Conference on Computer Vision 1422–1430 (2015).

Chopra, S., Hadsell, R. & LeCun, Y. Learning a similarity metric discriminatively, with application to face verification. In 2005 IEEE Computer Society Conference on Computer Vision and Pattern Recognition (CVPR’05), vol. 1 539–546 (IEEE, 2005).

Hadsell, R., Chopra, S. & LeCun, Y. Dimensionality reduction by learning an invariant mapping. In 2006 IEEE Computer Society Conference on Computer Vision and Pattern Recognition (CVPR’06), vol. 2 1735–1742 (IEEE, 2006).

Sharif Razavian, A., Azizpour, H., Sullivan, J. & Carlsson, S. Cnn features off-the-shelf: An astounding baseline for recognition. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition Workshops 806–813 (2014).

Wu, Z., Xiong, Y., Yu, S. X. & Lin, D. Unsupervised feature learning via non-parametric instance discrimination. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition 3733–3742 (2018).

Chen, T., Kornblith, S., Norouzi, M. & Hinton, G. A simple framework for contrastive learning of visual representations. In International Conference on Machine Learning 1597–1607 (PMLR, 2020).

Chen, X. & He, K. Exploring simple siamese representation learning. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition 15750–15758 (2021).

Newell, A. & Deng, J. How useful is self-supervised pretraining for visual tasks? In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition 7345–7354 (2020).

Mishra, S. et al. Object-aware cropping for self-supervised learning. arXiv preprint arXiv:2112.00319 (2021).

Selvaraju, R. R., Desai, K., Johnson, J. & Naik, N. Casting your model: Learning to localize improves self-supervised representations. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition 11058–11067 (2021).

Fang, Y. et al. Eva: Exploring the limits of masked visual representation learning at scale. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition 19358–19369 (2023).

Ge, S., Mishra, S., Kornblith, S., Li, C.-L. & Jacobs, D. Hyperbolic contrastive learning for visual representations beyond objects. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition 6840–6849 (2023).

Song, K., Zhang, S. & Wang, T. Semantic-aware autoregressive image modeling for visual representation learning. In Proceedings of the AAAI Conference on Artificial Intelligence, vol. 38 4925–4933 (2024).

Su, Z. et al. Lightweight pixel difference networks for efficient visual representation learning. IEEE Trans. Pattern Anal. Mach. Intell. (2023).

Ilić, S., Marrese-Taylor, E., Balazs, J. A. & Matsuo, Y. Deep contextualized word representations for detecting sarcasm and irony. arXiv preprint arXiv:1809.09795 (2018).

Devlin, J., Chang, M.-W., Lee, K. & Toutanova, K. Bert: Pre-training of deep bidirectional transformers for language understanding. arXiv preprint arXiv:1810.04805 (2018).

Avrahami, O. et al. Spatext: Spatio-textual representation for controllable image generation. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition 18370–18380 (2023).

Zhu, L. & Yang, Y. Actbert: Learning global-local video-text representations. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition 8746–8755 (2020).

Song, Y., Cai, Y. & Tan, L. Video-audio emotion recognition based on feature fusion deep learning method. In 2021 IEEE International Midwest Symposium on Circuits and Systems (MWSCAS) 611–616 (IEEE, 2021).

Li, H., Lin, J., Wang, T., Zhang, L. & Wang, P. A Personalized Short Video Recommendation Method Based on Multimodal Feature Fusion (Springer, 2022).

Ruan, L. et al. Mm-diffusion: Learning multi-modal diffusion models for joint audio and video generation. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition 10219–10228 (2023).

Wang, Y. et al. Dual-path rare content enhancement network for image and text matching. IEEE Trans. Circ. Syst. Video Technol. (2023).

Xue, L. et al. Ulip: Learning a unified representation of language, images, and point clouds for 3d understanding. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition 1179–1189 (2023).

Yan, S., Dong, N., Zhang, L. & Tang, J. Clip-driven fine-grained text-image person re-identification. IEEE Trans. Image Process. (2023).

Wang, D. et al. Tetfn: A text enhanced transformer fusion network for multimodal sentiment analysis. Pattern Recogn. 136, 109259 (2023).

Zhang, X., Zhou, X., Lin, M. & Sun, J. Shufflenet: An extremely efficient convolutional neural network for mobile devices. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition 6848–6856 (2018).

Zhou, B. et al. Tinyllava: A framework of small-scale large multimodal models. arXiv preprint arXiv:2402.14289 (2024).

Author information

Contributions

Y. Peng wrote the main manuscript text, J. Fang provided the motivation, and B. Li reviewed the manuscript.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Peng, Y., Fang, J. & Li, B. Collaborate decision network based on cross-modal attention for social media microblog recognition. Sci Rep 14, 25673 (2024). https://doi.org/10.1038/s41598-024-77025-1

DOI: https://doi.org/10.1038/s41598-024-77025-1