Abstract

Traditional mosquito identification methods, relied on microscopic observation and morphological characteristics, often require significant expertise and experience, which can limit their effectiveness. This study introduces a self-supervised learning-based image classification model using the Bootstrap Your Own Latent (BYOL) algorithm, designed to enhance mosquito species identification efficiently. The BYOL algorithm offers a key advantage by eliminating the need for labeled data during pretraining, as it autonomously learns important features. During fine-tuning, the model requires only a small fraction of labeled data to achieve accurate results. Our approach demonstrates impressive performance, achieving over 96.77% accuracy in mosquito image analysis, with minimized both false positives and false negatives. Additionally, the model’s overall accuracy, measured by the area under the ROC curve, surpasses 99.55%, highlighting its robustness and reliability. A notable finding is that fine-tuning with just 10% of labeled data produces results comparable to using the full dataset. This is particularly valuable for resource-limited settings with limited access to advanced equipment and expertise. Our model provides a practical solution for mosquito identification, overcoming the challenges of traditional microscopic methods, such as the time-consuming process and reliance on specialized knowledge in healthcare services. Overall, this model supports personnel in resource-constrained environments by facilitating mosquito vector density analysis and paving the way for future mosquito species identification methodologies.

Similar content being viewed by others

Introduction

The World Health Organization (WHO) underscores the pivotal role of entomological research in our fight against global arbovirus outbreaks, which currently afflict a staggering 80% of the world’s population, resulting in an estimated annual infection rate of 3.9 billion cases in over 129 countries1. In Thailand, mosquito-borne viruses, including Dengue, Chikungunya, and Zika, are responsible for causing human illnesses. Common symptoms associated with these viruses include high fever, rash, muscle pain, and joint pain. On average, around 50,000 cases of these viruses are reported each year in the country2. At the core of this conflict, the analysis at a microscopic level of the species of mosquitos that act as vectors takes center stage, serving as the fundamental basis for the identification of insects within the field of entomology. At the present time, our principal method of differentiation between species that are vectors and those that are not, as well as distinguishing local species from invasive ones, relies heavily on the careful observation of distinct physical characteristics of insects and the utilization of morphological keys3,4,5,6.

This approach serves as the foundation for evaluating the efficacy of prevention strategies, tailored to the mosquito species that may be classified as either epidemic or endemic. These methods are the established routines in insect identification, and they have traditionally been executed by well-trained and highly skilled personnel, typically belonging to the public health sector. This practice is widely regarded as the gold standard. However, as effective as these methods are, they come with significant drawbacks. They are undeniably time-consuming and demanding. The shortage of well-trained public health personnel further compounds the challenge. Not all individuals possess the requisite level of training, skill, and experience to perform these identifications accurately and efficiently. This shortfall in expertise not only hampers the speed of mosquito species determination but also adds to the overall cost of surveillance activities.

Alternative approaches to improve the accuracy of insect identification encompass both qualitative and quantitative methods like polymerase chain reaction (PCR)7, quantitative real-time PCR8, and DNA barcoding9. However, these techniques necessitate costly equipment and high-throughput technology. Furthermore, they can only be executed by professionals with specialized expertise in molecular biology. In this context, utilizing automated systems can help deliver consistently accurate results while minimizing the need for skilled labor.

The identification and surveillance of mosquito species are crucial for controlling arboviruses and protecting public health10,11,12,13,14. Traditional manual techniques struggle with large datasets and environmental factors. However, innovative technologies are transforming mosquito classification and surveillance. A previously designed trap with specialized feature extraction showed promise with small datasets, but larger volumes require an intelligent computer platform for effective species identification from images15. Another system using a neural network achieved 96% accuracy but faced challenges from environmental factors like temperature, wind, and insect size16. An infrared device showed 80% accuracy in identifying the genus and gender of Aedes aegypti, Aedes albopictus, and Culex quinquefasciatus17. Recently, the field system equipped with an optical sensor accurately distinguished target mosquitoes (from the Aedes and Culex genera) with a balanced accuracy of 95.5% and identified both the genus and sex of the mosquitoes with a balanced accuracy of 88.8%18. Despite these advances, using these tools remains complex11,12,13. Mosquito morphology can change during capture, and taxonomy management is challenging, even for expert entomologists. Another approach repurposed mobile phones to monitor mosquitoes using acoustic recordings. This method mapped mosquito distribution but had limitations in recording range and sound recorder positioning19,20,21.

The challenge of identifying and quantifying mosquito vectors has long been critical for public health and arbovirus control. Morphological taxonomy analysis through image visualization has led to innovative equipment and methodologies. This represents a major advancement in reliable mosquito vector identification. Addressing the limitations of neural networks, particularly with small datasets, a novel machine learning approach has been developed. This method leverages the distinctive wing shape and components of mosquitoes for detection, achieving accuracy rates ranging from 85 to 100%22. Machine learning algorithms, like the support vector machine (SVM)23 and artificial neural network (ANNs)24, combined with morphological keys, have achieved over 95% accuracy in classifying fruit fly species25,26. A recent study utilized machine learning techniques to detect sublethal effects of insecticide exposure on the motor behavior of Mediterranean fruit flies, with Random Forest and K-Nearest Neighbor algorithms achieving 71% accuracy27. However, the analysis lacked external stimuli, and complex behaviors like courtship were not evaluated.

Deep learning algorithms, particularly convolutional neural networks (CNNs), have significantly advanced in object detection and image recognition across various fields. Their success spans from detecting cyclists on roads to identifying agricultural pests and solving complex medical challenges28,29,30,31,32. This success highlights the potential of CNNs in entomology, especially for detecting and classifying mosquito vectors. In 2021, advancements demonstrated the potential of CNNs in mosquito vector identification. For instance, a high-performance CNN used images from a mobile phone to identify Aedes larvae, marking a significant step forward despite a misclassification rate over 30%33. Another study used the Google Net neural network to identify Aedes and Culex mosquitoes, achieving an accuracy range of 57% to 93%. Additionally, the study of mosquito wing beat frequencies has proven useful for species and gender classification34,35,36. In another field of entomology, a CNN-based model using the You Only Look Once (YOLO)37 network has been employed to detect and classify two similar tephritid pest species, such as the Mediterranean fruit fly and olive fruit fly, in real-time conditions. This CNN-based YOLO network achieved a 93% precision rate with a processing time of 2.2 milliseconds38. A recent study used a CNN-based YOLO network with a large annotated dataset of important insects achieved an average precision of 92.7% and a recall of 93.8% in detection and classification across species. When presented with uncommon or unclear insects not seen during training, the model successfully detected 80% of these individuals, often interpreting them as closely related species39. Although these advancements represent significant progress in entomology, they require substantial resources, deep expertise, and extensive labeling of large datasets—challenges that are critical to the effective application of these techniques.

Self-supervised learning (SSL) algorithms offer effective and efficient solutions in various domains. Jean-Bastien Grill introduced Bootstrap Your Own Latent (BYOL)40, a groundbreaking SSL concept. BYOL allows models to autonomously learn and adapt by using their own latent representations to enhance data understanding. It employs two neural networks, online and target, which learn from each other. The online network predicts the target network’s representation of the same image from a different augmented view, while the target network is updated using a slow-moving average of the online network. Unlike methods that rely on negative pairs41, BYOL achieves new performance benchmarks without them. A key strength of BYOL is its performance with small datasets. While SSL typically relies on large amounts of data for pre-training, BYOL excels by maximizing the use of limited data, making it ideal for tasks with impractical or unavailable large datasets. BYOL achieved 74.3% top-1 classification accuracy on ImageNet42, and 79.6% with a larger ResNet model43. In a recent study, Kar and Nagasubramanian applied self-supervised learning (SSL) to classify 22 agriculturally important insect pests. They utilized Nearest Neighbor Contrastive Learning of Visual Representations (NNCLR), BYOL40, and Barlow Twins44 for pre-training, and ResNet-18 and ResNet-50 for fine-tuning. The highest accuracy, 79%, was achieved with NNCLR pre-training and ResNet-50 fine-tuning, using only 5% of the annotated images from the whole dataset45. In a related work, Pinetsuksai proposed the BYOL algorithm for SSL-based object classification for human helminthic ova identification. The BYOL algorithm performed pre-training to learn the necessary features of human helminthic ova autonomously. The fine-tuning process utilized ResNet-101 with 10% of the labeled dataset from all datasets, achieving 95% accuracy and an area under the receiver operating characteristic curve (AUC) greater than 94%46.

In entomological research, innovative approaches are essential for overcoming data limitations and the need for technical expertise in mosquito classification. Bootstrap Your Own Latent (BYOL) has emerged as a promising solution, effectively addressing data scarcity by maximizing the use of available data, making it invaluable when extensive datasets are not available. Additionally, BYOL simplifies the classification process, making it accessible to practitioners with varying levels of technical expertise and democratizing mosquito classification. Moreover, methods such as deep neural networks, machine learning, deep learning, and AI are key to resolving challenges in entomology. These approaches enhance efficiency and address limitations, contributing significantly to improvements in the healthcare service sector47.

In this work, Bootstrap Your Own Latent (BYOL) is applied to mosquito classification. This innovative approach aims to address data limitations and technical challenges while advancing the understanding and combat of mosquito-borne diseases. The application of BYOL reveals its potential to revolutionize mosquito classification and contribute significantly to global public health efforts.

The primary aim of this research is to develop an innovative self-supervised learning approach for object classification, addressing the need for accurate and efficient species identification despite the limitations of small labeled datasets. This approach will be trained and tested using digital stereomicroscopy to ensure its practicality and effectiveness. The model’s performance will be rigorously evaluated with real-world mosquito data, validated through collaboration with experienced entomologists to ensure precision and reliability. In remote areas of Thailand, resource constraints hinder comprehensive molecular mosquito identification by the Offices of Disease Prevention and Control. They primarily rely on morphological keys and digital stereomicroscopy methods for species identification, with the expertise of public health personnel playing a crucial role in ensuring accurate surveillance of mosquito populations48. This research aspires to introduce a transformative tool that enhances our ability to classify mosquitoes, contributing to the broader goals of public health and virus control.

Architecture

Self-supervised learning (SSL)

The algorithms represent a powerful paradigm in machine learning. They are designed to address the problem of feature extraction and representation learning without the need for human-provided labels or annotations. This means that SSL algorithms can extract valuable information from large datasets that lack explicit class labels or annotations. The core idea behind SSL is depicted in Fig. 1, where the learning process consists of two main phases: self-supervised pre-training and downstream task application49.

The overall process of self-supervised learning (SSL), Self-Supervised Pre-Training in this phase and Downstream Tasks40.

Self-Supervised Pre-Training in this phase, a deep learning model is exposed to a pre-defined pretext task. The choice of the pretext task is crucial, as it should be carefully designed to encourage the model to learn meaningful features from the data. The term “pretext” here signifies that the task is constructed specifically to facilitate feature learning and is not the ultimate task the model will be used for. To enable the model to learn from unlabeled data, pseudo-labels are automatically generated based on attributes or transformations of the input data. These pseudo-labels serve as the supervisory signal for the pretext task. For instance, in computer vision, the pretext task could be predicting the rotation angle of an image, and the pseudo-labels might be the angles by which the images are rotated.

Transfer to Downstream Tasks Once the self-supervised pre-training phase is completed, the model has effectively learned valuable features from the unlabeled data. These features can be transferred to downstream tasks, which are typically the real tasks of interest, such as image classification, object detection, or natural language understanding. SSL particularly compelling is its ability to leverage extensive amounts of unlabeled data. This contrasts with supervised learning, where obtaining labeled data can be expensive and time-consuming. By training the model with the automatically generated pseudo-labels during the self-supervised pre-training phase, SSL algorithms can achieve promising results. They are capable of narrowing the performance gap between models trained with labeled data (supervised) and those trained with unlabeled data (self-supervised).

The success of SSL lies in its ability to generate generalizable features. These features are not tied to specific tasks but instead capture fundamental aspects of the data, making them applicable to a wide range of downstream tasks. This generalizability is a key advantage, as it allows the model to exhibit robust performance across different domains and applications. In essence, SSL represents a powerful strategy for making the most of the wealth of unlabeled data available, enabling machine learning models to learn valuable representations and perform well in various practical applications. In addition to the advantages of self-supervised learning (SSL) in extracting valuable features from unlabeled data, the downstream applications of SSL have a significant impact on addressing data limitations with labeled data. SSL offers a solution to this problem by allowing models to be pre-trained on large amounts of unlabeled data. The features learned during this pre-training phase are generalizable and can be fine-tuned for specific downstream tasks with limited labeled data. This fine-tuning process is considerably more data-efficient compared to training models from scratch with only the available labeled examples.

In summary, the downstream advantages of SSL extend beyond feature learning. They address the practical challenges of data limitations in supervised learning scenarios by enabling the transfer of knowledge from a broad range of unlabeled data sources, ultimately enhancing the adaptability and efficiency of machine learning models in real-world applications. This capability is particularly valuable in domains where labeled data is scarce or costly to obtain.

Residual networks (Resnet)

Residual Networks (Resnet) address the challenge of training very deep neural networks, where adding more layers often leads to increased training errors instead of improved performance. The Resnet architecture tackles this issue by using residual blocks with shortcut connections. Each residual block consists of several convolutional layers followed by batch normalization and ReLU activation. A building block defined as: \(x\) is the input to a residual block and \(F(x)\) represents the function learned by the convolutional layers, the output of the block is given by \(F\left(x\right)+x\). The key innovation is this shortcut connection that bypasses the convolutional layers and directly adds the block’s input \(x\) to its output \(F(x)\). This design allows the network to learn residual mappings (the difference between the input and the desired output) rather than the full transformation, simplifying the learning process. Resnet are structured by stacking these residual blocks into stages, with deeper models (such as ResNet-50 or ResNet-101) incorporating a bottleneck design to manage computational complexity. Specifically, a bottleneck block uses three convolutional layers instead of two: a 1 × 1 convolution to reduce dimensions, a 3 × 3 convolution for the main processing, and another 1 × 1 convolution to restore dimensions. This architecture enables effective training and improved performance for extremely deep networks, overcoming the degradation problem where adding more layers could otherwise worsen the model’s accuracy43.

In depth and complexity to cater to different levels of computational needs and task complexity in Fig. 2. Resnet-18, the simplest in the series, consists of 18 layers and employs basic residual blocks with two 3 × 3 convolutional layers, making it suitable for less complex tasks and faster training. Resnet-50 increases depth to 50 layers and incorporates the bottleneck design in its residual blocks, using a 1 × 1 convolution to reduce dimensionality, a 3 × 3 convolution for processing, and another 1 × 1 convolution to restore dimensions. This design strikes a balance between computational efficiency and performance. Resnet-101 builds on this with 101 layers, offering even greater depth and capacity for more complex tasks and datasets. Finally, Resnet-152, with its 152 layers, represents one of the deepest models, utilizing an extensive number of bottleneck blocks to achieve high performance on challenging tasks, though it demands more computational resources. Each model in the Resnet family enhances its predecessor’s capabilities, enabling increasingly sophisticated feature extraction and learning.

CNN networks used in this study. Networks architecture of Resnet-18, Resnet-50, Resnet-101, and Resnet-152.

Bootstrap your own latent (BYOL)

BYOL is a remarkable self-supervised learning framework, known for its simplicity and elegance. What sets it apart is its ability to learn powerful feature representations without the need for traditional negative sample pairs or a large batch size during training. Another remarkable aspect of BYOL is its autonomy. Unlike methods that rely on human expertise for feature engineering, BYOL allows algorithms to automatically glean feature information directly from the original data. This feature learning process saves considerable time and effort, as it removes the need for manual feature engineering. Moreover, BYOL excels at extracting high-quality feature representations, making it especially useful for handling massive datasets. These learned features contribute to models with superior generalization capabilities. In practical terms, this means that the feature representations obtained through BYOL can be applied to other tasks that share the same domain as the original dataset. To accomplish this, BYOL employs two interacting and mutually learning neural networks: the “online network” and the "target network." The online network consists of an encoder for feature extraction, a projector for feature projection, and a predictor for feature prediction. The target network, on the other hand, includes an encoder for feature extraction and a projector for feature projection. The training process of BYOL is detailed in Fig. 3, illustrating how these networks work together to create robust feature representations that can subsequently be utilized for various downstream tasks50.

BYOL’s architecture involves minimizing a similarity loss between \({q}_{\theta }\left({z}_{\theta }\right)\) and \(sq({z}_{\xi }{\prime})\), where \(\theta\) represents the trained weights, \(\xi\) denotes an exponential moving average of \(\theta\), and sg means stop-gradient. In the last of training only \({f}_{\theta }\) is retrain, while \({y}_{\theta }\) is utilized as the image representation. The BYOL process results in the creation of pre-trained weight files. These weight files contain valuable, informative data representations. These representations are acquired through an unsupervised learning approach, where the primary goal is to enhance the similarity between variously augmented iterations of the same image. BYOL integrates with various Resnet backbones, including Resnet-18, Resnet-50, Resnet-101, and Resnet-152, as illustrated in Fig. 2.

BYOL aims to learn a representation \({y}_{\theta }\) that can be utilized for various downstream tasks. This process involves two neural networks: the online network and the target network. The online network, characterized by a set of weights \(\theta\), consists of three components such as an encoder \({f}_{\theta }\), a projector \({g}_{\theta }\) and a predictor \({q}_{\theta }\) as illustrated in Fig. 2. The target network mirrors the structure of the online network but employs a distinct set of weights \(\xi\), It supplies regression targets to train the online network, with its parameters \(\xi\) being updated as an exponential moving average of the online parameters \(\theta\). Specifically, given a target decay rate \(\tau\) \(\in\) , the update is performed after each training step.

Two distributions of image augmentations, \(\mathcal{T}\) and \({\mathcal{T}}{\prime}\), are used to generate two augmented views \(v\triangleq t(x)\) and \({v}^{{\prime} }\triangleq {t}{\prime}(x)\) , where \(t \sim \mathcal{T}\) and \({t}{\prime} \sim {\mathcal{T}}{\prime}\) are the respective augmentations applied. From the first augmented view \(v\), the online network produces a representation \({y}_{\theta }= {f}_{\theta }(v)\) and a projection \({z}_{\theta }= {g}_{\theta }({y}_{\theta })\). The target network, from the second augmented view \({v}{\prime}\), outputs \({y}_{\xi }{\prime}= {f}_{\xi }({v}{\prime})\) and the target projection \({z}_{\xi }{\prime}= {g}_{\xi }({y}_{\xi }{\prime})\) . The online network then predicts \({q}_{\theta }({z}_{\theta })\) based on \({z}_{\xi }{\prime}\), and both \({q}_{\theta }({z}_{\theta })\) and \({z}_{\xi }{\prime}\) are \({\mathcal{L}}_{2}\)-normalized: \(\overline{{q }_{\theta }}\left({z}_{\theta }\right)\triangleq {q}_{\theta }({z}_{\theta })/ ||{q}_{\theta }\left({z}_{\theta }\right)|{|}_{2}\) and \({\overline{z} }_{\xi }{\prime}\triangleq {\overline{z} }_{\xi }{\prime}/ ||{\overline{z} }_{\xi }{\prime}|{|}_{2}\). It is important to note that this predictor is only applied to the online branch, creating an asymmetry between the online and target pipelines. Finally, the mean squared error between the normalized predictions and target projections is defined as the following equation.

Symmetrize the loss \({\mathcal{L}}_{\theta , \xi }\) in Eq. 2 by separately feeding \({v}{\prime}\) to the online network and \(v\) to the target network to compute \({{\widetilde{\mathcal{L}}}_{\theta , \xi }}\). During each training step, we perform a stochastic optimization step to minimize \({\mathcal{L}}_{\theta , \xi }^{BYOL}= {\mathcal{L}}_{\theta , \xi }+ {\widetilde{\mathcal{L}}}_{\theta , \xi }\) with respect to \(\theta\) only, and not \(\xi\), as indicated by the stop-gradient in Fig. 2. BYOL’s dynamics are summarized as follows.

The optimizer is an optimizer, and \(n\) is the learning rate. At the end of the training process, we retain only the encoder \({f}_{\theta }\)

Materials and method

Experimental design

This study explores the effectiveness of self-supervised learning (SSL) compared to supervised learning (SL) models, with SL models serving as the baseline. Two experiments were conducted.

Experiment 1: Comparison of Pre-trained (SSL) vs. Non-pre-trained (SL) Models.

-

Objective: To evaluate whether SSL pre-training, using weights obtained from the BYOL process, improves model performance compared to baseline SL models.

-

Method: Compare the performance of individual representative models fine-tuned with SSL pre-trained weights against baseline SL models trained from scratch. This comparison aims to assess the impact of SSL pre-training on model performance.

Experiment 2: Efficiency of SSL with Limited Labeled Data.

-

Objective: To determine the optimal amount of labeled training data needed for effective fine-tuning and to assess the efficiency of SSL in scenarios with limited labeled data.

-

Method: Fine-tune representative models using only 5% and 10% of the labeled training data. Compare the performance of these SSL fine-tuned models with baseline SL models trained with 100% of the labeled training data. This will help evaluate how SSL performs with minimal labeled data compared to traditional SL approaches.

The aim of these experiments is to provide valuable insights into the advantages of SSL over conventional SL techniques, particularly in terms of performance improvement and data efficiency.

Dataset preparation

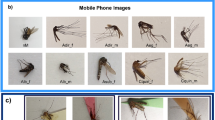

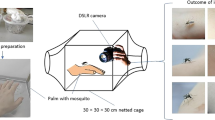

This study utilized archived mosquito species identified by expert entomologists, who employed taxonomic keys based on morphological characteristics for the identification process3,51. These species included Aedes aegypti (Ae. aegypti) and Aedes albopictus (Ae. albopictus), which serve as primary vectors for the transmission of dengue, Zika, and Chikungunya viruses. Additionally, Anopheles dirus (An. dirus), Anopheles maculatus (An. maculatus), Anopheles minimus (An. minimus_A), and Anopheles harrisoni (An. minimus_C) were examined as vectors for human malaria. Furthermore, Culex species, particularly Culex quinquefasciatus, were identified as key vectors responsible for the transmission of Japanese encephalitis (JE) and filariasis. We placed meticulous focus on the preparation of our mosquito species dataset, which comprises images captured through stereomicroscopy and meticulously categorized into 7 distinct mosquito species Ae.aegypti, Ae.albopictus, An.dirus, An.mac, An.minimus_A, An.minimus_C, and Cx.spp. To ensure the utmost precision in representing these mosquito species’ features, the images, obtained via stereomicroscopy, were standardized to a resolution of 1024 × 1080 pixels see in Fig. 4.

Sample categories of 7 Mosquito species dataset.

Dataset preparation unfolded systematically, encompassing the following key steps.

-

Pre-training Dataset, the dataset’s inception involved the assembly of a comprehensive collection of 1863 unlabeled mosquito images. This foundational dataset was instrumental in priming our pre-training model’s understanding of mosquito features. To achieve this, we employed the method for self-supervised learning pre-training (Fig. 5).

-

Training Dataset, the dataset was further enriched with an additional 1863 labeled mosquito images, which served as the training data for supervised learning (SL) model.

-

Fine-tuning Dataset, for fine-tuning in self-supervised learning, we thoughtfully selected a subset representing only 5% and 10% of the Training Dataset. Each image within this subset was meticulously categorized into one of the seven distinct mosquito classes. This fine-tuning process was essential for enabling self-supervised learning to achieve precise species identification.

-

Validation Dataset, to assess and validate the model’s performance during training, we carefully curated a validation dataset comprising 350 labeled images. These images were deliberately distinct from both the pre-training and fine-tuning datasets and played a pivotal role in monitoring the model’s progress and fine-tuning hyperparameters.

-

Testing Dataset, to comprehensively evaluate the performance of our proposed model, we established a robust testing dataset comprising 700 labeled images. This dataset was maintained separately from the training and validation datasets and served as the ultimate benchmark for assessing the model’s classification accuracy, precision, recall, F1-score, and other critical performance metrics.

Image dataset in supervised learning (SL), self-supervised leaning (SSL) and fine-tuning processes.

The meticulous preparation of these distinct datasets, including pre-training, fine-tuning for self-supervised learning, and the incorporation of separate validation and testing sets, forms the unshakable foundation underpinning the reliability and robustness of our study. Furthermore, this dataset not only advances the field of mosquito species classification research but also serves as a valuable resource for future studies in the realms of entomology and self-supervised learning.

Image augmentation

BYOL leverages augmentations to enhance the robustness of models by creating multiple diverse views of the same image through transformations. For training BYOL, image augmentation is essential [35]. The augmentation process starts with a random crop of the input image to a size of 224 × 224 pixels, followed by a random horizontal flip. This is complemented by color distortion, which includes random adjustments in brightness, contrast, saturation, and hue, as well as an optional grayscale conversion. The process is completed with Gaussian blur to further enhance the model’s robustness.

Self-supervised learning configuration and model training

In pre-training dataset, we employed the BYOL (Bootstrap Your Own Latent) algorithm for self-supervised learning to optimize model performance. We utilized Resnet-18, Resnet-50, Resnet-101, and Resnet-152 as backbone models. Subsequently, we assessed the quality of the pre-trained weight files by employing The Uniform Manifold Approximation and Projection (UMAP). UMAP visualization provided insights into the clustering of data points (Fig. 6), with well-defined clusters within each class signifying the effectiveness of our pre-training approach, which has the potential to enhance subsequent performance in downstream tasks.

In the workflow of BYOL training process, the unlabeled dataset underwent diverse augmentations. The online network utilized Resnet to convert the image into a vector, followed by dimension reduction through a Multi-Layer Perceptron (MLP). Subsequently, the network represented itself, calculated and compared the loss with the target network, and underwent backpropagation in another iteration. The target network, employing different augmented images, followed a similar process without backpropagation. Instead, the network parameters were updated via Exponential Moving Average (EMA), copying parameters from the online network to update the target network. Finally, the loss was obtained for comparison. Post obtaining the pre-trained weights, the model’s quality was assessed using UMAP, which visualizes the clustering of data points representing image vector representations.

The Solo-learn library proves to be an invaluable resource for machine learning enthusiasts, offering an array of self-supervised learning algorithms. When combined with Nvidia DALI, a robust data management tool, it becomes an efficient solution for handling data input, streamlining preprocessing pipelines, and managing hyperparameters. This powerful partnership significantly expedites computations, enabling swift model training and inference. This, in turn, creates an optimal environment for deploying self-supervised learning techniques52, resulting in superior learning representations, and enhanced overall performance. In Table 1, we present a comprehensive overview of the training conditions used for our self-supervised learning experiments.

For model training, hyperparameters are configured based on default settings from existing studies40 and adjusted (batch size and learning rate) for computational resources, specifically an AMD EPYC 7742 CPU, an Nvidia DGX-A100 40 GB GPU, and RAM 32 GB. Nvidia DALI is utilized for efficient data handling52, ensuring swift data loading and preprocessing to minimize bottlenecks and enhance training speed. A batch size of 64 is chosen to strike a balance between computational efficiency and model stability, while a learning rate of 0.125 is set to ensure effective learning. The tau base is set to 0.99 and the tau final to 1.00, which manage the rate of weight updates in the target network; a high tau base provides stability, and a tau final of 1.00 ensures synchronization by the end of training. The LARS (Layer-wise Adaptive Rate Scaling) optimizer adjusts learning rates per layer, accommodating large batch sizes and improving convergence [48]. Training is scheduled for up to 6000 epochs to allow ample learning time. These hyperparameters are carefully tuned to achieve an optimal balance between training efficiency, model stability, and generalization performance.

In the loss context of BYOL, we compute the loss using the mean squared error, which is determined by calculating the difference between the L2 normalized representations of the online and target networks (as illustrated in Fig. 3)

To assess the training progress, we analyze the training loss graph, enabling us to gauge whether the model has reached saturation. As seen in Fig. 7, the trend line exhibits an initial increase during the first 3000 epochs, followed by a period of loss decreasing between 3000 and 4000 epochs, indicating that the training had not yet reached saturation. Subsequently, we observed the model achieving a stable state during the training phase, specifically between 4000 and 6000 epochs. This observation proved pivotal in guiding our determination of the optimal training duration (refer to Fig. 7).

The training loss graph of BYOL utilized Resnet-18, Resnet-50, Resnet-101, and Resnet-152 as backbone models.

For dimensionality reduction, we employed UMAP to investigate whether the pre-trained model could proficiently separate and cluster data points according to their respective classes.

The UMAP analysis of Resnet-18, Resnet-52, Resnet-101, and Resnet-152 assesses their data clustering effectiveness. Resnet-52 excels by exhibiting denser data clustering compared to the other models (Fig. 8), suggesting a comparable level of proficiency in feature detection and classification.

Performed UMAP embedding for Resnet-18, Resnet-50, Resnet-101, and Resnet-152 models.

Downstream

For Supervised Learning, we initially utilized the entire supervised learning dataset to train supervised learning model. Additionally, we divided supervised learning dataset into 5% and 10% of the data, which corresponded to 20 and 40 images, respectively. These subsets were used to train separate self-supervised learning models. To validate our well-trained models, we leveraged validation dataset, and for testing, we employed testing dataset.

Simultaneously, we engaged in the downstream process using fine-tuning dataset, which featured proportions similar to those subsets from supervised learning dataset (Fig. 9). We fine-tuned Resnet 18, Resnet 50, Resnet 101, and Resnet 152 models with pre-trained weights obtained from BYOL. Alongside fine-tuning dataset, we utilized validation dataset for model validation and testing dataset to assess the performance of our fine-tuned models.

In the downstream training workflow, a small sized labeled dataset comprising only 5% or 10% of the overall dataset is utilized for fine-tuning the pre-trained model. This involves incorporating the pre-trained model into a traditional supervised learning model to enhance the performance of the classification model. Subsequently, the self-supervised learning results, based on the classification model, demonstrate highly effective classification of mosquito images using only a minimal 5% or 10% labeled dataset. Model performance is evaluated through the use of a confusion matrix.

Comparing the results obtained from the supervised learning (SL) models and the fine-tuned models allowed us to gain valuable insights into the effectiveness of our approach. This comparison aided us in determining the optimal learning conditions and the appropriate amount of training data required for our tasks. Our experimental setup included a batch size of 64, the utilization of cross-entropy loss, an Adam optimizer, a learning rate set at 0.0001, and a maximum of 500 epochs for training our models.

Evaluation metrics

The performance of the meticulously trained model, which consistently achieved a low loss throughout the training process, was assessed by classifying mosquitoes within the test Validation dataset in Fig. 10.

Compare the training accuracy and loss of Resnet 18, Resnet 50, Resnet 101, and Resnet 152 models trained with 5% and 10% training set in self-supervised learning against models trained with a 100% training set in supervised learning.

The performance of the models was assessed using confusion metrics. Evaluation of statistical metrics, which encompass precision, recall, specificity, F1 score, and overall accuracy, was conducted utilizing the information derived from the confusion matrix table. This matrix yielded data for true positives (TP), true negatives (TN), false positives (FP), and false negatives (FN). With these values at our disposal54, we were able to calculate a variety of statistical metrics such as.

Tp, Tn, Fp, and Fn represent true positive, true negative, false positive, and false negative counts in binary classification. “Actual positive” is the sum of true positives and false negatives, while “Actual negative” is the sum of true negatives and false positives. Evaluating a mosquito classification model involves analyzing precision, recall, specificity, F1 score, and overall accuracy metrics to gauge its performance. Precision measures the accuracy of positive predictions, ensuring that identified mosquitoes are correctly classified, which is crucial for avoiding misidentifications. Recall indicates the model’s ability to detect all relevant instances, ensuring that most mosquitoes are identified, which is essential for comprehensive monitoring. The F1-score balances precision and recall, providing a single metric that reflects overall effectiveness when both false positives and false negatives are important.

All performance metrics for the proposed model are derived from the confusion matrix. The prediction scores for each class are calculated based on the number of correctly identified images retrieved from the nearest images in the trained database, expressed as percentages. The class with the highest score is selected as the predicted class for the query image. Subsequently, the number of correctly identified images in the testing dataset is gathered to construct the confusion matrix.

The receiver operating characteristic (ROC) curve is a binary classification performance metric that plots the True Positive Rate (Sensitivity) against the False Positive Rate (1-Specificity). ROC curve was generated with a 95% confidence interval, and the area under the curve (AUC) was calculated to evaluate the model’s accuracy. The ROC analysis was conducted using the Scikit-Learn library in Python.

Result

Model performance by confusion matrix tables

All statistics in the confusion matrix are essential for assessing the performance of the proposed model. The prediction scores for each class are calculated by converting the number of correctly identified images from the nearest images in the trained dataset into percentages. The class with the highest score is chosen as the predicted class for the query image. Furthermore, the confusion matrix is constructed using the corrected images from the testing dataset.

In the Materials and Methods section, we utilized a dataset of 7 mosquitoes that were labeled through a fine-tuned process involving a classification model with pre-trained weights. The performance of our trained models was evaluated using a confusion matrix table in Fig. 11, where a distinct diagonal pattern, progressing from left to right, represents a notable concentration of true positive (TP) values for each model. It is noteworthy that, while supervised learning (SL) approaches exhibit a more robust TP pattern than self-supervised learning (SSL) methods, SSL operates with a significantly reduced training dataset, underscoring its innovative nature. Our findings suggest that the SSL-based Resnet 50 model stands out as the top performer in terms of overall performance.

Compare the performance of different SSL Resnet models using confusion matrix tables and make a comparison between SSL and SL models.

Supervised learning (SL) initially outperforms self-supervised learning (SSL), but a nuanced comparison is imperative. Notably, SSL models trained on only 5% and 10% of the data showcase a remarkable reduction in false negatives (FN) and false positives (FP). However, it’s worth highlighting that within the self-supervised learning domain, the performance of Resnet models trained on limited data exhibits sporadic outcomes. In Table 2, with a mere 10% of the data, the Resnet 50 model shines, achieving outstanding statistical metrics, including a 96.77% accuracy, 98.28% specificity, 88.58% F1 score, 87.77% recall, and 89.47% precision. This underscores the model’s robustness in the face of limited training data challenges. Given the inherent constraints associated with mosquito data and the intricacies of label accuracy arising from technical complexities, the judicious use of only 5% of the training data emerges as a highly effective strategy. This strategy yields impressive performance metrics, including a 96.20% accuracy, 97.94% specificity, 86.55% F1 score, 85.71% recall, and 87.4% precision. These findings highlight the growing potential of SSL techniques in addressing open datasets, typically characterized by limited labeling.

Gauging the performance of various models

The area under the ROC curve (AUC) that was calculated demonstrates the overall accuracy of the developed model.

As described earlier, the AUC results indicate the performance of SSL Resnet 50 and SSL Resnet 152 models on 10% of the training data. These models excelled with an AUC exceeding 99%, showcasing superior performance compared to other SSL models. In particular, SSL Resnet 50 demonstrated the highest AUC values (AUC = 99.5%) among the SL models, while SSL Resnet 152 exhibited AUC values (AUC = 99%) closely matching those of the other SL models (refer to Fig. 12). Notably, with just 5% of the training data and a small network layer in Resnet 18, the AUC reached an impressive 96.8%. This strong AUC score underscores the model’s suitability for real-world applications, a conclusion reinforced by the findings in Fig. 11. Despite employing small neural network layers in the Resnet 18 model trained with dataset (5%), the AUC yielded results comparable to the SL model in term of application. This observation underscores the superiority of the SSL technique over the SL model.

Left: ROC curves are valuable for evaluating the overall performance of both supervised and self-supervised models in terms of accuracy. Right: Comparing AUC values between self-supervised learning (SSL) and supervised learning (SL) models, with a focus on assessing the performance of models trained with limited labeled data (5% and 10%).

Discussion

Several studies in entomology have investigated the application of machine learning and deep learning techniques, with particular emphasis on the efficiency of self-supervised learning (SSL) algorithms. Nazir et al. employed Artificial Neural Networks (ANN) and Random Forest (RF) to study fruit fly host preferences, though their methods required large labeled datasets24. Salifu et al. focused on fruit fly morphometrics using Support Vector Machines (SVM) and ANN, achieving an accuracy of 95–96%. However, their approach necessitated further feature selection25. Similarly, Ling et al. utilized Random Forest for analyzing wing venation patterns, attaining a high F1-score (0.89), but their method involved complex preprocessing due to limited sample sizes26. Remboski T’s work on insect classification using SVM heavily relied on manual segmentation23, whereas our model simplified this process by using whole-body images. Additionally, Manduca G’s study on insect motor anomalies utilized Random Forest and K-Nearest Neighbors (KNN), achieving 71% accuracy, but required highly precise labeled data for numerous features27. Motta et al. employed supervised learning-based classification models such as LeNet, AlexNet, and GoogleNet for mosquito classification15, while our self-supervised learning model demonstrated a significant improvement over these existing models. Park et al. employed supervised learning using the VGG-16 model on a fully labeled, large-scale dataset of mosquitoes, attaining a high level of classification accuracy55. Although existing models demonstrated performance comparable to ours on larger datasets, our model showed marked improvement when trained on a smaller labeled dataset, maintaining a high degree of accuracy. In contrast, the Bootstrap Your Own Latent (BYOL) framework autonomously learns features during pretraining, significantly reducing the reliance on labeled data and extensive preprocessing. In our research, the proposed model achieved a 96.77% accuracy and an F1-score of 0.88 without the need for intensive preprocessing. The self-supervised learning (SSL) approach, capable of learning from minimal labeled data, offers a promising alternative for research with limited datasets. Our findings indicate that the proposed model, trained on only 10% labeled data (40 images per class), outperforms existing models15,55,56. The model exhibits an impressive accuracy of over 96% in accurately identifying the genus and species of mosquito vectors. A distinctive feature of our approach is its adaptability to small datasets, as it requires merely 5% (20 images) and 10% (40 images) of labeled datasets for effective training. Notably, the results of training on these smaller datasets using self-supervised learning are comparable to those of models trained on fully labeled datasets through traditional supervised learning methods, as well as the previously established model15,55,56. This capability of our model addresses the prevalent challenges associated with the scarcity of adequately trained personnel, particularly within the public health sector, by eliminating the necessity for extensive labeled datasets. The utilization of BYOL (Bootstrap Your Own Latent) in this study has proven to be instrumental in the extraction of intricate features from mosquito images. Furthermore, the model incorporates a clustering mechanism to organize these features, enabling the accurate classification of mosquito vectors into their respective genera and species. Subsequently, the knowledge gained is seamlessly transferred to a Resnet representation classification model, enhancing the overall classification process.

Although our well-trained self-supervised learning model faces limitations due to the extensive features extracted and the batch size parameter, these factors affect its ability to effectively discern crucial and sensitive features. A significant concern in our study was the limited computational resources available. Although larger batch sizes like 512, 1024, 2048, and 4096 could potentially boost performance compared to 6440, our computational constraints compelled us to not use the largest. We optimized training by running the BYOL model for 6000 epochs to achieve the best results within our resource limitations. The confusion matrix in Fig. 10 demonstrates the performance of our self-supervised learning model based on ResNet-50, trained with only a 10% labeled dataset. We observed a higher rate of false negatives, especially in the An.minimus A class, highlighting the challenge of accurately classifying this mosquito species. This issue may stem from our limitation, where the limited representation of An.minimus A instances in the dataset affects the model’s ability to learn distinguishing features. To address this, to overcome the limitations imposed by batch size during the training process and achieve a larger parameter for breakthroughs, we propose a strategic approach. By strategically eliminating non-essential feature areas and focusing solely on the crucial features of the mosquito body within the image, we can significantly reduce the size of the image while ensuring that essential features are retained. To enhance and integrate with other models to boost the performance of Self-Supervised Learning (SSL), we propose focusing on the architecture of the pre-training process using the Dinov257 algorithm. Dinov2 is a pre-trained model developed with the large LVD-124 M dataset and fine-tuned with ViT-S, ViT-B, and ViT-L57 architectures on a fraction of the entire labeled dataset in the downstream process. Pre-training offers several advantages: it leverages extensive and diverse datasets to capture generalized features, which can then be fine-tuned with a smaller labeled dataset, improving model performance and reducing the need for large amounts of labeled data. According to Pinetsuksai N’s study on developing SSL with Dinov2-distilled models for parasite classification in screening58, Dinov2 was applied in the pre-training process using only a fraction of the labeled dataset (1% and 10%) of parasite images. This was compared with the BYOL algorithm to determine which approach yields better performance. The study found that using Dinov2 with ViT-L and 10% of the labeled dataset achieved the highest model performance. Specifically, Dinov2 outperformed BYOL (with ResNet-50) on several metrics: accuracy increased from 97.70% to 99.00%, recall improved from 78.50% to 93.70%, precision rose from 92.30% to 95.20%, specificity grew from 99.20% to 99.90%, and the F1 score advanced from 84.90% to 94.30%, all with only 10% of the labeled dataset. These improvements highlight the advantage of pre-training: it allows models to achieve high performance even with limited labeled data, making it a powerful approach for tasks such as mosquito species identification. Integrating Dinov2 could thus significantly enhance our model’s performance, leveraging the benefits of pre-training to optimize results. This optimization strategy allows us to maintain the necessary features for accurate classification while mitigating the computational burden associated with large feature vectors. By doing so, we aim to enhance the efficiency and effectiveness of our training process. In another way, efforts could focus on enhancing the dataset’s diversity for this specific class, exploring feature representation improvements, and considering fine-tuning strategies or ensemble approaches to mitigate false negatives and improve overall classification performance.

In real-world applications, implementing models across diverse hardware platforms, such as mini-PCs, edge computing devices, and mobile phones, presents challenges in scaling down models to fit resource constraints while maintaining performance. Wei Niu’s CADNN59 addresses these challenges by optimizing ResNet-50 for mobile devices using several techniques. CADNN enhances integration by fusing layers (e.g., Convolution, BatchNorm, Activation) into larger computation kernels, which improves memory performance and SIMD utilization. For 1 × 1 convolution filters, CADNN converts these operations into matrix multiplications to further enhance memory and SIMD efficiency. It also refines memory layout and load operations with techniques like tiling, alignment, and padding, and reduces redundant memory loads. Additionally, CADNN tunes optimization parameters by pruning sub-optimal configurations and using compiler transformations to generate efficient computation kernels. Collectively, these strategies enhance ResNet-50’s performance on mobile devices, addressing memory and computational constraints. Experimental evaluations on a Xiaomi 6 phone with Android 8.0, featuring a Snapdragon 835 CPU (up to 2.45 GHz), Adreno 540 GPU (710 MHz), and 6 GB of RAM, showed varying inference times based on implementation conditions: CADNN dense on CPU at 250 ms, dense on GPU at 70 ms, compressed on CPU at 80 ms, and compressed on GPU at 25 ms. The model size was 102.4 MB with an accuracy of 75.2%. Alternatively, deploying the model on a server, where edge or mobile devices capture images and request computations, can help maintain performance while simplifying implementation. This approach not only alleviates the computational burden on mobile devices but also facilitates easier updates to the model, allowing for the incorporation of new features or performance enhancements.

In this research, the multiple image data sources from mobile devices, such as mobile phones and IoT devices, present a promising alternative for delivering healthcare services in remote areas. Our proposed model focuses on the critical importance of capturing detailed mosquito features to optimize the performance of BYOL using pre-trained models, particularly when fine-tuning with limited labeled datasets. Although mobile phone images offer high resolution, they still face limitations related to the ratio between the entire image and the mosquito’s body. This limitation can lead to the loss of essential mosquito body features, which negatively impacts model performance. Kittichai V’s study indicates that models trained with mobile phone images showed reduced performance in identifying mosquito species (An. dirus and Ae. aegypti)60. This decline could stem from variations in sample size and image quality, which affect the learning accuracy of the model. Another limitation of mobile phone images is camera’s focus length. Many mobile phones, especially those without high-end specifications, lack built-in macro lenses, making it difficult to focus sharply on the mosquito’s body during close-up shots. To mitigate this issue, integrating a macro lens kit with mobile phones could improve image clarity. However, the wide range of macro lens kits and their compatibility with different mobile phones present practical challenges. As an alternative, stereomicroscopy provides superior image quality, making it an excellent choice for ensuring dataset integrity and improving model performance. By offering clearer and more detailed images of mosquito features, stereomicroscopy help overcome the limitations of mobile phone imaging, thereby enhancing the accuracy of our proposed model.

In this study, archived mosquito species identified by expert entomologists were utilized51, including Aedes aegypti (Ae. aegypti) and Aedes albopictus (Ae. albopictus) as primary vectors for dengue61 (Zika, and Chikungunya viruses). Anopheles dirus (An. dirus), Anopheles maculatus (An. mac), Anopheles minimus (An. minimus_A), and Anopheles harrisoni (An. minimus_C) as vectors for malaria in humans61,62,63, and Culex quinquefasciatus (Cx. spp) as a vector for Japanese encephalitis (JE)64 and filariasis65. In related work, Eiamsamang, S. conducted experiments comparing mosquito species identification methods between entomologists, public health officers, and deep learning approaches51. The study revealed that entomologists and public health officers struggled to accurately identify Anopheles minimus (An. minimus_A) and Anopheles harrisoni (An. minimus_C) due to the complexity of their features. In contrast, deep learning methods achieved high performance in identifying these two species. Although this study focused on only seven mosquito species, the proposed model demonstrates the potential to handle a broader range of mosquito vectors effectively, particularly in Thailand.

The significance of our proposed model lies in its potential real-world application for vector identification. By achieving high accuracy without an overwhelming reliance on extensive labeled datasets, our model offers a practical and efficient solution, reducing the dependence on highly skilled personnel from the public health sector. In summary, our study establishes a robust and adaptable methodology for mosquito vector identification that holds promise for broader implementation in real-world settings.

Conclusion

In this study, we conducted a detailed comparison between supervised and self-supervised learning models using small datasets (5% and 10% labeling) for mosquito classification, aiming to elucidate their respective performances. The initial advantage observed in supervised learning accuracy, as shown in Table 2, underwent a significant shift with self-supervised learning models, particularly Resnet 50 trained on limited data, achieving an impressive 96.7% accuracy. These models not only demonstrated competitive accuracy but also excelled in key statistical metrics, including specificity (98.2%), F1 score (88.5%), recall (87.7%), and precision (89.4%) outperforming other models. Furthermore, even with a minimal dataset of just 5% labeling, the self-supervised learning model approached the performance of the fully supervised model that used 100% labeling. Specifically, Resnet 18, the smallest network in our study, achieved a notable accuracy of 95.7%, closely comparable to the best-performing supervised learning model at 97.7%. The distinctive strength of self-supervised learning models, exemplified by BYOL, lies in their ability to learn powerful feature representations without the conventional reliance on positive or negative sample pairs or the need for a large batch size during training. This unique characteristic played a pivotal role in achieving exceptional statistical metrics and has implications for the efficiency of these models in mosquito classification. Moreover, the adaptability and flexibility of self-supervised learning algorithms in extracting valuable information from large datasets without explicit class labels or annotations represent a groundbreaking advancement. This flexibility, combined with the capacity to overcome data limitations and technical challenges, positions self-supervised learning, when coupled with advanced technologies, as a potent approach in real-world applications for mosquito classification.

In conclusion, our findings suggest that self-supervised learning models not only match the performance of supervised learning models in mosquito classification but also offer additional advantages. The inherent flexibility, adaptability, and the ability to learn powerful feature representations without explicit labels make self-supervised learning an appealing choice in the context of mosquito surveillance and control. As we continue to grapple with the global challenges posed by mosquito-borne diseases, this study contributes valuable insights to the evolving landscape of machine learning applications in entomology. The observed strengths of self-supervised learning models encourage further exploration and development, pointing towards a future where these models play a pivotal role in addressing the complexities of mosquito-borne diseases and contribute to global public health initiatives.

Data availability

The data supporting the findings of this study are available from the corresponding author upon reasonable request.

References

Organization, W. H. & UNICEF. Global vector control response 2017–2030. (2017).

Khongwichit, S., Chuchaona, W., Vongpunsawad, S. & Poovorawan, Y. Molecular surveillance of arboviruses circulation and co-infection during a large chikungunya virus outbreak in Thailand, October 2018 to February 2020. Sci. Rep. 12, 22323 (2022).

Rattanarithikul, R. A guide to the genera of mosquitoes (Diptera: Culicidae) of Thailand with illustrated keys, biological notes and preservation and mounting techniques. Mosq Syst 14, 139–208 (1982).

Rueda, L. M. Pictorial keys for the identification of mosquitoes (Diptera: Culicidae) associated with dengue virus transmission. Zootaxa 589, 1–60–61–60 (2004).

Eritja, R. et al. First detection of Aedes japonicus in Spain: an unexpected finding triggered by citizen science. Parasit. Vectors 12, 1–9 (2019).

Werner, D., Kronefeld, M., Schaffner, F. & Kampen, H. Two invasive mosquito species, Aedes albopictus and Aedes japonicus japonicus, trapped in south-west Germany, July to August 2011. Eurosurveillance 17, 20067 (2012).

Cornel, A. J. & Collins, F. H. PCR of the ribosomal DNA intergenic spacer regions as a method for identifying mosquitoes in the Anopheles gambiae complex. Species Diagnostics Protocols: PCR and Other Nucleic acid Methods, 321–332 (1996).

Kothera, L., Byrd, B. & Savage, H. M. Duplex real-time PCR assay distinguishes Aedes aegypti from Ae. albopictus (Diptera: Culicidae) using DNA from sonicated first-instar larvae. Journal of medical entomology 54, 1567–1572 (2017).

Beebe, N. W. DNA barcoding mosquitoes: advice for potential prospectors. Parasitology 145, 622–633 (2018).

Smith, D. L. & Marshall, J. M. MGSurvE: A framework to optimize trap placement for genetic surveillance of mosquito populations. PLOS Computational Biology 20 (2024).

Giunti, G., Becker, N. & Benelli, G. Invasive mosquito vectors in Europe: from bioecology to surveillance and management. Acta Tropica 239, 106832 (2023).

Gutiérrez-López, R., Figuerola, J. & Martínez-de la Puente, J. Methodological procedures explain observed differences in the competence of European populations of Aedes albopictus for the transmission of Zika virus. Acta Tropica 237, 106724 (2023).

Lippi, C. A. et al. Trends in mosquito species distribution modeling: insights for vector surveillance and disease control. Parasites & Vectors 16, 302 (2023).

Wong, J. C. C. et al. Case report: Zika surveillance complemented with wastewater and mosquito testing. EBioMedicine 101 (2024).

Motta, D. et al. Application of convolutional neural networks for classification of adult mosquitoes in the field. PloS one 14, e0210829 (2019).

Villarreal, S. M., Winokur, O. & Harrington, L. The impact of temperature and body size on fundamental flight tone variation in the mosquito vector Aedes aegypti (Diptera: Culicidae): implications for acoustic lures. Journal of medical entomology 54, 1116–1121 (2017).

Ouyang, T.-H., Yang, E.-C., Jiang, J.-A. & Lin, T.-T. Mosquito vector monitoring system based on optical wingbeat classification. Computers and Electronics in Agriculture 118, 47–55 (2015).

González-Pérez, M. I. et al. Field evaluation of an automated mosquito surveillance system which classifies Aedes and Culex mosquitoes by genus and sex. Parasites & Vectors 17, 97 (2024).

Mukundarajan, H., Hol, F. J. H., Castillo, E. A., Newby, C. & Prakash, M. Using mobile phones as acoustic sensors for high-throughput mosquito surveillance. elife 6, e27854 (2017).

Jackson, J. C. & Robert, D. Nonlinear auditory mechanism enhances female sounds for male mosquitoes. Proceedings of the National Academy of Sciences 103, 16734–16739 (2006).

Arthur, B. J., Emr, K. S., Wyttenbach, R. A. & Hoy, R. R. Mosquito (Aedes aegypti) flight tones: Frequency, harmonicity, spherical spreading, and phase relationships. The Journal of the Acoustical Society of America 135, 933–941 (2014).

Lorenz, C., Ferraudo, A. S. & Suesdek, L. Artificial Neural Network applied as a methodology of mosquito species identification. Acta Tropica 152, 165–169 (2015).

Remboski, T. B., de Souza, W. D., de Aguiar, M. S. & Ferreira Jr, P. R. in Proceedings of the 33rd Annual ACM Symposium on Applied Computing. 260–267.

Nazir, N. et al. Zeugodacus fruit flies (Diptera: Tephritidae) host preference analysis by machine learning-based approaches. Computers and Electronics in Agriculture 222, 109095 (2024).

Salifu, D., Ibrahim, E. A. & Tonnang, H. E. Leveraging machine learning tools and algorithms for analysis of fruit fly morphometrics. Scientific reports 12, 7208 (2022).

Ling, M. H. et al. Machine learning analysis of wing venation patterns accurately identifies Sarcophagidae, Calliphoridae and Muscidae fly species. Medical and Veterinary Entomology 37, 767–781 (2023).

Manduca, G. et al. Learning algorithms estimate pose and detect motor anomalies in flies exposed to minimal doses of a toxicant. Iscience 26 (2023).

Liu, C., Guo, Y., Li, S. & Chang, F. ACF based region proposal extraction for YOLOv3 network towards high-performance cyclist detection in high resolution images. Sensors 19, 2671 (2019).

Pang, S. et al. A novel YOLOv3-arch model for identifying cholelithiasis and classifying gallstones on CT images. PloS one 14, e0217647 (2019).

Rajaraman, S. et al. Pre-trained convolutional neural networks as feature extractors toward improved malaria parasite detection in thin blood smear images. PeerJ 6, e4568 (2018).

Zhong, Y., Gao, J., Lei, Q. & Zhou, Y. A vision-based counting and recognition system for flying insects in intelligent agriculture. Sensors 18, 1489 (2018).

Zhou, J. et al. Improved UAV opium poppy detection using an updated YOLOv3 model. Sensors 19, 4851 (2019).

Ortiz, A. S., Miyatake, M. N., Tünnermann, H., Teramoto, T. & Shouno, H. in Proceedings of the International Conference on Parallel and Distributed Processing Techniques and Applications (PDPTA). 320–325 (The Steering Committee of The World Congress in Computer Science, Computer …).

Li, Z., Zhou, Z., Shen, Z. & Yao, Q. in Artificial Intelligence Applications and Innovations: IFIP TC12 WG12. 5-Second IFIP Conference on Artificial Intelligence Applications and Innovations (AIAI2005), September 7–9, 2005, Beijing, China 2. 483–489 (Springer).

Genoud, A. P., Basistyy, R., Williams, G. M. & Thomas, B. P. Optical remote sensing for monitoring flying mosquitoes, gender identification and discussion on species identification. Applied Physics B 124, 1–11 (2018).

Jakhete, S., Allan, S. & Mankin, R. Wingbeat frequency-sweep and visual stimuli for trapping male Aedes aegypti (Diptera: Culicidae). Journal of medical entomology 54, 1415–1419 (2017).

Redmon, J., Divvala, S., Girshick, R. & Farhadi, A. in Proceedings of the IEEE conference on computer vision and pattern recognition. 779–788.

Tannous, M., Stefanini, C. & Romano, D. A Deep-Learning-Based detection approach for the identification of insect species of economic importance. Insects 14, 148 (2023).

Bjerge, K. et al. Accurate detection and identification of insects from camera trap images with deep learning. PLOS Sustainability and Transformation 2, e0000051 (2023).

Grill, J.-B. et al. Bootstrap your own latent-a new approach to self-supervised learning. Advances in neural information processing systems 33, 21271–21284 (2020).

Saunshi, N., Plevrakis, O., Arora, S., Khodak, M. & Khandeparkar, H. in International Conference on Machine Learning. 5628–5637 (PMLR).

Russakovsky, O. et al. Imagenet large scale visual recognition challenge. International journal of computer vision 115, 211–252 (2015).

He, K., Zhang, X., Ren, S. & Sun, J. in Proceedings of the IEEE conference on computer vision and pattern recognition. 770–778.

Zbontar, J., Jing, L., Misra, I., LeCun, Y. & Deny, S. in International conference on machine learning. 12310–12320 (PMLR).

Kar, S. et al. in AI for Agriculture and Food Systems.

Pinetsuksai, N. et al. in Asian Conference on Intelligent Information and Database Systems. 40–51 (Springer).

Pallathadka, H. et al. Impact of machine learning on management, healthcare and agriculture. Materials Today: Proceedings 80, 2803–2806 (2023).

Sukkanon, C. et al. Distribution of mosquitoes (Diptera: Culicidae) in Thailand: a dataset. GigaByte 2023 (2023).

Gui, J. et al. A Survey on Self-supervised Learning: Algorithms, Applications, and Future Trends. IEEE Transactions on Pattern Analysis and Machine Intelligence (2024).

Wang, Z., Li, Z., Wang, J. & Li, D. Network Intrusion Detection Model Based on Improved BYOL Self-Supervised Learning. Security and Communication Networks 2021, 9486949 (2021).

Eiamsamang, S. et al. DEEP LEARNING TECHNOLOGY FOR FIELD-BASE MOSQUITO VECTOR IDENTIFICATION.

Da Costa, V. G. T., Fini, E., Nabi, M., Sebe, N. & Ricci, E. solo-learn: A library of self-supervised methods for visual representation learning. Journal of Machine Learning Research 23, 1–6 (2022).

You, Y., Gitman, I. & Ginsburg, B. Large batch training of convolutional networks. arXiv:1708.03888 (2017).

Chu, K. An introduction to sensitivity, specificity, predictive values and likelihood ratios. Emergency Medicine 11, 175–181 (1999).

Park, J., Kim, D. I., Choi, B., Kang, W. & Kwon, H. W. Classification and morphological analysis of vector mosquitoes using deep convolutional neural networks. Scientific reports 10, 1012 (2020).

De Los Reyes, A. M. M., Reyes, A. C. A., Torres, J. L., Padilla, D. A. & Villaverde, J. in 2016 IEEE Region 10 Conference (TENCON). 2342–2345 (IEEE).

Oquab, M. et al. Dinov2: Learning robust visual features without supervision. arXiv:2304.07193 (2023).

Pinetsuksai, N. et al. in 2023 15th International Conference on Information Technology and Electrical Engineering (ICITEE). 323–328 (IEEE).

Niu, W., Ma, X., Wang, Y. & Ren, B. 26ms inference time for resnet-50: Towards real-time execution of all dnns on smartphone. arXiv:1905.00571 (2019).

Kittichai, V. et al. Automatic identification of medically important mosquitoes using embedded learning approach-based image-retrieval system. Scientific Reports 13, 10609. https://doi.org/10.1038/s41598-023-37574-3 (2023).

Kittichai, V. et al. Deep learning approaches for challenging species and gender identification of mosquito vectors. Scientific Reports 11, 4838. https://doi.org/10.1038/s41598-021-84219-4 (2021).

Muenworn, V. et al. Biting activity and host preference of the malaria vectors Anopheles maculatus and Anopheles sawadwongporni (Diptera: Culicidae) in Thailand. Journal of Vector Ecology 34, 62–69. https://doi.org/10.1111/j.1948-7134.2009.00008.x (2009).

Garros, C., Van Bortel, W., Trung, H. D., Coosemans, M. & Manguin, S. Review of the Minimus Complex of Anopheles, main malaria vector in Southeast Asia: from taxonomic issues to vector control strategies. Tropical Medicine & International Health 11, 102–114. https://doi.org/10.1111/j.1365-3156.2005.01536.x (2006).

Nitatpattana, N. et al. First isolation of Japanese encephalitis from Culex quinquefasciatus in Thailand. The Southeast Asian journal of tropical medicine and public health 36, 875–878 (2005).

Vadivalagan, C. et al. Exploring genetic variation in haplotypes of the filariasis vector Culex quinquefasciatus (Diptera: Culicidae) through DNA barcoding. Acta Tropica 169, 43–50. https://doi.org/10.1016/j.actatropica.2017.01.020 (2017).

Acknowledgements

We appreciate the financial support provided by the College of Advanced Manufacturing Innovation at King Mongkut’s Institute of Technology Ladkrabang [AMI-RESEARCH-01-65-03M] for this research project. Additionally, we extend our gratitude to the CiRA CORE team for providing the deep learning platform and software essential for supporting this research project.

Author information

Authors and Affiliations

Contributions

R.C., K.M.N., S.E., and V.K. collected the samples and acquired the image data. R.C., V.K., K.M.N., N.P., and T.T. constructed the mosquito dataset and performed the training, validation, and testing of the SSL models. R.C., V.K., and S.C. wrote most of the manuscript. V.K., S.B., and S.C. designed the study. N.P., T.T., and S.C. contributed source code and a dataset. R.C., V.K., P.S., S.B., and S.C. reviewed the manuscript.

Corresponding author

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Charoenpanyakul, R., Kittichai, V., Eiamsamang, S. et al. Enhancing mosquito classification through self-supervised learning. Sci Rep 14, 27123 (2024). https://doi.org/10.1038/s41598-024-78260-2

Received:

Accepted:

Published:

Version of record:

DOI: https://doi.org/10.1038/s41598-024-78260-2