Abstract

To improve students’ understanding of physical education teaching concepts and help teachers analyze students’ cognitive patterns, the study proposes an association learning-based method for understanding physical education teaching concepts using deep learning algorithms, which extracts image features related to teaching concepts using convolutional neural networks. Moreover, a neurocognitive diagnostic model based on hypergraph convolution is constructed to mine the data of students’ long-term learning sequences and identify students’ cognitive outcomes. The findings revealed that the highest accuracy of the association graph convolutional neural network was 0.84 when the number of training samples was 90,000. In each of the three datasets, the cognitive diagnostic model’s accuracy was 0.76, 0.77, and 0.75, respectively. The use of the association graph convolutional neural network model resulted in an increase of 29% in the mastery of students in the concepts and knowledge of sports. The predictive accuracy of the cognitive schema diagnostic model ranged from 0.6 to 1.0 with a mean value of 0.81. The study reveals that the model proposed in the study has high accuracy and stability in predicting cognitive patterns, which can better identify students’ cognitive states and provide strong support for instructional guidance and personalized learning.

Similar content being viewed by others

Introduction

People are becoming more and more concerned about their health as society and the economy continue to grow. Physical education (PE), as an important way to improve people’s physical fitness, has also received more and more attention in terms of its status and role. PE reform has been going on for many years and has achieved remarkable results, but there are still some problems and challenges1,2. PE has long been viewed as a process of imparting skills and knowledge, ignoring students’ active participation and individualized development. With the change of educational philosophy, it has been gradually recognized that PE should be a comprehensive and integrated educational activity aimed at improving students’ physical fitness, mental health and social adaptability3,4. PE’s development of cognitive patterns (CPs) seeks to challenge the status quo in education, rise students’ curiosity and passion for learning, and develop their capacity for self-directed learning. Teachers must consider each student’s unique characteristics when building CPs, use a variety of instructional strategies, and establish a setting that encourages students to think, explore, and practice5. In this context, the research on conceptual understanding (CU) and CP construction of PE based on deep learning (DL) algorithm emerged.

DL is a type of artificial intelligence that mimics the neural network architecture of the human brain to learn and reason. An anomaly detection approach based on a deep feature extractor and a multi-scale autoencoder was presented by Banerjee et al. The model was able to maintain good detection capabilities under various hardware settings, according to the results6. Liu et al. collected data from four infrastructures and construction sites for building modularity target detection and thoroughly evaluated two DL algorithms. It was found that the performance of the algorithms was affected by the detection context and that the faster convolutional neural network (CNN) showed higher accuracy in the detection of selected objects7. Amirabadi et al. proposed a low-complexity DL structure for free-space optical communication systems by considering different combinatorial schemes8. Arif et al. used a DL approach including multilayer perceptron, long short-term memory (LSTM) and bi-directional LSTM neural networks, and a data-driven approach to predict 1-day seawater temperature. The findings revealed that the hybrid model performed best in daily seawater temperature prediction, obtaining an average absolute error of 0.1877 °C9. Shen et al. introduced blockchain and DL to construct a new complexity analysis model for university textbooks. The study’s findings suggested that the model might offer recommendations for future revisions and authorship of college English textbooks10.

MacPhail et al. used a reverse design process to design coordination learning opportunities and shared three examples of embedding teaching coordination into the physical education teacher education module. These examples were derived from the author’s own teacher education practice and implemented in various teacher education projects to assist pre service teachers in designing coordinated courses11. Through hardware and software design, Zhang et al. realized an assessment system with high real-time and anti-interference capabilities using the university PE reform evaluation system, which is based on wireless sensor networks. According to the experimental findings, the system was able to send the evaluation results in real time and finish an accurate evaluation quickly, which significantly raised the standard of PE reform12. Ma et al. addressed the issue of obesity and low physical fitness in teenagers by putting out a corrective method for PE vocational education. The measure supported giving students’ physical and mental health top priority and stressed physical education as a required subject. To effectively support and promote the improvement of physical education and health among adolescents, the study also suggested a novel method of sharing instructional materials in PE programs based on the Internet13. CP refers to the way an individual receives, processes and understands external information. Guo et al. used text mining to analyze online learners’ discussion forum data. The results showed that learners’ cognition is closely related to the level of interaction. Cognitive engagement ranged from low to high depending on the degree of interaction, and as the learning process progressed, the learners’ patterns of cognitive engagement underwent substantial changes14. Using a multilevel data mining technique, Liu et al. discovered an inverse U-curve link between the behavioral traits of online learners and their performance. According to the study, this approach can be used to statistically measure the cognitive load of online learners, exposing unique learning rules that are concealed from view15. Yang et al. proposed to formulate the task of assembling personalized exercise groups as a constrained multi-objective problem. Based on a novel cognitive diagnostic model, a co evolutionary algorithm based on dual encoding and dual population was used to solve the problem. The main population binary encoding was used to search for exercises, and the auxiliary population integer encoding was used to accelerate convergence. In experiments on three popular datasets, compared with advanced recommendation methods, the exercises assembled by this method are effective and can improve students’ proficiency in mastering insufficient and new knowledge concepts16.

In summary, in the field of physical education teaching, helping students efficiently understand teaching concepts and accurately analyze their cognitive patterns has always been a key goal pursued by educators. Traditional physical education teaching often uses indoctrination based explanations and demonstration demonstrations to assist students in understanding sports concepts, but there are many limitations. On the one hand, relying solely on verbal and physical demonstrations is difficult to demonstrate complex knowledge systems, and students often have only a partial understanding of key concepts. On the other hand, previous teaching lacked effective mechanisms for mining students’ long-term learning data, making it difficult to capture cognitive trajectories and achieve personalized teaching. With the innovation of educational technology, although digital resources are gradually becoming popular, the old methods that rely on simple data analysis and experiential judgment of cognitive status have low accuracy and cannot meet the needs of diverse teaching scenarios. This not only hinders the improvement of teaching quality but also restricts student development. Therefore, it is urgent to innovate teaching aids. In this context, DL algorithms bring a glimmer of hope for breaking through. By utilizing the unique advantages of DL algorithms and innovatively proposing a concept understanding method for physical education teaching based on association learning, this study utilizes the powerful image feature extraction ability of convolutional neural networks to concretize obscure sports concepts. At the same time, constructing a student cognitive pattern diagnostic model (CPDM), digging deep into students’ long-term learning sequence data, and directly addressing the shortcomings of traditional methods. This plan focuses precisely on teaching difficulties, aiming to efficiently enhance students’ understanding of sports concepts, accurately diagnose cognitive patterns, inject technological vitality into sports teaching, and achieve the vision of personalized and high-quality teaching.

Methods and materials

The studies titled “Conceptual Understanding and Cognitive Patterns Construction for Physical Education Teaching Based on Deep Learning Algorithms” involving human participants were reviewed and approved by School of Marxism, China University of Political Science and Law (CUPL). The participants provided their written informed consent to participate in this study. All methods were carried out in accordance with relevant guidelines and regulations. All experimental protocols were approved by China University of Political Science and Law (CUPL) committee. Informed consent was obtained from all subjects and/or their legal guardian(s).

Conceptual understanding approach to PE based on the DL algorithm

As a DL technique, association learning can aid students in identifying and comprehending a variety of PE topics and relationships. The association learning model is able to assist PE by automatically learning and extracting image features related to a certain sport action or teaching concept from a large amount of PE image data. Figure 1 illustrates the method of using association learning theory.

The application process of the association learning theory.

Firstly, the input image data enters the convolutional layer (CL) for feature extraction. Next, the extracted features enter the fully connected layer (FCL) for further processing. The processed data is called correlation features, which will be used by the correlation discriminator. At the same time, associated labels also participate in this process and are used together with associated features for discrimination and learning. CNN excels in image feature extraction, which is why the study has opted to utilize CNN for modeling and adopt the ReLU activation function (AF) as the AF of the association discriminator in the association learning model design. There are at least 3 types of association labels for the relationship between two actual objects. These 3 association labels are encoded using one-hot. The model’s output is translated to a probability representation using the softmax function (SF) after the output variables are transformed into a format that the learning process can easily use. The association label is the one that matches the highest probability value17,18. The detailed form of the SF is shown in Eq. (1).

In Eq. (1), the real vectors are β and Z, respectively, and the vector dimension is L. In the association learning model, the SF form is shown in Eq. (2).

In Eq. (2), the model output feature mapping value is P, and the sample feature vector is u. The association category is m, and the weight parameter is w. The Softmax function can convert the output of the model into a probabilistic form, so that the label corresponding to the maximum probability value can be intuitively determined, that is, the associated label, which facilitates subsequent classification and judgment operations. Its detailed forms are shown in Eqs. (1) and (2) in the text. Equation (1) is the operation of real valued vectors, while Eq. (2) involves the model output feature mapping values, etc. Cross entropy is a measure of the difference between the probabilities of different distributions of the same random variable. It can represent the gap between the true and discriminative probability distributions19. When the cross-entropy value obtained in the training process is small, it means that the discrimination accuracy of the model is higher. The cross entropy loss function quantifies the prediction error of the model by calculating the difference between the true probability distribution and the predicted probability distribution of the model. In the process of model training, the cross entropy loss function plays a crucial role. It provides a quantifiable goal for the optimization algorithm to minimize the difference between the predicted probability distribution and the true probability distribution. By constantly adjusting the parameters of the model to reduce the cross-entropy loss, the model can gradually learn more accurate probability distribution, thus improving the discrimination accuracy. In practice, the analysis and optimization of the cross-entropy loss function can help the model learn the patterns in the data more effectively, thus reducing the degree of deviation between the predicted probability and the real probability, and finally achieving the purpose of improving the model understanding ability and prediction accuracy. The cross-entropy loss form \(Loss\) is shown in Eq. (3).

In Eq. (3), the probability value after the encoding of the true association is q, and the probability value of the discriminative association label is \(\hat {q}\). Adam’s optimization algorithm optimizes the loss function and calculates the adaptive learning rate by using the biased variance of the gradient and the mean of the first- and second-order moments20. It also introduces momentum gradient descent to update the gradient by exponentially weighted averaging to improve the efficiency of parameter updating. The model evaluation uses a 0–1 loss function as shown in Eq. (4).

In Eq. (4), the sample prediction value is A and the sample target value is \(f(U)\). The test accuracy \(Acc\) is used to measure the model training test results as shown in Eq. (5).

In Eq. (5), the true association label is I, and the sample instance pair is \({a_i}\). The association discriminant function is \(\hat {f}({u_i})\). \(N^{\prime }\) is the test samples. The study improves the CNN and proposes association image CNN (AICNN) model, as shown in Fig. 2.

AICNN model.

The AICNN model structure mainly consists of CL, PL, and FCL, and is mainly trained using datasets with pixels of 64 × 64. However, AICNN is prone to memory shortage when using different pixel samples for training. Therefore, the study adjusted the parameters of the CL and designed three CL models with different numbers of layers, including 3, 4 and 5 layers, by reducing or increasing the number of CLs. Moreover, the study uses K-nearest neighbor (KNN) algorithm instead of softmax discriminator for the dataset. In this dataset, the identification of associated images is performed using the association learning discriminant model and the extraction of associated features is performed by the VGG-19 model. This process reduces the original sample examples to 1000 dimensional feature vectors. Then, the KNN algorithm is used to train the association discriminator. The associated image feature extraction process is shown in Fig. 3.

Associated image feature extraction process.

A DL algorithm based cognitive patterns construction method for PEs

It is necessary to assess students’ mastery of knowledge points and determine their cognitive outcomes once they have finished the CU and PE course. To mine data from students’ lengthy learning sequences, the study suggests a neurocognitive diagnostic model based on hypergraphic convolution (HGC). The model takes into account the correlations between knowledge points and the hypergraphic relationships between exercises, while LSTM is introduced to extract long-time semantic information. Students select practice problems and record their answers. The study’s objective is to diagnose learning by constructing hypergraphs between practice problems and knowledge points. This approach allows for the understanding of the cognitive status of students and the capturing of their proficiency on specific knowledge concepts. The practice-knowledge point (PKP) relationship diagram is shown in Fig. 4.

Practice-knowledge point diagram.

Based on the similarity index, the F1 score is used to forecast student performance and assess the dichotomous model’s accuracy. The similarity is calculated as shown in Eq. (6).

In Eq. (6), when exercises \({q_1}\) and \({q_2}\) are answered sequentially, the similarity of the answers is \({F_1}({q_1},{q_2})\). After the construction of the PKP relationship graph is completed, if there is an association between different exercises, then they can be classified into the same group, which can be represented in the form of hypergraph in the knowledge point relationship graph. Moreover, determining the affiliation degree of each exercise belonging to a certain cluster can be realized by fuzzy clustering algorithm. The creation of a fuzzy affiliation function, which quantifies the extent to which each data object belongs to each cluster rather than requiring it to be classified into a specific cluster, is the fundamental component of the fuzzy clustering method21. Therefore, the probability of each sample being classified into each category can be used to describe its degree of belonging. Figure 5 illustrates the creation process of the question-knowledge point hypergraph.

Question-knowledge point hypergraph construction process.

PKPs hypergraphs are composed of hypergraphs and prioritized association matrices generated by clustering. On its left side is the hypergraph, where nodes and hyperedges represent samples and clustering centers, respectively. If the affiliation of the nodes and hyperedges is close to 1, it indicates that they have a high degree of similarity. On the contrary, if the affiliation degree is close to 0, then they are less similar. Therefore, it is required to continuously refine the clustering center in order to increase the affiliation matrix’s performance and make it converge. This is researched to be accomplished by the fuzzy C-means (FCM) technique. The FCM algorithm is represented as shown in Eq. (7).

In Eq. (7), the affiliation degree of modality \({S_i}\) corresponding to hyperedge \(q_{j}^{\prime }\) is \(ls_{{i,j}}^{b}\). The fuzzy degree of the clustering result is b, the modalities is \(N^{{\prime \prime }}\), and the hyperedges is \(M^{{\prime \prime }}\). The regularity metric is \({d_{i,j}}\), which is used to measure the distance between the student and the exercise \({q_j}\). The initial value of the hyperedge embedding is shown in Eq. (8).

In Eq. (8), the modal embedding is \({v_i}\) and the initial value of the hyperedge embedding is \(q_{j}^{{\prime 0}}\). The element maximum pooling operation is \(pool\). The regular metric obtained after the attention mechanism is shown in Eq. (9).

In Eq. (9), the transform weight matrix obtained by learning is \({W_s}\). In the training process, the PKP hypergraph is placed into the graph convolutional network. First, the standard orthogonal vectors and the diagonal matrix containing the corresponding non-negative eigenvalues are obtained using feature decomposition. Next, the Fourier transform of the signal is performed in the hypergraph. To reduce the computational cost, Chebyshev polynomial pairs are used for parameterization. Finally, the sum is processed and the hyperedge weight matrix is initialized to a unit matrix. The hyperedge CL constructed in this way is shown in Eq. (10).

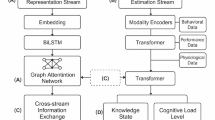

In Eq. (10), the layer l hypergraph signal is \({X^{(l)}}\). The nonlinear AF is \(\sigma\). The correlation matrix is H, and the hyperedge weight matrix is W. Based on this, the study proposes a CPDM as shown in Fig. 6.

Diagnostic model of cognitive patterns.

The model is able to fully mine the hyperdimensional relationships between different exercises using graph convolutional network (GCN) and LSTM. GCN can effectively extract the complex relationships between knowledge points and the relationships between PKPs, while LSTM can mine the long and short-term semantic information of students. The model starts by constructing a graph network of PKPs in order to fully extract the similarity between different exercises and the relationship between PKPs. Then, the hypergraph is constructed using the FCM clustering algorithm based on the similarity between different exercises obtained. Then, the hypergraph is mapped to the prioritized correlation matrix of the exercise questions and combined with performing HGC computation to obtain the hypergraph signal. Finally, the hypergraph signal is embedded with the one-hot codes of the exercises and the one-hot codes of the students as inputs to the LSTM for model training. By training the model, the students’ scores can be predicted and the cognitive state of the students’ knowledge can be analyzed based on the model parameters. The proficiency vector \({F^S}\) obtained in the embedding stage of the CP diagnosis process is shown in Eq. (11).

In Eq. (11), the student’s one-hot codes is \({x^S}\) and the trainable matrix is A. The knowledge point difficulty vector \({F^{di}}\) is represented as shown in Eq. (12).

In Eq. (12), the hypergraph embedding vector is X and the one-hot coding of the exercise is \({x^d}\). The knowledge point relevance vector \({Q^e}\) is calculated as shown in Eq. (13).

In Eq. (13), the labeled standard matrix is Q. The multidimensional items are dot-producted to obtain the inputs of the LSTM as shown in Eq. (14).

In Eq. (14), the input of LSTM is \(LST{M_{in}}\) and the differentiation vector is \({F^{dis}}\). The knowledge point importance vector is \({F^{imp}}\) and the answer speed vector is \({F^{ra}}\). Equation (15) displays the cross-entropy loss function \(loss^{\prime }\) of the diagnostic model.

In Eq. (15), the prediction label obtained by LSTM is y, and the real data label is r. The trained model gets the students’ scores by prediction, and the diagnosis result is the proficiency vector \({F^S}\), which is used to represent the students’ cognitive state of knowledge. Teachers can adjust the teaching method or provide targeted instruction according to the students’ cognitive state.

Results

Model performance test analysis

The experiment was conducted using an Intel Core i7-9700 K processor, NVIDIA GeForce RTX 3090 graphics card, and 1 TB NVMe solid-state drive on the Ubuntu 18.04.5 LTS operating system, with TensorFlow 2.5 as the deep learning framework, Python 3.8 as the compiler, and commonly used libraries such as NumPy and Pandas. The AICNN model performance test used the Leeds Sports Pose dataset, which was divided into training, testing, and validation sets in a 6:2:2 ratio. Study the influence of the number of training samples on the accuracy of AICNN models, and explore the changes in model testing accuracy before and after the improvement of convolutional layers. The cognitive pattern diagnosis model was validated using publicly available datasets from Assist0910, Assist1213, and EdNet education platform. The Q-matrix based cognitive diagnosis model, the adaptive organizational mapping neural network-based teaching cognitive diagnosis model, and the recurrent neural network-based cognitive diagnosis model were used as comparative models, with evaluation metrics including accuracy, root mean square error, and area under the curve. In addition, an analysis was conducted on the practical application effects of AICNN model and cognitive pattern diagnosis model on a university sports online teaching platform. The data preprocessing steps include data cleaning, removing duplicate, erroneous, or incomplete data records. Data normalization maps data feature values to specific intervals for model training and processing. Verify data labeling to ensure accuracy and compliance with research requirements. It may be necessary to perform cropping, scaling, and other operations on image data to unify the size and adapt to the input requirements of the model.

Figure 7 illustrates how the quantity of training samples affects the AICNN model’s accuracy. The AICNN model uses three convolutional layers, each containing 32, 64, and 128 convolution kernels, with a kernel size of 3 × 3. The pooling layer uses maximum pooling with a stride of 2. The fully connected layer contains 128 neurons and uses the ReLU activation function. The output layer uses the softmax function for multi classification. During the training process, a stochastic gradient descent optimizer is used with a learning rate of 0.01 and a batch size of 64.

Effect of the number of training samples on the accuracy of the AICCNN model.

In Fig. 7a, the training samples are 20,000 and 40,000. In Fig. 7b, the number of testing samples are 60,000 and 90,000. The testing accuracy of the AICNN model increases gradually as the training samples increases. The model’s accuracy at the 40th iteration is up to 0.74 when there are 20,000 training samples. At the thirty-first iteration, the model attains an accuracy of up to 0.78 using forty thousand training examples. By the 29th iteration, the model attains an accuracy of up to 0.79 using 60,000 training samples. By the 25th iteration, the model attains an accuracy of up to 0.84 using 90,000 training samples. The findings demonstrate that the AICNN model’s convergence can be sped up by adding more thresholds to the model. Before and after the improvement of the CL, the test accuracy of the model changes as shown in Fig. 8.

The test accuracy of the model changes before and after the convolution layer improvement.

The test accuracy of the model on various pixels before and after the CL improvement is compared in Fig. 8a. The test accuracy before and after CL improvement is 0.69 and 0.75 respectively when the image pixels are 64 × 64. The test accuracy before and after CL improvement is 0.71 and 0.82 respectively when the image pixels are 128 × 128. The test accuracy before and after CL improvement is 0.71 and 0.87 respectively when the image pixels are 256 × 256. The findings demonstrate that all of the upgraded CLs had improved test accuracy. In particular, the test accuracy of the improved CL is improved by 0.16 when the image pixels are 256 × 256. The improved CL has played a positive role, and as the image resolution increases, the advantages of this improvement become increasingly apparent. The improved CL has better recognition ability and accuracy when processing larger sized images. The difference in resolution has a significant impact on performance. Improved CL at higher resolutions can better capture image details and features, thereby improving the testing accuracy of the model. However, although the improvement effect at low resolutions is not as significant as at high resolutions, there is still improvement. The test accuracy comparison of the enhanced 3-, 4-, and 5-layer CLs is displayed in Fig. 8b. As the iterations increases, the test accuracy of each model gradually increases. The accuracy of the CL models with 3, 4 and 5 layers is up to 0.84, 0.85 and 0.85, respectively. The results show that the model’s does not increase with the number of CLs. The CPDM is validated using the public dataset of the education platform, which contains Assist0910, Assist1213, and EdNet. The Assist0910 and Assist1213 datasets were collected in the ASSISTments intelligent guidance system, which is a computer environment intelligent guidance system at Worcester Polytechnic Institute in the United States. Assist0910 was collected in 2009–2010, consisting of 3002 learners, 17,705 projects, 123 skills, and 277,540 interaction records. Assist1213 was collected in 2012, with 22,339 learners, 52,825 math problems, 265 skills, and approximately 2.7 million items, each problem corresponding to a skill. The EdNet dataset is a student system interaction dataset collected by Santa, a multi platform artificial intelligence tutoring service company. The EdNet used in the study randomly selected 5000 students from the original dataset to answer 12,000 questions, involving 190 skills and a total of 350,000 response interaction records. Table 1 displays the dataset’s statistics.

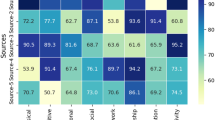

To validate the performance of the cognitive diagnostic model (Model 1) proposed in the study, the experiments used the Q-matrix-based cognitive diagnostic model (Model 2), the adaptive organizational mapping neural network-based instructional cognitive diagnostic model (Model 3), and the recurrent neural network-based cognitive diagnostic model (Model 4) as comparative models. The evaluation metrics include accuracy, root-mean-square error (RMSE) and area under curve (AUC). The different model CP prediction results are shown in Fig. 9.

Predictive results of the cognitive patterns of the different models.

Figure 9a shows the experimental results in the Assist0910 dataset. Models 1, 2, 3, and 4 have respective accuracy values of 0.76, 0.73, 0.72, and 0.69. The corresponding RMSEs are 0.27, 0.38, 0.42, and 0.46. The respective AUCs are 0.89, 0.84, 0.73, and 0.56. Figure 9b shows the experimental results in the Assist1213 dataset. The accuracy of Model 1, Model 2, Model 3 and Model 4 are 0.77, 0.74, 0.73 and 0.58, respectively. The RMSE is 0.29, 0.37, 0.43 and 0.45, respectively. The AUC is 0.89, 0.85, 0.77 and 0.51, respectively. Figure 9c shows the experimental results in the EdNet dataset. Models 1, 2, 3, and 4 had accuracy rates of 0.75, 0.70, 0.69, and 0.52 correspondingly. The corresponding RMSEs are 0.31, 0.42, 0.42, and 0.48. The relative AUCs are 0.49, 0.68, 0.71, and 0.75. The outcomes indicate that the study’s cognitive diagnostic model performs better than the other three comparable models in terms of stability and accuracy in predicting cerebral palsy. This model is capable of more accurately and consistently identifying students’ cognitive status, thereby providing valuable insights for educators to inform their teaching guidance and personalized learning strategies.

Modeling practice application analysis

To confirm the efficacy of the study’s suggested practical implementation of the CU and CP building method of PE, the experiment will use and analyze the model in a university sports online teaching platform. The effect of students’ understanding of PE concepts before and after the application of the AICNN model is shown in Fig. 10.

Students’ understanding of the concept of physical education teaching effect.

Figure 10a shows the students’ understanding of the PE concept. Class A uses the AICNN model and Class B does not use the AICNN model. At the completion of the lessons, students in Class A and Class B have 93% and 64% mastery of PE concepts and knowledge, respectively. In contrast, students in Class A have better mastery of PE concepts. After using the AICNN model, students’ mastery of PE concepts and knowledge can be improved by 29%. Teachers’ satisfaction with the instructional environment is depicted in Fig. 10b. In class A, 84% of teachers are satisfied with their current working environment. In class B, this percentage is 60%. The findings demonstrate that both instructor satisfaction and student learning outcomes can be enhanced by the AICNN model. In Fig. 11, the impact of CPDM application is displayed.

Application effect of the cognitive patterns diagnostic model.

Figure 11a shows the cognitive diagnostic prediction results. The prediction accuracy of CPDM fluctuates in the range of 0.6-1.0, with an average accuracy of 0.81. Figure 11b shows the satisfaction of students and teachers. As the experiment progressed, the satisfaction of students and teachers with CPDM gradually increases. Eventually, the satisfaction of students and teachers is 91% and 74%, respectively. The results show that CPDM performs well in terms of prediction accuracy and is widely recognized and accepted in practical applications. This indicates that CPDM has a positive role in improving the quality of education and optimizing teaching methods.

Discussion

In terms of model performance testing, by analyzing the impact of the number of training samples on the accuracy of the AICNN model and the changes in testing accuracy before and after convolutional layer improvement, it was found that the AICNN model can accelerate convergence by increasing the number of training samples, and the improved convolutional layer has better recognition ability and accuracy when processing larger scale images. In addition, the prediction results of the cognitive diagnosis model based on Q-matrix, the teaching cognitive diagnosis model based on adaptive organizational mapping neural network, and the cognitive diagnosis model based on recurrent neural network were compared, confirming that the proposed cognitive diagnosis model has high accuracy and stability in predicting cognitive patterns. In terms of practical application of the model, through the practical application and analysis of the AICNN model and cognitive pattern diagnosis model in a university’s sports online teaching platform, it was found that the AICNN model can improve students’ understanding of sports teaching concepts and teachers’ satisfaction with teaching situations. In addition, the application effect of cognitive pattern diagnostic models has been widely recognized by students and teachers, indicating that cognitive pattern diagnostic models have a positive role in improving education quality, optimizing teaching methods, and other aspects.

Conclusion

For physical education, the study used association learning and CNN to construct an AICNN model for understanding and recognizing physical education teaching concepts. Meanwhile, a neurocognitive diagnostic model based on hypergraph convolution has been proposed to diagnose students’ cognitive patterns and knowledge mastery. In the EdNet dataset, the accuracy, RMSE, and AUC of the cognitive diagnostic model proposed in the study were 0.75, 0.31, and 0.75, respectively, all of which were superior to the other three comparative models. In practical applications, the mastery levels of physical education concepts and knowledge among students in Class A and Class B are 93% and 64%, respectively. The satisfaction level of teachers with the teaching situation of Class A and Class B is 84% and 60% respectively. The prediction accuracy of the cognitive pattern diagnosis model fluctuates within the range of 0.6-1.0, with an average accuracy of 0.81. The satisfaction rates of students and teachers are 91% and 74%, respectively. The AICNN model can significantly improve students’ understanding and mastery of sports concepts, as well as teachers’ teaching satisfaction. The prediction accuracy of the cognitive pattern diagnosis model is relatively high, and the satisfaction of students and teachers towards it is gradually increasing. Overall, research has provided a new deep learning method for physical education teaching, which can effectively improve the quality and effectiveness of physical education teaching and have a certain promoting effect on the development of physical education. This method first requires collecting a large amount of physical education teaching data, preprocessing and labeling these data, and then using deep learning algorithms to train the data to generate conceptual understanding and cognitive patterns of physical education teaching. Finally, applying these generated concepts and cognitive patterns to practical teaching can help students better understand and master sports knowledge.

In practical applications, teachers can use the AICNN model to evaluate students’ understanding and mastery of physical education teaching concepts, adjust teaching content and methods based on the evaluation results, and improve teaching effectiveness. At the same time, teachers can also use cognitive pattern diagnostic models to analyze students’ cognitive states, understand their mastery of different knowledge points, and provide targeted teaching and guidance. In addition, the methods proposed in the study can also be extended to other disciplines. For example, in mathematics teaching, deep learning algorithms can be used to evaluate students’ understanding and mastery of mathematical concepts, and adjust teaching content and methods based on the evaluation results. In Chinese language teaching, deep learning algorithms can be used to evaluate students’ reading comprehension ability, and targeted teaching and tutoring can be provided based on the evaluation results. In summary, the method proposed in the study has broad application prospects in practical applications, which can provide potential scenarios for personalized learning or teaching improvement for teachers or educational institutions, thereby improving the quality and effectiveness of education.

Limitations and future works

The research only focused on the design and validation of models for physical education teaching in practical applications. In the future, the models can be applied to other educational fields to improve the quality of education. In terms of implementation, we can explore how to apply the AICNN model in more educational scenarios and how to combine the model with other educational methods to better meet students’ personalized learning needs. In addition, other deep learning models can be attempted to improve the performance and robustness of the model.

Data availability

The data supporting the findings of this study are available within the article. Additional datasets used and/or analyzed during the current study are available from the corresponding author upon reasonable request.

References

Szatkowski, M. Analysis of the sports model in selected Western European countries. J. Phys. Educ. Sport. 22(3), 829–839 (2022).

De Bock, T. et al. Sport-for-All policies in sport federations: An institutional theory perspective. Eur. Sport Manage. Q. 23(5), 1328–1350 (2023).

Zhao, Y., Guo, M., Sun, X., Chen, X. & Zhao, F. Attention-based sensor fusion for emotion recognition from human motion by combining convolutional neural network and weighted kernel support vector machine and using inertial measurement unit signals. IET Signal Proc. 17(4), 12201–12212 (2023).

Kesavavarthini, T., Rajesh, A. N., Srinivas, C. V. & Kumar, T. V. L. Bias correction of CMIP6 simulations of precipitation over Indian monsoon core region using deep learning algorithms. Int. J. Climatol. 43(8), 3749–3767 (2023).

Zhou, W., Guo, B. & Cao, F. Hybrid neural network-based exploration on the influence of continuous sensor data for the balancing ability of aerobics students. Wirel. Netw. 29(8), 3679–3692 (2023).

Tang, T., Hsu, H. & Li, K. Industrial anomaly detection with multiscale autoencoder and deep feature extractor-based neural network. IET Image Process. 17(1), 1752–1761 (2023).

Liu, C., Sepasgozar, S. M. E., Shirowzhan, S. & Mohammadi, G. Applications of object detection in modular construction based on a comparative evaluation of deep learning algorithms. Constr. innovation: Inform. process. Manage. 22(1), 141–159 (2022).

Amirabadi, M. A., Kahaei, M. H. & Nezamalhosseni, S. A. Low complexity deep learning algorithms for compensating atmospheric turbulence in the free space optical communication system. IET Optoelectron. 16(3), 93–105 (2022).

Arif, O. Prediction of daily average seawater temperature using data-driven and deep learning algorithms. Neural Comput. Appl. 36(1), 365–383 (2024).

Shen, B. Text complexity analysis of college English textbooks based on blockchain and deep learning algorithms under the internet of things. Int. J. Grid Util. Comput. 14(2), 146–155 (2023).

MacPhail, A., Tannehill, D., Leirhaug, P. E. & Borghouts, L. Promoting instructional alignment in physical education teacher education. Phys. Educ. Sport Pedag. 28(2), 153–164 (2023).

Zhang, L. Evaluation system of college physical education teaching reform based on wireless sensor network. J. Comput. methods Sci. Eng. 22(2), 373–384 (2022).

Ma, H. Design and application of teaching resources sharing platform for physical education major based on internet. J. Phys.: Conf. Ser. 1992(2), 22197–22203 (2021).

Guo, L., Du, J. & Zheng, Q. Understanding the evolution of cognitive engagement with interaction levels in online learning environments: Insights from learning analytics and epistemic network analysis. J. Comput. Assist. Learn. 39(3), 984–1001 (2023).

Liu, L., Zhao, B. & Rao, Y. On the cognitive load of online learners with multi-level data mining. Int. J. Inform. Commun. Technol. Educ.: Offi. Pub. Inform. Resour. Manage. Assoc. 18(1), 134–148 (2022).

Yang, S. et al. Cognitive diagnosis-based personalized exercise group assembly via a multi-objective evolutionary algorithm. IEEE Trans. Emerg. Top. Comput. Intell. 7(3), 829–844 (2023).

Shah, V. et al. Learner-centric MOOC model: A pedagogical design model towards active learner participation and higher completion rates. Educ. Tech. Res. Dev. 70(1), 263–288 (2022).

Ou, Z. et al. Early identification of stroke through deep learning with multi-modal human speech and movement data. Neural Regen. Res. 20(1), 234–241 (2024).

Yang, H., Zhuang, Z. & Pan, W. A graph convolutional neural network for gene expression data analysis with multiple gene networks. Stat. Med. 40(25), 5547–5564 (2021).

Bustos, F. J. Unveiling the brain’s symphony: Exploring the necessity and sufficiency of neural networks in behavior control. Neural Regen. Res. 20(1), 186–187 (2024).

Purohit, J. & Dave, R. Leveraging deep learning techniques to obtain efficacious segmentation results. Archi. Adv. Eng. Sci. 1(1), 11–26 (2023).

Funding

The authors declare that no funds, grants, or other support were received during the preparation of this manuscript.

Author information

Authors and Affiliations

Contributions

L.Z. data collection and analysis; G.W. conception and design; W.S. material preparation; X.M. review the manuscript.

Corresponding author

Ethics declarations

Competing interests

The authors declare no competing interests.

Ethics approval

The studies titled “Conceptual Understanding and Cognitive Patterns Construction for Physical Education Teaching Based on Deep Learning Algorithms” involving human participants were reviewed and approved by School of Marxism, China University of Political Science and Law (CUPL). The participants provided their written informed consent to participate in this study.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Zhao, L., Wu, G., Shao, W. et al. Conceptual understanding and cognitive patterns construction for physical education teaching based on deep learning algorithms. Sci Rep 14, 31409 (2024). https://doi.org/10.1038/s41598-024-83028-9

Received:

Accepted:

Published:

Version of record:

DOI: https://doi.org/10.1038/s41598-024-83028-9