Abstract

Colorectal cancer (CRC) is the second popular cancer in females and third in males, with an increased number of cases. Pathology diagnoses complemented with predictive and prognostic biomarker information is the first step for personalized treatment. Histopathological image (HI) analysis is the benchmark for pathologists to rank colorectal cancer of various kinds. However, pathologists’ diagnoses are highly subjective and susceptible to inaccurate diagnoses. The improved diagnosis load in the pathology laboratory, incorporated with the reported intra- and inter-variability in the biomarker assessment, has prompted the quest for consistent machine-based techniques to be integrated into routine practice. In the healthcare field, artificial intelligence (AI) has achieved extraordinary achievements in healthcare applications. Lately, computer-aided diagnosis (CAD) based on HI has progressed rapidly with the increase of machine learning (ML) and deep learning (DL) based models. This study introduces a novel Colorectal Cancer Diagnosis using the Optimal Deep Feature Fusion Approach on Biomedical Images (CCD-ODFFBI) method. The primary objective of the CCD-ODFFBI technique is to examine the biomedical images to identify colorectal cancer (CRC). In the CCD-ODFFBI technique, the median filtering (MF) approach is initially utilized for noise elimination. The CCD-ODFFBI technique utilizes a fusion of three DL models, MobileNet, SqueezeNet, and SE-ResNet, for feature extraction. Moreover, the DL models’ hyperparameter selection is performed using the Osprey optimization algorithm (OOA). Finally, the deep belief network (DBN) model is employed to classify CRC. A series of simulations is accomplished to highlight the significant results of the CCD-ODFFBI method under the Warwick-QU dataset. The comparison of the CCD-ODFFBI method showed a superior accuracy value of 99.39% over existing techniques.

Similar content being viewed by others

Introduction

Colorectal cancer (CRC) is ranked third for incidence (6.1%) and second in fatality rate (9.2%) around the world. With regard to new cases and deaths, the global CRC burden will continue to be increased by 60% in 20301. The prompt and accurate diagnosis of CRC is crucial to optimize the efficiency of treatment and survivorship. The existing CRC diagnoses need visual monitoring with the help of skilled pathologists. Diagnosis is implemented by digital whole-slide images (WSIs) of the hematoxylin and eosin (H&E)-stained samples attained from frozen or formalin-fixed paraffin-embedded (FFPE) tissues2. In general, it is made through pathologists that automatically analyze the HIs of CRC tissues that remain the standard for tumour diagnosis and staging. On the other hand, the training, time assessment/pressure condition, and experience of pathologists may result in diagnosis judgment3. Thus, the automated classification of CRC for reasonable evaluation has considerable pathological significance. The pathologist’s state and the specific disease entities may vary according to the pathologist’s experience4. Moreover, the circling determination of cancer region and tumour content on H&E-stained samples to detect lesion regions for the downstream genomic analysis is a vital pre-analytical step to guarantee precise determination and improve the tumour content of genetic variants5. Early diagnosis of cancer has contributed significantly to diagnoses and increased the survival rate. Medical imaging techniques assist in earlier diagnosis and detection of cancer. As a result, medical imaging has been utilized to diagnose, detect, and classify tumours6.

The medical imageries are manually interpreted, but it is a time-consuming and very tedious process and may have errors because humans suffer from distractions, fatigue, and so on. This resulted in the implementation of a computer-aided diagnosis (CAD) scheme in the early 80s that assisted clinicians and doctors in interpreting medical images7. Machine Learning (ML) approaches and medical images are utilized in CAD systems. In ML methods, the feature extraction of images is the key step. Several researchers have identified various feature extraction methods for different medical imaging modalities and multiple kinds of cancer8. Deep learning (DL) is a state-of-the-art technique considered an advancement of ML because it uses numerous layers of NN to learn and progressively extract abstract features to decrease human interference in detecting image classes9. Lately, Convolutional neural networks (CNN) have shown promising solutions in image classification in the DL field, where an NN might have hundreds or dozens of layers to learn images with various characteristics. A convolution layer has a small kernel for generating advanced features and passes over an activation function as an output10. The major benefit of using CNN over a classical NN is that the model parameter is reduced for accurate outcomes.

This study introduces a novel Colorectal Cancer Diagnosis using the Optimal Deep Feature Fusion Approach on Biomedical Images (CCD-ODFFBI) method. The primary objective of the CCD-ODFFBI technique is to examine the biomedical images to identify colorectal cancer (CRC). In the CCD-ODFFBI technique, the median filtering (MF) approach is initially utilized for noise elimination. The CCD-ODFFBI technique utilizes a fusion of three DL models, MobileNet, SqueezeNet, and SE-ResNet, for feature extraction. Moreover, the DL models’ hyperparameter selection is performed using the Osprey optimization algorithm (OOA). Finally, the deep belief network (DBN) model is employed to classify CRC. A series of simulations highlights the significant results of the CCD-ODFFBI method under the Warwick-QU dataset.

Literature review

Hicham et al.11 developed a CAD system using CT colonography data to prevent CRC by categorizing scans as polyp or polyp-free. After the preprocessing stage, a DL approach is introduced with two variations: a 3D CNN-BN and a 3D CNN-BN & Dropout. 3D CT scans of the abdomen classification based on the polyps’ presence or absence using CNN is primordial to improve the possibility of earlier diagnosis. In12, a unique diagnostic tool, such as complementary Artificial Intelligence algorithms and Vision-based Surface Tactile Sensor (VS-TS), was presented. This model used statistical analysis (accuracy, sensitivity, and reliability) and Support Vector Machine (SVM) models. Using the VS-TS, the tumour types are classified by using the SVM model and employing the t-distributed Stochastic Neighbor Embedding approach to effectively detect the difficulty of polyp phantom classified according to the output image. In13, proposed a CNN-based DL approach. First, guided image filter and dynamic histogram equalization techniques filter and enhance the CRC images. Afterwards, a Single Shot MultiBox Detector (SSD) is utilized to recognize and categorize colorectal polyps from CRC images effectively. Lastly, a fully connected (FC) layer with dropout is used for the polyp classification. Alqushaibi et al.14 introduce an effective method for image synthesis and CRC segmentation, integrating an Attention U-Net and Pix2Pix Generative Adversarial Network (Pix2Pix-GAN) method guided by Sine Cosine Algorithm (SCA) for hyperparameter tuning within the GAN model. Using SCA has been instrumental in enhancing the fine balance between discriminator and generator dynamics. Mohamed et al.15 designed a strong CRC cancer diagnosis technique based on the feature selection approach. Initially, the images’ feature is extracted according to the CNN model. Squeezenet, Resnet50, AlexNet, and GoogleNet are utilized for the CNN. Next, the metaheuristic approach is used to reduce the feature count. This study exploits the grasshopper optimization algorithm to select the optimum features from the dataset. Ragab et al.16 developed an intelligent DL-based CC detection and classification (IDL-CCDC) approach. The proposed method includes a fuzzy filtering method for the noise reduction method. Furthermore, a water wave optimization (WWO) based EfficientNet architecture is applied for feature extraction. Additionally, chaotic glowworm swarm optimization (CGSO) based variational autoencoder (VAE) is employed for the CC segmentation into malignant or benign. The proposed model assists in increasing the overall classifier performance. Alzubaidi et al.17 present an effective pre-trained model for diagnosing and classifying CRC. First, the benchmark data of Warwick-QU is gathered to estimate effectiveness. Next, preprocessing is performed using noise removal and contrast enhancement. Lastly, the extracted feature outcomes are categorized using Mask Recurrent-CNN (Mask RCNN).

In18, a new architecture based on UNet for cancer segmentation is called Fovea-UNet. A pooling operation known as the Fovea Pooling (FP) model is developed to aggregate detailed and non-local context data based on the importance of pixel-level features. Furthermore, the lightweight backbone network with GhostNet is applied to lower the computation cost. Karaman et al.19 aim to improve real-time colorectal cancer (CRC) polyp detection systems by incorporating the artificial bee colony (ABC) method with the You Only Look Once (YOLO) object detection algorithm. Nur-A-Alam et al.20 present an ensemble ML approach for detecting colorectal cancer from colonoscopy images, utilizing preprocessing, feature fusion, and an ensemble classifier to improve accuracy in cancer or polyp detection. Obayya et al.21 present the Biomedical Image Analysis for Colon and Lung Cancer Detection using Tuna Swarm Algorithm with Deep Learning (BICLCD-TSADL) model for colon and lung cancer detection, utilizing Gabor filtering (GF) for preprocessing, GhostNet for feature extraction, AFAO for hyperparameter tuning, and Tuna Swarm Algorithm (TSA) with Echo State Network (ESN) for classification. Pacal and Karaboga22 improve YOLOv4 for real-time polyp detection by utilizing CSPNet, Mish activation, and DIoU loss. The study also optimizes YOLOv3/YOLOv4 with ResNet, VGG, DarkNet53, and Transformers, applying data augmentation, ensemble learning (EL), and NVIDIA TensorRT. Raju and Venkatesh23 introduce an EnsemDeepCADx system by integrating CNNs with TL via BILSTM and SVM. The model also utilizes pre-trained models, namely AlexNet, DarkNet-19, DenseNet-201, and ResNet-50, and features fusion from various image datasets with multiple CNN ensembles and SVM to optimize accuracy. Abd El-Aziz et al.24 propose a refined DL methodology for multi-classifying lung and colon cancers, incorporating ResNet-101V2, NASNetMobile, and EfficientNet-B0. Xia, Yun, and Liu25 propose two essential modules, the Multi-Scale Feature Fusion Block (MSFFB) and the Reducing Difference Block (RDB), to improve feature interactions and long-distance dependencies. Furthermore, Polarized Self-Attention (PSA) and Balancing Attention Module (BAM) refine local regions and improve foreground-background details. Pacal26 proposes an improved Swin Transformer with a Hybrid Shifted Windows Multi-Head Self-Attention (HSW-MSA) module and a Residual-based MLP (ResMLP) to improve accuracy, reduce memory usage, and speed up training. Karaman et al.27 present a DL approach using YOLOv5 for polyp detection optimized with the ABC model to enhance activation functions and hyper-parameters. Pacal28 introduced the Multi-Axis Vision Transformer (MaxViT), optimized for Pap smear data with a lightweight structure for better accuracy and speed. The study improves performance by replacing MBConv blocks with ConvNeXtv2 and MLP blocks with GRN-based MLPs.

Dwivedi, Srivastava, and Pradhan29 propose a novel nested feature fusion method by utilizing pre-trained EfficientNet models for early detection and classification of colorectal carcinoma. Pacal et al.30 propose DL methods for reliable polyp detection, improving YOLOv3 and YOLOv4 by integrating CSPNet for real-time performance. The study also uses advanced data augmentation and transfer learning (TL) and replaces activation functions with SiLU for improved detection, along with CIoU as the loss function. Singh et al.31 propose an ensemble classifier by integrating Random Forest (RF), SVM, and Logistic Regression (LR), using majority voting for predictions. Deep features from lung and colon images are extracted with VGG16 and LBP and then incorporated for classification. Sangeetha et al.32 present a Multimodal Fusion Deep Neural Network (MFDNN) methodology to integrate medical imaging, genomics, and clinical data for improved lung cancer diagnosis. It also discusses the ethical considerations, validation, and regulatory needs for deploying AI in clinical settings. Poalelungi et al.33 emphasize the importance of collaboration between physicians and tech experts to leverage AI’s potential fully. Sureshkumar et al.34 present a hybrid CAD model integrating CNN and pruned ensembled extreme learning machine (HCPELM) for breast cancer detection, utilizing ReLU activation and feature extraction with convolutional and fully connected layers. ELM handles classification, and TL mitigates parameters for easier detection. Srivastava, Chauhan, and Pradhan35 apply EL with Differential Evolution optimization and Condorcet’s Jury Theorem for lung and colon cancer detection, improving classification performance and reducing computational efforts. Gowthamy and Ramesh36 integrate pre-trained DL approaches, namely ResNet-50, InceptionV3, and DenseNet, with the Kernel Extreme Learning Machine (KELM) model for accurate lung cancer diagnosis using histopathology images. Feature fusion improves classification, while the Mutation Boosted Dwarf Mongoose Optimization Algorithm (MB-DMOA) optimizes model parameters for better accuracy and faster convergence. Ho et al.2 develop and validate a unique AI DL method by incorporating Faster-RCNN for glandular segmentation and a classical ML classifier to assist pathologists in screening colorectal specimens for malignancies, enhancing cancer detection with high sensitivity. Raju et al.37 developed TumorDiagX, a framework integrating DL and computer vision (CV) for precise cancer detection. The framework computes multiple CNNs, integrates diverse networks to improve detection, and utilizes U-Net for image segmentation to enhance the detection of malignant lesions.

Existing studies mainly depend on limited datasets, which affect the generalization of models to diverse populations and real-world scenarios. Many approaches must also address the challenge of varying image quality and artefacts, which can affect performance. Additionally, more research must be done on incorporating real-time diagnostic capabilities and confirming model interpretability for clinical adoption. These gaps emphasize the requirement for more robust, scalable solutions with improved adaptability and transparency in healthcare applications. Furthermore, there is a requirement for improved generalization of techniques across diverse patient populations and medical conditions to confirm widespread applicability and accuracy.

Materials and methods

This work introduces a novel CCD-ODFFBI technique. The major aim of the technique is to examine biomedical images for the identification of CRC. It encompasses different processes involved, such as noise removal, feature fusion, hyperparameter selection, and DBN-based CRC classification. Figure 1 establishes the entire flow of the CCD-ODFFBI method.

Working flow of the CCD-ODFFBI method.

Noise removal process

Initially, the CCD-ODFFBI technique utilizes the MF approach for the noise elimination process38. This model is chosen for noise elimination due to its simplicity, efficiency, and ability to preserve edges while removing noise. Unlike linear filters, MF is a nonlinear technique that replaces each pixel with its neighbours’ median, making it specifically effectual in reducing impulsive noise (e.g., salt-and-pepper noise) without blurring sharp edges. This is crucial in medical image processing, where retaining structural details is vital for precise diagnosis. Moreover, MF is computationally efficient and easy to implement, making it appropriate for real-time applications. Unlike other noise reduction methods like Gaussian or Wiener filtering, MF performs better in dealing with extreme noise values while maintaining essential image features. Figure 2 illustrates the structure of the MF model.

MF workflow.

Median filter (MF) is a popular image preprocessing method that eliminates noise while retaining edges. It moves a window of a predetermined size over all the image pixels, replacing the central pixel values with the median pixel value within the window. Unlike the mean filter, which could blur edges, MF is highly efficient in removing salt-and-pepper noise, a well-known type of impulsive noise where the pixel value is considerably distinct from the surroundings. The nonlinear nature of MF enables it to smoothen noise while retaining quick changes between dissimilar regions in an image, which makes it especially helpful in scenarios where edge preservation is crucial.

Feature extraction process

For feature extraction, the CCD-ODFFBI technique employs a fusion of three DL methods: MobileNet, SqueezeNet, and SE-ResNet. These techniques are chosen due to the complementary merits of every model in capturing both fine-grained and large-scale features. MobileNet is lightweight and optimized for efficiency, making it appropriate for mobile and edge computing environments. At the same time, SqueezeNet outperforms in mitigating the number of parameters without sacrificing accuracy, ideal for applications requiring low computational resources. With its attention mechanism, SE-ResNet improves feature representation by emphasizing crucial features and suppressing irrelevant ones, resulting in improved performance in complex image recognition tasks. By integrating these models, the system benefits from a balance of efficiency, accuracy, and adaptability, making it more robust than depending on any single model. This fusion approach presents the potential to attain greater performance while minimizing the computational burden, which is critical in real-time applications.

MobileNet model

MobileNet is a DL method proposed to be employed in low-cost hardware devices39. Object classification, identification, and segmentation are executed utilizing the MobileNet method. The MobileNet technique was recognized as MobileNet-V1, and MobileNet-V2 models were developed from the MobileNet-V1. When equated to the MobileNet-V2 method with the previous form, this novel technique provides the main contribution to the issues of linearity among the layers. If a linear bottleneck arises among the layers, the issues are set in this form. Its input size is \(\:224\text{x}224\) pixels, and its structure contains an in-depth (DW) separable filter. The performance of the model upsurges as it inspects DW, and the input feature is separated into dual layers. All the layers are sub-divided into the subsequent ones by uniting them with an output feature till the procedure is done. MobileNet-V2 technique utilizes ReLU among layers. Therefore, it permits the nonlinear output from the preceding layer to be linearly conveyed as an input to the subsequent layers in the future. The method ensures its training procedure till an easy step is made. In this method, the convolution layer spreads filters over input imageries and generates activation mappings. The activation mapping covers the feature and moves to the subsequent layers. Also, the pooling layer is employed in the MobileNet-V2 method. The matrices attained over these layers are transformed into small sizes. The mobileNet-V2 method was used as pre-trained, and the SVM technique was utilized in the stage of classification. Figure 3 depicts the working flow of the MobileNet model.

Workflow of the MobileNet technique.

SqueezeNet model

SqueezeNet is a thorough learning method of input size \(\:224\text{x}224\) pixels, including pooling, convolutional, fire, and ReLU layers. The SqueezeNet doesn’t contain full connection (FC) and dense layers. On the other hand, the Fire layer executes the function of these parallel layers. The benefit is that it executes surveys effectively by decreasing the number of parameters, thus declining the dimension of model ability. The squeezeNet method formed further effective outcomes, reducing the model cost. While the data regarding the layers is given in the MobileNet-V2 method, the Fire (F2, F3, and F9) layers look like a novel layer containing dual parts, i.e. Expansion and Compression. This method utilizes a \(\:1\text{x}1\) convolution filter to input imagery in the Compression part. Meanwhile, the expansion part utilizes \(\:1\text{x}1\) and \(\:3\text{x}3\) convolution filters for the input imagery. The Expansion and Compression retain similar sizes of feature maps. In the Compression part, an input image depth is decreased and enlarged. Next, the depth is enlarged in the Expansion part. Figure 4 demonstrates the SqueezeNet architecture.

Workflow of the SqueezeNet method.

SE-ResNet Model

The SE attention module is a sign of channel attention40. It mainly concentrates on the issue of interdependence among networks. The convolution (Conv) function initially combines every input channel and then totals the outcomes of Conv for every channel. This permits the spatial feature to be merged with the channel’s feature, resulting in a main diverse feature set. The SE element extracts this confusion and lets the DL technique absorb the channel feature straight. SE takes out the interdependency among feature channels, attaining the significance of every network. Every channel feature is biased, emphasizing significant features and overwhelming secondary ones. The SE module is effortlessly accessed to other network structures. This module contains three essential processes: excitation, compression, and measurement. \(\:W\), \(\:H\), and \(\:C\) denote width, height, and channels, correspondingly. Figure 5 shows the architecture of the SE-ResNet model.

Architecture of SE-ResNet Model.

Squeeze uses global pooling to reduce the spatial features of every network into a particular overall feature, efficiently incorporating the data from every network feature. Then, an FC layer is united to evaluate the importance of every channel depending upon the compacted global features attained. The weight value for every channel is defined by the SE module, which is multiplied by the matrix equivalent to the separate network in the new feature mapping. ResNet has a standard application in feature extraction through various areas. The ResNet module uses the shallow feature to get additional vital features. The residual element is employed as the foremost feature extractor structure in feature detection and identification tasks. After conducting numerous experiments, it was definite that the SE attention module and ResNet50 model should be employed in this research work:

Hyperparameter selection

Meanwhile, the DL models’ hyperparameter selection is performed using OOA. The OOA comprises two stages: the primary stage contains osprey finding the place of the fish and catching it (global exploration), and the secondary stage contains bringing the fish to a safe place to eat (local exploitation)41. This method was selected due to its robust exploration and exploitation capabilities, inspired by the natural hunting behaviour of osprey birds. OOA effectually balances global search and local refinement, confirming that it averts premature convergence while effectually narrowing down the optimal hyperparameter space. This makes it specifically appropriate for intrinsic DL methods that require fine-tuning to attain high accuracy. Additionally, the capability of the OOA model to handle large search spaces and multidimensional optimization problems enables it to work well with deep neural networks, where hyperparameter interactions are often complex. OOA gives a more adaptive and computationally efficient solution than other optimization techniques, such as grid or random search, resulting in enhanced performance and faster convergence. Its flexibility also allows for easy adaptation to different architectures, making it a versatile tool in hyperparameter optimization. Figure 6 represents the OOA structure.

Steps involved in the OOA methodology.

1) Population initialization.

The OOA is stimulated by hunting osprey behaviour by employing search and predation approaches to determine optimum performance for engineering problems. During this OOA, every osprey signifies the probable solution with its location under the searching space equivalent to the variable rates of the problem. Every osprey is defined by a vector, with all the elements equivalent to a problem variable rate. This method examines the complete solution space to acquire the optimum performance. The osprey population is a mathematical model demonstrated as a matrix (Eq. (1)). Primarily, the osprey positions are randomly initialized utilizing Eq. (2) for distributing them throughout the search space, so improving the search range.

Whereas \(\:n=\text{1,2},\cdots\:\:,\:N,m=\text{1,2},\cdots\:,\:M,\:\:X\) refers to the population matrix of osprey places, \(\:{X}_{n}\) stands for the \(\:{n}^{th}\) osprey (candidate performances), \(\:{x}_{n,m}\) denotes the \(\:{m}^{th}\) dimensional (problem variable), \(\:{N}_{o}\) signifies the osprey counts, \(\:M\) refers to the size of the variable, \(\:{r}_{n,m}\in\:\left[\text{0,1}\right]\) represents the random number, \(\:l{b}_{m}\), and \(\:u{b}_{m}\) stands for the lower and upper boundaries of \(\:{m}^{th}\) problem variable, correspondingly.

The fitness value of all the osprey’s items has been computed depending on the matching objective function (OF) values. Equation (3) defines the fitness values of every osprey, which are utilized to assess the quality of all the solutions.

In which \(\:F\) defines the vector OF values, and \(\:{F}_{n}\) represents the vector of the OF values for \(\:{the\:n}^{th}\) osprey.

2) Global exploration.

Once the fish were caught, the osprey launched an attack to catch them. During this OOA, hunting is demonstrated in the primary phase of population renewal. By simulating, the osprey positions in the population are changed dramatically, improving the exploratory capability of the method for identifying the optimum area and escape from the local optimum. During this OOA design, the set of positions for all the ospreys is expressed in Eq. (4).

\(\:F{P}_{n}\) refers to the set of positions, but fish is locked for \(\:{n}^{th}\) osprey, and \(\:{X}_{best}\) represents the osprey with the best position. The osprey randomly finds the fish’s position and initiates an attack. The effort of the osprey nearby fish is simulated and the osprey position is measured using Eqs. (5) and (6), correspondingly. Once the novel position enhances the OF value, the osprey position is upgraded based on Eq. (7).

Whereas \(\:{x}_{n}^{P1}\) denotes the novel place of \(\:{n}^{th}\) osprey at the initial stage, \(\:{x}_{n,m}^{P1}\) implies the novel place of \(\:{n}^{th}\) osprey at the initial stage, in its \(\:{m}^{th}\) dimensional, \(\:{F}_{n}^{P1}\) stands for the OF in its \(\:{m}^{th}\) dimensional, \(\:S{F}_{n}\) represents the fish chosen by \(\:{n}^{th}\) osprey, \(\:S{F}_{n,m}\) is its \(\:{m}^{th}\) dimensional, \(\:{r}_{n,m}\in\:\left[\text{0,1}\right]\) denotes the random number, and \(\:{I}_{n,m}\in\:\left\{\text{1,2}\right\}\) refers to the random number.

3) Localized exploitation.

During the OOA design, a novel random location was calculated for all the individuals from the population utilizing Eqs. (8) and (9), which signifies the position of an appropriate predatory fish. Once the OF value is enhanced at this novel place, the preceding location of Osprey has been upgraded based on Eq. (10).

In this case, \(\:{I}_{k}\) defines the iteration counter of the algorithm, \(\:{and\:I}_{k}=\text{1,2},\cdots\:T\) and \(\:T\) demonstrate the entire iteration count.

Whereas \(\:{x}_{n}^{P2}\) illustrates the novel place of \(\:{n}^{th}\) osprey, \(\:{x}_{n,m}^{P2}\) represents the novel position of \(\:{n}^{th}\) osprey from the \(\:{m}^{th}\) dimensional, \(\:{F}_{n}^{P2}\) signifies the OF, \(\:S{F}_{n}\) denotes the fish chosen by the \(\:{n}^{th}\) osprey, \(\:S{F}_{n,m}\) defines its \(\:{m}^{th}\) dimensional, and \(\:{r}_{n,m}\in\:\left[\text{0,1}\right]\) is a random number.

The OOA derives an FF to gain superior results of the classifier. It defines a positive integer to describe the enhanced efficiency of the solution candidate. Here, the reduction of the classifier error rate is assumed as the FF.

CRC classification using DBN

Lastly, the classification of CRC is implemented by using the DBN model42. This technique is chosen due to its capability to learn hierarchical representations of data through diverse layers of abstraction. DBNs are a DL method that outperforms extracting complex patterns from massive datasets, which is specifically useful for medical image analysis like CRC detection. Using unsupervised pretraining and fine-tuning, DBNs can effectually handle high-dimensional data and improve generalization, making them ideal for tasks with limited labelled data. Compared to conventional ML models, DBNs have shown superior performance in capturing nonlinear relationships and detecting subtle features critical in CRC detection. Furthermore, DBNs can be trained to automatically learn crucial features from raw data, reducing the requirement for manual feature engineering and allowing for a more robust and scalable model. Their capacity to model intrinsic decision boundaries makes DBNs highly effectual for classifying CRC cases, giving more accurate and reliable predictions. Figure 7 portrays the DBN architecture.

DBN architecture.

RBM is a special kind of Markov Random Filed (MRF). And is a two-layer stochastic network. Its two layers are the visible layer (VL) and the hidden layer (HL). The neurons in the VLs and HLs are fully connected and bidirectional. In a binary RBM, based on the VL parameters \(\:v=({v}_{1},\:{v}_{2},\:\dots\:,\:{v}_{n})\) and HL parameters\(\:\:h=({h}_{1},\:{h}_{2},\:\dots\:,\:{h}_{m})\), the joint distribution of the RBM’s VL and HL is represented as

Where

the normalization factor is called the partition function, and \(\:E(v,\:h)\) is the energy function.

The unit’s connection weight and bias define the probability distribution over the binary state vector \(\:v\) of the VL unit through the energy function.

Where \(\:\theta\:=(w,\:b,\:a)\) and \(\:{w}_{ij}\) signifies the symmetric interaction between \(\:{the\:i}^{th}\) and \(\:{j}^{th}\) VL and HL units, and \(\:{b}_{i}\) and \(\:{a}_{j}\) represent their biased terms. V and \(\:H\) are the number of VL and HL units. The model assigns a probability to the VL vector \(\:v\) is

The factorial \(\:p\left(v|h\right)\) and \(\:p\left(h|v\right)\) are conditional distributions because there are no visible-visible or hidden‐hidden links, as follows:

where \(\:\sigma\:\left(x\right)=(1+\text{e}\text{x}\text{p}\left(-x\right){)}^{-1}\). The RBM is trained to model the joint distribution of data and class labels; the VL vectors are connected to the binary vector.

Furthermore, \(\:p\left(1\right|v)\) is computed exactly by

The value of \(\:p\left(1\right|v)\) is efficiently calculated using the conditional independence of the HL units, which makes the marginalization time of the HL units linear in the number of HL units.

RBM has two training algorithms: gradient method and Contrastive Divergence (CD). Where the gradient method treats \(\:logp\left(v,\:\theta\:\right)\) as a likelihood function, which does not change monotonicity. The parameters are corrected along the gradient \(\:\partial\:logp(v,\:\theta\:)/\partial\:\theta\:\) to achieve higher learning efficiency. The details are as follows:

-\(\:\frac{\partial\:logp(v,{w}_{ij})}{\partial\:{w}_{ij}}={E}_{v}\left[p\left({h}_{i}|v\right)\text{*}{v}_{j}\right]-{v}_{j}^{\left(i\right)}\text{*}f\left({w}_{i}\text{*}{v}^{\left(i\right)}+{b}_{i}\right),\)

The update rule for the visible-hidden weights is based on the gradient of the joint probability function of the data and labels.

The expectation \(\:<{v}_{i}{h}_{{j}^{>}data}\) is the frequency of simultaneous occurrences of the VL unit \(\:{v}_{i}\) and the HL unit \(\:{h}_{j}\) in the training set. To accurately compute the \(\:<\cdot\:{>}_{mode1}\), it takes an exponential amount of time, so the CD approximation of the gradient is used. As shown in the following equation:

Where \(\:<\cdot\:>1\) denotes the expected value of the distribution of samples for running the Gibbs sampler, which is initialized with data and run for a whole step.

The energy of joint configuration for Gaussian-Bernoulli RBM is

The conditional distribution \(\:p(v;h,\:\theta\:)\) is a factorial distribution value because there is no visible-visible connection,

Here, \(\:N(\mu\:,\:V)\) refers to a Gaussian with mean \(\:\mu\:\) and variance \(\:V\). Besides, the Gaussian-Bernoulli RBM has the exact inference and learning rules as the binary RBM, except that the learning rate needs to be smaller.

A single hidden layer RBM needs to capture data features more precisely. After training the RBM, the learned features are fed into a second RBM as an input dataset. This layer-by‐layer learning system is used to build DBN. DBN is a DNN with multiple RBMs and a BPNN. As with other DNNs, the key of DBN is to initialize an FFNN with unsupervised pretraining using an unlabeled dataset, then fine‐tune the FFNN using labelled data. The initial RBM is trained using the CD method during the pretraining phase. The learning states of the HL units in the first RBM are used as input data for the VL units of the second RBM. The weights of all RBMs are trained layer‐by‐layer in the same way until the last RBM. The features of the previous RBM are those learned by the whole training system. The FFNN will use the highest RBM weights as its initial weights when the unsupervised pretraining of the RBM is finished. Using a back-propagation algorithm, the FFNN is subsequently trained or fine‐tuned on the labelled training data.

Performance validation

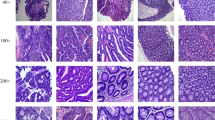

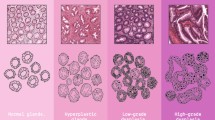

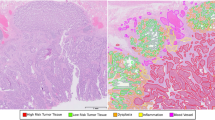

The simulation analysis of the CCD-ODFFBI method is examined using the Warwick-QU dataset43. The dataset contains 165 sample images with two classes illustrated in Table 1. Figure 8 exhibits the sample images.

Sample images.

Figure 9 shows the confusion matrices produced by the CCD-ODFFBI method in different epochs. The outcomes show that the CCD-ODFFBI method effectively detects benign and malignant samples under different classes.

Confusion matrices of CCD-ODFFBI technique (a-f) Epochs 500–3000.

Table 2 illustrates the overall classification outcomes of the CCD-ODFFBI technique under various epochs. The outcomes imply that the CCD-ODFFBI method has properly detected the benign and malignant samples. With 500 epochs, the CCD-ODFFBI technique gains an average \(\:acc{u}_{y}\) of 98.79%, \(\:pre{c}_{n}\) of 98.77%, \(\:sen{s}_{y}\) of 98.77%, \(\:spe{c}_{y}\) of 98.77%, and \(\:{F}_{score}\) of 98.77%. In addition, with 1000 epochs, the CCD-ODFFBI technique gains an average \(\:acc{u}_{y}\) of 99.39%, \(\:pre{c}_{n}\) of 99.46%, \(\:sen{s}_{y}\) of 99.32%, \(\:spe{c}_{y}\) of 99.32%, and \(\:{F}_{score}\) of 99.39%. Moreover, with 1500 epochs, the CCD-ODFFBI method obtains an average \(\:acc{u}_{y}\) of 96.36%, \(\:pre{c}_{n}\) of 96.91%, \(\:sen{s}_{y}\) of 95.95%, \(\:spe{c}_{y}\) of 95.95%, and \(\:{F}_{score}\) of 96.29%.

Figure 10 shows the CRC detection outcomes of the CCD-ODFFBI method under epochs 2000–3000. The result indicates that the CCD-ODFFBI approach has accurately detected the benign and malignant samples. With 2000 epochs, the CCD-ODFFBI approach obtains an average \(\:acc{u}_{y}\) of 96.97%, \(\:pre{c}_{n}\) of 97.00%, \(\:sen{s}_{y}\) of 96.87%, \(\:spe{c}_{y}\) of 96.87%, and \(\:{F}_{score}\) of 96.93%. Moreover, with 2500 epochs, the CCD-ODFFBI method obtains an average \(\:acc{u}_{y}\) of 98.18%, \(\:pre{c}_{n}\) of 98.23%, \(\:sen{s}_{y}\) of 98.10%, \(\:spe{c}_{y}\) of 98.10%, and \(\:{F}_{score}\) of 98.16%. Furthermore, with 3000 epochs, the CCD-ODFFBI method obtains an average \(\:acc{u}_{y}\) of 97.30%, \(\:pre{c}_{n}\) of 97.89%, \(\:sen{s}_{y}\) of 97.30%, \(\:spe{c}_{y}\) of 97.30%, and \(\:{F}_{score}\) of 97.54%.

Average outcome of CCD-ODFFBI technique (a-c) Epochs 2000–3000.

In Fig. 11, the training and validation accuracy outcomes of the CCD-ODFFBI technique are described. The accuracy value is calculated within the range of 0-1000 epochs. The figure shows that the training and validation accuracy value shows a growing tendency, which indicates the capability of the CCD-ODFFBI approach with enriched performance over dissimilar iterations. Furthermore, the training and validation accuracy remain closer over the epochs, which exhibits enhanced performance and indicates minimal overfitting of the CCD-ODFFBI approach, which guarantees consistent prediction on hidden samples.

\(\:Acc{u}_{y}\) curve of CCD-ODFFBI technique under 1000 epochs

In Fig. 12, the training and validation loss graph of the CCD-ODFFBI method is demonstrated. The loss value is calculated within the range of 0-1000 epochs. It is signified that the training and validation accuracy values demonstrated a declining tendency, which notified the capability of the CCD-ODFFBI method in balancing a tradeoff between data fitting and generalization. The continual reduction in loss values additionally ensures the superior performance of the CCD-ODFFBI method and tunes the predictive outcomes over time.

Loss curve of CCD-ODFFBI technique under 1000 epochs.

In Fig. 13, the PR inspection of the CCD-ODFFBI method under 1000 epochs offers an interpretation of its performance by plotting Precision against Recall for different classes. The figure indicates that the CCD-ODFFBI method continuously obtains superior PR values across various classes, representing its capability to maintain a considerable portion of true positive predictions amongst all the positive predictions (precision) while capturing a large proportion of actual positives (recall). The continuous rise in PR outcomes among all classes depicts the efficiency of the CCD-ODFFBI method in the classifier model.

PR curve of CCD-ODFFBI technique under 1000 epochs.

Figure 14 shows the ROC curve of the CCD-ODFFBI method under 1000 epochs. The outcomes indicate that the CCD-ODFFBI approach obtains superior ROC outcomes over all the classes, representing the substantial ability to discriminate them. This consistent trend of high ROC values over different classes represents the promising solution of the CCD-ODFFBI method on prediction class, which highlights the robust nature of the classification model.

ROC curve of CCD-ODFFBI technique under 1000 epochs.

Table 3; Fig. 15 illustrates a widespread comparison study of the CCD-ODFFBI technique under distinct aspects44,45,46. The ResNet-50 model with a 60 − 40 data split has lower \(\:acc{u}_{y}\) at 78.92% but high \(\:spe{c}_{y}\) at 93.99%. The ResNet-50 model with an 80 − 20 split shows improved \(\:acc{u}_{y}\) at 89.89% and \(\:sen{s}_{y}\) at 94.66%. VGG16, AlexNet, and Inception-v3 also portray robust performance with \(\:acc{u}_{y}\) values ranging from 96.84 to 98.06%. The MDCC-Net and SMADTL-CCDC models achieve improved results with \(\:acc{u}_{y}\) above 99%. The CCD-ODFFBI model attains the highest performance, with an \(\:acc{u}_{y}\) of 99.39%, \(\:sen{s}_{y}\) of 99.32%, and \(\:spe{c}_{y}\) of 99.32%.

\(\:Acc{u}_{y}\) outcome of CCD-ODFFBI method with existing techniques

Figure 16 shows the \(\:sen{s}_{y}\) analysis of the CCD-ODFFBI approach with existing techniques. The outcomes show that the RestNet-50 (60 − 40) technique has shown ineffective performance with \(\:sen{s}_{y}\) of 61.16%. Meanwhile, the DL-CP, DL-SC, RestNet-50 (80 − 20), and SMADTL-CCDC approaches have demonstrated moderately closer outcomes with \(\:sen{s}_{y}\) of 70.47%, 84.61%, 94.66%, and 98.18%. Moreover, the VGG16, AlxNet, Inception-v3, and MDCC-Net models have portrayed slightly improved \(\:sen{s}_{y}\) values of 96.70%, 97.47%, 97.97%, and 98.55%. However, the CCD-ODFFBI method outperforms the other techniques with an increased \(\:sen{s}_{y}\) of 99.32%.

\(\:Sen{s}_{y}\) outcome of CCD-ODFFBI method with existing techniques.

Figure 17 shows the \(\:spe{c}_{y}\) analysis of the CCD-ODFFBI approach with existing techniques. The outcomes show that the DL-CP approach has demonstrated ineffective performance with \(\:spe{c}_{y}\) of 71.37%. The RestNet-50 (80 − 20) and DL-SC methods have shown slightly enhanced outcomes with \(\:spe{c}_{y}\) of 84.34% and 82.11%. Meanwhile, the RestNet-50 (60 − 40) and SMADTL-CCDC approaches have illustrated moderately closer outcomes with \(\:spe{c}_{y}\) of 93.99% and 98.26%. Moreover, the VGG16, AlxNet, Inception-v3, and MDCC-Net models have portrayed slightly improved \(\:spe{c}_{y}\) values of 96.68%, 97.45%, 98.08%, and 98.59%. However, the CCD-ODFFBI method outperforms the other techniques with a high \(\:spe{c}_{y}\) of 99.32%.

\(\:Spe{c}_{y}\) outcome of CCD-ODFFBI method with existing techniques

Table 4; Fig. 18 demonstrate the ablation study of the proposed model. The CCD-ODFFBI model attained an \(\:acc{u}_{y}\) of 99.39%, \(\:sen{s}_{y}\) of 99.32%, and \(\:spe{c}_{y}\) of 99.32%. The MobileNet model had an \(\:acc{u}_{y}\) of 98.86%, \(\:sen{s}_{y}\) of 98.74%, and \(\:spe{c}_{y}\) of 98.70%. The SqueezeNet model showed an \(\:acc{u}_{y}\) of 98.20%, \(\:sen{s}_{y}\) of 98.07%, and \(\:spe{c}_{y}\) of 97.94%. The SE-ResNet model achieved an \(\:acc{u}_{y}\) of 97.56%, \(\:sen{s}_{y}\) of 97.46%, and \(\:spe{c}_{y}\) of 97.33%. Lastly, the OOA model demonstrated an \(\:acc{u}_{y}\) of 96.91%, \(\:sen{s}_{y}\) of 96.79%, and \(\:spe{c}_{y}\) of 96.80%.

Result analysis of the ablation study of CCD-ODFFBI method.

Conclusion

In this study, a novel CCD-ODFFBI technique is introduced. The CCD-ODFFBI technique aimed to examine the biomedical images for the identification of CRC. It utilized different processes such as noise removal, feature fusion, hyperparameter selection, and DBN-based CRC classification. Initially, the CCD-ODFFBI technique utilized the MF approach for the noise elimination process. Three DL models, namely MobileNet, SqueezeNet, and SE-ResNet, are employed for feature extraction. Meanwhile, the DL models’ hyperparameter selection was performed using OOA. Furthermore, the classification of CRC was accomplished by utilizing the DBN model. A series of simulations highlighted the significant results of the CCD-ODFFBI method under the Warwick-QU dataset. The comparison of the CCD-ODFFBI method showed a superior accuracy value of 99.39% over existing techniques. The CCD-ODFFBI method’s limitations include reliance on a single dataset, which may limit the model’s generalization to diverse populations or imaging conditions. Furthermore, image quality discrepancies and artefacts could affect the model’s performance. The study also lacks a comprehensive evaluation across diverse real-world scenarios, such as different stages of cancer or diverse histological types. Future work should focus on integrating larger, more varied datasets to enhance generalization. Moreover, integrating real-time diagnostic capabilities and addressing interpretability could improve the clinical application of the model.

Data availability

The datasets used and analyzed during the current study available from the corresponding author on reasonable request.

References

Skrede, O. J. et al. Deep learning for prediction of colorectal cancer outcome: a discovery and validation study. Lancet 395 (10221), 350–360 (2020).

Ho, C. et al. A promising deep learning-assistive algorithm for histopathological screening of colorectal cancer. Scientific reports, 12(1), p.2222. (2022).

Tsai, M. J. & Tao, Y. H. Deep learning techniques for the classification of colorectal cancer tissue. Electronics, 10(14), p.1662. (2021).

Kather, J. N. et al. Multi-class texture analysis in colorectal cancer histology. Sci. Rep. 6 (1), 1–11 (2016).

Dif, N. & Elberrichi, Z. A new deep learning model selection method for colorectal cancer classification. Int. J. Swarm Intell. Res. (IJSIR). 11 (3), 72–88 (2020).

Sarwinda, D., Paradisa, R. H., Bustamam, A. & Anggia, P. Deep learning in image classification using residual network (ResNet) variants for detection of colorectal cancer. Procedia Comput. Sci. 179, 423–431 (2021).

Mulenga, M. et al. Feature extension of gut microbiome data for deep neural network-based colorectal cancer classification. IEEE Access. 9, 23565–23578 (2021).

Bychkov, D. et al. Deep learning based tissue analysis predicts outcome in colorectal cancer. Scientific reports, 8(1), p.3395. (2018).

Rodríguez, J. V., Martínez, J. R. & Salazar, F. F. J. Colorectal Cancer prediction using machine learning and neutrosophic MCDM methodology: a Case Study. Full Length Article, 21(2), (2023). pp.118 – 18.

Zhang, D. et al. An efficient ECG denoising method based on empirical mode decomposition, sample entropy, and improved threshold function, Wireless Communications and Mobile Computing, vol. no. 2, pp. 1–11, 2020. (2020).

Hicham, K. et al. May. 3D CNN-BN: A Breakthrough in Colorectal Cancer Detection with Deep Learning Technique. In 2024 4th International Conference on Innovative Research in Applied Science, Engineering and Technology (IRASET) (pp. 1–6). IEEE. (2024).

Kara, O. C., Venkatayogi, N., Ikoma, N. & Alambeigi, F. A reliable and sensitive framework for simultaneous type and stage detection of colorectal cancer polyps. Ann. Biomed. Eng. 51 (7), 1499–1512 (2023).

Tanwar, S. et al. Detection and classification of colorectal polyp using deep learning. BioMed Research International, 2022(1), p.2805607. (2022).

Alqushaibi, A. et al. Enhanced Colon Cancer Segmentation and Image Synthesis through Advanced Generative Adversarial Networks based-Sine Cosine Algorithm. IEEE Access. (2024).

Mohamed, A. A. A., Hançerlioğullari, A., Rahebi, J., Ray, M. K. & Roy, S. Colon disease diagnosis with convolutional neural network and grasshopper optimization algorithm. Diagnostics, 13(10), p.1728. (2023).

Ragab, M. et al. Optimized Deep Learning Model for Colorectal Cancer detection and classification model. CMC-Comput Mat. Contin. 71 (3), 5751–5764 (2022).

Alzubaidi, L. H., Priyanka, K., Suhas, S. K., Kumar, B. K. & Malathy, V. ResNet110 and Mask Recurrent Convolutional Neural Network Based Detection and Classification of Colorectal Cancer. In 2024 International Conference on Distributed Computing and Optimization Techniques (ICDCOT) (pp. 1–4). IEEE. (2024).

Liu, Y. et al. Fovea-UNet: detection and segmentation of lymph node metastases in colorectal cancer with deep learning. BioMedical Engineering OnLine, 22(1), p.74. (2023).

Karaman, A. et al. Hyper-parameter optimization of deep learning architectures using artificial bee colony (ABC) algorithm for high performance real-time automatic colorectal cancer (CRC) polyp detection. Appl. Intell. 53 (12), 15603–15620 (2023).

Nur-A-Alam, M., Uddin, K. M. M., Manu, M. M. R., Rahman, M. M. & Nasir, M. K. An automatic system to detect colorectal polyp using hybrid fused method from colonoscopy images. Intelligent Systems with Applications, 22, p.200342. (2024).

Obayya, M. et al. Biomedical image analysis for colon and lung cancer detection using tuna swarm algorithm with deep learning model. IEEE Access. (2023).

Pacal, I. & Karaboga, D. A robust real-time deep learning based automatic polyp detection system. Computers in Biology and Medicine, 134, p.104519. (2021).

Raju, A. S. N. & Venkatesh, K. EnsemDeepCADx: Empowering Colorectal Cancer Diagnosis with Mixed-Dataset Features and Ensemble Fusion CNNs on Evidence-Based CKHK-22 Dataset. Bioengineering, 10(6), p.738. (2023).

Abd El-Aziz, A. A., Mahmood, M. A. & Abd El-Ghany, S. Advanced Deep Learning Fusion Model for Early Multi-Classification of Lung and Colon Cancer Using Histopathological Images. Diagnostics, 14(20), p.2274. (2024).

Xia, Y., Yun, H. & Liu, Y. MFEFNet: Multi-scale feature enhancement and Fusion Network for polyp segmentation. Computers in Biology and Medicine, 157, p.106735. (2023).

Pacal, I. A novel swin transformer approach utilizing residual multi-layer perceptron for diagnosing brain tumors in MRI images. Int. J. Mach. Learn. Cybernet., pp.1–19. (2024).

Karaman, A. et al. Robust real-time polyp detection system design based on YOLO algorithms by optimizing activation functions and hyper-parameters with artificial bee colony (ABC). Expert systems with applications, 221, p.119741. (2023).

Pacal, I. MaxCerVixT: a novel lightweight vision transformer-based Approach for precise cervical cancer detection. Knowl. Based Syst. 289, 111482 (2024).

Dwivedi, A. K., Srivastava, G. & Pradhan, N. NFF: A Novel Nested Feature Fusion Method for Efficient and Early Detection of Colorectal Carcinoma. In Proceedings of Fourth International Conference on Computer and Communication Technologies: IC3T 2022 (pp. 297–309). Singapore: Springer Nature Singapore. (2023).

Pacal, I. et al. An efficient real-time colonic polyp detection with YOLO algorithms trained by using negative samples and large datasets. Computers in biology and medicine, 141, p.105031. (2022).

Singh, O. & Singh, K. K. An approach to classify lung and colon cancer of histopathology images using deep feature extraction and an ensemble method. Int. J. Inform. Technol. 15 (8), 4149–4160 (2023).

Sangeetha, S. K. B. et al. An enhanced multimodal fusion deep learning neural network for lung cancer classification. Systems and Soft Computing, 6, p.200068. (2024).

Poalelungi, D. G. et al. Advancing patient care: how artificial intelligence is transforming healthcare. Journal of personalized medicine, 13(8), p.1214. (2023).

Sureshkumar, V. et al. Breast cancer detection and analytics using hybrid CNN and extreme learning machine. Journal of Personalized Medicine, 14(8), p.792. (2024).

Srivastava, G., Chauhan, A. & Pradhan, N. Cjt-deo: Condorcet’s jury theorem and differential evolution optimization based ensemble of deep neural networks for pulmonary and colorectal cancer classification. Applied Soft Computing, 132, p.109872. (2023).

Gowthamy, J. & Ramesh, S. A novel hybrid model for lung and colon cancer detection using pre-trained deep learning and KELM. Expert Systems with Applications, 252, p.124114. (2024).

Raju, A. S. N., Rajababu, M., Acharya, A. & Suneel, S. Enhancing Colorectal Cancer Diagnosis With Feature Fusion and Convolutional Neural Networks. Journal of Sensors, 2024. (2024).

Shah, A. et al. Comparative analysis of median filter and its variants for removal of impulse noise from gray scale images. J. King Saud University-Computer Inform. Sci. 34 (3), 505–519 (2022).

Toğaçar, M., Ergen, B. & Cömert, Z. COVID-19 detection using deep learning models to exploit Social Mimic Optimization and structured chest X-ray images using fuzzy color and stacking approaches. Computers in biology and medicine, 121, p.103805. (2020).

Gu, X., Tian, Y., Li, C., Wei, Y. & Li, D. Improved SE-ResNet Acoustic–Vibration Fusion for Rolling Bearing Composite Fault Diagnosis. Applied Sciences, 14(5), p.2182. (2024).

Chang, Y. & Bao, G. Enhancing Rolling Bearing Fault Diagnosis in Motors using the OCSSA-VMD-CNN-BiLSTM Model: A Novel Approach for Fast and Accurate Identification. IEEE Access. (2024).

Jiao, D. et al. Wavelength detection of serial WDM ultra-short fiber Bragg grating sensor networks based on a CCD interrogator using deep belief networks and sparrow search algorithm. Opt. Express. 32 (13), 22263–22279 (2024).

www.warwick.ac.uk/fac/sci/dcs/research/tia/glascontest/download

Ragab, M., Mahmoud, M. M., Asseri, A. H., Choudhry, H. & Yacoub, H. A. Optimal deep transfer learning based colorectal Cancer detection and classification model. Computers Mater. Continua, 74(2) (2023).

Qiu, S., Lu, H., Shu, J., Liang, T. & Zhou, T. Colorectal Cancer Segmentation Algorithm based on deep features from enhanced CT images. Computers Mater. Continua, 80(2). (2024).

Li, R. et al. Few-shot learning based histopathological image classification of colorectal cancer. Intelligent Medicine. (2024).

Acknowledgements

The authors extend their appreciation to the Deanship of Research and Graduate Studies at King Khalid University for funding this work through Large Research Project under grant number RGP2/267/45. Princess Nourah bint Abdulrahman University Researchers Supporting Project number (PNURSP2024R330), Princess Nourah bint Abdulrahman University, Riyadh, Saudi Arabia. Researchers Supporting Project number (RSPD2024R787), King Saud University, Riyadh, Saudi Arabia. This study is partially funded by the Future University in Egypt (FUE).

Author information

Authors and Affiliations

Contributions

Conceptualization: Sultan Refa AlotaibiData curation and Formal analysis: Mashael Maashi, Ahmed MahmudInvestigation and Methodology: Sultan Refa Alotaibi, Manal Abdullah AlohaliProject administration and Resources: Supervision; Manal Abdullah AlohaliValidation and Visualization: Hamed Alqahtani, Moneerah AlotaibiWriting—original draft, Sultan Refa AlotaibiWriting—review and editing, Manal Abdullah Alohali, Ahmed MahmudAll authors have read and agreed to the published version of the manuscript.

Corresponding author

Ethics declarations

Competing interests

The authors declare no competing interests.

Ethics approval

This article does not contain any studies with human participants performed by any of the authors.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Alotaibi, S.R., Alohali, M.A., Maashi, M. et al. Advances in colorectal cancer diagnosis using optimal deep feature fusion approach on biomedical images. Sci Rep 15, 4200 (2025). https://doi.org/10.1038/s41598-024-83466-5

Received:

Accepted:

Published:

Version of record:

DOI: https://doi.org/10.1038/s41598-024-83466-5

Keywords

This article is cited by

-

Improving histopathological image classification with patch-based embeddings and ensemble learning: a study on enteroscope biopsy images

International Journal of Machine Learning and Cybernetics (2026)

-

An enhanced fusion of transfer learning models with optimization based clinical diagnosis of lung and colon cancer using biomedical imaging

Scientific Reports (2025)

-

CRCNet: convolutional multi-layer perceptron encoder with attention module for colorectal cancer segmentation

Evolving Systems (2025)

-

Integrated Deep Learning Based Optimization Algorithms for Medical Data: Fundamentals, Challenges, and Future Perspectives

Archives of Computational Methods in Engineering (2025)

-

A deep learning model for the punching shear strength of prestressed concrete slabs

International Journal of Machine Learning and Cybernetics (2025)