Abstract

With the growing demand for contactless human–computer interaction in the smart home field, gesture recognition technology shows great market potential. In this paper, a sparse millimeter wave point cloud-based gesture recognition system, RaGeoSense, is proposed, which is designed for smart home scenarios. RaGeoSense effectively improves the recognition performance and system robustness by combining multiple advanced signal processing and deep learning methods. Firstly, the system adopts three methods, namely K-mean clustering straight-through filtering, frame difference filtering and median filtering, to reduce the noise of the raw millimeter wave data, which significantly improves the quality of the point cloud data. Subsequently, the generated point cloud data are processed with sliding sequence sampling and point cloud tiling to extract the spatio-temporal features of the action. To further improve the classification performance, the system proposes an integrated model architecture that combines GBDT and XGBoost for efficient extraction of nonlinear features, and utilizes LSTM gated loop units to classify the gesture sequences, thus realizing the accurate recognition of eight different one-arm gestures. The experimental results show that RaGeoSense performs well at different distances, angles and movement speeds, with an average recognition rate of 95.2%, which is almost unaffected by the differences in personnel and has a certain degree of anti-interference ability.

Similar content being viewed by others

Introduction

With the rapid development of smart home technology, gesture recognition technology is gradually gaining attention as a convenient interaction method. In this paper, we propose RaGeoSense (Radar-based Gesture Sensing System), which monitors and recognizes eight commonly used gestures executed by users in the smart home environment by pre-processing the echo data of different gesture actions, extracting spatial and temporal features from the point cloud data, and realizing the interaction and control of gestures in the smart home.

The research of gesture interaction has a long history and involves many disciplines1,2,3,4. Sutherland’s Sketchpad5 lays the foundation for the design of modern graphical user interfaces and interactive systems; in the literature6, he put forward the concept of “immersive displays” to provide theoretical support for the development of virtual reality and interactive technology.

In recent years, with the development of technology, researchers have gradually explored the application of gesture recognition based on visual sensors, radiofrequency signals, acoustic wave, and millimeter-wave radar and other sensing technologies in contactless human-computer interaction in smart home scenarios. Among these technologies, millimeter-wave radar is gradually becoming a solution of great interest due to its unique advantages. Compared with visual sensors (e.g., RGB cameras and depth cameras)7,8,9,10,11, millimeter-wave radar is insensitive to ambient light conditions, can penetrate environmental disturbances such as mist and smoke, and is more advantageous in terms of privacy protection. Gesture recognition methods based on radio frequency signals (e.g., Wi-Fi)12,13,14,15,16,17,18,19,20,21, despite their penetration capability and strong environmental adaptability, have limited spatial resolution and face certain difficulties especially in capturing small-scale fine gestures. In addition, some Wi-Fi gesture recognition techniques require real-time processing of a large amount of signal data, such as channel state information14,15,16,17,18 and spectral features19,20,21, which puts a high demand on the computational resources of the device. Compared to acoustic wave technology22,23,24,25,26,27,28, millimeter-wave radar not only performs more stably in the case of high ambient noise, but also significantly outperforms acoustic voice assistants29 for interaction in scenarios that require quietness at night. In addition, the silent interaction characteristics of millimeter-wave radar make it more friendly to people with disabilities, such as deafness, providing convenience and accessibility for smart home use for this group.

Despite the superior performance of millimeter-wave radar in terms of accuracy, privacy protection, and environmental adaptability, existing research still faces a number of key challenges. For example, it has been shown that adversarial attacks can degrade the robustness of 3D deep learning models by destroying the intrinsic symmetry of the point cloud data through small perturbations, which poses a potential threat to the stability of the gesture recognition system, similar to the problem revealed by the SymAttack framework30. Maintaining the robustness of a gesture recognition system in complex environments requires not only focusing on the imperceptibility of adversarial attacks, but also reducing the flow-perception distortion to enhance the system’s adaptability to multiple disturbances31. In addition, some studies still do not fully utilize the spatio-temporal characteristics of millimeter-wave radar signals, which limits the further improvement of the system performance. These issues indicate that in the field of smart home human–computer interaction, how to construct a millimeter-wave radar gesture recognition system that combines high accuracy, low resource consumption and strong robustness is still an important research direction that needs to be solved.

To solve the above problems, this paper proposes a sparse millimeter-wave point cloud-based gesture recognition system, RaGeoSense, which takes full advantage of the high accuracy of millimeter-wave radar in capturing dynamic movements, and significantly improves the accuracy and robustness of the gesture recognition by optimizing the point cloud preprocessing method and the design of the deep learning model, making it more suitable for human–computer interaction in the smart home.

The main contributions of this paper include:

-

(1)

An in-air gesture recognition system based on millimeter-wave radar sparse 4D point cloud is constructed, which utilizes the 3D coordinates and Doppler velocities of the point cloud as the data source, and employs a deep learning architecture for recognizing and evaluating eight types of arm gestures of multiple users in a variety of smart home environments.

-

(2)

Sliding sequence sampling and point cloud tiling processing methods are proposed to effectively synthesize multi-frame point cloud data, which enhances the continuity of the time dimension and compensates for the insufficient information capacity of single-frame point clouds.

-

(3)

An integrated model is designed to introduce the spatial features extracted by GBDT and XGBoost into the LSTM network, and classify them by gated loop cells, which improves the classification efficiency and circumvents the gradient explosion problem.

Related work

In this section, we review past research work on recognizing motion gestures using different techniques and focus on radar sensing-based gesture recognition techniques. In recent years, research on gesture sensing has evolved, especially in the areas of smart home, virtual reality and human-computer interaction. We organize the related research by application areas and provide an overview of the current state-of-the-art gesture recognition techniques and methods.

Gesture recognition based on non-radar techniques

Non-radar technologies have a wide range of applications in the field of gesture recognition, mainly including wearable sensor devices, cameras, WiFi signals, and acoustic wave technologies. The following is a description of these technologies and their research results.

Wearable sensors realize gesture recognition by detecting biological signals (e.g., EMG signals, inertial data) of human movements, and commonly used devices include accelerometers, gyroscopes, and EMG sensors. For example, Wheeler et al. proposed a gesture recognition method based on electromyographic (EMG) signals, which enables the classification of common gestures by analyzing the activity patterns of arm muscles32. Kim et al. designed a low-power gesture recognition system based on inertial sensors (IMUs), which demonstrated its potential in health monitoring and virtual reality33. Although wearable sensors have efficient signal capture capabilities and good privacy protection properties, they need to be worn directly on the user’s body, limiting their application in contactless scenarios. In addition, the devices are prone to damage and have high maintenance costs.

Camera-based gesture recognition classifies images or videos by extracting key points, hand contours, and motion trajectories. RGB cameras and depth cameras (e.g., Kinect) are commonly used devices. Shotton et al. proposed a real-time gesture recognition method based on Kinect’s depth data, which achieves efficient estimation of human gestures using a random forest model10. In addition, Oberweger et al. developed a deep learning framework based on convolutional neural networks (CNNs) that significantly improved the recognition accuracy of complex gestures11. However, camera technology is more sensitive to light and occlusion and involves privacy protection issues, which limits its use in home and office environments.

WiFi signals enable gesture recognition by analyzing the Channel State Information (CSI) or Received Signal Strength (RSS) of the wireless signals. Pu et al. proposed the WiSee system, which utilizes the Doppler effect of WiFi signals to achieve non-contact recognition of simple gestures18. Wang et al. further utilized the time-frequency characteristics of the CSI signals to improve the accuracy of gesture recognition in complex scenes and robustness21. WiFi technology has the advantage of requiring no additional hardware, being able to penetrate obstacles and being deployed using existing WiFi infrastructure. However, its low spatial resolution makes it difficult to capture fine gestures, while real-time processing demands are high.

Acoustic gesture recognition senses gesture movements by detecting the time-delay and frequency-shift characteristics of ultrasonic or audio signals. Gupta et al. proposed the SoundWave system, which utilizes the speakers and microphones of smart devices to achieve gesture recognition through the Doppler effect of acoustic waves27. Kraljevi et al. extended acoustic wave technology to smart home scenarios by detecting gestures through acoustic wave reflections, which They realized contactless interaction with low power consumption28. Although acoustic wave technology does not require additional hardware and has low power consumption, it is susceptible to environmental noise interference and has low robustness in noisy scenes, which limits its application in complex environments.

Millimeter-wave radar-based gesture recognition

In recent years, millimeter-wave radar has gained widespread attention in the field of gesture recognition due to its non-contact, high robustness, and excellent environmental adaptability. Depending on the data processing method, millimeter-wave radar gesture recognition is mainly classified into two categories: methods based on Doppler images34,35,36,37,38,39,40 and methods based on point cloud data41,42,43,44,45,46,47.

Doppler image-based methods

Doppler image gesture recognition mainly utilizes the Doppler frequency shift induced by hand motion to capture velocity information and generate Doppler spectral images in the time-frequency domain, i.e., two-dimensional frequency-time maps. This method reflects the motion trend of the object by analyzing the information of velocity and frequency changes, and has high temporal resolution, but the spatial information is relatively limited, so it is suitable for recognizing dynamic and simple gestures such as sliding, waving, etc. Kim et al. proposed a method of generating a Doppler image by using the short-time Fourier transform (STFT) and classifying gestures by using the convolutional neural network (CNN), which realizes the dynamic gestures with high accuracy34. Wang proposed a method based on time-expanded 3D convolutional neural networks, which significantly improved the robustness of the system by reducing noise interference35. Lien et al. developed the Soli system using millimeter-wave radar to capture minute gesture movements and proposed a radar sensor development method optimized for human-computer interaction36. In addition, Hao et al. designed a lightweight neural network model, MultiCNN-LSTM, which significantly improves the richness and expressiveness of the recognition by fusing multiple features such as distance-time map, Doppler-time map and angle-time map37. The Doppler-image-based method has low computational complexity and fast response time, which makes it suitable for real-time systems. However, due to the lack of ability to describe spatial geometric features, the method has limited performance in static gesture and complex motion trajectory recognition.

Methods based on point cloud data

In contrast, point cloud data-based methods generate 3D or 4D (spatial+temporal) point cloud data through millimeter wave radar, and realize gesture recognition by analyzing the spatial structure and time series features of the point cloud. This method can comprehensively reflect the positional information and motion trajectory of the hand and is suitable for the unified recognition of static and dynamic gestures. Qi et al. proposed the PointNet network, which is able to directly process the sparse point cloud data and extract spatial geometric features from it for gesture recognition41. On this basis, Qi et al. further developed PointNet++, which significantly improves the processing capability of complex point cloud data through local neighborhood feature extraction and multi-scale sampling methods46. Liu et al. proposed an LSTM-based point cloud sequence analysis method, which achieves accurate recognition of successive complex gestures by combining temporal and spatial features43. Moon et al. proposed a method for gesture recognition using sparse time-series point cloud data generated by short-range millimeter-wave radar sensors, which showed better classification performance compared to methods such as recurrent neural networks and PointNet44. Chen et al. introduced graph neural networks into point cloud data processing, and designed a gesture recognition framework capable of adapting to complex scenarios45. Zhao et al. proposed a spatio-temporal dimensionality reduction method for millimeter-wave radar point clouds, which solves the problem of poor generalization performance of existing methods among different spatial locations and users, and significantly improves the accuracy of gesture recognition at long distances or large fields of view46.

With its ability to efficiently capture 3D spatial geometric features, point cloud data gesture recognition shows significant advantages, which is especially suitable for gesture recognition needs in smart home scenarios. Compared with Doppler image methods, point cloud data can not only capture the details of static gestures, but also handle complex dynamic trajectories, which makes it perform better in recognizing diverse gesture actions.

A comparison of each gesture recognition method is shown in Table 1. In smart home scenarios, gesture recognition often needs to cope with diverse interaction requirements in complex environments, such as high occlusion, low light, and dynamically changing backgrounds. The point cloud data can effectively cope with these challenges by virtue of its insensitivity to ambient light and robustness. Combined with the comparative analysis in the table, it can be found that millimeter-wave radar, especially the point cloud data-based approach, has the highest resolution, robustness, and privacy protection. In addition, it has lower cost and higher portability as the acquisition equipment for point cloud data is simpler than Doppler image-based methods.

Multi-user interaction is a common and complex scenario in smart home environments. Simultaneous execution of gestures by multiple users may lead to signal aliasing and feature obfuscation, thus increasing the difficulty of recognition. Drawing on threat detection and attribution techniques in the cybersecurity domain, such as Cskg4apt and ThreatInsight, provides new ideas for solving the multi-user interaction problem. For example, Cskg4apt identifies the same threat from different attack events by constructing an APT knowledge graph model, which reveals that similar knowledge graph techniques can be employed to distinguish and identify gesture signals from different users in multi-user interaction scenarios48. ThreatInsight analyzes IPs captured from HoneyPoints to detect potential threats, which suggests that similar real-time analysis and attribution techniques can be introduced in gesture recognition systems to improve the real-time performance and accuracy of the system49. The application of these techniques not only helps to improve the robustness of gesture recognition in complex interactive environments, but also shows great potential in the field of smart home and human–computer interaction, which is an important development direction for future gesture recognition technology.

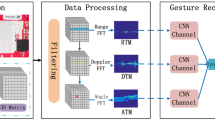

RaGeoSense system architecture diagram.

Proposed identification system

In this paper, we propose RaGeoSense, a gesture recognition framework based on sparse millimeter-wave radar point clouds. As shown in Fig. 1, the system consists of three main modules: data acquisition, preprocessing, and classification. In the data acquisition module, the FMCW radar transmits frequency-modulated continuous waves and receives echoes through antennas, which are then converted into 4D point cloud data containing spatial coordinates and Doppler velocity. The preprocessing module applies a tailored three-stage filtering pipeline-including K-means clustering, frame difference filtering, and median filtering-to suppress noise and extract clean gesture-related features. Unlike traditional gesture recognition methods that rely solely on Doppler spectrograms or end-to-end CNN/RNN models, RaGeoSense adopts a hybrid model architecture that separates spatial and temporal feature extraction. Specifically, GBDT and XGBoost are used to capture complex nonlinear spatial patterns from the point clouds, while LSTM networks extract temporal dependencies in gesture sequences. Additionally, techniques such as sliding sequence sampling, point cloud tiling, and data augmentation are employed to enrich feature representation and enhance robustness across environments. This design improves recognition accuracy, reduces computational overhead, and avoids training instability issues such as gradient vanishing common in deep learning-only models. By fusing spatial and temporal features effectively, RaGeoSense achieves high-precision, stable gesture recognition with strong adaptability to real-world smart home applications.

Raw data acquisition module

The millimeter wave radar point cloud generation process is based on the operating principle of FMCW (Frequency Modulated Continuous Wave) radar. First, the radar synthesizer generates a Chirp signal, which is transmitted through the transmitting antenna and a copy of the signal is sent to the mixer. The receiving antenna receives the echo signal reflected back from the object and transmits it to the mixer, where it is mixed with the transmitted signal to generate an IF signal. The entire signal processing flow is shown in Fig. 2 and contains the transmit and receive signals. The mathematical expressions of the transmit and receive signals are as follows:

where \(X_1\) and \(X_2\) denote the transmitted and received signals, respectively, \(\omega _1\) and \(\omega _2\) denote the frequency, t denotes the time, \(\phi _1\) and \(\phi _2\) denote the phase, and IF denotes the IF signal.

Next, a distance-dimensional Fast Fourier Transform (FFT) is performed on the IF signal to generate a distance-azimuth heat map. After two-dimensional constant false alarm rate (2D-CFAR) processing, the distance and azimuth information of the target is obtained. Then beamforming is performed in the vertical direction and pitch angle information is obtained by 1D peak search. Finally, a velocity-dimensional FFT is performed on the signal to extract the velocity information, and then the distance, azimuth, pitch angle and velocity data of the target point are obtained. The sparse point cloud can be output after combining the multi-frame data to provide support for RaGeoSense.

Signal processing flowchart.

Pre-processing module

This module mainly includes three tasks: point cloud filtering, data enhancement and input format processing. By optimizing the point cloud data quality, enriching the sample diversity and standardizing the input format in order to improve the adaptability of RaGeoSense to different gestures and complex environments, the subsequent data analysis and processing will be richer and more reliable.

Point cloud filtering

The point cloud filtering part includes straight pass filtering, frame difference filtering and median filtering based on clustering algorithm. The filtering process is shown in Fig. 3, and the pseudo-code is detailed in Table 2. The effects of the three point cloud filters on the experiment will be discussed in the Experiment and Evaluation section.

Point cloud filtering process.

In millimeter-wave radar gesture recognition, K-means clustering straight-through filtering is used to dynamically determine the clustering centers and window locations of the data to adaptively filter noise and outliers. This method is particularly suitable for dealing with complex nonlinear or high-dimensional data, and can effectively recognize and filter noise and outliers in the gesture point cloud to enhance the robustness of the system. Compared with the traditional fixed-window filtering method, K-means clustered straight-through filtering can automatically adjust the filtering window according to the dynamic characteristics of the gesture movement, thus maintaining a high recognition accuracy at different distances and angles.

Frame difference filtering removes the static background and retains the dynamic target by comparing the difference between two consecutive frames. In millimeter-wave radar gesture recognition, this method can effectively extract the dynamic features of gesture movements, which is especially suitable for dealing with gesture recognition under complex backgrounds. The frame difference filtering automatically determines the distance threshold by a statistical method, which can extract dynamic targets more accurately and avoid the error caused by setting the threshold manually. Compared with traditional methods, this method is more adaptable in complex environments.

Median filtering is a nonlinear filtering technique that can smooth the point cloud data and remove anomalies while keeping the data boundary clear. Experimental results show that although median filtering may slightly reduce the recognition accuracy of a single user, it effectively weakens the influence of individual features and improves the generalization ability of the model for multiple users.

Data enhancement

In millimeter-wave radar point cloud gesture recognition, data enhancement techniques are crucial to improve the generalization ability and robustness of the model. By performing diverse transformations on the training data, multiple gesture change scenarios in reality are simulated, thus making the model’s performance more stable and accurate in the face of unknown data.RaGeoSense mainly uses four kinds of data enhancement methods: additive noise, sequence inversion, temporal shifting, and temporal warping. The schematic diagram of data enhancement is shown in Fig. 4.

Schematic representation of data enhancements.

Adding noise enhances the model’s adaptability to noisy environments by introducing random perturbations, thus enriching the diversity of the data. Sequence inversion helps the model learn the symmetry of gestures by inverting the gesture sequence on the time axis, improving the ability to recognize symmetrical gestures. Time translation simulates the occurrence of gestures in different time points by moving the gesture data back and forth. Time warping simulates gesture changes at different speeds by stretching or compressing the time axis, helping the model adapt to differences in gesture rhythms.

Gesture rhythms vary depending on individual habits or environmental factors, and data augmentation helps the model capture these rhythmic changes to enhance recognition. It should be noted that excessive panning and distortion may lead to partial loss of gesture features and affect the recognition effect. Therefore, in our experiments we chose appropriate parameters of panning steps and time warping to balance the relationship between data diversification and gesture feature retention.

Input format processing

Sliding sequence sampling

In point cloud data sampling, a sliding window approach is used to gradually stack samples to increase the number of samples and better capture temporal continuity. This method naturally embeds the temporal characteristics of the point cloud data and avoids additional data processing. Sliding window sampling ensures that each frame of data is fully involved in the subsequent analysis, maintaining data integrity and independence. As shown in Fig. 5, the data of each frame is moved to the columns to realize the independence of the information on the rows, thus improving the sampling efficiency and quality.

Schematic diagram of sliding sequence sampling.

Traditional sampling methods are often difficult to reflect the data continuity due to insufficient samples, limiting the feature extraction ability of the model. Sliding-window sampling significantly enhances the expression of information in the time dimension, thus improving model performance and prediction accuracy. The effects of different sampling sequence lengths and slide lengths on the experiments and the optimal parameters will be discussed in the Experiments and Evaluation section.

Point cloud tiling

In data preprocessing, specific columns (x, y, z, v) of the point cloud data are extracted and flattened into one-dimensional arrays to standardize the data format. The processing step consists of reading the data from a CSV file, keeping the four variables for each frame. Each frame contains up to 8 points, so the data dimension is uniformly defined as 32 columns (4 variables \(\times\) 8 points). If the number of points in a frame is less than 8, subsequent columns are filled with zeros to ensure data integrity for model training and inference.The exact process of point cloud tiling is shown in Fig. 6.

This process unifies the data format and converts each time frame into a one-dimensional array of consistent length, simplifying the data processing process and improving efficiency. The tiled one-dimensional array is suitable for deep learning models, which reduces preprocessing complexity and computational cost, and helps speed up data processing and training. Due to the limited reflection signals of objects within a single frame, the information content of a single-frame point cloud is insufficient, which affects the recognition ability. Multi-frame trajectory synthesis takes into account spatial and temporal dynamics to capture the distribution and motion trajectories of objects, which leads to more accurate recognition and understanding of object behavior in complex scenes.

Schematic of point cloud tiling.

Sliding sequence sampling is combined with point cloud tiling to achieve multi-frame trajectory synthesis, which significantly enhances the system’s ability to recognize and understand dynamic objects. This approach is particularly important in object recognition and behavior analysis in complex environments, enhancing the system’s level of perception and understanding of dynamic behaviors.

Classification module

In the classification module, a combination of integrated learning and deep learning is used to extract and fuse spatial and temporal features of point cloud data. GBDT and XGBoost are used to extract spatial features in point cloud data, which can effectively capture nonlinear relationships and feature interactions in the data. LSTM is used to extract dynamic features in the time dimension, which can handle the temporal information of gesture actions. The combination of the two realizes the deep fusion of spatio-temporal features in millimeter wave radar gesture recognition. This integrated approach combines the strengths of different models and effectively improves the overall classification and recognition performance of RaGeoSense.

Spatial feature extraction

Point cloud data contains rich spatial information, which is crucial for understanding the shape, location and dynamic behavior of objects. However, the sparsity and complexity of point cloud data make it difficult for traditional machine learning methods to effectively capture its underlying patterns. The nonlinearity and high-dimensional feature interactivity of point clouds require models with strong modeling capabilities to identify and exploit this information.

In this context, the integrated learning models GBDT (Gradient Boosting Decision Trees) and XGBoost (Extreme Gradient Boosting) show unique advantages. They are able to capture nonlinear relationships and feature interactions in the data and excel in accuracy and robustness.RaGeoSense applies GBDT and XGBoost to the spatial feature extraction of point cloud data, which not only improves the model performance, but also enhances the feature representation and model interpretability, and lays a solid foundation for the subsequent deep learning modules, thus enhancing the overall system classification or recognition ability of the overall system.

GBDT

Gradient Boosting Decision Tree is based on the boosting method to build a combination of multiple weak learners (e.g., decision trees) to improve the model prediction accuracy through gradual iteration, the model structure is shown in Fig. 7.The GBDT initializes the model from the mean value of the data, see Eq. (4):

In each step, the model computes the residuals and constructs a new decision tree based on these residuals, and then updates the predictive power of the model:

where \(\nu\) is the learning rate (typically taking a value in the range of 0 to 1), which is used to control the magnitude of the update at each step.

GBDT model diagram.

XGBoost

XGBoost optimizes the loss function more accurately by introducing the second-order derivative information and uses a regularization term to control the model complexity, thus preventing overfitting. At the same time, it supports parallel computing and sparse data processing, which makes the model have significant efficiency advantages in dealing with large-scale data and complex tasks, and the model structure is shown in Fig. 8. The model update of XGBoost is as follows:

where \(\eta\) is the learning rate. The loss function of XGBoost contains a regularization term that controls the model complexity:

where \(\Omega (f_k) = \gamma T + \frac{1}{2} \lambda \sum _{j=1}^T w_j^2\) is the regularization term of the tree and the parameters \(\gamma\) and \(\lambda\) control the complexity of the tree.

XGBoost model diagram.

Temporal feature extraction

The integration of LSTM (Long Short-Term Memory Network) with GBDT and XGBoost can significantly improve the model performance, especially when dealing with time-series data such as millimeter-wave radar point clouds. LSTM has an advantage in capturing dependencies over long time spans, and is able to efficiently extract the dynamic features that change over time, thus improving the accuracy of the model. Its model structure is shown in Fig. 9.

LSTM cell structure and network structure.

LSTM controls the flow of information through forgetting gates, input gates and output gates. Irrelevant information is removed through forgetting gates, input gates update the state, and valid information is passed through output gates. The forgetting gate generates a weight vector to determine the retained or discarded information through a Sigmoid function:

Input gates control the introduction of new information and update the neuron state:

The final hidden state is then controlled through an output gate and used to pass to the next moment:

In the time series processing of millimeter-wave radar point cloud data, the excellent long-term dependent feature capturing ability of LSTM is especially important.

The network structure is shown in figure, including two layers of convolutional neural network, three layers of LSTM and attention mechanism, and finally connecting the fully-connected layer. The CNN excels in extracting local features, while the LSTM captures the time dynamics and avoids gradient vanishing and explosion. The attention mechanism, on the other hand, helps the model focus on key features, thus enhancing the classification performance.

By using the outputs of GBDT and XGBoost as feature inputs to the subsequent neural network, the classification module realizes the organic combination of spatial and temporal features. The combination of GBDT, XGBoost, and LSTM can significantly improve recognition accuracy by utilizing the spatial and temporal information in gesture data more comprehensively than a single deep learning model. Model fusion not only enriches the feature representation, but also improves the accuracy and robustness of the model, enabling the system to better cope with complex point cloud data recognition tasks. This approach of feature aggregation and model fusion provides an ideal solution to improve the classification performance and stability of the system.

Experimental setup

This section will describe in detail the equipment configurations, experimental environments, gesture categories, participant profiles and specific experimental steps used in the experiment.

Equipment configuration

The IWR1843BOOST evaluation board, shown in Fig. 10, was used in the experiments. It is an evaluation module for 77 GHz millimeter-wave sensors based on the IWR1843 single-chip device. Unlike traditional depth cameras, the IWR1843BOOST captures sparse 4D point cloud data (including x, y, z coordinates and Doppler velocity), which provides spatial and motion information directly from the radar echo. The board seamlessly connects to the Texas Instruments (TI) development kit ecosystem, facilitating software development, and is supported by mmWave tools and software.

IWR1843BOOST device map.

RaGeoSense transmits data via a built-in Cortex-R4F microcontroller (200 MHz) using a standard UART protocol. A frame rate of 15 frames per second (fps) was selected to balance data density and processing efficiency. The system provides a distance resolution of 0.044 m, a radial velocity resolution of 0.13 m/s, a maximum unambiguous detection range of 4.5 m, and a maximum radial velocity of 1.2 m/s. The starting frequency was 77 GHz, and the bandwidth was set to 4 GHz to achieve the required range resolution. Detailed radar parameters are listed in Table 3.

Experimental environment

RaGeoSense is applied to smart home control scenarios for scenarios such as remote gesture control of living room TV, bedroom air conditioning and office computers, etc. Two indoor environments are selected in the experiment to evaluate the performance of RaGeoSense. In different environments, the system will demonstrate its accuracy and reliability in real-world applications.

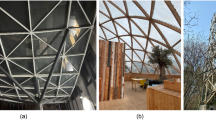

The first one is the open environment, the real environment of the experimental scenario is shown in Fig. 11a, and the schematic diagram of the experimental environment is shown in Fig. 11b, this environment has an area of 60 square meters and there is no furniture around the radar sensor. This environment is used for both single and multi-person experiments and helps to evaluate the basic performance of the system without interference and the ability of multi-target recognition.

(a) Open real environment map. (b) Open environment floor plan.

Secondly, the complex environment, the real environment of the experimental scenario is shown in Fig. 12a, and the schematic diagram of the experimental environment is shown in Fig. 12b, which covers an area of about 40 square meters, and the environment is relatively cluttered with desks, chairs and other office supplies. This environment is used to evaluate the effectiveness of the gesture recognition system in office scenarios, especially for remote control of devices such as computers.

(a) Complex real environment map. (b) Complex environment floor plan.

In order to obtain the best performance in different environments, we optimize the parameters of the radar equipment according to the specific environment. For example, coverage is increased in open spaces, while parameters are adjusted to minimize interference in complex spaces.

Gesture set

In this study, eight arm-over-air gestures, as shown in Fig. 13, were selected to cover the common requirements in smart home scenarios. The system is designed for mid-range gesture recognition, which can detect gestures operated within 5 m, and requires the use of body parts with a large radar cross-section for movement. Each gesture is experimented in different environments, distances and angles to fully test the performance of the system.

Gesture schematic.

Participants and experimental steps

To further increase the diversity of the data, the experimental participants covered individuals of different genders, ages, heights and weights. This ensures that the dataset is representative of a wide range of application scenarios, thus helping the model to better adapt to different practical application environments.

-

1.

Participant background: a total of 10 volunteers (5 of each gender) were recruited, ranging in age from 18 to 30 years old, with a weight range of 50 to 90 kg and a height range of 1.57 to 1.85 m. All participants were volunteers from the college laboratory.

-

2.

Experimental preparation: prior to data collection, the experimenter demonstrated the standardized movements of eight arm gestures via video. Participants completed the gestures sequentially and were checked for correctness by the experimenter on a laptop computer through point cloud visualization; incorrect gestures were asked to be re-executed by the participant.

-

3.

Dataset overview: the final dataset contained 7000 gesture samples, each completed within 3 s, with a total data recording time of approximately 31 h. After completing a certain type of gesture, participants were allowed to take an appropriate break before starting the next gesture.

Assessment of indicators

In order to comprehensively evaluate the performance of RaGeoSense, the experiments use the Accuracy, Acc. and the sparse categorical cross-entropy loss function as the main evaluation metrics. The loss function is formulated as:

where N is the number of samples and \(p_i\) is the model’s probability of predicting the correct category. In this setup, the output layer of the model uses a softmax activation function that converts the output to a probability distribution and computes the loss based on the real integer labels to guide the model training.

In addition, Confusion Matrix (CM) is reported experimentally to analyze in detail the performance of the system on various types of gestures. The robustness of the system is also verified through experiments in different environments and interference conditions to assess its stability and consistency. Finally, user feedback is collected through a questionnaire survey to analyze the user satisfaction of the system in terms of ease of use, accuracy, and responsiveness, thus providing a comprehensive assessment of the overall user experience and acceptance of the system.

Experimentation and evaluation

In this section, an experimental evaluation of RaGeoSense was conducted to assess the overall performance of the system by comparing different experimental dimensions. For this purpose, 10 users were recruited to participate in the training and an additional 4 users were recruited for testing the system’s recognition performance on 8 gestures.

Overall performance

The overall performance of gesture recognition in both simple and complex environments was first evaluated, and the impact of different experimental parameters on the model was analyzed. As shown in Fig. 14, the training loss continuously decreases and gradually approaches zero, indicating that the model effectively fits the training data. Meanwhile, the validation loss drops rapidly in the early stages and then stabilizes, and the validation accuracy rises significantly and eventually approaches the training accuracy. These trends suggest that the model not only converges well during training but also achieves high prediction accuracy on the validation set, demonstrating strong generalization ability without significant overfitting.

Training and validation loss vs. accuracy.

Overall, the training loss and validation loss decreased significantly, and both the training and validation accuracies were close to 97%, indicating that the model performed well on both the training and validation sets without significant overfitting. Figure 15 shows the confusion matrix for gesture eight classification. According to the confusion matrix, it can be seen that some of the gestures that are in conflict with other gestures have some features, such as (A) “raise” and (B) “lower” are performed in the y–z plane; (E) “pull” and (F) “push” both run along the y-axis but in opposite directions, and conflicting gestures share common features; e.g., (H) “draw a circle counterclockwise” and (G) “draw a circle clockwise” are the same as (A) “draw a circle in the y–z plane” and (A) “raise” and (B) “lower” have a tendency to move in the same direction as the z-axis, with the arm either moving away from the body or toward the body, and so have similar characteristics. (D) “Swipe left” and (C) “Swipe right” are actually the same gesture, but with the opposite direction of operation. (H) “Counterclockwise circle” is more distinctive than the other gestures, and the recognition accuracy under simple environmental conditions is very high. The recognition accuracy can reach 100% under simple environment conditions. Overall, the model is very accurate, with an overall accuracy of 96.8%, even in complex environments, the accuracy of all 8 gestures exceeds 94%.

Gesture categorization confusion matrix. (a) Simple environments. (b) Complex environments.

Effects of different distances and angles

Effects of different distances

We asked the experimenters to complete eight arm gestures at different distances and tested them in comparison in an open environment (empty hall) and a complex environment (laboratory), as shown in the setup in Fig. 16. The device distances were set to 1 m, 1.5 m, 2 m, 2.5 m, 3 m, 3.5 m, and 4 m to test the system performance and find the optimal device distance. The results show that the recognition accuracy is consistently higher in the hall than in the lab environment, which may be due to the complexity and additional interference in the lab environment. The average gesture recognition rate in both environments is more than 85%, which indicates that the system has a high gesture recognition performance.

Schematic of different distances with error rates.

The experimental results further show that the recognition rate of open scenes is consistently better than that of complex scenes, regardless of the distance. The recognition rate reaches its highest when the radar is about 2 m away from the subject. This is because the signal propagation is shorter at that distance, the signal attenuation is small, the sensing range is wider, and the reflected signal is stronger. When the distance exceeds 3.5 m, the recognition accuracy gradually decreases, which is due to the fact that the number of point clouds reflected from the human body decreases and the distribution of the point clouds becomes sparse, which reduces the amount of information and leads to the difficulty of extracting effective features. Meanwhile, as the distance increases, the point cloud generated by the gesture action is reflected in the smaller cross-section of the radar, which affects the integrity of the spatial structure features. In addition, the further the distance, the minimum distance for the radar sensor to discriminate between two targets increases, leading to a decrease in resolution, which in turn affects the accuracy of gesture recognition.

Effects of different angles

The performance of millimeter-wave radar gesture recognition systems is usually closely related to the angle of the visual axis. The radar has a field of view ranging from − 55° to + 55°, so the experiment set up five different angles of arrival: 0°, ± 10°, ± 20°, ± 30°, and ± 40° to test the system’s performance at different angles. The results of the experiment are shown in Fig. 17.

Schematic of different angles and error rates.

The experimental results show that the environment and the arrival angle have a significant effect on the gesture recognition rate. When the azimuth angle between the experimenter and the device is 0°, the overall recognition accuracy reaches 95%. When the user is located in the range of ± 20°, the recognition performance is still excellent and the accuracy rate remains around 90%. This is because in this range, the radar receives the shortest signal path, with less signal attenuation and stronger reflected signals, which helps to accurately extract features. However, when the azimuth angle between the experimenter and the device is ± 30°, the recognition accuracy drops significantly to about 75%. This is because as the angle increases, the signal path becomes longer, the signal attenuation increases, and the reflected signal weakens, resulting in increased difficulty in feature extraction. In addition, the signals received in the edge region of the radar field of view are weaker, thus increasing the uncertainty and error of recognition.

In addition, we note that the recognition accuracy at negative angles is slightly higher than that at positive angles. This difference may be due to the fact that at negative angles, the right hand gesture is closer to the center region of the radar’s field of view, and the radar receives a wider and clearer field of view, which reduces the error due to the edge effect of the field of view. At the same time, the hand movement is closer to the radar, and the radar is able to capture the details of the gesture more clearly with a stronger signal reflection, thus improving the recognition accuracy. In summary, RaGeoSense is able to maintain a high recognition performance within a small directional angle error, especially in the center region of the radar viewing angle.

Effects of different speeds

In order to evaluate the effect of different speeds on the performance of RaGeoSense gesture recognition, all gestures executed at three speeds, fast (1.5 s to complete), medium (3 s to complete), and slow (4.5 s to complete), by two participants at a distance of 2 m from the 0° position were recorded in the experiment. Participants completed the gestures based on their own perception of speed and there was no strict time limit for the experiment. Ultimately, a total of 1200 gesture instances were collected for testing. The results of the experiment are shown in Fig. 18.

Accuracy at different speeds.

The results show that the recognition accuracy of medium (96.28%) and fast (93.05%) gestures is consistently higher, while the recognition accuracy of slow gestures decreases by about 8%. This finding suggests that the speed of gesture execution has a significant effect on the recognition performance. Through further analysis, it was found that the CFAR algorithm showed high sensitivity to gesture speed. At lower speeds, the gesture’s movement changes more slowly, resulting in sparser point cloud data, which makes it more difficult for the algorithm to process and ultimately affects the accuracy of the recognition.

The reason for this phenomenon is that slower gesture movements lead to a sparse distribution of the point cloud data captured by the sensor, making it difficult to form a clear pattern of features. As a result, at slow speeds, it is difficult for the recognition algorithm to accurately track the complete trajectory and details of the gesture, thus affecting the recognition results. In order to improve the recognition performance of slow gestures, it is necessary to optimize the existing algorithm, enhance its robustness to sparse point cloud data, and improve the stability and accuracy of the system in slow gesture recognition.

Experimental results for multi-user scenarios

In order to more fully demonstrate the capability of the RaGeoSense system in a smart home environment, we designed two multi-user scenario experiments. In the experiments, the subject was located 2 meters from the radar and at the 0° position, and participants were gradually added to introduce interference. In the first experiment, participants executed different gestures from the subject at different positions and angles; in the second experiment, participants were required to walk in the background of the subject.

In addition, we optimized the filtering algorithm. During K-mean clustering and straight-through filtering, the system is able to identify multiple cluster centers and select the target closest to the radar as the subject person in the experiment. Based on this cluster center, the system automatically adjusts the appropriate window position and then performs the subsequent operations. Other experimental settings are consistent with single-user experiments.

Accuracy for different number of interlopers a. Making different gestures b. Walking in the background.

The experimental results are shown in Fig. 19. In most cases, the interference of multiple gestures has a notable impact on recognition accuracy. In an open environment, the recognition accuracy drops by 9.7 percentage points (from 95.7 to 86%) when multiple users perform gestures simultaneously. This is primarily due to the high complexity and similarity of gesture signals, which lead to signal aliasing and feature confusion-challenges that are more difficult to resolve than background noise or simple motion interference. In comparison, when multiple users walk around the subject without performing gestures, the recognition accuracy drops by 5.1 percentage points (from 95.7 to 90.6%), mainly due to increased background dynamics rather than misclassification. These two types of interference were chosen to reflect typical multi-user conditions in real-world smart home scenarios: concurrent interaction and passive background movement. To improve system robustness under these conditions, we optimized the K-means clustering in the preprocessing stage to detect multiple point cloud clusters and dynamically select the cluster closest to the radar as the primary subject. While multi-user interaction introduces challenges, the system still maintains high recognition accuracy, demonstrating strong anti-interference capabilities. We acknowledge that more complex multi-user scenarios, such as overlapping or continuous interactions between users, have not yet been explored and will be considered in future work to further enhance the system’s generalizability in practical applications.

Effect of point cloud filtering

In our gesture recognition system, point cloud filtering processing is one of the key steps to improve the recognition performance. In order to deeply analyze the effects of different filtering methods on the recognition accuracy, ablation experiments are designed to compare the overall recognition effects of no filtering, K-mean clustered straight-through filtering, frame-differential filtering, and median filtering at different distances (from 1 to 4 m) and at different angles (from 0° to ± 40°). The experimental results are shown in Fig. 20.

Comparison of point cloud filtering between different distances and angles.

Experiments show that the average contribution rate of K-mean clustering straight-through filtering is 29.4%. This filtering method can automatically determine the cluster center and window position according to the characteristics of the point cloud data, which improves the adaptivity of the system, especially under the conditions of longer distance and larger angle, its contribution rate can reach 38%, which is particularly significant to the system performance. By removing static backgrounds and retaining dynamic objects, frame differential filtering shows good results at different distances and angles, and its average contribution rate is 13.4%. The effectiveness of frame differential filtering lies in its ability to significantly enhance the detection of dynamic gestures, which is particularly beneficial for gesture feature extraction in specific backgrounds. In contrast, the median filter has an average contribution rate of only 5.3%, and although the effect is not as obvious as the other two filters, it plays a positive role in attenuating the effect of individual differences and helps to improve the generalization ability of the model. Therefore, median filtering can be used to improve the adaptability of the model among different users, although its improvement in the overall recognition rate is relatively limited.

In summary, the K-mean clustering straight-through filtering and the frame difference filtering perform most significantly in terms of recognition accuracy enhancement and are suitable for dealing with long-distance and large-angle scenarios, whereas the median filtering helps in enhancing the model’s generalization, and with the three proposed filtering methods, the quality of the human action point cloud can be significantly improved, which in turn can significantly improve the classification accuracy of RaGeoSense.

Effect of data enhancement

Data enhancement methods play an important role in improving the generalization ability and robustness of models. We analyze in detail the specific impact of these methods on model performance in terms of recognition accuracy and real-time performance. In the RaGeoSense system, the following four data enhancement methods are used: additive noise, time warping, time shifting, and sequence inversion. The experiments were conducted in open and complex environments to test the recognition accuracy in different scenarios and to compare the effect of a single enhancement method with the combination of enhancement methods, and the experimental results are shown in Table 4.

The data enhancement approach significantly improved the recognition accuracy of the model, especially in complex environments. The combined enhancement approach improved the recognition accuracy from 93.51 to 95.20% in open environments and from 90.77 to 92.56% in complex environments. In addition, data augmentation significantly improves the robustness of the model, especially when dealing with different gesture velocities. Time warping has the most significant effect on robustness improvement, but it also imposes the largest computational overhead of about 6%. When using all data enhancement methods combined, the computational overhead increases by about 10%, which has some impact on real-time performance, but is still within acceptable limits.

Effect of different sampling parameters

While the motion features within a single frame are not obvious, the arm motion in consecutive frames constitutes a spatio-temporal structure. To process these data, this experiment uses sliding sequence sampling with point cloud tiling, and evaluates the system performance by adjusting the number of samples in different time series.

We discuss the effects of different sampling sequence lengths and shift lengths on the experiment. As can be seen in Fig. 21, the experimental results for different sampling sequence lengths vary significantly. For data with sampling lengths in the range of 10–25 rows, the recognition accuracy is lower, although the data processing time is shorter and the time complexity is lower. As the sampling sequence length increases, the overall recognition rate rises above 90% when the sampling length reaches 30 lines. When continuing to increase the length of the sampling sequence, the recognition rate gradually improves, and when reaching 45 lines, the recognition rate in the open environment reaches 95.2%. However, beyond 45 lines, the accuracy begins to decrease. This is because too large a sequence window may lead to information redundancy, which in turn affects the recognition accuracy. In addition, as the number of synthesized lines increases, the time complexity and preprocessing time also increase. When the sampling length reaches 40 lines, the improvement in accuracy becomes less significant. Too short sampling sequences cannot effectively capture long-range dependencies and global patterns, leading to insufficient understanding of global features by the model; while too long sampling sequences may increase computational complexity, consume too much computational resources, and may cause some key features to be diluted in a large amount of data, which reduces the recognition effect.

In the RaGeoSense system, increasing the length of the sampling sequences can significantly improve the recognition accuracy because longer sequences provide richer spatio-temporal features that help the model better capture the dynamic changes of the gestures. However, this also brings about an increase in computational overhead, which affects the real-time and energy efficiency of the system. Smart home scenarios usually require low-latency and energy-efficient interactions, so an optimal balance between computational complexity and real-time performance must be found. Experimental results show that when the length of the sampling sequence reaches 45 lines, continuing to increase the length not only results in limited accuracy improvement, but also in a significant increase in computational overhead. According to the requirements of practical application scenarios, the length of the sampling sequence can be dynamically adjusted to balance the computational complexity and real-time performance. For example, in scenarios with high real-time requirements, the length of the sampling sequence can be appropriately shortened to reduce the computational overhead; in scenarios with high accuracy requirements, the length of the sampling sequence can be appropriately increased.

Different sampling sequence lengths and shift lengths.

In addition, the sliding length of the series has a significant effect on the experimental results. As the sliding length increases, the data is sampled faster and the number of samples is reduced, which allows the model to converge faster. However, the experiments revealed a significant decrease in recognition accuracy as the sliding length increased. Increasing the step length reduces the overlap between the generated time-series data, resulting in the model not being able to fully learn the continuous time-series features, and some important information may be skipped. In contrast, a smaller step size generates more overlapping data, which can help the model better understand the distribution of the data, while a larger step size may cause the model’s understanding of the data to become one-sided. Therefore, increasing the slide length in order to reduce the amount of computation is not applicable to this system, as it will lead to a decrease in the recognition performance.

Comparison between single models

This system uses integrated models to aggregate features to improve recognition accuracy. In order to evaluate the feature extraction effect of different single models, five models: random forest, XGBoost, GBDT, PointNet and decision tree are compared and the effect of different spatial feature extraction modules on the experimental results is evaluated. The experiment uses the set of gestures collected in an open environment with a ratio of training samples to test samples of 4:1. The comparison results are shown in Fig. 22.

Accuracy and error rates for different single models.

The comparison results show that the accuracy of Random Forest and Decision Tree is significantly lower than the other three models, which are all below 85%. The decision tree model is prone to overfitting the training data, especially when the data is noisy or the features are complex. Although Random Forest mitigates the overfitting problem by integrating multiple decision trees, there is still a performance bottleneck when dealing with high-dimensional and sparse millimeter-wave radar point cloud data. This is because the randomness of feature selection and sample subset when constructing each tree in Random Forest may lead to under-capturing of important features.

In the experiments, it is found that PointNet has higher recognition accuracy for some specific gestures, such as (E) “pull” and (F) “push”, which are of small amplitude. This is due to the fact that PointNet’s architectural design allows it to process point cloud data directly, preserving subtle geometric information and local features. However, PointNet is prone to confusion when processing gestures with opposite directions. The main reason is that gestures with opposite orientations tend to have symmetries, and PointNet may not be able to adequately capture the differences in these symmetries.

In contrast, XGBoost and GBDT are effective in overcoming this problem because they are able to handle nonlinear relationships in the data. Gestures with opposite orientations may exhibit nonlinear differences in the point cloud data, and these models are able to capture these nonlinear features through the split points of the decision tree, thus improving the recognition accuracy. The figure on the right shows the error rates of the five models, and the results show that the error rates of XGBoost and GBDT are significantly lower than the other models, and their feature extraction results are also better than the other models.

Comparison with existing work

to validate the performance of RaGeoSense, we compared it with existing millimeter-wave radar gesture recognition methods, and the experimental results are shown in Table 5. The Doppler image-based method mainly utilizes the Doppler frequency shift information to generate 2D spectral images, which is suitable for dynamic and simple gestures such as sliding and waving, but has limitations in the representation of spatial information. The first two experiments use the datasets of the respective methods. The first experiment uses a deep convolutional neural network (DCNN) to classify Doppler spectra, but real-time recognition accuracy is limited34. The second experiment significantly enhances the expressive power of gesture recognition by fusing multidimensional features such as distance-time maps, Doppler-time maps, and angle-time maps37, but is less robust to environmental disturbances.

The method based on point cloud data has richer spatial information, and the latter three experiments are evaluated using point cloud data and four-dimensional features from this paper. A neural network-based attention mechanism method improves robustness by adaptively focusing on important features and suppressing noise, but has limited improvement in real-time, with a recognition accuracy of 93.10%50. Methods such as PointNet, which directly process sparse point cloud data, can effectively extract spatial geometric features and show high accuracy (98.53%) in the gesture recognition task, but the computational complexity is high and real-time performance is poor51. In contrast, RaGeoSense enhances the continuity of temporal features through sliding sequence sampling and point cloud splicing, and comprehensively analyzes the spatial structure and temporal features of gestures by combining point cloud data, which improves the recognition accuracy while ensuring real-time response and significantly enhances the applicability of the system. In addition, some deep learning-based millimeter wave radar gesture recognition methods are prone to gradient vanishing or gradient explosion when dealing with complex gestures, which affects the model training and performance stability. RaGeoSense effectively circumvents the gradient problem by introducing GBDT and XGBoost to extract the spatial features and combining with the LSTM gated loop unit, which improves the stability of the model and the classification efficiency. Experimental results show that the average response time of RaGeoSense is only 103 ms, which is faster than most of the methods, and it takes into account the recognition accuracy and real-time performance, and it has a wider applicability in application scenarios such as smart home.

Conclusions and future work

In this paper, an innovative sparse millimeter-wave point cloud-based arm gesture recognition system, RaGeoSense, is proposed, which utilizes the spatio-temporal characteristics of millimeter-wave RF signals and combines the point cloud filtering technique, data augmentation method, and deep learning architecture to effectively improve the recognition accuracy and robustness. The system adopts K-mean clustering straight-through filtering, frame difference filtering and median filtering for noise reduction, and enhances temporal continuity through sliding sequence sampling and point cloud tiling. Combining the spatial features extracted by GBDT and XGBoost, and introducing LSTM network classification, the system realizes the efficient recognition of 8 kinds of arm gestures. Experimental results show that RaGeoSense has an excellent performance with an accuracy rate of up to 95.2% in a variety of environments, which is applicable to the fields of smart home and human–computer interaction.

Although the system performs well, there are still limitations. The current system relies only on point cloud spatial features and does not fully utilize the signal-to-noise ratio and reflection intensity information of the IWR1843 sensor, which may affect the recognition results in specific environments. Increasing the number of frames improves the temporal resolution and recognition accuracy, but also brings computational overhead, which affects real-time performance and energy efficiency. Future research will focus on integrating the signal-to-noise ratio and reflection intensity information, optimizing the algorithm to adapt to multiplayer scenarios and solving the problem of signal occlusion and point cloud aliasing. At the same time, the computational overhead will be optimized to improve the robustness of the system in complex environments and ensure broad applicability in applications such as smart home and virtual reality.

Data availability

The datasets generated during and/or analyzed during the current study are not publicly available due to [Data and processing information related to product development] but are available from the corresponding author on reasonable request.

References

Abner, N., Cooperrider, K. & Goldin-Meadow, S. Gesture for linguists: A handy primer. Lang. Linguist. Compass 9, 437–451 (2015).

Zhu, P., Zhou, H., Cao, S., Yang, P. & Xue, S. Control with gestures: A hand gesture recognition system using off-the-shelf smartwatch. In 2018 4th International Conference on Big Data Computing and Communications (BIGCOM) 72–77 (IEEE, 2018).

Dardas, N. H. & Alhaj, M. Hand gesture interaction with a 3d virtual environment. Res. Bull. Jordan ACM 2, 86–94 (2011).

Jaramillo-Yanez, A., Benalcázar, M. E. & Mena-Maldonado, E. Real-time hand gesture recognition using surface electromyography and machine learning: A systematic literature review. Sensors 20, 2467 (2020).

Sutherland, I. E. Sketchpad: A Man-Machine Graphical Communication System. Ph.D. thesis, Massachusetts Institute of Technology (1963).

Sutherland, I. E. The ultimate display. In Proceedings of the International Federation for Information Processing (IFIP) 506–508 (1965).

Jalal, A., Kim, Y. H. & Kim, Y. J. Robust human activity recognition from depth video using spatiotemporal multi-fused features. Pattern Recogn. 61, 295–308 (2017).

Radu, V. & Henne, M. Vision2sensor: Knowledge transfer across sensing modalities for human activity recognition. In IWMUT 21 (2019).

Molchanov, P. et al. Online detection and classification of dynamic hand gestures with recurrent 3d convolutional neural network. In Proceedings of CVPR 4207–4215 (2016).

Shotton, J., Sharp, T., Kipman, A., Fitzgibbon, A. & Finocchio, M. Real-time human pose recognition in parts from single depth images. In CVPR 2011 1297–1304 (2011).

Oberweger, M., Wohlhart, P. & Lepetit, V. Hands deep in deep learning for hand pose estimation. Unknown Journal (Provide Journal If Available) (2015).

Ma, Y., Zhou, G., Wang, S., Zhao, H. & Jung, W. Signfi: Sign language recognition using wifi. ACM IMWUT 2, 1–21 (2018).

Pu, Q., Gupta, S., Gollakota, S. & Patel, S. Whole-home gesture recognition using wireless signals. In Proceedings of MobiCom 27–38 (2013).

Tong, G., Li, Y., Zhang, H. & Xiong, N. A fine-grained channel state information-based deep learning system for dynamic gesture recognition. Inf. Sci. 636, 118912 (2023).

Kabir, M. H., Hasan, M. A. & Shin, W. Csi-deepnet: A lightweight deep convolutional neural network based hand gesture recognition system using wi-fi csi signal. IEEE Access 10, 114787–114801 (2022).

Tang, Z., Liu, Q., Wu, M., Chen, W. & Huang, J. Wifi csi gesture recognition based on parallel lstm-fcn deep space-time neural network. China Commun. 18, 205–215 (2021).

Gu, Y. et al. Wigrunt: Wifi-enabled gesture recognition using dual-attention network. IEEE Trans. Hum. Mach. Syst. 52, 736–746 (2022).

Pu, Q., Jiang, S. & Gollakota, S. Whole-home gesture recognition using wireless signals (demo). In Proceedings of the ACM SIGCOMM 2013 Conference on SIGCOMM 485–486 (Association for Computing Machinery, 2013).

Zhang, Y. et al. Hand gesture recognition for smart devices by classifying deterministic doppler signals. IEEE Trans. Microw. Theory Tech. 69, 365–377 (2021).

Uysal, C. & Filik, T. A new rf sensing framework for human detection through the wall. IEEE Trans. Veh. Technol. 72, 3600–3610 (2023).

Wang, W., Liu, A. X., Shahzad, M., Ling, K. & Lu, S. Device-free human activity recognition using commercial wifi devices. IEEE J. Sel. Areas Commun. 35, 1118–1131 (2017).

Guo, L., Lu, Z., Yao, L. & Cai, S. A gesture recognition strategy based on a-mode ultrasound for identifying known and unknown gestures. IEEE Sens. J. 22, 10730–10739 (2022).

Wang, H., Zhang, J., Li, Y. & Wang, L. Signgest: Sign language recognition using acoustic signals on smartphones. In 2022 IEEE 20th International Conference on Embedded and Ubiquitous Computing (EUC) 60–65 (2022).

Liu, Y., Fan, Y., Wu, Z., Yao, J. & Long, Z. Ultrasound-based 3-d gesture recognition: Signal optimization, trajectory, and feature classification. IEEE Trans. Instrum. Meas. 72, 1–12 (2023).

Wang, L. et al. Watching your phone’s back: Gesture recognition by sensing acoustical structure-borne propagation. Proc. ACM Interact. Mobile Wear. Ubiq. Technol. 5, 1–26 (2021).

Amesaka, T., Watanabe, H., Sugimoto, M. & Shizuki, B. Gesture recognition method using acoustic sensing on usual garment. Proc. ACM Interact. Mobile Wear. Ubiq. Technol. 6, 1–27 (2022).

Gupta, S., Morris, D., Patel, S. & Tan, D. Soundwave: Using the doppler effect to sense gestures. In Proceedings of ACM CHI Conference on Human Factors in Computing Systems (2012).

Kraljevic, L., Russo, M., Paukovic, M. & Šaric, M. A dynamic gesture recognition interface for smart home control based on croatian sign language. Appl. Sci. 10, 2300 (2020).

Irugalbandara, C., Naseem, A. S., Perera, S., Kiruthikan, S. & Logeeshan, V. A secure and smart home automation system with speech recognition and power measurement capabilities. Sensors 23, 5784 (2023).

Tang, K. et al. Symattack: Symmetry-aware imperceptible adversarial attacks on 3d point clouds. In Association for Computing Machinery (2024).

Tang, K. et al. Manifold constraints for imperceptible adversarial attacks on point clouds. Proc. AAAI Conf. Artif. Intell. 38, 5127–5135 (2024).

Wheeler, K. R. & Jorgensen, C. C. Gestures as input: Neuroelectric joysticks and keyboards. IEEE Pervas. Comput. 2, 56–61 (2003).

Kim, J., Mastnik, S. & Andre, E. Emg-based hand gesture recognition for real-time biosignal interfacing. In Proceedings of the International Conference on Bio-Inspired Models of Network, Information, and Computing Systems (BIONETICS) 30–39 (2008).

Toomajian, B. Hand gesture recognition using micro-doppler signatures with convolutional neural network. IEEE Access 4, 1 (2016).

Wang, Y., Wang, S., Zhou, M., Jiang, Q. & Tian, Z. Ts-i3d based hand gesture recognition method with radar sensor. IEEE Access 7, 22902–22913 (2019).

Lien, J. et al. Soli: Ubiquitous gesture sensing with millimeter wave radar. ACM Trans. Graphics 35, 1–19 (2016).

Hao, Z. et al. Millimeter wave gesture recognition using multi-feature fusion models in complex scenes. Sci. Rep. 14, 13758 (2024).

Yang, K., Kim, M., Jung, Y. & Lee, S. Hand gesture recognition using fsk radar sensors. Sensors 24, 349 (2024).

Wang, Y., Ren, A., Zhou, M., Wang, W. & Yang, X. A novel detection and recognition method for continuous hand gesture using fmcw radar. IEEE Access 8, 167264–167275 (2020).

Fan, T. et al. Wireless hand gesture recognition based on continuous-wave doppler radar sensors. IEEE Trans. Microwave Theory Tech. 64, 1 (2016).

Charles, R. Q., Su, H., Kaichun, M. & Guibas, L. J. Pointnet: Deep learning on point sets for 3d classification and segmentation. In 2017 IEEE Conference on Computer Vision and Pattern Recognition (CVPR) 77–85 (2017).

Qi, C. R., Yi, L., Su, H. & Guibas, L. J. Pointnet++: Deep hierarchical feature learning on point sets in a metric space. In Advances in Neural Information Processing Systems, vol. 30 (2017).

Liu, Y., Fan, B., Xiang, S. & Pan, C. Relation-shape convolutional neural network for point cloud analysis. In 2019 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR) 8887–8896 (2019).

Moon, J., Kim, B.-K. & Kang, J. Fixed point cloud normalization and none-sequential modeling for hand gesture recognition based on short-range mmwave radar sensor’s sparse time-series point cloud. IEEE Sens. J. 24, 10656–10668 (2024).

Chen, C. et al. Clusternet: Deep hierarchical cluster network with rigorously rotation-invariant representation for point cloud analysis. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR) 4989–4997 (2019).

Xia, Z. & Xu, F. Time-space dimension reduction of millimeter-wave radar point-clouds for smart-home hand-gesture recognition. IEEE Sens. J. 22, 4425–4437 (2022).

Xie, H., Han, P., Li, C., Chen, Y. & Zeng, S. Lightweight midrange arm-gesture recognition system from mmwave radar point clouds. IEEE Sens. J. 23, 1261–1270 (2023).

Ren, Y., Xiao, Y., Zhou, Y., Zhang, Z. & Tian, Z. Cskg4apt: A cybersecurity knowledge graph for advanced persistent threat organization attributions. IEEE Trans. Knowl. Data Eng. 35, 5695–5709 (2023).

Wang, Z. et al. Threatinsight: Innovating early threat detection through threat-intelligence-driven analysis and attribution. IEEE Trans. Knowl. Data Eng. 36, 9388–9402 (2024).

Du, C., Zhang, L., Sun, X., Wang, J. & Sheng, J. Enhanced multi-channel feature synthesis for hand gesture recognition based on cnn with a channel and spatial attention mechanism. IEEE Access 8, 144610–144620 (2020).

Salami, D., Hasibi, R., Palipana, S. & Popovski, P. A Lightweight Gesture Recognition System from Mmwave Radar Point Clouds. Arxiv, Tesla-Rapture (2021).

Funding

This work was supported by the National Natural Science Foundation of China (Grant 62262061, Grant 62162056, Grant 62261050) , Major Science and Technology Projects of Gansu (23ZDGA009), Science and Technology Commissioner Special Project of Gansu (23CXGA0086), Lanzhou City Talent Innovation and Entrepreneurship Project (2020-RC-116, 2021-RC-81), and Gansu Provincial Department of Education: Industry Support Program Project (2022CYZC-12).

Author information

Authors and Affiliations

Contributions

All authors contributed to the study conception and design. H.C. Conceptualization, Funding Acquisition, Resources, Supervision, Writing-Review and Editing. X.J. Conceptualization, Methodology, Software, Investigation, Formal Analysis, Writing, Visualization. Z.H. Resources, Funding Acquisition, Supervision; Y.L. Visualization. H.Z Visualization. J.L. Visualization. B.X. Visualization.

Corresponding author

Ethics declarations

Competing interests

The authors declare no competing interests.

Ethical approval

Ethical approval was obtained from the Ethics Committee of the School of Computer Science and Engineering, Northwestern Normal University.

Informed consent

Informed consent was obtained from all subjects involved in the study. Informed consent was obtained from all participants, authorizing the use and publication of their data for research purposes.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions