Abstract

Mask learning has emerged as a promising approach for Text-to-Image Person Search (TIPS), yet it faces two key challenges: (1) There tends to be semantic inconsistency between image regions and text phrases. (2) Current approaches primarily focus on masking text tokens to facilitate cross-modal alignment, overlooking the important role that text plays in guiding the learning of intricate details within images, which can lead to missed opportunities for capturing these details. In this paper, we are excited to introduce our proposed method called Image Region Semantic Enhancement and Symmetric Semantic Completion (RE-SSC). Specifically, our approach comprises two main components: Image Region Semantic Enhancement (IRSE) and Symmetric Semantic Completion (SSC). In IRSE, we initially apply superpixel segmentation to partition images into distinct patches based on low-level semantics. Subsequently, we leverage self-supervised consistency learning to transfer high-level semantic information from the global context of the image for local patches, enhancing local patch semantics. Within the SSC component, we have designed a symmetric semantic completion learning process that operates in both textual and visual directions, emphasizing global as well as local token learning to achieve effective alignment across modalities. We evaluated our method on three public datasets and are pleased to report competitive performance in addressing text-to-image pedestrian searches.

Similar content being viewed by others

Introduction

Text-to-Image person search (TIPS) involves retrieving images of individuals from a large-scale image gallery using text descriptions. Unlike image-based pedestrian search methods1,2,3, TIPS do not require the target pedestrian image as a query, thus overcoming the challenges associated with limited surveillance devices for pedestrian search. This attribute enhances its applicability to various scenarios.

The TIPS task confronts two primary challenges. Firstly, as a cross-modal retrieval task, it grapples with inherent modality heterogeneity between vision and language. Secondly, TIPS is essentially a fine-grained visual recognition task, presenting the challenges of large intra-class differences and small inter-class differences. Consequently, the model must effectively excavate discriminative features. Numerous feature matching methods have been developed to tackle these challenges. Early methods focused on the global representation4,5,6 when aligning images and text. However, they overlooked the fine-grained interaction between locally significant information in images and locally important words in the text. Subsequently, some local matching approaches7,8,9 have attempted to establish correspondences between person body parts in images and noun phrases in texts. In addition, there are methods that incorporate multi-level10,11, multi-granularity12,13,14 matching strategies and specific attention15,16,17 modules to focus on diverse local regions. Despite their superior retrieval performance, these methods require intricately designed models and involve high computational costs, which limits their application in large-scale scenarios.

On another pipeline, many previous methods6,11,14 have utilized backbone networks pre-trained on a single modality, such as Resnet for the image backbone network trained on the ImageNet dataset, and BERT pre-trained on large-scale text. Although these models excel in single-modal tasks, they lack the ability to understand cross-modal relationships and are unable to fully leverage the potential connections between images and text, resulting in limited performance on multimodal tasks. To overcome this limitation, various large-scale multimodal visual language pre-training models18,19,20,21 have been proposed. These models capture the complex relationships between images and text through joint training. Among them, CLIP22, which employs contrastive learning on large-scale image-text pairs, achieves cross-modal semantic alignment and has become a representative model. Building upon this concept, Han et al. pioneered the introduction of CLIP into the TIPS task and proposed the CFine23 method. This method fully leverages CLIP’s cross-modal alignment capabilities, enabling the TIPS task to benefit from CLIP’s pretrained advantages, thereby significantly improving task performance and propelling the field into a new stage of development56,57. However, the primary goal of the CLIP model is to achieve a global match between images and text, and its design does not specifically focus on specific regions or details within images, resulting in certain limitations when handling tasks that require fine-grained alignment.

Fortunately, the introduction of masked vision/text learning24,25,26,27 mechanism opens up a new way to capture fine-grained information. During the masked learning process, the model is tasked with predicting the masked part of the content, which not only demands a deep understanding of global information but also requires attention to the subtle changes in local details. This masked learning mechanism enables the model to more precisely capture the fine-grained correlations between images and text, thereby enhancing its representational power for multimodal tasks. Thanks to this feature of masked learning, researchers have introduced it into the TIPS task to improve the precision and accuracy of image-text interactions. For example, Jiang et al.28 proposed an innovative IRRA method, which cleverly utilizes the Masked Language Modeling (MLM) strategy to gain insight into the deep mystery of the cross-modal relationship between images and text. Similar work includes the APTM29, cMDTPS58 and DCEL30 methods. Nevertheless, these methods primarily focus on masking text tokens, utilizing image information to assist in the semantic recovery of text tokens, thereby learning the alignment between image regions and words. While this approach effectively promotes text-image alignment, it overlooks the direct capture of image details. Relying solely on text to recover image semantics may lead to insufficient attention to fine-grained information within images.

In the cross-modal matching task, the mask modeling can be utilized to facilitate fine-grained interaction. Ideally, the model is expected to fully utilize information from different modalities, using contextual clues from the unmasked parts and information from the other modality to complete the recovery of the masked content29. This symmetric semantic modeling promotes the semantic completion of missing information through inter-modal information exchange. In the following chapters, we refer to this method as Semantic Completion (SC), which ensures comprehensive learning of image and text details, effectively addressing the issue of insufficient learning of image information.

However, in the TIPS task, there are significant differences in the semantic characteristics of images and text, which add an additional layer of challenge to cross-modal alignment. Text is typically represented as discrete vocabulary that carries highly abstract semantic information, whereas images are expressed as continuous, boundary-ambiguous regions, with more nuanced and spatially structured representation. This inherent difference in form makes direct alignment of images and text challenging. Existing methods generally divide the image into a fixed number of regions and attempt to directly map these regions to words in the text. However, this simple segmentation approach may disrupt the semantic integrity of image regions, preventing the accurate capture of image details and context, leading to ambiguous correspondences between words and image regions. Considering that our pedestrian images are rich in various visual and spatial details, such as clothing, accessories, and backpacks, we optimize the segmentation of the image using super-pixel semantic segmentation31,32. This technique aggregates semantically similar pixel regions into unified superpixel blocks, effectively retaining the local semantic information of the image. Compared to traditional uniform segmentation, superpixel segmentation better handles the problem of boundary ambiguity in images, preserving fine-grained image information with greater accuracy during the segmentation process. This way, the image’s local regions, now with semantic consistency, can be aligned with the higher-level semantic information from the text, thereby improving the precision of image-text matching. Although superpixel segmentation performs well in retaining local semantic information, it primarily relies on low-level visual features (such as color and texture). Effective alignment between text and image requires higher-level semantic matching. To bridge this gap, we introduce a self-supervised learning mechanism that extracts higher-level semantic information from global image features through self-supervised consistency learning. Specifically, we transfer the global semantic information of the image to the local superpixel block encoding by sharing the encoding space, ensuring that these local regions can align semantically with the higher-level semantics of the text. This method enhances the local image regions with more precise semantics, enabling finer and more accurate cross-modal alignment between image and text.

Based on the aforementioned analysis, we propose a novel method, RE-SSC, which stands for image Regions semantic Enhancement and Symmetric Semantic Completion for Text-to-Image Pedestrian Search. Our method consists of two core modules: IRSE and SSC. The IRSE module achieves high-level semantic transfer from global visual features to local image block features through self-supervised consistency learning. This is done by sharing an encoding space between the original image encoder and the image momentum encoder. To enhance fine-grained information capture, the IRSE module uses superpixel semantic segmentation to partition pedestrian images into local regions, thereby preserving semantic consistency and solving boundary ambiguity issues. By incorporating high-level semantics, IRSE enables more precise cross-modal alignment between image and text. The SSC module includes two submodules: Local Semantic Completion (LSC) and Global Semantic Completion (GSC). LSC focuses on revealing the subtle connections between words in the text and local image regions, achieving accurate local-to-local alignment. GSC, on the other hand, restores semantic information of masked data by learning complementary knowledge from the corresponding labels in the other modality, ensuring accurate global-to-local alignment. Furthermore, to facilitate joint learning and enhance the retrieval accuracy of text-to-image and image-to-text alignment, we introduce mutual pattern alignment learning loss into our model during training. Experiments evaluations are conducted on three publicly available datasets to validate the effectiveness of our approach.

Our contributions can be summarized as follows:

-

1.

We have proposed a method that makes use of semantic enhancement of image regions and symmetric semantic complementation for text-to-image pedestrian search.

-

2.

We have developed an image region semantic enhancement method, IRSE, which first partitions image regions using a superpixel segmentation algorithm to enable more consistent low-level semantic information within the regions. The image regions are then semantically enhanced using self-supervised consistency learning and shared encoding space to transfer the high-level semantics learned in the global image to the local patches.

-

3.

We have presented a symmetric semantic completion method, SSC, which performs semantic completion in both image and text directions and recovers global and local features through cross-modal interactions. Additionally, the mutual pattern alignment loss is exploited to improve the retrieval accuracy.

-

4.

We conducted extensive experiments and analyses on CUHK-PEDES, ICFGPEDES, and RTSPReid datasets. The experimental results demonstrated that our approach achieved competitive performance.

Related work

Text-to-image Person Retrieval. The main challenge in TIPS is the efficient alignment of image and text features within a joint embedding space to achieve both fast and accurate retrieval. The existing methods primarily focus on feature representation and cross-modal alignment.

In terms of feature representation, early approaches utilized CNNs for visual feature extraction and RNNs for text feature extraction, as shown in related studies5,7,13,14. With the emergence of attention mechanisms, BERT has become a mainstream text feature extractor and has been used in TIPS task. However, these backbone networks (CNNs or BERT) are pre-trained on single-mode datasets. As a cross-modal matching task, TIPS requires the capture of cross-modal information. In 2021, the OpenAI team proposed the CLIP22 model, which was successfully applied to downstream tasks, bridging the text-image gap through a simple contrast learning method. CFine23 and IRRA28 subsequently employed the CLIP model in TIPS, elevating the performance to new heights. Consistent with these methods, our RE-SSC method also uses CLIP as the backbone network. However, our focus lies in the design of the IRSE module. It enhances the image feature representation by integrating low-level semantics (i.e., SP-Patch features) and high-level semantics (referring to the local region features of the image that have been fused with global semantic information after being processed by the IRSE module), further improving the model’s ability to capture cross-modal information. This is an area that existing methods have not adequately addressed.

In terms of cross-modal alignment, early studies have focused on globally aligned images and text representations, as exemplified by Zhang5 et al., who learned global representations before aligning images with sentences. However, such methods overlook details that affect the retrieval performance. Recent approaches emphasize multi-scale alignment, where local-level alignment complements global-level alignment. Gao et al.14 proposed non-local alignment of full-scale representations, highlighting the necessity of alignment between different scales. You et al.16 introduced a hierarchical Gumbel attention network, adaptively selecting semantically relevant image regions and words/phrases for precise alignment. Although these methods exhibit improved retrieval results, they require careful design of the alignment rules and feature complex network structures. Moreover, adaptive alignment with attention, although effective, requires storing all local areas and calculating the attention coefficients between all parts, incurring significant computational and memory overheads, making them less suitable for real-world scenarios. Our RE-SSC method proposes the SSC module, consisting of the LSC and GSC branches. It achieves local and global semantic completion in a relatively simple and effective way, optimizing the alignment between images and text. This not only improves retrieval performance but also reduces computational and memory costs, representing an important improvement over existing cross-modal alignment method.

Cross-modal semantic completion. Semantic completion involves recovering missing content within a context based on existing information and context and is commonly implemented through a mask mechanism. In Natural Language Processing (NLP), MLM inspired by the Cloze task33 has been adopted, particularly by BERT24. This strategy randomly masks the input tokens and predicts the original vocabulary IDs of masked words using contextual information. Building on the success of MLM in NLP, scholars have extended it to the field of vision by introducing Masked Vision Modeling (MVM). Studies such as BEiT25 and VIMPAC34 have been successfully explored for image classification and action recognition tasks. Specifically, BEiT employs a BERT-like pre-training strategy to recover the original visual tokens from the masked image patches. Other related studies include MAE35, mc-BeiT36, and VQ-VAE37. However, these methods conduct mask completion within the context of a single modality, limiting the effective consideration of multi-modal feature interactions and impeding the alignment of visual and textual modalities.

Recognizing the significance of intermodal interaction in cross-modal tasks, recent visual-language pre-training endeavors have delved into masking token/patch interactions. For example, M3 AE38 randomly masks a unified sequence of image patches and text tokens, encoding visible image patches into embeddings with the same dimensions as the language embeddings for the joint training of the two modalities. VLMAE39 introduced a region masking image modeling task, masking specific patches in the input image and reconstructing original pixels based on visible patches and corresponding text to enhance multi-modal feature fusion. Tu26 et al. propose a novel semantic completion learning task, complementing existing masked modeling tasks to facilitate global-to-local alignment. However, our RE-SSC method presents a new perspective on cross-modal semantic completion. Our SSC module, through its unique design, can better promote semantic interaction between images and text. While compensating for the insufficient consideration of multi-modal feature interaction in existing methods, it further improves the performance of cross-modal retrieval, which is also one of the important contributions of our manuscript.

Methodology

A. Overview

In this section, we will first briefly present the overall framework of our RE-SSC model, and then provide the overall framework. Subsequently, we describe the details of the image region semantic enhancement module RE and the symmetric semantic completion SSC module.

The RE-SSC method consists of two core modules that work collaboratively and complementarily: the IRSE module and the SSC module. The IRSE module aims to mitigate the semantic inconsistency between image regions and textual phrases by enhancing the local semantics of the image. Initially, the input image undergoes random augmentation to generate two distinct views (view1 and view2). Next, the image is divided into semantically consistent regions using the superpixel segmentation algorithm. Then, through self-supervised consistency learning and shared encoding space, the high-level semantic information of the global image is transferred to the local regions, providing support for subsequent cross-modal alignment and semantic completion.

The SSC module is primarily responsible for aligning the image and text, and further enhances cross-modal retrieval accuracy through symmetric semantic completion. This module consists of two branches: LSC and GSC. LSC focuses on aligning image local regions with textual phrases, while GSC is concerned with completing the global semantic alignment between the image and text. In the SSC module, the encoded image and text features are randomly masked and then inputted into the LSC and GSC branches, where cross-modal semantic completion occurs, recovering the masked image and text features. This process promotes more precise semantic alignment between the image and text.

During training, the IRSE and SSC modules are jointly optimized, and they mutually reinforce each other to improve model performance. The IRSE module provides more accurate image input to the SSC module, while the SSC module completes the semantic information of the image and text, which in turn retroactively optimizes the expression of image features. Ultimately, the collaborative interaction between the IRSE and SSC modules enables the RE-SSC method to effectively bridge the semantic gap between images and text, providing efficient cross-modal retrieval capabilities.

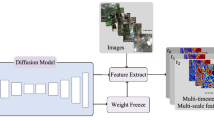

As illustrated in Fig. 1, our framework comprises two components: IRSE and SSC. In the IRSE module, the input image undergoes data augmentation to generate two views and is then divided into semantically consistent regions using SLIC. These regions are processed by the image encoder, and through self-supervised consistency learning, global high-level semantics are transferred to the local regions. Meanwhile, the text is encoded by the encoder ET . The encoded image and text features are randomly masked and passed into the SSC module. In SSC, the GSC branch handles global-local cross-modal feature completion, while the LSC branch focuses on local-to-local feature alignment. Using GSC and LSC loss functions, we achieve symmetric semantic completion in both image and text directions. During training, the IRSE and SSC modules are jointly optimized. IRSE enhances local semantics and transfers global semantics to support cross-modal alignment, while SSC refines the image encoder through image-text alignment, improving retrieval performance.

Overview of our RE-SSC framework.

B. Image regions semantic enhancement

An image is composed of consecutive pixel that are continuous in spatial location. At the same time, the information in an image may be ambiguous and the boundaries between objects are not always clearly recognizable. In contrast, semantics in text is typically built on high-level linguistic elements (e.g., words, phrases). These high-level concepts and structures may be difficult to map directly to an image. This semantic inconsistency causes difficulty in direct alignment between the two modalities. Therefore, in this paper, we design the IRSE module to enhance the image region semantics for better alignment with text semantics. Here we first describe the feature extraction of image and text, and then give the specific semantic enhancement implementation process of our IRSE.

Feature extraction. Let {Ii, Ti }Ni=1 be a training set of N image-text pairs, where Ii represents the person image and Ti denotes the corresponding text description. We use the pre-trained CLIP22 model as our feature extractor. Specifically, in the CLIP model, we use the ViT40 model to directly extract the image embedding from an input image Ii∈ RH×W×C, where H and W represent the height and width of the image, and C denotes the number of channels. The input image Ii is divided into non-overlapping patches with a fixed size of M = H×W/P2, where P denotes patch size. These patch sequences are then mapped to m-dimensional 1D tokens using a trainable linear projection. After incorporating positional embedding and adding an additional [CLS] token, the token sequence {Iicls, Ii1,…, IiM} is input into L-layer transformer blocks to capture correlations among individual patches. Finally, a linear projection maps Iicls into joint image-text embedding space to serve as a global image representation. For convenience, we use Ii instead of global image embedded.

According to existing work, we use the CLIP text encoder to extract the representation of input text Ti. The lower-cased byte pair encoding (BPE) with a 49,152-vocab size is used to tokenize the input text description. The tokenized text is then input into a transformer model with self-attention mechanisms to capture correlations among tokens. Finally, the embedded representation of the i-th token and the feature corresponding to [EOS] are designated as the overall embedded CLS token of Ti. The textual tokens are represented as {Tisos, Ti1,…, TiW, Ticls}, where W represents the total word count. For simplicity, we subsequently employ the abbreviation Ti to denote the global feature Ticls.

So far, we only extracted image and text features without any interaction between modalities. Following the approach in 24, we used a modal interaction module to enable interaction between image and text. As shown in the SSC module in Fig. 1, each layer in the interaction module includes two modality-specific self-attention blocks and two cross-attention blocks. For example, in the visual self-attention block, intra-modal interaction occurs, while in the language-to-visual cross-attention block, cross-modal interaction takes place.

Semantic Enhancement. Drawing inspiration from IBOT41, in order to obtain more meaningful visual regions, our IRSE module obtains high-level semantic information from global image features and transfers it to image local patches. This process is realized by self-supervised coherent learning and shared feature encoding space.

Concretely, in accordance with IBOT, given an input image I1, we generate two different views \({I_{1,view1}}\) and \({I_{1,view2}}\) by random augmentation strategies, including random cropping, horizontal flipping, and color jittering. These strategies are designed to enhance the model’s robustness to variations in perspective, symmetry, and color. These two views are then fed into the raw image encoders \({E_I}\) and the momentum image encoder \({\hat {E}_I}\) to obtain their respective [CLS] tokens \(I_{{1,view1}}^{{cls}}\) and \(I_{{1,view2}}^{{cls}}\). Both encoders have the same structure, where the momentum encoder is derived from, and its parameters are obtained via EMA the parameters of EI during the iterative updating process of the model:

where m is a momentum coefficient. In addition, Besides, we add two encoding heads \({H_I}\) and \({\hat {H}_I}\) on top of \({E_I}\) and \({\hat {E}_I}\) respectively. Each encoding head is an MLP module, which transforms the extracted image features into categorical distributions in encoding space. Here the parameters of \({\hat {H}_I}\) are also obtained via EMA the parameters of \({H_I}\).

We enforce semantic consistency between view1 and view2 by self-supervised learning constraints as follows:

where CE denotes cross entropy loss. Addition, for migrating the high-level semantics obtained above to the local patches, we introduce a MIM learning mechanism to randomly mask the local patches of view1 and use the MIM loss to constrain the semantic consistency between the local patches. Since our CLS token and patch token share the same encoding space, with this design we achieve the transfer from global high-level semantics to local semantics. the MIM loss is as follows:

where, M is the number of masked patches. The overall loss of our IRSE module is:

Image patch partitioning based on superpixel segmentation (SP-Patch). Pedestrian images contain a wide range of visual and spatial information, including intricate details of clothing, accessories, and bags. Clothing accessories come in all shapes, sizes, and styles. In the previous approach, ViT40 encoded the image evenly into a fixed number of non-overlapping blocks; however, this could potentially disrupt the structure of local objects. It is worth noting that in image processing, semantically similar regions are often spatially close due to the continuity of visual perception and the relevance of semantic information. Inspired by this, we utilize the semantic segmentation technology of superpixel to divide semantically similar regions into unified pixel blocks. This method ensures the structural integrity of each patch at the boundary.

Superpixel31 are clusters of pixels with similar properties that form larger, more representative elements. These new elements capture features of adjacent structures, such as texture, color, brightness, and other properties, enhancing semantic accuracy within pixel blocks. The SLIC42,43 algorithm is widely used due to its advantages in simplicity and speed. Therefore, in this study, to ensure the consistency of low-level semantics and spatial integrity of local regions in the image, we adopt the SLIC42 algorithm to generate image patches, replacing the traditional uniform patch partitioning. A predetermined value K is used in SLIC to regulate the formation of superpixel blocks, that is, the number of superpixel blocks obtained. In the implementation process, the initial number of blocks is set to K = 140, and after connected component analysis, the final number of superpixel blocks \(K^{\prime}\) is approximately 128, ensuring that each image patch retains semantic consistency (such as complete clothing or accessory areas). The generated superpixel block set P = {pi, i = 1, 2,..., K’} serves as visual tokens, which are aligned with the high-level semantics of the text. Ablation experiments on the CUHK-PEDES dataset show that, compared to uniformly divided image patches, SP-Patch design increases the R@1 accuracy by 0.98% (see Table 1). This result demonstrates that superpixel segmentation of image regions helps better preserve local structural information, thereby alleviating the semantic inconsistency between image and text.

Overall, in the IRSE module, we utilize SP-Patch to generate image patches, preserving the integrity of local structures (such as accessory edges) through low-level visual features like color and texture. Meanwhile, through self-supervised consistency learning (Eqs. (2–4)) and shared encoding space, we inject high-level semantics from the global image features (such as object categories and attribute combinations) into the local patch encoding, providing contextual guidance for fine-grained alignment. This design, through the synergy of local partitioning and global semantic transfer, achieves multi-level enhancement of the local image semantics.

C. Symmetric semantic completion for TIPS

In this section, we will describe our SSC module in detail. The inspiration behind it is that paired texts and images data provide different perspectives of the same semantics, and after cross-modal interaction, the missing semantics of masked data can be completed by capturing information from the other modality26. Therefore, we designed an SSC module to mask local tokens in images and texts respectively, and then use masked images (texts) data and complete semantic completion of unmasked texts (images) data. Since the combination of global and local cross-modal alignment has become the dominant paradigm, and following this pipeline, we also use this construct for cross-modal alignment. Then, two branches, LSC and GSC, are designed in our SSC module to focus on the local tokens and global CLS tokens information after completion, respectively. It is worth noting that our method is different from the previous method28,29 of masking only text tokens by adopting a symmetric method and carrying out symmetrically masking semantics in the text and image domains. Next, we will talk about our LSC and GSC branches in more detail. Subsequently, we will gradually introduce our LSC and GSC branches.

Local semantic completion (LSC). The LSC branch aims to complete the semantic completion of masked local tokens using unmasked data and attempts to uncover the relationships between words in text and local regions within the images. To elaborate, we first randomly mask the image or text tokens to obtain {Imask, T} and {I, Tmask}, respectively. Subsequently, the two couples of data were transmitted to the modal interaction module. The recovered features of masked data were obtained by leveraging information from the other modality to complete its missing semantic information:

here, IRe_t and TRe_t are the recovered output features of the masked data, and ICo_t and TCo_t refers to features of complete data.

Then, we simultaneously performed masked vision semantic completion and masked language semantic completion. Ultimately, we facilitated the alignment between the recovered features and their complete one in the form of contrastive learning. InfoNCE44 loss was adopted to ensure that the mask completion features closely resembled the corresponding pre-mask features:

Within the LSC branch, a mask language modeling, i.e., MLM loss, was introduced by randomly masking 15% of the text tokens substituted with either the [MASK] token, random words, or leaving them unchanged, with probabilities of 80%, 10%, and 10%, respectively. Subsequently, the model engaged in a vocabulary-based classification task to predict the masked words, resulting in the computation of classification loss:

where, Tmask represents the output masked token feature, φ is used as a classifier, and y denotes the original token ID. LSC uses cross-modal interactions to reconstruct the semantic features of masked local tokens, which can effectively learn the local-local correspondence between images and texts.

Global semantic completion (GSC) For each image-text pair, akin to Eq. (5), we sent two sets of mask data {Imask, T} and {I, Tmask} to the feature interaction encoder, respectively. Following the cross-modal interaction, the encoder reconstructed the global CLS token and produced recovered global features IRe and TRe derived from the masked data, along with the complete data global features ICo and TCo. GSC focuses on the global CLS token representation learning. The final constraint is to minimize the difference between the recovered global CLS feature and the complete global CLS feature. The contrastive learning loss on global CLS token is conducted as follows:

note that ICo and TCo represent the complete global features for image and text CLS tokens, while IRe and TRe denote the corresponding recovered global CLS tokens. The negative samples are global features of other complete images or texts in a batch. It is noteworthy that ICo and TCo were detached for gradient backward propagation, a strategy that can enhance the model’s emphasis on the recovery of global features.

Global semantic completion learning loss is defined as:

Minimizing Eq. (10) serves to establish similarity between the global feature IRe extracted from the masked image and ICo derived from the complete image (similarly for TRe and TCo). This minimization process facilitated the recovery of semantic information of the masked data. It enabled global representations to acquire supplementary knowledge from corresponding tokens in the other modality, thereby achieving precise global-local alignment.

SSC module losses are the sum of LSC and GSC losses:

In addition to the aforementioned losses, we incorporated ID losses5 and contrast losses45 applied to global features. Specifically, within a batch of image-text pairs, the text-to-image (T2I) and image-to-text (I2 T) contrast losses are as follows:

$$\begin{aligned} {L_{T2I}}= - \frac{1}{N}\sum\limits_{{i=1}}^{N} {\log \frac{{\exp (sim({T_i},{I_i})/\tau )}}{{\sum\nolimits_{{n=1}}^{N} {\exp (sim({T_i},{I_n})/\tau )} }}} \\ {L_{I2T}}= - \frac{1}{N}\sum\limits_{{i=1}}^{N} {\log \frac{{\exp (sim({I_i},{T_i})/\tau )}}{{\sum\nolimits_{{n=1}}^{N} {\exp (sim({I_i},{T_n})/\tau )} }}} \end{aligned}$$(12)

Ti and Ii denote the pair of positive samples, and Ti and In denote the pair of negative samples. The global feature contrast loss is:

Mutual pattern alignment (MPA). Previously, most of the backbone15,25 networks employed a straightforward approach of averaging losses in both directions, as shown in Eq. (13). However, the two directions were trained independently and unable to mutually enhance each other. Inspired by the principles of mutual learning46, we introduced a mutual pattern alignment47 scheme to promote each other together.

Taking T2I as an example, for the query text T, the image gallery is defined as DI. We firstly conducted cross-modal positive sampling for the query T and each neighbor Ii in DI. Concretely, for a given sample, we initiated a search for its positive matches in the opposite modality based on pairwise ground-truth information. In cases where multiple positives exist, we uniformly sampled one of them. This process yielded T′ = δ(Ii) and I′i = δ(Ti), where δ(·) signifying the sampling function. Afterward, we calculated two separate similarity distributions centered around I and T′, as follows:

Finally, the alignment loss LMA is defined based on Kullback–Leibler divergence DKL as:

The mutual alignment loss calculation procedure in I2T is similar to T2I. The final total loss function as follows:

Results

Datasets and implementation details

Dataset. We assessed the efficacy of our approach by conducting evaluations on three publicly available text-based person retrieval datasets, namely, CUHK-PEDES48, ICFG-PEDES17 and RSTPReid49.

CUHK-PEDES contains 40,206 images and 80,412 textual descriptions for 13,003 identities. The training set consists of 11,003 identities, and validation set and test set have 1,000 identities. ICFG-PEDES contains 54,522 images for 4,102 identities. Each image corresponds to one description. The training and test sets contain 3,102 and 1,000 identities respectively. RSTPReid is a new real scene cross-modal person re-identification dataset containing 20,505 images of 4101 different identities from 15 cameras, which is collected from the MSMT7 dataset. There are 5 images for each identity, which are taken under different camera, time, place, weather, lighting and viewpoint conditions, and each image has two text descriptions. The training, validation and test sets contain 3701, 200 and 200 identities respectively.

Evaluation metrics. We adopted the widely recognized Rank@k metrics, denoted as R@K (with K = 1, 5, 10), as our primary evaluation criteria. R@k quantified the probability that, when presented with a textual description as a query, at least one matching person image could be identified within the top-k candidate list. Furthermore, we calculated the mean average precision (mAP), the average precision across all queries.

Implementation details. As previously mentioned, we employed the pre-trained model CLIP22 as our backbone network due to its high performance and widespread use in this field. Moreover, the core of our RE-SSC method focuses on image semantic enhancement and symmetric semantic completion, employing CLIP helps eliminate any confusion regarding performance improvements, ensuring that they are attributed to our designed components rather than differences in backbone networks. To ensure the fairness of the experiment, we utilized the same version of CLIP- ViTB/16 as IRRA. In terms of data preprocessing, the images were first resized uniformly to 384 × 128 pixels and normalized. Subsequently, data augmentation techniques such as random horizontal flipping, random cropping with padding, and random erasing were applied. For text data, tokenization, stop-word removal, stemming, and lowercasing were performed. Additional text augmentation strategies, such as random masking, token replacement, and token deletion, were applied, with a maximum token sequence length set to 77. During training, we used the AdamW50 optimizer for a duration of 60 epochs, commencing with an initial learning rate of 1 × 10−5 and following a cosine learning rate decay schedule. The batch size employed was 64. In the experiment, the number of superpixel segments K was set to 140. After connected component analysis, the final number of superpixel blocks, denoted as K′, was 128. We used a standard SLIC compactness factor of 20. Within the LSC module, the mask ratios for images and text were set to 75% and 30%, respectively. Conversely, within the GSC module, we employed a higher mask rate, with masking rates set to 75% for images and 40% for text. Furthermore, the temperature parameter τ was set to 0.03.

Comparison experiments between RE-SSC and other state-of-the-art methods

In this section, we evaluated the effectiveness of our model on three benchmark datasets, namely CUHK-PEDES, ICFG-PEDES, and RSTPReid. Tables 2, 3 and 4 present a comprehensive comparison of our method with other comparative methods on different datasets. The performance metric data of all competing methods are sourced from the results reported in their original papers, and strictly adhere to the standard evaluation protocol for the TIPS task. To ensure the fairness of the experiments, we re-ran the baseline methods (e.g., IRRA) and our method (RE-SSC) under the same dataset partitions and experimental configurations. Please refer to the experimental section for detailed experimental procedures.

The comparison methods we selected primarily focus on the impact of the CLIP model. We included both competitive methods that do not use CLIP pre-training and advanced methods that integrate CLIP pre-training. The methods in the tables are categorized into two groups based on whether they use CLIP pre-training: “w/o CLIP” and “w/CLIP.” The results show that CLIP pre-trained models outperform their non-CLIP counterparts across all metrics, with a particularly significant difference in the R@1 metric. For example, in Table 2, the maximum performance improvement after integrating the CLIP model is 11.12% (R@1 increased from 65.59 to 76.71%). Similarly, Tables 3 and 4 show that, with CLIP integration, performance improved by 9.29% (from 59.02 to 68.31%) on the ICFG-PEDES dataset and by 18.72% (from 48.40 to 66.90%) on the RSTPReid dataset. These results demonstrate the strong knowledge transfer capability of the CLIP model, which significantly aids in the TIPS task.

Next, we conducted a comparative analysis of all methods using CLIP pre-training on the three datasets. We observed that CFine23 and RDE51 did not adopt the MLM mechanism, while the other methods, including ours (RE-SSC), did. The experimental results indicate that methods using the MLM mechanism slightly outperform those that do not, suggesting that MLM, by capturing the semantic correlations between different modalities, helps improve cross-modal alignment accuracy. Although RDE achieved promising results, it introduced a dedicated noise-handling module. In contrast, our method does not rely on an additional noise processing module, but instead leverages semantic completion learning to achieve effective alignment between image and text, thus simplifying the model structure.

Finally, we compared our RE-SSC method with existing methods that use mask learning techniques (e.g., RaSa51, IRRA52, and APTM29). The experimental results show that our method consistently outperforms these existing methods across all datasets, achieving competitive performance. The main advantage of our method lies in the use of the symmetric semantic completion strategy. Unlike RaSa, IRRA, and APTM, which only apply masking to text tokens, our RE-SSC model performs mask completion learning on both text and image modalities, which more effectively captures the fine-grained semantic relationships between image and text. Additionally, our IRSE module enhances the local semantics of the image, providing the SSC module with fine-grained image features. These two modules mutually promote and optimize the cross-modal alignment between image and text.

Three cases of retrieval results on the CUHK-PEDES dataset, comparing the IRRA method (first row) and our method (second row). Images are ranked by predicted similarity scores in descending order (left to right: Rank-1 to Rank-10), with green boxes indicating ground-truth matches.

In conclusion, the incorporation of the CLIP model significantly enhances retrieval performance, validating its powerful knowledge transfer capability. Our proposed IRSE and SSC strategies mutually reinforce each other, further optimizing the semantic alignment between image and text, and leading to a significant improvement in retrieval accuracy. The experimental results confirm the effectiveness of our proposed method in the TIPS task, demonstrating its robust cross-modal alignment capability.

Case Analysis. As the proposed RE-SSC method builds upon the IRRA baseline model, we conducted a case analysis using the CUHK-PEDES dataset to visually demonstrate the superior performance of RE-SSC. Specifically, for the same query text, we employed the RE-SSC method and the baseline IRRA method respectively to perform image retrieval. Subsequently, the retrieval results were arranged in descending order according to their similarity to the query text (where similarity diminishes from left to right). Figure 2 depicts the top 10 sorted retrieval results. In each instance, the query text is on the left, while the top-10 retrieval results of the two methods are exhibited on the right. The outcomes of the IRRA method are shown in the upper row, and those of the RE-SSC method are in the lower row. The positive sample images that truly match the query are demarcated with green borders. Evidently, a higher ranking of a correctly retrieved image signifies better retrieval performance of the corresponding method.

The visualization outcomes suggest that, in comparison with the baseline IRRA method, the RE-SSC method can notably elevate the ranking of positive samples within the sequence in the majority of cases. This leads to more precise and rational image sorting, which strongly corroborates its preeminent performance in fine-grained semantic alignment and cross modal retrieval accuracy. Conversely, the IRRA method can merely identify images that partially correspond to the text in certain retrieval results, rendering it arduous to attain complete alignment between images and text.

For instance, in Case (A), both methods zeroed in on the description of “an orange shirt with white letters”. Nevertheless, the IRRA method incorporated terms such as “boy” and “female” in the results, neglecting the vital descriptor “male”. A comparable scenario was witnessed in Case (B). All the retrieved images featured “a pink scarf” and “a dark purple jacket”, yet the IRRA method over - emphasized trivial details and disregarded the broader context of “a lady”, giving rise to results such as “a little girl wearing a helmet”. These two cases comprehensively underscore the advantages of the RE-SSC method. It can not only accurately capture fine- grained information but also effectively achieve multi-modal alignment on a global scale.

In Case (C), our method manifested greater accuracy in identifying matching images. Even under mismatching circumstances, our model could accurately discern the detail of “holding a little girl”. On the contrary, the IRRA method had a tendency to concentrate on the “shirt” aspect more often. In some cases, it even misinterpreted other pedestrians in the image as “holding a little girl”. This observation indicates that our method possesses comprehensive comprehension capabilities, being able to capture both local information and maintain a keen awareness of the overall semantics to accomplish holistic matching.

Ablation Study

In this section, we conducted an ablation study on CUHK-PEDES datasets to examine the effects and contributions of each component proposed our approach. Here, we use the IRRA method as the baseline network to evaluate the performance of our two modules, IRSE and SMC. Additionally, we incorporated MPA loss. Table 1 shows the results in terms of R@1, R@5, and R@10. Accuracy (%).

The IRSE module enhances the image feature representation by integrating low-level (i.e., SP-patch) and high-level semantic information (referring to the local region features of the image that have been fused with global semantic information after being processed by the IRSE module), thereby improving the model’s performance. According to the results in Table 1, the model’s performance was significantly improved after incorporating the IRSE module. It can be observed that the low-level semantics (Row 2) focus on the low-level texture and edge features of the image, making the semantics of local regions more consistent and leading to a 0.36% increase in R@1. The high-level semantics (Row 3) transfer the global high-level semantic information to the local regions of the image, endowing local image patches with more contextual information. This alleviates the semantic inconsistency between local image regions and text phrases, resulting in a further 0.62% increase in R@1. When both low-level and high- level semantics are utilized simultaneously (Row 4), the performance is improved by 0.76%, further validating the effectiveness of the IRSE module in enhancing the image semantic representation. These results indicate that the combination of low-level details and high-level semantics can more comprehensively characterize image features, thereby improving the retrieval accuracy. Moreover, the enhanced image features provide a more accurate image input for the subsequent SSC module.

The SSC module consists of two branches: LSC and GSC, aiming to improve the alignment between images and text. In Table 1, the LSC (Row 5) focuses on the alignment of local features between images and text, with a 2.3% increase in R@1, which verifies the importance of LSC for the model’s performance. After introducing the GSC (Row 10), R@1 is further increased by 2.3%, demonstrating the significance of both global and local semantic completion in cross modal alignment. The GSC branch complements the relationship between the global semantics of the image and the text, enabling the model to not only perform local region alignment but also conduct semantic completion on a global scale. Finally, when the IRSE module and the SSC module are used in combination (Row 14), the R@1 of the model reaches 76.71%, achieving the best performance. This result validates the synergistic effect of the two modules: the IRSE module enhances the image’s local semantics and transfers global semantics, improving the image’s feature expression ability and providing a more accurate image input for the SSC module. The SSC module, in turn, further optimizes the alignment between images and text through local and global semantic completion, enhancing the cross-modal retrieval performance.

The introduction of the MPA loss further optimizes the alignment between images and text, resulting in a certain performance improvement. In the IRSE module (comparing Row 3 and Row 4) and the SSC module (comparing Row 13 and Row 14), R@1 is increased by 0.05% and 0.08% respectively, indicating that the MA loss effectively promotes the retrieval capabilities of both text-to-image (T2I) and image-to-text (I2 T).

We conducted a t-test statistical verification between the IRRA and RE-SSC methods, analyzing the results of four metrics (R@1, R@5, R@10, and mAP) during training on three datasets. Figure 3 shows that the t-test results show that the p-values of all four indicators are less than 0.05, and the t-values are negative. This indicates that there are significant differences in the performance between the RE-SSC and IRRA methods, and the mean values of the RE-SSC method for these indicators are larger, which preliminarily reflects its performance advantage.

In the box plots, the medians of the RE-SSC method for the four indicators are all higher than those of the IRRA method, indicating that most of its data values are larger. The boxes of the RE-SSC method is generally shorter, and the whiskers are also shorter, suggesting that the data is more concentrated and stable, as seen in the mAP indicator. The RE-SSC method has fewer outliers, or the outliers have less impact on the overall data, while the IRRA method has more outliers far from the box in some indicators (such as R@5), which shows that the RE-SSC method is more reliable. In summary, the RE-SSC method shows significant differences from the IRRA method in the four key indicators. It also performs better in terms of central tendency, data dispersion, and outliers, indicating that its overall performance is superior.

Box plots of the t- test between RE-SSC and the baseline method.

The RE-SSC method we proposed innovatively introduces a visual image block partitioning strategy based on superpixel segmentation. Compared with the traditional regular block partitioning method, this strategy can perform more reasonable block partitioning according to the intrinsic features of the image. Determining the optimal number K of superpixel blocks is crucial for the accuracy and effectiveness of image retrieval. Therefore, a series of experiments were conducted to explore the influence of the K value on retrieval performance.

Figure 4 shows that the K value has a significant impact on the superpixel segmentation results. When the K value is too small (e.g., K = 40), the superpixel blocks are too large, and the semantics within the blocks are blurred and mixed, which is not conducive to capturing the key information of the image. On the contrary, when the K value is too large (e.g., K = 200), the image will be segmented into a large number of tiny regions, resulting in the loss of the overall semantic information. The segmentation results are fragmented, similar to the traditional regular segmentation.

Figure 5 presents the influence of different K values on the R@1 performance. As the K value increases, the R@1 accuracy shows an upward trend. This is because the increase in the number of superpixel blocks makes the semantics within the blocks more consistent, which is beneficial for capturing the detailed information of the image. However, when the K value exceeds a certain threshold, the R@1 will decline. The reason is that, in order to increase the number of blocks, the algorithm partitions pixels with similar semantics into different blocks, thus destroying the semantic coherence.

Results of superpixel visual patch division.

Quantitative experiments with the parameter K.

In our IRSE module, we use the image blocks obtained after SLIC segmentation as the input for the subsequent model. It is found that the number of image blocks obtained after SLIC segmentation is closely related to the overall retrieval performance of the model. To more intuitively demonstrate the influence of different K values on the retrieval results, we provide two image retrieval cases. Figure 6 shows the ranking results of the top-10 images retrieved under different K values during the training process on the CUHK-PEDES dataset. Among them, the green boxes represent the truly retrieved matching images. The higher the ranking, the better the retrieval performance and the higher the accuracy. Taking Case A as an example, when K = 40, the number of segmented blocks is small, and they contain too much heterogeneous information, resulting in the correctly retrieved images being ranked low. When K ≈ 130, the segmentation results are more refined, and the correctly retrieved images are ranked significantly higher, which is consistent with the results in Fig. 5. When the value continues to increase, an excessive number of superpixel blocks will destroy the semantic consistency, leading to a decline in retrieval performance. Case B shows a similar result trend to Case A.

Considering these two cases and the overall experimental results, the optimal R@1 performance can be achieved when K ∈ [125,135]. Therefore, to ensure the accuracy and efficiency of image retrieval, we set the number of superpixel blocks to 130.

CUHK-PEDES Dataset: Ranking of Top-10 Image Retrieval Results under Different Values of K.

Conclusion

In this paper, we proposed an image regions semantic enhancement and symmetric semantic completion method for TIPS. Firstly, for the existence of semantic inconsistency in cross-modal alignment of image and text, we designed the IRSE module to transfer the high-level semantics from the global visual features of the image to the image-local patches through self-supervised consistency learning and shared encoding space. Semantic enhancement is achieved for image local patches. Then we proposed SSC module to perform mask semantic complementation symmetrically in both image and text directions. The designed SSC recover the global and local features of mask data through cross-modal interaction to facilitate local-local and global-local cross-modal alignment. We conducted extensive experiments and demonstrated excellent performance on multiple benchmark datasets, proved the effectiveness of our method.

This study has limitations in two aspects. In terms of computational complexity, the current method has a relatively high number of parameters (170 M), which impacts the actual deployment efficiency. The insufficient scale and diversity of the existing data limit the generalization ability of the model. In the future, we will consider exploring model compression and pruning techniques and designing lightweight architectures to reduce the number of parameters. We will utilize diffusion models and other methods to generate diverse data and combine transfer learning to enhance cross - scenario adaptability, aiming to improve the performance in complex environments.

Data availability

The data used to support the findings of this study have been included within this published article.

References

Subramanyam, A. V. Meta generative attack on person reidentification. IEEE Trans. Circuits Syst. Video Technol. 33(8), 4429–4434 (2023).

Jambigi, C., Rawal, R. & Chakraborty, A. Mmd-reid: A simple but effective solution for visible-thermal person reid. In: 32nd British Machine Vision Conference 2021, BMVC 2021, Online, November 22–25, 2021, p.116. BMVA Press, Virtual (2021).

Gabdullin, N. Combining human parsing with analytical feature extraction and ranking schemes for high-generalization person reidentification. (2022). CoRRabs/2207.14243.

Fu, M. et al. Improving person reidentification using a self-focusing network in internet of things. IEEE Internet Things J. 9(12), 9342–9353 (2022).

Zheng, Z. et al. Dual-path convolutional image-text embeddings with instance loss. ACM Trans. Multim Comput. Commun. Appl. 16(2), 51–15123 (2020).

Zhang, Y. & Lu, H. Deep cross-modal projection learning for image-text matching. In Computer Vision ECCV 2018–15th European Conference, vol. 11205, pp.707–723 (2018).

Han, X., He, S., Zhang, L. & Xiang, T. Text-based person search with limited data, vol. abs/2110.10807, (2021). https://arxiv.org/abs/2110.10807

Niu, K., Huang, Y., Ouyang, W. & Wang, L. Improving description-based person re-identification by multi-granularity image-text alignments. IEEE Trans. Image Process. 29, 5542–5556 (2020).

Chen, Y., Zhang, G., Lu, Y., Wang, Z. & Zheng, Y. TIPCB: A simple but effective part-based convolutional baseline for text-based person search. Neurocomputing 494, 171–181 (2022).

Zhang, J. et al. Global-local graph convolutional network for cross-modality person re-identification. Neurocomputing 452, 137–146 (2021).

Wang, Z., Fang, Z., Wang, J. & Yang, Y. Vitaa: Visual-textual attributes alignment in person search by natural language. Eur. Conf. Comput. Vis. - ECCV. 12357, 402–420 (2020).

Farooq, A., Awais, M., Kittler, J. & Khalid, S. S. Axm-net: Implicit cross-modal feature alignment for person re-identification. In: Thirty-Sixth AAAI Conference on Artificial Intelligence, AAAI 2022, pp. 4477–4485 (2022).

Suo, W. et al. A simple and robust correlation filtering method for text-based person search. In Computer Vision ECCV 2022-17th European Conference, vol. 13695, pp. 726–742 (2022).

Gao, C. et al. Contextual non-local alignment over full-scale representation for text-based person search. (2021). CoRR abs/2101.03036.

Li, S., Cao, M. & Zhang, M. Learning semantic-aligned feature representation for text-based person search. In: IEEE International Conference on Acoustics, Speech and Signal Processing, ICASSP 2022, pp. 2724–2728 (2022).

You, K. et al. Cross-modal feature fusion-based knowledge transfer for text-based person search[J]. IEEE. Signal. Process. Lett., (2024).

Ding, Z., Ding, C., Shao, Z. & Tao, D. Semantically self-aligned network for text-to-image part-aware person re-identification. CoRR. abs (2021). /2107.12666.

Chen, Y. et al. More than just attention: Improving cross-modal attentions with contrastive constraints for image-text matching. In: IEEE/CVF Winter Conference on Applications of Computer Vision, WACV pp.4421–4429. IEEE(2023). (2023).

Li, J., Li, D., Xiong, C. & Hoi, S. C. H. BLIP: bootstrapping language-image pretraining for unified vision-language understanding and generation. In: International Conference on Machine Learning, ICML 2022, pp. 12888–12900 (2022).

Yang, J. et al. Vision-language pre-training with triple contrastive learning. In:IEEE/CVF Conference on Computer Vision and Pattern Recognition, CVPR2022, pp. 15650–15659 (2022).

Lee, N. et al. Survey of social bias in vision-language models. (2023). CoRR abs/2309.14381.

Radford, A. et al. Learning transferable visual models from natural language supervision. In:Proceedings of the 38th International Conference on Machine Learning, ICML2021,vol. 139, pp. 8748–8763 (2021).

Yan, S., Dong, N., Zhang, L. & Tang, J. Clip-driven fine-grained text-image person re-identification. IEEE Trans. Image Process. 32, 6032–6046 (2023).

Devlin, J., Chang, M., Lee, K. & Toutanova, K. BERT: pre-training of deep bidirectional transformers for language understanding. In: Proceedings of the 2019 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, NAACL-HLT, pp. 4171–4186 (2019).

Bao, H., Dong, L., Piao, S. & Wei, F. Beit: BERT pre-training of image transformers. In: The Tenth International Conference on Learning Representations, ICLR, (2022).

Tu, R. et al. Global and local semantic completion learning for vision-language pre-training. (2023). CoRR abs/2306.07096.

He, K. et al. Masked autoencoders are scalable vision learners. In: IEEE/CVF Conference on Computer Vision and Pattern Recognition, CVPR, pp.15979–15988 (2022).

Jiang, D. & Ye, M. Cross-modal implicit relation reasoning and aligning for text-to-image person retrieval. In: IEEE/CVF Conference on Computer Vision and Pattern Recognition, CVPR, pp.2787–2797 (2023).

Yang, S. et al. Towards unified text-based person retrieval: A large-scale multi-attribute and language search benchmark. In: Proceedings of the 31st ACM International Conference on Multimedia, pp. 4492–4501 (2023).

Li, S. et al. DCEL: deep cross-modal evidential learning for text-based person retrieval. In: Proceedings of the 31st ACM International Conference on Multimedia, pp. 6292–6300 (2023).

Eliasof, M., Zikri, N. B. & Treister, E. Unsupervised image semantic segmentation through superpixels and graph neural networks. CoRR. abs (2022). /2210.11810.

Meas. 70, 1–8 et al. Superpixel-based and spatially-regularized diffusion learning for unsupervised hyperspectral image clustering. IEEE Transactions on Geoscience and Remote Sensing, (2024). (2021).

Entin, E. B. Using the cloze procedure to assess program reading comprehension. In: Proceedings of the 15th SIGCSE Technical Symposium on Computer Science Education, SIGCSE, pp. 44–50 (1984).

Tan, H., Lei, J., Wolf, T. & Bansal, M. VIMPAC: video pre-training via masked token prediction and contrastive learning. CoRR abs/2106.11250 (2021).

Geng, X. et al. Multimodal masked autoencoders learn transferable representations. (2022). CoRR abs/2205.14204.

Li, X. et al. mc-beit: Multi-choice discretization for image BERT pre-training. In: Computer Vision - ECCV 2022–17th European Conference,vol. 13690, pp. 231–246 (2022).

Oord, A., Vinyals, O. & Kavukcuoglu, K. Neural discrete representation learning. In: Advances in Neural Information Processing Systems 30:Annual Conference on Neural Information Processing Systems, pp. 6306–6315 (2017).

Liu, H. et al. M3AE: multimodal representation learning for brain tumor segmentation with missing modalities. In: Thirty-Seventh AAAI Conference on Artificial Intelligence, AAAI, pp. 1657–1665 (2023).

He, S. et al. VLMAE: vision-language masked autoencoder. CoRR abs/2208.09374(2022).

Dosovitskiy, A. et al. Houlsby, N. :An image is worth 16x16 words: Transformers for image recognition at scale. In: 9th International Conference on Learning Representations, ICLR(2021).

Zhou, J. et al. ibot: Image BERT pre-training with online tokenizer. (2021). CoRR abs/2111.07832.

Ren, C. Y., Prisacariu, V. A. & Reid, I. D. gslicr: SLIC superpixels at over 250hz. (2015). CoRR abs/1509.04232.

Peng, H., Avil´es-Rivero, A. I. & Sch¨onlieb, C. HERS superpixels: Deep affinity learning for hierarchical entropy rate segmentation. In: IEEE/CVF Winter Conference on Applications of Computer Vision, WACV, pp. 72–81 (2022).

Liu, X. et al. Self-supervised learning: Generative or contrastive. IEEE Trans. Knowl. Data Eng. 35(1), 857–876 (2023).

Chen, Q., Huang, T., Zhu, G. & Lin, E. A dual-branch model with inter- and intra-branch contrastive loss for long-tailed recognition. Neural Netw. 168, 214–222 (2023).

Huang, W. F. et al. R´enyi divergence deep mutual learning. In: Machine Learning and Knowledge Discovery in Databases: Research Track - European Conference, ECML PKDD 2023, vol. 14170, pp. 156–172 (2023).

Qu, L. et al. Learnable pillar-based reranking for image-text retrieval. In: Proceedings of the 46th International ACM SIGIR Conference on Research and Development in Information Retrieval, SIGIR, pp. 1252–1261 (2023).

Li, S. et al. Person search with natural language description. In: 2017 IEEE Conference on Computer Vision and Pattern Recognition, CVPR, pp. 5187–5196 (2017).

Yan, S., Tang, H., Zhang, L. & Tang, J. Image-specific information suppression and implicit local alignment for text-based person search. (2022). CoRR abs/2208.14365.

Wang, Z. et al. CAIBC: capturing all-round information beyond color for text-based person retrieval. In: The 30th ACM International Conference on Multimedia, pp. 5314–5322 (2022).

Shao, Z. et al. Learning granularity-unified representations for text-to-image person re-identification. In: The 30th ACM International Conference on Multimedia, pp. 5566–5574 (2022).

Shu, X. et al. See finer, see more: Implicit modality alignment for text-based person retrieval. In: Computer Vision-ECCV 2022Workshops, vol. 13805, pp. 624–641 (2022).

Qin, Y. et al. Noisy-correspondence learning for text-to-image person re-identification. CoRR. abs (2023).

Bai, Y. et al. Rasa: Relation and sensitivity aware representation learning for text-based person search. In: Proceedings of the Thirty-Second International Joint Conference on Artificial Intelligence, IJCAI, pp. 555–563 (2023). https://doi.org/10.24963/ijcai.2023/62

Ji, Z., Hu, J., Liu, D., Wu, L. Y. & Zhao, Y. Asymmetric cross-scale alignment for text-based person search. IEEE Trans. Multim. 25, 7699–7709 (2023).

Cao, M. et al. An empirical study of clip for text-based person search. In: Proceedings of the AAAI Conference on Artificial Intelligence, AAAI, pp.38(1): 465–473 (2024).

Shen, W. et al. Enhancing visual representation for text-based person searching. Knowl. Based Syst. 309, 112893 (2025).

Nguyen, A. D. et al. cMDTPS: Comprehensive Masked Modality Modeling with Improved Similarity Distribution Matching Loss for Text-based Person Search. In: Conference on Information Technology and its Applications. Cham: Springer Nature Switzerland, 184–196 (2024).

Acknowledgements

The study was supported by National Natural Science Foundation of China (Grant No. 62271274, 62203238), Ningbo Municipal Public Welfare Technology Research Project (No. 2022S134), and Ningbo Major Research and Development Plan Program (No. 2023Z196).

Author information

Authors and Affiliations

Contributions

All authors contributed to the study conception and design. T. T. designed the study, performed research, analyzed data, and wrote the paper. L. G. as the corresponding author, oversaw the study design, implementation, and revision. All authors read and approved the final manuscript.

Corresponding author

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Tuo, T., Guo, L., Zhang, R. et al. Image region semantic enhancement and symmetric semantic completion for text-to-image person search. Sci Rep 15, 21224 (2025). https://doi.org/10.1038/s41598-025-00904-8

Received:

Accepted:

Published:

Version of record:

DOI: https://doi.org/10.1038/s41598-025-00904-8