Abstract

With the development of better communication networks and related technologies, the Internet of Things (IoT) has become an integral part of modern IT. However, the limited memory, computing power, and battery life of mobile devices pose significant challenges to their widespread use. As an alternative, mobile cloud computing (MCC) uses cloud resources to extend the storage and processing capabilities of mobile devices. This involves moving some program logic to the cloud, which improves performance and saves power. Because device movement affects connection quality and network access, mobility-aware offloading techniques are necessary. Current mobility-aware offloading methods often suffer from unrealistic mobility models, insufficient fault tolerance, inaccurate offloading, and poor task scheduling. Using fault-tolerant approaches and user mobility patterns described by a Markov chain, this research introduces a novel decision-making framework for mobility-aware offloading. The evaluation findings show that, compared to current approaches, the proposed method achieves execution speeds up to 77.35% faster and energy use down to 67.14%.

Introduction

The Internet of Things is now one of the most important components of information technology due to the rapid growth of networks and communication technologies1,2,3. Nevertheless, creating apps for mobile devices such as smartphones, tablets, and portable computers presents significant difficulties due to their constrained processing, battery, and memory capacities4,5. Applications such as image processing, speech recognition, and routing, for instance, demand a lot of processing power, frequently more than mobile devices can provide6,7,8. Cloud computing has become widely used in many disciplines and industries in the past few decades9,10,11. By offering scalable and affordable services, it makes processing and storage resources accessible without a substantial initial investment12. Fairness-aware task scheduling for converged networks has been established, offering insights into the equitable distribution of resources relevant to mobile cloud computing offloading decisions13. In this context, MCC, a sub-branch of cloud computing, has effectively enhanced the performance and efficiency of mobile devices14,15. In recent years, significant measures have been developed for multi-state systems, along with a framework for reliability assessment that can improve fault tolerance tactics in MCC16. MCC increases the capabilities of mobile devices while lowering energy consumption and processing power requirements by shifting the processing burden from mobile devices to cloud servers. Related work on compute load in terrestrial satellite networks also offers insights on resource management that can guide MCC offloading techniques17,18.

Despite MCC’s many benefits, one of its biggest challenges is managing the processing load intelligently and effectively19,20,21. Mobility-aware offloading can significantly increase end-user quality of service by making offloading decisions based on the user’s movement status and dynamic network changes22,23. Fault tolerance is one of the main difficulties this kind of offloading must overcome in deployment. It is necessary in a dynamic, ever-changing cloud environment to guarantee service continuity and stability24. In MCC, fault tolerance refers to the system’s capacity to handle potential malfunctions and carry on with minimal interruption and delay25,26,27. These malfunctions can be caused by hardware flaws, network issues, or security intrusions. Several strategies, including data replication, load redistribution, and data reconstruction, are required to increase fault tolerance28. These methods should be applied efficiently, taking into account the constraints of network and mobile resources29.

Research on fault-tolerant, mobility-aware offloading in mobile cloud computing therefore contributes to better user experiences in addition to increasing the effectiveness and reliability of cloud services30. Creating effective solutions requires interdisciplinary efforts in data science, information security, and computer networks31,32. Achieving these objectives may ultimately change the way cloud services and mobile devices, both indispensable in modern society, are used33.

Mobile cloud computing has been suggested as a solution to these problems. By using the cloud’s storage and processing power, it increases the ability of mobile devices to run apps. Nevertheless, depending on network conditions and the mobility of the device, offloading some application components to the cloud might not be the best course of action. Device mobility and network conditions, which affect execution time and energy consumption, must be considered when making an informed offloading decision. The primary objective of this research is to provide an offloading decision mechanism that is both mobility-aware and fault-tolerant. By predicting the networks the user will visit with a Markov chain of user mobility, the technique chooses the best components for offloading. The decision accounts for the possibility of an outage while components are being offloaded and while results are being returned to the mobile device. The novelty of this research is that it models user mobility with a Markov chain and combines it with fault tolerance to increase efficiency and lower energy usage. The proposed approach gathers information about the user, mobile device, application, cloud server, and network, builds a Markov chain of user mobility, and identifies the appropriate components for offloading. The Markov chain is extracted from the user’s experience and the profiles of the networks visited. The offloading decision is then determined by an evolutionary (genetic) algorithm, which optimizes both execution time and energy usage.

Implementing the proposed mobility-aware and fault-tolerant offloading (MAFO) mechanism on mobile devices entails considerable trade-offs. MAFO markedly improves performance, speeding up execution and saving energy by applying Markov chain-based mobility prediction and genetic algorithms (GAs) to make offloading decisions. These advantages enhance the user experience in resource-limited mobile settings. However, the computational complexity of GAs and Markov chain updates incurs overhead and may burden low-power devices, particularly during frequent network fluctuations caused by mobility. Moreover, MAFO’s dependence on network connectivity may degrade performance when disruptions are frequent. The subsequent sections examine these limitations further, underscoring the need for efficient algorithms and sufficient network support for practical implementation on mobile devices.

The main objectives of the MAFO offloading technique are to ensure fault tolerance while reducing execution time and energy consumption in mobile cloud computing environments. MAFO optimizes the choice of application components for offloading by predicting user mobility and network conditions using a Markov chain. These objectives address the shortcomings of current methods, which frequently fail to balance energy costs and computational efficiency under dynamic network conditions. The main contributions of this research include the following:

- Providing a mobility model based on the Markov chain that can predict the networks visited by the user and the duration of the stay in each network.

- Combining fault tolerance with the offloading decision-making process, which helps to improve efficiency and reduce energy consumption under network changes and errors.

- Using a genetic algorithm to optimize the offloading decision-making process, which allows the right components to be chosen for offloading to the cloud with more accuracy and efficiency.

The rest of the article is organized as follows. “Related works” reviews prior work on mobility-aware offloading decisions. “Mobility aware and fault tolerant offloading scheme (MAFO)” presents the proposed fault-tolerant, mobility-aware offloading scheme. “Proposed method” describes the proposed algorithm for solving the offloading problem. The evaluation criteria and the results obtained under different conditions and parameters are shown in “Evaluation and simulation”, and finally, “Conclusion” presents conclusions and suggestions for future work.

Related works

MCC has attracted considerable interest recently, with several studies investigating offloading ways to improve the computational capability of resource-limited mobile devices. Review articles have methodically classified MCC methodologies, emphasizing pricing models, task allocation strategies, and decision-making frameworks for offloading. Nonetheless, mobility-aware offloading, which adjusts to fluctuating user movement and network conditions, remains a comparatively underexplored domain in extensive surveys, warranting a concentrated analysis of current methodologies34. This section examines essential mobility-aware offloading strategies, evaluating their methodology, advantages, and drawbacks to contextualize the proposed MAFO scheme. A live migration technique for associated virtual machines in cloud data centers is proposed in35, optimizing resource allocation under dynamic situations to enhance mobility-aware resource management in mobile cloud computing. In GACO, workflow services are associated with genes on a chromosome, with each gene designated to signify execution on either the mobile device or the cloud. The fitness of a chromosome is assessed according to execution time and energy consumption, choosing the design that minimizes both parameters. To address mobility, GACO utilizes the Random Waypoint (RWP) mobility model to assess connection length, thereby mitigating performance deterioration resulting from network fluctuations. This model presumes random movement patterns, which may not adequately represent actual user mobility, resulting in inefficient offloading decisions in highly dynamic situations. Moreover, GACO is deficient in resilient fault-tolerant methods, hence constraining its reliability during network disruptions. Although GACO excels in resource allocation optimization, its rudimentary mobility model and lack of error-handling mechanisms limit its application in intricate MCC environments.

A reinforcement learning-based resource allocation strategy for mobile networks is suggested in36, enhancing loading efficiency amid dynamic user mobility related to MCC. The lack of a specific strategy for predicting component loss during disruptions diminishes its robustness, especially in contexts characterized by frequent network variability. Moreover, the computational complexity of the ant colony algorithm may provide difficulties for resource-limited mobile devices, hence constraining its scalability. Researchers in37 suggested an effective prediction-based user recruitment method for mobile crowdsensing (PURE) that categorizes users into two groups based on distinct pricing plans: pay-as-you-go (PAYG) and pay-per-month (PAYM). When treating PAYM users as destinations, the cost reduction challenge transitions to acquiring consumers with the greatest likelihood of reaching a destination. Initially, they introduce a semi-Markov model to ascertain the probability distribution of user arrival times at points of interest (PoIs), subsequently deriving the likelihood of user contact. A novel prediction-based user recruiting technique is offered to optimize mobile crowdsensing and reduce data loading costs. Nonetheless, it presumes that job offloading occurs seamlessly, overlooking task granularity and failure tolerance38. This presumption constrains M2C2’s capacity to manage partial task failures or adjust to abrupt network alterations, hence diminishing its efficacy in dynamic MCC contexts. Moreover, the periodic scanning procedure elevates energy usage on mobile devices, potentially negating the advantages of offloading.

Another study focuses on mobility by facilitating concurrent access to several networks, including mobile and local wireless networks, to enhance offloading decisions39. This method assesses network conditions according to the demands of active processes, relocating activities to alternative networks where performance enhancements are attainable. Utilizing numerous network interfaces improves connection in mobile settings. Nonetheless, the approach emphasizes migrating complete operations to the cloud instead of delegating specific components, potentially resulting in suboptimal resource use. Furthermore, it lacks fault-tolerant features, rendering it susceptible to network disturbances. The disregard for mobility-induced latency fluctuations further constrains its efficacy in situations with frequent handovers, highlighting the necessity for more detailed offloading solutions.

A further approach identifies cloud service providers for offloading by analyzing the mobile device’s movement history and its closeness to the user’s trajectory40. It creates a spatio-temporal graph of the device’s trajectory, pinpointing the nearest cloud with adequate processing capability for job execution. This method guarantees that offloading decisions correspond with the device’s geographical position, potentially minimizing latency. Nonetheless, it neglects essential network attributes, like latency, bandwidth, and unpredictability, which profoundly influence execution time and energy usage41. Remote clouds with enhanced processing capabilities may surpass nearby but inferior servers; nonetheless, this approach favors proximity over performance. The lack of fault-tolerant techniques diminishes its reliability in dynamic contexts characterized by frequent network disruptions. These deficiencies underscore the necessity for a more holistic strategy for offloading decisions.

An approach based on Markov chains models device mobility to enhance offloading decisions, taking into account many probable movement trajectories42. It computes execution time by presuming total offloading to the cloud, incorporating mobility probabilities across Markov chain pathways. If cloud execution is faster than local execution, the full program is offloaded. This method successfully integrates mobility forecasts but neglects network interruptions and application specificity. By offloading complete programs, it forgoes the advantages of component-level offloading, which can improve resource use. Moreover, the absence of fault-tolerant methods diminishes its resilience in practical MCC environments, where network disruptions are common. The dependence on a rudimentary Markov chain model constrains its capacity to capture intricate mobility patterns, hence requiring more sophisticated predictive methodologies.

An alternative method suggests a mobility-aware offloading system that utilizes software-defined networks (SDNs) to oversee mobility and minimize response latency. It seeks to facilitate seamless offloading for users moving between wireless networks, employing a network controller and remote storage to manage job distribution. This approach improves connection by adaptively responding to network fluctuations, rendering it appropriate for latency-sensitive applications. Nevertheless, its emphasis on network management neglects fault tolerance and component-level offloading, constraining its adaptability to failures or intricate application architectures. The computational burden of SDN controllers may also tax mobile device resources, diminishing energy efficiency. These constraints indicate that including fault tolerance and granular offloading may improve the method’s efficacy in dynamic mobile cloud computing environments.

The examined methods exhibit substantial advancements in mobility-aware offloading yet encounter common obstacles, such as imprecise mobility models, restricted fault tolerance, and imprecise offloading strategies. The proposed MAFO system rectifies these deficiencies by including a Markov chain-based mobility model alongside fault-tolerant measures, including checkpointing, to guarantee dependable task execution. MAFO provides a comprehensive solution for MCC applications by minimizing execution time and energy usage using genetic algorithm-based decision-making, as elaborated in the following sections. This thorough approach differentiates MAFO from current techniques, establishing a basis for effective and dependable offloading in dynamic mobile settings.

Mobility aware and fault tolerant offloading scheme (MAFO)

As previously noted, existing work on mobility-aware offloading suffers from inadequate fault tolerance, unsuitable mobility models, and uninformed offloading of parts of the application. This section presents a fault-tolerant, mobility-aware offloading scheme that addresses these issues by gathering network data and tracking user mobility over time. From this data, the Markov chain and the profile of the networks the user has visited are generated, and the duration of the stay in each network is predicted. The scheme then determines which components are suitable for offloading, considering the probability of traversing each path anticipated by the Markov chain and accounting for possible disruptions while offloading components, executing them on the cloud, and returning output to the mobile device. Because this decision problem is hard, a genetic algorithm (GA) is used to select the components to offload to the cloud.

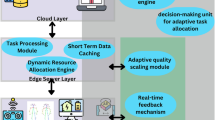

Fig. 1 Proposed offloading scheme.

An overview of the proposed scheme is presented in Fig. 1. As shown in the figure, the structure consists of five units: the monitoring unit, knowledge unit, application modeling unit, decision planning unit, and execution unit.

- Monitoring unit.

This unit gathers the data required to make the offloading decision. In particular, the data cover the user’s movements, the mobile device, the application, the cloud servers, and the mobile networks. User information includes the user’s movements within each network and the time and duration of each visit. Mobile device information includes the device’s CPU speed, its energy consumption rate per unit of time, and its energy consumption rate while sending and receiving data. Cloud service provider information includes the provider’s processor speed and the amount of time customers wait in the queue, and network information includes the bandwidth for sending and receiving data43. Application information is covered in Sect. 3–3.

- Knowledge unit.

This unit processes the monitoring unit’s data and extracts knowledge from it for use in the offloading decision algorithm. In particular, this knowledge consists of the Markov chain of the user’s movement, the user’s dwell patterns in the networks visited, and the average bandwidth of those networks. Based on the user’s experience, the Markov chain models the user’s potential paths as sequences of networks encountered along each path44. The user’s future movements are predicted using this chain. For each network on the Markov chain, the length of time the user spent there and its bandwidth are extracted from the user’s prior experience and profiled on the chain. The average bandwidth of each network is computed from the bandwidth the user experienced during previous visits to that network. Figure 2 shows a sample Markov chain derived by the knowledge unit, together with the user’s dwell time in each network and the average bandwidth for sending and receiving data.

Fig. 2 Markov chain sample profiled by knowledge unit.
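The profiling step described for the knowledge unit can be sketched as follows. This is a minimal illustration, not the authors' implementation: the function name `build_mobility_profile`, the record layout `(network_id, dwell_seconds, bandwidth_mbps)`, and the sample visit log are all assumptions introduced here. Transition probabilities are estimated as simple relative frequencies of consecutive visits.

```python
from collections import defaultdict

def build_mobility_profile(visits):
    """Estimate a first-order Markov chain and per-network averages.

    visits: chronological list of (network_id, dwell_seconds, bandwidth_mbps).
    The record layout is hypothetical; the paper does not specify one.
    """
    counts = defaultdict(lambda: defaultdict(int))
    dwell = defaultdict(list)
    bandwidth = defaultdict(list)
    for i, (net, seconds, mbps) in enumerate(visits):
        dwell[net].append(seconds)
        bandwidth[net].append(mbps)
        if i + 1 < len(visits):
            counts[net][visits[i + 1][0]] += 1  # count the observed handover

    # Normalise handover counts into transition probabilities.
    transitions = {}
    for src, row in counts.items():
        total = sum(row.values())
        transitions[src] = {dst: n / total for dst, n in row.items()}

    # Profile each network with its average dwell time and bandwidth.
    profile = {
        net: {
            "avg_dwell": sum(dwell[net]) / len(dwell[net]),
            "avg_bandwidth": sum(bandwidth[net]) / len(bandwidth[net]),
        }
        for net in dwell
    }
    return transitions, profile

# Hypothetical visit history for one user.
visits = [
    ("wifi_home", 600, 40.0), ("lte", 120, 10.0), ("wifi_office", 900, 50.0),
    ("lte", 180, 14.0), ("wifi_home", 300, 44.0), ("lte", 60, 12.0),
    ("wifi_office", 600, 30.0),
]
transitions, profile = build_mobility_profile(visits)
```

A relative-frequency estimate is the simplest choice; a deployment with sparse history would likely need smoothing so that unseen handovers do not receive zero probability.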

- Application modeling unit.

Because offloading the entire application might not be the best option, the application is divided into parts that can run on either the mobile device or the cloud. The application can be decomposed into smaller parts statically or dynamically. Static partitioning is predetermined and carried out when the application is implemented, whereas dynamic partitioning divides the application based on run-time conditions. Based on the predetermined level of granularity, the application modeling unit models the relationship graph of the application components.

Application granularity can be specified at several levels, including function, object, service, and component class. The proposed approach considers granularity at the component level, which generalizes to any of these levels. Every vertex in the application graph therefore represents an application component, and an edge connecting two vertices represents a call between two neighbouring components45. Each edge in the extracted relationship graph is weighted by the size of the data exchanged in the call between the two neighbouring components, and each vertex is weighted by the number of instructions of the corresponding component. Thus, the application data needed for the offloading decision consist of the relationship graph between the program’s components, the number of instructions each component needs to execute, the amount of data that must be sent for each component if it is offloaded, and the amount of output data returned from the cloud after the component has executed. Figure 3 shows a sample application and its relationship graph at function-level granularity.

Fig. 3 An example of an application program and a graph of the relationship between components.
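One way to hold the weighted relationship graph in memory is sketched below. The class name `AppGraph`, its methods, and the sample components are hypothetical; the sketch only mirrors the description above, with instruction counts as vertex weights and exchanged data sizes as edge weights.

```python
from dataclasses import dataclass, field

@dataclass
class AppGraph:
    """Relationship graph of application components (illustrative only)."""
    instructions: dict = field(default_factory=dict)  # component -> instruction count
    calls: dict = field(default_factory=dict)         # (caller, callee) -> bytes exchanged

    def add_component(self, name, instruction_count):
        self.instructions[name] = instruction_count

    def add_call(self, caller, callee, data_bytes):
        if caller not in self.instructions or callee not in self.instructions:
            raise KeyError("both components must be added before linking them")
        self.calls[(caller, callee)] = data_bytes

    def neighbours(self, name):
        """Components that call, or are called by, the given component."""
        callees = {v for u, v in self.calls if u == name}
        callers = {u for u, v in self.calls if v == name}
        return sorted(callees | callers)

# Hypothetical four-component image pipeline.
graph = AppGraph()
for name, count in [("capture", 5_000_000), ("decode", 40_000_000),
                    ("detect", 900_000_000), ("render", 20_000_000)]:
    graph.add_component(name, count)
graph.add_call("capture", "decode", 2_000_000)
graph.add_call("decode", "detect", 8_000_000)
graph.add_call("detect", "render", 50_000)
```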

- Decision planning unit.

To optimize the time and energy consumption of the application as a whole, this unit decides whether each application component executes locally or on the cloud. To do so, it uses a genetic algorithm to choose the execution location of the components, based on the information extracted by the knowledge unit and the relationship graph of the application46. The time and energy needed to run the application are computed from the profiled Markov chain of user movement and the probability of failure during offloading. Because the Markov chain is extracted from the user’s prior experience, that is, the data gathered along the paths traversed, it does not depend on the kind of application. Additionally, extracting the Markov chain adds no delay to the application’s execution, because the extraction is completed before the decision is made and the application is run. The output of the decision planning unit is a vector \(\:[{a}_{1},{a}_{2},\dots\:,{a}_{n}]\), where n is the number of components and \(\:{a}_{i}\) is the execution location of the i-th component: zero for local execution and one for execution on the cloud. The operation of the decision planning unit is explained further in Sect. 4.
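To make the 0/1 decision vector concrete, the sketch below computes how much data has to cross the device-cloud boundary for a given placement. The helper `boundary_traffic`, the component names, and the byte counts are assumptions made for illustration; the paper's actual cost model also includes mobility and fault terms that are not shown here.

```python
def boundary_traffic(calls, placement):
    """Sum the data exchanged by calls whose endpoints run in different places.

    calls: {(caller, callee): bytes}; placement: {component: 0 or 1},
    where 0 means local execution and 1 means cloud execution.
    """
    return sum(size for (u, v), size in calls.items() if placement[u] != placement[v])

components = ["capture", "decode", "detect", "render"]
decision = [0, 1, 1, 0]  # a1..an as produced by the decision planning unit
placement = dict(zip(components, decision))

calls = {
    ("capture", "decode"): 2_000_000,
    ("decode", "detect"): 8_000_000,
    ("detect", "render"): 50_000,
}
crossing_bytes = boundary_traffic(calls, placement)
```

In this example the large `decode` to `detect` exchange stays inside the cloud, so only the two smaller calls generate network traffic.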

- Execution unit.

This unit executes each component of the application locally or on the cloud according to the decision made by the decision planning unit.

System model

The MEC environment used in this work is depicted in Fig. 3 and consists of T cloud computing servers installed in each base station. Every mobile user connects with a single MEC server and can connect with only one base station at a time. MEC servers are linked to the cloud via backhaul networks and to each other via X2 or Xn links47,48. The cloud server serves as a content source server. The set of users is represented as \(\:U=\{{u}_{1},{u}_{2},{u}_{3},\dots\:,{u}_{j}\}\), and the set of base stations is represented as \(\:BS=\{{bs}_{1},{bs}_{2},{bs}_{3},\dots\:,{bs}_{i}\}\). It is assumed that user locations are unchanged during the specified time interval, content requests are mutually independent, and each user may request only one content item in a given time period.

Mobility model

We assume that a mobile user’s movement follows an arbitrary pattern, changing in both direction and angle over time49. The trajectory of the user’s movement is therefore not limited to a certain pattern or path and can change dynamically in response to different stimuli. As a result, the user’s path is represented by the following latitude and longitude:

\[\:{L}_{ub}^{t}=\left({lat}_{ub}\left(t\right),\:{lon}_{ub}\left(t\right)\right)\]

where \(\:{L}_{ub}^{t}\) indicates the movement trajectory of the b-th user at time t, and \(\:{lat}_{ub}\left(t\right)\) and \(\:{lon}_{ub}\left(t\right)\) represent the latitude and longitude points, respectively, of the b-th user’s mobile trajectory at time t.

Task scheduling

Clustering-based task scheduling is a promising and effective solution when multiple resources (local device, WANET, cloudlet DC, public cloud) are involved50. HEFT is a useful heuristic for mapping program tasks onto heterogeneous resources, since the tasks and resources in the problem under consideration are fine-grained and heterogeneous51. In the proposed approach, HEFT is adapted into the MHEFT heuristic, because mobile cloud scheduling involves local mobile device, wireless network, and cloud computing tasks, whereas traditional HEFT schedules tasks only on cloud resources. The execution times of a cluster of tasks, both local and remote, are computed using Eqs. (2) and (3), respectively52. With the task priority defined as in the HEFT algorithm, the average computing time of task vi, a remote cluster task, is:

Similarly, the average computing time of a task in the local scheduler is:

The job precedence requirements are expressed by the priority:

Equations (29) and (30) demonstrate how all tasks’ priority levels are recursively calculated starting at the task graph’s beginning and continuing until tasks are completed53.
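For readers unfamiliar with HEFT, the recursive priority can be sketched as the standard upward rank: a task's rank is its average computation cost plus the largest value of (average communication cost + rank) over its successors. This is the textbook HEFT definition, not the MHEFT equations of this paper, which are not reproduced here. In the textbook form the recursion is anchored at the exit task. The task names and costs below are hypothetical.

```python
from functools import lru_cache

def upward_ranks(avg_cost, avg_comm, successors):
    """Standard HEFT upward rank for every task in a DAG.

    avg_cost: {task: average computation cost over the available resources}
    avg_comm: {(task, successor): average communication cost}
    successors: {task: list of successor tasks}
    """
    @lru_cache(maxsize=None)
    def rank(task):
        tail = max(
            (avg_comm[(task, nxt)] + rank(nxt) for nxt in successors.get(task, ())),
            default=0.0,  # exit tasks have no successors
        )
        return avg_cost[task] + tail

    return {task: rank(task) for task in avg_cost}

# Hypothetical four-task graph: v1 -> {v2, v3} -> v4.
avg_cost = {"v1": 4.0, "v2": 3.0, "v3": 5.0, "v4": 2.0}
avg_comm = {("v1", "v2"): 1.0, ("v1", "v3"): 2.0, ("v2", "v4"): 1.5, ("v3", "v4"): 0.5}
successors = {"v1": ["v2", "v3"], "v2": ["v4"], "v3": ["v4"]}

ranks = upward_ranks(avg_cost, avg_comm, successors)
schedule_order = sorted(ranks, key=ranks.get, reverse=True)  # highest rank first
```

Sorting by decreasing rank always places a task before its successors, which is why HEFT can schedule tasks in that order without violating precedence.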

Fault model

Fault awareness is a crucial part of the mobile cloud, which is deeply integrated between the client and the server. The failure probability of mobile computing servers is a complex problem that depends on many factors, including hardware quality, running time, workload status, and ambient conditions54. This work assumes that mobile computing servers experience independent failure events that follow a Poisson process. Consequently, the failure probability, denoted \(\:{PF}_{k}\left(x\right)\), of a mobile computing server attached to base station \(\:{bs}_{k}\) within a single time slot is described as follows:

where \(\:\lambda\:\) is the failure rate (average rate of failure events), X is the number of failure occurrences, e is the base of the natural logarithm, \(\:{f}_{k}^{x}\:\)is the number of failures at base station \(\:{bs}_{k}\), and \(\:{T}_{k}\) is the time at which \(\:{bs}_{k}\) failure occurs55.
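The Poisson assumption can be illustrated numerically. The sketch below uses the textbook Poisson probability mass function with mean `rate * duration`; it is not a reconstruction of the paper's exact expression for \(\:{PF}_{k}\left(x\right)\), and the function names and the example rate and slot length are assumptions.

```python
import math

def poisson_failure_probability(rate, duration, failures):
    """P(exactly `failures` events in `duration`) for a Poisson process."""
    mean = rate * duration
    return math.exp(-mean) * mean ** failures / math.factorial(failures)

def probability_of_any_failure(rate, duration):
    """P(at least one failure in `duration`), i.e. 1 - P(no failure)."""
    return 1.0 - math.exp(-rate * duration)

# Hypothetical server: 0.02 failures per second, observed over a 10 s time slot.
rate, slot = 0.02, 10.0
p_none = poisson_failure_probability(rate, slot, 0)
p_any = probability_of_any_failure(rate, slot)
```

With these example values the expected number of failures per slot is 0.2, so most slots complete without a failure.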

Queuing theory can be used to evaluate the failure probability of processing task \(\:{TF}_{k}\left(X\right)\:\)on mobile computing server \(\:{bs}_{k}\) when a user requests resources:

where CN indicates the server’s capacity and ρ stands for the system’s utilization rate (the ratio of task arrival rate to task processing rate). A base station stops accepting jobs from users when it malfunctions. Task execution is delayed, though, if a fault arises following a user request and makes it impossible to complete the task that was received:

where \(\:{d}_{{bs}_{k},{u}_{b}}\)indicates the communication distance between the base station and the b-th user, and \(\:{Cap}_{k}\) indicates the processing capacity of the k-th base station.

Problem formulation

The goal of the content caching problem is to minimize content delivery delay subject to additional constraints. Our objective is to deliver the required content efficiently and with the lowest possible latency while respecting each MEC server’s time and capacity constraints56. The problem is formally stated as:

Constraint C1 states that the content requested by the user cannot be larger than the edge server’s capacity. Constraint C2 enforces the integrality and non-negativity of the decision variable. Constraint C3 bounds the maximum distance of the user-requested content. Constraint C4 requires that the time it takes for a user to successfully transmit a request to the base station be less than the delay caused by a base station failure that prevents the processing of that user’s request.

Mitigating resource demands of genetic algorithms and Markov chains

The computational complexity of the GA and of Markov chains presents difficulties for resource-limited mobile devices in the proposed MAFO system. The MAFO framework therefore employs several measures to reduce computational and energy overhead while maintaining the advantages of efficient task offloading. First, computationally demanding operations, such as GA optimization and Markov chain construction, are delegated to the cloud when reliable network access is available, alleviating the processing load on the mobile device. Second, the GA is configured with a small population size (e.g., 30 chromosomes) and a limited number of iterations (e.g., 100), as established in “First category of evaluations: Selection of genetic algorithm parameters”, to balance optimization precision against computing efficiency. The computational cost of the GA can be estimated as \(\:{C}_{GA}=P\cdot\:I\cdot\:{C}_{iter}\), where \(\:P\) represents the population size, \(\:I\) denotes the number of iterations, and \(\:{C}_{iter}\) signifies the execution time per iteration (generally 0.1–0.5 MIPS). Reducing \(\:P\) and \(\:I\) markedly diminishes the energy consumption, calculated as \(\:{E}_{GA}={C}_{GA}\cdot\:{\epsilon}_{cpu}\), where \(\:{\epsilon}_{cpu}=0.4\) mW/MIPS.
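As a rough worked example using only the figures quoted in this paragraph (P = 30, I = 100, a per-iteration cost between 0.1 and 0.5, and an energy coefficient of 0.4), and keeping the units as the text states them, the estimated cost and energy fall in the following range:

\[\:{C}_{GA}^{min}=30\cdot\:100\cdot\:0.1=300,\qquad\:{E}_{GA}^{min}=300\cdot\:0.4=120\]

\[\:{C}_{GA}^{max}=30\cdot\:100\cdot\:0.5=1500,\qquad\:{E}_{GA}^{max}=1500\cdot\:0.4=600\]

Because both expressions are linear in \(\:P\) and \(\:I\), halving either parameter halves the estimated cost and energy.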

To further reduce resource demands, the Markov chain is updated periodically from historical mobility data rather than in real time, which decreases the calculation frequency. The computational cost of updating the Markov chain is estimated as \(\:{C}_{MC}={N}_{s}\cdot\:{C}_{state}\), where \(\:{N}_{s}\) represents the number of network states and \(\:{C}_{state}\) the execution time per state transition (generally 0.05–0.2 MIPS). Moreover, frequently used offloading decisions are cached on the mobile device to avoid repeated GA calculations when network conditions and mobility patterns are stable. These measures keep the MAFO scheme viable for mobile devices, which mainly handle lightweight tasks such as data collection and decision caching.
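The decision caching idea might look like the sketch below. Everything in it is an assumption made for illustration: the class name `DecisionCache`, the choice of cache key (application, network, and a coarse bandwidth bucket), and the 5 Mbps bucket width. The paper does not specify how cached decisions are keyed or invalidated.

```python
class DecisionCache:
    """Reuse offloading decisions while the network context stays similar."""

    def __init__(self, bandwidth_step_mbps=5.0):
        self.bandwidth_step_mbps = bandwidth_step_mbps
        self._decisions = {}

    def _key(self, app_id, network_id, bandwidth_mbps):
        # Bucket the bandwidth so small fluctuations map to the same entry.
        bucket = int(bandwidth_mbps // self.bandwidth_step_mbps)
        return (app_id, network_id, bucket)

    def get_or_compute(self, app_id, network_id, bandwidth_mbps, optimizer):
        key = self._key(app_id, network_id, bandwidth_mbps)
        if key not in self._decisions:
            self._decisions[key] = optimizer()  # run the expensive GA only on a miss
        return self._decisions[key]

# Stand-in for the GA; it records how often it is actually invoked.
optimizer_runs = []
def fake_optimizer():
    optimizer_runs.append(1)
    return [0, 1, 1, 0]

cache = DecisionCache()
first = cache.get_or_compute("camera_app", "wifi_office", 12.0, fake_optimizer)
second = cache.get_or_compute("camera_app", "wifi_office", 13.5, fake_optimizer)  # same bucket
third = cache.get_or_compute("camera_app", "wifi_office", 26.0, fake_optimizer)   # new bucket
```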

Proposed method

This section explains the algorithm used in the decision planning unit to solve the offloading problem while taking fault tolerance and mobility into account. Because the time and energy required to run a component g on the cloud depend on the conditions of the network covering the device, the calculation of the component’s time and energy consumption accounts for the route of the mobile device and the possibility of an interruption along that route. The time and energy required to execute component g on the cloud are computed over all paths represented on the Markov chain, weighted by the probability that the mobile device travels along each path. As a result, the time and energy of a component running on the cloud are determined using the Markov chain. The offloading decision is then made so as to minimize the time and energy used to execute the application’s components. Owing to the complexity of the problem, the proposed approach uses a genetic algorithm in which each chromosome holds the set of application components, each mapped to a gene and labeled according to where it is executed (on the mobile device or on the cloud). The solution to the offloading problem is the chromosome that yields the best execution time or energy consumption for the application. To find it, an initial population of chromosomes is first selected at random. Next, the time and energy required by each chromosome are determined using a fitness function based on the overall time and energy used to execute all program components. Based on the fitness of the chromosomes in the current population, the best pairs of chromosomes are selected and, through crossover and mutation, produce the chromosomes of the next generation.
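The path-weighted calculation can be sketched as an expectation over the Markov chain paths. The sketch assumes a deliberately simple failure model in which a failed attempt is repeated once at full cost; that model, the function name `expected_cloud_time`, the dictionary keys, and all numbers are assumptions for illustration and are not the paper's equations.

```python
def expected_cloud_time(paths, instructions_millions, cloud_mips, upload_mb, download_mb):
    """Expected time (seconds) to run one component on the cloud.

    paths: list of dicts with the path probability, the up/down bandwidth in
    Mbps, and the probability that an attempt fails on that path.
    """
    compute = instructions_millions / cloud_mips  # seconds of cloud execution
    expected = 0.0
    for path in paths:
        transfer = upload_mb * 8 / path["up_mbps"] + download_mb * 8 / path["down_mbps"]
        attempt = transfer + compute
        # Assumed failure model: a failed attempt is repeated once in full.
        expected += path["probability"] * attempt * (1 + path["failure_probability"])
    return expected

# Two hypothetical paths predicted by the mobility model.
paths = [
    {"probability": 0.75, "up_mbps": 8.0, "down_mbps": 16.0, "failure_probability": 0.0},
    {"probability": 0.25, "up_mbps": 4.0, "down_mbps": 8.0, "failure_probability": 0.5},
]
t_cloud = expected_cloud_time(paths, instructions_millions=2000, cloud_mips=1000,
                              upload_mb=1.0, download_mb=2.0)
```

Comparing `t_cloud` with the component's local execution time gives the per-component signal that the genetic algorithm then balances across the whole application.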

A child is produced by combining two parents during the crossover phase. During the mutation phase, individual genes may mutate with a probability determined by the ratio of the gene's fitness to that of the entire chromosome. This process is repeated until the number of iterations reaches a predefined threshold or until subsequent iterations show no improvement over the preceding ones. The algorithm's output is the best solution found. The symbols used in modeling the offloading problem and the pseudocode of the suggested algorithm are given in Table 1 and Algorithm 1, respectively.
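The search loop described above can be sketched in Python as follows. This is a minimal illustration under stated assumptions, not a reproduction of Algorithm 1: the binary encoding (1 = local, 0 = cloud), the seeding of the population with the all-local and all-cloud plans, elitism, single-point crossover, and the `patience` stopping rule are all choices made here for the example, and `gene_cost` is a hypothetical callback returning the time or energy of one component under a given placement.

```python
import random


def plan_cost(plan, gene_cost):
    """Chromosome fitness: sum of the per-gene costs (lower is better)."""
    return sum(gene_cost(g, x) for g, x in enumerate(plan))


def run_ga(gene_cost, n_genes, pop_size=30, max_iters=100, patience=20, seed=0):
    """Search for a low-cost offloading plan; gene value 1 = local, 0 = cloud."""
    rng = random.Random(seed)

    def fitness(plan):
        return plan_cost(plan, gene_cost)

    # Assumption: seed the population with the all-local and all-cloud plans.
    population = [[1] * n_genes, [0] * n_genes]
    while len(population) < pop_size:
        population.append([rng.randint(0, 1) for _ in range(n_genes)])

    best, stale = min(population, key=fitness), 0
    for _ in range(max_iters):
        ranked = sorted(population, key=fitness)
        parents = ranked[: max(2, pop_size // 2)]
        children = [ranked[0][:]]  # elitism: keep the best plan unchanged
        while len(children) < pop_size:
            a, b = rng.sample(parents, 2)
            cut = rng.randrange(1, n_genes) if n_genes > 1 else 0
            child = a[:cut] + b[cut:]  # single-point crossover
            total = fitness(child)
            for g in range(n_genes):
                # Mutation probability: the gene's share of the chromosome cost.
                if total > 0 and rng.random() < gene_cost(g, child[g]) / total:
                    child[g] = 1 - child[g]
            children.append(child)
        population = children
        candidate = min(population, key=fitness)
        if fitness(candidate) < fitness(best):
            best, stale = candidate, 0
        else:
            stale += 1
            if stale >= patience:  # stop when iterations bring no improvement
                break
    return best
```

Seeding with the two trivial plans guarantees that the returned plan is never worse than running everything locally or offloading everything, which makes the result easy to sanity-check.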

Modeling the offloading problem.

The time complexity of Algorithm 1, which is central to the MAFO scheme, stems primarily from two elements: the genetic algorithm (GA) and the Markov chain-based mobility modeling. The GA operates on a population of size P over G generations, assessing the fitness of each chromosome according to execution time and energy consumption. This fitness assessment entails evaluating offloading decisions for N application components across M network states, yielding a complexity of \(\text{O}\left(\text{N}\cdot\text{M}\right)\) per chromosome. Consequently, the total complexity of the GA component is \(O\left(P\cdot G\cdot N\cdot M\right)\).

Furthermore, the construction and updating of the Markov chain for M states entails a complexity of \(\:\text{O}\left({\text{M}}^{2}\right)\) owing to the calculation of state transition probabilities. The overall time complexity of Algorithm 1, when both components are combined, is \(\:\text{O}\left({P\: \cdot G\: \cdot N\: \cdot M\:+\:}{\text{M}}^{\text{2}}\right)\).
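As a rough worked example of this bound, take the population size and iteration count selected in the evaluation (P = 30, G = 100) together with two values assumed purely for illustration, N = 20 components and M = 5 network states:

\[
P \cdot G \cdot N \cdot M + M^{2} = 30 \cdot 100 \cdot 20 \cdot 5 + 5^{2} = 300{,}000 + 25 = 300{,}025
\]

Under these assumed values the genetic algorithm term dominates and the Markov chain term is negligible.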

The suggested fitness function is described in detail below, since it is crucial in determining the best offloading decision while taking the device's movement and fault tolerance into account. As previously stated, the fitness function determines both the likelihood of a gene mutating and which pairs of chromosomes are the best candidates for the following generation. It determines the fitness of a gene or chromosome by calculating its energy consumption or execution time while accounting for fault tolerance and mobility. The suggested technique calculates a chromosome's fitness from the fitness of each gene that makes up the chromosome, as given in (9),

where \({F}_{g}\) is the fitness of gene g, obtained from (10) or (11) depending on the offloading decision criterion.

Here \({x}_{g}\) is the offloading decision variable: its value is one if gene g is executed locally and zero otherwise. The time and energy used when gene g is executed locally are denoted by \({t}_{g}^{local}\) and \({e}_{g}^{local}\), respectively, and those for cloud execution by \({t}_{g}^{remote}\) and \({e}_{g}^{remote}\), respectively. The values of \({t}_{g}^{local}\) and \({e}_{g}^{local}\) are determined by the number of application instructions in that component \(({Wl}_{g})\), the mobile device's CPU speed \(({C}_{m})\), and its energy consumption rate per unit time \(({P}_{m})\), as given in (12) and (13), respectively.
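The relationships among these quantities can be illustrated with a short Python sketch. The exact forms of (9) to (13) are not reproduced in this text, so the formulas below are assumptions consistent with the definitions given: local time is taken as workload divided by CPU speed, local energy as that time multiplied by the power rate, gene fitness as the local or remote cost selected by the decision variable (assumed 1 = local, 0 = cloud), and chromosome fitness as the sum over genes. All function names are hypothetical.

```python
def local_time(workload, cpu_speed):
    """Assumed form: instructions divided by the device CPU speed."""
    return workload / cpu_speed


def local_energy(workload, cpu_speed, power_rate):
    """Assumed form: local execution time times energy rate per time unit."""
    return local_time(workload, cpu_speed) * power_rate


def gene_fitness(x_g, local_value, remote_value):
    """Cost of one component; x_g = 1 selects local, x_g = 0 selects cloud."""
    return x_g * local_value + (1 - x_g) * remote_value


def chromosome_fitness(plan, local_values, remote_values):
    """Assumed form: sum of the gene fitness values of one offloading plan."""
    return sum(
        gene_fitness(x, loc, rem)
        for x, loc, rem in zip(plan, local_values, remote_values)
    )
```

The same functions serve for either criterion: passing times yields a time-based fitness, and passing energies yields an energy-based one.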

The time and energy required to run component g on the cloud depend on the state of the networks covered, so their computation factors in both the device's changing route and the potential for interruption along it. Since the device may follow any of the paths represented in the Markov chain, the time and energy required to execute component g on the cloud are computed from the probability of the device travelling along each of those paths, as follows.
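A probability-weighted cost of this kind can be sketched in Python as an expectation over candidate paths. The sketch assumes that a path's probability is the product of the Markov chain transition probabilities along it and that the per-path cost is supplied by the caller; the names `path_probability`, `expected_remote_cost`, and the example networks and numbers are hypothetical.

```python
def path_probability(path, transitions):
    """Probability of following `path`, as a product of transition probabilities."""
    prob = 1.0
    for here, nxt in zip(path, path[1:]):
        prob *= transitions.get(here, {}).get(nxt, 0.0)
    return prob


def expected_remote_cost(paths, transitions, cost_on_path):
    """Weight the remote time (or energy) on each path by the path probability."""
    return sum(path_probability(p, transitions) * cost_on_path(p) for p in paths)


# Hypothetical chain: from network A the device moves to B or C.
transitions = {"A": {"B": 0.7, "C": 0.3}}
paths = [("A", "B"), ("A", "C")]
remote_time_on = {("A", "B"): 4.0, ("A", "C"): 10.0}
expected = expected_remote_cost(paths, transitions, remote_time_on.get)
```

In this example the fast but likely path and the slow but unlikely path both contribute, so the expected remote time lies between the two per-path values.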

Here \(P\left({path}_{i}\right)\) denotes the probability of selecting \({path}_{i}\), and \({t}_{g,i}^{remote}\) and \({e}_{g,i}^{remote}\) denote the time and energy required to execute component g on the cloud if \({path}_{i}\) is chosen. Because the mobile device's network connection may be disrupted as it moves from one network to another along \({path}_{i}\), this possibility must be taken into account when calculating \({t}_{g,i}^{remote}\) and \({e}_{g,i}^{remote}\). To this end, the execution time and energy consumption of offloading component g are calculated according to the phase the offloading process is in when the outage occurs: sending input data to the cloud, performing the computation there, or returning the results to the mobile device. In this article, mobile devices employ fault tolerance techniques such as checkpointing, so after an interruption a component resumes from the most recent checkpoint rather than repeating the offloading from the start. The time and energy required on \({path}_{i}\) to execute a component, accounting for fault tolerance and the progress of the offloading process at the time of the outage, are computed through (16) and (17), where \({P}_{t}\) is the mobile device's stop time in the current network, \({d}_{i}\) and \({d}_{o}\) are the amounts of input and output data, respectively, \({d}_{i}^{\prime}\) and \({d}_{o}^{\prime}\) are the amounts of data sent and received before the interruption occurs, \({Tx}_{i}^{\prime}\) and \({Tx}_{o}^{\prime}\) are the corresponding transmission bandwidths, \({R}^{*}\) is the time needed to reconnect to the network after a disconnection, \({C}_{c}\) is the server processor's speed, and \({Q}_{c}\) is the component's waiting time in the service provider's queue.

Put another way, an interruption occurs during input transmission if the device's stop time in the current network is shorter than the time needed to send the input data completely. In this case, the execution time of component g equals the sum of the times spent transmitting the input data sent before the interruption, reconnecting after the interruption, sending the remaining input data to the cloud, waiting in the cloud server's queue, processing the data there, and returning the output to the mobile device. Similarly, each subsequent line of the formula corresponds to a state in which the interruption occurs at a different point: while the computation is running on the cloud, while the mobile device is receiving the results, or after the component's offloading has finished.
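The input-transmission case can be illustrated with a simplified Python sketch. It is not a reconstruction of (16): it models only an outage during upload, assumes at most one outage, uses a single bandwidth per network for both directions, and treats the no-checkpoint variant as resending all input data. The function and parameter names are hypothetical.

```python
def remote_time(d_in, d_out, workload, tx_now, tx_next, cloud_speed,
                queue_wait, stop_time, reconnect, checkpointing=True):
    """Execution time of one offloaded component (upload-phase outage only).

    tx_now / tx_next: bandwidth in the current and the next network.
    stop_time: how long the device stays in the current network.
    """
    service = queue_wait + workload / cloud_speed
    upload = d_in / tx_now
    if stop_time >= upload:
        # Assumption: no outage is modelled once the upload has completed.
        return upload + service + d_out / tx_now
    sent = stop_time * tx_now  # input data delivered before the outage
    remaining = d_in - sent if checkpointing else d_in
    return stop_time + reconnect + remaining / tx_next + service + d_out / tx_next


with_ckpt = remote_time(100.0, 20.0, 50.0, 10.0, 5.0, 25.0, 1.0, 4.0, 2.0)
without_ckpt = remote_time(100.0, 20.0, 50.0, 10.0, 5.0, 25.0, 1.0, 4.0, 2.0,
                           checkpointing=False)
```

With these illustrative numbers, resuming from the checkpoint avoids resending the data already delivered, so the checkpointed run finishes earlier than the restarted one.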

Evaluation and simulation

We implemented the suggested approach in MATLAB and, to assess it, compared it with the most recent GACO47 and APTA50 methods, which are detailed in the prior works section. GACO was selected as the basis for the assessments mainly because of the granularity at which it treats the application and its superiority over alternative approaches in terms of time, energy, and the uncertainty that movement introduces into the offloading decision. Furthermore, among recent methods, GACO is the closest to the proposed method in deciding whether to offload based on user movement in order to optimize the execution time and energy consumption of the application.

The assessments are divided into two groups based on how the suggested method uses GAs. The first group determines the optimal values of the genetic algorithm parameters (number of iterations and population size) by examining their impact on the fitness attained by the two techniques. The second group uses the selected parameters to evaluate the performance of the suggested technique against the GACO method under different characteristics of the application, user mobility, and network conditions. For this purpose, the impact of the number of components, the volume of input data, the number of instructions in the application, the user's stop time in each network, and the bandwidth is assessed. Results are reported as the average of one thousand repetitions with a 95% confidence interval. The parameters used in the evaluations are listed in Table 2.
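Averaging repeated runs with a 95% confidence interval can be done as in the following Python sketch. The text does not state how the interval is computed, so a normal approximation (mean ± 1.96 standard errors) is assumed, which is reasonable for a thousand repetitions; the function name is hypothetical.

```python
import statistics


def mean_with_ci95(samples):
    """Return (mean, lower, upper) using a normal-approximation 95% interval."""
    mean = statistics.fmean(samples)
    standard_error = statistics.stdev(samples) / len(samples) ** 0.5
    half_width = 1.96 * standard_error
    return mean, mean - half_width, mean + half_width


# Hypothetical execution times from five repetitions of one scenario.
mean, lower, upper = mean_with_ci95([1.0, 2.0, 3.0, 4.0, 5.0])
```

For small numbers of repetitions a Student t quantile would be the more appropriate multiplier than 1.96.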

First category of evaluations: selection of genetic algorithm parameters

In the first set of assessments, the optimal values of the genetic algorithm parameters are examined and chosen in light of their impact on the efficiency of the MAFO and GACO procedures. In general, increasing the initial population and the number of genetic algorithm iterations raises the likelihood of reaching a result closer to the optimum; however, it also increases the complexity and, consequently, the decision-making time. The ideal values for these two parameters are therefore the smallest values beyond which larger values no longer improve the fitness appreciably.

Effect of Genetic Algorithm Iterations on Execution Time.

Effect of Population Size on Execution Time.

Fig. 4 displays the fitness value, derived from the application's execution time, as a function of the number of genetic algorithm iterations. Because the results for fitness in terms of energy consumption are comparable, they are not presented. To arrive at a general result, the parameters pertaining to the application, user movement, and network conditions were drawn randomly from the intervals given in Table 2.

As can be seen in Fig. 4, the application's execution time decreases as the number of iterations increases; conversely, the more iterations, the more complex the algorithm becomes. Given that more than 100 iterations did not significantly affect the fitness value, 100 is regarded as the ideal number of iterations for the GA. The impact of population size on fitness, as determined by application execution time, is depicted in Fig. 5. The findings indicate that, up to 30 chromosomes, the time-based fitness value decreases with increasing population size and does not significantly improve beyond that point. As a result, 30 is used as the initial population size in the assessments that follow. Like the number of iterations, the population size directly affects how complex the algorithm is.
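The selection rule used here, taking the smallest parameter value beyond which the fitness no longer improves appreciably, can be sketched in Python. The relative tolerance of 1% and the sample measurements are assumptions for illustration only and are not values reported in this study.

```python
def smallest_sufficient(values, fitness, tolerance=0.01):
    """Smallest parameter value whose fitness is within `tolerance` (relative)
    of the best fitness reached at that value or any larger one."""
    pairs = sorted(zip(values, fitness))
    if not pairs:
        raise ValueError("no measurements given")
    for i, (value, fit) in enumerate(pairs):
        best_later = min(f for _, f in pairs[i:])
        if fit - best_later <= tolerance * abs(best_later):
            return value


# Hypothetical execution-time fitness measured for several population sizes.
sizes = [10, 20, 30, 40, 50]
times = [9.0, 7.0, 6.02, 6.0, 6.0]
chosen = smallest_sufficient(sizes, times)
```

A tighter tolerance selects a larger value and therefore a more expensive search, which mirrors the trade-off between solution quality and decision-making time.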

Effect of Fault Tolerance on Execution Time.

Effect of Fault Tolerance on Energy Consumption.

Effect of Number of Application Components on Execution Time.

Effect of Number of Application Components on Energy Consumption.

The second category of evaluations: checking the performance of the proposed method

This group of evaluations examines how well the suggested approach performs in comparison with the GACO method under various application characteristics, user movement patterns, and network conditions.

For this reason, the following sections examine the effects of fault tolerance as a function of the probability of failure when changing networks, the number of components, the size of the input data, the number of instructions in the application, and the length of time the user stays in each network, each assessed in terms of time and energy consumption. To ensure that the findings of each assessment remain independent of the other input parameters pertaining to the application, user mobility, and network conditions, the values of those parameters are drawn randomly within the intervals specified in Table 2. Based on the findings of the preceding section, the initial population size and the number of genetic algorithm iterations are set to 30 and 100, respectively, in all assessments of the second category.

The effect of fault tolerance according to the probability of failure

This section demonstrates the impact of incorporating fault tolerance into the suggested technique by presenting its time and energy consumption in two scenarios, with and without fault tolerance, across different failure probabilities. The suggested method's execution time and energy usage with and without fault tolerance are depicted in Figs. 6 and 7, respectively. As both figures show, without fault tolerance the application's execution time rises exponentially as the failure probability grows. The reason is that, without fault tolerance, the application starts over from the beginning after an interruption, whereas with fault tolerance it continues from the point it had reached before the interruption, which reduces the time and energy required to run the application. The comparison also suggests that fault tolerance reduces the cost of decision errors when the failure probability is low. However, when a decision assumes a 100% failure probability while the failure probability is low in practice, the difference between the two variants does not reach statistical significance.

Evaluation of the impact of the number of components of the application program

Figures 8 and 9 compare the execution time and energy consumption of application execution between the MAFO and GACO methods, influenced by the number of components in the application. As the number of application components increases, both execution time and energy consumption rise accordingly. The figures demonstrate that the proposed MAFO method outperforms the GACO method across all component counts, owing to its accurate prediction of user mobility, network conditions, and disruption probabilities during offloading. This performance gap widens with larger component counts, as the accuracy of offloading decisions becomes more critical for complex applications.

Evaluating the impact of input data

Figs. 10 and 11 examine the impact of the amount of data exchanged between components, one of the important factors in the offloading decision, on the time and energy consumption of application execution. As would be expected, the cost of both approaches rises exponentially as the data size grows. As illustrated in Figs. 10 and 11, the suggested method outperforms the GACO method in terms of time and energy consumption for all data sizes because it accurately predicts movement and the conditions of the networks along the route, as well as the likelihood of disruptions during offloading. This superiority becomes more noticeable as the data size increases: data transmission times grow, so an incorrect decision imposes a greater cost.

Effect of Input Data Size on Execution Time.

Effect of Input Data Size on Energy Consumption.

Effect of Network Stop Time on Execution Time.

Effect of Network Stop Time on Energy Consumption.

Effect of Application Component Size on Execution Time.

Effect of Application Component Size on Energy Consumption.

Evaluation of the impact of downtime in networks

The impact of the stop time in the networks along the route on the application's time and energy consumption under the GACO and MAFO approaches is compared in Figs. 12 and 13. The results show that, for all stop times, the suggested method uses less time and energy than the GACO method because it properly accounts for movement and the likelihood of failure throughout the offloading process. A longer stop time results in fewer connection disruptions and less time spent recovering from interruptions, so both figures show a declining trend.

Evaluation of the impact of the size of application components

The impact of the number of instructions in application components on time and energy usage is examined in Figs. 14 and 15. The MAFO approach outperforms the GACO method quantitatively, and both methods' trends rise exponentially. Because larger components are more likely to be offloaded to the cloud, mobility awareness in decision-making becomes more clearly beneficial as component size grows. When the application is large and both methods tend to offload, their time and energy consumption are similar.

Although many parameters change during program loading and service calls, the results obtained under the user-defined timeout limit for each program confirm the earlier finding that the suggested MAFO method outperforms the baseline approaches in both stable and unstable conditions. As the results in Figs. 16, 17, and 18 show for stable environments without mobility and unstable environments with mobility, MAFO adapts effectively to dynamic changes and maintains application performance, in contrast to existing techniques. The baseline methods degraded application performance during mobility and did not make any dynamic adjustments.

Performance of 3D-game applications both with and without mobility.

Performance of healthcare applications both with and without mobility.

Performance of Business Applications With and Without Mobility.

One of the main issues in the mobile cloud environment is energy usage. The integrated application uses both the mobile thick client and the virtual machine to run different apps. Numerous earlier studies have attempted to lower the energy consumption of either mobile devices or cloud resources in mobile cloud environments while preserving performance and partitioning according to predetermined goals. This work, in contrast, attempts to lower the energy consumption of the cloud resource and the mobile device simultaneously. The energy consumption of MAFO over the execution of all programs is compared with that of APTA and GACO. Fig. 19 demonstrates that MAFO uses less energy than APTA and GACO because it handles task allocation and application partitioning at runtime, confirming that the mobile device uses Big Data.

Mobile device application energy consumption.

Resource demands versus energy savings

A trade-off study was conducted to evaluate the viability of the proposed MAFO scheme by quantifying the computational and energy overhead of GAs and Markov chains on mobile devices in relation to the energy savings achieved through optimal job offloading. Simulations in MATLAB used the approach outlined in “Evaluation and simulation” to quantify the energy overhead (10–50 mW for applications with 10–50 components), computational overhead (5–25 MIPS for GAs and 2–10 MIPS for Markov chain updates), and energy savings (200–800 mW/s relative to local execution). The net energy impact, defined as the difference between savings and overhead, remained positive (150–750 mW/s), showing the efficacy of MAFO. The cloud-assisted computing solution (“Mitigating resource demands of genetic algorithms and Markov chains”) diminished the mobile device overhead by 60–80%, rendering this approach viable for resource-constrained devices.
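The net energy impact defined above is a simple difference, which the following Python sketch makes explicit together with the effect of moving part of the overhead to the cloud. The function names are hypothetical, and pairing each savings bound with the largest reported overhead (50) is an assumption chosen for illustration.

```python
def net_energy(savings, overhead):
    """Net energy impact: savings from offloading minus algorithmic overhead."""
    return savings - overhead


def device_overhead_after_cloud_assist(overhead, reduction):
    """Device-side overhead left after the cloud absorbs a fraction of it."""
    if not 0.0 <= reduction <= 1.0:
        raise ValueError("reduction must be a fraction between 0 and 1")
    return overhead * (1.0 - reduction)


# Savings bounds from the text, each paired with the largest reported overhead.
low_net = net_energy(200, 50)
high_net = net_energy(800, 50)
remaining = device_overhead_after_cloud_assist(50, 0.6)
```

A positive result from `net_energy` means the offloading decision pays for the cost of computing it, which is the viability condition the trade-off study checks.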

Energy trade-off for varying application component sizes.

Energy trade-off for varying network stop times.

The results depicted in Figs. 20 and 21 indicate that the energy overhead grows with application size but remains a small portion (5–10%) of the savings. With short network stop times, the savings are more significant owing to precise offloading decisions. Delegating the GA and Markov chain computations to the cloud reduced the mobile device overhead to nearly nil (< 1 mW/s) and maximized the savings. The analysis also accounted for the energy of the whole system, noting that the supplementary cloud use (50–100 mW/s per decision cycle) is insignificant.

Comparison of End-to-End energy consumption

To assess the total energy consumption of the proposed MAFO scheme, its energy usage is compared against three baseline methodologies: local execution (LE), where all tasks are processed on the mobile device without offloading; the GACO method47; and the UDQF method40, which employs optimization techniques for task offloading to mobile edge computing.

MAFO vs. Baseline End-to-End Energy Consumption.

Figure 22 illustrates that MAFO consistently attains reduced energy consumption across application sizes ranging from 10 to 50 components. The aggregate energy consumption of MAFO, encompassing the overhead of GAs and Markov chains (10 to 50 mW/s) as well as cloud computing (50 to 100 mW/s), varies from 150 to 300 mW/s. Conversely, LE exhibits the largest consumption at 400–900 mW/s owing to extensive local processing, GACO varies from 300 to 600 mW/s due to less effective offloading decisions, and the UDQF technique of Long et al. consumes 250–450 mW/s, constrained by the absence of Markov chain-based mobility prediction. MAFO provides net energy savings of 200 to 600 mW/s relative to LE, 100 to 300 mW/s relative to GACO, and 50 to 150 mW/s relative to UDQF, with algorithmic overhead constituting merely 5 to 15% of the reductions.

Conclusion

This paper presents a mobility-aware approach to offloading in mobile cloud computing that incorporates fault tolerance. Awareness of the user's movement is crucial because movement can alter the network and access point conditions that the user encounters. Consequently, the best offloading decision may depend on awareness of the movement. Some of the issues with current approaches in this field are that they do not use an appropriate mobility model, do not take fault tolerance into account, offload only a portion of the application, and do not consider the possibility of offloading specific components depending on the level of granularity. To overcome these difficulties, the suggested method uses a Markov chain built from the user's movement history to model the movement. The probability of travelling along each of the anticipated paths in the Markov chain, as well as the likelihood of an interruption along the path, are then taken into account when calculating the time and energy required for execution using a fitness function.

Fault tolerance has been implemented to handle the likelihood of failure; by resuming execution from the point of failure, it reduces the detrimental impact of movement on the offloading process. According to a comparison with other well-known mobility-aware methods in this field over a number of parameters, including the size and number of application components, the size of the input data, and the network stop time, the suggested method can save up to 75% of the time and 65% of the energy required to run the application.

This document exemplifies the practical implementation of the proposed MAFO scheme through a case study of a mobile augmented reality (AR) application utilized for real-time navigation within a smart city. In this situation, a user’s smartphone delegates resource-intensive operations, such as 3D rendering and path optimization, to an adjacent cloud server. MAFO utilizes a Markov chain-based mobility model to forecast user movement inside metropolitan networks, identifying appropriate offloading spots to save execution time and energy expenditure. For example, when the user switches between Wi-Fi and 5G networks, MAFO’s fault-tolerant algorithm guarantees task continuity by checkpointing essential AR components, thereby averting data loss during network interruptions. This yields a rendering speed increase of up to 77.35% and a reduction in energy consumption by 67.14% compared to GACO, hence improving the user experience in dynamic mobile contexts.

In the future, we plan to investigate whether making decisions more frequently is beneficial when there is a large discrepancy between the actual network conditions and the forecasts that were generated. This should be done with both the overhead of the decision-making process and the benefit of repeating it in mind. Furthermore, if a decision is made again, the components that were already running on the mobile device or the cloud will inevitably affect how close to optimal the new choice is, which needs to be considered when calculating the offloading solution.

Data availability

The datasets used and/or analyzed during the current study are available from the corresponding author on reasonable request.

References

Kumari, P. & Kaur, P. A survey of fault tolerance in cloud computing. J. King Saud University-Computer Inform. Sci. 33 (10), 1159–1176 (2021).

Jin, X., Hua, W., Wang, Z. & Chen, Y. A survey of research on computation offloading in mobile cloud computing. Wireless Netw. 28 (4), 1563–1585 (2022).

Sadatdiynov, K. et al. A review of optimization methods for computation offloading in edge computing networks. Digit. Commun. Networks. 9 (2), 450–461 (2023).

Ghorbian, M. & Ghobaei-Arani, M. A survey on the cold start latency approaches in serverless computing: an optimization-based perspective. Computing 106 (11), 3755–3809 (2024).

Li, X. et al. Energy-efficient computation offloading in vehicular edge cloud computing. IEEE Access. 8, 37632–37644 (2020).

Jazayeri, F., Shahidinejad, A. & Ghobaei-Arani, M. A latency-aware and energy-efficient computation offloading in mobile fog computing: a hidden Markov model-based approach. J. Supercomputing. 77 (5), 4887–4916 (2021).

Katal, A., Dahiya, S. & Choudhury, T. Energy efficiency in cloud computing data centers: a survey on software technologies. Cluster Comput. 26 (3), 1845–1875 (2023).

Yang, L., Cao, J., Wang, Z. & Wu, W. Network aware mobile edge computation partitioning in multi-user environments. IEEE Trans. Serv. Comput. 14 (5), 1478–1491 (2018).

Ebrahimi, A., Ghobaei-Arani, M. & Saboohi, H. Cold start latency mitigation mechanisms in serverless computing: Taxonomy, review, and future directions. J. Syst. Architect. 103115 (2024).

Khezri, E. et al. DLJSF: data-locality aware job scheduling IoT tasks in fog-cloud computing environments. Results Eng. 21, 101780 (2024).

Ghorbian, M., Ghobaei-Arani, M. & Esmaeili, L. A survey on the scheduling mechanisms in serverless computing: a taxonomy, challenges, and trends. Cluster Comput. 27 (5), 5571–5610 (2024).

Wang, Z., Jin, Z., Yang, Z., Zhao, W. & Trik, M. Increasing efficiency for routing in internet of things using binary Gray Wolf optimization and fuzzy logic. J. King Saud University-Computer Inform. Sci. 35 (9), 101732 (2023).

Sun, G., Wang, Y., Yu, H. & Guizani, M. Proportional Fairness-Aware task scheduling in Space-Air-Ground integrated networks. IEEE Trans. Serv. Comput. 17 (6), 4125–4137. https://doi.org/10.1109/TSC.2024.3478730 (2024).

Zhang, L., Hu, S., Trik, M., Liang, S. & Li, D. M2M communication performance for a noisy channel based on latency-aware source-based LTE network measurements. Alexandria Eng. J. 99, 47–63 (2024).

Zhan, W. et al. Mobility-aware multi-user offloading optimization for mobile edge computing. IEEE Trans. Veh. Technol. 69 (3), 3341–3356 (2020).

Zhang, C. et al. Importance measures based on system performance loss for multi-state phased-mission systems. Reliab. Eng. Syst. Saf. 256, 110776. https://doi.org/10.1016/j.ress.2024.110776 (2025).

Sun, H., Yu, H., Fan, G. & Chen, L. QoS-aware task placement with fault-tolerance in the edge-cloud. IEEE Access. 8, 77987–78003 (2020).

Gong, Y., Yao, H. & Nallanathan, A. Intelligent sensing, communication, computation and caching for satellite-ground integrated networks. IEEE Netw. 38 (4), 9–16. https://doi.org/10.1109/MNET.2024.3413543 (2024).

Doost, P. A., Moghadam, S. S., Khezri, E., Basem, A. & Trik, M. A new intrusion detection method using ensemble classification and feature selection. Sci. Rep. 15 (1), 13642 (2025).

Ragmani, A. et al. Adaptive fault-tolerant model for improving cloud computing performance using artificial neural network. Procedia Comput. Sci. 170, 929–934 (2020).

Zhang, B. et al. Integrated heterogeneous graph and reinforcement learning enabled efficient scheduling for surface Mount technology workshop. Inf. Sci. 708, 122023. https://doi.org/10.1016/j.ins.2025.122023 (2025).

Kumari, R., Kaushal, S. & Chilamkurti, N. Energy conscious multi-site computation offloading for mobile cloud computing. Soft. Comput. 22 (20), 6751–6764 (2018).

Abd, S. K., Al-Haddad, S. A. R., Hashim, F., Abdullah, A. B. & Yussof, S. Energy-aware fault tolerant task offloading of mobile cloud computing. In 2017 5th IEEE International Conference on Mobile Cloud Computing, Services, and Engineering (MobileCloud). 161–164. (IEEE, 2017).

Hossain, M. D. et al. Fuzzy based collaborative task offloading scheme in the densely deployed small-cell networks with multi-access edge computing. Appl. Sci. 10 (9), 3115 (2020).

Almusaylim, Z. A. & Jhanjhi, N. Z. Comprehensive review: privacy protection of user in location-aware services of mobile cloud computing. Wireless Pers. Commun. 111 (1), 541–564 (2020).

Sindhu, K. & Guruprasad, H. S. Computational offloading framework using caching and cloud service selection in mobile cloud computing. Int. J. Adv. Intell. Paradigms. 21 (3–4), 189–210 (2022).

Lakhan, A. & Li, X. Mobility and fault aware adaptive task offloading in heterogeneous mobile cloud environments. EAI Endorsed Trans. Mob. Commun. Appl. 5(16) (2019).

Wei, M., Yang, S., Wu, W. & Sun, B. A multi-objective fuzzy optimization model for multi-type aircraft flight scheduling problem. Transport 39 (4), 313–322. https://doi.org/10.3846/transport.2024.20536 (2024).

Chen, R. & Wang, X. Maximization of value of service for mobile collaborative computing through situation-aware task offloading. IEEE Trans. Mob. Comput. 22 (2), 1049–1065 (2021).

Mary, J. A. & Alyosius, A. Mobility and execution time aware task offloading in mobile cloud computing. Int. J. Interact. Mob. Technol. 16 (15), 30–45 (2022).

Kashyap, V., Ahuja, R. & Kumar, A. A hybrid approach for fault-tolerance aware load balancing in fog computing. Cluster Comput. 1–17 (2024).

Ding, D. et al. Q-learning based dynamic task scheduling for energy-efficient cloud computing. Future Generation Comput. Syst. 108, 361–371 (2020).

Uma, D., Udhayakumar, S., Tamilselvan, L. & Silviya, J. Client aware scalable cloudlet to augment edge computing with mobile cloud migration service. (2020).

Tang, D. et al. A low-rate DoS attack mitigation scheme based on port and traffic state in SDN. IEEE Trans. Comput. 74 (5), 1758–1770. https://doi.org/10.1109/TC.2025.3541143 (2025).

Sun, G., Liao, D., Zhao, D., Xu, Z. & Yu, H. Live migration for multiple correlated virtual machines in Cloud-Based data centers. IEEE Trans. Serv. Comput. 11 (2), 279–291. https://doi.org/10.1109/TSC.2015.2477825 (2018).

Huang, W. et al. Safe-NORA: Safe reinforcement learning-based mobile network resource allocation for diverse user demands. In Paper Presented at the CIKM ‘23, New York, NY, USA. https://doi.org/10.1145/3583780.3615043 (2023).

Wang, E., Yang, Y., Wu, J., Liu, W. & Wang, X. An efficient Prediction-Based user recruitment for mobile crowdsensing. IEEE Trans. Mob. Comput. 17 (1), 16–28. https://doi.org/10.1109/TMC.2017.2702613 (2018).

Yin, L., Sun, J. & Wu, Z. An evolutionary computation framework for task off-and-downloading scheduling in mobile edge computing. IEEE Internet Things J. (2024).

Zhou, J. & Zhang, X. Fairness-aware task offloading and resource allocation in cooperative mobile-edge computing. IEEE Internet Things J. 9 (5), 3812–3824 (2021).

Long, T. et al. A mobility-aware and fault-tolerant service offloading method in mobile edge computing. In 2022 IEEE International Conference on Web Services (ICWS). 67–72. (IEEE, 2022).

Chen, M., Guo, S., Liu, K., Liao, X. & Xiao, B. Robust computation offloading and resource scheduling in cloudlet-based mobile cloud computing. IEEE Trans. Mob. Comput. 20 (5), 2025–2040 (2020).

Premalatha, B. & Prakasam, P. Optimal energy-efficient resource allocation and fault tolerance scheme for task offloading in IoT-FoG computing networks. Comput. Netw. 238, 110080 (2024).

Long, T. et al. Fault-tolerant mobile service offloading in mobile edge computing. IEEE Trans. Autom. Sci. Eng. (2025).

Lakhan, A., Ahmad, M., Bilal, M., Jolfaei, A. & Mehmood, R. M. Mobility aware blockchain enabled offloading and scheduling in vehicular fog cloud computing. IEEE Trans. Intell. Transp. Syst. 22 (7), 4212–4223 (2021).

Huang, H., Zhan, W., Min, G., Duan, Z. & Peng, K. Mobility-aware computation offloading with load balancing in smart city networks using MEC federation. IEEE Trans. Mob. Comput. (2024).

Ali, H. S. & Sridevi, R. Mobility and security aware real-time task scheduling in fog-cloud computing for IoT devices: a fuzzy-logic approach. Comput. J. 67 (2), 782–805 (2024).

Medishetti, S. K. et al. GACO: Resource aware scheduling in multi cloud environment using hybrid meta-heuristic algorithm. In 2024 2nd International Conference on Self Sustainable Artificial Intelligence Systems (ICSSAS). 1312–1319. (IEEE, 2024).

Liang, J. et al. Deep reinforcement learning based reliability-aware resource placement and task offloading in edge computing. In IEEE International Conference on Web Services. (IEEE, 2024).

Liang, J., Ma, B., Feng, Z. & Huang, J. Reliability-aware task processing and offloading for data-intensive applications in edge computing. IEEE Trans. Netw. Serv. Manage. 20 (4), 4668–4680 (2023).

Ma, Y., Zhao, H., Guo, K., Xia, Y., Wang, X., Niu, X. & Dong, Y. A fault-tolerant mobility-aware caching method in edge computing. CMES-Comput. Model. Eng. Sci. 140 (1) (2024).

Su, Q., Li, X., Ren, Y., Qiu, R., Hu, C. & Yin, Y. Attention transfer reinforcement learning for test case prioritization in continuous integration. Appl. Sci. 15 (4), 2243. https://doi.org/10.3390/app15042243 (2025).

Kai, C., Zhou, H., Yi, Y. & Huang, W. Collaborative cloud-edge-end task offloading in mobile-edge computing networks with limited communication capability. IEEE Trans. Cogn. Commun. Netw. 7 (2), 624–634 (2020).

Huang, L. & Yu, Q. Mobility-aware and energy-efficient offloading for mobile edge computing in cellular networks. Ad Hoc Netw. 158, 103472 (2024).

Junior, W., França, A., Dias, K. & de Souza, J. N. Supporting mobility-aware computational offloading in mobile cloud environment. J. Netw. Comput. Appl. 94, 93–108 (2017).

Ayoubi, M., Li, M., Al-Daoar, A. & Rabih, R. Mamo-Edge: A mobility-aware multi-objective approach to improve QoS & faulty services in federated edges. Available at SSRN 4893110.

Dritsas, E., Ramantas, K. & Verikoukis, C. A mobility-aware reinforcement learning proactive solution for state data migration in edge computing. In 2024 IEEE 29th International Workshop on Computer Aided Modeling and Design of Communication Links and Networks (CAMAD). 1–6. (IEEE, 2024).

Acknowledgements

This research was supported by the Henan Province Undergraduate University Smart Teaching Special Research Project (Research on AI Smart Teaching Comprehensive Quality Evaluation System) and by the National College Student Innovation and Entrepreneurship Training Program Project (No. 202310478002).

Author information

Contributions

All authors participated in the conception and design of the study. Data collection, simulation, and analysis were conducted by Ning Wang, Ya Li, Yuanbang Li, and Huanxin Nie.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Wang, N., Li, Y., Li, Y. et al. Fault-tolerant and mobility-aware loading via Markov chain in mobile cloud computing. Sci Rep 15, 18844 (2025). https://doi.org/10.1038/s41598-025-02529-3

DOI: https://doi.org/10.1038/s41598-025-02529-3