Abstract

This paper presents an approach to temperature prediction that integrates three machine learning models, K-Nearest Neighbors (KNN), Gaussian Process Regression (GPR), and Multi-layer Perceptron (MLP), with Computational Fluid Dynamics (CFD). The case study is the flow of a nanofluid containing CuO particles through a pipe. The dataset consists of two inputs, the spatial coordinates x and y, and one output, the temperature. The Political Optimizer (PO) algorithm was used to tune the hyperparameters of each model. The results confirm that the optimized models deliver accurate temperature estimates. GPR achieved an R² of 0.998, reflecting strong agreement between estimated and actual values, and KNN performed comparably, also reaching an R² of 0.998. MLP performed slightly worse than the other two models but remained a reliable predictor, with an R² of 0.984. Overall, combining the PO algorithm with these machine learning models gives promising results for temperature prediction, and the findings may inform decision-making in domains that rely on accurate temperature estimates.


Introduction

Computational fluid dynamics (CFD) is recognized as a powerful tool for simulating complex fluid flows, including flows in complex geometries, with high accuracy1,2,3. The recent development of nanofluids has opened new possibilities for enhancing heat and mass transfer in fluids through the addition of nanoparticles. Various nanoparticles have been used in nanofluids to enhance heat transfer, and their contribution to overall heat transfer efficiency has been investigated with numerical methods such as CFD4,5,6,7.

Although CFD is robust for simulating fluid flows and nanofluids, the method is complex and requires substantial computational resources for case studies with large geometries. Less computationally expensive methods are therefore preferred for simulating large geometries in industrial applications. In this regard, machine learning models have been proposed for coupling with CFD, reducing computational time and cost and thereby improving simulation efficiency8,9.

In recent years, machine learning (ML) has attracted interest from researchers across a wide range of disciplines. ML models have become fundamental tools in contemporary research for recognizing patterns, forecasting outcomes, classifying data, and supporting complex decision-making10,11,12. In this study, K-Nearest Neighbors (KNN), Gaussian Process Regression (GPR), and Multi-layer Perceptron (MLP) models were used to predict the temperature field in a simulation of heat transfer in a nanofluid system. The models were trained on synthetic data from a CFD simulation of the temperature distribution in a pipe containing a nanofluid.

KNN estimates an output by averaging the target values of the nearest instances in the input space: it identifies the K observations most similar to a new input and takes the mean of their outputs as the prediction13. GPR is a non-parametric regression technique that places a Gaussian process prior over the unknown function. By fitting a flexible function to the training data, GPR captures complex relationships between the input features and the target variable while also quantifying the uncertainty of its predictions, which makes it a versatile regression tool in many domains14. MLP is a widely used artificial neural network (ANN) model for regression. Built from multiple layers of interconnected artificial neurons, an MLP can capture complex nonlinear patterns and dependencies in the data, making it a flexible and accurate predictor in many domains15.

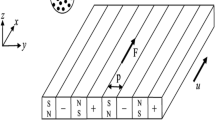

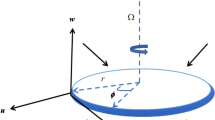

Dataset

The dataset used in this study contains 2000 points, with x and y as the independent variables and temperature as the dependent variable. It is the same dataset used in previous research reported by Zhao9. Here, x and y are the spatial coordinates in the pipe geometry, and the temperature values were obtained from CFD simulations, based on the finite volume method, of fluid flow inside a pipe containing CuO nanoparticles. The transport equations were solved numerically with the finite volume scheme, and the results were used for ML modeling. Figure 1 shows a histogram of the temperature values in the dataset9.

Figure 1. Distribution of the nanofluid temperature.

Methodology

Gaussian process regression (GPR)

GPR is a probabilistic regression model built on a Gaussian process, that is, a collection of random variables any finite subset of which has a joint Gaussian distribution. The GPR model represents the target variable as a function of the input variables and estimates this underlying function from the observed data. The GPR model is defined as16:

\[ y = f(x) + \varepsilon \]

where y represents the target variable, x denotes the input variable, f is the underlying function, and \(\varepsilon\) represents the residual error17,18.
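To make the GPR model concrete, the sketch below computes the standard GP posterior mean and variance for \(y = f(x) + \varepsilon\), assuming a zero-mean prior, a squared-exponential (RBF) kernel, and Gaussian noise. The paper does not state its kernel or the hyperparameter values selected by PO, so `length_scale`, `signal_var`, and `noise_var` are illustrative assumptions, and the helper names `rbf_kernel` and `gpr_predict` are hypothetical. Only NumPy is used.

```python
import numpy as np

def rbf_kernel(A, B, length_scale=1.0, signal_var=1.0):
    """Squared-exponential kernel: k(a, b) = signal_var * exp(-||a - b||^2 / (2 * length_scale^2))."""
    sq_dists = (
        np.sum(A**2, axis=1)[:, None]
        + np.sum(B**2, axis=1)[None, :]
        - 2.0 * A @ B.T
    )
    # Clip tiny negative values caused by floating-point cancellation
    return signal_var * np.exp(-0.5 * np.maximum(sq_dists, 0.0) / length_scale**2)

def gpr_predict(X_train, y_train, X_test, length_scale=1.0, signal_var=1.0, noise_var=1e-6):
    """Posterior mean and variance of f at X_test for y = f(x) + eps,
    with a zero-mean GP prior on f and eps ~ N(0, noise_var)."""
    K = rbf_kernel(X_train, X_train, length_scale, signal_var)
    K[np.diag_indices_from(K)] += noise_var                      # K + noise_var * I
    L = np.linalg.cholesky(K)                                     # K + noise_var * I = L L^T
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y_train))     # (K + noise_var * I)^-1 y
    K_s = rbf_kernel(X_train, X_test, length_scale, signal_var)   # train-test covariances
    mean = K_s.T @ alpha
    v = np.linalg.solve(L, K_s)
    var = signal_var - np.sum(v**2, axis=0)                       # k(x*, x*) = signal_var for RBF
    return mean, var

# Example on synthetic points (NOT the paper's CFD data)
rng = np.random.default_rng(0)
X = rng.uniform(0.0, 1.0, size=(30, 2))           # stand-in for scaled (x, y) coordinates
t = np.sin(3.0 * X[:, 0]) + X[:, 1] ** 2          # synthetic "temperature"
mean, var = gpr_predict(X, t, np.array([[0.5, 0.5]]), length_scale=0.3)
```

The Cholesky factorization replaces an explicit matrix inverse for numerical stability; in a tuned model, the three kernel parameters are the kind of quantities a hyperparameter optimizer would adjust.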

K-Nearest neighbors (KNN)

KNN is a robust non-parametric ML model that estimates the target points by utilizing the collective information from the K nearest neighbors in the feature space. KNN utilizes the resemblance among adjacent data instances to examine the dataset’s local characteristics for predictive purposes. When presented with a novel input, the algorithm locates the K most comparable entries within the training data and determines the predicted value by averaging the outcomes associated with these nearby instances19,20.

The KNN model determines the predicted value y for a given test input x as13:

\[ y = \frac{1}{K}\sum_{i=1}^{K} y_i \]

where \(y_i\), \(i = 1, \dots, K\), are the target values of the K nearest neighbors of x.
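The averaging rule above can be sketched in a few lines of NumPy. This is an illustration under stated assumptions rather than the authors' implementation: Euclidean distance and uniform (unweighted) averaging are assumed because the paper specifies neither, and `knn_predict` is a hypothetical helper name.

```python
import numpy as np

def knn_predict(X_train, y_train, X_test, k=5):
    """KNN regression: each prediction is the mean target of the k nearest training points."""
    # Euclidean distance from every test point to every training point: shape (n_test, n_train)
    dists = np.linalg.norm(X_test[:, None, :] - X_train[None, :, :], axis=2)
    # Column indices of the k smallest distances per row (their order does not matter for a mean)
    nn_idx = np.argpartition(dists, kth=k - 1, axis=1)[:, :k]
    return y_train[nn_idx].mean(axis=1)

# Example: five 1-D training points
X_train = np.array([[0.0], [1.0], [2.0], [3.0], [10.0]])
y_train = np.array([0.0, 1.0, 2.0, 3.0, 10.0])
print(knn_predict(X_train, y_train, np.array([[1.1], [2.6]]), k=3))  # [1. 2.]
```

`np.argpartition` finds the K smallest distances without fully sorting each row, which is sufficient because the mean does not depend on the order of the neighbors.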

Multilayer perceptron (MLP)

An MLP is a type of artificial neural network composed of several successive layers of interconnected processing units, known as artificial neurons. In an MLP, each neuron applies a non-linear activation function to the weighted sum of its inputs21,22.

The output of an MLP model is calculated through forward propagation, in which the input is passed through the network layers in turn. For a network with one hidden layer, the predicted value y for a given input x can be expressed as23,24:

\[ y = W_2\,\sigma(W_1 x + b_1) + b_2 \]

In this equation, \(W_1\) and \(W_2\) are the weight matrices, \(b_1\) and \(b_2\) are the bias vectors, and \(\sigma\) is the activation function applied element-wise.
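The one-hidden-layer forward pass can be written directly from the equation. In this sketch, `mlp_forward` and `relu` are hypothetical names, and the activation is an assumption because the paper does not name \(\sigma\): `np.tanh` is the default and a ReLU is passed in the example. The weights are hand-picked so the output can be checked by hand; they are not trained values.

```python
import numpy as np

def mlp_forward(x, W1, b1, W2, b2, activation=np.tanh):
    """One-hidden-layer MLP: y = W2 @ sigma(W1 @ x + b1) + b2."""
    hidden = activation(W1 @ x + b1)   # sigma applied element-wise to the hidden pre-activations
    return W2 @ hidden + b2            # linear output layer, as is usual for regression

def relu(z):
    return np.maximum(z, 0.0)

# Hand-picked weights for a 2-input (x, y), 2-hidden-unit, 1-output network
W1 = np.array([[1.0, 0.0], [0.0, 1.0]])
b1 = np.array([0.0, 0.0])
W2 = np.array([[3.0, 5.0]])
b2 = np.array([0.5])
print(mlp_forward(np.array([1.0, -2.0]), W1, b1, W2, b2, activation=relu))  # [3.5]
```

For the input (1, -2), the hidden pre-activations are (1, -2), the ReLU gives (1, 0), and the output is 3 × 1 + 5 × 0 + 0.5 = 3.5.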

Data preprocessing

Before applying the regression models, the following data preprocessing steps were performed:

- Outlier detection: Outliers were identified with the z-score technique, which measures how far an observation v lies from the mean \(\mu\) of the distribution in units of the standard deviation \(s\), \(z = (v - \mu)/s\). Computing the z-score of each data point makes it possible to flag points that deviate substantially from the mean; a threshold on \(|z|\) is typically used to decide whether a point is an outlier25.

- Normalization: The data were normalized with a min-max scaler, which maps the values of each feature to a fixed range, typically 0 to 1. For each feature, the minimum value is subtracted and the result is divided by the range between the maximum and minimum values, \(v' = (v - v_{\min})/(v_{\max} - v_{\min})\)26.
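Both preprocessing steps can be sketched with NumPy as follows. The paper does not report its z-score threshold, so the common choice of 3 is an assumption, as are the hypothetical function names and the small example array. In this sketch a row is dropped if any of its columns exceeds the threshold.

```python
import numpy as np

def remove_outliers_zscore(data, threshold=3.0):
    """Keep only rows whose |z| <= threshold in every column, with z = (v - mean) / std."""
    data = np.asarray(data, dtype=float)
    mean = data.mean(axis=0)
    std = data.std(axis=0)
    std[std == 0.0] = 1.0              # constant columns cannot contain z-score outliers
    z = np.abs((data - mean) / std)
    return data[(z <= threshold).all(axis=1)]

def min_max_scale(data):
    """Map each column to [0, 1]: v' = (v - min) / (max - min). Also returns the scaling parameters."""
    data = np.asarray(data, dtype=float)
    col_min = data.min(axis=0)
    col_range = data.max(axis=0) - col_min
    col_range[col_range == 0.0] = 1.0  # avoid division by zero for constant columns
    return (data - col_min) / col_range, col_min, col_range

# Hypothetical example: 20 rows, one extreme value in the first column
raw = np.zeros((20, 2))
raw[:, 1] = np.arange(20)
raw[0, 0] = 100.0
clean = remove_outliers_zscore(raw)                 # drops row 0
scaled, col_min, col_range = min_max_scale(clean)
```

In a train/test workflow, the minimum and range returned by `min_max_scale` would normally be computed on the training split and reused for the test split, so that no information from the test data leaks into training.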

Hyperparameter tuning

This research used the Political Optimizer (PO) algorithm to tune the model hyperparameters. PO is an optimization strategy inspired by political dynamics and social interactions. The algorithm simulates political deliberation, in which agents exchange views, influence one another, and collectively move toward a shared resolution27. PO explores the hyperparameter space by iteratively updating the positions of metaphorical political parties, whose members represent candidate hyperparameter configurations. Through mechanisms analogous to opinion shaping, mutual influence, and inter-party competition, the algorithm steers the search toward configurations that improve the target performance criterion28,29.
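The paper does not list which hyperparameters PO tuned or which fitness it minimized. As a minimal sketch, assuming the fitness is validation RMSE and taking KNN's K as the hyperparameter, the code below shows the objective that any optimizer, PO included, would evaluate for each candidate configuration. PO's party-based update rules are not reproduced here: an exhaustive grid search is plainly swapped in as the search strategy, and all function names are hypothetical.

```python
import numpy as np

def knn_predict(X_train, y_train, X_test, k):
    """Uniform-weight KNN regression with Euclidean distance."""
    dists = np.linalg.norm(X_test[:, None, :] - X_train[None, :, :], axis=2)
    nn_idx = np.argpartition(dists, kth=k - 1, axis=1)[:, :k]
    return y_train[nn_idx].mean(axis=1)

def fitness(k, X_tr, y_tr, X_val, y_val):
    """Validation RMSE for a candidate K; lower is better (the quantity an optimizer minimizes)."""
    pred = knn_predict(X_tr, y_tr, X_val, k)
    return float(np.sqrt(np.mean((y_val - pred) ** 2)))

def grid_search(candidates, X_tr, y_tr, X_val, y_val):
    """Exhaustive search over candidate K values, used here as a simple stand-in for PO."""
    scores = {k: fitness(k, X_tr, y_tr, X_val, y_val) for k in candidates}
    best_k = min(scores, key=scores.get)
    return best_k, scores

# Synthetic example (NOT the paper's CFD data): 150 training and 50 validation points
rng = np.random.default_rng(1)
X = rng.uniform(0.0, 1.0, size=(200, 2))
y = np.sin(3.0 * X[:, 0]) + X[:, 1] ** 2
best_k, scores = grid_search(range(1, 11), X[:150], y[:150], X[150:], y[150:])
```

For a single integer hyperparameter such as K, exhaustive search is cheap; a population-based metaheuristic such as PO is typically more useful when several continuous hyperparameters, such as GPR kernel parameters or MLP training settings, must be tuned jointly.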

Results and discussion

The predictive performance of the trained models was assessed with three metrics: R², RMSE, and MAPE. Table 1 summarizes the results.

GPR achieved a coefficient of determination (R²) of 0.99893, indicating very close agreement between the model's predictions and the observed temperature data. Its root mean square error (RMSE) was 1.2920E-01, showing that the model estimates temperature variations accurately, and its mean absolute percentage error (MAPE) was 2.97944E-04, indicating a small average deviation between predicted and actual values.

The KNN model also showed strong predictive capability, with an R² of 0.99852, indicating a high level of agreement between predicted and actual values. Its RMSE of 1.5231E-01 shows that it captures temperature variations accurately, and its MAPE of 2.00414E-04 further supports its accuracy.

MLP performed slightly worse than GPR and KNN but still gave reliable predictions. It achieved an R² of 0.98426, indicating a strong statistical association between predicted and observed temperatures. Its RMSE was 4.9120E-01, roughly three to four times that of GPR and KNN, and its MAPE of 9.27298E-04 still indicates a low average discrepancy between predicted and observed values.
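The paper reports R², RMSE, and MAPE without giving their formulas. The sketch below uses the standard definitions; the paper does not state whether its MAPE is a fraction or a percentage, so returning a fraction here is an assumption. The function names and the four-point example are hypothetical.

```python
import numpy as np

def r2_score(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    ss_res = np.sum((y_true - y_pred) ** 2)
    ss_tot = np.sum((y_true - np.mean(y_true)) ** 2)
    return 1.0 - ss_res / ss_tot

def rmse(y_true, y_pred):
    """Root mean square error, in the units of the target."""
    return np.sqrt(np.mean((y_true - y_pred) ** 2))

def mape(y_true, y_pred):
    """Mean absolute percentage error as a fraction (multiply by 100 for percent); y_true must be nonzero."""
    return np.mean(np.abs((y_true - y_pred) / y_true))

y_true = np.array([1.0, 2.0, 3.0, 4.0])
y_pred = np.array([1.0, 2.0, 3.0, 5.0])
print(r2_score(y_true, y_pred), rmse(y_true, y_pred), mape(y_true, y_pred))
```

Here the single error of 1 on the last point gives SS_res = 1 against SS_tot = 5, so R² = 0.8, RMSE = 0.5, and MAPE = 0.25 / 4 = 0.0625.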

Taken together, the results show that GPR, KNN, and MLP all predict temperature well, with GPR and KNN being more accurate than MLP. This is confirmed by the fitting charts in Figs. 2, 3 and 4, which compare the predicted and observed temperature values for the three models. Figures 5, 6 and 7 show the three-dimensional surfaces of the final models, which are broadly similar but differ in smoothness9.

Figure 2. Comparison between predicted and actual temperature values obtained using the GPR model.

Figure 3. Comparison between predicted and actual temperature values obtained using the KNN model.

Figure 4. Comparison between predicted and actual temperature values obtained using the MLP model.

Figure 5. 3D surface of the GPR model.

Figure 6. 3D surface of the KNN model.

Figure 7. 3D surface of the MLP model.

Because GPR was the best-performing model, it was used to generate Figs. 8 and 9, which show the effect of the x and y coordinates on temperature. Figure 10 shows the 2D temperature (T) distribution, which agrees with the results reported by Zhao for simulation of nanofluid flow9. The parabolic shape of the T distribution can be attributed to the velocity profile and the effect of viscous forces in pipe flow. The minimum T occurs at the center of the tube because the heat flux is imposed at the tube wall, and heat is transferred into the fluid by convection and conduction.

Figure 8. Temperature change with the x-coordinate.

Figure 9. Temperature change with the y-coordinate.

Figure 10. Contour plot of temperature.

Conclusion

This investigation examined the efficacy of three machine learning models, GPR, KNN, and MLP, for temperature prediction. The models were trained on a dataset of input features, the coordinates x and y, and the corresponding temperature values. The dataset was preprocessed, and the model hyperparameters were optimized with the Political Optimizer (PO) algorithm.

All three models predicted temperature values accurately. The GPR model achieved an R² of 0.99893, indicating a strong association between predicted and observed temperatures. The KNN model performed similarly well, with an R² of 0.99852. Despite a slightly lower R² of 0.98426, the MLP model demonstrated dependable predictive capability.

The RMSE values confirm the accuracy of the models in estimating temperature variations. GPR yielded an RMSE of 1.2920E-01 and KNN an RMSE of 1.5231E-01. The MLP model had a higher RMSE of 4.9120E-01, indicating larger prediction errors than the other two models.

The MAPE values were also computed to evaluate the average relative discrepancy between predicted and observed temperatures. GPR and KNN achieved lower MAPE values (2.97944E-04 and 2.00414E-04, respectively), indicating smaller average deviations. The MLP model had a higher MAPE of 9.27298E-04 but still produced reasonably precise predictions.

Data availability

The datasets used during the current study are available from the corresponding author on reasonable request.

References

Bode, F. et al. Impact of realistic boundary conditions on CFD simulations: A case study of vehicle ventilation. Build. Environ. 267, 112264 (2025).

Luan, X. et al. Calibration and sensitivity analysis of under-expanded hydrogen jet CFD simulation based on surrogate modeling. J. Loss Prev. Process Ind. 94, 105535 (2025).

Yao, N. N. et al. Progress in CFD simulation for ammonia-fueled internal combustion engines and gas turbines. J. Energy Inst. 119, 101951 (2025).

Aldossary, S., Sakout, A. & El Hassan, M. CFD simulations of heat transfer enhancement using Al2O3–air nanofluid flows in the annulus region between two long concentric cylinders. Energy Rep. 8, 678–686 (2022).

Delavari, V. & Hashemabadi, S. H. CFD simulation of heat transfer enhancement of Al2O3/water and Al2O3/ethylene glycol nanofluids in a car radiator. Appl. Therm. Eng. 73(1), 380–390 (2014).

Natarajan, A. et al. CFD simulation of heat transfer enhancement in circular tube with twisted tape insert by using nanofluids. Mater. Today: Proc. 21, 572–577 (2020).

Zhang, S. et al. Turbulent heat transfer and flow analysis of hybrid Al2O3-CuO/water nanofluid: an experiment and CFD simulation study. Appl. Therm. Eng. 188, 116589 (2021).

Godasiaei, S. H. & Kamali, H. A. Evaluating machine learning as an alternative to CFD for heat transfer modeling. Microgravity Sci. Technol. 37(1), 6 (2025).

Zhao, H. Computational modeling of nanofluid heat transfer using fuzzy-based bee algorithm and machine learning method. Case Stud. Therm. Eng. 54, 104021 (2024).

Polikar, R. Ensemble learning. In Ensemble Machine Learning, 1–34 (Springer, 2012).

Sagi, O. & Rokach, L. Ensemble learning: A survey. Wiley Interdiscip. Rev. Data Min. Knowl. Discov. 8(4), e1249 (2018).

Almehizia, A. A. et al. Numerical optimization of drug solubility inside the supercritical carbon dioxide system using different machine learning models. J. Mol. Liq. 392, 123466 (2023).

Kramer, O. K-nearest neighbors. In Dimensionality Reduction with Unsupervised Nearest Neighbors, 13–23 (2013).

Wang, J. An intuitive tutorial to Gaussian processes regression. arXiv preprint arXiv:2009.10862 (2020).

Ramchoun, H. et al. Multilayer perceptron: Architecture optimization and training. (2016).

Rasmussen, C. E. Gaussian processes in machine learning. In Summer School on Machine Learning (Springer, 2003).

Ebden, M. Gaussian processes: A quick introduction. arXiv preprint arXiv:1505.02965 (2015).

Ruiz, A. V. & Olariu, C. A general algorithm for exploration with gaussian processes in complex, unknown environments. In IEEE International Conference on Robotics and Automation (ICRA). (IEEE, 2015).

Cover, T. Estimation by the nearest neighbor rule. IEEE Trans. Inf. Theory. 14(1), 50–55 (1968).

Chen, C. R. & Three Kartini, U. K-nearest neighbor neural network models for very short-term global solar irradiance forecasting based on meteorological data. Energies. 10(2), 186 (2017).

Gardner, M. W. & Dorling, S. Artificial neural networks (the multilayer perceptron)—a review of applications in the atmospheric sciences. Atmos. Environ. 32(14–15), 2627–2636 (1998).

Amendolia, S. R. et al. A comparative study of k-nearest neighbour, support vector machine and multi-layer perceptron for thalassemia screening. Chemometr. Intell. Lab. Syst. 69(1–2), 13–20 (2003).

Mielniczuk, J. & Tyrcha, J. Consistency of multilayer perceptron regression estimators. Neural Netw. 6(7), 1019–1022 (1993).

Noriega, L. Multilayer Perceptron Tutorial. vol. 4, 5 (School of Computing, Staffordshire University, 2005).

Aggarwal, V. et al. Detection of spatial outlier by using improved Z-score test. In 2019 3rd International Conference on Trends in Electronics and Informatics (ICOEI). (IEEE, 2019).

Kappal, S. Data normalization using median median absolute deviation MMAD based Z-score for robust predictions vs. min–max normalization. Lond. J. Res. Sci. Nat. Formal. (2019).

Askari, Q., Younas, I. & Saeed, M. Political optimizer: A novel socio-inspired meta-heuristic for global optimization. Knowl. Based Syst. 195, 105709 (2020).

Manita, G. & Korbaa, O. Binary political optimizer for feature selection using gene expression data. Comput. Intell. Neurosci. 2020. (2020).

Awad, R. Sizing optimization of truss structures using the political optimizer (PO) algorithm. In Structures (Elsevier, 2021).

Acknowledgements

The authors thank the Higher Education of the Russian Federation for supporting this work through project No. FENU-2023-0010.

Author information


Contributions

Farag M. A. Altalbawy: Writing, Investigation, Methodology, Software, Supervision. Ahmad Alkhayyat: Writing, Investigation, Methodology, Software. Ramdevsinh Jhala: Writing, Investigation, Methodology, Validation. Anupam Yadav: Writing, Analysis, Methodology, Software, Validation. Ramachandran T.: Writing, Investigation, Methodology, Software, Validation. Aman Shankhyan: Writing, Investigation, Methodology, Software, Visualization. Karthikeyan A.: Writing, Investigation, Validation. Dhirendra Nath Thatoi: Writing, Investigation, Methodology, Resources, Validation. Vladimir Vladimirovich Sinitsin: Writing, Investigation, Methodology, Software, Validation.


Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Altalbawy, F.M.A., Alkhayyat, A., Jhala, R. et al. Computational fluid dynamic and machine learning modeling of nanofluid flow for determination of temperature distribution and models comparison. Sci Rep 15, 19073 (2025). https://doi.org/10.1038/s41598-025-03187-1


DOI: https://doi.org/10.1038/s41598-025-03187-1