Abstract

Sentiment analysis is an essential component of Natural Language Processing (NLP) in resource-abundant languages such as English. Nevertheless, poor-resource languages such as Telugu have experienced limited efforts owing to multiple considerations, such as a scarcity of corpora for training machine learning models and an absence of gold standard datasets for evaluation. The current surge of transformed based models in NLP enables the attainment of exceptional performance in many different tasks. Nevertheless, researchers are increasingly interested in exploring the potential of transformed based models that have been pre-trained in several languages for various natural language processing applications, particularly for languages with limited resources. This research examines the efficacy of four pre-trained transformed based models, specifically IndicBERT, RoBERTa, DeBERTa, and XLM-RoBERTa, for sentence-level sentiment analysis in the Telugu language. Evaluated the performance of all four models using our dataset, “Sentikanna,” which consists of numerous domain datasets for the Telugu language. We compared the performance of these models with three different datasets and observed a promising outcome. XLM-RoBERTa achieves a good accuracy of 79.42% for a binary sentiment classification. This work can be considered a reliable standard for sentiment analysis in the Telugu language.

Similar content being viewed by others

Introduction

Sentiment Analysis (SA), or opinion mining, is a fundamental application of Natural Language Processing (NLP) that entails identifying, extracting, and assessing sentiments in textual data to ascertain its emotional tone, positive, negative, or neutral1,2. It is essential for comprehending public sentiment, consumer feedback, and social media discussions. The escalating volume of user-generated content renders manual emotion analysis increasingly impractical. Recent breakthroughs in natural language processing and artificial intelligence have rendered sentiment analysis more accessible and economical, enabling both organizations and people to utilize it for decision-making processes3. Nevertheless, despite these developments, automated SA continues to encounter significant obstacles. This encompasses the precise identification of subjectivity and tone, comprehension of contextual dependencies and polarity, detection of irony and sarcasm, interpretation of negations, and processing of culturally distinctive expressions and multilingual content. The variety and intricacy of online language encompassing symbols, hashtags, accents, and colloquial expressions exacerbate the challenge. Conventional models frequently fail to encapsulate these subtleties, underscoring a distinct deficiency in existing methodologies. Transformed based architectures, especially those utilizing attention mechanisms, have become a formidable alternative by adeptly simulating long-range dependencies and subtle contextual signals. Their capacity to acquire complex representations of language renders them adept at tackling the nuances of contemporary sentiment analysis.

Usually, polarity is classified into one of three distinct categories: positive, negative, or neutral. However, accurately assessing current attitudes is problematic because of the high volume of reviews available and the diverse range of expressions used in these assessments. Prejudiced and fraudulent reviews exacerbate the complexity of predictive analytics. SA can be categorized into three levels: phrase level, document level, and aspect level. Based on language analysis, SA can be categorized as either monolingual, which involves analyzing one language, such as English, Hindi, Chinese, Arabic, etc., or multilingual, which involves analyzing a combination of two or more languages through techniques like codemixing and code-switching. SA research is categorized into mono-modal (text-only) and multimodal (combining multiple media types) approaches. Techniques for SA include lexicon-based, machine learning (ML)-based, deep learning (DL)-based, and hybrid methods. SA has diverse applications in fields like business, marketing, government, and politics, aiding decision-making. Research can expand to sub-tasks such as subjectivity analysis, sarcasm detection, and emotion analysis. SA on social media often involves NLP-based classification to automatically identify attitudes4.

India is a big, multi-cultural and multilingual country. Extensive research has been conducted on text categorization projects in major global languages like as English, Spanish, and Mandarin. Research on NLP has also been conducted for Indian languages, including Urdu as well as Hindi5. However, there has been a scarcity of research conducted on Dravidian languages. Dravidian languages play a significant role in Indian culture. They are utilized in various places worldwide for digital and face-to-face communication and publication, even beyond India. The limited amount of research conducted on NLP tasks in Dravidian languages can be attributed primarily to the scarcity of labelled datasets. In this instance, we generate a collection of texts in the Telugu language and extract binary sentiment categorization.

Telugu is a regional language that has a vast amount of data easily accessible on social media. However, it is challenging to find class labels for sentences in Telugu SA. Posting comments and evaluations on social networking platforms has become an essential practice for individuals. Lately, individuals have developed a tendency to utilize their mother tongues when sharing their academic work, as they find it more convenient to write in their native languages. Out of all the several regional languages, Telugu is renowned for its copious amount of information obtained from social media. Acquiring labeled data for Telugu Language Sentiment Classification is a challenging endeavor. Within the field of SA, there has been a lack of focus on the labelling of Telugu data. While there are numerous unprocessed collections of opinionated data available, it is still unknown whether any publicly accessible collections exist that contain Telugu phrases that have been annotated. Telugu is recognized as the major primary language of two neighboring Indian states: Andhra Pradesh and Telangana.

Telugu speakers have diverse dialects, with the Krishna and Godavari dialects being widely recognized and used in print, media, and broadcasts due to their standardization and broad accessibility. Our corpus was constructed with a specific emphasis on this particular language variety due to its prevalence and extensive representation in various online sources such as newspapers, blogs, and user comments. This paper elucidates the process of constructing a Telugu language corpus, wherein the sentences are annotated with their polarity as the gold standard. The dataset contains reviews from the areas of Recipes, Tourism, and Movies and is considered to hold the largest set of Telugu phrases with polarity annotations. This rich dataset inspires the creation of new approaches for classifying emotional expressions in Telugu. The post also explores different sentiment analysis techniques and ensemble models for integrating data from various features.

SA is a specific aspect of NLP that focuses on discerning individuals’ emotions, feelings, and views regarding various topics, including goods, services, occasions, motion pictures, news, people, and organizations. SA is a highly potent application of NLP that finds utility across various industries6. SA integrates NLP, text analysis, computational linguistics, and biometrics to detect and quantify emotional states and subjective information. Reviews are gathered and refined, with the proposed model assessed on a pre-existing dataset through parameter adjustments. SA can be applied at three levels: sentence-level (assessing the polarity of a sentence), document-level (analyzing the overall content of a document), and aspect-level (evaluating the polarity of specific aspects in a text on a word-by-word basis)7. Lack of a dataset with annotations is a challenge when working with Indian languages. Automated SA is a difficult job because of the intricacies involved in NLP, including the ambiguity of the writer’s objectives and the variability of the text’s sentiment based on the context. In addition, the limited availability of labelled data sets hinders the task of analyzing texts written in spoken languages apart from English. This study showcases the utilization of supervised ML methods to analyze a specific dataset to extract and label sentiment from different sources.

Telugu is positioned as the sixteenth most widely used language globally, as per the Ethnologue catalog8. Telugu is a prominent regional language in India, spoken by around 95.7 million individuals as their first tongue. Both Andhra and Telangana, two adjacent states in India, acknowledge Telugu as their predominant official language9. Within the Indian language landscape, Telugu is the second most prominent language in terms of regional data created by its speakers, following only Hindi10. The limited availability of annotated Telugu materials, along with the intricate morphology of the language, poses challenges for SA.

Telugu SA has been the subject of several recent papers11,12,13,14-15. Considerable and targeted efforts have been made to create pre-trained models for word embedding for the Telugu language. These efforts include employing Word2Vec in16 and training FastText embeddings using an n-gram based skip-gram model in17. The pre-trained word embeddings, which are based on Byte-Pair Encoding (BPE), are trained using Telugu Wikipedia, similar to the n-gram technique18.

Although the multilingual model BERT-m19 has certain cross-lingual properties, it has not undergone training using cross-lingual data20. Cross-lingual word embedding can be employed to convey meaning and transmit knowledge between various languages. These embeddings are derived from a corpus in any accessible language and subsequently utilized to train a model for predicting the polarity of texts in languages with limited resources.

XLM-RoBERTa is a robust multilingual transformed based model trained in more than 100 languages, including Hindi, and is exceptionally proficient in sentiment analysis tasks. It employs subword tokenization and deep contextual embeddings to comprehend the content and emotional nuance of Hindi utterances, even in intricate or low-resource contexts. By refining the model with Hindi-specific sentiment datasets, it can precisely categorize text as positive, negative, or neutral. Moreover, its cross-lingual capabilities facilitate the utilization of knowledge from different languages, permitting zero-shot or few-shot sentiment classification. XLM-RoBERTa is a resilient and adaptable instrument for sentiment analysis in Hindi across diverse domains such as reviews, social media, and news.

XLM-RoBERTa exhibits superior performance for Hindi compared to Telugu due to the availability of more training data, enhanced NLP resources, and broader research backing for Hindi. The script facilitates tokenization, hence enhancing the accuracy of sentiment analysis in Hindi. How XLM-Roberta plays a more crucial role in the Hindi language than Telugu is explained in the literature review section, with some papers published in the Hindi language.

As far as we know, the most advanced cross-lingual contextual embeddings, such as XLM-RoBERTa (XLM-R)21, have not yet been used for SA in the resource-limited Telugu language. To fill this gap, we assess the effectiveness of IndicBERT, RoBeRTA, DeBeRTA, and XLM-R, as well as transfer learning, in making predictions in the Telugu language. A language with limited resources is used to train the classification model, often Telugu. The proposed model surpasses existing state-of-the-art techniques and offers an effective solution for SA at the phrase level (namely, tweets) in a situation where resources are limited.

Therefore, the primary contribution of the present study is:

-

Creation of a Telugu Sentiment Analysis Dataset: We developed an extensive, sentence-level sentiment corpus for the Telugu language, encompassing many areas and a wide range of textual sources. This pertains to the deficiency of annotated data for Indian languages, specifically Telugu.

-

Thorough Model Assessment: We assessed the efficacy of multiple transformed based language models, IndicBERT, RoBERTa, DeBERTa, and XLM-RoBERTa on our dataset, establishing benchmarks for sentiment classification in Telugu.

-

Cross-Dataset Comparison: We evaluated model performance across various datasets, including our newly developed corpus, to examine the generalizability and resilience of transformed based models in processing Telugu text.

The article is structured as follows: Sect. 2 offers a concise overview of the previous research conducted in the field of Telugu sentiment analysis. This is followed by a detailed explanation of the approach employed in Sect. 3. Section 4 provides a comprehensive analysis of the model’s performance, while Sect. 5 delivers the conclusion.

Literature review

This study is separated into two sections: the related works portion, which covers Telugu sentiment analysis and transformed based sentiment analysis. Below are a few examples of literary works published in the Telugu language.

Telugu sentiment analysis

Identifying the sentiment of Telugu social media messages poses numerous difficulties. Telugu possesses a highly intricate morphology. There is a significant lack of research and exploration in the domain of SA in the Telugu language. Compared to the global languages, there has been significantly less research conducted on the Telugu language due to Insufficient readily accessible tools and resources.

Lexicon-based sentiment analysis

In 2017, Naidu7 demonstrated the use of a lexicon-based technique and machine learning (ML) for sentiment analysis (SA) in Telugu. By employing the Telugu SentiWordNet lexicon, the study identified subjective statements in the Telugu corpus. Additionally, various ML techniques, including Support Vector Machines (SVM), Naïve Bayes (NB), and Random Forest (RF), were used to classify emotions, achieving an accuracy of 85%, the highest achieved in the study.

The sentiment of the text was determined using SentiPhraseNet22, and the accuracy of the results was confirmed using ACTSA, an annotated corpora dataset. While the Telugu SentiPhraseNet serves as a dictionary of terms, it does not provide specific feelings for numerous phrases in ACTSA. Consequently, many ambiguous sentences need to be determined. In this study, TextBlob, an internet program, is utilized to ascertain the tone of a Telugu word in its English translation.

A further research paper outlines a project aimed at developing a meticulously annotated collection of Telugu sentences23, which would serve as a benchmark for Telugu SA. The Telugu phrases have undergone physical validation by native Telugu speakers to ensure compliance with our classification rules. They are currently included in the ACTSA corpus, also known as the Annotated Corpus for Telugu SA. This corpus has 5410 sentences that have been annotated by scholars, rendering it the most exhaustive resource available. Currently, the public community has access to labelling guidelines and a corpus of annotations.

This paper introduces a machine learning-based Telugu sentiment analysis system12. Over 7 lakh unannotated sentences were utilized to train a Doc2Vec model, which turned sentences into 200-dimensional vectors. The tagged dataset had 1,644 positive, negative, or neutral phrases. Naive Bayes, Logistic Regression, SVM, MLP, Decision Trees, Random Forest, and Adaboost were trained on these vectors. Both binary and ternary (positive/negative/neutral) sentiment classifications were used. In binary classification, Random Forest excelled in 84% accuracy, while Logistic Regression excelled in ternary classification with 68% accuracy. Stability was assessed using 5-fold cross-validation and many trials. Doc2Vec does well in representing Telugu text for sentiment categorization. Morphological analysis and adaptation to other Indian languages and code-mixed settings are suggested improvements.

The process of dataset generation and polarity assignment is outlined in11. After creating the corpora, the authors trained classifiers for accurate SA performance. While SA is typically trained on data from the same domain as the test data, combining data from multiple domains allows for the development of a more versatile sentiment analyzer, useful in cases of insufficient domain-specific data. The authors created a generic classifier based on the sentiment corpus, initially evaluating SA models from distinct data sources in both the same and different domains. They then constructed sentiment models using multi-domain datasets and assessed their performance. The study ultimately compares the effectiveness of three different SA methodologies.

Another study13 aims to improve sentiment analysis (SA) in Telugu by creating a thoroughly annotated corpus with word-level sentiment annotations. The authors explore the approach for polarity annotation and validate the resource using models such as Linear SVM, KNN, and RF. The project aims to develop a benchmark corpus that builds upon SentiWordNet and sets a baseline accuracy for sentiment prediction based on lexeme annotations. The primary focus is on machine learning methods and word-level sentiment annotations, with the annotation of bi-grams in the target corpus further enhancing precision.

A machine learning model using an SVM classifier, along with uni, bi, and skip-gram features, was employed to classify attitudes. The skip-gram and ML models outperformed the lexicon-based approach in terms of speed and efficiency. The dataset used, containing code-mixed data in Bengali and English, was sourced from The NLP Tool Contest, SAIL @ ICON 2017, and was classified as positive, negative, or neutral using a Convolutional Neural Network24. This methodology was later applied to a Telugu dataset, manually generated from internet movie reviews, to assess the system’s effectiveness in processing native scripts of Indian languages.

Authors14 classify attitudes as positive or negative, using TF-IDF and DOC2VEC for text preprocessing. They evaluated advanced ensemble techniques, including RF, stacking, and ADA-boosting, achieving favorable results. In25, sentiment was extracted from Telugu-English movie-related tweets using lexicons and machine learning techniques. The study used a lexicon-based approach to support transliteration and sentiment extraction. he Sentiphrasenet technique26 focuses on sentiment evaluation of Telugu text using annotated datasets, PoS tagging, and bigram/trigram rules. However, it does not classify emotions for entire sentences.

Deep learning based sentiment analysis

Authors27 developed an aspect-based approach for classifying Telugu movie reviews. They employed deep learning methods but found that aspect-based models struggled due to limited vocabularies. Kumar28 utilized BRNNs to analyze audience responses to Telugu films. The method involved correcting grammatical flaws and analyzing vector representations, enhancing sentiment analysis through text representation and optimization techniques.

Santosh Kumar10 developed a method to identify sarcastic expressions in Telugu by focusing on hyperbolic features like interjections and intensifiers. The hyperbolic feature-based approach was effective, but it worked with smaller datasets. A study29 focused on sentence-level SA using multi-domain datasets. By incorporating Bidirectional LSTM and GRU networks with Forward-Backward encapsulation, the model demonstrated improved performance, surpassing classical ML methods. It achieved an F1 score of 86% for song datasets, outperforming existing models.

The author30 introduces “Sentikanna”, an extensive Telugu speech corpus created to support Telugu SA in various domains, including Recipe, Tourism, and Movie reviews. “Sentikanna” is a corpus comprising Telugu sentences that have been preprocessed and manually annotated from several sources. The phrases are labeled according to our annotation criteria. All the Telugu scripts have been exhausted. Furthermore, the sentences have been allocated polarity in addition to the creation of the corpus. We have annotated a total of 3113 Reviews that have been collected from various domains. We have employed our dataset to evaluate the effectiveness of several SA methodologies. Afterwards, we utilized SVM and NB methods to assess sentiment using both our dataset and the “Sentirama” datasets. The performance evaluation has been completed, and it has been observed that SVM and NB exhibited favorable outcomes on our dataset in comparison.

The researcher31 developed a supervised learning-based framework using the “SentiKanna” corpus, which includes Telugu texts from diverse fields such as cooking, tourism, and movies. This corpus, consisting of 3113 manually annotated reviews, was used to perform binary classification of reviews. After testing various machine learning methods, the ensemble method was found to yield the best results. A comparative analysis of the Random Forest (RF) and Extreme Gradient Boosting (XGB) models showed that the RF model outperformed XGB across all categories.

Transformed based sentiment analysis

We can view some of the works that XLM-Roberta has uncovered, which are written in Indian as well as other languages.

This study32 investigates the use of “cross-lingual contextual word embeddings and zero-shot transfer learning” to apply sentiment analysis predictions from the well-resourced English language to the resource-constrained Hindi language. The XLM-R model is trained and fine-tuned using English data to achieve the best performance. The Benchmark SemEval 2017 dataset (Task 4 A) is utilized, and zero-shot transfer learning is then employed to evaluate the model on two Hindi sentence-level sentiment analysis datasets: the IITP-Movie dataset and the IITP-Product review dataset.

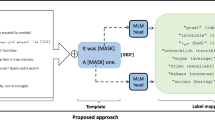

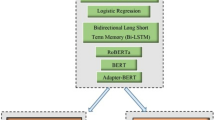

The authors33 propose a system designed to identify objectionable language in low-resource, code-mixed Tamil and English data using machine learning (ML), deep learning (DL), and pre-trained models such as BERT, RoBERTa, and adapter-BERT. For a similar classification task34, the Paraphrase XLM-RoBERTa model was employed, achieving F1-scores of 71.1, 75.3, and 62.5 for Tamil, Malayalam, and Kannada, respectively. In another approach35, the XLM-RoBERTa model, a multilingual transformed based model, was fine-tuned using English, Spanish, and Spanish-English code-mixed data. This system surpassed the official task baseline, achieving an average F1-score of 70.1% on the official leaderboard.

The multilingual BERT model performs well in the Hindi-English challenge, with an average F1-score of 0.6850, ranking the team 16th out of 62 participants36. In the Spanish-English task, the XLM-RoBERTa model scored an F1 of 0.7064, placing 17th out of 29. The study also examines Tamil-English and Malayalam-English code-switching using four BERT-based models37, emphasizing the benefits of task-specific pre-training over cross-lingual transfer, which leads to better results in both zero-shot and supervised settings. Furthermore, the research shows that multilingual BERT and XLM-RoBERTa are effective for sentiment analysis in Bengali, achieving a top accuracy of 95% in a two-class sentiment classification and establishing a benchmark for sentiment analysis in Bengali38.

The shared task outlined in39 included two sub-tasks centered around multi-class categorization. Sub-task A involved 11 different emotions, while sub-task B addressed 31 emotions. Fine-tuning of XLM-RoBERTa and DeBERTa base models was carried out for both sub-tasks. In sub-task A, XLM-RoBERTa achieved an accuracy of 0.46 and DeBERTa scored 0.45, securing the top position among 11 teams. In sub-task B, XLM-RoBERTa attained an accuracy of 0.33, while DeBERTa scored 0.26, placing second among seven teams.

The suggested XLM-Twitter model excels in Twitter-specific tasks40, especially multilingual sentiment analysis. It outperforms XLM-R and Twitter-trained RoBERTa variants in multilingual contexts. XLM-Twitter gains steadily on TweetEval but trails huge monolingual models like BERTweet. In zero-shot cross-lingual studies, it performs best in 6 of 8 languages, with notable gains in Hindi and Arabic. XLM-Twitter outperforms XLM-R in multilingual fine-tuning, especially in low-resource languages. Similarity analysis strengthens its adaptability to typologically and topically varied languages. The technique works well for multilingual social media text.

Furthermore, the Dravidian-CodeMix shared task at FIRE 202041 focused on code-mixing in Tamil, Malayalam, and Kannada. The task involved both intra-token and inter-token code-switching, with submissions for Tamil-English, Malayalam-English, and Kannada-English translation. The top-performing systems achieved weighted average F1-scores of 0.711, 0.804, and 0.630, respectively. This reflects a growing interest in code-mixed Dravidian languages, though further progress is needed to reach state-of-the-art performance.

This paper showcases the results achieved by Team Optimize Prime in the ACL 2022 shared task “Emotion Analysis in Tamil.” The objective of this assignment was to categorize social media comments based on emotions such as Joy, Anger, Trust, Disgust, and so on. The test was subdivided into two subtasks, one consisting of 11 overarching categories of emotions and the other consisting of 31 precise categories of emotion. We employed three distinct methodologies to address this issue: transformed based models, Recurrent Neural Networks (RNNs), and Ensemble models. XLM-RoBERTa achieved the highest performance on the initial task, attaining a macro-averaged F1 score of 0.27. Conversely, MuRIL yielded the most favorable outcomes on the second test, with a macro-averaged F1 score of 0.13.

Deep learning models have shown effectiveness in analyzing code-mixed Roman Urdu and English text, with XLM-RoBERTa (XLM-R) achieving a notable F1 score of 71% through optimized hyperparameters42. Another study43 highlights a method for sentiment polarity detection in code-mixed Malayalam-English and Tamil-English datasets from the Dravidian-Code Mix-FIRE 2020 competition. Using XLM-RoBERTa and K-folding ensemble learning, the approach achieved an F1 score of 0.74 and first place in Malayalam-English and an F1 score of 0.63, securing third place in Tamil-English.

The author used XLM-RoBERTa and Deep Pyramid Convolutional Neural Networks to identify objectionable Tamil, Malayalam, and Kannada44. DPCNN improves the model’s deep semantic features and long-range dependencies, while XLM-RoBERTa, trained on over 100 languages, provides strong multilingual embeddings. We classified XLM-RoBERTa’s last three hidden layers using DPCNN with the original [CLS] token output. Balanced training and reliable predictions were achieved using 5-fold stratified cross-validation and voting. Our model had F1-scores of 0.69 for Kannada (6th place), 0.92 for Malayalam (6th place), and 0.76 for Tamil (3rd place). These results prove our technique works for low-resource languages. Future research will refine the architecture for performance gains.

A multilingual model pre-trained on English tweets and machine translation (MT) for data augmentation is our simple but effective strategy45. Translations into French, German, Spanish, and Italian created five Twitter versions, five folding data. The model was fine-tuned on non-English datasets after pre-training on original and translated English tweets. Overall, this strategy improved results in all languages in our testing. Data enhancement raised Spanish F1PN from 72.7 to 78.2. Overall, multilingual models with pre-training and augmentation had macro F1 scores above 70 in all non-English languages. The results show that multilingual training with MT improves performance on low-resource language datasets.

This work46 presents a gold-standard dataset for Latin poetry sentiment analysis, utilizing eight Horace odes tagged at the sentence level as positive, negative, neutral, or mixed. Three experts annotated with moderate agreement (Cohen’s k = 0.5, Fleiss’s k = 0.48), and positive sentences predominated. A Latin Affectus lexicon-based sentiment analysis method and a zero-shot classification utilizing mBERT and XLM-RoBERTa fine-tuned on the English Go Emotions dataset were tested. The lexicon-based technique had 48% accuracy, highest on positive F1 = 0.62 and worst on mixed F1 = 0.00. Zero-shot classification had 32% (mBERT) and 30% (XLM-RoBERTa) accuracy on the Latin dataset, especially with positive and mixed classes. Both methodologies noted limited lexical coverage, negation neglect, and cross-lingual transfer to Latin poetry.

This work analyzes Kinyarwanda tweet sentiment using transfer learning and English pre-trained transformed based models47. Over 110,000 annotated tweets in 14 African languages were preprocessed and fine-tuned. English models used tweets translated into English. The Twitter-roberta-base-sentiment-latest model performed best on translated tweets with an F1 score of 0.686. An F1 score of 0.644 put KinyaBERT-large ahead of xlm-roberta-base-fine-tuned-kinyarwanda, which scored 0.598. Overall, English pre-trained models outperformed Kinyarwanda models, suggesting resource constraints. The study emphasizes the necessity for labeled data and NLP technologies for underrepresented languages like Kinyarwanda.

Furthermore, the EmoEvalEs@IberLEF2021 project48 focused on classifying Spanish tweets into seven emotion categories. The team utilized XLM-RoBERTa embeddings, combined with a Transformer Encoder and Text CNN for feature extraction, followed by classification through a fully connected layer. The approach ranked 14th, achieving a weighted F1 score of 0.5570 and an accuracy of 0.5368.

This research49 explores multilingual text classification using the XLM-RoBERTa model with transfer learning for Indonesian text categorization. Initially trained on an English News Dataset, the model achieved a Matthew Correlation Coefficient (MCC) of 42.2%. On a larger English dataset (37,886 samples), it performed excellently, with an MCC of 90.8% and accuracy, precision, recall, and F1 scores all at 93.3%. When tested on a substantial Indonesian News Dataset (70,304 samples), it achieved an accuracy of 86.4% and F1 scores of 90.2%. Training on a combined Mixed News Dataset (108,190 samples) further confirmed the model’s robustness across multilingual datasets.

The author introduces50 DCASAM (Deep Context-Aware Sentiment Analysis Model) approach improves aspect-level sentiment categorization by utilizing twin BERT models to extract both local and global contextual data. A CDM layer and conversational attention mechanism enhance local context, whereas a conversational attention encoder assimilates global semantics. The features are integrated and analyzed using DBiLSTM and a compact GCN, facilitating enhanced contextual comprehension. The model attains enhanced accuracy and F1 scores across several datasets, as confirmed by experimental research. DCASAM’s versatility indicates its potential for further applications, encompassing customer reviews and political discourse analysis.

This research presents51 a hybrid deep-learning methodology, RoBERTa-1D-CNN-BiLSTM, for Aspect-Based Sentiment Analysis (ABSA). By employing RoBERTa for feature extraction, 1D-CNN for local dependency capture and BiLSTM for contextual comprehension, the model attains a remarkable accuracy of 92.33% across three benchmark datasets. Experimental findings validate that our methodology exceeds current state-of-the-art techniques in sentiment analysis and product recommendation. The results underscore the model’s applicability in several domains, such as event detection and decision-making frameworks. Subsequent study may concentrate on refining accuracy and broadening the methodology to additional domains for increased efficacy.

This research52 formulated and assessed three hybrid models—RoBERTa-CNN-BiLSTM, BERT-BiLSTM and DistilBERT-BiLSTM—for the analysis of sentiment on Twitter. The integration of transformer embeddings with sequence-based algorithms significantly improves sentiment categorization. DistilBERT-BiLSTM attained the highest accuracy at 81%, succeeded by BERT-BiLSTM at 79% and RoBERTa-CNN-BiLSTM at 77%, illustrating the efficacy of hybrid architectures. Future study may investigate real-time and multilingual sentiment analysis for enhanced optimization.

This research53 assesses sentiment analysis models for twelve low-resource African languages, employing four advanced transformed based models. AfriBERTa proved to be the most proficient model, attaining an F1-score of 81% and an accuracy of 80.9%, surpassing the SemEval-2023 Task 12 and AfriSenti benchmarks. Nonetheless, overfitting persists as an issue, especially in AfriBERTa, underscoring the necessity for data augmentation to enhance generalization. Future study will concentrate on improving adaptation and robustness for sentiment analysis across varied linguistic environments.

This study54 presents a multi-task learning (MTL) methodology integrated with a switch-transformed based shared encoder to improve Arabic sentiment analysis (SA), tackling challenges associated with short sequences and low-resource datasets. Utilizing a mixture of experts (MoE) technique, the model adeptly handles intricate input-output interactions, enhancing adaptability for five-polarity and three-polarity sentiment analysis tasks. The suggested model attains accuracy rates of 84.02% (HARD dataset), 67.89% (BRAD dataset) and 83.91% (LABR dataset), illustrating its efficacy in Arabic dialect sentiment classification. Subsequent research may investigate other improvements to augment generalization across varied datasets.

Table 1 provides a comprehensive overview of the research studies that have utilized XLM-RoBERTa with various languages, including Indian languages.

After doing a thorough literature analysis, it was found that there is a scarcity of research on transformed learning in the Telugu language. Then, we intended to utilize transformed algorithms for the Telugu language.

IndicBERT and DeBERTa were selected over alternative multilingual models because of their proven efficacy in processing Indic languages. IndicBERT is specifically pre-trained on Indian language corpora, while DeBERTa provides superior representation through its disentangled attention mechanism, rendering them especially adept at capturing the linguistic subtleties of Telugu.

The objective of the study is to provide a sentence level SA of the Telugu corpus that has been compiled from several sources. The application of ML techniques has produced promising results. The methodology employed for handling the dataset and conducting its subsequent analysis will be further discussed in the next section.

Methodology

Proposed method

This work introduced an innovative approach for sentence-level sentiment analysis in Telugu by utilizing transformed methodologies in the language. As far as we know, transformed approaches have not been used for Telugu because there is no suitable dataset available. The Telugu language in India is significantly underutilized. Authors predominantly employed ML algorithms in the majority of the publications due to the limited availability of Telugu datasets. We performed an experimental analysis using the IndicBERT, RoBERTa, DeBERTa, and XLM-RoBERTa methodologies. When comparing earlier methodologies, there has been an enhancement in accuracy in specific instances. The researchers employ transformed based models to ascertain the sentiment polarity of sentences at the level, distinguishing between positive and negative.

Telugu has numerous linguistic obstacles that distinguish it from languages commonly employed in training standard transformed based models:

Agglutination (morphological complexity)

Telugu is an agglutinative language in which root words amalgamate with various suffixes to convey grammatical functions (e.g., tense, mood, case, number). This produces lengthy and intricate word forms that are rare in non-agglutinative languages.

Our Approach to This Matter: We utilized sub word tokenization methods (e.g., SentencePiece with byte-pair encoding or unigram language model) that are more effective for managing agglutinative morphology.

This enables the model to acquire significant representations of word segments and mitigates the sparsity resulting from rare word forms.

Dialectal variance

Telugu demonstrates considerable regional dialectal variance throughout Andhra Pradesh and Telangana. Variations in vocabulary, pronunciation, and phrase structure can affect model generalization.

Our Approach to This Matter: We gathered data from many sources encompassing multiple dialects (e.g., newspapers, social media, spoken transcripts) to ensure comprehensive dialectal representation.

Normalization techniques were employed during preprocessing to normalize specific orthographic and phonetic variances while maintaining dialect-specific meanings when applicable.

Code switching and script noise

Telugu speakers sometimes include English or Hindi lexicon in casual communication, particularly on social media platforms. Moreover, the transcription into Latin script is prevalent, resulting in inconsistencies.

Our Approach to This Matter: Sentences exhibiting excessive code switching or non Telugu material were eliminated to preserve linguistic purity when required.

We also instituted script filtering to eliminate or separately handle transliterated and Latin-script information that may perplex the model.

Insufficient annotated resources

In contrast to English, Telugu is deficient in extensive, high-quality annotated corpora, hence constraining the efficacy of supervised models.

Our Approach to This Matter: We somewhat alleviated this by employing cross-domain data augmentation and semi-supervised learning, utilizing unlabeled data in conjunction with a limited number of labeled samples.

We additionally conducted experiments with cross-lingual transfer learning when relevant, initializing models from multilingual checkpoints such as RoBERTa or XLM-R.

Datasets

Description of the dataset

This section outlines the procedure for gathering the Telugu corpus, the pre-processing measures taken and the generation of annotated data for four NLP tasks.

Process of collecting data

We have personally picked several Telugu websites that specifically publish movie reviews and news articles pertaining to business, politics, sports, as well as national and international matters. Information is gathered from Telugu websites such as Makemytrip.com, Facebook.com and Youtube.com. We obtained our corpus of 3,115 reviews by extracting data from these websites. In addition to The Telugu corpus has a vast number of sentences. Every review is stored in the system with a distinct identification, timestamp, username, message, star rating, emoticons, replies and other relevant information.

Pre-processing of the data

In order to guarantee that the input data for our models are of a high quality, the following measures were followed.

Elimination of stop words and sentences

Frequently used stop words, including “since,” “for,” and “so,” were discarded due to their minimal semantic contribution to the text. Furthermore, words comprising exclusively names, localities, or dates were omitted from the dataset, as they lack significant contextual relevance for the tasks involved.

Addressing data imbalance

To mitigate class imbalance, we gathered data from many domains to provide an adequate and representative sample size. Additionally, we employed both over-sampling and under-sampling methodologies throughout testing to equilibrate the class distribution across many tasks.

Removal of non-informative characters

Arrow symbols and Chinese characters were found and eliminated from the corpus to ensure consistency and relevancy of the textual material.

Exclusion of non-semantic phrases

Phrases consisting solely of names, localities, or dates were eliminated due to their lack of substantial semantic relevance for model training.

The elimination of Latin characters

The elimination of Latin characters and number digits was considered necessary, as they were thought irrelevant and frequently unrecognizable within the intended context. Consequently, they were omitted during preprocessing.

The process of data annotation

Annotation protocol

We adhered to task-specific annotation guidelines to provide high-quality labeled data for four distinct NLP tasks. The initial author, a native Telugu speaker, personally annotated the data, ensuring semantic and contextual precision.

-

1.

We curated data from several sectors, including Recipes, Tourism, and Movies, to guarantee diversity in word and sentence structure.

-

2.

Reviews initially in other languages were translated into Telugu via Google Translate, thereafter undergoing manual verification and revision to maintain contextual integrity.

-

3.

In sentiment analysis tasks, each sentence was evaluated for emotional tone, positive, negative, or neutral according to the whole context and diction.

-

4.

For classification tasks, we allocated labels according to the relevance and in formativeness of the text, following explicitly given instructions.

Verification procedure and bias reduction

To augment reliability and mitigate individual bias:

-

1.

A second annotator, a colleague proficient in Telugu, conducted an independent assessment of the labels.

-

2.

Discrepancies were addressed and reconciled through consensus.

-

3.

In the process of dataset refining, we eliminated reviews categorized as neutral or those failing to satisfy the quality criteria, hence concentrating on unequivocal sentiment-bearing samples (only positive and negative).

Inter-annotator concordance

We computed Cohen’s Kappa (κ) score to evaluate annotation consistency on a representative portion of the data annotated by both annotators. The attained score was [insert κ value, e.g., 0.81], signifying considerable concordance. This metric has been incorporated into the updated paper to substantiate the correctness of our observations.

In addition, we provide accurate labels for incorrectly annotated samples and provide an explanation for the chosen label. We repeat the aforementioned processes three times in order to guarantee that the level of accuracy and thoroughness of annotations reaches a good result.

Although pre-trained transformed based models (e.g., BERT, RoBERTa, XLM-RoBERTa) are potent instruments commonly employed in NLP tasks, our research substantially extends their conventional usage in the following manners:

Domain-specific fine-tuning

We refined transformed based models using a meticulously selected domain-specific dataset, allowing them to grasp contextual subtleties and specialized terminology absent in generic corpora.

Tailored preprocessing pipeline

Our preprocessing is specifically crafted for our application, encompassing the elimination of non-semantic elements (e.g., isolated names, locations, digits, special characters) and extraneous sentences guaranteeing that only significant and high-quality textual data is utilized for training.

Mitigating class imbalance

We employed both over-sampling and under-sampling methodologies to equilibrate classes during training. This stage is essential and frequently neglected in conventional applications, especially when addressing real-world imbalanced datasets.

Goal-Specific adaptation

The transformer architecture was tailored for our particular downstream goal by altering its classification head and integrating supplementary task-relevant information to enhance performance.

Cross-domain validation

Our model was assessed using data from many domains to guarantee generalizability and robustness, surpassing the conventional single-dataset evaluations.

Data statistics

Table 2 displays the exhaustive statistics of the data in every domain for every task. According to Table 2, there are 1009 reviews in the movie domain. In the field of tourism, we have collected 1085 reviews, and in the cooking domain, we have collected 1021 reviews. Therefore, the overall corpus consists of 3115 reviews, which translates to thousands of sentences. Table 3 displays several example sentences illustrating the polarity of a particular sentence. Table 4 describes the gold standard criteria for assessment.

In this work we used IndicBERT, RoBERTa, DeBERTa and XLM-RobBERTa to extract the SA in Telugu language. Transformed based models, originating from the seminal “Attention is All You Need” paper by Vaswani et al. in 2017, represent a paradigm shift NLP with their self-attention mechanisms that enable efficient and scalable processing of sequential data. These models have evolved into various specialized forms, each enhancing the foundational architecture to address specific needs. RoBERTa improves training efficiency and performance by optimizing BERT’s pre-training process. DeBERTa introduces advanced techniques like disentangled attention to better capture word relationships. XLM-RoBERTa extends these capabilities to a multilingual context, supporting a wide array of languages simultaneously. IndicBERT, tailored for Indic languages, leverages ALBERT’s efficiency to cater to the linguistic diversity of the Indian subcontinent. Together, these transformer variants demonstrate the versatility and adaptability of the transformed architecture in pushing the boundaries of NLP across different languages and applications. The following Fig. 1 describes the general flow of architecture.

General Architecture of XLM-RoBERTa for Multilingual NLP Tasks.

Transformers have revolutionized SA by leveraging their advanced self-attention mechanisms and contextual understanding to interpret the emotional tone of text. By capturing long-range dependencies and nuanced word relationships, transformers excel in understanding complex sentiment expressions. Pre-trained models like IndicBERT, RoBERTa, DeBERTa and XLM-RoBERTa fine-tuned on specific SA datasets, harness extensive language knowledge, enabling precise sentiment detection even with limited task-specific data. Additionally, their robustness to linguistic variations and capability to generalize across diverse data sources make them highly effective. For multilingual SA, models like XLM-RoBERTa extend these benefits across various languages, providing comprehensive SA solutions globally. While models like RoBERTa and DeBERTa focus on improving performance and robustness in a monolingual or multilingual context, IndicBERT and XLM-RoBERTa specifically address the needs of multilingual and cross-lingual tasks. IndicBERT focuses on Indic languages, while XLM-RoBERTa is designed for a broad set of languages globally.

This research investigates the use of BERT and XLM-RoBERTa for various NLP tasks, highlighting XLM-R as more effective than multilingual BERT due to its larger training corpus of around 100 languages. The study uses the xlm-roberta-large checkpoint and proposes fine-tuning techniques for both models, particularly for low-resource languages like Bengali. Fine-tuning allows for efficient training with limited data and reduces training time by avoiding the need to retrain all layers from scratch. All models were sourced from HuggingFace.

Vaswani, A., Shazeer, N., Parmar, N., Uszkoreit, J., Jones, L., Gomez, A. N., & Polosukhin, I. (2017). Attention is all you need. Advances in neural information processing systems, 30. Jacob Devlin, Ming-Wei Chang, Kenton Lee and Kristina Toutanova. 2019. BERT: Pre-training of Deep Bidirectional Transformers for Language Understanding. In Proceedings of the 2019 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, Volume 1 (Long and Short Papers), pages 4171–4186, Minneapolis, MN, USA. Association for Computational Linguistics.

Conneau et al. (2020) in their paper “Unsupervised Cross-Lingual Representation Learning at Scale” introduce a method for unsupervised cross-lingual representation learning that does not require parallel data. The approach uses large multilingual datasets to create representations that work across various languages. The method was evaluated on multiple tasks and showed significant performance improvements, advancing the field of cross-lingual NLP. The study was presented at the 58th Annual Meeting of the Association for Computational Linguistics.

XLM-R transformed architecture is utilized for sentiment classification

For this work, we utilize Hugging Face’s implementation of the XLM-RoBERTa model. This model is based on the XLM training approach and incorporates the concepts of RoBERTa, enhancing its effectiveness for cross-language training tasks. Our network consists of 12 layers in the network layer, 768 levels in the hidden layer and 12 self-attention heads. The XLM-RoBERTa model transforms the input labeled corpus into feature maps that incorporate global feature information. We observe that the layer has a substantial number of parameters, although the frequency of parameter changes is quite low. This scenario is expected to result in the model overfitting and producing an unacceptable final classification outcome. Consequently, we utilize the XLM-RoBERTa model’s output as the input for the Transformed Encoder layer. This involves using the encoder to extract additional features from the information in the preceding layer. Due to the lower size of the parameters in the Transformed Encoder layer compared to the prior model, we see significant changes in its parameters during training and increased sensitivity to variations in the input data. This strategy successfully mitigates the problem of over-fitting in the findings and improves the overall ability of the model to generalize. The Transformed Encoder layer we have built consists of a single Transformed encoding block.

Algorithm

XLM-R is a transformed based multilingual masked language model that was launched by the Facebook AI team in November 2019. It is an updated version of the previous XLM-100 model. The sentence encoder is a cross-lingual model that has been trained on 2.5 terabytes of data from Common Crawl documents, covering 100 different languages. The primary enhancement of XLM-Roberta, in comparison to its initial iteration, is a substantial augmentation in the volume of training data18.

XLM-R outperforms other models on several cross-lingual benchmarks. The masked language model serves as a viable substitute for non-English NLP and has proven to be effective. An outstanding feature of XLM-R is its compatibility with both monolingual and cross-lingual benchmarks, effectively addressing the challenges posed by multilinguality. For this study, we initially optimize the pre-trained XML-R base method with the Sentikanna dataset. The XML-R cross-lingual transformed based model is used to train the classification model on Telugu, a low-resource language. The approach accurately predicts binary classification, namely positive and negative classes, in the resource-limited Telugu language.

We employ the XLM-R base architecture. The model is composed of around 355 million parameters, featuring 24 layers, 1,027 hidden states, 4,096 feed-forward hidden states, and 16 heads. The largest sequence length can be adjusted to the standard value of 512. This indicates that the algorithm has been able to handle input sequences that contain a maximum of 512 tokens and generate the corresponding sequence representation as the output. The initial word in the sequence is consistently [CLS], which encompasses the distinctive categorization embedding39,55. The initial token in each sequence is consistently a distinctive categorization token ([CLS]). The ultimate latent state, h, associated with this token is utilized as the comprehensive sequence encoding for categorization purposes. The XLM-R model is enhanced with an elementary softmax learner to calculate the probability of label c. This is illustrated in Eq. (2), in which W denotes the parameter matrix specific to the job.

We optimize the parameters of both XLM-R and W together by maximizing the algorithm of the likelihood of the proper label. Figure 2 displays the structure of the sentiment categorization architecture.

XLM- RoBERTa Architecture for Telugu Sentiment Classification.

Distinctions from XLM-R’s native encoder

Position inside the pipeline

XLM-RoBERTa comprises 12 (base) or 24 (large) transformed encoder layers inside its architecture, pretrained on multilingual datasets.

We incorporate our proprietary Transformed Encoder after the XLM-RoBERTa output to reprocess the contextual embeddings generated by the pretrained model.

Objective and operation

The native encoders of XLM-R are predominantly static or exhibit little parameter adjustments when fine-tuned on limited datasets, resulting in potential overfitting. Our bespoke layer adds an extra dimension of feature refinement, enhancing adaptability and generalization for Telugu sentiment categorization.

Architectural design

The unique Transformed Encoder comprises a singular encoding block, in contrast to the extensive stack utilized in XLM-R. It is lightweight and possesses fewer parameters, enabling it to capture nuanced variations in the domain-specific Telugu data.

Training conduct

The parameters of our Transformed Encoder undergo substantial alterations during training, indicating that the layer proficiently acquires task-specific patterns. This contrasts with the very static activity exhibited in the deeper layers of XLM-R during training on our dataset.

Results and discussion

In recent years, transformed based models have exhibited exceptional efficacy in numerous natural language processing (NLP) tasks. XLM-RoBERTa, an extension of RoBERTa tailored for multilingual text, has garnered considerable attention for its proficiency in managing multiple linguistic structures successfully. Nonetheless, the efficacy of such models may fluctuate based on the domain and intricacy of the dataset.

This study assesses the efficacy of XLM-RoBERTa on three distinct datasets, Movie, Tourism, and Recipe, by examining its Accuracy and F Score. The objective is to evaluate the model’s generalization across various text domains and to pinpoint potential areas for enhancement. Through the comparison of performance measures, we offer insights into the model’s strengths and shortcomings across several linguistic situations.

Metrics for performance

To evaluate the effectiveness of all the transformed based algorithms, the accuracy measurement has been used. In order to get optimal outcomes, we performed fine-tuning on the models and adjusted the hyperparameters. The hyperparameters used and changed are presented in the form of Table 4.

Accuracy is a metric used to assess the exactness of an algorithm. In Eq. (2), the accuracy equation can be expressed.

The F-measure is an accumulated factor that is used to look at how recall and precision affect total accuracy. F-score is the most common F-measure. In Eq. (3), the F-measure equation can be expressed.

Parameter setting

The hyperparameters employed in training the XLM-RoBERTa model were meticulously chosen to enhance performance and efficiency. An embedding size of 128 was used to optimize the trade-off between significant word representations and computational efficiency. A dropout rate of 0.3 was implemented to mitigate overfitting by randomly deactivating neurons during the training process. The model was trained using a batch size of 16, which ensured steady gradient updates and preserved computational efficiency. The Adam optimizer was employed for efficient weight modifications, utilizing adaptive learning rates and momentum to enhance convergence. Training was performed for a maximum of 15 epochs, facilitating enough learning while mitigating overfitting. These hyperparameters collectively guarantee robust performance across many datasets while preserving generalization capabilities. Table 5 illustrates the configuration of hyperparameters.

The custom encoder was trained with a 128-dimensional embedding size, a dropout rate of 0.3, a batch size of 16, and optimized using the Adam optimizer for 15 epochs. Under these lightweight configurations, the model exhibited a low computational footprint, enabling training on a single GPU (e.g., NVIDIA V100) within an acceptable duration, while still attaining competitive performance in sentiment classification.

The subsequent tables display the outcomes of an assessment conducted using the IndicBERT, RoBERTa, DeBERTa, and XLM-RoBERTa Classifier techniques that were suggested. The attained values for these approaches are displayed. Results were obtained by using multiple datasets, and multiple transformed based models are presented in Tables 6 and 7. After conducting a thorough comparison of all transformed based models, we discovered that the XLM-RoBERTa algorithm produced the most precise outcomes, as shown in Table 8. A comparison of all our datasets with the Tamil language using the XLM-RoBERTa classifier is described in Table 9.

Figures 3 and 4 display the bar graph illustrating the accuracy and F-score of the three Telugu datasets with all transformed models. A comparison of the accuracy and F-score bar graphs for the three Telugu datasets using XLM-RoBERTa is shown in Fig. 5. A comparison of all our datasets with the Tamil language using the XLM-RoBERTa classifier is shown in Fig. 6.

Comparative evaluation of all models’ Accuracy with three datasets.

Comparative evaluation of all models F-score with three datasets.

Comparative assessment of the accuracy and F-score of all datasets using XLM–RoBERTa model.

Comparative assessment of the accuracy and F-score of all datasets using XLM–RoBERTa model and the existing Tamil dataset.

The pre-trained model used in this project was acquired through the transformers hugging face collection and then optimized for training. To assess the efficacy of the categorization model, performed tests on three separate datasets: The Movie, tourist, and recipe review datasets. The Sentikanna Telugu corpora were partitioned into a set to be used for training and a set for validation using an 80:20 split. The classification model was then fine-tuned by manually adjusting the learning rate and optimal no. of epochs to improve efficiency on the validation set. The process of training XLM-RoBERTa on the Sentikanna data took around 190 min.

XLM-RoBERTa exhibits strong performance across several datasets, achieving exceptional results in structured text forms like recipes. Nonetheless, its marginally inferior performance in the tourism sector underscores the necessity for more refinement or domain-specific modifications. Future research may investigate model improvements by domain-adaptive pretraining to promote robustness across diverse datasets.

We recognize that, despite meticulous curation, the Sentikanna dataset may exhibit domain-specific, topical, or sentiment biases due to the characteristics of publicly published user evaluations (e.g., an abundance of favorable reviews in the tourist sector or bad reviews in film critiques).

-

Equitable sampling across three principal domains: Recipes, Tourism, and Movies, to enhance content diversity.

-

Manual annotation was performed by native Telugu speakers attuned to regional and cultural nuances.

-

We integrated both official and informal language, encompassing a variety of phrase patterns and tones.

-

Both positive and negative attitudes were systematically filtered to prevent overwhelming the class majority.

Constraints of pre-trained models (e.g., XLM-R) for telugu

Data Bias in Pre-Training Corpus XLM-R was trained on a multilingual corpus derived from Common Crawl. The representation of Telugu is rather restricted and frequently derived from unreliable web sources (e.g., blogs, forums), which may not accurately represent formal or specialized usage.

This may result in skewed representations or suboptimal performance for specific dialects or syntactic constructions.

Dialectal and regional variants

Telugu demonstrates significant dialectal diversity across several places. Nonetheless, the model does not explicitly consider this. Consequently, statements composed in colloquial or region-specific dialects may yield erroneous predictions.

Challenges of tokenization

Subword tokenizers, such as Sentence Piece, utilized in XLM-R, can decompose morphologically intricate Telugu words, potentially diminishing contextual comprehension.

Model dimensions and interpretability

Transformed based models, particularly big variants such as XLM-R, are resource-intensive and provide limited interpretability, complicating the identification of specific faults related to linguistic occurrences in Telugu.

Partial mitigation strategies

We mitigated several of these constraints by manually curating and annotating a dataset with diverse domains. Incorporating a bespoke Transformed Encoder layer to enhance the understanding of Telugu-specific semantics. Implementing stratified sampling in dialect-influenced areas to ensure balanced representation.

Novelty of this work

This work is innovative in its emphasis on Telugu, a morphologically intricate and low-resource language that poses distinct challenges for natural language processing due to its complicated word formations, inflections, and syntactic variants. This paper presents a substantial, domain-diverse, sentence-level sentiment analysis dataset specifically for Telugu, in contrast to prior research that mostly focuses on high-resource languages. This represents the inaugural attempt to create a comprehensive dataset and to evaluate a diverse array of transformed based models, including IndicBERT, RoBERTa, DeBERTa, and XLM-RoBERTa, on it. These models, equipped with self-attention mechanisms and the ability to capture long-range dependencies and subtle contextual nuances, are particularly adept at analyzing the complexities of Telugu sentiment expressions, including sarcasm, idioms, and polarity shifts. This study develops a novel basis for sentiment analysis in Telugu and provides significant insights into the usability of sophisticated transformed based models in low-resource language settings.

Conclusions

Every day, social media generates vast amounts of unorganized, multilingual, and multimodal data. Indeed, there is a clear and undeniable trend of social intelligence growing on these platforms. However, the diverse range of content is a challenge in terms of analyzing and comprehending thoughts. To address the analysis of sentiment at the sentence level in a scenario where resources are limited in the Telugu language, the corpus was generated using diverse resources in the Telugu language. The authors utilized all of the specified transformed algorithms to extract sentiment from the Telugu corpus. Remarkably, XLM-R outperforms the best models with an accuracy of 79.42 on the recipe benchmark dataset compared to other models. XLR-RoBERTA surpasses the state-of-the-art performance on the Movie dataset as well. Future work entails including additional regional language test datasets for categorization. Furthermore, it is worth noting that the present datasets used for training and testing are specifically designed for analyzing sentiment at the sentence level.

Future research can include more regional languages to analyze sentiment across low-resource languages. More domains and larger datasets can improve model generalizability in the “Sentikanna” dataset. Advanced transformed designs like GPT or T5 could be used for more complex sentiment analysis and social platform code-mixed language data management. Multi-modal sentiment analysis, text, photos, and audio is another intriguing option. Sentiment analysis, like emotion or sarcasm detection, could expand the scope. Developing practical applications like sentiment-based recommendation systems or real-time social media monitoring tools will also benefit regional language NLP. We want to enhance our work to fine-grained sentiment categories (e.g., very positive, sarcastic, uncertain) for future work.

Data availability

The datasets used and analyzed during the current study are available at http://ammapro.in/sai/kanna/dataset.csv and are available from the corresponding author on reasonable request.

References

Kaity, Mohammed, and Vimala Balakrishnan. "Sentiment lexicons and non-English languages: a survey." Knowledge and Information Systems 62, no. 12 (2020): 4445-4480.

Singh, N. K., Tomar, D. S. & Sangaiah, A. K. Sentiment analysis: a review and comparative analysis over social media. J. Ambient Intell. Humaniz. Comput. 11 (1), 97–117 (2020).

Hajiali, M. Big data and sentiment analysis: A comprehensive and systematic literature review. Concurrency Computation: Pract. Experience. 32 (14), e5671 (2020).

Akshi Kumar and Arunima Jaiswal. A deep swarm-optimized model for leveraging industrial data analytics in cognitive manufacturing. IEEE Trans. Industr. Info. 17, 4 (2020), 2938–2946. (2020). https://doi.org/10.1109/TII.2020.3005532

Anita & CN Subalalitha. and. Building discourse parser for Thirukkural. In Proceedings of the 16th International Conference on Natural Language Processing, pages 18–25. (2019).

Reddy Naidu 2018.Building SentiPhraseNet for Sentiment Analysis in Telugu, Council International Conference 15th India.

Reddy Naidu, S. K. & Bharti Korra Sathya Babu, and Ramesh Kumar Mohapatra (Sentiment analysis using telugu sentiwordnet, 2017).

TeluguRank.Ethnologue list. [Online]. Available: https://www.ethnologue.com/statistics/size

Suryachandra, P. & Reddy, P. V. S. Statistical approaches in parsing for Telugu language, International Conference on Communication and electronic systems [5] and Electronics Systems (ICCES), Coimbatore, 2016, pp. 1–5. (2016).

Bharti, S. K., Naidu, R. & Babu, K. S. Dynamic SentiPhraseNet to support Sentiment Analysis in Telugu Mathematical Modeling, Computational Intelligence Techniques and Renewable Energy: Proceedings of the First International Conference, MMCITRE 2020and Electronics Systems (ICCES).

Rama Rohit Reddy Gangula and Radhika Mamidi. Resource creation towards automated sentiment analysis in Telugu (a low resource language) and integrating multiple domain sources to enhance sentiment prediction. In Proceedings of the Eleventh International Conference on Language Resources and Evaluation (LREC 2018), Paris, France. European Language Resources Association (ELRA) (2018).

Mukku, S. S., Choudhary, N. & Mamidi, R. Enhanced sentiment classification of Telugu text using ML techniques. In Proceedings of the 4th Workshop on Sentiment Analysis where AI meets Psychology (SAAIP 2016) collocated with 25th International Joint Conference on Artificial Intelligence (IJCAI 2016), New York City, USA, July 10, 2016, pages 29–34. (2016).

Sreekavitha Parupalli, V. A., Rao & Mamidi, R. Bcsat: A benchmark corpus for sentiment analysis in Telugu using word-level annotations, in Proceedings of ACL 2018, Student Research Workshop. pp. 99–104, Association for Computational Linguistics 3. (2018).

Srikiran Boddupalli, A. S., Saranya, U., Mundra, P. & Dasam Sentiment Analysis of Telugu data and comparing advanced ensemble techniques using different text processing methods, 5th International Conference on Computing Communication Control and Automation (ICCUBEA)-2019.

Tammina, S. A Hybrid Learning approach for Sentiment Classification in Telugu Language, International Conference on Artificial Intelligence and Signal Processing (AISP)2020.

Mukku, S. S., Oota, S. R. & Mamidi, R. Tag me a label with multi-arm: Active learning for telugu sentiment analysis. In International Conference on Big Data Analytics and Knowledge Discovery. Springer, 355–367. (2017).

Piotr Bojanowski, E., Grave, A., Joulin & Mikolov, T. Enriching word vectors with subword information. Transactions of the Association for Computational Linguistics 5 (2017), 135–146. (2017).

Benjamin Heinzerling and Michael Strube. BPEmb: Tokenization-free Pre-trained Subword Embeddings in 275 Languages. In Proceedings of the Eleventh International Conference on Language Resources and Evaluation (LREC 2018). European Language Resources Association (ELRA), Miyazaki, Japan. (2018).

Barriere V, Balahur A. Improving sentiment analysis over non-English tweets using multilingual transformers and automatic translation for data-augmentation. arXiv preprint arXiv:2010.03486. 2020 Oct 7.

Karthikeyan, K., Wang, Z., Mayhew, S. & Roth, D. Cross-lingual ability of multilingual BERT: An empirical study. In Proceedings of the International Conference on Learning Representations (ICLR’20). (2020).

Alexis Conneau, K. et al. Edouard Grave, Myle Ott, Luke Zettlemoyer, and Veselin Stoyanov (Unsupervised cross-lingual representation learning at scale, 2019).

Garapati, A., Bora, N., Balla, H. & Sai, M. SentiPhraseNet: an extended SentiWordNet approach for Telugu sentiment analysis. Int. J. Adv. Res. Ideas Innovations Technol. 5 (2), 433–436 (2019).

Mukku, S. S. & Mamidi, R. Actsa: Annotated corpus for telugu sentiment analysis. In Proceedings of the First Workshop on Building Linguistically Generalizable NLP Systems (pp. 54–58). (2017), September.

Shalini, K., Ravikurnar, A., Reddy, A. & Soman, K. P. Sentiment analysis of indian languages using convolutional neural networks. In 2018 International Conference on Computer Communication and Informatics (ICCCI) (pp. 1–4). IEEE. (2018), January.

Padmaja, S., Bandu, S. spsampsps Fatima, S. S. Text processing of Telugu–English code mixed languages. In Advances in Decision Sciences, Image Processing, Security and Computer Vision: International Conference on Emerging Trends in Engineering (ICETE), Vol. 1 (pp. 147–155). Springer International Publishing. (2020).

Garapati, A., Bora, N., Balla, H. & Sai, M. SentiPhraseNet: An extended SentiWordNet approach for Telugu sentiment analysis. (2019).

Regatte, Y. R., Gangula, R. R. R. & Mamidi, R. May. Dataset Creation and Evaluation of Aspect Based Sentiment Analysis in Telugu, a Low Resource Language. In Proceedings of The 12th Language Resources and Evaluation Conference (pp. 5017–5024). (2020).

Kumar RG, Shriram R. Sentiment analysis using bi-directional recurrent neural network for Telugu movies. International Journal of Innovative Technology and Exploring Engineering. 2019;9(2):241-5.

Chattu K, Sumathi D. Sentiment Analysis Using Deep Learning Approaches on Multi-Domain Dataset in Telugu Language. Journal of Information & Knowledge Management. 2024 Jun 10;23(03):2450018.

Chattu, K. & Sumathi, D. Sentiment Classification of Low Resource Language Tweets Using Machine Learning Algorithms. In 2023 2nd International Conference on Edge Computing and Applications (ICECAA) (pp. 1055–1061). IEEE. (2023), July.

Chattu, K. & Sumathi, D. Corpus creation in telugu: sentiment classification using ensemble approaches. SN Comput. Sci. 4 (6), 860 (2023).

Sentiment Analysis Using, X. L. M. R. Transformer and Zero-shot Transfer Learning on Resource-poor Indian Language, AKSHI KUMAR, Department of Computer Science & Engineering, Delhi Technological University, New Delhi, India VICTOR HUGO C. ALBUQUERQUE, Laboratory of Industrial Informatics, Electronics and Health, University of Fortaleza (UNIFOR).

Shanmugavadivel K, Sathishkumar VE, Raja S, Lingaiah TB, Neelakandan S, Subramanian M. Deep learning based sentiment analysis and offensive language identification on multilingual code-mixed data. Scientific Reports. 2022 Dec 13;12(1):21557.

Sentiment Analysis on Dravidian Code-Mixed YouTube Comments using Paraphrase XLM-RoBERTa Model Yandrapati Prakash Babu, Rajagopal Eswari, FIRE 2021: Forum for Information Retrieval Evaluation, December 13–17. India (2021).

Sultan A, Salim M, Gaber A, Hosary IE. WESSA at SemEval-2020 Task 9: Code-mixed sentiment analysis using transformers. arXiv preprint arXiv:2009.09879. 2020 Sep 21.

UPB at SemEval-2020. Task 9: Identifying Sentiment in Code-Mixed Social Media Texts using Transformers and Multi-Task Learning George-Eduard Zaharia, George-Alexandru Vlad, Dumitru-Clementin Cercel, Traian Rebedea, Costin-Gabriel Chiru University Politehnica of Bucharest, Faculty of Automatic Control and Computers {george.zaharia0806,george.vlad0108}@stud.acs.pub.ro, Proceedings of the 14th International Workshop on Semantic Evaluation, pages 1322–1330 Barcelona, Spain (Online), December 12, 2020.

Task-Specific Pre-Training and Cross Lingual Transfer for Code-Switched Data Akshat Gupta. Sai Krishna Rallabandi, Alan Black Carnegie Mellon University, Proceedings of the First Workshop on Speech and Language Technologies for Dravidian Languages, pages 73–79 April 20, 2021 ©2021 Association for Computational Linguistics.

Sentiment Analysis For Bengali Using Transformer Based Models Anirban Bhowmick Universität Hamburg anirbanbhowmick88@gmail.com Abhik Jana Universität Hamburg abhikjana1@gmail.com, Proceedings of the 18th International Conference on Natural Language Processing, pages 481–486 Silchar, India. December 16–19. ©2021 NLP Association of India (NLPAI) (2021).

Janvi Prasad, G., Prasad & Gunavathi, C. GJG@TamilNLP-ACL2022: Emotion Analysis and Classification in Tamil using Transformers. In Proceedings of the Second Workshop on Speech and Language Technologies for Dravidian Languages, pages 86–92, Dublin, Ireland. Association for Computational Linguistics. (2022).

Francesco Barbieri, L. E., Anke & Jose Camacho-Collados XLM-T: Multilingual Language Models in Twitter for Sentiment Analysis and Beyond. In Proceedings of the Thirteenth Language Resources and Evaluation Conference, pages 258–266, Marseille, France. European Language Resources Association. (2022).

Chakravarthi BR, Priyadharshini R, Thavareesan S, Chinnappa D, Thenmozhi D, Sherly E, McCrae JP, Hande A, Ponnusamy R, Banerjee S, Vasantharajan C. Findings of the sentiment analysis of dravidian languages in code-mixed text. arXiv preprint arXiv:2111.09811. 2021 Nov 18.

Younas, A., Nasim, R., Ali, S., Wang, G. & Qi, F. Sentiment Analysis of Code-Mixed Roman Urdu-English Social Media Text using Deep Learning Approaches, IEEE 23rd International Conference on Computational Science and Engineering (CSE), Guangzhou, China, 2020, pp. 66–71., Guangzhou, China, 2020, pp. 66–71. (2020).

Ou X, Li H. YNU@ Dravidian-CodeMix-FIRE2020: XLM-RoBERTa for Multi-language Sentiment Analysis. InFIRE (Working Notes) volume 2826 ,2020 Dec (pp. 560-565).

Zhao, Y. & Tao, X. ZYJ123@ DravidianLangTech-EACL2021: Offensive language identification based on XLM-RoBERTa with DPCNN. In Proceedings of the first workshop on speech and language technologies for dravidian languages (pp. 216–221). (2021), April.

Barriere V, Balahur A. Improving sentiment analysis over non-English tweets using multilingual transformers and automatic translation for data-augmentation. arXiv preprint arXiv:2010.03486. 2020 Oct 7.

Sprugnoli, R., Mambrini, F., Passarotti, M. & Moretti, G. Sentiment analysis of latin poetry: First experiments on the odes of horace. Computational Linguistics CliC-it 2021, 314. (2022).

Byakunda, R. Transfer Learning on Kinyarwanda Tweets Sentiment Analysis. (2023).

Qu, S., Yang, Y. & Que, Q. Emotion Classification for Spanish with XLM-RoBERTa and TextCNN. In IberLEF@ SEPLN (pp. 94–100). (2021).

Wiciaputra YK, Young JC, Rusli A. Bilingual Text Classification in English and Indonesian via Transfer Learning using XLM-RoBERTa. International Journal of Advances in Soft Computing & Its Applications. 2021 Nov 1;(PP 73-87)13(3).

Alturayeif, N. & Ahmad, I. EASE: an enhanced active learning framework for aspect-based sentiment analysis based on sample diversity and data augmentation. Expert Syst. Appl. 261, 125525 (2025).

Rana, M. R. R., Nawaz, A., Ali, T., Alattas, A. S. & AbdElminaam, D. S. Sentiment analysis of product reviews using transformer enhanced 1D-CNN and BiLSTM. Cybernetics Inform. Technol. 24 (3), 112–131 (2024).

Bouassida, Y. & Mezali, H. Enhancing Twitter sentiment analysis using hybrid transformer and sequence models. Japan J. Res. 6 (1), 089 (2025).

Aliyu Y, Sarlan A, Danyaro KU, Rahman AS, Abdullahi M. Sentiment analysis in low-resource settings: a comprehensive review of approaches, languages, and data sources. IEEE Access. 2024 May 8;12:PP.66883-909.

Baniata, L. H. & Kang, S. Switch-Transformer sentiment analysis model for Arabic dialects that utilizes a mixture of experts mechanism. Mathematics 12 (2), 242 (2024).

Bansal S. Nature-inspired-based multi-objective hybrid algorithms to find near-OGRs for optical WDM systems and their comparison. In Handbook of research on biomimicry in information retrieval and knowledge management 2018 (pp. 175-211). IGI Global.

Acknowledgements

The authors acknowledge that the research Universiti Grant, Universiti Kebangsaan Malaysia, Geran Translasi: UKM-TR2024-10 conducting the research work. Moreover, this research acknowledges Princess Nourah bint Abdulrahman University Researchers Supporting Project number (PNURSP2025R10), Princess Nourah bint Abdulrahman University, Riyadh, Saudi Arabia.

Author information

Authors and Affiliations

Contributions

K Chattu, K A N Reddy, S b veesam, and P S Chirumamilla made substantial contributions to design, analysis and characterization. V D Babu, S Bansal, K Prakash, participated in the conception, application and critical revision of the article for important intellectual content. M R I Faruque and K S A Mugren provided necessary instructions for analytical expression, data analysis for practical use and critical revision of the article purposes.

Corresponding authors

Ethics declarations

Competing interests

The authors declare no competing interests.

Conflict of interest

The authors declare no conflicts of interest.

Additional information