Abstract

Spatial interpolation is a frequent issue in geosciences, where the estimation of values of a variable of interest at unsampled locations is sought from some spatial samples. The techniques most frequently employed to address this issue, such as those considered in geostatistics, require an effort of modelling and characterisation of statistics. This has limited a greater use of these techniques in disciplines that work with spatial or spatio-temporal information. This paper presents a novel spatial analysis technique, which is an extension of a previously proposed ensemble spatial interpolation model. It aims to provide a methodology that is as data-driven as possible, useful for a more general geoscientific (or expert) audience, and capable of providing quality estimates without the need for specific classical geostatistical expertise, such as variographic analysis. Additionally, a reinterpretation of the ensemble spatial interpolation algorithm is presented as a generative Bayesian model, which offers a simple and insightful reinterpretation of the concept of spatial interpolation in general. Finally, an extensive series of experiments, using both real and synthetic data, is presented to test the limits of the proposed model in very demanding scenarios, comparing it with a traditional geostatistical model. The results obtained verify a good performance in the ability to capture the relevant spatial aspects, even in challenging conditions such as non-stationary cases or when there are few samples to perform the inference. In turn, the level of errors in validation contexts is similar to those obtained with traditional geostatistics (Ordinary Kriging method), in synthetic contexts that are suitable for the use of geostatistical techniques. In future work, further research can be considered to improve local spatial characterisation, as well as to use the proposed technique in spatial 3D case studies.

Similar content being viewed by others

Introduction

Spatial interpolation is a fundamental task in various branches of the geosciences, aiming to estimate the values of a variable at unmeasured locations based on data collected from neighbouring sites. Among the different spatial interpolation methods, Kriging stands out as a pioneering technique, initially developed for resource estimation in the mining industry, particularly for estimating gold reserves1,2. Its importance stems from its ability to provide accurate spatial estimates by incorporating the spatial correlation structure of the data.

Kriging is widely recognised as an unbiased linear estimator designed to minimize estimation error, and is often referred to as the Best Linear Unbiased Estimator (BLUE)3,4,5. One of Kriging’s key advantages is its stochastic nature, which allows for the calculation of the variance of the estimates, enabling the quantification of uncertainty in spatial predictions4,5. Over the years, these characteristics have established Kriging as the preferred method in geoscientific applications, providing superior performance compared to other interpolation methods such as Nearest Neighbour, Inverse Distance Weighting (IDW), and Splines3,6. In particular, it has become the standard method for resource estimation in the mining industry5.

Despite its success, Kriging exhibits some limitations, especially when applied to fields beyond resource estimation, such as soil property analysis7,8,9, groundwater level monitoring4,10, groundwater contamination detection11,12, seismic intensity assessment13, and rainfall prediction14. These applications have not fully embraced Kriging, primarily due to its sensitivity to parameter selection11,15,16. Incorrect parameter choices can lead to increased bias and uncertainty5,17, while the requirement for expert knowledge on spatial continuity introduces an entry barrier for many potential users8,18. Additionally, Kriging assumes stationarity of the spatial process, a condition that is often difficult to assess and unlikely to hold in real-world scenarios5,16,19. This assumption necessitates the use of complex modelling techniques that can reduce the overall accuracy of predictions11,19,20. Furthermore, in dynamic, real-time applications, such as meteorology or precision agriculture, where spatial structures evolve over time and data availability fluctuates, Kriging requires frequent re-evaluation of parameters, which can limit its practicality.

In short, the considerable technical knowledge and manual effort required to apply Kriging correctly is often not feasible in many practical contexts. To overcome these challenges, alternative spatial interpolation methods have been proposed that require minimal user input6,18,20 or that are capable of handling complex, non-stationary spatial structures3,16,20,21. Machine learning methods have been explored as more flexible and accessible alternatives6,8,20. However, most of these methods often do not capture the underlying spatial correlations, which deteriorates predictive performance3,22.

In response to these limitations, we introduce Adaptive Ensemble Spatial Interpolation (Adaptive ESI), an extension of the Ensemble Spatial Interpolation (ESI) model, independently proposed by Menafoglio et al.21 and Egaña et al.23. The ESI model provides a flexible, data-driven framework for spatial prediction across a wide range of geoscientific applications, regardless of user experience or the spatio-temporal complexity of the data. Whilst it was originally presented as an ensemble learning framework21,23, this paper proposes a reinterpretation of ESI as a Bayesian generative model. In this regard, in this paper we highlight that ESI actually estimates the posterior predictive distribution in unsampled locations without the need for assumptions of stationarity or manual modelling of spatial continuity. The proposed Adaptive ESI model retains the Bayesian ensemble scheme of ESI, where a stochastic space partitioning process serves as prior and local interpolators within relevant partition elements function as likelihood, with posterior estimates obtained by aggregating predictions across different partitions. The novelty lies in making these local interpolators adaptive, systematically exploring the optimal characterisation of spatial continuity within each partition cell.

The objective of this study is to evaluate the potential of Adaptive ESI, and to verify whether it can offer the same advantages as Kriging—such as unbiasedness, robustness and provision of uncertainty estimates—while significantly improving accessibility. Through a series of case studies, we compare the performance of Adaptive ESI with that of Ordinary Kriging, assessing their relative effectiveness and the extent to which user input influences the results. The article is then organised as follows: the next section outlines the proposed methodology applied in this study; the third section presents the design of experiments used; the fourth section provides the results obtained from the experiments; the fifth section offers a discussion of relevant aspects or findings; and the sixth section mentions conclusions and possible directions for future research.

Methods

Ensemble spatial interpolation

In general, ensemble methodologies seek a robust characterisation of an analysed variable by combining responses obtained from different models24,25,26. In our case, this can be synthesised into the generation of different hypotheses using a Bayesian generative model of the data. Namely, if we consider a space S, and random variables \(S^*\) and Z, where \(S^*\) represents a partition of S and Z a variable measured at a given location \(\varvec{x} \in S\), the generation of Z can be characterised by the simple Bayesian (probabilistic graphical) model \(S^* \rightarrow Z\), using the following probability distributions:

where the joint distribution \(p(Z, S^*)\) is expressed as a function of the conditional distribution \(p(Z|S^*)\). With this, Z can be characterised by its marginal distribution:

where \(\mathscr {S}(S)\) is the space of all possible partitions of S and \(\mu\) is a measure in \(\mathscr {S}(S)\). Using this relation, it is possible to model the spatial variable Z as a function of multiple (theoretically infinite) partitions of the space \(s^* \in \mathscr {S}(S)\). This leads naturally to the understanding that

Now, it remains to consider that Z is actually measured at (or indexed by) a position \(\varvec{x} \in S\). Then, if for simplicity we avoid using some kind of fuzzy partitioning scheme27, \(\varvec{x}\) can only belong to the (say) k-th element, or cell, of the partition \(S^*\) (\(S^*_k\)), in fact

It is interesting to note that this characterisation is more general than (and includes) the one used to formulate Kriging, since if \(S^* \in \mathscr {P}(S)\) is the partition where each element is a singleton containing \(\varvec{x} \in S\), then \(p(Z(\varvec{x}) \mid \varvec{x} \in S^*_{\varvec{x}})\) (where \(S^*_{\varvec{x}} \equiv \{\varvec{x}\}, \forall \varvec{x} \in S\)) is in fact the so-called random function16. In other words, Equation (4) can be considered as a kind of generalised random function.

Surprisingly, Eq. 4 resembles one of the most prevalent techniques in assembly methods, namely Bagging24,28. This technique, named after the acronym bootstrap-aggregating, initially entails the creation of \(n_T\) different scenarios through a sampling with replacement of all available information, based on the idea of Bootstrapping resampling29. Next, a model is fitted/trained with the data available in each scenario, resulting in \(n_T\) fitted models30,31. Finally, for a given query, the responses delivered by each fitted model are obtained and collapsed using some aggregation function32 (e.g. the average for continuous variables and the mode for categorical variables).

The Bagging scheme for ESI can be articulated as follows, using the proposed generative model of Equations (1) and (4): (1) the method for generating scenarios by sampling partitions from \(p(S^*)\); (2) the model used to characterise the data in each scenario by estimating \(p(Z(\varvec{x})| \varvec{x} \in S^*_k)\) using a local interpolator function (for further details, refer to Menafoglio et al.21 and Egaña et al.23); and (3) the aggregation function used, in this case, \(\mathbb {E}_{S^*}[\cdot ]\). In the context of this study, Adaptive ESI improves the local interpolator function by optimising the interpolation parameters within each cell, as opposed to using a global setup. These three aspects are shown as a methodological flowchart in Fig. 1. The details of each stage are mentioned below.

Flow chart of the Adaptive ESI methodology proposed.

Scenario generation

In this context, scenario generation is essentially achieved by sampling from \(p(S^*)\), which in practice consists of designing or choosing a stochastic process for generating random partitions. This raises the question of which properties should the aforementioned random partitions possess. In this regard, we focus on two aspects:

-

Data structure. It is advantageous that the partitions preserve the spatial arrangement of the data, thus facilitating efficient querying of data points within each cell. In one of the most widely used methods following the Bagging technique, Random Forest31, a decision tree model33 is fitted to each scenario obtained by Bootstrapping, partitioning variable’s domain. The primary advantage of this approach is the efficient implementation of the tree structure for both creation and querying34. Thus, the partition cells are encoded in the leaves, which represent the final level of characterisation of the tree. Accordingly, this work focuses on generative processes that produce random partitions that can be embedded within a tree structure.

-

Conditioning on sample data. The most common methodologies for generating a partition/tree employ conditioning on sample data. These methods optimise the partitioning of space in accordance with statistical criteria31, based on the available data. At the opposite end are techniques that perform this partitioning in a completely random manner35. Although the former have been the de facto standard in the 21st century, we focus on the latter, which have been a major breakthrough, primarily due to their favourable convergence and computational time characteristics.

Considering the above, the spatial context permits the partitioning of the analysed domain in multiple ways. Partitions, also known as tessellations, are typically based on specific geometric divisions, conditional on the available data, such as Voronoi and Poisson tessellations21,36. Considering this, and that these tessellations frequently utilise all the available spatial data to generate each scenario, they require significant expert analysis to adjust all the parameters of the process—a complexity this work aims to avoid. Recently, the Mondrian process, a completely random technique, has been proposed for statistical partitioning in spatial analysis23. Despite being independent of the spatial data, this method has demonstrated comparable performance to traditional partitioning techniques in classification and regression ensemble methods23,37.

The Mondrian process (MP) generates Mondrian scenarios (trees), under the Mondrian forest concept, producing tessellations aligned with the coordinate axes. This greatly reduces the parametrisation required for its use, making it particularly practical for non-specialist—i.e. non-geostatistical—use. This technique may not be optimal for capturing phenomena aligned with directions other than the X and Y axes of the coordinate system of a partition, however, the generation of many Mondrian partitions (Mondrian forest) allows to mitigate this situation, as different neighbourhoods (sizes and shapes) are tested for each position considered in the prediction stage. With this, in this work, we define:

where \(\Theta\) is the window containing the sample data. As can be observed, only two parameters are necessary to define a Mondrian process: (a) the number of scenarios to generate, \(n_T\), and (b) the granularity of the partition, \(\alpha\). \(n_T\) is a transversal parameter to any methodology that uses random forests31,35, and there is consensus that the greater the number of trees, the better the characterisation of the variable under analysis. The parameter \(\alpha \in (0, 1)\) is associated with the degree of fineness or coarseness of the partitioning, yielding coarser partitions for values of \(\alpha\) close to 0 and finer partitions when \(\alpha \rightarrow 1\). For further details on this technique, see the work of Egaña et al.23.

Local adaptive spatial interpolation

The most challenging aspect of the ESI model is the estimation of \(p(Z(\varvec{x})| \varvec{x} \in S^*_k)\), as it faces similar difficulties to methods like Kriging. However, in ESI, these challenges are confined locally to the \(S^*_k\) cells, where each position within each \(S_k\) is estimated through a local interpolator that uses the sample data contained within that cell. This implies that conditions such as stationarity, if required, are only necessary within the context of the specific cell. Menafoglio et al.21 and Egaña et al.23 proposed the use of a unique local interpolator for all cells across all partitions, an approach we refer to as Fixed ESI. Although this method has yielded promising results, it emphasises global spatial continuity properties, while failing to address local ones. This often results in a smoother interpolation that may overlook variations in anisotropy or disruptions in spatial continuity.

To address this issue, this paper proposes a simple yet flexible local interpolator, capable of adapting to the specific conditions of each cell. The objective is for the interpolator to be able to characterise diverse spatial behaviours by capturing local anisotropies, spatial continuities, and orientations of the analysed phenomenon. This approach will be referred to as Adaptive ESI.

Although it is possible to use traditional interpolation techniques (e.g., Ordinary Kriging) and other more elaborate techniques to deal with local contexts (e.g., locally variable anisotropy Kriging38) to address this adaptive context, in this paper we consider one of the simplest and most tractable techniques. This emphasizes the concept that capturing spatial structure rests more on the expressiveness of the partitioning process than on the complexity of the local interpolator. To this end, we propose the use of IDW (Eqs. 6, 7) as a local interpolator, as used by Egaña et al.23, but with a cell-by-cell adaptation of the exponent parameter p—which controls the weight of each sample \(\varvec{x}_i\) based on their distance to the location being estimated \(\varvec{x}\).

where:

This methodology generates a range of potential outcomes, where the edge cases are: \(p = 0\), where each sample is assigned the same weight in the interpolation (equivalent to the simple mean of the neighbours), and \(p \rightarrow \infty\), where the nearest sample is assigned a weight of 1, while all other samples are assigned a weight of 0 (equivalent to a nearest neighbour interpolation). Therefore, by modifying this parameter in accordance with the samples contained within the cell under analysis, a more robust interpolation can be achieved across the diverse local contexts.

Moreover, the spatial characterisation can be further enhanced by incorporating anisotropy into the IDW interpolator itself through a modification of the distance function \(d(\varvec{x}_i, \varvec{x})\). To achieve this, we introduce two novel interpolation parameters: the azimuth angle \(\phi\), which rotates the interpolation axes, and the anisotropy factor \(a_f\), which controls the contribution of each rotated axis. Let us consider a set of N two-dimensional samples of the form \((\varvec{x}_i \in \mathbb {R}^2, \, z_i \in \mathbb {R})\) for \(i=1, \cdots , N\). We propose an extended IDW interpolator \(z(\varvec{x}): \mathbb {R}^2 \rightarrow \mathbb {R}\) as follows:

In the above equations, \(w_i\) represents the weight assigned by the model to the i-th sample. \(d(\varvec{x}_i, \varvec{x})\) is the distance between \(\varvec{x}_i\) and \(\varvec{x}\), after the coordinate axes have been rotated by an azimuth angle \(\phi\), using the corresponding rotation matrix \(R_{\phi }\), followed by the adjustment of the rotated x-axis by an anisotropy factor \(a_f\). The operation ‘\(\times\)’ represents the matrix product, while ‘\(\cdot\)’ corresponds to the inner product or vector product. The anisotropy factor \(a_f > 0\) serves to regulate the contribution of the newly rotated x-axis for the computation of the distance \(d(\varvec{x}_i, \varvec{x})\), determining whether said contribution is greater (\(a_f > 1\)) or smaller (\(a_f < 1\)) than that of the y-axis. In particular, when \(a_f > 1\), greater spatial continuity is considered to exist along the new y-axis in comparison to the new x-axis. A similar phenomenon can be observed in the variographic study of a spatial variable, whereby greater range is observed in a variographic structure along a given direction of analysis, compared to another16.

Thus, the extended IDW considers the parameters p, \(\phi\) and \(a_f\). In order to select the optimal set of parameters for each partition cell, a methodology that minimises the estimation error is proposed, which employs the mean absolute error (MAE) within the context of a leave-one-out (LOO) validation. Formally, for the \(S^*_k\) cell within each partition, the optimal set of parameters \((p^*, \phi ^*, a_f^*)\) is searched for according to:

where: \(\hat{z}_i^{(LOO)}\) is the estimate for \(z_i\) obtained by employing the extended interpolator, taking into account all the samples in the cell, except for the sample associated with \(z_i\). This minimisation considers the following restrictions to ensure the generation of a valid interpolator, without drift or external trend: \(p > 0\), \(\phi \in [- \pi , \pi ]\) and \(a_f > 0\), where \(\phi\) represents the azimuth associated with the direction of greatest spatial continuity, while \(a_f\) denotes the anisotropy factor associated with the optimal means of characterising the spatial behaviour. The optimal parameters are determined by error minimisation, with the exception of cells where edge cases occur. As edge cases, we consider: the absence of samples within a cell, which results in missing estimates for that cell; and the presence of only one or two samples within a cell, in which case a default optimal parameter set is employed due to insufficient information for optimisation.

As a practical remark, the same set of optimal parameters \((p^*, \phi ^*, a_f^*)\) is used for the estimation of all positions within a given cell. Therefore, when performing interpolation on a large number of given positions (e.g., an estimation grid), it is more computationally efficient to pre-compute the optimisation of parameters for each cell, which allows for the minimisation to be performed just once.

Spatial aggregation function

In general, in bagging methods, the aggregation function utilised to derive a single response to a query is a statistical summary of the set of responses corresponding to the \(n_T\) fitted models. In the case of the proposed methodology, two results are obtained for each position \(\varvec{x} \in S\), where S is the spatial domain analysed. The first is the mean of the values delivered by each local model corresponding to the consulted position, which is used as the result of the interpolation with ESI. The second is the variance of the estimate, calculated as the experimental variance of the same dataset as the previous statistic, whose value provides insight into the uncertainty of the ensemble model at that position. The rationale for this is based on a technique widely used in Bayesian inference, where given a posterior distribution F, the best estimator for that distribution is obtained by minimising the precision function \(\mathscr {P}_{\mathscr {L}}(\tilde{x}) = \mathbb {E}_{x \sim F}[\mathscr {L}(x, \tilde{x})]\) (which in this case is a measure of uncertainty), where \(\tilde{x}\) is the estimator to be evaluated and \(\mathscr {L}(\cdot , \cdot )\) is a loss function that measures the cost of choosing that estimator, given the distribution in question. It can be seen that for the distribution F, when the loss function is \(\mathscr {L}(x, \tilde{x}) = (x - \tilde{x})^2\) (quadratic error), the precision function is minimised by the expected value \(\tilde{x} = \mathbb {E}_{x \sim F}[x]\)39,40. Therefore, in this case, the precision function corresponds indeed to the variance, which in this paper we call the variance of the estimate. It is important to note that all the above actually offers a powerful framework for building ad-hoc measures of uncertainty, at the cost of having to choose the estimator that minimises it.

In addition to enabling the estimation of a position, the extended IDW interpolator also permits the acquisition of data regarding the optimal parameters, calculated for each \(\varvec{x} \in S\). This enables supplementary analyses, including the local spatial structure employed by the proposed method. The parameters of this extended IDW interpolator (p, \(\phi\) and \(a_f\)) are identical at all positions included in each cell of a partition. Subsequently, they are aggregated for each \(\varvec{x} \in S\), as follows: the mean is used for the values of p and \(a_f\); in the case of azimuth, we first calculate the mean of the projections in x and y contributed by a unit vector with the optimal azimuth \(\phi ^*\) of each cell, and then use the function \(arctan(\cdot )\) to calculate the final angle \(\phi\). Considering the constraints of the minimisation problem performed in each cell to obtain the optimal parameters, as well as the aggregation function applied in each case, the final parameters (p, \(\phi\) and \(a_f\)) at each position maintain the same constraints as those established for each cell, i.e. \(p > 0\), \(\phi \in [- \pi , \pi ]\) and \(a_f > 0\). This procedure yields a map of each of these parameters within the same domain as the analysed variable, \(z(\varvec{x})\).

Experimental design

In this section, the experiments performed to evaluate the performance of Adaptive ESI are delineated. As outlined in the introduction, Kriging has established itself as the gold standard in spatial interpolation3,6,15 within both industry and academia. Consequently, a comparison with the latter is presented in the majority of experiments. Given the very different nature of these interpolators, and our objective to avoid Kriging’s frequent reliance on the user’s expertise, we employ diverse metrics to ensure a fair comparison.

Datasets

In this study we focus on two datasets:

-

To establish a solid basis for exhaustive comparison with Kriging we use a synthetic dataset produced by a generative model that is consistent with the hypotheses of that method. In this way we seek to recreate: (a) best conditions, and (b) challenging conditions for Kriging.

-

To avoid any interpretation bias produced by the generative model, we use a real dataset that presents highly challenging conditions for Kriging.

Synthetic data generation

We adopt a generative approach to obtain a stationary and controlled analysis environment. A turning-bands spectral algorithm41 is used to simulate stationary Gaussian vector random fields with unit variance on a \(200 \times 200\) grid, as illustrated in Fig. 2. The simulations cover multiple isotropic scenarios, characterised by different nugget effects (Fig. 2a with 0.0, Fig. 2b with 0.1 and Fig. 2c with 0.2) and a fixed offset distance of 50.0 for both the x-axis and the y-axis. In addition, we simulate an anisotropic scenario (Fig. 2d) with a nugget effect of 0.1, and lag distances of 70.0 in the x-axis and 35.0 in the y-axis.

Synthetic scenarios: stationary Gaussian random fields with different nugget effects \(C_0\). (a)–(c) Isotropic scenarios with \(C_0 = 0.0\), 0.1 and 0.2, respectively. (d) Anisotropic scenario with \(C_0 = 0.1\).

In addition, we examine more complex analysis environments, encompassing strongly anisotropic spatial continuities, a phenomenon often observed in the geosciences. These pose a major challenge to classical geostatistics. In this regard, we start with two synthetic datasets42, illustrating regular circular (Fig. 3a) and radial (Fig. 3b) anisotropies. Both images have a size of \(200 \times 200\) pixels.

Scenarios with complex anisotropies. (a) Circular anisotropy case. (b) Radial anisotropy case.

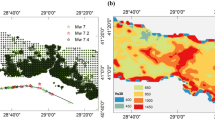

Real data

We use a series of training images43 based on real-world scenarios. These include a digital elevation map (DEM) of Walker Lake44 (Fig. 4a), where each pixel represents an elevation value; two binary training images representing channel structures44 (Fig. 4b, c), where pixels with a value of 0 indicate the absence of channels, while pixels with a value of 1 (in the case of Strebelle) or 128 (in the case of Channels) indicate the presence of channels; and four RGB images, where the values at each pixel represent brightness on a scale of 0 to 255. The latter comprise a stone wall (Fig. 4d), a satellite image of the Sundarbans region (Fig. 4e), mud cracks (Fig. 4f), and marble (Fig. 4g).

Real cases with scenarios of non-stationary conditions. (a) DEM case (260 × 300 pixels). (b) Strebelle case (250 × 250 pixels). (c) Channels case (400 × 340 pixels). (d) Stones case (200 × 200 pixels). (e) Sundarbans case (580 × 400 pixels). (f) Cracks case (440 × 288 pixels). (g) Marble case (408 × 335 pixels).

Sampling scheme of the conditioning data

As conditioning data, we use samples of varying sizes drawn from the datasets. These are generated by making random permutations of the data indices, with the first elements of said indices determining the points used for each sample. The observed percentages of data availability in different revised studies in the field of geosciences range up to 70%14. Considering this, we employed samples representing 1% and 5% of the total data, in addition to reduced-sized samples (50 points \(\approx\) 0.1%), which were deemed as reasonable. Figure 5 illustrates each case.

Examples of conditioning datasets. (a–c) Realisations with different sampling rate (5%, 1% and 50 points \(\approx\) 0.1%, respectively).

Description of the experiments

In order to obtain a comprehensive insight from this study, the experiments described below have been designed to both evaluate the performance of Adaptive ESI and assess its potential as a simple yet intelligent, data-driven interpolator.

Experiment 1: Local adaptiveness

Adaptive ESI is able to adapt to local structures of spatial continuity based solely on the data. This functionality, which we refer to as local adaptivity, allows the model to autonomously deal with non-stationary or intricate structures. To achieve this, the model optimises the adaptation parameters \((p^*, \phi ^*, a_f^*)\) within each partition cell.

In light of this, our analysis begins with an assessment of the impact of introducing adaptive capability into the ESI local interpolation function. To this end, we compare the performance of Fixed ESI (whose local interpolator is the same for all partition cells) and Adaptive ESI in both a controlled isotropic scenario, and a more complex highly anisotropic scenario.

In addition, we investigate the manifestation of local adaptability through adaptive parameters. To elucidate the contribution of these parameters to the model’s detection of spatial structures, we assess their importance by analysing their values in both scenarios.

Experiment 2: Adaptive ESI versus Gaussian modelling

Building on the analysis of the improvements introduced by Adaptive ESI in comparison to its Fixed counterpart, this experiment aims to evaluate these advancements in a more challenging context. To this end, we conduct a comparison between Adaptive ESI and Ordinary Kriging3,4,18. The comparison is conducted in controlled scenarios, thereby allowing us to present the most favourable conditions conceivable for Kriging.

The models are employed for the reconstruction of two different synthetic stationary Gaussian random fields, using samples of varying sizes. This setting offers Kriging two notable advantages. Firstly, the target images satisfy the hypothesis of stationarity, thereby mitigating the potential for user-induced error when determining stationary subdomains. Secondly, the underlying spatial continuity models of the target images are known, and consequently, they can be employed in Kriging, thus eliminating the inductive bias that occurs during the definition of the modelled variogram. These optimal conditions establish a high benchmark for ESI, which is solely provided with the samples. The comparison is structured to focus on the well-established strengths of Kriging: accuracy and unbiasedness.

As a last aspect to be analysed in this experiment, we seek to characterise the local uncertainty associated to the Adaptive ESI estimation method. It is worth mentioning at this point that the issue of uncertainty quantification is, in fact, an open line of research; there is no single way of defining uncertainty, but consistency in its assessment must be maintained. Although Kriging provides a way to quantify uncertainty through the prediction variance (Kriging variance), it is known that this value only takes into account geometric/spatial aspects and spatial continuity as a function of the modelled variogram used, without explicitly considering information from nearby spatial data45,46. Because of this, a comparison of the uncertainty quantification will be made with respect to the technique of Gaussian simulations, which are precisely the state of the art for this purpose in the spatial context47,48. The method used to generate the realisations of the Gaussian simulations is the turning-bands spectral algorithm41.

The results of the Adaptive ESI and Gaussian simulations are analysed, considering that both work with scenarios conditional on the data: each of the \(n_T\) fitted models in the case of Adaptive ESI, or each of the generated realisations in the case of the Gaussian simulation. In this way, the scenarios of each methodology can be aggregated/summarised using the minimisation of a loss function, as mentioned in the Methods section. With this procedure, the quantification of the local uncertainty can be assessed by calculating the local variance of both methodologies.

Experiment 3: Parameter dependency

It is widely acknowledged that accurately determining the parameters for Kriging is a challenging yet crucial aspect of achieving its optimal performance5,11,16. Attaining the requisite expertise in spatial analysis to obtain favourable outcomes can require years of practice. In order to avoid the adverse impacts of inductive biases, we aim for a model that exhibits parameter independence, whereby the accuracy of interpolations is determined by the quality of the data rather than by the parameters selected by the user. This approach serves to minimise the necessity for specialised knowledge and, consequently, enhances the accessibility of the model.

In the preceding experiment, the challenges associated with Kriging parameter selection were mitigated to the greatest possible extent. Conversely, in this experiment, said challenges are illustrated by evaluating parameter dependence in Ordinary Kriging, as well as Fixed ESI and Adaptive ESI. The objective is to ascertain the extent to which the efficacy of each model depends upon the appropriate selection of parameters; or, in other words, to determine the consequences of an erroneous parameter selection. To this end, a sensitivity analysis is conducted on each model’s user-selectable parameters. In this context, a model can be considered parameter-independent if it generates consistent results regardless of parameter variation.

Experiment 4: Non-stationary scenarios

The experiments described above are all performed across stationary, controlled scenarios, which are well suited to models such as Kriging, as they do not present additional difficulties in spatial continuity modelling. In contrast, this final experiment aims to illustrate the full potential of our adaptive local interpolator to autonomously capture intricate spatial continuities in the data. To illustrate this capability, we apply both Adaptive ESI and Ordinary Kriging to a collection of real-world, non-stationary spatial datasets.

In this context, only sample data are available, without any auxiliary information about the underlying equations. Given the considerable spatial complexity of many of the datasets analysed, variography modelling is carried out in great detail. In doing so, anisotropies are evaluated for consideration, as well as more than one structure of the modelled variogram. This is done by a Kriging expert.

Results

For ease of reading, the results of the experiments are shown below in the same order in which they were presented in the experimental design.

Local adaptiveness

First, to assess the impact of adaptive capacity, we compare the performance of the fixed and adaptive versions of the ESI model. We start by considering a simple, stationary, isotropic scenario, and then move on to a more intricate, highly anisotropic scenario. The accuracy of each model is assessed through an analysis of their corresponding estimates and errors.

Fixed versus Adaptive ESI in an isotropic scenario. (a) Reference scenario. (b) Estimates. (c) Errors.

Fixed versus Adaptive ESI in a highly anisotropic scenario. (a) Reference scenario. (b) Estimates. (c) Errors.

Figures 6 and 7 illustrate the target images (Fig. 6a, 7a) alongside the estimates (Figs. 6b, 7b), point errors (Figs. 6c, 7c), and mean average error (MAE) for both ESI models in isotropic and anisotropic scenarios, respectively.

In addition, we assess the ability of each model to capture spatial continuity structures through an analysis of experimental variograms, which are calculated using the Eqs. 13 and 14.

where, \(V_x(l)\) and \(V_x(l)\) represent the variogram for the x-axis and y-axis respectively, l is the offset distance between pixels, Z(i, j) represents the pixel value at position (i, j), \(N_x(l)\) and \(N_y(l)\) represent the number of pixel pairs for an offset distance l.

Figure 8 shows the reference variograms together with the experimental variograms for the estimates derived from the fixed and adaptive versions of ESI, for the isotropic scenario (Fig. 8a) and the anisotropic scenario (Fig. 8b).

Fixed versus Adaptive ESI experimental variograms. (a) Isotropic scenario. (b) Anisotropic scenario.

Analysis of local parameters

Figures 9 and 10 show the optimal mean parameter values for the isotropic and anisotropic scenarios, respectively.

Map with the optimal parameters found by Adaptive ESI for an isotropic scenario. (a) Exponent (\(p^*\)). (b) Azimuth (\(\phi ^*\)). (c) Anisotropy factor (\(a_f^*\)).

Map with the optimal parameters found by Adaptive ESI for a highly anisotropic scenario. (a) Exponent (\(p^*\)). (b) Azimuth (\(\phi ^*\)). (c) Anisotropy factor (\(a_f^*\)).

Adaptive ESI versus Gaussian modelling

We now turn to a comparative analysis of Adaptive ESI and Ordinary Kriging under controlled stationary conditions. The comparison is performed in a scenario of reconstructing two stationary Gaussian random fields—one isotropic and one anisotropic—and for three distinct sample sizes of the conditioning data: 5%, 1% and reduced (illustrated in Fig. 5). In this experiment, no hyperparameter tuning is performed for ESI; instead, fixed values of \(m = 100\) and \(\alpha = 0.9\) are employed. Kriging is run using the theoretical variogram with which the simulations were generated.

Accuracy

The accuracy of the estimates is evaluated by analysing the magnitude and spatial distribution of estimation errors. Figures 11 and 12 illustrate the estimates and errors obtained from the use of Adaptive ESI and Ordinary Kriging in the reconstruction of isotropic and anisotropic images, respectively.

Reconstruction of an isotropic scenario using Adaptive ESI and Ordinary Kriging with varying sample sizes. (a) Reference scenario. (b) Estimates. (c) Errors, determined as the difference between the estimated and the reference value.

Reconstruction of an anisotropic scenario using Adaptive ESI and Ordinary Kriging with varying sample sizes. (a) Reference scenario. (b) Estimates. (c) Errors, determined as the difference between the estimated and the reference value.

The mean absolute error (MAE), mean squared error (MSE) and mean absolute percentage error (MAPE) for the estimates are presented in Table 1, which includes additional sample sizes of 10% and 15%.

Figures 13 and 14 illustrate the reference variograms along with the experimental variograms, derived for the x and y axes using Eqs. 13 and 14, for the isotropic and anisotropic scenario, respectively.

Reference and experimental variograms for Adaptive ESI and Ordinary Kriging in an isotropic scenario. (a) Conditioned by 5% of the total data. (b) Conditioned by 1% of the total data. (c) Conditioned by 50 samples.

Reference and experimental variograms for Adaptive ESI and Ordinary Kriging in an anisotropic scenario. (a) Conditioned by 5% of the total data. (b) Conditioned by 1% of the total data. (c) Conditioned by 50 samples.

Unbiasedness

The unbiasedness property is assessed by evaluating the overall bias of the estimates. Figure 15 illustrates the distribution of the errors associated with the estimates for the isotropic and anisotropic scenarios. At the same time, the Table 2 presents the mean, median and mode of the estimation errors, together with the t-estimate and p value of a t test comparing the means of the ESI and Kriging errors. The null hypothesis of this test is that the ESI and Kriging estimation errors are identical, and since Kriging is considered unbiased, not rejecting this hypothesis would provide evidence that ESI is also unbiased.

Error histograms for Adaptive ESI versus Ordinary Kriging applied on two stationary images, conditional on 5% of the total data, 1%, and 50 samples, respectively. (a) Isotropic scenario. (b) Anisotropic scenario.

Uncertainty quantification

The ability of the models to quantify uncertainty is now illustrated. Figure 16 shows the local variance of Adaptive ESI estimation together with the local variance of the conditional simulations, with respect to the local mean of the simulations which, theoretically, is equivalent to the Kriging (used in the conditioning process49).

Local variance of the Adaptive ESI estimation versus local variance of the conditional Gaussian simulations in the reconstruction of two stationary Gaussian random fields. (a) Isotropic scenario. (b) Anisotropic scenario.

Parameter dependence

In order to ascertain the extent to which the performance of Fixed ESI, Adaptive ESI and Ordinary Kriging depends on the appropriate selection of parameters, a sensitivity analysis on the hyperparameters of each model is now performed (Fig. 17). To this end, a stationary isotropic reference image (Fig. 17a) is repeatedly reconstructed, with both ESI models (Fig. 17b with Fixed ESI and Fig. 17c with Adaptive ESI) applied for each \(\alpha \in \{ 0.3, 0.5, 0.7, 0.9\}\) and \(N_T \in \{ 50, 100\}\) and Ordinary Kriging (Fig. 17d) for each \(n_{data} \in \{5, 10, 20\}\) and cubic, exponential, Gaussian and spherical variogram models. The same sample, representing 1% of the total dataset, serves as conditioning data in all cases.

Sensitivity analysis of ESI and Kriging hyperparameters. (a) Reference scenario. (b–c) Fixed and Adaptive ESI estimates, with variations in the number of trees shown in columns and \(\alpha\) values in rows. (d) Kriging estimates, with variations in the number of data in the search neighbourhood shown in columns and variogram model in rows.

Non-stationary scenarios

In contrast to the previous controlled scenarios, we now compare the performance of Adaptive ESI and Ordinary Kriging on datasets representing spatial continuity structures for which the underlying equations are unknown. Two synthetic anisotropic datasets and seven cases with actual real data are evaluated. ESI is implemented with fixed parameters of \(N_T = 100\) and \(\alpha = 0.9\), while the modelling of spatial continuity and selection of parameters for Kriging is carried out on a case-by-case basis according to experienced expert judgement. The ANDES® geostatistical software50,51 was used as a support tool in the modelling of spatial continuity, which considers a semi-automatic method for obtaining the modelled variogram (necessary for Kriging method), based on the definition of the experimental variogram with an adjustment that minimises the mean squared error.

Adaptive ESI versus Kriging mismodelling

To exemplify the difficulties associated with modelling spatial continuity in Kriging under non-stationary conditions, we first examine a particularly complex scenario: the Sundarbans case44, where the choices made by different practitioners can vary. To this end, four distinct modelling alternatives are assessed, incorporating combinations of the use of either a single variogram for all the analysed directions (omni-directional) or a different variogram for each axis (two-directional), and the use of either one or two modelled variogram structures. The results are then compared with the data-driven estimate provided by Adaptive ESI.

Figure 18 illustrates the estimates derived from Adaptive ESI, alongside estimates from the different Kriging approaches, obtained when using a sample size of 5%. Table 3 presents the mean absolute error (MAE) and the mean absolute percentage error (MAPE) obtained when using sample sizes of 1% and 5%.

Estimates for the Sundarbans case using Adaptive ESI and different versions of Ordinary Kriging, with 5% of the data employed as conditioning data. (a) Reference scenario. (b) Adaptive ESI. (c) Kriging.

Adaptive ESI in challenging scenarios

This section presents an evaluation of two synthetic datasets with extreme anisotropy and seven real data cases, all of which are non-stationary. For these scenarios, the best-performing Kriging model found (by an experienced Kriging practitioner and the ANDES® software) is considered. In all cases, a sample size of 5% is used as conditioning data.

Adaptive ESI and Ordinary Kriging estimates, and experimental variograms for different non-stationary scenarios. (a) Circular case. (b) Radial case. (c) DEM case. (d) Strebelle case. (e) Channels case. (f) Stones case. (g) Cracks case. (h) Marble case. (i) Sundarbans case.

Figure 19 presents the Adaptive ESI and Ordinary Kriging estimates in each scenario, together with the reference variograms and the experimental variograms derived from the Eqs. 13 and 14. These scenarios include the Circular case (Fig. 19a), the Radial case (Fig. 19b), the DEM case (Fig. 19c), the Strebelle case (Fig. 19d), the Channels case (Fig. 19e), the Stones case (Fig. 19f), the Cracks case (Fig. 19g), the Marble case (Fig. 19h), and the Sundarbans case (Fig. 19i).

Table 4 presents the mean absolute error (MAE), operational error (OE) and mean absolute percentage error (MAPE) of all estimates. The OE is defined as the mean absolute error calculated with the data normalised to the dynamic range of the reference image, multiplied by 100; this metric allows for a normalised comparison between datasets with markedly disparate operational ranges.

Discussion

So far, in this study we have shown adaptive ESI as an alternative to traditional interpolation methods, presenting a brief theoretical background and a detailed experimental design to verify its scope. For the latter, we have taken the well-known and accepted Kriging method as a basis for comparison, addressing key issues such as the assumption of global stationarity and the dependence on expert-defined spatial models through a series of experiments, focusing on: (a) local adaptivity; (b) performance in accuracy, bias and uncertainty quantification; (c) dependence on user-defined parameters; (d) performance against challenging conditions, such as non-stationary domains. In the previous section we present the results obtained in the experiments and, in the discussion that follows, organised in the same way as these results are presented, we explore the implications of these results, comparing the strengths and limitations of Adaptive ESI in relation to Kriging.

Local adaptiveness

In the initial experiment, the influence of adaptive capacity was assessed by comparing the performance of Fixed ESI and Adaptive ESI in isotropic and anisotropic scenarios.

In Figs. 6 and 7, the Adaptive ESI estimates show a lower degree of smoothing—which responds to one of the main motivations for adding more dynamism to local estimation in ESI; that is, the excessive degree of smoothing of Fixed ESI—as well as lower error values, compared to the Fixed ESI. This difference is particularly evident in the anisotropic scenario.

The experimental variograms (Fig. 8) derived from Adaptive ESI estimates achieve a better representation of both the shape and magnitude of the reference variograms, compared to Fixed ESI—implying that Adaptive ESI shows a better ability to capture the structure of the underlying spatial continuity than its fixed counterpart. This improvement is particularly evident in Fig. 8b, where Adaptive ESI accurately captures the complex patterns of the reference variogram. This is further highlighted in the error maps from the isotropic case (Fig. 6c), where it can be observed that the Adaptive ESI model yields an error that does not reproduce the original spatial continuity structure, in contrast to the Fixed ESI model, where the errors exhibit a spatial continuity structure that mirrors that which is being inferred. This phenomenon, known in the literature as model drift, generally occurs when a model becomes incompatible with the internal structure of the data52.

Analysis of local parameters

In this context, it is also of interest to examine the information that can be provided by the resulting parameters from the local parameter optimisation process. For the same two scenarios, it is reasonable to think that in the isotropic case, the parameters \((p^*, \phi ^*, a_f^*)\) should be spatially homogeneous while in anisotropic scenarios they should exhibit some kind of spatial structure. In this sense, the values and spatial distribution of the locally optimised parameters were examined. In the isotropic scenario (Fig. 9), these parameters have lower and more homogeneous values, while in the anisotropic scenario (Fig. 10) they show some spatial structure, although not necessarily well correlated with the spatial structure of the original domain. However, this provides some evidence of the ability of the proposed approach to capture spatial continuity structures, where the aggregate analysis of the magnitudes and properties of the optimal parameters at each spatial location could serve as a valuable tool for spatial analysis.

Adaptive ESI versus Gaussian modelling

Accuracy

As shown in Figs. 11 and 12, the estimates and error maps produced by ESI and Kriging are visually similar. Furthermore, the error metrics for both models are comparable, as shown in Table 1. While Ordinary Kriging systematically obtains slightly lower MAE and MSE values, Adaptive ESI obtains lower MAPE values. Moreover, as expected, both models provide higher values of all error metrics and more pronounced smoothing as the number of observations decreases.

Subsequently, the ability of the estimates to capture the spatial continuum structure is analysed by comparing the experimental variograms of the reference and estimated images—the variograms are illustrated in Figs. 13 and 14 for the isotropic and anisotropic scenarios, respectively, and were derived for the x and y axes using the Eqs. 13 and 14. In both models, the latter show comparable performance in reproducing the shape of the reference curve, with Kriging showing slightly better accuracy in capturing its magnitude. At sample sizes of 5% and 1%, both models are able to capture the overall shape of the curve, although neither is able to account for the nugget effect. As the number of observations decreases, the variograms produced are increasingly different from the reference variograms, showing a decrease in the ability to capture the spatial continuity structure. This is illustrated in more detail in Figs. 13 and 14. In these figures it can be seen for for the 5% sample size, the error maps do not show any spatial continuity structure; however, for the 1% and reduced sample sizes, the spatial continuity structure that is intended to be inferred begins to manifest itself in the error maps, evidencing some model drift.

Unbiasedness

All histograms in Fig. 15 appear to show a normal distribution and are centred close to zero. This suggests that ESI, like Kriging, can be considered unbiased on a global scale. This is corroborated by the statistics presented in the table, which indicate that the mean, median and mode of the estimation errors are approximately zero in most cases. Furthermore, the t test yielded a p value greater than 0.18 in most cases, which is insufficient to state, at a commonly accepted level of significance, that the mean errors of the two methods are different. The only exception is the case of the small sample size of the isotropic hypothesis, where the null hypothesis can be rejected with more than 99% confidence. However, the Kriging mean error is not \(\approx 0\) in this scenario, most likely as an effect of the small sample size. Therefore, rejecting the null hypothesis in this case does not imply that ESI is biased. Moreover, the mean error of ESI is closer to 0, so it may have handled the limited data available better.

Uncertainty quantification

In Fig. 16, it can be observed that both models present relatively low variance values in all cases. This suggests a high level of precision for both models. Note that the variance of Adaptive ESI is lower in all cases. This could lead one to believe that the latter is more precise than the conditional simulation model. However, this is not necessarily the case. It suffices to recall that the ESI output is, in general, an estimate of \(p(Z(\varvec{x}))\) from Eq. (4) (i.e., an empirical posterior distribution), whose goal is, in fact, to improve the precision of that estimate - unlike a conditional simulation model, which aims to be expressive with respect to the variability of the simulated phenomenon. The two models have different foundations.

Finally, we note once again that for the reduced sample size case, model drift is shown for the case of the conditional simulations, as spatial structure appears in the variance map in both the isotropic and anisotropic cases. This is an undesirable aspect of Gaussian simulations, because it is evidence that the model does not fully capture the spatial structure, leaving a residual whose variography would resemble a geometric shape, observed when displaying the local variance. This situation is similar to when the residuals of a linear regression are analyzed, and phenomena such as heteroscedasticity appear53. It is further evidence that Adaptive ESI seems to have a better handling when little data is available.

From the above analysis it can be seen that Adaptive ESI is able to compete in the three main strengths: accuracy, unbiasedness and uncertainty quantification. However, it achieves this with less user input, operating almost autonomously.

Parameter dependence

In Fig. 17b it can be observed that the estimates from Fixed ESI are notoriously different across different parameter configurations, most notably \(\alpha\). Similarly, Kriging (Fig. 17d) shows significant variations in performance across different parameter settings. Its estimates are particularly sensitive to the choice of variogram model. When a suboptimal variogram model is selected (i.e., when mismodelling occurs), not only is performance substantially degraded, but the estimate also becomes more sensitive to the \(n_{data}\) parameter, as observed with the cubic and Gaussian models. In contrast, Fig. 17c shows that the performance of Adaptive ESI remains stable across different combinations of its parameters. Therefore, Adaptive ESI demonstrates a reduced parameter dependence in comparison to both Fixed ESI and Ordinary Kriging.

Non-stationary scenarios

Adaptive ESI versus Kriging mismodelling

As illustrated in Fig. 18, the images produced by Kriging exhibit significant variations across different modelling configurations. The metrics presented in Table 3 indicate that Kriging achieves the best performance when employing an omni-directional approach with two variogram structures. However, it is not trivial to ascertain this in practical applications where global error metrics, such as those presented in Table 3, cannot be calculated. Furthermore, Adaptive ESI obtained lower values of MAE and MAPE than all Kriging configurations.

Adaptive ESI in challenging scenarios

As demonstrated in Fig. 19 and Table 4, in most cases, ESI produces images that exhibit a higher degree of resemblance to the references and lower values for each error metric. The exceptions to this occur in the cases of Circular and Stones. Furthermore, Adaptive ESI shows slightly better performance in reproducing the shape and magnitude of the reference variogram, and thus, its estimates better reproduce the target spatial structure. This phenomenon is most pronounced in the DEM case, as illustrated in Fig. 19c, where Kriging fails to accurately capture the variability of the reference image, leading to a subrepresentation of higher values and, consequently, a flatter variogram. Moreover, high MAPE values are obtained in Table 4 for cases where there are 0 or very close to zero values in the reference images (e.g. Circular, Radial, Strebelle, etc.), since the MAPE divides by these values (considering a very small epsilon).

Conclusions and future work

In conclusion, this study has introduced Adaptive Ensemble Spatial Interpolation (Adaptive ESI) as a promising alternative to traditional geostatistical methods, effectively addressing key limitations such as the reliance on stationarity assumptions and the need for expert input in parameter selection. By leveraging the spatial structure inherent in the data, Adaptive ESI provides enhanced flexibility and accuracy in spatial estimation, while also offering uncertainty quantification, a key advantage of conventional geostatistical approaches. The results indicate that Adaptive ESI performs comparably to traditional methods, while offering greater accessibility and reduced dependence on manual intervention. Consequently, this method has significant potential for a broader range of geoscientific applications.

To study the scope of Adaptive ESI, we have conducted a comprehensive series of experiments. These have shown that this methodology provides state-of-the-art estimates and effectively deals with highly complex scenarios, both in varying anisotropy and stationarity. These results can be summarised as follows:

-

Adaptive ESI represents a significant improvement compared to the first ESI model, independently proposed by Menafoglio et al.21 and Egaña et al.23. Local optimisation can effectively overcome the problem that the method relies on asymptotic behaviour, dependent on the number of partitions, which can make it computationally inefficient. It has been observed that the optimised parameters of the local interpolator used in this study manifest, in a still very limited way, the spatial structure under study. The latter is still a large area to be explored and developed in the future.

-

It is possible to match the robust spatial analysis capabilities of traditional geostatistics by employing a data-driven interpolation algorithm that does not require the user to specify an explicit spatial continuity model.

-

Adaptive ESI shows minimal sensitivity to its hyperparameters—the number and coarseness of the partitions—which consequently reduces the risk of selecting suboptimal parameters. This facilitates accurate estimations without the need for specific geostatistical knowledge, which makes Adaptive ESI a direct alternative to traditional methods for users whose interest is focused on the inference about the phenomenon itself and not on the tool used for such inference.

-

In scenarios representing particularly complex spatial structures (non-stationary or highly anisotropic), which have been a major challenge for traditional geostatistics, Adaptive ESI has demonstrated good enough performance to be used in practical applications.

-

The locally optimised parameters were able to manifest part of the spatial structure studied, suggesting that they have the potential to become a useful tool for automated spatial analysis. Although this is a very preliminary observation, which requires much more study, it opens up an important field of research for the design of other, potentially simpler, local interpolators that can better express the characteristics of such spatial structure.

We believe it is also important to note that in this study we have also proposed an interpretation of the cause of spatial correlation using a very simple generative model. Despite its simplicity, and being only a very seminal proposal, we believe that it offers a more general and intuitive view than that addressed by classical geostatistical theory. However, its main limitation is that, in its most general form, the model requires the ability to formulate a prior process of random partition generation that is as expressive as possible. This constitutes a rich field for future research and development that could address not only the study of the stochastic generative process in question, but also of data structures suitable for building computational tools for practical use.

At the present stage, Adaptive ESI proved to be an effective initial strategy to address the above. However, further research is needed to improve its computational efficiency, especially in the optimisation of the local interpolator parameters in each cell of each partition—although this situation is not so critical, since, unlike the original versions of ESI, competitive results can be obtained with fewer and coarser partitions.

Another line of future research relates to the robustness of the method in challenging information settings, such as when there is little data available and/or preferential sampling of some sector of space. This could include the study of spatial variables of different nature, and the integration of possible secondary information available.

Finally, the results of this study provide an overview of the potential of Adaptive ESI as a powerful computational geostatistical tool for use by a more general audience, such as the geoscience community and communities focused on modelling environmental problems. This is where the use of classical geostatistics has been hampered by the barrier posed by the need for classical expert spatial modelling (such as variogram analysis), which can necessitate years to achieve a level that enables the resolution of complex and dynamic problems—such as climate change, to provide an emblematic example.

Data availability

The synthetic datasets generated during and/or analysed during the current study are available from the corresponding author on reasonable request. The real datasets generated during and/or analysed during the current study are available in the GAIA-UNIL repository, https://github.com/GAIA-UNIL/trainingimages.git.

Code availability

The Spatialize library, which implements the proposed methodology (Adaptive Ensemble Spatial Interpolation), is available on GitHub (https://github.com/alges/spatialize). Implemented in Python with a C++ core to improve performance, the library is designed to be user-friendly with minimal intervention required.

References

Kleijnen, J. Kriging: Methods and applications. CentER Discussion Paper Series No. 2017-047, Center for Economic Research (2017). https://doi.org/10.2139/ssrn.3075151.

Virdee, T. & Kottegoda, N. A brief review of kriging and its application to optimal interpolation and observation well selection. Hydrol. Sci. J. 29, 367–387. https://doi.org/10.1080/02626668409490957 (1984).

McKinley, J. & Atkinson, P. A special issue on the importance of geostatistics in the era of data science. Math. Geosci. 52, 311–315. https://doi.org/10.1007/s11004-020-09858-1 (2020).

Varouchakis, E., Hristopulos, D. & Karatzas, G. Improving kriging of groundwater level data using nonlinear normalizing transformations-a field application. Hydrol. Sci. J. 57, 1404–1419. https://doi.org/10.1080/02626667.2012.717174 (2012).

Abzalov, M. Applied Mining Geology, vol. 12 of Modern Approaches in Solid Earth Sciences (Springer, 2016).

Kirkwood, C., Economou, T., Pugeault, N. & Odbert, H. Bayesian deep learning for spatial interpolation in the presence of auxiliary information. Math. Geosci. 54, 507–531. https://doi.org/10.1007/s11004-021-09988-0 (2022).

Lachérade, L. et al. A sectorisation-based method for geostatistical modeling of pressuremeter test data: Application to the grand Paris express project (France). Eng. Geol. 324, 107270. https://doi.org/10.1016/j.enggeo.2023.107270 (2023).

de Sousa Mendes, W., Demattê, J. A., Souza, A., Urbina Salazar, D. & Amorim, M. Geostatistics or machine learning for mapping soil attributes and agricultural practices. Revista Ceres 67, 330–336. https://doi.org/10.1590/0034-737X202067040010 (2020).

Sun, W., Minasny, B. & McBratney, A. Analysis and prediction of soil properties using local regression-kriging. Geoderma171-172, 16–23, https://doi.org/10.1016/j.geoderma.2011.02.010 (2012). Entering the Digital Era: Special Issue of Pedometrics 2009, Beijing.

Kambhammettu, B. V. N. P., Allena, P. & King, J. P. Application and evaluation of universal kriging for optimal contouring of groundwater levels. J. Earth Syst. Sci. 120, 413–422. https://doi.org/10.1007/s12040-011-0075-4 (2011).

Pannecoucke, L., Le Coz, M., Freulon, X. & de Fouquet, C. Combining geostatistics and simulations of flow and transport to characterize contamination within the unsaturated zone. Sci. Total Environ. 699, 134216. https://doi.org/10.1016/j.scitotenv.2019.134216 (2020).

Hooshmand, A., Delghandi, M., Izadi, A. & Ahmadaali, K. Application of kriging and cokriging in spatial estimation of groundwater quality parameters. Afr. J. Agric. Res.6 (2011).

De Rubeis, V., Tosi, P., Calvino, G. & Solipaca, A. Application of kriging technique to seismic intensity data. Bull. Seismol. Soc. Am. 95, 540–548. https://doi.org/10.1016/S0065-2113(08)60673-2 (2005).

Fagandini, C. et al. Missing rainfall daily data: A comparison among gap-filling approaches. Math. Geosci. 56, 191–217. https://doi.org/10.1007/s11004-023-10078-6 (2024).

Wang, Y., Akeju, O. V. & Zhao, T. Interpolation of spatially varying but sparsely measured geo-data: A comparative study. Eng. Geol. 231, 200–217. https://doi.org/10.1016/j.enggeo.2017.10.019 (2017).

Chilès, J.-P. & Desassis, N. Fifty Years of Kriging, 589–612 (Springer, 2018).

Assibey-Bonsu, W. Professor danie krige’s first memorial lecture: The basic tenets of evaluating the mineral resource assets of mining companies, as observed in professor danie krige’s pioneering work over half a century. In Gómez-Hernández, J., Rodrigo-Ilarri, J., Rodrigo-Clavero, M. E., Cassiraga, E. & Vargas-Guzmán, J. A. (eds.) Geostatistics Valencia 2016, 3–25, https://doi.org/10.1007/978-3-319-46819-8_1 (Quantitative Geology and Geostatistics, 2017).

Fischer, M. & Getis, A. (eds.) Handbook of Applied Spatial Analysis: Software Tools, Methods and Applications (Springer, 2009).

Samson, M. & Deutsch, C. A hybrid estimation technique using elliptical radial basis neural networks and cokriging. Math. Geosci. 54, 573–591. https://doi.org/10.1007/s11004-021-09969-3 (2022).

Boroh, A. W., Kouayep Lawou, S., Mfenjou, M. L. & Ngounouno, I. Comparison of geostatistical and machine learning models for predicting geochemical concentration of iron: Case of the nkout iron deposit (south cameroon). J. Afr. Earth Sci. 195, 104662. https://doi.org/10.1016/j.jafrearsci.2022.104662 (2022).

Menafoglio, A., Gaetani, G. & Secchi, P. Random domain decompositions for object-oriented kriging over complex domains. Stoch. Env. Res. Risk Assess. 32, 3421–3437. https://doi.org/10.1007/s00477-018-1596-z (2018).

Nikparvar, B. & Thill, J.-C. Machine learning of spatial data. ISPRS Int. J. Geo-Inform.10. https://doi.org/10.3390/ijgi10090600 (2021).

Egaña, A. et al. Ensemble spatial interpolation: A new approach to natural or anthropogenic variable assessment. Nat. Resour. Res. 30, 3777–3793. https://doi.org/10.1007/s11053-021-09860-2 (2021).

Breiman, L. Bagging predictors. Mach. Learn. 24, 123–140. https://doi.org/10.1007/BF00058655 (1996).

Drucker, H., Cortes, C., Jackel, L. D., LeCun, Y. & Vapnik, V. Boosting and other ensemble methods. Neural Comput. 6, 1289–1301. https://doi.org/10.1162/neco.1994.6.6.1289 (1994).

Shahhosseini, M., Hu, G. & Pham, H. Optimizing ensemble weights and hyperparameters of machine learning models for regression problems. Mach. Learn. Appl. 7, 100251. https://doi.org/10.1016/j.mlwa.2022.100251 (2022).

Bezdek, J. C. & Harris, J. D. Fuzzy partitions and relations: An axiomatic basis for clustering. Fuzzy Sets Syst. 1, 111–127. https://doi.org/10.1016/0165-0114(78)90012-X (1978).

Altman, N. & Krzywinski, M. Ensemble methods: Bagging and random forests. Nat. Methods 14, 933–935. https://doi.org/10.1038/nmeth.4438 (2017).

Dixon, P. M. Bootstrap resampling. Encycl. Environ. https://doi.org/10.1002/9780470057339.vab028 (2006).

Skurichina, M. & Duin, R. P. Bagging for linear classifiers. Pattern Recogn. 31, 909–930. https://doi.org/10.1016/s0031-3203(97)00110-6 (1998).

Breiman, L. Random forests. Mach. Learn. 45, 5–32. https://doi.org/10.1023/A:1010933404324 (2001).

Grabisch, M. Aggregation Functions, vol. 127 (Cambridge University Press, 2009).

Quinlan, J. R. Learning decision tree classifiers. ACM Comput. Surv. (CSUR) 28, 71–72. https://doi.org/10.1145/234313.234346 (1996).

James, G., Witten, D., Hastie, T., Tibshirani, R. & Taylor, J. Tree-based methods. In An Introduction to Statistical Learning: with Applications in Python, 331–366, https://doi.org/10.1007/978-3-031-38747-0_8 (Springer, 2023).

Lakshminarayanan, B., Roy, D. M. & Teh, Y. W. Mondrian forests: Efficient online random forests. Adv. Neural Inform. Process. Syst. 27. https://doi.org/10.48550/arXiv.1406.2673 (2014).

Lantuéjoul, C. Geostatistical Simulation: Models and Algorithms (Springer, 2013).

Mourtada, J., Gaïffas, S. & Scornet, E. AMF: Aggregated Mondrian forests for online learning. J. R. Stat. Soc. Ser. B Stat. Methodol. 83, 505–533 (2021).

Boisvert, J. B. & Deutsch, C. V. Programs for kriging and sequential gaussian simulation with locally varying anisotropy using non-Euclidean distances. Comput. Geosci. 37, 495–510 (2011).

Berger, J. M. B. D. J. O. Objective Bayesian Inference (World Scientific Pub Co Inc, 2024).

Silvelyn Zwanzig, R. A. Bayesian Inference. Theory, Methods, Computations (CRC Press, 2024).

Emery, X., Arroyo, D. & Porcu, E. An improved spectral turning-bands algorithm for simulating stationary vector gaussian random fields. Stoch. Env. Res. Risk Assess. 30, 1863–1873. https://doi.org/10.1007/s00477-015-1151-0 (2016).

Emery, X. & Arroyo, D. On a continuous spectral algorithm for simulating non-stationary gaussian random fields. Stoch. Env. Res. Risk Assess. 32, 905–919. https://doi.org/10.1007/s00477-017-1402-3 (2018).

Mariethoz, G. Gaia Unil training images repository. https://github.com/GAIA-UNIL/trainingimages (2021).

Mariethoz, G. & Caers, J. Multiple-point Geostatistics: Stochastic Modeling with Training Images (Wiley, 2014).

Goovaerts, P. Geostatistics for Natural Resources Evaluation (Oxford University Press, 1997).

Journel, A. G., Kyriakidis, P. C. & Mao, S. Correcting the smoothing effect of estimators: A spectral postprocessor. Math. Geol. 32, 787–813 (2000).

Chiles, J.-P. & Delfiner, P. Geostatistics: Modeling Spatial Uncertainty (Wiley, 2012).

Deutsch, C. V. The place of geostatistical simulation through the life cycle of a mineral deposit. Minerals 13, 1400 (2023).

Emery, X. Conditioning simulations of gaussian random fields by ordinary kriging. Math. Geol. 39, 607–623 (2007).

Soto, F., Garrido, M., Díaz, G. & Silva, C. Rapid multivariate resource assessment. In Geomin Mineplanning. 5 International Seminar on Geology for Mining Industry. 5 International Seminar on Mine Planning (2017).

Soto, F. Andesite. https://www.andesite.cl (2025).

Ramírez, C., Silva, J. F., Tamssaouet, F., Rojas, T. & Orchard, M. E. Fault detection and monitoring using a data-driven information-based strategy: Method, theory, and application. Mech. Syst. Signal Process. 228, 112403. https://doi.org/10.1016/j.ymssp.2025.112403 (2025).

Casella, G. & Berger, R. Statistical Inference (CRC Press, 2024).

Acknowledgements

This material is based on work supported by grants from the Chilean National Agency for Research and Development (ANID), specifically through the Advanced Mining Technology Center (AMTC) of the University of Chile, Basal Project ANID AFB230001. We are especially grateful for the support of the entire team of the Advanced Laboratory for Geostatistical Super computing (ALGES) of the AMTC and the Department of Mining Engineering of the University of Chile. We are grateful for the availability of the images used in this study from the UNIL-GAIA repository, and for the editing support provided by Felipe Navarro of the ALGES laboratory.

Author information

Authors and Affiliations

Contributions

A.F.E and G.D developed the theory and experimental design. M.J.V and G.D carried out the experiments. All authors collaborated in writing and revising the manuscript.

Corresponding author

Ethics declarations

Competing interests

The author(s) declare no competing interests.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Egaña, A.F., Valenzuela, M.J., Maleki, M. et al. Adaptive ensemble spatial analysis. Sci Rep 15, 26599 (2025). https://doi.org/10.1038/s41598-025-08844-z

Received:

Accepted:

Published:

Version of record:

DOI: https://doi.org/10.1038/s41598-025-08844-z