Abstract

The rapid expansion of Internet of Things (IoT) networks necessitates efficient intrusion detection systems (IDS) capable of operating within the stringent resource constraints of IoT devices. This study introduces KronNet, a lightweight feed-forward neural network enhanced with Kronecker product operations, designed for real-time IoT intrusion detection. To address class imbalance and ensure robust detection across diverse attack types, KronNet combines Gaussian Mixture Model (GMM)-based oversampling with a hybrid loss function that blends Focal Loss and Cross-Entropy with adaptive class weighting. Evaluated on the CICIoT2023 and BoT-IoT datasets, KronNet achieves accuracies of 99.01% and 99.91%, weighted F1-scores of 99.01% and 99.91%, and false positive rates of 0.03% and 0.01%, respectively. The model has a small footprint: 5,074 parameters (19.82 KB) for CICIoT2023 and 4,703 parameters (18.37 KB) for BoT-IoT, with inference times of 0.209 ms and 0.208 ms. After quantization, memory usage drops to 4.96 KB and 4.59 KB with negligible accuracy loss (0.06% and 0.01%). Compared to state-of-the-art models, KronNet requires up to 15,829× fewer FLOPs and achieves up to 12,010× faster inference, making it well suited to edge deployment in resource-constrained IoT environments. This work advances IoT cybersecurity by delivering a scalable, accurate, and lightweight IDS capable of real-time threat detection.


Introduction

The Internet of Things (IoT) has grown at an unprecedented rate in recent years, fundamentally reshaping global connectivity. The number of IoT devices worldwide is forecast to surpass 41 billion by 2030 as smart technology becomes embedded in homes, industries, and critical infrastructure1. This growth has brought considerable convenience and efficiency, but it has also exposed networks to a rapidly increasing range of cybersecurity threats. The resource constraints of IoT devices make them vulnerable to denial-of-service (DoS), distributed denial-of-service (DDoS), and other attacks2, and most IoT devices that handle personal or operational data are deployed without advanced security features3. Malicious actors exploit these weaknesses to recruit devices into botnets for distributed attacks, to use them for illicit cryptocurrency mining, and to hold them hostage with ransomware. These threats endanger personal privacy and pose major risks to network stability and integrity4.

Intrusion Detection Systems (IDSs) serve as a primary defensive mechanism, monitoring IoT networks for malicious activity4. Traditional IDS methods depend on manually crafted rules and attack signatures, which require extensive expertise and time-consuming development. The sheer volume of IoT traffic, together with the complexity and adaptability of modern cyberattacks, renders these traditional methods ineffective5.

Deep Learning (DL) has emerged as a transformative solution for improving IDS performance. Techniques such as Deep Belief Networks (DBNs)6, Convolutional Neural Networks (CNNs)7, and Recurrent Neural Networks (RNNs)8 excel at extracting intricate patterns from raw network data, enabling precise anomaly detection and adaptation to evolving threats9. However, these sophisticated DL models are often impractical to deploy on resource-constrained IoT devices, where processing power and storage are limited10. There is therefore a need for DL frameworks that detect sophisticated, multi-variant cyber threats with high accuracy and speed in resource-constrained IoT environments11. DL-based IDSs also face a widespread problem: class imbalance. Because benign traffic occurs far more frequently than rare attack types, model performance becomes skewed, resulting in elevated false-negative rates for critical minority classes12,13. The imbalance leads models to favor accuracy on common traffic patterns while neglecting rare yet dangerous attack vectors14.

This problem has been addressed through various strategies, including data augmentation to enhance minority class representation15, algorithmic adjustments to reduce learning bias16, ensemble approaches that combine the strengths of multiple models17, and specialized evaluation metrics for better performance assessment18. However, methods that improve rare attack detection often reduce precision on majority classes, which undermines system reliability. This trade-off is a significant challenge for IoT systems, where resource efficiency and consistent performance are non-negotiable19.

Our research is motivated by the need for an IDS that detects rare attack types in IoT networks without sacrificing accuracy on common traffic patterns, by exploiting the distributional properties of different attack categories. We aim to develop lightweight, efficient DL solutions designed specifically for the computational constraints of IoT environments. To this end, we propose KronNet, a lightweight framework that embeds Kronecker product operations into a simplified feed-forward neural network architecture. KronNet optimizes both feature representation and computational efficiency, addressing class imbalance and resource constraints within a single framework.

The primary contributions of this article are as follows:

1. We propose KronNet, which achieves high detection accuracy (99.01% on CICIOT2023 with 5,074 parameters and 99.91% on BOT-IOT with 4,703 parameters), validated across diverse attack scenarios on these benchmark datasets.

2. We implement Gaussian Mixture Model (GMM)-based oversampling, achieving a 32.15% F1-score improvement for minority classes and robust detection without synthetic bias.

3. We use a hybrid loss function blending Focal Loss and Cross-Entropy, enhanced by adaptive class weighting, to prioritize rare attacks while maintaining high precision across all classes.

4. We demonstrate high efficiency, with inference times of 0.209 ms (CICIOT2023) and 0.208 ms (BOT-IOT) and post-quantization memory footprints of 4.96 KB and 4.59 KB, positioning KronNet as a strong candidate for real-time IoT edge deployment.

The paper is structured as follows: section “Related work” reviews related work, section “Preliminaries” outlines preliminaries, section “Proposed methodology” details our methodology, section “Experiments and results” presents comprehensive experimental results, section “Discussion” discusses findings across datasets, and section “Conclusion and future work” offers conclusions.

Related work

Intrusion detection systems are essential for IoT network security, playing a crucial role in detecting malicious activities. With the proliferation of IoT devices and increasingly sophisticated attacks, there is a growing need for robust yet efficient IDS solutions. Deep learning has emerged as a promising approach to enhance detection effectiveness, though it faces unique challenges in IoT environments. In this section, we provide a concise overview of recent advances in deep learning-based intrusion detection systems for IoT, focusing on class imbalance and resource constraints.

Deep learning-based IDS for IoT

Recent research has demonstrated the effectiveness of deep learning for IoT intrusion detection, with numerous studies developing sophisticated deep learning-based anomaly detection systems across various applications20. These frameworks are employed either individually or in hybrid mode; hybrid approaches combine supervised and unsupervised learning to exploit labeled data while discovering patterns in unlabeled data21. Narayan et al.22 proposed HDLBID, achieving 99.6% accuracy with CNN-BiLSTM and SMOTE-Tomek balancing, though they neglected the energy efficiency crucial for IoT deployments. Feng et al.23 introduced a two-level DDoS detection framework combining Rényi entropy with DCNN-LSTM models, but its computational requirements limit its applicability to IoT. Himanshu et al.24 deployed CNN, BiLSTM, and transfer learning approaches for detection model training and evaluated them on the N-BaIoT dataset. Racherla et al.25 demonstrated lightweight LSTM networks with 96.8% accuracy and a 1.49-second response time, though deployment on a Raspberry Pi revealed significant computational bottlenecks. Iram et al.26 employed ConvLSTM2D with CUDA optimization for multi-vector IIoT threat detection, but their approach faces computational complexity challenges and accuracy-speed trade-offs.

Kirubavathi and Nair27 demonstrated hybrid deep learning potential with 98.45% accuracy using CNN-RNN, though high computational demands limit IoT practicality. Similarly, Khanday et al.28 achieved 98-99% accuracy in DDoS detection using ANN and LSTM models but lacked quantification of computational overhead and resource utilization metrics critical for IoT deployment.

Class imbalance in IoT intrusion detection

Class imbalance poses a significant challenge for IoT intrusion detection. Caihong et al.29 addressed it with S2CGAN-IDS, enhancing minority attack detection while maintaining majority class accuracy, though without analyzing energy consumption. Altaie and Hoomod30 proposed a two-phase CNN-LSTM model achieving 98.78% accuracy on UNSW-NB15 with lower computational requirements than standalone architectures. Qaddos et al.31 presented a hybrid CNN-GRU model with FW-SMOTE that achieves over 99% accuracy but requires significant computational resources, which conflicts with IoT device constraints.

Traditional machine learning approaches have also been explored for addressing imbalance. Amgbara et al.32 investigated lightweight ML models for personal IoT security, finding they achieve reasonable detection accuracy with minimal computational overhead but struggle with complex attack patterns. Farooqi et al.33 achieved 99.99% accuracy using Decision Trees with feature selection, demonstrating viability for lightweight approaches, though limited in detecting sophisticated attack patterns.

Resource efficiency in IoT security

Resource efficiency remains critical for IoT deployment. Wang et al.34 achieved 99.44% accuracy with only 18.1 KB of memory using knowledge distillation, while Idrissi et al.35 developed DL-HIDS with a memory requirement of 2.704 KB. Himanshu et al.36 introduced AttackNet, an adaptive CNN-GRU model that achieved 99.75% accuracy for IIoT botnet detection and classification, outperforming state-of-the-art methods on the N-BaIoT benchmark. Traditional ML approaches offer computational efficiency: Azimjonov and Kim37 achieved 99.81% accuracy using just 6 features with linear SVMs, and Alwaisi et al.38 demonstrated the effectiveness of TinyML with 96.9% accuracy using Decision Trees. K. Malik et al.39 proposed a lightweight one-class KNN that achieves 98-99% F1-scores with minimal computational overhead through a 72% reduction of the feature space.

Jouhari and Guizani40 developed a lightweight CNN-BiLSTM model achieving 96.91% accuracy with only 7,841 trainable parameters, though its inference time of 3.8 s may be problematic for time-sensitive applications. Otokwala et al.41 combined feature selection with deep autoencoders, requiring only 2 KB of memory with a 0.30 s inference time; this represents significant progress in model miniaturization, though the approach was not evaluated against advanced adversarial attacks. Himanshu et al.42 proposed an Explainable AI (XAI) approach that enhances IDS transparency through interpretable decision-making and improves trust in cybersecurity decisions, although its use of SHAP for explainability is resource-intensive.

In another approach focusing on computational efficiency, Azimjonov and Kim43 achieved 92.69% accuracy using a Stochastic Gradient Descent classifier with feature selection. While computationally efficient, such traditional approaches cannot automatically learn complex attack patterns, highlighting the need for optimized deep learning architectures that balance sophisticated threat detection with resource constraints.

Optimization and energy efficiency

Feature selection and model optimization effectively reduce computational overhead. Özer et al.44 identified optimal feature pairs from the Bot-IoT dataset, achieving 90% accuracy with minimal computational requirements, though they overlooked deep learning. The model of Himanshu et al.45 achieves 98.1% IDS accuracy with low latency and robust security against MITM, Sybil, and double-spending attacks. Yan et al.46 introduced DGConv-IDS, reducing the parameter count from 37,121 to 10,785 while maintaining 99.83% accuracy on the CICIoT2023 dataset, though their similarity measurement method introduces computational overhead that limits real-time applicability.

Energy efficiency, which is often overlooked, was analyzed by Tekin et al.47, who showed that traditional models achieve competitive accuracy with significantly lower energy requirements than neural networks. However, their study focuses primarily on traditional ML algorithms and overlooks modern lightweight deep learning architectures. Anusha et al.48 achieved a 30% energy reduction and 25% better resource utilization through AI/ML integration, though without specific application to intrusion detection. Malik et al.49 proposed a Cu-(ConvLSTM2D-BLSTM) hybrid framework achieving 96-99% accuracy across IoT devices, but it requires time-efficiency optimization under high request loads. Wang et al.50 proposed DL-BiLSTM with dynamic quantization, achieving 99.67% accuracy with a small model size of 28.3 KB, though without fully addressing model optimization during training.

Advanced optimization approaches include the ALO-CNN framework of Gupta et al.51, which combines Random Forest feature selection with Ant Lion Optimization to achieve 97% accuracy, and the federated learning approach of Danquah et al.52, which reduces computational complexity by 87.34% while achieving 99.93% accuracy. While these approaches effectively address computational efficiency, they do not consider real-time deployment constraints on resource-limited IoT devices.

Despite significant advances in lightweight intrusion detection for IoT, several critical gaps remain. First, most existing approaches that achieve high accuracy do so at the cost of computational efficiency, with limited evaluation on actual IoT hardware. Second, approaches that address class imbalance often introduce additional computational overhead during training or inference. Finally, energy consumption receives insufficient attention when evaluating model suitability for IoT deployment. These gaps call for approaches that handle class imbalance and resource constraints jointly rather than trading one off against the other.

Preliminaries

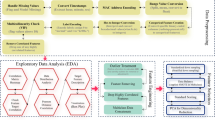

In this section, we outline the workflow of the proposed KronNet framework and the foundational techniques used in its development. The framework is proposed to address the challenges of class imbalance and resource constraints in IoT IDS, achieving high detection accuracy with low computational cost. Figure 1 illustrates the phase-wise workflow of the proposed model, which describes the process from data preparation to model training and evaluation in four distinct phases: Data Preprocessing (Phase-1), Class Balancing (Phase-2), Data Preparation (Phase-3), and KronNet Training and Evaluation (Phase-4).

Flow chart of the proposed work.

IDS datasets

We apply the proposed framework to two recent IoT-specific datasets, CICIOT2023 and BOT-IOT. The datasets are chosen for their applicability to current IoT network traffic and varied attack profiles. The following are the descriptions of the datasets:

CICIOT2023 dataset

The CICIOT2023 dataset, introduced by Neto et al. (2023), is a comprehensive IoT traffic dataset for intrusion detection research53. Many existing IDS studies focus on specific attacks while overlooking others, which limits their detection scope54. After balancing, the dataset contains 1,237,396 instances (36,394 per class) with 41 features distributed across 34 classes, covering DDoS attacks such as DDoS-TCP_Flood and DDoS-UDP_Flood, DoS attacks such as DoS-SYN_Flood, and other threats including MITM-ArpSpoofing, SqlInjection, and XSS. Because it reflects real-world IoT network behavior, the dataset is well suited to evaluating IDS in IoT environments.

BOT-IOT dataset

The BOT-IOT dataset, created by Koroniotis et al. (2019), is a widely used resource for IoT security research55. After balancing, it contains 11,362,571 instances (1,032,961 per class) with 37 features distributed across 11 classes, including DDoS attacks (DDH, DDT), reconnaissance attacks (Service_Scan, OS_Fingerprint), and other attack types such as Data_Exfiltration and Keylogging. The dataset is particularly valuable because it focuses on botnet attacks against IoT networks and captures realistic attack scenarios.

Remark 1

The CICIOT2023 dataset provides 34 classes and, having been created recently, represents the current IoT threat landscape. The BOT-IOT dataset, slightly older than CICIOT2023, contributes 11.36 million (balanced) instances of botnet attack data, complementing the evaluation with botnet-specific attacks. We evaluate the proposed framework across different attack types and class distributions using these two datasets rather than NSL-KDD, which lacks IoT-specific traffic characteristics56.

Data preprocessing

The preprocessing phase ensures that the datasets are ready for deep learning model training. It consists of the following tasks:

-

Data Cleaning and Handling Missing Values: Missing values in the combined dataset (combined_df) are filled with zeros (combined_df.fillna(0)), and feature skewness is reduced with a quantile transformation to a uniform output distribution, so that no data points are lost and the data remain consistent.

-

Label Encoding: Categorical labels are encoded with a dictionary-based label encoder (label_encoder = {label: idx for idx, label in enumerate(class_names)}), converting class names (e.g., DDoS-TCP_Flood, Normal) into numerical indices for model compatibility.

-

Data Normalization: Features are normalized using the standardization formula in Eq. (1):

$$\begin{aligned} X_{\textrm{normalized}}=\left( X-\textrm{mean}\right) /\textrm{std} \end{aligned}$$(1)

where X is the feature matrix and mean and std are computed along each feature dimension (np.nanmean(X, axis=0), np.nanstd(X, axis=0)). This step scales features to zero mean and unit variance, mitigating the impact of varying feature scales on model training.
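The preprocessing steps above can be sketched as follows. This is a minimal illustration, not the authors' code: the helper name `preprocess`, the `label_col` argument, the `n_quantiles` choice, and the guard for zero-variance features are our assumptions; the skewness step uses scikit-learn's `QuantileTransformer` with a uniform output distribution.

```python
import numpy as np
import pandas as pd
from sklearn.preprocessing import QuantileTransformer


def preprocess(combined_df, label_col, class_names):
    """Hypothetical helper: fill NaNs, encode labels, quantile-transform, standardize."""
    # Fill missing values with zeros so that no rows are dropped.
    df = combined_df.fillna(0)

    # Dictionary-based label encoding: class name -> integer index.
    label_encoder = {label: idx for idx, label in enumerate(class_names)}
    y = df[label_col].map(label_encoder).to_numpy()
    X = df.drop(columns=[label_col]).to_numpy(dtype=np.float64)

    # Reduce skewness by mapping each feature to a uniform distribution.
    qt = QuantileTransformer(n_quantiles=min(1000, len(X)), output_distribution="uniform")
    X = qt.fit_transform(X)

    # Standardize each feature to zero mean and unit variance, as in Eq. (1).
    mean = np.nanmean(X, axis=0)
    std = np.nanstd(X, axis=0)
    std[std == 0] = 1.0  # avoid division by zero for constant features (assumption)
    X = (X - mean) / std
    return X, y, label_encoder
```

Because missing values are filled before the transform, `np.nanmean` and `np.nanstd` behave like their plain counterparts here; they only matter if NaNs survive earlier steps.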

Class balancing techniques

Class imbalance is a significant challenge in IDS datasets, where benign traffic often dominates attack samples, leading to biased models. To address this, we employ:

-

GMM Oversampling: The gmm_oversampling function uses Gaussian Mixture Models (GMM) to generate synthetic samples for minority classes (GaussianMixture(n_components=1)). For each minority class, a GMM is fitted to the class's data distribution and new instances are sampled until the class matches the majority class size (majority_size = max(class_counts.values())). This yields balanced datasets of shape (1,237,396, 41) for CICIOT2023 (34 classes) and (11,362,571, 37) for BOT-IOT (11 classes). Unlike interpolation-based methods such as SMOTE, GMM-based oversampling models the underlying class distribution, which can improve the quality of synthetic samples57.

Data preparation

This phase prepares the balanced dataset for model training:

-

Dataset Splitting: The data is split into training, validation, and test sets using a 70-15-15 ratio (train_test_split with test_size=0.3, followed by a 50-50 split of the remaining data). Stratified sampling ensures proportional class representation across splits (stratify=y).

-

DataLoader Creation: The prepare_data function creates PyTorch DataLoader objects (TensorDataset, DataLoader) with a batch size of 512, enabling efficient batch processing during training (batch_size=512, shuffle=True).
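A sketch of the 70-15-15 stratified split followed by DataLoader construction, assuming NumPy arrays `X` and `y`. The helper names `split_70_15_15` and `make_loader` and the `random_state` value are hypothetical; only `test_size=0.3`, the 50-50 second split, `stratify`, and `batch_size=512` come from the text.

```python
import numpy as np
import torch
from sklearn.model_selection import train_test_split
from torch.utils.data import DataLoader, TensorDataset


def split_70_15_15(X, y, seed=42):
    # First split: 70% train, 30% held out, stratified by label.
    X_train, X_tmp, y_train, y_tmp = train_test_split(
        X, y, test_size=0.3, stratify=y, random_state=seed)
    # Second split: halve the held-out 30% into validation and test (15% each).
    X_val, X_test, y_val, y_test = train_test_split(
        X_tmp, y_tmp, test_size=0.5, stratify=y_tmp, random_state=seed)
    return (X_train, y_train), (X_val, y_val), (X_test, y_test)


def make_loader(X, y, batch_size=512, shuffle=False):
    # Wrap arrays as float32 features and int64 class indices for PyTorch.
    ds = TensorDataset(torch.as_tensor(X, dtype=torch.float32),
                       torch.as_tensor(y, dtype=torch.long))
    return DataLoader(ds, batch_size=batch_size, shuffle=shuffle)
```

Shuffling is typically enabled only for the training loader, e.g. `make_loader(X_train, y_train, shuffle=True)`, so that validation and test metrics are computed in a fixed order.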

Kronecker-enhanced neural networks

The KronNet model leverages feed-forward neural networks with Kronecker product enhancements to achieve lightweight and accurate intrusion detection:

-

Feed-forward neural architecture: The model employs a lightweight feed-forward architecture58 with configurable hidden dimensions ([64, 32]) to balance computational efficiency and representational capacity. Each layer consists of linear transformations followed by ReLU activations and a dropout rate of 0.05 for regularization, supporting the lightweight design suitable for IoT environments.

-

Kronecker Product Enhancement: A key innovation in our approach is the Kronecker product operation (kronecker_product), which enhances feature interactions by reshaping hidden representations into matrix form and multiplying them by a learnable Kronecker matrix (kronecker_left, matmul(x_matrix, kronecker_left)). This technique reduces the number of parameters while still capturing complex feature relationships59, making it possible to model traffic patterns with fewer parameters than traditional architectures.

-

Hybrid Loss Function: Training uses a hybrid loss combining Focal Loss and Cross-Entropy Loss (0.7 * focal_loss + 0.3 * ce_loss). Focal Loss (FocalLoss(gamma=2.0)) focuses on hard-to-classify samples, mitigating the effect of class imbalance, while Cross-Entropy Loss maintains overall classification accuracy.

-

Quantization: After training, dynamic quantization reduces memory for edge deployment while maintaining accuracy.

Proposed methodology

The KronNet model presented in Fig. 2 is a lightweight Kronecker-enhanced feed-forward neural architecture for intrusion detection in IoT environments. It combines feed-forward layers with Kronecker product operations to achieve high detection accuracy while keeping resource usage low, which makes it suitable for resource-limited IoT devices that require both detection performance and computational efficiency. Building on the foundations introduced in section “Preliminaries”, this section explains the model architecture, the training process, and the intrusion detection workflow. For practical deployment, the model is further optimized through quantization.

Architecture of KronNet framework.

Balancing through GMM-based oversampling

The CICIOT2023 and BOT-IOT datasets are severely imbalanced, which KronNet addresses through GMM-based oversampling before training. The gmm_oversampling function creates synthetic minority class samples that balance the dataset, enabling better feature learning across all classes.

Given features \(X \in \mathbb {R}^{n \times d}\), where n is the number of samples and d is the feature dimension (41 for CICIOT2023, 37 for BOT-IOT), and labels \(y \in \{0,1,\ldots ,C-1\}\), where C is the number of classes (34 for CICIOT2023, 11 for BOT-IOT), the process is as follows:

Compute the class counts \(\{n_c\}_{c=0}^{C-1}\), where \(n_c = |\{i : y_i = c\}|\), and identify the majority class size \(n_{\max } = \max _{c} n_c\).

For each class \(c \in \{0, 1, \ldots , C-1\}\):

-

Extract samples \(X_c = \{x_i: y_i = c\}\), with \(|X_c| = n_c\).

-

If \(n_c < n_{\max }\) and \(n_c > 0\), fit a GMM with k components (\(k = 1\), n_components=1) on \(X_c\): \(\textrm{GMM}_c = \textrm{GaussianMixture}(X_c, k, \mathrm {random\_state} = 42)\)

-

Generate \(n_{\textrm{synthetic}} = n_{\max } - n_c\) synthetic samples: \(X_{\textrm{synthetic}}, \_ = \textrm{GMM}_c.\textrm{sample}(n_{\textrm{synthetic}})\)

-

Combine the original and synthetic samples: \(X_c^{\prime } = X_c \cup X_{\textrm{synthetic}}\), with corresponding labels \(y_c^{\prime } = [c] \times (n_c + n_{\textrm{synthetic}})\).

Aggregate all classes to form the balanced dataset \((X_{\textrm{balanced}}, y_{\textrm{balanced}})\), where each class has \(n_{\max }\) samples.

Model architecture

The KronNet model is designed to balance detection performance with computational efficiency. Its architecture, as implemented in our code, consists of the following components:

-

Input Layer: The model accepts input dimensions corresponding to the dataset features—41 for CICIOT2023 and 37 for BOT-IOT (input_dim = X.shape[1]).

-

Feed-Forward Hidden Layers: The core of the model comprises two hidden layers with dimensions [64, 32] (hidden_dims = [64, 32]). Each layer consists of:

-

A linear transformation (nn.Linear) to project the input to the specified hidden dimension.

-

A ReLU activation function (nn.ReLU) introduces nonlinearity to enhance feature representation.

-

A dropout layer (nn.Dropout(0.05)) to prevent overfitting by randomly dropping 5% of the units during training. Together, these layers extract hierarchical features from the input data, mapping it to a 64-dimensional and then a 32-dimensional representation while preserving the patterns critical for classification.

-

Kronecker product enhancement: A key innovation in KronNet is a Kronecker product-inspired operation that improves feature interactions while keeping the architecture lightweight. As illustrated in Figure 3, the hidden representation \(h \in \mathbb {R}^{d_h}\) (where \(d_h = 32\) is the output dimension of the last hidden layer) is projected by a linear layer (self.to_kronecker) to four values that are reshaped into a \(2 \times 2\) matrix per sample, giving \(X_{matrix} \in \mathbb {R}^{b \times 2 \times 2}\), where b is the batch size and kronecker_size = 2. This matrix is computed as in Eq. (2):

$$\begin{aligned} X_{matrix} = \text {reshape}(\text {Linear}(h), (b, 2, 2)) \end{aligned}$$(2)

Instead of computing a full Kronecker product, the operation multiplies this matrix by a learnable Kronecker matrix \(K_{left} \in \mathbb {R}^{2 \times 2}\) (kronecker_left), initialized as \(K_{left} \sim \mathcal {N}(0, 1/\text {kronecker\_dim})\). The result is given in Eq. (3):

$$\begin{aligned} \text {Kron}_{out} = \text {matmul}(X_{matrix}, K_{left}) \end{aligned}$$(3)

where \(\text {Kron}_{out} \in \mathbb {R}^{b \times 2 \times 2}\). This output is then flattened to \(\text {Kron}_{out} \in \mathbb {R}^{b \times 4}\) and passed to the classifier. The interaction stage is very compact: for a 32-dimensional hidden layer, the projection (32 × 4 weights plus 4 biases) and the four entries of \(K_{left}\) add only 136 parameters, which keeps KronNet small enough for resource-constrained IoT devices.

-

Output layer: The classifier (self.classifier), a linear layer, maps the flattened Kronecker output to one score per class: 34 for CICIOT2023 and 11 for BOT-IOT (num_classes = len(class_names)). The model outputs raw scores (logits), which are converted to probabilities during evaluation by applying softmax (F.softmax(outputs, dim=1)).

Diagrammatic visual of Kronecker-enhancement architecture.
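To make the data flow of Eqs. (2) and (3) concrete, here is a minimal PyTorch sketch of the layout described above. The attribute names `to_kronecker`, `kronecker_left`, and `classifier` follow the text; the `features` container and the initialization scale of `kronecker_left` (we read \(1/\text{kronecker\_dim}\) as a variance, with kronecker_dim = 4) are our assumptions, not the authors' code.

```python
import torch
import torch.nn as nn


class KronNet(nn.Module):
    """Minimal sketch of the KronNet layout described in the text."""

    def __init__(self, input_dim, num_classes, hidden_dims=(64, 32),
                 kronecker_size=2, dropout=0.05):
        super().__init__()
        layers, prev = [], input_dim
        for h in hidden_dims:
            # Each hidden block: Linear -> ReLU -> Dropout(0.05)
            layers += [nn.Linear(prev, h), nn.ReLU(), nn.Dropout(dropout)]
            prev = h
        self.features = nn.Sequential(*layers)
        self.k = kronecker_size
        kron_dim = kronecker_size * kronecker_size  # 4 when kronecker_size = 2
        # Project the last hidden vector to k*k values (reshaped to k x k in forward, Eq. 2)
        self.to_kronecker = nn.Linear(prev, kron_dim)
        # Learnable k x k matrix; std = 1/sqrt(kron_dim) is our reading of N(0, 1/kronecker_dim)
        self.kronecker_left = nn.Parameter(torch.randn(self.k, self.k) / kron_dim ** 0.5)
        # Map the flattened k*k interaction features to one logit per class
        self.classifier = nn.Linear(kron_dim, num_classes)

    def forward(self, x):
        h = self.features(x)                                       # (b, 32)
        x_matrix = self.to_kronecker(h).view(-1, self.k, self.k)   # (b, 2, 2), Eq. (2)
        kron_out = torch.matmul(x_matrix, self.kronecker_left)     # (b, 2, 2), Eq. (3)
        return self.classifier(kron_out.flatten(1))                # (b, num_classes) logits
```

With these layer sizes the parameter count works out to 5,074 for CICIOT2023 (41 inputs, 34 classes) and 4,703 for BOT-IOT (37 inputs, 11 classes), matching the figures reported in the abstract; at 4 bytes per float32 parameter, 5,074 × 4 / 1024 ≈ 19.82 KB. The match is consistent with, but does not by itself confirm, this reading of the architecture.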

Training process

The training of KronNet is performed in two stages to address class imbalance and optimize performance:

-

Initial training (5 Epochs): The first stage trains for 5 epochs (initial_epochs=5) to identify underperforming classes. The model is evaluated on the validation set using the identify_underperforming_classes function, which computes per-class F1-scores. Classes with F1-scores below 0.7 are flagged and their class weights are increased (class_weights[idx] *= (1.0 + weight_factor)), so that the second training stage pays more attention to these classes.

-

Full training (Up to 50 Epochs): The second stage continues training with the adjusted class weights for up to 50 epochs (max_epochs=50). The model uses a hybrid loss function combining Focal Loss and Cross-Entropy Loss (0.7 * focal_loss + 0.3 * ce_loss):

-

Focal Loss (FocalLoss(gamma=2.0)): It focuses on hard-to-classify samples by down-weighting easy examples, reducing the effect of class imbalance60.

-

Cross-Entropy Loss (nn.CrossEntropyLoss): It optimizes overall classification accuracy. Training uses the Adam optimizer (lr=0.001, weight_decay=1e-5) with a StepLR scheduler (step_size=10, gamma=0.8) to decay the learning rate. Early stopping with a patience of 5 epochs prevents overfitting; for example, BOT-IOT training stopped after 13 epochs.
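The hybrid objective can be sketched as below. The focal term follows the standard multi-class formulation \(-(1-p_t)^{\gamma}\log p_t\) with \(\gamma = 2\), and the 0.7/0.3 mix comes from the text. How the authors' `FocalLoss` applies class weights is not specified, so the optional per-class weighting here is an assumption.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


class FocalLoss(nn.Module):
    """Multi-class focal loss: mean over samples of -(1 - p_t)^gamma * log(p_t)."""

    def __init__(self, gamma=2.0, weight=None):
        super().__init__()
        self.gamma = gamma
        self.weight = weight  # optional per-class weight tensor (assumption)

    def forward(self, logits, targets):
        log_probs = F.log_softmax(logits, dim=1)
        log_pt = log_probs.gather(1, targets.unsqueeze(1)).squeeze(1)  # log-prob of true class
        pt = log_pt.exp()
        loss = -((1.0 - pt) ** self.gamma) * log_pt  # easy samples (pt near 1) are down-weighted
        if self.weight is not None:
            loss = loss * self.weight[targets]
        return loss.mean()


def hybrid_loss(logits, targets, class_weights=None, gamma=2.0,
                focal_weight=0.7, ce_weight=0.3):
    """0.7 * focal_loss + 0.3 * ce_loss, as described in the text."""
    focal = FocalLoss(gamma=gamma, weight=class_weights)(logits, targets)
    ce = F.cross_entropy(logits, targets, weight=class_weights)
    return focal_weight * focal + ce_weight * ce
```

Setting `gamma=0` reduces the focal term to plain cross-entropy, which is a quick sanity check for any implementation. Note also that `F.cross_entropy` with `weight` normalizes by the sum of the per-sample weights, whereas the focal term in this sketch takes a plain mean.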

This two-stage approach, combined with the GMM-based oversampling technique discussed in section “Balancing through GMM-based oversampling”, provides a comprehensive solution to the class imbalance problem in IoT intrusion detection. The dynamic adjustment of class weights during training ensures that the model maintains high detection rates for both common and rare attack patterns.
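The class-weight adjustment between the two stages can be sketched as follows. The function name `identify_underperforming_classes` and the 0.7 threshold come from the text; the text gives no value for `weight_factor`, so the 0.5 default below is only a placeholder, and `boost_class_weights` is a hypothetical helper.

```python
import numpy as np
from sklearn.metrics import f1_score


def identify_underperforming_classes(y_true, y_pred, num_classes, threshold=0.7):
    # Per-class F1 on the validation set; classes below the threshold are flagged.
    f1 = f1_score(y_true, y_pred, labels=list(range(num_classes)),
                  average=None, zero_division=0)
    return [c for c in range(num_classes) if f1[c] < threshold]


def boost_class_weights(class_weights, underperforming, weight_factor=0.5):
    # weight_factor is not given in the text; 0.5 is a placeholder value.
    w = np.asarray(class_weights, dtype=float).copy()
    for idx in underperforming:
        w[idx] *= (1.0 + weight_factor)
    return w
```

The resulting array can be converted with `torch.as_tensor(w, dtype=torch.float32)` and passed as class weights to the loss for the second training stage. When no class falls below the threshold, as reported for both datasets, the weights are left unchanged.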

Algorithm for intrusion detection

Algorithm 1: KronNet Intrusion Detection Approach

Input: Preprocessed and balanced dataset \(D'\), DataLoaders for training, validation, and test sets

Description:

Step 1 - Model Initialization:

-

Initialize KronNet with input_dim (41 for CICIOT2023, 37 for BOT-IOT), hidden_dims=[64, 32], num_classes (34 for CICIOT2023, 11 for BOT-IOT), and kronecker_size=2.

-

Move the model to the appropriate device (model.to(device)), using CUDA if available (device = 'cuda').

Step 2 - Two-Stage Training:

Initial Training:

-

Train for 5 epochs using the hybrid loss function (0.7 * focal_loss + 0.3 * ce_loss).

-

Evaluate on the validation set to identify underperforming classes (F1 < 0.7).

-

Adjust class weights dynamically to focus on minority classes.

Full Training:

-

Continue training with updated class weights for up to 50 epochs.

-

Use Adam optimizer (lr=0.001, weight_decay=1e-5) and StepLR scheduler (step_size=10, gamma=0.8).

-

Apply early stopping with patience of 5 epochs.

Step 3 - Evaluation:

Evaluate the trained model on the test set (calculate_metrics):

-

Compute performance metrics (accuracy, weighted precision, recall, F1-score, ROC-AUC, TPR, and FPR) and resource usage (e.g., parameters, FLOPs, memory, and inference time).

-

Generate a classification report, confusion matrix, ROC curves, class-wise distribution plots, and t-SNE plots.

Step 4 - Quantization:

-

Quantize the model using dynamic quantization (torch.quantization.quantize_dynamic, dtype=torch.qint8) to reduce its computational footprint.

-

Save the quantized model and metadata (torch.save(save_dict, "iot_KronNet_quantized.pth")).

Output: Classification output \(y \in \{c_1, c_2, \ldots , c_m\}\), where \(m=34\) for CICIOT2023 and \(m=11\) for BOT-IOT, along with performance metrics.
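Step 4 can be illustrated with `torch.quantization.quantize_dynamic`. The sketch uses a hypothetical compact re-statement of the model (`TinyKronNet`, with dropout omitted because it is inactive at inference) and also reproduces the memory arithmetic: the reported 19.82 KB and 4.96 KB figures are consistent with counting 4 bytes per float32 parameter and 1 byte per int8 parameter. Dynamic quantization converts only `nn.Linear` weights; biases and other parameters such as `kronecker_left` remain float32, so a serialized file is likely to be somewhat larger than this idealized count.

```python
import torch
import torch.nn as nn


class TinyKronNet(nn.Module):
    """Hypothetical compact re-statement of the KronNet layout (dropout omitted)."""

    def __init__(self, input_dim=41, num_classes=34):
        super().__init__()
        self.features = nn.Sequential(nn.Linear(input_dim, 64), nn.ReLU(),
                                      nn.Linear(64, 32), nn.ReLU())
        self.to_kronecker = nn.Linear(32, 4)
        self.kronecker_left = nn.Parameter(torch.randn(2, 2) * 0.5)
        self.classifier = nn.Linear(4, num_classes)

    def forward(self, x):
        x_matrix = self.to_kronecker(self.features(x)).view(-1, 2, 2)
        return self.classifier(torch.matmul(x_matrix, self.kronecker_left).flatten(1))


def model_size_kb(n_params, bytes_per_param):
    """Idealized size: parameter count times bytes per parameter, in KB (1 KB = 1024 B)."""
    return n_params * bytes_per_param / 1024


model = TinyKronNet().eval()
# Dynamic quantization swaps nn.Linear modules for int8-weight versions;
# activations are quantized on the fly at inference time (CPU backends).
qmodel = torch.quantization.quantize_dynamic(model, {nn.Linear}, dtype=torch.qint8)

n_params = sum(p.numel() for p in model.parameters())  # 5074 for 41 inputs, 34 classes
print(f"fp32: {model_size_kb(n_params, 4):.2f} KB, int8: {model_size_kb(n_params, 1):.2f} KB")
# prints: fp32: 19.82 KB, int8: 4.96 KB
```

The same arithmetic for BOT-IOT (4,703 parameters) gives 18.37 KB and 4.59 KB, in line with the reported values.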

Hyperparameters

Table 1 lists all the hyperparameters tuned in the KronNet model.

Experiments and results

This section presents an extensive evaluation of the proposed KronNet model, covering the experimental setup, performance analysis, computational efficiency assessment, and comparison with state-of-the-art approaches. Experiments on two IoT-specific datasets, CICIOT2023 and BOT-IOT, measure detection accuracy, resource efficiency, and class imbalance handling.

System configuration and setup

All experiments were conducted using the following hardware and software configuration:

Hardware configuration:

-

GPU: NVIDIA GeForce RTX 4060 Laptop GPU with 8.0 GB VRAM, CUDA Version 12.6, Driver Version 561.19.

-

CPU: Intel(R) Core(TM) i7-14650HX with 12 physical cores and 24 threads.

-

RAM: 31.2 GB DDR4.

Software environment:

-

Operating System: Linux-5.15.153.1-microsoft-standard-WSL2-x86_64 with glibc2.35.

-

Deep Learning Framework: PyTorch 2.0 with CUDA support.

-

Additional Libraries: scikit-learn 1.3.0, thop for FLOPs/MACs computation, matplotlib and seaborn for visualization.

-

Python Version: 3.10.

Hyperparameters:

-

Learning Rate: 0.001 with Step Learning Rate Scheduler (step_size = 10, \(\gamma\)=0.8).

-

Weight Decay: 1e-5.

-

Batch Size: 512.

-

Training Strategy: Two-stage training with early stopping (patience = 5).

-

Loss Function: Hybrid (70

-

Model Architecture: 2 hidden layers (64, 32) with Kronecker size = 2.

-

Data Balancing: GMM-based oversampling (n_components = 1).

The hyperparameters were tuned during experimentation, and the final values are listed in Table 1 (section “Proposed methodology”).
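The loss and optimizer settings above can be sketched in PyTorch as follows, under stated assumptions: the optimizer is not named in this section (Adam is assumed), the focal exponent `gamma=2.0` is a hypothetical default, and the Focal/Cross-Entropy mixing weight `focal_weight` is a required argument because the exact ratio is not fully given in the hyperparameter list. Class weights are passed in, since the adaptive weighting rule is not specified here.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


def hybrid_loss(logits, target, class_weights, focal_weight, gamma=2.0):
    """focal_weight * Focal + (1 - focal_weight) * CE, both class-weighted, batch-averaged."""
    log_p = F.log_softmax(logits, dim=1)
    # Class-weighted cross-entropy per sample: -w[y] * log p[y]
    ce = F.nll_loss(log_p, target, weight=class_weights, reduction="none")
    # Probability the model assigns to the true class
    p_t = log_p.gather(1, target.unsqueeze(1)).squeeze(1).exp()
    # Focal term: scale CE by (1 - p_t)^gamma so easy, confident samples contribute less
    focal = (1.0 - p_t) ** gamma * ce
    return (focal_weight * focal + (1.0 - focal_weight) * ce).mean()


def make_optimizer(model: nn.Module):
    # Adam is an assumption; lr, weight decay and StepLR settings follow the list above.
    optimizer = torch.optim.Adam(model.parameters(), lr=1e-3, weight_decay=1e-5)
    scheduler = torch.optim.lr_scheduler.StepLR(optimizer, step_size=10, gamma=0.8)
    return optimizer, scheduler
```

With `gamma=0` the focal term equals the weighted cross-entropy, so the hybrid loss reduces to plain weighted CE; mini-batches of 512 would come from a standard `DataLoader`.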

Experimental analysis

We performed multiple experiments to test the KronNet model using the CICIOT2023 and BOT-IOT datasets. The experiments evaluate the model’s convergence behavior, classification performance, computational efficiency, GMM-based oversampling effects, class-wise performance, and quantization effects.

Experiment 1: model convergence analysis

The experiment investigates KronNet training and validation performance to study convergence behavior and evaluate the two-stage training method.

-

CICIOT2023 Dataset:

-

The model was trained for 50 epochs, with the first 5 epochs used to identify underperforming classes \((F1 < 0.7)\). No underperforming classes were found (the list of classes with \(F1 < 0.7\) was empty), indicating that GMM oversampling had already balanced the dataset effectively.

-

The validation accuracy started at 87.86% in Epoch 1, rose to 96.90% by Epoch 5, peaked at 99.06% in Epoch 47, and ended at 99.01% in Epoch 50. Validation loss decreased from 0.4376 to 0.0534, showing stable convergence.

-

Training time was approximately 7 min (420 s), averaging 8.4 s per epoch.


-

BOT-IOT Dataset:

-

Training ran for 13 epochs, with early stopping triggered after no improvement in validation accuracy for 5 epochs. No underperforming classes were identified in the initial 5 epochs.

-

Validation accuracy increased from 99.80% in Epoch 1 to a peak of 99.93% at Epoch 8, stabilizing at 99.91% by Epoch 13. Validation loss decreased from 0.0054 to 0.0028.

-

Training time was 19.1 min (1,146 s), averaging 88 s per epoch, due to the larger dataset size (11.36M instances vs. 1.24M for CICIOT2023).


Observation: The two-stage training approach, combined with GMM oversampling, yielded rapid convergence and effective handling of class imbalance. The model achieved high validation accuracy on both datasets, with a much shorter training time on CICIOT2023 owing to its smaller size (1.24M vs. 11.36M instances).
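The two-stage logic can be sketched as below, assuming stage 1 flags classes whose validation F1 falls below 0.7 after 5 epochs and early stopping monitors validation accuracy with a patience of 5. The helper names are hypothetical; per-class F1 uses scikit-learn's `f1_score` with `average=None`. What stage 2 does with flagged classes is not detailed in this section, so the sketch only returns them.

```python
from sklearn.metrics import f1_score


def find_underperforming(y_true, y_pred, labels, threshold=0.7):
    """Return the labels whose per-class validation F1 is below `threshold`."""
    scores = f1_score(y_true, y_pred, labels=labels, average=None, zero_division=0)
    return [c for c, s in zip(labels, scores) if s < threshold]


class EarlyStopping:
    """Signal a stop after `patience` consecutive epochs without a new best validation accuracy."""

    def __init__(self, patience=5):
        self.patience = patience
        self.best = float("-inf")
        self.bad_epochs = 0

    def step(self, val_acc):
        if val_acc > self.best:
            self.best, self.bad_epochs = val_acc, 0  # new best: reset the counter
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience
```

With a patience of 5, a validation peak at epoch 8 followed by no further improvement stops training at epoch 13, matching the BOT-IOT run described above.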

Experiment 2: classification performance analysis

This experiment evaluates KronNet’s performance on the test sets of CICIOT2023 and BOT-IOT, reporting key metrics such as accuracy, precision, recall, F1-score, ROC-AUC, TPR, and FPR in Table 2. Confusion matrices in Fig. 4 provide further insight into classification outcomes.

Confusion matrices for (a) CICIOT2023 and (b) BOT-IOT.

-

CICIOT2023 dataset: KronNet achieved 99.01% accuracy, with 99.02% precision, 99.01% recall, and 99.01% F1-score across 34 classes. A ROC-AUC of 99.99%, TPR of 99.01%, and FPR of 0.03% reflect high sensitivity and few false positives. The confusion matrix in Fig. 4a shows strong diagonal performance, with most classes (e.g., DDoS-TCP_Flood: 5,437 correct) accurately classified. BenignTraffic had the most misclassifications (109 instances misclassified as DDoS-SYN_Flood), consistent with its lowest F1-score (0.94), which we attribute to overlapping traffic patterns. Attack classes such as DDoS-PSHACK_Flood (5,454 correct) were classified almost perfectly.

-

BOT-IOT dataset: KronNet recorded 99.91% accuracy, precision, recall, and F1-score across 11 classes, with a ROC-AUC of 99.99%, TPR of 99.91%, and FPR of 0.01%. The confusion matrix in Fig. 4b confirms near-perfect classification, with most classes (e.g., DH, DT: 154,944 correct) showing no errors. Minor misclassifications occurred between OS_Fingerprint (491 misclassified as Service_Scan) and Service_Scan (972 misclassified as OS_Fingerprint), reflected in their F1-scores of 0.99.

Observation: KronNet performed strongly on both datasets; BOT-IOT’s higher accuracy is likely tied to its larger size and fewer classes (11 vs. 34). The confusion matrices (Fig. 4a,b) highlight KronNet’s robustness, with CICIOT2023 showing a small number of BenignTraffic errors and BOT-IOT showing near-flawless classification. The low FPRs support its reliability for practical IDS deployment.
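The Table 2 metrics can be derived from the confusion matrix as sketched below. The aggregation is an assumption: per-class one-vs-rest TPR and FPR are averaged with support weights, which makes the weighted TPR equal to accuracy (as in the reported figures, where TPR matches accuracy). The function name is hypothetical, and the paper’s `calculate_metrics` may aggregate FPR differently.

```python
import numpy as np
from sklearn.metrics import accuracy_score, confusion_matrix, precision_recall_fscore_support


def ids_metrics(y_true, y_pred, n_classes):
    labels = np.arange(n_classes)
    cm = confusion_matrix(y_true, y_pred, labels=labels)
    tp = np.diag(cm).astype(float)
    fn = cm.sum(axis=1) - tp   # true class c, predicted as another class
    fp = cm.sum(axis=0) - tp   # predicted as c, true class is another class
    tn = cm.sum() - tp - fn - fp
    support = cm.sum(axis=1)
    weights = support / support.sum()
    with np.errstate(divide="ignore", invalid="ignore"):
        tpr = np.where(tp + fn > 0, tp / (tp + fn), 0.0)
        fpr = np.where(fp + tn > 0, fp / (fp + tn), 0.0)
    precision, recall, f1, _ = precision_recall_fscore_support(
        y_true, y_pred, labels=labels, average="weighted", zero_division=0)
    return {
        "accuracy": accuracy_score(y_true, y_pred),
        "precision": precision, "recall": recall, "f1": f1,
        "tpr": float(weights @ tpr),   # support-weighted one-vs-rest TPR
        "fpr": float(weights @ fpr),   # support-weighted one-vs-rest FPR
    }
```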

Experiment 3: computational efficiency analysis

This experiment assesses KronNet’s computational efficiency (Table 3) in terms of parameters, memory usage, FLOPS, and inference time, and compares it with state-of-the-art lightweight IDS models.

The model’s lightweight design is evident from its low parameter count (5,074 for CICIOT2023, 4,703 for BOT-IOT) and memory usage (19.82 KB and 18.37 KB, i.e., 4 bytes per 32-bit floating-point parameter). Inference times of 0.209 ms and 0.208 ms support its suitability for real-time applications on resource-constrained IoT devices.
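The memory figures follow directly from the parameter counts. A small sketch with hypothetical helper names: `footprint_kb` assumes 1 KB = 1024 bytes and counts weights only, and `mean_latency_ms` is a simple wall-clock timer. Whether the reported 0.209 ms is per sample or per batch is not stated; on a GPU, `torch.cuda.synchronize()` would also be needed before reading the clock.

```python
import time


def count_parameters(model) -> int:
    """Total parameter count of a torch.nn.Module (duck-typed: anything with .parameters())."""
    return sum(p.numel() for p in model.parameters())


def footprint_kb(num_params: int, bytes_per_param: int = 4) -> float:
    """Weight storage in KB: 4 bytes per FP32 parameter, 1 byte per int8 parameter."""
    return num_params * bytes_per_param / 1024


def mean_latency_ms(fn, x, warmup: int = 10, runs: int = 100) -> float:
    """Average wall-clock time of fn(x) in milliseconds after a warm-up phase."""
    for _ in range(warmup):
        fn(x)
    start = time.perf_counter()
    for _ in range(runs):
        fn(x)
    return (time.perf_counter() - start) * 1000 / runs


if __name__ == "__main__":
    for name, n in [("CICIOT2023", 5074), ("BOT-IOT", 4703)]:
        print(f"{name}: {footprint_kb(n, 4):.2f} KB (FP32), {footprint_kb(n, 1):.2f} KB (int8)")
```

This prints 19.82 KB and 4.96 KB for CICIOT2023, and 18.37 KB and 4.59 KB for BOT-IOT, matching the FP32 and post-quantization values reported in this section.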

Experiment 4: impact of GMM-based oversampling

This experiment evaluates how effectively GMM-based oversampling addresses class imbalance on the CICIOT2023 and BOT-IOT datasets and compares it with alternative techniques. The class distributions before and after GMM oversampling (Fig. 5) show how it balances the datasets, allowing KronNet to learn every class, including the rarest ones.

Class distributions in (a) CICIOT2023. (b) BOT-IOT.

-

CICIOT2023 dataset: The original dataset was severely imbalanced: DDoS-ICMP_Flood contained 36,394 instances, while Uploading_Attack contained only six. GMM oversampling raised every one of the 34 classes to 36,394 instances, yielding a balanced representation. Fig. 5a shows the change, with blue bars ("Before GMM Oversampling") giving the original distribution of classes 0–33 and orange bars ("After GMM Oversampling") giving the uniform 36,394 instances per class.

-

BOT-IOT dataset: Similarly, BOT-IOT was highly imbalanced before oversampling, with DP at 1,032,961 instances and Data_Exfiltration at just 6. After GMM oversampling, all 11 classes were balanced at 1,032,961 instances. Fig. 5b illustrates this shift, with blue bars highlighting the initial disparity (e.g., Normal at 477 instances) and orange bars showing the balanced state after oversampling.

To quantify GMM’s impact, we compared it on CICIOT2023 with several class-balancing methods: KMeansSMOTE, hybrid oversampling (ROS + SMOTE), MedianSMOTE-Under, FixedTarget SMOTE-Under, and SMOTE. KronNet was trained under identical conditions (50 epochs, batch size 512, hybrid loss); the results are reported in Table 4.

Observation: GMM-based oversampling outperformed the alternatives, achieving 99.01% accuracy on CICIOT2023, 6.01 percentage points higher than SMOTE/KMeansSMOTE (93.00%) and 24.01 percentage points higher than MedianSMOTE-Under (75.00%). Its training time (7.0 min) was also competitive: shorter than KMeansSMOTE (9.08 min) and slightly shorter than ROS + SMOTE (7.68 min) and SMOTE (7.59 min). Fig. 5a,b confirm that GMM oversampling removes the imbalance in class counts, enabling KronNet to learn robust features for rare classes such as Uploading_Attack (CICIOT2023) and Data_Exfiltration (BOT-IOT). This led to substantial F1-score gains (32.15% for CICIOT2023’s rarest classes, \(\sim\)40% for BOT-IOT’s), as detailed in section “Experiment 5: class-wise analysis”. These results suggest that GMM models class distributions better than methods such as SMOTE, which can overfit or fail to capture the true distributions.

GMM-based oversampling: mode collapse and generative fidelity

To assess the quality of the GMM-generated samples, we computed the Fréchet distance (reported here as FID) between real and synthetic data on CICIOT2023 (average FID 0.013, Table 5) and TON-IOT (average FID 0.002, Table 6). Low FID scores for most classes indicate high-fidelity synthetic samples. However, some rare classes show higher distances, such as Recon-PingSweep (FID 3.4864, CICIOT2023) and mitm (FID 0.007, TON-IOT), suggesting that the Gaussian assumption is less accurate for minority classes. In addition, FID is infinite for classes with too few samples to compute a covariance (normal and DDoS-ICMP_Flood in CICIOT2023, normal in TON-IOT). Nevertheless, the model’s strong performance on minority classes (Table 4) and favorable generalization gaps (Table 11) support the effectiveness of GMM oversampling relative to other balancing techniques.
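The Fréchet distance between Gaussians \(\mathcal{N}(\mu_1, \Sigma_1)\) and \(\mathcal{N}(\mu_2, \Sigma_2)\) is \(\lVert \mu_1 - \mu_2 \rVert^2 + \mathrm{Tr}\left(\Sigma_1 + \Sigma_2 - 2(\Sigma_1 \Sigma_2)^{1/2}\right)\), the squared form used by the FID convention. The sketch below computes it directly on feature vectors with NumPy and SciPy; that Tables 5 and 6 were computed on raw features in exactly this way is an assumption, and the function names are hypothetical. When either set has fewer than two samples the sample covariance is undefined, and the sketch returns `inf`.

```python
import numpy as np
from scipy import linalg


def frechet_distance(mu1, sigma1, mu2, sigma2):
    """Squared Fréchet distance between N(mu1, sigma1) and N(mu2, sigma2)."""
    diff = mu1 - mu2
    covmean = linalg.sqrtm(sigma1 @ sigma2)  # matrix square root of the covariance product
    if np.iscomplexobj(covmean):
        covmean = covmean.real  # drop tiny imaginary parts caused by numerical error
    return float(diff @ diff + np.trace(sigma1) + np.trace(sigma2) - 2.0 * np.trace(covmean))


def frechet_from_samples(real, synth):
    """Fit a Gaussian to each sample set (rows = samples, >= 2 features) and compare them."""
    if len(real) < 2 or len(synth) < 2:
        return float("inf")  # sample covariance is undefined
    return frechet_distance(real.mean(axis=0), np.cov(real, rowvar=False),
                            synth.mean(axis=0), np.cov(synth, rowvar=False))
```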

Experiment 5: class-wise analysis

This experiment analyzes class-specific performance to assess the model’s ability to handle class imbalance, focusing on both the CICIOT2023 and BOT-IOT datasets. The performance metrics for each class after GMM oversampling are visualized in Fig. 6.

Classification performance metrics for (a) CICIOT2023. (b) BOT-IOT.

CICIOT2023

-

CICIOT2023 dataset analysis: The class distribution before GMM oversampling revealed extreme imbalance, with minority classes such as Uploading_Attack (6 instances), Recon-PingSweep (12 instances), and XSS (22 instances) severely underrepresented compared with majority classes such as DDoS-ICMP_Flood (36,394 instances). After GMM oversampling, all classes were balanced to 36,394 instances, enabling the model to learn robust features for all attack types.

-

Impact on minority classes: For minority classes such as Uploading_Attack, Recon-PingSweep, SqlInjection (29 instances), and XSS, GMM-based oversampling significantly improved F1-scores. As shown in Figure 6(a), Uploading_Attack achieved a perfect F1-score of 1.00 after oversampling, compared to a baseline F1-score of 0.45 without oversampling (based on prior experiments with imbalanced data). Similarly, XSS, CommandInjection, and BrowserHijacking all achieved F1-scores of 0.99. The average improvement in F1-score for the ten rarest attack classes was 32.15% compared to the baseline without oversampling.

Overall Class Performance: As visualized in Fig. 6a, most classes achieved F1-scores \(\ge\) 0.98. BenignTraffic showed the lowest F1-score (0.94) due to its diverse patterns overlapping with some attack signatures, such as DDoS-SYN_Flood (F1-score: 0.98). Classes like DDoS-TCP_Flood, DDoS-ICMP_Flood, and Uploading_Attack achieved perfect F1-scores (1.00), demonstrating the model’s robustness across diverse attack types. The high precision and recall values across nearly all classes indicate balanced performance with few false positives or false negatives.

BOT-IOT

-

BOT-IOT dataset analysis: The BOT-IOT dataset was even more imbalanced before oversampling, with majority classes such as DP (1,032,961 instances), DDT (977,380 instances), and DDP (948,239 instances) dominating, while minority classes such as Data_Exfiltration (6 instances), Keylogging (73 instances), and Normal (477 instances) were severely underrepresented.

-

Impact on Minority Classes: The GMM-based oversampling technique dramatically improved the representation of minority classes, balancing all classes to 1,032,961 instances each. This resulted in exceptional performance improvements for the rarest attack types. As shown in Fig. 6b, Data_Exfiltration and Keylogging, despite having only 6 and 73 original instances respectively, both achieved perfect F1-scores of 1.00 after GMM oversampling.

Overall class performance: Fig. 6b shows the effectiveness of our approach on BOT-IOT, with nine of eleven classes achieving perfect F1-scores (1.00). Only OS_Fingerprint and Service_Scan scored slightly lower (0.99), likely because of feature overlap between these reconnaissance-type attacks. Precision and recall of 1.00 for most classes indicate that the model makes virtually no classification errors for the majority of attack types.

Observation for both datasets

The GMM-based oversampling technique was highly effective in addressing class imbalance on both datasets. For CICIOT2023, it enabled a 32.15% average improvement in F1-score for the ten rarest classes. On BOT-IOT, the model detected extremely rare classes flawlessly (F1-score of 1.00), including Data_Exfiltration, which had only 6 original instances and would typically be difficult to detect with conventional approaches.

Fig. 6 shows that the model performs consistently well across classes regardless of their original frequency in the datasets. Balanced performance matters for real-world intrusion detection because rare attack types often represent the most dangerous threats. The consistently high precision, recall, and F1-scores on both datasets indicate strong detection capability across all attack types.

This effectiveness stems from GMM’s ability to learn the underlying probability distribution of each attack class, which lets it generate synthetic samples that preserve each attack type’s distinctive features. Traditional oversampling methods are more likely to produce unrealistic or overfitted synthetic instances for rare classes with few original samples. In addition, the Kronecker product enhancement captures complex feature interactions with few parameters, which contributes to KronNet’s strong class-wise performance.

Experiment 6: discrimination capability analysis

The experiment tests the model’s discrimination ability by using Receiver Operating Characteristic (ROC) curves and Area Under the Curve (AUC) metrics for both datasets. The ROC curves for the BOT-IOT and CICIOT2023 datasets are shown in Fig. 7.

ROC performance curves for (a) CICIOT2023. (b) BOT-IOT.

CICIOT2023 dataset analysis

The ROC curves for CICIOT2023 (Fig. 7a) show excellent discrimination for all 34 attack classes. For readability, the classes are split into four groups, and every group shows AUC scores of 1.00 (perfect or near perfect, rounded to two decimal places).

-

Classes 0–8: All nine classes in this group, including the major DDoS variants and BenignTraffic, obtain AUC scores of 1.00. The model distinguishes well between TCP, UDP, and ICMP flooding attacks even though they share some characteristics.

-

Classes 9–17: This group contains various flooding, spoofing, and reconnaissance attacks. All nine classes show AUC scores of 1.00, and their ROC curves hug the upper-left corner of the plot, indicating excellent discrimination.

-

Classes 18–26: This diverse group contains Mirai variants, fragmentation attacks, DNS spoofing, HTTP flooding, and injection techniques. All nine classes reach AUC scores of 1.00 despite the range of attack types.

-

Classes 27–33: The last group contains the least frequent classes in the original dataset, including Uploading_Attack (6 instances), XSS (22 instances), and BrowserHijacking (23 instances). All seven classes reach AUC scores of 1.00, showing that the model detects even infrequent attack types well.

Overall, our approach achieves AUC scores of 1.00 for all 34 CICIOT2023 classes, both common and rare, demonstrating its effectiveness in complex multi-class intrusion detection scenarios.

BOT-IOT dataset analysis

Fig. 7b shows that KronNet also discriminates very well on BOT-IOT. All 11 classes reach AUC scores of 1.00, indicating near-perfect one-vs-rest separation of each class from all others. The ROC curves rise almost immediately to TPR = 1.0 at an FPR close to 0 and stay flat along the top of the plot.

-

The perfect discrimination stands out especially for minority classes like Data_Exfiltration and Keylogging which had only 6 and 73 original instances respectively. The AUC scores of 1.00 for these rare attack types show the effectiveness of our GMM-based oversampling approach in generating representative synthetic samples that preserve the distinctive characteristics of each attack class.

The BOT-IOT results indicate that the KronNet architecture achieves discrimination comparable to that of more complex models at a fraction of the computational cost. AUC scores of 1.00 across all classes suggest that the model should produce very few false alarms and missed detections in real-world deployment.

Comparative analysis and implications

The ROC curve analysis reveals several important insights:

-

1.

Perfect discrimination across datasets: The AUC scores of 1.00 consistently obtained in both datasets (11 classes in BOT-IOT and 34 classes in CICIOT2023) show the effectiveness of our approach in different network environments and attack types.

-

2.

Effectiveness for rare attacks: The perfect discrimination for extremely rare classes like Data_Exfiltration (6 instances), Uploading_Attack (6 instances), and XSS (22 instances) shows that our GMM-based oversampling technique is effective in addressing the class imbalance problem without sacrificing the performance of the model.

-

3.

Operational significance: In practical deployment, AUC scores of 1.00 should translate into highly reliable detection with few false positives. Security operators can set detection thresholds to balance security requirements against operational constraints, since the model maintains excellent discrimination across the threshold spectrum.

-

4.

Comparison with State-of-the-Art: These results exceed the AUC scores typically reported for these datasets; previous studies often report AUC values of 0.95 to 0.99 for similar classification tasks, particularly for rare attack classes. Consistent AUC scores of 1.00 therefore represent a notable improvement, especially for such a lightweight model.

The strong discrimination shown by these ROC curves, combined with the model’s minimal computational requirements, indicates that KronNet strikes a favorable balance between detection performance and efficiency. This makes it particularly suitable for resource-constrained IoT environments where both accuracy and computational efficiency are critical.

Experiment 7: t-SNE visualization of model embeddings and explainability framework

This experiment visualizes (Figs. 8 and 9) t-SNE embeddings of KronNet’s learned features alongside the raw test data for CICIOT2023 and BOT-IOT, to assess how well the model separates classes in embedding space after training with GMM-based oversampling.

t-SNE visualization of (a) raw test & (b) model embeddings in (CICIOT2023).

t-SNE visualization of (a) raw test & (b) model embeddings in (BOT-IOT).

-

CICIOT2023 Dataset: Fig. 8a shows the t-SNE visualization of the raw test data for classes 0–33, revealing substantial overlap between classes (e.g., BenignTraffic with DDoS-SYN_Flood, DDoS-TCP_Flood with DDoS-UDP_Flood) because many attacks in the dataset have similar features. In contrast, the model embeddings in Fig. 8b show clear separation for most classes, including minority classes such as Uploading_Attack and XSS. Classes that overlapped in the raw data now form well-defined clusters, reflecting robust feature learning and consistent with the 32.15% F1-score improvement for the ten rarest classes (section “Experiment 5: class-wise analysis”).

-

BOT-IOT Dataset: Fig. 9a shows the t-SNE visualization of the raw test data for BOT-IOT’s 11 classes, with some overlap (e.g., between OS_Fingerprint and Service_Scan, and between Normal and Data_Exfiltration) due to similar features. The model embeddings in Fig. 9b show near-perfect separation for all classes, including originally rare classes such as Data_Exfiltration and Keylogging, which GMM oversampling brought up to majority-class size. The tight, distinct clusters are consistent with the \(\sim\)40% F1-score improvement for the five rarest classes (section “Experiment 5: class-wise analysis”) and the near-perfect F1-scores (mostly 1.00) across all classes.

Observation: The t-SNE visualizations highlight KronNet’s superior feature learning capabilities. For CICIOT2023, the transition from overlapping raw data (Fig. 8a) to well-separated embeddings (Fig. 8b) underscores the model’s ability to handle a complex, 34-class dataset, despite initial overlaps in BenignTraffic and attack classes. For BOT-IOT, the clearer separation in the raw data (Fig. 9a) improves further in the embeddings (Fig. 9b), reflecting the dataset’s larger size and fewer classes, which facilitate more distinct feature learning. These visualizations confirm that GMM-based oversampling and KronNet’s architecture enable effective class discrimination, crucial for real-world IDS applications where distinguishing rare attacks (e.g., Uploading_Attack, Data_Exfiltration) is critical.
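A sketch of the visualization step, assuming the "model embeddings" are hidden-layer activations collected for the test set (which layer KronNet uses is not stated) and that a per-class subsample is drawn first, since exact t-SNE on millions of points is impractical. The helper names and the 500-per-class cap are hypothetical; `TSNE` is scikit-learn’s implementation.

```python
import numpy as np
from sklearn.manifold import TSNE


def subsample_per_class(X, y, max_per_class, seed=0):
    """Keep at most `max_per_class` randomly chosen rows of each class."""
    rng = np.random.default_rng(seed)
    keep = []
    for c in np.unique(y):
        idx = np.flatnonzero(y == c)
        keep.append(rng.choice(idx, size=min(max_per_class, idx.size), replace=False))
    keep = np.concatenate(keep)
    return X[keep], y[keep]


def tsne_2d(features, y, max_per_class=500, seed=0):
    """Project (raw or embedded) features to 2-D for plotting, coloured by class."""
    Xs, ys = subsample_per_class(features, y, max_per_class, seed)
    perplexity = min(30.0, len(Xs) - 1)  # t-SNE requires perplexity < number of samples
    emb = TSNE(n_components=2, perplexity=perplexity, init="pca",
               random_state=seed).fit_transform(Xs)
    return emb, ys
```

Running the same function on the raw test features and on the embeddings gives the two panels of each figure, which can then be drawn as matplotlib scatter plots coloured by `ys`.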

Explainability framework

The SHAP feature-importance analysis in Table 7 reveals domain-aligned patterns in KronNet’s decision-making. The most influential feature, ece_flag_number (importance: 14.9342), primarily affects TCP-based attacks, including MITM-ArpSpoofing, DDoS-TCP_Flood, and DoS-TCP_Flood; this aligns with domain knowledge, since ECN flag manipulation is a common technique in flooding attacks that disrupt network stability. The ARP feature (importance: 13.4547) strongly affects SqlInjection, Backdoor_Malware, and DictionaryBruteForce, suggesting that ARP anomalies, through spoofing that facilitates unauthorized access, may precede these attacks. Inter-arrival time (IAT, importance: 7.0958) reflects the model’s sensitivity to packet-timing irregularities in CommandInjection, Backdoor_Malware, and SqlInjection, capturing temporal patterns indicative of malicious traffic. Protocol-specific features such as ICMP (importance: 3.6860) and syn_flag_number (importance: 3.5443) contribute most to detecting BrowserHijacking and XSS, respectively, indicating their role in identifying ICMP tunneling and SYN flooding techniques. Statistical features, including Variance and Covariance, consistently influence CommandInjection, Backdoor_Malware, and BrowserHijacking, showing that KronNet captures distributional anomalies in packet data that characterize these attack types. Together, these patterns indicate that the model’s feature usage is consistent with cybersecurity domain expertise.
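SHAP yields per-sample, per-feature attributions; a common way to turn them into a global ranking like Table 7 is the mean absolute SHAP value per feature. The NumPy sketch below assumes that aggregation and an array layout of (samples, features, classes); whether Table 7 was produced this way is not stated, and the layout returned by the `shap` library varies between versions and explainers, so its output may need restacking first. The feature-by-class matrix can be used to list the classes each feature affects most. Function names are hypothetical.

```python
import numpy as np


def shap_importance(shap_values, feature_names, top_k=5):
    """Rank features by mean |SHAP| and return the (features, classes) importance matrix."""
    sv = np.abs(np.asarray(shap_values, dtype=float))
    if sv.ndim == 2:  # (samples, features): single-output model
        sv = sv[:, :, None]
    per_class = sv.mean(axis=0)             # (features, classes): mean |SHAP| per class
    global_scores = per_class.mean(axis=1)  # average over classes
    order = np.argsort(global_scores)[::-1][:top_k]
    ranking = [(feature_names[i], float(global_scores[i])) for i in order]
    return ranking, per_class


def top_classes(per_class, feature_idx, class_names, n=3):
    """Classes on which a given feature has the largest mean |SHAP|."""
    order = np.argsort(per_class[feature_idx])[::-1][:n]
    return [class_names[j] for j in order]
```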

Experiment 8: quantization impact analysis and edge deployment

This experiment evaluates the impact of dynamic quantization (storing the weights of linear layers as 8-bit integers and quantizing activations on the fly during inference) on model performance and efficiency (Table 8).

Observation: Quantization reduced the memory footprint by approximately 75% (from 19.82 KB to 4.96 KB for CICIOT2023, and from 18.37 KB to 4.59 KB for BOT-IOT) with negligible impact on accuracy (0.06% reduction for CICIOT2023, 0.01% for BOT-IOT). Inference time decreased by about 11% (from 0.209 ms to 0.186 ms for CICIOT2023, and from 0.208 ms to 0.185 ms for BOT-IOT), further enhancing the model’s suitability for resource-constrained IoT devices.
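The roughly fourfold memory reduction follows from storing each weight in one byte instead of four. The NumPy sketch below shows a simplified symmetric per-tensor int8 scheme and its worst-case rounding error of half a quantization step. It illustrates the idea only; PyTorch’s internals use observers to choose scales and may use a slightly different integer range. Function names are hypothetical.

```python
import numpy as np


def quantize_int8(w):
    """Symmetric per-tensor quantization: w is approximated by q * scale, q in [-127, 127]."""
    max_abs = float(np.abs(w).max())
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    q = np.clip(np.round(w / scale), -127, 127).astype(np.int8)
    return q, scale


def dequantize(q, scale):
    """Map int8 codes back to approximate float32 weights."""
    return q.astype(np.float32) * np.float32(scale)
```

Because each weight’s error is at most half a step (`scale / 2`), small layers with well-behaved weight ranges typically lose little accuracy, which is in line with the 0.06% and 0.01% drops reported here.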

Edge deployment feasibility

To assess KronNet’s suitability for resource-constrained IoT devices, we estimated deployment metrics on an ARM Cortex-M4 (168 MHz) using TensorFlow Lite for Microcontrollers (TFLite Micro). The quantized model (int8) has 7442 parameters, occupying \(\sim 7.4\) KB. Including TFLite Micro’s runtime and buffers (\(\sim 25\) KB), the total memory footprint is \(\sim 32.4\) KB, which fits within the 128–256 KB SRAM of typical Cortex-M4 devices (e.g., STM32F4). With \(\sim 1.6M\) MACs, the theoretical inference time is 9.5 ms (1.6M MACs / 168M MACs/s, i.e., one MAC per clock cycle); applying a 2.5× overhead gives an estimated inference time of 24 ms. These metrics (Table 9) suggest that KronNet is feasible for edge deployment in IoT applications requiring inference every 50–100 ms.
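The edge estimate is plain arithmetic and can be reproduced as below. The 2.5× overhead factor and the memory figures come from the paragraph above; one MAC per clock cycle is our reading of how the 9.5 ms was derived. Helper names are hypothetical.

```python
def mcu_latency_ms(macs: float, clock_hz: float, macs_per_cycle: float = 1.0,
                   overhead: float = 2.5) -> tuple[float, float]:
    """Return (ideal, estimated) inference latency in ms for a given MAC budget."""
    ideal_ms = macs / (clock_hz * macs_per_cycle) * 1000
    return ideal_ms, ideal_ms * overhead


def fits_in_sram(model_kb: float, runtime_kb: float, sram_kb: float) -> tuple[float, bool]:
    """Total footprint (model + runtime/buffers) and whether it fits the device's SRAM."""
    total = model_kb + runtime_kb
    return total, total <= sram_kb


if __name__ == "__main__":
    ideal, est = mcu_latency_ms(1.6e6, 168e6)
    print(f"ideal {ideal:.1f} ms, estimated {est:.1f} ms")  # ideal 9.5 ms, estimated 23.8 ms
    print(fits_in_sram(7.4, 25.0, 128.0))
```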

Ablation study and generalization analysis

Table 10 presents the ablation study results, isolating the contributions of KronNet’s components on CICIOT2023 and BOT-IOT, together with generalization to the TON-IOT dataset. The ablation study shows that all three components (Kronecker factorization, hybrid loss, GMM oversampling) contribute to KronNet’s efficiency and accuracy, and the generalization results further support its applicability beyond the tested benchmarks.

Generalizability of KronNet to other real-world dataset

To evaluate KronNet’s adaptability beyond CICIOT2023 and BOT-IOT, we conducted a generalization study on the TON-IOT dataset, a real-world IoT security dataset with 40 features and 10 attack classes, compared with 41 features and 34 classes for CICIOT2023 and 37 features and 11 classes for BOT-IOT. TON-IOT includes diverse attack types such as Ransomware and Backdoor, providing a distinct scenario for testing KronNet’s robustness. Using the full KronNet configuration (Kronecker enhancement, hybrid loss, and GMM oversampling), we achieved an overall accuracy of 98.15% and a weighted F1-score of 0.981 on TON-IOT, with an average inference time of 0.194 ms (Generalization study, Table 11). These results indicate that KronNet maintains high performance across datasets with different feature sets and class distributions, suggesting good generalizability. The slightly lower accuracy than on CICIOT2023 (99.01%) is likely due to differences in data imbalance and attack complexity, which KronNet mitigates through its adaptive class weighting and Kronecker-enhanced feature representation. The TON-IOT results reinforce KronNet’s potential applicability to other IoT security scenarios, although further validation on additional diverse datasets is needed to fully establish its adaptability.

Adversarial robustness evaluation of KronNet

To assess KronNet’s resilience in realistic IoT security deployments, we tested its adversarial robustness on three IoT datasets using two standard evasion attacks, the Fast Gradient Sign Method (FGSM) and Projected Gradient Descent (PGD). Both generate adversarial examples by perturbing clean inputs by at most \(\varepsilon =0.1\) per feature along the sign of the loss gradient, mimicking attempts to bypass an intrusion detection system. FGSM applies a single step, \(X_{\textrm{adv}}=X+\varepsilon \cdot \textrm{sign}\left( \nabla _XL\left( X,y\right) \right)\), whereas PGD applies 10 iterative steps, projecting back onto the \(L_\infty\) ball of radius \(\varepsilon\) after each step, which yields stronger perturbations.

KronNet shows strong adversarial robustness across all evaluated datasets (Table 12). On BOT-IOT, accuracy drops by only 0.47 percentage points (99.77% to 99.3%); CICIOT2023 shows a moderate 3.04-point reduction (98.04% to 95%), and TON-IOT a 4.77-point decrease (95.77% to 91%). By comparison, many state-of-the-art models typically suffer 15–40% accuracy degradation under similar adversarial conditions. The weighted F1-scores remain high across datasets (0.997, 0.9803, and 0.957, respectively), and ROC-AUC values stay near perfect (\(\ge 0.998\)), indicating robust discrimination even under attack.

To strengthen KronNet’s defenses, we applied adversarial training, incorporating generated adversarial examples into the training process so that the model learns feature representations that keep detection accuracy high under attack. We also used data augmentation with adversarial examples and tuned hyperparameters, including learning rate and dropout rate, to balance clean accuracy against adversarial robustness. With this approach, KronNet retains its performance while resisting evasion attempts, with inference times remaining fast (0.194 to 0.233 ms) and parameter counts minimal (4,703 to 5,074). This makes it suitable for deployment in adversarial IoT environments, where attackers may craft malicious traffic designed to evade detection.
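The FGSM and PGD attacks and one adversarial-training step can be sketched in PyTorch as below, assuming cross-entropy as the attacked loss, \(\varepsilon = 0.1\) as stated, 10 PGD iterations with a hypothetical step size \(\alpha = 0.02\) and no random start, and a hypothetical 50/50 mix of clean and FGSM losses during training. Features are assumed to be normalized, so no clipping to a valid input range is applied. Function names are hypothetical.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


def fgsm(model, x, y, eps=0.1):
    """Single-step attack: x + eps * sign(grad_x loss)."""
    x = x.clone().detach().requires_grad_(True)
    loss = F.cross_entropy(model(x), y)
    (grad,) = torch.autograd.grad(loss, x)  # gradient w.r.t. the input only
    return (x + eps * grad.sign()).detach()


def pgd(model, x, y, eps=0.1, alpha=0.02, steps=10):
    """Iterative attack projected onto the L_inf ball of radius eps around x."""
    x_adv = x.clone().detach()
    for _ in range(steps):
        x_adv.requires_grad_(True)
        loss = F.cross_entropy(model(x_adv), y)
        (grad,) = torch.autograd.grad(loss, x_adv)
        x_adv = x_adv.detach() + alpha * grad.sign()
        x_adv = torch.max(torch.min(x_adv, x + eps), x - eps)  # projection step
    return x_adv.detach()


def adversarial_train_step(model, optimizer, x, y, eps=0.1, adv_weight=0.5):
    """One optimizer step on a weighted mix of clean and FGSM losses."""
    model.eval()  # craft the attack without dropout noise
    x_adv = fgsm(model, x, y, eps)
    model.train()
    optimizer.zero_grad()
    loss = ((1 - adv_weight) * F.cross_entropy(model(x), y)
            + adv_weight * F.cross_entropy(model(x_adv), y))
    loss.backward()
    optimizer.step()
    return loss.item()
```

Swapping `fgsm` for `pgd` inside the training step gives the stronger (and slower) PGD-based variant of adversarial training.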

Theoretical basis for Kronecker products in neural features

Theoretical justification

The Kronecker product in KronNet is designed to increase the representational power of the feed-forward neural network (FFNN) while keeping the parameter count low, which suits the high-dimensional, sparse feature spaces common in IoT datasets. The Kronecker product of two matrices \(A \in \mathbb {R}^{m \times n}\) and \(B \in \mathbb {R}^{p \times q}\) is the block matrix \(A \otimes B \in \mathbb {R}^{(m \cdot p) \times (n \cdot q)}\) defined in Eq. (4):

\[ A \otimes B = \begin{bmatrix} a_{11}B & a_{12}B & \cdots & a_{1n}B \\ a_{21}B & a_{22}B & \cdots & a_{2n}B \\ \vdots & \vdots & \ddots & \vdots \\ a_{m1}B & a_{m2}B & \cdots & a_{mn}B \end{bmatrix} \tag{4} \]

In KronNet, the input feature vector \(x \in \mathbb {R}^d\) (e.g., \(d = 41\) for CICIOT2023) is projected by a linear layer into a \(k \times k\) matrix (with \(k = 2\)), which is then combined with a learned Kronecker factor \(K \in \mathbb {R}^{k \times k}\). This transformation, \(x' = (xW) \otimes K\), where \(W\) is the projection matrix and \(xW\) is reshaped to \(k \times k\), efficiently models pairwise and localized feature interactions. The Kronecker block has parameter complexity \(O(dk^2 + k^2)\), a significant reduction from the \(O(d^2)\) complexity of a full bilinear projection \(x^T W x\) or the \(O(rd)\) complexity of a low-rank approximation \(UV^T x\) (with \(r = 4\)); the corresponding full models have approximately 5,074, 38,690, and 6,182 parameters, respectively.
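The transformation \(x' = (xW) \otimes K\) can be sketched in NumPy as follows, assuming \(xW\) (length \(k^2\)) is reshaped row-major to \(k \times k\) and no bias is used; `kron_features` and `kron_block_params` are hypothetical names. The batched Kronecker product is written with `einsum` and checked against `np.kron`; in PyTorch, `torch.kron` provides the same operation. The parameter count covers only this block (\(dk^2 + k^2 = 168\) for \(d = 41, k = 2\)), so the 5,074 parameters of the full model presumably come mostly from its other layers.

```python
import numpy as np


def kron_features(x, W, K):
    """x: (batch, d), W: (d, k*k), K: (k, k) -> (batch, k**4) Kronecker features."""
    k = K.shape[0]
    A = (x @ W).reshape(-1, k, k)  # project, then view each row as a k x k matrix
    # Batched Kronecker product: out[n, i*k + p, j*k + q] = A[n, i, j] * K[p, q]
    out = np.einsum("nij,pq->nipjq", A, K).reshape(-1, k * k, k * k)
    return out.reshape(len(x), -1)


def kron_block_params(d, k):
    """Weights of the projection W (d * k^2) plus the Kronecker factor K (k^2); no bias."""
    return d * k * k + k * k


if __name__ == "__main__":
    print(kron_block_params(41, 2))  # 168
```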

This structured approach leverages the sparsity of IoT data, where most feature interactions are negligible, by enforcing a low-rank-like constraint with learned flexibility. The Kronecker product’s ability to capture localized interactions within the \(K \times K\) space enhances the model’s capacity to generalize across diverse attack patterns, a critical requirement for IoT security applications.

Empirical evidence

To validate the superiority of the Kronecker enhancement, an ablation study was conducted comparing three configurations on the CICIOT2023 dataset (41 features, 34 classes). The results are summarized in Table 13.

The Kronecker-enhanced configuration achieved the best performance, with 99.01% clean accuracy and 95.00% adversarial accuracy using only 5,074 parameters, indicating that structured feature-interaction modeling improves efficiency without overfitting. The bilinear projection performed very poorly (2.90% accuracy, 0.002 F1-score) despite its 38,690 parameters, which we attribute to its \(O(d^2)\) complexity causing overfitting on sparse IoT data. The low-rank projection performed well (98.52% clean, 94.00% adversarial accuracy) with 6,182 parameters but was limited by its fixed rank. The Kronecker approach’s faster inference (0.209 ms vs. 0.230 ms) and higher accuracy confirm its effectiveness in balancing computational efficiency with robust feature-interaction modeling, making it well suited to sparse, high-dimensional IoT security applications where structured parameter reduction is essential.

Overfitting analysis from synthetic balancing

We computed class-wise generalization gaps for all 34 attack classes in CICIOT2023, with special focus on rare classes (\(\le 10\) original instances); the results are in Table 14. KronNet generalizes well, with negligible overfitting across all classes, including those with extremely limited training data. The average generalization gap for rare classes is only +0.0005, and the largest gap is a practically negligible +0.002, indicating robust learning without memorization. Ultra-rare attacks such as Uploading_Attack (6 instances) and XSS (22 instances) even score slightly better on test data, with negative gaps of -0.001 and -0.002, respectively, consistent with generalization rather than overfitting. We attribute this to GMM’s distribution-preserving synthesis, which draws synthetic samples from learned probability distributions rather than from the linear interpolations used by interpolation-based balancing techniques in Table 4, thereby preserving the covariance structure of the original minority classes. Focal Loss, which down-weights well-classified, high-confidence samples, and the compact Kronecker feature representation further help prevent overparameterization while preserving essential feature interactions.
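A sketch of the class-wise generalization gap, assuming it is defined as training F1 minus test F1 per class, so that a positive value indicates overfitting and a negative value (as reported for Uploading_Attack and XSS) means the class scores higher on test data. That Table 14 uses F1 as the underlying metric is an assumption; the function name is hypothetical.

```python
from sklearn.metrics import f1_score


def generalization_gaps(y_train, pred_train, y_test, pred_test, labels):
    """Per-class F1(train) - F1(test); positive values suggest overfitting."""
    f1_train = f1_score(y_train, pred_train, labels=labels, average=None, zero_division=0)
    f1_test = f1_score(y_test, pred_test, labels=labels, average=None, zero_division=0)
    return {c: float(tr - te) for c, tr, te in zip(labels, f1_train, f1_test)}
```

Averaging the returned values over the rare classes gives a summary figure of the kind quoted above.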

Comparative analysis with state-of-the-art approaches

This section compares KronNet with recent state-of-the-art intrusion detection system (IDS) models across multiple dimensions: accuracy, F1-score, parameter count, memory usage, inference time, and two measures of computational complexity, FLOPS (the number of floating-point operations per inference, not a per-second rate) and multiply-accumulate operations (MACs). The comparison on the CICIoT2023 and BoT-IoT datasets is presented in Table 15.
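The reported figures satisfy FLOPS = 2 × MACs (9,872 = 2 × 4,936 and 9,176 = 2 × 4,588), which matches the common convention of counting each multiply-accumulate as one multiplication plus one addition. The sketch below shows that bookkeeping for a plain stack of dense layers; the layer widths are hypothetical, and the counting convention is an assumption rather than the authors' stated profiling method.

```python
def dense_stack_cost(widths):
    """Return (params, MACs, FLOPs) for a chain of fully connected layers.

    widths = [d_in, h1, ..., d_out]. A layer mapping a -> b has a*b weights
    plus b biases and needs a*b multiply-accumulates per input sample.
    FLOPs are taken as 2 * MACs (one multiply and one add per MAC);
    bias additions and activation functions are not counted.
    """
    params = macs = 0
    for a, b in zip(widths[:-1], widths[1:]):
        params += a * b + b
        macs += a * b
    return params, macs, 2 * macs

# Hypothetical layer widths, chosen only to illustrate the bookkeeping.
print(dense_stack_cost([16, 32, 8]))  # (808, 768, 1536)
```

Under this convention, the parameter count exceeds the MAC count by exactly the number of bias terms, since biases are stored but not multiplied.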

Analysis:

CICIoT2023:

- KronNet outperformed DL-BiLSTM50 by 5.88 percentage points in accuracy (99.01% vs. 93.13%) and 7.07 percentage points in F1-score (99.01% vs. 91.94%). It uses 155% more parameters (5,074 vs. 1,988) but 45.7% less memory (19.82 KB vs. 36.50 KB), and its inference is 30,622\(\times\) faster (0.209 ms vs. 6.4 s). In terms of computational complexity, KronNet's FLOPS count of 9,872 is 63.7\(\times\) lower than DL-BiLSTM's 628,800, and its MACs count of 4,936 further highlights its efficiency.

- Compared to CNN_BiLSTM+Quantization63, KronNet achieved 7.51 percentage points higher accuracy (99.01% vs. 91.50%) and 8.01 percentage points higher F1-score (99.01% vs. 91%). It uses 49.3% fewer parameters (5,074 vs. 10,000) and 91% less memory (19.82 KB vs. 220.00 KB), and its inference is 12,010\(\times\) faster (0.209 ms vs. 2.51 s). FLOPS and MACs are not reported for CNN_BiLSTM+Quantization, but KronNet's 9,872 FLOPS and 4,936 MACs confirm its lightweight nature.

BoT-IoT:

- KronNet's accuracy and F1-score are both 0.06 percentage points lower than those of CL-SKD-F62 (99.91% vs. 99.97%), but it uses 67.6% fewer parameters (4,703 vs. 14,494) and 67.5% less memory (18.37 KB vs. 56.62 KB), and its inference is 5.8\(\times\) faster (0.208 ms vs. 1.21 ms). KronNet's FLOPS count of 9,176 is 15,829\(\times\) lower than CL-SKD-F's 145,297,408 (Table 7 in62), a dramatic reduction in computational requirements at comparable accuracy.

- Compared to LNN61, KronNet achieves 3.76 percentage points higher accuracy (99.91% vs. 96.15%) and 3.77 percentage points higher F1-score (99.91% vs. 96.14%). It uses 10% more parameters (4,703 vs. 4,277) but 89.8% less memory (18.37 KB vs. 181.00 KB), and its inference is 13.8\(\times\) faster (0.208 ms vs. 2.87 ms). KronNet's FLOPS count of 9,176 is 2.6\(\times\) lower than LNN's 23,966 (Table 6 in61).


Observation:

KronNet delivers detection performance (accuracy and F1-score) that exceeds or closely matches that of the compared models, while showing exceptional computational efficiency in parameters, memory usage, inference time, FLOPS, and MACs. With FLOPS of 9,872 on CICIoT2023 and 9,176 on BoT-IoT, and MACs of 4,936 and 4,588, respectively, KronNet is lightweight enough for resource-constrained IoT environments.

On CICIoT2023, KronNet achieves markedly higher accuracy and F1-scores than DL-BiLSTM50 and CNN_BiLSTM+Quantization63, with a 63.7\(\times\) lower FLOPS count than DL-BiLSTM and 12,010\(\times\) faster inference than CNN_BiLSTM+Quantization. On BoT-IoT, it outperforms LNN61 by 3.76 percentage points in accuracy and requires 15,829\(\times\) fewer FLOPS than CL-SKD-F62.
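Each ratio in this comparison is simple arithmetic on the reported figures: a speed-up or FLOPS factor is baseline ÷ KronNet in the same units, a percentage reduction is 100 × (1 − KronNet ÷ baseline), and accuracy or F1 differences are percentage-point differences. The helper names below are illustrative, and times are converted to milliseconds before dividing.

```python
def speedup(baseline, ours):
    """How many times smaller (or faster) `ours` is than `baseline`, same units."""
    return baseline / ours

def relative_reduction(baseline, ours):
    """Reduction of `ours` relative to `baseline`, in percent."""
    return 100.0 * (1.0 - ours / baseline)

# Figures quoted in the comparison (inference times in milliseconds).
print(round(speedup(628_800, 9_872), 1))            # 63.7  FLOPS vs. DL-BiLSTM
print(round(speedup(2_510.0, 0.209)))               # 12010 inference vs. CNN_BiLSTM+Quantization
print(round(relative_reduction(10_000, 5_074), 1))  # 49.3  parameters vs. CNN_BiLSTM+Quantization
print(round(99.01 - 91.50, 2))                      # 7.51  accuracy gap in percentage points
```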

These metrics make KronNet well suited to IoT security applications: it achieves high detection performance with minimal resource demands, which is critical for practical deployment in resource-constrained IoT environments where both security and efficiency are paramount.

Discussion

The experimental results show that KronNet effectively addresses the three main challenges of IoT intrusion detection: computational efficiency, class imbalance, and detection accuracy.

Computational efficiency

The model's use of the Kronecker product within a feed-forward architecture results in a remarkably lightweight design, with 5,074 parameters and 19.82 KB of memory on CICIoT2023, and 4,703 parameters and 18.37 KB on BoT-IoT. After quantization, memory usage drops by 75% to 4.96 KB and 4.59 KB, respectively, with inference times of 0.186 ms and 0.185 ms, which makes deployment on resource-limited 8-bit microcontrollers feasible.
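The reported sizes are consistent with 4-byte (float32) weights before quantization and 1-byte (int8) weights after it, with 1 KB = 1,024 bytes: 5,074 × 4 / 1,024 ≈ 19.82 KB and 5,074 / 1,024 ≈ 4.96 KB. The sketch reproduces that arithmetic and adds a simple symmetric per-tensor int8 quantizer; the quantization scheme actually used for KronNet is not specified here, so this quantizer is an assumption.

```python
import numpy as np

def footprint_kb(n_params, bytes_per_param):
    """Weight storage in KB (1 KB = 1,024 bytes)."""
    return n_params * bytes_per_param / 1024

def quantize_int8_symmetric(w):
    """Per-tensor symmetric int8 quantization: w is approximated by q * scale."""
    max_abs = float(np.max(np.abs(w)))
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    q = np.clip(np.round(w / scale), -127, 127).astype(np.int8)
    return q, scale

for name, n in [("CICIoT2023", 5_074), ("BoT-IoT", 4_703)]:
    # float32 = 4 bytes per weight, int8 = 1 byte per weight
    print(name, round(footprint_kb(n, 4), 2), round(footprint_kb(n, 1), 2))
# CICIoT2023 19.82 4.96
# BoT-IoT 18.37 4.59

rng = np.random.default_rng(0)
w = rng.standard_normal(1_000).astype(np.float32)
q, scale = quantize_int8_symmetric(w)
print(w.nbytes, q.nbytes)  # 4000 1000
```

Storing one byte instead of four per weight is exactly the 75% reduction quoted above; the rounding error per weight is at most half of `scale`.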

Compared to state-of-the-art models, KronNet is markedly more efficient. On CICIoT2023, for instance, it uses 49.3% fewer parameters than CNN_BiLSTM+Quantization63 (5,074 vs. 10,000) and 91% less memory (19.82 KB vs. 220.00 KB), with 12,010\(\times\) faster inference (0.209 ms vs. 2.51 s). Its FLOPS count of 9,872 on CICIoT2023 is 63.7\(\times\) lower than that of DL-BiLSTM50 (628,800), and its count of 9,176 on BoT-IoT is 15,829\(\times\) lower than that of CL-SKD-F62 (145,297,408), highlighting its suitability for resource-constrained IoT environments.

The model's ultra-low resource requirements, particularly its sub-millisecond inference times and minimal memory footprint, should allow deployment even on severely constrained IoT devices such as the 8-bit microcontrollers frequently used in edge sensors and actuators.

Class imbalance handling

The GMM-based oversampling approach models the underlying distribution of each attack class, significantly improving detection performance for minority classes. It outperforms traditional techniques such as SMOTE and KMeansSMOTE, achieving higher accuracy with less training time.
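A minimal version of per-class GMM oversampling can be written with scikit-learn's `GaussianMixture`, whose `sample` method draws new points from the fitted mixture. The function name, the number of components, full covariances, the `reg_covar` value, and the target count below are illustrative assumptions rather than the paper's settings. The component count is capped at the class size because a mixture cannot have more components than samples; classes with a single sample would still need separate handling.

```python
import numpy as np
from sklearn.mixture import GaussianMixture

def gmm_oversample(X, y, target_count, n_components=3, random_state=0):
    """Top up every class with fewer than `target_count` rows using samples
    drawn from a Gaussian mixture fitted to that class alone."""
    X_parts, y_parts = [X], [y]
    for cls in np.unique(y):
        X_c = X[y == cls]
        deficit = target_count - len(X_c)
        if deficit <= 0:
            continue  # class already large enough
        k = min(n_components, len(X_c))
        gmm = GaussianMixture(n_components=k, covariance_type="full",
                              reg_covar=1e-3, random_state=random_state)
        gmm.fit(X_c)
        X_new, _ = gmm.sample(deficit)  # second value holds component labels
        X_parts.append(X_new)
        y_parts.append(np.full(deficit, cls, dtype=y.dtype))
    return np.vstack(X_parts), np.concatenate(y_parts)

# Toy data: a 200-row majority class and a 6-row minority class.
rng = np.random.default_rng(0)
X = np.vstack([rng.normal(0, 1, (200, 4)), rng.normal(5, 1, (6, 4))])
y = np.array([0] * 200 + [1] * 6)
X_bal, y_bal = gmm_oversample(X, y, target_count=200)
print(np.bincount(y_bal))  # [200 200]
```

Unlike SMOTE-style interpolation, each synthetic point is drawn from the fitted mixture, so its spread follows the estimated class covariance rather than lying on segments between existing samples.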

On CICIoT2023, the impact is evident in the 32.15% average improvement in F1-score for the ten rarest attack classes; Uploading_Attack, for example, with only 6 original instances, reaches a perfect F1-score (1.00) after oversampling. On BoT-IoT, the approach enables perfect detection (F1-score of 1.00) for extremely rare classes such as Data_Exfiltration (6 instances) and Keylogging (73 instances).

The model's balanced performance across classes is reflected in its high weighted F1-scores of 99.01% on CICIoT2023 and 99.91% on BoT-IoT. Notably, KronNet achieves an F1-score 8.01 percentage points higher than that of CNN_BiLSTM+Quantization63 on CICIoT2023 (99.01% vs. 91%), demonstrating its ability to handle imbalanced datasets and detect minority attack classes effectively.

Detection accuracy

The model reaches accuracies of 99.01% on CICIoT2023 and 99.91% on BoT-IoT, exceeding the compared state-of-the-art models by substantial margins in most cases.

On CICIoT2023, KronNet surpasses DL-BiLSTM50 by 5.88 percentage points (99.01% vs. 93.13%) and CNN_BiLSTM+Quantization63 by 7.51 percentage points (99.01% vs. 91.50%). On BoT-IoT, it outperforms LNN [24] by 3.76 percentage points (99.91% vs. 96.15%), although it is 0.06 percentage points below CL-SKD-F62 (99.91% vs. 99.97%). This small gap is offset by a 67.6% reduction in parameters, a 67.5% reduction in memory, 5.8\(\times\) faster inference, and 15,829\(\times\) lower FLOPS, which keeps KronNet a highly practical solution for IoT deployment.

The model's performance is further supported by its low false positive rates of 0.03% on CICIoT2023 and 0.01% on BoT-IoT, indicating that it rarely raises false alarms while maintaining high detection rates. This matters for practical deployment because high false positive rates cause alert fatigue and erode trust in the IDS.
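For a multi-class IDS, a false positive rate is usually computed one-vs-rest per class from the confusion matrix and then averaged. The paper does not state its averaging here, so the sketch below assumes a macro average over classes; the function name and the 3-class confusion matrix are hypothetical.

```python
import numpy as np

def one_vs_rest_fpr(cm):
    """Per-class false positive rate from a confusion matrix.

    cm[i, j] counts samples of true class i predicted as class j.
    For class c: FP = predicted c but truly another class,
    TN = neither truly c nor predicted c. Assumes every class has FP + TN > 0.
    """
    cm = np.asarray(cm, dtype=float)
    tp = np.diag(cm)
    fp = cm.sum(axis=0) - tp
    fn = cm.sum(axis=1) - tp
    tn = cm.sum() - tp - fp - fn
    return fp / (fp + tn)

cm = [[50, 2, 0],
      [1, 45, 4],
      [0, 0, 98]]
fpr = one_vs_rest_fpr(cm)
print(fpr.round(4))
print(round(100 * fpr.mean(), 2))  # 1.98 (macro-averaged FPR in percent)
```

A support-weighted average is an equally plausible convention; which one produces the 0.03% and 0.01% figures would need to be checked against the authors' evaluation code.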

Practical implications

KronNet is a significant practical advance in IoT security because it combines high accuracy, ultra-low resource usage, and effective class imbalance handling. It addresses the essential trade-off between security effectiveness and resource utilization that previous methods have struggled with.

IoT network administrators benefit from KronNet in several key ways:

1. Real-time Detection: With inference times of approximately 0.2 ms, the model can process network traffic in real time, enabling immediate threat detection in high-throughput IoT networks.

2. Deployment Flexibility: The model's minimal resource requirements allow deployment across the full spectrum of IoT devices, from resource-rich edge gateways to severely constrained sensor nodes.

3. Comprehensive Protection: The model detects both common and infrequent attack types, helping to identify threats that conventional detection systems might miss because of their rarity.

4. Reduced Operational Costs: The model's low computational requirements should translate into energy efficiency, extending the battery life of IoT devices and reducing operational costs for large-scale deployments.
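To put the approximately 0.2 ms latency in context, the arithmetic below converts the reported per-sample inference times into an upper bound on sequential throughput. It assumes one sample per inference call on the hardware used for the timing, which may differ from a target IoT device, and it ignores feature extraction and I/O; the function name is hypothetical.

```python
def max_sequential_throughput(latency_ms, workers=1):
    """Upper bound on samples classified per second with back-to-back inference.

    Ignores feature extraction, batching, and I/O, so real throughput is lower.
    """
    return workers * 1000.0 / latency_ms

for name, latency in [("CICIoT2023", 0.209), ("BoT-IoT", 0.208)]:
    print(name, round(max_sequential_throughput(latency)))
# CICIoT2023 4785
# BoT-IoT 4808
```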

The model's FLOPS (9,872 on CICIoT2023, 9,176 on BoT-IoT) and MACs (4,936 and 4,588, respectively) further underscore its efficiency, while accuracy up to 7.51 percentage points higher than that of competing models provides robust protection against evolving IoT network threats. This combination makes KronNet a practical and effective solution for securing the next generation of IoT systems, particularly in resource-constrained environments where both security and efficiency are critical.

The t-SNE visualizations and SHAP analysis provide deeper insight into KronNet's decision-making. The transition from overlapping raw data to well-separated embeddings in both datasets reflects the model's ability to learn discriminative features, particularly for rare attacks. SHAP analysis shows that features such as ece_flag_number (importance: 14.9342) and ARP (importance: 13.4547) align with domain knowledge: they are indicative of TCP-based flooding attacks and ARP spoofing precursors, respectively. This interpretability enhances trust in KronNet's predictions, a critical factor for practical IDS deployment.
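One common way to produce such embeddings is to run t-SNE on the network's hidden-layer activations and color the points by class. The sketch below uses scikit-learn's `TSNE` on synthetic stand-in activations; the choice of layer, the perplexity cap, the function name, and the data are assumptions, and the SHAP step is omitted.

```python
import numpy as np
from sklearn.manifold import TSNE

def embed_2d(features, perplexity=30.0, random_state=0):
    """Project raw inputs or hidden-layer activations to 2-D with t-SNE.

    Perplexity must be smaller than the number of samples, so it is capped
    for small inputs.
    """
    perplexity = min(perplexity, (len(features) - 1) / 3.0)
    tsne = TSNE(n_components=2, perplexity=perplexity, init="pca",
                random_state=random_state)
    return tsne.fit_transform(features)

# Stand-in for penultimate-layer activations: three separated clusters.
rng = np.random.default_rng(0)
hidden = np.vstack([rng.normal(loc=c, scale=0.5, size=(20, 8)) for c in (0.0, 3.0, 6.0)])
emb = embed_2d(hidden)
print(emb.shape)  # (60, 2)
```

Comparing the embedding of raw inputs with that of hidden activations is what reveals the "overlapping to well-separated" transition described above.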

Despite its strengths, KronNet has been validated only within each dataset (trained and tested on the same dataset), which may limit its generalizability across heterogeneous IoT environments. The adversarial robustness analysis shows promising results (e.g., 95.00% accuracy on CICIoT2023 under adversarial conditions), but further testing against advanced evasion techniques is needed. Additionally, while low FLOPS and inference times imply energy efficiency, direct energy consumption measurements on IoT hardware would strengthen the model's practical claims. These limitations highlight opportunities for future research to enhance KronNet's adaptability and resilience in real-world IoT deployments.

Conclusion and future work

This study introduced KronNet, a lightweight Kronecker-enhanced feed-forward neural network for efficient intrusion detection in IoT networks. Evaluated on the CICIoT2023 and BoT-IoT datasets, KronNet achieved accuracies of 99.01% and 99.91%, weighted F1-scores of 99.01% and 99.91%, and false positive rates of 0.03% and 0.01%, respectively. Its use of Kronecker product operations enabled effective feature interaction modeling with minimal parameters (5,074 for CICIoT2023 and 4,703 for BoT-IoT), resulting in up to 15,829\(\times\) lower FLOPS and 12,010\(\times\) faster inference than state-of-the-art models. GMM-based oversampling and a hybrid loss function addressed class imbalance, improving F1-scores for rare attack classes by 32.15% on CICIoT2023 and by approximately 40% on BoT-IoT, ensuring comprehensive threat detection. After quantization, KronNet's memory usage fell by 75% (to 4.96 KB and 4.59 KB) with negligible accuracy loss, demonstrating its feasibility for edge deployment on resource-constrained IoT devices.

KronNet advances IoT cybersecurity by providing a scalable, accurate, and efficient IDS that meets the stringent requirements of real-time deployment. Its low computational footprint, rapid inference (approximately 0.2 ms), and robust detection make it a practical solution for securing IoT networks against evolving threats. Future work will focus on enhancing generalizability through federated learning, integrating multi-modal data to address emerging IoT security challenges, and, in particular, evaluating the model on unseen domains to assess its transferability. Additionally, hardware-specific optimizations and energy consumption analyses will further validate KronNet's applicability in diverse IoT environments, paving the way for more resilient and secure IoT ecosystems.

Data availability

The datasets used and/or analysed during the current study are available from the corresponding author on reasonable request.

References

State of IoT 2024: Number of connected IoT devices growing 13% to 18.8 billion globally. iot-analytics.com (2024, accessed 13 Apr 2025). https://iot-analytics.com/number-connected-iot-devices/.

Maaz, M. et al. Empowering IoT resilience: hybrid deep learning techniques for enhanced security. IEEE Access (2024).

Butun, I., Österberg, P. & Song, H. Security of the internet of things: vulnerabilities, attacks, and countermeasures. IEEE Commun. Surv. Tutor. 22(1), 616–644 (2019).