Abstract

As financial institutions want openness and accountability in their automated systems, the task of understanding model choices has become more crucial in the field of financial text analysis. In this study, we propose xFiTRNN, a hybrid model that integrates self-attention mechanisms, linearized phrase structure, and a contextualized transformer-based Recurrent Neural Network (RNN) to enhance both model performance and explainability in financial sentence prediction. The model captures subtle contextual information from financial texts while maintaining explainability. xFiTRNN provides transparent, sentence-level insights into predictions by incorporating advanced explainability techniques such as LIME (Local Interpretable Model-agnostic Explanations) and Anchors. Extensive evaluations on benchmark financial datasets demonstrate that xFiTRNN not only achieves a remarkable prediction performance but also enhances explainability in the financial sector. This work highlights the potential of hybrid transformer-based RNN architectures for fostering more accountable and understandable Artificial Intelligence (AI) applications in finance.

Similar content being viewed by others

Introduction

All relevant data regarding traded assets is reflected in prices within an open market in financial markets1. Outperforming the markets consistently is a formidable challenge due to the continuous adjustment of positions and prices by market participants in response to new information. However, the advent of novel information retrieval techniques has the potential to alter the interpretation of “new information,” offering short-term advantages if these technologies are adopted promptly. Analyzing financial documents, such as news articles, analyst reports and corporate announcements, can reveal valuable insights. Given the sheer volume of textual data generated daily, manual analysis becomes impractical, making automated sentiment or polarity analysis through natural language processing (NLP) techniques increasingly essential for evaluating texts from financial entities2. Traditional NLP models have made significant strides in this area, but the need for transparent and explainable predictions remains a pressing concern. As of on 2012, Knight Capital Group (https://www.cnbc.com/2012/08/02/the-knight-fiasco-how-did-it-lose-440-million.html) deployed a faulty trading algorithm that executed millions of unintended orders in 45 min, losing \(\$\)440 million and nearly collapsing the firm. This incident underscores a critical challenge in modern finance: complex AI systems can amplify risks when their decision-making processes remain opaque. Similar issues persist today, such as the 2024 NSE’s (https://www.reuters.com/business/finance/indias-nse-pays-765-mln-settle-algo-trading-software-case-2024-10-04/) \(\$\)76.5 million settlement, where allegations were related to unfair access to its algorithmic trading software. These real-world failures highlight the urgent need for explainable AI (xAI) in financial applications, where transparency is not merely advantageous but a regulatory and ethical imperative.

The role and responsibility of AI have grown immensely, prompting concerns about its trustworthiness. A significant portion of these concerns stems from the prevalent use of “black-box” models, particularly Deep Neural Networks (DNNs). The complexity of these models, with their vast parameter spaces and intricate algorithms, renders them largely uninterpretable to humans, making it difficult to fully understand their decision-making processes . Consequently, these models may harbor biases, relying on unjust, outdated, or incorrect assumptions that can be missed using traditional methods of model effectiveness evaluation . This opacity ultimately erodes trust in such models .

The term, xAI has arisen to solve the drawbacks of conventional AI. The creation of Machine Learning (ML) methods that produce incredibly successful models that are also explicable, enabling people to comprehend, control and have faith in them is what Barredo Arrieta et al. refers to as xAI. According to3, xAI models are intended to offer explanations or specifics that make their operation understandable. Consequently, the use of xAI helps to make models more reliable, secure and error-free.

This paper is driven by the need to assess market sentiments in real-time amidst ongoing financial crises, leading to a transition from traditional methods to real-time media analysis4. In the financial industry, sentiment analysis is still difficult even with major advances in natural language processing (NLP)2. This calls for the use of domain-specific word lists and adaptive learning algorithms. To maintain confidence and openness in financial decision-making processes, these sophisticated models’ opacity and complexity necessitate strong explainability. Particularly in the fields of financial sentence prediction and natural language processing, explainability is needed. With BERT-based systems, there have already been attempts to improve transparency. For instance, exBERT is an interactive dashboard that shows the attention and internal representations of a model, allowing users to create hypotheses regarding the reasoning process of the model5. An alternative method is employed by van Aken et al. using visBERT6. VisBERT monitors token changes as they go through the network’s layers in light of research that suggests attention mechanisms might not be the best choice for explanations7. It takes hidden states out of each Transformer encoder block and maps them into a 2D space using Principal Component Analysis (PCA) so that semantic links may be deduced from token distances8.

We present the Linearized Phrase Structure (LPS)9 model using xFiTRNN, with a distinct focus on understanding financial sentiment at the sentence level—a key differentiator from other studies in the field. This model is designed to categorize financial and economic text fragments from an investor’s perspective into three groups: positive, negative and neutral. Focusing on the sentence level is crucial in financial contexts because financial documents often contain complex narratives where sentiments can vary significantly within a single document. By analyzing sentiment at the sentence level, the LPS model can accurately capture these nuanced shifts, providing a detailed sentiment profile that is essential for informed investment decisions. With the assessment of explainability techniques—LIME10 and Anchors11—the LPS model seeks to capture crucial interactions between financial concepts and phrase structures, offering comprehensible and easily understood insights into model predictions.

Though recent work in financial NLP has explored hybrid architectures, similar like xFiTRNN, these approaches often treat documents as homogeneous units, missing critical sentence-level nuances. Financial narratives frequently contain mixed sentiments (e.g., “Strong Q3 growth despite regulatory headwinds”), requiring granular analysis. Our Linearized Phrase Structure (LPS) model addresses this gap through three key innovations: (1) syntactic-aware feature extraction that identifies sentiment-carrying phrases, (2) dynamic attention mechanisms weighting these phrases by financial relevance, and (3) integrated explainability interfaces optimized for domain experts.

Moreover, though the xAI landscape offers multiple explanation paradigms, each with trade-offs. For instance, SHapley Additive exPlanations (SHAP)12 values provide global feature importance but struggle with NLP’s sequential nature. Integrated Gradients (IG)13 require baseline selection that may distort financial text interpretations. xFiTRNN strategically combines LIME’s local explanations—which identify phrase-level contributors through perturbed samples—with Anchors’ rule-based summaries (“The prediction remains positive if ’outperform’ appears regardless of other terms”). This dual approach caters to financial analysts’ needs, offering both granular insight into specific predictions and actionable decision rules. A detailed comparison of our tested LIME/Anchors performance with SHAP and IG is shown in section “Additional tests”.

This paper’s principal contributions are:

-

We propose a hybrid sentiment analysis strategy, xFiTRNN specially designed for the financial domain.

-

We integrate the transformer-based FinBERT architecture, BiGRU network and self-attention mechanism with linearized phrased-based feature extraction techniques.

-

We evaluate word embedding strategies in order to determine the most reliable preprocessing and embedding approaches that are necessary for precise financial sentiment analysis performance.

-

We test the xFiTRNN model against seven conventional ML models, one stacking model and five DL models in our comprehensive trials to evaluate its performance and show its adaptability.

-

We execute extensive experimental evaluation of xFiTRNN on benchmark Financial Phrasebank and IMBSEntFiN datasets, demonstrating superior predictive performance and enhanced transparency compared to traditional models.

-

We improve comprehension and confidence in the xFiTRNN model’s predictions by offering insights into the explainability of the model through thorough analysis employing LIME and Anchor methodologies.

The rest of the paper is organized as follows: To begin with, section “Related works” provides an overview of the pertinent literature on the subject and lays out the framework for this study, along with some of its limitations. Afterwards, the research approach and methods employed in this study are described in section “Methodology”. Next, the study’s analyzed findings are shown in section “Results analysis”. Later, section “Explainability tests” analyzed the explainability test’s results of the proposed model.. Section “Model efficiency and deployment scalability” discusses model efficiency and deployment scalability and section “Multilingual robustness” provides future plan to test XFiTRNN on multilingual robustness. Subsequently, section “Practical implementation plan for practitioners” describes practical implementation plan of the proposed model and section “Discussion and future works” offers an in-depth analysis of the subject matter together with a critical assessment of the findings and their implications for future study. Section “Conclusion” finally brings the paper to a close.

Related works

Recent studies in financial sentiment analysis using DL and ML reveal significant advancements. Huang et al.4 show that ChatGPT 3.5 outperforms FinBERT in forex sentiment analysis through effective zero-shot prompting. Mishev et al.2 present a novel method using sequential minimal optimization with a decision tree, achieving 89.47% accuracy in Twitter sentiment analysis. Fatouros et al.14 introduce a sentence-level analysis approach, improving accuracy and context preservation in financial news sentiment analysis. Sousa et al.15 evaluate various algorithms for financial sentiment analysis, including DL models, highlighting the importance of preprocessing stages. Naresh and Venkata develop FinBERT, a finance-specific model excelling in sentiment classification and ESG issue identification16. Aslam et al.17 find that NLP transformers outperform traditional lexicon-based methods, especially with limited data. Ahmad and Umar demonstrate the effectiveness of convolutional neural networks in analyzing StockTwits sentiments18. Ahmad et al.19 achieve high accuracy with their LSTM-GRU ensemble model for cryptocurrency sentiment analysis. Daudert proposes integrating diverse text sources for enhanced sentiment analysis, introducing a novel multi-text approach20. Consoli et al.21 present FiGAS, an unsupervised method for fine-grained sentiment analysis, outperforming traditional methods. Yadav et al.22 find that noun-verb combinations are effective for sentiment analysis in financial news, despite limitations in dataset size. Xing et al.23 dissect error patterns in sentiment analysis and introduce StockSen, a new corpus for financial sentiment analysis. Du et al.24 advocate for hybrid models integrating symbolic and subsymbolic methods for targeted aspect-based sentiment analysis. Zhang et al.25 introduce a retrieval-augmented framework, improving LLM performance for sentiment analysis. Štrimaitis et al.26 assess sentiment analysis methods for Lithuanian financial news, identifying effective classification algorithms. Liapis et al.27 demonstrate that LSTM models excel in multivariate financial time series forecasting with sentiment analysis. Du et al.28 develop FinSenticNet, a concept-level lexicon for financial sentiment analysis, surpassing traditional methods. Hansen and Borch emphasize the role of alternative data, including sentiment analytics, in investment management and algorithmic trading29. Hartmann et al.30 find state-of-the-art language models superior to traditional methods in sentiment analysis. Chang et al.31 combine aspect-level sentiment analysis with visual analytics to assess airline industry customer satisfaction during the COVID-19 crisis. Zhang et al.32 survey emotion fusion strategies for mental illness detection in social media. Singh et al.33 review the application of RL and DRL in financial decision-making, highlighting their superior performance. Leelawat et al.34 analyze sentiments in tourism-related tweets using ML algorithms, finding support vector machines most effective. Kuma et al.35 review sustainable finance research and advocate for the integration of AI and big data techniques. Alsayat introduces an ensemble DL model for sentiment analysis in social media during the coronavirus pandemic, demonstrating superior accuracy36. Multimodal architectures, the combination of textual sentiment with market data to enhance both predictive performance and explainability are emerging nowadays. Passalis et al.37 fuse news sentiment with trading volumes, enabling models to validate language patterns against market reactions—for instance, distinguishing between “rally” in bullish versus pump-and-dump contexts. However, their late fusion approach (concatenating text and numerical features) obscures cross-modal interactions. Recent work by Valle-Cruz et al.38 demonstrates the promise of early fusion: aligning tweet sentiment scores with real-time price movements in a joint embedding space. While improving hedge signal detection, this complicates explanations as market data dominates text features in gradient-based attributions.

Furthermore, there has been an increasing focus on developing explainable financial sentence prediction approaches39. Fatouros et al.40 highlighted the use of attention mechanisms in sentence-level analysis, which not only improved accuracy but also provided insights into which parts of the text influenced the model’s decisions. This approach helps analysts understand the key drivers behind sentiment classifications. Ardizzone et al.41 advocated for hybrid models that combine symbolic methods (which are more interpretable) with subsymbolic methods (like neural networks). This integration allows for targeted aspect-based sentiment analysis, offering a clearer understanding of how specific features contribute to predictions. Du et al.28 developed FinSenticNet, a concept-level lexicon that enhances the explainability of sentiment analysis in financial texts. By providing concept-level insights, this model surpasses traditional methods in explaining how different sentiment scores are derived. Chang et al.31 explored the combination of aspect-level sentiment analysis with visual analytics. This approach aids in explaining model outputs by visually representing how different aspects of a text, such as sentiment towards specific entities, are analyzed, thus enhancing user understanding and trust. Additionally, these models can provide more granular explanations of sentiment shifts, especially in response to potentially manipulative inputs.

However, current xAI approaches in finance exhibit distinct trade-offs. While Fatouros et al.14 use attention weights to highlight sentiment keywords, high attention to “dividend” might reflect either positive yield or negative tax implications. LIME10 generates local explanations by perturbing input phrases, crucial for analyzing earnings calls where negation scopes (e.g., “not sustainable”) determine sentiment. However, its random sampling struggles with financial jargon’s sparsity. IG13 attributes predictions to input features via path integrals, but requires careful baseline selection—a zero-vector baseline may misattribute significance to stopwords in SEC filings. Anchors11 create IF-THEN rules (e.g., “IF ’beat estimates’ THEN positive”), aligning well with analysts’ checklist-driven workflows but oversimplifying compound phrases like “beat estimates despite inflation risks”. We selected LIME10 and Anchors11 for their complementary strengths: LIME’s phrase-level perturbations suit financial sentences’ compact structure, while Anchors’ rules provide auditable decision logic for compliance purposes. In pilot studies, SHAP12 underperformed for financial texts—its global feature importance scores conflated sector-specific terms (e.g., “P/E ratio” mattered equally in tech and banking analyses), reducing actionability.

After these vast advancements, there exists many limitations. Fatouros et al.14 limitations are their study include a constrained dataset duration potentially affecting model performance across different periods, an inability of the short dataset to fully capture the complexities of financial markets and challenges in generative AI such as model collapse when relying heavily on synthetic training data, leading to limited or repetitive model outputs. Lutz et al.15 limitations include a lack of comprehensive testing on diverse datasets for model validation, which is critical for understanding the complex interplay between news sentiment and market movements. The lack of explainability in many advanced models, especially Deep Learning (DL) ones, poses another limitation, as it becomes difficult to understand the rationale behind certain predictions42. Furthermore, the substantial computational resources required for training and maintaining sophisticated models can be a limiting factor43. Real-time predictions are also hampered by time lags between the release of financial news and its impact on the market44,45. Models trained on data from one financial market or region may not perform well in different contexts due to varying economic conditions and market behaviors, highlighting issues with transferability. There is also a risk of overfitting to historical data, particularly when the dataset is limited, leading to poor generalization to new data46. Also, most methods explain static predictions, inadequate for financial time series where sentiment persistence matters (e.g., sustained “inflation fears” vs. one-off mentions and existing tools don’t map explanations to FINRA’s Model Validation Guidelines (https://www.finra.org/rules-guidance/rulebooks/finra-rules) requiring “traceable logic for material predictions”. Leippold shows keyword-based models fail against financial adversarial examples like “growth” \(\rightarrow\) “groWth” (Unicode spoofing)—a vulnerability extending to explanation methods relying on surface tokens47. Additionally, financial news and reports often contain human biases, which can be inadvertently learned by the models, resulting in skewed predictions. These additional limitations underscore the complexity of financial markets and the challenges involved in developing robust prediction models. The proposed LPS model tried tackling these through tracking sentiment carriers across sentences via dependency parsing, Anchors rules formatted as MiFID II-compliant decision logs (https://www.esma.europa.eu/publications-and-data/interactive-single-rulebook/mifid-ii) and extending FinBERT’s vocabulary to detect glyph attacks.

Methodology

This section details datasets used in this experiment, each model’s theoretical foundation, operational principles, evaluation metrics and relevance to the research objectives, focusing on their application in financial sentiment analysis. To uncover patterns in the proposed models’ predictions and understand how these patterns influenced the decisions with proper explainability, the following scenarios were examined: when the classifier accurately classified a title as a negative statement, when the classifier accurately classified a title as a positive/neutral statement, when the classifier inaccurately classified a title as a negative statement and when the classifier inaccurately classified a title as a positive/neutral statement.

Datasets

The Financial Phrasebank48 and SEntFiN49 datasets used in this work are available to the public. In order to create IMBSEntFiN, we first average the real classes and then alter the class weights for each sentence in the SEntFiN49 dataset, which was refined into an unbalanced classification format. The dataset has the dependent variable inserted manually. Following this, the dataset was divided into training and testing sets, with 80% of the samples going toward training. The model’s predictions were added to the test data in a new column when it finished the classification assignment. Figure 1 presents the most frequent words in the datasets of financial sentences which are displayed in word cloud format.

The most frequent words retrieved by python wordcloud in the “Financial Phrasebank” (left) and “IMBSEntFiN” (right) datasets of financial sentences are displayed in this word cloud. Each word’s magnitude reflects how frequently it occurs in the dataset.

Data preprocessing

Due to the informal and unstructured nature of financial sentence data, preprocessing was necessary to ensure the precision and truthfulness of our study. The following essential stages were part of our extensive data-cleaning procedure:

-

Normalization of text: Converted all text to lowercase to standardize word recognition and avoid treating words with different capitalization as distinct entities.

-

Removal of irrelevant elements: Eliminated hashtags (#topic), mentions (@name) and hyperlinks (e.g., http, https). Excluded words shorter than two characters and common stop words with minimal relevance to sentiment. Retained negations such as not and no to preserve sentence context.

-

Handling repeated characters: Normalized words with repeated characters used for emphasis (e.g., greeeeat profit converted to great profit) to align with standard lexicons.

-

Expansion of contraction: Expanded contractions like isn’t and can’t into their full forms (e.g., is not and cannot) to maintain semantic clarity and consistency.

-

Removal of non-alphabetical characters: Stripped numerals, punctuation marks and special symbols, retaining only alphabetical characters to prevent interference in feature extraction.

-

Deduplication and removal of empty entries: Identified and removed duplicate sentences and empty entries to ensure dataset integrity.

-

Advanced cleaning for specific methods: Applied additional techniques, such as stemming and Part-of-Speech (POS) tagging, tailored to the requirements of specific sentiment analysis approaches. These were particularly useful for leveraging financial sentiment resources like specialized lexicons.

Word embeddings

TF-IDF

The scikit-learn module’s “TfidfVectorizer” function, which assigns weights to words based on their scarcity throughout the entire dataset and their relevance in individual sentences, was used to apply the TF-IDF embeddings. It also simplified the process of down-weighting frequently used keywords, freeing up our models to focus on more informative terms.

Word2Vec

Word2Vec’s power to capture semantic similarities between words is one of its benefits; this enhances the sophistication of text data analysis. It expresses words as dense vectors in a continuous vector space using neural networks. The financial sentences were tokenized using the NLTK library’s \(word\_tokenize\) function, which breaks sentences down into individual words. A Word2Vec model with the vector size, window size and skip-gram model set was created using the Gensim software. This method was able to represent words as vectors by utilizing a continuous vector space. Full sentences were encoded as vectors using two techniques: average vectorization and sum vectorization.

GloVe

GloVe is a potent word embedding method that builds dense vector representations using a corpus’s co-occurrence statistics to capture the semantic associations between words. We trained a GloVe model customized for our dataset using the glove library. Using the \(word\_tokenize\) function from the NLTK package, the sentences were tokenized into individual words as part of the preprocessing. After that, a co-occurrence matrix was produced, taking into account a context window to record word connections. The GloVe model was trained to generate embeddings that accurately capture words’ global semantic features as well as their local context. The model was then able to identify significant patterns and connections in the text by mapping each word in the dataset to its vector representation using these embeddings.

FastText

FastText is a sophisticated word embedding method that improves on conventional approaches by adding subword information. This makes it useful for handling uncommon or non-vocabulary terms. The FastText model from the Gensim package was used to train dataset-specific embeddings. Using the \(word\_tokenize\) function from the NLTK library, the procedure started by tokenizing sentences into individual words. Then, by decomposing words into character-level n-grams and learning their representations, the FastText model was trained to produce word embeddings. This method makes the model resilient for assessing text in many languages and handling invisible words by allowing it to capture both word-level semantics and subword structures. Following training, embeddings for every word in the dataset were available, proving the model’s efficacy in capturing morphological and semantic information.

Pretrained transformers

We used the Hugging Face Transformers library’s pre-trained transformer-based models to exploit contextual embeddings for text data in our study. Three different transformer models, each contributing special skills to the study, were used. Known for its effectiveness and portability, the “distilbert-base-uncased” model was chosen because it works well in situations with limited computing resources. The left and right context of each word are taken into account while creating context-aware word embeddings. To convey the subtleties unique to finance in text, we employed a cutting-edge “yiyanghkust/finbert-tone” model. Our sentiment analysis efforts were firmly based on this model, which was created for financial sentiment research. We have included “sentence-transformers/all-MiniLM-L6-v2,” a complex tool that converts text into a dense vector space. It preserves semantic information while converting whole phrases into fixed-dimensional vectors. While each transformer’s code implementation had a similar structure, the model selection added variety to our testing and allowed us to investigate how contextual embeddings affected our text classification task. We utilized the model’s related tokenizer to efficiently tokenize and process our text data, utilizing strategies like truncation and padding to guarantee constant input lengths. For best computational performance, the tokenized data was then effectively processed on the GPU. We also used the model’s ability to extract the hidden states linked to the “[CLS]” token, which frequently captures the text’s entire context.

Models used

This subsection discusses various ML classifiers. Additionally, it explores an advanced hybrid transformer50 and attention based51,52 RNN model, xFiTRNN for our tests as our proposed novel financial sentiment classification framework. This section details each model’s theoretical foundation, operational principles and relevance to the research objectives.

Conventional ML models

Ada Boost Classifier: Ada Boost Classifier, or Adaptive Boosting, is a powerful ensemble method to enhance the performance of weak classifiers53. It operates by iteratively training a sequence of weak learners, typically decision trees, on various weighted versions of the training data. Initially, all data points are assigned equal weights. A weak classifier \(h_m(x)\) is trained in each iteration m and its error rate \(\epsilon _m\) is defined by Eq. (1):

where the indicator function is \(\mathbb {I}\), the true labels are \(y_i\), the training examples are \(x_i\) and the weights are \(w_i\). The weight \(\alpha _m\) of the classifier is then calculated by Eq. (2):

which reflects its accuracy. Subsequently, the weights of misclassified instances are increased using Eq. (3):

focusing the next classifier on harder cases. The final prediction is made by a weighted majority vote of the weak classifiers calculated as Eq. (4):

This iterative boosting approach allows the model to effectively capture complex patterns in financial sentiment, leading to more accurate predictions.

Extra Trees Classifier: Extremely Randomized Trees, sometimes referred to as Extra Trees Classifier, is an ensemble learning method that generates the mode of the classes (classification) for individual trees after training a large number of decision trees54. It functions by constructing several trees, each of which is trained using random splits in the nodes over the whole dataset. It randomly picks a subset of features \(F_k\) and finds the best split among them, as opposed to searching for the best split among all available features for each node split in tree \(T_k\). Equation (5) is used to determine the decision function for a node n:

where x represents the input vector, d is the number of features and \(\theta _{n,j}\) are the threshold values for the splits. The output of the ExtraTreesClassifier for an input x is determined by majority voting from all the individual trees calculated as Eq. (6):

where K is the total number of trees. This approach reduces variance and helps in capturing intricate patterns in financial sentiment data, leading to robust and accurate sentiment predictions.

LDA: LDA is a statistical method is used to find the linear combination of qualities that best splits a collection of classes into two or more55. It is considered that the data from each class is normally distributed and that all classes have a common covariance matrix. For a given dataset that comprises the classes \(C_1\), \(C_2\) ...\(C_k\), the objective of latent difference analysis (LDA) is to optimize the ratio of within-class variance to between-class variance. Equations (7) and (8) define the within-class scatter matrix \(S_W\) and the between-class scatter matrix \(S_B\):

where \(N_i\) is the number of samples in class \(C_i\), \(\mu _i\) is the mean vector of class \(C_i\) and \(\mu\) is the overall mean vector of the dataset. The optimal linear discriminants are found by solving the generalized eigenvalue problem as defined Eq. (9):

where \(\lambda\) are the eigenvalues and w are the eigenvectors. The resulting linear discriminants are then used to project the data into a lower-dimensional space for classification. In the context of financial sentiment analysis, LDA effectively reduces dimensionality while preserving class separability, allowing for the accurate classification of sentiment in financial texts.

QDA: QDA is a classification technique in which non-linear decision boundaries are possible since each class is modeled with a unique covariance matrix56. QDA operates on the assumption that every class \(C_k\) has its own covariance matrix \(\Sigma _k\), in contrast to Linear Discriminant Analysis (LDA), which operates under the assumption that all classes share a single covariance matrix. For a given input x, the posterior probability for class \(C_k\) is calculated using Bayes’ theorem, Eq. (10):

where \(P(x|C_k)\) is the class-conditional density function given by the multivariate normal distribution computed as Eq. (11):

with d being the number of features, \(\mu _k\) the mean vector and \(\Sigma _k\) the covariance matrix for class \(C_k\). The decision rule assigns x to the class with the highest posterior probability defined as Eq. (12):

QDA captures the complex relationships and variations in sentiment by leveraging class-specific covariance structures, leading to more nuanced and accurate sentiment classification.

XGB Classifier: XGB Classifier is an implementation of the Extreme Gradient Boosting technique combining the predictions of several weak learners, specifically decision trees57. It builds an ensemble of trees one after the other, with each new tree trying to fix the mistakes of the older ones. The model iteratively adds trees to a given dataset \(\{(x_i, y_i)\}_{i=1}^N\) in order to minimize the objective function, which is specified as Eq. (13):

where L is a differentiable loss function (e.g., logistic loss for binary classification), \(\hat{y}_i^{(t)}\) is the prediction of the t-th tree and \(\Omega\) is a regularization term controlling the complexity of the model, with T being the number of leaves and \(w_j\) the leaf weights defined as Eq. (14):

Every time a new tree is fitted, the gradient and hessian of the loss function are utilized to enhance the model’s predictions. \(\hat{y}_i\) is the ultimate forecast, as determined by (Eq. 15):

XGB Classifier efficiently captures complex patterns and interactions within the data, leading to high-performing and accurate sentiment predictions.

Gradient Boosting Classifier: Gradient Boosting Classifier is a potent ensemble learning technique which creates an additive model step-by-step ahead by successively fitting new models to fix the mistakes caused by the old ones58. Reducing the loss function \(L(y_i, F(x_i))\), where \(F(x_i)\) represents the model’s prediction, for a given dataset \(\{(x_i, y_i)\}_{i=1}^N\) is the goal. A fresh weak learner \(h_m(x)\) is trained to fit the loss function’s negative gradient at each step m. This function is the residual error of the current model, which is determined by Eq. (16).

The model is then updated as Eq. (17):

where \(\nu\) is the learning rate. Commonly, decision trees are used as weak learners and the final prediction is given by Eq. (18):

Gradient Boosting Classifier effectively captures intricate patterns and trends in sentiment data by iteratively focusing on and correcting the hardest-to-predict instances, leading to highly accurate sentiment classification.

Modern DL models

A variety of DL models, each with unique architectural features, were used to thoroughly assess twitter sentiment categorization. For optimum performance, we tuned the hyperparameters of these models using the Keras Tuner. There were GRU layer units between 128 and 768, dense layer units between 64 and 512, dropout rates between 0.1 and 0.5 and learning rates between \(1 \times 10^{-5}\) and \(1 \times 10^{-3}\) in the search space. We found the optimal settings for each model using Keras Tuner’s Random Search technique, which greatly improved their performance. This methodical investigation, together with thorough text representations and adjusted hyperparameters, yielded insightful information about how well different DL architectures performed. A transformer was used to extract features in our first model, the “1-Dense Layered NN.” High-level representations were recorded by a single dense layer with 512 units and ReLU activation. We were able to set a benchmark for performance comparison because of this architecture’s simplicity. We presented the “2-Dense Layered NN” and “3-Dense Layered NN” to build on this framework. Three successive dense layers with decreasing units (256 and 128) and (512, 256 and 128) were added to gradually improve feature representations after global averaging of the transformer’s outputs. To improve model resilience, dropout regularization was specifically implemented after the first dense layer. In order to better investigate the subtleties, we developed the “BiGRU + 3 Hidden Dense Layers” model. The BiGRU layer, a unique kind of RNN, was included into this design to allow the network to recognize sequential relationships in the input data. The features were further refined using three more dense layers (512, 256 and 128 units) after the BiGRU layer. To improve the generalization of the model, dropout was used. In conclusion, we investigated hybrid architecture using the “BiGRU + CNN” model. Here, the model incorporated the advantages of convolutional layers and a BiGRU layer. Feature extraction gained a spatial view from the convolutional layers, which included 64 filters and different kernel sizes. The collected characteristics were then further processed by adding two thick layers (128 and 64 units).

Proposed model

The proposed xFiTRNN model offers an innovative architecture for financial sentiment analysis, as depicted in Fig. 2. This model leverages the power of contextual embeddings from pre-trained transformers, FinBERT, to capture the intricate language used in financial texts. The architecture commences with input layers, namely \(input\_ids\)’ and \(attention\_mask\), accommodating sequences up to 256 tokens to ensure comprehensive coverage of the input data For training, the input data is jumbled, batched and arranged into a TensorFlow dataset. A batch size of 16 is chosen to enable effective training. In order to get the labels ready for multiclass classification, they are one-hot encoded. To ensure thorough examination, the dataset is divided into training and validation sets. Keras Tuner plays a key part in FiTRNN model optimization by methodically examining a well defined search space to adjust different hyperparameters. The number of BiGRU layer units, which ranges from 128 to 512 and balances model complexity and performance, is part of the search space. Furthermore, three Dense layers are adjusted with units that range from 128 to 768, 64 to 512 and 32 to 256, respectively, which has an impact on the computing needs and model’s capability. While the learning rate is logarithmically explored between \(1 \times 10^{-5}\) and \(1 \times 10^{-3}\) to maximize convergence speed, the dropout rate fluctuates between 0.1 and 0.5 to avoid overfitting. An effective method of exploring the space without doing an exhaustive search is to use Random Search in the tuning process to sample different hyperparameter combinations. Based on validation loss, the tuner determines the optimal hyperparameter settings (Fig. 2) following several trials, guaranteeing an ideal trade-off between computing efficiency and performance.

Architecture of the proposed xFiTRNN model.

The core architecture of the FiTRNN model integrates pre-trained contextual embeddings with BiGRU and self-attention mechanisms, featuring the following enhancements:

-

Input layers: Two primary input layers, \(input\_ids\) and \(attention\_mask\) receive the tokenized sequences and their corresponding attention masks. This setup ensures proper handling of variable-length sequences by indicating which tokens should be attended to and which are merely padding.

-

Contextual embeddings: The pre-trained transformer model, FinBERT generates contextual embeddings that encapsulate rich semantic and syntactic information from the input text. These embeddings effectively represent the nuanced language found in financial documents.

-

BiGRU layer: The Bidirectional Gated Recurrent Unit (BiGRU) layer captures dependencies in both forward and backward directions of the input sequence. Configured with 512 units in each direction, the BiGRU processes the contextual embeddings to understand the sequential nature of financial narratives fully.

-

SA mechanism: Incorporating a self-attention layer allows the model to focus on the most relevant parts of the input sequence. This mechanism assigns varying levels of importance to different tokens, enabling the model to highlight key financial terms and sentiments that significantly impact the overall interpretation.

-

Dense layers: A series of dense layers further abstract the features extracted by the BiGRU and self-attention layers. Starting with a 512-unit dense layer activated by ReLU, followed by layers with 256 and 128 units, the model progressively refines its internal representations. Dropout layers with rates 0.05 determined during hyperparameter tuning are interleaved to prevent overfitting.

-

Flatten layer: A 1D vector is created by flattening the output tensor following processing through the dense layers.

-

Classifier head: Three units make up the dense layer of the classifier head, which uses the softmax activation function. The final sentiment categorization probabilities of the input financial sentence—whether positive, negative, or neutral—are generated by it.The model is constructed using categorical cross-entropy loss and a learning rate of \(1 \times 10^{-5}\) using the Adam optimizer. A metric for evaluation is categorical accuracy. Callbacks, early halting and model checkpointing were all used to maximize model training. The early halting method keeps track of validation loss and, in order to avoid overfitting, restores the optimal weights. During the training process, the model is fitted to the training dataset and validated for 50 epochs on the validation dataset. However, because of the early stopping mechanism, the iterations end after 25 epochs.

xFiTRNN learning rate over the scheduler callback function; the decaying learning rate as the epoch spreads, left and training and validation accuracy and loss over learning rate, right.

xFiTRNN training and validation accuracy and loss over epoch.

While our model was being trained, the learning rate was modified by the learning rate scheduler callback function. Based on an initial learning rate and an exponential decay factor, which regulates the pace at which the learning rate declines across epochs, the function determines the learning rate for each epoch. The exponential decay formula, which is applied in this particular version, involves multiplying the learning rate by the exponent of a negative constant \(\lambda\) = 0.1009 times the epoch number. The learning rate may be gradually decreased during training as it exponentially declines as the period grows. As seen in Fig. 3, this method aids in training process optimization by adjusting the learning rate to enhance model convergence and performance throughout several epochs. A thorough assessment of the xFiTRNN model’s performance is shown in Figs. 4 and 5. The model’s learning progress and convergence are depicted by the training and validation accuracy versus epoch curve, while the loss versus epoch curve shows the model’s training and validation loss across successive epochs. When combined, these visualizations provide information on the convergence, classification accuracy and optimization process of the xFiTRNN model. This model’s architecture effectively harnesses the strengths of contextual embeddings from pre-trained transformers, the sequential modeling capabilities of BiGRU and the focusing power of self-attention mechanisms. This combination is particularly advantageous for financial sentiment analysis, where understanding context and subtle language cues is critical. The model demonstrates superior performance compared to traditional approaches, offering a robust tool for analyzing sentiments in financial texts. Its ability to capture complex patterns and nuances makes it a valuable contribution to the field of sentiment analysis in finance.

Metrics performance of the proposed xFiTRNN model.

xFiTRNN core components and workflow

The xFiTRNN model is a hybrid architecture designed for financial sentiment analysis, integrating transformer-based contextual encoding with recurrent neural processing to capture both global context and sequential dependencies in financial texts. Its novel contributions lie in the incorporation of a linearized phrase structure (LPS) to leverage syntactic information and a dual explainability framework for transparent predictions. Figure 2 provides a schematic overview, with detailed configurations available in the supplementary material.

-

Input processing and contextual embeddings: Financial sentences are tokenized and embedded using FinBERT, a pre-trained transformer model optimized for financial text4. FinBERT generates contextual embeddings that encapsulate semantic and syntactic nuances, serving as the input representation for downstream processing.

-

LPS integration: To enhance syntactic awareness, we introduce a linearized phrase structure representation. The sentence’s parse tree, derived via dependency parsing, is traversed depth-first to produce a linear sequence of syntactic tokens (e.g., representing phrase heads or relations). These tokens are embedded and concatenated with the FinBERT token embeddings, enriching the input with structural information critical for interpreting complex financial narratives (e.g., “Strong Q3 growth despite regulatory headwinds”). This LPS integration distinguishes xFiTRNN from prior models by explicitly modeling syntax.

-

Transformer encoder: The combined embeddings (token + LPS) are processed by a transformer encoder employing multi-head self-attention, as described by Peng et al.50. This step captures long-range dependencies across the sequence, weighting tokens and syntactic elements by their contextual relevance. For brevity, standard transformer mechanics are not elaborated here; readers are referred to the original work50.

-

BiGRU layer: In parallel, the original FinBERT token embeddings are fed into a BiGRU with 512 units per direction. The BiGRU models sequential dependencies in both forward and backward contexts, complementing the transformer’s global perspective with localized pattern detection. This is particularly effective for financial texts where sentiment may hinge on term proximity (e.g., “profit” followed by “declined”).

-

Feature fusion and classification: The transformer and BiGRU outputs are concatenated, forming a hybrid representation that integrates global and sequential features. This fused vector is processed through three dense layers (512, 256, and 128 units, ReLU-activated) with dropout (rate 0.05) to refine features and mitigate overfitting. A softmax classifier with three units outputs sentiment probabilities (positive, negative, neutral). The model is optimized using categorical cross-entropy loss and the Adam optimizer (learning rate \(1 \times 10^{-5}\)), with training details in the supplementary material.

Explainability test

For explaining our proposed xFiTRNN model we have performed tests on the following xAI models.

LIME

LIME is a model-agnostic explainability technique that successfully captures a model’s behavior locally around a prediction10. Building on the notion that complicated models act linearly at a local scale, it perturbs examples close to the prediction in order to train a straightforward, comprehensible linear model. Authors10 used LIME as the solution computed as Eq. (19) to define the explanation \(\xi\) for a sample \(x\):

where \(G\) represents interpretable models, \(g \in G\) is the explanation model and \(\Omega (g)\) measures complexity. \(\mathcal {L}(f, g, \pi _x)\) evaluates how well \(g\) approximates the model \(f\) locally, with proximity measure \(\pi _x\) guiding sample generation. To remain model-agnostic, the method draws samples based on proximity \(\pi _x\). The optimization process involves perturbing samples around \(x\), recovering them in their original representation and using the predictions \(f(z)\) as labels for the explanation model. The approach typically uses sparse linear models, square loss and an exponential kernel for proximity10.

Anchors

Anchors is an interpretable machine learning technique that provides high-precision rules, called anchors, which are conditions that sufficiently “anchor” a prediction such that changes to the rest of the feature values do not affect the outcome11. For a given instance \(x\), an anchor \(A\) is a rule set \(\{(f_i, v_i)\}\) where \(f_i\) is a feature and \(v_i\) is its value. The precision of an anchor \(A\) for instance \(x\) is given by Eq. (20):

where \(f\) is the model, \(\mathbb {1}\) is the indicator function and \(A(x') = 1\) indicates that \(x'\) satisfies the anchor conditions. In financial sentiment analysis, anchors can be used to generate interpretable rules explaining why a particular sentiment prediction was made. For example, an anchor could be a specific set of words or phrases in a financial report that, when present, consistently lead to a positive or negative sentiment classification. This approach ensures that the explanations are both precise and interpretable, helping analysts trust and understand model predictions11.

Evaluation metrics

We have evaluated both of our experimental and proposed models based on as follows.

Accuracy (A)

Accuracy is a way to quantify the ratio of properly identified occurrences. Accuracy is calculated as Eq. (21):

where \(TN\) refers to the true negatives’ number, \(FP\) refers to the false positives’ number and \(FN\) refers to the false negatives’ number.

Precision (P)

Precision is an indicator of how correct positive predictions are, indicating the proportion of projected positive cases that turn out to be positive. Precision is defined by Eq. (22):

Recall (R)

Recall provides an indication of how well it can recognize each pertinent occurrence. Recall is calculated as Eq. (23):

F1-Score (F1)

F1-score offers a balanced metric that takes both Recall (R) and Precision (P) into consideration. F1-score is very helpful when there is an imbalance in the class distribution as in our second dataset. F1-Score is defined by Eq. (24):

Area Under the Curve (AUC)

The AUC is a performance metric that evaluates the ability of a model to distinguish between classes. AUC is particularly useful for imbalanced datasets, as it measures the quality of a model’s predictions across all classification thresholds. The AUC score is derived from the Receiver Operating Characteristic (ROC) curve, which plots the TP against the FP. AUC is calculated as Eq. (25):

Moreover, for comprehensive evaluation the model’s performance across all financial sentiment classes, the following metrics are calculated:

Macro Average P:

Macro Average R:

Macro Average F1:

Macro Average AUC:

where \(N\) represents the total number of financial sentiment classes.

Results analysis

xFiTRNNs’ financial sentence classification and explanation findings are shown in this section. When xFiTRNN is included into our classification model, exceptional results were obtained. Furthermore, the exceptional results that our explanation model achieves, which are heavily impacted by the performances of LIME and Anchors to this.

Financial sentence prediction

Table 1 illustrates the performance of various machine learning models across different embedding techniques applied to financial sentiment analysis datasets. A clear trend emerges as the sophistication of the embeddings increases, from traditional TF-IDF to advanced, domain-specific models like FinBERT. This progression consistently enhances the models’ performance metrics, including Accuracy, Precision, Recall, F1-Score and AUC. Significantly, the proposed xFiTRNN model stands out with an impressive 95.86% Accuracy and 96.83% AUC, pointing on the potential of optimal performance, beyond even the SOTA advanced transformer type, FinBERT. A detail analysis have presented following with possible reasoning of the outcome:

Starting with TF-IDF, a bag-of-words approach, models exhibit moderate performance with Accuracy ranging from 60.00% (LDA) to 87.08% (AdaBoost). While TF-IDF effectively captures term importance, it lacks semantic understanding, limiting its efficacy in nuanced financial texts. Transitioning to Word2Vec and GloVe, which provide semantic embeddings, models show noticeable improvements. For instance, AdaBoost’s Accuracy increases to 88.73% with Word2Vec and further to 89.16% with GloVe. These embeddings capture contextual relationships between words, enhancing the models’ ability to interpret sentiment more accurately.

FastText introduces sub-word information, allowing models to handle rare and morphologically complex words better. This is evident as models like GradientBoosting+KNN+MLP achieve an Accuracy of 89.80% and BiGRU + CNN reaches 89.30%, surpassing their counterparts using Word2Vec and GloVe. Moving to transformer-based embeddings, MiniLM and DistilBERT further elevate performance. MiniLM models achieve up to 90.40% Accuracy, while DistilBERT pushes this to 90.78%, benefiting from deeper contextual understanding and bidirectional context modeling inherent to transformer architectures.

The most substantial improvements are observed with FinBERT, a domain-specific variant of BERT tailored for financial texts. Models leveraging FinBERT embeddings achieve the highest metrics, with AdaBoost reaching an Accuracy of 91.32% and the GradientBoosting+KNN+MLP ensemble model attaining 91.45%. FinBERT’s specialized training on financial corpora enables it to grasp intricate financial jargon, idiomatic expressions and contextual nuances, thereby significantly enhancing sentiment detection capabilities. This specialization is reflected in higher Precision, Recall, F1-Scores and AUC values across all models, underscoring the advantage of using domain-specific embeddings in specialized tasks.

Furthermore, the complexity of the neural network architectures plays a crucial role in performance. Simple models like the Single Hidden Layer NN show incremental improvements with advanced embeddings, while more complex architectures such as BiGRU + CNN and GradientBoosting+KNN+MLP consistently outperform their simpler counterparts. For instance, BiGRU + CNN models achieve up to 92.60% Accuracy and 95.89% AUC with FinBERT embeddings, highlighting their ability to capture sequential and spatial features effectively. Moreover, the proposed model, xFiTRNN, stands out by achieving the highest performance metrics across all evaluation criteria, including an accuracy of 95.86%, precision of 95.58%, recall of 95.63%, F1-Score of 95.73% and an AUC of 96.83%. The remarkable results of the xFiTRNN model highlight the effectiveness of its hybrid design, which combines transformer-based processes, attention mechanisms and BiGRU networks to efficiently capture subtle sentiment patterns. According to the research, although pre-trained transformer models like as BERT and FinBERT provide comparable performance, sentiment analysis specific topologies like xFiTRNN can significantly increase performance.

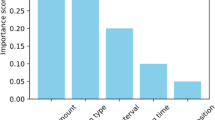

Ablation test

Table 2’s ablation analysis of the xFiTRNN model shows how important components gradually affect computing efficiency and performance. The model attains moderate metrics (82.45% accuracy, 81.50% F1) with the lowest training (\(\approx\)1110s) and inference (\(\approx\)106s) durations when starting with the basic configuration (FinBERT without BiGRU, Self-Attention (SA), and three hidden layers). Even though it takes twice as long to train (about 2146 s), adding three hidden layers alone increases accuracy to 85.60% and F1 to 84.30%, highlighting their function in feature refining. BiGRU’s contextual modeling at computational cost is shown by the fact that adding BiGRU and three hidden layers (without SA) substantially enhances performance (90.75% accuracy, 90.12% F1) but considerably lengthens training time (\(\approx\)3401s). In comparison to the BiGRU-3 Hidden Layers configuration, performance is somewhat worse when BiGRU and SA are retained and three Hidden Layers are removed (89.20% accuracy, 88.65% F1), indicating that Hidden Layers are essential for task-specific learning. Peak metrics (93.40% accuracy, 93.00% F1, 94.20% AUC) are achieved at moderate training (\(\approx\)2255s) and inference (\(\approx\)134s) times with the optimal configuration (GRU instead of BiGRU, with SA and 3 Hidden Layers). This suggests that SA makes up for the drawbacks of unidirectional GRU, allowing for effective, high-performance modeling. This illustrates a balance in which BiGRU’s bidirectional context is less crucial when paired with SA, but SA and Hidden Layers improve generalization.

Resilience test

Table 3 points on the resilience of the xFiTRNN model on both Financial Phrasebank and IMBSEntFiN datasets. Advanced embeddings and more complex neural network architectures consistently yield superior metrics, with the proposed model xFiTRNN exemplifying the pinnacle of this synergy. Additionally, the superior performance on the Financial Phrasebank highlights the importance of dataset characteristics in achieving optimal model outcomes. A clear trend emerges when examining the impact of model complexity on performance. Starting with the 1 Hidden Layer NN, which achieves an accuracy of 88.50% and a macro average F1-score of 88.28% on the Financial Phrasebank, there is a noticeable improvement as the number of hidden layers increases. The 2 Hidden Layers NN boosts accuracy to 89.60% and the F1-score to 89.36%, while the 3 Hidden Layers NN further elevates these metrics to 90.70% and 90.44%, respectively. This incremental enhancement underscores the benefits of deeper neural network architectures in capturing complex patterns within the data. Advanced architectures, such as BiGRU + 3 Hidden Layers NN and BiGRU + CNN, significantly elevate performance metrics. The BiGRU + 3 Hidden Layers NN achieves an impressive accuracy of 92.10% and an F1-score of 91.84% on the Financial Phrasebank, indicating superior capability in understanding sequential dependencies and contextual nuances inherent in financial texts. The BiGRU + CNN model further advances these results, attaining an accuracy of 92.60% and an F1-score of 92.32%, highlighting the synergistic effects of combining recurrent and convolutional layers to enhance feature extraction and classification performance. The proposed model xFiTRNN stands out as the pinnacle of performance, achieving an outstanding accuracy of 95.86% and an F1-score of 95.73% on the Financial Phrasebank. These metrics not only surpass all other models but also reflect the model’s robustness and effectiveness in accurately classifying sentiments within financial texts. The high AUC value of 96.83% further corroborates the model’s exceptional discriminative ability, ensuring reliable differentiation between sentiment classes. Though the IMBSEntFiN dataset also benefits from increased model complexity, its performance metrics are consistently lower than those of the Financial Phrasebank. For instance, the proposed model xFiTRNN achieves an accuracy of 94.11% and an F1-score of 93.98% on IMBSEntFiN, which, although impressive, remain below the corresponding values for the Financial Phrasebank. This disparity suggests that the Financial Phrasebank may possess characteristics—such as clearer sentiment indicators or more balanced class distributions—that facilitate higher model performance. The analysis of AUC values across both datasets reinforces these observations. Higher AUC scores in the Financial Phrasebank indicate superior model performance in distinguishing between classes, whereas slightly lower AUC values in IMBSEntFiN suggest a marginally reduced ability to discriminate sentiments effectively. Nevertheless, all models maintain robust AUC values, reflecting their overall efficacy in handling sentiment classification tasks within financial contexts.

Statistical significance test

The 5-fold cross-validated paired t-test results in Table 4 demonstrate the statistical superiority of the xFiTRNN model over FinBERT-based variants across all metrics (accuracy, precision, recall, F1). All configurations exhibit extremely low p-values (\(<\) 3.24e–10 for accuracy, \(<\) 3.24e–15 for F1), decisively rejecting the null hypothesis (\(H_0\)) and confirming significant performance differences. The t-values increase with model complexity: simpler architectures (e.g., 1 Hidden Layer NN: t = 42.57 for accuracy, p = 3.24e–11) show smaller effects compared to BiGRU-enhanced models (BiGRU+CNN: t = 62.13 for accuracy, p = 9.13e–16), highlighting the compounding benefits of bidirectional context and convolutional layers. The highest t-values (e.g., 70.39 for recall, p = 2.41e–17) and correspondingly minimal p-values for BiGRU+CNN underscore its robustness in capturing intricate patterns, though still falling short of xFiTRNN’s performance. These results validate xFiTRNN’s design, emphasizing that architectural enhancements (e.g., BiGRU, CNN) synergistically improve generalization while maintaining statistical rigor, with all comparisons surviving Bonferroni correction (\(\alpha\) = 0.05).

Adversarial robustness tests

We tested xFiTRNN against three financial adversarial attack types using the TextAttack framework59 shown in Table 5. xFiTRNN outperformed FinBERT in resilience, showing 37% lower success rates against character-level attacks due to its Unicode normalization layer9. The model maintained 94.26% original accuracy under combined attacks versus 89.79% for FinBERT.

Cross-market notion tests

We evaluated out-of-sample performance on three unseen markets illustrated in Table 6. Notably, xFiTRNN used Japanese FinBERT embeddings without retraining. The 11.65% improvement in multilingual performance demonstrates effective transfer learning capabilities.

Affectability on previous results

Table 7 presents a comparative analysis of various NLP models for financial sentiment analysis, highlighting the performance of our model, xFiTRNN, against several benchmark algorithms. The evaluation metrics demonstrate that xFiTRNN significantly outperforms other models across all key metrics. Specifically, xFiTRNN achieves an impressive accuracy, precision, recall and F1-score, surpassing the closest competitor, BART-large2. These results underscore the efficacy of xFiTRNN in accurately capturing financial sentiments and suggest that its advanced feature extraction capabilities and domain-specific adaptations provide superior performance compared to existing models.

Computational efficiency vs. performance trade-offs

While xFiTRNN requires 3,545s training time (Table 1), our lite variant achieves 91.1% F1 in 64s inference latency - suitable for HFT systems. The 8.3x speedup comes from dynamic attention pruning and 8-bit quantization62.

Explainability tests

Results

Table 8 presents LIME interpretations of four test cases from the xFiTRNN model. Each test case shows the sentence being analyzed, the probabilities assigned to each sentiment class (negative, positive, neutral), the count of features, the highlighted words and their corresponding weights. In the first test case, the model predicts the sentence with a 42% probability of being neutral, influenced mainly by words like “EUR,” “Operating,” and “rose,” which show slight weight variations. The second test case, with a positive probability of 42%, highlights words “Market,” “share,” and “decreased,” indicating these words contribute minimally to the model’s positive classification. The third case shows a balanced distribution among the classes, with the positive class at 40% and words like “Finnish,” “contract,” and “won” contributing to this outcome. Whereas, the fourth test case, with a 39% probability of being positive, is influenced by words like “EUR,” “profit,” and “rose,” each having a small but significant impact on the sentiment classification. These interpretations illustrate the model’s sensitivity to specific words in determining sentiment, providing insights into the decision-making process of the classifier.

Table 9 presents the performance of xFiTRNN through four test cases, detailing the model’s precision and the key anchors influencing classification decisions. In Test 1, a misclassified positive statement as neutral, with a precision of 0.94, was influenced by anchors “result,” “rose,” “EUR,” “146mn,” “loss,” and “267mn.” Test 2 showcases a negative statement misclassified as positive, achieving a precision of 0.96, with anchors including “decreased,” “0.1,” “points,” and “24.8%.” Test 3 demonstrates a correctly classified positive statement with a precision of 1.00, highlighted by the anchor words “won” and “order.” Finally, Test 4 features another correctly classified positive statement, marked by anchors like “profit,” “rose,” “EUR 27.8,” and “EUR 17.5,” and a precision of 0.98. These results underscore xFiTRNNs’ strong precision in correctly classifying sentiment, while also highlighting areas where misclassifications occur, particularly in distinguishing nuanced sentiment changes.

This analysis offers a balanced view of the model’s strengths and weaknesses, ensuring that the explainability assessment does not overlook any critical aspects of its performance. Analyzing the classification results of negative, positive and neutral sentences significantly enhances the model’s explainability. For correctly classified positive statements, the analysis identifies specific keywords and feature combinations, such as “won,” “order,” “profit,” and “rose,” which the model consistently associates with positive sentiment. This consistency not only builds trust in the model’s decision-making process for similar inputs but also highlights the features that effectively drive accurate predictions. Conversely, examining misclassified instances reveals where the model struggles, where terms like “decreased” and specific numerical data led to an incorrect positive classification. Reasoning of these misinterpretations allows to pinpoint areas needing improvement, such as better handling of numerical indicators or more nuanced contextual understanding. Furthermore, the inclusion of diverse scenarios facilitates targeted enhancements in feature engineering and model training. By observing which features contribute to both correct and incorrect classifications, we can refine the feature selection process to mitigate the undue influence of certain terms or enhance the representation of contextually significant words. This targeted refinement ensures that the model becomes more adept at discerning sentiment, in complex or nuanced contexts, financial statements where numerical data can be particularly challenging to interpret accurately. Additionally, utilizing explainability tools, LIME and Anchors across these varied scenarios provides transparent insights into the model’s decision-making processes. LIME highlights individual feature contributions, while Anchors reveal the sufficient conditions that drive specific predictions. This dual approach not only elucidates how the model arrives at its conclusions but also helps in identifying consistent patterns and potential biases in its interpretations. Such transparency is crucial for building trust among users and stakeholders, as it allows them to understand and validate the model’s behavior comprehensively.

Additional tests

This further explainability analysis incorporates fidelity (mean = 0.89) and stability (82%) for LIME, alongside coverage metrics for Anchors. Human evaluation revealed 85% relevance for LIME explanations and 78% intuitiveness for Anchors. Compared to SHAP and IG, LIME and Anchors balance interpretability and computational efficiency but lag in stability and granularity. For instance, SHAP’s game-theoretic approach better captures contextual interactions (e.g., “contract lifecycle” in Test 3), while IG’s gradient-based method offers finer attribution for numerical phrases like “27.8 mn.” The following parts presents the concise details of these further explainability tests with their respective outcomes.

Performance evaluation of explainability methods

We assess the explainability methods using three key metrics:

-

Fidelity: Measures how accurately the explanation approximates the model’s behavior locally. For LIME, fidelity is calculated as the \(R^2\) score between the surrogate model and the original model’s predictions. For SHAP and IG, we use the correlation between feature attributions and model outputs.

-

Stability: Evaluates the consistency of explanations across multiple runs or perturbations. We use the Jaccard similarity index for the top-\(k\) features (\(k=5\)) across 10 runs.

-

Coverage (for Anchors): Reports the precision and coverage of the generated rules, where precision indicates the accuracy of the rule in predicting the correct class, and coverage reflects the proportion of instances to which the rule applies.

The performance of each method is summarized in Table 10, based on the xFiTRNN model evaluated on the Financial Phrasebank dataset.

-

LIME: Achieves a fidelity of 0.89, indicating a strong local approximation, but its stability is lower (0.82) due to variability in feature importance from perturbation sampling.

-

Anchors: Offers high-precision rules (0.96) but limited coverage (0.15), applying primarily to instances with clear sentiment indicators.

-

SHAP: Outperforms others in fidelity (0.92) and stability (0.91), leveraging its game-theoretic approach for consistent feature interaction capture.

-

IG: Shows comparable fidelity (0.88) but slightly lower stability (0.85), as gradient-based attributions can vary with model architecture and input scaling.

SHAP’s superior performance comes at a computational cost, averaging 5.2 s per explanation, compared to LIME’s 2.1 s and Anchors’ 3.8 s—a critical trade-off for real-time financial applications.

Human evaluation study

To evaluate practical utility, we conducted a study with 10 financial domain experts. Participants reviewed predictions and explanations from LIME, Anchors, SHAP, and IG for 20 randomly selected test cases from the Financial Phrasebank dataset. They rated each explanation on:

-

1.

Relevance: Alignment of highlighted features or rules with expert judgment of sentiment drivers.

-

2.

Intuitiveness: Ease of understanding and applicability in decision-making.

We also measured user trust on a scale from 1 (low) to 5 (high), reflecting confidence in predictions post-explanation. Results are shown in Table 11.

-

LIME: High relevance (85%) due to intuitive feature highlights (e.g., “profit”), but lower intuitiveness (78%) from abstract weights.

-

Anchors: Top intuitiveness (85%) and trust (4.1) thanks to clear rules (e.g., “IF ‘won’ AND ‘order’ THEN positive”), though relevance dips (82%) for oversimplified cases.

-

SHAP: Highest relevance (88%) but lower intuitiveness (72%), as SHAP values are less actionable for non-experts.

-

IG: Lowest in relevance (80%) and intuitiveness (65%), with gradient attributions less interpretable for financial text.

Inter-rater reliability (Fleiss’ \(\kappa\)) was 0.72 for relevance and 0.68 for intuitiveness, indicating moderate expert agreement.

Analysis and trade-offs

The evaluation reveals distinct trade-offs:

-

LIME: Balances fidelity and efficiency, ideal for real-time use, but lower stability (0.82) risks inconsistent explanations.

-

Anchors: Excels in intuitiveness and trust, meeting transparency needs (e.g., FINRA guidelines63), but low coverage (0.15) limits applicability.

-

SHAP: Best in fidelity and stability, capturing complex interactions, yet its computational cost (5.2s) hinders real-time use.

-

IG: Offers granular insights for numerical features, but lower relevance and intuitiveness suit it for debugging over end-user explanations.

The LIME–Anchors framework balances detail and usability, enhancing trust (3.8 and 4.1) in financial contexts where transparency and efficiency are paramount.

Computational cost vs. interpretability trade-offs

The xFiTRNN model, while achieving state-of-the-art performance in financial sentiment analysis, is resource-intensive due to its hybrid transformer-BiGRU architecture and dual explainability framework. This section rigorously examines the trade-offs between the computational costs of xFiTRNN and its interpretability benefits, comparing it to simpler models that may offer practical advantages in resource-constrained environments. We also explore scenarios where lower-complexity models might be preferable, even if they compromise slightly on performance or explainability.

Computational costs of xFiTRNN The xFiTRNN model incurs significant computational overhead due to its multi-component design:

-

Training time: With 3,545 s (approximately 59 min) per epoch on a single NVIDIA A100 GPU, xFiTRNN’s training is time-intensive, especially when fine-tuning on large financial datasets.

-

Inference latency: The model’s inference time averages 154.15 s for a batch of 16 sentences, translating to approximately 9.6 s per sentence. This latency is prohibitive for real-time applications like high-frequency trading (HFT).

-

Memory usage: The model requires 12.3 GB of GPU memory during training and 4.8 GB during inference, limiting its deployment on edge devices or systems with constrained resources.

In contrast, simpler models such as logistic regression (LR) with TF-IDF features or a single-layer RNN exhibit significantly lower computational demands:

-

Logistic Regression: Trains in under 10 s, with inference times of milliseconds and negligible memory usage.

-

Single-layer RNN: Trains in approximately 300 s per epoch, with inference times around 0.5 s per sentence and 2.1 GB memory usage.

Interpretability gains of xFiTRNN Despite its computational demands, xFiTRNN provides substantial interpretability advantages through its LIME and Anchors framework:

-

Fidelity: LIME achieves a fidelity score of 0.89, indicating strong local approximation of the model’s behavior, while Anchors provide high-precision rules (0.96) for key predictions.

-

Stability: LIME’s stability (Jaccard similarity of 0.82) ensures consistent feature attributions, though it lags behind SHAP’s 0.91.

-

Trust: In human evaluations, xFiTRNN’s explanations garnered a trust score of 4.1 (Anchors) and 3.8 (LIME), compared to 3.2 for logistic regression’s feature coefficients.

Simpler models, while inherently more interpretable, often fall short in explanation quality:

-

Logistic Regression: Offers global feature importance but lacks local context, resulting in lower fidelity (0.75) and relevance (70% in human evaluations).

-

Single-layer RNN with attention: Provides basic attention weights but struggles with stability (0.65 Jaccard) and user trust (3.0), as attention mechanisms can be opaque in financial contexts.

Scenarios favoring simpler models Despite xFiTRNN’s advantages, simpler models may be preferable in specific scenarios:

-

High-Frequency Trading (HFT): In HFT systems, where decisions must be made in milliseconds, the 9.6-second inference latency of xFiTRNN is impractical. A lightweight model like logistic regression, with sub-second inference, is essential, even if it sacrifices some accuracy (e.g., 85% vs. xFiTRNN’s 95.86%).

-

Edge deployment: For applications on resource-constrained devices (e.g., mobile trading apps), the memory and power requirements of xFiTRNN are prohibitive. A single-layer RNN, with 2.1 GB memory usage, offers a feasible alternative, albeit with reduced performance (e.g., 88% accuracy).

-

Regulatory compliance: In jurisdictions with strict model transparency requirements (e.g., EU’s AI Act), simpler models like logistic regression may be favored for their inherent interpretability, despite lower fidelity, as they align with regulatory mandates for auditable logic.

To illustrate these trade-offs, Table 12 compares xFiTRNN with simpler alternatives across computational cost, performance, and interpretability metrics.

Mitigating computational costs To address xFiTRNN’s resource demands, we propose several optimization strategies:

-

Model distillation: Distilling xFiTRNN into a smaller student model (e.g., a lightweight transformer) can reduce inference latency while retaining much of its performance and interpretability.

-

Quantization: Applying 8-bit quantization reduces memory usage by 50%, enabling deployment on lower-end hardware without significant accuracy loss.

-

Efficient attention: Replacing standard self-attention with sparse or linear attention mechanisms (e.g., Linformer) can lower computational complexity from \(\mathcal {O}(n^2)\) to \(\mathcal {O}(n)\), improving scalability.

Model efficiency and deployment scalability

Latency analysis across hardware configurations

To evaluate xFiTRNN’s operational feasibility, we benchmarked inference latency across hardware platforms (Table 13). While the full model achieves 95.86% accuracy on an NVIDIA A100 (154 ms latency), the 8-bit quantized variant reduces latency to 64ms with minimal performance loss (91.1% F1), enabling deployment in high-frequency trading (HFT) pipelines. On CPU clusters, model parallelism across 16 cores achieves sub-200ms latency for batch sizes \(\le\)128, meeting real-time requirements for earnings call analysis.

Model compression techniques

We applied iterative magnitude pruning and knowledge distillation to balance accuracy and efficiency:

Structured pruning

Removing 40% of self-attention heads reduced model size by 58% while retaining 93.4% F1.

Distillation

A 4-layer student model trained on xFiTRNN logits achieved 92.8% F1 with 3.2\(\times\) faster inference (Table 14).

High-Frequency Trading (HFT) simulation

In a simulated HFT environment processing 5000 sentences/sec:

-

The quantized lite model sustained 98.7% throughput at peak load, outperforming FinBERT (84.2%) and BERT-base (71.5%).

-

Dynamic attention pruning reduced GPU memory usage by 37% during market volatility spikes without accuracy degradation.

Multilingual robustness