Abstract

Class imbalance in datasets often degrades the performance of classification models. Although the Synthetic Minority Over-sampling Technique (SMOTE) and its variants alleviate this issue by generating synthetic samples, they frequently overlook local density and distribution characteristics. Consequently, developing methods that incorporate local spatial information to synthesize samples that better preserve the original data distribution is critical for improving model robustness in class-imbalanced scenarios. To address this gap, we propose an enhanced SMOTE algorithm (ISMOTE), which modifies the spatial constraints for synthetic sample generation. Unlike SMOTE, the proposed method first generates a base sample between two original samples. Then the Euclidean distance between the two samples is multiplied by a random number to generate a random quantity. This random quantity is added or subtracted based on the distance between the base sample and the original samples, ensuring that new samples are generated around the two original samples. By adaptively expanding the synthetic sample generation space, ISMOTE effectively alleviates distortions in local data distribution and density. This study compared the ISMOTE algorithm with seven mainstream oversampling algorithms, using three classifiers on thirteen public datasets from the KEEL, UCI, and Kaggle databases. Comparative analysis of 2D and 3D scatter plots revealed that ISMOTE yields more realistic data distributions. Experimental results demonstrated relative improvements in classifier performance, with F1-score, G-mean, and AUC increasing by 13.07%, 16.55%, and 7.94%, respectively. Furthermore, ISMOTE’s parameter adaptability enables its application to multi-class imbalanced datasets.

Introduction

Class imbalance is a prevalent issue in real-world datasets, characterized by a significant disparity in the number of samples across different categories. This phenomenon is ubiquitous across diverse domains, including medical disease diagnosis1,2, financial fraud detection3,4, software defect prediction5,6, and hardware fault detection7,8. In binary classification tasks, the imbalance ratio (IR), defined as the ratio of majority-class to minority-class samples, can range from tens to hundreds in practical applications, presenting substantial challenges for conventional machine learning algorithms9. Traditional machine learning models trained on imbalanced datasets often exhibit a bias toward the majority class10, resulting in the misclassification of minority-class samples. Such errors can have critical real-world implications. For instance, in medical diagnostics, where cancer patients typically represent the minority class, a false negative (i.e., misclassifying a cancer patient as healthy) may delay life-saving interventions, with irreversible consequences. Thus, improving minority-class recognition accuracy is essential, underscoring the importance of research on class-imbalance mitigation.

Various techniques are currently available to address class imbalance11,12. These techniques are categorized into data-level and algorithm-level approaches13. At the data level, methods include undersampling the majority class14,15, oversampling the minority class16,17, and hybrid sampling, which combines undersampling of the majority class with oversampling of the minority class18,19,20 to achieve class balance. At the algorithm level, machine learning algorithms are optimized to adapt to imbalanced data. The main methods include cost-sensitive learning21,22, threshold moving strategies23,24, ensemble learning25,26, and neural networks27,28. Compared with algorithm-level modifications, data-level techniques offer greater implementation flexibility and model independence, contributing to their wider practical adoption.

The Synthetic Minority Oversampling Technique (SMOTE) is one of the most common oversampling methods. Compared to other oversampling methods, SMOTE generates more diverse synthetic samples, which helps improve the generalization ability of models. Additionally, the generation logic of SMOTE is relatively simple and easy to implement, and it performs well in addressing class imbalance across various fields. Especially in small and medium-sized datasets, SMOTE is often more effective than other methods. Relevant studies29,30 indicate that SMOTE is one of the most widely used data imbalance handling techniques among oversampling methods. However, SMOTE’s linear interpolation mechanism for synthetic sample generation presents two inherent limitations: (1) in high-density regions of minority class samples, excessive synthetic instances may be produced, potentially inducing model overfitting; and (2) the linearly interpolated samples may deviate from the underlying data distribution. To address these issues, this study examines SMOTE’s sample generation mechanism and proposes an Improved SMOTE (ISMOTE) algorithm. ISMOTE extends the feasible solution space for synthetic sample generation, effectively mitigating both the density over-amplification problem and distributional distortion inherent in conventional SMOTE.

The remainder of this paper is structured as follows. Section 2 presents a comprehensive review of existing oversampling techniques. Section 3 details the fundamental principles and implementation procedures of the standard SMOTE algorithm, followed by a thorough presentation of our proposed Improved SMOTE (ISMOTE) algorithm, including its conceptual framework, algorithmic workflow, implementation steps, and formal pseudocode. Section 4 describes the experimental setup, including: (1) the thirteen benchmark datasets employed, (2) the evaluation metrics for classification performance assessment, (3) the experimental design methodology, and (4) a comprehensive analysis of the empirical results. Finally, Sect. 5 concludes the study with key findings and contributions.

The main contributions of this paper are summarized as follows:

Expansion of Sample Generation Space: ISMOTE modifies the sample generation conditions of the SMOTE algorithm, significantly expanding the space for generating new samples. Unlike SMOTE, which generates samples solely through linear interpolation, ISMOTE allows new samples to be generated not only between existing samples but also around them. This approach effectively mitigates the issue of generating excessive samples in high-density regions, thereby reducing the risk of overfitting and improving the quality of the generated data.

Improved Sample Distribution: ISMOTE introduces random quantities to dynamically adjust the positions of new samples. This ensures that the generated samples better align with the underlying distribution patterns of the original data. By enhancing the diversity and representativeness of the synthetic samples, ISMOTE improves the generalization capability of classifiers, particularly in imbalanced data scenarios.

Experimental Validation: Extensive experiments were conducted on multiple public datasets to validate the effectiveness of ISMOTE. The results demonstrate that ISMOTE consistently outperforms existing mainstream oversampling algorithms.

Related work

Oversampling techniques are widely used to address class imbalance by increasing the number of minority class samples, thereby improving classifier performance. These techniques can be categorized based on the data types they handle, including numerical data, categorical data, and image data31. This study focuses on numerical data, as it is prevalent in many real-world applications such as medical diagnosis, fraud detection, and software defect prediction. Compared to undersampling, oversampling is often preferred because it preserves the original data distribution and avoids information loss32,33.

Batista et al.34 proposed Random Oversampling (ROS), which duplicates minority class samples randomly to balance the dataset. While ROS is simple to implement, it often leads to overfitting, as the repeated samples do not introduce new information and may amplify noise in the data. Despite its limitations, ROS has been shown to outperform undersampling in certain scenarios when evaluated using metrics such as AUC.

To overcome the limitations of ROS, Chawla et al.35 proposed SMOTE, which generates synthetic minority samples via interpolation between existing minority samples and their k-nearest neighbors. By generating diverse synthetic samples rather than merely replicating existing ones, SMOTE mitigates overfitting to a certain degree. However, SMOTE is not without its drawbacks. Firstly, SMOTE can generate synthetic samples within the overlapping regions between classes. This gives rise to noisy data, thereby degrading the performance of classifiers. Secondly, in areas where minority samples are densely concentrated, SMOTE tends to generate an excessive number of synthetic samples, which exacerbates the problem of overfitting. Thirdly, SMOTE confines new samples to the linear paths between existing samples. As a consequence, the generated samples may deviate from the actual data distribution.

He et al.36 proposed the adaptive synthetic sampling algorithm (ADASYN), which uses a weighted distribution based on the difficulty of learning different minority class samples. More synthetic data is generated for harder-to-learn minority samples. This method reduces the bias caused by class imbalance and adaptively shifts the classification decision boundary towards the difficult samples. However, it faces challenges in sampling boundary samples and does not handle noisy data effectively.

In addition to the classic oversampling algorithms mentioned above, several improved algorithms based on SMOTE have been developed. Han et al.37 proposed the Borderline-SMOTE method, which effectively avoids oversampling noisy samples and reduces the generation of noisy samples by selectively oversampling boundary minority class samples. However, the voting selection strategy of this method involves a large number of high-density minority class samples in oversampling, without considering the problem of excessive generation of minority class samples and the distribution pattern of new samples. Douzas et al.38 proposed a simple and effective oversampling method based on K-means clustering and SMOTE, which avoids noise generation and effectively overcomes both inter-class and intra-class imbalances. Intra-class imbalance refers to the uneven distribution of samples within the same class. However, K-Means SMOTE may increase classification errors for minority samples due to its reliance on clustering, which can be sensitive to data sparsity and noise. Douzas et al.39 proposed Geometric SMOTE (G-SMOTE) as an enhancement to the SMOTE data generation mechanism. G-SMOTE generates synthetic samples within a geometric region in the input space, centered around each selected minority instance. While the default configuration defines this region as a hypersphere, G-SMOTE allows it to deform into a hyperellipsoid. G-SMOTE effectively parameterizes the data generation process and adapts to the specific characteristics of each imbalanced dataset. However, if the minority class samples contain noise or outliers, G-SMOTE may generate unrealistic synthetic samples around them, which can degrade classification performance.

Kunakorntum et al.40 proposed a novel oversampling technique, Synthetic Minority based on Probability Distribution (SyMProD), to handle skewed datasets. This technique standardizes the data using Z-scores and removes noisy data. The proposed method then selects minority samples based on the probability distributions of the two classes. Synthetic instances are generated from the selected points and several nearest neighbors of the minority class. Wang et al.41 propose a new deep learning (DL) based data balancing technique using an Auxiliary-guided Conditional Variational Autoencoder (ACVAE) trained with contrastive learning. Additionally, Wang et al. investigate an ensemble method where ACVAE generates synthetic positive samples, followed by a data undersampling technique.

In the context of ensuring data privacy, Du et al.42 propose a secure privacy-preserving SMOTE (SP2-SMOTE) sampling method. It extends traditional SMOTE by allowing parties to independently generate synthetic samples without exposing the data, while effectively preventing unauthorized label inference through minority-class nearest neighbor interpolation. The SMOTE algorithm and its variants can be combined with ensemble learning43 or machine learning algorithms44 to solve data imbalance problems in specific fields. Imani et al.45 also conducted a comprehensive analysis of the performance of Random Forest and XGBoost using SMOTE, ADASYN, and GNUS at different levels of imbalance.

Although the aforementioned methods have improved classification performance on imbalanced datasets, they have several limitations. First, regarding spatial distribution, existing methods often struggle to generate synthetic samples that match the true distribution of the minority class, especially on complex or irregular data manifolds. Second, regarding density control, many methods generate too many synthetic samples in high-density regions, which leads to overfitting and weaker classifier generalization. Third, regarding noise handling, generating synthetic samples in overlapping or noisy regions remains difficult and can undermine classifier performance. To address these limitations, we propose ISMOTE, an enhanced SMOTE algorithm that extends the spatial scope for synthetic sample generation. Unlike existing methods, ISMOTE adaptively adjusts the positions of new samples according to local density and distribution characteristics. As a result, synthetic samples are distributed around the original samples instead of being restricted to linear paths, maintain the natural density gradients of the original minority class, and avoid over-saturation in high-density regions. By overcoming these challenges, ISMOTE improves the robustness of classifiers in imbalanced data scenarios, particularly in applications such as early disease detection or fraud prevention, where high precision for minority classes is imperative.

An improved SMOTE algorithm

This section first outlines the concept, implementation steps, and limitations of the SMOTE algorithm. Subsequently, the ISMOTE algorithm proposed in this study is introduced, detailing its concept, flowchart, implementation steps, and pseudocode.

The SMOTE algorithm

SMOTE is one of the most well-established oversampling algorithms, effectively mitigating the overfitting issue caused by random oversampling and improving the generalization capability of models. The basic principle of SMOTE is to balance the dataset by inserting synthetic samples between minority class samples. It synthesizes new minority class samples through linear interpolation with the k-nearest neighbor algorithm. Specifically, it randomly selects a minority class sample and one of its k-nearest neighbors, then generates a new minority class sample by performing linear interpolation between the two. The steps of the algorithm are as follows.

1) Determine the number of minority class samples to be synthesized, denoted as nsamples.

2) For each selected minority class sample Xq, compute its Euclidean distance to all other minority class samples. Select the k nearest neighbors of Xq, where k = 5 by default.

3) Randomly select a sample Xj from the k nearest neighbor samples and synthesize a new minority class sample Xnew with Xq using (1).

$$X_{new} = X_q + \alpha \, (X_j - X_q) \qquad (1)$$

where α ∈ (0,1) is randomly generated during the operation process.

4) If the number of synthesized samples reaches the required amount, the algorithm terminates. Otherwise, repeat from step 2.

Fig. 1. Schematic diagram of the SMOTE algorithm’s synthetic sample generation process.

As shown in Fig. 1, SMOTE first randomly selects a sample Xq from the minority class samples (Xq is called the seed sample) and then uses the k-nearest neighbor algorithm to find its five nearest minority class samples. It then randomly selects one neighbor sample, Xj, from these five. The new synthetic sample, Xnew, is generated through linear interpolation, as described in (1), so it always lies on the line segment connecting the two minority class samples.

In the SMOTE algorithm, the position of the newly generated sample is constrained to the space between the two original minority class samples and is influenced by their positions. As more new samples are generated, the density of minority class samples increases. Therefore, the SMOTE algorithm may lead to the distribution of oversampled minority class samples not conforming to the original distribution pattern of minority class samples.
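The SMOTE steps above can be sketched in Python as follows. This is a minimal illustration using NumPy, not a reference implementation; the function name `smote_sample` and its parameters are hypothetical and chosen only for this example.

```python
import numpy as np

def smote_sample(X_min, n_samples, k=5, rng=None):
    """Sketch of SMOTE: interpolate between a seed and one of its k neighbors.

    X_min: array of minority class samples, shape (m, N).
    Returns an array of n_samples synthetic samples, shape (n_samples, N).
    """
    rng = np.random.default_rng(rng)
    X_min = np.asarray(X_min, dtype=float)
    m, n_features = X_min.shape
    if m < 2:
        raise ValueError("at least two minority samples are required")
    k = min(k, m - 1)

    # Pairwise Euclidean distances between minority samples.
    dist = np.linalg.norm(X_min[:, None, :] - X_min[None, :, :], axis=2)
    np.fill_diagonal(dist, np.inf)            # a sample is not its own neighbor
    neighbors = np.argsort(dist, axis=1)[:, :k]

    synthetic = np.empty((n_samples, n_features))
    for i in range(n_samples):
        q = rng.integers(m)                   # seed sample Xq
        j = neighbors[q, rng.integers(k)]     # random neighbor Xj
        alpha = rng.random()                  # drawn from [0, 1)
        # Linear interpolation between Xq and Xj, as in (1).
        synthetic[i] = X_min[q] + alpha * (X_min[j] - X_min[q])
    return synthetic
```

Because every synthetic point is a convex combination of two existing minority samples, the output can never leave the line segments between neighbors, which is the constraint discussed in the preceding paragraph.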

The ISMOTE algorithm

The idea of the ISMOTE algorithm

The SMOTE algorithm generates new minority class samples through linear interpolation between existing minority class samples. While this approach mitigates overfitting to some extent, it confines new samples to linear paths between existing samples, which may not accurately reflect the true distribution of the data. To address this limitation, ISMOTE extends the spatial scope for generating new samples by adaptively adjusting their positions based on local density and distribution characteristics. To expand this space, we change the formula used to generate new samples in the SMOTE algorithm. First, the Euclidean distance between the selected minority class sample and its k-nearest neighbor is calculated and multiplied by a random number between 0 and 1 to generate a random quantity. Next, the base sample is generated by linear interpolation between the two samples. Then, when the base sample lies closer to the k-nearest neighbor sample, the random quantity is subtracted to generate a new sample near the original sample. Similarly, when the base sample lies closer to the original minority sample, the random quantity is added to generate a sample closer to the neighbor.

As shown in Fig. 2, (a) and (c) illustrate the positions of new samples generated by the SMOTE algorithm, and (b) and (d) show the positions of new samples generated by the ISMOTE algorithm. According to the SMOTE algorithm, a base sample Xnew1 is generated, which is located between the two selected minority class samples. Then, based on the distance of the base sample Xnew1 from the two original samples, the new sample Xnew generation position is adjusted. If the generated base sample Xnew1 is far from the seed sample Xq, a random quantity is subtracted to position the new sample Xnew around the seed sample Xq, as shown in Fig. 2(b). If the base sample Xnew1 is close to the seed sample Xq, a random quantity is added to position the new sample Xnew around the selected neighboring sample Xj, as shown in Fig. 2(d). The new samples Xnew generated by the algorithm are randomly distributed between two samples and around single samples, which increases the space for generating new samples and makes the distribution of new samples more consistent with the distribution pattern of the original samples.

The formula for generating new sample positions is revised as follows. First, the Euclidean distance between the seed sample Xq and its randomly selected neighboring sample Xj is calculated, and this distance is multiplied by a random number between 0 and 1 to generate a random quantity. Second, a base sample Xnew1 is generated based on (2). Finally, this study proposes (3) to generate a new sample: according to the distance between Xnew1 and the seed sample Xq, the random quantity is added to or subtracted from the position of Xnew1 to generate the new sample Xnew. If Xnew1 is far away from Xq, the random quantity is subtracted from Xnew1 so that Xnew is located around Xq. If Xnew1 is close to Xq, the random quantity is added to Xnew1 so that Xnew is located around the neighboring sample Xj. The revised formula ensures that the generated samples are located around the two selected samples. Here, α ∈ (0,1) and β ∈ (0,1) are randomly generated during each operation.
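For reference, one possible reading of (2) and (3) that is consistent with the description above is written out below. The base-sample formula follows directly from the linear interpolation described in the text. The piecewise form is an assumption: the text does not state the exact threshold that separates "far" from "close", so the comparison of the two distances (equivalent to α > 0.5), the symbol d(·,·) for the Euclidean distance, and the application of the scalar random quantity to every feature are illustrative choices rather than the authors' stated formula.

```latex
% Base sample by linear interpolation, alpha in (0,1)
X_{new1} = X_q + \alpha \, (X_j - X_q)

% Assumed piecewise adjustment, beta in (0,1),
% with random quantity r = \beta \, d(X_q, X_j)
X_{new} =
\begin{cases}
X_{new1} - \beta \, d(X_q, X_j), & \text{if } d(X_{new1}, X_q) > d(X_{new1}, X_j) \\
X_{new1} + \beta \, d(X_q, X_j), & \text{otherwise}
\end{cases}
```

Under this reading, the first case corresponds to a base sample that is far from the seed Xq, and the second to a base sample that is close to it.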

Fig. 2. Diagram of the improvement idea for the ISMOTE algorithm.

The flowchart, steps and pseudo-code of the ISMOTE algorithm

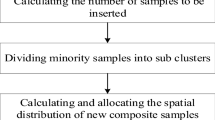

The flowchart of the ISMOTE algorithm is shown in Fig. 3. According to the flowchart, the steps of the ISMOTE algorithm are as follows.

1) Determine the number of minority class samples to be synthesized, denoted as nsamples.

2) For each selected minority class sample Xq (a 1×N-dimensional vector, where N is the number of features), calculate its Euclidean distance to all other minority class samples. Select the k nearest neighbors of Xq, with k set to 5 by default.

3) From the k nearest neighbor samples, randomly select a sample Xj (also a 1×N-dimensional vector). Generate a base sample Xnew1 randomly between Xq and Xj according to (2). Then, generate the new sample Xnew by adjusting the position based on (3). If the number of new samples reaches the required amount, the algorithm terminates. Otherwise, repeat from step 2.

As shown in Fig. 4, the ISMOTE algorithm first randomly selects a sample Xq from the minority class samples as the seed sample. Using the k-nearest neighbors algorithm, the five minority class samples nearest to Xq are identified, and one of them is randomly selected and denoted as Xj. A new synthetic minority class sample Xnew is then generated using (2) and (3) proposed in this study. Compared to the SMOTE algorithm, the ISMOTE algorithm not only retains the region between the two original samples but also extends generation to the space surrounding each individual sample. This makes the overall distribution of the new samples more consistent with the original sample distribution pattern, improves data quality, and helps improve model performance.

Fig. 3. The flowchart of the ISMOTE algorithm.

Fig. 4. Schematic diagram of the ISMOTE algorithm’s synthetic sample generation process.

The expansion of the generation space for new samples helps alleviate the problem of high sample density to some extent. The balanced dataset can be used for model training, enabling the model to better learn the characteristics of minority class samples and improve classification performance. The ISMOTE algorithm can also be applied to multi-class classification tasks by adjusting the proportion of synthetic samples. The pseudocode is as follows.

The ISMOTE algorithm.
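The three ISMOTE steps listed earlier can be sketched in Python as follows. This is an illustrative reading, not the authors' released implementation. The function name `ismote_sample` is hypothetical, and two points are assumptions because the text does not pin them down: the base sample is treated as "far" from the seed when it is closer to Xj than to Xq, and the scalar random quantity is added to or subtracted from every feature of the base sample.

```python
import numpy as np

def ismote_sample(X_min, n_samples, k=5, rng=None):
    """Sketch of ISMOTE sample generation under the assumptions stated above.

    X_min: array of minority class samples, shape (m, N).
    Returns an array of n_samples synthetic samples, shape (n_samples, N).
    """
    rng = np.random.default_rng(rng)
    X_min = np.asarray(X_min, dtype=float)
    m, n_features = X_min.shape
    if m < 2:
        raise ValueError("at least two minority samples are required")
    k = min(k, m - 1)

    # Step 2: Euclidean distances and k nearest minority neighbors.
    dist = np.linalg.norm(X_min[:, None, :] - X_min[None, :, :], axis=2)
    np.fill_diagonal(dist, np.inf)
    neighbors = np.argsort(dist, axis=1)[:, :k]

    synthetic = np.empty((n_samples, n_features))
    for i in range(n_samples):
        q = rng.integers(m)                    # seed sample Xq
        j = neighbors[q, rng.integers(k)]      # random neighbor Xj
        alpha, beta = rng.random(2)            # both drawn from [0, 1)

        # Base sample Xnew1 by linear interpolation, as in (2).
        base = X_min[q] + alpha * (X_min[j] - X_min[q])
        # Random quantity: distance between Xq and Xj times a random number.
        r = beta * dist[q, j]

        # ASSUMPTION: "far from Xq" means closer to Xj than to Xq.
        far_from_seed = (np.linalg.norm(base - X_min[q])
                         > np.linalg.norm(base - X_min[j]))
        # ASSUMPTION: the scalar r is applied to every feature.
        synthetic[i] = base - r if far_from_seed else base + r
    return synthetic
```

Unlike plain interpolation, the adjusted samples are not restricted to the segment between Xq and Xj, which matches the expanded generation space described in this section. For a multi-class dataset, the same routine could be called once per minority class with a class-specific `n_samples`.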

Experiments and analysis

This section introduces the datasets and classifiers used in the research, as well as the metrics used to evaluate the experimental results. It details the experimental design, demonstrates and analyzes the visualization effects of datasets processed by seven oversampling algorithms, and compares and analyzes the results of three classifiers. The code for the ISMOTE algorithm and the dataset used in the experiment are available at https://github.com/Sunshine6828. 6/Improved-SMOTE-algorithm

Experimental datasets

Based on studies20,48,52 and the requirements of this research, we selected thirteen classification datasets from three widely-used public databases for our experiments: the KEEL database, the UCI database46, and the Kaggle competition platform. These databases serve as fundamental resources in data science and machine learning, providing extensive datasets for algorithm testing and validation across various domains and applications. The UCI database holds significant influence in machine learning research, while the KEEL database specializes in data mining and machine learning applications. Datasets from Kaggle competitions are particularly valued for their exceptional data quality and strong relevance to real-world business scenarios. The IR values of the thirteen selected datasets range from 1.25 to 29.17. Detailed information about the datasets is provided in Table 1. The features in the datasets are numerical. For non-numeric data, preprocessing is required to convert it into a numeric form before applying the ISMOTE algorithm. Techniques such as label encoding and one-hot encoding can convert non-numeric data into numeric form. Some multi-class datasets can be converted into binary classification datasets, resulting in multiple binary datasets with the same sample size but different minority class sizes and IR values. All datasets are processed as binary classification datasets. The method for calculating IR is given in (4).

$$IR = \frac{N_{maj}}{N_{min}} \qquad (4)$$

Here, Nmaj represents the number of majority class samples, and Nmin represents the number of minority class samples.

Evaluation metrics

A confusion matrix provides an intuitive evaluation of a classification model’s performance by summarizing the classification results across different sample categories. In a confusion matrix, rows represent the true classes of samples, and columns represent the predicted classes. This paper focuses on binary classification, where the majority class is labeled as the negative class and the minority class as the positive class. In this matrix, a positive sample correctly predicted as positive is denoted as True Positive (TP); a negative sample incorrectly predicted as positive is False Positive (FP); a positive sample incorrectly predicted as negative is False Negative (FN); and a negative sample correctly predicted as negative is True Negative (TN). Table 2 shows the statistical results derived from the confusion matrix.

Common performance metrics such as precision, recall, F1 score, G-mean, and AUC can be derived from the confusion matrix. Precision measures the accuracy of positive predictions, while recall measures the model’s ability to identify all actual positive samples. The F1 score, the harmonic mean of precision and recall (F1 is the F-measure when β = 1), offers a comprehensive evaluation of the classification performance for minority class samples. Specific calculation methods are provided in (5) to (9).

Although the F1 score assesses individual class performance, it may not reflect the overall performance across both majority and minority classes and is sensitive to IR values. To provide a comprehensive assessment of the classifier’s performance, G-mean is used as an additional evaluation metric. G-mean provides a balanced evaluation of the classification accuracy for both classes. The specific calculation method is shown in (10).
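As a worked illustration of the confusion-matrix metrics discussed above, the following Python sketch computes precision, recall, F1, and G-mean from the four counts. It uses the standard textbook definitions (G-mean taken as the geometric mean of the true positive rate and the true negative rate), which are assumed to match the paper's numbered equations; the function name `confusion_metrics` is hypothetical.

```python
import math

def confusion_metrics(tp, fp, fn, tn):
    """Standard metrics from confusion-matrix counts (minority class = positive)."""
    total = tp + fp + fn + tn
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0        # true positive rate
    specificity = tn / (tn + fp) if (tn + fp) else 0.0   # true negative rate
    f1 = (2 * precision * recall / (precision + recall)
          if (precision + recall) else 0.0)
    g_mean = math.sqrt(recall * specificity)
    accuracy = (tp + tn) / total if total else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall,
            "f1": f1, "g_mean": g_mean}

# A classifier that predicts "majority" for every sample in a 90:10 dataset:
# accuracy is 0.9, yet F1 and G-mean are both 0.
always_majority = confusion_metrics(tp=0, fp=0, fn=10, tn=90)
```

The `always_majority` case shows why accuracy alone is a poor indicator on imbalanced data, while F1 and G-mean expose the complete failure on the minority class.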

AUC (Area Under the Curve) quantifies the area under the ROC (Receiver Operating Characteristic) curve, assessing the classifier’s performance across both minority and majority classes. In cases of imbalanced sample distributions, the AUC provides a reliable measure of classifier performance. Thus, AUC is frequently employed to evaluate classifiers in imbalanced data scenarios. The specific calculation method is shown in (11).
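To make the AUC definition concrete, the sketch below uses the rank-based (Mann-Whitney) formulation: the probability that a randomly chosen positive sample receives a higher score than a randomly chosen negative sample, with ties counted as one half. This is a standard equivalent of the area under the ROC curve and may differ in form from the expression in (11); the function name `auc_from_scores` is hypothetical.

```python
def auc_from_scores(y_true, scores):
    """AUC as the fraction of (positive, negative) pairs ranked correctly."""
    pos = [s for y, s in zip(y_true, scores) if y == 1]
    neg = [s for y, s in zip(y_true, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("both classes must be present")
    # Count a win when the positive outranks the negative, half for a tie.
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

# Three of the four positive/negative pairs are ordered correctly.
example_auc = auc_from_scores([0, 0, 1, 1], [0.1, 0.4, 0.35, 0.8])  # 0.75
```

Because the measure depends only on how positives are ranked relative to negatives, it does not change when the majority class grows, which is why it is commonly used in imbalanced settings.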

Class imbalance can impact a classifier’s predictive ability, yet high prediction accuracy can still be achieved. This happens because the trained classifier is often biased toward the majority class, which greatly outnumbers the minority class samples. Consequently, when the dataset is imbalanced, using accuracy as the performance evaluation metric is not comprehensive enough. The F1 score provides a balanced measure of the model’s accuracy, with a higher F1 value indicating better performance. The G-mean evaluates classification performance across both majority and minority classes. AUC provides a comprehensive evaluation of the classifier’s overall performance. Considering the factors discussed and based on previous research47,48,49, F1, G-mean, and AUC are chosen as evaluation metrics.

Experimental design

Seven representative oversampling algorithms are selected as baselines to validate the effectiveness of the proposed ISMOTE algorithm: ROS, SMOTE, ADASYN, Borderline-SMOTE (BSMOTE), K-means SMOTE (KSMOTE), G-SMOTE (GSMOTE), and SyMProD (SYMPROD). DeepSMOTE is a deep-learning-based variant of SMOTE, whereas this study focuses on machine learning algorithms and uses relatively small datasets; therefore, DeepSMOTE was not included as a comparative algorithm. In the G-SMOTE algorithm, synthetic samples are generated within a geometric region of the input space surrounding each selected minority class instance. In its default configuration (with parameter n_dim = 2), this region is defined as a hypersphere, while G-SMOTE also allows its deformation into a hyper-spheroid (with parameter n_dim = 3). Consequently, two variants of the method were experimentally evaluated, GSMOTE(n = 2) and GSMOTE(n = 3), giving eight baseline configurations in total.

The classifiers are trained using training sets processed by these benchmark sampling algorithms and by the ISMOTE algorithm. The performance of the classifiers indirectly reflects the performance of the sampling algorithms. ROS is the simplest oversampling algorithm, balancing categories by randomly duplicating minority class samples. SMOTE is the most influential oversampling algorithm, serving as the foundation for various improvements. ADASYN generates more synthetic samples around hard-to-classify samples of the minority class, directing the classifier’s focus toward these challenging samples. Borderline-SMOTE distinguishes noisy, safe, and boundary samples and oversamples only the boundary samples. K-means SMOTE interpolates in sparse areas of the minority class to avoid generating noisy data. G-SMOTE generalizes SMOTE’s linear interpolation by constructing a geometric region (hyper-sphere or hyper-spheroid) around each minority instance. Apart from ROS, these algorithms are typical extensions of the SMOTE algorithm, designed to select minority samples from different regions to generate new samples. SYMPROD is the most recent of the baselines; it is used here via the smote-variants library and synthesizes minority class samples based on probability distributions.

Previous research on sampling algorithms16,20,49,51 has employed three classifiers in experiments, and this study does the same. Classification and Regression Tree (CART)50, K-Nearest Neighbors (KNN)51,52, and Random Forest (RF)53 are algorithms with good classification performance, so these three are selected for the classification experiments. This study first visualizes the sampling results of the oversampling algorithms and compares the distributions of the generated data. Next, classification experiments are performed on the thirteen datasets processed by the oversampling algorithms, using the three selected classifiers. Finally, the classification results are compared and analyzed using three evaluation metrics: F1, G-mean, and AUC.

In the experiments, the majority and minority class samples are first divided into training and test sets at a ratio of 7:3, and the two sets are saved separately. The IR values of the training set and the test set are kept at approximately the same level during the division. The training set is then oversampled so that the ratio of positive to negative samples reaches approximately 1:1. The training set is used to train the classifier models, with synthetic samples participating only during the training phase. All the data in the test phase are real, with no synthetic samples involved, to obtain the most realistic experimental results. The parameters of the classifiers are optimized using grid search on the untreated training set, and the same parameters are applied to classifiers on the same dataset. The classifier parameters used are shown in Table 3. To limit the impact of randomness and to compare objectively how much each algorithm improves the generalization performance of the model, the training set experiment and the test set experiment both use ten-fold cross-validation, repeating the experiment ten times and taking the average as the final result. The final result is reported in the form “mean ± standard deviation”.
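The split-then-oversample protocol described above can be sketched as follows. This is a minimal NumPy illustration under stated assumptions: the helper names `stratified_split` and `random_oversample` are hypothetical, the toy data are randomly generated, and random duplication (ROS) stands in for whichever oversampling algorithm is being evaluated.

```python
import numpy as np

def stratified_split(y, test_ratio=0.3, rng=None):
    """Split indices per class so train and test keep roughly the same IR."""
    rng = np.random.default_rng(rng)
    y = np.asarray(y)
    train_idx, test_idx = [], []
    for label in np.unique(y):
        idx = rng.permutation(np.flatnonzero(y == label))
        n_test = int(round(test_ratio * len(idx)))
        test_idx.extend(idx[:n_test].tolist())
        train_idx.extend(idx[n_test:].tolist())
    return np.array(train_idx), np.array(test_idx)

def random_oversample(X, y, minority=1, rng=None):
    """Stand-in oversampler: duplicate minority samples until classes are 1:1."""
    rng = np.random.default_rng(rng)
    X, y = np.asarray(X), np.asarray(y)
    min_idx = np.flatnonzero(y == minority)
    n_extra = max(0, int((y != minority).sum()) - len(min_idx))
    extra = rng.choice(min_idx, size=n_extra, replace=True)
    return np.vstack([X, X[extra]]), np.concatenate([y, y[extra]])

# Toy dataset with IR = 9 (90 majority, 10 minority samples).
data_rng = np.random.default_rng(0)
X = data_rng.normal(size=(100, 2))
y = np.array([0] * 90 + [1] * 10)

train, test = stratified_split(y, test_ratio=0.3, rng=0)
# Only the training portion is oversampled; the test set stays untouched.
X_train, y_train = random_oversample(X[train], y[train], minority=1, rng=0)
X_test, y_test = X[test], y[test]
```

Oversampling after the split, rather than before it, keeps copies or derivatives of test samples out of the training data, so the test metrics are computed on real samples only.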

The environment for all experiments is as follows:

Hardware: 11th Gen Intel(R) Core(TM) i5-1155G7 (4 cores and 8 threads, clocked at 2.5 GHz), 16GB memory.

Software: The experimental platform uses a 64-bit Windows 11 operating system with PyCharm Community Edition 2023.3.4 (https://www.jetbrains.com/pycharm/down-load/) as the IDE. Python is adopted as the development language in this experiment.

Results and analysis

Comparative analysis of visualization results of oversampling algorithms

The data distribution can be effectively compared by visualizing the datasets processed by each oversampling algorithm. The ecoli2 dataset is selected to demonstrate the distribution of positive and negative samples. Its IR is 5.46, and the positive and negative samples overlap. To provide a clear and intuitive representation of the sample distribution, features with low overlap rates were selected for visualization. A 2D data distribution plot with two features (Fig. 5) and a 3D data distribution plot with three features (Fig. 6) are used for display and comparison. In both figures, blue points represent majority class samples and red points represent minority class samples. The subplots of Figs. 5 and 6 correspond to ROS, SMOTE, ADASYN, BSMOTE, KSMOTE, GSMOTE(n = 2), GSMOTE(n = 3), SYMPROD, and ISMOTE, respectively.

Since ROS achieves data balance by randomly replicating minority class samples, the distribution of minority class samples in the ROS subgraphs of Figs. 5 and 6 is consistent with the original minority class sample distribution, as shown by the distribution of red sample points in the figures. The SMOTE subplot shows that the newly generated red sample points are distributed within the original distribution space of red sample points, with most of them clustered together. This indicates that the new samples generated by SMOTE through linear interpolation are limited to the original sample distribution space and are relatively concentrated. Additionally, the algorithm generates a large number of new minority class samples in the blank area between the two clusters of red sample points, which may create excessive noise. The ADASYN subgraph shows that the newly generated red sample points are biased towards the boundary area. This algorithm generates a large number of new samples in boundary areas where there are fewer minority class samples, which may produce more noisy samples. The BSMOTE, GSMOTE(n = 2), GSMOTE(n = 3), and KSMOTE subgraphs show that these algorithms generate a large number of new red sample points in areas with fewer minority class samples. These points mix with the blue sample points, increasing the complexity of the overlapping regions. Notably, in the 3D data distribution scatter plots, the G-SMOTE algorithm generated a significant number of minority class samples in sparse regions. This approach, while effective in expanding minority class representation, may introduce new noisy samples. Additionally, these algorithms may result in inconsistencies between the sparsity distributions of the newly generated and original minority class samples. The SYMPROD subgraph shows that all generated samples are densely concentrated in one area, greatly increasing the density of the original samples.
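The linear interpolation that confines SMOTE samples to the segments between existing minority points can be illustrated with a short sketch. It follows the standard SMOTE rule x_new = x + λ(x_nn − x) with λ drawn uniformly from [0, 1); the toy points, the value k = 2, and the helper names are assumptions made for illustration only.

```python
import math
import random


def k_nearest(x, points, k):
    """Return the k points closest to x (Euclidean), excluding x itself."""
    others = [p for p in points if p is not x]
    return sorted(others, key=lambda p: math.dist(x, p))[:k]


def smote_sample(x, neighbor, rng):
    """One SMOTE-style point: x + lam * (neighbor - x) with lam in [0, 1)."""
    lam = rng.random()
    return tuple(a + lam * (b - a) for a, b in zip(x, neighbor))


rng = random.Random(42)
# Two small, well-separated clusters of hypothetical minority points.
minority = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0), (5.0, 5.0), (6.0, 5.0)]
synthetic = []
for _ in range(20):
    x = rng.choice(minority)
    neighbor = rng.choice(k_nearest(x, minority, k=2))
    synthetic.append(smote_sample(x, neighbor, rng))
```

Every synthetic point lies on a straight segment between two original points. In this toy layout the second-nearest neighbor of a point in the smaller cluster belongs to the other cluster, so some segments cross the empty gap between clusters, which is the kind of placement the SMOTE subplot discussion attributes to interpolation.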

Comparison of 2D sampling effects using different sampling methods.

Comparison of 3D sampling effects using different sampling methods.

The ISMOTE subgraphs in Figs. 5 and 6 show that the new red sample points generated by ISMOTE are similar to the distribution of the original red sample points, indicating that the data distribution after oversampling is largely consistent with the original data distribution. Comparing regions A, B, and C in Figs. 5 and 6, it is evident that ISMOTE generates more samples in areas with dense original minority class samples and fewer samples in areas with sparser minority class samples. This indicates that, compared to other algorithms, ISMOTE’s red sample point distribution more closely resembles that of the original minority class samples. In comparison to the SMOTE algorithm, ISMOTE does not generate new samples excessively in areas devoid of minority class samples, such as region B in Fig. 5. Additionally, due to the expansion of the new sample generation space, ISMOTE ensures a more reasonable distribution density of newly generated minority class samples, alleviating the problem of excessive sample density to some extent. Since the ISMOTE algorithm generates new minority class samples around the original minority class samples, it may produce minority class noise samples outside the boundary. However, the number of these noise samples is small and has little impact on the overall quality of the dataset.

Comparison and analysis of classifier classification results

In some experimental datasets, KSMOTE fails to effectively execute its core K-Means clustering step due to the excessive dispersion of minority class samples. This is because KSMOTE relies on clustering the minority class samples and generating new samples near the cluster centers. However, when the minority class samples are highly scattered and lack a clear clustering structure, K-Means may fail to form valid clusters, preventing KSMOTE from performing the sample generation process. As a result, KSMOTE is unable to obtain valid evaluation metrics in these datasets, leading to empty results. All performance metrics are derived by training classifiers on training sets processed by the respective oversampling algorithms and then testing the resulting models on the test sets. The best values for each metric are highlighted in bold.

Tables 4, 5, and 6 present the F1, G-mean, and AUC performance results for the CART classifier, respectively. In Table 4, for D5, the model trained on the ISMOTE-processed training set shows a 13.07% improvement in the F1 test performance indicator compared to models trained on sets processed by the seven benchmark algorithms. Similarly, for D2, the F1 test performance indicator of the model trained on the ISMOTE-processed training set is 4.20% higher than that of the models trained on the training sets processed by the seven baseline oversampling algorithms. For D13, the model trained on the ISMOTE-processed training set showed a 2.21% improvement in the F1 test performance indicator. For D1 and D11, models trained on the ISMOTE-processed training sets also outperformed the models trained on the training sets processed by the seven benchmark oversampling algorithms, although by a smaller margin. For datasets D3, D7, and D8, the SYMPROD algorithm demonstrates notably improved performance. For the other datasets, the difference in the F1 test performance indicator between the model trained with the ISMOTE-processed training set and models trained with other algorithms is within an acceptable range.

Table 5 demonstrates that models trained on ISMOTE-processed datasets achieve significantly higher G-mean test values compared to those using the seven baseline algorithms, with improvements of 28.88% for D13, 16.55% for D5, 11.58% for D11, and 3.91% for D2. For D1, D3, D4, D7, D8, and D9, models trained on the training sets processed by the ISMOTE algorithm showed varying degrees of performance improvement in testing compared to the models trained on the training sets processed by the seven benchmark oversampling algorithms. For datasets D6, D10, and D12, the G-SMOTE algorithm demonstrates notably improved performance.

In Table 6, across eight datasets, the AUC test performance indicators for models trained on ISMOTE-processed training sets show varying degrees of improvement compared to models trained on sets processed by the seven baseline algorithms. For D13, the AUC test performance indicator of the model trained on the ISMOTE-processed training set increases by 12.22%. For D5, it increases by 7.94%. For D11, it increases by 4.46%. For D1, D2, D4, and D7, models trained on the ISMOTE-processed training sets showed varying degrees of performance improvement in testing compared to the models trained on the training sets processed by the seven benchmark oversampling algorithms. The models trained on ISMOTE-processed training sets do not achieve the best performance on D3, D6, D8, D9, D10, and D12.

The results of the F1, G-mean, and AUC performance indicators on the KNN classifier are shown in Tables 7, 8, and 9, respectively. In Table 7, compared to the models trained on training sets processed by the seven baseline algorithms, the F1 test performance indicator for the model trained with the ISMOTE-processed training set improves by 0.43% on dataset D1. For dataset D9, the F1 test performance indicator improves by 0.29%. For D2, D6, D10, and D12, the F1 test performance indicator for models trained on ROS-processed training sets shows improvements compared to models trained on sets processed by the seven baseline algorithms. Although the model trained with the ISMOTE-processed training set does not achieve the best test performance on datasets D5 and D8, the difference is within an acceptable range. For datasets D3, D4, D7, D9, D11, and D13, other algorithms demonstrate notably improved performance.

In Table 8, compared to models trained on training sets processed by the seven benchmark algorithms, the G-mean of the test performance indicator for the model trained with the ISMOTE-processed training set improves by 2.71% on D10. For D9, the G-mean of the test performance indicator for the model trained with the ISMOTE-processed training set improves by 2.25%. For datasets D1, D4, D5, D6, D7, and D8, the ISMOTE algorithm demonstrates notably improved performance. However, for D12 and D13, the G-mean of the test performance indicator for the model trained using the ISMOTE-processed training set shows a larger gap compared to models trained using other algorithms. For D2, D3, and D11, the difference in the G-mean of the test performance indicator for the model trained with the ISMOTE-processed training set is within an acceptable range compared to models trained on other algorithms.

Table 9 demonstrates that models trained on ISMOTE-processed datasets achieve significantly higher AUC test values compared to those using the seven baseline algorithms, with improvements of 1.79% for D9 and 1.72% for D10. For datasets D1, D2, D5, D6, D7, and D8, the ISMOTE algorithm demonstrates notably improved performance. For D3, D4, D11, and D12, the difference in the AUC test performance indicator between the model trained with the ISMOTE-processed training set and models trained with other algorithms is within an acceptable range. However, for D13, the AUC test performance indicator for the model trained using the ISMOTE-processed training set shows a larger gap compared to models trained using other algorithms.

The F1, G-mean, and AUC performance indicators of the RF classifier are shown in Tables 10, 11, and 12, respectively. In Table 10, compared to models trained on training sets processed by the seven baseline algorithms, the F1 test performance indicator for models trained with the ISMOTE-processed training set shows varying degrees of improvement across five datasets. For D3, D5, D6, D7, D9, and D13, the difference in the F1 test performance indicator between the model trained with the ISMOTE-processed training set and models trained with other algorithms is within an acceptable range. However, for D2 and D10, the F1 test performance indicator for the model trained using the ISMOTE-processed training set shows a larger gap compared to models trained using other algorithms.

Table 11 demonstrates that models trained on ISMOTE-processed datasets achieve significantly higher G-mean test values compared to those using the seven baseline algorithms, with improvements of 7.48% for D8, 16.55% for D11, and 11.10% for D12. For D1, D3, D4, D5, D6, D7, D9, D10, and D13, the G-mean value of the model test performance indicator trained with the ISMOTE processed training set shows significant performance improvements. However, for D2, the G-mean of the test performance indicator for the model trained using the ISMOTE-processed training set shows a larger gap compared to models trained using other algorithms.

In Table 12, compared to models trained on training sets processed by the seven baseline algorithms, the AUC value of the test performance indicator for models trained with the ISMOTE-processed training set shows varying degrees of improvement across twelve datasets. For D11, the AUC value of the test performance indicator for the model trained with the ISMOTE-processed training set increases by 11.10%. For D1, D3, D4, D5, D6, D7, D8, D9, D10, D12, and D13, the AUC value of the model test performance indicator trained with the ISMOTE processed training set shows significant performance improvements. However, for D2, the AUC of the test performance indicator for the model trained using the ISMOTE-processed training set shows a larger gap compared to models trained using other algorithms.

Comparison of F1 values for test performance of three classifiers trained on training sets processed by various sampling algorithms.

Comparison of G-mean values for test performance of three classifiers trained on training sets processed by various sampling algorithms.

Figures 7, 8, and 9 are line graphs depicting the evaluation metrics of the CART, KNN, and RF classifiers, providing a more intuitive comparison of each classifier’s performance. Compared to the other seven sampling algorithms, the ISMOTE algorithm proposed in this study enables the classifiers to achieve higher F1 scores, G-mean values, and AUC values on most datasets. Therefore, the ISMOTE algorithm, compared to the other seven mainstream oversampling algorithms, improves classifier performance to some extent by mitigating overfitting. The overlap and sparsity of samples in a dataset can significantly affect the performance of oversampling algorithms, leading to varying results across different datasets when using the ISMOTE algorithm. However, because ISMOTE expands the sample generation space compared to other algorithms, the newly generated samples exhibit more diverse feature representations. As a result, ISMOTE shows improved performance compared to other algorithms.

Comparison of AUC values for test performance of three classifiers trained on training sets processed by various sampling algorithms.

The time complexity of the SMOTE algorithm is calculated separately for the nearest neighbor search and for sample generation. For N minority class samples, the k-nearest neighbors search over Euclidean distances has a time complexity of O(d·N log N), where d is the feature dimension. When generating M new samples, each interpolation requires O(d) time, resulting in a total complexity of O(d·M). Therefore, the overall time complexity of the SMOTE algorithm is O(d·N log N) + O(d·M) ≈ O(d·N log N).

The time complexity of the ISMOTE algorithm is also calculated separately for the nearest neighbor search and for sample generation. For N minority class samples, the k-nearest neighbors search over Euclidean distances has a time complexity of O(d·N log N), where d is the feature dimension. When generating M new samples, each interpolation requires O(d) time, resulting in a total complexity of O(d·M). Additionally, the distance assessment and perturbation adjustments in ISMOTE require O(d) time per new sample, adding a further O(d·M). This additional perturbation calculation does not increase the order of the overall complexity. Therefore, the time complexity of the ISMOTE algorithm remains O(d·N log N) + O(d·M) ≈ O(d·N log N), the same as that of SMOTE.
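The comparison of the two cost estimates can be written out term by term. The block below only restates the quantities given in the text (N minority samples, M synthetic samples, feature dimension d). The condition under which the generation term can be dropped is an added assumption, stated explicitly in the last line.

```latex
\begin{aligned}
T_{\mathrm{SMOTE}}  &= O(d\,N\log N) + O(d\,M),\\
T_{\mathrm{ISMOTE}} &= O(d\,N\log N)
                      + \underbrace{O(d\,M)}_{\text{interpolation}}
                      + \underbrace{O(d\,M)}_{\text{perturbation}}
                     = O(d\,N\log N) + O(d\,M),\\
T_{\mathrm{SMOTE}},\ T_{\mathrm{ISMOTE}} &\approx O(d\,N\log N)
  \quad\text{assuming } M = O(N\log N).
\end{aligned}
```

The perturbation step doubles the constant in front of the d·M term but leaves its order unchanged, which is consistent with ISMOTE having the same asymptotic complexity as SMOTE while still taking somewhat longer to run in practice.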

Table 13 compares the runtime of seven algorithms across thirteen datasets under identical experimental conditions. The results are the average of ten experiments. For datasets where the KSMOTE algorithm could not be applied, the runtime is left blank. For dataset D7, the runtime of ISMOTE is second only to that of ROS. For dataset D9, the runtime of ISMOTE is second only to that of GSMOTE. For datasets D10, D11, and D13, ISMOTE is slower only than SMOTE and GSMOTE. For datasets D1, D2, D4, D5, D8, and D12, ISMOTE ranks third in runtime, behind only ROS and GSMOTE. For datasets D3 and D6, ISMOTE ranks fourth in runtime, behind only ROS, GSMOTE, and SMOTE. Therefore, the ISMOTE algorithm improves classification performance without significantly affecting runtime.

Non-parametric statistical tests

This study utilizes Friedman’s test and Holm’s test to evaluate the statistical significance of the average rankings between ISMOTE and the baseline algorithms. The average ranking measures how well an algorithm performs relative to its competitors. Rankings are determined by assigning positions to each algorithm based on how much it contributes to the classifier’s performance improvement. The sampling algorithm that enables the classifier to achieve the best performance on a specific dataset is assigned rank 1, the algorithm with the second-best performance receives rank 2, and so on. This process is repeated for each performance metric across all datasets, after which the average ranking is computed. Finally, the statistical significance of the ISMOTE algorithm is validated using Holm’s test.
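The ranking procedure described above can be sketched as follows. The score table is hypothetical, and the statistic is the standard Friedman chi-square without tie correction, 12N/(k(k+1)) · (ΣR_j² − k(k+1)²/4), where R_j is the average rank of algorithm j over N datasets and k algorithms. Tied scores share the mean of the ranks they span, which is a common convention and an assumption here.

```python
def rank_row(scores):
    """Rank one dataset's scores: highest gets rank 1, ties share the mean rank."""
    order = sorted(range(len(scores)), key=lambda j: -scores[j])
    ranks = [0.0] * len(scores)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and scores[order[j + 1]] == scores[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1       # average of 1-based positions i..j
        for pos in range(i, j + 1):
            ranks[order[pos]] = mean_rank
        i = j + 1
    return ranks


def friedman(score_table):
    """Return (average ranks, Friedman chi-square) for N datasets x k algorithms."""
    n, k = len(score_table), len(score_table[0])
    rank_rows = [rank_row(row) for row in score_table]
    avg = [sum(r[j] for r in rank_rows) / n for j in range(k)]
    chi2 = 12 * n / (k * (k + 1)) * (sum(a * a for a in avg) - k * (k + 1) ** 2 / 4)
    return avg, chi2


# Hypothetical scores (rows: datasets, columns: algorithms A, B, C).
scores = [
    [0.90, 0.85, 0.80],
    [0.70, 0.75, 0.60],
    [0.88, 0.80, 0.80],
    [0.95, 0.90, 0.85],
]
avg_ranks, chi2 = friedman(scores)
```

A lower average rank means the algorithm more often gave the classifier the best score. The chi-square value is then compared against the chi-square distribution with k − 1 degrees of freedom.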

Figure 10 presents the ranking statistics of different classifiers based on Friedman’s test. Specifically, for the CART classifier, ISMOTE ranks first in F1, G-mean, and AUC. For the KNN classifier, ISMOTE continues to rank first in G-mean and AUC. For the RF classifier, ISMOTE ranks first in G-mean and AUC, indicating its overall best performance on the RF classifier. These ranking results demonstrate that ISMOTE shows the most significant improvement in G-mean and AUC, while remaining competitive in F1. Although it does not achieve the highest rank in F1 for some classifiers, its performance remains within a reasonable range.

All experimental results were analyzed using Friedman’s test for ranking, followed by Holm’s test to assess whether ISMOTE exhibits significant advantages over other methods. Because KSMOTE results are missing on certain datasets, KSMOTE is excluded from the non-parametric statistical tests. The statistical analysis results are summarized in Tables 14, 15, and 16. At a significance level of α = 0.05, all pairwise comparisons reject the null hypothesis (which assumes no significant differences among the compared methods), indicating that ISMOTE is statistically superior to other oversampling methods in most datasets. Holm’s test results show that the p-values for all comparisons are less than 0.05, confirming that ISMOTE’s improvements are statistically significant.

Friedman’s test first analyzes the average rankings of different oversampling methods across multiple datasets, revealing that ISMOTE ranks higher. Holm’s post-hoc test further computes the pairwise comparison p-values between ISMOTE and other methods, finding that p < 0.05, which confirms that ISMOTE’s improvements are statistically significant. Since Holm’s test p-values across all datasets are below 0.05, it can be concluded that ISMOTE’s improvements in multiple datasets are statistically significant.
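For readers unfamiliar with Holm's post-hoc procedure, the following minimal sketch shows the standard step-down rule: the unadjusted p-values are sorted in ascending order and the i-th smallest is compared with α/(m − i + 1), stopping at the first comparison that fails. The p-values below are hypothetical and are not taken from Tables 14 to 16.

```python
def holm(p_values, alpha=0.05):
    """Holm step-down: return a reject flag per hypothesis, in input order."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    reject = [False] * m
    for step, idx in enumerate(order):
        threshold = alpha / (m - step)    # alpha/m, alpha/(m-1), ..., alpha
        if p_values[idx] > threshold:
            break                         # this and all larger p-values are retained
        reject[idx] = True
    return reject


# Hypothetical unadjusted p-values for one method against three baselines.
p_values = [0.040, 0.004, 0.030]
decisions = holm(p_values)
```

In this toy example only the smallest p-value is rejected: 0.004 is below 0.05/3, but 0.030 exceeds 0.05/2, so the procedure stops. The comparison threshold therefore depends on the position of each p-value in the sorted list, not only on α itself.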

Rankings obtained through Friedman’s test.

Notably, in the RF classifier, ISMOTE achieved the highest ranking in F1, G-mean, and AUC, significantly outperforming SMOTE, ADASYN, and other baseline methods in statistical analysis. In conclusion, ISMOTE demonstrates statistical significance in various experimental settings, with substantial improvements in F1 and AUC compared to baseline methods, while maintaining strong competitiveness in G-mean. These statistical analyses further enhance the reliability of the experimental results, confirming the effectiveness of ISMOTE in imbalanced data classification tasks.

Conclusion

This research proposes an improved SMOTE algorithm to address the limitations of the original SMOTE algorithm. The new algorithm, ISMOTE, enhances SMOTE by modifying the generation space position of new samples. When the generation position of a new sample biases towards the k-nearest neighbors or the original sample, a random quantity is subtracted or added to ensure that the new sample is generated around the k-nearest neighbors or the original sample. ISMOTE generates new samples randomly between two samples or around single samples, thereby increasing the space for new sample generation and aligning the sample distribution more closely with the original sample distribution pattern. By expanding the generation space of new samples, ISMOTE effectively mitigates the problem of excessively high density in newly generated samples. Experimental results and visualizations demonstrate that ISMOTE effectively overcomes the problems of new sample distributions not conforming to the original sample distribution pattern and excessively high density of newly generated samples. It improves the classification accuracy of imbalanced datasets and enhances the performance of classification models. By calculating the proportion of oversampled data required for each minority class in multi-class problems and adjusting the parameters of the oversampling algorithm, the goal of balancing multiple classes can be achieved. Therefore, ISMOTE has good universality.
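The multi-class balancing step mentioned above can be illustrated with a short sketch. It assumes that every smaller class is oversampled up to the size of the largest class, which is one common choice and not necessarily the setting used in this study. The function name and the label vector are hypothetical.

```python
from collections import Counter


def synthetic_counts(labels):
    """Number of synthetic samples each class needs to match the largest class."""
    counts = Counter(labels)
    target = max(counts.values())
    return {cls: target - n for cls, n in counts.items() if n < target}


# Hypothetical three-class label vector: 100 / 40 / 10 samples.
labels = ["a"] * 100 + ["b"] * 40 + ["c"] * 10
needed = synthetic_counts(labels)
```

Each entry of `needed` could then be passed to a binary oversampler as the number of samples to generate for that class, treating the class in question as the minority.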

The ISMOTE algorithm still has some limitations. First, regarding computational efficiency, although it maintains the same time complexity as SMOTE, the perturbation mechanism increases runtime, requiring a trade-off between efficiency and performance when processing large-scale datasets. Second, in terms of parameter sensitivity, the random perturbation coefficients (α, β) require empirical tuning, and improper settings may affect the quality of generated samples. Finally, for high-dimensional data, it is recommended to use feature selection methods in conjunction with ISMOTE to enhance the effectiveness of Euclidean distance measurement.

The ISMOTE algorithm can be applied to imbalanced datasets across various fields, including medical diagnosis, customer churn in telecommunications, financial fraud detection, software defect prediction, and hardware fault detection. However, the ISMOTE algorithm has a higher computational cost than the SMOTE algorithm due to the intricacy of the new sample positioning conditions. Additionally, the imbalance rate of the experimental datasets in this study is at a medium-low level. The next step will focus on datasets with a high class imbalance rate. In the future, ISMOTE could be integrated with undersampling techniques to remove noise after oversampling, potentially enhancing dataset quality and further improving algorithm performance.

Data availability

Basic data supporting the results can be obtained through the website. The thirteen classification datasets are selected from the public KEEL database (https://sci2s.ugr.es/keel/datasets.php), the public UCI database (https://archive.ics.uci.edu/datasets), and the Kaggle datasets (https://www.kaggle.com/datasets). The code of the ISMOTE algorithm and the datasets used in the experiments can be found at https://github.com/Sunshine68286/Improved-SMOTE-algorithm.

References

Karamti, H. et al. Improving prediction of cervical cancer using Knn imputed Smote features and multi-model ensemble learning approach. Cancers 15 (17), 4412 (2023).

Fotouhi, S., Asadi, S. & Kattan, M. W. A comprehensive data level analysis for cancer diagnosis on imbalanced data. Biomed. Inf. 90, 103089 (2019).

Ileberi, E., Sun, Y. & Wang, Z. Performance evaluation of machine learning methods for credit card fraud detection using SMOTE and adaboost. IEEE Access. 9, 165286–165294 (2021).

Abd El-Naby, A., Hemdan, E. E. D. & El-Sayed, A. An efficient fraud detection framework with credit card imbalanced data in financial services. Multimed Tools Appl. 82 (3), 4139–4160 (2023).

Ranjan, R. P. & Kumar, N. N. Software bug severity and priority prediction using SMOTE and intuitionistic fuzzy similarity measure. Appl. Soft Comput. 150, 111048 (2024).

Feng, S. et al. Improving the undersampling technique by optimizing the termination condition for software defect prediction. Expert Syst. Appl. 235, 121084 (2024).

Liu, D. et al. Feature-level SMOTE: augmenting fault samples in learnable feature space for imbalanced fault diagnosis of gas turbines. Expert Syst. Appl. 238, 122023 (2024).

Wei, J. et al. Novel extended NI-MWMOTE-based fault diagnosis method for data-limited and noise-imbalanced scenarios. Expert Syst. Appl. 238, 121799 (2024).

Thabtah, F., Hammoud, S., Kamalov, F. & Gonsalves, A. Data imbalance in classification: experimental evaluation. Inf. Sci. 513, 429–441 (2020).

Rekha, G., Tyagi, A. K., Sreenath, N. & Mishra, S. Class Imbalanced Data: Open Issues and Future Research Directions. In 2021 International Conference on Computer Communication and Informatics (ICCCI), Coimbatore, India, 1–6. (2021).

Guo, H. et al. Learning from class-imbalanced data: review of methods and applications. Expert Syst. Appl. 73, 220–239 (2017).

Yang, K. et al. Incremental weighted ensemble broad learning system for imbalanced data. IEEE Trans. Knowl. Data Eng. 34 (12), 5809–5824 (2022).

Wang, X., Li, L. & Lin, H. A review of SMOTE class algorithm research. Comput. Sci. 18 (5), 1135–1159 (2024).

de Morais, R. F. & Vasconcelos, G. C. Boosting the performance of over-sampling algorithms through under-sampling the minority class. Neurocomputing 343, 3–18 (2019).

Dai, Q., Liu, J. & Liu, Y. Multi-granularity relabeled under-sampling algorithm for imbalanced data. Appl. Soft Comput. J. 124, 109083 (2022).

Tao, X. et al. SVDD boundary and DPC clustering technique-based oversampling approach for handling imbalanced and overlapped data. Knowl. Based Syst. 234, 107588 (2021).

Sonoda, R. Fair oversampling technique using heterogeneous clusters. Inf. Sci. 640, 119059 (2023).

Sáez, J. A., Luengo, J., Stefanowski, J. & Herrera, F. SMOTE–IPF: addressing the noisy and borderline examples problem in imbalanced classification by a re-sampling method with filtering. Inf. Sci. 291, 184–203 (2015).

Li, J. et al. SMOTE-NaN-DE: addressing the noisy and borderline examples problem in imbalanced classification by natural neighbors and differential evolution. Knowl. Based Syst. 223, 107056 (2021).

Zhang, A. et al. SMOTE-RkNN: A hybrid re-sampling method based on SMOTE and reverse k-nearest neighbors. Inf. Sci. 595, 70–88 (2022).

Yu, H., Sun, C., Yang, X., Zheng, S. & Zou, H. Fuzzy support vector machine with relative density information for classifying imbalanced data. IEEE Trans. Fuzzy Syst. 27 (12), 2353–2367 (2019).

Khan, S. H., Hayat, M., Bennamoun, M., Sohel, F. A. & Togneri, R. Cost-Sensitive learning of deep feature representations from imbalanced data. IEEE Trans. Neural Networks Learn. Syst. 29 (8), 3573–3587 (2018).

Esposito, C., Landrum, G. A., Schneider, N., Stiefl, N. & Riniker, S. GHOST: adjusting the decision threshold to handle imbalanced data in machine learning. J. Chem. Inf. Model. 61 (6), 2623–2640 (2021).

Fernando, K. R. M. & Tsokos, C. P. Dynamically weighted balanced loss: class imbalanced learning and confidence calibration of deep neural networks. IEEE Trans. Neural Networks Learn. Syst. 33 (7), 2940–2951 (2022).

Dongdong, L. et al. Entropy-based hybrid sampling ensemble learning for imbalanced data. Int. J. Intell. Syst. 36 (7), 3039–3067 (2021).

Wang, Y. An ensemble learning imbalanced data classification method based on sample combination optimization. J. Phys. Conf. Ser. 1284 (1), 012135 (2019).

Zhong, X. & Wang, N. Ensemble learning method based on CNN for class imbalanced data. J. Supercomputing. 80 (7), 10090–10121 (2024).

Buda, M., Maki, A. & Mazurowski, M. A. A systematic study of the class imbalance problem in convolutional neural networks. Neural Netw. 106, 249–259 (2018).

Elreedy, D. & Atiya, A. F. A comprehensive analysis of synthetic minority oversampling technique (SMOTE) for handling class imbalance. Inf. Sci. 505, 32–64 (2019).

Pradipta, G. A., Wardoyo, R., Musdholifah, A., Sanjaya, I. N. H. & Ismail, M. SMOTE for handling imbalanced data problem: a review. In 2021 Sixth International Conference on Informatics and Computing (ICIC), Jakarta, Indonesia, 1–8. (2021).

Dablain, D., Krawczyk, B. & Chawla, N. V. DeepSMOTE: fusing deep learning and SMOTE for imbalanced data. IEEE Trans. Neural Networks Learn. Syst. 34 (9), 6390–6404 (2023).

Kaur, P. & Gosain, A. Comparing the Behavior of Oversampling and Undersampling Approach of Class Imbalance Learning by Combining Class Imbalance Problem with Noise. ICT Based Innovations: Proceedings of CSI 2015. Springer Singapore, 23–30. (2018).

Mohammed, R., Rawashdeh, J. & Abdullah, M. Machine Learning with Oversampling and Undersampling Techniques: Overview Study and Experimental Results. In the 11th International Conference on Information and Communication Systems (ICICS), Irbid, Jordan, 243–248. (2020).

Batista, G. E., Prati, R. C. & Monard, M. C. A study of the behavior of several methods for balancing machine learning training data. ACM SIGKDD Explorations Newsl. 6 (1), 20–29 (2004).

Chawla, N. V., Bowyer, K. W., Hall, L. O. & Kegelmeyer, W. P. SMOTE: synthetic minority Over-sampling technique. J. Artif. Intell. Res. 16, 321–357 (2002).

He, H., Bai, Y., Garcia, E. A. & Li, S. ADASYN: Adaptive synthetic sampling approach for imbalanced learning. In 2008 IEEE international joint conference on neural networks (IEEE world congress on computational intelligence), 1322–1328. (2008).

Han, H., Wang, W. Y. & Mao, B. H. Borderline-SMOTE: A new over-sampling method in imbalanced data sets learning. In International conference on intelligent computing, Berlin, Heidelberg: Springer Berlin Heidelberg, 3644(5):878–887. (2005).

Douzas, G., Bacao, F. & Last, F. Improving imbalanced learning through a heuristic oversampling method based on k-means and SMOTE. Inf. Sci. 46 (5), 1–20 (2018).

Douzas, G. & Bacao, F. Geometric SMOTE: a geometrically enhanced drop-in replacement for SMOTE. Inf. Sci. 501, 118–135 (2019).

Kunakorntum, I., Hinthong, W. & Phunchongharn, P. A synthetic minority based on probabilistic distribution (SyMProD) oversampling for imbalanced datasets. IEEE Access. 8, 114692–114704 (2020).

Wang, A. X. et al. Addressing imbalance in health data: synthetic minority oversampling using deep learning. Comput. Biol. Med. 188, 109830 (2025).

Du, W. et al. Secure Privacy-Preserving SMOTE for Vertical Federated Learning. In International Conference on Advanced Data Mining and Applications, Singapore: Springer Nature Singapore, 301–315. (2024).

Suguna, R. et al. Mitigating class imbalance in churn prediction with ensemble methods and SMOTE. Sci. Rep. 15 (1), 1–20 (2025).

Huang, J. et al. Deciphering decision-making mechanisms for the susceptibility of different slope geohazards: A case study on a SMOTE-RF-SHAP hybrid model. J. Rock Mech. Geotech. Eng. 17 (3), 1612–1630 (2025).

Imani, M., Beikmohammadi, A. & Arabnia, H. R. Comprehensive analysis of random forest and XGBoost performance with SMOTE, ADASYN, and GNUS under varying imbalance levels. Technologies 13 (3), 88 (2025).

Alcalá-Fdez, J. et al. KEEL data-mining software tool: data set repository, integration of algorithms and experimental analysis framework. J. Mult Valued Log. Soft Comput. 17, 255–287 (2015).

Swana, E. F., Doorsamy, W. & Bokoro, P. Tomek link and SMOTE approaches for machine fault classification with an imbalanced dataset. Sensors 22 (9), 3246 (2022).

Cheng, K. et al. Grouped SMOTE with noise filtering mechanism for classifying imbalanced data. IEEE Access. 7, 170668–170681 (2019).

Shaik, A. B. & Srinivasan, S. A brief survey on random forest ensembles in classification model. In International Conference on Innovative Computing and Communications: Proceedings of ICICC, vol. 2, 253–260 (Springer Singapore, 2018).

Chen, B., Xia, S., Chen, Z., Wang, B. & Wang, G. RSMOTE: A self-adaptive robust SMOTE for imbalanced problems with label noise. Inf. Sci. 553, 397–428 (2020).

Maulidevi, N. U. & Surendro, K. SMOTE-LOF for noise identification in imbalanced data classification. J. King Saud Univ. Comput. Inf. Sci. 34 (6), 3413–3423 (2022).

Ramentol, E., Caballero, Y., Bello, R. & Herrera, F. SMOTE-RSB*: a hybrid preprocessing approach based on oversampling and undersampling for high imbalanced data-sets using SMOTE and rough sets theory. Knowl. Inf. Syst. 33, 245–265 (2012).

Guan, H., Zhang, Y., Xian, M., Cheng, H. D. & Tang, X. SMOTE-WENN: solving class imbalance and small sample problems by oversampling and distance scaling. Appl. Intell. 51 (3), 1–16 (2020).

Acknowledgements

This research was supported in part by the National Natural Science Foundation of China (Grant No. 62062051), the Major Talent Project in Guangxi Province, the Emergency Management Joint Innovation Technology Research Project of Guangxi (2024GXYJ052), the Research Projects in Philosophy and Social Sciences of Guangxi (24GLF005), and the Research Project on School Safety, Stability, and Emergency Response of Guangxi (GXAW2024A008).

Author information

Contributions

Y.Y.L. conceptualized and designed the study, preprocessed the data, conducted the code experiments, and checked the results. Y.Y.L. and L.Y. analyzed and interpreted the data and were major contributors to writing the manuscript. L.Y., S.P.H., D.L., and R.R. provided constructive suggestions on the manuscript. All authors revised the manuscript for important intellectual content and approved the final version.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Li, Y., Yang, Y., Song, P. et al. An improved SMOTE algorithm for enhanced imbalanced data classification by expanding sample generation space. Sci Rep 15, 23521 (2025). https://doi.org/10.1038/s41598-025-09506-w

DOI: https://doi.org/10.1038/s41598-025-09506-w
