Abstract

Millimeter-wave imaging systems currently play a central role in security screening. Existing concealed object detectors for millimeter-wave images can only detect the categories they were trained on and fail when they encounter new, unseen categories. Accurately identifying concealed objects of increasingly diverse types and shapes is therefore a pressing challenge. This paper proposes Open-MMW, a novel open vocabulary detection algorithm capable of recognizing more diverse and previously untrained objects. To our knowledge, this is the first time open vocabulary detection has been introduced into millimeter-wave image detection. We improve the YOLO-World detector framework by designing a Multi-Scale Convolution and a Task-Integrated Block to optimize feature extraction and detection accuracy. In addition, a Text-Image Interaction Module uses attention mechanisms to address the challenge of aligning features between millimeter-wave images and text. Extensive experiments on public and private datasets demonstrate the effectiveness of Open-MMW. Compared to the baseline model, Open-MMW improves recall by 13.7%, precision by 13.9%, mAP@0.5 by 14.2%, and mAP@[0.5–0.95] by 10.3%. The improvements are even larger relative to state-of-the-art multimodal interaction models, and Open-MMW shows strong zero-shot detection capabilities that traditional closed-set detectors lack.


Introduction

As criminal methods continuously evolve, security screening technologies have become indispensable at airport checkpoints, train stations, schools, and other institutions1,2,3. Concealed objects are small and difficult to detect, and their constantly evolving shapes place high demands on the generalization capabilities of these technologies. Traditional security screening methods, including X-ray systems4, metal detectors, and infrared detectors5, have significant drawbacks. For instance, X-ray systems emit ionizing radiation that poses health risks, metal detectors cannot detect non-metallic objects, and weak infrared radiation limits detection accuracy. In recent years, millimeter-wave (MMW) scanners based on near-field synthetic aperture radar (NF-SAR) 3D imaging technology6,7,8,9,10 have been widely applied in security screening, with several advantages: (1) MMW signals are non-ionizing and pose minimal health risks to humans; (2) they eliminate the need for extensive manual searches; (3) they can penetrate non-conductive objects, clothing, and smoke with minimal signal attenuation11,12,13,14. MMW scanners therefore hold significant potential in fields such as close-range target detection15,16.

However, millimeter-wave (MMW) images also present the following unavoidable issues: (1) Compared to optical images, MMW images have lower resolution and poorer image quality. (2) Environmental noise at the detection site can confuse the model’s judgments. (3) MMW reflection is weak for small objects, and the received waves may be obscured by reflections from the human body, directly affecting the model’s detection rate1,17. Therefore, efficiently detecting concealed objects remains a challenging task in current MMW image detection.

Beyond these common challenges, MMW image detection faces a newer challenge. In the open real world, people may carry an ever-growing variety of hidden objects, and traditional MMW detectors cannot cover all of them in their training samples. Moreover, in typical MMW application scenarios, acquiring a large, comprehensively annotated dataset for model pre-training is difficult. These conditions place new demands on model generalization. To address them, we improve the detector so that it can recognize categories beyond those seen during training.

Over the years, researchers have designed various algorithms for millimeter-wave (MMW) image detection. Early work primarily relied on statistical learning and regression algorithms18,19,20,21,22. As discussed in23, these methods often fail to meet real-world requirements because they are limited to preset object categories and typically handle simple objects with few features against monotonous backgrounds. More recently, following the outstanding performance of deep learning in optical image object detection24,25,26, researchers have applied deep learning frameworks to the detection of concealed objects in MMW images27,28,29. However, these methods mainly focus on improving image resolution and detection accuracy. They require large amounts of training data and can only detect predefined object categories, which fundamentally limits their adaptability to open, unknown practical environments.

In this paper, we propose Open-MMW. As shown in Fig. 1, unlike previous methods, Open-MMW combines the YOLO detector with a multimodal interaction model, offering fast detection, high efficiency, and strong zero-shot capabilities. Specifically, Open-MMW encodes the input text with a text encoder to obtain text embeddings, extracts rich multi-scale image features through the improved YOLO detector26 and the Task-Integrated Block (TIB), and aligns the lexical features of natural language with the visual features of millimeter-wave images in the Text-Image Interaction Module (TIIM) to obtain high-quality image-text pairs. Experimental results show that Open-MMW achieves strong zero-shot performance on millimeter-wave image detection tasks, demonstrating a large-vocabulary detection capability that traditional detectors lack. Moreover, training with more data can further enhance its open vocabulary capabilities.

Overview of Open-MMW: by matching text embeddings with image features, Open-MMW achieves open vocabulary detection and can also be deployed offline.

In this work, we improve the YOLO-World detector. Although YOLO-World has been extensively trained on visible-light images, it performs poorly on millimeter-wave images, which contain more random noise than optical images. To address this, we propose the Task-Integrated Block (TIB), which enhances target detection and image super-resolution. First, we use an attention mechanism33 to compute weights for the three detection maps of different scales generated during the forward pass. We then take the detection map for small objects as the reference and fuse the weighted information from the other two maps into it, achieving information interaction and fusion. This extracts features from millimeter-wave images more efficiently and lets the model focus on edge and corner information. In this way, TIB improves the accuracy and efficiency of feature extraction and significantly improves detection performance on millimeter-wave images.

We also propose the Text-Image Interaction Module (TIIM), which enables efficient interaction and alignment of text and image features33,34. TIIM consists of two key layers: the I-TM layer and the T-IA layer. The I-TM layer updates and enhances image features using text content, while the T-IA layer refines text features using information extracted from the updated image features. Through this bidirectional interaction over six layers of step-by-step updates, TIIM incrementally refines the text and image features, achieving more precise and comprehensive visual-semantic representations. Furthermore, to improve deployment efficiency in practical applications, we remove the text encoder during inference: pre-generated text embeddings are re-parameterized as the weights of the TIIM module. This retains the influence of the text information while significantly reducing computational overhead, enabling efficient deployment35,36.

Additionally, we propose a new convolution block based on multi-scale feature extraction31,32, called Multi-Conv, to replace the traditional convolution layers in the YOLO series. Multi-Conv is a multi-scale fusion convolution layer with three parallel convolutional branches (1 × 1, 3 × 3, 5 × 5), each capturing features at a different scale: smaller kernels capture fine details, while larger kernels capture more macro-level features. Each branch independently convolves the input feature map, and the resulting feature maps are fused to form the final multi-scale output. This improves the model's expressiveness and robustness, strengthening feature extraction for target objects while suppressing background interference. By capturing multi-level features, the model reduces missed detections and increases recall.

In summary, the main contributions of this paper are as follows:

1. Methodological Innovation: We introduce open vocabulary detection to millimeter-wave (MMW) image detection for the first time. Our proposed Open-MMW model demonstrates powerful zero-shot performance by capturing the complex relationships between MMW images and textual vocabulary, effectively addressing the evolving shapes and types of hidden objects in real-world environments. This prompt-then-detect paradigm opens new research directions for applying multimodal interaction models to detecting hidden objects in MMW images.

2. Technical Contribution: We propose the Text-Image Interaction Module (TIIM), which achieves significant results in MMW image detection tasks. TIIM generates high-quality image-text pairs and optimized model weights. During inference, it can re-parameterize offline vocabulary embeddings as the weights of convolutional or linear layers, enabling efficient offline deployment. This not only improves detection accuracy but also enhances the overall performance and applicability of the system.

3. Model Improvement: We integrate Multi-Conv into the YOLOv8 detector and propose a new Task-Integrated Block (TIB) to further extract effective features. These improvements enable the model to separate the high-frequency and low-frequency features of MMW images, better meeting the needs of the task. Through this integration, the system extracts features more precisely and adapts better to complex scenarios.

4. Experimental Results: To verify the effectiveness of the proposed methods, we conducted extensive experiments on private and public datasets. Compared with the baseline model, precision, recall, mAP@0.5, and mAP@[0.5–0.95] increased by 13.9%, 13.7%, 14.2%, and 10.3%, respectively. Our model also outperformed existing closed-set MMW image detection models. Notably, it exhibited large-vocabulary detection capabilities that traditional methods lack, further demonstrating its applicability and advantages in complex scenarios.

Related work

Concealed object detection in AMMW

Figure 2 shows a 3D active millimeter-wave imaging system. The system emits hundreds of millimeter-wave signals, receives the signals reflected from objects, and reconstructs a three-dimensional image of the object using multi-angle scanning and signal analysis techniques. The main advantages of active millimeter-wave imaging are its ability to penetrate clothing and non-metallic materials and its independence from environmental lighting conditions, which make it well suited to applications such as security screening and medical imaging. In contrast, passive millimeter-wave imaging relies on the natural millimeter-wave radiation emitted by objects or the human body and is typically used for detection in low-light environments.

Description of 3D active millimeter-wave imaging system.

Specifically, when a person passes through the scanner, the body is scanned from all angles in a standing posture, with arms hanging naturally or slightly extended. This produces millimeter-wave images of both the front and the back of the stationary body. Across the AMMW image sequence the person remains stationary, while the suspicious objects and their locations vary between individuals. This study focuses on the front and back views. Because millimeter waves penetrate clothing, millimeter-wave body images can reveal hidden objects, which is a key advantage for security screening.

Because AMMW images are blurry and suspicious objects are diverse, target detection in active millimeter-wave (AMMW) images remains challenging. Current research on AMMW image detection mainly focuses on closed-set detection54,55,56, in which object detectors are trained on datasets with predefined categories. Early research on detecting suspicious objects in AMMW images relied primarily on traditional statistical methods and hand-crafted designs18,19,20, which suffer from poor detection efficiency and low robustness.

With the rapid development of deep learning in machine vision, neural network-based methods have been applied to this field. Popular methods such as R-CNN24 and Faster R-CNN25 rely on convolutional neural networks (CNNs). R-CNN generates region proposals with selective search and extracts CNN features from each proposal, whereas Faster R-CNN computes a feature map for the entire image, generates proposals on it with a region proposal network, and uses RoI pooling to extract fixed-size features from each proposal before fully connected layers classify them and regress their bounding boxes. Yeom et al.37,38 designed a multi-layer expectation-maximization (EM) method to separate hidden targets from human body regions, using principal component analysis (PCA) to extract target features and measure the similarity between targets and ground truth. Dandan Guo et al.39 designed a Siamese network that compares the left and right limbs of the human body, transforming detection into a binary classification problem. Su et al.29 enhanced MMW image resolution with wavelet transforms and detected objects in MMW images with a YOLOv8-based network. López-Tapia et al.16 used classical machine learning algorithms such as support vector machines and random forests for detection. However, all of these are closed-set methods that classify or regress predefined anchors or regions, and they therefore generalize poorly.

Subsequently, researchers broadened their perspectives. Cheng Guo et al.40 explored a denoising coarse-to-fine transformer (DCFT), a query-based object detection method built on transformers. However, this method essentially remains within the realm of traditional closed-set detection.

The Open-MMW model proposed in this paper aims to detect objects beyond fixed vocabularies and has strong zero-shot detection capabilities. It exchanges information between images and text through the Text-Image Interaction Module (TIIM) and uses attention mechanisms to extract as much information as possible from the raw images, yielding better output representations and enhancing the model's learning ability. To our knowledge, this is the first study to combine open-set detection with MMW images.

Open-vocabulary object detection

Traditional object detectors are trained on a fixed, predefined set of categories, such as those of the Objects365 dataset41 and the COCO dataset42, so they can only recognize and detect the categories seen during training, which limits their applicability in open scenarios. Open Vocabulary Object Detection (OVD)43 aims to detect objects beyond these predefined categories, including new categories not seen during training. This flexibility makes OVD increasingly important in practical environments where the types of suspicious items being carried are constantly evolving.

Early work by Gu et al. proposed ViLD44, which detects new (unseen) classes by training a detector on a set of base classes. However, because it is trained within a specific domain on a limited dataset, it lacks the ability to generalize to other domains. Other methods45,46,47 use the text encoders of pre-trained vision-language models to generate class text embeddings, framing open vocabulary object detection as an image-text matching problem. Matthias Minderer et al. proposed OWL-ViT48, which is pre-trained on large-scale image-text data containing a substantial number of images paired with text descriptions. Through this pre-training, the model learns associations between vision and language, improving its ability to understand and recognize open vocabulary objects. Grounding DINO49 combines the strengths of Deformable DETR and DINO, using deformable attention to compute attention efficiently over multi-scale feature maps and dynamic anchor boxes to localize targets precisely. However, these methods typically employ heavy detectors with Swin-L50 as the backbone, leading to high model complexity, heavy computational resource consumption, long training and inference times, and high demands on large-scale data and hardware, which limit their use in resource-constrained scenarios.

Subsequently, researchers proposed pre-trained models with broader applicability. CLIP51 uses contrastive learning to jointly train image and text encoders, aligning matched images and texts in a shared embedding space. BERT52 by Devlin et al. uses a bidirectional Transformer architecture pre-trained with tasks such as Masked Language Model (MLM) and Next Sentence Prediction (NSP) to produce deep language representations that can be fine-tuned for various natural language processing tasks. However, these methods suffer from slow inference, inefficiency in handling long texts, and limited generalization to specific domains.

Illustration of the comparison between traditional detectors and YOLO-world.

Recently, as shown in Fig. 3, YOLO-World30 proposed a prompt-then-detect paradigm in which users write prompts for the actual scenario; these prompts are encoded into an offline vocabulary that can be re-parameterized into the model for deployment, achieving zero-shot detection. This lightweight model is well suited to MMW image detection tasks. However, YOLO-World was developed for visible-light images, its precision on small objects is not ideal, and its performance degrades on low-quality data. Our core challenge is therefore to adapt and improve this baseline for MMW image detection and to achieve more accurate text-image pairing.

Method

In this section, we explain the working principles of multi-scale convolution, the Task-Integrated Block (TIB), and the Text-Image Interaction Module (TIIM). These designs enhance the model's feature extraction for millimeter-wave images, generate effective image-text pairs, and align and fuse the lexical features of natural language with the visual features of millimeter-wave images.

Illustration of feature extraction and concatenation using three different sizes of convolutional kernels.

Multi-scale convolution

Multi-scale convolution refers to the design of multiple parallel convolutional kernel branches within a single convolutional layer. Each branch has kernels of different sizes to capture features at various scales in the image. Smaller kernels can capture fine details, while larger kernels can capture more macro-level features. Each branch independently performs convolution operations on the input image, and then the extracted feature maps from each branch are fused and concatenated to form the final multi-scale feature output. By extracting features at different scales, multi-scale convolution helps in capturing targets of various sizes and details in the image. The fusion of low-level detail information and high-level semantic information improves the recognition of small objects. We integrated multi-scale convolution into the YOLOv8 detector to enhance the model’s ability to recognize diverse targets in millimeter-wave images, thereby improving the model’s performance and robustness.

Suppose we have an input feature map \(I\) and multiple convolutional kernels \(K_i\), where \(i\) denotes the scale and each kernel has a different size (e.g., 1 × 1, 3 × 3, 5 × 5), as shown in Fig. 4. The convolution operation at scale \(i\) can be represented as:

\[ n_i = I \ast K_i \tag{1} \]

where \(\ast\) denotes the convolution operation and \(n_i\) is the output feature map obtained with the convolutional kernel \(K_i\). Element-wise, this is given by Eq. (2):

\[ n_i(i, j) = \sum_{m}\sum_{n} I(i \cdot s + m,\; j \cdot s + n)\, K_i(m, n) \tag{2} \]

where \(i\) and \(j\) are the row and column indices of the output feature map \(n_i\), \(m\) and \(n\) are the row and column indices of the convolutional kernel, \(I(i \cdot s + m, j \cdot s + n)\) is the pixel value of the input feature map \(I\) at position \((i \cdot s + m, j \cdot s + n)\), \(s\) denotes the stride, and \(K_i(m, n)\) is the weight of the convolutional kernel \(K_i\) at position \((m, n)\).
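To make the index arithmetic of Eq. (2) concrete, here is a minimal NumPy sketch that evaluates the sum literally for a single-channel map. It assumes no padding and no kernel flip (the cross-correlation convention used by deep-learning frameworks); the function name `strided_conv2d` is illustrative and not taken from the paper.

```python
import numpy as np


def strided_conv2d(image: np.ndarray, kernel: np.ndarray, stride: int = 1) -> np.ndarray:
    """Evaluate out[r, c] = sum over kernel offsets (a, b) of image[r*stride + a, c*stride + b] * kernel[a, b].

    No padding and no kernel flip (cross-correlation, as in deep-learning frameworks).
    """
    kh, kw = kernel.shape
    out_h = (image.shape[0] - kh) // stride + 1
    out_w = (image.shape[1] - kw) // stride + 1
    out = np.zeros((out_h, out_w), dtype=np.result_type(image, kernel))
    for r in range(out_h):
        for c in range(out_w):
            for a in range(kh):
                for b in range(kw):
                    # Input pixel at (r*s + a, c*s + b) times kernel weight at (a, b)
                    out[r, c] += image[r * stride + a, c * stride + b] * kernel[a, b]
    return out


image = np.arange(16).reshape(4, 4)
kernel = np.ones((2, 2))
# With a 2 x 2 all-ones kernel and stride 2, each output is the sum of one non-overlapping 2 x 2 block.
print(strided_conv2d(image, kernel, stride=2))
```

With stride 2 the four output values are the block sums 10, 18, 42, and 50; with stride 1 the same call would produce a 3 × 3 map.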

The final output of multi-scale convolution is obtained by combining the output feature maps of all scales, for example through concatenation or weighted summation. With concatenation, the final output is \([n_1, n_2, n_3]\) for the three kernel sizes, where \([\cdot]\) denotes the concatenation of feature maps. Traditional convolution struggles to capture features at different scales and often misses small objects. To address this, we integrate Multi-Conv into the YOLO detector, forming the Multi-YOLO framework specifically designed for millimeter-wave images, as illustrated in Fig. 5. By extracting fine-grained features with small convolutional kernels and global features with large ones, Multi-YOLO enhances object detection and image super-resolution performance.
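The NumPy sketch below shows the structure of a Multi-Conv layer as described: three parallel branches with 1 × 1, 3 × 3, and 5 × 5 kernels, zero padding so all branches keep the same spatial size, and concatenation of the branch outputs along the channel axis. The padding choice, the channel counts, and the names `conv2d_same` and `multi_conv` are assumptions for illustration; the actual layer may add normalization, activations, or a fusion step after concatenation.

```python
import numpy as np


def conv2d_same(x: np.ndarray, w: np.ndarray) -> np.ndarray:
    """Stride-1 convolution with zero 'same' padding.

    x: (C_in, H, W) feature map; w: (C_out, C_in, k, k) weights with odd k.
    Returns a (C_out, H, W) map, so every branch keeps the input resolution.
    """
    c_out, c_in, k, _ = w.shape
    pad = k // 2
    xp = np.pad(x, ((0, 0), (pad, pad), (pad, pad)))
    _, h, wd = x.shape
    out = np.zeros((c_out, h, wd))
    for r in range(h):
        for c in range(wd):
            patch = xp[:, r:r + k, c:c + k]  # (C_in, k, k) window centred on (r, c)
            out[:, r, c] = np.tensordot(w, patch, axes=([1, 2, 3], [0, 1, 2]))
    return out


def multi_conv(x: np.ndarray, branch_weights: list) -> np.ndarray:
    """Run each kernel-size branch independently, then concatenate along the channel axis."""
    return np.concatenate([conv2d_same(x, w) for w in branch_weights], axis=0)


rng = np.random.default_rng(0)
x = rng.standard_normal((3, 8, 8))                                 # C_in = 3, 8 x 8 map
weights = [rng.standard_normal((4, 3, k, k)) for k in (1, 3, 5)]  # 4 output channels per branch
y = multi_conv(x, weights)
print(y.shape)  # (12, 8, 8)
```

Keeping every branch at the input resolution is what makes plain concatenation possible; a weighted summation would instead require all branches to have the same number of output channels.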

Overview of Multi-YOLO. The blue blocks represent the proposed Multi-Conv blocks, which replace the original convolutional layers.

Many popular algorithms adopt similar multi-scale approaches. For instance, Faster R-CNN25 combined with a Feature Pyramid Network (FPN) improves detection performance by building features at multiple scales, and GoogLeNet53 processes features at different scales in parallel within the same layer (the Inception module), significantly enhancing model performance.

In our approach, we replace the traditional convolution operations of the YOLO detector with multi-scale convolution fusion using three different sizes of convolutional kernels. This fusion is crucial for integrating low-level detail information with high-level semantic information in millimeter-wave images. Our experiments confirm the effectiveness of this approach.

Task-integrated block

To fully utilize image feature information and enhance the ability of the model to detect small objects, we integrate the detection maps at three different scales in the YOLO detector for joint training. Before the interaction between image features and text embeddings, we propose the Task-Integrated Block (TIB). This block employs both cross-attention and self-attention mechanisms. The self-attention mechanism dynamically allocates weights to different regions of the input image’s feature map, enabling better capture of important features. This effectively integrates high-level and low-level feature information, enhancing feature representation capability, and consequently improving object localization accuracy and overall detection performance. Cross-attention captures the correlations between feature maps, aiding the model in more accurately locating and identifying target objects.

Specifically, the three detection maps at different scales are used to detect large, medium, and small objects, respectively. However, in millimeter-wave image detection, large and medium objects can hardly be concealed on the human body without being noticed. We therefore use the detection map for small objects as the primary (reference) map to ensure efficient detection of small targets.

In this way, the Task-Integrated Block optimizes the accuracy and efficiency of feature extraction, allowing the model to exhibit stronger performance and adaptability in detecting hidden objects in millimeter-wave images.

First, self-attention coefficients are computed for the detection maps \(F_1\), \(F_2\), and \(F_3\) to enhance their internal feature representations. Self-attention adjusts the feature at each position by computing the dependencies between that position and all other positions. The Query \(Q_i\), Key \(K_i\), and Value \(V_i\) are calculated as follows:

Here, \(C_i(x, y) \in \mathbb{R}^{D}\) is the feature value of the feature map at coordinates \((x, y)\), and \(P_i(x, y) \in \mathbb{R}^{D}\) is the positional encoding at position \((x, y)\). The specific calculation formulas are as follows:

Here, \([x, y, w, h]\) is the position information of the anchor box generated from the query result, \(n\) is a value in \([x, y, w, h]\), \(j\) is the position index, and \(D\) is the dimension of the embedding vector.
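As an illustration only, the sketch below assumes the standard sinusoidal form for this encoding, \(\sin(n / 10000^{2j/D})\) at even indices and \(\cos(n / 10000^{2j/D})\) at odd indices, and builds one vector by concatenating the encodings of \(x\), \(y\), \(w\), and \(h\), each of length \(D/4\). The temperature 10000, the concatenation layout, and the function names are assumptions, not details confirmed by the paper.

```python
import numpy as np


def sinusoidal_encoding(value: float, dim: int, temperature: float = 10000.0) -> np.ndarray:
    """Encode one scalar n as sin(n / T^(2j/dim)) at index 2j and cos(n / T^(2j/dim)) at index 2j+1."""
    if dim % 2:
        raise ValueError("dim must be even")
    j = np.arange(dim // 2)
    scale = temperature ** (2 * j / dim)
    enc = np.empty(dim)
    enc[0::2] = np.sin(value / scale)
    enc[1::2] = np.cos(value / scale)
    return enc


def box_positional_encoding(box, dim: int) -> np.ndarray:
    """Assumed layout: concatenate the encodings of x, y, w, h (each dim/4 long) into one dim-D vector."""
    if dim % 8:
        raise ValueError("dim must be divisible by 8")
    return np.concatenate([sinusoidal_encoding(v, dim // 4) for v in box])


pe = box_positional_encoding([0.5, 0.25, 0.1, 0.2], dim=32)
print(pe.shape)  # (32,)
```

Low indices \(j\) vary quickly with \(n\) and high indices vary slowly, so nearby boxes get similar but distinguishable encodings.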

Next, we compute the attention scores and update each feature map.

where \(d_k\) is the dimension of the key vectors, used to scale the attention scores, and \(F_i^{*}\) is the updated feature map.
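A minimal sketch of this update, assuming standard scaled dot-product self-attention, \(F_i^{*} = \mathrm{softmax}(Q_i K_i^{\top} / \sqrt{d_k})\, V_i\), applied to the flattened positions of one detection map. The projection matrices `w_q`, `w_k`, and `w_v`, and the choice to add \(P_i\) to the query and key inputs only (as in DETR-style detectors), are assumptions; no residual connection or normalization is included.

```python
import numpy as np


def softmax(x: np.ndarray, axis: int = -1) -> np.ndarray:
    x = x - x.max(axis=axis, keepdims=True)  # subtract the max for numerical stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def self_attention_update(feat, pos, w_q, w_k, w_v):
    """Scaled dot-product self-attention over all positions of one detection map.

    feat, pos: (H, W, D) features C_i and positional encodings P_i.
    Assumption: P_i is added to the inputs of Q and K only, not V.
    Returns the updated (H, W, D_v) map and the (HW, HW) attention matrix.
    """
    h, w, d = feat.shape
    tokens = feat.reshape(h * w, d)
    with_pos = (feat + pos).reshape(h * w, d)
    q, k, v = with_pos @ w_q, with_pos @ w_k, tokens @ w_v
    d_k = k.shape[-1]
    attn = softmax(q @ k.T / np.sqrt(d_k))  # each row sums to 1
    return (attn @ v).reshape(h, w, -1), attn


rng = np.random.default_rng(1)
D = 16
feat, pos = rng.standard_normal((4, 4, D)), rng.standard_normal((4, 4, D))
w_q, w_k, w_v = (rng.standard_normal((D, D)) / np.sqrt(D) for _ in range(3))
updated, attn = self_attention_update(feat, pos, w_q, w_k, w_v)
print(updated.shape, attn.shape)  # (4, 4, 16) (16, 16)
```

Each updated position is a convex combination of all value vectors in the map, which is how every position gains access to global context.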

The cross-attention mechanism integrates information from different feature maps. We select the detection map for small objects as the reference because its information is closest to the target information. Denoting it \(F_1^{*}\), we integrate the information from \(F_2^{*}\) and \(F_3^{*}\) into \(F_1^{*}\).

Here, \([Q_i^{*}, K_i^{*}, V_i^{*}]\) are the Query, Key, and Value calculated with formulas (4), (5), and (6) on \(F_i^{*}\).
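One plausible reading of this fusion step, sketched under assumptions: flatten each map into tokens, take the queries from \(F_1^{*}\) and the keys and values from \(F_2^{*}\) and \(F_3^{*}\), and add both read-outs to \(F_1^{*}\) residually. The residual sum, the shared projection matrices, and the function names are hypothetical. Because keys and values may come from maps of any resolution, the output always keeps the positions of the small-object map.

```python
import numpy as np


def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def cross_attention(query_tokens, context_tokens, w_q, w_k, w_v):
    """Queries come from the reference map; keys and values come from another map."""
    q = query_tokens @ w_q
    k = context_tokens @ w_k
    v = context_tokens @ w_v
    return softmax(q @ k.T / np.sqrt(k.shape[-1])) @ v


def fuse_into_reference(f1, f2, f3, w_q, w_k, w_v):
    """Hypothetical fusion rule: F1* plus one cross-attention read-out from each of F2* and F3*.

    f1: (N1, D) tokens of the small-object map; f2: (N2, D); f3: (N3, D).
    """
    return f1 + cross_attention(f1, f2, w_q, w_k, w_v) + cross_attention(f1, f3, w_q, w_k, w_v)


rng = np.random.default_rng(2)
D = 8
f1 = rng.standard_normal((64, D))  # e.g. an 8 x 8 high-resolution map, flattened
f2 = rng.standard_normal((16, D))  # 4 x 4
f3 = rng.standard_normal((4, D))   # 2 x 2
w_q, w_k, w_v = (rng.standard_normal((D, D)) for _ in range(3))
fused = fuse_into_reference(f1, f2, f3, w_q, w_k, w_v)
print(fused.shape)  # (64, 8)
```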

As shown in Fig. 6, the Image Fusion Layer updates the feature maps layer by layer, integrating the three different scale feature maps of the YOLO detector using the attention mechanism. By calculating the correlation between each feature and all other features, a weighted feature representation is generated, effectively capturing both global and local information relationships. This method allows the model to automatically emphasize key features while diminishing irrelevant or redundant ones, thereby enhancing the expressive power of feature representation. When dealing with complex backgrounds and partial occlusions, the self-attention mechanism helps the model better distinguish target objects, improving detection accuracy and robustness. By combining features of different scales, the self-attention mechanism further enhances the model’s generalization ability and overall detection performance, which will be validated in the experimental section.

Illustration of the task-integrated block (TIB): the TIB is composed of six image fusion layers.

Text-image interaction module

In millimeter-wave image detection in open scenarios, achieving interaction between text and images is crucial due to the diverse and variable nature of objects in open scenes. Traditional closed-set detection methods struggle to handle all possible targets. To address this issue, we designed the Text-Image Interaction Module (TIIM), as shown in Fig. 7. TIIM efficiently facilitates the transfer of information between text and images. This module includes the I-TM layer, which updates image content with text content, and the T-IA layer, which updates text information based on the updated image information.

Illustration of the text-image interaction module (TIIM): the proposed TIIM uses the text-guided I-TM layer to inject linguistic information into image features and the T-IA layer to make the text embeddings image-aware.

The I-TM layer updates image content with text content, achieving cross-modal synchronization by superimposing text information onto image features, thus enhancing the expressive power of image features. The T-IA layer updates text information based on the updated image information, using the attention mechanism to feed key information from image features back into text embeddings, making the text representation more precise and semantically rich. Through six layers of step-by-step updates, TIIM further refines the information features between text and images, improving the visual semantic representation capability of open vocabulary.

During inference, TIIM can reparameterize offline vocabulary embeddings into weights for convolutional or linear layers, enabling efficient model deployment. This approach not only improves the accuracy of text and image information interaction but also enhances adaptability to complex scenes. The design of TIIM effectively improves the performance of open vocabulary detection, making it more flexible and efficient in practical applications.
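To illustrate why offline vocabulary embeddings can be folded into layer weights, the sketch below stores \(C\) precomputed text embeddings as the kernels of a 1 × 1 convolution. Applying that convolution to an image feature map yields, at every position, the dot product with each embedding, so the text encoder is not needed at inference. The dot-product scoring and the function names are assumptions; the re-parameterized TIIM weights may include additional terms.

```python
import numpy as np


def build_vocab_kernels(text_embeddings: np.ndarray) -> np.ndarray:
    """Offline step: freeze (C, D) text embeddings into 1x1 conv weights of shape (C, D, 1, 1)."""
    return text_embeddings[:, :, None, None].copy()


def conv1x1(feat: np.ndarray, kernels: np.ndarray) -> np.ndarray:
    """Inference step: (D, H, W) feature map with (C, D, 1, 1) kernels -> (C, H, W) scores."""
    return np.einsum("dhw,cd->chw", feat, kernels[:, :, 0, 0])


rng = np.random.default_rng(4)
vocab = rng.standard_normal((3, 8))   # stand-in for embeddings produced once by the text encoder
kernels = build_vocab_kernels(vocab)  # stored with the model; the text encoder can be dropped
feat = rng.standard_normal((8, 5, 5))
scores = conv1x1(feat, kernels)
print(scores.shape)  # (3, 5, 5)
```

Changing the vocabulary only requires re-running the offline step to rebuild the kernels; the image branch is unchanged.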

The key advantages of this method lie in its hierarchical information interaction mechanism and flexible weight parameterization strategy, allowing the model to excel in multimodal data processing. This is particularly evident in millimeter-wave image detection tasks, where it demonstrates strong zero-shot detection capabilities and high-precision feature extraction.

For the I-TM layer, given the text embeddings \(T\) and the image features \(F\), the text features are aggregated into the image features in the final block as follows:

Here, \(F^{\circ}\) is the output feature map, \(\partial\) denotes the Sigmoid function, and \(T_e\) is the e-th vector of the text embeddings \(T\). Starting from the first vector of \(T\), we take the highest weight value across the text vectors and assign it to the feature map to update the image information. This makes the image features attend more to regions that are highly relevant to the text, strengthening the incorporation of text information into the image.
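A minimal sketch of the I-TM update as described: for each position, the similarity with every text vector \(T_e\) is computed, the maximum is passed through a Sigmoid, and the result rescales the image feature. This assumes a max-then-sigmoid gate in the spirit of YOLO-World's text-guided layer; the dot-product similarity and the function name `text_guided_modulation` are assumptions, and no learned projections are shown.

```python
import numpy as np


def sigmoid(x: np.ndarray) -> np.ndarray:
    return 1.0 / (1.0 + np.exp(-x))


def text_guided_modulation(feat: np.ndarray, text: np.ndarray) -> np.ndarray:
    """Scale each image position by the sigmoid of its best match over all text vectors.

    feat: (H, W, D) image features F; text: (C, D) text embeddings T (one row per T_e).
    """
    scores = feat @ text.T               # (H, W, C): F(x, y) . T_e for every e
    gate = sigmoid(scores.max(axis=-1))  # (H, W): highest-scoring text vector per position
    return feat * gate[..., None]        # broadcast the gate over the D channels


rng = np.random.default_rng(5)
feat = rng.standard_normal((5, 5, 8))
text = rng.standard_normal((3, 8))
out = text_guided_modulation(feat, text)
print(out.shape)  # (5, 5, 8)
```

Because the gate lies in (0, 1), the layer can only attenuate features, suppressing positions that match no vocabulary entry while leaving strongly matching regions nearly intact.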

For the T-IA layer, we update the text embeddings by aggregating image features, enhancing them with image-aware information. Because text embeddings and visual feature maps differ in form, they cannot interact directly. We therefore first pool the visual feature map into 3 × 3 regions, producing 9 patch tokens \(W \in \mathbb{R}^{9 \times D}\). The text embeddings are then updated as follows:

Here, the Query is the text embedding \(\text{T}\), and the Key and Value are the patch tokens \(\text{W}\). Multi-head attention weights the patch tokens for each text vector, and the attended result gives the new text embeddings \(\text{T}^{\circ}\).
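The T-IA update can be sketched in NumPy as below, in the form \(\text{T}^{\circ}=\text{T}+\text{MultiHead-Attention}(\text{T},\text{W},\text{W})\). Assumptions beyond the text: the 3 × 3 pooling is max pooling, the learned query/key/value projections of multi-head attention are replaced by identity maps for brevity, and the attention output is added back to \(\text{T}\) as a residual, as in YOLO-World's image-pooling attention. All names are hypothetical.

```python
import numpy as np

def pool_to_grid(features, grid=3):
    """Max-pool a (H, W, D) feature map into grid*grid patch tokens (grid*grid, D).
    Requires H >= grid and W >= grid so that no region is empty."""
    H, W, D = features.shape
    rows = np.array_split(np.arange(H), grid)
    cols = np.array_split(np.arange(W), grid)
    return np.stack([features[np.ix_(r, c)].reshape(-1, D).max(axis=0)
                     for r in rows for c in cols])

def softmax(x, axis=-1):
    e = np.exp(x - x.max(axis=axis, keepdims=True))
    return e / e.sum(axis=axis, keepdims=True)

def text_image_attention(text, patches, num_heads=2):
    """T-IA sketch: Query = text T (C, D); Key = Value = patch tokens W (P, D)."""
    C, D = text.shape
    assert D % num_heads == 0, "embedding dim must split evenly across heads"
    d = D // num_heads
    q = text.reshape(C, num_heads, d).transpose(1, 0, 2)        # (heads, C, d)
    kv = patches.reshape(-1, num_heads, d).transpose(1, 0, 2)   # (heads, P, d)
    attn = softmax(q @ kv.transpose(0, 2, 1) / np.sqrt(d))      # (heads, C, P)
    update = (attn @ kv).transpose(1, 0, 2).reshape(C, D)       # merge heads
    return text + update                                        # residual (assumed)

rng = np.random.default_rng(0)
T = rng.normal(size=(2, 8))                     # C=2 text embeddings, D=8
W = pool_to_grid(rng.normal(size=(12, 12, 8)))  # 9 patch tokens
T_new = text_image_attention(T, W)
```

Because each attention row sums to one, every text vector receives a convex combination of the 9 patch tokens; with identical patches the update is exactly that patch.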

The proposed Text-Image Interaction Module (TIIM) significantly enhances the correlation between images and text. This interactive approach allows the model to better understand the semantic information of images while using text information to optimize the accuracy of object detection and classification. By combining text descriptions with image features, the model can locate and identify more complex and subtle objects in the image, improving overall recognition capability and precision. This fusion not only enhances the robustness of the model but also extends its application range in multimodal tasks, increasing the practicality and intelligence of Open-MMW in real-world applications.

Text detector

We use a pre-trained Transformer text encoder as the Text Encoder to generate the text embeddings \(\text{T}=\text{TextEncoder}(\text{t})\in\mathbb{R}^{\text{C}\times\text{D}}\), where \(\text{C}\) is the number of nouns and \(\text{D}\) is the embedding dimension. Compared with a text-only language encoder, a Transformer text encoder pre-trained on image-text pairs offers better visual-semantic capability for linking visual objects with text.

Traditional object detection methods, including the YOLO series26, are trained with instance annotations \(\upsigma=\{\text{b}_{\text{i}},\text{c}_{\text{i}}\}_{\text{i}=1}^{\text{N}}\), which consist of bounding boxes \(\{\text{b}_{\text{i}}\}\) and class labels \(\{\text{c}_{\text{i}}\}\). In this paper, we reformulate instance annotations as region-text pairs \(\upsigma=\{\text{b}_{\text{i}},\text{t}_{\text{i}}\}_{\text{i}=1}^{\text{N}}\), where \(\text{t}_{\text{i}}\) is the text corresponding to region \(\text{b}_{\text{i}}\). The text \(\text{t}_{\text{i}}\) can be a class name, a noun phrase, or an object description. When the input text is a caption or a referring expression, we apply a simple n-gram algorithm to extract noun phrases from the sentence. Through the interactive training of image features \(\text{F}\) and text embeddings \(\text{T}\), the model outputs predicted boxes \(\{\text{b}_{\text{n}}\}\) and the corresponding object embeddings \(\{\text{e}_{\text{n}}\}\). We then calculate the similarity \(\upalpha\) between the text embeddings and \(\{\text{e}_{\text{n}}\}\) as follows:

Here, \(\upomega\) is a learnable scaling factor and \(\upphi\) is a shifting factor; together they adjust the distribution of the similarity scores so that the model learns and generalizes better. \(\text{L}_{2}\text{-Norm}(\cdot)\) normalizes a vector by its Euclidean (L2) norm. By comparing each object embedding with the text embeddings, the system can more accurately determine the type of a hidden object and identify targets in complex backgrounds.
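Assuming the similarity takes the form used in YOLO-World's region-text contrastive head, \(\upalpha=\upomega\cdot\text{L}_{2}\text{-Norm}(\text{e}_{\text{n}})\cdot\text{L}_{2}\text{-Norm}(\text{T}_{\text{e}})^{\top}+\upphi\), it can be computed for all boxes and vocabulary entries at once. The sketch below fixes \(\upomega\) and \(\upphi\) to constants for illustration (in training \(\upomega\) is learned); the names and toy values are hypothetical.

```python
import numpy as np

def l2_normalize(x, eps=1e-12):
    return x / np.maximum(np.linalg.norm(x, axis=-1, keepdims=True), eps)

def region_text_similarity(obj_emb, text_emb, omega=1.0, phi=0.0):
    """Scaled, shifted cosine similarity: object embeddings (N, D) x text (C, D) -> (N, C)."""
    return omega * (l2_normalize(obj_emb) @ l2_normalize(text_emb).T) + phi

# Two predicted boxes and two vocabulary entries (e.g. "knife" and "gun" prompts).
e = np.array([[3.0, 0.0], [0.0, -2.0]])
T = np.array([[1.0, 0.0], [0.0, 1.0]])
alpha = region_text_similarity(e, T, omega=2.0, phi=0.5)
labels = alpha.argmax(axis=1)  # best-matching vocabulary entry per box
```

The second box points away from the second entry, so its best match falls back to the first entry with a low score; in practice a score threshold would presumably discard such a box.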

At the inference stage, we apply a prompt-then-detect strategy with an offline vocabulary. Users define a set of prompts, such as nouns or phrases, which the model encodes into offline vocabulary embeddings; these embeddings update the Open-MMW vocabulary and further enhance the model's generalization ability.

Experiments

This section discusses how to fine-tune the model to meet the specific needs of millimeter-wave images. Due to their unique imaging method and low resolution, millimeter-wave images exhibit significant differences in visual features compared to conventional RGB images. Therefore, we need to not only improve the network structure but also prepare an appropriate dataset and optimize parameters such as learning rate and training iterations to enhance model performance, making it better suited for millimeter-wave image applications. Ultimately, we obtained model weights adapted for the millimeter-wave image detection task and conducted a comprehensive evaluation. The fine-tuned Open-MMW model demonstrated very strong detection capabilities in zero-shot evaluation. Specifically, the model not only accurately recognized objects of known categories but also effectively detected previously untrained hidden objects. This result indicates that our approach holds great potential in the fields of open vocabulary detection and multimodal interaction models.

Dataset

We constructed and curated a specialized millimeter-wave image dataset for training and testing. To ensure the diversity and representativeness of the data, we collected a large number of public and private millimeter-wave image samples covering various types of hidden objects and different shooting angles. These data provide a solid foundation for model training and evaluation. The data collected for this study come from a third-party database, and Beijing SIG Matrix Technology Co., Ltd. approved this study. Written informed consent to participate in the experiment was obtained from all volunteers. The authors confirm that all methods were carried out in accordance with relevant guidelines and regulations.

Our millimeter-wave image dataset is generated by a millimeter-wave imaging system operating in the Ka band with 64 frequency points (24.4–27.8 GHz, with a bandwidth of 5 GHz). The dataset is rich and diverse: we selected eight categories of suspicious objects for the experiments, including firearms, explosives, knives, and more. We also set diverse carrying positions for the objects, such as the thighs, back, abdomen, arms, and boots of the human body, as shown in Fig. 8. Each volunteer, when entering the scanning platform, is imaged by a millimeter-wave body scanner, producing front and back images. Each millimeter-wave image is 440 × 840 pixels in size.

Additionally, we included nearly 30% of samples without object annotations (negative samples) in the dataset. This helps the model learn background information and distinguish between target and non-target objects, thereby improving the model’s robustness and generalization ability.
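Since the annotation files are in COCO format, a negative sample is simply an image entry that no annotation refers to. A small helper such as the hypothetical `negative_ratio` below can confirm that the share stays near the intended 30%; the toy dictionary is illustrative only.

```python
def negative_ratio(coco):
    """Fraction of images in a COCO-style dict that have no annotations."""
    annotated_ids = {ann["image_id"] for ann in coco["annotations"]}
    images = coco["images"]
    if not images:
        return 0.0
    negatives = sum(1 for img in images if img["id"] not in annotated_ids)
    return negatives / len(images)

# Toy data: 10 images, only the first 7 carry an annotation.
toy = {
    "images": [{"id": i, "file_name": f"{i:04d}.png"} for i in range(10)],
    "annotations": [
        {"id": k, "image_id": k, "category_id": 1, "bbox": [100, 200, 40, 60]}
        for k in range(7)
    ],
}
print(negative_ratio(toy))  # 0.3
```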

To investigate whether the Open-MMW model can maintain good performance on a broader range of data, we prepared three datasets of different sizes: MMW-S, MMW-M, and MMW-L. The data scales are shown in Table 1. Among them, MMW-M is specially designed for zero-shot evaluation, while MMW-S is sourced from the public THz dataset57 to evaluate our algorithm’s performance on the public dataset. In MMW-S and MMW-L, the data for the training set, testing set, and validation set are divided in a ratio of 8:1:1, as shown in Table 2.
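The 8:1:1 division can be reproduced with a seeded shuffle, sketched below with a hypothetical helper. Integer arithmetic keeps the counts exact, and any remainder goes to the validation split.

```python
import random

def split_8_1_1(items, seed=0):
    """Shuffle items reproducibly and split them into train/test/val in an 8:1:1 ratio."""
    items = list(items)
    random.Random(seed).shuffle(items)
    n = len(items)
    n_train, n_test = n * 8 // 10, n // 10
    train = items[:n_train]
    test = items[n_train:n_train + n_test]
    val = items[n_train + n_test:]
    return train, test, val

train, test, val = split_8_1_1(range(1000))
print(len(train), len(test), len(val))  # 800 100 100
```

Whether the split was made per image or per volunteer is not stated. Shuffling volunteer IDs instead of individual images would keep the front and back views of one person in the same subset and avoid near-duplicate images leaking between training and test data.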

Fig. 8. Visualization of the eight categories of concealed objects in the dataset, with one front view and one back view for each category.

Implementation details and evaluation metrics

The proposed Open-MMW algorithm is configured in a Python 3.9 virtual environment using the PyTorch 2.3.1 framework. The experiments were conducted on a computer equipped with an NVIDIA RTX 3060 GPU. The annotation files are created in COCO format. Due to the stylistic differences between optical images and millimeter-wave images, we made adaptive adjustments to the model.

Specifically, we first fine-tuned the visual encoder to better capture the unique textures and object contours in millimeter-wave images. This step included adjusting parameters such as the number of iterations for the encoder, particularly focusing on the image feature extraction layer to adapt to the lower contrast and resolution of millimeter-wave images. Then, we conducted experiments to determine the optimal learning rate and batch size, which are crucial for avoiding overfitting and ensuring the model’s generalization ability on new data.

During training, we monitored the model's performance on the independent validation set and adjusted the training strategy accordingly. We set the batch size to 8, trained for 7 epochs, and used the AdamW optimizer with an initial learning rate of 0.0002. With these adjustments, the fine-tuned model improved markedly on millimeter-wave images: it can accurately interpret and match millimeter-wave images with the corresponding text descriptions, providing strong support for subsequent applications in security detection and automatic recognition.
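For reference, one AdamW update with the stated learning rate can be written out in NumPy as below. The betas, epsilon, and weight decay are not given in the text, so the values here are the defaults of PyTorch's `torch.optim.AdamW`, used as assumptions; the function name is hypothetical.

```python
import numpy as np

def adamw_step(param, grad, m, v, t, lr=2e-4, betas=(0.9, 0.999),
               eps=1e-8, weight_decay=0.01):
    """One AdamW step at iteration t (1-based). Returns updated (param, m, v)."""
    b1, b2 = betas
    m = b1 * m + (1 - b1) * grad             # first-moment estimate
    v = b2 * v + (1 - b2) * grad ** 2        # second-moment estimate
    m_hat = m / (1 - b1 ** t)                # bias corrections
    v_hat = v / (1 - b2 ** t)
    param = param * (1 - lr * weight_decay)  # decoupled weight decay
    param = param - lr * m_hat / (np.sqrt(v_hat) + eps)
    return param, m, v

p = np.array([1.0, -1.0])
g = np.array([0.5, -2.0])
p1, m1, v1 = adamw_step(p, g, np.zeros(2), np.zeros(2), t=1, weight_decay=0.0)
```

On the first step the bias-corrected ratio \(\hat{m}/\sqrt{\hat{v}}\) equals the sign of the gradient, so each weight moves by almost exactly the learning rate (0.0002) regardless of the gradient's magnitude.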

Additionally, we evaluated the model's accuracy using four performance metrics: recall, precision, mAP@0.5, and mAP@[0.5–0.95]. Precision, TP/(TP + FP), measures the reliability of the detector's predictions, i.e., the fraction of its detections that are correct. Recall, TP/(TP + FN), measures how completely the detector finds the ground-truth objects. The mAP@0.5 metric is the mean average precision at an Intersection over Union (IoU) threshold of 0.5: a prediction counts as a true positive only if the overlap between the predicted and ground-truth boxes is at least 50% of the area of their union. The mAP@[0.5–0.95] metric averages the mean average precision over ten IoU thresholds, from 0.5 to 0.95 in steps of 0.05. Because it also rewards precise localization at high IoU thresholds, it is a more stringent evaluation standard.
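The quantities above can be made concrete with a short sketch: IoU for two corner-format boxes, precision and recall from true-positive, false-positive, and false-negative counts, and the ten IoU thresholds that mAP@[0.5–0.95] averages over. The box format and names are assumptions, and the full AP computation (sorting detections by confidence and integrating the precision-recall curve) is left out.

```python
import numpy as np

def box_iou(a, b):
    """IoU of two boxes given as (x1, y1, x2, y2) corners."""
    iw = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    ih = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = iw * ih
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0

def precision_recall(tp, fp, fn):
    """Precision = TP/(TP+FP), recall = TP/(TP+FN); 0 when undefined."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall

# Thresholds averaged by mAP@[0.5-0.95]: 0.50, 0.55, ..., 0.95 (ten values).
IOU_THRESHOLDS = np.linspace(0.5, 0.95, 10)

iou = box_iou((0, 0, 2, 2), (1, 1, 3, 3))  # overlap 1, union 7
print(round(iou, 4))  # 0.1429
```

A prediction with this IoU would be a false positive at every one of the ten thresholds; mAP@[0.5–0.95] is the mean of the ten per-threshold mAP values.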

Zero-shot evaluation

Zero-Shot Learning (ZSL) makes predictions on new classes (zero-shot classes) that do not appear in the training set, enabling the model to recognize and classify categories not encountered during training. This matters for hidden object detection because annotated datasets rarely cover all possible categories, especially new or rare ones. During training, the model learns the image features of known classes together with their text representations from text-image pairs. During testing, it uses semantic information, such as noun phrases or class name embeddings, to identify unseen categories. An unseen category needs only a prompt word rather than annotated data, which frees hidden object detection from tedious annotation work.

We evaluated the model on the MMW-M dataset. Its training set contains 6 known categories (Baby_oil, Small_folding_knife, Lighter, Powder, Rectangular_explosive, Saucer_explosive), its test set contains 2 categories absent from the training set (Gun, Fruit_knife), and its validation set includes data from known categories as well as some pseudo-zero-shot samples (Fruit_knife, Baby_oil, Small_folding_knife). This allocation lets us evaluate the model on both known and unknown categories under a sound zero-shot protocol.
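The category allocation can be written down as plain configuration and checked for the key zero-shot property, namely that no test category appears in training. The variable and function names below are hypothetical; the category names are the ones listed above.

```python
SEEN = {"Baby_oil", "Small_folding_knife", "Lighter", "Powder",
        "Rectangular_explosive", "Saucer_explosive"}  # training categories
UNSEEN = {"Gun", "Fruit_knife"}                         # test-only categories

def check_zero_shot_split(seen, unseen):
    """Raise if any test category leaks into training; return the full vocabulary."""
    leaked = seen & unseen
    if leaked:
        raise ValueError(f"categories present in both splits: {sorted(leaked)}")
    return sorted(seen | unseen)

vocabulary = check_zero_shot_split(SEEN, UNSEEN)
print(len(vocabulary))  # 8
```

The union has eight entries, matching the eight object categories of the dataset.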

We compared the performance of our model with three common multimodal interaction models and our baseline model on the MMW-L benchmark. These comparisons were conducted in a zero-shot setting, where the model makes predictions without having seen samples of the test categories. The results, shown in Table 3, clearly indicate that our algorithm significantly outperforms other common models in all metrics. Compared to the baseline model, our metrics improved by 14.1%, 13.1%, 10.4%, and 9.5%, respectively. This validates the strong zero-shot detection capability of Open-MMW on millimeter-wave images.

The following section introduces some common detection methods in image detection, including open-set detection methods and closed-set detection methods.

Open-set detection:

- CLIP51: This model consists of an image encoder (such as ResNet or Vision Transformer) and a text encoder (such as Transformer). The two encoders map images and text into vector representations and are trained with contrastive learning to maximize the similarity of matching image-text pairs and minimize the similarity of mismatched pairs.

- Grounding DINO49: This model combines the advantages of Deformable DETR and DINO. It uses the deformable attention mechanism to compute attention efficiently on multi-scale feature maps and locates target regions through a dynamic anchor mechanism.

- Swin Transformer50: This hierarchical network architecture uses a shifted window mechanism to compute self-attention within local windows and gradually expands the receptive field across layers, extracting features from local to global; this makes it suitable for processing high-resolution images.
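The contrastive objective described for CLIP can be sketched as a symmetric cross-entropy over the image-text similarity matrix of a batch, where row i of each embedding matrix belongs to the same pair. The fixed temperature of 0.07 is an assumption (CLIP learns it, starting from that value), and the function names are hypothetical.

```python
import numpy as np

def log_softmax(x, axis):
    x = x - x.max(axis=axis, keepdims=True)
    return x - np.log(np.exp(x).sum(axis=axis, keepdims=True))

def clip_contrastive_loss(img_emb, txt_emb, temperature=0.07):
    """Symmetric InfoNCE for N matched pairs: row i of img_emb pairs with row i of txt_emb."""
    img = img_emb / np.linalg.norm(img_emb, axis=1, keepdims=True)
    txt = txt_emb / np.linalg.norm(txt_emb, axis=1, keepdims=True)
    logits = img @ txt.T / temperature                         # (N, N) scaled cosine similarities
    idx = np.arange(logits.shape[0])
    loss_i2t = -log_softmax(logits, axis=1)[idx, idx].mean()  # each image picks its text
    loss_t2i = -log_softmax(logits, axis=0)[idx, idx].mean()  # each text picks its image
    return (loss_i2t + loss_t2i) / 2

emb = np.eye(3)
print(clip_contrastive_loss(emb, emb) < clip_contrastive_loss(emb, emb[[1, 2, 0]]))  # True
```

Correctly matched pairs give a loss near zero, while a batch whose texts are shifted by one position is heavily penalized.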

Closed-set detection:

- Faster R-CNN25: This is a two-stage object detection model. It first uses a Region Proposal Network (RPN) to generate candidate regions (proposal boxes), and then classifies and refines these candidate regions using features from a convolutional backbone (typically ResNet or VGG), achieving high-precision object detection.

- EfficientNet54: This is a series of convolutional neural networks designed for image classification. It employs a compound scaling strategy to systematically adjust the network's depth, width, and resolution, optimizing parameter and computational efficiency and significantly reducing computational requirements while maintaining high accuracy.

- RetinaNet55: This is a single-stage object detection model built on a Feature Pyramid Network (FPN). It makes predictions on feature maps at different scales and uses the Focal Loss to address the class imbalance problem, achieving efficient object detection.

- YOLOv856: This model adopts an improved network architecture and optimization techniques, integrating object detection, classification, and segmentation tasks into an efficient single-stage model. By using CSPNet and other optimization strategies, it provides higher detection accuracy and inference speed.
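RetinaNet's Focal Loss, mentioned above, down-weights well-classified examples by a factor \((1-p_t)^{\gamma}\). A minimal binary version follows; α = 0.25 and γ = 2 are the defaults reported in the RetinaNet paper, and the function name is hypothetical.

```python
import numpy as np

def focal_loss(p, y, alpha=0.25, gamma=2.0, eps=1e-12):
    """Mean binary focal loss -alpha_t * (1 - p_t)**gamma * log(p_t).
    p: predicted foreground probabilities; y: labels in {0, 1}."""
    p = np.clip(np.asarray(p, dtype=float), eps, 1 - eps)
    y = np.asarray(y)
    p_t = np.where(y == 1, p, 1 - p)              # probability of the true class
    alpha_t = np.where(y == 1, alpha, 1 - alpha)  # class-balancing weight
    return float(np.mean(-alpha_t * (1 - p_t) ** gamma * np.log(p_t)))

easy = focal_loss([0.95], [1])  # confident and correct: heavily down-weighted
hard = focal_loss([0.30], [1])  # uncertain: keeps most of its loss
```

With γ = 0 and α = 0.5 the expression reduces to half the ordinary binary cross-entropy, which is a quick sanity check.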

Comparative experiments

In Table 4, we compare the Open-MMW algorithm with state-of-the-art closed-set and open-set detection algorithms. The comparative experiments were conducted on the MMW-L dataset, using a computer equipped with an NVIDIA RTX 3060 GPU. Training parameters such as epochs and batch size were uniformly set, and the experiments were carried out in the same environment. All models were implemented on MMDetection.

It is worth noting that, by inheriting the lightweight reparameterization structure of the baseline model, our model achieves better results with fewer parameters and faster inference. On mAP@0.5 and mAP@[0.5–0.95], compared with state-of-the-art open-set algorithms, our model outperforms Grounding DINO by 33.2% and 17.3%, CLIP by 16.4% and 17.8%, Swin-T by 15.3% and 10.9%, and YOLO-World by 14.2% and 10.3%, respectively. These results show that our model leads the open-set methods in this comparison. Compared with closed-set algorithms, we outperform Faster R-CNN by 5.4% and 3.1%, EfficientNet by 36.2% and 30.4%, RetinaNet by 3.8% and 6.3%, and YOLOv8 by 3.3% and 4.8%. Although the margin over closed-set algorithms is smaller, Open-MMW, as an open-set multimodal interaction detector, also has a zero-shot detection capability that lets it detect objects it was not trained on, an advantage that closed-set algorithms lack.

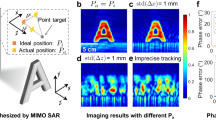

Fig. 9. Visualization of the experimental results of state-of-the-art open-set and closed-set detection methods and Open-MMW.

As shown in Fig. 9, we present the results of eight comparative experiments. Although most models detect some suspicious objects, their detection accuracy and stability differ significantly. Traditional models such as Faster R-CNN and RetinaNet tend to miss or falsely detect objects against complex backgrounds or in partially occluded scenes. Multimodal models such as CLIP and Swin-T perform well when the target objects have clear semantic information and strong visual contrast, but they adapt poorly to the specific characteristics of millimeter-wave images, which makes their detections unstable. YOLOv8 is fast, but it needs improvement in detail processing and small object detection.

In contrast, our proposed model, after incorporating multi-scale convolution and cross-attention mechanisms, can better integrate feature maps of different scales. This enhances the detection accuracy and robustness of suspicious objects of varying sizes and shapes. Particularly in complex backgrounds and partially occluded scenarios, our model demonstrates higher detection accuracy and stability, significantly reducing missed and false detections.

Ablation experiments

We conducted ablation experiments on four modules: Multi-Conv, TIB, I-TM, and T-IA; the results are shown in Table 5. Multi-Conv replaces the ordinary convolutions in the YOLO detector's backbone with multi-scale convolutions. TIB applies attention training to the three output feature maps of different dimensions produced by the YOLO detector. The I-TM layer updates image features with text features, while the T-IA layer updates the text embeddings based on the updated image features.

The experimental results show that, on mAP@0.5, Multi-Conv improves performance by 7.9%, TIB by 4.7%, and the I-TM and T-IA layers by 1.4% and 1.2%, respectively. Each module therefore contributes to the overall gain.

Specifically, the Multi-Conv module successfully enables the model to learn multi-band information from the original features, combining high-resolution shallow features, which is more conducive to improving the detection accuracy of small objects. The TIB module effectively captures long-range dependencies between different positions in the image by integrating output feature maps of different dimensions, enhancing overall detection performance. The self-attention mechanism dynamically adjusts the weight of each feature by calculating the similarity between input features, allowing the model to focus on more meaningful features while ignoring noise and irrelevant features, thus improving detection accuracy.

Although the I-TM and T-IA layers bring relatively small accuracy gains, they play a crucial role in recognizing previously unseen categories, i.e., zero-shot detection. These layers integrate visual and textual features more effectively, producing high-quality image-text pairs that give the model richer contextual information and improve its adaptability and recognition performance in open scenarios.

In conclusion, the ablation experiment results clearly demonstrate that the Multi-Conv and TIB modules play significant roles in improving detection accuracy, while the I-TM and T-IA layers enhance the model’s zero-shot detection capabilities. These improvements enable our Open-MMW model to perform exceptionally well in millimeter-wave image detection tasks, providing a solid foundation for future research on multimodal interaction models.

We also found that increasing the size of the training dataset significantly improves the object detection performance of Open-MMW; the results are shown in Table 6. We attribute this mainly to the wider and more diverse range of samples in a larger dataset, from which the model learns richer and more comprehensive feature representations. This improves the model's adaptability to different scenes and complex environments, as well as its robustness and generalization in practical applications. By extracting fine-grained features from more data, Open-MMW identifies and locates targets more accurately, improving the precision and reliability of its detections. These results indicate that Open-MMW benefits from training on broader datasets, reflecting the learning capacity of open-set detection.

In the selection of the text encoder, we compared two different text encoders: BERT text encoder and CLIP text encoder. The BERT text encoder works by employing a bidirectional Transformer architecture, encoding the input text through a self-attention mechanism while considering the context of words. It learns deep language representations through pre-training tasks such as Masked Language Model (MLM) and Next Sentence Prediction (NSP), generating rich text vector representations. The CLIP text encoder works by using a Transformer architecture to encode the input text, capturing contextual relationships in the text through a self-attention mechanism and generating a fixed-length text vector representation. This text vector is compared with the image vector generated by the image encoder during multimodal contrastive learning, ensuring that matching image-text pairs have similar representations in the vector space, thus achieving semantic alignment and mutual understanding between images and text.

During training, we compared two modes: Frozen and Fine-tune. As shown in Table 7, using the CLIP text encoder improves mAP@0.5 by 11.4% and mAP@[0.5–0.95] by 10%, because the CLIP text encoder, pre-trained on image-text pairs, provides better vision-centric embeddings. Freezing the CLIP text encoder gives better accuracy than fine-tuning it, likely because the millimeter-wave image data are low-quality and noisy: a fine-tuned text encoder may overfit the training data and lose the general features and semantic information learned during pre-training, reducing its generalization ability. We therefore use a frozen CLIP text encoder in the proposed model.

Discussion

In this study, we proposed Open-MMW, a novel open vocabulary detection algorithm for millimeter-wave image detection. Building on the YOLO-World detector framework, we added modules that strengthen feature extraction from millimeter-wave images, significantly improving the model's performance against complex backgrounds and in zero-shot detection. Extensive experiments on both private and public datasets validate the effectiveness of the proposed method: compared with the baseline model, Open-MMW improves precision by 13.9%, recall by 13.7%, mAP@0.5 by 14.2%, and mAP@[0.5–0.95] by 10.3%. Beyond this overall gain in detection capability, the model shows strong zero-shot detection performance, recognizing unseen categories of hidden objects.

By introducing Multi-Scale Convolution (Multi-Conv) and the Task-Integrated Block (TIB), the model better separates high- and low-frequency features in millimeter-wave images, improving the accuracy of small object detection. The Text-Image Interaction Module (TIIM) further strengthens the association between images and text, enabling the model to understand and match images with textual descriptions more accurately.

The main contribution of this research is the first introduction of open vocabulary detection into the field of millimeter-wave imaging, with strong zero-shot performance. This provides a new solution for millimeter-wave image detection and opens new research directions for multimodal interaction models. Through these improvements and optimizations, Open-MMW offers practical technical support for security detection and automatic recognition applications.
In future work, we will pursue three directions:

1. Dataset Expansion and Diversity Enhancement: To improve the robustness and generalization ability of the model in practical applications, the existing dataset should be further expanded and enriched. Specifically, it is necessary to collect more diverse images of concealed objects and human bodies under various scenarios, especially millimeter-wave images captured from different poses, angles, and with various clothing occlusions. This will help enhance the representativeness of the dataset and enable the model to better adapt to the complex and dynamic detection tasks in real-world environments.

2. Lightweight Models and Real-Time Applications: Making the model lighter and more efficient is crucial. For example, research can be conducted on how to reduce the number of model parameters and the computational complexity through techniques such as model pruning and quantization while maintaining high detection accuracy. Additionally, exploring ways to further improve inference speed for real-time detection will enable the model to run efficiently on mobile devices or in resource-constrained environments.

3. Cross-Domain Applications and Collaboration: The technology of the model can be extended to other fields, such as medical imaging, traffic monitoring, and smart home systems. Through cross-domain collaboration and applications, the potential of millimeter-wave imaging and multi-modal fusion technology in various scenarios can be explored.

Data availability

The datasets generated during and/or analysed during the current study are available from the corresponding author on reasonable request.

References

Yurduseven, O. Indirect microwave holographic imaging of concealed ordnance for airport security imaging systems. Progress Electromagnet. Res. 146, 7–13 (2014).

Accardo, J. & Chaudhry, M. A. Radiation exposure and privacy concerns surrounding full-body scanners in airports. J. Radiation Res. Appl. Sci. 7 (2), 198–200 (2014).

Yang, X. et al. A novel deformable body partition model for MMW suspicious object detection and dynamic tracking. Sig. Process. 174, 107627 (2020).

Strecker, H. A local feature method for the detection of flaws in automated x-ray inspection of castings. Sig. Process. 5 (5), 423–431 (1983).

Chen, H. M. et al. Imaging for concealed weapon detection: a tutorial overview of development in imaging sensors and processing. IEEE. Signal. Process. Mag. 22 (2), 52–61 (2005).

Sheen, D. M., McMakin, D. L. & Hall, T. E. Cylindrical millimeter-wave imaging technique for concealed weapon detection. 26th AIPR Workshop: Exploiting New Image Sources and Sensors. Proc. SPIE 3240, 242–250 (1998).

Sheen, D. M., McMakin, D. L. & Hall, T. E. Three-dimensional millimeter-wave imaging for concealed weapon detection. IEEE Trans. Microwave Theory Tech. 49 (9), 1581–1592 (2001).

García-Rial, F. et al. Combining commercially available active and passive sensors into a millimeter-wave imager for concealed weapon detection. IEEE Trans. Microwave Theory Tech. 67 (3), 1167–1183 (2018).

Andrews, D. A. et al. Active millimeter wave sensor for standoff concealed threat detection. IEEE Sens. J. 13 (12), 4948–4954 (2013).

Sheen, D. M. et al. Near-field millimeter-wave imaging for weapons detection. Applications of Signal and Image Processing in Explosives Detection Systems. Proc. SPIE 1824, 223–233 (1993).

Vahidpour, M. & Sarabandi, K. Millimeter-wave doppler spectrum and polarimetric response of walking bodies. IEEE Trans. Geosci. Remote Sens. 50 (7), 2866–2879 (2012).

Bertl, S. & Detlefsen, J. Effects of a reflecting background on the results of active MMW SAR imaging of concealed objects. IEEE Trans. Geosci. Remote Sens. 49 (10), 3745–3752 (2011).

Zheng, C. et al. A passive millimeter-wave imager used for concealed weapon detection. Progress Electromagnet. Res. B. 46, 379–397 (2013).

Al-Shoukry, S. An automatic hybrid approach to detect concealed weapons using deep learning. ARPN J. Eng. Appl. Sci. 12 (16), 4736–4741 (2017).

Liu, C., Yang, M. H. & Sun, X. W. Towards robust human millimeter wave imaging inspection system in real time with deep learning. Progress Electromagnet. Res. 161, 87–100 (2018).

López-Tapia, S., Molina, R. & de la Blanca, N. P. Using machine learning to detect and localize concealed objects in passive millimeter-wave images. Eng. Appl. Artif. Intell. 67, 81–90 (2018).

Nguyen, N. D. et al. An evaluation of deep learning methods for small object detection. J. Electr. Comput. Eng. 2020 (1), 3189691 (2020).

Grossman, E. N. & Miller, A. J. Active millimeter-wave imaging for concealed weapons detection. Passive Millimeter-Wave Imaging Technology VI and Radar Sensor Technology VII. Proc. SPIE 5077, 62–70 (2003).

Kemp, M. C. Millimetre wave and terahertz technology for detection of concealed threats: a review. 2007 Joint 32nd International Conference on Infrared and Millimeter Waves and the 15th International Conference on Terahertz Electronics, 647–648 (IEEE, 2007).

Haworth, C. D. et al. Detection and tracking of multiple metallic objects in millimetre-wave images. Int. J. Comput. Vision. 71, 183–196 (2007).

Shen, X. et al. Detection and segmentation of concealed objects in Terahertz images. IEEE Trans. Image Process. 17 (12), 2465–2475 (2008).

Martínez, O. et al. Concealed object detection and segmentation over millimetric waves images. 2010 IEEE Computer Society Conference on Computer Vision and Pattern Recognition Workshops, 31–37 (IEEE, 2010).

Liu, T. et al. Concealed object detection for activate millimeter wave image. IEEE Trans. Industr. Electron. 66 (12), 9909–9917 (2019).

Bharati, P. & Pramanik, A. Deep learning techniques—R-CNN to Mask R-CNN: a survey. Computational Intelligence in Pattern Recognition: Proceedings of CIPR 2019, 657–668 (2020).

Ren, S. et al. Faster R-CNN: towards real-time object detection with region proposal networks. Adv. Neural Inf. Process. Syst. 28 (2015).

Redmon, J. et al. You only look once: unified, real-time object detection. Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 779–788 (2016).

Pang, L. et al. Real-time concealed object detection from passive millimeter wave images based on the YOLOv3 algorithm. Sensors 20 (6), 1678 (2020).

Sunkara, R. & Luo, T. No more strided convolutions or pooling: a new CNN building block for low-resolution images and small objects. Joint European Conference on Machine Learning and Knowledge Discovery in Databases, 443–459 (Springer Nature Switzerland, 2022).

Su, Y. et al. Enhancing concealed object detection in active millimeter wave images using wavelet transform. Sig. Process. 216, 109303 (2024).

Cheng, T. et al. YOLO-World: real-time open-vocabulary object detection. Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 16901–16911 (2024).

Yu, F. & Koltun, V. Multi-scale context aggregation by dilated convolutions. arXiv preprint arXiv:1511.07122 (2015).

Sun, P. et al. Multi-source aggregation transformer for concealed object detection in millimeter-wave images. IEEE Trans. Circ. Syst. Video Technol. 32 (9), 6148–6159 (2022).

Niu, Z., Zhong, G. & Yu, H. A review on the attention mechanism of deep learning. Neurocomputing 452, 48–62 (2021).

Zhu, Y. et al. Conditional text image generation with diffusion models[C]. Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition. : 14235–14245. (2023).

Cho, H., Wang, J. & Lee, S. Text image deblurring using text-specific properties[C]. Computer Vision–ECCV. : 12th European Conference on Computer Vision, Florence, Italy, October 7–13, 2012, Proceedings, Part V 12. Springer Berlin Heidelberg, 2012: 524–537. (2012).

Matsushima, T. et al. Deployment-efficient reinforcement learning via model-based offline optimization. arXiv preprint arXiv:2006.03647 (2020).

Yeom, S. et al. Real-time outdoor concealed-object detection with passive millimeter wave imaging. Opt. Express 19 (3), 2530–2536 (2011).

Yeom, S. et al. Real-time concealed-object detection and recognition with passive millimeter wave imaging. Opt. Express 20 (9), 9371–9381 (2012).

Guo, D. et al. Suspicious object detection for millimeter-wave images with multi-view fusion Siamese network. IEEE Trans. Image Process. (2023).

Guo, C., Hu, F. & Hu, Y. Concealed object detection for passive millimeter-wave security imaging based on task-aligned detection transformer. IEEE Trans. Instrum. Meas. 72, 1–13 (2023).

Shao, S. et al. Objects365: a large-scale, high-quality dataset for object detection. Proceedings of the IEEE/CVF International Conference on Computer Vision 8430–8439 (2019).

Lin, T. Y. et al. Microsoft COCO: common objects in context. Computer Vision–ECCV 2014: 13th European Conference, Zurich, Switzerland, September 6–12, 2014, Proceedings, Part V 740–755 (Springer International Publishing, 2014).

Zareian, A. et al. Open-vocabulary object detection using captions. Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition 14393–14402 (2021).

Gu, X. et al. Open-vocabulary object detection via vision and language knowledge distillation. arXiv preprint arXiv:2104.13921 (2021).

Zhong, Y. et al. RegionCLIP: region-based language-image pretraining. Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition 16793–16803 (2022).

Zhou, X. et al. Detecting twenty-thousand classes using image-level supervision. European Conference on Computer Vision 350–368 (Springer Nature Switzerland, 2022).

Du, Y. et al. Learning to prompt for open-vocabulary object detection with vision-language model. Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition 14084–14093 (2022).

Minderer, M. et al. Simple open-vocabulary object detection. European Conference on Computer Vision 728–755 (Springer Nature Switzerland, 2022).

Liu, S. et al. Grounding DINO: marrying DINO with grounded pre-training for open-set object detection. arXiv preprint arXiv:2303.05499 (2023).

Liu, Z. et al. Swin Transformer: hierarchical vision transformer using shifted windows. Proceedings of the IEEE/CVF International Conference on Computer Vision 10012–10022 (2021).

Lorenzo, P., Bayliss, M. T. & Heinegård, D. A novel cartilage protein (CILP) present in the mid-zone of human articular cartilage increases with age. J. Biol. Chem. 273 (36), 23463–23468 (1998).

Devlin, J. et al. BERT: pre-training of deep bidirectional transformers for language understanding. arXiv preprint arXiv:1810.04805 (2018).

Al-Qizwini, M. et al. Deep learning algorithm for autonomous driving using GoogLeNet. 2017 IEEE Intelligent Vehicles Symposium (IV) 89–96 (IEEE, 2017).

Tan, M. & Le, Q. EfficientNet: rethinking model scaling for convolutional neural networks. International Conference on Machine Learning 6105–6114 (PMLR, 2019).

Li, Y. & Ren, F. Light-weight RetinaNet for object detection. arXiv preprint arXiv:1905.10011 (2019).

Jocher, G., Chaurasia, A. & Qiu, J. YOLO by Ultralytics (Ultralytics, 2023).

Liang, D., Xue, F. & Li, L. Active terahertz imaging dataset for concealed object detection. arXiv preprint arXiv:2105.03677 (2021).

Acknowledgements

C.Y.L. and X.J.Z. contributed equally to this work. The authors acknowledge financial support from the National Natural Science Foundation of China (52272087 and 22201181), the Natural Science Foundation of Shanghai (23ZR1458600), and the Shanghai Rising-Star Program (24QB2706800 and 24YF2745300).

Funding

This work was supported by the Double First-Class Innovation Research Project for People’s Public Security University of China (No. 2023SYL06) and the Opening Project of Shanghai Key Laboratory of Crime Scene Evidence (2024XCWZK02).

Author information

Contributions

Conceptualization, C. J. and C. L.; Data curation, C. J.; Formal analysis, C. J. and C. L.; Funding acquisition, C. L.; Investigation, C. J., C. L. and C. L.; Methodology, C. J. and C. L.; Project administration, C. L.; Resources, C. J.; Software, C. J. and C. L.; Supervision, C. L.; Validation, C. J. and C. L.; Visualization, C. J.; Writing – original draft, C. J.; Writing – review and editing, C. J., C. L. and C. L.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Jiang, C., Li, C. & Zhao, X. Open vocabulary detection for concealed object detection in AMMW image. Sci Rep 15, 29863 (2025). https://doi.org/10.1038/s41598-025-13935-y

DOI: https://doi.org/10.1038/s41598-025-13935-y