Abstract

During regional seismic monitoring, data is automatically analyzed in real-time to identify events and provide initial locations and magnitudes. Monitoring networks may apply automatic post-processing to small events (M < 3) to add and refine picks and improve the event before analyst review. Recently, machine learning algorithms, particularly for phase picking, have matured enough for use in regional monitoring systems. The Southern California Seismic Network has implemented the deep-learning picker PhaseNet in our event post-processing, resulting in about 2–3 times as many picks, particularly S phases, with slightly better pick accuracy than the previous STA/LTA picker (relative to analyst picks). These improvements have led to better epicenter accuracy. We have also developed an automatic post-processing pipeline (ST-Proc) for sub-network triggers, which are collections of nearby phase picks that the real-time system could not associate into an event. ST-Proc uses PhaseNet to find phase picks and the machine learning algorithm GaMMA to associate events. This pipeline is capable of correctly detecting events in 65–70% of triggers containing events with a low false event rate around 5%. Additionally, the GaMMA-determined epicenters are generally accurate (within a few kilometers of the final). Both pipelines have helped to reduce analyst workload and streamline event processing.

Similar content being viewed by others

Introduction

Regional seismic networks are tasked with monitoring seismicity over a specified area. To do this efficiently, they employ automated systems to detect events which may be further reviewed and refined by analysts. Some seismic networks implement post-processing for small magnitude events (M < ~ 3) to improve phase picks and event origins prior to analyst review to reduce workloads and speed up the review process.

Over the past several years, machine learning algorithms, particularly for phase picking and event detection, have become better developed and are ready for use in real-time monitoring environments, though better quantification of their accuracy may be needed1. One example of a successful use is PhaseWorm2, which uses the deep-learning phase picker PhaseNet3 along with pre-existing monitoring software. Another approach by regional seismic networks has been to use machine learning methods to enhance real-time catalogs. The Oklahoma Geological Survey developed easyQuake, a machine-learning-based earthquake detection package that they run daily to find small events missed by the real-time system4. The additional events are added to the earthquake catalog after analyst review. The Texas seismological network has taken a similar approach, using a near real-time machine-learning system to enhance their earthquake catalog and reduce analyst workloads5. This system runs with 10-minute latency for a specific part of western Texas.

The Southern California Seismic Network (SCSN) is the authoritative organization for earthquake monitoring in southern California, USA. For this task, it operates over 400 real-time seismic stations6,7 and imports data from around 200 stations operated by partner networks (Fig. 1). At the SCSN, we decided to first introduce machine learning algorithms into near real-time post-processing. This approach was a promising way to improve our monitoring systems that was easier to implement than modifying the algorithms to work in real-time within our existing system. Our first use was to replace the existing phase picker in our event post-processing pipeline with PhaseNet. Our second use was to develop an automatic processing pipeline for subnet triggers (groups of unassociated picks) that uses PhaseNet for picking and the machine-learning algorithm GaMMA8 for event association. Both of these uses have resulted in more accurate automatic phase picks and event locations, leading to reduced analyst workloads. Both pipelines are currently running in our monitoring operations (at the time of publication).

Map of stations operated by the Southern California Seismic Network (SCSN; blue triangles) or shared by partner networks (light blue squares). The orange pentagon outlines the SCSN’s authoritative monitoring region. The pink star marks the epicenter of the 2019 M7.1 Ridgecrest earthquake, and labels denote places mentioned in the text. Thin grey lines trace major faults13. Upper inset shows regional map with the black box outlining the main mapped area. Maps produced using Matlab R2022b (www.mathworks.com) and a basemap hosted by Esri (www.esri.com).

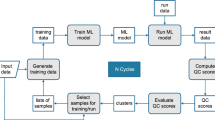

Incorporating machine learning in event post-processing

The SCSN uses the Earthworm/AQMS real-time monitoring system9,10, which was developed in the late 1990 s and remains commonly used by regional seismic networks. It uses a short-term average/long-term average (STA/LTA)11 phase picker (pickEW) and includes an event post-processing module (hypomag). As diagramed in Fig. 2, the real-time system makes phase picks effectively as data is received (i.e., about every second or so) and stores them in a pick ring. The associator module can then retrieve picks from the pick ring to create events. After 70 s from origin time, the event is closed (i.e., no more picks can be added) and moved on to the next steps (magnitude, relocation, post-processing, etc.). Unassociated picks can be grouped into subnet triggers based on manually-determined subsets of stations.

Prior to this study, the event post-processing workflow implemented by the SCSN was as follows: after the real-time system produces an event with a magnitude below 3, it is sent to the hypomag module. The event waveforms with buffers before and after the event determined by the magnitude (usually a ~ 1–2 min window) are sent through pickEW to get a new set of picks. Based on the initial event location, only picks within ± 1.5 s of expected phase arrivals are retained. Initial picks from the real-time system are replaced by the new picks or else retained. After the picks are updated, the event is relocated using the hypoinverse software12, and the magnitude is recalculated. This entire process is completed within a few minutes of the event occurring. As described in this section, we have now implemented the deep-neural-network picker PhaseNet in our event post-processing by using it in place of the pickEW algorithm in the hypomag module to create hypoPN (Fig. 2). We note that hypoPN was implemented on the same server systems as hypomag, and there were no noticeable changes in system performance; however, we also did not conduct a thorough performance analysis as part of this study.

Diagram showing the workflow of the real-time AQMS system (red) and the post-processing modules, hypomag/hypoPN (blue) and ST-Proc (purple, see Sect. 3). Arrows show data movement through the system with the dotted arrow indicating a pathway that is used when ST-Proc events cannot proceed through hypoPN (e.g., no events found, no magnitude determined). Bolded text and box indicate new additions from this study.

Development of hypoPN

The development of hypoPN included several tests (Table 1) that helped to identify issues and challenges that needed to be resolved before the workflow could be brought into operational use. Here, we briefly describe these tests and the more notable challenges that were addressed. We hope this will provide insight to those who are interested in bringing research-grade software into a real-time or near real-time operational environment or in developing software for such use.

Initial evaluation and development

Before full development of hypoPN, we first conducted test 1 to evaluate the potential benefits and drawbacks of switching pickers. For this test, we ran the PhaseNet picker on archived waveforms for each of 1,116 events that were finalized in April 2023. For cases where multiple picks of one phase were made on one station (e.g., P pick on HHZ and HNZ channels), we chose to retain the pick with the highest phase score. Both the phase picks (number, quality, etc.) and locations derived from those picks showed promise for improvement over hypomag, so we proceeded with developing an operational-quality workflow.

From the beginning, we knew of a couple challenges that would need to be addressed. First, the existing version of PhaseNet did not determine polarities (aka first motions) of the phases, which are needed for determining focal mechanisms with the algorithm that the SCSN uses. The Earthworm/AQMS system determines polarities as part of the pickEW algorithm, so we rewrote this portion of pickEW in Python and added it to PhaseNet.

Second, PhaseNet determines a “phase score” that indicates the algorithm’s uncalibrated confidence in the pick. The phase score is a continuous value between 0 and 1. In contrast, the Earthworm/AQMS system expects an integer quality value between 0 and 4, which is later converted into a weight (1.00 to 0.00, respectively) used by the locator. Thus, we needed to create a scheme to map phase score to quality. We tested three different mapping options to see which resulted in the most accurate locations, relative to the analyst-reviewed catalog locations: uniform quality (i.e., all equal), equal spacing (i.e., steps of 0.1 in phase score), and manually determined (Supplementary Table S1). The lattermost was produced by looking at the average, median, and mean of time accuracy (relative to analyst picks) for PhaseNet picks in each mapped quality category. The phase score thresholds were chosen such that the time accuracy statistics were as close as possible to the time errors assigned for each quality. In testing, the uniform quality scheme produced the least accurate locations, although results from all three options were fairly similar. The manually-determined mapping resulted in the most accurate locations, so that approach was used moving forward. We also note that this step may not be as important for networks in which pick qualities do not vary as much or may not apply to those that do not use qualities in this way.

Tests on existing backlog events

Once the initial hypoPN workflow was set up, we conducted tests 2 & 3 on a subset of existing backlog events from April 2020 (Table 1). These test events had gone through the hypomag process but had not yet been reviewed by analysts. This allowed the analysts to review and finalize the events starting with the hypoPN picks and locations while also providing direct comparisons with hypomag. Since test 1 focused on aggregate, statistical evaluation, this approach also provided expert opinions on algorithm performance based on individual picks. Once events are finalized, we do not want the analysts to reanalyze them, so we used a new set of events for each subsequent test.

For test 2, hypoPN was run on 183 events from 6 to 7 April 2020, using the hypomag origin to determine expected phase arrivals. Two notable issues were identified during this test as well as some minor technical adjustments. First, we ran into an issue with picks not properly associating with stations in the database. This was addressed by changing the way PhaseNet outputs channel codes from two letters to the full three letter codes. Unlike pickEW which makes picks on individual channels, PhaseNet uses all three components, when available, to make a pick. Therefore, we decided the easiest approach was to always assign P picks to the vertical channel and S picks to the first horizontal channel. Second, during manual review, the analysts found that many picks were being made on less desirable, lower sampling rate (40 Hz) BH channels even when clear phases could be picked on higher rate (100 Hz) HH channels. Upon inspection, PhaseNet was making the HH channel picks on many occasions, but they had lower phase scores than the BH picks and were tossed. Switching from prioritizing picks by phase score to prioritizing by channel type increased the number of picks retained on desired channel types while allowing for less desirable ones when needed.

For test 3, hypoPN was run on 181 events from 12 to 15 April 2020, using the real-time origin to determine expected phase arrivals and the channel-type pick prioritization. The goals of this test were to ensure that the fixes made after test 2 addressed the identified issues and to reassess hypoPN performance. No additional major issues were discovered, though analysts commented after both tests 2 & 3 that the quality mapping was not ideal, so we discussed testing signal-to-noise ratio (SNR) in the mapping determination.

Near real-time production tests

Once hypoPN was ready for operational use, we conducted tests 4 & 5 in the near real-time production systems, replacing the existing hypomag workflow with hypoPN (Table 1). Test 4 was primarily intended as a technical test of hypoPN in operations and was run on 29 September 2023, during which 93 events were recorded. When the technical issues were sorted out and a new version of PhaseNet that calculated the SNR was set up, we conducted test 5. This test occurred from 8 to 17 November 2023 with 226 events recorded during that period. The only other change from test 4 besides the SNR calculation was removing picks beyond 100 km. Since most of the events going through post-processing are low magnitude (M < ~ 2), we found in previous tests that many of the more distant picks were of low quality or false and were being deleted.

After test 5, we reevaluated the quality mapping scheme. Comparisons of SNR, phase score, and time accuracy of the picks showed that there was less correlation between SNR and time accuracy, particularly for S picks, than there was between phase score and time accuracy (Supplementary Fig. S1). After also testing combinations of SNR and phase score for mapping, we decided to continue with the simplest option of using only phase score, since adding the SNR provided little additional benefit. Additionally, we noticed that the automatic focal mechanisms produced during tests 4 & 5 were low quality or failing due to a lack of available polarities. Because only quality 0 and 1 P-picks are used for determining polarities, we adjusted the quality mapping such that the P picks would be approximately evenly distributed among qualities 0, 1, and 2 rather than more heavily skewed toward quality 2 (Supplementary Table S2), which improved the focal mechanism issues by adding more polarities. This quality distribution is also more similar to that produced by the pickEW algorithm.

With the exception of the quality mapping, we were very satisfied with the outcome of test 5 and decided to leave the hypoPN module running indefinitely. We did one follow-up (test 6) after hypoPN had been running for a few months to ensure that it was still performing well and to determine any areas for further tuning or improvement. Test 6 consisted of 1,903 events from January and February 2024 (Table 1). The relatively large number of events allowed for more thorough analysis than previous tests, such as a performance evaluation by channel/sensor type.

Test performance results

To evaluate the performance of PhaseNet and hypoPN compared to hypomag, we did a direct channel-by-channel comparison of the phase picks from each module and the final set of picks approved by an analyst. We categorized PhaseNet/hypoPN/hypomag picks as retained (analyst keeps or adjusts automatic pick on the same channel), deletion (analyst removes automatic pick from a channel), or miss (pick made by analyst but not automatically on a channel). We then analyzed the time accuracy of retained picks and the hypocenter accuracy of the event relocations using the updated set of picks. One caveat is that because this is a direct channel comparison, an automatic pick that is manually repicked on a different channel on the same station (e.g., CI.JEM.--.HHE to CI.JEM.--.HHN) will count as both a deletion (on the initial channel) and a miss (on the new channel) even if the pick time is about the same. In the AQMS system, each pick is associated with a single channel of a station, typically P with the vertical channel and S with either horizontal. Because PhaseNet always assigns S picks to the first horizontal channel, hypoPN may have a higher number of picks repicked on a different channel than we would otherwise expect (e.g., high quality automatic picks that end up on the noisier channel are deleted and repicked by an analyst on the quieter channel).

We also note that the differences between tests may be somewhat dependent on the events analyzed. Tests 2 & 3 were conducted on backlog events that had not yet been revised by analysts. These tended to be smaller magnitude events that were dominated by aftershocks of the 2019 M7.1 Ridgecrest earthquake and a swarm on the San Jacinto fault (especially test 2). In contrast, tests 1 & 4–6 were conducted on recent or real-time seismicity and, therefore, had more widely dispersed events throughout the region, though both tests 1 & 6 contained small swarms (~ 150 events) in the Imperial Valley. Generally, more accurate real-time picks and origins are likely to be less improved by the change in picker algorithm, so events in regions with better station coverage, quieter stations, etc. are expected to have smaller differences among the hypoPN, hypomag, and final picks and origins.

Overall, PhaseNet/hypoPN produced about twice as many picks as hypomag; however, a somewhat higher percentage were deleted or repicked on a different channel by an analyst (Fig. 3a). This was especially true for the first two tests that prioritized picks by phase score rather than channel type. On the other hand, PhaseNet/hypoPN missed significantly fewer picks than hypomag (Fig. 3b). The increase in the number of picks was less for P picks (roughly 50%) compared to S picks (roughly 200–300%) (Fig. 3c).

We also considered the time accuracy of the picks compared to the final analyst-approved picks. For channels with both an automatic and final pick, we calculated the absolute time difference of the picks and then calculated aggregate statistics for each module and each test (Fig. 3d–f). For all phases together, PhaseNet/hypoPN picks had slightly better median time accuracy, somewhat better mean time accuracy, and lower standard deviation than hypomag picks. While this was also true for P phases, the differences between the two modules were larger for the S phases.

Comparison of phase pick performance results from hypoPN tests. HM (red/yellow) indicates the hypomag performance. PN (dark/light blue) indicates the PhaseNet or hypoPN performance. (a) The average number of picks per event for each test. Lighter colors denote retained picks, and darker colors and percentages indicate picks that were deleted or repicked on a different channel during analyst review. (b) The average number of missed picks per event for each test. (c) The average number of retained picks per event for each test. Darker and lighter colors denote P and S picks, respectively. (d–f) The median (small marker) and average (large marker) of the absolute pick time accuracy (relative to analysts) for each test and the specified pick type (all, P, S). The lines indicate the standard deviation from the average. Red/yellow circles and blue/light blue triangles mark hypomag and PhaseNet/hypoPN performance, respectively.

To evaluate whether the improved picks also resulted in improved event origins, we compared the epicentral locations and depths from hypomag and hypoPN/PhaseNet to the final ones. As previously noted, the location and depth are somewhat affected by the pick weights determined from the quality ratings. Tests 1–5 all used the same quality mapping thresholds (0.98, 0.95, 0.85, 0.5), although test 1 included a couple other options as well. While test 6 used only slightly different thresholds (0.97, 0.93, 0.6, 0.5) determined after test 5, the distribution of qualities was notably different because the phase score distribution skews toward higher values (e.g., Supplementary Table S2, Supplementary Fig. S1). For all tests, picks with phase scores below 0.5 were excluded and not used.

Test 3 provided the best comparison of the hypomag and hypoPN modules because it was conducted after major technical issues were addressed, which resulted in more picks being properly included, and before hypomag was replaced in the operational system. All of the earthquakes in this test set were well-located by both hypomag and hypoPN, with epicentral deviations from the final within 5 km and depth differences within 7.5 km (Fig. 4). The hypoPN epicenters were more accurate (all within ~ 1 km) and had lower standard deviation. While the average depth differences from both modules were approximately equal, the hypoPN depths had a lower standard deviation. Both modules produced depths that tended to be shallower than the final, especially hypoPN. The distributions of differences between the epicenters and depths from both modules show that they agreed on many events (Fig. 4c,f). Two regional events were not finalized and excluded from the location comparisons.

Location performance results from hypoPN test 3. Histograms of (a–c) epicentral and (d–f) depth differences among hypomag, hypoPN, and final origins. (g, h) Comparisons of epicentral and depth differences, respectively, between hypomag and final origins and hypoPN and final origins.

Location performance results from hypoPN test 6 for 1,470 events that were not finalized from hypoPN origins (i.e., events needing manual adjustment). Histograms of (a–c) epicentral and (d–f) depth differences among hypomag, hypoPN, and final origins. Events with differences > 10 km not included. (g, h) Comparisons of epicentral and depth differences, respectively, between hypomag and final origins and hypoPN and final origins. In (g), 3 outliers (offsets > 130 km) are not plotted.

For the operational performance assessment, we focus on test 6 since it has the largest event set and compare it to the real-time system epicenters and depths. Similar to previous tests, hypoPN produced more accurate epicenters and similar, though skewed shallow, depths compared to the real-time system with lower standard deviations (Fig. 5). Note that the default starting depth for the location inversion was 10 km for the real-time system and 0 km for post-processing at the time of analysis, which may partially account for the shallower hypoPN depths (1-D velocity models were identical). Locations with poorer initial (i.e., real-time) epicenters were less likely to be improved, including three outliers with offsets from final of > 130 km. Because the post-processing modules enforce a maximum 1.5-second deviation from the expected arrival, a very poor initial location is difficult to correct. For a few events, hypoPN was farther off from the final epicenter than the real-time system (i.e., made the event worse). Because this test had a wider range of event locations, we also looked for any spatial trends (Fig. 6). The events hypoPN performed the worst on were primarily in areas with relatively high seismicity and/or sparser station coverage (e.g., near the Mexican border, offshore). The real-time system also tends to have more difficulty in these areas, which may affect hypoPN’s performance; however, its poorly located events are also more widespread, including in areas where hypoPN performs well (e.g., Fig. 6c). Overall, hypoPN is providing a clear improvement in epicenters and depths compared to the real-time origins.

In general, hypoPN performs more accurately on both phase picks and origins than hypomag and the real-time system. Figure 7 shows waveforms, picks, and expected phase arrivals for an example event that demonstrates a typical benefit of using hypoPN in event post-processing. The real-time system misidentified some of the S picks as P picks, which led to a poor location. After applying the hypomag process, the event looked similar with the same mistakes made, since hypomag works very similarly to the real-time system. However, hypoPN can correct the misidentified phase picks, leading to a more accurate origin.

Maps of epicentral distance offsets from test 6 for 1,470 events that were not finalized from hypoPN origins (i.e., those with manual adjustment). Markers indicate final epicenter of event. In (a, b), colors show the absolute offset from final for real-time (RT) and hypoPN (PN), respectively, for all events. Though the color scale is saturated at 10 km, events with greater values are plotted. In (c), colors show the difference in offsets from (a, b) for events with an absolute offset difference > 5 km. Purple (or green) indicates that the hypoPN (or real-time) location was more accurate. Maps produced using the matplotlib basemap package (https://doi.org/10.5281/zenodo.7275322).

Example event (2023-11-07 22:20) from (a) the real-time system, (b) hypomag, and (c) hypoPN. Plots are from our operational web-based event review page. Light green bars indicate expected phase arrivals based on the module’s event origin. Red and pink flags indicate P and S picks, respectively. Waveforms are colored by channel type.

Performance results for the initial evaluation of using PhaseNet + GaMMA for subnet trigger processing. GaMMA-detected events or misses from triggers manually converted to events in the catalog counted by (a) event and geographic type and (b) magnitude. Maps of (c) missed and correctly detected events (final catalog locations) and (d) false events (GaMMA locations). In (c), the colors indicate the number of events associated from a single trigger. (e) Histogram and (f) map of the epicenter offsets for correctly detected events. Two events with offsets >60 km not shown in histogram (one is an additional detection). Markers plotted at the location of the GaMMA-determined epicenter. Maps produced using the matplotlib basemap package (10.5281/zenodo.7275322).

Effects on analyst workload

We conducted a timed review test to compare how quickly an analyst (author ZN) could review a hypomag event versus a hypoPN event. We chose 32 pairs of unreviewed events that had similar magnitudes and locations (Supplementary Fig. S2). After review and adjustment, the final locations were mostly within 10 km (max 40 km) of each other and the magnitudes within ~ 0.2 units (max 0.4 units). The hypoPN events were typically faster to review, on average by ~ 30 s, though the times varied based on magnitude, location, and amount of waveform data available (Supplementary Fig. S3a). Adding new picks typically takes analysts about the same time as adjusting existing picks and more time than deleting picks, which is very quick, so starting a review with more picks can help speed it up, especially if most need little-to-no adjustment.

During large earthquake sequences or swarms, the number of events can greatly outpace the ability of the analysts to manually review them. This results in a backlog of detected events with automatic origins and phases but still waiting for analyst review and finalization. The SCSN experienced a large backlog after the M7.1 Ridgecrest sequence in July 2019. One of our hopes with developing hypoPN was that it would be able to speed up backlog review by providing better automatic phases and origins. We tested this with 1,064 unreviewed events from January 2020 that had gone through hypomag but were not good enough to be finalized without adjustment. We applied hypoPN to these events and recorded how many met the quality criteria for an analyst to finalize from the automatic phases and origins (i.e., without manual adjustment). Overall, 476 (45%) of the events were finalized directly from hypoPN, with numbers varying from 17 to 70% per day (Supplementary Fig. S3b), allowing a few weeks of analyst work to be completed in less than a workday. This is very promising for speeding up backlog processing, at least for periods with a relatively low-to-moderate seismicity rate. Further testing will be required to see if this holds for very high seismicity rates, such as those observed at the beginning of the Ridgecrest sequence when it was common for each event window to contain 3 or more earthquakes.

Automatic subnet trigger processing (ST-Proc)

The real-time monitoring system maintains an updated list of phase picks that it has detected in the data, many of which get associated into events. The picks that remain may be compiled into subnet triggers, collections of unassociated phase picks that may be related. Subnets are manually-determined subsets of the network consisting of ~ 15–25 stations that are located in a smaller region and are likely to detect similar events. While most subnet triggers are noise, a small percentage (< ~ 10%) do contain local, regional, or teleseismic events. Reviewing triggers, specifically adding local events to the catalog from them, can be time-consuming for analysts, so having automatic trigger processing could be very helpful for reducing analyst workload. With this in mind, we designed ST-Proc, a pipeline for automatically finding events in subnet triggers. It uses PhaseNet to find phase picks and the machine-learning-based associator GaMMA to identify potential events from those picks (Fig. 2). If any events are detected, they are inserted into the event database and then sent through hypoPN to add data from network stations outside the subnet and improve the event before analyst review.

Initial evaluation testing

Our first goal was to test whether GaMMA is capable of accurately finding and locating events in the subnet triggers while maintaining a low false event rate. We used the same version of PhaseNet as for hypoPN. We first applied GaMMA to a set of hypoPN picks from a known event to determine an initial configuration of parameters. We then tested 380 canceled triggers (i.e., with no events) from 4 to 11 April 2024 and 52 triggers that had been converted into events, 15 from 4 to 11 April 2024 and 37 from February 2024. In this test, GaMMA was able to recover events from 71% (37/52) of good triggers with only a 3% (10/380) false event rate (Fig. 8a). Additionally, GaMMA found two events each in two of the triggers, which were confirmed as events that the analysts had added to the catalog. The GaMMA-derived locations of the events were also generally accurate, with most located within 2 km of the analyst location and only a couple outliers with large distance offsets (Fig. 8e,f). The missed events tended to be low magnitude (M < 1) events in sparser parts of the network or events near the edges of the network (Fig. 8b,c). Typically, there were not enough phases (minimum of 4) detected on the subnet stations to associate the event. In contrast, the false events were mostly located in areas with dense station coverage and high noise levels, primarily the greater Los Angeles metro region (Fig. 8d). The 3% false event rate was deemed acceptable as it does not exceed the observed false event rate of our real-time system (roughly ~ 10% of detected events are deleted by analysts).

Several parameter adjustments were tested, but nearly all had similar performance to the initial configuration. Given that the missed events typically had too few phase picks, this suggests that the GaMMA configuration has less influence on ST-Proc’s ability to correctly identify events than the stations chosen to compose a subnet.

We conducted a second evaluation test on 486 triggers recorded from 26 to 31 May 2024 (Supplementary Fig. S4). These dates included a high trigger rate and a swarm in Baja California on the southernmost boundary of the SCSN reporting region, which provided a more challenging test for ST-Proc. This test resulted in a 68% (27/40) true positive rate and a 6% (28/446) false positive rate. Eight of the triggers generated multiple events, for a total of 44; about half of the 17 additional events were true events. The other half were either bad/noise events or split events from the Baja swarm (e.g., the picks on nearby and distant stations were associated into separate events). At least a few of the false positives were true events: a couple were deleted due to locating outside our monitoring region, and a few appeared to be duplicates of events already in the catalog, particularly for the Baja swarm. The distance offsets were not as good, with many > 100 km off; however, the poor locations were mostly for the split Baja events. Overall, ST-Proc was able to perform similarly with a more difficult dataset, which was reassuring for its use in operations.

Development of ST-Proc

Because the results from the evaluation tests were promising, we moved to developing an operational version of ST-Proc. The primary technical hurdle was determining how to have ST-Proc interact with the event database, particularly for cases of multiple events in a single trigger. We decided to have it produce arcin files (hypoinverse format), which our current system is designed to handle. This required updates to AQMS to allow for inserting new events and updating existing event identification numbers.

Once the technical hurdles were addressed, we conducted a backlog test on 236 triggers from 9 to 31 January 2020, which primarily included local earthquakes below M1 (Supplementary Fig. S5). This set of triggers had previously been briefly checked by analysts who removed most of the non-event triggers. This test resulted in a 70% (135/194) true positive rate and a 40% (17/42) false positive rate. Upon further inspection, however, only 2 of the “false” events were truly false (for a 5% false positive rate); the remainder included 1 teleseism, 7 low magnitude regionals, 6 local duplicates (i.e., events that already exist in the database), and 1 event that couldn’t be confidently located, all of which were not wanted in our catalog and deleted. Note that this test did not include analysis of additional events per trigger due to a technical issue that prevented them being inserted into the database. Of the detected non-teleseismic events, 81% (107/132) were located within 5 km of the final epicenter. Before analyst review, all events identified by ST-Proc were passed through hypomag to be assigned a magnitude and, for those that were successfully assigned magnitudes, through hypoPN to be repicked on more channels and relocated. For this test, 91% (139/152) of ST-Proc events received a magnitude and were processed by hypoPN. These results were consistent with the initial evaluation test results, so we continued development.

We started our near real-time production test of ST-Proc on 18 November 2024. As noted above, this implementation involved the transfer of an event between different pipelines (ST-Proc, hypomag, hypoPN), which added complexity and resulted in some technical difficulties, such as infinite loops, that took a couple weeks to identify and address. Therefore, we chose to analyze December 2024 for our production test, when the system was fully automated and functional (Supplementary Fig. S6). The 65% (47/72) true positive rate was slightly lower than previous tests, and the 7% (78/1,079) false positive rate was slightly higher. None of the triggers generated additional events. For the false positives, 6 were regional events and 5 were duplicate events, all of which were deleted from the catalog. The remainder were likely from noise, or may have been events with too few good picks to keep, and were mostly located around the greater Los Angeles area. For the true events except one teleseism, 61% (28/46) were located within ~ 10 km of their final location, most within 2 km. The two detected regionals were poorly located (> 166 km), consistent for events outside the region near the analyzed stations. Most of the remaining poorly located events were below magnitude 0.8. The analysts reported that they were often able to pull small magnitude events from poor GaMMA events, which is consistent with these location analysis results. For these events, there may not have been enough true phase picks available in the trigger for a good location. The missed events were mostly located in areas with lower station coverage, including around the Salton Sea, in the Ridgecrest area, and in the northwestern corner of the monitoring region (Fig. 1). Given the results of this test, we decided to continue using ST-Proc in operations and will look for ways to further improve its performance in the future.

Effects on analyst workload

Unlike hypoPN, ST-Proc is a new automated pipeline that did not have a pre-existing equivalent, making it difficult to directly and quantitatively assess the impacts of its implementation on analyst workloads. However, the SCSN analysts have reported that ST-Proc has helped them to streamline their daily tasks and finish them more quickly. They added that the benefits of not having to manually pull as many events from the subnet triggers, a process requiring several manual steps and waiting for algorithms to run, outweighs the minor trade-off of having more false events that need to be deleted, which has largely been unnoticed. Overall, they have found ST-Proc beneficial for their workflows and support its continued use.

Conclusions

We have incorporated the deep-learning algorithms PhaseNet and GaMMA into post-processing at the Southern California Seismic Network. Replacing the STA/LTA picker with PhaseNet in the event post-processing pipeline has resulted in more picks, especially S phases, with slightly better accuracy, leading to more accurate automatic locations. This enhancement has helped to speed up review and finalization of events, thereby reducing analyst workload. The newly developed subnet trigger processing pipeline, ST-Proc, has reliably been able to identify and locate events from ~ 65–70% of event-containing triggers throughout the SCSN’s monitoring region. The ST-Proc event locations are generally accurate, with most within ~ 5 km of the final location. The false event rate is low (~ 5%), and missed events typically have too few phases recorded in the subnet triggers to associate an event. We were able to incorporate the “out-of-the-box” machine-learning software without additional training or alteration, except for a couple minor changes to PhaseNet (adding polarities and changing channel code outputs). Future work may include a reconfiguration of the subnets to help increase the number of events pulled from triggers and a tuning of the machine learning algorithms, or implementation of different ones, to generate fewer false picks and events.

We hope that this detailed description and analysis will serve as a valuable reference for other seismic networks looking to integrate machine learning algorithms into their operations and can also help algorithm developers better understand the practical applications and integration requirements for use of their algorithms in seismic monitoring systems. Despite the challenges and effort required for integration, machine learning algorithms can improve automatic event picks and locations and help reduce analyst workload when used strategically in seismic monitoring operations.

Data availability

The earthquake catalog and waveform data are provided by the Caltech/USGS Southern California Seismic Network6 and archived at the Southern California Earthquake Data Center7 (https://scedc.caltech.edu/). PhaseNet and GaMMA are available from GitHub (https://github.com/AI4EPS). The AQMS system is available through GitLab (https://gitlab.com/aqms-swg). The hypoPN/ST-Proc software, including the modified version of PhaseNet, will be available from the SCEDC GitHub (https://github.com/SCEDC). Figures were produced with Matlab R2022b (www.mathworks.com) and the matplotlib14 v3.6.2 Python package (10.5281/zenodo.7275322).

References

Mousavi, S. M. & Beroza, G. C. Machine learning in earthquake seismology. Annu. Rev. Earth Planet. Sci. 51, 105–129. https://doi.org/10.1146/annurev-earth-071822-100323 (2023).

Retailleau, L. et al. A wrapper to use a machine-learning‐based algorithm for earthquake monitoring. Seismol. Res. Lett. 93 (3), 1673–1682. https://doi.org/10.1785/0220210279 (2022).

Zhu, W. & Beroza, G. C. PhaseNet: a deep-neural-network-based seismic arrival-time picking method. Geophys. J. Int. 216 (1), 261–273. https://doi.org/10.1093/gji/ggy423 (2019).

Walter, J. I. et al. Putting machine learning to work for your regional seismic network or local earthquake study. Seismol. Res. Lett. 92 (1), 555–563. https://doi.org/10.1785/0220200226 (2021).

Chen, Y., Savvaidis, A., Siervo, D., Huang, D. & Saad, O. M. Near real-time earthquake monitoring in Texas using the highly precise deep learning phase picker. Earth Space Sci. https://doi.org/10.1029/2024EA003890 (2024).

California Institute of Technology (Caltech). Southern California Seismic Network. International Federation of Digital Seismograph Networks (Other/Seismic Network, 1926).

SCEDC. Southern California Earthquake Center. Caltech Dataset https://doi.org/10.7909/C3WD3xH1 (2013).

Zhu, W., McBrearty, I. W., Mousavi, S. M., Ellsworth, W. L. & Beroza, G. C. Earthquake phase association using a Bayesian Gaussian mixture model. J. Geophys. Research: Solid Earth https://doi.org/10.1029/2021JB023249 (2022).

Johnson, C. E. et al. A flexible approach to seismic network processing. IRIS Newsl. 14 (2), 1–4 (1995).

Hartog, J. R., Friberg, P. A., Kress, V. C., Bodin, P. & Bhadha, R. Open-source ANSS quake monitoring system software. Seismol. Res. Lett. 91 (2A), 677–686. https://doi.org/10.1785/0220190219 (2020).

Allen, R. V. Automatic earthquake recognition and timing from single traces. Bull. Seismol. Soc. Am. 68 (5), 1521–1532. https://doi.org/10.1785/BSSA0680051521 (1978).

Klein, F. W. User’s guide to HYPOINVERSE-2000, a fortran program to solve for earthquake locations and magnitudes. US Geol. Surv. Open-File Rep. 2002 – 171. https://doi.org/10.3133/ofr02171 (2002).

U.S. Geological Survey and California Geological Survey, Quaternary fault and fold database for the United States. (Accessed 30 June 2025). https://www.usgs.gov/natural-hazards/earthquake-hazards/faults.

Hunter, J. D. & Matplotlib A 2D graphics environment. Computing Science Engineering. 9 (3), 90–95 (2007).

Acknowledgements

Allen Husker provided helpful comments on the manuscript and necessary support as the SCSN manager. The SCEDC and SCSN are funded through U.S. Geological Survey Grant G10AP00091 and the Southern California Earthquake Center, which is funded by NSF Cooperative Agreement EAR-0529922 and USGS Cooperative Agreement 07HQAG0008.

Author information

Authors and Affiliations

Contributions

G.T. contributed to the planning and design of the project, software development, and data analysis and collection; conducted the performance analysis; and prepared and revised the manuscript. E.Y. contributed to the planning and design of the project, software development, and data access. A.B. and R.T. contributed to the planning and design of the project and software development. W.Z. provided software support and assisted with software development as needed. Z.N., E.J., and N.S. contributed to the data analysis and collection. All authors reviewed the manuscript.

Corresponding author

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Supplementary Information

Below is the link to the electronic supplementary material.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Tepp, G., Yu, E., Bhaskaran, A. et al. Improvements from incorporating machine learning algorithms into near real-time operational post-processing. Sci Rep 15, 28938 (2025). https://doi.org/10.1038/s41598-025-14491-1

Received:

Accepted:

Published:

Version of record:

DOI: https://doi.org/10.1038/s41598-025-14491-1

Keywords

This article is cited by

-

Next-gen earthquake monitoring: leveraging generative AI and deep learning for early warning and response

Journal of Seismology (2026)