Abstract

Remote sensing (RS) images capture spatial–temporal data on the Earth’s surface that are valuable for understanding geographical changes over time. Change detection (CD) is applied in monitoring land use patterns, tracking urban development and evaluating disaster impacts, among other applications. Traditional CD methods often struggle to distinguish genuine changes from irrelevant variations in the data because they compare pixel values without considering their context. Deep feature based methods have shown promise due to their content extraction capabilities. However, feature extraction alone might not be enough for accurate CD. This study proposes incorporating spatial–temporal dependencies to create contextual understanding by modelling relationships between images in the space and time dimensions. The proposed model processes dual time points using parallel encoders, extracting highly representative deep features independently. The encodings from the dual time points are then concatenated and passed through Long Short-Term Memory (LSTM) layers and a decoder. The output from the LSTM is then concatenated with that from the decoder in a space–time feature fusion. This optimizes the representation of spectral, spatial and temporal details in RS images before further analysis of change. This approach aims to maximize authentic information while reducing noise interference by introducing the context of change. Compared to conventional CD methods, the proposed technique achieves a higher overall accuracy of 97.4%, an F1 score of 89% and an intersection over union (IoU) of 86.7% when evaluated on the EGY-BCD dataset. The results demonstrate the potential of incorporating spatial–temporal dependencies in CD tasks for RS images.

Introduction

Remote sensing (RS) imagery serves as a valuable tool to track earth surface changes while revealing spatial and temporal environmental alterations. Change detection (CD) stands as a core function in RS that finds multiple uses including land use tracking and urban expansion analysis together with disaster response and environmental resource management1. The precise identification of changes proves difficult because spectral noise from seasonal changes together with lighting conditions, atmospheric disturbances and sensor discrepancies cause background fluctuations that mask genuine changes2.

Traditional CD methods, such as pixel-based (PBCD) and object-based change detection (OBCD), have been extensively studied. While PBCD techniques (e.g., image differencing, change vector analysis (CVA), principal component analysis (PCA)) are computationally simple, they are highly sensitive to radiometric noise and often yield false positives3. In contrast, OBCD methods attempt to mitigate these issues by incorporating shape, texture, and contextual information. However, they are computationally expensive and need manual parameter tuning, which restricts their scalability3,4,5,6,7 and8.

High-resolution and multi-temporal RS datasets have become more accessible9, which has driven the transition from handcrafted, rule-based approaches to data-driven methods. Deep learning (DL) has established itself as a groundbreaking solution that automates feature extraction while enhancing CD robustness. Chatrabhuj et al. highlight the need to understand subtle fluctuations in river systems through spatial patterns and contextual interpretation, integrating remote sensing, GIS, and AI to monitor complex environmental systems such as river dynamics10.

Convolutional Neural Networks (CNNs) and Long Short-Term Memory (LSTM) networks are particularly prominent in RS research for their ability to model spatial patterns and temporal dynamics, respectively9,11,12,13,14,15,16,17,18,19,20 and21. While CNNs have proven effective at capturing spatial hierarchies, they remain limited in modelling dependencies across time. To overcome this, LSTM-based architectures have been introduced into CD frameworks to process paired RS images22,23,24,25,26 and27. Hybrid models have been found to improve performance by learning inter-image transitions and capturing context between temporal states28,29,30 and31. Despite this, challenges remain: CNNs have limited receptive fields, which restricts them on large, high-resolution images, and when used alone they lack the temporal awareness needed to detect time-related patterns32 and33.

Recent advances in remote sensing have demonstrated the value of integrating spatial, temporal, and semantic data for environmental monitoring10,34,35 and36. Spatial modelling helps detect subtle structural modifications, while temporal modelling is essential for capturing gradual transformations, such as vegetation growth patterns or city expansion29,37,38 and39. Chatrabhuj et al. (2023) emphasized the synergy between remote sensing, GIS, and artificial intelligence in river management systems, demonstrating that combining multi-source datasets enables more accurate monitoring of subtle hydrological changes. Their work highlights the growing importance of contextual analysis and the use of machine learning10. Deep learning models which combine these dimensions offer a more comprehensive understanding of land cover changes by decreasing false detections resulting from noise or insufficient contextual information30 and40.

Architectures such as U-Net have gained popularity due to their encoder-decoder structure, which supports multi-scale feature extraction and spatial detail preservation41,42 and43. Siamese U-Net variants have further advanced CD by comparing bi-temporal inputs in parallel and highlighting feature-level changes14,15,16 and17. Yet most existing models struggle in real-world RS applications, where they must handle temporal misalignment, scale variations and atmospheric inconsistencies.

To address these challenges, this paper introduces DuSTiLNet—a Dual-time point Space–Time fusion LSTM Network—for high-resolution change detection. The proposed DuSTiLNet architecture fuses spatial features from bi-temporal RS images using parallel convolutional encoders and integrates an LSTM layer to model temporal dependencies. The space–time fusion decoder aligns and integrates spatial and temporal representations, enabling the model to effectively capture nuanced changes across time and space. Our model demonstrates improved performance in accurately detecting building changes particularly in complex urban settings. We perform a thorough assessment of the proposed method on the EGY-BCD and WHU datasets, in comparison to other baseline methods to assess its performance.

Methodology

Overview

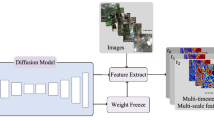

We propose DuSTiLNet, a deep neural network architecture designed to handle sequential image data for change detection and other tasks requiring precise temporal and spatial feature integration. The model consists of two key modules: a custom dual encoder that processes distinct time points independently, followed by a space–time feature fusion mechanism in the decoder, which leverages Long Short-Term Memory (LSTM) networks and dual concatenation points for enhanced spatial–temporal sequential feature modelling. The encoder extracts hierarchical features, and the decoder upsamples and refines the spatial information. The dual concatenation mechanism allows DuSTiLNet to produce high-resolution, spatial–temporal aware output maps, making it suitable for detailed change detection and sequence analysis. The LSTM layers introduce a temporal element, capturing dependencies between the input images at different time points. The temporal design, in which encodings are extracted from the two time points by fully convolutional layers in a custom CNN and concatenated before being processed by the LSTM layers, is a unique feature of the architecture. Finally, the decoder, with an innovative upsampling mechanism, aligns the LSTM-driven temporal insights with the spatial encodings, enhancing the model’s sensitivity to fine-grained space–time patterns.

The training architecture

Figure 1 below represents the training architecture. After loading, the data were pre-processed by resizing each image to a common shape (64 by 64), normalizing the pixel values and reshaping to a batch size of 1. The model takes two input images representing distinct time points, t1 and t2, each with a shape of 64 × 64 × 3, denoting the height, width, and RGB colour channels. These images are processed through two separate encoders (one for each time point) that apply identical sequential convolutional and pooling layers to capture spatial features independently for each time slice. In each encoder, Convolution Layer 1 applies a 2D convolution with 32 filters, kernel size 3 × 3, ReLU activation, and same padding to the input image. This layer captures low-level spatial features in a globally temporal and locally spatial way. Pooling Layer 1, a 2 × 2 max-pooling layer with same padding, then halves the spatial dimensions, retaining critical features while reducing computation. A second convolutional layer with 64 filters, kernel size 3 × 3, ReLU activation, and same padding further refines spatial representations. A second 2 × 2 max-pooling layer further reduces the spatial dimensions. The final convolutional layer with 128 filters and a 3 × 3 kernel is followed by a dropout layer (rate = 0.3) to regularize the model and reduce overfitting.

Overview of the proposed architecture. (a) Bi-temporal input images after pre-processing fed to (b), two identical subnetworks with shared weights for feature extraction in the encoder stage. The encodings are fused in (c) where we have stacking of the spatial features before further processing in LSTM layers in (d), (e) is the decoder stage before fusing both the spatial and temporal features at (f) for further processing of the informed representation for a final greyscale image output highlighting areas of change at (h).

Let \({I}_{1}\in {R}^{64*64*3}\) and \({I}_{2}\in {R}^{64*64*3}\) represent the two input images at time points \({t}_{1}\) and \({t}_{2}\), respectively. The first input tensor I1 goes through the following operations:

\({X}_{1}^{1}=ReLU({W}_{1}*{I}_{1}+{b}_{1})\) where \({W}_{1}\in {R}^{3*3*3*32}\) is the convolution filter, \({b}_{1}\in {R}^{32}\) is the bias, and * denotes the 2D convolution operation with padding same. The output being \({X}_{1}^{1}\in {R}^{64*64*32}\).

The max-pooling operation \({X}_{1}^{2}=MaxPool({X}_{1}^{1},(2,2))\) reduces the spatial dimensions by a factor of 2, resulting in \({X}_{1}^{2}\in {R}^{32*32*32}\).

The second convolution is represented as \({X}_{1}^{3}=ReLU({W}_{2}*{X}_{1}^{2}+{b}_{2})\), where \({W}_{2}\in {R}^{3*3*32*64}\), the output being \({X}_{1}^{3}\in {R}^{32*32*64}\).

The second max-pooling operation \({X}_{1}^{4}=MaxPool({X}_{1}^{3},(2,2))\) results in \({X}_{1}^{4}\in {R}^{16*16*64}\).

The third Convolution \({X}_{1}^{5}=ReLU({W}_{3}*{X}_{1}^{4}+{b}_{3})\), where \({W}_{3}\in {R}^{3*3*64*128}\) outputs \({X}_{1}^{5}\in {R}^{16*16*128}\).
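The shape bookkeeping in the equations above can be checked with a few lines of Python. This is a minimal sketch rather than the authors' implementation: the helper names (`conv2d_same`, `max_pool_2x2`, `conv2d_params`, `trace_encoder`) are hypothetical, and the only inputs are the layer settings stated in the text (3 × 3 kernels, same padding, 2 × 2 pooling, 32/64/128 filters).

```python
# Shape and parameter bookkeeping for one encoder branch (illustrative only).

def conv2d_same(shape, filters):
    """Output shape of a stride-1 convolution with 'same' padding."""
    height, width, _ = shape
    return (height, width, filters)


def max_pool_2x2(shape):
    """Output shape of 2x2 max-pooling with stride 2 and 'same' padding."""
    height, width, channels = shape
    # 'same' padding rounds up, which only matters for odd sizes.
    return ((height + 1) // 2, (width + 1) // 2, channels)


def conv2d_params(kernel, in_channels, filters):
    """Number of weights plus biases in a kernel x kernel convolution."""
    return kernel * kernel * in_channels * filters + filters


def trace_encoder(input_shape=(64, 64, 3)):
    """Return [(layer name, output shape), ...] and the parameter total."""
    shapes = []
    total_params = 0
    shape = input_shape
    for index, filters in enumerate((32, 64, 128), start=1):
        total_params += conv2d_params(3, shape[2], filters)
        shape = conv2d_same(shape, filters)
        shapes.append((f"conv{index}", shape))
        if index < 3:  # the third convolution is followed by dropout, not pooling
            shape = max_pool_2x2(shape)
            shapes.append((f"pool{index}", shape))
    return shapes, total_params


layers, params = trace_encoder()
for name, shape in layers:
    print(name, shape)
print("parameters per encoder branch:", params)
```

The final entry is `(16, 16, 128)`, matching the stated shape of \({X}_{1}^{5}\), and the three convolutions together hold 93,248 weights and biases per branch.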

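After encoding both time points, the resulting spatial features from each encoder are fused via an initial concatenation step. This operation creates a unified representation that incorporates spatial features from both time points, allowing the model to analyse inter-temporal dependencies effectively. The outputs of the two CNN branches are concatenated along the depth axis as \({X}_{concat}=Concat({X}_{1}^{5},{X}_{2}^{5})\), where \({X}_{2}^{5}\in {R}^{16*16*128}\) denotes the output of the same operations applied to \({I}_{2}\), so that \({X}_{concat}\in {R}^{16*16*256}\).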

The concatenated feature map is then flattened and reshaped to serve as input for the temporal modelling layer. This reshaped feature vector is passed through a stack of two LSTM layers, each designed to capture temporal dependencies across the two time points. The first LSTM layer with 128 units and ReLU activation processes the reshaped features and retains sequential output, allowing for deeper temporal feature extraction. A second LSTM layer with 64 units refines the temporal representation, outputting a feature vector that condenses critical temporal relationships across time points. The flattening and re-shaping operations are represented mathematically as \({X}_{flat} =Flatten({X}_{concat})\) . The flatten operation converts the tensor to a 1D vector \({X}_{flat}\in {R}^{65536}\).

The reshaped tensor becomes \({X}_{reshape} \in {R}^{1\times 1\times 65536}\), suitable for LSTM input.
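To make the concatenate, flatten and reshape sequence concrete, the following sketch applies it to nested Python lists standing in for the two 16 × 16 × 128 encoder outputs. The helper names are hypothetical, and reading the 1 × 1 × 65536 tensor as (batch, time steps, features) is an assumption based on the usual LSTM input layout, not something the text states.

```python
# Depth-wise concatenation, flattening and reshaping on plain nested lists.

def constant_map(height, width, channels, value):
    """Build an H x W x C feature map filled with one value."""
    return [[[value] * channels for _ in range(width)] for _ in range(height)]


def concat_depth(a, b):
    """Concatenate two H x W x C feature maps along the channel axis."""
    if len(a) != len(b) or len(a[0]) != len(b[0]):
        raise ValueError("spatial dimensions must match")
    return [[pa + pb for pa, pb in zip(row_a, row_b)]
            for row_a, row_b in zip(a, b)]


def flatten(feature_map):
    """Row-major flattening of an H x W x C feature map into a 1D list."""
    return [value for row in feature_map for pixel in row for value in pixel]


def to_sequence(flat):
    """Wrap a flat vector as (batch=1, time steps=1, features)."""
    return [[flat]]


x1 = constant_map(16, 16, 128, 1.0)  # stand-in for the t1 encoder output
x2 = constant_map(16, 16, 128, 2.0)  # stand-in for the t2 encoder output
x_concat = concat_depth(x1, x2)
x_flat = flatten(x_concat)
x_reshape = to_sequence(x_flat)
print(len(x_concat), len(x_concat[0]), len(x_concat[0][0]))  # 16 16 256
print(len(x_flat))                                           # 65536
```

Each pixel of the concatenated map carries the 128 channels from t1 followed by the 128 channels from t2, which is why the flattened length is 16 × 16 × 256 = 65536.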

The decoder follows a progressive upsampling strategy: two 2 × 2 upsampling layers gradually increase the spatial dimensions, restoring the feature map to the original input resolution. Following each upsampling step, a convolutional layer (with 64 and 32 filters, respectively) with 3 × 3 kernels and ReLU activation further refines the spatial features.

In addition, the decoder adopts a new strategy that concatenates features in the space–time domain, merging the feature maps produced by the decoder with those from the LSTM block. To integrate temporal insights with spatial features in the reconstruction process, the output from the LSTM layer undergoes reshaping and upsampling. The LSTM output is reshaped into a 1 × 1 × 64 tensor and upsampled using a 64 × 64 upsampling layer to align its spatial dimensions with the 64 × 64 feature maps of the encoder-decoder pathway. Mathematically, the LSTM stage is represented as \({h}_{1} =LSTM({X}_{reshape},128)\) and \({h}_{2} =LSTM({h}_{1},64)\), where \({h}_{1}\in {R}^{1*128}\) is the hidden state output from the first LSTM and \({h}_{2}\in {R}^{64}\) is the output of the second.

The LSTM output is then reshaped and upsampled to match the spatial dimensions of the earlier convolutional features, making it compatible for concatenation, as follows: \({h}_{3}=Reshape({h}_{2},(1,1,64))\), resulting in \({h}_{3}\in {R}^{1*1*64}\). The upsampling operation \({h}_{4}=UpSampling2D({h}_{3},(64,64))\) increases the spatial dimensions to match the previous convolution output, giving \({h}_{4}\in {R}^{64*64*64}\).

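This upsampled LSTM output is then concatenated with the final layer of the decoder in a second space–time concatenation step, enabling the model to combine the LSTM’s temporal insights with spatial feature maps from the encoder-decoder pathway and so model the context of changes in remote-sensed images. Writing \({X}_{dec}\in {R}^{64*64*32}\) for the output of the final decoder convolution (32 filters at the restored 64 × 64 resolution), the fusion can be expressed as \({X}_{fused}=Concat({X}_{dec},{h}_{4})\), with \({X}_{fused}\in {R}^{64*64*96}\).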

In the last part of the model, a final convolutional layer with 1 filter, a 3 × 3 kernel, sigmoid activation, and same padding processes the fused space–time features. It produces a single-channel output representing the predicted spatial map, which in this scenario serves as a binary mask indicating the existence of alterations.
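The space–time fusion step described above can be illustrated on plain nested lists. The sketch below is not the authors' code: it assumes nearest-neighbour interpolation for the upsampling (the text does not name the interpolation mode), uses a constant-valued stand-in for the 64 × 64 × 32 decoder output, and the function names are hypothetical.

```python
# Broadcasting the LSTM vector over the image grid and fusing it with the decoder map.

def upsample_nearest(feature_map, factor):
    """Nearest-neighbour upsampling of an H x W x C nested list by an integer factor."""
    out = []
    for row in feature_map:
        wide_row = [pixel for pixel in row for _ in range(factor)]
        for _ in range(factor):
            out.append([list(pixel) for pixel in wide_row])
    return out


def concat_depth(a, b):
    """Concatenate two H x W x C feature maps along the channel axis."""
    if len(a) != len(b) or len(a[0]) != len(b[0]):
        raise ValueError("spatial dimensions must match")
    return [[pa + pb for pa, pb in zip(row_a, row_b)]
            for row_a, row_b in zip(a, b)]


def fuse_space_time(decoder_map, lstm_vector):
    """Reshape the LSTM vector to 1 x 1 x C, upsample it, and append it channel-wise."""
    size = len(decoder_map)
    if any(len(row) != size for row in decoder_map):
        raise ValueError("this sketch assumes a square decoder map")
    h3 = [[list(lstm_vector)]]        # 1 x 1 x C
    h4 = upsample_nearest(h3, size)   # size x size x C
    return concat_depth(decoder_map, h4)


decoder_out = [[[0.5] * 32 for _ in range(64)] for _ in range(64)]  # stand-in values
lstm_out = [float(k) for k in range(64)]                            # stand-in values
fused = fuse_space_time(decoder_out, lstm_out)
print(len(fused), len(fused[0]), len(fused[0][0]))  # 64 64 96
```

Under the nearest-neighbour assumption, every pixel receives the same 64-value temporal vector appended to its own 32 spatial channels, so the final 3 × 3 convolution sees 96 input channels.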

Experimental results

Dataset

The Egypt Building Change Detection (EGY-BCD) dataset aims to identify alterations in buildings using satellite images with a resolution of 0.25 m per pixel. The dataset consists of 6091 image pairs, each image sized at 256 × 256 × 3 pixels, taken from four areas in Egypt: New Mansoura, El Galala City, New Cairo and New Thebes. These images were captured in 2017 and 2022 from Google Earth. The EGY-BCD dataset features complex changes, such as buildings that blend seamlessly with their surroundings, posing a challenge for advanced machine learning techniques. To train the proposed network, the training sample includes labelled ground-truth data categorized into two groups, “no-change” and “change”, for each pair of images.

Figure 2 below shows a sample of our input data: images at time point 1 (T1), taken in 2017, and images at time point 2 (T2), taken in 2022, over New Mansoura, El Galala City, New Cairo, and New Thebes in Egypt. Figure 2 also shows a sample of the label for the exact same location. These ground truth labels categorize each region as either changed or unchanged.

A sample of the input data, where column (a) shows images at time T1 in 2017, column (b) shows images at time T2 in 2022 and column (c) shows a sample of the label in the exact same location.

The WHU dataset is a comprehensive remote sensing dataset developed by Wuhan University for building extraction and change detection tasks. It consists of bi-temporal aerial images captured over urban areas in China, from the years 2012 and 2016. The original imagery, captured at a spatial resolution of 0.075 m, is resampled to 0.3 m per pixel for processing. Each data sample comprises a pair of co-registered image patches of size 512 × 512 pixels, representing the same geographic area at two different time points. The corresponding binary change mask serves as ground truth: white pixels (value = 1) denote areas where building change (construction or demolition) has occurred, and black pixels (value = 0) indicate unchanged regions. The dataset includes 18,000 + image pairs collected from 56 cities, and is curated specifically to support the training and evaluation of deep learning algorithms in building-level bi-temporal change detection.

Experiment setup

A summary of our experiment setup is shown in Table 1 below. The data was split with 70% allocated to the training set, 20% to the validation set, and 10% to the test set. The model was trained for up to 20 epochs with a batch size of 15, with early stopping triggered after 5 epochs without improvement in validation loss. The Adam optimizer was used with a learning rate of 1e-4. Input images were resized to 64 × 64 pixels and normalized to the range 0 to 1 by dividing pixel values by 255. Binary labels were generated using a threshold of 128. The binary cross-entropy loss function was employed for the change detection task. Inference time was measured over 50 runs (after 10 warm-up iterations), resulting in an average inference time of 15.12 ms per sample on the GPU.
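The data handling and stopping rule in this setup can be sketched in standard-library Python. Several details are assumptions rather than facts from the text: whether a label pixel equal to 128 counts as change (here it does), the use of a seeded random shuffle before splitting, and all function names.

```python
# Pre-processing, 70/20/10 split, binary cross-entropy and early stopping (sketch).
import math
import random


def normalize(pixels):
    """Scale 8-bit pixel values to the range [0, 1]."""
    return [p / 255.0 for p in pixels]


def binarize_label(pixels, threshold=128):
    """Assumption: values at or above the threshold mark 'change' (1)."""
    return [1 if p >= threshold else 0 for p in pixels]


def split_indices(n_samples, train=0.7, val=0.2, seed=0):
    """Shuffle sample indices and split them into train, validation and test."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    n_train = int(n_samples * train)
    n_val = int(n_samples * val)
    return (indices[:n_train],
            indices[n_train:n_train + n_val],
            indices[n_train + n_val:])


def binary_cross_entropy(targets, predictions, eps=1e-7):
    """Mean binary cross-entropy with clipping to avoid log(0)."""
    total = 0.0
    for y, p in zip(targets, predictions):
        p = min(max(p, eps), 1.0 - eps)
        total -= y * math.log(p) + (1 - y) * math.log(1.0 - p)
    return total / len(targets)


def early_stopping_epoch(val_losses, patience=5):
    """Return the 1-based epoch at which training stops, or None if it never does."""
    best = float("inf")
    stale = 0
    for epoch, loss in enumerate(val_losses, start=1):
        if loss < best:
            best, stale = loss, 0
        else:
            stale += 1
            if stale >= patience:
                return epoch
    return None


train_idx, val_idx, test_idx = split_indices(6091)
print(len(train_idx), len(val_idx), len(test_idx))
```

With the 6091 EGY-BCD pairs, this particular rounding yields 4263 training, 1218 validation and 610 test pairs; the source does not say how fractional counts were rounded.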

All comparative methods (DMINet, SNUNet-CD) were trained under identical conditions (epochs, batch size, optimizer, and learning rate) for fairness. DuSTiLNet comprises 3.40 million trainable parameters and requires approximately 0.92 GMac FLOPs, with a peak GPU memory usage of 228 MB.

Our output is a grayscale image, as shown below in Fig. 3, in which only the regions detected as changed between the two images are highlighted, that is, the areas that have changed since the first timestamp image. All maps were automatically generated using Python 3.11 and OpenCV 4.8.0 within Google Colaboratory (https://colab.research.google.com), an online Jupyter notebook environment provided by Google. The environment included a Tesla T4 GPU with CUDA 12.4 support.

A comparison of images taken at different times with their corresponding ground truth labels and predicted images showing changes on the EGY-BCD test set: (a) shows a sample of images taken at time T1 in 2017, (b) shows a sample of images taken at time T2 in 2022, (c) is the ground truth image used in the training dataset, (d) shows the image predicted by SNUNet, (e) shows the image predicted by DMINet and (f) shows the image predicted by DuSTiLNet. The predicted image indicates change occurring between the years 2017 and 2022.

Figure 3 above shows a tabulation of images at time T1, T2, the ground truth images used for training and what our model actually predicts as the output as a sample of our results.

Performance evaluation

I) EGY-BCD Dataset: The performance of various existing methods on the EGY-BCD dataset is tabulated in Table 2 below. The visual results of the performance evaluation are also seen in Fig. 4 below. The proposed DuSTiLNet model achieved an overall accuracy (OA), F1 score and intersection over union (IoU) of 97.4%, 89% and 86.7%, respectively. The proposed method’s OA exceeds that of AFDE-Net by a margin of 3.1, whereas, unexpectedly, its IoU improves on that of AFDE-Net only slightly, as shown in Table 4 below. Variations in the data collected over the different time periods, together with different angles of view between the two temporal images, may cause the model to struggle. These environmental transformations and the viewpoint deviations during image capture pose challenges to both the model’s detection and the accuracy of the ground truth labelling used in conjunction with the proposed methodology.
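For reference, the three reported metrics can be computed from binary masks as in the sketch below. It uses the standard definitions with "change" as the positive class; the text does not state whether the reported IoU is computed for the change class alone or averaged over both classes, so the change-class IoU here is an assumption. Function names are hypothetical.

```python
# Overall accuracy, F1 score and IoU from flattened binary change masks (sketch).

def confusion_counts(truth, prediction):
    """Count true/false positives and negatives with 'change' (1) as positive."""
    tp = fp = fn = tn = 0
    for t, p in zip(truth, prediction):
        if t == 1 and p == 1:
            tp += 1
        elif t == 0 and p == 1:
            fp += 1
        elif t == 1 and p == 0:
            fn += 1
        else:
            tn += 1
    return tp, fp, fn, tn


def change_metrics(truth, prediction):
    """Return OA, precision, recall, F1 and change-class IoU as a dict."""
    tp, fp, fn, tn = confusion_counts(truth, prediction)
    total = tp + fp + fn + tn
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    iou = tp / (tp + fp + fn) if tp + fp + fn else 0.0
    return {
        "oa": (tp + tn) / total if total else 0.0,
        "precision": precision,
        "recall": recall,
        "f1": f1,
        "iou": iou,
    }


truth = [1, 1, 1, 0, 0, 0, 0, 0]
prediction = [1, 1, 0, 1, 0, 0, 0, 0]
print(change_metrics(truth, prediction))
```

In this toy example two of three changed pixels are found and one unchanged pixel is flagged, giving an OA of 0.75, an F1 score of 2/3 and an IoU of 0.5.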

A comparison of images taken at different times with their corresponding ground truth labels and predicted image showing occurring changes on the WHU dataset (a) shows a sample of images taken at time T1 in 2012, (b) shows a sample of images taken at time T2 in 2016, (c) is the ground truth image used in the training dataset, (d) shows the predicted image by SNUNet (e) shows the predicted image by DMINet and (f) shows the predicted image by DuSTiLNet. Predicted image indicates change occurring between years 2012 and 2016.

II) WHU Dataset: An additional dataset, the WHU dataset, was also used to evaluate the performance of DuSTiLNet. The results are presented in Table 3 below and visualized in Fig. 4 below. Comparing the experimental results shows that DuSTiLNet outperforms the other methods in change detection tasks. The visual outputs are in close agreement with the ground truth reference images, with clear boundaries and fewer false positives. The proposed method shows a slight accuracy improvement of 0.05 over AFDE-Net, as shown in Table 4 below.

To evaluate the computational efficiency of DuSTiLNet, we conducted comprehensive benchmarking against two state-of-the-art change detection methods: DMINet and SNUNet-CD. All models were trained under identical conditions to ensure fair comparison. Performance metrics were measured on the same hardware configuration, with inference times averaged over multiple runs.

Table 5 below presents the computational complexity comparison across key metrics. DuSTiLNet demonstrates competitive performance with 3.40 M parameters and 0.92 GMac FLOPs, achieving an average inference time of 15.12 ms with 228 MB peak GPU memory usage. Notably, our model requires significantly fewer computational operations compared to DMINet (18.8 × fewer FLOPs) and SNUNet-CD (5.1 × fewer FLOPs), while maintaining competitive inference speeds.
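The timing protocol reported in the experiment setup (50 timed runs after 10 warm-up iterations) can be sketched as a small harness. This is an illustration, not the authors' benchmarking script: `run_once` is a hypothetical callable standing in for one forward pass, and on a GPU it would also need to wait for the device to finish before returning, which is framework-specific and omitted here.

```python
# Average latency over timed runs after discarding warm-up iterations (sketch).
import time


def benchmark(run_once, warmup=10, runs=50):
    """Return the mean wall-clock time of run_once in milliseconds."""
    for _ in range(warmup):
        run_once()  # warm-up calls are executed but not timed
    timings_ms = []
    for _ in range(runs):
        start = time.perf_counter()
        run_once()
        timings_ms.append((time.perf_counter() - start) * 1000.0)
    return sum(timings_ms) / len(timings_ms)


def dummy_forward_pass():
    """Placeholder workload; a real benchmark would call the model here."""
    return sum(i * i for i in range(10_000))


print(f"mean latency: {benchmark(dummy_forward_pass):.3f} ms")
```

Discarding the warm-up iterations keeps one-off costs such as memory allocation and kernel compilation out of the reported average.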

Ablation study

An ablation study was performed on the EGY-BCD dataset to evaluate the contribution of each component of the proposed network. The performance of each model variant is compared in Table 6 below. The results show that removing the LSTM layer (CNN-only model) leads to a comparatively large drop in recall (~5–6%), meaning the model misses more real changes. The F1-score and IoU drop accordingly, because the LSTM captures time-dependent features. Removing dropout, on the other hand, slightly increases accuracy but reduces recall, which suggests that the model overfits to seen data. Changing the position of the LSTM slightly lowers recall and IoU, but the impact is small (~2–3%). Replacing UpSampling2D with Conv2DTranspose provides a small improvement (~0.8%) in F1-score and IoU, suggesting that a learned, adaptive upsampling method is slightly better than interpolation.
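The relationship between the two upsampling options in the ablation can be seen in a single-channel toy example. The sketch assumes a stride-2 transposed convolution with a 2 × 2 kernel and no bias; the configuration actually used in the ablation is not given in the text, and the function names are hypothetical.

```python
# Fixed nearest-neighbour upsampling versus a learnable transposed convolution (sketch).

def upsample_nearest_2x(image):
    """Repeat every pixel of a 2D list twice along both axes."""
    out = []
    for row in image:
        wide_row = [value for value in row for _ in range(2)]
        out.append(list(wide_row))
        out.append(list(wide_row))
    return out


def conv_transpose_2x(image, kernel):
    """Stride-2 transposed convolution with a 2x2 kernel (single channel, no bias)."""
    height, width = len(image), len(image[0])
    out = [[0.0] * (2 * width) for _ in range(2 * height)]
    for i in range(height):
        for j in range(width):
            for di in range(2):
                for dj in range(2):
                    # Each input pixel writes a scaled copy of the kernel into
                    # its own non-overlapping 2x2 block of the output.
                    out[2 * i + di][2 * j + dj] += image[i][j] * kernel[di][dj]
    return out


image = [[1.0, 2.0], [3.0, 4.0]]
ones = [[1.0, 1.0], [1.0, 1.0]]
print(upsample_nearest_2x(image))
print(conv_transpose_2x(image, ones))
```

With an all-ones kernel the transposed convolution reproduces nearest-neighbour upsampling exactly, so under this configuration the learned layer can represent the fixed interpolation as a special case while remaining free to learn other kernels.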

Discussion

Pixel-based methods, which analyse changes at the pixel level by comparing spectral characteristics or pixel attributes across different images or time periods, encounter difficulties in determining the thresholds for detecting changes and in accounting for spatial links between adjacent pixels48. On the other hand, object-based CD (OBCD) methods, which inherently incorporate spatial features, address these challenges by segmenting images into objects and examining changes at the object level, as noted by49. However, OBCD methods are computationally expensive due to their reliance on handcrafted features and often require manual tuning, reducing their efficiency in large-scale applications. These factors have driven the exploration of more automated, learning-based approaches and innovative network structures that create complex feature representations through their strong modelling and learning capabilities11,12 and13. The outcomes of our model reinforce findings from other domains, such as river monitoring, where integrated spatial–temporal frameworks have enhanced the detection of gradual or subtle environmental changes. For instance, Chatrabhuj et al.10 successfully applied a fusion of remote sensing, GIS, and machine learning to track fine-scale fluctuations and model river system dynamics.

Unlike pixel-based approaches, CNNs can learn spatial features directly from the image data. Incorporating a CNN in an encoder-decoder structure that preserves spatial information throughout the downsampling and upsampling processes is crucial for tasks requiring high-resolution output, such as CD in RS. This structure is particularly beneficial in tasks requiring precise localization of changes, as spatial contextual information is captured. However, deep learning CD methods lack information complementary to colour and texture in remote sensing change detection (RSCD) decisions. According to3,50 and30, colour and texture features do not effectively capture the complexity and multidimensionality of changes in remote sensing images. A CNN has a fixed receptive field size. Hence, it tends to capture local spatial features such as edges and textures within relatively small regions, often missing the global context or long-range dependencies within the image. A model that focuses on local patches may not accurately capture subtle changes between the two frames, especially if those changes span different spatial regions. Therefore, in the context of RSCD, the CNN may fail to capture important changes in large-scale structures or between frames, limiting the model’s accuracy in detecting changes over time.
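The receptive-field limitation can be quantified for the encoder described in the methodology. The sketch below applies the standard recurrence for the theoretical receptive field (each layer adds (kernel − 1) × current stride product); the layer list is taken from the stated encoder configuration, while the function and constant names are hypothetical.

```python
# Theoretical receptive field of a stack of convolution and pooling layers (sketch).

def receptive_field(layers):
    """layers is a sequence of (kernel_size, stride); returns the side length in input pixels."""
    field = 1  # a single output location initially covers one input pixel
    jump = 1   # distance in input pixels between adjacent positions at this depth
    for kernel, stride in layers:
        field += (kernel - 1) * jump
        jump *= stride
    return field


# conv 3x3 -> pool 2x2 -> conv 3x3 -> pool 2x2 -> conv 3x3
ENCODER_LAYERS = [(3, 1), (2, 2), (3, 1), (2, 2), (3, 1)]

print(receptive_field(ENCODER_LAYERS))  # 18
```

Each location in the 16 × 16 encoder output therefore depends on at most an 18 × 18 window of the 64 × 64 input, which illustrates why context beyond the convolutional features alone is needed for changes spanning larger regions.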

To address the above limitation, we explore an approach that integrates additional contextual information, such as spatial context and temporal dependencies, to complement DL methods. In this work, we employ Long Short-Term Memory (LSTM) networks to model temporal dependencies between bi-temporal image pairs (two images acquired at distinct time points), treating them as minimal sequences rather than long time series. Recent studies have demonstrated that LSTM modules can effectively capture temporal dynamics between two images, enhancing change detection performance. For instance, Mou, Bruzzone, and Zhu introduced a Recurrent Convolutional Neural Network (ReCNN) that integrates convolutional layers with LSTM units to jointly learn spectral-spatial–temporal features from bi-temporal multispectral images23. Evaluated on the strictly bi-temporal LEVIR-CD and WHU-CD datasets, their model significantly outperformed non-recurrent baselines, confirming LSTM’s capability to capture temporal transitions between two images rather than requiring longer sequences. Similarly, Papadomanolaki et al. proposed a deep multi-task learning framework coupling semantic segmentation with fully convolutional LSTM blocks for urban change detection on the OSCD Sentinel-2 bi-temporal dataset24. Their method leverages LSTM to model temporal dependencies between two images, achieving superior change detection accuracy by effectively fusing temporal context. More recently, a dual-path 3DCNN-LSTM architecture demonstrated robust semantic change detection by fusing bi-temporal Zhuhai-1 Orbita hyperspectral and multi-season Sentinel-2 images25. Moreover, Jing et al. applied a Trisiamese-LSTM network to object-based VHR bi-temporal image pairs, showing that LSTM layers effectively learned temporal interactions between co-registered feature sequences26.

The LSTM components can model the temporal dynamics between the bi-temporal image pair, facilitating the identification of meaningful changes over time51 and23. LSTMs are particularly adept at learning from sequences, allowing the model to understand the temporal dynamics of the changes being detected. The combination of spatial and temporal analyses enhances the accuracy and reliability of CD endeavours. Models that take both aspects into consideration are better equipped to distinguish changes from background noise or anomalies, leading to precise and actionable insights51,52,53,54,55 and56. By incorporating spatial features, models can capture the patterns and relationships between different land cover types, urban areas, vegetation, water bodies and other elements found in RS images. This spatial awareness is crucial for spotting changes in land use, land cover and environmental conditions, as evidenced by57. Conversely, environmental changes caused by nature or human activities often evolve over time, necessitating the analysis of temporal dynamics to detect these transformations58. Temporal dynamics encompass changes that happen from one year to another, season to season or even day by day. By taking temporal aspects into account, models can track the progress of changes, monitor trends, and identify anomalies that may indicate disturbances or events of interest. Many environments exhibit an interplay between spatial and temporal elements. For instance, the growth patterns of vegetation are influenced by both the terrain and seasonal variations in rainfall and temperature. By integrating spatial and temporal data, models can adapt to these intricacies, offering a more nuanced comprehension of environmental shifts. The insights derived from detecting changes hold immense value for decision making across various domains, such as urban planning, conservation efforts, disaster response initiatives, and policy development.
By providing timely updates about changes, these models support decisions that can reduce risks, optimize resources and support sustainable growth. The Long Short-Term Memory (LSTM) layer plays a significant role in enabling the model to handle sequential data effectively by capturing long-term dependencies within the information. In contrast to traditional recurrent neural networks (RNNs), LSTMs are engineered to retain information for extended periods, making them particularly useful for tasks that involve sequential data where the order of events is crucial. This includes applications like time series analysis, natural language processing and identifying changes in RS data by concatenating features from images captured at different time intervals.
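How an LSTM retains information can be illustrated with a scalar version of the standard cell equations. This is a generic textbook sketch, not the configuration used in DuSTiLNet: it uses the usual tanh candidate activation (the text specifies ReLU for the first LSTM layer), scalar weights instead of matrices, and hypothetical parameter names.

```python
# One step of a scalar LSTM cell using the standard gate equations (sketch).
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def lstm_step(x, h_prev, c_prev, params):
    """params maps each gate name to a (w_x, w_h, bias) triple of scalars."""
    def pre_activation(gate):
        w_x, w_h, bias = params[gate]
        return w_x * x + w_h * h_prev + bias

    i = sigmoid(pre_activation("input"))        # how much new content to write
    f = sigmoid(pre_activation("forget"))       # how much old memory to keep
    o = sigmoid(pre_activation("output"))       # how much memory to expose
    g = math.tanh(pre_activation("candidate"))  # proposed new content
    c = f * c_prev + i * g                      # updated cell state
    h = o * math.tanh(c)                        # updated hidden state
    return h, c


# A cell biased to keep its memory: forget gate near 1, input gate near 0.
KEEP = {
    "input": (0.0, 0.0, -20.0),
    "forget": (0.0, 0.0, 20.0),
    "output": (0.0, 0.0, 0.0),
    "candidate": (1.0, 0.0, 0.0),
}

h, c = 0.0, 0.8
for step in range(100):
    h, c = lstm_step(x=1.0, h_prev=h, c_prev=c, params=KEEP)
print(round(c, 4))  # the cell state stays close to its initial value of 0.8
```

The additive cell-state update, gated by the forget and input gates, is what lets the cell carry a value across many steps, in contrast to a plain RNN that overwrites its state at every step.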

The inclusion of LSTM units introduces a temporal dimension to the analysis, making this approach more akin to a hybrid method that incorporates aspects of both pixel-based and temporal analysis59. The proposed method represents a novel approach that integrates aspects of these traditional methods while leveraging the power of deep learning to address the complexity of CD in RS imagery. Hybrid methodologies have shown promise in providing higher accuracy compared to relying solely on traditional CD methods48,60 and61.

The integration of the dual-time point encoder-decoder network with dual concatenation and LSTMs enables the simultaneous consideration of spatial and temporal information, which prior work has shown to be essential for remote sensing CD51 and23. The multi-scale and multi-level feature extraction capabilities of the CNN in the encoder can effectively capture the diverse spatial and contextual characteristics of the input images at multiple scales62. This emphasizes spatial relationships at varying granularity. Our model implements multi-scale concatenation, which has been shown to present richer semantic information while effectively representing change regions, according to28 and63. Multi-scale concatenation, as shown in Eq. 1 above, involves combining features extracted at different scales or receptive fields to emphasize spatial relationships at different levels of detail. The proposed model architecture implicitly includes residual connections by concatenating the outputs of the encoder branches for the two time points (the Time 1 and Time 2 images) before flattening and reshaping the sequence of features for the LSTM layer.

This concatenation of features at varying scales aims to capture the changes or spatial relationships between images at different levels of detail. By combining features extracted from multiple scales, the model gains insight into how scenes evolve at both coarse scales, as in the case of a new building, and fine scales, as in the case of road crevices. This helps the model form an understanding of the differences between the two timestamps. The output of the LSTM layer is further concatenated with the encoder subnetwork output to allow learning of spatial–temporal dependencies before further processing of the more informative representation of the context of change on the ground.
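The concatenate-then-reshape step described above can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the authors' implementation: the function name `to_lstm_sequence`, the channels-last layout `(H, W, C)`, the feature sizes and the choice to treat each spatial location as one sequence step are all hypothetical.

```python
import numpy as np


def to_lstm_sequence(feat_t1, feat_t2):
    """Concatenate bi-temporal encoder features and flatten them to a sequence.

    Both inputs are assumed to be channels-last arrays of shape (H, W, C).
    The result has shape (H*W, 2C): one feature vector per spatial location,
    holding the Time 1 channels followed by the Time 2 channels.
    """
    if feat_t1.shape != feat_t2.shape:
        raise ValueError("encoder outputs must have identical shapes")
    fused = np.concatenate([feat_t1, feat_t2], axis=-1)  # (H, W, 2C)
    h, w, c2 = fused.shape
    return fused.reshape(h * w, c2)  # row-major flattening of the grid


# Toy stand-ins for the two encoder outputs (random values, illustration only).
rng = np.random.default_rng(0)
f1 = rng.normal(size=(8, 8, 16))
f2 = rng.normal(size=(8, 8, 16))
seq = to_lstm_sequence(f1, f2)
print(seq.shape)  # (64, 32)
```

Concatenation along the channel axis keeps the two dates spatially aligned, so every sequence element carries both observations of the same ground location.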

To overcome the challenges seen in existing methods, where model designs may lack interpretability during data analysis because relationships between inputs and outputs are not modelled, we propose using bi-temporal images with advanced computer vision networks.

This architecture is a customized version that utilizes the strengths of deep learning architectures for spatial feature extraction and integrates across time points using LSTMs for processing temporal information. It incorporates elements of a dual CNN encoder with a balanced decoder and RNNs/LSTMs to perform CD over time.

In deep learning models like those employed for CD in RS images, feature fusion involves combining features extracted from various sources or stages of the model to create a more comprehensive representation of the input data, as represented in Eq. 2 above. To address the fixed-kernel limitation of CNNs, we introduce spatial–temporal feature fusion for contextual modelling, which increases the capacity of the feature map to hold information by increasing the number of channels. This allows the CNN to access these spatial relationships in subsequent layers, so the added channel dimension extends the CNN’s capability. This second concatenation is essential for integrating spatial and temporal information, both crucial aspects of RS data analysis. The space–time fusion process concatenates the multi-scale fused features extracted by the encoder and processed through the LSTM with the decoder output. This step merges the spatial and temporal information into a single unified representation for further processing.

By concatenating the spatial features from the decoder with the temporal features from the LSTM, the model enhances information representation. The spatial features provide context about the structure of the objects in the images, while the temporal features bring in information about how these objects or patterns change over time. In typical deep learning models, the decoder by itself works only with spatial features and could miss out on temporal dependencies. By introducing temporal features from the LSTM into the decoder via concatenation, the model can make more informed detections that consider both spatial and temporal patterns.
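The channel-wise fusion of decoder and LSTM features can be illustrated as follows. This is a hedged sketch rather than the published architecture: the function name `space_time_fusion`, the channels-last layout, the channel counts (24 and 12) and the assumption that the LSTM emits one vector per spatial location are all chosen here for illustration.

```python
import numpy as np


def space_time_fusion(decoder_feat, lstm_out):
    """Concatenate spatial (decoder) and temporal (LSTM) features per pixel.

    decoder_feat: array of shape (H, W, Cs), spatial features.
    lstm_out:     array of shape (H*W, Ct), one temporal vector per location
                  (an assumption about how the sequence is laid out).
    Returns an array of shape (H, W, Cs + Ct).
    """
    h, w, _ = decoder_feat.shape
    if lstm_out.shape[0] != h * w:
        raise ValueError("LSTM sequence length must equal H * W")
    temporal = lstm_out.reshape(h, w, lstm_out.shape[1])  # back to the grid
    return np.concatenate([decoder_feat, temporal], axis=-1)


# Random placeholders for the two feature sources (illustration only).
rng = np.random.default_rng(0)
spatial = rng.normal(size=(8, 8, 24))
temporal_seq = rng.normal(size=(64, 12))
fused = space_time_fusion(spatial, temporal_seq)
print(fused.shape)  # (8, 8, 36)
```

The fused map has more channels but the same spatial size, so later convolutions see both kinds of information at every location without any change to the spatial grid.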

The model processes the integrated features further through additional convolution layers to refine the representation and make predictions based on the combined information. The convolutional layers in the decoder are responsible for extracting high-level spatial features from the input images. These features capture important information about the spatial structure (such as edges, shapes, textures) at different levels of abstraction. Further processing after merging spatial and temporal information through feature fusion ensures that the model gains a more nuanced understanding of changes in RS images. This enriched representation improves the accuracy of CD, making it valuable for tasks like monitoring urban growth, tracking deforestation or assessing agricultural productivity over time.
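As a rough illustration of how convolutional layers can turn a fused feature map into a change prediction, the sketch below applies a single 1×1 (pointwise) convolution followed by a sigmoid. Everything here is an assumption made for the example: the function name `pointwise_head`, the use of one 1×1 layer, the 36 input channels, the 0.5 threshold and the random, untrained weights. The actual number and size of the refinement layers in the proposed model are not specified in this section.

```python
import numpy as np


def pointwise_head(fused, weights, bias):
    """Apply a 1x1 convolution and a sigmoid to a channels-last feature map.

    fused:   (H, W, C) fused spatial-temporal features.
    weights: (C, O) mixing matrix, shared across all spatial locations.
    bias:    (O,) bias vector.
    Returns per-pixel probabilities of shape (H, W, O).
    """
    logits = np.einsum("hwc,co->hwo", fused, weights) + bias
    return 1.0 / (1.0 + np.exp(-logits))


# Untrained random weights, used only to show the shapes involved.
rng = np.random.default_rng(0)
fused_map = rng.normal(size=(8, 8, 36))
w = rng.normal(scale=0.1, size=(36, 1))
b = np.zeros(1)

prob = pointwise_head(fused_map, w, b)
change_mask = prob[..., 0] > 0.5  # hypothetical decision threshold
print(prob.shape, change_mask.shape)  # (8, 8, 1) (8, 8)
```

A 1×1 convolution mixes channels at each location independently, which is the simplest way to combine the spatial and temporal channels; larger kernels would additionally mix neighbouring pixels.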

An important aspect of evaluating a change detection (CD) model is its ability to minimize false detections, particularly false positives, where the model incorrectly identifies unchanged regions as changed. The false positive rate (FPR) directly measures this, reflecting the model’s sensitivity to noise, seasonal variation, and sensor inconsistencies. In our comparative analysis, the proposed DuSTiLNet model achieved the lowest FPR of 1.22%, outperforming other state-of-the-art methods on this critical metric. By contrast, the FPRs of several baseline models were notably higher, as seen in Table 7.
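The FPR is the fraction of truly unchanged pixels that a model labels as changed, FP / (FP + TN). A minimal sketch of that computation on binary masks follows; the function name `false_positive_rate` and the 4×4 toy masks are hypothetical and are not taken from the experiments.

```python
import numpy as np


def false_positive_rate(pred, truth):
    """Return FP / (FP + TN) for binary change masks (1 = changed)."""
    pred = np.asarray(pred, dtype=bool)
    truth = np.asarray(truth, dtype=bool)
    fp = np.count_nonzero(pred & ~truth)   # unchanged pixels flagged as changed
    tn = np.count_nonzero(~pred & ~truth)  # unchanged pixels left unflagged
    if fp + tn == 0:
        return float("nan")  # no unchanged pixels, so the FPR is undefined
    return fp / (fp + tn)


# Toy ground truth with 4 changed pixels and a prediction with 2 false alarms.
truth = np.array([[0, 0, 1, 1],
                  [0, 0, 1, 1],
                  [0, 0, 0, 0],
                  [0, 0, 0, 0]])
pred = np.array([[0, 1, 1, 1],
                 [0, 0, 1, 0],
                 [0, 0, 0, 0],
                 [1, 0, 0, 0]])
fpr = false_positive_rate(pred, truth)
print(f"FPR = {fpr:.4f}")  # 2 false alarms over 12 unchanged pixels
```

Note that the missed changed pixel in the toy prediction does not affect the FPR, since the metric only considers pixels that are unchanged in the ground truth.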

Our temporal fusion strategy, particularly the use of an LSTM module to model inter-temporal dependencies, proves effective in reducing false alarms. DuSTiLNet uses time-based, context-aware feature transition learning instead of pixel-wise spectral differences to detect and eliminate false change detections caused by shadows, sensor noise and seasonal lighting variations. The outcome is a dependable system for real-world applications, particularly in high-resolution, densely built areas, where false alarms would otherwise result in incorrect decisions and unnecessary actions. To empirically substantiate this design choice, we conducted the comparative experiments reported in Table 7, which demonstrate that our LSTM-based model achieved a lower FPR than baseline architectures (e.g., simple concatenation and 2D-CNN fusion) on the EGY-BCD dataset. Similar to64, which used LSTM across multiple bi-temporal change detection datasets including LEVIR-CD, WHU-CD and CLCD, our ablation studies demonstrate F1-score improvements ranging from 0.2 to 7.5%, empirically showing the advantage LSTM has over non-recurrent baselines.

The results show that DuSTiLNet reaches 97.4% accuracy while maintaining superior precision in critical scenarios through its balanced detection sensitivity and error reduction capabilities. These findings reinforce the importance of evaluating CD models beyond accuracy alone and highlight FPR as a meaningful metric for operational readiness assessment.
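A small worked example shows why accuracy alone can be misleading when changed pixels are rare. The confusion counts below are invented for illustration (1,000 pixels, of which 50 are truly changed) and are not results from this study; the helper name `cd_metrics` is likewise hypothetical.

```python
def cd_metrics(tp, fp, tn, fn):
    """Compute common change detection metrics from confusion counts."""
    total = tp + fp + tn + fn
    return {
        "accuracy": (tp + tn) / total,
        "precision": tp / (tp + fp) if tp + fp else 0.0,
        "recall": tp / (tp + fn) if tp + fn else 0.0,
        "f1": 2 * tp / (2 * tp + fp + fn) if tp + fp + fn else 0.0,
        "iou": tp / (tp + fp + fn) if tp + fp + fn else 0.0,
        "fpr": fp / (fp + tn) if fp + tn else 0.0,
    }


# Hypothetical detector: finds all 50 changed pixels but raises 40 false alarms.
m = cd_metrics(tp=50, fp=40, tn=910, fn=0)
for name, value in m.items():
    print(f"{name:9s} {value:.4f}")
```

With these counts the accuracy is 0.96, yet the precision and IoU are only about 0.56 and the F1 score about 0.71, because the 40 false alarms are small relative to the 950 unchanged pixels but large relative to the 50 changed ones. The FPR (about 0.042) and the overlap metrics expose this, whereas accuracy does not.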

The novelty of the proposed DuSTiLNet lies in its architectural design, which integrates spatial and temporal modelling in a unified deep learning framework. This design uniquely addresses two key limitations: CNN-only architectures often lack the capacity to model both spatial and temporal context because of CNN’s receptive field constraint, while LSTM-only models can struggle to preserve fine spatial details. DuSTiLNet resolves both by fusing detailed spatial encodings with temporally-aware transitions, improving sensitivity to real changes while suppressing false positives caused by sensor noise, lighting variation, and seasonal shifts.

The proposed method’s performance dropped on the WHU dataset compared to the EGY-BCD dataset, as seen in Table 4, which is worth analysing further. We believe that this discrepancy is due to seasonal variations present in the dataset. Additionally, sensor noise, in the form of differences in viewing angle between the bi-temporal images and in the type of sensor used, likely affected the perceived building shapes, ultimately impacting the method’s performance.

The computational analysis reveals important trade-offs in our patch-based approach. DuSTiLNet achieves a favourable balance between model complexity and computational efficiency, with 50% fewer parameters than DMINet while maintaining comparable inference speeds (15.12 ms vs 16.34 ms). This efficiency stems from our LSTM-integrated architecture design, which enables effective bi-temporal modelling with significantly reduced computational overhead, requiring 19× fewer FLOPs than DMINet and 5× fewer than SNUNet-CD.

The memory footprint of 228 MB positions DuSTiLNet as practically deployable on standard GPU hardware, with usage comparable to DMINet (251 MB) and only moderately higher than SNUNet-CD (68 MB). This memory efficiency, combined with competitive inference times, makes our approach suitable for real-world applications where both accuracy and computational constraints are considerations.

Conclusion

This paper has shown evidence that incorporating spatial features and temporal dynamics in RS change detection is vital for achieving precise and meaningful results. Through experimentation, our work has shown that increasing the total number of features available at each spatial location (i,j) yields a richer, more informative representation. This complements the strength of deep learning CD methods by extracting more detailed and informative representations from the input data and by leveraging spatial–temporal dependencies. In addition, our model has shown that information integration for contextual awareness allows the model to access both spatial/static (structure and content) and temporal/dynamic (how it changes) data at each spatial location. This increases the model’s ability to recognize patterns sensitive to both contexts, such as CD between two frames. The combined feature map C, processed through additional convolutional layers, improves the model’s capability to identify changes in an image sequence by analyzing both contexts simultaneously.

Further, this paper has shown that without the LSTM layers, the model would rely purely on CNNs, which may detect only abrupt, highly localized changes and fail to recognize more nuanced, long-term transformations. LSTM excels at understanding gradual or long-term changes by maintaining long-term memory of previous states, which improves the detection of subtle changes. LSTM has thus been shown to help the model achieve higher accuracy in detecting gradual changes, which are common in RS applications. The recurrent layer has also been seen to be significant in the sequential learning of feature evolution. This is mainly because RS images often exhibit complex changes over time, for example seasonal variations or gradual infrastructure development, which might be missed by CNNs due to their focus on static spatial features. Operating on sequences of extracted features allows the model to learn how these features evolve, no matter how slowly. The forget gate in LSTMs allows the network to maintain only the most relevant temporal information, discarding noise or less relevant patterns from earlier time steps. This memory-based mechanism makes the combined space–time model well suited to filtering out noise and retaining important but subtle temporal features. The spatial and temporal fusion allows the model to focus on gradual, small changes rather than reacting to one-off events (spatial changes) or noisy frames in the time series. CNNs capture local spatial details, while LSTMs are designed to manage sequential data, so together they filter out short-term noise effectively.

DuSTiLNet achieves a strong trade-off between performance and efficiency. With an inference time of 15.12 ms, a low parameter count (3.40 M), and modest GPU memory usage (228 MB), it is competitive with both SNUNet and DMINet. Most notably, DuSTiLNet requires only 0.92 GMac, offering up to nineteen times greater FLOP efficiency than DMINet and five times greater than SNUNet, highlighting its suitability for deployment in compute-limited environments. Our method also achieved the lowest false positive rate (FPR) of 1.22%, compared to higher rates in existing models such as SNUNet (2.11%) and DMINet (1.56%). This indicates that DuSTiLNet is highly effective at suppressing false change detections, a crucial requirement for real-world applications like urban planning, disaster response, and infrastructure monitoring. The success of change detection depends on maximizing the information about real change while suppressing noise. By optimizing information representation, this paper maximizes the authentic information carried over from the input.

Data availability

The datasets analysed during this study are openly available at https://drive.google.com/u/0/uc?id=16LLC1iSJQuFPrfuXCJSeSsEzKhXodhzp&export=download. They are also available on request from gmugambi@jkuat.ac.ke (GM).

References

Bachmann-Gigl, U. & Dabiri, Z. Cultural heritage in times of crisis: Damage assessment in urban areas of Ukraine using Sentinel-1 SAR data. ISPRS Int. J. Geo-Inf. 13(9), 319. https://doi.org/10.3390/IJGI13090319 (2024).

Doshi, J., Basu, S. & Pang, G. From satellite imagery to disaster insights. Accessed 25 Aug 2024. http://arxiv.org/abs/1812.07033

Shafique, A., Cao, G., Khan, Z., Asad, M. & Aslam, M. Deep learning-based change detection in remote sensing images: A review. Remote Sens. 14(4), 871. https://doi.org/10.3390/RS14040871 (2022).

Hussain, M., Chen, D., Cheng, A., Wei, H. & Stanley, D. Change detection from remotely sensed images: From pixel-based to object-based approaches. ISPRS J. Photogramm. Remote Sens. 80, 91–106. https://doi.org/10.1016/J.ISPRSJPRS.2013.03.006 (2013).

Wu, C., Du, B., Cui, X. & Zhang, L. A post-classification change detection method based on iterative slow feature analysis and Bayesian soft fusion. Remote Sens. Environ. 199, 241–255. https://doi.org/10.1016/J.RSE.2017.07.009 (2017).

Cao, G., Li, Y., Liu, Y. & Shang, Y. Automatic change detection in high-resolution remote-sensing images by means of level set evolution and support vector machine classification. Int. J. Remote Sens. 35(16), 6255–6270. https://doi.org/10.1080/01431161.2014.951740 (2014).

Ma, L. et al. Object-based change detection in urban areas: The effects of segmentation strategy, scale, and feature space on unsupervised methods. Remote Sens. 8(9), 761. https://doi.org/10.3390/RS8090761 (2016).

Zhang, Y., Peng, D. & Huang, X. Object-based change detection for VHR images based on multiscale uncertainty analysis. IEEE Geosci. Remote Sens. Lett. 15(1), 13–17. https://doi.org/10.1109/LGRS.2017.2763182 (2018).

Shi, W., Zhang, M., Zhang, R., Chen, S. & Zhan, Z. Change detection based on artificial intelligence: State-of-the-art and challenges. Remote Sens. 12(10), 1688. https://doi.org/10.3390/RS12101688 (2020).

Chatrabhuj, Meshram, K., Mishra, U. & Omar, P. J. Integration of remote sensing data and GIS technologies in river management system. Discov. Geosci. 2(1), 1–22. https://doi.org/10.1007/S44288-024-00080-8 (2024).

Ball, J. E., Anderson, D. T. & Chan, C. S. Comprehensive survey of deep learning in remote sensing: theories, tools, and challenges for the community. J. Appl. Remote Sens. 11(04), 1. https://doi.org/10.1117/1.JRS.11.042609 (2017).

Zhong, Y., Ma, A., Soon Ong, Y., Zhu, Z. & Zhang, L. Computational intelligence in optical remote sensing image processing. Appl. Soft Comput. 64, 75–93. https://doi.org/10.1016/J.ASOC.2017.11.045 (2018).

Ma, L. et al. Deep learning in remote sensing applications: A meta-analysis and review. ISPRS J. Photogram. Remote Sens. 152, 166–177 (2019).

Dotel, S. et al. Disaster assessment from satellite imagery by analysing topographical features using deep learning. ACM Int. Conf. Proc. Ser. https://doi.org/10.1145/3388818.3389160 (2020).

Storie, C. D. & Henry, C. J. Deep learning neural networks for land use land cover mapping. Int. Geosci. Remote Sens. Symp. 2018, 3445–3448. https://doi.org/10.1109/IGARSS.2018.8518619 (2018).

Araujo, M. S., Davila, D. S., Blaisdell, S. G. & Van Horn, A. Automated remote monitoring of offshore assets using satellite imagery and machine learning. Proc. Annu. Offshore Technol. Conf. 4, 2582–2600. https://doi.org/10.4043/28718-MS (2018).

Prakash, N., Manconi, A. & Loew, S. Mapping landslides on EO data: Performance of deep learning models vs. traditional machine learning models. Remote Sens. 12(3), 346. https://doi.org/10.3390/RS12030346 (2020).

Zhou, J., Yu, B. & Qin, J. Multi-level spatial analysis for change detection of urban vegetation at individual tree scale. Remote Sens. 6(9), 9086–9103. https://doi.org/10.3390/RS6099086 (2014).

Kussul, N., Lavreniuk, M., Skakun, S. & Shelestov, A. Deep learning classification of land cover and crop types using remote sensing data. IEEE Geosci. Remote Sens. Lett. 14(5), 778–782. https://doi.org/10.1109/LGRS.2017.2681128 (2017).

Chen, J. et al. DASNet: Dual attentive fully convolutional siamese networks for change detection in high-resolution satellite images. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. https://doi.org/10.1109/JSTARS.2020.3037893 (2021).

Zhang, M., Xu, G., Chen, K., Yan, M. & Sun, X. Triplet-based semantic relation learning for aerial remote sensing image change detection. IEEE Geosci. Remote Sens. Lett. 16(2), 266–270. https://doi.org/10.1109/LGRS.2018.2869608 (2019).

Pan, F. et al. A temporal-reliable method for change detection in high-resolution bi-temporal remote sensing images. Remote Sens. 14(13), 3100. https://doi.org/10.3390/RS14133100 (2022).

Mou, L., Bruzzone, L. & Zhu, X. X. Learning spectral-spatial-temporal features via a recurrent convolutional neural network for change detection in multispectral imagery. IEEE Trans. Geosci. Remote Sens. 57(2), 924–935. https://doi.org/10.1109/TGRS.2018.2863224 (2019).

Papadomanolaki, M., Vakalopoulou, M. & Karantzalos, K. A deep multitask learning framework coupling semantic segmentation and fully convolutional LSTM networks for urban change detection. IEEE Trans. Geosci. Remote Sens. 59(9), 7651–7668. https://doi.org/10.1109/TGRS.2021.3055584 (2021).

Wen, D., Jiang, Y., Chen, D. & Tian, Y. Fusion of bi-temporal Zhuhai-1 Orbita hyperspectral and multi-season sentinel-2 remote sensing imagery for semantic change detection based on dual-path 3DCNN-LSTM. IEEE Geosci. Remote Sens. Lett. https://doi.org/10.1109/LGRS.2025.3528020 (2025).

Jing, R. et al. Object-based change detection for VHR remote sensing images based on a Trisiamese-LSTM. Int. J. Remote Sens. 41(16), 6209–6231. https://doi.org/10.1080/01431161.2020.1734253 (2020).

Papadomanolaki, M., Vakalopoulou, M. & Karantzalos, K. Urban change detection based on semantic segmentation and fully convolutional LSTM networks. ISPRS Ann. Photogramm. Remote Sens. Spat. Inf. Sci. V-2–2020(2), 541–547. https://doi.org/10.5194/ISPRS-ANNALS-V-2-2020-541-2020 (2020).

Lu, D., Cheng, S., Wang, L. & Song, S. Multi-scale feature progressive fusion network for remote sensing image change detection. Sci. Rep. 12(1), 1–19. https://doi.org/10.1038/s41598-022-16329-6 (2022).

Yuan, Q. et al. Deep learning in environmental remote sensing: Achievements and challenges. Remote Sens. Environ. 241, 111716. https://doi.org/10.1016/J.RSE.2020.111716 (2020).

Parelius, E. J. A review of deep-learning methods for change detection in multispectral remote sensing images. Remote Sens. 15(8), 2092. https://doi.org/10.3390/RS15082092 (2023).

Rußwurm, M. & Körner, M. Multi-temporal land cover classification with sequential recurrent encoders. ISPRS Int. J. Geo-Inf. 7(4), 129. https://doi.org/10.3390/IJGI7040129 (2018).

Mountrakis, G., Im, J. & Ogole, C. Support vector machines in remote sensing: A review. ISPRS J. Photogramm. Remote Sens. 66(3), 247–259. https://doi.org/10.1016/J.ISPRSJPRS.2010.11.001 (2011).

Shah, S. & Tembhurne, J. Object detection using convolutional neural networks and transformer-based models: A review. J. Electr. Syst. Inf. Technol. 10(1), 1–35. https://doi.org/10.1186/S43067-023-00123-Z (2023).

Das, M. & Ghosh, S. K. Data-driven approaches for spatio-temporal analysis: A survey of the state-of-the-arts. J. Comput. Sci. Technol. 35(3), 665–696. https://doi.org/10.1007/S11390-020-9349-0 (2020).

Chen, H. & Shi, Z. A spatial-temporal attention-based method and a new dataset for remote sensing image change detection. Remote Sens. https://doi.org/10.3390/rs12101662 (2020).

Li, C., Zhang, H., Wang, Z., Wu, Y. & Yang, F. Spatial-temporal attention mechanism and graph convolutional networks for destination prediction. Front. Neurorobot. 16, 925210. https://doi.org/10.3389/FNBOT.2022.925210 (2022).

Zhang, M., Chen, F., Liang, D., Tian, B. & Yang, A. Use of Sentinel-1 GRD SAR images to delineate flood extent in Pakistan. Sustain. 12(14), 5784. https://doi.org/10.3390/SU12145784 (2020).

Saibi, H. et al. Applications of remote sensing in geoscience. Recent Adv. Appl. Remote Sens. https://doi.org/10.5772/INTECHOPEN.75995 (2018).

Oreikio, A. E., Harry, A. A., Charles, A. U. & Rowland, E. D. Implication of landscape changes using google earth historical imagery in Yenagoa Bayelsa State, Nigeria. Sumerianz J. Sci. Res. 51, 20–31. https://doi.org/10.47752/SJSR.51.20.31 (2022).

Gaetano, R., Ienco, D., Ose, K. & Cresson, R. MRFusion: A deep learning architecture to fuse PAN and MS imagery for land cover mapping. (2018) Accessed 04 Sept. 2024. https://arxiv.org/abs/1806.11452v1

Xie, X., Ye, L., Kang, X., Yan, L. & Zeng, L. Land use classification using improved U-Net in remote sensing images of urban and rural planning monitoring. Sci. Program. https://doi.org/10.1155/2022/3125414 (2022).

Siddique, N., Paheding, S., Elkin, C. P. & Devabhaktuni, V. U-Net and its variants for medical image segmentation: A review of theory and applications. IEEE Access 9, 82031–82057. https://doi.org/10.1109/ACCESS.2021.3086020 (2021).

Kamdem De Teyou, G., Tarabalka, Y., Manighetti, I., Almar, R. & Tripodi, S. Deep neural networks for automatic extraction of features in time series optical satellite images. Int. Arch. Photogramm. Remote Sens. Spat. Inf. Sci. ISPRS Arch. 43(B2), 1529–1535. https://doi.org/10.5194/ISPRS-ARCHIVES-XLIII-B2-2020-1529-2020 (2020).

Holail, S., Saleh, T. & Xiao, X. Deep supervision based-unet to detect buildings changes from VHR aerial imagery. Int. J. Environ. Ecol. Eng. 16(10), 2022 (2023).

Holail, S., Saleh, T., Xiao, X. & Li, D. AFDE-Net: Building change detection using attention-based feature differential enhancement for satellite imagery. IEEE Geosci. Remote Sens. Lett. 20(June), 1–5. https://doi.org/10.1109/LGRS.2023.3283505 (2023).

Fang, S., Li, K., Shao, J. & Li, Z. SNUNet-CD: A densely connected siamese network for change detection of VHR images. IEEE Geosci. Remote Sens. Lett. 19, 1–5. https://doi.org/10.1109/LGRS.2021.3056416 (2022).

Feng, Y., Jiang, J., Xu, H. & Zheng, J. Change detection on remote sensing images using dual-branch multilevel intertemporal network. IEEE Trans. Geosci. Remote Sens. https://doi.org/10.1109/TGRS.2023.3241257 (2023).

Cheng, G. et al. Change detection methods for remote sensing in the last decade: A comprehensive review. Remote Sens. 16(13), 2355. https://doi.org/10.3390/RS16132355 (2024).

Ennouri, K., Smaoui, S. & Triki, M. A. Detection of urban and environmental changes via remote sensing. Circ. Econ. Sustain. 1(4), 1423. https://doi.org/10.1007/S43615-021-00035-Y (2021).

Jiang, H. et al. A survey on deep learning-based change detection from high-resolution remote sensing images. Remote Sens. 14(7), 1552. https://doi.org/10.3390/RS14071552 (2022).

Deng, Y. et al. TChange: A hybrid transformer-CNN change detection network. Remote Sens. 15(5), 1219. https://doi.org/10.3390/RS15051219 (2023).

Corley, I., Robinson, C. & Ortiz, A. A change detection reality check. (2024). https://doi.org/10.48550/ARXIV.2402.06994

Si Salah, H., Goldin, S. E., Rezgui, A., Nour El Islam, B. & Ait-Aoudia, S. What is a remote sensing change detection technique? Towards a conceptual framework. Int. J. Remote Sens. 41(5), 1788–1812. https://doi.org/10.1080/01431161.2019.1674463 (2020).

Kharroubi, A., Poux, F., Ballouch, Z., Hajji, R. & Billen, R. Three dimensional change detection using point clouds: A review. Geomatics 2(4), 457–485. https://doi.org/10.3390/GEOMATICS2040025 (2022).

Moser, G., Anfinsen, S. N., Luppino, L. T. & Serpico, S. B. Change detection with heterogeneous remote sensing data: From semi-parametric regression to deep learning. Int. Geosci. Remote Sens. Symp. https://doi.org/10.1109/IGARSS39084.2020.9323831 (2020).

Che, M., Vizziello, A. & Gamba, P. Spatio-temporal urban change mapping with time-series SAR data. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 15, 7222–7234. https://doi.org/10.1109/JSTARS.2022.3203195 (2022).

Woodcock, C. E., Loveland, T. R., Herold, M. & Bauer, M. E. Transitioning from change detection to monitoring with remote sensing: A paradigm shift. Remote Sens. Environ. 238, 111558. https://doi.org/10.1016/J.RSE.2019.111558 (2020).

Zhu, Z., Liu, B., Wang, H. & Hu, M. Analysis of the spatiotemporal changes in watershed landscape pattern and its influencing factors in rapidly urbanizing areas using satellite data. Remote Sens. 13(6), 1168. https://doi.org/10.3390/RS13061168 (2021).

Codegoni, A., Lombardi, G. & Ferrari, A. TINYCD: A (not so) deep learning model for change detection. Neural Comput. Appl. 35(11), 8471–8486. https://doi.org/10.1007/S00521-022-08122-3/FIGURES/6 (2023).

Nativel, S. et al. Hybrid methodology using Sentinel-1/Sentinel-2 for soil moisture estimation. Remote Sens. 14(10), 2434. https://doi.org/10.3390/RS14102434 (2022).

Pan, J., Li, X., Cai, Z., Sun, B. & Cui, W. A self-attentive hybrid coding network for 3D change detection in high-resolution optical stereo images. Remote Sens. 14(9), 2046. https://doi.org/10.3390/RS14092046 (2022).

Yang, Y., Gu, H., Han, Y. & Li, H. An end-to-end deep learning change detection framework for remote sensing images. Int. Geosci. Remote Sens. Symp. https://doi.org/10.1109/IGARSS39084.2020.9324076 (2020).

Tang, Y., Cao, Z., Guo, N. & Jiang, M. A siamese swin-unet for image change detection. Sci. Rep. 14(1), 1–9. https://doi.org/10.1038/s41598-024-54096-8 (2024).

Wu, Z., Ma, X., Lian, R., Zheng, K. & Zhang, W. CDXLSTM: Boosting remote sensing change detection with extended long short-term memory. 1 (2025) Accessed 02 July 2025. https://arxiv.org/pdf/2411.07863v3

Author information

Contributions

Data search, G.M.; investigation, G.M. and C.M.; methodology, G.M.; project administration, R.R. and M.K.; validation, G.M.; writing-original draft, G.M.; writing-review and editing, G.M., R.R., M.K., C.G. and E.M.; supervision, R.R. and M.K. All authors reviewed the manuscript.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Mugambi, G., Rimiru, R., Kimwele, M. et al. Spatial temporal fusion based features for enhanced remote sensing change detection. Sci Rep 15, 34074 (2025). https://doi.org/10.1038/s41598-025-14592-x

DOI: https://doi.org/10.1038/s41598-025-14592-x