Abstract

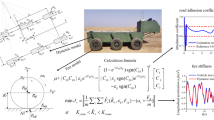

Yaw stability is essential for vehicle lateral control and is strongly influenced by the nonlinear dynamics of tire-road interaction. Tire Lateral Stiffness (TLS), a key parameter in this process, varies with tire properties and road conditions. Accurate TLS estimation is crucial for autonomous driving safety, especially during aggressive maneuvers or on low-friction surfaces. This paper proposes a novel TLS identification framework using modified Gradient Descent Methods (GDM) inspired by deep learning. A theoretical link is established between Recursive Least Squares (RLS) and GDM, revealing that RLS is a special case of GDM with adaptive learning rates. Based on this, improved RLS variants are developed for real-time use. Simulations compare different GDM and RLS algorithms, showing effective TLS tracking under varying conditions. Adaptive methods like Adam, which adjust gradients and learning rates, achieve improved performance, with Relative Steady-State Error (RSSE) below 5% and t10 (response time to reach 10% error band) under 3 s. These results offer practical guidance for estimator selection in safety-critical autonomous vehicle systems.


Introduction

Tire lateral stiffness (TLS) reflects the tire–road interaction during lateral maneuvers and is fundamental to vehicle handling and stability. In autonomous driving, accurate and real-time TLS estimation supports precise path tracking, effective obstacle avoidance, and robust performance under diverse road conditions. As a core parameter in vehicle dynamics modeling, TLS directly affects control accuracy and system responsiveness, especially under rapidly changing driving scenarios [1,2]. This estimation task is particularly challenging because TLS fluctuates with tire wear, tire pressure differences, temperature changes, road friction variations, and load variations [3,4]. Advanced control algorithms, such as Model Predictive Control (MPC) [5,6] and the Linear Quadratic Regulator (LQR) [7,8], require TLS as a key parameter to achieve optimal performance. These model-based methods involve predictive modeling, state estimation, and input optimization, utilizing TLS to improve steering accuracy, handling performance, and overall vehicle dynamics.

Existing methods for TLS estimation can be broadly classified into offline measurement methods and online estimation methods. Offline measurement methods rely on direct measurements through extensive tests [9]. However, they often require large amounts of experimental data and complex testing equipment, so they lack real-time adaptability and cannot meet the real-time estimation needs of autonomous driving systems. In contrast, online estimation methods combine real-time sensor data with vehicle dynamics models, allowing continuous updating of TLS parameters and providing real-time feedback for dynamic control.

Dynamic-based TLS estimation methods

Among online estimation methods, some directly use sensor data to derive TLS through vehicle dynamics relationships [10,11,12]. For example, Bevly used GPS/INS fusion technology to measure high-precision vehicle slip angle data [10], combining a two-degree-of-freedom vehicle model and Newtonian mechanics to estimate TLS in real time from the linear relationship between lateral force and slip angle. Similarly, Wang [11] adopted a vehicle dynamics model based on longitudinal tire force differences and implemented a recursive estimation algorithm to update the TLS in real time. However, these methods often rely on specific vehicle models and ideal sensor data. In practical applications, factors such as complex road conditions, sensor noise, and model uncertainties can affect the accuracy and robustness of the estimation. To improve the robustness of the estimation results, researchers have gradually introduced least squares methods to handle measurement uncertainties and model errors [13,14,15,16,17]. For example, Song [15] applied the Recursive Least Squares (RLS) algorithm for real-time TLS estimation using the Dugoff tire model. Yang [17] constructed an RLS regression model to estimate time-varying parameters by decomposing the TLS into nominal values and dynamic uncertainties. Although these methods can effectively improve the accuracy of the estimation in certain cases, the stability and real-time performance of the algorithms remain a challenge, particularly in noisy environments. In long-duration and large-scale driving scenarios, error accumulation and algorithm convergence speed can significantly impact practical performance.

Kalman filter-based TLS estimation methods

Moreover, Kalman filtering techniques are widely used for TLS estimation due to their strong noise suppression capabilities [18,19,20]. For instance, Baffet [18] combined a sliding mode observer (SMO) with an Extended Kalman Filter (EKF) to estimate TLS in real time, while Park [19] extended the state vector and designed an Interactive Multiple Model (IMM) Kalman Filter, integrating kinematic and dynamic models to estimate both TLS and vehicle states using low-cost sensor data. However, Kalman filtering methods heavily rely on system models, and their performance may significantly degrade in nonlinear and time-varying environments, resulting in insufficient estimation accuracy.

Adaptive and deep learning-based methods

In recent years, with the continuous development of computational power and algorithms, online estimation methods based on adaptive estimation and neural networks have gradually become research hotspots [21,22,23,24,25,26,27]. For example, NI [21] used experimental data modeling and real-time vehicle state parameter estimation to achieve online TLS estimation while adaptively adjusting the road friction coefficient. Lopes [23] proposed a nonlinear adaptive algorithm combining Lyapunov stability theory, projection operations, and persistent excitation conditions to accurately estimate TLS. Jeon [26] decomposed tire forces into linear and nonlinear components learned through neural networks and achieved online TLS estimation by combining vehicle dynamics models and real-time measurements. Although these methods exhibit strong adaptability and real-time performance under complex driving conditions, they also have limitations. Adaptive methods depend on accurate model assumptions, and once the model deviates from reality, estimation accuracy decreases. Neural network methods usually require large amounts of training data and may encounter over-fitting issues. Furthermore, balancing computational complexity and real-time performance remains a challenge, especially for autonomous driving platforms with limited computational resources.

In conclusion, existing online TLS estimation methods still face challenges in accuracy, robustness, and real-time performance, especially under high noise and rapidly changing conditions. To address these issues, this paper proposes a real-time estimation framework based on gradient descent and least squares methods. A key innovation is the introduction of a dual-error model, which constructs two error equations using different vehicle signals. Compared with traditional frameworks, this model enhances robustness by incorporating more vehicle dynamics information, making it more reliable in complex driving environments. Simulation comparisons under various conditions validate the effectiveness and improved performance of the proposed approach.

The main contributions of this paper are as follows.

(1) A unified dual-error modeling framework is established to support both GDM and RLS-based identification methods. This framework effectively tackles the limitations of existing approaches in achieving sufficient accuracy and robustness for real-time TLS estimation under dynamic and uncertain driving scenarios.

(2) To improve the adaptability and performance of online TLS estimation, enhancements are introduced to traditional GDM and RLS algorithms. Specifically, GDM’s limitations in convergence speed and sensitivity to learning rates are addressed using adaptive strategies inspired by deep learning. Additionally, a modified RLS scheme is developed to better accommodate non-stationary environments and improve estimation reliability.

(3) Extensive validation is performed under diverse and realistic driving conditions. In contrast to prior work, which often overlooks variable speeds or rapid changes in TLS, the proposed method consistently achieves high estimation accuracy and rapid adaptation, demonstrating both theoretical rigor and strong practical applicability for real-time vehicle control.

The structure of this paper is as follows: First, the background of vehicle lateral dynamics modeling and TLS estimation is introduced. Then, the application of gradient descent and least squares methods in real-time TLS estimation is elaborated, and the performance of the algorithm under different historical step size information is compared. Finally, numerical experiments are conducted to verify the effectiveness of the proposed methods.

Theoretical framework

Modeling of the vehicle lateral dynamics

This paper adopts a model-based approach for vehicle lateral motion control and TLS estimation. The tire-road interaction characteristics, represented by the lumped TLS, vary with vehicle (e.g., temperature) and road (e.g., icy surface) conditions. High-frequency variations are effectively filtered by the vehicle system, leaving only the relevant low-frequency components. A 2-DOF model of the vehicle’s lateral and yaw motion is then developed for TLS adaptation.

To establish the two-degree-of-freedom (2-DOF) vehicle model, the following assumptions are made:

1. A body-fixed xy coordinate system is attached to the vehicle’s center of mass and moves along with the vehicle.

2. The longitudinal velocity \(v_x\) is assumed to be approximately constant during the steering maneuver.

3. The steering angles applied to the front wheels are small. Therefore, the small-angle approximation holds:

$$\begin{aligned} \sin {\left( \delta \right) }\approx \delta , \cos {\left( \delta \right) }\approx 1. \end{aligned}$$

4. The lateral velocity \(v_y\) and yaw rate \(\omega _z\) are relatively small compared to the longitudinal velocity \(v_x\). As a result, the front and rear wheel slip angles can be approximated as:

$$\begin{aligned} \tan ^{-1}{\left( \frac{v_y+l_f\omega _z}{v_x}\right) }\approx \frac{v_y+l_f\omega _z}{v_x}=\beta +\frac{l_f\omega _z}{v_x}, \end{aligned}$$

$$\begin{aligned} \tan ^{-1}{\left( \frac{v_y-l_r\omega _z}{v_x}\right) }\approx \frac{v_y-l_r\omega _z}{v_x}=\beta -\frac{l_r\omega _z}{v_x} \end{aligned}$$

where \(\beta\) is the vehicle sideslip angle.

5. The directions of the front and rear wheel sideslip angles are defined as shown in Fig. 1. Based on this convention, the lateral tire forces \(F^f_y\) and \(F^r_y\) act in the y-direction, while the longitudinal driving forces \(F^f_x\) and \(F^r_x\) act in the x-direction.

Fig. 1. Vehicle bicycle model.

According to Newton’s second law, the lateral acceleration is related to the external forces acting at the front and rear wheels by Eq. (1), and the vehicle yaw dynamics are governed by Eq. (2):

where \(v_x\) and \(v_y\) represent the longitudinal and lateral velocities, respectively; \(F_x^f\) and \(F_x^r\) are the longitudinal forces at the front and rear wheels, and \(F_y^f\) and \(F_y^r\) the respective lateral forces; \(l_f\) and \(l_r\) are the distances from the vehicle center of mass to the front and rear axles, respectively; \(\delta\) is the steering angle of the front wheels; m is the vehicle mass; \(I_z\) is the yaw moment of inertia of the vehicle; and \(\omega _z\) is the yaw rate. \(\beta\), \(\beta _f\) and \(\beta _r\) are the vehicle, front-wheel and rear-wheel sideslip angles, respectively.

Fig. 2. Tire lateral forces.

Front and rear lateral forces \(F^f_y\) and \(F^r_y\) are the most critical and interesting part of the model, depending nonlinearly on the respective front and rear sideslip angle \(\beta _f\) and \(\beta _r\), as illustrated in Fig. 2. These nonlinear relationships can be linearized locally around the operating sideslip angle \(\beta _f\) and \(\beta _r\), yielding:

where \(C_f\) and \(C_r\) represent the lumped equivalent TLS, and sideslip angle \(\beta _f\) and \(\beta _r\) are further expressed as:

Substituting Eqs. (3), (4) and (5) into Eqs. (1) and (2) yields:

For control design, the matrix form of the model is often preferred, and is given as follows:

in which \(\mathbf{x}=[v_y,\omega _z]^T\) is the system state; \(\mathbf{u}=[\delta ]\) the control input. State matrix \(\mathbf{A}\) and control matrix \(\mathbf{B}\) are as below

\(C_f\) and \(C_r\) are the lumped TLS to be estimated on the basis of the vehicle model developed above, and their estimation is the core of this paper.
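As a rough illustration of how \(C_f\) and \(C_r\) enter the state and control matrices, the sketch below builds \(\mathbf{A}\) and \(\mathbf{B}\) for \(\mathbf{x}=[v_y,\omega _z]^T\) and \(\mathbf{u}=[\delta ]\). It assumes the common textbook sign convention \(F_y^f=C_f\left( \delta -(v_y+l_f\omega _z)/v_x\right)\) and \(F_y^r=-C_r(v_y-l_r\omega _z)/v_x\) with positive stiffness values, which may differ from the convention of Fig. 1. The function name and the numerical values are hypothetical.

```python
import numpy as np

def bicycle_matrices(C_f, C_r, m, I_z, l_f, l_r, v_x):
    """Return (A, B) of the linear 2-DOF model x_dot = A x + B u.

    Assumed convention (not confirmed by the source):
    F_y^f = C_f * (delta - (v_y + l_f*omega_z)/v_x),
    F_y^r = -C_r * (v_y - l_r*omega_z)/v_x, with C_f, C_r > 0.
    """
    A = np.array([
        [-(C_f + C_r) / (m * v_x),
         -(l_f * C_f - l_r * C_r) / (m * v_x) - v_x],
        [-(l_f * C_f - l_r * C_r) / (I_z * v_x),
         -(l_f**2 * C_f + l_r**2 * C_r) / (I_z * v_x)],
    ])
    B = np.array([[C_f / m], [l_f * C_f / I_z]])
    return A, B

# Illustrative (hypothetical) parameters: a mid-size car at 60 km/h.
A, B = bicycle_matrices(C_f=8.0e4, C_r=8.0e4, m=1500.0, I_z=2500.0,
                        l_f=1.2, l_r=1.6, v_x=60.0 / 3.6)
```

Because both matrices depend on \(C_f\) and \(C_r\), any model-based controller built on them inherits the accuracy of the TLS estimate.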

Dual-error model for TLS estimation

This section presents an online estimation algorithm for vehicle TLS, integrated into a closed-loop control framework for autonomous vehicles in dynamic road environments. As shown in Fig. 3, the system consists of three main components: the road system, the control system with the TLS estimation algorithm, and the physical system. The process starts with the onboard perception system, which collects real-time data, such as road curvature, vehicle state, and obstacle information. This data is used to generate a reference trajectory that meets the vehicle’s dynamic constraints. By transforming the vehicle state to global coordinates and comparing it with the reference trajectory, key deviations in lateral position (\(e_y\)) and yaw angle (\(e_\varphi\)) are captured.

Fig. 3. Vehicle adaptive lateral control architecture.

Within this framework, the TLS parameters, \(C_f\) and \(C_r\), are required for model-based control as time-varying elements in the vehicle dynamics equations. The yellow region in the figure represents the identification system framework. The error models between the observed and measured vehicle states form the basis of the online TLS estimation, enabling the control system to adapt to changing conditions in real time.

Based on the 2-DOF vehicle model, this section constructs the lateral dynamics error model Eq. (11) and the yaw rate error model Eq. (12). When the TLS estimates reach the real values, both errors approach zero, thereby confirming the accuracy of the parameter estimates and the effectiveness of the model. The detailed derivation proceeds as follows. The output of the system is first defined as \(\mathbf{y}\ =\ \left[ a_y,\ \varepsilon _z\right]\). For convenience, Eqs. (6) and (7) are rewritten as:

where \({\hat{C}}_f\) and \({\hat{C}}_r\) are the respective estimated TLS, \({\hat{a}}_y\) and \({\hat{\varepsilon }}_z\) the modeled outputs of lateral and yaw accelerations.

Note that the lateral acceleration \(a_y\) includes the centrifugal component, namely

This treatment of \({\hat{a}}_y\) is adopted because the vehicle acceleration sensor cannot separate the centrifugal component from the measured signal. The error model \(\mathbf{e}_y\), which describes the discrepancy between the estimation and the measurement, is defined as:

in which \(a_y\) and \(\varepsilon _z\) are the measured lateral and yaw accelerations. Since \(a_y\) and \(\varepsilon _z\) are functions of the actual TLS \(C_f\) and \(C_r\), the error functions can be expressed in terms of the estimates \({\hat{C}}_f\) and \({\hat{C}}_r\) as:

In this way, the error models for \(e_{ay}\) and \(e_{\varepsilon z}\) are represented by the estimation errors \(e_{cf}\) and \(e_{cr}\). These expressions are further tidied up as:

where

It is clear that both \(e_{ay}\) and \(e_{\varepsilon z}\) contain the TLS error information. \(e_{ay}\ =\ 0\) and \(e_{\varepsilon z}\ =\ 0\) lead to \({\hat{C}}_f-C_f=0\) and \({\hat{C}}_r-C_r=0\), as long as \(v_y \ne 0\) and \(\omega _z\ne 0\). This is the basis of accurate \(C_f\) and \(C_r\) estimation.

Bear in mind that the TLS are independent parameters and therefore must be estimated at the same time. Numerical results (as shown in Fig. 4) show that using only the lateral error or the yaw rate error cannot achieve accurate TLS estimation. This can be explained more clearly from a mathematical point of view. Specifically, the Jacobian matrix of the lateral error model in Eq. (13) and that of the yaw rate error model in Eq. (14) each have a rank of 1, while there are two unknown parameters to estimate: \(C_f\) and \(C_r\). This means that each model alone provides only one independent equation, and the solution space contains an infinite number of possible \((C_f, C_r)\) combinations that minimize the error. As a result, the problem becomes underdetermined.

However, the solution spaces (or null spaces) of the two models are generally different. By combining both error models into a single cost function, the overlap of the two solution spaces narrows down to a unique solution under ideal conditions. This explains why the dual-error model performs better: it uses information from both lateral and yaw motion to constrain the estimation, which helps avoid ambiguity and improves accuracy. Based on this understanding, a TLS identification framework using the dual-error model is developed in section “Algorithm Implementation”.
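The rank argument can be checked numerically with a minimal sketch. It assumes that each error is linear in the stiffness errors \((e_{cf}, e_{cr})\) and uses slip-angle regressors from the common textbook sign convention, which may differ from the exact form of Eqs. (13) and (14). All numerical values are hypothetical.

```python
import numpy as np

# Hypothetical vehicle parameters and a single operating point.
m, I_z, l_f, l_r = 1500.0, 2500.0, 1.2, 1.6
v_x, v_y, omega_z, delta = 16.7, -0.3, 0.2, 0.05

# Assumed front/rear slip regressors (standard bicycle-model convention).
alpha_f = delta - (v_y + l_f * omega_z) / v_x
alpha_r = -(v_y - l_r * omega_z) / v_x

# One Jacobian row per error model, with columns (e_cf, e_cr).
row_ay = np.array([[alpha_f / m, alpha_r / m]])
row_ez = np.array([[l_f * alpha_f / I_z, -l_r * alpha_r / I_z]])
dual = np.vstack([row_ay, row_ez])

rank_ay = np.linalg.matrix_rank(row_ay)
rank_ez = np.linalg.matrix_rank(row_ez)
rank_dual = np.linalg.matrix_rank(dual)

# A stiffness error in the null space of the lateral row is invisible
# to e_ay alone, but the yaw row exposes it.
null_dir = np.array([alpha_r, -alpha_f])
seen_by_ay = (row_ay @ null_dir)[0]
seen_by_ez = (row_ez @ null_dir)[0]
```

Under these assumptions each single-error Jacobian has rank 1 while the stacked Jacobian has rank 2, so only the combined model pins down both parameters.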

Figure 4 shows the convergence of the estimated TLS over 50 s under Scenario 60 km/h (presented in Fig. 12), using the gradient descent method. Figure 4a presents the results for the front tires, and Fig. 4b for the rear tires. Each plot includes four curves: the TLS estimates obtained using the three error models (the lateral acceleration error model, the yaw acceleration error model, and the dual-error model) and the reference TLS values.

Fig. 4. TLS estimation with different error models under Scenario 60 km/h.

The results clearly indicate that the dual-error model outperforms the single-error models in terms of estimation accuracy, with errors measured using the Root Mean Square Error (RMSE) metric. When the algorithm reaches steady state, the RMSE values for the TLS are significantly lower for the dual-error model compared to the single-error models. These findings demonstrate that the dual-error model achieves superior performance in both convergence speed and estimation precision. This result supports the earlier analysis regarding the advantages of using a dual-error model in parameter estimation. By incorporating both lateral and yaw dynamics, the dual-error model offers a more comprehensive representation of the vehicle’s behavior, leading to notably improved estimation accuracy. The detailed RMSE values for the TLS can be found in Table 1.

Algorithm implementation

Given that the vehicle parameters \(l_f\) and \(l_r\) are known, and the system states \(a_y\) and \(\varepsilon _z\) are measured, the optimal estimate of TLS can be obtained by minimizing the errors \(e_{ay}\) and \(e_{\varepsilon z}\). Commonly employed approaches include the gradient descent and least squares methods, along with their respective variants. The subsequent sections discuss these techniques in detail and compare them from multiple perspectives to identify the most effective and efficient strategy for online TLS estimation.

Gradient descent method

Gradient descent is a widely used optimization method for parameter estimation [28], where the algorithm iteratively updates the model parameters in the direction of the negative gradient of a cost function to minimize the error between the predicted and observed outputs [29]. In TLS estimation, gradient descent is employed to refine the estimates by reducing discrepancies between the estimated and reference values. The principle of gradient descent is based on calculating the gradient (or derivative) of the error with respect to the parameters and adjusting the parameters to move towards the minimum error.

Principle of gradient descent method

The Gradient Descent Method (GDM) employed in this paper is an iterative optimization algorithm in which, at each iteration, the search direction is opposite to the gradient of the cost function J. This approach converges to the optimal solution provided that the cost function is convex and differentiable and the learning rate is suitably chosen. The update rule for GDM is given by:

$$\begin{aligned} \theta \leftarrow \theta -\lambda \nabla J(\theta ) \end{aligned}$$

where \(J(\theta )\) represents the cost function, \(\nabla J\) is the gradient, \(\lambda\) is the learning rate, and \(\theta \ =\ \left[ {\hat{C}}_f,\ {\hat{C}}_r\right] ^T\) is the vector of parameters to be estimated. Determining an appropriate cost function \(J(\theta )\) is central to the algorithm’s design and will be addressed in the following section. Figure 5 illustrates the application of GDM with the cost function. Figure 5a demonstrates the general working principle of gradient descent in the parameter space, while Fig. 5b emphasizes the strictly convex nature of the cost function. The convexity ensures that the algorithm converges reliably to a unique global minimum.

Fig. 5. Gradient descent method illustration.

The gradient of a function indicates the direction along which the function increases most rapidly. Therefore, the negative gradient points in the direction of fastest decrease. This is why the negative gradient is used in Eq. (15) for updating the system parameters \({\hat{C}}_f\) and \({\hat{C}}_r\).

Fig. 6. Gradient direction and search path.

As shown in Fig. 6, the gradient direction is perpendicular to the contour of the function. The directional derivative of J along a unit vector \(\mathbf{d}\) is governed by:

$$\begin{aligned} D_\mathbf{d}J(\theta )=\nabla J(\theta )^T\mathbf{d}=\left\| \nabla J(\theta )\right\| \cos \alpha , \end{aligned}$$

where \(\alpha\) is the angle between the gradient \({\nabla J}(\theta )\) and the directional vector \(\mathbf{d}\). When \(\alpha =180^\circ\) (i.e., when cos\(\alpha =-1\)), the directional derivative \(D_\mathbf{d}J(\theta )\) reaches its minimum. In this case, \(\mathbf{d}\) points in the opposite direction to the gradient, causing the function to decrease at the fastest rate. This demonstrates that the negative gradient direction, \(-{\nabla J}({\theta })\), leads to the greatest reduction in the cost function. Thus, the gradient descent method applies the negative gradient to iteratively update the parameters, ensuring that the cost function is minimized effectively. This principle underpins the application of gradient descent in the TLS estimation algorithm.
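The steepest-descent property can be verified numerically. The sketch below uses a hypothetical strictly convex quadratic cost (not the TLS cost function), samples unit directions around the circle, and picks the one with the smallest directional derivative.

```python
import numpy as np

# Hypothetical cost J(theta) = 0.5 * theta^T H theta with H positive definite.
H = np.array([[3.0, 1.0], [1.0, 2.0]])
theta = np.array([1.0, -2.0])
grad = H @ theta  # gradient of J at theta

# Unit vectors d sampled every 0.1 degree; D_d J = grad . d for each of them.
angles = np.linspace(0.0, 2.0 * np.pi, 3600, endpoint=False)
dirs = np.stack([np.cos(angles), np.sin(angles)], axis=1)
dir_derivs = dirs @ grad

# Direction with the most negative directional derivative.
best = dirs[np.argmin(dir_derivs)]
neg_grad_unit = -grad / np.linalg.norm(grad)
```

Up to the angular resolution of the grid, `best` coincides with the normalized negative gradient, and the minimum directional derivative equals the negative gradient norm.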

Frameworks of gradient descent method

A squared-error cost function for the errors \(e_{ay}\) and \(e_{\varepsilon z}\) is constructed. This cost function is then used by the gradient descent method to estimate \({\hat{C}}_f\) and \({\hat{C}}_r\). Let the cost functions for the errors \(e_{ay}\) and \(e_{\varepsilon z}\) be:

Accurate estimation of TLS requires the consideration of at least two distinct error models, namely \(e_{ay}\) and \(e_{\varepsilon z}\). As outlined in the previous section, combining these cost functions is essential for the reliable estimation of TLS. The joint error function for the dual-error model is given by:

where \(\gamma _{ay}\) and \(\gamma _{\varepsilon z}\) represent the weights assigned to the \(e_{ay}\) and \(e_{\varepsilon z}\) error models, respectively. The vectors \(e_{ay}\) and \(e_{\varepsilon z}\) are concatenated into a matrix, with the error terms \(e_{ay}\) and \(e_{\varepsilon z}\) arranged sequentially over time. The structure of these error vectors is as follows:

in which \(e_{ay}^i\), \(i = 1, 2,..., n\), represents the \(a_y\) error at time step i. Referring to Eq. (13), the corresponding states and control input become \(v_x^i\), \(v_y^i\), \(\omega _z^i\) and \(\delta ^i\), respectively. The same holds for \(e_{\varepsilon z}^i\). The gradient of the cost function with respect to \({\hat{C}}_f\) and \({\hat{C}}_r\) is:

The terms \(\frac{\partial e_{ay}}{\partial {\hat{C}}_f}\), \(\frac{\partial e_{ay}}{\partial {\hat{C}}_r}\), \(\frac{\partial e_{\varepsilon z}}{\partial {\hat{C}}_f}\), and \(\frac{\partial e_{\varepsilon z}}{\partial {\hat{C}}_r}\) are given as follows:

Note that \(\otimes\) here represents element-wise multiplication (point-wise operation) between vectors and/or matrices, and \(\mathbf{v}_x = \left[ v_x^1, v_x^2,...v_x^n\right] ^T\), \(\mathbf{v}_y= \left[ v_y^1, v_y^2,...v_y^n\right] ^T\), \(\mathbf {\delta } = \left[ \delta ^1, \delta ^2,...\delta ^n\right] ^T\), and \(\mathbf {\omega }_z= \left[ \omega _z^1, \omega _z^2,...\omega _z^n\right] ^T\). The vector version of the gradient, as given in Eq. (17), is concise and mathematically efficient, but it hides several details that are important for a complete understanding of the system’s structure. Thus, the following equivalent version with a more explicit expression is given:

where \({\nabla }J_i\) is the gradient of the local cost function \(J_i=\frac{1}{2}\left( \gamma _{ay}e_{ay}^ie_{ay}^i+\gamma _{\varepsilon z}e_{\varepsilon z}^ie_{\varepsilon z}^i\right)\). Hence, the global cost function in Eq. (16) can be rewritten as:

Consequently, the local gradient \({\nabla }J_i\) is further expressed as:

where the Jacobian matrix \(\left[ \frac{\partial e_{ay}^i}{\partial {\hat{C}}_f}, \frac{\partial e_{ay}^i}{\partial {\hat{C}}_r}; \frac{\partial e_{\varepsilon z}^i}{\partial {\hat{C}}_f}, \frac{\partial e_{\varepsilon z}^i}{\partial {\hat{C}}_r}\right]\) represents the instantaneous realization of Eq. (18) at the given time step.

It is now clear that the gradient defined by Eq. (17) is just an average of all the local gradients from time step 1 to n, and is therefore heavily filtered once n is sufficiently large. Essentially, the most recent gradients in the time series have almost no effect on the TLS estimate. Thus, the estimator cannot follow quick variations in road conditions, which can at times dominate the TLS. To address this, three gradient descent methods are developed in this paper for the online estimation of TLS, each using a different number of local gradients. A comparison and analysis of the effectiveness of these three methods is presented later in this section.

Single-Step Gradient Descent (sGD) method: In this approach, only the most recent sample is used to calculate the gradient. This allows the TLS to be updated efficiently at each iteration with this single-step gradient, so that the estimate can follow rapid changes in road and tire conditions. With sGD, the cost function becomes

and the gradient becomes

Here n stands for the current time step. The apparent drawback of sGD is that updating with the instantaneous gradient introduces randomness. This randomness may originate from external condition variations, measurement noise, and uncertainties in modeling and numerical approximations.

Mini-Batch Gradient Descent (bGD) method: In the mini-batch algorithm framework, the system gradient is the average of the local gradients over the past k time steps. By appropriately selecting the mini-batch size k, this method balances the suppression of unnecessary fluctuations in the estimates (stability) against the ability to track rapid dynamic changes in the parameters (convergence). Defining \(n_k=n-k+1\ge 0\) yields the cost function of bGD

and gradient as

Full Gradient Descent (fGD) method: This is the method developed at the beginning of this subsection. It offers the most stable and smooth estimation but responds most slowly to parameter changes. The cost function and gradient of fGD are given by Eqs. (16) and (19). For completeness, they are restated as

and

Note that n here is a rolling variable, which grows with time. The estimation algorithms of sGD, bGD, and fGD are compared and summarized in Fig. 7. The loss functions \(J_i\) and their development over the time steps are illustrated in Fig. 8. It is clear that the loss function gradually converges over time.

Fig. 7. Gradient descent algorithms, \(m_k=m-k+1\).

Fig. 8. Illustration of cost functions for gradient descent methods.

Here, x represents the vehicle state, y represents the system output, u represents the system input, and \(C_f^*\) is the reference value for TLS. Each of the above gradient descent methods provides a unique trade-off between convergence speed and system stability.
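The trade-off between the three window sizes can be illustrated with a small sketch. It does not use the vehicle model: a synthetic scalar regression \(y_i=\phi _i^T\theta\) with a step change in \(\theta\) stands in for the error model, and the function name, learning rate, window length and noise level are all hypothetical choices.

```python
import numpy as np

def windowed_gd(Phi, y, k, lr, theta0):
    """Gradient descent where the step at time n averages the local
    gradients of the last k samples (k=1: sGD, k>1: bGD, k=None: fGD)."""
    theta = np.array(theta0, dtype=float)
    history = np.empty((len(y), theta.size))
    for n in range(len(y)):
        start = 0 if k is None else max(0, n - k + 1)
        P, t = Phi[start:n + 1], y[start:n + 1]
        residual = P @ theta - t            # e_i for each sample in the window
        grad = P.T @ residual / len(t)      # mean of local gradients e_i * phi_i
        theta -= lr * grad
        history[n] = theta
    return history

rng = np.random.default_rng(0)
n_steps = 2000
Phi = rng.normal(size=(n_steps, 2))         # synthetic, persistently exciting regressors
first_half = np.arange(n_steps)[:, None] < n_steps // 2
theta_true = np.where(first_half, [1.0, 0.8], [0.5, 0.4])  # step change halfway
y = np.sum(Phi * theta_true, axis=1) + 0.01 * rng.normal(size=n_steps)

est_sgd = windowed_gd(Phi, y, k=1, lr=0.05, theta0=[0.0, 0.0])
est_bgd = windowed_gd(Phi, y, k=20, lr=0.05, theta0=[0.0, 0.0])
est_fgd = windowed_gd(Phi, y, k=None, lr=0.05, theta0=[0.0, 0.0])

# Distance of the final estimate from the parameters valid at the last step.
final_error = {name: np.linalg.norm(est[-1] - theta_true[-1])
               for name, est in [("sGD", est_sgd), ("bGD", est_bgd), ("fGD", est_fgd)]}
```

In this toy setting the single-step and mini-batch variants re-converge after the step change, whereas the full-window variant remains biased toward the average of the old and new parameters, which mirrors the qualitative behavior described above.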

In order to analyze, evaluate, and compare the performance of the three methods in estimating TLS, numerical simulations were conducted. As in the previous section, the test condition is Scenario 60 km/h, the details of which are provided in Fig. 12. The methods used for estimation are sGD, bGD, and fGD. Figure 9a and b show the convergence of the TLS over a 50 s period. Each plot contains four curves, representing the TLS estimated with the different gradient methods alongside the reference TLS. The purpose of these simulations is to assess the effectiveness of these methods.

Fig. 9. TLS estimation with different gradient methods under Scenario 60 km/h.

The results indicate that, for the estimation of TLS, the sGD and bGD methods both converge rapidly; however, the fGD method converges more slowly and has difficulty reaching an accurate estimate because of the averaging in the global gradient (as shown in Fig. 9c). Therefore, the subsequent discussion will not focus on the fGD method. The specific RMSE values are presented in Table 2.

Improvements of the gradient descent search

Direct application of the gradient descent method, especially sGD, results in fluctuating parameter estimates. It is well known that, with an exact line search, consecutive steepest-descent directions are perpendicular, which produces a zig-zag search path. Thus, the plain gradient descent search is not very effective in general, and several improvements are introduced below.

Momentum: With the momentum method [30], the current gradient is no longer used directly to update the parameters; a filtered version is used instead. The filtered gradient is called the gradient velocity, and it is computed as a weighted average of the previous velocity and the current gradient. This is effectively a first-order filter.

where \(\mathbf{M}_g\) is the filtered gradient, with \(w_m\) controlling the filter bandwidth. When consecutive gradients align, the gradient velocity increases, leading to larger parameter updates. Conversely, frequent direction changes reduce the velocity, decreasing the update step and preventing oscillations.
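A minimal sketch of the momentum update is given below, assuming the common exponential-averaging form \(\mathbf{M}_g\leftarrow w_m\mathbf{M}_g+(1-w_m)\nabla J\) followed by \(\theta \leftarrow \theta -\lambda \mathbf{M}_g\). The exact form used in the paper may differ. The quadratic test cost and all numerical values are hypothetical.

```python
import numpy as np

def momentum_step(theta, grad, velocity, lr=0.1, w_m=0.9):
    """One momentum update (assumed form): the velocity is a first-order
    low-pass filter of the gradient and replaces it in the parameter step."""
    velocity = w_m * velocity + (1.0 - w_m) * grad
    theta = theta - lr * velocity
    return theta, velocity

# Toy demonstration on an ill-conditioned quadratic J = 0.5 * theta^T H theta.
H = np.diag([1.0, 25.0])
theta_m = np.array([5.0, 5.0])
vel = np.zeros(2)
for _ in range(300):
    theta_m, vel = momentum_step(theta_m, H @ theta_m, vel, lr=0.3, w_m=0.9)
```

Here \(w_m\) plays the role of the filter bandwidth: values closer to 1 give smoother but slower-reacting updates.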

RMSProp: The core concept of RMSProp is to adapt the learning rate based on the magnitude of recent gradients. Specifically, RMSProp uses an exponentially weighted moving average of squared gradients, ensuring a smooth adaptation process.

where \(s_g^i\) is the filtered \(l^2\) norm of the local gradient \({\nabla }\mathbf{J}_{n}\), with \(w_r\) controlling the filter’s bandwidth. A small positive \(\epsilon\) prevents division by zero. RMSProp’s key advantage is its ability to adapt the learning rate, avoiding monotonically decreasing rates over time.
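The following sketch shows the widely used element-wise form of RMSProp, which keeps one filtered squared gradient per parameter. The paper describes a filtered norm of the local gradient, so its exact formulation may be a scalar variant of what is assumed here. The test gradient and default values are hypothetical.

```python
import numpy as np

def rmsprop_step(theta, grad, s, lr=0.01, w_r=0.9, eps=1e-8):
    """One RMSProp update (assumed element-wise form): s is the exponentially
    weighted average of squared gradients and rescales the step."""
    s = w_r * s + (1.0 - w_r) * grad**2
    theta = theta - lr * grad / (np.sqrt(s) + eps)
    return theta, s

# Gradients that differ by four orders of magnitude between the two parameters.
H = np.diag([1.0, 1.0e4])
theta0 = np.array([1.0, 1.0])
theta1, s1 = rmsprop_step(theta0, H @ theta0, np.zeros(2))
first_step = theta0 - theta1  # step size taken in each coordinate
```

Because each step is divided by the filtered gradient magnitude, both coordinates move by nearly the same amount in the first iteration despite the very different gradient scales.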

Adaptive Moment Estimation (Adam): Adam is a popular optimization algorithm in deep learning, combining Momentum and RMSProp. It adapts the learning rate for each parameter by estimating the first moment (mean) and second moment (uncentered variance) of the gradient [31].

Adam’s adaptive learning rate excels in varying gradient and parameter conditions, but it introduces additional hyperparameters (e.g., \(w_{a1}\) and \(w_{a2}\)) that require careful tuning for optimal performance.
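For reference, a sketch of the standard Adam update with bias correction follows. The weights \(w_{a1}\) and \(w_{a2}\) are mapped onto the usual first- and second-moment decay rates, which is an assumption about the paper's notation, and the default values are the common ones from the deep learning literature rather than values tuned for TLS estimation.

```python
import numpy as np

def adam_step(theta, grad, m, v, t, lr=0.05, w_a1=0.9, w_a2=0.999, eps=1e-8):
    """One Adam update (standard form); t is the 1-based iteration count."""
    m = w_a1 * m + (1.0 - w_a1) * grad        # filtered gradient (first moment)
    v = w_a2 * v + (1.0 - w_a2) * grad**2     # filtered squared gradient (second moment)
    m_hat = m / (1.0 - w_a1**t)               # bias correction for zero initialization
    v_hat = v / (1.0 - w_a2**t)
    theta = theta - lr * m_hat / (np.sqrt(v_hat) + eps)
    return theta, m, v

# Same badly scaled gradient as a quick check of the step normalization.
H = np.diag([1.0, 1.0e4])
theta0 = np.array([1.0, 1.0])
theta1, m1, v1 = adam_step(theta0, H @ theta0, np.zeros(2), np.zeros(2), t=1)
first_step = theta0 - theta1
```

With bias correction, the very first step has magnitude close to the learning rate in every coordinate, independent of the gradient scale.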

The previously mentioned gradient descent methods and the associated improvements in the descent direction and learning rate are briefly summarized in Table 3.

Recursive least-squares method

The recursive least squares method builds upon the traditional least squares approach by enabling online parameter estimation. This method retains the effectiveness of historical data, and can be designed to improve computational efficiency significantly.

Cost function of least-squares method

In order to construct the cost function for the recursive least-squares method, the error model of the system given by Eqs. (13) and (14) is represented in matrix form as

or, more compactly,

Compared with Eq. (23), the roles of the scaled output \(y_m\) and the equivalent state \(x_\phi\) (which combines the system states and parameters) become clear. This error model can be further reduced by incorporating the weighted sum of \(e_{ay}\) and \(e_{\varepsilon z}\) as in Eq. (16),

where the weight vector is \(\mathbf {\gamma }=\left[ \gamma _{ay},\gamma _{\varepsilon z}\right] ^T\). Note that the combined error e and the equivalent output y are scalar variables. This yields the least-squares cost function

where

the estimation of parameter \(\theta\) is rooted in minimizing the cost function J

\(\theta =\theta ^*\) when the cost function J is at its minimum, which gives the best prediction.

Principle of least-squares method

The principle of the least-squares method is that an accurate parameter estimate \(\theta\) minimizes the above cost function J, which implies a minimized output error between the measurement and the observation; this is similar to gradient descent. The derivation of the estimate is as follows

where n is the number of samples. Taking the derivative of J with respect to \(\theta\) yields

the minimum of \(J(\theta )\) is reached when the partial derivative vanishes (namely \(\partial J/\partial \theta \ =\ 0\)), hence

Similar to Eq. (15), the loss function in Eq. (25) represents the sum of all errors from step 1 to step n. As n becomes sufficiently large, the errors in the later part of the time series have almost no effect on the estimation of TLS, making it difficult to track the variation of TLS during actual driving. To address this issue, three least-squares methods based on different sizes of the historical data window are proposed, aimed at better capturing and tracking the dynamic changes of TLS.

Note that the number of samples n does not appear in the solution for estimating \(\theta\). Therefore, using the squared error or the mean squared error as the cost function makes no difference from the parameter estimation point of view, and the squared error cost function is adopted hereinafter. Although the inverse of \(X^TX\) exists as long as \(n\ge 2\) and the regressors are linearly independent, deriving \(\theta\) in this way is both impractical and inefficient when n becomes sufficiently large. Note here that \(X^TX\) is the Hessian matrix of the cost function J.
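For a two-parameter model such as \(\theta =[C_f, C_r]^T\), the closed-form solution \(\theta =(X^TX)^{-1}X^TY\) can be sketched in a few lines of Python. The regressor values and the "true" parameters below are made-up numbers used only to check the algebra, not data from the paper.

```python
def solve_normal_equations(X, y):
    """Return theta = (X^T X)^{-1} X^T y for a two-parameter linear model."""
    # Entries of the symmetric 2x2 matrix X^T X.
    a11 = sum(x[0] * x[0] for x in X)
    a12 = sum(x[0] * x[1] for x in X)
    a22 = sum(x[1] * x[1] for x in X)
    # Entries of the vector X^T y.
    b1 = sum(x[0] * yi for x, yi in zip(X, y))
    b2 = sum(x[1] * yi for x, yi in zip(X, y))
    det = a11 * a22 - a12 * a12
    if abs(det) < 1e-12:
        raise ValueError("X^T X is singular: regressors are linearly dependent")
    # Closed-form inverse of a 2x2 matrix applied to X^T y.
    return [(a22 * b1 - a12 * b2) / det, (a11 * b2 - a12 * b1) / det]


X = [[1.0, 0.0], [0.5, 1.0], [-1.0, 2.0], [2.0, -0.5]]   # hypothetical regressors
theta_true = [2.0, -3.0]                                  # hypothetical parameters
y = [x[0] * theta_true[0] + x[1] * theta_true[1] for x in X]
theta_hat = solve_normal_equations(X, y)
```

Every new sample would require the sums over all n rows to be rebuilt, which is the inefficiency that motivates the recursive forms.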

Frameworks of least-squares methods

Iterative least squares methods are proposed in this section for the purposes of efficient computation and fast response.

Recursive Least Squares (RLS): This approach updates the estimation by processing all available data sequentially. When new data become available, RLS incrementally adjusts the estimation without needing to recompute from scratch, making it efficient for real-time applications.

Re-packing the output vector \(\mathbf{Y}\) and the equivalent state matrix \(\mathbf{X}\) in Eq. (26) as:

then yields a new version of Eq. (27) as

introducing

by applying the Sherman-Morrison formula, which is a special case of the Woodbury matrix identity,

a recursive expression of \(P_\mathbf{1}^\mathbf{n}\) becomes

substituting the above into Eq. (28), the expression for the recursive least-squares method becomes

The derivation of the above representation is detailed in Chapter 9 of Hayes32. Both frameworks operate in an online setting, but they offer distinct advantages in terms of computational cost and convergence speed based on the scope of each update. Defining the feedback gain \(k_{rls}\) as

then

where \(e=y_n-x_n^T\theta ^{n-1}\) is the error between the weighted output and the observation based on the current estimation.
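The recursion can be sketched in Python for the two-parameter case as follows. This is the textbook RLS form (gain, error correction, Sherman-Morrison update of the inverse); the initial value of `P`, the sinusoidal regressors, and the parameter values are assumptions chosen for the example.

```python
import math


def rls_step(theta, P, x, y):
    """One recursive least-squares update for the model y ~ x^T theta (2 parameters)."""
    # P x, where P is the running inverse of X^T X (symmetric 2x2).
    Px = [P[0][0] * x[0] + P[0][1] * x[1], P[1][0] * x[0] + P[1][1] * x[1]]
    denom = 1.0 + x[0] * Px[0] + x[1] * Px[1]
    # Feedback gain k_rls = P x / (1 + x^T P x).
    k = [Px[0] / denom, Px[1] / denom]
    # Error between the new output and its prediction from the previous estimate.
    e = y - (x[0] * theta[0] + x[1] * theta[1])
    theta = [theta[0] + k[0] * e, theta[1] + k[1] * e]
    # Sherman-Morrison: P <- P - (P x)(P x)^T / (1 + x^T P x), valid for symmetric P.
    P = [[P[i][j] - Px[i] * Px[j] / denom for j in range(2)] for i in range(2)]
    return theta, P


theta_true = [2.0, -3.0]                 # hypothetical parameters
theta = [0.0, 0.0]
P = [[1e4, 0.0], [0.0, 1e4]]             # large initial P: weak prior on theta
for n in range(200):
    x = [math.sin(0.1 * n), math.cos(0.3 * n)]   # hypothetical exciting regressors
    y = x[0] * theta_true[0] + x[1] * theta_true[1]
    theta, P = rls_step(theta, P, x, y)
```

No matrix inversion is performed inside the loop; each step costs a fixed number of operations regardless of n.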

The error model contains the parameter information, though indirectly: driving \(e=0\) leads to \(e_\theta =0\), where \(e_\theta =\left[ e_{cf},e_{cr}\right] ^T\). Namely, by regulating the parameters \(C_f\) and \(C_r\), the observed \(a_y\) and \(\varepsilon _z\) track the measured \(a_y\) and \(\varepsilon _z\) according to the modeled system, and the estimated TLS converges to the true value automatically.

Rewriting Eq. (26) as:

where

Apparently, \({\nabla J}_n\) denotes the gradient of the cost function at time-step n. As shown in Eq. (15), both the gradient descent method (GDM) and recursive least squares (RLS) share a similar structure in updating parameters. The main difference lies in the learning mechanism: GDM uses a manually tuned learning rate \(\lambda\), while RLS determines the update rate through the Hessian matrix \(\mathbf{X}^T\mathbf{X}\), i.e., the second derivative of the cost function.

In addition, the original GDM computes an average gradient over past steps, which improves stability but reduces responsiveness to TLS changes. RLS, by contrast, uses only the current gradient but incorporates second-order information recursively, enabling faster adaptation. To address these trade-offs, improved GDM variants–widely adopted in deep learning–are discussed, and flexible RLS versions are introduced in the subsequent analysis to enhance adaptability.

Single-step learning rate RLS (sLS): In this framework, only the most recent Hessian matrix is used to calculate the global learning rate. This allows a quick update of the learning rate, so the estimator can follow rapid changes in road and tire conditions. sLS is governed by the following:

Please note here \(\lambda\) is a \(2\times 2\) matrix. This is a major difference from the learning rate of the gradient method, which is a scalar.

Mini-Batch learning rate RLS (bLS): In this framework, only a subset of previous Hessian matrices is applied for updating the learning rate. This selective updating makes it more efficient at large time-step n, where updates must be made rapidly under some special external conditions. bLS is managed by the following:

where \(n_k=n-k+1\), and \(n_k=1\) if \(k \ge n\). The first two steps above essentially calculate

but in an iterative and efficient way.
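A rough Python sketch of the mini-batch idea is given below. It assumes that the learning rate is the inverse of the sum of the last k single-step Hessians \(x_ix_i^T\) and that the update is \(\theta ^n=\theta ^{n-1}+\lambda x_n e\). For clarity the window sum is recomputed directly rather than updated iteratively, and a small regularization `delta` is added so that the matrix stays invertible. The window length, regressors, and parameter values are hypothetical.

```python
import math
from collections import deque


def bls_step(theta, window, x, y, delta=1e-6):
    """One mini-batch-learning-rate update for the model y ~ x^T theta (2 parameters)."""
    window.append(x)  # a deque with maxlen=k discards the oldest regressor itself
    # Windowed Hessian S = delta * I + sum of x_i x_i^T over the window.
    s11 = delta + sum(xi[0] * xi[0] for xi in window)
    s12 = sum(xi[0] * xi[1] for xi in window)
    s22 = delta + sum(xi[1] * xi[1] for xi in window)
    det = s11 * s22 - s12 * s12
    # Prediction error with the previous estimate.
    e = y - (x[0] * theta[0] + x[1] * theta[1])
    # Matrix learning rate S^{-1} applied to x, scaled by the error.
    step0 = (s22 * x[0] - s12 * x[1]) / det * e
    step1 = (s11 * x[1] - s12 * x[0]) / det * e
    return [theta[0] + step0, theta[1] + step1]


k = 20                                   # hypothetical mini-batch size
window = deque(maxlen=k)
theta = [0.0, 0.0]
for n in range(600):
    # Hypothetical true parameters with a 20% jump halfway through the run.
    theta_true = [2.0, -3.0] if n < 300 else [2.4, -3.6]
    x = [math.sin(0.1 * n), math.cos(0.3 * n)]
    y = x[0] * theta_true[0] + x[1] * theta_true[1]
    theta = bls_step(theta, window, x, y)
```

Because old regressors leave the window, the learning rate does not shrink as n grows, which lets the estimate follow the jump in the example.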

Full-size learning rate RLS (fLS): In this framework, all the previous Hessian matrices are involved in the learning rate calculation. Thus, as n grows large, the learning rate will remain nearly unchanged. This is favorable in terms of learning rate stability, but unfavorable when estimating varying TLS. fLS is managed by

The proposed variants of LS methods are briefly summarized in Table 4.

Figure 10 illustrates the convergence of the TLS estimates over a 50 s period under Scenario 60 km/h, with the aim of comparing how the amount of historical information used affects least-squares estimation. The figure includes four curves: the TLS estimates obtained using the three LS algorithms (sLS, bLS, and fLS) and the reference TLS values.

TLS estimation with different LS methods.

As shown in Fig. 10, the sLS method can track the actual value to some extent, but it is highly sensitive to noise, leading to significant oscillations in the estimates. The fLS method, by contrast, accumulates historical data over time, which makes it difficult for the algorithm to converge quickly and reduces its sensitivity to changes in TLS. Therefore, neither the sLS nor the fLS method is suitable for TLS estimation.

In contrast, the bLS method achieves a good balance between stability and convergence speed. By limiting the length of the historical data window, this method improves both stability and accuracy.

Thus, in real-time estimation tasks, the bLS method demonstrates superior convergence and robustness, making it more suitable for estimating TLS. The subsequent discussion will focus solely on the bLS method, with the other two algorithms no longer considered.

Algorithm summary

This section introduces two categories of algorithms for online estimation of TLS: GDM and RLS, along with several of their respective variants. A central contribution of this work is the recognition that RLS inherently operates as a gradient-based method with an adaptive learning rate. Based on this observation, a modified RLS framework is proposed, in which the learning rate component is redesigned to enhance responsiveness. This modification effectively addresses the traditional RLS method’s limited real-time adaptability, improving its performance in dynamic environments.

The primary difference between GDM- and RLS-based approaches lies in their respective adaptation strategies. GDM-based frameworks perform adaptive gradient direction updates, while RLS-based methods focus on adjusting the learning rate. This distinction enables both classes of algorithms to accurately and efficiently track changes in TLS under varying road and vehicle conditions.

As shown in Table 5, representative variants from each algorithmic family demonstrate these differences: GDM variants adapt the gradient descent direction, whereas RLS variants adjust the learning step size through recursive updates. The cost function employed throughout this study is constructed as a sum of squared errors derived from a dual-error model. This model retains strict convexity even in the presence of sensor noise, which ensures consistent convergence to the global minimum for both algorithm types under nominal conditions. Theoretical stability analyses of such convex optimization-based adaptive algorithms have been extensively studied in the literature, such as in Data-Driven Model-Free Controllers33, where detailed proofs are provided. As the focus of this study is on algorithmic improvement and real-time application, these analyses are not repeated here.

Although the mathematical formulations may not immediately reflect the comparative advantages of these approaches, the combination of strict convexity, low computational complexity, and efficient memory usage makes both GDM and RLS frameworks suitable for real-time embedded implementation. Detailed performance evaluations under various scenarios are provided in the subsequent simulation sections.

Simulation and analysis

This section evaluates the performance of the different algorithms. The simulations are primarily conducted in Matlab/Simulink (2023a), which is used to build both the vehicle model and the estimation algorithm, as illustrated in Fig. 11. To assess the accuracy of the estimation methods, white noise is added to the measured output signals. Based on the analysis and simulations conducted earlier in this paper, the subsequent simulations and analyses include six algorithms: two gradient-based algorithms utilizing different time step sizes (sGD, bGD), three variants of the gradient algorithms (Momentum, RMSProp, Adam), and the bLS algorithm.

Algorithm framework in Matlab/Simulink.

Experimental setup

Three representative driving scenarios are considered in this study: Scenario 20 km/h, 60 km/h, and 120 km/h, corresponding to low-, medium-, and high-speed driving conditions, respectively. The 20 km/h scenario is derived from a campus environment to represent typical low-speed operation. The 60 km/h case follows settings commonly used in the literature34 for medium-speed evaluation, while the 120 km/h scenario reflects high-speed highway conditions. These scenarios collectively cover a wide range of real-world driving situations. The corresponding road profiles are illustrated in Fig. 12.

To simulate real-world disturbances, white Gaussian noise is added at the output of the vehicle model. The injected noise has a bandwidth of 10 Hz, with amplitude adjusted based on the magnitude of the output signal–typically within ±0.02 or ±0.05. This ensures that the robustness and accuracy of the estimation algorithm are evaluated under realistic conditions. Detailed vehicle parameters and the sampling time Ts are listed in Appendix Symbols, while the hyper-parameter settings used in the proposed algorithms are discussed in section “Design of hyper-parameters”.

Test scenarios.

The satellite image was obtained from the official website of Baidu Maps (https://map.baidu.com)35.

Design of hyper-parameters

The algorithmic framework proposed in this study involves several hyper-parameters whose appropriate selection significantly affects estimation performance. These include learning rates, mini-batch sizes, and algorithm-specific parameters such as momentum factors or exponential decay rates. This section does not attempt to exhaustively list all parameter values (those are summarized in Table 6), but instead focuses on illustrating the general methodology used for parameter tuning.

To demonstrate the selection process, three representative examples are presented: the tuning of the learning rate in the sGD algorithm, the mini-batch size in the bGD algorithm and the learning rate in Adam algorithm. In these cases, simulation experiments were conducted at Scenario 60 km/h to evaluate the effect of different parameter values on estimation performance.

Figure 13 presents the estimation results of the sGD algorithm for five different learning rates. A learning rate that is too small leads to slow convergence, while one that is too large causes oscillations or divergence. A reasonably chosen learning rate strikes a balance between convergence speed and estimation stability.
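The qualitative effect of the learning rate can be reproduced on a deliberately simple scalar problem. The sketch below is not the paper's vehicle model: the constant regressor `x`, the target `y`, and the three learning rates are hypothetical values chosen so that the three regimes are easy to see.

```python
def sgd_error_after(lr, steps=50, theta0=0.0, x=1.0, y=2.0):
    """Run single-step gradient descent on 0.5 * (y - x*theta)^2 and return |error|."""
    theta = theta0
    for _ in range(steps):
        grad = -x * (y - x * theta)   # gradient of the squared-error cost
        theta -= lr * grad
    return abs(theta - y / x)


# Too small, reasonable, and too large learning rates (hypothetical values).
errors = {lr: sgd_error_after(lr) for lr in (0.01, 0.5, 2.5)}
```

In this toy case the error is multiplied by `(1 - lr)` at every step, so `lr = 0.01` is still far from the target after 50 steps, `lr = 0.5` has converged, and `lr = 2.5` diverges because the magnitude of the factor exceeds one.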

Estimation performance of sGD with different learning rates in Scenario 60 km/h.

Figure 14 shows the performance of the bGD algorithm with five mini-batch sizes. A smaller mini-batch size causes larger oscillations and slower convergence, as it makes the algorithm more sensitive to TLS variations. In contrast, a larger mini-batch size results in slower convergence and reduced sensitivity to changes in TLS. A reasonably chosen batch size ensures a balance between convergence speed and responsiveness.

Estimation performance of bGD with different mini-batch sizes under Scenario 60 km/h.

Figure 15 illustrates the impact of different learning rates in the Adam algorithm. As with sGD, a learning rate that is too small slows convergence, while one that is too large induces oscillations or divergence. A reasonably chosen learning rate provides a balance between convergence speed and stability.

Estimation performance of Adam with different learning rates under Scenario 60 km/h.

The parameter selection process was based on empirical testing, ensuring a balance between convergence speed, stability, and responsiveness for real-time TLS estimation. The chosen values for parameters were determined through systematic simulation and analysis. Other algorithm parameters were also carefully tuned, as summarized in Table 6, which lists the values for these parameters used throughout the experiments.

Results and analysis

Dynamic response curves

To assess the response of the proposed algorithms to varying TLS under various driving conditions, a 60 s simulation was performed with a sampling time of 0.01 s. A deliberate sudden \(20\%\) increase in TLS is injected into the system at \(t=30\) s. This is mainly for validating the system performance under extreme road conditions.

Results of \(C_f\) estimation.

Results of \(C_r\) estimation.

Figures 16 and 17 present the simulation results for TLS estimation across six methods. Each figure consists of three subplots representing Scenario 20 km/h, 60 km/h, and 120 km/h, showing the response curves for six methods: sGD, bGD, Momentum, RMSProp, Adam, and bLS. The results indicate that three methods (RMSProp, Adam, and bLS) converge to the reference values for both the front and rear TLS across all conditions.

While sGD converges quickly at medium and high speeds, its performance significantly deteriorates at low speeds due to large gradient variations and the fixed learning rate, which limits its adaptability. Similarly, bGD and Momentum also face convergence issues. bGD shows smoother gradient variations but still struggles with adaptability due to its fixed learning rate, converging only in high-speed conditions. Momentum, despite utilizing historical data, remains affected by the fixed learning rate, preventing effective adaptation to speed changes.

In contrast, both RMSProp and Adam exhibit fast, accurate convergence across all speeds. Their gradient normalization reduces the impact of varying driving conditions, ensuring stable performance even in dynamic environments. This allows both algorithms to maintain convergence without being affected by speed fluctuations or noise.

The bLS method, relying on a small batch of historical data, demonstrates good stability and faster response. This enables the algorithm to effectively handle noise while quickly adapting to changes in the system.

The following sections will analyze the accuracy, adaptability, and performance of RMSProp, Adam, and bLS in more detail, discussing the strengths and limitations of these methods under varying driving conditions.

Analysis

In order to further analyze the performance of the algorithms proposed in this study, three evaluation metrics are introduced to provide a comprehensive assessment of their effectiveness. These metrics are designed to evaluate different aspects of algorithm performance, allowing for a thorough comparison based on responsiveness, accuracy, and stability. The metrics are as follows:

Relative Steady-State Error (RSSE): The average absolute error during the steady-state period, normalized by the reference value and expressed as a percentage.

Response Time (t10): The time required for the estimated value to enter the \(\pm 10\%\) error band after a step change is triggered.

Overshoot (\(\sigma \%\)): The relative percentage of the peak estimation error compared to the true value.

These three evaluation metrics were chosen to comprehensively assess different aspects of algorithm performance. The RSSE evaluates both steady-state accuracy and fluctuation control, providing an immediate measure of the algorithm’s precision and consistency. The t10 metric measures the speed at which the algorithm adapts to changes, reflecting its ability to track dynamic variations with minimal delay. \(\sigma \%\) indicates the algorithm’s stability, with a smaller overshoot signifying better control over large deviations and preventing oscillations. Together, these metrics provide a balanced evaluation of responsiveness, stability, and accuracy, enabling a thorough comparison of the algorithms’ strengths and weaknesses for real-time TLS estimation.
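One possible way to compute the three metrics from a sampled response is sketched below. The exact windowing used in the paper is not specified, so the choice of steady-state samples, the "first entry into the band" rule for t10, and the restriction of the overshoot to an upward step are assumptions; the sample values are invented for the example.

```python
def rsse(estimates, reference):
    """Mean absolute error over the given steady-state samples, in percent of reference."""
    mean_abs_error = sum(abs(v - reference) for v in estimates) / len(estimates)
    return 100.0 * mean_abs_error / abs(reference)


def response_time(times, estimates, reference, t_step, band=0.10):
    """Time after t_step at which the estimate first enters the +/-band error band."""
    for t, v in zip(times, estimates):
        if t >= t_step and abs(v - reference) <= band * abs(reference):
            return t - t_step
    return None  # the band was never reached


def overshoot(estimates, reference):
    """Peak excursion above the reference, in percent (assumes an upward step)."""
    peak = max(v - reference for v in estimates)
    return 100.0 * max(peak, 0.0) / abs(reference)


# Invented response to a step of the reference from 100 to 120 at t = 30 s.
times = [30.0, 30.5, 31.0, 31.5, 32.0, 32.5]
estimates = [100.0, 105.0, 110.0, 123.0, 121.0, 120.0]
reference = 120.0

t10 = response_time(times, estimates, reference, t_step=30.0)
sigma = overshoot(estimates, reference)
steady_error = rsse(estimates[-2:], reference)   # last two samples taken as steady state
```

For the invented samples, the estimate first enters the band at 31.0 s, the peak lies 3 units above the reference, and the last two samples deviate by 0.5 units on average.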

Performances of different TLS estimation methods.

Figure 18 presents a comparison of the RMSProp, Adam, and bLS algorithms across three evaluation metrics. A comprehensive analysis of these metrics reveals that, under Scenario 20 km/h, both the RSSE and t10 values perform significantly worse compared to the other two conditions. This is due to the fact that, at lower speeds, noise constitutes a larger proportion of the system’s output. As a result, under the same noise intensity, the low-speed condition is more susceptible to the effects of noise. Additionally, the RSSE for the front tires at low speeds is noticeably higher than that for the rear tires. This can be attributed to the fact that the front tire estimation is influenced by the steering angle, making the algorithm more complex compared to the rear tire estimation.

The three algorithms perform well across all three evaluation metrics, meeting the requirements for real-time TLS estimation. In the 20 km/h scenario, the bLS algorithm exhibits a faster response compared to the two gradient-based variants, though it comes with a slightly higher \(\sigma \%\). Meanwhile, the difference between RMSProp and Adam is marginal; RMSProp yields a slightly higher RSSE but a lower t10 compared to Adam.

Overall, all three algorithms are capable of performing online TLS estimation, with only minor differences in performance. These differences can be leveraged to apply the most suitable algorithm depending on the specific control objectives, leading to improved control performance in various scenarios.

Conclusion

This study separately introduces Gradient Descent Methods (GDMs) from deep learning and Recursive Least Squares (RLS), commonly used for parameter identification. Based on the dual-error model, these algorithms are modified to enable their application in online TLS estimation. The results demonstrate that the three algorithms–RMSProp, Adam, and bLS–perform well across three key metrics: RSSE, t10, and \(\sigma \%\). Specifically, RMSProp and Adam show good stability in TLS estimation, while the bLS algorithm delivers superior response time, particularly in low-speed conditions.

Different algorithms exhibit varying strengths depending on the specific conditions of the control system. RMSProp and Adam are particularly well-suited for scenarios involving relatively stable parameter variations, offering high stability and reliable steady-state performance. Conversely, the bLS algorithm demonstrates superior responsiveness, making it more appropriate for highly dynamic environments or low-speed operations. Nevertheless, certain limitations were observed–for example, RMSProp and Adam tend to exhibit slower responsiveness under steady-state conditions (e.g., during constant-speed straight-line driving), and the presence of noise can degrade performance in low-speed situations.

The proposed algorithms are characterized by computational simplicity and minimal memory consumption, which makes them well-suited for real-time deployment on embedded systems in automotive applications. Their efficiency and capacity for online adaptation underscore their potential for practical use in autonomous vehicle platforms. Future research will aim to further improve their robustness and estimation accuracy by analyzing the influence of sensor noise and addressing the common challenge of insufficient excitation in steady-state conditions–an inherent issue in gradient-based approaches. Additionally, ongoing work will explore extensions to accommodate more complex and dynamic driving scenarios, with the goal of integrating these algorithms seamlessly into autonomous vehicle control architectures.

Data availability

The data used and/or analysed during the current study are available from the corresponding author on reasonable request.

Abbreviations

- \(a_y\): Measured vehicle lateral acceleration (m/s\(^2\))
- \({\hat{a}}_y\): Observed vehicle lateral acceleration (m/s\(^2\))
- \(C_f\): Front tire stiffness, 62618 (N/rad)
- \({\hat{C}}_f\): Observed front tire stiffness (N/rad)
- \(C_r\): Rear tire stiffness, 110185 (N/rad)
- \({\hat{C}}_r\): Observed rear tire stiffness (N/rad)
- \(e_y\): Combined \(e_{ay}\) and \(e_{\varepsilon z}\) (–)
- \(e_{ay}\): Error of the measured and observed lateral acceleration (m/s\(^2\))
- \(\mathbf{e}_{ay}\): Vector version of \(e_{ay}\) (m/s\(^2\))
- \(e_{cf}\): Error of the real and estimated front tire stiffness (N/rad)
- \(e_{cr}\): Error of the real and estimated rear tire stiffness (N/rad)
- \(e_{\varepsilon z}\): Error of the measured and observed yaw acceleration (rad/s\(^2\))
- \(\mathbf{e}_{\varepsilon z}\): Vector version of \(e_{\varepsilon z}\) (rad/s\(^2\))
- E: Error model (–)
- \(\mathbf{E}\): Vector version of E (–)
- \(F_y^f\): Front axle steering force (N)
- \(F_y^r\): Rear axle steering force (N)
- \(I_z\): Vehicle yaw moment of inertia, 3885 (kg m\(^2\))
- J: Cost function for gradient descent and least-squares (–)
- k: Mini-batch size (–)
- \(l_f\): Distance from mass centre to the front axle, 1.46 (m)
- \(l_r\): Distance from mass centre to the rear axle, 1.50 (m)
- m: Vehicle mass, 1818 (kg)
- \(M_g\): First moment of the gradient (–)
- \(s_g\): Second moment of the gradient (–)
- t10: Response time (s)
- \(T_s\): Sample time, 0.01 (s)
- \(v_x\): Vehicle longitudinal speed (m/s)
- \(v_y\): Measured vehicle lateral speed (m/s)
- \({\hat{v}}_y\): Observed vehicle lateral speed (m/s)
- \(x_\phi\): Equivalent states (–)
- \(y_m\): Scaled outputs (–)
- \(\beta\): Vehicle side-slip angle (rad)
- \(\beta _f\): Front tire side-slip angle (rad)
- \(\beta _r\): Rear tire side-slip angle (rad)
- \(\delta\): Steering angle for the front tire (rad)
- \({\nabla J}\): Gradient of the cost function (–)
- \(\varepsilon _z\): Measured vehicle yaw acceleration (rad/s\(^2\))
- \({\hat{\varepsilon }}_z\): Observed vehicle yaw acceleration (rad/s\(^2\))
- \(\lambda\): Learning rate of gradient descent method (–)
- \(\theta\): Array of the tire-stiffness estimation (–)
- \(\sigma \%\): Overshoot (–)
- \(\omega _{a1}\): Hyperparameter in Adam (–)
- \(\omega _{a2}\): Hyperparameter in Adam (–)
- \(\omega _{m}\): Hyperparameter in Momentum (–)
- \(\omega _{r}\): Hyperparameter in RMSProp (–)
- \(\omega _z\): Measured vehicle yaw speed (rad/s)
- \({\hat{\omega }}_z\): Observed vehicle yaw speed (rad/s)
- GDM: Gradient descent method
- bGD: Mini-batch gradient descent
- fGD: Full-size gradient descent
- sGD: Single-step gradient descent
- RLS: Recursive least-squares
- bLS: Mini-batch recursive least-squares
- fLS: Full-size recursive least-squares
- sLS: Single-step recursive least-squares
- RMSE: Root mean square error
- RSSE: Relative steady-state error
- TLS: Tire lateral stiffness

References

Pacejka, H. B. Tire and Vehicle Dynamics 3rd edn. (Butterworth-Heinemann, Oxford, 2012).

Rajamani, R. Vehicle Dynamics and Control 2nd edn. (Springer, New York, 2012).

Furlan, M. & Mavros, G. On the relationship between tyre cornering stiffness and road surface roughness. Veh. Syst. Dyn. https://doi.org/10.1080/00423114.2025.2467937 (2025).

Braghin, F., Cheli, F. & Sabbioni, E. Environmental effects on Pacejka’s scaling factors. Veh. Syst. Dyn. 44, 547–568. https://doi.org/10.1080/00423110500520065 (2006).

Kim, S., Lee, J., Han, K. & Choi, S. B. Vehicle path tracking control using pure pursuit with MPC-based look-ahead distance optimization. IEEE Trans. Veh. Technol. 73, 53–66. https://doi.org/10.1109/TVT.2023.3304427 (2024).

Tian, Y., Yao, Q., Hang, P. & Wang, S. A gain-scheduled robust controller for autonomous vehicles path tracking based on LPV system with MPC and \(H_\infty\). IEEE Trans. Veh. Technol. 71, 9350–9362. https://doi.org/10.1109/TVT.2022.3176384 (2022).

Peterson, M. T., Goel, T. & Gerdes, J. C. Exploiting linear structure for precision control of highly nonlinear vehicle dynamics. IEEE Trans. Intell. Veh. 8, 1852–1862. https://doi.org/10.1109/TIV.2022.3171734 (2023).

Zhang, L., Wang, Y. & Wang, Z. Robust lateral motion control for in-wheel-motor-drive electric vehicles with network induced delays. IEEE Trans. Veh. Technol. 68, 10585–10593. https://doi.org/10.1109/TVT.2019.2942628 (2019).

Spielberg, N. A., Brown, M., Kapania, N. R., Kegelman, J. C. & Gerdes, J. C. Neural network vehicle models for high-performance automated driving. Sci. Robot. 4, eaaw1975. https://doi.org/10.1126/scirobotics.aaw1975 (2019).

Bevly, D. M., Ryu, J. & Gerdes, J. C. Integrating INS sensors with GPS measurements for continuous estimation of vehicle sideslip, roll, and tire cornering stiffness. IEEE Trans. Intell. Transp. Syst. 7, 483–493. https://doi.org/10.1109/TITS.2006.883110 (2006).

Sierra, C., Tseng, E., Jain, A. & Peng, H. Cornering stiffness estimation based on vehicle lateral dynamics. Veh. Syst. Dyn. 44, 24–38. https://doi.org/10.1080/00423110600867259 (2006).

Wang, R. & Wang, J. Tire–road friction coefficient and tire cornering stiffness estimation based on longitudinal tire force difference generation. Control. Eng. Pract. 21, 65–75. https://doi.org/10.1016/j.conengprac.2012.09.009 (2013).

Lian, Y., Zhao, Y., Hu, L. & Tian, Y. Cornering stiffness and sideslip angle estimation based on simplified lateral dynamic models for four-in-wheel-motor-driven electric vehicles with lateral tire force information. Int. J. Automot. Technol. 16, 669–683. https://doi.org/10.1007/s12239-015-0068-4 (2015).

Han, K., Choi, M. & Choi, S. B. Estimation of the tire cornering stiffness as a road surface classification indicator using understeering characteristics. IEEE Trans. Veh. Technol. 67, 6851–6860. https://doi.org/10.1109/TVT.2018.2820094 (2018).

Song, R., Fang, Y. & Huang, H. Reliable estimation of automotive states based on optimized neural networks and moving horizon estimator. IEEE/ASME Trans. Mechatron. 28, 3238–3249. https://doi.org/10.1109/TMECH.2023.3262365 (2023).

Devos, T., Uva, I. N. & Naets, F. A least-squares identification method for vehicle cornering stiffness identification from common vehicle sensor data. Veh. Syst. Dyn. https://doi.org/10.1080/00423114.2024.2389212 (2024).

Yang, C. et al. Likelihood-based modified Kalman filter for estimating vehicle lateral states with random measurement delay. Sci. China Technol. Sci. 68, 1320802. https://doi.org/10.1007/s11431-024-2876-y (2025).

Baffet, G., Charara, A. & Lechner, D. Estimation of vehicle sideslip, tire force and wheel cornering stiffness. Control. Eng. Pract. 17, 1255–1264. https://doi.org/10.1016/j.conengprac.2009.05.005 (2009).

Park, G. Vehicle sideslip angle estimation based on interacting multiple model Kalman filter using low-cost sensor fusion. IEEE Trans. Veh. Technol. 71, 6088–6099. https://doi.org/10.1109/TVT.2022.3161460 (2022).

Wang, Y. et al. Estimation of sideslip angle and tire cornering stiffness using fuzzy adaptive robust cubature Kalman filter. IEEE Trans. Syst. Man Cybernet. Syst. 52, 1451–1462. https://doi.org/10.1109/TSMC.2020.3020562 (2022).

Ni, J., Hu, J. & Xiang, C. Relaxed static stability based on tyre cornering stiffness estimation for all-wheel-drive electric vehicle. Control. Eng. Pract. 64, 102–110. https://doi.org/10.1016/j.conengprac.2017.04.011 (2017).

Berntorp, K. & Di Cairano, S. Tire-stiffness and vehicle-state estimation based on noise-adaptive particle filtering. IEEE Trans. Control Syst. Technol. 27, 1100–1114. https://doi.org/10.1109/TCST.2018.2790397 (2019).

Lopes, A. & Araújo, R. E. Vehicle lateral dynamic identification method based on adaptive algorithm. IEEE Open J. Veh. Technol. 1, 267–278. https://doi.org/10.1109/OJVT.2020.3013149 (2020).

Zhu, G. & Hong, W. Autonomous vehicle motion control considering path preview with adaptive tire cornering stiffness under high-speed conditions. World Electric Veh. J. https://doi.org/10.3390/wevj15120580 (2024).

Abbas, S. J., Husain, S. S., Al-Wais, S. & Humaidi, A. J. Adaptive integral sliding mode controller (SMC) design for vehicle steer-by-wire system. SAE Int. J. Veh. Dyn. Stab. NVH 8, 383–396. https://doi.org/10.4271/10-08-03-0021 (2024).

Jeon, W., Chakrabarty, A., Zemouche, A. & Rajamani, R. Simultaneous state estimation and tire model learning for autonomous vehicle applications. IEEE/ASME Trans. Mechatron. 26, 1941–1950. https://doi.org/10.1109/TMECH.2021.3081035 (2021).

Song, S. et al. Composite neural learning-based adaptive actuator failure compensation control for full-state constrained autonomous surface vehicle. Neural Comput. Appl. 37, 6369–6381. https://doi.org/10.1007/s00521-024-10651-y (2025).

Li, B. et al. Adaptive gradient-based optimization method for parameter identification in power distribution network. Int. Trans. Electr. Energy Syst. 2022, 9300522. https://doi.org/10.1155/2022/9300522 (2022).

Tao, G. Adaptive Control Design and Analysis (John Wiley & Sons, Hoboken, 2003).

Polyak, B. Some methods of speeding up the convergence of iteration methods. USSR Comput. Math. Math. Phys. 4, 1–17. https://doi.org/10.1016/0041-5553(64)90137-5 (1964).

Kingma, D. P. & Ba, J. Adam: A method for stochastic optimization. arXiv:1412.6980 (2017).

Hayes, M. H. Statistical Digital Signal Processing and Modeling (Wiley, New York, 1996).

Precup, R.-E., Roman, R.-C. & Safaei, A. Data-Driven Model-Free Controllers 1st edn. (CRC Press, Boca Raton, 2021).

Artuñedo, A., Moreno-Gonzalez, M. & Villagra, J. Lateral control for autonomous vehicles: A comparative evaluation. Annu. Rev. Control. 57, 100910. https://doi.org/10.1016/j.arcontrol.2023.100910 (2024).

Baidu Inc. Baidu Maps. https://map.baidu.com (2025). Accessed 13 April 2025.

Acknowledgements

This study was supported by the Fujian Province Science and Technology Project (Project No. 2024H6037).

Author information

Contributions

Q.Z.: conceptualization. W.J.: software. L.P.: formal analysis. H.Y.: data curation. G.J.: validation. H.H. and C.Y.: visualization. S.S.: supervision, review and editing. All authors reviewed the manuscript.

Ethics declarations

Competing interests

The authors declare no competing interests.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Qin, Z., Wang, J., Liu, P. et al. Real time tire stiffness estimation using enhanced GDM and RLS for autonomous vehicles. Sci Rep 15, 31863 (2025). https://doi.org/10.1038/s41598-025-15220-4

DOI: https://doi.org/10.1038/s41598-025-15220-4