Abstract

Border ownership is a critical component of biological vision systems, enabling object segmentation and figure-ground organization. Understanding border ownership-centered segmentation is essential for advancing vision neuroscience and computer vision. In our prior work, “Border Ownership, Category Selectivity, and Beyond” (Chen et al., 2022)6, we introduced a channel-based representation for coding border ownership and category selectivity. Building on this foundation, this paper addresses the remaining challenges in border ownership-centered segmentation. We explore binocular disparity representation, the generation of binocular border ownership for contrast- and disparity-defined objects, and border ownership generation for illusory and contour-defined objects, including the Rubin Face-Vase illusion. We also propose the creation of ‘object pointers’ through hypothetical active neurons to address the ‘surface filling-in’ process in neuroscience and generate ‘instance masks’ in computer vision. Finally, synthesizing these findings, we present a comprehensive model for border ownership-centered figure-ground organization. This model integrates global context awareness, distributed processing, and multi-cue representations, bridging gaps between biological vision and computational applications.

Similar content being viewed by others

Introduction

Object segmentation is a core task in computer vision, which has advanced significantly in recent years due to deep-learning techniques. However, current state-of-the-art methods in computer vision do not fully align with the concept of ‘figure-ground organization’—a neuroscientific term for object segmentation in biological vision—where border ownership plays a crucial role.

Border ownership refers to a vision phenomenon where a visual border (also known as ‘occluding contours’) between two regions in a scene or image is perceived as belonging to one of the regions, known as the ‘border owner’. A region is then perceived as either a ‘figure’ or ‘background’, with the border carrying an orthogonal owner-side direction towards its ‘border owner’ region. At the border, the owner region is positioned in front of the other. Therefore, the ‘figure-ground organization’ in neuroscience is a border ownership-centered segmentation, where the ‘figure’ corresponds to what is referred to as an ‘object’ in computer vision.

What’s the difference?

Figure 1 illustrates the difference between conventional segmentation methods—which do not explicitly encode border ownership—and border-ownership-centered segmentation. While both segment an image into ‘object’ or ‘background’ regions, the latter takes it further by using border ownership to identify which object at a shared border is positioned in front. Essentially, border ownership enables 3D segmentation (bottom image in Fig. 1), whereas most conventional state-of-the-art remains in 2D (middle image in Fig. 1). In biological vision, border ownership itself is integral to depth perception.

Object Segmentation with or without Border Ownership. Top: An RGB image from the Virtual Kitti 2 dataset (Cabon et al., 2020)5 to be segmented. Middle: An instance segmentation map was generated by a state-of-the-art Segment-Anything (Kirillov et al., 2023)24 with no border ownership. Bottom: A 2-channel border ownership map generated using the coding method from our prior work (Chen et al., 2022)6 where red represents borders (occluding contours) with owners on the ‘below’ or ‘left’ sides, and green represents borders with owners on the ‘above’ or ‘right’ sides. A shared border between two objects indicates depth ordering, where the border-owner object is positioned in front.

From a segmentation processing perspective, border ownership-centered segmentation begins with contour-based border ownership generation (identifying surrounding borders of objects with border ownership; bottom image in Fig. 1) followed by ‘surface filling-in’ (determining the location and spatial coverage of object interiors enclosed by these borders). In this context, ‘surface’ is largely equivalent to ‘object’ in computer vision. In contrast, most state-of-the-art methods, which segment objects by direct pixel-wise labeling for their belonging in class and identity, are region-based (middle image in Fig. 1), bypassing the use of explicit borders. Essentially, region-based methods perform ‘surface filling-in’ without borders.

Most modern segmentation methods (see surveys: Hafiz et al., 202018; Kirillov et al., 201825; Elharrouss et al., 202114; Sharma et al., 202239; Gu et al., 202217) integrate semantic classification and object detection to achieve segmentation, using class or object information as guidance. In contrast, border-ownership-centered segmentation typically involves limited, targeted classification via category selectivity (Chen et al., 20226; and Sect. “Category selectivity”). In biological vision, border ownership-centered segmentation precedes large-scale classification and recognition (Qiu et al., 200535; Von der Heydt, 202343); where category selectivity is rather a byproduct of the segmentation at the early vision stage.

The output of border ownership segmentation (bottom of Fig. 1) resembles panoptic segmentation (Kirillov et al., 201825; Elharrouss et al., 202114) in computer vision, which aims to segment everything visible in a scene. Meanwhile, category-selective segmentation (Fig. 13) is more akin to instance segmentation (Hafiz et al., 202018; Sharma et al., 202239; Gu et al., 202217) for selected categories.

Current status in brief

Border ownership coding

In computer vision, contour-based, border ownership-centered segmentation remains underdeveloped compared to region-based approaches. This disparity stems from the limited understanding of border ownership, a concept identified only recently by Zhou et al. (2000)53, and the inherent complexity of replicating biological vision mechanisms. In contrast, region-based segmentation—both classical and deep learning-based—has benefited from over half a century of research, making it more mature and widely adopted.

Three major challenges hinder the development of border ownership-centered segmentation in computer vision:

-

1.

The greatest challenge lies in representing border ownership, where borders are treated as directed contours with orthogonal owner-side directions. A comparable analogy is the right-hand rule in physics, which links the directions of a magnetic field and moving charge: knowing one direction allows you to determine the other. In contrast to physics, object borders lack inherent directionality, and the orthogonal owner-side direction may lie on either side of the border.

-

2.

The second major challenge involves the generation of border ownership. Williford et al., (2013)50 provide a comprehensive review of border ownership and related topics from a neuroscience perspective, discussing three distinct models related to border ownership coding. All the models, explicitly or implicitly, assume that occluding contour generation and border ownership assignment are separate processes. Consequently, border ownership is assigned to occluding contours based on cues like T-junctions or through top-down guidance from higher-level vision hierarchies. These approaches face significant challenges in neuroscience (see discussions in Sect. “Integrative discussion – understanding of border ownership”), particularly in explaining the ‘short latency’ of border ownership generation and the presence of ‘global context awareness’, which appear contradictory. Additionally, in computer vision, T-junction detection is fragile and error-prone, and the logic behind top-down guidance for grouping contour segments remains underspecified and difficult to reproduce algorithmically.

-

3.

The final challenge involves ‘surface filling-in’, which is the task of generating object regions from contour-based border ownership within a ‘short latency’. This task has received less attention in computer vision due to the limited progress in addressing the first two challenges.

In our prior work (Chen et al., 2022)6, we proposed a practical and straightforward solution for border ownership coding in computer vision, effectively addressing the first two major challenges. This approach enables a plausible explanation for vision phenomena related to border ownership observed in neuroscience (see Sect. “Integrative discussion – understanding of border ownership”), resolving a key component of border ownership-centered segmentation. It also demonstrates that occluding contour generation and border ownership assignment can occur simultaneously without the need for T-junction detections or top-down guidance.

Surface filling-in

As noted by Breitmeyer (2013)3, a visual object can be defined as any “bounded segment of the visual field” that is distinguishable “by virtue of its contour and surface properties”. He further emphasized that “without surface qualia, there is no conscious object vision”.

Thus, contour-based border ownership generation represents only half the process required for complete segmentation. While it establishes a foundational structure by identifying object boundaries, it must be complemented by ‘surface filling-in’ which determines the interiors of objects—their location and spatial coverage. In neuroscience, this process is also referred to as ‘binding’ (Roelfsema, 2006)36, similarly, Zhu et al. (2020)54 describe the identity or control of ‘surface’ as ‘object pointers’.

In computer vision, the outcomes of ‘surface filling-in’ are typically represented by ‘instance masks’, ‘semantic masks’, or ‘panoptic masks’ (Hafiz et al. 202018; Kirillov et al., 201825; Elharrouss et al., 202114; Sharma et al., 202239; Gu et al., 202217), where each pixel is labeled according to instance or class identity. Meanwhile, most state-of-the-art segmentation methods perform ‘surface filling-in’ without explicitly relying on borders, using class and object information as guidance. In contrast, biological vision is thought to achieve classification and object recognition after segmentation, with contour-based border ownership likely serving as a critical precursor to these subsequent processes.

In this paper, we embrace the biological perspective by proposing a framework for ‘surface filling-in’ that is explicitly based on the generated border ownership (see Sect. “Surface filling-in”).

Prior works worth mentioning

Williford and von der Heydt (2013)50 provide a comprehensive review of border ownership and related neural models, while Von der Heydt (2023)43 offered an updated and extensive review of border ownership, which also explores the concept of ‘object pointer’. Both are foundational for understanding the neuroscience behind border ownership and provide essential context for this study.

Craft et al. (2007)8 introduced a border ownership representation model from a neuroscience perspective, proposing a top-down guided approach driven by hypothetical high-level ‘Grouping cells’ (‘G cells’) that utilize local cues such as T-junction. In contrast, our prior work (Chen et al., 2022)6 proposed a border ownership representation rule from a computer vision perspective, where border ownership is generated directly by a trained deep neural network without relying on top-down guidance or T-junctions. While the mechanisms for generating border ownership differ, both approaches are nearly identical from a pure representation standpoint (excluding category selectivity). These distinct methodologies offer complementary insights into border ownership; see Sect. “Integrative discussion – understanding of border ownership” for further discussion, and Sect. “Monocular border ownership” for a recap of our prior work.

In computer vision, the Deep Occlusion Estimation (DOC) model by Wang et al. (2016)46 is a notable deep-learning method that combines edge and orientation maps, employing a left-hand rule to encode border ownership. However, as discussed in Sect. “Border ownership coding” and “Monocular border ownership”, DOC does not closely align with neuroscience findings on border ownership properties and is limited to directed contours, making it more akin to category-selective segmentation than a comprehensive border ownership representation. In contrast, our coding method applies to any complex scenarios without such limitations, naturally integrating category selectivity along with border ownership in a manner consistent with biological vision (see Sect. “Category selectivity”).

The work by Arbeláez et al. (2011)1 represented a state-of-the-art approach for contour detection and image segmentation prior to the deep learning era. Their code and the Berkeley Segmentation Dataset (BSDS) have been integral to our research on border ownership. By adapting their code, we found T-junctions to be unreliable and overly complex as cues for border ownership, motivating our research for alternative approaches. As a byproduct, a set of sophisticated tools was developed for segmenting contours, which were later used to generate border ownership ground truth for our studies—a surprising and valuable outcome worth mentioning.

Organization of this paper

In our prior work (Chen et al., 2022)6, we addressed a key aspect of border ownership: its representation. For completeness, a recap of that work is provided in Sect. “Monocular border ownership”. Building upon this foundation, the present study investigates the remaining pieces of the puzzle surrounding border ownership-centered segmentation and their broader implications for neuroscience and computer vision. The main structure of the paper:

Sect. “Border ownership”: Border ownership begins binocular vision (“Binocular vision and border ownership”), covering binocular disparity representation (“Binocular disparity”) and generation of binocular border ownership with disparity on contrast- and disparity-defined objects (“Binocular border ownership”). It then extends to illusory objects (“Illusory objects and illusory contours”) and analyzes the Rubin Face-Vase illusion (“Rubin Face-Vase illusion”), followed by experiments on contour-defined objects (“Contour objects”).

Sect. “Integrative discussion – understanding of border ownership”: Integrative Discussion – Understanding of Border Ownership, consolidates key findings from Sect. “Border ownership” and offers a broader interpretation of border ownership from both neuroscientific and computational perspectives.

Sect. “Surface filling-in”: Surface Filling-in, which introduces a feedforward framework linking border ownership signal to surface filling-in, grounded in the concept of hypothetical ‘active neurons (pixels)’.

Sect. “A border ownership centered figure-ground organization model”: Border Ownership-Centered Model, where we propose an integrated model for figure-ground organization, bridging neuroscience-inspired concepts with computational efficiency.

Sect. “Limitations and future directions” addresses current limitations and suggests directions for future research. Section “Summary” concludes with a summary of key contributions and broader implications.

The primary objective of this paper is to establish a foundational understanding of border ownership-centered segmentation. While optimizing the border ownership coding method or enhancing the TcNet deep learning network remains valuable, they are not the central focus of this work. Instead, this paper highlights how the current coding method aligns closely with neuroscientific findings on border ownership properties.

Throughout this paper, the 2-channel border ownership coding method is used consistently. Border ownership outputs are displayed using the same red-green-color coding scheme as illustrated in Fig. 2 (c) of this paper or Fig. 1(c) of Chen et al. (2022)6. In this scheme, the ‘left’ or ‘bottom’ sides of a red-colored border segment represent the border owners, while the ‘right’ or ‘above’ sides of a green-colored border segment represent the border owners. These red and green segments are represented in separate channels.

Border ownership

Monocular border ownership

This section recaps our prior work (Chen et al., 2022)6, which introduced a channel-based representation for coding border ownership and category-selectivity. We also presented an encoder-decoder neural network, TcNet, capable of generating these representations from monocular input.

Border Ownership Coding (adapted from Fig. 1 in Chen et al., 2022)6. (a) an example chair image from the FlyingChairs dataset (Aubry et al., 2014)2; (b) occluding contour map of the chair; (c) 2-channel border ownership coding: in the 1 st channel, red-colored contours indicate border owners on the ‘left’ or ‘below’ sides; while in 2nd channel, green-colored contours indicate border owners on the ‘right’ or ‘above’ sides. (d) 4-channel border ownership coding: red, green, blue, and yellow-colored contours are placed in 1 st, 2nd, 3rd, and 4th channels respectively, representing border owners on the ‘left’, ‘right’, ‘below’, and ‘above’ sides.

In Sect. 2.1 of our prior paper, we proposed that border ownership can be encoded using multiple channels, grounded in two key neuroscience findings (Von der Heydt, 2015)42: (1) “Border ownership preference is a fixed property of neurons”, and (2) “each neuron has a fixed preference for location of one side or the other”.

To implement this, object contours are decomposed into relatively straight occluding segments, then separated into different channels based on their border owner sides (left, right, above, or below), following the ‘opposite channel rule’: occluding segments with opposite border owner sides are put into different channels. The rule mirrors the neuroscience notion (Von der Heydt, 2015)42 that “each neuron has a fixed preference for location of one side or the other”.

Figure 2 illustrates this concept: (a) shows an input image; (b) displays its occluding contour map; (c) and (d) demonstrate 2-channel and 4-channel border ownership coding, respectively. Theoretically, this border-ownership coding representation can be extended to 8, 16, or more channels using similar approaches.

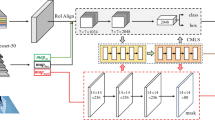

TcNet Multi-Branch Architecture for Multi-Category-Selective Border Ownership (adapted from Fig. 3 in Chen et al., 20226). In this example, a single Encoder Pyramid is shared across multiple Decoder Pyramid branches for feature extraction. Each of the four Decoder Pyramid branches, from top to bottom, is used to process all contours, border ownership, and two selected categories, respectively. The border ownership for the two selected categories is computed by taking the inner product (denoted by the circled ‘X’ in the diagram) of the associated category channels and border ownership channels.

In addition to border ownership, we (Chen et al., 2022)6 introduced a channel-based representation for object category-selectivity: all occluding contours for each category are put into one separate channel, referred to as category-selective channels or category channels. Border ownership specific to individual object categories can then be derived by taking the inner product of border ownership channels with the object category channels (Fig. 3).

Figure 3 (identical to Fig. 3 in Chen et al., 20226) also illustrates our encoder-decoder neural network, TcNet, which can be trained to jointly generate border ownership and (optional) category-selective maps.

All experiments in Chen et al. (2022)6 used monocular input. For clarity, we now refer to this version as TcNet01, distinguishing it from the binocular TcNet02 introduced in this paper. However, the underlying coding schemes for border ownership and category-selectivity remain consistent across both versions.

Binocular vision and border ownership

By default, a healthy human vision system uses two eyes, making binocular vision more significant than monocular vision. The slightly different views from each eye, known as binocular disparities, provide one of the primary cues for depth perception in the biological vision system (Parker, 2007)31.

Neuroscience studies by Qiu et al. (2005)35 suggest that the binocular disparity-selective neurons may play a role in border ownership generation and contribute to the formation of a ‘figure-ground organization’, though the exact role of binocular disparity in border ownership generation remains unclear.

In our prior work (Chen et al., 2022)6, we introduced a channel-based representation of border ownership for monocular input. Before extending this framework into binocular border ownership generation, we first explore how binocular disparity can be represented or encoded within this process.

Binocular disparity

In computer vision, binocular disparity is defined as the positional difference between the projections of an object point onto the left and right eye (or camera) images. According to the Epipolar geometry of binocular vision (Fig. 4), the (horizontal) binocular disparity of a point P is inversely proportional to its depth when the image planes of both eyes are parallel (Eq. 3.1). If the alignment is not initially present, a process called ‘rectification’ is applied to achieve it, ensuring accurate disparity estimation.

Notably, this binocular configuration in Fig. 4 can be interpreted as two observations of P by a monocular eye under horizontal motion parallax.

Epipolar Geometry on Binocular Vision. An object point P is observed by the left and right eyes (or cameras) OL and OR, separated by a distance (called ‘baseline’) b. Together, P, OL, and OR define the Epipolar plane. The image plane is positioned at a distance f away from the baseline and perpendicular to the paper. A 3-dimensional reference system is used with the x-axis pointing to the right, the y-axis pointing to the paper and pointing to the paper, and the z-axis pointing up, where z (the distance between P and the baseline) is referred to as the depth of point P, i.e. z = Depth. Assuming both eyes share the same focal length f, the x, and x’ coordinates represent the locations of P appearing in the left and right reference system, respectively. Then the (horizontal) disparity is inversely proportional to the depth, as described by (Eq. 3.1), where k = f*b is a known constant for fixed cameras (eyes) and their location.

Binocular Vision Examples (image from Virtual Kitti 2 dataset, Cabon et al., 2020)5. The first two images are the stereo left and right images, respectively; the 3rd image is a stereo anaglyph combining the two; the 4th image is a 2-channel border ownership coding map when viewed from the left camera; the 5th image is the ‘Near’ (Epipolar) disparity map where the greater depth appear lighter in red; the 6th image is the ‘Far’ (logarithmic) disparity map, also using red for depth coding, preserving depth order across distances.

This formulation assumes one-to-one pixel correspondence across views. However, in real-world 3D scenes, due to occlusion or noise, such pointwise correspondence may not exist, making disparity maps eye- or camera-oriented.

Moreover, (Eq. 3.1) becomes unreliable at large depths where disparity values approach the noise level. As shown in the 5th image in Fig. 5, disparities at far distances ‘fade’ into white noise. This phenomenon has been observed in other computer-processed disparity studies, such as ‘Depth Anything’ by Yang et al. (2024)52.

In natural vision, human eyes can seamlessly perceive depth across short and long distances. This suggests that (Eq. 3.1) alone is insufficient for robust depth representation. Neuroscience studies offer complementary insights. Binocular disparity is classified into ‘absolute disparity’ and ‘relative disparity’. According to Cumming et al. (1999)9, ‘absolute disparity’ measures the angular difference between the projections of a single point on the two retinas, as illustrated in Fig. 4, while the ‘relative disparity’ measures the difference between their respective absolute disparities. The disparity in (Eq. 3.1) corresponds to an absolute disparity with fixation at infinity.

Poggio (1995)32 reported the presence of neurons tuned to ‘Far’ and ‘Near’ disparities in the V1, although Prince et al. (2002)33 found no distinct neuron classes for ‘Far’ and ‘Near’ disparities. Nevertheless, these disparity types exist, regardless of whether they are generated by different neurons or not.

It appears that limited research has been conducted on disparity at large distances. For instance, Palmisano et al. (2010)30 explored depths up to 248 m, barely capturing the span shown in Fig. 5. This gap in understanding motivates alternative representations.

Inspired by logarithmic depth representations (Elgen et al., 2014)13, we propose a logarithmic form for (Eq. 3.1), shown in (Eq. 3.2), where C = log(k) is a constant. The 6th image in Fig. 5 is an example of (Eq. 3.2) converted from the depth map of the 5th image.

Visual inspection and evaluation of disparity maps in Fig. 5 suggest that the 5th image excels at presenting short-distance disparity, while the 6th image excels at long-distance disparity. The logarithmic form preserves ordinal depth order of all objects and backgrounds across distances and flattens the magnitude difference between small and large disparities (i.e., long and short depth).

Combining (Eq. 3.1) and (Eq. 3.2), a candidate representation for a disparity-depth relationship could be expressed as the following function:

where

and G denotes a function combining the ‘Near’ and ‘Far’ disparities, Fn(Depth) represents ‘Near’ (Epipolar) disparity while Ff(Depth) represents ‘Far’ (logarithmic) disparity. Neuroscience studies suggest that these disparities Fn and Ff, classified as ‘absolute disparities’, are likely generated in the V1 (Cumming et al., 1999)9.

Building on the channel-based representation of border ownership from our prior paper (Chen et al., 2022)6, we organize these into a ‘layered disparity representation’—each disparity type in a separate channel. Neuroscience findings (Poggio, 199532; Prince et al., 200233) suggest the existence of additional types of disparities beyond ‘Near’ and ‘Far’, and this ‘layered disparity representation’ offers greater flexibility for the combination function G in (Eq. 3.3), potentially allowing the inclusion of other disparities or optical flow derived from motion parallax in future extensions.

As Qiu et al. (2005)35 showed, binocular border ownership is generated in the V2 with the involvement of disparity-selective neurons. Section “Binocular border ownership” evaluates the effectiveness of ‘Far’ and ‘Near’ disparities in the generation of binocular border ownership.

Finally, rearranging (Eq. 3.1) and (Eq. 3.2) yields (Eq. 3.1') and (Eq. 3.2') below, we will revisit this mathematical symmetry between Disparity and Depth in greater detail in Sect. “Binocular disparity and depth”.

Binocular border ownership

In our prior paper (Chen et al., 2022)6, we introduced a channel-based representation for border ownership and category selectivity, along with an encoder-decoder neural network, TcNet, designed for monocular image input. In this study, we extend the same border ownership channel representation, with optional category-selectivity, to binocular images, using a similar TcNet architecture.

To ensure a fair comparison between the border ownership generation results from monocular and binocular inputs, we adopt a version of TcNet closely aligned with that used in Chen et al. (2022)6, making only minimal modification. Specifically, TcNet01 processes monocular input, while TcNet02 handles binocular inputs, as described in Sect. “Monocular border ownership”.

It is important to note that improving the performance of TcNet is not the primary focus of this paper.

Neural network setup

According to the neuroscience study by Qiu et al. (2005)35, disparity-selective neurons are involved in binocular border ownership generation. Building on the layered disparity representation introduced in Sect. “Binocular disparity”, we designed three neural network configurations (Fig. 6) to test the varying levels of disparity involvement in binocular border ownership generation.

Binocular configurations

-

1.

First configuration (Fig. 6(a)): Binocular images are stacked as input into TcNet02, with the expectation that the TcNet02 will implicitly learn the knowledge of disparities and generate border ownership maps.

-

2.

Second configuration (Fig. 6(b)): In this two-step process, one or both ‘Far/Near’ disparities are explicitly generated as intermediate results by TcNet02. These intermediate disparities are then combined with binocular images and fed into TcNet02CM, which outputs the border ownership maps.

-

3.

Third configuration (Fig. 6(c)): Similar to the second configuration, but in this case, the ‘Far’ and ‘Near’ disparities are generated separately by two separately trained TcNet02 networks.

Monocular configurations for comparison

Depth can also be perceived monocularly under the assumption that monocular depth can be represented by a disparity map. For comparison, we include results from monocular configurations (Fig. 7), where Fig. 7(a) mirrors Fig. 6(a), and Fig. 7(b) mirrors Fig. 6(b).

Design choices

The primary difference between the monocular networks (TcNet01/TcNe01CM) in Fig. 7 and the binocular networks (TcNet02/TcNet02CM) in Fig. 6 lies in the number of inputs. Minimal modifications were intentionally made to ensure comparable results. Additionally, this design reflects the perspective that the monocular process is a special case of the binocular processing, albeit with a few exceptions where monocular approaches may fail.

Notably, for simplicity, the same neural network (TcNet) was used for both disparity and border ownership generation, which is convenient but not strictly necessary.

Three Neural Network Configurations for Binocular Border Ownership Generation. (a): In this architecture, binocular images are stacked as input into TcNet02, expecting the network to implicitly learn the knowledge of disparities and generate border ownership maps. (b): A two-step process in this architecture where one or both ‘Far/Near’ disparities are explicitly generated as intermediate results by TcNet02. These intermediate disparities are then combined with binocular images as input to TcNet02CM, which outputs border ownership maps. (c) is similar to (b) except the ‘Far’ and ‘Near’ disparities are generated separately by two independently trained TcNet02 network.

Neural Network Configuration for Monocular Border Ownership Generation, used for comparison with their binocular counterparts in Fig. 6. (a): TcNet01 with monocular input, producing border ownership maps as described in Chen et al. (2022)6. (b): similar to case (b) in Fig. 6 except that the intermediate disparities are generated from TcNet01 with monocular image input (left image, or image 1); and the stacked input to TcNet01CM includes monocular image and disparities.

Notation:

‘F’:

1-channel ‘Far’ disparity map.

‘N’:

1-channel ‘Near’ disparity map.

‘F + N’:

2-channel map of both ‘Far/Near’ disparities.

‘=>’:

Output.

‘=> B’:

Output includes 1-channel all-contour map (referred to as ‘enforcement channel’ in Chen et al., 20226) and 2-channel border ownership maps, For the Virtual Kitti 2 dataset (discussed in Sect. “Datasets”), the output includes an extra 1-channel vehicle category contour map.

(2im = > B):

Binocular image input directly generating border ownership maps.

(2im + N + F = > B):

Binocular image input combined with ‘Near/Far’ disparities, producing border ownership maps.

(img1 = > B):

Monocular image input from image 1, producing border ownership maps.

Due to differences in input and output, TcNet02 and TcNet02CM, TcNet01 and TcNet01CM were separately trained for each configuration.

Datasets

For our experiments on binocular border ownership generation, we used two datasets: the Random Dot Stereograms (RDS) dataset, where objects are disparity-defined, and a modified version of the Virtual Kitti 2 dataset, where objects are contrast-defined.

Unless otherwise specified, all binocular disparity and border ownership maps throughout this study are referenced from the left eye (camera) perspective.

Random Dot Stereograms (RDS) Dataset, generated using the modified method from Marino (2007)28. Top row: Left and right RDS images, where the left image is a pure random dot image, while the right image is created from the left image by shifting random numbers (1 to 6) of randomly located rectangle-shaped areas. The shifted amount of each area is randomly selected from values of 2, 4, 6, 8, 10 and 12 pixels; Middle row: The left image is a ‘Near’ disparity map, where darker red rectangles indicate closer objects (larger shifts), and lighter red rectangles indicate farther objects (smaller shifts). The right image represents a ‘Far’ disparity map, which is the logarithmic form of the ‘Near’ disparity map. Bottom row: The left image shows a 2-channel border ownership map for the ‘disparity-defined’ objects; the right is an anaglyph of the two RDS images.

Random dot stereograms

Random Dot Stereograms (RDS) are regarded the “gold standard” for studying border ownership in neuroscience (Qiu et al., 2005)35. Since the pioneering work by Bela Julesz (1971)22, various forms of RDS have been widely used in cognitive science and perception research.

We adopted a modified RDS generation method based on Marino (2007)28. As shown in Fig. 8, ‘image 1’ (left image) is a pure random dot pattern, while ‘image 2’ (right image) is generated by shifting a random number (1 to 6) of randomly located rectangular patches from ‘image 1’. When viewed binocularly, these shifted patches appear as ‘disparity-defined’ objects in depth; when viewed monocularly, no objects are visible. The bottom-right image in Fig. 8 shows a stereo anaglyph of the RDS pair.

Our RDS dataset is more complex than those typically used in neuroscience studies, allowing for overlapping ‘disparity-defined’ objects. It is divided into a ‘train’ set and a ‘validation’ set for training, and a separate ‘test’ set for evaluation.

Modified virtual kitti 2

We selected a modified version of the Virtual Kitti 2 (VKitti) photorealistic computer-generated dataset (Cabon et al., 2020)5 as the second experimental dataset for contrast-defined objects, reflecting real-life human scenarios (see Fig. 5 for an example). The original VKitti dataset provides multiple sets of ground truth including binocular RGB images, depth maps, class and instance segmentation maps, and more.

We divided the dataset into three non-overlapping groups: ‘train’ and ‘validation’ sets for training, and a separate ‘test’ set for evaluation.

Each data group includes:

-

Binocular RGB images.

-

‘Near’ disparity maps, computed from the depth maps using (Eq. 3.1).

-

‘Far’ disparity maps, derived by applying using (Eq. 3.2) to ‘Near’ maps.

-

1-channel all-contour maps (referred to as the ‘enforcement’ channel in Chen et al., 20226).

-

2-channel border ownership maps.

-

1-channel vehicle category maps.

To ensure the disparity magnitudes are on a similar scale, the ‘Near’ disparity maps were scaled down by a factor of 10 (see discussion in Sect. “Binocular disparity and depth”).

Contour maps were extracted from the original class and instance mask maps. Border ownership was determined by computing the depth difference across border segments, the side with the closer depth was assigned as the border owner, and coded using the ‘opposite channel rule’ (described in Sect. “Monocular border ownership” in this paper and Sect. 2.1 of Chen et al., 20226). However, due to potential errors in estimating depth difference, some border ownership ground truth annotations may exhibit minor inaccuracies (see the 4th image in Fig. 5).

Statistical evaluation criteria

It is important to note that only the final outputs —contour maps, border ownership maps, and category-selective maps—are statistically evaluated, while intermediate (disparity) results are not included in the evaluation.

The statistic evaluation criteria used in this paper are nearly identical to those described in Sect. 3.2 in Chen et al., (2022)6:

(a) The mean average precision (mAP) was calculated by averaging the ratio of correctly predicted contour points over all predicted contour points across all test cases, specifically,

where G is ground truth, Pc and Pall represent, respectively, correct prediction and all prediction (both correct and incorrect);

-

(b)

A threshold (> 0.1) was applied to the results, and all contours were ‘thin’ processed before statistical calculations;

-

(c)

A 1-neighbor ground truth (around contour lines) was used, where a prediction was counted correct only if the predicted contour point was within a 1-pixel distance from ground truth contour points.

Results

We conclude with the following observations:

-

1.

The channel representation for border ownership and category selectivity, as developed in our previous work (Chen et al., 2022)6 is applicable to binocular cases.

-

2.

On the RDS dataset (Table 1), the configurations with explicit intermediate disparity learning (rows 2 to 5) substantially outperform the direct border ownership generation configuration (row 1), which expects to learn disparity implicitly.

-

3.

On the VKitti dataset (Table 2), the direct border ownership configuration (row 1) performs comparably to the configuration incorporating both ‘Far’ and ‘Near’ disparities (rows 4 and 5), and clearly outperforms the configurations that use one of ‘Far’ or ‘Near’ disparity (rows 2 or 3).

-

4.

Across both datasets, the configurations incorporating both ‘Far’ and ‘Near’ disparities (rows 4 and 5 in Tables 1 and 2) outperform those utilizing only ‘Far’ or ‘Near’ disparity (rows 2 and 3 in Tables 1 and 2).

-

5.

On the RDS dataset (Table 1), a comparison of rows 2, 3, and 4 indicates that the ‘Near’ disparity contributes more significantly than the ‘Far’ disparity. Conversely, the same comparison in the VKitti dataset (Table 2) suggests that the ‘Near’ and ‘Far’ disparities contribute similarly.

-

6.

For both datasets, the configurations incorporating both ‘Far’ and ‘Near’ disparities (rows 4 and 5 in Tables 1 and 2) show similar performance, regardless of whether the intermediate ‘Far’ and ‘Near’ disparities were generated separately, suggesting that jointly learning these channels within a shared network is sufficient.

-

7.

Table 1 (row 6) demonstrates that the monocular TcNet01, when trained on the RDS dataset (image 2), fails to detect any objects, confirming that disparity-defined objects in RDS (image 2) are invisible monocularly, while image 1 consists purely of random dots.

-

8.

On the VKitti dataset (Table 2), monocular TcNet01 configurations slightly underperform their binocular counterparts (TcNet02). Specifically, row 6 vs. row 1 (without disparity), row 7 vs. row 2 (with ‘Near’ disparity), and row 8 vs. row 4 (with both ‘Near’ and ‘Far’ disparities). For further discussion, refer to Sect. “Border ownership with or without explicit binocular disparities”.

Illusory objects and illusory contours

We demonstrated that our border ownership coding method and TcNet can effectively handle binocular disparity-defined objects where object borders are invisible to a single human eye. It is of great interest to explore another type of invisible border: the ‘illusory contours’ of the ‘illusory objects.’ According to Wikipedia48, illusory (or subjective) contours are “visual illusions that evoke the perception of an edge without a luminance or color change across that edge”.

Neuroscience research by Von der Heydt et al. (1984)44 indicates that illusory contours are extracted in the V2 region, where binocular border ownership is also generated (Qiu et al., 2005)35, suggesting a potential connection between the two processes. Additionally, a study by De Haas et al. (2018)10 also revealed “few systematic differences between retinotopic responses to illusory contours, occlusion, and subtle luminance stimuli in early visual cortex”, implying that border ownership generation for illusory contours occurs at an early vision stage, similar to other types of occluding contours.

In this Section, we demonstrate that the same border ownership coding method and monocular TcNet01 described in Chen et al. (2022)6 can be applied to several classic illusory contour types commonly studied in psychology and neuroscience, including Kanizsa figures, Ehrenstein illusion, Neon color spreading, and Illusory shading (Kanizsa, 197623; Bressan et al., 19974; Coren, 19727; Von der Heydt et al., 198444).

In addition, Coren (1972)7 suggested that “both monocular and binocular subjective contours result from the presence of depth cues”, often causing the perceived object to float up in front of other elements in the image. This phenomenon is evident in Fig. 9, where the perceived object appears to be elevated. We tested Coren’s hypothesis using synthetic (Near) disparity on Kanizsa figures to induce depth perception before (monocular and binocular) border ownership generation.

Illusory contour datasets

Kanizsa figures

Kanizsa figures are one of the most renowned examples of illusory contours (Kanizsa, 197623; Coren, 19727).

Training sets consisted of triangle/quadrilateral Kanizsa figures (‘true’ cases), and disoriented counterparts (‘false’ cases) by rotating the endpoints of a Kanizsa figure by 90o. Test sets included novel pentagon and hexagon shapes not seen during training (Fig. 9 (a)–(d)).

Illusory Contours of Illusory Objects. (a) Left: A Kanizsa illusory figure; Right: Its 2-channel border ownership coding; (b) Left: A false Kanizsa figure; Right: its border ownership map (empty); (c) Left: A pentagon-shaped Kanizsa figure; Right: its evaluated border ownership map; (d) Left: A hexagon-shaped Kanizsa figure; Right: Its evaluated border ownership map; (e) Left: An Ehrenstein illusory figure; Right: Its 2-channel border ownership coding; (f) Left: A false Ehrenstein illusory or Neon color spreading figure; Right: Its border ownership map (empty); (g) Left: A Neon (red) color spreading illusion; Right: Its border ownership map; (h) Similar to (g) but with light-white Neon color.

Ehrenstein illusion and Neon color spreading

The Ehrenstein illusion is closely related to the Kanizsa figure, creating an illusory perception of a white-filled circle intersecting radial line segments (Dresp-Langley, 200911), whereas Neon color spreading is described as “an optical illusion with transparency effects, characterized by fluid borders between the edges of a colored object and the background in the presence of black lines” (Wikipedia49).

Given their structural similarity—Ehrenstein illusions are effectively Neon figures with zero transparency—these were merged into a unified dataset. ‘True’ cases included variations in circle color/transparency (Fig. 9 (e), (g) and (h)); ‘false’ cases disrupted radial consistency (Fig. 9 (f)).

Illusory shading

Illusory shading is a phenomenon where shading cues create the perception of illusory contours (Coren, 19727).

To test shading-induced illusions, we used images with 1–4 synthetic shaded letters and trained on their 2-channel border ownership maps (e.g., “F D S T” in Fig. 10). For simplicity, the 2nd channel of the 2-channel border ownership map was used as input.

Illusory Shading. Left: shading letters (‘F D S T’), derived from the 2nd channel of the 2-channel border ownership map; Right: their corresponding 2-channel border ownership map.

Kanizsa figures with synthetic disparity

To test border ownership generation with synthetic disparity (disparity = 2), we used the following configurations:

Monocular configuration

Mirrors row 7 in Table 2 for TcNet01 on the VKitti dataset (see also Fig. 7 (b)):

TcNet01(img1 = > N) + TcNet01CM(img1 + N = > B).

where the synthetic (Near) disparity is generated as an intermediate result before border ownership.

Binocular configuration

Mirrors row 2 in Table 2 for TcNet02 on the VKitti dataset (see also Fig. 6(b)):

TcNet02(img1 + img1 = > N) + TcNet02CM(img1 + img1 + N = > B).

where the binocular inputs are identical Kanizsa figure, simulating both eyes observing the same image.

Results

The evaluation results from the trained TcNet01 include a 1-channel all-contour map and 2-channel border ownership maps (refer to Fig. 3). The statistical evaluation for contours or border ownership maps follows the same criteria described in Sect. “Statistical evaluation criteria”. For ‘false’ (empty) cases, the evaluation is based on the accuracy of predicting empty maps. Specifically, it is calculated as the ratio of correctly predicted empty cases to the total number of ground-truth empty cases.

We conclude with the following observations:

-

(1)

The experiments (Tables 3, 4 and 5) confirm our hypothesis that the channel representation of border ownership and TcNet, as described in our prior paper (Chen et al., 2022)6, is applicable to the illusory contour generation of illusory objects, including illusory Kanizsa figure, Ehrenstein illusion, Neon color spreading, and Illusory shading. Its performance is comparable to that observed for disparity- and contrast-defined objects.

-

(2)

For the Kanizsa figure tests (Table 3), it is noteworthy that pentagon and hexagon-shaped Kanizsa figures, which were not included in the training, achieved surprisingly strong evaluation results (also see Fig. 9 (c) and (d)).

-

(3)

For Neon color spreading and Ehrenstein illusion tests (Table 4), performance varies with transparency levels. The opaquest Ehrenstein illusion yields the strongest results among these tests.

-

(4)

A comparison of Table 3 (without synthetic disparity), Table 6 (monocular with synthetic disparity) and Table 7 (binocular with synthetic disparity) reveals that border ownership generation on Kanizsa figures with synthetic disparity performs substantially worse than those without synthetic disparity. See Sect. “Illusory objects, resulting from depth cues?” for further discussion.

Rubin Face-Vase illusion

In our prior paper (Chen et al., 2022)6, we argued that the ‘global context awareness’ is a ‘fixed property’ embedded within a group of border ownership-selective neurons, and potentially within category-selective neurons as well. If this hypothesis holds, visual perception is determined by the prevailing global context at the moment it occurs.

The Rubin Face-vase illusion (Rubin, 191538; see Fig. 11) depicts an image that can be perceived as either two faces or one vase. Crucially, the same borders can be assigned opposite border ownership, depending on the viewer’s perception. The dynamic nature of this perception, driven by alternating border ownership assignments, makes it a compelling case for testing this hypothesis.

If the neurons responsible for border ownership can handle such perceptual reversals without additional top-down input, it would further support the claim that ‘global context awareness’ is an intrinsic (‘fixed property’) feature of these neurons. However, the mechanism underlying dynamic perceptual shifting, which itself is not directly related to border ownership assignment, falls outside the scope of this study.

(sourced from Google). Middle: The 2-channel border ownership map when the image is perceived as two faces; in this map, the ‘left’ or ‘bottom’ sides of red-colored occluding segments are border owners, the ‘right’ or ‘up’ sides of green-colored occluding segments are border owners. Right: The 2-channel border ownership map when the image is perceived as a vase; here, the border owners are reversed.

Rubin Face-Vase Illusion. Left: A Rubin Face Vase image.

Neuroscientists have used the rectangle-window object (Qiu et al., 2005)35 to simulate opposite border ownership assignments similar to those seen in the Rubin Face-Vase illusion, in which an ambiguous figure can be interpreted as either a rectangle (object) or a window (hole). Each interpretation yields opposite border ownership assignments (see Fig. 12). In the following section, we use the rectangle-window object to investigate how ‘global context awareness’ determines border ownership assignments.

Experiment setup

We designed an experiment with two complementary rectangle-window datasets simulating two contrasting ‘global contexts’ (see Fig. 12):

-

(1)

‘Darker-region-as-object’: in this scenario, darker rectangles or windows, compared to their surrounding background, are treated as objects (See the left image in Fig. 12).

-

(2)

‘Lighter-region-as-object’: in this scenario, lighter rectangles or windows, compared to their surrounding background, are treated as objects (See the right image in Fig. 12).

Results

A monocular TcNet01 was trained separately on the two distinct rectangle-window datasets and then evaluated on both datasets.

Examples of the Rectangle-Window ‘Darker-region-as-object’ and ‘Lighter-region-as-object’ Datasets, with border ownership maps overlaid on rectangle-window images. Left: An example of ‘Darker-region-as-object’ includes two rectangle objects and one window object, where each object (rectangle or window) is darker than its background; Right: An example of ‘Lighter-region-as-object’ includes four rectangle objects and no windows, where each object is lighter than its background. In both cases (Left/Right), rectangle objects have red-colored right and top borders, and green-colored left and bottom borders. Conversely, window objects have opposite border ownership, with green-colored right and top borders, and red-colored left and bottom borders.

We conclude with the following observations:

-

1)

Table 8(a) shows that a monocular TcNet01 trained on the ‘Darker-region-as-objects’ dataset, with the ‘global context’ of ‘darker-region-as-object’, consistently treats darker regions as objects when evaluated on both datasets. The 0% accuracy on the ‘Lighter-region-as-object’ column indicates that the border ownership assignments are entirely opposite to the ground truth of ‘Lighter-region-as-object’ context.

-

2)

Table 8(b) shows that a monocular TcNet01 trained on the ‘Lighter-region-as-objects’ dataset, with the ‘global context’ of ‘Lighter-region-as-object’, consistently treats lighter regions as objects when evaluated on both datasets. The 0% accuracy on the ‘Darker-region-as-object’ column indicates the border ownership is assigned opposite to the ground truth of ‘Darker-region-as-object’ context.

-

3)

In both cases, the occluding contours (‘All contour’ columns in Table 8) were mostly generated correctly, regardless of the ‘global context’ being ‘Darker-region-as-object’ or ‘Lighter-region-as-object’.

-

4)

The experiment provides compelling evidence that the ‘global context’, learned during training, determines perception. Neurons likely encode both ‘global contexts’, and the dominant context at the moment of perception dictates whether the perceived object is ‘darker’ or ‘lighter’. This perception can dynamically switch between the two contexts, depending on which one prevails.

Contour objects

It is of great interest to explore another extreme case of objects on border ownership and category selectivity generation, where the images consist solely of occluding contours, devoid of any color or texture. In such cases, objects are perceived purely from their occluding contours, demonstrating how borders alone convey object identity and structure.

Experiment setup

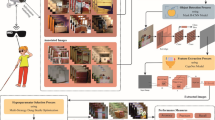

For this experiment, we re-use the modified Synscapes dataset from our prior work (see Sect. 3.1 in Chen et al., 20226), which includes ground truth border ownership and category selectivity. Instead of using the RGB image (shown as the 1 st image in Fig. 13), we use the occluding contour map (shown as the 2nd image in Fig. 13) as input to train a monocular TcNet01.

The output consists of 2-channel border ownership maps along with three 1-channel category-selectivity maps: ‘person’, ‘vehicle’, and ‘traffic light/blocks’, as illustrated in Fig. 13.

Results

We conclude with the following observations:

-

1)

Based on Tables 9 and random visual inspections, the evaluation results (row 1) are comparable to a similar evaluation (row 2) previously conducted on the same dataset with contrast-defined objects (see Table 1 in Chen et al., 20226, on the Synscapes dataset (c.2)).

-

2)

The all-contour channel (referred to as the ‘enforcement’ channel’ in Chen et al., (2022)6, or the first output branch in Fig. 3), which is equivalent to the combination of the border ownership channels, performs significantly better than the other channels, likely due to the input being composed solely of contour maps.

Contour Objects. 1 st image: RGB image (Synscapes dataset by Wrenninge et al., 201851); 2nd image: all-contour map (for all objects), used as input to monocular TcNet01. 3rd image: 2-channel border ownership map, including objects within three categories: ‘person’, ‘vehicle’, and ‘traffic-light/block’. 4th image: ‘person’ category-selective map; in the original Synscape dataset, bicycles are categorized under ‘person’. 5th image: ‘vehicle’ category-selective map. 6th image: ‘traffic-light/block’ category-selective map.

Integrative discussion – understanding of border ownership

We aim to deepen the understanding of border ownership and its related topics by integrating insights from our prior work (Chen et al., 2022)6, the current studies presented in this paper, and supporting evidence from neuroscience. By bridging perspectives from both computer vision and neuroscience, our goal is to establish a robust foundation for understanding and simulating the border ownership-centered segmentation in biological vision systems.

While our findings contribute to a clearer conceptualization of border ownership, we acknowledge that certain claims require further substantiation.

Definition of border ownership and object

Border ownership refers to the phenomenon where a visual border (or ‘occluding contour’) between two regions is perceived as belonging to one of the regions, referred to as the ‘border owner’. Initially, this concept applies only to ‘real’, visible borders defined by contrast or luminance differences separating objects from each other or the background; now it has since been extended to include both visible and illusory borders.

Consequently, an ‘object’ (in computer vision) or a ‘figure’ (in neuroscience) is defined as a region surrounded by borders encoded with border ownership, excluding image boundaries. In contrast, a region surrounded by borders without such encoding does not constitute an ‘object’ or ‘figure’—more precisely, it is not a border ownership-defined object.

We define an ‘object border’ as any contour, whether visible or illusory, encoded with border ownership. (see detailed discussion in Sect. “Edges vs. object borders – intrinsic nature of border ownership”)

Neuroscience research shows that the border ownership-selective neurons in the visual cortex exhibit two key properties (Von der Heydt, 2015)42: “Border ownership preference is a fixed property of neurons” and “each neuron has a fixed preference for location of one side or the other”. These properties play a crucial role in determining figure-ground organization in neuroscience or object segmentation in computer vision.

These findings form the basis for our border ownership coding method: opposite channel rule (see Sect. “Monocular border ownership”; also Chen et al., 20226, Sect. 2.1). Using an encoder-decoder neural network, TcNet, we have demonstrated its effectiveness across monocular and binocular vision, as well as for objects defined by contrast, disparity, illusory contours, and occluding contours—in both our prior and current work.

Global context awareness, short-latency, and gestalt principles

Global context awareness refers to the visual system’s ability to incorporate contextual information from large areas of the visual field when computing border ownership (Williford, et al., 2013)50. In our prior paper (Chen et al., 2022)6, we argued that global context awareness is a built-in, fixed property of border-ownership- and category-selective neurons. These neurons, inherently knowledgeable about ‘global context’, enabling them to generate the border ownership and select target categories without top-down guidance.

All experiments—monocular (Chen et al., 2022)6, binocular, and illusory (this paper)—support this view. TcNet, learns and applies ‘global context’, emulating inherently knowledgeable neurons and functioning like a self-sufficient ‘mold’. Qiu et al. (2005)35 provide additional neuroscience evidence further support this with notion that “G cells”—hypothetical top-down sources of global context—may not exist.

Short-latency

The ‘global context awareness’ embedded in border ownership-selective neurons also well explains the short latencies observed in border ownership generation. Zhou et al. (2000)53 reported that “the cortical processing that leads to border-ownership discrimination requires no more than ~ 25msec”, described as “ultra-fast” by Von der Heydt (2023)43. This rapid processing implies that the global context information is locally available within the neurons, eliminating the need for time-consuming transfer across neurons.

The “ultra-fast” short-latency imposes a strict constraint, ruling out any models that suggest the ‘global context’ is distant from the V2 area, where border ownership is generated.

Global context as prior knowledge

Although described as a ‘fixed property’ of neurons, ‘global context awareness’ is unlikely to remain entirely ‘fixed’ forever. Instead, ‘global context’ can be viewed as a form of prior knowledge that evolves and refines through exposure to new visual experiences or environmental changes, allowing the neurons to develop more sophisticated interpretations of contextual information.

This ‘prior knowledge’ spans monocular and binocular vision and applies to a variety of contour types, including contrast-, disparity-, illusory-, and contour-defined. As demonstrated in the Rubin Face-Vase illusion, border ownership can dynamically switch depending on the prevailing ‘global context’ at the moment of perception.

Connection to gestalt principles

Border ownership generation is also influenced by Gestalt principles, such as closure and proximity (Qiu et al., 2005)35, which likely form the foundation of the ‘prior knowledge’. Thus, it is reasonable to conclude that the ‘global context awareness’ is a biological embodiment of Gestalt organization, providing a robust framework for interpreting complex visual scenes.

Distributedness

The assertion that the ‘global context’ is embedded within border-ownership selective neurons implies that it is distributed across these neurons, rather than centralized.

This perspective indirectly explains why the hypothetical centralized “G cells” proposed by Qiu et al. (2005)35 are unlikely to exist. Likewise, this implies that the centralized “object pointer”, proposed by Von der Heydt et al. (2018)45 as a centralized representation of objectness (encompassing an object, its identity, and features), is unlikely to exist either, particularly not in the V2 area where border ownership is generated. Notably, Von der Heydt et al. (2018)45 acknowledge the absence of such a centralized ‘object pointer’ in V2. Instead, the completion of border ownership likely to a great extent, signifies the partial completion of object segmentation or ‘figure-ground organization’, suggesting a form of ‘distributed objectness’ or ‘distributed object pointer’.

The concept of ‘distributed objectness’ aligns with prior notion of ‘distributed representation’ in works by Roelfsema (2006)36 and Roelfsema et al. (2011)37, but our findings provide complementary support from a computational modeling perspective.

Distributed memory and architecture

O’Herron et al. (2009)29 proposed the existence of short-term memory for figure-ground organization to support persistence and continuity of perception. If such memory exists, we argue that it is likely distributed as well.

A distributed architecture offers greater efficiency than a centralized one. In such a system, the upper limit of objects tracked per neuron is predictable and equal to the maximum number of pixels covered per neuron. In contrast, the upper limit for neurons in a centralized architecture could be significantly higher and unpredictable, making centralized architecture inherently less efficient.

Conclusion

In conclusion, border ownership generation at the early visual stage (V2 area) is most likely a distributed process, with distributed object representation such as ‘distributed object pointers.’ If a centralized ‘object pointer’ does exist, it may emerge during subsequent visual processes, such as those involving focused attention.

This idea will be further examined in Sect. “Surface filling-in”, where we propose a ‘surface filling-in’ framework grounded in distributed processing.

Category selectivity

Our prior work (Chen et al., 2022)6 and this paper have demonstrated that category selectivity can be encoded and generated alongside border ownership, aligning perfectly with the neuroscience findings by Silson et al. (2022)40 and Uyar et al. (2016)41: the “human visual cortex is organized broadly according to two major principles: retinotopy and category-selectivity”. Furthermore, these studies highlight that “retinotopic maps and category-selective regions show considerable overlap”, where ‘retinotopy’ refers to the spatial mapping of the retina onto the cortex, closely related to border ownership-centered figure-ground organization.

As noted in Sect. 2.2 in our prior work (Chen et al., 2022)6, the “channel representation makes things simple” such that “border-ownership generation and category-selective generation can be done in one shot.” Consequently, the ”considerable overlap” between “retinotopic maps and category-selectivity” can be efficiently represented by the inner product of border ownership maps and category-selective maps. These representations can be jointly generated by a neural network, as visualized in Fig. 3 in this paper (and Fig. 3 in Chen et al., 20226).

Moreover, as noted in Sect. 4.4 in our prior work (Chen et al., 2022)6, our method of joint coding and generation of category selectivity alongside border ownership provides insights into why categories are pre-selected and why the number of categories is inherently limited. In our approach, each category is represented by a dedicated channel, making it practically unfeasible to use thousands of channels to represent thousands of object categories. This limitation aligns with neuroscientific findings by Wardle & Ritchie (2014)47, which suggest that the visual system’s category-selective architecture is inherently constrained to a finite set of categories.

Monocular and binocular

We have demonstrated that the border ownership and category-selective coding method functions effectively in both monocular and binocular settings using an encoder-decoder neural network, TcNet. The only exception is the Random Dot Stereograms (RDS) dataset, which, as expected, fails under monocular processing: each RDS image appears random in isolation, unless binocularly compared.

A binocular scenario involving two eyes (cameras) can also be conceptualized as a motion view captured at two locations by a single eye (camera). When motion approaches zero (static), binocular input effectively reduces to a monocular view, suggesting that monocular processing can be considered a special case of binocular processing.

This conceptual continuity was reflected in our design: (binocular) TcNet02 closely resembles (monocular) TcNet01, differing only in the number of input channels. Their comparable performance on VKitti (see Sect. “Results”(8)) supports this view that monocular and binocular processing share a common framework, except for disparity-critical cases like RDS.

On binocular fusion

We disagree with the binocular fusion model proposed by Dresp-Langley et al. (2016)12, which assumes that the binocular boundaries are fused from the left and right monocular boundaries. This model has three key limitations:

-

1.

It requires additional time-consuming correspondence matching, violating the ‘short latency’ constraint;

-

2.

In general 3D viewing, monocular boundaries often lack consistent point-to-point correspondence;

-

3.

It underutilizes binocular disparity, despite evidence (Qiu et al., 2005)35 that disparity-selective neurons directly contribute to border ownership generation.

Instead, our findings support a disparity-aware mechanism, where binocular input is processed jointly.

Binocular disparity and depth

Binocular disparity plays a critical role in depth perception and contour processing, with contributions observed in both V1 and V2 areas of the visual cortex. Disparity-selective neurons have been linked to border ownership generation (Qiu et al., 2005)35, although the precise mechanisms remain unclear.

Layered channel representation

To address the wide range of depths perceived by human vision, we adopt a layered channel representation that organizes ‘Near’, ‘Far’, and potentially other disparities into separate channels (Sect. “Binocular disparity” and “Binocular border ownership”). This structure aligns with neuroscientific observations (Poggio, 199532; Prince et al. 200233) and offers flexibility for incorporating additional cues such as optic flow from motion parallax, which shares structural similarities with disparity maps (Glennerster et al., 2018)15.

The channels—‘Near’ (Epipolar, linear) and ‘Far’ (logarithmic, non-linear)—do more than simply separate spatial ranges. Together, they form a richer basis for encoding depth structure, with the trained network implicitly learning their respective weights. The ‘Near’ channel offers high precision at short distances but becomes unreliable at long range. The ‘Far’ channel flattens disparity magnitudes while preserving ordinal depth relationship, effectively preventing nearby objects from overshadowing distant ones in disparity space. Empirical results in Sect. “Binocular border ownership” demonstrate that combining both channels improves the generation of border ownership assignments.

Absolute and relative disparity

Both ‘Near’ and ‘Far’ disparities are forms of absolute disparity and are processed in V1 (Cumming et al., 1999)9. the role of ‘relative disparity’, computed in V2, remains an open question in border ownership generation. Nevertheless, it is likely feasible to handle ‘relative disparity’ as ‘absolute disparity’ within the ‘layered disparity representation’ framework.

Depth and disparity

Unlike human vision, which encodes disparity via neurons but lack direct depth sensors, computer vision systems can leverage direct depth sensors (e.g. laser radar) but lack disparity sensors. Reformulations of Epipolar equation (Eq. 3.1') and its logarithmic form (Eq. 3.2') show that disparity and depth occupy reciprocal roles, suggesting that the ‘layered disparity representation’ could be extended to a combined depth/disparity representation in computer vision systems. Such hybrid representations may improve segmentation in complex scenes with wide depth ranges, as in autonomous vision.

Absolute vs. relative values

For border ownership generation, the relative magnitude of disparity (or depth) is more important than its absolute value. Thus, constants like k in (Eq. 3.1) and C in (Eq. 3.2) may be adjusted from their original meanings during neural network training to ensure neither disparity map overwhelms the other. For instance, a 10x scale-down factor was applied to the constant k in (Eq. 3.1) for the VKitti dataset (see Sect. “Modified virtual kitti 2”). However, it is important to distinguish between mathematical relativity used in training and the neuroscientific definition of relative vs. absolute disparity (see Sect. “Binocular disparity”).

Border ownership with or without explicit binocular disparities

As evaluated in Sect. “Binocular border ownership”, we examined border ownership generation with varying levels of binocular disparity involvement across two datasets: Random Dot Stereograms (RDS), which provides disparity-defined objects suitable for theoretical and neuroscience-related studies, and a modified photorealistic Virtual Kitti (VKitti), which includes contrast-defined objects spanning a wide depth range, closely resembling real-world scenes.

Disparity-aware vs. disparity-free

Across both datasets, models using both ‘Far’ and ‘Near’ disparities consistently outperformed those using only one disparity type, confirming the complementary nature of the two channels (Sect. “Results”(4)).

On RDS, incorporating explicit disparity channels substantially improved border ownership performance over disparity-free models (Sect. “Results”(2)), suggesting that explicit disparities are essential under camouflage-like conditions. This result is particularly notable because RDS stimuli are designed to eliminate monocular shape cues, isolating binocular disparity as the sole source of depth information (Julesz, 1971)22. Therefore, the strong disparity-dependent improvement in RDS highlights the critical role of explicit disparity input for border ownership generation when monocular context is absent.

On VKitti, disparity-free models performed comparably to those using both ‘Far’ and ‘Near’ channels (Sect. “Results”(3)), suggesting that TcNet02 is capable of learning disparities implicitly from photorealistic binocular input—indicating that disparity-related information is still encoded and utilized, even when not explicitly provided.

Contribution of layered disparities

For VKitti, the similar contributions from both ‘Near’ and ‘Far’ disparities highlight the importance of layered disparity representation. The logarithmic ‘Far’ channel complements the Epipolar ‘Near’ channel in encoding distant structures (Sect. “Results”(5)), confirming the practical value of the dual representation introduced in Sect. “Binocular Disparity”.

Conversely, on RDS, the ‘Near’ disparity dominates, with ‘Far’ disparity offering little additional benefit (Sect. “Results”(5)). This aligns with the nature of RDS, which contain predominantly nearby objects, and may lack the physical scale to require large-depth representation.

Limitations and future directions

This study represents a first systematic attempt to simulate and investigate border ownership generation with binocular disparities. While the RDS dataset offers high-fidelity ground truth, the modified VKitti suffers from depth estimation artifacts—particularly along tilted borders—resulting in an estimated ~ 70% accuracy based on visual inspection.

Despite these limitations, the common performance trends across datasets lend credence to the findings. Future work may extend this framework with broader accuracy-enhanced datasets and additional motion cues (e.g., optic flow), further improving generalizability.

Illusory objects

We demonstrated that border ownership can be generated for various illusory objects (see Sect. “Illusory objects and illusory contours”), including Kanizsa figures, the Ehrenstein illusion, Neon color spreading, and Illusory shading (excluding Kanizsa figures with synthetic disparity, discussed separately in Sect. “Illusory objects, resulting from depth cues?”)—using process closely resembling those for contrast-defined objects. This aligns with the neuroscience findings by Von der Heydt et al. (1984)45, who showed that illusory contours are extracted in the V2 region—the same region where binocular border ownership for contrast-defined objects is generated (Qiu et al., 2005)35.

Additional support comes from De Haas et al. (2018)10, who observed “few systematic differences between retinotopic responses to illusory contours, occlusion, and subtle luminance stimuli in the early visual cortex”. suggesting a shared early vision mechanism. (see further discussion in Sect. “Edges vs. object borders – intrinsic nature of border ownership”).

As shown in Sect. “Results”, border ownership generation for ’illusory objects’ relied on ‘prior knowledge’ learned during training. Furthermore, experiments on the Ehrenstein illusion and Neon color spreading (Sect. “Results”(3)) also revealed that performance degrades with increasing transparency (or decreasing opacity), consistent with human perception, where higher transparency reduces illusory contour salience.

Illusory objects, resulting from depth cues?

Stanley Coren (1972)7 suggested that “both monocular and binocular subjective contours result from the presence of depth cues”. However, our experiments (see Sect. “Results”(4)) do not support this hypothesis. Specifically, border ownership generation with synthetic disparity performed significantly worse than without it, contrary to the expectation that performance would improve or at least remain comparable if Coren’s claim were correct. Instead, our findings align more closely with the theory by Qiu et al. (2007)34, which attributes the observed ‘floating’ perception of subjective objects to ‘attention enhancement,’ with little or no involvement in border ownership generation.

Unlike datasets such as the VKitti and RDS (see Sect. “Datasets”), 2D Kanizsa shapes lack true depth or disparity cues, further challenging Coren’s hypothesis. A similar argument applies to the Rubin Face-Vase illusion. In this case, the object of attention-focus holds the border ownership and benefits from ‘attention enhancement,’ this appears unrelated to the border ownership generation. Rather, the dominant ‘global context’ dictates whether ‘face’ or ‘vase’ is perceived as figure (see Sect. “Results” and “Global context awareness, short-latency, and gestalt principles”).

However, if Random Dot Stereograms (RDS) are considered illusory, as Coren (1972)7 suggested, their border ownership generation does depend on a specific depth cue— binocular disparity.

In summary, depth cues are not strictly necessary for border ownership generation in monocular or binocular illusions. However, depth cues may play a role in attracting attention, indirectly influencing perceptual organization without directly impacting the generation of border ownership.

T-junctions and other key points

T-junctions, where two intersecting borders form a ‘T’ shape, have been proposed (Von der Heydt, 201542; Von der Heydt et al., 201845) as a cue for determining border ownership. The horizontal bar is typically seen as occluding the vertical, making the ‘above’ side of ‘T’ the border owner (see Fig. 14, Left).

(adapted from Fig. 4 in Chen et al., 20226). Left: Remade from part of FIGURE 5 of Von der Heydt, (2015)42 showing with two overlapping rectangular objects as an example. Top Right: The two overlapping rectangles are coded with our 2-channel border ownership coding method; the overlapping segments at T-junctions (black-color circled) are coded in different channels. Bottom Middle: An imperfect T-junction resembles a Y-junction, demonstrating the difficulty of determining occlusion relationships with traditional methods. Bottom Right: A 4-fold junction example.

T-junction and Y-junctions.

In our prior work (Chen et al., 2022)6, we presented a simplified solution (see Fig. 14, Top Right) to the example on the left, arguing that the T-junction is merely an irrelevant visual perception, and is an unreliable cue for the determination of border ownership in cases such as Y-junction (Fig. 14, Bottom Middle) or N-fold junctions where N ≥ 4 (Fig. 14, Bottom Right).

From a computational perspective, our 4-channel border ownership coding can better support key-point detection by identifying overlapping points between channels, which may signal potential junction points.

While these junctions present challenges in computer vision, neuroscience evidence (Craft et al., 20078; Heitger et al., 199219 suggests that end-stopped cells in biological vision play a critical role in processing corners and junctions. This implies that biological vision systems may utilize a more sophisticated mechanism for interpreting junction—a topic we revisit in Sect. “Active neurons and end-stopped cells”.

Edges vs. object borders – intrinsic nature of border ownership

Pioneering studies by Hubel and Wiesel (1959)20 demonstrated that V1 neurons are responsive to oriented edges and lines arising from contrast or luminance differences, forming the foundation of visual processing.

Traditional border ownership models (e.g., Craft et al. (2007)8; Von der Heydt (2015)42) assume a two-step process: first, occluding contours are detected from visible edges; and second, border ownership is ‘assigned’ to these contours based on cues like T-junctions. Consequently, the border ownership generation is often referred to as border ownership assignment.

However, illusory contours challenge this assumption. Our experiments (Sect. “Illusory objects and illusory contours”) show that border ownership can be generated for illusory objects with accuracy comparable to contrast- and disparity-defined contours, suggesting that explicit post-hoc ‘assignment’ may be unnecessary. Instead, border ownership generation appears to be an intrinsic process occurring simultaneously with contour formation.

Redefining ‘object border’

We define an ‘object border’ as any contour, whether visible or illusory, encoded with border ownership, as established in Sect. “Integrative discussion – understanding of border ownership”. This expanded definition highlights the inclusion of both visible and inferred boundaries, emphasizing that object borders are not strictly tied to contrast-based edges.