Abstract

Visual servoing technology is an important direction for realizing the intelligence of robotic arms, which enhances the flexibility and robustness of robotic arms by introducing visual feedback information. Image processing is the core part of this technology, but its real-time performance and stability are currently facing significant challenges. To solve the above problems, this study designs an image edge detection algorithm by combining breadth first search algorithm, Canny algorithm, Harris algorithm, and parallel processing strategy, and establishes a robotic arm visual servo system. The results showed that with the continuous increase of data volume, the performance of the proposed algorithm was still superior to other image edge detection algorithms throughout the entire process, and the accuracy, recall, and F1 score indicators exceeded 95%, 86%, and 90%. Under the image size of 4096*2160, the research algorithm could achieve a computational efficiency of 110FPS, and the average running time in the test dataset was at least 30.28ms. In practical application, the research method could converge the tracking error to a smaller range and greatly improve the dynamic tracking performance. The above data indicate that the research method can effectively improve the performance and reliability of the robotic arm visual servo system, and achieve perception of complex application environments.

Similar content being viewed by others

Introduction

The traditional visual servoing theory was founded in the 1980s and has formed two main lines: image-based visual servoing and location-based visual servoing. The former maps two-dimensional feature errors to camera velocity through the image Jacobian matrix, avoiding three-dimensional reconstruction but being limited by depth estimation errors and singular configurations; The latter relies on accurate 3D pose estimation, but is extremely sensitive to camera calibration errors and is prone to failure in complex environments1,2,3. The improvement strategies such as mixing and partitioning that emerged afterwards have to some extent eased the contradiction, but they still have difficulty breaking through the three fundamental limitations, namely, the assumption of geometric model rigidity requires precise knowledge of camera parameters, robot kinematics, and environmental structure, which cannot adapt to lighting changes and dynamic occlusion in unstructured scenes4,5. The low-level features such as edges and corners designed manually lack the ability to express high-level semantics, such as “grasping handles” rather than “rectangular edges”; The control law constructed based on Lyapunov stability frequently gets stuck in local minima in high-dimensional state spaces, leading to tracking failures. It is precisely these theoretical bottlenecks that have given rise to the emergence of Visual Language Action (VLA) models, which utilize large-scale multimodal pre training to achieve end-to-end joint learning of vision, language, and action. By using models based on contrastive language image pre training and large-scale language models, natural language instructions are directly mapped into robot action strategies, freeing them from dependence on precise geometric modeling. By using a cross task imitation learning framework based on behavior cloning and robot operation Transformer to learn cross scenario robust strategies in simulation environments, the requirement for calibration accuracy is significantly reduced; And use Transformer architecture to model temporal information, respond in real-time to moving targets and partial observations, providing stable and generalizable tracking capabilities for highly dynamic and complex tasks. Studies have shown that the VLA model can solve the problem of multimodal encoders learning visual-language interaction through more discriminative visual and language representations. The VLA model can distinguish the differences in object perception and foreground and background, improving the effect of contrastive learning6,7. Traditional visual servoing technology, based on precise geometric modeling and closed-loop control theory, has long been the standard solution for robotic arm control in structured environments. However, its reliance on rigid geometric constraints and deterministic models limits its adaptability in dynamic or unstructured environments. In recent years, the rapid development of embodied intelligence has brought revolutionary changes to robot systems, among which the VLA model can meet the multimodal interaction needs of robots in practical applications; Traditional visual servoing relies on pre-set geometric features, while VLA models gain semantic understanding through large-scale pre training and can generalize to new tasks without explicit programming. Faced with the trend of VLA models, traditional visual servoing technology adapts through two paths. Firstly, in tasks that require sub millimeter level accuracy such as precision assembly, traditional geometry based visual servoing is still irreplaceable, while VLA models are responsible for high-level task decomposition; The second is to combine the semantic segmentation results of the VLA model with traditional edge features to enhance feature robustness in complex backgrounds. However, currently the Robotic Arm Visual Servo (RAVS) system is facing significant challenges. Its image processing requires a large amount of computing resources, which limits the processing efficiency of the system. When the image resolution is high, it will have a negative impact on the real-time performance of the system. In response to the above issues, this study first designs the BFS-Canny Image Edge Detection (BFS-Canny-IED) algorithm by combining the Breadth First Search (BFS) algorithm, Canny algorithm, and parallel processing strategy, and then builds the RAVS system. The purpose of the research is to improve the image processing efficiency and accuracy of Visual Servoing Systems (VSS), and apply them in more complex and dynamic environments to meet a wider range of application needs. The innovation mainly includes the following two aspects. One is to build a RAVS system to ensure stability and reliability in tracking dynamic targets. The second is to design a BFS-Canny-IED algorithm to process acquired images at high speed and improve image quality and real-time performance.

Related works

As a key equipment in industrial automation, robotic arms have gradually become an indispensable part of modern manufacturing, agriculture, logistics and transportation industries. Its unique design and functionality enable the robotic arm to efficiently perform tasks in various complex environments, not only improving production efficiency but also ensuring the safety of operations. Numerous scholars have conducted in-depth analysis and exploration on this matter. V. D. Cong integrated image-based visual servoing into a vision-based sorting robot system to improve system flexibility and performance. The experiment revealed the time efficiency and performance robustness of the system, which can be used in industrial processes to reduce the required time and improve the performance and flexibility of the production line8. Y. Matsuda et al. developed a mobile robot control system with an object grasping arm and designed an image processing method for extracting target objects. This study conducted experiments using actual robot systems and found that even under different conditions (such as lighting conditions), the action of object grasping can still be successfully achieved9. J. Li et al. proposed a novel image-based visual servo controller for robotic arms, utilizing optimized extreme learning machine algorithms and offline reinforcement learning algorithms. The effectiveness and feasibility of this method were verified in simulation experiments conducted on a 6-degree-of-freedom robotic arm10. H. Xiao et al. designed a new framework based on Kalman filtering and multi rate model prediction, and applied it to the VSS of robots. Compared with conventional visual servoing techniques, the error reduction rate of the research method could reach 95% when the target was moving at high speed, the visual measurement speed was slow, and information could not be provided promptly11. H. Saito et al. proposed a background subtraction method using two color difference edge images and introduced template matching to improve detection accuracy. The patrol robot not only successfully detected suspicious objects but also detected lost objects, improving its detection performance12. Wang et al. designed an architecture for edge artificial intelligence autonomous mobile robots to detect human behavior in real-time. The average accuracy of single action and continuous action recognition using research methods was about 97.58% and 86%13. Naji et al. proposed a new method for extracting square groove feature points, which improves the automation level of welding manufacturing. To process images, this study introduced a Haralicks surface model based on the Canny algorithm to extract the centerline of laser stripes. In the detection results, the maximum relative error was less than 3.19%, which proved the rationality of this method14.

Based on the above content, the current research results mainly focus on the VSS and IED methods of robots, but the time delay problem of robotic arms and the problem of high-speed visual servoing are difficult to solve. Therefore, this study proposes a high-speed image feature extraction method based on the BFS-Canny-IED algorithm.

Design of RAVS system based on BFS-Canny-IED algorithm

To achieve high-speed extraction of image features in the RAVS system, this study designs a high-speed extraction method based on the BFS-Canny-IED algorithm for the image feature extraction part, and constructs the RAVS system.

Theoretical background

BFS was originally a “layer by layer diffusion” algorithm in graph theory. Moving it onto the image means using confirmed strong edge pixels as “seeds” and spreading them outward in an 8-neighborhood queue to re stick weak edges into a complete contour. This step on the CPU was once a bottleneck; On the GPU, each thread is allowed to process one pixel simultaneously, transforming the serial search of O (N) into thousands of threads spreading in parallel, achieving “disconnection and reconnection” without slowing down the overall frame rate.

Canny proposed three hard indicators in 1986: high detection rate, high positioning accuracy, and single pixel response. The algorithm consists of four steps: Gaussian denoising, Sobel gradient, non maximum suppression, and dual threshold lag connection. The lag connection in the final step is exactly where BFS comes in: high threshold provides seeds, low threshold provides growable areas, and GPU-BFS “grows” the edges into continuous curves at once.

Harris defines a corner as a position where there is a significant change in both directions, and uses the eigenvalues of a 2 × 2 structural tensor to determine it. The traditional approach is to calculate pixel by pixel using a sliding window. The CUDA scheme assigns each thread responsibility for one pixel, moves local windows into shared memory, and completes tensor computation and corner response in parallel at once, reducing the extraction of corners from seconds to milliseconds for the entire image.

GPU parallel acceleration has thousands of cores and excels in SIMD batch computing. On the one hand, by using separable convolutions to split two-dimensional Gaussian into two one-dimensional convolutions, the computational complexity is significantly reduced; On the other hand, there is a shared memory patch, where 32 × 32 small blocks reside in the chip cache, and threads collaborate to complete convolution, non maximum suppression, or Harris tensor, avoiding repeated access to video memory. When BFS, Canny, and Harris are all rewritten as shared memory cores, the entire feature extraction chain can still maintain 110 FPS at 4 K resolution.

Previous studies often only moved Canny or Harris separately to GPUs, or used BFS to supplement edges before performing CPU level processing, and have not yet formed a complete “three in one” solution that can directly drive robot closed-loop. This study fully GPU based BFS, Canny, and Harris, and fed them to the visual servo controller through a unified data interface, filling the gap between high-speed and high-precision feature extraction and real-time robot control.

Feature extraction method based on BFS-Canny-IED algorithm

Image edge detection aims to identify locations where grayscale or color changes sharply, providing geometric clues for high-level visual tasks. The specific process of the image processing algorithm integrated into visual servoing technology is as follows. The traditional Canny algorithm suppresses noise through Gaussian smoothing, obtains gradient amplitude and direction using Sobel operator, retains local extremum through non maximum suppression, and finally connects strong edges with high and low dual thresholds to suppress weak edges, forming a continuous, single pixel wide contour. Furthermore, Harris corner detection calculates the structural tensor through sliding windows, analyzes curvature changes, and marks pixels with larger eigenvalues as corner points, providing robust and reproducible features for visual servoing. Visual servoing technology closed-loop controls robot motion based on the difference between camera observations and expected images. The image-based method directly defines the error in the two-dimensional image space, linearly maps the pixel error to the six dimensional camera velocity through the image Jacobian matrix, and then uses the robot Jacobian to obtain joint velocity instructions, avoiding the uncertainty of three-dimensional pose estimation while maintaining sub-pixel level accuracy at high frame rates. Breadth first search starts from seed pixels in a queue and expands eight neighborhoods sequentially, which can effectively connect broken edges and reduce false contours. Modern GPU parallel architecture maps image blocks to a thread grid, where each thread independently completes convolution or corner response calculations, reducing global memory access latency through shared memory and achieving millisecond level processing.

Firstly, the study adopts image-based visual servoing. The system only extracts two types of image features, namely edge pixel chains and Harris corner points. Firstly, the BFS Canny IED algorithm completes Gaussian filtering, gradient calculation, non maximum suppression, and dual threshold detection in parallel on the GPU, and then uses breadth first search to connect candidate edges into continuous pixel chains, forming contours; Subsequently, the parallel Harris operator is run on the same gradient map to obtain the corner response function through the structural tensor, and the local maximum points are output as corner points. All edge chains and corner points are represented with sub-pixel accuracy and directly used as feature error inputs in the visual servo control law, thereby achieving fast and accurate recognition of target contours and key corner points while ensuring real-time performance at 110 FPS. Canny algorithm is one of the classic algorithms in computer vision and image processing. It can effectively detect subtle edges in images and suppress noise through multi-level processing such as Gaussian filtering, gradient calculation, non maximum suppression, and dual threshold detection15,16. However, it involves multiple convolution operations and complex logical judgments, resulting in a large computational load and limited computational efficiency. In view of this, this study introduces the BFS algorithm to optimize the Canny algorithm, and combines the above method with the Harris algorithm to achieve high-speed feature extraction through parallelization processing. Therefore the BFS-Canny-IED algorithm is developed to enhance edge connectivity and improve the noise resistance of edge detection. The process and pixel gradient map of Canny algorithm are shown in Fig. 1.

Canny algorithm flow and pixel gradient display diagram.

In Fig. 1, the Canny algorithm convolves the image using a Gaussian filter and calculates the corresponding gradient strength and direction, while removing pixels with weaker gradient strength and preserving local maxima at the edges. In addition, it distinguishes strong edge, weak edge, and non-edge pixels by setting high and low thresholds. In the Gaussian filtering section, the calculation is shown in Eq. (1).

In Eq. (1), \(GF(x,y)\) and σ are the Gaussian function and the standard deviation of the filter. The image gradient is calculated using the Sobel operator, as expressed in Eq. (2).

In Eq. (2), \(G{F_x}\) and \(G{F_y}\) correspond to the gradient values in the horizontal and vertical directions. * and \({I_{{\text{gray }}}}\) are convolution operators and grayscale pixel values. The calculation of gradient strength \(SH(x,y)\) and gradient direction \(F(x,y)\) is shown in Eq. (3).

The formula for the non-maximum suppression part is shown in Eq. (4).

In Eq. (4), \(S{H_L}\) represents the low threshold of \(SH(x,y)\), which is the offset value of \(F(x,y)\). Among them, the different gradient segmentation methods of the non-maximum suppression module are shown in Fig. 2.

Different gradient segmentation methods for non-maximum suppression modules.

In Fig. 2, to simplify the processing, this study sets the gradient direction as the following specific angles, namely 0°, 45°, 90°, and 135°. For each pixel, the gradient strength of neighboring pixels along its gradient direction is compared. If the gradient strength of the current pixel is greater than that of its neighboring pixels, the pixel is retained; Otherwise, the pixel is suppressed and set to 0. The calculation of the dual threshold processing part is shown in Eq. (5).

In Eq. (5), \(S{H_H}\) is the high threshold of \(SH(x,y)\). \(Non{-}edge\), \(Weak\), and \(Strong\) correspond to non edge, weak edge, and strong edge. After completing the above operations, the BFS algorithm can be used for optimization, mainly in the following three aspects. One is to use separable convolution to optimize the Gaussian filtering part, to reduce computational complexity. The second is to introduce the maximum inter class variance threshold segmentation optimization algorithm (Nobuyuki Otsu, N-OSTU) to obtain segmentation thresholds. The third is to improve image edge connection through BFS algorithm. In Optimization one, Gaussian filtering calculation can be converted into Eq. (6).

In Optimization 2, firstly, the grayscale values of the image are divided into two categories, \({H_0}=\left\{ {0,m} \right\}\) and \({H_1}=\left\{ {m+1,Z - 1} \right\}\), with corresponding probabilities as shown in Eq. (7).

In Eq. (7), \({P_j}\) is the probability of the grayscale value corresponding to the pixel. The expressions for the average grayscale value \({\bar {I}_{grey}}\) and the inter class variance \({L^2}(m)\) are shown in Eq. (8).

In Eq. (8), the average grayscale values corresponding to \(\bar {I}_{{grey}}^{0}\) and \(\bar {I}_{{grey}}^{1}\) traverse all grayscale values to obtain the m value that maximizes \({L^2}(m)\), which determines the segmentation threshold that can achieve the best segmentation effect. To further expand the gray level categories, this study divides them into three categories: \({H^{\prime}_0}=\left\{ {1,{m_H}} \right\}\), \({H^{\prime}_1}=\left\{ {{m_H},{m_g}} \right\}\), and \({H^{\prime}_2}=\left\{ {{m_{g+1}},Z - 1} \right\}\), and then calculates the inter-class variance, as shown in Eq. (9).

In Eq. (9), \({m_g}\) and \({m_H}\) are the low and high thresholds for optimal segmentation performance. In Optimization 3, the image edge connection principle based on BFS algorithm is shown in Fig. 3.

Principle of image edge connection based on BFS algorithm.

In Fig. 3, the confirmed edge points in the original edge image are placed into a category. Secondly, the position of 225 pixels is used as the starting point for traversal. In this process, if all adjacent pixels have undetermined pixels, they need to be added to the category of BFS, and then the newly added 8 neighborhoods need to be traversed. If there are unvisited pixels, they can continue to be added to the corresponding category until all pixels that are the same as the selected point have been traversed, and then stop. After completing the above steps, high-speed parallel processing methods can be designed. Firstly, the image is transferred to the host memory, and secondly, the CUDAMalloc function in the unified device architecture runtime library is computed to allocate Graphics Processing Units (GPUs) to the original and final output images. Then, the core function CUDAMemCpy of the copied image data is used for processing, and the image processing is completed through the kernel function. Finally, the processed graphics are copied and processed into the host memory through CUDAMemCpy, thereby completing the release of memory space.

In addition, image corner features are also a key component for achieving precise control in RAVS systems. The commonly used corner extraction algorithm is the Harris corner detection algorithm, which can accurately detect corners in the image. Specific operation: Firstly, place the center of the sliding window at a random position in the grayscale image, with the corresponding pixel grayscale value denoted as \(IE\left( {x,y} \right)\), and perform sliding processing. The grayscale value of the pixel in the new position is denoted as \(IE\left( {x+z,y+q} \right)\). The grayscale value change during window movement is shown in Eq. (10).

In Eq. (10), \((x,y)\) is the window function. To further improve computational efficiency, this study uses Taylor series for first-order approximation and simplifies the process to obtain Eq. (11).

In Eq. (11), J is the structural tensor. The corner is determined by the corner response function, as expressed in Eq. (12).

In Eq. (12), \({t_1}\) and \({t_2}\) are eigenvalues of J. γ represents hyperparameters. \(\det J\) and \(trace{\text{ }}J\) are the determinant and diagonal elements of a matrix. To achieve high-speed extraction of image corner features, this study implements parallel processing of multi-Harris algorithm through GPU based on the above method, that is, each thread processes the corresponding pixels in the image. Firstly, the parallel BFS-Canny algorithm is used to process the edge information of the image, and then the feature values obtained through gradient covariance are used to identify corner points. Based on the above content, the design of the BFS-Canny-IED algorithm can be completed. BFS Canny IED can stably output edges and corners within 9 ms through GPU parallel, ensuring real-time updates of feature vectors s, thereby directly improving the convergence speed and robustness of image spatial errors, rather than being used for 3D coordinate estimation.

Construction of RAVS system

In today’s technological world, robot technology has become a research hotspot. Moreover, among numerous branches, the RAVS system provides strong support for improving industrial production efficiency and quality, expanding space exploration, etc. due to its unique advantages and broad application prospects17,18,19. This system is a comprehensive technology that integrates computer vision, image processing, and robot control. By obtaining image information, it achieves precise control of robot motion20. The KUKA-KR16 robotic arm has high flexibility and can complete complex point and trajectory control tasks, adapting to different experimental scenarios and task requirements. Its repeated positioning accuracy can reach 0.04 mm, which can achieve high-precision operation in experiments21,22,23. In addition, its structure is sturdy and durable, capable of long-term stable operation, reducing experimental interruptions caused by equipment failures24. Therefore, this study chose it as the experimental subject. When modeling the dynamics of a robotic arm, it is necessary to first clarify the key transformation relationships in the RAVS system. The speed conversion relationship and general vector transformation diagram in the RAVS system are shown in Fig. 4.

Velocity conversion relationship and general vector transformation diagram in RAVS system.

Figure 4a shows the key speed conversion relationships in the system, which mainly include the following three types. The first is to convert the information obtained through the camera into the camera speed used by the system. The second is the end velocity of the robotic arm obtained through calibration. The third is the rotational speed of different joints in the robotic arm. Figure 4b is a general vector transformation diagram during the construction process of the kinematic model of the robotic arm. \({O_0} - {X_0}{Y_0}{Z_0}\) and \({O_1} - {X_1}{Y_1}{Z_1}\) correspond to the reference Coordinate System and the Coordinate System in which the pose of the target is fixedly connected to the target position. The coordinate representation of vector \(A_{{TA}}^{0}\) in \({O_0} - {X_0}{Y_0}{Z_0}\) is shown in Eq. (13).

In Eq. (13), \({a_x}\), \({a_y}\), and \({a_z}\) correspond to the coordinate values of \(A_{{TA}}^{0}\) on the X, Y, and Z axes. T is the transpose of \(A_{{TA}}^{1}\). The calculation of the rotation matrix \({}_{1}^{0}R\) of \({O_1} - {X_1}{Y_1}{Z_1}\) relative to \({O_0} - {X_0}{Y_0}{Z_0}\) and the process of converting \({O_1} - {X_1}{Y_1}{Z_1}\) to \({O_0} - {X_0}{Y_0}{Z_0}\) is shown in Eq. (14).

In Eq. (14), \({}^{0}{\hat {X}_1}\), \({}^{0}{\hat {Y}_1}\), and \({}^{0}{\hat {Z}_1}\) are all the projection components of the unit vector of the object’s posture in the \({O_0} - {X_0}{Y_0}{Z_0}\) Coordinate System through the \({O_1} - {X_1}{Y_1}{Z_1}\) axis direction. \(^{1}A\) represents a vector in \({O_1} - {X_1}{Y_1}{Z_1}\). The expression for the homogeneous transformation matrix is shown in Eq. (15).

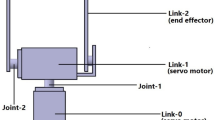

The display diagram of the joint axis linkage parameters of the experimental object is shown in Fig. 5.

Experimental object joint axis linkage parameter display diagram.

For the convenience of describing the complex shapes of robotic arms in the future, this study sets the Coordinate System of each link, and the relationship between links is usually described using the Denavit-Hartenberg parameter method25. Figure 5 shows the kinematic parameters used to describe the connecting rod. \({b_j}\) and \({\zeta _j}\) represent the distance and angle from \({\hat {Z}_j}\) to \({\hat {Z}_{j+1}}\) along the \({\hat {X}_j}\)-axis. \({d_j}\) and \({\theta _j}\) correspond to the distance and angle from \({\hat {X}_{j - 1}}\) to \({\hat {X}_j}\) along the \({\hat {Z}_j}\) axis. To further solve the position of the end effector of the experimental object relative to \({O_0} - {X_0}{Y_0}{Z_0}\), this study uses a forward kinematics model of the robot for description, as shown in Eq. (16)26.

In Eq. (16), \({\psi _{end}}\) and f are the end poses and joint angles of the experimental subjects. Through kinematics, it can be divided into N sub-problems, namely the transformation problem between the Coordinate Systems of linkages. Then, the sub-problem is further divided into four sub problems, namely the four kinematic parameters used to describe the connecting rod. The transformation process corresponding to the second child problem is shown in Eq. (17).

The general expression for \(_{j}^{{j - 1}}Q\) is shown in Eq. (18).

The pose transformation matrix and homogeneous transformation matrix from \({O_0} - {X_0}{Y_0}{Z_0}\) to the End Coordinate System \({O_5} - {X_5}{Y_5}{Z_5}\) are shown in Eq. (19).

In Eq. (19), \({S_{e,n}}\) and \({B_{e,n}}\) correspond to the posture and position of the end effector of the experimental object. By substituting the Denavit-Hartenberg parameters of the robotic arm into the \(_{6}^{0}Q'\) expression, the construction of the forward kinematics model of the experimental object can be completed. Due to the output of image feature bit velocity information in the RAVS system, this study calculates the Jacobian matrix between the end velocity and different joint speeds based on the above model. Firstly, the two points on the connecting rod are j and k, corresponding to linear velocities \({v_j}\) and \({v_k}\). The position of k relative to j is set as \({S_{j,k}}\), and the angular velocity of the connecting rod during rotation is \({\omega _j}\). The relationship between velocity and \({\omega _j}\), as well as the six dimensional velocity vector relationship, are shown in Eq. (20).

In Eq. (20), \({V_j}\) and \({W_j}\) are the linear velocity and angular velocity of connecting rod point j. \(\left[ {{S_{j,k}} \times } \right]\) is a cross product matrix. For the joints of the robotic arm, the calculation of \({V_j}\) and \({W_j}\), as well as the expression of the end velocity vector relationship, are shown in Eq. (21).

In Eq. (21), \({\beta _j}\) and \({r_j}\) are the unit vectors of rotation speed and rotation direction of joint j. Due to the influence of joint rotation on the end velocity mentioned above, the coefficient matrix can be set as \({G_j}\), and the following relationship can be obtained, as shown in Eq. (22).

In Eq. (22), the end velocity can be regarded as the sum of the end velocities of different axes, and the end Cartesian velocity \({v_d}\) is shown in Eq. (23).

In Eq. (23), \(G\left( r \right)\) and \(\dot {\theta }\) are the Jacobian matrix and rotational speed of the experimental object. In practical applications, the inverse problem of \(G\left( r \right)\) is the joint velocity required to achieve \({v_d}\). If \(G\left( r \right)\) is a square matrix and not singular, then transposition can be performed to obtain Eq. (24).

Based on the above, the \(G\left( r \right)\) of the robotic arm can be obtained. In the RAVS system, the image quality obtained through the camera has a significant impact on operational stability and image processing methods, and the correction of camera intrinsic parameters is beneficial for improving image quality and accuracy27,28. This study chooses to conduct calibration experiments on the internal participation distortion technique. The camera calibration experiment is as follows. The first is to prepare a 9*6 chessboard calibration board and select a grid with a side length of 20 cm for measurement and recording according to actual needs. It is necessary to ensure that the camera is working properly, the lens is clean, and there are no scratches or stains. At the same time, it is needed to choose an environment with uniform light and no direct sunlight to avoid difficult recognition of corners on the calibration board due to uneven light29,30,31. During each shooting, the calibration board should cover different areas of the camera’s field of view, and try to distribute the corners of the calibration board in various parts of the image. Due to the fact that the camera speed output in the RAVS system needs to be converted into the end velocity of the experimental object, it is necessary to convert it through the positional relationship between the camera and the experimental object. This study introduces Tsai’s two-step calibration method to obtain corresponding positional relationships. The schematic diagram of hand eye calibration is shown in Fig. 6.

Schematic diagram of hand eye calibration (Icon source: https://www.iconfinder.com/icons/3069183/cam_camera_device_media_photo_video_icon).

In Fig. 6, the position of the base Coordinate System and the calibration plate is fixed, so their relative positions remain unchanged. The position relationship of the calibration board relative to the camera can be obtained using external image features of the camera. The position of the camera relative to the end of the experimental object is calculated as shown in Eq. (25).

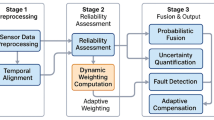

In Eq. (25), \(_{{cam}}^{{end}}R\) is the rotational component between the Camera Coordinate System and the Robotic Arm Coordinate System. In summary, the calibration of the RAVS system and the overall framework of the RAVS system can be completed, as shown in Fig. 7.

Overall framework diagram of RAVS system.

In Fig. 7, the user inputs the target pose through the control interface layer, which receives visual feature errors in real-time and outputs joint velocity commands; The instruction is converted into EtherCAT servo current by the driver layer to drive the KUKA-KR16 robotic arm. The arm end drives the camera to capture the original image and sends it to the BFS Canny IED algorithm layer through USB 3.2; The algorithm layer completes Gaussian filtering, BFS edge connection, and Harris corner detection in parallel on the GPU. The generated edge map and corner coordinates are returned to the control interface layer through shared memory for error calculation, forming a 110 FPS visual servo closed-loop.

Analysis of RAVS system results based on BFS-Canny-IED algorithm

To verify the feasibility of the proposed RAVS system, this study first tests the performance of the BFS-Canny-IED algorithm in the basic part, and then analyzes the overall processing effect of the RAVS system.

Performance analysis based on BFS-Canny-IED algorithm

To test the performance of the BFS Canny IED algorithm, this study uses software Matlab for simulation. And the experimental platform consists of KUKA KR16 R1420 six axis industrial robot, FLIR Blackfly S USB3.2 camera, and NVIDIA RTX 3080 GPU. The robot has a payload of 16 kg and a repeat positioning accuracy of 0.04 mm. The camera is 2.3 MPixel, with a global shutter speed of up to 110 FPS and a lens size of 12 mm F1.4; GPU memory 10 GB. The camera is connected to the industrial computer via USB 3.2 Gen 2, and the robotic arm communicates with the industrial computer through a 1 kHz EtherCAT bus. The experimental site is 4 m by 3 m, with a 4000 K diffuse LED strip on the top and a gray light absorbing screen on the background to avoid direct and reflective light. The dataset selection is the Berkeley Segmentation Dataset 500 (BSD500) provided by the University of California, Berkeley. It is a standard test set for image segmentation, containing 500 natural images and manually annotated segmentation information. This study selects commonly used metrics for edge image detection, namely Accuracy (AY), Recall (RL), F1 value, Speed Up (SU), Edge Points (EP), Number of Four Connected Domains (NFCD), and Eight Connected Domains (ECD), for evaluation. The continuity of ECD/EP and image edges is related, and the smaller the corresponding value, the lower the continuity. ECD/NFCD is related to the single edge response of image edges, and the smaller the corresponding value, the better the representation method meets the requirements of single edge response. Table 1 shows the specific experimental parameters.

This study first randomly selects three different types of images for testing, labeled as Image 1 to Image 3. The size of the first two images is 256*256, and the size of the last image is 512*512. The current mainstream threshold calculation methods are compared, namely Fixed Threshold (FT) and Adaptive Threshold (AT). The performance comparison of threshold calculation methods for different image segmentation is shown in Fig. 8.

Performance comparison of threshold calculation methods for different image segmentation (Image 1 Source from: https://openxlab.org.cn/datasets/OpenDataLab/BSDS500/preview/main?offset=32; Image 2 Source from: https://openxlab.org.cn/datasets/OpenDataLab/BSDS500/preview/main?offset=16; Image 3 Source from: https://openxlab.org.cn/datasets/OpenDataLab/BSDS500/preview/main?offset=6).

Figure 8a shows the image for testing. Figure 8b–d correspond to the performance comparison of different methods in Images 1–3. Among different types of images, the research method obtains the lowest EP value, while the FT method obtains the highest EP value. In the comparison of ECD/EP, the BFS-Canny-IED algorithm is relatively higher, with an average value of 0.01927. Among the ECD/NFCD results of different image types, the research method has the lowest corresponding indicator value. The above results may be due to the fact that the FT method is relatively simple and difficult to adapt to local changes in the image, while the AT method is suitable for selecting global thresholds but more sensitive to noise. In addition, the size of the image will have an impact on the effectiveness of edge detection to a certain extent. The higher the resolution of the image, the more the number of edge points will increase significantly. This indicates that the research method can provide better IED performance, especially when dealing with high levels of noise or significant changes in image edge density. To further test the performance of edge extraction algorithms, this study compares commonly used algorithms, namely Edge Detection Based on Pyramid Structure (EDPS), Improved of Beetle Optimization Algorithm Canny (IBOA-Canny), and Kirsch Combined High and Low Dual Thresholds (KCHLDT). This study conducts performance testing on the BSD dataset and obtained Fig. 9.

Performance results of different image edge detection algorithms.

Figure 9a–c show the variation curves of AY, RL, and F1 values for different image detection algorithms. With the continuous growth of data volume, the performance of BFS-Canny-IED algorithm is still superior to other IED algorithms throughout the process, and the AY, RL, and F1 values exceed 95%, 86%, and 90%. The performance of the IBOA-Canny algorithm is relatively good, with the average values of AY, RL, and F1 corresponding to 92.37%, 83.45%, and 86.74%. The EDPS algorithm performs the worst on IEDs, with average AY, RL, and F1 values of 81.36%, 71.63%, and 75.84%. This indicates that the research method performs well in datasets with various types of images, laying a solid foundation for the subsequent application of RAVS systems. To further analyze the acceleration performance of the research method, the following four image sizes are set for experiments, namely 256*256, 512*512, 1024*960, and 4096*2160, denoted as Sizes 1–4. In addition, the detection time of each parallel part is analyzed, and Table 2 is obtained.

In Table 2, the computational speed of the research method is significantly improved under different image sizes, with the SU index of Size 4 category showing the most significant improvement at 25 times. This is because studying separable convolution and constant memory can effectively optimize the computational complexity of convolution calculations. In the comparison of detection time performance between serial and parallel processing methods, under Size 4, the computational efficiency of parallel processing is 8.56 times higher than that of serial processing, and a running speed of 110FPS can be achieved. In the comparison of computational efficiency among different IED algorithms, the average running times of BFS-Canny-IED, IBOA-Canny, KCHLDT, and EDPS are 30.28ms, 34.4ms, 44.95ms, and 47.68ms.

Analysis of results based on RAVS system

To study the effectiveness of RAVS system in practical applications, different application environments are set up. In environment A, the research system needs to perform visual servoing tasks with long motion distances, while rotating 13.65° along the Z-axis from the starting point to the desired feature point coordinates. The distance between the target position and the starting state is the same as the expected state, which is 0.2 m. Environment B assigns tasks with moderate movement distance and sets the rotation angle to 33.88° to better demonstrate the effectiveness of the research system in executing tasks with larger rotation angles. Environment C sets the expected position at the edge of the image to explore whether the research system can reduce redundant motion. The six joints in the robotic arm are denoted as G1 ~ G6. The application effect of RAVS system in environment A is shown in Fig. 10.

Application effect of RAVS system in environment A.

Figure 10a,b show the joint angles and states of the robotic arm in the RAVS system. The RAVS system exhibits excellent performance in both the initial stage and the stage near the desired position during the execution of visual servoing tasks. In addition, all error state change curves gradually decrease and approach 0 with the increase of time. This indicates that the research system can effectively reduce errors and improve the accuracy and stability of the RAVS system in practical applications. The application effect of RAVS system in environment B is shown in Fig. 11.

Application effect of RAVS system in environment B.

Figure 11a,b correspond to the joint angle and state results of the research system under environment B. The proposed RAVS system dynamically adjusts towards the desired position uniformly, and the corresponding image feature points are concentrated around the straight line between the starting position and the desired position. The above analysis indicates that the redundant motion of image feature points rapidly reduces over a large range, and the state overshoot is zero, resulting in a significant improvement in convergence speed. The variation curve of the robotic arm joint angle is also smoother. The application effect of RAVS system in environment C is shown in Fig. 12.

Application effect of RAVS system in environment C.

Figure 12a,b show the joint angles and state results of the RAVS system under environment C. The research system is capable of quickly and accurately directing vital signs at large angles during the execution of high difficulty visual servoing tasks, and has successfully completed the tasks. In this complex application environment, the state overshoot of the research method is still effectively suppressed and can reach the endpoint in a straight line like manner. To further explore the tracking performance of research methods in practical applications, this study uses conventional VSS to investigate the tracking performance of low-speed images, as shown in Fig. 13.

Comparison of tracking effects of different VSSs in practical applications.

Figure 13a,b show the comparison of camera and target motion trajectories and error variation curves in different VSS. Conventional VSS has poor performance in dynamic target tracking tasks because it does not consider the motion of the target object. At the same time, the camera cannot obtain real-time motion information of the target object at a fast speed, ultimately resulting in tracking errors that are difficult to converge. The designed RAVS system, due to the BFS-Canny-IED algorithm effectively improving the efficiency of image processing, can converge tracking errors to a smaller range and greatly improve dynamic tracking performance.

Conclusion

RAVS system is an important research direction in the field of robotics, which uses visual information as feedback to perform non-contact measurements of the environment, which can improve the flexibility and accuracy of robot systems. However, the speed and stability of image processing are currently key factors affecting visual servoing performance. Therefore, this study constructed a RAVS system and designed a high-speed extraction method based on the BFS-Canny-IED algorithm. In the experiment, the computational speed of the research method was significantly improved under different image sizes, and the SU index of size 4 category showed the most significant improvement at 25 times. In the comparison of detection time performance between serial and parallel processing methods, under size 4, the computational efficiency of parallel processing was 8.56 times higher than that of serial processing, and a running speed of 110FPS could be achieved. In different application environments, the RAVS system could effectively suppress overshoot and accurately achieve target tracking. In summary, the research method can process images more efficiently and stably, effectively improving the performance and applicability of VSS. However, there are still certain shortcomings in this study. The research method implements high-speed image processing of RAVS system through GPU, but its power consumption is high when performing large-scale parallel computing, which is a huge challenge for embedded devices that run for a long time. Therefore, in future research, hardware acceleration can be achieved through multi-core processors and field programmable gate arrays.

Data availability

DATA AVAILABILITYThe datasets used and/or analysed during the current study available from the corresponding author on reasonable request.

References

Mei, W., Zheng, Y. & Gu, Y. Design of intelligent 3D collaborative manufacturing platform for non-holonomic mobile industrial robots based on improved binocular vision. Int. J. Intell. Robot Appl. 7(4), 740–751. https://doi.org/10.1007/s41315-023-00293-z (2023).

Wang, D., Yang, J., Huang, S. & Yang, J. Research and development of a closed-loop inspection system for cartesian robots based on a self-diagnosis model. Exp. Tech. Manage. 41(9), 131–137. https://doi.org/10.16791/j.cnki.sjg.2024.09.018 (2024).

Chen, G. et al. Autonomous gait switching method and experiments of a hexapod walking robot for Mars environment with multiple terrains. Intell. Serv. Robot. 17(3), 533–553. https://doi.org/10.1007/s11370-023-00508-z (2024).

Hua, D. et al. Correction to: A new industrially magnetic capsule medrobot integrated with smart motion controller. Int. J. Adv. Manuf. Technol. 133(9–10). https://doi.org/10.1007/s00170-024-14083-9 (4579, 2024).

Keshvarparast, A., Battini, D., Battaia, O. & Pirayesh, A. Collaborative robots in manufacturing and assembly systems: literature review and future research agenda. J. Intell. Manuf. 35(5), 2065–2118. https://doi.org/10.1007/s10845-023-02137-w (2024).

Liu, K., Wang, C., Han, X., Liu, Y. J. & Chen, B. Generalized robot vision-language model via linguistic foreground-aware contrast. Int. J. Comput. Vis. 133(6), 3481–3518. https://doi.org/10.1007/s11263-024-02340-z (2025).

Zhang, B. et al. Visual-guided hierarchical iterative fusion for multi-modal video action recognition. Pattern Recognit. Lett. 186, 213–220. https://doi.org/10.1016/j.patrec.2024.10.003 (2024).

Cong, V. D. Visual servoing control of 4-DOF palletizing robotic arm for vision based sorting robot system. Int. J. Interact. Des. Manuf. 17(2), 717–728. https://doi.org/10.1007/s12008-023-01264-1 (2023).

Matsuda, Y., Sato, Y. & Egashira, T. S. G. Control system for a mobile robot with object grasping arm by combining manual operation with visual servoing. Int. J. Innov. Comput. Inf. Control. 17(6), 2081–2092. https://doi.org/10.24507/ijicic.17.06.2081 (2021).

Li, J. et al. Adaptive visual servoing for the robot manipulator with extreme learning machine and reinforcement learning. Asian J. Control. 26(1), 280–296. https://doi.org/10.1002/asjc.3208 (2024).

Xiao, H. & Chen, X. Robotic target following with slow and delayed visual feedback. Int. J. Intell. Robot Appl. 4(4), 378–389. https://doi.org/10.1007/s41315-020-00151-2 (2020).

Saito, H. & Hoshino, S. Background Subtraction using template matching with color difference-based edge images for Spatial change detection by patrolling robots. J. Robot Soc. Jpn. 41(1), 72–81. https://doi.org/10.7210/jrsj.41.72 (2023).

Wang, S. T., Li, I. H. & Wang, W. Y. Human action recognition of autonomous mobile robot using Edge-AI. IEEE Sens. J. 23(6), 1671–1682. https://doi.org/10.1109/JSEN.2022.3225158 (2023).

Naji, O. A. A. M., Shah, H. N. M., Anwar, N. & Johan, N. F. Square groove detection based on förstner with canny edge operator using laser vision sensor. Int. J. Adv. Manuf. Technol. 125(5–6), 2885–2894. https://doi.org/10.1007/s00170-023-10862-y (2023).

Hasanvand, M., Nooshyar, M., Moharamkhani, E. & Selyari, A. Machine learning methodology for identifying vehicles using image processing. Artif. Intell. Appl. 1(3), 170–178. https://doi.org/10.47852/bonviewAIA3202833 (2023).

Bhosle, K. & Musande, V. Evaluation of deep learning CNN model for recognition of devanagari digit. Artif. Intell. Appl. 1(2), 114–118. https://doi.org/10.47852/bonviewAIA3202441 (2023).

Engel-Rodriguez, A. et al. A role for laparoscopy in the age of robotics: A retrospective cohort study of perioperative outcomes between 2D laparoscopic radical prostatectomy vs 3DHD laparoscopic radical prostatectomy. World J. Urol. 41(2), 443–448. https://doi.org/10.1007/s00345-022-04276-w (2023).

Yang, M., Sun, F. & Hu, X. Knitting from nature: Self-sensing soft robotics enabled by all-in-one knit architectures. ACS Appl. Mater. Interfaces. 15(37), 44294–44304. https://doi.org/10.1021/acsami.3c09029 (2023).

Wang, H., Xia, H. & Yang, W. Improving the performance of poly(caprolactone)-cellulose acetate-tannic acid tubular scaffolds by mussel-inspired coating. Biomacromolecules 24(9), 4138–4147. https://doi.org/10.1021/acs.biomac.3c00493 (2023).

Tu, R. & Sodano, H. A. Highly stretchable printed poly(vinylidene fluoride) sensors through the formation of a continuous elastomer phase. ACS Appl. Mater. Interfaces. 15(20), 22320–22331. https://doi.org/10.1021/acsami.3c01168 (2023).

Cao, C., Boutilier, M. S. H. & Kim, S. Low-profile, large-range compressive strain sensing using micromanufactured CNT micropillar arrays. ACS Appl. Mater. Interfaces. 15(30), 38665–38673. https://doi.org/10.1021/acsami.3c06299 (2023).

Wang, C. et al. Task attention-based multimodal fusion and curriculum residual learning for context generalization in robotic assembly. Appl. Intell. 54(6), 4713–4735. https://doi.org/10.1007/s10489-024-05417-x (2024).

Kuo, P. H. et al. Intelligent optimization algorithms for control error compensation and task scheduling for a robotic arm. Int. J. Intell. Robot Appl. 8(2), 334–356. https://doi.org/10.1007/s41315-024-00328-z (2024).

Ding, D. W., Xu, F. Y., Huang, Z. R. & Jiang, G. P. Error compensation and path planning for sheet metal bending process based on improved modeling algorithms. Int. J. Adv. Manuf. Technol. 135(7), 3363–3377. https://doi.org/10.1007/s00170-024-14665-7 (2024).

Zenhari, S. Mhring, H. C. & Torbati, A. V. Comprehensive analysis of kinematic models based on the DH method and screw theory for a five-axis machine tool. Procedia CIRP 130, 1745–1751. https://doi.org/10.1016/j.procir.2024.10.310 (2024).

Cu, Y., Liu, S., Shu, Z., Lv, Z. & Li, L. Positioning error prediction and compensation for the multi-boom working mechanism of a drilling Jumbo. J. Zhejiang Univ. Sci. A. 26(1), 66–77. https://doi.org/10.1631/jzus.A2300594 (2025).

Li, C., Gao, F. & Chi, C. Z. J. Efficient and accurate parameter extraction for quantum-well DFB lasers: A comprehensive approach integrating multiple mathematical models. Opt. Express. 32(11), 19230–19241. https://doi.org/10.1364/OE.516299 (2024).

Song, T., Zhang, Y. & Zhu, X. X. Data-driven adaptive negative sequence current control method for PWM rectifier under unbalanced grid. IEEE Trans. Power Electron. 39(4), 4771–4780. https://doi.org/10.1109/TPEL.2024.3350205 (2024).

Mei, D., Luan, Y., Li, X. & Wu, X. Light field camera calibration and point spread function calculation based on differentiable ray tracing. Opt. Lett. 49(4), 965–968. https://doi.org/10.1364/OL.507898 (2024).

Yang, F., Zhao, Y. & Wang, X. Common pole-polar properties of central catadioptric sphere and line images used for camera calibration. Int. J. Comput. Vis. 131(1), 121–133. https://doi.org/10.1007/s11263-022-01696-4 (2023).

Liu, Y. et al. Comparison study of three camera calibration methods considering the calibration board quality and 3D measurement accuracy. Exp. Mech. 63(5), 289–307. https://doi.org/10.1007/s11340-022-00905-y (2023).

Author information

Authors and Affiliations

Contributions

Y.L.Y. processed the numerical attribute linear programming of communication big data, and the mutual information feature quantity of communication big data numerical attribute was extracted by the cloud extended distributed feature fitting method. Y.L.Y. and S.S.M. Combined with fuzzy C-means clustering and linear regression analysis, the statistical analysis of big data numerical attribute feature information was carried out, and the associated attribute sample set of communication big data numerical attribute cloud grid distribution was constructed. Y.L.Y. and S.S.M. did the experiments, recorded data, and created manuscripts. All authors read and approved the final manuscript.

Corresponding author

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Yan, Y., Mokri, S.S. Performance analysis of robotic arm visual servo system based on BFS-canny image edge detection algorithm. Sci Rep 15, 35355 (2025). https://doi.org/10.1038/s41598-025-19221-1

Received:

Accepted:

Published:

Version of record:

DOI: https://doi.org/10.1038/s41598-025-19221-1