Abstract

Multiple Cervical Length (CL) measurements are typically acquired throughout the course of twin pregnancy to detect the early stages of labour and identify pregnancies at a high risk of preterm delivery. This study uses Machine-Learning (ML) approaches to determine the optimal timing of repeated CL measurements when used for predicting spontaneous preterm birth (sPTB) in twin pregnancies. Serial CL measurements from ultrasounds performed between 16 and 28 weeks of gestation were retrospectively acquired from 2,095 patients carrying twin pregnancies. These measurements were used for creating several CL feature sets, which were subsequently evaluated for their utility in predicting PTB < 37, sPTB < 37, sPTB < 34, and sPTB < 32 weeks. The highest accuracies for predicting sPTB < 37, sPTB < 34, and sPTB < 32 were found for the Logistic Regression model, which performed at 58%, 63%, and 73%, respectively. Post-hoc analysis showed that using multiple CL measurements did not significantly improve the sPTB prediction accuracy, irrespective of the ML model. Specifically, a single CL measurement at 18–20 weeks of gestation was sufficient for predicting sPTB < 32 weeks with the same accuracy. Future work should expand patient cohorts by including early CL measurements and investigating the time between a CL exam and sPTB from a regression standpoint.

Similar content being viewed by others

Introduction

Preterm birth (PTB), defined as delivery occurring after 24 weeks and before 37 weeks of gestation, remains a significant global health concern, responsible for approximately 17% of infant-related deaths worldwide1,2. Among the various factors contributing to neonatal mortality and morbidity, twin pregnancies are particularly vulnerable, with PTB being a primary risk factor3,4. Recent data from the National Vital Statistics Reports for 2021 demonstrate the escalating prevalence of PTB, with an overall rate of 10.49%, marking a 4% increase from 2020 and the highest rate since 20075.

PTB is characterized by both gestational age at birth and the underlying cause of early delivery. Gestational age-based classifications encompass late preterm birth (34–37 weeks), moderate preterm birth (32–34 weeks), very preterm birth (28–32 weeks), and extreme preterm birth (< 28 weeks)4. Additionally, PTB can be classified based on its cause, distinguishing between spontaneous preterm birth (sPTB) and medically indicated preterm birth4. Spontaneous preterm birth occurs when preterm labor ensues with intact membranes or as a result of preterm premature rupture of membranes (PPROM). In contrast, medically indicated preterm birth involves early induction due to maternal or fetal complications4. Given the unpredictability of PTB onset, early and effective clinical intervention is imperative.

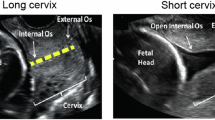

To facilitate risk-specific treatment, identifying factors associated with PTB is essential. Risk factors include maternal age, interpregnancy interval, previous PTB history, uterine or cervical abnormalities, maternal infections, low pre-pregnancy body mass index, maternal race, and socioeconomic factors6,7,8,9,10,11,12,13,14,15. Notably, cervical length (CL) emerges as a robust predictor of PTB in both singleton and twin gestations16,17,18,19,20,21,22,23,24,25,26,27,28. Transvaginal ultrasonography assessment of CL demonstrates high specificity (> 90%) and a strong positive likelihood ratio (> 5), particularly in high-risk pregnancies and twin gestations17. Thus, integrating these relevant risk factors can enhance the accuracy of predicting PTB for specific subgroups.

Current literature on PTB prediction varies by target demographic and methods of prediction and analysis8,19,26,29,30,31,32,33,34,35,36,37,38,39,40,41,42. The methods used for prediction depend on the input data provided to the machine learning algorithm. A review of machine learning approaches for sPTB diagnosis34 demonstrates the use of Decision Trees, Naïve Bayes, Logistic Regression (LR), Random Forest (RF), Support Vector Machines (SVM), Recurrent Neural Networks, Artificial Neural Networks, and Convolutional Neural Networks, depending on the input data type. For numerical electronic health data, Lee and Ahn34 show that Logistic Regression, Random Forest and Artificial Neural Networks perform best. Sun et al.41 developed multiple machine-learning models for predicting preterm birth using demographic factors, physical examinations, blood tests, urine test strips and gynecological examinations. From the collected data, they devised a method to average variables measured repeatedly based on the gestational age the variable was acquired. Sun concluded that the Random Forest algorithm obtained the highest accuracy of 81% from measurements up to 27 weeks of gestation. Tran et al.43 used a large cohort of electronic data of singleton pregnancies, from which they only included features < 25 weeks of gestation to avoid features indicating the predicted outcome already. They achieved a 0.81 Area Under the Receiver Operating Characteristic Curve (AUC-ROC) on predicting preterm birth of < 37 weeks using Random Gradient Boosting. Lastly, Melamed et al.39 hypothesized that CL measurements every 3–4 weeks improve the prognosis of preterm birth for asymptomatic pregnant patients with twin gestations. The researchers observed that a short cervix was associated with preterm birth before 32 weeks of gestation, with a short cervix between weeks 28 and 32 having the largest odds ratio correlation of 23.1 95% CI [8.3–6.4]39. Melamed also created a stepwise algorithm using cutoff CL values to classify PTB; using four CL periods, he obtained a higher AUC-ROC than that of a single CL measurement (0.917 vs. 0.613, p < 0.001)39. These studies do not differentiate well between the time and type of preterm birth, do not include CL data, use CL data but focus on single measurements, or focus on singleton pregnancies only.

This study aims to develop a predictive model specifically tailored to predict PTB and sPTB in twin pregnancies. It seeks to assess the significance of multiple CL measurements in predicting sPTB, determine the optimal timing for conducting CL exams, and compare the performance of different ML models in predicting sPTB considering various risk factors and pregnancy characteristics. By addressing these objectives, this study aims to provide valuable insights into the identification of sPTB risk and clinical management in twin gestations.

Methods

Dataset

The dataset utilized in this study was obtained from the medical records of patients with twin pregnancies attending the Twins Clinic at Sunnybrook Hospital, located in Toronto, Ontario, Canada. Data collection spanned from January 2012 to March 2022, encompassing a total of 2,095 patients with 2,104 pregnancies. Ethical approval for the utilization of these medical records was obtained from Sunnybrook Hospital research ethics board (REB #5462) and Toronto Metropolitan University research ethics board (REB #2022 − 363). All methods were performed in accordance with the relevant guidelines and regulations. The requirement for informed consent was waived by Sunnybrook Hospital research ethics board (REB #5462) and Toronto Metropolitan University research ethics board (REB #2022 − 363) because of the retrospective study design using fully anonymized health data, with minimal potential harm.

The collected medical information comprises a comprehensive array of variables, including maternal and fetal characteristics, previous medical history, preterm birth categorization, sonographic estimations, pregnancy complications, and neonatal outcomes. Notably, each patient underwent a series of CL measurements (“CL exams” or “exams”) at regular intervals, typically every 2–3 weeks, commencing on weeks 16–18 of gestation, and continuing until weeks 28–32.

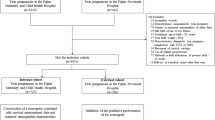

Preprocessing

The data preprocessing phase, depicted in Fig. 1, serves as the initial step in data utilization for predicting PTB within twin gestations. A comprehensive list of the primary features extracted from the medical charts is detailed in Supplementary Table S1 online. Subsequently, rigorous exclusion criteria were applied to refine the dataset. Instances meeting any of the following criteria were excluded: (1) missing CL measurements during gestation; (2) instances involving cervical cerclage; (3) cases with ambiguous pregnancy dating; (4) instances of indicated preterm delivery occurring before 34 weeks due to maternal or fetal indications; (5) instances with a birth weight of less than 500 g or missing birth weight data for either twin, or with a gestational age of less than 24 weeks at delivery; (6) occurrences of stillbirth for either fetus; (7) instances involving monoamniotic twins; (8) occurrences of monochorionic twins complicated by twin-to-twin transfusion syndrome (TTTS); (9) instances involving genetic or major structural anomalies in either twin; and (10) instances with exams conducted before 12 weeks or after 32 weeks of gestation. Following the application of these criteria, a total of 850 patients were deemed ineligible for inclusion in the dataset, having met the established exclusion criteria outlined in Fig. 1.

The distribution of labels is shown in Table 1. As gestational age increases, a greater number of pregnancies are represented in the cohort because PTB at an earlier gestational age are included in later ones. Notably, the number of exams per pregnancy throughout all four classifications remains similar, allowing for multiple CL features to be extracted and compared. A lack of unique values or features with low variance can lead to low predictive power and unnecessarily increase the dimensionality of the feature space. As such, features with < 5% distinct values in their distribution were removed.

Certain features within the dataset were excluded due to their lack of importance in predicting preterm birth. For instance, features deemed appropriate for data visualization purposes, such as “SamePreg” and “ExamNoInPreg,” (Supplementary Table S1) were removed from the dataset. Features containing post-birth information, such as “IsNICU,” were also excluded, although they may be relevant for future fetal outcome predictions. Moreover, the dataset was evaluated for outliers, with extreme values either rectified or eliminated to ensure data integrity and reliability. Supplementary Table S2 shows the number of missing values for each feature and indicates whether the feature was removed, retained, or processed using a combination of missing value imputation and outlier removal. Features with a high number of missing values, such as “CL with Fundal Pressure”, were removed entirely due to their low predictive power. In contrast, features with fewer missing values, such as “BMI Current”, had their missing values imputed using population averages. Since machine learning models cannot infer meaningful patterns from imputed values, this approach primarily serves to retain exam records, even if some features become less relevant.

The proposed pipeline for the prediction of preterm birth in twin gestations. The dataset size (n) represents the number of pregnancies in the dataset.

Feature engineering and feature selection

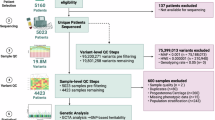

In our analysis of the impact of fetal biometry features, we applied various feature engineering techniques to optimize our approach. Due to the longitudinal nature of our dataset, where patients underwent between one and seventeen serial exams, not necessarily at the same time points (gestational weeks), it was crucial to develop effective methods for feature aggregation. Figure 2 illustrates the distribution of CL measurements, showing a skewed distribution, with most patients undergoing approximately five examinations.

Histogram depicting the number of CL exams each participant underwent.

To standardize the input length for machine learning models while accounting for the varying number of exams per patient, we explored several aggregation strategies. One approach involved binning CL measurements into specific gestational age ranges, as shown in Fig. 3. By binning exams into gestational age periods and ensuring a proportional representation within each bin, we aimed to reduce missing values. Table 2 presents the resulting classification datasets, each balancing different levels of temporal detail against the potential for missing data.

We included four distinct datasets to examine the impact of specific gestational age ranges on CL exams. These datasets are:

-

General: Uses an exam aggregation method that prevents missing values by consolidating multiple exams into a single feature. For instance, the dataset includes a minimum and maximum CL measurement regardless of whether a patient had one or seventeen exams.

-

Single: Aligns with the conventional approach of using only one CL exam per patient.

-

GA-16-28: Aggregates CL measurements into bins spanning a broader gestational age range (16 to 28 weeks).

-

GA-18-24: Focuses on a narrower gestational age window (18 to 24 weeks) for binning exams.

In datasets where CL measurements were binned, the values were averaged within their respective gestational age bins. Unlike the other datasets, the General dataset does not rely on gestational age ranges but instead integrates multiple exams into summary statistics, ensuring a consistent feature representation across patients.

The General dataset had no removal criteria (“None”, as shown in Table 2) since it accommodates all patients by deriving features regardless of the number of exams. In the Single dataset, missing values arose when patients did not have a CL exam during the 18-to-20-week gestational age window. For the GA-16-28 and GA-18-24 datasets, patients with only one exam were removed, while those with two or more exams had their missing binned CL values interpolated.

Histogram of the number of CL examinations performed within each gestation period in the dataset.

Features related to outcomes post-delivery, such as mode of delivery (CS), birth weight, and APGAR score at five minutes, were excluded from the classification algorithm to focus specifically on PTB prediction. Our primary predictive outcomes were PTB before 37 weeks of gestation (PTB37), spontaneous preterm birth before 37, 34 and 32 weeks of gestation (sPTB37, sPTB34 and sPTB32, respectively). To explore the relationship between CL and sPTB, we plotted the average CL measurements in binned gestational age ranges for normal twin pregnancies carried to term (no PTB), preterm birth that does not include spontaneous PTB (PTB), and sPTB populations (Fig. 4). A two-way ANOVA comparing PTB status and GA bin showed significant main effects of PTB status (p < 0.001) and GA bin (p < 0.001), as well as a significant interaction between PTB status and GA bin (p < 0.001). Statistical analysis indicates that the average CL of the sPTB diverges from that of the other two groups starting at 21 weeks of gestation and persists throughout the remainder of the pregnancy (p < 0.05). Statistically significant divergence between sPTB and no PTB only emerges at 15 weeks of gestation (p < 0.05).

Scatter plot of the average cervical length measurement for each binned gestational age period (every two weeks). Standard deviation error bars are shown for each average. Post-hoc Tukey Honestly Significant Difference is shown as significant (p < 0.05) for no PTB vs. sPTB (*), no PTB vs. PTB (†), and PTB vs. sPTB (‡).

Model selection

A range of machine learning classification methods were employed to identify the model which most accurately predicts preterm birth in twin pregnancies. We conducted preliminary tests comparing several classifiers, including Random Forest44Logistic Regression45Support Vector Machine46Extreme Gradient Boosting (XGBoost)47and K-Nearest Neighbours (KNN)48. These classifiers were selected based on their prior success in PTB and sPTB classification29,33. Upon evaluation, the RF and LR classifiers emerged as the top performers (Supplementary Table S3), thus warranting a detailed examination of their performance in our study.

The Random Forest (RF) algorithm44,49 operates as an ensemble-based classification or regression method built upon decision trees50. Decision trees (DT) are constructed hierarchically, employing conditional statements to partition the input feature set into smaller subsets, maximizing information gain at each node49. Each DT culminates in a final prediction at its terminal node49. To mitigate overfitting, the RF algorithm employs bootstrapping, randomly sampling the training set with replacement49. Additionally, it selects features randomly from the input feature set, reducing correlation among decision trees within the RF ensemble49. The collective class prediction of multiple trees is then determined through a majority vote49.

Logistic regression is an algorithm used for binary classification, predicting the probability of an outcome given a set of variables45. To predict binary outcomes, it applies a logistic (sigmoid) function to a linear combination of the input features45. Due to its simplicity and ease with small datasets, we test its usage here. All models were implemented using the Scikit-Learn Python library51.

Evaluation metrics

Various evaluation techniques are employed to assess the performance of a classification algorithm52. In our study, the algorithm predicts a binary outcome for each feature, forming a confusion matrix from which several metrics were derived to evaluate the model’s efficacy.

Accuracy (1) measures the proportion of correct predictions made by the model over the total number of samples52. While intuitive, accuracy alone does not distinguish between false positives (FP) and false negatives (FN), and it may yield optimistic results when dealing with class-imbalanced training sets biased towards the majority class.

Precision (2) indicates the proportion of correct predictions of a specific class among all predictions made for that class52. It provides insight into the Positive Predictive Value (PPV), reflecting the model’s accuracy in positive predictions52. Recall, or True Positive Rate (TPR) (3), quantifies the ratio of correct positive predictions to the total number of actual positive instances52. Conversely, the False Positive Rate (FPR) (4) illustrates the model’s propensity for incorrect positive predictions52.

A classifier with high PPV minimizes false positives, while high recall minimizes false negatives. Therefore, achieving high scores in both recall and precision is desirable to reduce the occurrences of FP and FN. The harmonic mean between precision and recall yields the F1 Score (5)53.

An alternative approach to evaluating classification models involves constructing a Receiver Operating Characteristic Curve (ROC). This curve depicts the TPR and FPR of the model across various threshold values for classification52. While a threshold of 50% is commonly used, the Area under the ROC Curve (AUC) is employed to discern any differences in performance resulting from varying thresholds.

Training implementation and optimization

Table 3 demonstrates the sample size for each dataset. To mitigate bias during model fitting, we applied random undersampling of the majority class within the training set to achieve class balance. Consistent with the trend in Table 1, spontaneous preterm birth before 32 weeks had the smallest sample size across all datasets. Amongst the datasets, the General dataset contained the largest cohort due to its lack of feature exclusions. In contrast, the Single dataset had the second-largest cohort, as shown in Fig. 3, while the 18-to-20-week bin included the second-largest cohort.

Subsequently, hyperparameters were selected using a 5-fold cross-validation grid search on the full dataset. Model performance was then estimated via 10-fold cross-validation using the optimized parameters. This approach was chosen so as to maintain a sufficiently large training set size for convergence, while reducing bias by using a separate CV for validation53. For statistical analysis, the aggregated CV folds were bootstrapped to obtain 95% confidence intervals. We conducted a one-way ANOVA for each label and metric to assess differences among the four datasets. Whenever the ANOVA indicated significance (p < 0.05), we performed pairwise independent t-tests with the Bonferroni correction to identify which dataset differed significantly.

Model training and optimization were carried out utilizing the scikit-learn platform54leveraging the computational power of an AMD Ryzen Threadripper 3960 × 24-Core Processor, operating at 3801 Mhz.

Code availability

Feature engineering and feature selection, along with the model selection, training, optimization and evaluation portion of this work, is archived on Zenodo here https://doi.org/10.5281/zenodo.15872009. Dataset cleaning is not available due to the specific nature of the dataset worked on.

Results & discussion

We created multiple datasets to assess which CL data aggregation method resulted in the highest predictive PTB performance using the RF and LR models. Figure 5 shows that for PTB < 37 weeks, performance of the Random Forest model remains relatively consistent across the four data aggregation strategies. Accuracy ranges between 59% and 62%, while precision slightly exceeds recall (between 61% and 66% vs. 49% to 53%). There was no statistically significant difference between any CL data aggregation for any of the performance metrics. For the remaining labels, LR outperformed RF, as summarized in Supplementary Table S3 and illustrated in Figs. 6, 7 and 8 that follow.

As shown in Fig. 6, which focuses on sPTB < 37 weeks, accuracy for the LR model generally ranges between 51% (Single) and 59% for the three other datasets. Recall remains similar throughout (50% to 53%), whereas precision shows a difference in datasets ranging between 51% and 60%. An ANOVA followed by pair-wise t-tests with Bonferroni correction for multiple comparisons yielded no statistical significance between the CL groups, indicating no clear advantage in PTB prediction accuracy irrespective of the CL data aggregation.

Test performance for PTB < 37 weeks for different dataset aggregation methods using Random Forest. On the x-axis are the various averaged metrics and their bootstrapped 95% confidence intervals. The four different data aggregation methods are colour-coded.

In Fig. 8 (sPTB < 32 weeks), the General dataset achieves an accuracy of 73.04% [66.70%–78.50%] and an F1 score of 70.41% [62.73%–77.25%]. The Single dataset shows lower performance, with an accuracy of 61.08% [51.06%–71.14%] and an F1 score of 62.18% [50.00%–73.47%]. The GA 16–28 dataset attains 70.21% [57.41%–81.48%] accuracy and 67.31% [51.22%–81.37%] F1, while the GA 18–24 dataset yields an accuracy of 63.27% [51.61%–75.79%] and an F1 score of 61.22% [44.90%–75.37%]. Despite the lack of statistically significant differences in our post-hoc analysis, the Single dataset consistently underperforms in AUC, accuracy, and precision. This indifference may be partly due to the sample size and lower variance in predictive power for this label (Table 3).

Test performance for sPTB < 37 weeks for different dataset aggregation methods using Logistic Regression. On the x-axis are the various averaged metrics and their bootstrapped 95% confidence intervals. The four different data aggregation methods are colour-coded.

Test performance for sPTB < 34 weeks for different dataset aggregation methods using Logistic Regression. On the x-axis are the various averaged metrics and their bootstrapped 95% confidence intervals. The four different data aggregation methods are colour-coded.

Test performance for sPTB < 32 weeks for different dataset aggregation methods using Logistic Regression. On the x-axis are the various averaged metrics and their bootstrapped 95% confidence intervals. The four different data aggregation methods are colour-coded.

Neither the Random Forest nor the Logistic Regression model showed statistically significant improvement in PTB prediction accuracy for multiple CL measurements versus a Single measurement made between 18 and 20 weeks of gestation. One of the study’s limitations was the insufficient number of CL measurements after 32 weeks (Fig. 3), which did not enable us to explore the prediction accuracy of sPTB at more specific GA ranges. For sPTB < 32, the Single dataset did not show a significant underperformance; however, we believe there may not have been enough serial measurements before 32 weeks to remove the considerable variance in measurements from our data. Figure 4 demonstrates that CL trajectories for those who deliver extremely early diverge more distinctly from those who do not, particularly in the later stages of gestation. These findings suggest that CL measurements better capture critical cervical changes, making them more clinically relevant for predicting neonatal outcomes. Importantly, significant intra- and inter-observer variability exists in CL measurements, suggesting that our predictive power for PTB could be enhanced by using accurate, repeatable CL measurements. Additionally, incorporating further clinical data (e.g., obstetric history or biomarkers) and improving the handling of missing values could potentially strengthen the model’s predictive power.

Our approach differs from earlier studies by focusing on twin pregnancies rather than singletons, differentiating PTB to various labels, incorporating multiple sequential CL measurements throughout later gestational stages, and utilizing RF and LR classifiers, both of which are well-suited for handling electronic health data. Building on the work of Melamed et al. [39], we enhance the feature set by capturing repeated CL measurements over multiple time points and labels. More importantly, we clearly distinguish between PTB and sPTB, whereas most prior studies do not. In our results, we demonstrate that a Single CL is meaningful enough compared to multiple CL measurements, with a predictive performance of 73% [66.7%–78.5%] accuracy using the General dataset, which incorporates both Single and multiple serial CL measurements as mixed features.

Despite limitations, such as the varying number of CL exams per patient and missing values, our findings highlight the importance of including CL measurements as a predictor for sPTB < 32 weeks. Future research should focus on expanding patient cohorts and investigating specific changes in CL at later stages of gestation. Furthermore, identifying the optimal number of exams to maintain predictive accuracy could help reduce the number of ultrasound exams a patient needs to undergo.

Conclusion

This study aimed to build a predictive model for PTB and sPTB in twin pregnancies by evaluating the utility of serial cervical length (CL) measurements, comparing machine learning models, and identifying optimal measurement windows. While predictive performance for sPTB < 34 and < 37 weeks was modest across SVM, RF, LR, KNN, and XGBoost algorithms, LR achieved notably higher performance for sPTB < 32 weeks. This suggests that early changes in CL may capture key physiological shifts preceding very preterm delivery. Importantly, we found that a single CL measurement between 18 and 20 weeks of gestation remains a clinically valuable predictor, achieving an AUC-ROC of 0.62. Our analysis further examined the impact of using serial CL measurements across gestational ages, identifying periods when additional measurements may provide marginal predictive benefit. Future work should focus on expanding patient cohorts, improving missing data handling, and determining the optimal frequency and timing of CL assessments to enhance prediction of PTB and sPTB.

Data availability

The datasets generated during and/or analyzed during the current study are not publicly available due to legal restrictions but are available from the corresponding author on reasonable request of a data sharing agreement.

References

Perin, J. et al. Global, regional, and National causes of under–5 mortality in 2000–19: an updated systematic analysis with implications for the sustainable development goals. Lancet Child. Adolesc. Health. 6, 106–115 (2022).

Practice Bulletin No. 171: management of preterm labor. Obstet. Gynecol. 128, e155–e164 (2016).

Garite, T. J., Clark, R. H., Elliott, J. P., Thorp, J. A. & the Pediatrix/Obstetrix Perinatal Research Group. Twins and triplets: the effect of plurality and growth on neonatal outcome compared with Singleton infants. Am. J. Obstet. Gynecol. 191, 700–707 (2004).

Goldenberg, R. L., Culhane, J. F., Iams, J. D. & Romero, R. Epidemiology and causes of preterm birth. Lancet 371, 75–84 (2008).

Osterman, M. J. K., Hamilton, B. E., Martin, J. A., Driscoll, A. K. & Valenzuela, C. P. Births: final data for 2021. Natl. Vital Stat. Rep. Cent. Dis. Control Prev. Natl. Cent. Health Stat. Natl. Vital Stat. Syst. 72, 1–53 (2023).

Abbasi, N., Barrett, J. & Melamed, N. Outcomes following rescue cerclage in twin pregnancies. J. Matern Fetal Neonatal Med. 31, 2195–2201 (2018).

Adeyemi, O. & Osoba, L. The role of phosphorylated insulin-like growth factor binding protein–1 in predicting pre-term labour in twin pregnancies. J. Obstet. Gynaecol. 30, 571–573 (2010).

Brubaker, S. G. & Gyamfi, C. Prediction and prevention of spontaneous preterm birth in twin gestations. Semin Perinatol. 36, 190–194 (2012).

Conde-Agudelo, A. & Romero, R. Cervicovaginal fetal fibronectin for the prediction of spontaneous preterm birth in multiple pregnancies: a systematic review and meta-analysis. J. Matern Fetal Neonatal Med. 23, 1365–1376 (2010).

Passini, R. et al. Brazilian multicentre study on preterm birth (EMIP): prevalence and factors associated with spontaneous preterm birth. PLoS ONE. 9, e109069 (2014).

Qiu, L. et al. Efficacy of ultrasound-indicated cerclage in twin pregnancies: a retrospective case-control study matched by cervical length. Am. J. Obstet. Gynecol. MFM 5, 100847 (2023).

Roman, A. et al. Cerclage in twin pregnancy with dilated cervix between 16 to 24 weeks of gestation: retrospective cohort study. Am. J. Obstet. Gynecol. 215, 98e1–98e11 (2016).

Romero, R. et al. Vaginal progesterone for preventing preterm birth and adverse perinatal outcomes in Singleton gestations with a short cervix: a meta-analysis of individual patient data. Am. J. Obstet. Gynecol. 218, 161–180 (2018).

Romero, R. et al. Vaginal progesterone decreases preterm birth and neonatal morbidity and mortality in women with a twin gestation and a short cervix: an updated meta-analysis of individual patient data. Ultrasound Obstet. Gynecol. 49, 303–314 (2017).

Zhang, Y. J. et al. Prevalence of preterm birth and risk factors associated with it at different gestational ages: A multicenter retrospective survey in China. Saudi Med. J. 43, 599–609 (2022).

Andersen, H. F., Nugent, C. E., Wanty, S. D. & Hayashi, R. H. Prediction of risk for preterm delivery by ultrasonographic measurement of cervical length. Am. J. Obstet. Gynecol. 163, 859–867 (1990).

Barros-Silva, J., Pedrosa, A. C. & Matias, A. Sonographic measurement of cervical length as a predictor of preterm delivery: a systematic review. J. Perinat. Med. 42, 281–293 (2014).

Berghella, V. et al. Cervical ultrasonography compared with manual examination as a predictor of preterm delivery. Am. J. Obstet. Gynecol. 177, 723–730 (1997).

Conde-Agudelo, A. & Romero, R. Prediction of preterm birth in twin gestations using biophysical and biochemical tests. Am. J. Obstet. Gynecol. 211, 583–595 (2014).

Crane, J., Vandenhof, M., Armson, B. & Liston, R. Transvaginal ultrasound in the prediction of preterm delivery: Singleton and twin gestations. Obstet. Gynecol. 90, 357–363 (1997).

Domin, C. M., Smith, E. J. & Terplan, M. Transvaginal ultrasonographic measurement of cervical length as a predictor of preterm birth: A systematic review with Meta-Analysis. Ultrasound Q. 26, 241–248 (2010).

Goldenberg, R. L. et al. The preterm prediction study: risk factors in twin gestations. Am. J. Obstet. Gynecol. 175, 1047–1053 (1996).

Heath, V. C. F., Southall, T. R., Souka, A. P., Elisseou, A. & Nicolaides, K. H. Cervical length at 23 weeks of gestation: prediction of spontaneous preterm delivery: cervical assessment and preterm delivery. Ultrasound Obstet. Gynecol. 12, 312–317 (1998).

Iams, J. D. et al. Cervical competence as a continuum: A study of ultrasonographic cervical length and obstetric performance. Am. J. Obstet. Gynecol. 172, 1097–1106 (1995).

Imseis, H. M., Albert, T. A. & Iams, J. D. Identifying twin gestations at low risk for preterm birth with a transvaginal ultrasonographic cervical measurement at 24 to 26 weeks’ gestation. Am. J. Obstet. Gynecol. 177, 1149–1155 (1997).

Okitsu, O., Mimura, T., Nakayama, T. & Aono, T. Early prediction of preterm delivery by transvaginal ultrasonography: prediction of preterm birth. Ultrasound Obstet. Gynecol. 2, 402–409 (1992).

Purisch, S., Schwartz, N., Romero, J., Elovitz, M. & Levine, L. Short cervical length remains a risk factor for preterm birth in multiparous women. Am. J. Obstet. Gynecol. 212. 876, S417–S418 (2015).

Riley, L., Frigoletto, F. D. & Benacerraf, B. R. The implications of sonographically identified cervical changes in patients not necessarily at risk for preterm birth. J. Ultrasound Med. 11, 75–79 (1992).

Włodarczyk, T. et al. Machine learning methods for preterm birth prediction: A review. Electronics 10, 586 (2021).

Koivu, A. & Sairanen, M. Predicting risk of stillbirth and preterm pregnancies with machine learning. Health Inf. Sci. Syst. 8, 14 (2020).

Park, S. et al. Predicting preterm birth through vaginal microbiota, cervical length, and WBC using a machine learning model. Front. Microbiol. 13, 912853 (2022).

Yang, Q. et al. Reporting and risk of bias of prediction models based on machine learning methods in preterm birth: A systematic review. Acta Obstet. Gynecol. Scand. 102, 7–14 (2023).

Sharifi-Heris, Z., Laitala, J., Airola, A., Rahmani, A. M. & Bender, M. Machine learning approach for preterm birth prediction using health records: systematic review. JMIR Med. Inf. 10, e33875 (2022).

Lee, K. S. & Ahn, K. H. Application of artificial intelligence in early diagnosis of spontaneous preterm labor and birth. Diagnostics 10, 733 (2020).

Weber, A. et al. Application of machine-learning to predict early spontaneous preterm birth among nulliparous non-Hispanic black and white women. Ann. Epidemiol. 28, 783–789e1 (2018).

Reicher, L., Fouks, Y. & Yogev, Y. Cervical assessment for predicting preterm Birth-Cervical length and beyond. J. Clin. Med. 10, 627 (2021).

Raja, R., Mukherjee, I. & Sarkar, B. K. A Machine Learning-Based Prediction Model for Preterm Birth in Rural India. J. Healthc. Eng. 1–11 (2021). (2021).

Gao, C. et al. Deep learning predicts extreme preterm birth from electronic health records. J. Biomed. Inf. 100, 103334 (2019).

Melamed, N. et al. Do serial measurements of cervical length improve the prediction of preterm birth in asymptomatic women with twin gestations? Am. J. Obstet. Gynecol. 215, 616e1–616e14 (2016).

Premru-Srsen, T., Verdenik, I., Steblovnik, L. & Ban-Frangez, H. Early prediction of spontaneous twin very preterm birth: a population based study 2002–2012. J. Matern Fetal Neonatal Med. 28, 1784–1789 (2015).

Sun, Q. et al. Machine Learning-Based Prediction Model of Preterm Birth Using Electronic Health Record. J. Healthc. Eng. 1–12 (2022). (2022).

Oskovi Kaplan, Z. A. & Ozgu-Erdinc, A. S. Prediction of Preterm Birth: Maternal Characteristics, Ultrasound Markers, and Biomarkers: An Updated Overview. J. Pregnancy 1–8 (2018). (2018).

Tran, T. et al. Preterm Birth Prediction: Deriving Stable and Interpretable Rules from High Dimensional Data. (2016). https://doi.org/10.48550/ARXIV.1607.08310

Breiman, L. Random forests. Mach. Learn. 45, 5–32 (2001).

Stoltzfus, J. C. Logistic regression: A brief primer. Acad. Emerg. Med. 18, 1099–1104 (2011).

Support Vector Machine. in. Integrated Series in Information Systems 207–235 (Springer US, 2016). https://doi.org/10.1007/978-1-4899-7641-3_9

Chen, T., Guestrin, C. & XGBoost A Scalable Tree Boosting System. (2016). https://doi.org/10.48550/ARXIV.1603.02754

Cover, T. & Hart, P. Nearest neighbor pattern classification. IEEE Trans. Inf. Theory. 13, 21–27 (1967).

Louppe, G. Understanding Random Forests: From Theory to Practice. (2014). https://doi.org/10.48550/ARXIV.1407.7502

Quinlan, J. R. Induction of decision trees. Mach. Learn. 1, 81–106 (1986).

Buitinck, L. et al. API design for machine learning software: experiences from the scikit-learn project. (2013). https://doi.org/10.48550/ARXIV.1309.0238

Hicks, S. A. et al. On evaluation metrics for medical applications of artificial intelligence. Sci. Rep. 12, 5979 (2022).

Raschka, S. & Model Evaluation Model selection, and algorithm selection in machine learning. (2018). https://doi.org/10.48550/ARXIV.1811.12808

Pedregosa, F. et al. Scikit-learn: machine learning in python. J. Mach. Learn. Res. 12, 2825–2830 (2011).

Acknowledgements

This work was supported by a MITACS Accelerate award #IT31929 (Sussman) and Toronto Metropolitan University. The authors would like to thank Isabel Arruda for assistance with data extraction and de-identification.

Author information

Authors and Affiliations

Contributions

A.C: Data curation, Formal Analysis, Methodology, Writing – original draft, Writing – review & editing, Software, Visualization. M.S: Data Curation, Validation, Software, Writing - review & editing, Visualization. N.M: Conceptualization, Funding acquisition, Writing – review & editing, Supervision. D.S: Conceptualization, Methodology, Funding acquisition, Project administration, Writing – original draft, Writing – review & editing, Resources, Supervision. All authors reviewed the manuscript.

Corresponding author

Ethics declarations

Competing interests

The authors declare no competing interests.

Conflict of interest

D. Sussman and N. Melamed report that financial support was provided by Mitacs (#IT31929). If there are other authors, they declare that they have no known competing financial interests or personal relationships that could have appeared to influence the work reported in this paper.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Supplementary Information

Below is the link to the electronic supplementary material.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Costanzo, A., Szymanowski, M., Melamed, N. et al. Prediction of preterm birth from cervical length measurements in twin pregnancies using machine learning. Sci Rep 15, 35844 (2025). https://doi.org/10.1038/s41598-025-19803-z

Received:

Accepted:

Published:

Version of record:

DOI: https://doi.org/10.1038/s41598-025-19803-z