Abstract

Because of solar power’s inherent intermittency and stochastic nature, accurate photovoltaic (PV) generation forecasting is critical for the planning and operation of PV-integrated power systems, and for maintaining efficient power dispatch and secure grid operation. Several PV forecasting methods based on machine learning algorithms (MLAs) have recently emerged. This paper presents machine learning methods for multi-label forecasting of PV and AC power delivered to the grid of a building-applied PV plant. Various algorithms representing multiple groups are evaluated, including linear regression (LR), polynomial regression (PR), neural networks (NN), deep learning (DL), gradient-boosted trees (GBT), random forests (RF), decision trees (DT), k-nearest neighbor (k-NN), and support vector machines (SVM). The models use sensor data collected over one year for solar irradiance, ambient temperature, wind speed, and cell temperature to predict PV and AC power outputs. Forecast performance over multiple time horizons is validated using four datasets: 24 h, one week, one month, and sudden variations. Models are evaluated based on performance metrics such as absolute error (AE), root mean square error (RMSE), normalized absolute error (NAE), relative error (RE), relative root square error (RRSE), and correlation coefficient (R). Results show that RF, DT, and DL consistently achieved the highest accuracy (R ≈ 99.8–100%) with minimal errors (RMSE within 0.014–0.022, AE within 0.008–0.015) across various forecasting scenarios. These models demonstrated strong adaptability and predictive reliability across short-term, medium-term, and long-term forecasts, making them the most effective choices for PV and AC power prediction. 
The accurate forecasts generated in this study have the potential to aid grid operators in forecasting PV power output variability and planning for integrating intermittent PV power into the grid. Understanding how PV generation will fluctuate given different meteorological conditions allows operators to ensure the consistent integration of this weather-dependent power source. Moreover, multi-label prediction of DC and AC power enables inverter efficiency optimization and grid integration analysis. The average actual and predicted efficiencies of the inverter are 0.96688 and 0.9638, providing valuable insights.

Introduction

Background

Renewable energy sources, especially solar, have become a primary focus in recent years because they can be used for extended periods without harming the environment. Emerging energy issues, such as unreliable supply and regional power shortages, could be mitigated by evolving renewable energy technologies. However, because the energy market is unpredictable and these sources are intermittent, relying on a diverse mix of renewables is crucial. Managing fluctuations from renewable energy smoothly remains a core challenge. Monitoring energy usage more precisely can boost overall energy system efficiency. Applying energy forecasting can help governments at all levels better develop, implement, and adjust energy policies1. Properly incorporating these resources into the existing infrastructure and managing them has become a major responsibility for the energy industry, particularly in areas heavily reliant on climate-sensitive energy supplies1. Output from these systems fluctuates with generation, storage capacity, and demand, endangering the reliability and stability of the overall energy system2. Managing and integrating distributed energy resources has created new prospects for developing novel business and market models leveraging the benefits of these technologies. Enabling technologies like blockchain, the Internet of Things, and especially artificial intelligence aim to facilitate these advances. Integrating PV arrays with sophisticated grid management using AI enables intelligent power flow optimization and reduced losses. This opens up opportunities for new business models, encouraging consumers to trade surplus energy and participate in demand response programs2. Numerous researchers have studied and advanced the ability to harness renewable energy sources such as solar, wind, and hydropower. 
It is expected that, in the near future, 100% of energy will come from these sustainable resources. Consequently, renewable technologies are expected to play a vital role in Egypt’s energy landscape, with solar energy being the most predominant renewable resource in the country3.

The variability in PV generation poses substantial challenges for managing the current power infrastructure. The fluctuating output from solar panels makes it difficult to match with electricity demand, creating issues in maintaining an immediate supply-demand balance. This becomes increasingly complex and costly as solar penetration grows, affecting decisions related to backup resources, scheduling, storage, and long-term planning. Integrating high levels of solar PV necessitates significant adjustments in power system management and electricity trading3,4. Forecasting solar power accurately is essential to addressing these challenges. Various techniques, including numerical weather prediction, image-based analysis, statistical methods, and hybrid neural network approaches, are used to estimate solar irradiance and PV output4. Emerging technologies like artificial intelligence (AI) and machine learning (ML) are improving solar forecasting by enhancing accuracy and efficiency. AI and ML help power grid operators make informed decisions, plan operations, and optimize energy market strategies, reducing costs associated with balancing intermittent solar power fluctuations5,6. Forecasting models fall into two main categories: indirect and direct. Indirect forecasting predicts solar irradiance using various methods and then estimates PV generation through simulation software7. Direct forecasting uses historical data to predict the PV power output. Both methods aim to improve PV power forecasting for grid integration. Forecasts can be categorized by their horizon: very short-term (seconds to minutes), short-term (1 day to several days), medium-term (1 week to 1 month), and long-term (1 month to 1 year). Each type serves different needs, from minute-by-minute fluctuations to seasonal and annual trends, guiding resource planning and investment2,7.

Literature review

Several prior studies developed PV power forecasting models using machine learning techniques. Dan Assouline et al.8 applied support vector machine (SVM) models based on the kernel technique to estimate rooftop PV potential across urban Switzerland. In9, Stefan Preda et al. focus on hybrid renewable energy power forecasting by applying the SVM algorithm using real data generated from sensors. By processing large datasets, their results validated that big data analysis enhances predictive accuracy for renewable energy resources. Muhammad W. et al.10 studied the accuracy, stability, and computational cost of two tree-based models, namely extra trees (ET) and random forest (RF), for hourly PV power prediction. As future work, the authors suggested developing models based on weather classification, including clear and cloudy days, and exploring big data analysis tools for training and deploying renewable energy forecast models. Rogério C. Costa11 utilized deep learning to predict residential PV system generation. Real data was employed to evaluate the forecast accuracy of long short-term memory (LSTM), convolutional, and hybrid convolutional-LSTM networks across horizons, compared against Prophet in terms of MAE, RMSE, and NRMSE error metrics. In12, Mario Tovar et al. proposed a five-layer CNN-LSTM model for PV power predictions using real data for a location in Mexico. The results showed that the hybrid NN model yields better predictions.

Maneesha P. et al.7 presented a forecast approach based on particle swarm optimization. Tests over four forecast resolutions and horizons revealed that the proposed method reduced the mean absolute scaled error (MASE) by 3.81%. Grzebyk et al.13 proposed a single machine-learning model to forecast the power output of a large distributed solar fleet containing 1,102 PV systems. When evaluating the hourly forecast accuracy of the proposed XGBoost model at daily resolution, it achieved a mean absolute error (MAE) of 0.877 kW and a mean absolute percentage error (MAPE) of 23%. Ferlito et al.14 compared various machine learning methods with differing levels of complexity to determine whether higher-complexity models provide better forecasting performance. Additionally, they evaluated the considered techniques using both online and offline training methodologies to identify the most effective approach. Ibtihal A. A. et al.15 presented an ensemble stacked machine learning model for hourly forecasts of two photovoltaic systems differing in size and age. The study benchmarked three machine learning algorithms (random forest, gradient boosting, and multiple linear regression) against a baseline linear regression model and the physical reference model for PV power prediction. Shadrack T. Asiedu et al.16 studied and compared the performance of single, ensemble, and hybrid machine learning models in predicting solar PV output power over four different time horizons (a day, a week, two weeks, and one month ahead). A summary of representative studies on PV power forecasting, including algorithms, dataset characteristics, and forecast horizons, is provided in Table 1.

Motivation

The increasing penetration of solar photovoltaic systems in power grids worldwide necessitates accurate forecasting capabilities to ensure grid stability and optimal energy management. Current grid operators face significant challenges in balancing supply and demand due to the intermittent nature of solar generation, which varies based on weather conditions, seasonal changes, and daily patterns. Effective forecasting models are crucial for enabling grid operators to make informed decisions regarding energy dispatch, storage utilization, and backup power activation.

Research gap

Despite the considerable advances demonstrated in the literature, previous research has predominantly focused on single-label PV power forecasting approaches that predict only one output parameter. This limitation overlooks the potential benefits of simultaneously predicting multiple power system parameters, which could provide enhanced value for comprehensive system analysis. Incorporating a modeling approach that includes both PV and AC power predictions can provide additional value for applications such as grid integration analysis, inverter optimization, energy management systems, and financial planning algorithms for building-applied photovoltaic (BAPV) systems.

Research objectives and contributions

This study addresses the identified research gap by developing and proposing multi-label forecasting models for predicting the PV array and AC power outputs of a BAPV plant. To analyse this multi-label forecasting framework, various machine learning algorithms (MLAs) spanning different model classes are implemented, including linear regression (LR) and polynomial regression (PR) models, artificial neural networks (ANNs) and deep learning (DL) models, random forests (RF), gradient-boosted trees (GBT), decision trees (DT), the k-nearest neighbour (k-NN) algorithm, and support vector machines (SVM). Meteorological variables such as solar irradiance (Srad, W/m2), ambient temperature (Tamb, °C), and wind speed (Ws, m/s), collected on-site using a weather station attached to the PV plant, along with cell temperature (Tcell, °C) readings, are used as inputs. The measured PV array and AC power output values obtained from the BAPV plant data loggers are used as labels for the ML model. All algorithms are trained and tested on a high-frequency dataset covering one full year (from October 2022 to September 2023) of plant performance data measured at 5-minute intervals, ensuring the representation of different seasonal conditions. Afterward, the ML models are validated for short-, medium-, and long-term forecasts across multiple time horizons: 1 day (for sunny and cloudy conditions), 1 week, and 1 month ahead. The models are also assessed for their ability to capture sudden variations in solar generation. For the prediction horizons in the year 2024 (one day, one week, and one month), each model was provided with actual measured weather inputs for the target periods, which were excluded from the training phase. The PV outputs for these periods were unknown to the models and were predicted solely from the unseen meteorological data. This setup allowed us to evaluate and compare the generalization performance of the different models under real measured weather conditions. 
All models are implemented and tested using the RapidMiner software environment.

Paper organization

The paper is structured into four main sections: Sect. 2 presents the BAPV plant description; Sect. 3 outlines the methodology, including a description of the environmental and power plant parameters, the data pre-processing and feature selection techniques employed, and the evaluation metrics used to assess MLA performance. A detailed analysis and discussion of the results are presented in Sect. 4. Finally, Sect. 5 summarizes the key findings and conclusions of the study.

BAPV power plant description

The grid-connected BAPV system is located in New Nozha, Cairo, Egypt, at 30° 7’ 49.44’’ N latitude and 31° 22’ 48’’ E longitude, facing south (zero azimuth) with a fixed tilt angle of 26° (Fig. 1a). The total capacity of the PV array system is 30.26 kW, arranged into two sub-arrays, each with a capacity of 15.13 kW. Each sub-array includes 34 (TallMax 445 W) panels arranged in two parallel strings of 17 modules in series (Fig. 1b). The mono-crystalline silicon PV module (TallMax-TSM-DE17M (II)) has a maximum power of 445 W and an efficiency of 20.4% under standard test conditions (STC) of 1000 W/m2 and 25 °C17.
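The capacity figures quoted above can be cross-checked with a few lines of arithmetic. The sketch below uses only the module rating and string layout given in the plant description; the variable names are illustrative.

```python
# Figures taken from the plant description above.
MODULE_POWER_W = 445        # module rating at STC
MODULES_PER_STRING = 17     # modules connected in series
STRINGS_PER_SUBARRAY = 2    # strings connected in parallel
SUBARRAYS = 2

modules_per_subarray = MODULES_PER_STRING * STRINGS_PER_SUBARRAY
subarray_kw = modules_per_subarray * MODULE_POWER_W / 1000
array_kw = SUBARRAYS * subarray_kw

print(modules_per_subarray, "modules per sub-array")
print(subarray_kw, "kW per sub-array")
print(array_kw, "kW total array capacity")
```

The result agrees with the stated 34 modules and 15.13 kW per sub-array and the 30.26 kW total.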

These PV modules are connected to a 27.6 kW three-phase inverter (ABB TRIO-27.6-TL-OUTD). Table 2 lists the electrical characteristics of the PV modules and the inverter. Meteorological data collected from an on-site weather station, alongside electrical parameter measurements, were systematically recorded using a Solar-Log Base 100 data logger. This data acquisition unit recorded measurements at regular intervals, functioning as the central monitoring device that interfaces the PV station and weather sensors. High temporal resolution data were gathered from the PV system and weather monitors, sampled at 5-minute intervals. The collected parameters included solar irradiance (Srad, W/m2), ambient temperature (Tamb, °C), wind speed (Ws, m/s), cell temperature (Tcell, °C), as well as PV array output power (PPV) and AC power fed to the grid (PAC).

This dataset is transferred from the on-site monitoring equipment to a PC installed at the ERI building for long-term storage and analysis. With measurements logged every 5 min, intra-hour and short-term variations in solar generation and atmospheric conditions could be accurately tracked.

Fig. 1. (a) PV array at ERI rooftop, (b) Schematic diagram of the PV plant.

Methodology

In this study, machine learning algorithms are employed to develop multi-label forecasts of the PV and AC power output for a BAPV plant. Several groups of machine learning algorithms and several datasets are used so that the accuracy and reliability of the forecasting models can be assessed. The proposed MLAs consist of neural-based methods (neural networks (NN) and deep learning (DL)), regression-based methods (linear regression (LR) and polynomial regression (PR)), tree-based methods (gradient-boosted trees (GBT), random forests (RF), and decision trees (DT)), lazy-based methods (k-NN), and finally support vector machines (SVM).

The models are implemented through the graphical user interface of RapidMiner Studio. RapidMiner is a data analytics software platform created in 2001 by Ralf Klinkenberg, Ingo Mierswa, and Simon Fischer18. It offers a range of operators and repositories for processes like data preparation, transformation, modeling, and evaluation. Specific functionality includes tools for process control and utilities, repository access, importing/exporting, data manipulation, and model building. This research utilized RapidMiner Studio version 10.1 Educational edition. Its toolset aims to support the end-to-end data science workflow, covering aspects such as parameter tuning, model training, validation, and performance evaluation.

Figure 2 shows the flowchart implemented in this study, comprising input, training, and forecasting phases. The input phase involves data collection, providing environmental inputs of solar irradiance, ambient temperature, wind speed, and cell temperature measured on-site. Recorded PV and AC power outputs are the data labels to be predicted. Data pre-processing, discussed in Sect. 3.2, is then conducted. This important step refines the inputs before modeling to improve accuracy. During training, machine learning algorithms are developed using the pre-processed, historical input-output datasets. Models learn the relationships between inputs and targets to perform forecasts.

The forecasting phase allows for predicting PV and AC power production into the future. As new, real-time data is collected daily, models are continuously trained and used to issue multi-horizon power generation forecasts. The dataset used in the study is one year in length, ranging from October 2022 to September 2023, representing all seasons of the year, including winter, with high fluctuation in solar radiation and prediction complexity.

After the raw data were pre-processed, feature selection techniques such as feature importance ranking and Pearson correlation were then applied to identify the most predictive independent variables to include in the models. The pre-processed data was divided into training, validation, and test subsets using split sampling with a 70:30 ratio for training and testing, respectively. Machine learning algorithms were trained on the training set to iteratively learn patterns in the data and optimize model parameters through multiple iterations. Concurrently, hyper-parameter tuning using the validation set helped configure aspects of model structure not learned during training, such as the number of hidden layers in an NN and the kernel number in SVM. Once fully specified, the trained machine learning models were evaluated on their predictive performance using previously unseen observations from the held-out test set, to objectively measure how well the models generalize to new data. This workflow helps develop robust and optimized statistical learning models for reliable predictive accuracy assessments.
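The 70:30 split described above can be sketched in a few lines. The study performs this step with RapidMiner's split sampling; the Python below is only an illustration, and the shuffled (rather than chronological) split, the seed, and the synthetic records are assumptions, not details taken from the paper.

```python
import random

def split_70_30(rows, train_fraction=0.7, seed=42):
    """Shuffle rows reproducibly and split them into train and test lists."""
    shuffled = list(rows)
    random.Random(seed).shuffle(shuffled)   # assumption: random, not chronological
    cut = int(round(train_fraction * len(shuffled)))
    return shuffled[:cut], shuffled[cut:]

# Hypothetical records: (Srad, Tamb, Ws, Tcell, P_PV, P_AC)
records = [(float(i), 25.0, 3.0, 30.0, 0.02 * i, 0.019 * i) for i in range(1000)]

train, test = split_70_30(records)
print(len(train), "training rows,", len(test), "test rows")
```

A validation subset for hyper-parameter tuning could be carved out of the training portion in the same way.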

As part of the supervised learning process, the models developed using each MLA experienced further refinement and performance evaluation on independent validation data. The internal parameters of the algorithms were fine-tuned to optimize predictive accuracy. A variety of error and correlation metrics were then computed to assess and compare forecast quality across MLAs. These included absolute error (AE), root mean square error (RMSE), normalized absolute error (NAE), relative error (RE), relative root square error (RRSE), and correlation coefficient (R).
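To make the metrics listed above concrete, the following sketch computes them for a pair of actual and predicted series. The formulas follow commonly used definitions of these metrics and are an assumption here, since the exact expressions used in the study are not given in this section; the function name and sample values are hypothetical.

```python
import math

def forecast_metrics(actual, predicted):
    """Return AE, RMSE, NAE, RE, RRSE and R for two equal-length sequences."""
    n = len(actual)
    mean_a = sum(actual) / n
    mean_p = sum(predicted) / n
    abs_err = [abs(a - p) for a, p in zip(actual, predicted)]
    sq_err = [(a - p) ** 2 for a, p in zip(actual, predicted)]

    ae = sum(abs_err) / n                                  # mean absolute error
    rmse = math.sqrt(sum(sq_err) / n)
    nae = sum(abs_err) / sum(abs(a - mean_a) for a in actual)
    # Relative error: zero actual values (e.g. night-time) are skipped.
    rel = [e / abs(a) for e, a in zip(abs_err, actual) if a != 0]
    re = sum(rel) / len(rel) if rel else float("nan")
    rrse = math.sqrt(sum(sq_err) / sum((a - mean_a) ** 2 for a in actual))

    cov = sum((a - mean_a) * (p - mean_p) for a, p in zip(actual, predicted))
    var_a = sum((a - mean_a) ** 2 for a in actual)
    var_p = sum((p - mean_p) ** 2 for p in predicted)
    r = cov / math.sqrt(var_a * var_p)
    return {"AE": ae, "RMSE": rmse, "NAE": nae, "RE": re, "RRSE": rrse, "R": r}

# Hypothetical normalized power values, for illustration only.
actual = [1.0, 2.0, 3.0, 4.0]
predicted = [1.1, 1.9, 3.2, 3.8]
for name, value in forecast_metrics(actual, predicted).items():
    print(f"{name}: {value:.4f}")
```

In a multi-label setting the function would simply be called once per label, for example once for PV power and once for AC power.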

Fig. 2. Flowchart of the proposed ML-based power forecasting methodology.

The workflow steps are summarized as follows:

a) The PV plant data are collected through the weather station and data logger. This includes all meteorological data and measured outputs of the PV plant over one year with a 5-minute resolution.

b) The data are prepared according to the required forecasting horizon (short, medium, or long-term).

c) RapidMiner software is used to implement the MLAs. The data are retrieved for training and validation.

d) Data filtering is performed to remove outlier values.

e) Feature selection identifies the important input features and the labels to predict.

f) A data normalization technique is chosen based on the ML modeling approach.

g) The pre-processed data are split into 70% for training and 30% for testing.

h) Multi-label machine learning models are applied to forecast AC power and PV power outputs.

i) A validation model is then applied to a new dataset based on multiple time horizons to verify the model.

j) The performance indicators of the model are evaluated and printed.

Environmental parameters and data collection

The relationship between meteorological factors and photovoltaic (PV) power output is location-dependent, dictated by geographical and climatic conditions. Consequently, the degree of correlation between weather inputs and PV generation differs between sites. Because forecasting model accuracy hinges on the input-output correlation structure19, a site-tailored approach is necessary. PV forecasting is a sophisticated process whose accuracy depends on factors such as the forecasting horizon, the forecast model inputs, and the performance estimation20.

Meteorological parameters play a pivotal role in PV power forecasting performance, as they directly influence generation levels. The most significant input is solar irradiance, followed by ambient temperature, as both are strongly correlated with PV output. The movement of clouds also determines sudden and abrupt changes in PV power production. This study selects all key meteorological factors that comprehensively represent site conditions as input features. Solar irradiance is the dominant parameter, given its direct energy relationship. Ambient temperature further impacts efficiency, especially at higher levels. Cell temperature and wind speed round out the input set. The performance of a prediction model is directly affected by the season of the year4; thus, seasonal effects necessitate balanced data representation during model training. As performance varies by weather patterns throughout the year, an equally distributed dataset covering all four seasons was used. This improves model generalization beyond any single season and avoids bias. Also, optimizing input selection is important to maximize accuracy while constraining computational overhead. In this case, all primary meteorological drivers are incorporated as inputs to provide a holistic view of influencing conditions impacting PV generation. Seasonal weighting also strengthens model robustness.

In this study, the meteorological data are measured from Oct 2022 to Sept 2023. This dataset comprises eight attributes: date, time, radiation, ambient temperature, wind speed, cell temperature, PV power, and AC power. Data were recorded via the data logger at five-minute intervals (more than 105 K data samples per parameter). The data logger provides the measurements at 5-minute, hourly, and daily resolutions. The initial dataset is split for training and testing (70%-30%). All meteorological parameters that affect PV production are selected as input features: solar irradiance as the dominant parameter, ambient temperature as the second factor, which has an impact at high temperatures, cell temperature, and finally wind speed. The PV power and AC power are selected as the targets for the multi-label machine learning model.

Figure 3 shows the variation in meteorological parameters and cell temperature over the selected year of the study. Solar irradiance ranges from 0 to 1000 W/m2, with a few readings up to 1200 W/m2 under clear weather conditions, especially in April and May. Ambient temperature varies from 10 to 44 °C, with minimum night temperatures near 10 °C and maximum daily temperatures approaching 44 °C. Cell temperature spans 10–68 °C. Wind speed fluctuates from 1 to 9 m/s, with repeating values at low levels, as Cairo typically has moderate to low wind speeds. Before modeling, this dataset goes through pre-processing to improve model accuracy. Pre-processing includes two main processes: filtration and data normalization. Outlier filtration removes anomalous readings, while data normalization rescales the variables to a common scale, positioning data for optimal training. The appropriate data normalization methodology was selected according to the machine learning approach. As shown in Fig. 3, environmental patterns exhibit substantial seasonal and daily fluctuations influencing PV generation levels.

Fig. 3. Full-year meteorological data recordings (a) Srad, (b) Tamb, (c) Ws, and (d) Tcell.

Feature selection

Feature selection is an important step to identify the most influential input variables and discard irrelevant or redundant features. This helps develop a suitable feature subset for the model while improving performance and interpretability. In this study, the Pearson correlation coefficient6 was used to evaluate the relationship between each input predictor variable and the target (label) variable, due to the inherent characteristics of PV data. R is a widely applied metric of linear correlation between two quantitative variables, with a value between − 1 and 1. It indicates both the direction and magnitude of association - whether an increase in one variable tends to be accompanied by an increase or decrease in the other. Mathematically, the R between a feature x and target y is defined as the covariance of the two variables divided by the product of their standard deviations21,22:

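\[R=\frac{\sum_{i=1}^{n}\left({x}_{i}-\bar{x}\right)\left({y}_{i}-\bar{y}\right)}{\sqrt{\sum_{i=1}^{n}{\left({x}_{i}-\bar{x}\right)}^{2}}\,\sqrt{\sum_{i=1}^{n}{\left({y}_{i}-\bar{y}\right)}^{2}}}\]

Where \({x}_{i}\) and \(\bar{x}\) are the values and the mean of the x-variable in a dataset, respectively, \({y}_{i}\) and \(\bar{y}\) are the values and the mean of the y-variable in a dataset, respectively, and \(n\) is the number of samples. In our case, \({x}_{i}\) represents input features (Srad, Tamb, Ws, and Tcell), while \({y}_{i}\) represents output labels to be predicted (PPV and PAC).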
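As a minimal sketch of this feature-screening step, the Pearson coefficient (covariance divided by the product of the standard deviations) can be computed directly. The function name and the sample readings below are hypothetical and serve only to illustrate the calculation.

```python
import math

def pearson_r(x, y):
    """Pearson correlation between two equal-length numeric sequences."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    cov = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    spread_x = math.sqrt(sum((xi - mean_x) ** 2 for xi in x))
    spread_y = math.sqrt(sum((yi - mean_y) ** 2 for yi in y))
    return cov / (spread_x * spread_y)

# Hypothetical readings, for illustration only.
p_pv = [0.0, 5.1, 10.3, 15.2, 19.8, 24.5]                 # PV power (label)
features = {
    "Srad": [0.0, 200.0, 400.0, 600.0, 800.0, 1000.0],
    "Tamb": [18.0, 21.0, 25.0, 28.0, 31.0, 33.0],
    "Ws": [4.0, 2.5, 6.0, 3.0, 5.5, 2.0],
}

# Rank the features by the strength of their linear association with the label.
ranking = sorted(features, key=lambda name: abs(pearson_r(features[name], p_pv)),
                 reverse=True)
for name in ranking:
    print(name, round(pearson_r(features[name], p_pv), 3))
```

Ranking by the absolute value keeps strongly negative correlations in view, since they are as informative as positive ones.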

Data pre-processing

Input solar power and meteorological data require pre-processing to enhance model accuracy and improve computational efficiency. Raw datasets may contain transient spikes and non-stationary components due to unpredictable weather. Issues like outliers, sparsity, and abnormal records are common and can interfere with modeling patterns. Pre-processing aims to refine datasets before ML model development. A series of filtering steps was applied to both photovoltaic power output and meteorological data. First, physically improbable values such as negative numbers, null readings due to sensor errors, or periods of missing sensor recordings were removed. Streamlining datasets aimed to reduce improper training problems and computational costs from irregularities, outliers, and irrelevant inputs. Providing full high-resolution datasets, including night-time null PV values, could negatively impact training and accuracy due to data sparsity. To address this, the night-time sampling frequency was reduced without a complete removal. Filters removed implausible outliers and days with extensive missing values.

In our study, a filtration strategy was applied to remove negative irradiance values, ensuring data consistency. Additionally, sparse night-time data, where PV power is null, was identified as a potential factor affecting model performance. To address this, the night-time dataset was reduced but not eliminated, as these data points represent the cyclic behavior of solar irradiation absence, which is essential for capturing realistic system dynamics. Furthermore, days with insufficient data records were filtered out to maintain dataset integrity and prevent inconsistencies during model training. By thoroughly cleaning and conditioning the datasets before model development, this strategy helped minimize potential training issues and computational burdens that incomplete or improper inputs may introduce. Pre-processing steps aimed to remove faulty inputs, address sparsity, isolate consistent patterns, and balance dataset properties for optimized machine learning. This facilitated proper learning of historical trends and enhanced forecasting performance.
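The filtration strategy described above can be sketched as three passes over the records. This is only an illustration: the record layout, the rule that treats zero PV power as night-time, the thinning factor, and the minimum number of records per day are all assumptions, since the study does not state its thresholds here.

```python
from collections import Counter

def clean_records(records, night_keep_every=6, min_records_per_day=10):
    """Filter faulty rows, drop sparse days, and thin night-time samples."""
    # 1) Remove rows with missing or negative readings.
    valid = [r for r in records
             if r["srad"] is not None and r["p_pv"] is not None
             and r["srad"] >= 0 and r["p_pv"] >= 0]

    # 2) Remove days that have too few valid records.
    per_day = Counter(r["day"] for r in valid)
    dense = [r for r in valid if per_day[r["day"]] >= min_records_per_day]

    # 3) Thin night-time rows (zero PV power) but do not eliminate them.
    cleaned, night_seen = [], 0
    for r in dense:
        if r["p_pv"] == 0:
            if night_seen % night_keep_every == 0:
                cleaned.append(r)
            night_seen += 1
        else:
            cleaned.append(r)
    return cleaned

# Hypothetical records: day "A" is well populated, day "B" is sparse.
records = (
    [{"day": "A", "srad": 0.0, "p_pv": 0.0} for _ in range(12)]
    + [{"day": "A", "srad": 500.0 + 10 * i, "p_pv": 12.0 + i} for i in range(4)]
    + [{"day": "A", "srad": -3.0, "p_pv": 0.0},
       {"day": "A", "srad": None, "p_pv": 1.0}]
    + [{"day": "B", "srad": 300.0, "p_pv": 7.0} for _ in range(2)]
)

cleaned = clean_records(records)
print(len(records), "raw rows ->", len(cleaned), "cleaned rows")
```

Counting records per day before thinning the night-time rows keeps the thinning step from pushing an otherwise complete day below the threshold.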

The second step after applying filtration is data normalization. This study implements two data normalization techniques: range transformation and z-transformation. The selection criterion was based on the proposed machine learning algorithm. Range transformation normalized data to a fixed range between 0 and 1. In the range normalization technique, the input data are rescaled to fall within a smaller common range from their original wider range. Specifically, all variables are scaled between 0 and 1. This approach helps reduce regression errors by restricting values to a narrow interval while maintaining correlations between input parameters. It prevents variables with inherently high values from dominating those with smaller scales. Normalizing data in this way further allows machine learning algorithms to treat each feature equally during training, improving both training speed and convergence. Variables standardized to the same scale can then be directly compared in terms of their relative impact.

Mathematically, the min-max scaler transforms data according to the formula23:

\[\widehat{{z}_{s}}=\frac{{z}_{i}-{z}_{min}}{{z}_{max}-{z}_{min}}\]

Where \(\widehat{{z}_{s}}\) is the scaled value, \({z}_{i}\) is the measured value, and \({z}_{max}\) and \({z}_{min}\) are the maximum and the minimum values of the dataset.
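A minimal sketch of range transformation to the interval from 0 to 1 is given below. The function name and the handling of a constant column are illustrative choices, not details from the study.

```python
def min_max_scale(values):
    """Rescale a sequence of numbers linearly onto the interval [0, 1]."""
    lowest, highest = min(values), max(values)
    if highest == lowest:
        # A constant column carries no information; map it to zeros (assumption).
        return [0.0 for _ in values]
    return [(v - lowest) / (highest - lowest) for v in values]

# Hypothetical irradiance readings in W/m2.
srad = [0.0, 250.0, 500.0, 1000.0]
print(min_max_scale(srad))  # [0.0, 0.25, 0.5, 1.0]
```

Each input variable would be scaled separately, so that irradiance in the hundreds and wind speed in single digits end up on the same footing.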

In this study, range normalization is performed with the min-max scaler, which maps each variable from its original range onto the interval from zero to one. Because every input then shares a common scale, no feature dominates the others purely through the magnitude of its values, and all inputs can be treated equally during training, which improves calculation speed and convergence. Range normalization is used for all models tested in this study except support vector machines.

SVM instead used z-transformation, a commonly used technique that standardizes each variable by subtracting its mean and dividing by its standard deviation, giving data with a mean of 0 and a standard deviation of 1. This suits the SVM algorithm, which relies on calculating distances between samples, and the z-score transformation provides more accurate predictions for SVMs than min-max normalization. The transformation preserves the original shape of the distribution while making values dimensionless and comparable on the same scale24.

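The z-score normalization formula applied to each value in the dataset is:

\[\widehat{{z}_{s}}=\frac{{z}_{i}-\mu}{\sigma}\]

Where \(\widehat{{z}_{s}}\) is the standardized value, \({z}_{i}\) is the measured value, \(\mu\) is the mean of the data, and \(\sigma\) is the standard deviation of the data.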
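The z-transformation can be sketched in the same style. The use of the population standard deviation (dividing by n) is an assumption; the study does not state which variant its software applies.

```python
import math

def z_transform(values):
    """Standardize values to zero mean and unit standard deviation."""
    n = len(values)
    mean = sum(values) / n
    # Population standard deviation; an assumption made for this sketch.
    std = math.sqrt(sum((v - mean) ** 2 for v in values) / n)
    if std == 0:
        raise ValueError("cannot standardize a constant column")
    return [(v - mean) / std for v in values]

# Hypothetical ambient temperatures in degrees Celsius.
tamb = [10.0, 20.0, 30.0, 40.0]
standardized = z_transform(tamb)
print([round(v, 3) for v in standardized])
```

Unlike range transformation, the output is not bounded, so unusually large readings remain visible as large standardized values.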

Machine learning algorithms

Function-based algorithms

Regression analysis is a statistical method used for numerical prediction. It quantifies the relationship between a dependent or target variable (i.e., the label attribute) and multiple independent variables (regular attributes)25,26.

There are various regression techniques; two are used in this research:

a. Linear regression (LR)

The mathematical representation of PV predicted power using LR is as follows:

Where Srad, Tamb, Ws, and Tcell are the input features, \(\:{\beta\:}_{0}\) is the model intercept, \(\:{\beta\:}_{1},\:{\beta\:}_{2},\:{\beta\:}_{3},\:{\beta\:}_{n}\) are the predictor coefficients, and \(\epsilon\) is the error term.
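As an illustration of how such a model can be fitted and applied, the sketch below estimates the intercept and one coefficient per feature by ordinary least squares and then evaluates the linear form β0 + Σ βk·xk. The order of the features (Srad, Tamb, Ws, Tcell), the synthetic samples, and the "true" coefficients are assumptions for demonstration only; the study itself used RapidMiner's linear regression operator.

```python
def predict_linear(betas, features):
    """Return beta_0 + sum_k beta_k * x_k for one sample."""
    return betas[0] + sum(b * x for b, x in zip(betas[1:], features))


def fit_linear_regression(rows, targets):
    """Ordinary least squares via the normal equations (X^T X) b = X^T y."""
    # Prepend a constant 1 so the first coefficient is the intercept beta_0.
    design = [[1.0] + list(r) for r in rows]
    n = len(design[0])
    # Augmented matrix [X^T X | X^T y].
    aug = [
        [sum(row[i] * row[j] for row in design) for j in range(n)]
        + [sum(row[i] * y for row, y in zip(design, targets))]
        for i in range(n)
    ]
    # Gauss-Jordan elimination with partial pivoting.
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(n):
            if r != col:
                factor = aug[r][col] / aug[col][col]
                aug[r] = [a - factor * b for a, b in zip(aug[r], aug[col])]
    return [aug[i][n] / aug[i][i] for i in range(n)]


# Synthetic, normalized samples in the assumed order (Srad, Tamb, Ws, Tcell).
rows = [
    (0.2, 0.5, 0.1, 0.3),
    (0.4, 0.6, 0.3, 0.5),
    (0.6, 0.4, 0.2, 0.7),
    (0.8, 0.7, 0.5, 0.6),
    (1.0, 0.3, 0.4, 0.9),
    (0.5, 0.9, 0.6, 0.2),
]
true_betas = [0.01, 0.9, -0.05, 0.02, -0.1]  # hypothetical coefficients
targets = [predict_linear(true_betas, r) for r in rows]

betas = fit_linear_regression(rows, targets)
print([round(b, 6) for b in betas])
```

Because the synthetic targets are noise-free, the fit should recover the hypothetical coefficients up to rounding error.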

b. Polynomial regression (PR)

Artificial neural network-based algorithms

Artificial neural networks (ANNs) are computational models inspired by biological neural circuits in the brain. An ANN contains interconnected units called neurons that process information via weighted links resembling synapses. Most commonly, ANNs are adaptive systems that can change their structure based on information flow during training. This enables them to model complex input-output relationships and discover hidden patterns in data. A feed-forward neural network is a basic ANN architecture where connections only transmit information in one direction, from input to output nodes, without cycles. Within this acyclic flow, data passes through one or more hidden layers of nodes that help extract higher-level features. During learning, feed-forward networks are presented with training examples, and weights are adjusted iteratively via back-propagation of error signals to minimize loss. This allows the network to gradually tune its synapse-like parameters until it can accurately map new inputs to predicted outputs29.

The back-propagation algorithm is a supervised learning technique for neural networks composed of two primary phases - propagation and weight updating. These phases operate through iterative training cycles. During propagation, input data is fed forward through the network to generate output predictions. The output values are then compared to true targets using an error function to quantify performance. Error signals derived from this analysis are then propagated backward through the network. This initiates the weight updating phase, where connection weights between nodes are adjusted in a direction that reduces the overall error. Repeated application of these two phases typically drives the network towards a stable state of minimal error, indicating it has learned the underlying relationships in the training data26.
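The two phases can be illustrated with a deliberately tiny network: one input, one sigmoid hidden neuron, and one linear output. The network size, learning rate, squared-error loss, and data points below are all assumptions chosen for readability, not the configuration used in this study.

```python
import math


def sigmoid(v):
    return 1.0 / (1.0 + math.exp(-v))


def train_step(params, samples, lr):
    """One training cycle: propagation, then weight update."""
    w1, b1, w2, b2 = params
    g_w1 = g_b1 = g_w2 = g_b2 = 0.0
    loss = 0.0
    for x, y in samples:
        # Propagation: feed the input forward to get a prediction.
        h = sigmoid(w1 * x + b1)
        y_hat = w2 * h + b2
        err = y_hat - y
        loss += 0.5 * err * err
        # Propagation: send the error signal backward (chain rule).
        g_w2 += err * h
        g_b2 += err
        d_hidden = err * w2 * h * (1.0 - h)
        g_w1 += d_hidden * x
        g_b1 += d_hidden
    n = len(samples)
    # Weight update: move each parameter against its mean gradient.
    new_params = (
        w1 - lr * g_w1 / n,
        b1 - lr * g_b1 / n,
        w2 - lr * g_w2 / n,
        b2 - lr * g_b2 / n,
    )
    return new_params, loss / n


# Hypothetical (normalized irradiance, normalized power) pairs.
samples = [(0.0, 0.0), (0.25, 0.2), (0.5, 0.45), (0.75, 0.7), (1.0, 0.9)]
params = (0.5, 0.0, 0.5, 0.0)
losses = []
for _ in range(200):
    params, loss = train_step(params, samples, lr=0.3)
    losses.append(loss)
print(losses[0], losses[-1])
```

The recorded loss is computed before each update, so comparing the first and last entries shows how far the repeated cycles have reduced the error.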

A multilayer perceptron (MLP) is a type of artificial neural network well-suited for classification and regression problems. It employs a feed-forward architecture with one or more hidden layers of nodes situated between the input and output layers. Nodes in adjacent layers are fully interconnected via weighted, one-directional connections whose weights are trained with back-propagation. Each node applies a nonlinear activation function, commonly the sigmoid, to introduce nonlinearity. This allows MLPs to learn complex patterns across large input spaces30,31. In this work, two NN-based algorithms are implemented:

a. Neural network

The ML model architecture used in this study is a feed-forward multi-layer perceptron NN trained with a back-propagation algorithm. Within this framework, a standard sigmoid activation function is applied to the nodes. To suit the range expected by the sigmoid function, all input attributes were normalized to the range −1 to 1 in a preprocessing step. The output node uses either a sigmoid or a linear activation function depending on the type of problem. In this work, a sigmoid output activation was used, the task being treated as forecasting/classifying a variable; a linear activation is more appropriate for numeric regression tasks where the exact target value is to be predicted32,33. The mathematical representation of PV predicted power using NN is as follows:

b. Deep learning

The deep learning model34,35,36 in this work is based on a multi-layer feed-forward artificial neural network that is trained with stochastic gradient descent using back-propagation. The Deep Learning algorithm in RapidMiner uses H2O optimization18. The network contains a large number of hidden layers consisting of neurons with tanh, rectifier, and maxout activation functions. The mathematical representation of PV predicted power using DL is as follows:

Where X is the input vector (Srad, Tamb, Ws, and Tcell), \(\:L\) is the total number of layers in the deep neural network, \(\:{\varvec{\upomega\:}}^{\left(i\right)}\) is the weight matrix for layer i, \(\:{\text{b}}^{\left(i\right)}\) is the bias vector for layer i, and \(\:{f}^{\left(i\right)}\) and \(\:{f}_{\text{out\:}}\) are the activation functions for layer i and the output layer, respectively.
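A layered forward pass consistent with these definitions can be sketched as below: each layer applies its activation to (weights · previous output + bias), and the last layer produces the power estimate. The 4-3-2-1 layout, the hand-picked weights, and the linear output activation are assumptions for illustration; they do not reproduce the H2O network trained in this work.

```python
import math


def relu(v):
    """Rectifier activation."""
    return max(0.0, v)


def dense(weights, biases, inputs, activation):
    """Compute activation(W @ inputs + b) for one layer."""
    return [
        activation(sum(w * x for w, x in zip(row, inputs)) + b)
        for row, b in zip(weights, biases)
    ]


def forward(layers, features):
    """Pass the features through every (W, b, f) triple in order."""
    a = list(features)
    for weights, biases, activation in layers:
        a = dense(weights, biases, a, activation)
    return a


# Hypothetical 4-3-2-1 network; weights are arbitrary, not trained values.
layers = [
    ([[0.5, 0.1, -0.1, 0.2],
      [0.3, -0.2, 0.0, 0.1],
      [-0.4, 0.2, 0.1, 0.0]], [0.0, 0.1, 0.2], math.tanh),
    ([[0.6, -0.3, 0.2],
      [0.1, 0.4, -0.5]], [0.0, 0.0], relu),
    ([[0.7, 0.3]], [0.05], lambda v: v),  # assumed linear output layer
]
x = [0.8, 0.4, 0.2, 0.6]  # normalized (Srad, Tamb, Ws, Tcell), assumed order
p_pv = forward(layers, x)[0]
print(p_pv)
```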

Trees-based algorithms

a. Decision tree

A decision tree is a tree-based model that uses a hierarchical approach to classify or estimate target variables based on a set of input features or independent variables. The model contains a root node, internal decision nodes, and leaf nodes18. At each internal node, the data is split using a decision rule based on the value of a single predictive variable. The split separates the data into two or more homogeneous sets to be directed to the next child nodes. New child nodes are recursively generated from parent nodes until a stopping criterion is reached, such as a perfect split or a predefined depth limit. Predictions are determined depending on the majority class (for classification) or average value (for regression) within each terminal leaf node. For regression problems, the goal is to reduce the error of predicting a numerical target variable in the most optimal way. The hierarchical structure allows decision trees to model complex relationships between features and uncover interaction effects that may improve prediction performance. The mathematical representation of PV predicted power using DT is as follows:

\[{P}_{PV}=\sum_{i}{c}_{i}\,I\left(\text{X}\in {R}_{i}\right)\]

Where \(\:{R}_{i}\) is the region of the feature space where the ith rule applies, \(\:{c}_{i}\) is the constant value predicted for the region \(\:{R}_{i}\), and \(\:I\left(\text{X}\in\:{R}_{i}\right)\) is an indicator function, which is 1 if \(\:\text{X}\) belongs to the region \(\:{R}_{i}\) and 0 otherwise.
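The piecewise-constant form of a regression tree can be sketched as a sum of region constants weighted by indicator functions. The three axis-aligned regions over two normalized features (Srad, Tcell) and their constants are hypothetical, chosen only to show the mechanics.

```python
INF = float("inf")


def tree_predict(regions, features):
    """Return sum_i c_i * I(X in R_i) for axis-aligned regions."""
    total = 0.0
    for bounds, c in regions:
        # Indicator: 1 if every feature lies inside the region's bounds.
        inside = all(lo <= x < hi for x, (lo, hi) in zip(features, bounds))
        total += c * (1.0 if inside else 0.0)
    return total


# Hypothetical leaves: each entry is ((Srad bounds, Tcell bounds), constant).
regions = [
    (((-INF, 0.3), (-INF, INF)), 0.10),  # low irradiance
    (((0.3, INF), (-INF, 0.5)), 0.60),   # high irradiance, cool cells
    (((0.3, INF), (0.5, INF)), 0.50),    # high irradiance, hot cells
]
print(tree_predict(regions, (0.8, 0.2)))
```

Because the regions of a tree do not overlap, exactly one indicator is non-zero for any input, so the sum simply selects that leaf's constant.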

b. Random forest

A random forest is an ensemble method that trains multiple decision trees on bootstrapped subsets of the original training data. The size of the ensemble is set by a hyper-parameter called the “number of trees”. During training, each tree node represents a rule for splitting the data based on the optimal values of a random subset of predictors. This splitting criterion aims to reduce errors in target value estimation.

New nodes are recursively added in this splitting manner until meeting the stopping criteria. Only a fraction of available features specified by the “subset ratio” is considered at each potential split point to minimize correlation between trees. Once fully grown, the forest combines the predictions of its constituent trees via averaging (regression). This introduces diversity that mitigates over-fitting to any single training sample or feature subset, resulting in an accurate and robust predictive model37,38,39,40.

The mathematical representation of PV predicted power using RF is as follows:

\[{P}_{PV}=\frac{1}{T}\sum_{t=1}^{T}{P}_{PV}^{\left(t\right)},\qquad {P}_{PV}^{\left(t\right)}=\sum_{i}{{c}_{i}}^{\left(t\right)}\,I\left(\text{X}\in {{R}_{i}}^{\left(t\right)}\right)\]

Where \(\:T\) is the total number of DTs in the RF and \(\:{P}_{PV}^{\left(t\right)}\) is the prediction from the tth DT, which is computed using the rules of that tree. \(\:{{c}_{i}}^{\left(t\right)}\) is the constant value predicted by the tth tree for the region \(\:{{R}_{i}}^{\left(t\right)}\), \(\:I\left(\text{X}\in\:{{R}_{i}}^{\left(t\right)}\right)\) is the indicator function for whether \(\:\text{X}\) belongs to the region \(\:{{R}_{i}}^{\left(t\right)}\), and \(\:{{R}_{i}}^{\left(t\right)}\) is region i of the feature space defined by the tth tree.
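The two ingredients described above, bootstrap resampling of the training rows and averaging of the per-tree predictions, can be sketched as follows. The three one-split "trees" are hypothetical stand-ins with hand-set thresholds; a real forest would grow each tree on its own bootstrap replicate with random feature subsets.

```python
import random


def bootstrap_sample(rows, rng):
    """Draw len(rows) rows with replacement (one bootstrap replicate)."""
    return rng.choices(rows, k=len(rows))


def forest_predict(trees, features):
    """Average the predictions of the T trees in the forest."""
    return sum(tree(features) for tree in trees) / len(trees)


rng = random.Random(0)   # fixed seed so the sketch is repeatable
rows = list(range(10))   # stand-in for ten training-row indices
replicate = bootstrap_sample(rows, rng)

# Hypothetical stumps over normalized (Srad, Tamb, Ws, Tcell), assumed order.
trees = [
    lambda x: 0.6 if x[0] >= 0.5 else 0.1,
    lambda x: 0.7 if x[0] >= 0.4 else 0.2,
    lambda x: 0.5 if x[3] < 0.8 else 0.45,
]
x = (0.8, 0.5, 0.3, 0.6)
p_pv = forest_predict(trees, x)
print(replicate, p_pv)
```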

c. Gradient-boosted trees

GBT is an ensemble learning technique that builds and combines weak prediction models sequentially to optimize predictive performance41. Like other boosted tree algorithms, it trains a series of decision tree models on adjusted versions of the training data. Specifically, trees are added one by one to minimize a differentiable loss function via gradient descent, providing a more structured and interpretable approach compared to other boosted tree techniques. This gradual, error-focused learning procedure improves the accuracy and controls the complexity of the trees to avoid over-fitting. While slower than a single model, the ensemble of tweaked decision trees combines to perform better than any single estimator42,43,44,45. The mathematical representation of PV predicted power using GBT is as follows:

Where \(\:M\) is the total number of trees in the model, \(\:{T}_{m}\left(\text{X}\right)\) is the prediction from the \(\:{m}^{th}\) decision tree, and \(\:\eta\:\) is the learning rate.
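The sequential, error-focused procedure can be sketched with one-split trees fitted to the residuals of the running prediction. The squared-error loss, the constant starting value f0 (the mean of the targets), the single feature, and the data are assumptions for illustration; the GBT implementation used in this work may differ in all of these.

```python
def fit_stump(xs, residuals):
    """Best single-split regression stump on one feature (squared error)."""
    best = None
    for threshold in sorted(set(xs))[1:]:
        left = [r for x, r in zip(xs, residuals) if x < threshold]
        right = [r for x, r in zip(xs, residuals) if x >= threshold]
        c_left = sum(left) / len(left)
        c_right = sum(right) / len(right)
        sse = (sum((r - c_left) ** 2 for r in left)
               + sum((r - c_right) ** 2 for r in right))
        if best is None or sse < best[0]:
            best = (sse, threshold, c_left, c_right)
    _, threshold, c_left, c_right = best
    return lambda x: c_left if x < threshold else c_right


def fit_gbt(xs, ys, n_trees, eta):
    """Add trees one by one, each fitted to the current residuals."""
    f0 = sum(ys) / len(ys)  # assumed initial constant prediction
    preds = [f0] * len(ys)
    trees = []
    for _ in range(n_trees):
        # For squared loss, the negative gradient is the residual.
        residuals = [y - p for y, p in zip(ys, preds)]
        tree = fit_stump(xs, residuals)
        trees.append(tree)
        preds = [p + eta * tree(x) for p, x in zip(preds, xs)]
    return f0, trees


def predict_gbt(f0, trees, eta, x):
    """Return f0 + eta * sum_m T_m(x)."""
    return f0 + eta * sum(tree(x) for tree in trees)


# Hypothetical (normalized irradiance, normalized power) samples.
xs = [0.1, 0.2, 0.4, 0.6, 0.8, 1.0]
ys = [0.08, 0.17, 0.36, 0.55, 0.73, 0.90]
ETA = 0.1
f0, trees = fit_gbt(xs, ys, n_trees=50, eta=ETA)
fitted = [predict_gbt(f0, trees, ETA, x) for x in xs]
print([round(p, 3) for p in fitted])
```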

Lazy-based algorithms

Lazy learning algorithms, also known as instance-based algorithms, make predictions based on similarity to previously stored examples or instances, without explicit generalization.

a. k-Nearest neighbor

The most common lazy learning model is the k-nearest neighbor (k-NN) algorithm, which makes predictions based on the closest training examples in a feature space46. During training, k-NN simply stores the feature vectors and corresponding target values and does not perform any explicit generalization. To classify a new example, k-NN finds the k closest stored examples (based on a distance measure such as the Euclidean distance) and predicts the most common class among those neighbors. For regression, it averages the target values of the nearest neighbors to forecast a continuous value. Nearer neighbors may be weighted more heavily to influence the prediction. Feature values are often normalized before distance calculations to avoid biases from variations in scale; in this paper, min-max scaling is applied. An advantage of k-NN is that it is simple to implement and understand. A disadvantage is that it requires a lot of memory to store all training examples and has a high computational cost at prediction time16,47,48,49,50. The mathematical representation of PV predicted power using k-NN is as follows:

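For each training sample \(\:{\text{X}}_{i}\), compute the distance \(\:d\left(\text{X},{\text{X}}_{i}\right)\) between the query feature vector \(\:\text{X}\) and that training point. A common distance metric is the Euclidean distance, which is given by:

\[d\left(\text{X},{\text{X}}_{i}\right)=\sqrt{\sum_{j}{\left({x}_{j}-{x}_{i,j}\right)}^{2}}\]

where \({x}_{j}\) and \({x}_{i,j}\) denote the jth feature of \(\:\text{X}\) and \(\:{\text{X}}_{i}\), respectively.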
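A minimal sketch of this distance computation and of the resulting k-NN regression estimate is shown below. It uses unweighted averaging of the k nearest targets; the two-feature training points, the targets, and k = 2 are illustrative assumptions.

```python
import math


def euclidean(a, b):
    """Euclidean distance between two feature vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def knn_regress(train_x, train_y, query, k):
    """Average the targets of the k training points nearest to the query."""
    ranked = sorted(
        zip(train_x, train_y),
        key=lambda pair: euclidean(pair[0], query),
    )
    nearest = ranked[:k]
    return sum(y for _, y in nearest) / len(nearest)


# Hypothetical min-max-scaled training points and power targets.
train_x = [(0.0, 0.0), (0.1, 0.1), (0.9, 0.9), (1.0, 1.0)]
train_y = [0.0, 0.2, 0.8, 1.0]
estimate = knn_regress(train_x, train_y, query=(0.05, 0.0), k=2)
print(estimate)
```

Every prediction scans the whole stored training set, which is the memory and computation cost noted above.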

Support vector machine-based algorithms

The model in this work uses the mySVM software package developed by Stefan Rüping for support vector machine (SVM) modeling. Specifically, it utilizes the Java-based implementation of mySVM, which restricts SVM modeling to a linear kernel function18. The linear kernel yields a parsimonious SVM model that contains only the linear coefficient terms. This compact representation allows faster deployment and application of the trained model than SVM kernel types of greater complexity. By leveraging this efficient linear SVM formulation, the proposed methodology aims to balance predictive accuracy with reduced computational overhead for practical solar power forecasting applications. The mathematical representation of PV predicted power using SVM is as follows:

\[{P}_{PV}=\varvec{\upomega}\cdot \text{X}+b\]

Where \(\:\text{X}\) is the feature vector, \(\:b\) is a bias term that shifts the decision boundary, and \(\:\varvec{\upomega\:}\) is a weight vector orthogonal to the decision hyperplane.

Performance indices

To evaluate the performance and predictive accuracy of each machine learning algorithm (MLA) used in this work, various statistical metrics are employed. These metrics include the absolute error (AE), root mean square error (RMSE), normalized absolute error (NAE), relative error (RE), relative root square error (RRSE), and correlation coefficient (R). The absolute error is the default function of the RapidMiner software51.

Where \(\:{y}_{i}\) is the measured power value, \(\:{y}_{p}\) is the predicted power value.
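For orientation, the sketch below computes these indices under their conventional definitions: AE and RMSE as averages over samples, NAE and RRSE normalized by the deviation of the measured values from their mean, RE as the mean of |error| / |measured|, and R as the Pearson correlation. These definitions are assumptions and should be checked against the RapidMiner documentation; skipping samples whose measured value is zero in RE is also a choice made here.

```python
import math


def regression_metrics(measured, predicted):
    """Return AE, RMSE, NAE, RE, RRSE, and R for paired samples."""
    n = len(measured)
    errors = [yp - yi for yi, yp in zip(measured, predicted)]
    mean_y = sum(measured) / n
    mean_p = sum(predicted) / n
    sum_abs = sum(abs(e) for e in errors)
    sum_sq = sum(e * e for e in errors)
    dev_abs = sum(abs(yi - mean_y) for yi in measured)
    dev_sq = sum((yi - mean_y) ** 2 for yi in measured)
    # RE divides by the measured value, so zero readings are skipped (assumed).
    rel = [abs(e) / abs(yi) for e, yi in zip(errors, measured) if yi != 0]
    cov = sum((yi - mean_y) * (yp - mean_p)
              for yi, yp in zip(measured, predicted))
    var_p = sum((yp - mean_p) ** 2 for yp in predicted)
    return {
        "AE": sum_abs / n,
        "RMSE": math.sqrt(sum_sq / n),
        "NAE": sum_abs / dev_abs,
        "RE": sum(rel) / len(rel),
        "RRSE": math.sqrt(sum_sq / dev_sq),
        "R": cov / math.sqrt(dev_sq * var_p),
    }


# Hypothetical normalized power values.
measured = [0.2, 0.4, 0.6, 0.8]
predicted = [0.25, 0.35, 0.65, 0.75]
metrics = regression_metrics(measured, predicted)
print(metrics)
```

Because RE divides each error by the measured value, samples with near-zero measured power can dominate it even when AE and RMSE are small.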

Results and discussion

Model training and testing

In this study, we used weather data and cell temperature to train various MLAs for predicting PV and AC power. We utilized a one-year dataset for training and testing, employing RapidMiner software. Our main goal was to minimize the absolute error as the default function. After data pre-processing and feature selection, 70% of the dataset was used for training and validation, while the remaining 30% was kept for testing. The proposed MLAs include neural-based methods (NN, DL), regression-based methods (LR, PR), trees-based methods (GBT, RF, DT), the lazy-based method (k-NN), and SVM.

The dataset used for training, testing, and validation consists of high-resolution measurements recorded at 5-minute intervals over a full year, from 1st October 2022 to 30th September 2023 (30.26 kW BAPV plant), comprising 105,000 data samples. This ensures the model captures various seasonal and environmental variations. The training and testing phases utilize the entire year’s data to establish robust learning patterns across different weather conditions. For forecasting, validation is performed using only the input weather variables (solar radiation, ambient temperature, cell temperature, and wind speed) without direct knowledge of future power output. The model then predicts PV power for different horizons, including short-term (one-day), medium-term (one-week), and long-term (one-month) forecasts. This approach allows the model to generalize effectively across time horizons while maintaining accuracy by leveraging historical relationships between meteorological variables and PV output.

- Short-term horizon: A 24-hour input period, including one sunny day and one cloudy day in 2024 (dataset: 288 samples/parameter).
- Medium-term horizon: A seven-day input period in 2024 (dataset: 2016 samples/parameter).
- Long-term horizon: A one-month (30-day) input period in 2024 (dataset: 7823 samples/parameter).

The data is normalized to a range of [0, 1]. Normalizing the data has the advantage of eliminating the impact of data units on the model, speeding up convergence, and reducing training time during the learning process. The performance test set is used to assess the accuracy of the model’s forecasts. The software was run on a PC with an Intel® Core™ i7-4702MQ processor (2.2 GHz) and 8 GB of RAM.

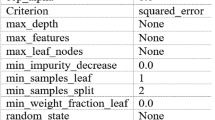

Table 3 shows the evaluation indices of the tested MLAs. The top-performing MLAs are RF, DL, and DT, which achieve RMSE values between 0.022 and 0.023, absolute errors of 0.015–0.016, and correlation coefficients of 99.7%. Their relative errors, between 6.04% and 12.48%, are significantly lower than those of the other algorithms. LR, NN, and k-NN still deliver strong results with slightly higher error metrics (RMSE: 0.024–0.025, AE: 0.017) and correlation coefficients between 99.6% and 99.7%, but their relative errors vary more widely, from 12.26% to 33.65%. SVM, GBT, and PR show substantially higher absolute and relative errors, with PR underperforming across all metrics.

DT offers the best balance of speed and accuracy: it has the fastest execution time (1 s), near-best performance across all metrics, and the lowest relative error (6.04%) among all algorithms. This has practical implications for real-time grid operations, since DT can give grid operators reliable short-term forecasts that can be updated frequently.

Figure 4 compares the yearly predicted PV and AC power output to the actual output, together with the environmental variables. The figure is for the RF algorithm, which has the best performance. The actual and predicted values follow a similar trend throughout the different seasons and times of the year. Figure 5 highlights the benefit of predicting PV and AC power output together by showing the actual and predicted inverter efficiency. Both follow about the same trend, with only minor differences at low radiation levels; at high radiation levels, they are almost identical. The actual and predicted average efficiencies of the inverter are 0.96688 and 0.9638, respectively.
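The inverter efficiency compared here follows from the two predicted labels as the ratio of AC power to PV (DC) power. The sketch below assumes the average is taken over per-sample ratios and that samples with no PV power (for example at night) are excluded; both choices, and the kW values, are illustrative and not taken from the study's data.

```python
def inverter_efficiency(p_pv, p_ac, min_pv=0.0):
    """Return P_AC / P_PV per sample, skipping samples with P_PV <= min_pv."""
    return [ac / pv for pv, ac in zip(p_pv, p_ac) if pv > min_pv]


def mean(values):
    return sum(values) / len(values)


# Hypothetical DC and AC power readings in kW; the first is a night sample.
p_pv = [0.0, 5.0, 10.0, 20.0, 25.0]
p_ac = [0.0, 4.8, 9.7, 19.4, 24.0]
efficiencies = inverter_efficiency(p_pv, p_ac)
average_efficiency = mean(efficiencies)
print(round(average_efficiency, 4))
```

Applying the same function to the measured pair and to the predicted pair of power series gives the two efficiency curves that can then be compared.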

Fig. 4. PV and AC power variation with environmental conditions based on RF algorithm prediction.

Fig. 5. Actual and predicted inverter efficiency based on the RF algorithm.

Multiple forecasting horizons validation

This section evaluates the performance of the different MLAs (NN, DL, LR, PR, GBT, RF, DT, k-NN, and SVM) for different forecasting horizons under varying weather conditions.

For short-term forecasting, validation data from a sunny day and a cloudy day were used. Figure 6 compares the measured and predicted power outputs from all models for a sunny day, representing the short-term forecasting horizon. The prediction data used in this test contained 288 values at 5-minute intervals. All models predicted the power output with good accuracy and gave similar results, with slightly higher accuracy for the NN and RF models; the RF model provided the best results.

Table 4 shows the average evaluation metrics of the MLAs for the sunny day validation data. RF, DL, DT, and NN perform best, with RMSE values between 0.023 and 0.026 and absolute errors between 0.015 and 0.018, confirming their reliability across different weather patterns. RF, DT, and NN achieve perfect correlation coefficients (R = 100%), and DL reaches 99.9%, indicating exceptional pattern-matching capabilities during clear sky conditions, when solar irradiance follows more predictable daytime patterns.

The relative error (RE) metrics give a more differentiated picture. DT achieves an RE of just 8.91%, substantially outperforming most other MLAs, and k-NN achieves the lowest RE at 5.39%. This suggests that k-NN’s instance-based learning approach may be particularly effective at capturing the characteristic patterns of sunny day PV generation. RF maintains strong performance with 14.57% RE, whereas NN shows a much higher relative error of 50.29% despite its low RMSE and perfect correlation coefficient.

These results have practical implications for grid operators and energy market participants. During sunny days, when PV production is at its peak, the choice of forecasting algorithm significantly affects prediction accuracy. For real-time operations requiring frequent forecast updates, DT offers an optimal combination of speed (1 s) and accuracy (RE: 8.91%). The perfect correlation coefficients achieved by several algorithms (LR, GBT, RF, DT, NN) during sunny days indicate that they all capture the fundamental diurnal pattern of solar production under clear conditions.

Fig. 6. Short-term power forecasting of a sunny day (a) solar radiation, (b) temperature (cell and ambient), (c) PV power variation, and (d) AC power variation.

Figure 7 shows that the power output values forecasted by the MLAs using the cloudy day validation data (288 samples at 5-minute intervals) are very close to each other and agree well with the measured values. The error metrics RMSE, AE, and RE are also small. The performance of the DL, DT, and RF models was superior to the other models, especially under highly variable weather conditions like a cloudy day.

Table 5 presents the evaluation metrics of the different MLA models for the cloudy day validation data and reveals a difference in the effectiveness of the MLAs compared to sunny conditions. LR and DT emerge as strong performers during cloudy days, achieving the lowest RMSE (0.016 and 0.018) and AE (0.009) values with execution times of just 1 s each. In terms of relative error (RE), DT performs well with 11.27%, followed by RF at 17.08%. The substantial improvement in SVM’s relative error suggests it may be particularly adept at handling the complex, non-linear relationships characterizing cloudy day PV generation.

Most algorithms maintain high correlation coefficients (R ≥ 99.9%) during cloudy conditions, with only PR showing a significantly lower correlation (84.5%). This indicates that most algorithms successfully capture the temporal patterns of PV generation even under variable cloud conditions. However, the absolute and relative error metrics reveal important differences in how accurately the magnitude is predicted. GBT and PR continue to perform poorly, with exceptionally high relative errors (1332.01% and 1142%, respectively), confirming their unsuitability for PV forecasting across weather conditions. The cloudy day analysis suggests that simpler, faster algorithms like LR and DT are preferable for forecasting during variable cloud conditions, offering both superior accuracy and computational efficiency.

Fig. 7. Short-term power forecasting of a cloudy day (a) solar radiation, (b) temperature (cell and ambient), (c) PV power variation, and (d) AC power variation.

Having evaluated short-term forecasting performance for full sunny and cloudy days, a further analysis assessed the models’ accuracy during sudden changes in conditions. In this test, the validation data contained 90 records in which solar radiation varied suddenly between 200 and 1000 W/m², incrementing or decrementing by 200 W/m² each time (Fig. 8). This tested the models’ ability to predict abrupt shifts in radiation levels. All the MLAs forecasted very accurately in this step-change scenario, with the NN and RF models demonstrating the best prediction accuracy.

Table 6 shows the evaluation metrics for sudden variations in solar radiation. This is the most critical test case for PV forecasting algorithms, as rapid transitions from low to high irradiance (or vice versa) present significant challenges for grid stability and economic dispatch. LR performs strongly, achieving an RMSE of 0.018, an AE of 0.012, and a relative error of just 3.79%, which is particularly noteworthy given its computational efficiency (1-second execution time) and simplicity compared to more complex algorithms. NN gives the lowest RE, 3.61%, suggesting that its ability to capture complex non-linear patterns becomes particularly valuable during rapid irradiance transitions. RF maintains its strong overall performance with low RMSE (0.017) and AE (0.012) values, though its RE (3.98%) is slightly higher than those of LR and NN. DT and DL follow closely with similar error metrics and excellent correlation coefficients, demonstrating their reliability across varied conditions. For grid operators concerned with ramp events and sudden production changes, these results suggest that algorithms such as LR and NN offer excellent performance for detecting and predicting rapid transitions in PV output while maintaining high computational efficiency.

Fig. 8. Short-term power forecasting with sudden variation (a) solar radiation, (b) temperature (cell and ambient), (c) PV power variation, and (d) AC power variation.

To validate model performance at the medium-term forecasting horizon, data spanning a full week (2016 samples) was used. Figure 9 compares the measured PV plant output with the values predicted by all machine learning models under evaluation. Various error and correlation metrics were calculated to summarize and compare the forecasting accuracy of each algorithm, with results presented in Table 7. Medium-term PV prediction is crucial for weekly energy market participation and operational planning.

DT is particularly impressive for one-week forecasting, achieving the lowest relative error (7.79%) and, along with LR, the fastest execution time (1 s). LR and RF also demonstrate excellent performance with low RMSE values (0.018) and absolute errors (0.011), though their relative errors (19.48% and 14.95%, respectively) are somewhat higher than that of DT. NN, DL, and k-NN have similar RMSE values between 0.017 and 0.020 and identical absolute errors of 0.012. However, their relative errors vary significantly: DL achieves 17.17% RE, while NN and k-NN show higher values of around 62%. This disparity between absolute and relative error metrics suggests that these algorithms may struggle to predict magnitudes consistently across different production levels over the week-long horizon, despite capturing temporal patterns accurately.

SVM presents an interesting case for weekly forecasting, achieving a competitive relative error (13.58%) with reasonable computational efficiency (5 s), among its best results across the analyzed scenarios. This suggests SVM may be particularly well-suited for capturing the complex patterns spanning multiple days within a week. GBT and PR continue their pattern of poor performance, with PR showing an extremely high relative error (991.58%) and GBT exhibiting an RE value of 153,521%, indicating complete unsuitability for week-long forecasting.

For practical applications, this analysis favors DT, which offers the optimal combination of accuracy and computational efficiency. DT provides reliable predictions with minimal computational overhead, while RF offers slightly improved absolute accuracy at a higher computational cost for applications where execution time is less constrained.

Fig. 9. Medium-term power forecasting for one week (a) solar radiation, (b) temperature (cell and ambient), (c) PV power variation, and (d) AC power variation.

To evaluate model performance under long-term forecasting, the models were validated using a dataset spanning one full month of generation records. Figure 10 shows measured versus predicted PV output for April 2024, consisting of 7823 samples. Table 8 provides the analysis of the machine learning algorithms for one-month PV power forecasting. DT delivers excellent performance, achieving the lowest RE (5.75%) while requiring minimal computational time (1 s). This efficiency-accuracy balance makes DT particularly valuable for operational long-term forecasting systems. RF and DL also demonstrate excellent performance with identical absolute errors (0.008) and low RMSE values (0.014), though RF achieves perfect correlation (R = 100%) at the cost of substantially higher computational demands (20 s). SVM shows notable improvement in monthly forecasting with a competitive relative error (7.87%), but its extreme computational cost (193 s) limits practical application. NN and k-NN maintain strong performance with slightly higher error metrics, while LR offers reasonable accuracy with excellent computational efficiency. GBT and PR perform extremely poorly, with relative errors exceeding 1,500%, confirming their unsuitability for PV forecasting.

Most algorithms successfully capture the temporal patterns in monthly PV generation (R ≥ 99.9%), but the significant variations in relative error despite similar correlation values highlight the importance of comprehensive performance evaluation. DT is the optimal choice for utilities and system operators requiring monthly PV forecasts for long-term planning and grid management, providing reliable predictions with minimal computational overhead while maintaining consistent performance across multiple time horizons and weather conditions.

Fig. 10. Long-term power forecasting for a month (a) solar radiation, (b) temperature (cell and ambient), (c) PV power variation, and (d) AC power variation.

Conclusion

This paper developed multi-label forecasting models for predicting the PV array and AC power outputs of a BAPV plant, utilizing different machine-learning algorithms and meteorological variables for short-, medium-, and long-term forecasts. The models were trained on a high-frequency dataset spanning one year of actual performance data, implemented and tested in the RapidMiner environment, and validated through experiments conducted at the ERI rooftop in Cairo, Egypt.

Key findings indicate that solar irradiance and ambient temperature significantly influence PV and AC power outputs. RF, DT, and DL models consistently demonstrated superior forecasting accuracy across all cases, achieving high correlation (R ≈ 99.8–100%) and low errors. For short-term forecasting, RF and NN performed best on sunny days, with RMSE as low as 0.023 and AE around 0.015, while DL, DT, and RF handled cloudy conditions well with RMSE ≈ 0.018 and AE ≈ 0.009. During sudden irradiance changes, RF, NN, and DL maintained high accuracy (R ≈ 99.8%) and minimal errors (RMSE ≈ 0.017–0.019). Medium-term forecasts over a week reinforced RF and DT as top models, achieving RMSE between 0.017 and 0.020, while k-NN and DL also performed well with RMSE ≈ 0.019–0.023. Long-term predictions over a month confirmed the dominance of RF and DL, with RMSE ≈ 0.014–0.018 and AE ≈ 0.008–0.012, ensuring stable and accurate forecasting.

PR and GBT repeatedly exhibited extreme instability, with RMSE reaching 0.315 and relative errors exceeding 1,500%, making them unreliable for precise PV power forecasting. SVM demonstrated a strong correlation (99.9%) but suffered from excessive computation time (up to 193 s), reducing its practicality. DT remained the fastest model (1 s), while RF balanced accuracy and efficiency. Overall, RF, DT, and DL emerged as the best-performing models, offering the highest reliability, adaptability, and accuracy across different forecasting time horizons and weather conditions. The results across all time horizons demonstrate that the proposed models can accurately predict PV output from unseen measured meteorological data. The high correlation achieved therefore reflects the models’ strong capability to map known weather conditions to PV output, confirming their robustness and generalization ability under real-world conditions.

The accurate forecasts produced can assist grid operators in anticipating variability in PV power output, thereby facilitating the integration of intermittent solar energy into the grid. Understanding how PV generation fluctuates under varying meteorological conditions is crucial for ensuring consistent integration of this weather-dependent power source. Furthermore, the multi-label predictions for both DC and AC power support inverter efficiency optimization and grid integration analysis, offering valuable insights for industry practitioners.

Future work should focus on incorporating additional variables and real-time data to enhance model robustness. This approach could lead to the creation of a generalized model capable of performing effectively under various weather conditions, thereby improving the reliability and efficiency of solar power integration into the grid.

Data availability

The datasets used and/or analysed during the current study are available from the corresponding author on reasonable request.

Abbreviations

- AE: Absolute error
- AI: Artificial intelligence
- BAPV: Building-applied photovoltaic
- DL: Deep learning
- DT: Decision trees
- ET: Extra trees
- GBT: Gradient-boosted trees
- k-NN: k-nearest neighbor
- LSTM: Long short-term memory
- L: Total number of layers in the deep neural network
- LR: Linear regression
- MAE: Mean absolute error
- MAPE: Mean absolute percentage error
- MASE: Mean absolute scaled error
- ML: Machine learning
- MLAs: Machine learning algorithms
- MLP: Multilayer perceptron
- NAE: Normalized absolute error
- NN: Neural networks
- PAC: AC power fed to the grid
- PPV: PV array output power
- PR: Polynomial regression
- R: Correlation coefficient
- RE: Relative error
- RF: Random forests
- RMSE: Root mean square error
- RRSE: Relative root square error
- Srad-W/m2: Solar irradiance
- STC: Standard test conditions
- SVM: Support vector machines
- T: Total number of decision trees in the RF
- Tamb-oC: Ambient temperature
- Tcell-oC: Cell temperature
- Ws-m/s: Wind speed

- \({b}_{j}^{\left(1\right)},\:{b}^{\left(2\right)}\): The biases for the hidden layer and the output layer
- \({\beta}_{0}\): Model intercept
- \({\beta}_{1},{\beta}_{2},{\beta}_{3},{\beta}_{n}\): Predictor coefficients
- \({{c}_{i}}^{\left(t\right)}\): The constant value predicted by the t-th tree
- \(\epsilon\): Error term
- \({f}_{\text{h}}\), \({f}_{\text{out}}\): The activation functions for the hidden layer and the output layer
- \(\mu\): The mean of the data; \({\sigma}_{i}\) is the standard deviation of the data
- \({\upomega}\): Weight matrix for layer
- \({x}_{i}\), \(\bar{x}\): The values and the mean of the x-variable in a dataset
- \({y}_{i}\), \(\bar{y}\): The values and the mean of the y-variable in a dataset
- \(\widehat{zs}\): The scaled value; \({z}_{i}\) is the measured value
- \({z}_{max}\), \({z}_{min}\): The maximum and the minimum values of the dataset


Funding

Open access funding provided by The Science, Technology & Innovation Funding Authority (STDF) in cooperation with The Egyptian Knowledge Bank (EKB).

Author information

Contributions

Amal A. Hassan: Conceptualization, Methodology, Software, Validation, Formal Analysis, Investigation, Resources, Data Curation, Writing – Original Draft, Writing – Review & Editing, Visualization. Doaa M. Atia: Conceptualization, Methodology, Formal Analysis, Investigation, Data Curation, Writing – Original Draft, Writing – Review & Editing, Visualization. Hanaa T. El-Madany: Writing. Fatma ElGhannam: Conceptualization, Methodology, Formal Analysis.

Ethics declarations