Abstract

Accurate copper price forecasting is crucial and challenging due to the uncertainty and complex fluctuations caused by various factors of financial markets. In this area, the single-factor point prediction methods have made significant contributions but do not fully consider the influence of multiple factors and the robustness of the predictions. This study develops a novel hybrid interval prediction framework that combines multi-objective optimization with quantile deep learning for copper price prediction. The framework holistically evaluates the fluctuation range by assessing the distribution of copper prices and incorporates multiple variables chosen through diverse feature selection methods, which are crucial for accurate copper price prediction. The proposed framework encompasses two sub-stages: (1) initial interval prediction and quantile deep learning models of copper price; (2) multi-objective optimization procedure. In the first phase, four probabilistic forecasting algorithms are employed to sharpen prediction accuracy and provide a comprehensive picture of the interpretation of the outcome parameters by evaluating the distribution. The subsequent phase delves deeper to enhance prediction precision. Four multi-objective optimization algorithms are harnessed to refine the predictions, aiming to boost their reliability and resolution. The experiment findings underscore the superior predicted capabilities of the Quantile Regression Long Short-Term Memory (QRLSTM) model when optimized using the Multi-Objective Salp Swarm Algorithm (MOSSA), achieving a Prediction Interval Coverage Probability of 94.5205%, a Prediction Interval Normalized Average Width of 0.0066, and an Average Interval Score of -373.9687 at 95% confidence levels. The probabilistic forecasting framework developed in this research is reliable and comprehensive, considering many factors influencing the copper price.

Similar content being viewed by others

Introduction

Copper is among the early exploited natural resources utilized for diverse human needs1, serving as a vital resource with extensive industrial applications2,3, including electrical cabling, construction projects, and machinery production. Furthermore, copper prices significantly influence associated industries and broader economic systems due to their inherent volatility and susceptibility to numerous interacting factors4. For instance, fluctuations in industrial energy costs, price movements in related metals, and changes in exchange rates among major copper-producing countries such as Chile, China, Peru, and Australia contribute significantly to copper price variability5,6. The interplay of these factors results in considerable uncertainty, complicating copper price forecasting and underscoring copper’s strategic importance to national economic stability and social security7.

Copper also possesses financial characteristics, functioning not only as an industrial raw material but also as a medium of trade financing. Global copper resources are geographically concentrated, notably in regions such as South America and Australia. As the world’s leading industrial consumer, China’s copper demand heavily depends on imports, accounting for approximately half of global consumption8. Recent events, including international trade tensions, geopolitical conflicts, and the COVID-19 pandemic, have exacerbated copper price volatility, reinforcing its role as an economic barometer reflective of global economic conditions. Generally, a flourishing global economy drives copper demand upward, increasing prices, whereas economic downturns reduce demand and depress copper prices. Hence, copper market dynamics often serve as indicators of broader global economic health. Additionally, fluctuations in copper prices considerably affect China’s macroeconomic stability, financial market equilibrium, and demand for industrial inputs, triggering economic ripple effects across copper-producing countries and heightening economic security risks in regions such as South America. Consequently, accurate forecasting of copper prices carries significant implications for analyzing global economic fluctuations.

Over recent decades, various techniques have been introduced to improve the accuracy of copper price forecasting, though most emphasize point predictions. Classical time series models such as Autoregressive Integrated Moving Average (ARIMA)9 and Generalized Autoregressive Conditional Heteroskedasticity (GARCH)10 have been commonly employed for copper price prediction. ARIMA, a linear model designed for point forecasting, struggles to handle data where volatility changes over time, which is often the case in copper price fluctuations. GARCH models, while effective at capturing volatility dynamics, rely on the assumption of normally distributed residuals, an assumption that does not always align with real-world copper price behavior11,12. Furthermore, traditional single-variable point prediction models fail to consider the multiple external variables that significantly influence copper prices. Machine learning models have been applied to commodity price forecasting in recent years to handle high-dimensional data better and capture non-linear complex interactions. The Regression Tree (RT) model, a simple decision-learning method, was initially utilized in predicting the copper price and demonstrated the prediction ability with accuracy and reliability over both short durations (days) and more extended periods (years)13. Gaussian Process Regression (GPR) has proven to be effective in modeling uncertainties in time series predictions14,15,16. Recent studies highlight the growing potential and usefulness of neural networks in capturing complex time series patterns, with advancements in their application to various forecasting tasks17,18. Extreme Learning Machine (ELM) model and other Artificial Neural Networks (ANN) perform well in predicting the non-linear and non-stationary time series19,20,21,22. These presented models shown in Table 1 primarily concentrate on point forecasting but inadequately capture some uncertainty forecasting information.

Given copper prices’ inherent uncertainty and complex fluctuations influenced by diverse financial market variables, accurate price forecasting remains notably challenging1. Consequently, incorporating uncertainty quantification through interval forecasting or distributional predictions becomes essential. Prediction intervals, providing probabilistic ranges within which actual copper prices are expected to fall, effectively reflect this uncertainty and support improved risk assessment and informed market decision-making24. In addressing these complexities, statistical methodologies proficient at capturing intricate relationships and distributional information within data become increasingly valuable. Traditional regression techniques, while capable of identifying average relationships between dependent variables and predictors, inadequately represent underlying distributional characteristics25,26. In contrast, Quantile Regression (QR) comprehensively captures relationships across multiple quantiles, robustly handling outliers and offering interpretability regarding price distributions. As such, QR emerges as a powerful method for interval prediction, effectively managing copper price uncertainty27,28.

QR offers parametric interpretability but requires substantial assumptions about the underlying data generation processes. Machine learning techniques are employed to transition from these assumption-based models to more data-driven methods. These techniques effectively handle multi-dimensional data and apprehend intricate non-linear associations without imposing additional assumptions on the data. As deep learning techniques, Recurrent Neural Network (RNN) are aptly designed for addressing array-related issues29. Unlike standard RNN, Long Short-Term Memory network (LSTM) contains memory cells with gates that can store and retrieve information for an extended time. The gates manage the flow of information entering and exiting the memory cells, which makes LSTM better capture the long-term dependencies in sequences than standard RNN. Therefore, LSTM has been widely applied for sequence learning tasks where long-term dependencies are important30. GRU is a version of LSTM, which stems from the simplified gate structure of LSTM to shorten the training duration31. Bidirectional Long Short-Term Memory network (BiLSTM) consists of two LSTM networks: one processes the sequence in a forward direction, while the other handles it in a backward manner32. Despite the potent predictive capabilities, these learning methods lack transparency, often known as the “black box” problem. This makes it challenging to elucidate the reasoning behind a particular prediction made by the models. Consequently, integrating QR and machine learning methods represents a balanced trade-off between interpretability and precise predictive power. Combination methods excel at capturing intricate patterns in data sequences, yet their predictions can be further enhanced by optimizing interval coverage rate and width.

To address this, multi-objective optimization algorithms are employed to enhance forecasting convergence rate and interval width simultaneously. An optimal prediction interval should meet two key criteria: it must provide a valid coverage rate for finite samples and minimize the width of the prediction interval within the given input space33. A valid coverage rate allows for a more dependable data conveyance to system operators, facilitating decision-making within acceptable risk boundaries. A narrower interval offers precise information at a specific Prediction Interval Nominal Confidence (PINC). To illustrate with an intense instance, an infinitely wide prediction interval would render copper price prediction meaningless34. Applying multi-objective optimization algorithms offers several advantages, including reduced error rates, decreased human intervention, and faster solution times.

This article introduces a multi-objective optimization structure that combines multivariate QR and machine learning. The framework effectively characterizes the distribution of the dependent variable and employs various optimization algorithms to refine the prediction intervals, thus enhancing prediction accuracy and robustness with high coverage rates. By utilizing multiple feature selection methods and adhering to the principle of Occam’s razor to simplify the model, a comprehensive grasp of the most crucial factors influencing copper price fluctuations exists. The primary goal of this framework is to attain interpretability, robustness, and accuracy in prediction results.

As mentioned earlier, the primary contributions and advancements of this structure are summarized as follows:

-

(1)

The application of various dimensionality reduction and variable selection methods can filter out the primary influencing factors, resulting in more robust models. Utilizing a combination of multiple variable selection methods helps identify the most significant factors impacting the copper price. This approach aligns with the parsimony rule, emphasizing simplicity and elegance in model explanations while strengthening the robustness of dimensionality reduction and prediction algorithms.

-

(2)

Probabilistic forecasting algorithms are employed to sharpen prediction accuracy and provide a comprehensive picture of the interpretation by evaluating the distribution. Multivariate probability forecasting enables a more comprehensive interaction of the factors’ fluctuations. Quantile regression and machine learning achieve a harmonious equilibrium between interpretability and predictive prowess. This methodology facilitates a more precise delineation of the dependent variable’s distribution, culminating in heightened prediction accuracy.

-

(3)

A novel hybrid interval prediction framework has been introduced, fusing multi-objective optimization with QR deep learning to predict copper price. Multi-objective optimization techniques are utilized to concurrently refine and harmonize the upper and lower bounds of the prediction interval. Experimental results highlight the enhanced predictive prowess of the four multi-objective optimization algorithms. These algorithms refine predictions and optimize forecasts’ convergence rate and interval width, aiming to elevate their reliability and precision. This framework adeptly navigates the challenges of constructing optimal prediction models for both the upper and lower interval bounds, leading to significantly improved reliability in interval predictions.

-

(4)

The study evaluates the predictive capabilities of multi-objective optimization algorithms optimized for different forecasting models using an array of evaluation metrics. Moreover, compared to benchmark interval construction methods, multi-objective optimization algorithms tailored for forecasting models exhibit superior performance and achieve exact predictions.

The remaining parts of this study are structured as follows: In section 2, the probabilistic forecasting framework is presented. Section 3 presents four fundamental variable selection methods, introduces five base probability prediction models, describes three categories of evaluation indicators, and introduces four multi-objective optimization algorithms. In section 4, we demonstrate a case study and analyze the outcome. Finally, section 5 discusses the analysis and conclusions drawn from the study results.

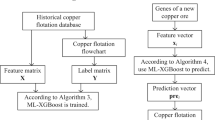

Probabilistic forecasting framework

This paper introduces a novel hybrid interval prediction framework that combines multi-objective optimization with quantile deep learning for copper price forecasting. The framework consists of three modules. The first module focuses on feature selection, where we initially apply several variable selection methods. The feature sets chosen by these several methods are then intersected and used in the prediction model. This process effectively filters out the primary influencers, resulting in more robust models. It aids in identifying the factors with the most significant impact on copper prices. We integrate QR and machine learning techniques in the second module to enhance prediction accuracy. This integrated approach helps mimic the distribution of the dependent variable, which can be interpreted through the core features selected in the first module. Finally, in the third module, we employ multi-objective optimization techniques to simultaneously fine-tune and balance the convergence rate and predicted interval. The comprehensive framework is illustrated in Fig. 1.

The proposed framework aims to improve prediction accuracy, model robustness, and interpretability while identifying key factors affecting the copper price.

The flowchart of the proposed forecasting framework.

Methodology

This section outlines the methodology of the proposed framework and provides the mathematical foundations for the multi-objective optimization framework.

Specifically, in subsection 3.1, we present the multivariate selection methods. Subsection 3.2 discusses the QR and probability prediction models. Subsection 3.3 introduces evaluation indicators, and subsection 3.4 describes the fundamentals of multi-objective optimization algorithms and the improvement strategies.

Multivariate selection methods

The practice of utilizing dimensionality reduction and variable selection techniques aids in filtering out primary influential factors, resulting in enhancing the robustness and interpretability of models35. Two pivotal categories of feature selection methods documented in the literature are filter and embedded models. In this study, we showcase the Pearson Correlation Coefficient (PCC) and Maximal Information Coefficient (MIC) as representatives of filter methods, while the Least Absolute Shrinkage and Selection Operator (LASSO) and Random Forest (RF) exemplify embedded models. Undoubtedly, feature selection is instrumental in determining an optimal feature subset, profoundly affecting a model’s performance. Pruning redundant features paves the way for more straightforward and more interpretable models. However, choosing the appropriate feature selection technique tailored for particular forecasting models across diverse datasets remains challenging. This complexity arises because different models possess unique attributes, each with pros and cons. Consequently, the application of various feature selection methods yields disparate feature subsets. The association between forecasting models and a specific feature selection method might introduce potential instability. Moreover, there’s an inherent risk in failing to identify the best feature selection method, especially when dealing with datasets marked by intricacy and volatility36. Our approach employs four distinct feature selection techniques to identify common features. Utilizing these diverse methods is fundamental to recognizing the predominant factors influencing the copper price. This strategy complies with the principle of parsimony, emphasizing the simplicity and transparency of model representations. At the same time, it enhances the robustness of the prediction frameworks. For further details, refer to subsection 3.1.1 on the PCC, subsection 3.1.2 discussing the MIC, subsection 3.1.3 focusing on LASSO, and subsection 3.1.4 presenting RF.

Pearson correlation coefficient (PCC)

The Pearson coefficient is a statistical method employed to evaluate the linear correlation between features and stands as the most prominent among various interaction coefficients. It begins by calculating the two variables’ averages separately and then determining each variable’s deviations from its mean. The product of these two deviations captures the degree of interaction between the features. A specific formula can then be applied to ascertain the pearson coefficient for this two-dimensional vector.

where \({\bar{x}}\) symbolizes the average of the components in vector 1, and \({\bar{y}}\) signifies the mean value of the components in vector 2. \(\gamma _{xy}\) indicates the extent of interaction among various features, and m represents the total count of data points37.

Maximal information coefficient (MIC)

The concept of MIC was introduced by Reshef et al. published in Science in 201138. The MIC emerged as a response to the limitations of existing correlation measures, like the PCC, which primarily captures linear relationships. The intention behind MIC is to create a standard that can equally and effectively capture associations of any functional form for measuring the strength and character of the relationship between two variables. MIC is a member of a group of maximal information-based nonparametric exploration (MINE) statistics designed to detect and describe a broad spectrum of relationships in large datasets, both linear and nonlinear. It turns the value of the information into a metric ranging from zero to one. MIC approaches one when a relatively high relationship exists between the two variables, as indicated by the correlation. On the other hand, when the variables are probably independent, the MIC approaches zero36.

Least absolute shrinkage and selection operator (LASSO)

LASSO is a widely adopted regularization technique primarily employed in regression analysis. However, its principles have been applied in various modeling contexts, rendering it a useful instrument for researchers and practitioners39. It is particularly useful when dealing with high-dimensional datasets, offering the capability to perform feature selection to identify the most critical predictors. The Lasso technique enforces a stringent model by introducing a penalty function that reduces specific coefficients. This process encourages sparsity within the model by driving some feature coefficients to zero. This feature selection property is particularly advantageous in scenarios where the objective is to identify the most pivotal predictors from a vast array of potential features. Consequently, it not only streamlines the subset of variables but also aids in pinpointing the most influential factors among a multitude of potential variables36.

Random forest (RF)

RF is a versatile and powerful ensemble learning algorithm proficient in classification and regression tasks and a valuable feature selection tool. It is a multi-purpose learning method encompassing multiple randomized decision trees and combining their predictions via averages. Random Forest functions by creating a collection of decision trees. Each tree is created utilizing a randomly selected portion of the data and, crucially, a random subset of the available features. This inherent randomness and diversity among the trees are pivotal to its modeling and feature selection effectiveness. The algorithm can assess the relevance of individual feature variables. Ranking each variable’s importance allows for identifying a selection of essential features36,40,41.

We can draw the following conclusions: PCC belongs to the filter model of variable selection methods for linear relationships, used to identify features with linear correlations. At the same time, MIC can capture the association between variables in any functional form, regardless of whether they are linear or nonlinear. It proves effective in handling correlations, even in large datasets. Both PCC and MIC fall into the category of filter models of variable selection. This means they rank and select features based on their relationships with other features without interacting with any specific model before feature selection. LASSO is a powerful feature selection tool and a practical dimension reduction method in complex and high-dimensional datasets. It employs an embedded approach, automatically selecting the most significant features during the model training process. In recent years, RF has gained prominence as an ensemble algorithm that can be employed for variable selection. Unlike artificial intelligence algorithms such as deep learning, RF, with its ensemble of tree structures, offers interpretability in variable selection results. Both LASSO and RF, being embedded algorithms, are well-equipped to handle larger and higher-dimensional datasets.

An optimal feature subset profoundly impacts a model’s performance. Removing redundant features simplifies models and enhances their interpretability. While each method has unique strengths and applications, integrating these feature selection techniques to obtain an optimal set of commonly selected features helps identify the most significant factors influencing the copper price. This approach prioritizes simplicity and elegance in model explanations while bolstering the predictions’ robustness.

Quantile regression and probability prediction models

QR and probabilistic forecasting algorithms are utilized to enhance prediction precision and offer a holistic interpretation through the assessment of the distribution. When QR is combined with deep learning models, we can perform interval prediction by obtaining different prediction results by choosing different quantile values. Meanwhile, the balance between interpretability and predictive strength is achieved. This approach enables a more accurate depiction of the dependent variable’s distribution, improving prediction accuracy. Subsequently, Kernel Density Estimation (KDE) is a nonparametric smoothing method to estimate the comprehensive copper price distribution across all quantiles. For more in-depth information, subsection 3.2.1 explains QR, subsection 3.2.2 details the Quantile Regression Neural Network (QRNN), and subsection 3.2.3 covers Quantile Regression Long Short-Term Memory (QRLSTM). subsection 3.2.4 explores Quantile Regression Bi-Directional Long Short-Term Memory (QRBiLSTM), subsection 3.2.5 discusses the Quantile Regression Gated Recurrent Unit (QRGRU), and subsection 3.2.6 describes the Kernel Density Estimation (KDE).

Quantile regression (QR)

Quantile regression is a method with strong interpretability for estimating various conditional quantiles of a response variable42, which thoroughly depicts the association between dependent and independent variables. The linear QR is mathematically expressed as follows43.

In this context, \(\overline{\overline{\xi }}(q) = \langle \xi _0(q), \xi _1(q), \dots , \xi _m(q) \rangle\) represents the regression coefficient matrix, the conditional quantile at the q-th level is represented by \(Q_{Z_t}(q|\Lambda _t)\), while the estimated value of \(\overline{\overline{\xi }}(q)\) is denoted as \(\hat{\xi }(q)\) and are mathematically expressed as follows:

Here, \(\Phi _{q}(\cdot )\) represents an asymmetric function, with its expression given as:

Subsequently, the q-th conditional quantile of the dependent variables can be estimated using the following expression:

QR enables the investigation of the relationship between predictor variables and designated quantiles of the outcome variable. Hence, it offers a more comprehensive view of potential causative associations between variables.

Quantile regression neural network (QRNN)

The Quantile Regression Neural Network (QRNN) was developed to address the limitation of traditional linear models, which fail to model the nonlinear associations between variables. The input layer, several hidden layers, and an output layer are connected by fully linked neurons across the layers in QRNN. Each neuron’s output in the hidden layers is processed through a nonlinear activation function before being passed as input to the subsequent layer44.The conditional quantile \(Q_{y_n}(q|x_t)\) of the response variable \(y_n\) at \(q(0<q<1)\) quantile gained by QRNN is calculated as follows:

where \(b_n(q)=b_{n,k,j}(k=1,2,...)\) represents the weight associated with the connection between an input and a hidden layer node, \(o_n(q)=o_{n,k,j}(k=1,2,...,I)\) denotes the weight vector linking the hidden layer with the output layer. \(B(q)=a_n(q)(n=1,1,...,N)\) and \(O(q)=o_n(q)(n=1,2,...,I)\) can be obtained in the activation function \(f_1(\cdot )\) and \(f_2(\cdot )\) are commonly assigned as a hyperbolic tangent sigmoid function and a linear model, respectively. Introducing regularization parameters \(\lambda _1\) and \(\lambda _2\) aids in mitigating overfitting. The model’s training parameters include a maximum of 300 epochs, and the gradient threshold is set to 1. An initial learning rate of 0.001 is specified, which is adjusted according to a piecewise schedule. The learning rate is scheduled to drop every 100 epochs, with each drop reducing the learning rate to 25% of its previous value. The training is conducted in mini-batches of 100 examples each, optimizing the balance between computational efficiency and model update granularity.

The combination of QR and Neural Network uses the neural network to model complex non-linear relationships. Then it applies QR to these relationships to capture the comprehensive effect across different quantiles of the data distribution. This allows for improved prediction accuracy, especially in cases where the data has a complex structure or the underlying distribution is not normal.

Quantile regression long short-term memory (QRLSTM)

Since the original LSTM was published in 1997, multitudinous theoretical and practical studies have been published on this variant of an RNN, and numerous studies have highlighted the exceptional outcomes obtained in a diverse range of application areas involving sequential data. LSTM network effectively tackles gradient explosion and vanishing issues in RNN due to the presence of memory units and gated memory units that store past data and control information flow, enabling long-term state retention45. Consequently, they provide more scientific outcomes for data exhibiting short-term or long-term dependencies. QRLSTM, a fusion of QR and LSTM, addresses nonlinear problems involving temporal information and quantifies prediction uncertainty. For every quantile q, the main calculations are as follows:

where square brackets represent two linked vectors, The notation \(\times\) represents matrix multiplication, while \(\cdot\) signifies the product of matrix elements. \(W_{\cdot ,q}\) denotes a specific unit’s parameter vector. \(F_{t,q}^L\) and \(E_{t,q}^L\) are the forget and input gates, respectively, determining the amount of information entering memory unit \(U_{t,q}^L\) at time t. The activation functions of sigmoid and tanh are represented by \(\because (\cdot )\) and \(tanh(\cdot )\), respectively. \(\Omega _{t,q}\) is the output gate, which is multiplied by the number of cells in the memory \(U_{t,q}^L\) and the final updated information \(P_{t,q}^L\) is obtained using the activation function \(tanh(\cdot )\). The last dense layer takes in \(P_{t,q}^L\) and is associated with the parameter \(W_{L,q}\), ultimately yielding the quantile predictive value \(y_{n,t,q}^L\). The training is configured to run for a maximum of 800 epochs, with an initial learning rate set at 0.01. The learning rate follows a piecewise schedule, meaning it will decrease at specified intervals. Specifically, the learning rate will drop by a factor of 0.2 every 400 epochs.

When QR and LSTM are integrated, the resulting QRLSTM network leverages the strengths of both techniques. It employs the LSTM network to model complex temporal dependencies and then applies QR to these dependencies. This combination allows it to provide predictions across different quantiles of the data distribution. The result is an improved predictive model that can handle complex temporal structures and non-normal distributions, offering a more comprehensive and accurate forecast.

Quantile regression bi-directional long short-term memory (QRBiLSTM)

BiLSTM networks consist of two LSTMs, a forward LSTM and a backward LSTM, which facilitate data processing in both directions. This structure allows the network to leverage information from times t-1 and t+1 simultaneously at time t. Consequently, BiLSTM models are more accurate than unidirectional LSTMs in general.

where \(f_t\) decides which section of the historical information to eliminate, the outcome value lies within the interval of 0 to 1. \(z_t\) determines which data should be inputted into the network, generating an output value spans from 0 to 1. \(l_t\) decides which network outputs to utilize for the final production, with an output value spans from 0 to 1. \(l_t\) determine which elements of the current unit should be transmitted to the hidden layer(\(h_t\)), with the output value spans from zero to one. \(\hat{y_t}\) is the expression for the predicted output46. The parameters of the QRBiLSTM model are the same as the QRLSTM model.

When QR and BiLSTM networks are combined, the resulting QRBiLSTM network capitalizes on the strengths of both techniques. It engages the BiLSTM network to model intricate temporal dependencies in both forward and backward directions and then applies QR to these dependencies. This combination is an advanced predictive model that can handle complex bidirectional temporal structures and non-normal distributions, offering a more comprehensive and accurate forecast. By considering both the past and future context in the modeling process, QRBiLSTM can capture complex data patterns that unidirectional models may miss, leading to enhanced prediction accuracy and more robust forecasting models.

Quantile regression gated recurrent unit (QRGRU)

The Gated Recurrent Unit (GRU) is a more streamlined version of the Long Short-Term Memory (LSTM) network architecture. GRU’s internal structure is less complex, with only two gates instead of LSTM’s three. GRU update and reset gates selectively preserve and filter information, preventing it from being discarded over time. With a certain probability, the held information is transmitted to the next unit, overcoming the issue of RNN’s inability to handle lengthy time series47. The mathematical expression of GRU is as follows:

where \(M_r,M_h,M_u,N_r,N_h\) and \(N_u\) denote the weight matrixes, meanwhile, \(b_r, b_h\), and \(b_u\) represent the respective bias vectors. \(h_{t-1}\) denotes the previous hidden state. The sigmoid activation function is symbolized by \(\sigma (x)\). The Hadamard product multiplies corresponding matrix elements and is denoted by \(\odot\). By combining the QR and GRU models, the QRGRU network structure is derived. Assuming the GRU model output is acquired as \(Q_{Y_k}(X_k) = f(X_k, \phi )\), with \(\phi\) representing the GRU model parameters, the QRGRU output is determined as follows:

The maximum number of epochs is set to 700. The initial learning rate is specified as 0.005, and the mini-batch size is 100. A gradient threshold is applied at 1 to prevent the explosion of gradient values. Specifically, the learning rate drops by a factor of 0.25 every 100 epochs.

The GRU network, adept at modeling complex temporal dependencies, is engaged. However, unlike LSTM, or BiLSTM, GRU simplifies the recurrent unit’s gating mechanism to modify the memory component. This simplified structure often allows GRU to train faster and perform comparably to LSTM over many tasks while using fewer computational resources. Upon modeling these temporal dependencies, QR is then applied to these dependencies to render predictions across different quantiles of the data distribution. This combination, unique to QRGRU, enhances prediction accuracy, mainly when dealing with intricate temporal or sequential data structures.

Kernel density estimation (KDE)

Commonly recognized, KDE, a traditional non-parametric estimation approach, estimates the distribution of random variables without any prior assumptions48. By employing QR, the conditional quantiles \(G_t = [\hat{Q}_{yt}(q_1 | x_t), \hat{Q}_{yt}(q_2 | x_t), \ldots , \hat{Q}_{yt}(q_Z | x_t)]\) are estimated, where \(t = 1, 2, \ldots , m\). A conditional quantile can be seen as a corresponding value in the conditional density distribution49. The QR models mentioned above are used to generate sets of predicted values for each quantile as the input of the kernel function. In this study, we chose the epanechnikov kernel for KDE. Its functional formula is:

where \(\delta\) is the indicator function, assigning a value of one when the condition enclosed in the brackets holds true and zero when the condition is false. Combining QR and KDE in this way allows us to estimate the comprehensive distribution of copper prices across all quantiles. This combination is significant as it combines the strengths of QR and the flexibility of KDE in making no prior assumptions about the distribution of the random variables. This results in a versatile and powerful tool for estimating the complete distribution of the copper price, enhancing the accuracy and robustness of the prediction.

Hogg et al.(2005) and Luo et al.(2022) use statistical principles to estimate the copper price as t-distribution, Estimating the confidence interval at the \(1-\alpha\) confidence level for the forecasted value, and the formula is presented as follows:

In this scenario, (x, y) denotes the coordinates of the observed value. y symbolizes the lower and upper bounds of the confidence interval at the \(1 - \alpha\) confidence level for the predicted value (x, y). Moreover, n signifies the test set size, \(\bar{x}\) is the mean value, \(x_i\) is the ith subscript of the observation, and \(s_e = \sqrt{\sum _{i = 1}^{n}(y_i - \hat{y_i})^2 / (n - 2)}\) represents the standard deviation of the estimate50,51.

Assuming that the Data Generating Process (DGP) follows a standard or t-distribution is a significant presumption. In real-world data, this normality assumption may not always hold. Hence, adopting data-driven methods is imperative for a more accurate approximation of the true DGP. The framework discussed here integrates QR with kernel density estimation, providing a data-driven hybrid approach that accurately represents the actual DGP.

The t-distribution inherently accounts for a data distribution’s thick tail, potentially resulting in broader prediction intervals. In contrast, resilient to outliers, QR facilitates flexible modeling of various segments of the copper price distribution without prescribing its overall form. When paired with KDE, a nonparametric smoothing method that avoids prior distributional assumptions, we gain a robust tool for estimating the entire distribution across all quantiles. Experimental results show that the copper price exhibits a thin-tailed distribution determined by the combined QR and KDE approach. This suggests that the prediction interval estimated by this framework more accurately aligns with real-world data generation.

By integrating QR with kernel density estimation, we not only improve the accuracy but also strengthen the robustness of the analysis, offering a more adaptive forecasting tool for copper prices. Combined with interval prediction and Kernel Density Estimation (KDE), this method allows for a nuanced representation of the copper price distribution across various quantiles. Hence, this combined approach may outperform the t-distribution method, yielding enhanced forecasting results for copper price prediction. This comprehensive approach streamlines our forecasting, ensuring interpretability and predictive strength in analyzing copper price distributions.

Evaluation indicators

Reliability, resolution, and sharpness are commonly assessed in evaluating probabilistic interval forecasting. Our study used Prediction Interval Coverage Probability (PICP) and Prediction Interval Normalized Average Width (PINAW) to gauge reliability and resolution. Moreover, we employed the Average Interval Score (AIS) as a comprehensive metric to thoroughly appraise interval forecasts, considering sharpness. The formulas for all evaluation indices used in this paper are provided below.

Prediction interval coverage probability (PICP)

Reliability refers to the statistical consistency between the distributional forecasts and the observations52. To evaluate the forecasting interval’s reliability, PICP measures the percentage of actual values encompassed by the predicted range’s upper and lower limits. The following definition illustrates this concept:

In this formula, \(LB_i\) and \(UB_i\) symbolize the respective lower and upper limits of the prediction interval, while RV signifies the actual value. The interval forecasting in our study relies on confidence levels of \(\alpha = 0.05\) and \(\alpha = 0.1\). Consequently, the Prediction Interval Nominal Confidence (PINC) is expressed as \(PINC = (1-\alpha ) \times 100\%\). The interval forecasting is considered reliable if the PICP matches the PINC. For example, the prediction interval is considered to be reliable if the \(PICP=90\%\) match the \(PINC=90\%\)52.

Prediction interval normalized averaged width (PINAW)

PINAW is a measurement for evaluating the resolution (width of the forecast interval) of the prediction interval. A larger forecast interval width indicates that the interval contains less valuable uncertainty information and becomes less practical. Therefore, it is important to examine the width of the interval when forecasting the copper price.

Here, R denotes the testing sequence’s range.

Average interval score (AIS)

Sharpness indicates the concentration of the predictive distributions and is an inherent characteristic of the forecasts. The greater the concentration of the predictive distributions, the sharper the forecasts become53. In general, sharper forecasts are considered better. AIS is a metric for evaluating the sharpness of the prediction interval. Forecasting intervals provide insights into uncertainty by computing the Lower Bound (LB) and Upper Bound (UB) at a specified confidence level \(\alpha\). In this study, we determine the upper and lower bounds using the 0.95 and 0.05 quantiles to calculate the prediction interval at a 90% confidence level. By using the 0.975 and 0.025 quantiles as the upper and lower limits, we generate a forecasting with 95% confidence. The AIS serves as a metric that evaluates interval forecasts based on the width (resolution) and coverage (reliability) of the interval. This makes AIS a comprehensive measure considering PICP and PINAW43.

In this context, \(\alpha\) denotes the confidence level, n refers to the testing set’s length, and \(RV_i\) refers to the i-th observed value. The resolution is measured by \(\phi _i(\alpha )\), which is defined as \(\phi _i(\alpha ) = UB_i(\alpha ) - LB_i(\alpha )\). In this case, \(UB_i(\alpha )\) and \(LB_i(\alpha )\) denoted the forecasted upper and lower bounds at the \(\alpha\) confidence level, respectively.

Semi-interval indicators

A semi-interval includes observed values in conjunction with either the higher or lower boundary of the interval. Consequently, the metrics for assessing the dependability and precision of the semi-interval can be formulated as \(\widehat{PICP}_{semi}(q)\) and \(\widehat{PINAW}_{semi}(q)\). Two metrics for assessing the upper semi-interval can be established:

Where A is the actual value; upperbound(q) is the upper bound at quantile q; L represents the length of test values, and R denotes the range of test values, respectively.

Similarly, representations for the lower semi-interval indicators are conveyed as follows:

Multi-objective optimization algorithm

Addressing numerous conflicting goals and diverse constraints, multi-objective structural optimization presents a complex hurdle to overcome54. This complexity is especially evident in probabilistic forecasting within the context of QR deep learning models, where it becomes essential to optimize the reliability and resolution of the prediction interval simultaneously.

The optimal prediction interval focused on developing a robust and accurate probabilistic forecasting model by using multi-objective optimization algorithms that simultaneously optimize reliability and resolution. This methodology is crucial as it enables the handling of trade-offs between the two aspects. Improving one without adequately considering the other might result in a model that either overestimates its certainty, exhibiting high reliability with poor resolution, or delivers overly vague and non-specific predictions, characterized by high resolution but low reliability. The PICP indicates the percentage of target values contained within the upper and lower bounds of the interval. Meanwhile, The PINAW quantifies the width of the predicted interval, with a preference for maintaining the narrowest possible width55. Consequently, the multi-objective optimization function in this research is formulated as follows:

To improve the predictive accuracy of the QR deep learning model for forecasting copper prices, the data was initially divided into training, testing, and validation sets. The model was trained on the training set and used to forecast intervals for two quantile pairs (0.05 and 0.95; 0.025 and 0.975), which establish the prediction intervals’ lower and upper limits. Subsequently, optimization algorithms were applied to refine these interval predictions by searching for optimal “interval modification factors” within a 0-2 range. These factors aim to balance reliability and resolution, minimizing the associated trade-offs in the objective function. Upon identifying the best modification factors through multi-objective optimization, they are applied to the original interval forecasts on the validation set to adjust the prediction intervals. This adjustment process is replicated on the test dataset to produce the final, optimized prediction intervals.

Where q is the quantile used for interval forecasting and f is the interval modification factor produced by the optimization algorithms.

To optimize the hybrid interval prediction system, we employ various multi-objective optimization algorithms, including the Multi-Objective Multi-verse Optimization Algorithm (MOMVO), the Multi-Objective Ant Lion Optimizer (MOALO), the Multi-Objective Dragonfly Algorithm (MODA), and the Multi-Objective Salp Swarm Algorithm (MOSSA). Given the similarity of these multi-objective optimization algorithms, we focus on the details of MOMVO in this parts.

Influenced by the notion of multiple universes and their communication through white holes, black holes, and wormholes, the Multi-Objective Multi-Verse Optimizer (MOMVO) algorithm is formulated56. Objects in a multi-verse can transition between universes via a passage from a white hole to a black hole or via wormholes, which enable movement between universes without white or black holes. Like other evolutionary algorithms, MOMVO can merge results and retain the optimal ones. White and black holes are randomly generated in the universes to combine outcomes, initiating object action.

The optimization process begins by generating various random universes, with each solution treated as a universe. Two parameters influence the changes in solutions during the optimization process and are employed to renew the solutions: Wormhole Existence Probability (WEP) and Traveling Distance Rate (TDR)57,58. The calculations for these parameters are as follows:

In this case, \(p=6\) represents the precision of the exploitation, while LB and UB denote the lower and upper bounds of WEP, respectively. The symbol l refers to the current iteration quantity, and L corresponds to the maximum iteration limit.

To achieve equilibrium, objects are transferred between different universes. It is assumed that an object exchange tunnel always exists between the local and optimal universe, increasing the likelihood of a higher expansion rate. The following equation represents this structure, according to56:

In this setting, in the \(i^{th}\) universe, \(x_j\) represents the \(j^{th}\) variable, while \(x_j^*\) signifies the \(j^{th}\) variable in the optimal universe. WEP and TDR correspond to the wormhole existence probability and the traveling distance rate, respectively. Additionally, \(ub_j\) and \(lb_j\) denote the upper and lower limits of the \(j^{th}\) dimension, while \(r_2\) to \(r_4\) are random values.

Optimization algorithms play a pivotal role in balancing various objectives, such as improving the reliability and precision of prediction intervals. Several multi-objective optimization algorithms, including MOMVO, MOALO, MODA, and MOSSA, have been employed to enhance predictive performance. The initial configurations for several multi-objective optimization algorithms are as follows: The MOALO algorithm is designed for a maximum of 500 iterations with a population size of 500 and an archive size limit of 200. The MODA algorithm operates with similar iteration limits but supports a smaller population of 300 and an archive size of 100. For the MOMVO algorithm, the settings include a maximum operational time of 200, a population size of 100, and an archive limit of 100, with parameters maximum WEP set at 1 and minimum WEP at 0.2. Lastly, the MOSSA algorithm permits up to 300 iterations, a population of 200, and maintains an archive size of 200. Each algorithm’s settings are critical in determining how they process and optimize data, thus directly impacting their efficiency and effectiveness in solving complex optimization challenges.

In the upcoming section, the effectiveness of these algorithms through experimental results will be prominently present. This study evaluates the predictive capabilities of multi-objective optimization algorithms that have been tailored for various forecasting models, utilizing a range of evaluation metrics. These algorithms refine predictions by optimizing the convergence rate and interval width of forecasts to improve our predictions’ reliability and precision. This highlights the pivotal role these algorithms play within the framework.

Case study

As discussed in the introduction, the novel hybrid interval prediction framework has a wide range of applications across various domains. Its ability to adapt and perform well in diverse contexts is a testament to its robust generalization capabilities. This case study delves into forecasting the copper price. The framework of this case study is shown in Fig.2.

The plot of the proposed forecasting framework.

The initial module of the framework of this case study is feature selection, employing four variable selection methods: PCC, MIC, LASSO, and RF. The intersection of the feature sets identified by these methods is utilized in the predictive model, effectively isolating the primary factors and generating more robust models. This strategy assists in pinpointing the variables most influential to the copper price. The next module uses an amalgamation of QR and deep learning methods to augment prediction accuracy. This combined approach facilitates the replication of the dependent variable’s distribution, interpreted through the core features identified in the preceding module. The final module employs multi-objective optimization strategies to optimize and balance the convergence rate and predicted interval. Alongside the process illustration, the figure also highlights key results from our experimental analysis, reinforcing the accuracy and dependability of the developed framework.

Data preparation

The copper price data over a 30-year monthly period from December 1992 to December 2022, specific to the Chinese market. To facilitate analysis, the data is partitioned as follows: 80% for training, of which 25% is reserved for validation, and the remaining 20% is allocated for testing. Upon an exhaustive examination of pertinent literature, 12 primary variables have been spotlighted as influential factors. These variables include energy prices (crude oil and natural gas), other industrial metal prices (gold, iron ore, nickel, silver, and aluminum), and four different exchange rates (USD-PEN, USD-CLP, USD-AUD, USD-CNY). Table 2 and Fig. 3 present significant data characteristics.

The historical data of the influencing factors.

Feature selection module

The selection of factors via a single method can lead to instability and inaccuracy, particularly in handling complex and volatile data sets. This study presents an approach that leverages various variable selection techniques. By extracting the common feature set from these techniques, we identify the principal factors influencing probability forecasting models, enhancing their robustness. Employing a range of variable selection methods helps identify the most impactful factors affecting copper prices. This strategy not only complies with the principle of parsimony, favoring straightforward model interpretations, but it also enhances the robustness of dimensionality reduction and prediction algorithms. In the present study, four feature-selection methods, PCC, MIC, LASSO, and RF, are employed, and commonly selected features are chosen. Table 3 presents the results of these four variable selection methods.

The copper price is influenced by myriad interrelated factors associated with other commodities. Notably, the synchronicity of price movements among nickel, gold, iron ore, and crude oil reflects broader economic shifts. Crucially, aluminum prices, identified by LASSO, PCC, and RF, directly affect copper demand; a surge in aluminum prices propels industries towards copper alternatives, elevating its value. The USD-CNY exchange rate, emphasized by LASSO and MIC, holds significance due to China’s substantial copper consumption; variations in this rate modulate China’s purchasing power, impacting copper demand. Silver, pinpointed by PCC, MIC, and RF, aligns with copper’s market trajectory, largely due to its parallel dynamics with gold as a financial refuge during economic volatility. Nevertheless, the influence of aluminum, USD-CNY, and silver on copper is only uniformly supported by some analytical methods, highlighting the need for multifaceted analyses.

From an overarching economic vantage point, crude oil, gold, iron ore, and nickel are primary variables intricately tied to the copper price. Gold’s reputation as a safe-haven asset sways investor sentiment, particularly towards industrial metals such as copper. A diminished dollar value amplifies commodity prices, while copper’s diverse applications position it as an economic health barometer. The intertwined mining dynamics of copper and nickel and the determinative role of the crude oil price on mining expenses further solidify this intricate relationship. The industrial reliance on nickel and iron ore, especially in infrastructure, further complicates these demand dynamics. This complex web of influences underscores the deeply intertwined nature of global commodity markets in determining the copper price.

In conclusion, by selecting the common feature set across these techniques, the copper price is significantly influenced by crude oil, gold, iron ore, and nickel prices. These factors reflecting industrial activity, investor sentiment, and manufacturing sector performance profoundly impact copper prices. A multi-method approach, combining LASSO, PCC, MIC, and RF, offers a holistic view of the complex dynamics driving copper price, underscoring the importance of integrating data-driven methods with economic and financial theory.

Probability forecasting module

In this section, we introduce and delve into the outcomes from probabilistic forecasting models when PINC=90% and PINC=95% were designed to predict copper prices. By employing QR and probabilistic forecasting techniques, we aim to boost prediction accuracy and provide a thorough understanding of the data by examining the predicted quantiles, thereby closely reflecting the data’s distribution. By marrying QR with deep learning, we have devised a data-driven approach that adeptly approximates the population, circumventing any presumptions about the data generation process.

Leveraging QR and probabilistic forecasting techniques, we aim to enhance prediction precision and meticulously emulate the data’s distribution through quantile evaluation. Integrating QR with deep learning enables a data-driven strategy that accurately represents the population distribution, sidestepping preconceived notions about the data generation process. Notably, numerous studies predict the copper price based on assumptions of data following a normal or t-distribution. The t-distribution naturally accommodates data distributions with thick tails, potentially broadening prediction intervals. In contrast, when QR is coupled with kernel density estimation, it reveals a thin-tailed distribution for the copper price. Experimental outcomes underscore this by confirming a thin-tailed trend using the integrated QR and deep learning approach. This suggests that our predictive framework provides intervals that better mirror real-world data trends, yielding a more precise estimation.

Predicted probability density curves.

The probability density prediction curves for the QRLSTM, QRbiLSTM, QRGRU, and QRNN models, as derived from the 5th-70th moments of the test set, are illustrated in Fig.4. A noticeable feature of the QRLSTM model’s curve is its pronounced peak and narrowness, an indication of the model’s superior precision and lower variance in predictions. The location of the peak on a probability density prediction curve is suggestive of the most probable predicted value by the model. Remarkably, the QRLSTM model’s peak is consistently closer to the actual values than the other models, suggesting its superior performance. While heavier tails in a model’s probability density prediction curve would imply a higher likelihood of extreme predictions, posing potential risks, it is noteworthy that all the models’ curves in this analysis exhibit lighter tails. This characteristic points towards a lower likelihood of extreme predictions, offering a more reliable forecasting performance. The QRLSTM model, with its peak-and-narrow curve and lighter tails, demonstrates the best performance among the evaluated models in terms of precision, consistency, and reliability.

PICP and PINAW value for four probability forecasting models.

Table 4 presents detailed numerical performance metrics of the quantile regression probabilistic forecasting models at 90% and 95% confidence levels. Fig.5 visually compares the forecasting performance of the four quantile regression probabilistic model based on the PICP and PINAW at both 90% and 95% confidence levels. Reliability, represented by the PICP, measures the capability of the models to encompass the true values within the predicted intervals. A higher PICP value that closely matches the PINC, as exhibited by QRLSTM at 98.6301% and 100.0000% respectively, at PINC=90%, signifies a greater probability of capturing the true values, indicating superior forecasting reliability. This is crucial as it reflects the model’s trustworthiness in practical applications. Resolution, on the other hand, is about the informativeness of the predicted intervals. The QRLSTM model, with the smallest PINAW values of 0.0061 and 0.0072 at PINC=90% and 95% respectively, indicates narrower intervals. This demonstrates that the model provides more precise information about uncertainty, which is essential for making informed decisions based on the model’s outputs. Sharpness, represented by the AIS, balances the coverage and interval width, offering a holistic measure of the prediction intervals’ quality. The AIS values for QRLSTM are -618.4602 and -364.344 at PINC=90% and 95%, respectively, which are the highest among the models, indicating that QRLSTM achieves a superior balance between reliability and resolution. This balance is vital as it ensures that the model’s predictions are reliable and informative, contributing to its practical utility.

Considering each metric’s specific values and implications, the QRLSTM model emerges as a standout performer, demonstrating robust comprehensive performance in prediction accuracy and uncertainty representation. It achieves a high level of reliability with a PICP of 98.6301% at PINC=90%, provides the most informative intervals with a minimal PINAW of 0.0061, and maintains a superior balance as indicated by its AIS value of -618.4602. While other models like QRGRU and QRbiLSTM exhibit commendable performances in certain aspects, such as QRGRU’s high PICP of 100.0000% and QRbiLSTM’s low PINAW of 0.0066 at PINC=90%, none of them exhibit a consistent lead across all evaluation metrics. This underscores the importance of considering the trade-offs between different aspects of performance depending on the application’s specific requirements. Enriched analysis, integrating both specific data points and a deeper understanding of the evaluation metrics, underscores the exemplary and balanced performance of the QRLSTM model, reinforcing its significant application value.

Optimization module

This section presents an analysis of a novel hybrid interval prediction framework that combines multi-objective optimization techniques with quantile deep learning for copper price prediction. The framework refines the upper and lower bounds of the prediction interval concurrently, enhancing the forecasts’ reliability and precision. The evaluations consider four models that the MOMVO algorithm has enhanced. Additionally, a comparison is made between the effects of different optimization algorithms namely, MOMVO, MOALO, MODA, and MOSSA when they are applied to the QRLSTM model. The aim of this analysis is to identify the optimal combination of model and optimization algorithm for accurate and efficient copper price forecasting.

PICP, PINAW, and AIS values of 8 forecasting models when PINC=90%.

PICP, PINAW, and AIS values of 8 forecasting models when PINC=95%.

Table 5 presents a performance comparison between four multi-objective optimization models integrated with the QRLSTM model. Fig.6 and 7 illustrates a radar chart and bar plot comparison of eight forecasting models in terms of PICP, PINAW, and AIS values at a 90% confidence level and 95% confidence level. The visualization clearly shows each model’s strengths and weaknesses across these metrics. Fig.8 presents a focused comparison of PICP and PINAW values specifically for the four QRLSTM models optimized using multi-objective algorithms (MOALO-QRLSTM, MODA-QRLSTM, MOSSA-QRLSTM, and MOMVO-QRLSTM). Reliability, represented by the PICP, measures the capability of the models to encompass the true values within the predicted intervals. A higher PICP value that closely PINC, as exhibited by MODA-QRLSTM and MOSSA-QRLSTM at 91.7808% and 90.4110% respectively, at PINC=90%, signifies a greater probability of capturing the true values, indicating superior forecasting reliability. This is crucial as it reflects the model’s trustworthiness in practical applications. Resolution, on the other hand, is about the informativeness of the predicted intervals. The MOSSA-QRLSTM model, with the smallest PINAW values of 0.0051 and 0.0066 at PINC=90% and 95% respectively, indicates narrower intervals. This demonstrates that the model provides more precise information about uncertainty, which is essential for making informed decisions based on the model’s outputs. Sharpness, represented by the AIS, balances the coverage and interval width, offering a holistic measure of the prediction intervals’ quality. The AIS values for MOMVO-QRLSTM are -619.127 and -376.1261 at PINC=90% and 95%, respectively, indicating a good balance between reliability and resolution. The MOALO-QRLSTM model also performs well, with PICP values of 95.8904% and 94.5205% at PINC=90% and 95%, respectively, and AIS values of -672.9247 and -418.1952, showing a commendable performance in sharpness.

PICP and PINAW value for four multi-objective algorithms optimized QRLSTM models.

In terms of the specific performance of each model, the MODA-QRLSTM model performs the best at 90% confidence level, achieving high reliability with a PICP value of 91.7808%, and providing the narrowest prediction intervals with a PINAW of 0.0055. The model also demonstrates a good balance with its AIS of -576.7966, showcasing its robustness in forecasting accuracy and uncertainty representation. For the 95% confidence level, the MOSSA-QRLSTM model excels, achieving a PICP of 94.5202% and the narrowest intervals with a PINAW of 0.0066. The AIS value of -373.9687 for MOSSA-QRLSTM further highlights its well-rounded performance in both reliability and resolution.

While MOMVO-QRLSTM and MOALO-QRLSTM also show commendable performance in certain metrics–such as MOMVO-QRLSTM’s high PICP of 95.8904% and MOALO-QRLSTM’s competitive PINAW of 0.0065 at PINC=90%–they do not consistently outperform the MODA-QRLSTM and MOSSA-QRLSTM models across all evaluation metrics. These findings emphasize the importance of considering the trade-offs between different performance aspects when choosing a model for a specific application. MOMVO-QRLSTM and MOALO-QRLSTM may perform well in scenarios where high coverage is prioritized, but for applications that require more precise intervals, MODA-QRLSTM and MOSSA-QRLSTM are the superior choices.

In conclusion, the in-depth analysis and comparisons presented in Table 5 and Figures 6, 7, and 8 underscore the outstanding and balanced performance of the MODA-QRLSTM and MOSSA-QRLSTM models. These models exhibit excellent reliability, resolution, and sharpness, making them highly valuable for practical forecasting applications that require accurate and informative predictions.

Conclusion

In recent years, compounded by factors such as trade wars, the pandemic, geopolitical tensions, the Russia-Ukraine conflict, and the European energy crisis, copper prices have experienced significant volatility, serving as a barometer of economic health and triggering a chain reaction in the non-ferrous metal market. The development of the copper market is closely linked to global economic vitality. Fluctuations in the copper price have a significant impact on China’s macroeconomy and social stability, as well as on the economic security of other copper-supplying countries. Therefore, accurately forecasting the copper price is of great importance for studying global economic fluctuations.

This study introduced a sophisticated interval prediction framework by merging multi-objective optimization with quantile deep learning, targeting accurate copper price predictions. The framework is structured across three main phases: First, this study applied various dimensionality reduction and variable selection methods and chose the commonly selected way to identify primary influencing factors. Second, the use of QR deep learning models to achieve probabilistic and interval forecasting to improve prediction accuracy and uncertainty and provide a comprehensive picture of copper price by evaluating the distribution. It fuses multi-objective optimization with quantile deep learning, further elevating the prediction accuracy of the copper price. These algorithms not only refine predictions but also optimize the convergence rate and interval width at the same time. The evaluation of multi-objective optimization algorithms optimized for different forecasting models using a variety of evaluation metrics confirms the superior performance of these algorithms compared to benchmark interval construction methods. The forecasting framework proposed in this paper is able to achieve accurate predictions of the extreme volatility of copper prices, which can serve as an important reference for the prediction of non-ferrous metal prices, thereby providing a theoretical basis for the demand forecasting of industrial raw materials. Moreover, the volatility of the copper price can be used to anticipate financial crises in advance. Hence, it has significant implications for the study of global economic fluctuations.

In light of these findings, the proposed framework offers a promising approach to forecasting the copper price and is widely applicable to other domains. Its capacity to consider a broad array of influencing factors and its robustness in generating reliable and precise forecasts make it a versatile tool that can be adapted to various forecasting scenarios. However, one limitation of the current approach is that the influencing factors affecting copper prices may change in the future. While our variable selection module is designed to be adaptable and universal, enabling the incorporation of additional influential factors in future framework iterations. In future research, additional variables could be integrated, allowing the framework to remain adaptive as new trends and external influences emerge.

Beyond copper price forecasting, the framework has potential applications in other areas, such as stock price forecasting, demand forecasting in supply chain management, and predictive modeling in sectors like healthcare and climatology. Consequently, our work contributes significantly to the field of copper price forecasting while also paving the way for further innovation in various domains that rely on predictive modeling. Future studies could refine the framework to better account for specific market dynamics, integrate more granular variables, and explore its use in diverse environments. By doing so, this framework can support advancements in predictive modeling that remain relevant and adaptive as new challenges and data sources arise.

Data Availability

Data that support the findings of this study are available at https://cn.investing.com

References

Zhang, H., Nguyen, H., Vu, D.-A., Bui, X.-N. & Pradhan, B. Forecasting monthly copper price: A comparative study of various machine learning-based methods. Resour. Policy 73, 102–189. https://doi.org/10.1016/j.resourpol.2021.102189 (2021).

Kahraman, E. & Akay, O. Comparison of exponential smoothing methods in forecasting global prices of main metals. Miner. Econ. 36(3), 427–435 (2023).

Cortez, C. A. T. et al. Determining the embedding parameters governing long-term dynamics of copper prices. Chaos, Solitons & Fractals 111, 186–197 (2018).

Raza, S. A., Masood, A., Benkraiem, R. & Urom, C. Forecasting the volatility of precious metals prices with global economic policy uncertainty in pre and during the covid-19 period: Novel evidence from the garch-midas approach. Energy Economics 120(106), 591 (2023).

Khurshid, A., Chen, Y., Rauf, A. & Khan, K. Critical metals in uncertainty: How Russia-Ukraine conflict drives their prices?. Resour. Policy 85, 104 000 (2023).

Charlot, P. & Marimoutou, V. On the relationship between the prices of oil and the precious metals: Revisiting with a multivariate regime-switching decision tree. Energy Economics 44, 456–467. https://doi.org/10.1016/j.eneco.2014.04.021 (2014).

Alameer, Z., Elaziz, M. A., Ewees, A. A., Ye, H. & Jianhua, Z. Forecasting copper prices using hybrid adaptive neuro-fuzzy inference system and genetic algorithms. Nat. Resour. Res. 28, 1385–1401 (2019).

Wang, M., Chen, W., Zhou, Y. & Li, X. Assessment of potential copper scrap in China and policy recommendation. Resour. Policy 52, 235–244. https://doi.org/10.1016/j.resourpol.2016.12.009 (2017).

Ospina, R., Gondim, J. A., Leiva, V. & Castro, C. An overview of forecast analysis with ARIMA models during the covid-19 pandemic: Methodology and case study in brazil. Mathematics 11(14), 3069 (2023).

Huang, Y., Dai, X., Wang, Q. & Zhou, D. A hybrid model for carbon price forecastingusing GARCH and long short-term memory network. Appl. Energy 285, 116–485. https://doi.org/10.1016/j.apenergy.2021.116485 (2021).

Hu, Y., Ni, J. & Wen, L. A hybrid deep learning approach by integrating LSTM-ANN networks with GARCH model for copper price volatility prediction. XXPhys. A 557, 124–907. https://doi.org/10.1016/j.physa.2020.124907 (2020).

Cohen, G. & Aiche, A. Forecasting gold price using machine learning methodologies. Chaos, Solitons & Fractals 175, 114 079 (2023).

Li, N. et al. A novel copper price forecasting ensemble method using adversarial interpretive structural model and sparrow search algorithm. Resour. Policy 91, 104 892 (2024).

Jin, B. & Xu, X. Machine learning predictions of regional steel price indices for east China, Ironmaking & Steelmaking, p. 03 019 233 241 254 891, (2024).

Jin, B. & Xu, X. Pre-owned housing price index forecasts using gaussian process regressions. J. Model. Manag. 19(6), 1927–1958 (2024).

Jin, B. & Xu, X. Palladium price predictions via machine learning. Materials Circular Economy 6(1), 32 (2024).

Jin, B. & Xu, X. Wholesale price forecasts of green grams using the neural network, Asian Journal of Economics and Banking, no. ahead-of-print, (2024).

Jin, B., Xu, X. & Zhang, Y. Thermal coal futures trading volume predictions through the neural network. J. Model. Manag. 20(2), 585–619 (2025).

Zhang, H. et al. Proposing two novel hybrid intelligence models for forecasting copper price based on extreme learning machine and meta-heuristic algorithms. Resour. Policy 73, 102–195 (2021).

Awe, O. O. & Dias, R. Comparative analysis of ARIMA and artificial neural network techniques for forecasting non-stationary agricultural output time series. AGRIS on-line Papers in Economics and Informatics 14(4), 3–9 (2022).

Wang, C., Zhang, X., Wang, M., Lim, M. K. & Ghadimi, P. Predictive analytics of the copper spot price by utilizing complex network and artificial neural network techniques. Resour. Policy 63, 101–414. https://doi.org/10.1016/j.resourpol.2019.101414 (2019).

Liu, W., Wang, C., Li, Y., Liu, Y. & Huang, K. Ensemble forecasting for product futures prices using variational mode decomposition and artificial neural networks. Chaos, Solitons & Fractals 146, 110 822 (2021).

Liu, C., Hu, Z., Li, Y. & Liu, S. Forecasting copper prices by decision tree learning. Resour. Policy 52, 427–434. https://doi.org/10.1016/j.resourpol.2017.05.007 (2017).

Liu, J., Wang, P., Chen, H. & Zhu, J. A combination forecasting model based on hybrid interval multi-scale decomposition: Application to interval-valued carbon price forecasting. Expert Syst. Appl. 191, 116 267 (2022).

Yang, C., Zhang, H. & Weng, F. Effects of covid-19 vaccination programs on eu carbon price forecasts: Evidence from explainable machine learning, International Review of Financial Analysis, p. 102 953, 2023.

Weng, F. et al. Gold price forecasting research based on an improved online extreme learning machine algorithm. J. Ambient. Intell. Humaniz. Comput. 11, 4101–4111 (2020).

Yu, K., Lu, Z. & Stander, J. Quantile regression: Applications and current research areas. Journal of the Royal Statistical Society Series D: The Statistician 52(3), 331–350 (2003).

Niemierko, R., Töppel, J. & Tränkler, T. A d-vine copula quantile regression approach for the prediction of residential heating energy consumption based on historical data. Appl. Energy 233–234, 691–708. https://doi.org/10.1016/j.apenergy.2018.10.025 (2019).

Zhang, M., Yuan, Z.-M., Dai, S.-S., Chen, M.-L. & Incecik, A. LSTM RNN-based excitation force prediction for the real-time control of wave energy converters. Ocean Eng. 306, 118 023 (2024).

Wang, Y., Liu, J. & Qian, G. Hierarchical FFT-LSTM-GCN based model for nuclear power plant fault diagnosis considering spatio-temporal features fusion. Prog. Nucl. Energy 171, 105 178 (2024).

Hong, J., Liang, F., Yang, H. et al., Multi-forword-step state of charge prediction for real-world electric vehicles battery systems using a novel lstm-gru hybrid neural network, eTransportation, p. 100 322, (2024).

Ying, H. et al. Short-term prediction of wind power based on phase space reconstruction and BILSTM. Energy Rep. 9, 474–482 (2023).

Pearce, T., Brintrup, A., Zaki, M. & Neely, A. High-quality prediction intervals for deep learning: A distribution-free, ensembled approach, International conference on machine learning, PMLR, pp. 4075–4084, (2018).

Hu, J., Luo, Q., Tang, J., Heng, J. & Deng, Y. Conformalized temporal convolutional quantile regression networks for wind power interval forecasting. Energy 248, 123–497. https://doi.org/10.1016/j.energy.2022.123497 (2022).

Liu, Z., Yang, J., Wang, L. & Chang, Y. A novel relation aware wrapper method for feature selection. Pattern Recogn. https://doi.org/10.1016/j.patcog.2023.109566 (2023).

Wang, J., Zhou, H., Hong, T., Li, X. & Wang, S. A multi-granularity heterogeneous combination approach to crude oil price forecasting. Energy Economics 91, 104–790. https://doi.org/10.1016/j.eneco.2020.104790 (2020).

Chen, H. & Chang, X. Photovoltaic power prediction of LSTM model based on Pearson feature selection. Energy Rep. 7, 1047–1054 (2021).

Reshef, D. N. et al. Detecting novel associations in large data sets. Science 334(6062), 1518–1524 (2011).

Kamkar, I., Gupta, S. K., Phung, D. & Venkatesh, S. Stable feature selection for clinical prediction: Exploiting ICD tree structure using tree-lasso. J. Biomed. Inform. 53, 277–290. https://doi.org/10.1016/j.jbi.2014.11.013 (2015).

Yang, C., Abedin, M. Z., Zhang, H., Weng, F. & Hajek, P. An interpretable system for predicting the impact of covid-19 government interventions on stock market sectors, Annals of Operations Research, pp. 1–28, (2023).

Weng, F., Zhu, J., Yang, C., Gao, W. & Zhang, H. Analysis of financial pressure impacts on the health care industry with an explainable machine learning method: China versus the usa. Expert Syst. Appl. 210, 118 482 (2022).

Chu, Y., Yin, Z. & Yu, K. Bayesian scale mixtures of normals linear regression and bayesian quantile regression with big data and variable selection. J. Comput. Appl. Math. 428, 115–192. https://doi.org/10.1016/j.cam.2023.115192 (2023).

Zhang, D., Wang, S., Liang, Y. & Du, Z. A novel combined model for probabilistic load forecasting based on deep learning and improved optimizer. Energy 264, 126–172. https://doi.org/10.1016/j.energy.2022.126172 (2023).

He, Y., Cao, C., Wang, S. & Fu, H. Nonparametric probabilistic load forecasting based on quantile combination in electrical power systems. Appl. Energy 322, 119–507. https://doi.org/10.1016/j.apenergy.2022.119507 (2022).

Gers, F., Schraudolph, N. & Schmidhuber, J. Learning precise timing with LSTM recurrent networks. J. Mach. Learn. Res. 3, 115–143. https://doi.org/10.1162/153244303768966139 (2002).

Ying, H. et al. Short-term prediction of wind power based on phase space reconstruction and BILSTM. Energy Rep. 9, 474–482 (2023).

Zhang, C. et al. Evolutionary quantile regression gated recurrent unit network based on variational mode decomposition, improved whale optimization algorithm for probabilistic short-term wind speed prediction. Renewable Energy 197, 668–682 (2022).

Rosenblatt, M. Remarks on Some Nonparametric Estimates of a Density Function. Ann. Math. Stat. 27(3), 832–837. https://doi.org/10.1214/aoms/1177728190 (1956).

Guo, Z., Zhou, K., Zhang, X. & Yang, S. A deep learning model for short-term power load and probability density forecasting. Energy 160, 1186–1200 (2018).

Hogg, R., McKean, J. & Craig, A. Introduction to mathematical statistics: Pearson education (Upper Saddle River, NJ, 2005).

Luo, H., Wang, D., Cheng, J. & Wu, Q. Multi-step-ahead copper price forecasting using a two-phase architecture based on an improved LSTM with novel input strategy and error correction. Resour. Policy 79, 102–962 (2022).

Nowotarski, J. & Weron, R. Recent advances in electricity price forecasting: A review of probabilistic forecasting. Renew. Sustain. Energy Rev. 81, 1548–1568 (2018).

Gneiting, T., Balabdaoui, F. & Raftery, A. E. Probabilistic forecasts, calibration and sharpness. J. R. Stat. Soc. Ser. B Stat Methodol. 69(2), 243–268 (2007).

Kumar, S. et al. A two-archive multi-objective multi-verse optimizer for truss design. Knowl.-Based Syst. https://doi.org/10.1016/j.knosys.2023.110529 (2023).

Serrano-Guerrero, X., Briceño-León, M., Clairand, J.-M. & Escrivá-Escrivá, G. A new interval prediction methodology for short-term electric load forecasting based on pattern recognition. Appl. Energy 297, 117–173 (2021).

Mirjalili, S., Jangir, P., Mirjalili, S. Z., Saremi, S. & Trivedi, I. N. Optimization of problems with multiple objectives using the multi-verse optimization algorithm. Knowl.-Based Syst. 134, 50–71 (2017).

Ikram, R. M. A. et al. Application of improved version of multi verse optimizer algorithm for modeling solar radiation. Energy Rep. 8, 12063–12080 (2022).

Nazari, N., Mousavi, S. & Mirjalili, S. Exergo-economic analysis and multi-objective multi-verse optimization of a solar/biomass-based trigeneration system using externally-fired gas turbine, organic rankine cycle and absorption refrigeration cycle. Appl. Therm. Eng. 191, 116–889 (2021).

Acknowledgements