Abstract

To detect frozen coal residue in railcars during winter transportation in mid-to-high latitude regions, this paper proposes a method based on a spinning multi-line LiDAR. The LiDAR is mounted above the railcar with its rotation axis aligned with the train's direction of travel, and it captures point cloud data as the train moves. Data processing involves three steps. First, the point cloud within the railcar's range is extracted based on the relative position of the LiDAR and the railcar. Second, contour tilt and motion distortion are corrected, the frames are stitched using a motion displacement fusion algorithm, and statistical filtering and the voxel grid method are applied to filter, simplify, and smooth the stitched data. Finally, a combined 360-degree ray-scanning and alpha-shape algorithm extracts contour slices, which are used to estimate the frozen coal volume. Experiments were conducted to optimize the voxel grid size and slice spacing. With the optimal configuration, the proposed technique achieves over 93.5% accuracy, addressing the inaccuracy of manual estimation and supporting frozen coal removal planning.


Introduction

Coal is a fossil fuel with a stable supply and serves as a critical energy pillar for the economic development of many nations. Globally, a substantial quantity of coal is consumed annually. Railway transportation has become the primary mode of coal transport owing to its large capacity, high efficiency, and limited sensitivity to weather and other factors. However, during winter in high-altitude and cold regions, coal transported by rail tends to freeze onto the carriage walls, making complete unloading difficult. Statistics indicate that the probability of coal freezing on carriage walls during winter in these regions exceeds 95%, with the residual amount reaching up to one-fifth of the carriage's volume. This issue not only reduces the efficiency of loading and unloading operations but also causes significant tonnage loss ("under-discharge") and increases both labor costs and safety risks because manual intervention is required. To ensure complete unloading, workers often have to climb onto the carriage to visually inspect the residual coal and then decide how many workers must enter the carriage with tools to remove the frozen coal. This process is labor-intensive, operationally complex, and hazardous. Therefore, an effective method for detecting residual frozen coal in carriages and formulating a corresponding removal plan is of great significance for improving the efficiency of winter coal transportation in cold regions. However, frozen coal detection presents several technical challenges. On the one hand, frozen coal may adhere to any internal surface of the carriage, with irregular shapes and spatially uncertain distributions. On the other hand, the harsh detection environment, characterized by low temperatures, high humidity, and airborne dust, makes it difficult for sensors to acquire accurate and reliable data stably.

Currently, computer vision is the most commonly used method for carriage inspection1,2. Xie3 used a monocular camera to capture vehicle images, converted the color images to grayscale, and applied image processing techniques to extract carriage information, although the error rate remained high. Mrovlje4 and Li5 employed binocular cameras to inspect the carriage interior and achieved high accuracy under favorable natural conditions. Existing solutions for frozen coal residue detection are primarily based on computer vision or multi-sensor fusion, and both exhibit significant limitations under the harsh and variable conditions of coal unloading stations. Vision-based methods are particularly susceptible to environmental interference, including coal dust, persistent mechanical vibration, and unstable illumination from outdoor operation, all of which degrade image quality and reduce detection reliability. Moreover, the surface texture of frozen coal is typically uniform and lacks distinctive visual features, which severely limits texture-based depth estimation and segmentation methods. Multi-sensor systems, such as those combining LiDAR and cameras, require precise temporal and spatial calibration between sensors, which increases both system cost and maintenance complexity.

With the maturation of LiDAR technology in recent years6, detection accuracy has progressively improved, leading to its widespread application in complex scenarios such as target detection7, deformation monitoring8, and autonomous driving9,10,11,12. It has also been used to measure bulk cargo in fixed spaces. Ding13 proposed a rapid volume measurement algorithm for irregular bulk cargo. The algorithm generates a slice matrix through dimensionality reduction and rasterization; after the slice matrix area is filled using the X-ray scanning line algorithm, the areas of the slice matrices are integrated to calculate the volume of the entire point cloud. To efficiently obtain the point cloud volume of railway tank cars and containers, Zhang14 proposed a method for point cloud data acquisition, processing, and volume calculation. Experimental results demonstrated that volume measurement of railway tank cars and containers by 3D laser scanning is more accurate, safe, efficient, and automated. Shao15 proposed an adaptive simplification algorithm based on particle swarm optimization (PSO) to reconstruct granary surfaces and estimate the volume of bulk grain. Compared with the average distance method, the point set obtained by this algorithm is more evenly distributed, with a reduction rate of only 6.96%, and the relative error in volume estimation is less than 0.3%. Coupled with a 3D laser scanner, the algorithm enables online, real-time measurement of bulk grain stored in granaries. Estimating the volume of frozen coal residue in an open car is a typical fixed-space volume estimation problem and is well suited to laser scanning. A spinning LiDAR, in particular, scans the surrounding space over 360°, making it ideal for fixed-space volume estimation.

The point cloud data collected by a spinning LiDAR are highly dense and partially overlapping, which necessitates point cloud filtering. Current point cloud filtering methods include irregular triangulation filtering16,17, digital morphological filtering algorithms18,19,20, and slope-based filtering methods21,22. Lindenberger23 was the first to apply morphological filtering to 3D point cloud data, proposing a one-dimensional mathematical morphology algorithm in 1993. Zhang24 designed a progressive digital morphological filter to process non-ground point cloud data of various shapes and sizes. By gradually increasing the window size, cars, trees, and buildings can be removed from the ground without a fixed threshold, making the method suitable for removing large-scale interference. Husain25 used voxel grids to group the points within each cell and filter outliers, an approach better suited to small-scale, precise volume estimation. Furthermore, some unconventional methods have proven remarkably effective for large-scale point cloud data. For example, Mahphood26 proposed the Tornado algorithm, which uses a vertically inverted conical filter to eliminate non-ground points by evaluating the distance between each point and the cone's central axis. Subsequent enhancements to this algorithm27 significantly reduced Type I errors, yielding superior filtering performance in complex environments containing buildings, bridges, and dense vegetation.

Point cloud volume calculation is another critical technology for spatial volume estimation. The volume of a regular point cloud can be obtained with conventional geometric formulas, whereas irregular point clouds require various transformation methods. Zhi28 proposed a slice-based point cloud volume calculation method that segments the point cloud data, denoises it, and then derives the volume. This method may cause contour boundary expansion and "morphological distortion" of the contour polygon, potentially introducing errors into the calculated volume. Li29 proposed a point cloud slicing method (PCSl) that slices the cloud continuously at uniform intervals along a given direction, sequentially identifies the contour boundary polygons of the slices based on slicing order and polygon area, and calculates the volume of each slice and of the gap between adjacent slices. Summing these volumes gives the total volume of the scanned object, effectively overcoming the limitations of the point cloud segmentation method. To measure the volume of large, irregular ore bodies, Meng30 investigated an enhanced point cloud slicing method that first segments the point cloud at fixed intervals and then uses Euclidean clustering to segment the slice contours. By adjusting the slice thickness, this method can estimate the volume of concave spaces at different scales with high accuracy.

Building on existing LiDAR detection technology, this paper designs and develops a novel pipeline for detecting frozen coal residue in carriages using a spinning LiDAR. The pipeline comprises three components: carriage point cloud extraction, carriage point cloud preprocessing, and frozen coal volume estimation. The main contributions are:

(a) To address the morphology of the short (end) walls of an open car, a method for identifying the end boundary based on height difference is designed.

(b) To address the characteristics of the dynamic data collected by the spinning LiDAR, a method for removing motion distortion is designed. The row and column of each point within its frame are calculated from the point attributes, and the displacement of each frame is corrected column by column.

(c) To address the non-perpendicularity between the LiDAR scanning plane and the carriage contour, a method for correcting the dynamic point cloud contour using the static point cloud is proposed. Static point cloud data in the same column are selected, the LiDAR deflection angle is calculated by fitting, and the collected carriage data are then rotated and corrected as a whole.

(d) To stitch multi-frame point clouds, a stitching method based on motion displacement fusion compensation is proposed. Assuming the train moves at a uniform speed, the displacement of each single-frame point cloud is calculated, and all frames are transformed into the same frame coordinate system, forming a complete point cloud of the carriage.

(e) For frozen coal volume estimation, a method combining the alpha algorithm and the 360-degree ray-scanning algorithm is proposed. The point cloud contour is determined in two steps: first, the alpha algorithm identifies the inner and outer contour boundaries of the projected slice; then, the 360-degree ray-scanning algorithm sorts the points. Angle and distance thresholds between adjacent points are set to eliminate invalid boundary points, thereby improving the accuracy of the area calculation.

Materials and methods

Hardware system construction

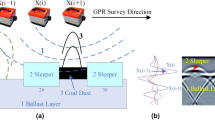

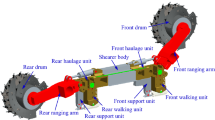

The hardware of the data acquisition system consists primarily of a VLP-16 LiDAR, a computer, a LiDAR bracket, and a data transmission cable. The VLP-16 obtains the three-dimensional coordinates and reflectance intensity of each measurement point by receiving and analyzing reflected laser pulses, which makes it well suited to the complex industrial environments of coal handling and transportation facilities.

In this study, the LiDAR is mounted directly above the carriage, with its rotation axis oriented horizontally along the train's direction of travel. The LiDAR emission array is aligned parallel to the train's movement and perpendicular to the carriage's cross-section. The setup is illustrated in Fig. 1: the Z-axis of the LiDAR coordinate system is aligned with the direction of train movement, the Y-axis points vertically upward, perpendicular to the underside of the train, and the X-axis is orthogonal to the Y–Z plane.

The VLP-16 operates at 10 Hz with a rotational speed of 600 r/min, generating 10 LiDAR scan frames per second. (In this paper, a "frame", or "LiDAR scan frame", refers to the complete set of point cloud data generated during a single rotation of the LiDAR.) At a scanning distance of 5 m, it provides a horizontal resolution of 1.73 cm and a vertical resolution of 17.44 cm, with an accuracy of ±3 cm. This configuration allows the LiDAR to scan the entire train as it enters the loading and unloading station at normal operating speed.

The software runs on two operating systems: Ubuntu 16.04 and Windows 10. The Ubuntu 16.04 system, running ROS, collects real-time LiDAR data and converts it into a format compatible with the PCL library. The Windows system runs Visual Studio 2019 with the PCL 1.10 point cloud processing library.
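The quoted resolutions are consistent with the arc-length relation \(s = r\,\Delta\theta\). The sketch below assumes the VLP-16's nominal datasheet angular steps (about 0.2° in azimuth at 600 r/min and 2° between adjacent channels); these steps are not stated in this paper, and they give figures close to, but not identical with, the 1.73 cm and 17.44 cm quoted above.

```python
import math


def spatial_resolution(range_m: float, step_deg: float) -> float:
    """Arc length (m) between adjacent beams at a given range: s = r * dtheta."""
    return range_m * math.radians(step_deg)


# Assumed nominal VLP-16 angular steps (datasheet values, not taken from the paper).
AZIMUTH_STEP_DEG = 0.2  # horizontal step at 600 r/min (10 Hz)
CHANNEL_STEP_DEG = 2.0  # vertical spacing between adjacent channels

horizontal = spatial_resolution(5.0, AZIMUTH_STEP_DEG)
vertical = spatial_resolution(5.0, CHANNEL_STEP_DEG)
print(f"horizontal: {horizontal * 100:.2f} cm, vertical: {vertical * 100:.2f} cm")
```

This prints about 1.75 cm and 17.45 cm; the small difference from the paper's values presumably reflects the exact angular steps of the unit used.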

Schematic diagram of scanning carriage.

Data processing procedure

The frozen coal residue detection method proposed in this study is centered on three key components: point cloud data extraction of the carriage, preprocessing of the extracted data, and estimation of the frozen coal volume. The overall workflow is illustrated in Fig. 2. In accordance with the scanning characteristics of rotary LiDAR and the specific requirements for detecting frozen coal residues in railway carriages, tailored point cloud processing algorithms have been designed to implement these three functional modules effectively.

Data processing flowchart.

Carriage Point Cloud Data Extraction: Extracts data from each carriage based on the scanned data and assigns numbers to the carriages.

Carriage Point Cloud Data Preprocessing: Includes several steps: point cloud tilt correction to address equipment offset caused by environmental influences on hardware; point cloud slicing to remove redundant point clouds from each scanning cycle; and point cloud denoising and smoothing to eliminate anomalous data and create a three-dimensional smooth grid of the carriage.

Frozen Coal Volume Estimation: Utilizes the three-dimensional smooth grid of the carriage to calculate the volume of residual frozen coal by accumulating the cross-sectional areas.

Detection methods

Carriage point cloud data extraction

Because the LiDAR records continuously and indiscriminately, the scanning data of each open carriage must be extracted from a large volume of continuous data. The process identifies the head and tail of an open carriage within a single rotation (frame) of point cloud data, tracks how these features change across consecutive frames to delimit each carriage, and then extracts the point cloud data of all carriages.

In this study, the LiDAR moves relative to the long side of the wagon, so identifying the wagon's short (end) sides is crucial for determining its head and tail. First, the point cloud data from one complete rotation of the LiDAR are obtained. Since the carriage width is fixed at 3.3 m, a threshold is applied to filter out the two side walls. Then, the height extremes are found by calculating the maximum and minimum Y-coordinates of the remaining points. If the frame contains a carriage end wall, the difference between the maximum and minimum Y-coordinates exceeds a threshold, indicating the presence of the carriage edge.
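A minimal sketch of the end-wall test, assuming each frame is an (N, 3) NumPy array in the LiDAR coordinate system defined above, with the LiDAR centred over the carriage. The function name `is_end_wall_frame` and both thresholds are hypothetical placeholders, not values from the paper; in practice they would be calibrated on site.

```python
import numpy as np


def is_end_wall_frame(frame: np.ndarray, half_width: float = 1.5,
                      height_threshold: float = 1.0) -> bool:
    """Return True if one scan frame (N x 3 array of X, Y, Z) appears to contain an end wall.

    Hypothetical thresholds: points with |X| >= half_width are treated as side walls
    and dropped; the rest span a large Y range only where an end wall rises above the floor.
    """
    inner = frame[np.abs(frame[:, 0]) < half_width]
    if inner.shape[0] == 0:
        return False
    y_range = inner[:, 1].max() - inner[:, 1].min()  # height difference Ymax - Ymin
    return bool(y_range > height_threshold)
```

Running this test frame by frame and recording where the result switches gives candidate head and tail positions for each carriage.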

After the carriage boundary is identified, conditional filtering is applied to extract the target point cloud of the carriage. The valid X range of the target point cloud is determined from the LiDAR's vertical offset from the carriage floor and its lateral distance from the inner walls, and points outside this range are excluded. The filtering criterion is given in Eq. (1).

Here, \(I\) represents the initial point cloud data set, \((X, Y, Z)\) are the three-dimensional spatial coordinates of each point, \(F\) denotes the conditionally filtered point cloud data, and \(\delta_{1}\) and \(\delta_{2}\) are the X-axis threshold bounds of the conditional filter, which are calibrated empirically from field-collected data.
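Eq. (1) is not reproduced in this text; from the definitions above, it appears to keep the points of \(I\) with \(\delta_{1} \le X \le \delta_{2}\). The sketch below assumes exactly that form. In PCL, this corresponds to what `pcl::PassThrough` does when filtering on a single field.

```python
import numpy as np


def conditional_filter(points: np.ndarray, delta1: float, delta2: float) -> np.ndarray:
    """Return F: the points of I (N x 3 array) whose X coordinate lies in [delta1, delta2]."""
    x = points[:, 0]
    return points[(x >= delta1) & (x <= delta2)]
```

Usage: `F = conditional_filter(I, delta1, delta2)`, with the bounds calibrated on site as described above.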

Carriage point cloud pre-processing

Point cloud data correction

(1) Tilt correction based on static point cloud

Two sets of points on the carriage wall are selected, and the deflection angle of the LiDAR about the Z-axis is calculated by straight-line fitting. This angle is substituted into the rotation matrix \(RM\), which is applied to the entire carriage point cloud to obtain the corrected point cloud, as shown in Fig. 3. In three dimensions, the matrix \(RM\) that rotates data points by an angle \(\theta_{z}\) about the Z-axis is given in Eq. (2).
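The standard right-handed rotation about the Z-axis is \(RM = \begin{bmatrix} \cos\theta_{z} & -\sin\theta_{z} & 0 \\ \sin\theta_{z} & \cos\theta_{z} & 0 \\ 0 & 0 & 1 \end{bmatrix}\). The sketch below assumes the deflection angle is measured in the cross-sectional X–Y plane as the tilt of a nominally vertical side wall away from the Y-axis, estimated with a least-squares line fit; the helper names are hypothetical.

```python
import numpy as np


def rotation_about_z(theta: float) -> np.ndarray:
    """Right-handed rotation matrix RM for an angle theta (rad) about the Z-axis."""
    c, s = np.cos(theta), np.sin(theta)
    return np.array([[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]])


def wall_tilt_angle(wall_points: np.ndarray) -> float:
    """Tilt (rad) of a nominally vertical wall, from a least-squares fit X = a*Y + c."""
    a, _c = np.polyfit(wall_points[:, 1], wall_points[:, 0], 1)
    return float(np.arctan(a))


def correct_tilt(points: np.ndarray, wall_points: np.ndarray) -> np.ndarray:
    """Rotate the whole carriage cloud about Z so that the fitted wall becomes vertical."""
    rm = rotation_about_z(wall_tilt_angle(wall_points))
    return points @ rm.T  # applies RM to every row (point)
```

Fitting X as a function of Y, rather than the reverse, keeps the fit well conditioned for a nearly vertical wall.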

(2) LiDAR motion distortion correction

(a) Normal state of point cloud, (b) inclined state of point cloud.

The relative motion between the scanning equipment and the scanned object distorts the point cloud data. This paper employs a uniform-velocity distortion correction method. First, the position parameters of each point are determined from the scanning sequence. Next, the row and column of each point within its frame are calculated from its coordinates. Finally, the displacement compensation is computed and added to the original coordinates to obtain the corrected values, as given in Eq. (3),

where \(Z_{u}\) is the correction quantity, \(V\) denotes the approximate average speed of the train, and \(u\) is the index of the column within the frame in which the point lies.
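Eq. (3) is not reproduced here. One plausible reading, assumed in the sketch below, is that a point in column \(u\) was measured \(u/(fU)\) seconds after the start of its frame, where \(f\) = 10 Hz and \(U\) is the number of columns per rotation, so that \(Z_{u} = Vu/(fU)\). The column is recovered from the azimuth in the X–Y plane (the rotation axis is Z). The azimuth origin, the direction of rotation, and \(U = 1800\) (0.2° steps) are assumptions, as are the function names.

```python
import numpy as np


def column_index(points: np.ndarray, n_cols: int = 1800) -> np.ndarray:
    """Column (azimuth bin) of each point; the rotation axis is Z, so azimuth lies in X-Y.

    Assumes azimuth 0 on the +X axis and counterclockwise rotation (hypothetical).
    """
    azimuth = np.mod(np.arctan2(points[:, 1], points[:, 0]), 2 * np.pi)
    cols = np.floor(azimuth / (2 * np.pi / n_cols)).astype(int)
    return np.minimum(cols, n_cols - 1)


def correct_motion_distortion(points: np.ndarray, speed: float,
                              freq_hz: float = 10.0, n_cols: int = 1800) -> np.ndarray:
    """Add Z_u = V * u / (f * n_cols), the train's advance since the start of the frame."""
    u = column_index(points, n_cols)
    z_u = speed * u / (freq_hz * n_cols)
    corrected = points.astype(float)  # astype returns a copy
    corrected[:, 2] += z_u
    return corrected
```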

Point cloud data stitching

Trains operate at varying speeds, so the overlap between LiDAR scans in consecutive cycles differs. Therefore, after converting the coordinate system of each single-frame point cloud and restoring the scanned carriage points, the frames must be stitched to obtain the complete point cloud of the carriage. This paper proposes a method based on motion displacement fusion compensation, driven by the train's running speed, which assigns a new value to the Z-coordinate of each scanned point. Following the scanning order and the total number of frames, each frame is translated along the train's direction of travel, yielding the complete point cloud in the carriage coordinate system. Assuming the train moves forward at a uniform speed \(V\) and the LiDAR operates at 10 Hz, the train travels \(V/10\) along the Z-axis in 0.1 s, so the Z-values of the starting positions of adjacent frames differ by \(V/10\). Displacement compensation based on this relation is applied to the point cloud data, as shown in Eq. (4),

where \(Z_{ij}^{\prime}\) is the Z-coordinate of the \(j\)-th point in the \(i\)-th frame after displacement compensation, \(Z_{ij}\) is the Z-coordinate of the same point in the original coordinate system of the \(i\)-th frame, \(N\) is the number of recorded frames, and \(i\) is the index of the current frame. When the positive Z half-axis of the LiDAR points in the train's running direction, the compensation takes the "+" sign; otherwise, it takes the "−" sign.

Using this method, we obtain 3D data with the bottom of the carriage as the coordinate origin. The 3D coordinates of the \(j\)-th point in the \(i\)-th frame are \((x_{ij}^{\prime}, y_{ij}^{\prime}, Z_{ij}^{\prime})\), as shown in Eq. (5), where \(h\) is the vertical distance from the LiDAR to the bottom of the carriage.
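Eqs. (4) and (5) are not reproduced here. A form consistent with the description, assumed below, is \(Z_{ij}^{\prime} = Z_{ij} \pm (N - i)V/10\), which moves every frame to the position the carriage occupies in the last frame, together with \(y_{ij}^{\prime} = y_{ij} + h\), which places the carriage floor at \(y^{\prime} = 0\) because Y points up from a LiDAR mounted \(h\) above the floor; \(x\) is assumed unchanged. `stitch_frames` is a hypothetical helper.

```python
import numpy as np


def stitch_frames(frames, speed: float, lidar_height: float,
                  freq_hz: float = 10.0, aligned: bool = True) -> np.ndarray:
    """Merge frames (list of N_i x 3 arrays, in scan order i = 1..N) into one carriage cloud.

    Assumed Eq. (4): Z' = Z +/- (N - i) * V / f ("+" when +Z is the running direction).
    Assumed Eq. (5): y' = y + h, so the carriage floor ends up at y' = 0.
    """
    n = len(frames)
    sign = 1.0 if aligned else -1.0
    stitched = []
    for i, frame in enumerate(frames, start=1):
        shifted = np.asarray(frame, dtype=float).copy()
        shifted[:, 2] += sign * (n - i) * speed / freq_hz  # advance still to come
        shifted[:, 1] += lidar_height                      # floor becomes the origin
        stitched.append(shifted)
    return np.vstack(stitched)
```

With the on-site values from the experiments below, a call would look like `stitch_frames(frames, speed=1.5, lidar_height=5.70)`.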

Point cloud data denoising and smoothing

(1) Point cloud data filtering

In order to remove noise from the carriage point cloud data, this paper combines conditional filtering and statistical filtering to form a comprehensive denoising algorithm. Conditional filtering extracts target area data by setting appropriate threshold ranges, thereby narrowing down the research area and enhancing the efficiency of point cloud processing. Statistical filtering calculates the average Euclidean distance from each sampling point to its neighboring points and sets a threshold to remove outliers. This combined method effectively compensates for the limitations of individual algorithms and maximizes the elimination of noise data.
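The statistical filter can be sketched as follows: compute each point's mean distance to its \(k\) nearest neighbours, then discard points whose mean exceeds the global mean plus a multiple of the standard deviation. This brute-force NumPy version is O(n²) in memory and is meant only for small examples; `k` and `std_ratio` are illustrative values, not the paper's settings. PCL provides the same filter as `pcl::StatisticalOutlierRemoval` (`setMeanK`, `setStddevMulThresh`).

```python
import numpy as np


def statistical_outlier_removal(points: np.ndarray, k: int = 8,
                                std_ratio: float = 1.0) -> np.ndarray:
    """Drop points whose mean distance to their k nearest neighbours is unusually large."""
    diff = points[:, None, :] - points[None, :, :]
    dist = np.sqrt((diff ** 2).sum(axis=-1))
    np.fill_diagonal(dist, np.inf)  # ignore each point's distance to itself
    mean_knn = np.sort(dist, axis=1)[:, :k].mean(axis=1)
    threshold = mean_knn.mean() + std_ratio * mean_knn.std()
    return points[mean_knn <= threshold]
```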

(2) Point cloud data downsampling

Point cloud downsampling reduces the number of points in a point cloud dataset and can also help eliminate noise; the voxel grid method31 is typically used. In this method, the overall extent of the voxel grid is enlarged by an adaptive distance \(d\) so that anomalous points do not fall on the grid boundaries. The corrected extents are given in Eq. (6).

\(X_{max}, Y_{max}, Z_{max}\) are the maximum point coordinates, and \(X_{min}, Y_{min}, Z_{min}\) are the corresponding minima. \(l_{x}, l_{y}, l_{z}\) denote the differences between the maximum and minimum coordinates along the X, Y, and Z axes, respectively, and \(d\) is the adaptive distance.

After this correction, the side length of the cubic grid cells must be determined. An appropriately sized cell improves search efficiency while minimizing the loss of key point cloud information. Let \(N\) be the total number of points and \(b\) the side length of a grid cell. Dividing \(l_{x}, l_{y}, l_{z}\) by \(b\) yields \(u\), \(v\), and \(w\) segments, respectively, so the total number of cells is \(u \times v \times w\). With \(G\) denoting the expected average number of points per cell, these quantities are given by Eqs. (7)–(9).

Here, \(\lfloor \cdot \rfloor\) denotes the floor function, i.e., the largest integer not greater than its argument.

The cells are then numbered to locate each point. For any point \(P_{i}\) with coordinates \((X_{i}, Y_{i}, Z_{i})\), the cell index \((s, t, r)\) is given by Eq. (10).

Finally, the centroid of each cell is calculated, and the distance from each point in the cell to the centroid is evaluated. If a point lies at the centroid, it replaces all other points in the cell; otherwise, the point closest to the centroid is retained and the remaining points are removed. This completes the simplification and downsampling of the point cloud.
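The steps above can be sketched in NumPy as follows. Because Eqs. (6), (7), and (10) are not reproduced here, the sketch assumes that the bounds are padded by \(d\) on each side, that the cell size is \(b = \sqrt[3]{G\,l_{x} l_{y} l_{z}/N}\) when no fixed size is given (so that a cell holds about \(G\) points on average), and that the cell index is \(\lfloor (P - P_{min})/b \rfloor\) per axis. Unlike `pcl::VoxelGrid`, which outputs the centroid of each cell, this version keeps the original point nearest to the centroid, as the text describes.

```python
from typing import Optional

import numpy as np


def voxel_downsample(points: np.ndarray, b: Optional[float] = None,
                     G: float = 10.0, d: float = 1e-3) -> np.ndarray:
    """Keep, for every occupied cubic cell, the point closest to the cell's centroid."""
    mins = points.min(axis=0) - d            # assumed Eq. (6): pad the bounds by d
    maxs = points.max(axis=0) + d
    lengths = maxs - mins                    # l_x, l_y, l_z
    if b is None:                            # assumed Eq. (7): about G points per cell
        b = float(np.cbrt(G * np.prod(lengths) / len(points)))
    idx = np.floor((points - mins) / b).astype(np.int64)  # (s, t, r), assumed Eq. (10)
    _, inverse = np.unique(idx, axis=0, return_inverse=True)
    inverse = inverse.ravel()                # 1-D across NumPy versions
    keep = []
    for cell in range(inverse.max() + 1):
        members = np.flatnonzero(inverse == cell)
        centroid = points[members].mean(axis=0)
        dists = np.linalg.norm(points[members] - centroid, axis=1)
        keep.append(members[np.argmin(dists)])  # the point nearest the centroid
    return points[np.sort(keep)]
```

Passing a fixed `b` (for example 0.03, 0.05, or 0.1 m) reproduces the kind of grid-size comparison reported in the experiments.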

(3) Point cloud data smoothing

After filtering and stitching, ghosting artifacts may appear on the side walls of the carriage, which significantly affect the volume calculation. This noise must therefore be removed to obtain a smooth point cloud surface. We use the moving least squares (MLS) method32, which improves the fit by adjusting the area over which the weight function acts.
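As an illustration only, the sketch below implements a first-order (planar) variant of MLS: each point is projected onto a plane fitted to its neighbours with Gaussian weights, and `radius` plays the role of the weight's influence area. The paper does not state the polynomial order or weight function it uses; PCL's `pcl::MovingLeastSquares` (for example `setSearchRadius` and `setPolynomialOrder`) supports higher-order fits.

```python
import numpy as np


def mls_smooth(points: np.ndarray, radius: float = 0.06) -> np.ndarray:
    """Project each point onto a Gaussian-weighted local plane (first-order MLS sketch)."""
    smoothed = points.astype(float)  # astype returns a copy
    for i, p in enumerate(points):
        d2 = np.sum((points - p) ** 2, axis=1)
        mask = d2 < radius ** 2
        if mask.sum() < 3:                   # too few neighbours to fit a plane
            continue
        nbrs = points[mask]
        w = np.exp(-d2[mask] / radius ** 2)  # weights shrink with distance
        centre = (w[:, None] * nbrs).sum(axis=0) / w.sum()
        q = nbrs - centre
        cov = (w[:, None] * q).T @ q         # weighted 3 x 3 covariance
        _, vecs = np.linalg.eigh(cov)
        normal = vecs[:, 0]                  # smallest eigenvalue -> plane normal
        smoothed[i] = p - np.dot(p - centre, normal) * normal
    return smoothed
```

A larger `radius` averages over more neighbours and suppresses ghosting more strongly, at the cost of rounding off sharp edges such as the wall-floor junction.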

Point cloud slice area and volume calculation

Point cloud slicing

Projecting the points that lie between two adjacent cutting planes onto a plane yields the outline of that point cloud segment. This paper takes the train's direction of travel as the slicing direction and segments the carriage point cloud accordingly, as shown in Eq. (11).

Here, \(i\) is the slice index, \(Z_{i}\) is the position of the cutting plane, \(l\) is the slice interval, \(Z_{max}\) and \(Z_{min}\) are the maximum and minimum Z-coordinates of the point cloud, \(m\) is the number of cutting planes, and ⌈·⌉ is the ceiling (round-up) function.
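Eq. (11) is not reproduced here. Assuming the cutting planes are \(Z_{i} = Z_{min} + i\,l\) with \(m = \lceil (Z_{max} - Z_{min})/l \rceil\), slicing and projection can be sketched as below. Each slice keeps the points with \(Z_{i} \le Z < Z_{i+1}\) (the last slice also keeps \(Z_{max}\)) and projects them onto the X–Y plane by dropping Z; the function name is hypothetical.

```python
import math

import numpy as np


def slice_point_cloud(points: np.ndarray, interval: float) -> list:
    """Split a cloud into slices of thickness `interval` along Z and project each onto X-Y."""
    z = points[:, 2]
    z_min, z_max = float(z.min()), float(z.max())
    m = max(1, math.ceil((z_max - z_min) / interval))  # number of slices
    slices = []
    for i in range(m):
        lower = z_min + i * interval                    # cutting plane Z_i
        upper = z_min + (i + 1) * interval
        in_slice = (z >= lower) & ((z < upper) | (i == m - 1))  # last slice keeps z_max
        slices.append(points[in_slice][:, :2])
    return slices
```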

Boundary outline definition

The alpha-shape (alpha) algorithm33 and the 360-degree ray-scanning algorithm are two traditional methods for contour definition. The alpha algorithm is versatile and handles irregular point cloud shapes effectively; however, it often extracts both the inner and outer boundaries, producing double contours. In contrast, the 360-degree ray-scanning algorithm orders the points by their calculated angles and connects them sequentially to form the contours of irregular sections, but its accuracy decreases for high-density point clouds.

This paper addresses these issues by combining both methods. First, the alpha algorithm performs an initial identification of the projected point cloud, determining the inner and outer contour boundaries and eliminating the points between them. The 360-degree ray-scanning algorithm then scans and sorts these double contours. To resolve the double-boundary issue, a threshold test is introduced: the points are sorted by scanning angle, and the angle difference and distance between adjacent points are evaluated. If the angle difference is below a predefined threshold while the distance exceeds a set minimum, the two points lie at approximately the same angle but on different boundaries. Their inner/outer relationship is determined from their distances to the centroid: the inner point, closer to the centroid, is kept as the boundary point, and the outer point is discarded.

Figure 4 illustrates an ideal cross-section of the carriage point cloud. The shaded area represents the projection of the coal residue. Point \(O\) is the centroid of the cross-section point cloud, and point \(P_{0}\) is the starting point of the 360-degree scan. \(OP_{0}\) serves as the reference line, and scanning proceeds counterclockwise. Point \(A\) lies on the coal surface and forms an angle α with \(OP_{0}\), while point \(B\) is a data point on the carriage floor that forms an angle β with \(OP_{0}\). After the points are sorted by angle, \(A\) and \(B\) are adjacent. However, they lie on different contour boundaries, and only point \(A\) belongs to the required contour.

Schematic diagram of coal profile determination.

An angle threshold γ is set to identify candidate inner and outer boundary points: if the angle difference between two adjacent points after sorting is less than γ, they are treated as potential inner and outer boundary candidates. To distinguish them, a distance threshold \(l\) is introduced. If \(|AB| < l\), points \(A\) and \(B\) lie on the same boundary; otherwise, one lies on the inner boundary and the other on the outer boundary. In the latter case, the distances from the centroid \(O\) to \(A\) and \(B\) are compared, and the point closer to the centroid is retained to define the inner contour of the frozen coal surface.
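The sorting and the inner/outer test can be sketched as below for a projected slice given as an (n, 2) array, with `gamma` standing for γ and `dist_threshold` for the distance threshold \(l\). Assumptions not stated in the paper: the reference ray \(OP_{0}\) points along +X, the centroid is the mean of the slice points, only adjacent pairs are compared, and the wrap-around pair (last, first) is ignored. The alpha-shape step that precedes it is not shown.

```python
import numpy as np


def ray_scan_contour(points_2d: np.ndarray, gamma: float,
                     dist_threshold: float) -> np.ndarray:
    """Sort slice points by angle around the centroid and drop outer-boundary duplicates."""
    centroid = points_2d.mean(axis=0)                      # O
    rel = points_2d - centroid
    angles = np.mod(np.arctan2(rel[:, 1], rel[:, 0]), 2 * np.pi)
    order = np.argsort(angles, kind="stable")              # counterclockwise from +X
    pts, ang = points_2d[order], angles[order]
    radius = np.linalg.norm(pts - centroid, axis=1)
    keep = np.ones(len(pts), dtype=bool)
    for a in range(len(pts) - 1):
        b = a + 1
        same_ray = ang[b] - ang[a] < gamma
        far_apart = np.linalg.norm(pts[b] - pts[a]) >= dist_threshold
        if same_ray and far_apart:  # one inner and one outer boundary point
            keep[a if radius[a] > radius[b] else b] = False  # discard the outer one
    return pts[keep]
```

The returned points are already in angular order, so they can be passed directly to a polygon-area routine.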

Cross-sectional area calculation

After the contour boundary of a slice is determined, its area is calculated using the shoelace formula34. As illustrated in Fig. 5, the outer frame represents the cross-sectional contour. The area \(S_{i}\) \((i = 1, 2, \ldots, n)\) of each small triangle formed by two adjacent boundary points and the centroid is calculated in turn, where \(n\) is the number of contour vertices, and these triangle areas are summed to obtain the total contour area, as shown in Eq. (12).

Schematic diagram of irregular polygon area calculation.

Here, \(s\) is the area of the slice section, \(n\) is the number of vertices of the section, and \((x_{i}, y_{i})\) are the coordinates of the \(i\)-th vertex, with \((x_{n+1}, y_{n+1}) = (x_{1}, y_{1})\); that is, the \((n+1)\)-th point coincides with the first point, closing the contour.
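Eq. (12) presumably has the standard shoelace form \(s = \frac{1}{2}\left|\sum_{i=1}^{n} (x_{i} y_{i+1} - x_{i+1} y_{i})\right|\). Each term is twice the signed area of the triangle formed by the origin and two adjacent vertices; using any other fixed apex, such as the centroid, gives the same total. A NumPy sketch:

```python
import numpy as np


def shoelace_area(contour: np.ndarray) -> float:
    """Area of a simple polygon whose vertices (n x 2) are given in boundary order."""
    x, y = contour[:, 0], contour[:, 1]
    x_next, y_next = np.roll(x, -1), np.roll(y, -1)  # closes the loop: vertex n+1 = vertex 1
    return 0.5 * abs(float(np.sum(x * y_next - x_next * y)))
```

The absolute value makes the result independent of whether the vertices run clockwise or counterclockwise.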

Point cloud volume calculation

After the area of each cross-section is calculated, it is multiplied by the slice interval, and the products are accumulated to obtain the point cloud volume. Because this study targets the volume of the residual coal, a volume conversion is required. The cross-sectional area \(S\) of the empty carriage is determined from on-site measurements and relevant technical data. The residual coal volume is then calculated by subtracting each slice's cross-sectional area in the carriage containing coal from that of the empty carriage, multiplying the difference by the slice interval, and accumulating the results. The coal volume \(V_{coal}\) is given by Eq. (13):

Here, \(V_{coal}\) is the estimated volume of residual frozen coal, and \(A_{j}\) is the contour cross-sectional area of the \(j\)-th slice.
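Assuming Eq. (13) has the form \(V_{coal} = \sum_{j=1}^{m} (S - A_{j})\,l\), with \(l\) the slice interval, the accumulation reduces to a few lines; the function name is hypothetical.

```python
from typing import Sequence


def residual_coal_volume(empty_area: float, slice_areas: Sequence[float],
                         interval: float) -> float:
    """Sum (S - A_j) * l over all slices (assumed form of Eq. (13))."""
    return sum((empty_area - a) * interval for a in slice_areas)
```

For example, with illustrative numbers, `residual_coal_volume(5.0, [4.5, 4.0, 5.0], 0.1)` returns about 0.15 (m³ if the areas are in m² and the interval in m).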

Experiments and discussion

To validate the feasibility of the proposed method, an experiment was conducted at the Datun Coal Preparation Plant in Peixian County, Jiangsu Province. The plant uses the C70 general-purpose open car, which has a body length of 13,000 mm ± 10 mm. The data acquisition hardware comprised a LiDAR, a computer, a LiDAR bracket, and a data transmission cable. The LiDAR was fixed directly above the carriage at a height of 5.70 m from the carriage bottom, with its scanning plane oriented as nearly perpendicular as possible to the direction of motion. The LiDAR continuously acquired point cloud data of the carriage during operation, as shown in Fig. 6.

Arrangement of on-site data acquisition equipment.

During data collection, the train moved slowly, at approximately 1.5 m/s, so adjacent frames were only a short distance apart and their scanning ranges overlapped substantially. Consequently, up to 1600 frames of point cloud data were collected per carriage, totaling millions of points and creating a substantial processing load. Given the train's slow speed and the LiDAR's scanning range of −15° to 15° (2.9 m), the carriage advanced only 0.15 m between adjacent frames. To reduce the computational load, the data, acquired at 10 Hz, were sampled every 10 frames. This preserved an accurate overall point cloud while reducing the data volume.

The collected point cloud data were extracted, preprocessed, and visualized using a grid size of 0.03 m. Different colors were assigned to point cloud data at various heights for better visualization, as shown in Fig. 7a, b. By comparing the processed 3D model of the carriage and frozen coal residue with actual photos, it was confirmed that the algorithm effectively identified the residual frozen coal at the carriage bottom and the junction between the side wall and bottom.

(a) Carriage frozen coal residue site image, (b) Visualization of the 3D model of the carriage and frozen coal residue.

To further validate the accuracy of the proposed method in estimating the volume of residual frozen coal, and to analyze how the processing parameters affect the results, a controlled experiment was conducted. A known number of bags, each with a volume of 0.085 m³, was placed in empty carriages along the direction of train travel. The bags were distributed as follows: 5 bags in 20 cars, 10 bags in 18 cars, 15 bags in 19 cars, 20 bags in 18 cars, and 25 bags in 17 cars (92 cars in total). After the LiDAR scan, the volume of the residual cargo was estimated. The arrangement of the bags and the volume estimation results are illustrated in Fig. 8.

Schematic diagram of scanning carriage.

(1) Analysis of the impact of voxel grid size

In the voxel grid method, the grid (voxel) size determines how many points fall within each voxel and are therefore merged. This paper determines the optimal grid size by evaluating how well different sizes preserve the features of the point cloud. The comparison is illustrated in Fig. 9.

Sampling effect diagram of different grid sizes.

The downsampling results show that the larger the grid size, the more points are removed. To quantify this effect, this study computes the average Euclidean distance between each point of the downsampled cloud and its nearest neighbors using the k-nearest neighbors (k-NN) algorithm, as shown in Fig. 10.
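The voxel grid filter and the k-NN spacing metric can be sketched in a few lines of NumPy. This is an illustrative re-implementation, not the authors' code, and it rests on three assumptions. It replaces each occupied voxel by the centroid of its points (a common convention). It uses a brute-force nearest-neighbor search that only suits small clouds. It runs on a synthetic flat patch rather than the measured carriage data. The names `voxel_downsample` and `mean_knn_spacing` are hypothetical.

```python
import numpy as np

def voxel_downsample(points: np.ndarray, voxel: float) -> np.ndarray:
    """Replace the points falling in each cubic voxel of side `voxel` by their centroid."""
    keys = np.floor(points / voxel).astype(np.int64)            # integer voxel index of each point
    _, inverse = np.unique(keys, axis=0, return_inverse=True)    # voxel id of each point
    inverse = inverse.reshape(-1)                                # flatten; shape varies across NumPy versions
    counts = np.bincount(inverse)
    sums = np.zeros((counts.size, points.shape[1]))
    np.add.at(sums, inverse, points)                             # accumulate coordinates per voxel
    return sums / counts[:, None]

def mean_knn_spacing(points: np.ndarray, k: int = 1) -> float:
    """Mean Euclidean distance from each point to its k nearest neighbors (brute force)."""
    sq = (points ** 2).sum(axis=1)
    d2 = sq[:, None] + sq[None, :] - 2.0 * points @ points.T     # squared pairwise distances
    np.fill_diagonal(d2, np.inf)                                 # exclude each point itself
    dist = np.sqrt(np.maximum(d2, 0.0))                          # clip small negative round-off
    nearest = np.sort(dist, axis=1)[:, :k]
    return float(nearest.mean())

# Synthetic, slightly rough flat patch (1 m x 1 m), not the measured carriage data.
rng = np.random.default_rng(0)
xy = rng.uniform(0.0, 1.0, size=(20000, 2))
z = 0.01 + 0.005 * rng.uniform(size=20000)
cloud = np.column_stack([xy, z])
for size in (0.03, 0.05, 0.1):
    down = voxel_downsample(cloud, size)
    print(f"voxel {size} m: {len(down)} points, mean NN spacing {mean_knn_spacing(down):.4f} m")
```

On this synthetic patch the point count falls and the mean spacing grows as the voxel size increases, which is the same qualitative trend reported for Fig. 10.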

Relationship between grid size and average spacing of point cloud.

As the figure shows, a grid side length of 0.03 m yields the smallest average point spacing, 2.3 cm. At this size the number of points and the point density are the highest of the sizes tested, and the spacing between points fluctuates least, giving a stable overall appearance. Increasing the side length to 0.05 m removes more than half of the points and increases the average spacing by approximately 53.6%. At a side length of 0.1 m the number of points drops to 24,521: the cloud becomes sparse, the spacing varies considerably, and key information is lost. Based on this analysis, a grid size of 0.05 m was selected for volume calculation as a balance between data quantity and point density.

(2) Slice spacing impact analysis

The 92 data sets were divided into five groups by load: 5, 10, 15, 20, and 25 bags. For each group, the point cloud volume was calculated at several slice spacings and the results were averaged. Two evaluation metrics were used. The absolute error is the absolute difference between the measured value and the true value. The relative error is the ratio of the absolute error to the true value. Selected results are presented in Table 1.
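For reference, the ground-truth volume of each load group follows from the bag count and the 0.085 m³ bag volume, and the two metrics can be written as below. The Python sketch uses the group sizes reported for the bag experiment. The estimate passed to `volume_errors` (a hypothetical helper) is an invented number for illustration, not a measured result.

```python
BAG_VOLUME_M3 = 0.085                                      # volume of one bag (from the text)
CARS_PER_GROUP = {5: 20, 10: 18, 15: 19, 20: 18, 25: 17}   # bags per car -> number of cars

def volume_errors(estimated_m3: float, n_bags: int) -> tuple[float, float]:
    """Return (absolute error in m^3, relative error in %) against the bag ground truth."""
    true_m3 = n_bags * BAG_VOLUME_M3
    abs_err = abs(estimated_m3 - true_m3)
    return abs_err, 100.0 * abs_err / true_m3

print(sum(CARS_PER_GROUP.values()))                        # 92 cars in total
for n_bags in CARS_PER_GROUP:
    print(n_bags, "bags ->", round(n_bags * BAG_VOLUME_M3, 3), "m^3")
# Hypothetical estimate of 0.90 m^3 for a 10-bag car (illustration only):
print(volume_errors(0.90, 10))                             # about 0.05 m^3 and 5.9 %
```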

According to the on-site measurement data, the accuracy of the carriage point cloud volume calculation decreases as the slice spacing increases (Fig. 11a, b). A smaller slice spacing gives a smaller absolute error and therefore higher accuracy, but at the cost of a longer program run time (Fig. 11c). The errors are consistently positive, meaning the volume is overestimated. This happens mainly because the larger slice area is used as the base, which overcompensates for sections smaller than that maximum area, and the effect grows as the slice spacing increases.
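The positive bias can be reproduced with a toy model. Suppose, as our reading rather than something confirmed by the text, that each slab between consecutive slices is assigned the larger of its two bounding cross-sectional areas. The estimate is then an upper Riemann sum: it can never fall below the true volume, and its excess grows with the slab width. The Python sketch uses a hypothetical smooth area profile whose exact volume is 0.5 m³. The functions `area` and `volume_max_base` are illustrative names.

```python
import math

def area(x: float) -> float:
    """Hypothetical cross-section area (m^2) at position x in [0, 2] m; exact volume is 0.5 m^3."""
    return 0.5 * math.sin(math.pi * x / 2.0) ** 2

def volume_max_base(spacing: float, length: float = 2.0) -> float:
    """Sum over slabs of (slab width) x (larger of the two bounding slice areas)."""
    n = round(length / spacing)                      # number of slabs
    xs = [i * length / n for i in range(n + 1)]      # slice positions
    return sum(max(area(a), area(b)) * (b - a) for a, b in zip(xs, xs[1:]))

for d in (0.02, 0.05, 0.1, 0.2):
    print(d, round(volume_max_base(d) - 0.5, 4))     # signed error: d / 2 for this profile
```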

The impact of slice spacing on detection errors under different loads.

Comparing the relative errors across loading volumes shows that the relative error decreases somewhat as the detected volume increases, whereas the absolute error increases with the loading volume. This is attributed to the LiDAR's inherent measurement error. Because this error does not grow in proportion to the detected volume, it accounts for a progressively smaller fraction of larger volumes, so the relative error decreases.
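This argument can be made explicit with a simple error model, assumed here for illustration rather than fitted to the measurements. Let the absolute error consist of a fixed component \(e_{0} > 0\), due to the LiDAR's measurement error, plus a component \(kV\) proportional to the true volume \(V\):

\[
E_{abs}(V) = e_{0} + kV, \qquad
E_{rel}(V) = \frac{E_{abs}(V)}{V} = \frac{e_{0}}{V} + k, \qquad
\frac{dE_{rel}}{dV} = -\frac{e_{0}}{V^{2}} < 0 .
\]

Under this model the absolute error grows with \(V\) while the relative error falls toward \(k\), consistent with the trend described above.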

(3) Comparison of volume estimation methods

After extracting the carriage, stitching the point cloud data, and applying voxel grid filtering to obtain the gridded point cloud of the vehicle, we processed the 92 data sets with a slice spacing of 0.05 m. Three algorithms were compared: the 360-degree ray scanning algorithm, the alpha algorithm, and the proposed fusion algorithm. The comparison is shown in Table 2.

For all three algorithms the errors are greater than 0, meaning the volume is overestimated. This is mainly due to the limitations of LiDAR detection: points outside the detection contour cannot be captured, so the captured points lie on the inner side of concave surfaces. As shown in Table 2 and Fig. 12, with a slice thickness of 0.05 m the proposed fusion algorithm yields the smallest absolute error, and the alpha algorithm yields a smaller absolute error than the 360-degree scanning algorithm. The absolute errors of all three algorithms increase with the detected volume. The increase is rapid for the 360-degree scanning algorithm and more gradual for the alpha algorithm and the fusion algorithm.

(a) Comparison of absolute errors in three different volume estimation methods, (b) Comparison of relative errors in three different volume estimation methods.

In terms of relative error, the fusion algorithm is consistently more accurate than the other two algorithms, and its relative error decreases as the volume increases. The relative error of the 360-degree scanning algorithm fluctuates considerably around an average of about 10%, while that of the alpha algorithm remains relatively stable at approximately 8%.

In preprocessing, a 0.05 m voxel grid still leaves a relatively dense point cloud. This degrades slice contour extraction by the 360-degree scanning algorithm and lowers its volume estimation accuracy. The alpha algorithm extracts both inner and outer boundaries when determining contours. For residual coal in a carriage, the extracted contour therefore includes both the floor points and the surface of the scanned object, and this double boundary reduces detection accuracy. The proposed fusion algorithm first uses the alpha algorithm as a preliminary screen, which simplifies the point cloud and eliminates non-boundary points. It then applies the 360-degree scanning algorithm to order the contour points, which improves detection results.
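The contour-ordering stage can be illustrated with a minimal sketch of one plausible reading of the 360-degree ray scan, which proceeds in three steps. Rays are cast from the slice centroid at 1° intervals. The farthest point in each angular bin is kept. The kept points are ordered by angle, and the shoelace formula then gives the enclosed area. The sketch has several limitations. The alpha-shape pre-screening is omitted, the slice is synthetic, and `ray_scan_contour` is a hypothetical name, so this is not the authors' implementation. It is also only valid for slices that are star-shaped as seen from the centroid.

```python
import numpy as np

def ray_scan_contour(points: np.ndarray, n_rays: int = 360) -> np.ndarray:
    """Keep the farthest point in each angular bin around the centroid, ordered by angle."""
    center = points.mean(axis=0)
    rel = points - center
    angles = np.arctan2(rel[:, 1], rel[:, 0])                      # polar angle in (-pi, pi]
    radii = np.hypot(rel[:, 0], rel[:, 1])                         # distance from the centroid
    bins = ((angles + np.pi) / (2 * np.pi) * n_rays).astype(int) % n_rays
    contour = []
    for b in range(n_rays):                                        # bins are visited in angular order
        idx = np.flatnonzero(bins == b)
        if idx.size:                                               # skip rays that hit no points
            contour.append(points[idx[np.argmax(radii[idx])]])
    return np.array(contour)

def shoelace_area(contour: np.ndarray) -> float:
    """Area of a simple polygon whose vertices are given in boundary order."""
    x, y = contour[:, 0], contour[:, 1]
    return 0.5 * abs(np.dot(x, np.roll(y, -1)) - np.dot(y, np.roll(x, -1)))

# Synthetic slice: boundary of a 2 m x 1 m rectangle plus scattered interior points.
s = np.linspace(0.0, 1.0, 500, endpoint=False)
boundary = np.vstack([
    np.column_stack([2 * s, np.zeros_like(s)]),                    # bottom edge
    np.column_stack([np.full_like(s, 2.0), s]),                    # right edge
    np.column_stack([2 - 2 * s, np.ones_like(s)]),                 # top edge
    np.column_stack([np.zeros_like(s), 1 - s]),                    # left edge
])
rng = np.random.default_rng(1)
interior = rng.uniform([0.05, 0.05], [1.95, 0.95], size=(300, 2))
slice_pts = np.vstack([boundary, interior])
contour = ray_scan_contour(slice_pts)
print(len(contour), round(shoelace_area(contour), 3))             # area close to 2.0 m^2
```

The interior points never survive the farthest-point rule here. Without ordering, the shoelace formula would be meaningless, which is why a sorting step of this kind is needed after any boundary screening.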

Conclusion

To address the challenge of measuring the volume of frozen coal residue in open wagons during winter, and to support timely detection and effective removal planning, this paper introduces a novel method for detecting the volume of frozen coal residue in open wagons using a spinning LiDAR. The method first extracts the wagon's point cloud by identifying its boundary. It then obtains the contour point cloud through correction, stitching, and voxel grid filtering. Finally, it estimates the volume of the residue with a fusion of the alpha and 360-degree scanning algorithms.

First, the relationship between voxel grid size and sampling quality was established experimentally. As the voxel size increases, the number of points decreases rapidly, the average spacing between points increases linearly, and the variance of the spacing grows exponentially. Larger voxels discard features of the detection target, so although less data must be processed downstream, accuracy drops significantly. A voxel size of 0.05 m was therefore selected, which substantially reduces the number of points while preserving the characteristics of the detection target and maintaining detection accuracy.

Second, the relationship between slice spacing and estimation accuracy in the proposed fusion volume estimation method was analyzed experimentally. For a given detection volume, accuracy decreases as the slice spacing increases. For a given slice spacing, the absolute error increases with the detection volume, whereas the relative error decreases. This is because the LiDAR measurement error is approximately constant, so as the detection volume grows this error becomes a smaller fraction of the volume and the relative error falls. To balance timeliness and accuracy, a slice spacing of 0.05 m was chosen.

Finally, the detection accuracy of the proposed fusion algorithm was compared experimentally with that of the other two algorithms at a slice thickness of 0.05 m. The relative error of the fusion algorithm is smaller than that of the other two algorithms and decreases with increasing volume. The relative error of the 360-degree scanning algorithm fluctuates considerably around 10%, while that of the alpha algorithm fluctuates slightly around 8%. These results show that the proposed algorithm is more accurate and more stable than the other two. The 360-degree scanning algorithm is limited by its sensitivity to dense point clouds, and the alpha algorithm by the double boundaries it extracts.

The proposed method for detecting winter frozen coal residue achieves high accuracy. It is also applicable to detecting residual cargo in other open vehicles, including those used in road and water transport. The detection hardware can further be mounted on mobile equipment to survey concave surfaces such as ground and slopes, or to measure cave volumes, indicating broad application potential. However, the multi-frame point cloud stitching method based on displacement fusion compensation proposed in this paper is designed for trains traveling at a constant speed. When the speed varies, the spatiotemporal relationships among the collected frames become uncertain, and the stitching method will need to be improved. Furthermore, using bags to simulate residual frozen coal does not capture the complex surface characteristics of actual frozen coal residue. The experimental materials used in this study demonstrate the feasibility of the proposed algorithmic framework. Future work should collect authentic coal residue samples at unloading sites to build a representative dataset, and should use error analysis on that dataset to optimize both the point cloud segmentation and the volume estimation algorithms.

Data availability

The datasets used and/or analyzed during the current study are available from the corresponding author on reasonable request.

References

Spencer, B. F., Hoskere, V. & Narazaki, Y. Advances in computer vision-based civil infrastructure inspection and monitoring. Engineering 5, 199–222. https://doi.org/10.1016/j.eng.2018.11.030 (2019).

Wu, Z., Huang, Y., Huang, K., Yan, K. & Chen, H. A review of non-contact water level measurement based on computer vision and radar technology. Water. https://doi.org/10.3390/w15183233 (2023).

Xie, P. & Zhou, J. Rectangle positioning algorithm simulation based on edge detection and hough transform. Open Mech. Eng. J. 8, 58–62. https://doi.org/10.2174/1874155X01408010058 (2014).

Mrovlje, J. & Vrančić, D. Automatic detection of the truck position using stereoscopy. In 2012 IEEE International Conference on Industrial Technology. https://doi.org/10.1109/ICIT.2012.6210029 (2012).

Li, S., Jiang, X., Qian, H. & Xu, Y. Vehicle 3-dimension measurement by monocular camera based on license plate. In 2016 IEEE International Conference on Robotics and Biomimetics (ROBIO). https://doi.org/10.1109/ROBIO.2016.7866421 (2016).

Li, N. et al. A progress review on solid-state LiDAR and nanophotonics-based LiDAR sensors. Laser Photonics Rev. 16, 2100511. https://doi.org/10.1002/lpor.202100511 (2022).

Wu, Y. et al. Deep 3D object detection networks using LiDAR data: A review. IEEE Sens. J. 21, 1152–1171 (2020).

Luo, R. et al. 3D deformation monitoring method for temporary structures based on multi-thread LiDAR point cloud. Measurement 200, 111545 (2022).

Yao, S. et al. Radar-camera fusion for object detection and semantic segmentation in autonomous driving: A comprehensive review. IEEE Trans. Intell. Veh. 9, 2094–2128. https://doi.org/10.1109/TIV.2023.3307157 (2023).

Rufei, L., Jiben, Y., Hongwei, R., Bori, C. & Chenhao, C. Research on a pavement pothole extraction method based on vehicle-borne continuous laser scanning point cloud. Meas. Sci. Technol. 33, 115204. https://doi.org/10.1088/1361-6501/ac875c (2022).

Ma, X., Yue, D., Li, S., Cai, D. & Zhang, Y. Road potholes detection from MLS point clouds. Meas. Sci. Technol. 34, 095017. https://doi.org/10.1088/1361-6501/acdb8d (2023).

Zhao, M. et al. Quantitative detection technology for geometric deformation of pipelines based on LiDAR. Sensors (Basel) 23, 9761. https://doi.org/10.3390/s23249761 (2023).

Ding, W., Zhang, K. & Shao, C. A fast volume measurement method for obtaining point cloud data from bulk stockpiles. Meas. Sci. Technol. 34, 105204. https://doi.org/10.1088/1361-6501/acdc43 (2023).

Zhang, Z. A new measurement method of three-dimensional laser scanning for the volume of railway tank car (container). Measurement 170, 108454. https://doi.org/10.1016/j.measurement.2020.108454 (2021).

Qing, S. et al. Point cloud simplification algorithm based on particle swarm optimization for online measurement of stored bulk grain. Int. J. Agric. Biol. Eng. 9, 71–78. https://doi.org/10.3965/j.ijabe.20160901.1805 (2016).

Kang, C. et al. A triangular grid filter method based on the slope filter. Remote Sens. 15, 2930. https://doi.org/10.3390/rs15112930 (2023).

Zhang, J. & Lin, X. Filtering airborne LiDAR data by embedding smoothness-constrained segmentation in progressive TIN densification. ISPRS J. Photogramm. Remote. Sens. 81, 44–59. https://doi.org/10.1016/j.isprsjprs.2013.04.001 (2013).

Han, X.-F. et al. A review of algorithms for filtering the 3D point cloud. Signal Process. Image Commun. 57, 103–112. https://doi.org/10.1016/j.image.2017.05.009 (2017).

Zhang, K. et al. A progressive morphological filter for removing nonground measurements from airborne LIDAR data. IEEE Trans. Geosci. Remote Sens. 41(4), 872–882. https://doi.org/10.1109/TGRS.2003.810682 (2003).

Zhang, W. et al. An easy-to-use airborne LiDAR data filtering method based on cloth simulation. Remote Sens. 8(6), 501. https://doi.org/10.3390/rs8060501 (2016).

Vosselman, G. Slope based filtering of laser altimetry data. Int. Arch. Photogramm. Remote Sens. 33(B3/2; PART 3), 935–942 (2000).

Susaki, J. Adaptive slope filtering of airborne LiDAR data in urban areas for digital terrain model (DTM) generation. Remote Sens. 4(6), 1804–1819. https://doi.org/10.3390/rs4061804 (2012).

Lindenberger, J. Laser-Profilmessungen zur topographischen Geländeaufnahme, Zugl.: Stuttgart University, Dissertation, 1992 (1993).

Keqi, Z. et al. A progressive morphological filter for removing nonground measurements from airborne LIDAR data. IEEE Trans. Geosci. Remote Sens. 41, 872–882. https://doi.org/10.1109/tgrs.2003.810682 (2003).

Husain, A. & Vaishya, R. C. Road surface and its center line and boundary lines detection using terrestrial Lidar data. Egypt. J. Remote Sens. Space Sci. 21, 363–374. https://doi.org/10.1016/j.ejrs.2017.12.005 (2018).

Mahphood, A. & Arefi, H. Tornado method for ground point filtering from LiDAR point clouds. Adv. Space Res. 66(7), 1571–1592 (2020).

Mahphood, A. & Arefi, H. Improved Tornado method for ground point filtering from LIDAR point clouds. ISPRS Ann. Photogramm. Remote Sens. Spatial Inf. Sci. 10, 429–436 (2023).

Zhi, Y., Zhang, Y., Chen, H., Yang, K. & Xia, H. A method of 3D point cloud volume calculation based on slice method. In 2016 International Conference on Intelligent Control and Computer Application (ICCA 2016), 155–158 (Atlantis Press).

Li, B., Wei, J., Wang, L., Ma, B. & Xu, M. A comparative analysis of two point cloud volume calculation methods. Int. J. Remote Sens. 40, 3227–3246. https://doi.org/10.1080/01431161.2018.1541111 (2018).

Meng, X. et al. Enhanced point cloud slicing method for volume calculation of large irregular bodies: Validation in open-pit mining. Remote Sens. 15, 5006. https://doi.org/10.3390/rs15205006 (2023).

Carvalho, L. E. & von Wangenheim, A. 3D object recognition and classification: a systematic literature review. Pattern Anal. Appl. 22, 1243–1292. https://doi.org/10.1007/s10044-019-00804-4 (2019).

Shi, B., Yang, M., Liu, J., Han, B. & Zhao, K. Rail transit shield tunnel deformation detection method based on cloth simulation filtering with point cloud cylindrical projection. Tunn. Undergr. Space Technol. 135, 105031. https://doi.org/10.1016/j.tust.2023.105031 (2023).

Pei, H., Zhang, P., Du, S. & Luo, M. A point cloud preprocessing method for complicated thin-walled ring parts with irregular section. Measurement 214, 112807. https://doi.org/10.1016/j.measurement.2023.112807 (2023).

Lee, Y. & Lim, W. Shoelace formula: Connecting the area of a polygon and the vector cross product. Math. Teach. 110, 631–636. https://doi.org/10.5951/mathteacher.110.8.0631 (2017).

Author information

Contributions

Xiang-lun Mo: Writing—review and editing, draft, methodology, conceptualization, validation, supervision, resources. Xiang-geng Wu: Writing—original, investigation, validation, software. Zhou Wei: Validation, supervision, resources. Yang Liu: Investigation, visualization, validation. Shu-qi Dong: Investigation, data curation. Chen Zhao: Investigation, data curation. All authors reviewed the manuscript.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Mo, Xl., Wu, Xg., Zhou, W. et al. Estimate the volume of residual frozen coal in railway carriage using a rotating LiDAR. Sci Rep 15, 36745 (2025). https://doi.org/10.1038/s41598-025-20690-7

DOI: https://doi.org/10.1038/s41598-025-20690-7