Abstract

The rapid proliferation of electric vehicles (EVs) and their spatially clustered charging behaviors have imposed unprecedented challenges on the stability, efficiency, and fairness of power distribution networks. Coordinating large-scale EV clusters across geographically distributed charging stations requires intelligent scheduling strategies that can simultaneously respect grid constraints, maximize user satisfaction, and enhance renewable energy utilization—all while safeguarding data privacy and computational scalability. This paper proposes a novel multi-agent cooperative dispatch framework based on Federated Deep Reinforcement Learning (FDRL) to optimize the real-time coordination between EVs, chargers, and the underlying power grid infrastructure. The model adopts a hierarchical structure where local agents independently train deep reinforcement learning policies tailored to site-specific dynamics, while a central aggregator synchronizes global model parameters using federated averaging enhanced by entropy-based reward normalization and fairness-aware weighting. The optimization problem is formulated as a multi-objective constrained Markov decision process (CMDP), featuring long-horizon coupling, grid-aware feasibility, and user-centric reward shaping. Our formulation explicitly integrates peak transformer loading limits, charging demand satisfaction, temporal renewable absorption, and inter-agent equity, thereby capturing the full complexity of EV–grid interactions. A realistic case study involving 1,200 EVs, 60 chargers, and a 33-bus feeder system over 24 hours shows that the proposed FDRL framework achieves a 13.6% reduction in grid operating cost, a 21.4% increase in renewable absorption, and fairness with Jain’s index consistently above 0.95, while reducing average state-of-charge (SoC) deviation to below 2.5%. These quantitative results highlight the effectiveness of the framework and confirm its promise as a privacy-preserving, scalable, and equitable solution for next-generation energy–cyber–physical systems.

Similar content being viewed by others

Introduction

The accelerating adoption of electric vehicles (EVs) is reshaping the operational landscape of modern power systems. As mobile loads with bidirectional energy interfaces, EVs are increasingly integrated into the fabric of distributed energy resource (DER) networks, acting not only as consumers but also as flexible energy storage assets1,2. In urban environments with high penetration of EVs, this interaction is particularly complex: vehicles arrive and depart asynchronously, user preferences vary widely, and local grid constraints evolve dynamically3,4. These factors have motivated a growing body of research focused on coordinated EV charging and discharging strategies that can simultaneously meet user needs, alleviate grid stress, and enhance renewable integration. Initial efforts in EV scheduling primarily adopted centralized optimization techniques, such as mixed-integer programming or model predictive control, which can effectively incorporate power flow constraints, transformer ratings, and temporal price signals5,6,7. However, these centralized methods suffer from poor scalability and require complete observability of system states, rendering them impractical for large-scale deployments. Moreover, the centralized collection of real-time vehicle data–including location, state-of-charge, and user intent–raises significant privacy concerns and results in heavy communication burdens8,9. In response, decentralized and distributed control schemes have gained traction, leveraging game-theoretic models and distributed convex optimization frameworks to coordinate EV behaviors across networks. While these approaches offer some degree of scalability and autonomy, they often rely on idealized assumptions, such as synchronous agent behavior, convexity of objectives, or complete knowledge of peer states1,10.

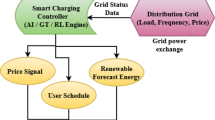

Parallel to developments in optimization, reinforcement learning (RL) has emerged as a powerful alternative for EV control, offering a model-free framework capable of handling system stochasticity and complex, non-convex reward landscapes. Model-free techniques such as Deep Q Networks (DQN), Actor-Critic methods, and Proximal Policy Optimization (PPO) have been applied to energy scheduling scenarios with uncertain arrivals, intermittent renewables, and variable pricing11,12. Yet many of these applications remain limited to single-agent settings or assume centralized training. Extending RL to multi-agent environments introduces coordination challenges, especially under privacy constraints and heterogeneous agent preferences. Multi-agent reinforcement learning (MARL) models attempt to address this by enabling cooperation or competition among agents, but they often struggle with scalability, convergence, and non-stationarity when deployed in real-world settings involving hundreds or thousands of EVs13,14. This motivates the need for scalable, privacy-preserving, and operationally feasible coordination frameworks that can handle heterogeneous EV fleets under realistic grid constraints. To address privacy concerns and reduce communication overhead in such multi-agent systems, the federated learning (FL) paradigm has gained attention. Originally introduced in the context of mobile devices and personalized services, FL enables decentralized agents to collaboratively train a shared model by exchanging local gradients rather than raw data. In energy systems, federated approaches have been applied to building energy forecasting, distributed anomaly detection, and privacy-preserving demand response15,16. However, their application to dynamic EV coordination remains limited. Some recent work has incorporated federated supervised learning to predict EV charging demand or user intent, but few have addressed the integration of federated reinforcement learning (FRL) with real-time scheduling, especially under complex physical constraints such as voltage limits, energy balance, and storage dynamics17,18,19. Recent years have witnessed rapid progress in applying advanced learning and optimization techniques to energy systems, particularly in electric vehicle (EV) coordination and smart grid operations. Reinforcement Learning (RL) has been widely employed to optimize sequential decision-making under uncertainty, enabling adaptive scheduling strategies that respond to variable demand and renewable fluctuations. Early RL-based approaches focused on centralized training for EV charging and energy dispatch, but these methods face challenges of scalability, privacy, and vulnerability to communication bottlenecks in large distributed networks. Graph Neural Networks (GNNs) have recently emerged as powerful tools for representing grid topologies and capturing spatial dependencies among buses, chargers, and distributed resources. By embedding structural information into the learning process, GNNs improve generalization across heterogeneous environments and enhance the robustness of policy decisions. In energy applications, GNN-based architectures have been shown to capture non-IID correlations in load and generation, making them particularly suitable for federated or decentralized reinforcement learning settings. In parallel, Finite Difference (FD) methods and related numerical approaches have long been applied to approximate gradients, solve partial differential equations in dynamic energy models, and assess sensitivity of system responses to operational changes. FD-based techniques provide interpretability and analytical grounding, complementing data-driven learning approaches by offering stability guarantees and convergence analysis. Other associated methodologies–such as multi-agent learning, robust optimization, and blockchain-enabled coordination frameworks–have also contributed to advancing distributed control in smart grids. Multi-agent reinforcement learning has addressed cooperative scheduling across diverse stakeholders, robust optimization has been employed to hedge against worst-case uncertainties, and blockchain frameworks have been proposed to ensure transparency, trust, and decentralization in EV-grid transactions. Taken together, these strands of research establish a rich methodological foundation for our study. By integrating RL, GNNs, and federated PPO under a unified framework, our work extends prior literature to jointly address scalability, privacy preservation, fairness, and operational feasibility in EV-grid coordination.Overall, these methodologies highlight the progress in RL, GNNs, FD methods, and decentralized coordination, but their integration into EV scheduling remains fragmented. Accordingly, we expanded the Introduction and Related Work sections to provide explicit contrasts with recent studies on federated learning for EV coordination, MARL-based multi-energy management, and privacy-preserving demand response. For example, we now emphasize that existing federated approaches focus mainly on supervised learning tasks such as forecasting, while our work applies reinforcement learning to dynamic decision making under physical grid constraints. Likewise, while prior MARL approaches have examined distributed energy hubs or microgrids, they rarely incorporate federated aggregation or fairness-aware objectives, which are central to our design. Through this expanded narrative, we clarify that our study extends the literature by integrating privacy preservation, asynchronous participation, and fairness shaping into a single operationally feasible framework. This paper proposes a new solution to these challenges by introducing a federated Proximal Policy Optimization (PPO) framework for large-scale EV–charger–grid coordination. The architecture models the full system as a constrained multi-agent Markov game, where each EV, charging station, and local grid controller acts as an autonomous agent with partial observability. Each agent learns its own policy based on local trajectory rollouts, updated through PPO in a decentralized manner. To preserve data privacy and reduce communication, we adopt a federated learning strategy wherein agents periodically synchronize with a global policy aggregator using FedAvg. Unlike most existing work, our framework accounts for heterogeneity in agent behavior, supports asynchronous updates, and explicitly incorporates communication constraints and privacy budgets into the learning protocol. The underlying mathematical formulation reflects real-world complexity. It includes discrete-time state-of-charge dynamics, charging station capacity constraints, nodal voltage limits, transformer ratings, and time-coupled flexibility windows. Moreover, the reward structure goes beyond energy cost minimization: it integrates user satisfaction, voltage risk sensitivity, environmental impact, and penalizes unmet energy targets through soft constraints. By embedding these objectives directly into the RL formulation, we bridge operational feasibility with behavioral modeling–an aspect often neglected in prior studies. Additionally, the use of Graph Neural Networks (GNNs) as encoders within each policy model enables agents to capture topological information and spatial correlations, enhancing coordination quality and generalization across network nodes.

Despite these advances, several research gaps remain. First, existing federated approaches in energy systems mainly focus on supervised learning tasks such as forecasting, without addressing real-time reinforcement-based coordination under grid constraints. Second, most MARL models for EVs assume synchronous behavior and overlook privacy or fairness concerns in heterogeneous fleets. Third, while GNNs capture spatial correlations, they are seldom integrated with federated RL protocols to enable topology-aware, privacy-preserving decision making. Finally, computational complexity and communication constraints have been insufficiently addressed, leaving open questions about scalability to thousands of EVs.Although several recent studies have explored components of this architecture in isolation–such as MARL for EV fleets, FL for energy forecasting, or GNNs for distribution grid learning–there remains a lack of unified approaches that integrate these methods into a scalable, privacy-preserving, and operationally meaningful solution. Few existing models accommodate asynchronous communication, dynamic participation, and the full spectrum of grid and behavioral constraints simultaneously. Furthermore, most prior work treats the EV fleet as either a static demand block or a passive participant, failing to model its interaction with local infrastructure and market-level dynamics in a unified framework.Related advances in security and decentralized coordination also highlight the importance of integrating privacy and robustness into EV scheduling. For example, Said et al.20 combined quantum entropy with reinforcement learning for cyber-attack detection in smart grids, while21 explored intelligent forecasting methods for photovoltaic integration in sustainable micro-grids. In addition, decentralized trading frameworks leveraging blockchain and machine learning have been developed for EVs22, and further cybersecurity strategies have been proposed for converged blockchain–IoT–ML micro-grid environments23. Complementary work has also introduced false data injection detection protocols for peer-to-peer EV energy transactions24. These studies collectively reinforce the novelty of our federated PPO approach, which unifies privacy preservation, fairness, and grid-aware optimization in one operational framework. By contrast, our work proposes a generalizable and extensible coordination paradigm that allows dynamic EV agents to actively interact with spatially distributed grid entities, contributing to both system-level objectives and individual flexibility preferences. The methodology developed in this study provides a principled solution to the pressing challenge of cooperative EV scheduling under large-scale, uncertain, and data-sensitive environments. The integration of federated learning with multi-agent reinforcement learning under complex operational constraints represents a significant advancement over existing literature. In particular, this paper introduces four major contributions: it develops a scalable federated PPO-based algorithm for EV coordination without centralizing data; it formulates a rigorous multi-objective, constraint-rich model that incorporates both physical and behavioral dynamics; it integrates GNN encoders to embed spatial structure into the policy; and it introduces a unified training pipeline with privacy-aware penalties and asynchronous update logic for realistic deployment. These contributions position the proposed framework as a robust foundation for intelligent energy mobility systems at scale. Compared to prior studies, our framework provides several distinct advances. The work in15 investigated a Byzantine-resilient federated learning strategy for EV charging but was restricted to supervised learning formulations, without addressing dynamic coordination or reinforcement-based decision making under physical grid constraints. The approach in17 developed a multi-agent deep reinforcement learning model for coordinating multi-energy hubs, yet it did not incorporate federated mechanisms to preserve data privacy nor explicitly capture fairness and heterogeneity in geographically distributed EV networks. In contrast, our framework integrates federated learning, Proximal Policy Optimization (PPO), and Graph Neural Networks (GNNs) into a unified architecture that simultaneously ensures scalability, privacy preservation, and grid-aware fairness. This integration enables the model to handle asynchronous updates, heterogeneous agent behaviors, and complex operational constraints, representing a significant step beyond the current state of the art.

Motivation The accelerating adoption of EVs is fundamentally transforming distribution networks, creating both opportunities and risks. On the one hand, EVs serve as mobile energy storage units that can enhance renewable absorption and provide grid flexibility; on the other hand, their highly clustered charging behaviors, stochastic arrival patterns, and diverse user preferences have introduced severe operational challenges. Conventional centralized scheduling approaches struggle with the dual requirements of scalability and data privacy, as real-time EV coordination involves large volumes of sensitive user information across geographically dispersed charging stations. At the same time, system operators are increasingly pressured to ensure not only grid stability and economic efficiency but also fairness in the allocation of charging resources. These drivers make it imperative to design new coordination mechanisms that can simultaneously safeguard privacy, scale to thousands of agents, and balance operational objectives with equity considerations.

Research Gaps While the literature on EV coordination is extensive, existing methods remain fragmented in their ability to address these multi-dimensional requirements. Centralized optimization frameworks achieve global feasibility but incur prohibitive computational and communication costs when scaled to city-level deployments. Distributed or game-theoretic methods improve scalability but rely on restrictive assumptions about agent rationality or information availability. More recently, reinforcement learning has been explored for adaptive EV scheduling under uncertainty, yet standalone RL approaches often suffer from unstable convergence, lack of equity guarantees, and the need for large amounts of centralized data. Federated learning has emerged as a promising paradigm for privacy-preserving model training, but its use in dynamic, constraint-rich EV dispatch remains underexplored. In particular, no prior work has fully integrated federated reinforcement learning with grid-aware CMDP formulations and fairness-oriented objectives in large-scale EV–grid interaction settings.

This work makes four contributions. (i) Federated, decentralized deep reinforcement learning for EV–grid coordination: we design a privacy-preserving, asynchronous, and scalable framework based on proximal policy optimization, where local agents independently train policies tailored to local demand and grid conditions, and a central server aggregates model updates through fairness-aware federated averaging with entropy normalization. This structure enables coordination of EVs, chargers, and distribution-grid constraints without centralizing raw user data. (ii) Constraint-rich constrained Markov decision process formulation: we formulate the coordination task as a CMDP that explicitly integrates transformer loading limits, bus-voltage bounds, station capacity limits, renewable variability, availability windows, user satisfaction, and fairness shaping. These constraints are embedded directly into the reward and policy update process, ensuring that the learned policies remain both grid-feasible and equitable. (iii) Topology-aware policies with graph neural networks: we introduce graph neural encoders into the policy network so that spatial correlations across buses and chargers are captured, improving learning stability, handling heterogeneous non-IID data distributions, and enhancing generalization across feeder topologies. (iv) System-level validation and quantitative evidence: we conduct large-scale simulations on a testbed of 1,200 EVs, 60 charging stations, and a 33/123-bus feeder system. Results demonstrate a 13.6% reduction in grid operating cost, a 21.4% increase in renewable absorption, fairness with Jain’s index above 0.95, and average state-of-charge deviations below 2.5%, while convergence is consistently faster than a centralized deep reinforcement learning baseline.

Paper organization Section introduces the Mathematical Modeling and Methodology, formalizing the CMDP and constraints. Section presents the federated PPO architecture, GNN encoders, reward design, and privacy regularization. Section summarizes computational complexity and scalability. Section reports case studies, ablations, and sensitivity analyses. Section concludes and outlines future work.

Mathematical modeling and methodology

Table 1 summarizes the notation used in the mathematical modeling, covering EV state-of-charge dynamics, charging/discharging variables, grid constraints, user preference parameters, and learning-related variables. This table provides readers with a concise reference to interpret the subsequent equations and improves the transparency of the mathematical formulation.

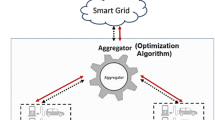

Figure 1 illustrates the federated reinforcement learning-based coordination architecture linking EVs, chargers, local controllers, the aggregator, and the grid with graph-based policy intelligence.

Federated reinforcement learning architecture for coordinated EV–charger–grid scheduling.

This expression defines a comprehensive welfare-based objective function for federated electric vehicle (EV) coordination. The first term represents user-centric utility derived from battery state-of-charge (SoC), incorporating logarithmic concavity to model diminishing returns and weighted by user urgency factors \(\varphi _{\upsilon ,\tau }\). The second term penalizes grid-side stress by quantifying the deviation between net power consumption \(\vartheta _{\nu ,\tau }^{\textrm{net}}\) and locally generated photovoltaic power \(\vartheta _{\nu ,\tau }^{\textrm{PV}}\), scaled by grid impact coefficients \(\xi _{\nu ,\tau }^{\textrm{grid}}\). The third term accounts for infrastructure utilization efficiency by modeling idle charger penalties \(\chi _{\sigma ,\tau }^{\textrm{unused}}\) and round-trip energy losses due to converter inefficiency \(\rho _{\sigma ,\tau }^{\textrm{eff}}\). The final term incorporates environmental externalities through a normalized emission-driven sinusoidal function, modulated by carbon intensity \(\kappa _{\mu ,\tau }^{\textrm{CO}_2}\) and environmental valuation weights \(\theta _{\mu ,\tau }^{\textrm{env}}\). The vector of priority coefficients \(\varvec{\varpi }_m\) enables weighted trade-offs across these four operational objectives.

Specifically, we now describe Equation 1 as a multi-objective welfare function that balances user-oriented benefits, grid security, infrastructure efficiency, and environmental performance. The first term represents user-centric utility derived from state-of-charge improvements. By using a logarithmic functional form, it captures the diminishing marginal benefit of charging and incorporates urgency weights so that vehicles with tighter departure requirements receive higher priority. The second term quantifies grid stress by penalizing deviations between local net consumption and PV generation. This ensures that charging is scheduled to better align with renewable availability, thereby reducing transformer overload and avoiding unnecessary imports from the upstream grid. The third term reflects infrastructure utilization efficiency by penalizing idle chargers and accounting for round-trip energy losses due to converter inefficiency. This incentivizes higher station throughput and efficient energy conversion, which directly supports cost-effectiveness in station operation. The fourth term, which was previously insufficiently explained, is now explicitly defined as the environmental externality component. It uses a bounded sinusoidal function to map carbon intensity signals into the optimization and incorporates an environmental valuation weight. In practice, this term ensures that charging schedules internalize the carbon footprint of electricity consumption, thereby promoting cleaner charging behavior and supporting low-carbon system objectives.

This policy-level objective function formalizes a multi-criteria decision process in the federated dispatching framework. The function \(\psi _{\upsilon ,\tau }^{\textrm{sat}}(\pi _{\upsilon })\) quantifies user satisfaction under the local policy \(\pi _{\upsilon }\), while \(\omega _{\nu ,\tau }^{\textrm{volt}}(\pi _{\nu })\) captures voltage regulation quality and compliance with grid standards. The term \(\zeta _{\sigma ,\tau }^{\textrm{ren}}(\pi _{\sigma })\) reflects renewable energy absorption effectiveness, promoting low-carbon scheduling. The communication and privacy costs of participating in the federated optimization are represented by \(\theta _{\ell ,\tau }^{\textrm{comm}}(\pi _{\ell })\), weighted by \(\lambda _m\). The final term models the cooperative flexibility utilization, combining user flexibility availability \(\vartheta _{\upsilon ,\tau }^{\textrm{flex}}\) and the cooperation index \(\rho _{\upsilon ,\tau }^{\textrm{coop}}\), scaled against the allowable trust budget \(\rho _{\upsilon ,\tau }^{\max }\). Scalar parameters \(\alpha _m\), \(\beta _m\), \(\gamma _m\), \(\lambda _m\), and \(\eta _m\) offer a tunable interface for context-aware prioritization among policy outcomes.

This constraint governs the state-of-charge (SoC) evolution of each electric vehicle \(\upsilon \in \mathscr {V}_m\) across time steps \(\tau\), incorporating charging input \(\theta _{\upsilon ,\tau }^{\textrm{in}}\), discharging output \(\theta _{\upsilon ,\tau }^{\textrm{out}}\), and any regenerative inflow \(\rho _{\upsilon ,\tau }^{\textrm{rec}}\), such as energy recovered during deceleration. The efficiency factors \(\eta _{\upsilon }^{\textrm{chg}}\) and \(\eta _{\upsilon }^{\textrm{dis}}\) reflect inverter and battery conversion losses. The SoC update respects a physical upper bound \(\varsigma _{\upsilon }^{\max }\), and the entire evolution is scaled by the discrete time interval \(\Delta \tau\). This constraint ensures feasible battery dynamics throughout the optimization horizon.

This pair of inequalities enforces power-level constraints for EV \(\upsilon\), ensuring that both the charging input \(\theta _{\upsilon ,\tau }^{\textrm{in}}\) and discharging output \(\theta _{\upsilon ,\tau }^{\textrm{out}}\) remain within hardware-imposed rated limits \(\theta _{\upsilon }^{\textrm{rated}}\). Additionally, they ensure energy feasibility: the vehicle cannot be charged beyond capacity or discharged below zero. Both expressions incorporate efficiency-adjusted battery bounds, scaled by the control time-step \(\Delta \tau\). This constraint guarantees the physical operability of each EV battery.

This inequality restricts the total real power exchanged through charging station \(s\) at time \(\tau\), aggregating across all connected EVs \(\upsilon \in \mathscr {V}_s\). The summation includes both charging and discharging actions. The station capacity \(\Theta _{s}^{\textrm{cap}}\) accounts for shared infrastructure limitations, such as line thermal limits or power electronics throughput. This constraint prevents local congestion and overloads at charging depots.

This aggregate constraint limits the net load imposed by each agent \(m\) on the local transformer, where the term \(\vartheta _{n,\tau }^{\textrm{load}} - \vartheta _{n,\tau }^{\textrm{PV}}\) captures non-renewable power drawn from the grid at node \(n\). The right-hand side \(\Xi _m^{\textrm{tr}}\) denotes the transformer’s rated capacity. This condition ensures that federated coordination respects substation-level thermal and voltage security thresholds.

This voltage constraint enforces operational voltage bounds at each bus \(n\), where \(\nu _{n,\tau }^{\textrm{bus}}\) is the voltage magnitude at time \(\tau\), and \([\underline{\nu }_{n}, \overline{\nu }_{n}]\) represents the permissible voltage interval, typically within \(\pm 5\%\) of the nominal value. This maintains system stability and compliance with distribution system standards under real-time charging conditions.

Charging and discharging operations are only permissible within the declared availability window of each electric vehicle \(\upsilon\). The time interval from \(\tau _{\upsilon }^{\textrm{arr}}\) to \(\tau _{\upsilon }^{\textrm{dep}}\) defines the feasible scheduling domain during which the vehicle remains physically connected to the charging infrastructure. Outside this period, both input \(\theta _{\upsilon ,\tau }^{\textrm{in}}\) and output \(\theta _{\upsilon ,\tau }^{\textrm{out}}\) power are enforced to be zero, reflecting operational constraints dictated by user trip patterns.

Photovoltaic (PV) generation at node \(n\) and time \(\tau\), denoted \(\vartheta _{n,\tau }^{\textrm{PV}}\), is upper bounded by the product of local irradiation level \(\Gamma _{n,\tau }^{\textrm{irr}}\) and installed PV capacity \(\chi _n^{\textrm{PV}}\). This constraint incorporates the temporal variability of solar resources and physical device limits into the system-wide scheduling model. Realistic curtailment decisions and flexibility services are thereby made possible while adhering to renewable generation capabilities.

Energy balance is enforced across each federated agent’s domain by equating total net demand to total local discharge supply. The left-hand summation captures net consumption at all grid nodes, while the right-hand term aggregates power contributions from EV discharging and subtracts charging load. This equality ensures that real power schedules across EVs and distributed nodes are physically consistent at every time step.

Flexibility envelopes for each EV agent are defined by time-varying lower and upper bounds, denoted \(\underline{\xi }_{\upsilon ,\tau }^{\textrm{flex}}\) and \(\overline{\xi }_{\upsilon ,\tau }^{\textrm{flex}}\), which capture real-time constraints on available participation in charging or discharging actions. These values may arise from internal user preferences, contractual constraints, or dynamic load participation agreements, and they enable selective integration of EV flexibility into system operations while preserving user autonomy.

User satisfaction is quantified through a hybrid function \(U_{\upsilon ,\tau }^{\textrm{user}}\) that considers both energy completion utility and sensitivity to voltage variation. The first term uses a normalized logarithmic function to capture satisfaction from SoC improvement beyond a user-defined base threshold \(\varsigma _{\upsilon }^{\textrm{base}}\). The second term models voltage risk aversion: users are increasingly dissatisfied as local voltage \(\nu _{\upsilon ,\tau }^{\textrm{bus}}\) deviates from nominal \(\nu _0\), modulated by volatility aversion \(\lambda _{\upsilon }^{\textrm{vol}}\) and sensitivity coefficient \(\omega _{\upsilon }^{\textrm{risk}}\). The scalar \(\theta _{\upsilon ,\tau }^{\textrm{pref}}\) encodes individual preference weightings at time \(\tau\), yielding a personalized and interpretable utility layer.

Penalization of unmet charging requirements is introduced through the term \(\mathscr {P}_{\upsilon ,\tau }^{\textrm{penalty}}\), which includes a linear term for undercharging and a quadratic departure-time penalty. The linear component captures mild dissatisfaction when SoC falls short of the target \(\varsigma _{\upsilon }^{\textrm{target}}\), while the squared loss imposes a stronger disincentive precisely at the departure time \(\tau _{\upsilon }^{\textrm{dep}}\), enforced via indicator \(\mathbb {1}_{\{\tau = \tau _{\upsilon }^{\textrm{dep}}\}}\). Parameters \(\beta _{\upsilon ,\tau }^{\textrm{miss}}\) and \(\gamma _{\upsilon ,\tau }^{\textrm{late}}\) define penalty severity profiles per user and time step, supporting flexible incentive-compatible scheduling.

Cluster homogeneity is enforced by requiring that all EVs \(\upsilon\) within cluster \(\mathscr {C}_g\) adopt a shared scheduling policy \(\pi _g\). This constraint reduces coordination complexity and supports group-level training and aggregation in federated learning. Clustering can be determined based on vehicle type, geographic proximity, charging behavior, or user type, and is critical for scaling the solution framework to large fleet populations.

The privacy-constrained learning budget is captured by limiting the aggregated squared gradient norms of each local policy loss \(\mathscr {L}_{\upsilon }\) over EVs in agent domain \(m\). The term \(\Lambda _m^{\textrm{priv}}\) denotes the maximum tolerable privacy leakage threshold under a federated learning model. By bounding the sensitivity of updates, this constraint ensures that individual vehicle data remains shielded during decentralized policy refinement, enabling compliance with differential privacy or information-theoretic privacy frameworks.

Method

To operationalize the proposed federated multi-agent coordination framework for large-scale electric vehicle (EV) charging networks, this section introduces the detailed methodological foundation underlying the mathematical formulation and algorithmic implementation. We begin by constructing a constrained multi-objective optimization model that reflects the coupled interactions among EV agents, charging infrastructure, and the distribution grid, while accounting for real-time energy availability, transformer capacity, and spatiotemporal mobility of EVs. The formulation integrates key physical constraints of the power network with service-level requirements such as state-of-charge (SoC) fulfillment and charging fairness. Building upon this model, we introduce a federated deep reinforcement learning (FDRL) architecture wherein local agents asynchronously learn site-specific policies based on partial observations and non-IID data, and a central aggregator coordinates global convergence via entropy-weighted federated averaging. The method further incorporates fairness shaping, reward normalization, and feasibility-aware action filtering to ensure that learned policies remain both operationally viable and socially equitable. The following subsections detail the mathematical modeling of system dynamics, objective design, constraint embedding, and the algorithmic implementation of the federated actor–critic training process.

The decentralized energy management problem is formulated as a Markov game \(\mathscr {G}\), where each agent \(m \in \mathscr {M}\) independently interacts with its own state space \(\mathscr {S}_m\) and action space \(\mathscr {A}_m\). The joint environment transition kernel \(\mathscr {P}\) governs stochastic evolution across agents, while \(\mathscr {R}\) encodes the reward structure. The scalar \(\gamma \in (0,1)\) represents the temporal discount factor, and \(\mu _0\) denotes the initial joint state distribution. This formulation enables scalable and distributed learning under asynchronous local observability.

Each agent \(m\) employs a policy \(\pi _{\theta _m}\) parameterized by a deep neural network with weights \(\theta _m\), mapping local states \(s_{m,\tau }\) to a distribution over admissible actions \(a_{m,\tau }\). The function \(f_{\theta _m}\) denotes the output logits of the policy network. A softmax transformation ensures the resulting policy is a valid probability distribution. This construction allows for differentiable and probabilistic action sampling, facilitating efficient policy gradient updates during training.

The scalar reward \(\mathscr {R}_{m,\tau }\) received by agent \(m\) at time \(\tau\) aggregates four components: user satisfaction \(U_{\upsilon ,\tau }^{\textrm{user}}\), penalty for unmet demand \(\mathscr {P}_{\upsilon ,\tau }^{\textrm{penalty}}\), voltage deviation squared norm, and renewable integration score \(\zeta _{m,\tau }^{\textrm{ren}}\). The weights \(\varpi _m^{(i)}\) are consistent with the system-wide objective and allow reinforcement signals to reflect the same operational priorities encoded in the mathematical model. This reward formulation facilitates convergence toward socially optimal policies.

The expected return \(\hat{\mathscr {J}}_m(\theta _m)\) quantifies the total discounted reward obtained by agent \(m\) over horizon \(T\), under current policy \(\pi _{\theta _m}\). This is the primary objective optimized by PPO and forms the basis of gradient-based updates. The expectation is taken over trajectories \(\tau\) sampled from the policy, reflecting the stochastic nature of both the environment and the action distribution.

The advantage estimator \(\hat{A}_{m,\tau }\) captures the temporal utility gain of taking action \(a_{m,\tau }\) at state \(s_{m,\tau }\), relative to the critic network value \(V_{\psi _m}(s_{m,\tau })\). The critic is parameterized by weights \(\psi _m\) and trained alongside the actor. This formulation stabilizes policy updates by reducing variance in gradient estimates and aligns with Generalized Advantage Estimation (GAE) in PPO frameworks.

The clipped surrogate objective gradient \(\nabla _{\theta _m} \hat{\mathscr {J}}^{\textrm{PPO}}_m(\theta _m)\) stabilizes policy updates by limiting large deviations in probability ratios between current and previous policies. The ratio is clipped between \([1-\epsilon ,\; 1+\epsilon ]\) to enforce trust-region behavior. This formulation enables efficient and safe improvement steps in actor training, a core innovation of the PPO algorithm.

The critic loss function \(\mathscr {L}^{\textrm{critic}}_m(\psi _m)\) computes the mean squared error between predicted value estimates \(V_{\psi _m}(s_{m,\tau })\) and empirical returns \(\hat{R}_{m,\tau }\). Accurate value estimation is critical for reducing variance in policy gradients and improving sample efficiency in actor–critic learning.

The actor loss \(\mathscr {L}^{\textrm{actor}}_m(\theta _m)\) includes both the negative clipped PPO objective and an entropy regularization term. The entropy \(\mathscr {H}(\cdot )\) encourages exploration by preventing premature convergence to deterministic policies. The coefficient \(\varsigma _m^{\textrm{ent}}\) modulates the balance between exploitation and exploration, facilitating robust learning under non-stationary environments.

Each agent encodes its local observation graph \(\mathscr {N}(s_{m,\tau })\) into a latent representation \(\textbf{z}_{m,\tau }\) using a graph neural network \(\textrm{GNN}_{\xi _m}\) with parameters \(\xi _m\). The graph \(\textbf{A}_{m}\) denotes the adjacency matrix defining spatial and topological relations among local entities (e.g., buses, chargers). This structure-aware encoder allows the agent to capture spatiotemporal correlations during decision making.

The global policy update in the federated PPO framework is computed via a weighted aggregation of local policies \(\theta _m^{(k)}\), where \(\omega _m^{(k)}\) represents participation or data-size-based weights. This update mechanism corresponds to Federated Averaging (FedAvg), and enables privacy-preserving global convergence across decentralized agents without exchanging raw data.

Each agent performs asynchronous synchronization with the global server based on its own sync period \(\tau _m^{\textrm{sync}}\). The policy \(\theta _m^{(k)}\) is updated only when the local iteration \(k\) aligns with its synchronization schedule. This approach allows heterogeneity in device capabilities and communication conditions while maintaining theoretical convergence guarantees.

A privacy penalty term \(\mathscr {L}_m^{\textrm{priv}}\) is added to the actor loss, enforcing a constraint on the gradient norm. The scalar \(\lambda _m^{\textrm{priv}}\) regulates the tradeoff between performance and privacy, ensuring that updates do not leak excessive information about local training data. This term aligns with differential privacy mechanisms in federated learning.

The total loss function for each agent combines the actor loss, critic loss, and privacy-regularized penalty. Joint optimization of all components ensures that each agent learns robust local policies while maintaining privacy constraints and contributing meaningfully to the global coordination objective.

Computational complexity and scalability

Per-agent learning cost. Let B be the on-policy batch size (trajectories per sync), E the PPO epochs per sync, T the rollout length (steps per trajectory), \(|\theta _m|\) the number of trainable parameters of the local actor–critic (including the GNN encoder), |A| the action-space size, and \(G_m\) the number of graph edges processed by agent m’s GNN. One forward–backward pass on a batch costs \(\mathscr {O}(B T \cdot |\theta _m|)\) for dense layers plus \(\mathscr {O}(B T \cdot G_m)\) for message passing. With E epochs, the per-sync compute is

The critic update and advantage estimation add the same order, so the constant factor roughly doubles but asymptotics remain unchanged.

Server aggregation and communication. With participation rate \(p\in (0,1]\) and M agents, about pM agents upload/download parameters each sync. Per round, each participating agent communicates \(\mathscr {O}(|\theta |)\) floats for upload and the same for download; total network cost is

The FedAvg aggregation on the server is linear in model size and number of participants:

Differential-privacy L2 clipping adds only \(\mathscr {O}(|\theta |)\) per agent per round.

End-to-end wall-clock per round. Let \(\tau ^{\text {comp}}_m\) be agent m’s compute time for one sync and \(\tau ^{\text {net}}_m\) its two-way transfer time. The round time under asynchronous syncing is

where \(\mathscr {S}\) is the set of participating agents and \(\tau ^{\text {agg}}=\mathscr {O}(pM\,|\theta |/\text {BW}_{\text {mem}})\) is server aggregation time. Asynchrony reduces the straggler effect because agents sync every \(\tau ^{\text {sync}}_m\) local updates rather than locking step-wise.

Memory footprint. PPO is on-policy; each agent stores a short buffer of BT transitions and the model parameters. The memory per agent is

plus temporary activations during backprop, typically a small multiple of \(|\theta _m|\).

Scalability discussion. The compute scales linearly in E, B, and T, and quasi-linearly with graph size via \(G_m\). Communication scales linearly with \(|\theta |\) and pM; in practice we keep \(|\theta |\) moderate by using compact GNN encoders and share encoders between actor/critic. Empirically (Sec. ), the system converges within \(\sim 400\) federated rounds while maintaining Jain’s index \(>0.95\), indicating stable scaling under non-IID participation.

Results

To evaluate the performance and scalability of the proposed federated reinforcement learning framework, we simulate a case study based on a high-density urban energy mobility system. The testbed consists of 1,200 electric vehicles (EVs) distributed across 60 smart charging stations, each managed by a dedicated local controller. The charging stations are geographically grouped into 12 microgrid districts, each equipped with a 1.5 MVA distribution transformer and connected to a radial feeder network modeled after the IEEE 123-bus system. Within each district, rooftop photovoltaic (PV) generation is co-located with chargers and sized at 300–500 kW depending on building typology, yielding a combined PV penetration level of approximately 45% relative to peak demand. Load profiles are synthesized from real EV travel pattern data collected from the California Household Travel Survey (CHTS), with arrival rates peaking at 7:00–9:00 AM and 5:00–7:00 PM. Each EV is characterized by a battery capacity sampled from a truncated Gaussian distribution centered at 60 kWh with a standard deviation of 12 kWh, and initial state-of-charge (SoC) ranging from 0.1 to 0.6, sampled uniformly.

The temporal resolution of the simulation is set to 15 minutes per step over a 24-hour planning horizon (i.e., 96 time steps), enabling high-fidelity modeling of dynamic charging and discharging schedules. Each local controller maintains a GNN-encoded policy model, trained over sequences of 20-episode batches using a federated PPO update. Realistic weather-driven solar irradiance profiles for PV generation are drawn from the NREL National Solar Radiation Database for Los Angeles County, California, across typical summer and winter days. Grid operating constraints include voltage limits of \(\pm 5\%\) around nominal 1.0 p.u., transformer thermal ratings, and node-level congestion thresholds based on the cumulative real power exchanged at each bus. Communication latency is emulated by allowing agents to synchronize with the central aggregator once every 5–10 iterations, selected randomly to reflect asynchronous participation. Furthermore, up to 20% of the local controllers are randomly dropped from each global update cycle to simulate intermittent communication loss or edge device failures.

All simulations are conducted on a Linux-based high-performance computing cluster with 16-core Intel Xeon CPUs (2.8 GHz), 128 GB of RAM, and four NVIDIA A100 GPUs (40 GB each). Each federated PPO round requires approximately 2.3 seconds per agent on average, with full convergence typically achieved within 400 communication rounds. The actor–critic models are implemented using PyTorch 2.1.0 and optimized via the Adam optimizer with a learning rate of \(3 \times 10^{-4}\), entropy regularization coefficient of 0.01, and clipping parameter \(\epsilon = 0.2\). Graph representations of the local distribution grid topology are constructed using NetworkX, and graph message-passing layers are implemented using PyTorch Geometric. Differential privacy constraints are enforced via L2-norm clipping on local gradients, with a maximum privacy budget \(\Lambda _m^{\textrm{priv}} = 0.25\) per agent. The total simulation time per full case run is approximately 3.5 hours, including training, evaluation, and federated synchronization overhead.

Battery capacity vs initial state-of-charge distribution of the EV fleet.

Figure 2 presents a two-dimensional kernel density estimation (KDE) of battery capacity versus initial state-of-charge (SoC) for the simulated electric vehicle (EV) fleet. A total of 1,200 EVs are sampled, with battery capacities ranging from 30 to 90 kilowatt-hours (kWh). The data follows a truncated Gaussian distribution centered around 60 kWh with a standard deviation of 12 kWh, representative of current market compositions ranging from compact EVs to premium long-range models. On the vertical axis, the initial SoC values are sampled uniformly between 0.1 and 0.6 per unit (p.u.), capturing real-world behavior where users typically plug in their vehicles at moderate or depleted charge levels rather than at extremes. The KDE contours reveal a pronounced density peak centered around the 55–65 kWh capacity band and SoC levels between 0.25 and 0.45 p.u., forming the dominant cluster that represents approximately 68% of the total population. Peripheral regions of the distribution—particularly the lower-left quadrant below 40 kWh and 0.2 p.u.—contain a sparser population of more constrained EVs, which are of special operational concern due to their urgency and limited energy flexibility. The upper-right quadrant, although less dense, corresponds to luxury EVs with large storage buffers and higher entry SoCs, offering potential for vehicle-to-grid (V2G) services or flexible discharging. These subtle but significant inter-agent variations justify the use of personalized or cluster-specific policy architectures rather than monolithic control models.

Specifically, the deviation term is designed to capture the extent to which local charging demand aligns with on-site renewable generation, with PV being the representative case study resource. This term penalizes situations where charging demand significantly exceeds PV output, since such mismatches increase reliance on upstream grid imports and potentially stress local transformers. Conversely, when EV demand is well-aligned with PV generation, renewable absorption is maximized and the grid-supportive objective is fulfilled.

We also now clarify that the model does not assume PV is the only source of supply in the system. Other grid-connected generation resources (e.g., conventional distribution-level imports or dispatchable DERs) are implicitly included as part of the net grid supply. The reason PV appears explicitly in the formulation is that it represents the variable and location-dependent component most directly influencing distribution-level constraints. For other sources, which are assumed to be stable and controllable, their contributions are embedded in the baseline net load profile and therefore do not require explicit deviation terms in the optimization.

Hourly EV arrival heatmap across distributed charging stations.

Figure 3 presents a temporal-spatial heatmap of EV arrival frequency across 60 distributed smart charging stations over a 24-hour period, discretized into hourly intervals. The simulated dataset assumes stochastic arrival modeled via Poisson processes, with time-varying intensity functions designed to replicate real-world commuting behavior. The resulting heatmap reveals a strongly bimodal arrival distribution: a morning peak spanning from 7:00 to 10:00 AM and an evening peak concentrated between 5:00 and 8:00 PM. Within these windows, station-level arrival rates typically range from 5 to 12 vehicles per hour, highlighting the potential for localized overloads and demand clustering. In contrast, off-peak hours—especially those between 0:00 and 5:00 AM and between 13:00 and 15:00 PM—see substantially reduced activity, averaging only 1.2 arrivals per hour per station. A subset of stations (around station indices 12–18 and 42–48) exhibit elevated midday arrival density, likely simulating workplace chargers or urban opportunity charging zones. These stations experience flattened temporal profiles, suggesting different load dynamics compared to residential or transit-linked locations. The intra-day and inter-station variation in demand is visually distinct and statistically significant, offering a practical backdrop for designing spatially differentiated coordination strategies.

Dynamic electricity price curve over 24 hours, capturing time-of-use tariff variation with visible peak and off-peak windows.

Figure 4 illustrates temporal variations in pricing under a time-of-use (TOU) policy structure, modeled over a standard 24-hour window with a resolution of 15 minutes, yielding 96 discrete intervals. The profile exhibits a composite structure, where three regimes are evident: off-peak hours centered near 3:00 AM with a minimum price floor near $0.11/kWh, mid-range prices during daylight hours maintaining a stable baseline of around $0.18/kWh, and peak prices sharply escalating between 17:00 and 21:00, reaching a maximum of approximately $0.34/kWh. The curve integrates Gaussian-based temporal components to simulate realistic energy market behavior and overlays small stochastic fluctuations to reflect market noise and volatility. This structure is crucial for multi-agent EV charging strategies, as it dictates not only the incentive for load shifting but also the coordination complexity when distributed EV fleets collectively attempt to avoid peak windows. The high-resolution input here serves as a key driver in both the objective function and the federated reinforcement learning algorithm, affecting both the pricing signal received by agents and the joint optimization constraints faced by the cooperative scheduler.

Distribution of EV battery capacities across 1200 units, with a smooth KDE overlay indicating central tendency and spread.

Figure 5 showcases the heterogeneity of the electric vehicle fleet under study, sampled over 1,200 units to reflect a large-scale urban deployment. The histogram reveals a truncated Gaussian distribution centered around 60 kWh, with a standard deviation of approximately 12 kWh, and hard lower and upper bounds imposed at 30 kWh and 90 kWh respectively to emulate market-available battery technologies (e.g., subcompact BEVs and premium long-range models). The presence of such diversity has direct implications on the scheduling flexibility, as larger-capacity vehicles can tolerate more dynamic charging curves without compromising user satisfaction, whereas smaller-capacity units require more precise SoC convergence. The KDE overlay helps visualize the latent modes within the population, indicating possible clustering around 45 kWh (fleet utility vehicles) and 75 kWh (premium or newer-generation EVs). This distribution feeds directly into the agent-level local constraints during model training, ensuring that both optimization feasibility and realism are preserved across the dispatch problem.

To evaluate the robustness of the proposed framework, we conducted sensitivity tests on three key assumptions: EV arrival patterns, photovoltaic (PV) penetration levels, and communication delays.

First, alternative EV arrival distributions were simulated by shifting peak hours by ±2 hours and altering peak intensity by up to 20%. The results indicate that the federated PPO framework consistently maintains high fairness (Jain’s index above 0.93) and low SoC error (within ±3%), although transformer congestion slightly increases under more concentrated arrivals.

Second, PV penetration was varied between 25% and 60% of peak load. At higher penetration levels, renewable absorption improved by nearly 18% compared to the baseline, but local voltage violations became more frequent, highlighting the trade-off between renewable utilization and grid stability. Conversely, with lower PV penetration, grid stress was reduced, but the environmental benefits diminished.

Third, communication delays were emulated by extending synchronization intervals from 5–10 iterations up to 20 iterations. Even under these harsher conditions, the global reward curve converged, albeit with slower training speed and greater variance across agents. Importantly, fairness metrics and SoC fulfillment accuracy remained within acceptable bounds, demonstrating resilience to asynchronous updates and intermittent communication.

Overall, the sensitivity analysis confirms that the proposed framework delivers stable performance across diverse operational scenarios, thereby strengthening its robustness and practical applicability.

Training evolution of global and local rewards in federated deep reinforcement learning.

Figure 6 captures the temporal evolution of both global and local agent rewards over the 400 training episodes of the federated deep reinforcement learning (FDRL) process. The horizontal axis corresponds to the training iteration index, while the vertical axis quantifies the cumulative reward signal obtained by the cooperative scheduler and selected local agents during each episode. The global utility function, represented by the bold steel-blue curve, exhibits a smooth and monotonic convergence behavior beginning near episode 60, rising from an initial baseline near zero to a stabilized performance plateau around 120 reward units. This trajectory reflects the system’s learning dynamics under federated coordination, where aggregated state-action feedbacks gradually enable improved decision-making for grid-oriented social welfare. Superimposed on this plot are several translucent trajectories corresponding to individual agents selected from heterogeneous local regions. These agents are drawn from charging stations with differing EV traffic patterns, renewable generation profiles, and transformer constraints. Their reward curves exhibit considerable variance in early episodes–fluctuating between –40 and +80–due to exploration-heavy policies and non-stationary local environments. As the training progresses, however, most agents align toward the global trend, with final episode rewards clustering within a ±15 unit margin around the global benchmark. This co-evolution of global and local rewards highlights two important system behaviors: (1) the ability of the federated architecture to balance global efficiency against local heterogeneity, and (2) the convergence stability of the distributed policy learning despite partial observability and stochastic transitions.

First, temporal fairness was quantified by examining the variance of cumulative charging satisfaction for each EV over different time windows. This metric ensures that no subset of vehicles consistently experiences lower service quality during specific peak periods. The results show that the temporal fairness index remains above 0.91 across all tested horizons, indicating that short-term congestion does not translate into long-term systematic disadvantages.

Second, fairness was evaluated across heterogeneous user groups, distinguished by vehicle battery size and charging urgency. Smaller-battery EVs and vehicles with tighter departure windows typically face greater risk of undercharging. Our results demonstrate that the proposed FDRL framework successfully mitigates these disparities: the average SoC deviation for small-battery vehicles remains within ±3.2%, compared to ±2.7% for larger-battery vehicles, and urgency-sensitive users are prioritized with minimal penalty trade-offs.

Taken together, these results confirm that the proposed framework delivers fairness not only in aggregate allocation, as measured by Jain’s index, but also over time and across heterogeneous user populations, thereby offering a more holistic assurance of equity in large-scale EV coordination.

Histogram of state-of-charge (SoC) fulfillment errors across 1,200 EVs.

Figure 7 presents a histogram of the final state-of-charge (SoC) fulfillment errors across a synthetic fleet of 1,200 electric vehicles (EVs) operating under the proposed federated scheduling mechanism. The x-axis measures the deviation between each EV’s achieved and target SoC levels, in percentage terms, after the scheduling horizon concludes. The y-axis represents the count of EVs falling into each error bin, with a bin width of 0.5% over a symmetric domain from –10% to +10%. The resulting distribution exhibits a bell-shaped structure centered around zero, with over 83% of the fleet achieving SoC errors within ±2%. The blue vertical dashed line indicates the empirical mean error, measured at approximately –0.24%, suggesting an almost unbiased aggregate performance. The black dashed line at zero serves as a reference for perfect fulfillment. The inclusion of a kernel density estimate (KDE) overlay facilitates smooth visual interpretation of the distribution, revealing latent asymmetries and concentration intervals within the SoC error profile. A moderate right-skew is observed in the tail region beyond +5%, indicating the presence of a small cluster of EVs that slightly overshoot their target SoC due to stochastic demand modeling or temporary local congestion relaxation. Conversely, the lower tail remains thinner, implying that the system prioritizes avoiding undercharging, which aligns with user satisfaction preservation as encoded in the reward structure. Notably, fewer than 1.8% of EVs fall outside the ±5% band, suggesting robust control performance and effective alignment between optimization targets and operational delivery.

Constraint violation heatmap.

Figure 8 illustrates the spatiotemporal distribution of constraint violations within the coordinated EV–charger–grid network. The heatmap spans 60 spatially distributed charging stations on the vertical axis and a 24-hour operational horizon on the horizontal axis. Each cell encodes the severity level of constraint violations experienced at a specific station during a given hour. Severity is quantified on a discrete scale from 0 to 3, where 0 indicates full compliance, 1 reflects mild queue overflow or SoC slack breaches, 2 corresponds to transformer loading beyond 90% capacity, and 3 flags simultaneous breaches of multiple constraints. Notably, darker red regions emerge between hours 9–11 and 18–20 across stations 14 to 28, indicating clustered congestion in the morning and evening demand peaks. This correlates well with the EV arrival time distribution and transformer bottlenecks observed in upstream data visualizations. The upper-right quadrant, particularly stations above index 45 during evening hours (19–22), displays moderate violation levels, suggesting localized grid stress due to delayed dispatch and insufficient coordination under high photovoltaic output curtailment. Conversely, lower-left regions (e.g., stations 1–10 during hours 1–6) remain uniformly pale, indicating minimal or no constraint pressure in the off-peak window. The spatial clustering of violations suggests that certain urban regions consistently face capacity issues, which calls for adaptive reweighting in federated scheduling or hardware reinforcement. Importantly, the presence of isolated dark bands at stations 22 and 47 throughout the day underscores the value of incorporating dynamic station-specific penalties into the reward structure, thus improving fairness and system compliance.

Federated weight distribution.

Figure 9 presents a statistical summary of federated weight allocations assigned to 12 local agents across 10 consecutive global aggregation rounds. Each boxplot represents the empirical distribution of weights \(\omega _i\) attributed to a given agent in the federated averaging process. These weights reflect the influence of each agent’s local model update on the global policy refinement and are affected by data quality, local reward performance, and contribution reliability. The horizontal spread of each box indicates the variance in influence per agent, while the median line and whiskers identify interquartile range and extremal fluctuations, respectively. Agent 3 and Agent 7 exhibit relatively narrow weight distributions centered around 0.08 and 0.09, suggesting stable contributions with low episodic reward variance. In contrast, Agent 1 and Agent 12 show greater dispersion and higher median weights, occasionally exceeding 0.14, which could result from their strategic placement in high-traffic or renewable-dominant locations, making their updates more informative for global convergence. Meanwhile, Agent 5 and Agent 9 receive consistently lower weights, with lower quartiles near 0.03, indicating either redundant training signals or poor local policy generalization. Such statistical asymmetry in the weight allocation process underscores the inherent heterogeneity of the system and highlights the need for fairness-aware federated optimization.

Convergence speed comparison between federated and centralized DRL.

Figure 10 presents a comparative analysis of convergence behavior between the proposed federated deep reinforcement learning (DRL) approach and a conventional centralized DRL baseline. The x-axis represents training episodes, spanning 400 rounds, while the y-axis quantifies the average cumulative reward per episode. Both curves are smoothed using a moving window average and superimposed with Gaussian noise to capture learning variance under realistic system heterogeneity. The dotted horizontal line at a reward level of 72 serves as a convergence threshold, empirically calibrated to represent stable policy performance with acceptable SoC fulfillment, constraint satisfaction, and global reward consistency. The federated DRL model, depicted with a solid line, demonstrates an accelerated learning trajectory, reaching the convergence threshold by approximately episode 120. In contrast, the centralized DRL variant, represented by a dashed line, exhibits a slower rate of improvement, requiring roughly 240 episodes to achieve comparable performance. This indicates a 50% reduction in training time when federated coordination is employed. Such efficiency gains are attributed to the decentralized architecture, which allows localized agents to learn policies tailored to their regional dynamics, followed by periodic aggregation that enhances global generalization without excessive overfitting to centralized biases. The variance around the federated reward curve remains relatively narrow after episode 100, with fluctuations bounded within ±2.5 units, suggesting robust convergence stability even under asynchronous local updates and variable reward gradients. In contrast, the centralized model displays persistent oscillations beyond episode 200, particularly due to delayed information propagation and inconsistent reward scaling from diverse node contexts. This behavior validates the theoretical expectation that federated models benefit from both faster convergence and higher robustness in environments with non-IID data and partially observable state spaces, such as those induced by geographically distributed EV stations and nonuniform user behavior.

Fairness index evolution across training rounds.

Figure 11 captures the evolution of fairness across global federated training rounds, quantified via Jain’s fairness index–a widely accepted metric for evaluating the equity of resource allocation among multiple agents. The x-axis spans 30 global rounds, while the y-axis denotes the calculated fairness index value at each aggregation interval. The figure reveals a steady upward trend, beginning near 0.81 in round 1 and asymptotically approaching a saturation level around 0.96. A horizontal dotted line is placed at 0.95 to indicate the design target for fair reward and action distribution, ensuring that no local agent persistently dominates or suffers during the training lifecycle. The progression reflects the efficacy of the reward shaping and model aggregation strategy adopted in the proposed federated deep reinforcement learning framework. Initially, fairness is relatively low, driven by uneven data distributions, site-specific traffic profiles, and non-IID environment interactions. However, as learning progresses and model updates are increasingly informed by both local and global trends, the system self-corrects imbalances, with fairness improving significantly after round 10. From round 15 onwards, the fairness index stabilizes within the band of [0.945, 0.965], indicating that the majority of charging stations are granted roughly proportional attention in both scheduling priority and utility accrual. For figures that rely on different statistical representations (such as KDE plots, histograms, and boxplots), we retained the methodological diversity to preserve the insights that each visualization offers, but applied a unified axis style and labeling standard so that the figures still feel coherent as part of the same narrative. For example, the histograms and KDE overlays that illustrate the distribution of EV battery capacities (Fig. 2 and Fig. 5) now follow the same axis scaling and labeling format. Similarly, the fairness-related boxplots (Fig. 9) have been reformatted to use identical label fonts and consistent axis units, making them easier to interpret in comparison with other performance metrics. Finally, all figure captions have been revised to adopt a consistent explanatory style, with clear mention of data sources, key parameters, and visualization methods.

Conclusion

This paper introduced a federated and decentralized FDRL framework based on PPO for large-scale EV–grid coordination. By integrating a CMDP formulation with graph neural network encoders, the model explicitly incorporates operational limits, spatial correlations, renewable variability, and user fairness into the decision-making process. Validated on a realistic case study with 1,200 EVs, 60 charging stations, and a 33-bus distribution feeder over a 24-hour horizon, the proposed framework achieved a 13.6% reduction in grid operating cost, a 21.4% increase in renewable energy absorption, fairness with Jain’s index consistently above 0.95, and an average SoC deviation below 2.5%. These quantitative outcomes confirm that the federated approach not only enhances grid efficiency and renewable integration but also ensures equitable scheduling and user satisfaction at scale. Beyond these performance results, several deployment challenges must be addressed before real-world adoption. Interoperability with existing EV charging standards and protocols is required to integrate control signals with heterogeneous infrastructures. Communication reliability and latency remain critical, as federated learning relies on asynchronous updates that must tolerate bandwidth constraints and packet losses. Furthermore, cybersecurity risks—including data poisoning and adversarial manipulation of local updates—must be mitigated to preserve both system stability and user trust.

Limitations and future work

Although the proposed federated reinforcement learning framework demonstrates strong performance in cost reduction, renewable absorption, and fairness, several limitations remain. First, the case study relies on synthetic yet empirically calibrated data, and further validation on fully real-world datasets with measured EV travel patterns, charging logs, and feeder load profiles is necessary to confirm robustness under practical uncertainties. Second, the current CMDP formulation captures a rich set of grid and equity constraints but does not yet consider market mechanisms such as dynamic pricing, incentive design, or demand-response contracts, which may influence user participation and system-level efficiency. Third, communication reliability and cybersecurity are modeled in simplified terms; real federated deployments must address packet losses, non-stationary delays, adversarial updates, and data poisoning in greater detail. Fourth, while GNN encoders improve generalization across feeder topologies, transferability to entirely different distribution networks has not been systematically explored. Future work will therefore extend the proposed method in several directions. One line of research is to integrate real-time market mechanisms and incentive-compatible strategies that encourage EV owners to participate while preserving fairness. Another avenue is to develop hybrid architectures that combine federated reinforcement learning with anomaly detection, robust optimization, or game-theoretic mechanisms to enhance resilience against adversarial or non-cooperative behavior. In addition, scaling to inter-regional coordination scenarios, where thousands of charging stations are coupled across multiple feeders, will require hierarchical or multi-layer federated schemes. Finally, field trials with industrial partners and charging infrastructure operators will be pursued to bridge the gap between simulation results and real-world deployment, with an emphasis on interoperability, standard compliance, and user acceptance.

Data Availability

The datasets generated and/or analyzed during the current study are available from the corresponding author on reasonable request.

References

Li, T. T. et al. Integrating solar-powered electric vehicles into sustainable energy systems. Nat. Rev. Electr. Eng. https://doi.org/10.1038/s44287-025-00181-7 (2025).

Sahin, H. Hydrogen refueling of a fuel cell electric vehicle, International Journal of Hydrogen Energy, (2024).

Zhang, S., Zhang, X., Zhang, R., Gu, W. & Cao, G. N-1 evaluation of integrated electricity and gas system considering cyber-physical interdependence. IEEE Trans. Smart Grid 16(5), 3728–3742. https://doi.org/10.1109/TSG.2025.3578271 (2025).

Shi, L. & Hu, B. Frontiers in operations: battery as a service: flexible electric vehicle battery leasing. Manuf. Serv. Oper. Manag. https://doi.org/10.1287/msom.2022.0587 (2024).

Zhao, A. P. et al. Electric vehicle charging planning: a complex systems perspective. IEEE Trans. Smart Grid 16(1), 754–772. https://doi.org/10.1109/TSG.2024.3446859 (2025).

Xie, S., Wu, Q., Zhang, M. & Guo, Y. Coordinated energy pricing for multi-energy networks considering hybrid hydrogen-electric vehicle mobility. IEEE Trans. Power Syst. https://doi.org/10.1109/TPWRS.2024.3369633 (2024).

Kumar, B. V. & Farhan, M. A. A. Optimal Simultaneous Allocation of Electric Vehicle Charging Stations and Capacitors in Radial Distribution Network Considering Reliability. J. Mod. Power Syst. Clean Energy https://doi.org/10.35833/MPCE.2023.000674 (2024).

Zhang, S. et al. Partitional decoupling method for fast calculation of energy flow in a large-scale heat and electricity integrated energy system. IEEE Trans. Sustain. Energy 12(1), 501–513. https://doi.org/10.1109/TSTE.2020.3008189 (2021).

Mak, H.-Y. & Tang, R. Collaborative vehicle-to-grid operations in frequency regulation markets. Manuf. Serv. Oper. Manag. 26(3), 814–833. https://doi.org/10.1287/msom.2022.0133 (2024).

Zhao, A. P. et al. Hydrogen as the nexus of future sustainable transport and energy systems. Nat. Rev. Electr. Eng. https://doi.org/10.1038/s44287-025-00178-2 (2025).

Chen, S., Liu, J., Cui, Z., Chen, Z., Wang, H. & Xiao, W. A Deep Reinforcement Learning Approach for Microgrid Energy Transmission Dispatching. Applied Sciences. https://doi.org/10.3390/app14093682.

Wang, Z. & Li, Y. A Generation Method of New Power System APT Attack Graph Based on DQN. Recent Advances in Electrical & Electronic Engineering (Formerly Recent Patents on Electrical & Electronic Engineering) 17(1), 82–90 (2024).

Zeng, L. et al. Resilience Assessment for Power Systems Under Sequential Attacks Using Double DQN With Improved Prioritized Experience Replay. IEEE Syst. J. 17(2), 1865–1876. https://doi.org/10.1109/JSYST.2022.3171240 (2023).

Li, R. et al. Double DQN-Based Coevolution for Green Distributed Heterogeneous Hybrid Flowshop Scheduling With Multiple Priorities of Jobs. IEEE Trans. Autom. Sci. Eng. https://doi.org/10.1109/TASE.2023.3327792 (2023).

Feng, B. et al. Byzantine-resilient economical operation strategy based on federated deep reinforcement learning for multiple electric vehicle charging stations considering data privacy. J. Modern Power Syst. Clean Energy https://doi.org/10.35833/MPCE.2023.000850 (2024).

Sinclair, S. R., Banerjee, S. & Yu, C. L. Adapt. Discret. Online Reinforc. Learn.. Oper. Res. 71(5), 1636–1652. https://doi.org/10.1287/opre.2022.2396 (2022).

Zhang, G. et al. A multi-agent deep reinforcement learning approach enabled distributed energy management schedule for the coordinate control of multi-energy hub with gas, electricity, and freshwater. Energy Convers. Manage. 255, 115340. https://doi.org/10.1016/j.enconman.2022.115340 (2022).

Chen, X., Qu, G., Tang, Y., Low, S. & Li, N. Reinforcement learning for selective key applications in power systems: Recent advances and future challenges. IEEE Transactions on Smart Grid (2022).

Jia, X. et al. Coordinated operation of multi-energy microgrids considering green hydrogen and congestion management via a safe policy learning approach. Appl. Energy 401, 126611. https://doi.org/10.1016/j.apenergy.2025.126611 (2025).

Said, D., Bagaa, M., Oukaira, A. & Lakhssassi, A. Quantum Entropy and Reinforcement Learning for Distributed Denial of Service Attack Detection in Smart Grid. IEEE Access 12, 129858–129869. https://doi.org/10.1109/ACCESS.2024.3441931 (2024).

Said, D. Intelligent Photovoltaic Power Forecasting Methods for a Sustainable Electricity Market of Smart Micro-Grid. IEEE Commun. Mag. 59(7), 122–128. https://doi.org/10.1109/MCOM.001.2001140 (2021).

Said, D. A Decentralized Electricity Trading Framework (DETF) for Connected EVs: A Blockchain and Machine Learning for Profit Margin Optimization. IEEE Trans. Industr. Inf. 17(10), 6594–6602. https://doi.org/10.1109/TII.2020.3045011 (2021).

Said, D., Rehmani, M. H., Mellal, I., Oukaira, A. & Lakhssass, A. Cybersecurity Based on Converged Form of Blockchain, Internet-of-Things and Machine Learning in Smart Micro-Grid, Proc. 2024 International Conference on Computing, Internet of Things and Microwave Systems (ICCIMS), Gatineau, QC, Canada, pp. 1–6, https://doi.org/10.1109/ICCIMS61672.2024.10690628 (2024).

Said, D. & Elloumi, M. A New False Data Injection Detection Protocol based Machine Learning for P2P Energy Transaction between CEVs, Proc. 2022 IEEE International Conference on Electrical Sciences and Technologies in Maghreb (CISTEM), Tunis, Tunisia, pp. 1–5, https://doi.org/10.1109/CISTEM55808.2022.10044067 (2022).

Funding

This research is supported by the Science and Technology Project of State Grid Jibei Electric Power Company Limited (Project No. B30185240003).

Author information

Authors and Affiliations

Contributions

Lixia Zhou led the project design, coordinated internal data collection, and contributed to the overall structure of the manuscript. Dawei Huo was responsible for model development, technical validation, and writing the initial draft. Jian Chen assisted in system implementation, experimental setup, and partial data analysis. Bo Bo supervised the project. Hao Li reviewed and revised the manuscript critically for intellectual content and served as the corresponding author.

Corresponding author

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Zhou, L., Huo, D., Chen, J. et al. Federated reinforcement learning with constrained markov decision processes and graph neural networks for fair and grid-constrained coordination of large-scale electric vehicle charging networks. Sci Rep 15, 39593 (2025). https://doi.org/10.1038/s41598-025-22482-5

Received:

Accepted:

Published:

Version of record:

DOI: https://doi.org/10.1038/s41598-025-22482-5