Abstract

ST-segment elevation myocardial infarction (STEMI) is a life-threatening cardiovascular event influenced by meteorological conditions and air pollution. Traditional statistical methods often fail to capture the complex, nonlinear relationships between environmental factors and STEMI risk. This study analyzed hospitalization data from Harbin, China (2014–2023), alongside meteorological and air pollution data. Seven machine learning models, including LightGBM and XGBoost, were used to predict STEMI risk. Key predictors were identified via recursive feature elimination, and model performance was assessed with nested cross-validation. Shapley Additive Explanations (SHAP) were applied to interpret the impact of key predictors. Model performance was evaluated using metrics such as AUC, accuracy, and recall. LightGBM achieved the best performance, with an AUC of 0.84 on the validation set, demonstrating high predictive accuracy and generalizability. Moreover, the LightGBM model improved its interpretability and predictive power by incorporating lagged effects along with recursive feature elimination (RFE). SHAP analysis identified PM\(_{2.5}\), SO\(_{2}\), NO\(_{2}\), and meteorological factors (e.g., air pressure, humidity, wind speed) as critical contributors, with significant lag effects observed. These findings underscore the cumulative impact of prolonged environmental exposure on cardiovascular health. This study developed a robust, interpretable predictive model that elucidates the complex relationship between environmental factors and STEMI incidence. The results provide valuable insights for early prevention, public health policymaking, and resource allocation.

Similar content being viewed by others

Introduction

ST-segment elevation myocardial infarction (STEMI) is a life-threatening cardiovascular condition caused by acute coronary artery occlusion1. Despite advances in prevention and treatment, STEMI remains a significant global health burden, particularly in developing and economically disadvantaged regions2,3,4. Traditional risk factors, including age, sex, lifestyle, and comorbidities such as diabetes and hypertension, are well-established contributors to STEMI5,6. However, the high incidence and mortality rates in certain populations suggest that external environmental factors may also play a critical role7,8,9.

Meteorological conditions, such as temperature, humidity, and atmospheric pressure, have been consistently linked to cardiovascular events in epidemiological studies10,11,12. Extreme weather, including heatwaves and cold spells, can increase cardiovascular strain, leading to acute events13,14. Similarly, air pollutants, particularly fine particulate matter (PM\(_{2.5}\), PM\(_{10}\)), nitrogen dioxide (NO\(_{2}\)), and ozone (O\(_{3}\)), are known to induce systemic inflammation, oxidative stress, and endothelial dysfunction, increasing the risk of acute myocardial infarction15,16,17,18,19. Although the associations between environmental factors and STEMI have been reported, most studies rely on linear statistical models, which are limited in capturing the complex, nonlinear interactions and lag effects inherent in these relationships.

Machine learning (ML) techniques offer a powerful alternative for analyzing large, complex datasets20,21. Unlike traditional methods, ML models can uncover hidden patterns and interactions among variables, making them particularly suited for studying the multifactorial etiology of STEMI22. This study leverages seven ML algorithms, including LightGBM and XGBoost, to predict STEMI incidence based on meteorological and air pollution data. By employing Shapley Additive Explanations (SHAP), the study not only identifies key environmental predictors but also provides interpretable insights into their contributions. This approach aims to enhance our understanding of the environmental determinants of STEMI, offering a robust tool for early prevention and public health policy development.

Methods

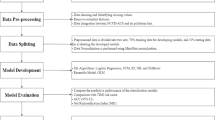

Data collection and preprocessing

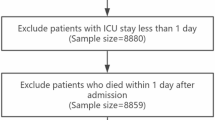

This retrospective study analyzed STEMI hospitalization data from 2014 to 2023 using the Cold Region Climate and Disease Database (CRCDD). The CRCDD is a disease registry that records all cardiovascular events diagnosed at the First Affiliated Hospital of Harbin Medical University, the largest teaching and referral hospital in Heilongjiang province. Diagnoses are confirmed by cardiology specialists based on clinical symptoms, electrocardiographic findings, and laboratory test results. For this study, STEMI cases were identified through ICD-10 codes, while patients with NSTEMI, unstable angina, incomplete records, or non-residency were excluded. Detailed data distributions for this database are presented in Table 1. A key strength of the CRCDD lies in its large-scale, high-quality documentation of more than a decade of rigorously confirmed cardiovascular events, providing a solid foundation for investigating disease patterns and their associations with environmental and epidemiological risk factors.

Meteorological and air pollution data were obtained from 54 monitoring stations in Heilongjiang Province, covering parameters such as temperature, humidity, air pressure, PM\(_{2.5}\), SO\(_{2}\), and NO\(_{2}\). Patient residences were geocoded to calculate the nearest station data using the Haversine formula. Time-series features, meteorological variables, and air pollutant concentrations were included, accounting for lag effects of up to 30 days.

Machine learning methods

Seven machine learning models–LightGBM, XGBoost, AdaBoost, Stochastic Gradient Boosting Trees (SGBT), K-Nearest Neighbors (KNN), Naive Bayes, and Logistic Regression(LR)-were employed to predict STEMI risk. These algorithms were selected for their distinct strengths in handling complex datasets.

LightGBM is a high-performance gradient boosting framework optimized for large-scale datasets, featuring efficient computation and memory usage. It utilizes histogram-based feature binning, Gradient-based One-Side Sampling (GOSS), and Exclusive Feature Bundling (EFB) to manage high-dimensional data. Its leaf-wise tree growth strategy improves both training speed and predictive accuracy23,24. KNN is a non-parametric classification method that determines the target class based on the similarity (e.g., Euclidean distance) of the K nearest neighbors. It is particularly effective for nonlinear datasets due to its lack of distributional assumptions25. Naive Bayes is a probability-based classifier that assumes feature independence and calculates posterior probabilities using Bayes’ theorem. It is computationally efficient and particularly suited for high-dimensional, sparse data problems, such as text classification26. AdaBoost is an ensemble method that combines weak classifiers iteratively, with each iteration focusing on previously misclassified samples. By adjusting sample weights, AdaBoost builds a strong classifier that effectively handles nonlinear relationships and works well for both binary and multiclass problems27. XGBoost is a gradient boosting decision tree algorithm known for its computational efficiency and accuracy. It optimizes the loss function via second-order Taylor expansion, includes an L2 regularization term to mitigate overfitting, and supports missing value handling, making it ideal for large-scale data modeling28. SGBT minimizes the loss function by optimizing tree structure and weights through stochastic gradient descent. By randomly sampling data and features, SGBT enhances model generalization and is well-suited for large-scale datasets.LR is a widely used classification algorithm that maps input features to probabilities via a sigmoid function. Its simplicity, interpretability, and suitability for linearly separable problems make it foundational in disease risk prediction and epidemiological research29.

Feature selection

The features used to predict peak hospitalization days for cardiovascular disease included time-series features, meteorological condition features, and air pollutant features. Epidemiological evidence suggests that the effects of meteorological conditions and air pollutants on STEMI admissions often exhibit lagged effects, which vary depending on regional environmental factors30,31,32. To account for these lagged effects, an overdispersed Generalized Additive Model (GAM) with a quasi-Poisson distribution was employed to analyze the influence of daily meteorological conditions and air pollutants on STEMI admissions. Lag selection was guided by the minimum Generalized Cross-Validation (GCV) value from the fitted model33,34. Both single-day lag effects (lag0–lag30) and cumulative lag effects (lag01–lag030) were considered35.Penalized spline methods were applied to adjust for potential confounding factors, including long-term trends, seasonality, specific dates, and meteorological influences36. To further strengthen the inference regarding environmental lag effects and the applicability of the GAM model, we conducted additional sensitivity analyses under multiple lag structures. Detailed results are presented in the Supplementary Materials (Fig. S1). The supplementary file, Supplementary materials.pdf, is provided separately. Figure S1 and Table S1 are included in it. Ultimately, the final independent variables for predicting peak cardiovascular disease hospitalization days comprised 99 features. Table 2 provides a comprehensive summary of these features.Among them, the variables were denoted as [Factor]_[Duration]_[Statistic]_lagX, where [Factor] includes air pollutants (AQI, PM\(_{2.5}\), PM\(_{10}\), SO\(_{2}\), NO\(_{2}\), O\(_{3}\), CO) and meteorological parameters (e.g., pressure, temperature, humidity). [Duration] refers to 8h or 24h running average, [Statistic] indicates mean, max, min, or std. “lagX” represents exposure X days prior, while “lag0X” indicates cumulative exposure over the current day plus the preceding X days.

To further refine the feature set and enhance model performance and stability, we employed the Recursive Feature Elimination (RFE) method. RFE is a widely used feature selection technique that iteratively removes less important features to identify the optimal subset for maximizing model performance37. Initially, the raw data were standardized to eliminate the influence of differing feature scales. The target variable was binarized using quantile thresholds, creating a dataset suitable for binary classification tasks.

During each RFE iteration, the feature contributing least to model performance (based on training weights) was removed, producing feature subsets of varying sizes. To ensure robust evaluation, a nested cross-validation approach was applied: outer cross-validation assessed the overall performance of the model, while inner cross-validation optimized hyperparameters. For each feature subset, performance metrics were calculated on both training and testing datasets, with the mean and standard deviation of these metrics recorded across multiple experiments to assess the model’s stability and generalizability.

The final model was selected based on the highest AUC score on the validation set, with the corresponding optimal feature subset and model parameters retained for subsequent analyses. To further investigate the model’s sensitivity to feature selection, metrics such as AUC, accuracy, and F1-score were plotted against the number of features, providing visual insights into how feature reduction influenced predictive performance.

Model development and validation

Features selected through RFE were input into those above seven models, and nested cross-validation was employed to ensure model stability and generalizability.

Specifically, external cross-validation utilized a Stratified K-Fold approach, dividing the data into five subsets while preserving the class proportions of the overall dataset. This ensured consistent class distribution across validation folds. For each fold, separate training and testing sets were used for both internal and external cross-validation, effectively mitigating the risk of model overfitting.During each round of external cross-validation, internal cross-validation also employed Stratified K-Fold cross-validation, splitting the data into three subsets for hyperparameter optimization. This dual cross-validation approach minimized data leakage and ensured the selection of optimal hyperparameters, enhancing model performance and reliability.Grid search was used to identify the optimal hyperparameter configurations for each model, which were then applied to the selected feature subset. The final hyperparameters and their corresponding classification performance improvements are detailed in Table 3. This rigorous methodology ensured reliable, robust, and generalizable predictions for the target task.

Model evaluation

To evaluate the predictive performance of the models, we calculated the AUC using receiver operating characteristic (ROC) analysis. The DeLong test was performed to statistically compare the AUCs of the seven machine learning models, with significance set at p < 0.0538. In addition, evaluation metrics derived from the confusion matrix–including accuracy, precision, recall, specificity, and F1-score–were employed to analyze the relationship between the actual and predicted values of STEMI peak hospitalization demand. These metrics provided a comprehensive assessment of each model’s ability to accurately and reliably predict STEMI incidence peaks.In addition, to more objectively assess the consistency between predicted probabilities and actual risks, we employed calibration curves in combination with the Brier score and the Expected Calibration Error (ECE) to further enhance the potential applicability of the models within a risk prediction system. This multi-metric evaluation framework ensured a robust and thorough comparison of model performance, offering insights into the strengths and limitations of each algorithm in addressing the prediction task.

In this study, the dataset exhibited a significant class imbalance, with 7.5% of the days having patient records (positive class) and 92.5% of the days without records (negative class). This imbalance posed a challenge for classification models, which often favor the majority class (negative class) while neglecting the minority class (positive class). However, accurately identifying the minority class is critical for addressing the research objective.To address the issue of class imbalance more effectively, we adopted targeted strategies at three levels: the data level, algorithmic level, and prediction level. Specifically, we applied SMOTE at the data level, scale_pos_weight at the algorithmic level, and classification threshold optimization at the prediction level.

Model interpretation

Interpreting machine learning models is often challenging. To enhance model interpretability, this study employed the Shapley Additive Explanations(SHAP) method to interpret the optimal predictive model. SHAP, a game-theory-based technique, quantifies the contribution of each feature to the prediction outcome, offering both local (individual prediction) and global (overall model behavior) explanations. This approach effectively addresses the “black-box” nature of machine learning models.By generating feature importance plots and feature dependence plots, SHAP provided visual insights into the contribution of each feature to the model’s predictions39. This enhanced the model’s transparency and interpretability, making the results more accessible and applicable for clinical and public health decision-making.

Results

Evaluation and comparison of prediction models

Based on the training dataset, we predicted the peak incidence of STEMI admissions in an independent test dataset, with ROC curves shown in Fig. 1. The AUC values for the models in the validation and training datasets were as follows: LightGBM (0.84/0.98), KNN (0.75/1.00), Naive Bayes (0.70/0.71), AdaBoost (0.83/0.84), XGBoost (0.84/0.93), SGBT (0.84/0.87), and Logistic Regression (0.80/0.80). LightGBM, XGBoost, and SGBT performed comparably on the validation set (AUC = 0.84), with LightGBM achieving the highest AUC (0.98) on the training set, demonstrating strong fitting capabilities. Overall, LightGBM exhibited stable performance across datasets, indicating robust generalizability.

Comparison of ROC curves for different models.

Additionally, calibration curves were used to assess the agreement between the predicted probabilities and actual outcomes. These curves evaluate how well a model’s predicted probabilities correspond to observed outcomes, with the ideal calibration curve closely following the diagonal line (y = x), indicating perfect alignment. Significant deviations from the diagonal suggest a mismatch between predicted and actual probabilities.In the training set, LightGBM, SGBT, and XGBoost demonstrated good calibration, with their calibration curves closely following the ideal diagonal, indicating accurate probability predictions. In the validation set, LightGBM and XGBoost maintained their advantage, showing relatively stable calibration performance. Although some fluctuations were observed in specific probability intervals, the overall calibration performance of these models remained superior compared to KNN and SGBT, which exhibited overfitting in the validation set, characterized by larger fluctuations and unstable calibration. Naive Bayes and Logistic Regression, on the other hand, showed substantial calibration deviations, particularly in the high-probability region, where performance declined significantly.Based on the Brier score and Expected Calibration Error (ECE), LightGBM achieved the lowest Brier and ECE values, making it the best-performing model. It demonstrated stable calibration performance, indicating strong generalizability and reliability.The calibration curves are presented in Fig. 2. The Brier score and ECE are provided in Supplementary materials (Table S1).

Comparison of calibration curves for different models.

To evaluate model performance, the AUC was selected as the primary metric, as it reflects the model’s ability to distinguish between classes across all classification thresholds. Additional metrics, including accuracy, precision, recall, and F1 score, were also analyzed to provide a comprehensive assessment of model performance.The results revealed that while KNN achieved the highest performance on the training set (AUC = 0.9999, Accuracy = 0.9984), it exhibited a substantial decline in performance on the validation set (AUC = 0.75467), indicating severe overfitting. LightGBM, on the other hand, demonstrated more stable performance, achieving an AUC of 0.8395 on the validation set. It maintained a good balance across other metrics, including Accuracy = 0.9110, and demonstrated a lower standard error. Although models such as XGBoost and AdaBoost achieved AUC values close to that of LightGBM, they underperformed in precision, recall, and F1 score, particularly in recognizing the minority class.Considering the ROC curve, calibration curve, and performance metrics summarized in Table 4 and Table 5, LightGBM was selected as the optimal model for this study. It achieved superior performance on the validation set, effectively handled the imbalanced data, and maintained stability and balance across multiple metrics, making it the most reliable choice for this prediction task.

Model explanation

As demonstrated in the previous section, the LightGBM model achieved the best performance, providing robust predictive metrics for forecasting peak STEMI admission dates. Consequently, we utilized the LightGBM model to further explore the relationship between meteorological factors, air pollutants, and STEMI incidence. Figure 3 illustrates the RFE process for the LightGBM model. Using AUC as the primary evaluation metric, the model achieved optimal performance on the validation set with 73 features. This result highlights the ability of feature selection to effectively balance model complexity and performance, further enhancing generalizability.

Recursive feature elimination process of LightGBM model.

In the SHAP summary bar chart, feature importance is ranked by the average absolute SHAP values, arranged in descending order. The results identified AQI_std_lag18, AQI_max_lag18, SO\(_{2}\)_24h_std_lag17, PM\(_{2.5}\)_max_lag17, and Year as the five most influential features in the model. These findings emphasize the significant role of lagged effects of the Air Quality Index (AQI) and air pollutants, such as SO\(_{2}\) and PM\(_{2.5}\), in predicting STEMI incidence. The analysis of SHAP values not only elucidates the extent to which meteorological factors and air pollutants influence the model’s predictions but also provides a scientific basis for understanding the underlying causes of STEMI admission peaks. These results underscore the critical importance of accounting for the lag effects of AQI and air pollutants in forecasting STEMI incidence, offering valuable insights for clinical prevention and public health interventions. The SHAP results are illustrated in Fig. 4.

Features importance ranking based on LightGBM model.

To comprehensively evaluate the impact of meteorological factors and air pollutants on STEMI incidence, this study classified and ranked the 73 selected features based on their SHAP values, visualized through a SHAP summary dot plot. This plot provides an intuitive representation of the strength and direction of each feature’s influence on the model’s predictions, offering deeper insights into the relationships between environmental factors and STEMI risk. The results highlight the relative importance of individual features, as well as their positive or negative contributions to the prediction outcomes. The SHAP summary dot plot is presented in Fig. 5.

SHAP summary dot plot of LightGBM.

SHAP analysis reveals that the AQI and its related indicators at different lag times have a significant impact on STEMI incidence, with AQI_std_lag18 and AQI_max_lag18 emerging as key contributors based on their SHAP value distributions. Additionally, fine particulate matter (PM\(_{2.5}\)) and coarse particulate matter (PM\(_{10}\)) concentrations and statistical features contribute notably to the model’s predictions. Among gaseous pollutants, sulfur dioxide (SO2) and nitrogen dioxide (NO2) at various lag times strongly influence STEMI risk, with SO\(_{2}\)_mean_lag17, SO\(_{2}\)_24h_std_lag17, NO\(_{2}\)_24h_min_lag19, and NO\(_{2}\)_24h_min_lag19 making substantial contributions. Similarly, ozone (O3) and carbon monoxide (CO) indicators also show significant effects. For instance, O\(_{3}\)_8h_24h_mean and O\(_{3}\)_24h_max at lag17 days highlight a negative correlation between high O3 concentrations and STEMI risk. In contrast, CO_mean_lag17, CO_24h_max_lag18, and CO_24h_mean_lag17 exhibit strong positive correlations, suggesting that changes in CO concentration and lag effects increase STEMI risk.

Meteorological factors also play a critical role in STEMI incidence. Sea Level Pressure_lag030 and Surface Pressure_lag026 demonstrate substantial SHAP distributions, with prolonged high pressure showing a strong positive correlation with STEMI risk. Precipitation and snowfall exert a negative influence, while Relative Humidity_lag029 and Wind Speed at 10m_lag030 contribute significantly, with wind speed fluctuations potentially impacting the dispersion and deposition of air pollutants, thereby influencing STEMI incidence. Features related to atmospheric structure and solar radiation, such as Low-level Cloud Cover_lag030 and Net Solar Radiation_lag030, show strong SHAP contributions, with higher levels positively correlated with STEMI risk. Furthermore, instability-related weather indicators, such as Thunderstorm Probability_lag030 and the K Index_lag018, suggest that extreme weather events may increase STEMI risk.

Discussion

Performance of machine learning

This study compared the performance of seven widely used machine learning models–LightGBM, XGBoost, AdaBoost, SGBT, KNN, Naive Bayes, and LR–in predicting STEMI incidence peaks. The results highlighted significant differences in model performance, with ensemble learning models, particularly LightGBM, demonstrating a clear advantage in terms of predictive accuracy, stability, and generalizability.LightGBM proved to be the best-performing model, delivering the highest AUC, minimal errors, and exceptional generalization on the validation set. It effectively managed the highly imbalanced dataset while achieving an optimal balance between training efficiency, predictive accuracy, and interpretability. In summary, LightGBM showed the best performance in our dataset and may be promising for STEMI risk prediction. Furthermore, when combined with SHAP analysis, the LightGBM model not only ensures high predictive accuracy but also offers enhanced interpretability, making it a practical solution for clinical applications. Its capability to quantify individual risk based on environmental exposure further strengthens its potential in personalized prevention strategies.Moreover, the predictive factors utilized in this study were derived from routine meteorological monitoring programs, which are publicly available and easily accessible. This enhances the practicality of the model, enabling its integration into real-world clinical workflows and offering effective support for clinical decision-making.

The application of the RFE method effectively addressed the challenge of balancing model complexity with clinical applicability by narrowing the initial feature set to 73 key variables. This refinement not only reduced the risk of including redundant or non-causal predictors but also enhanced the model’s robustness and clinical relevance. With a streamlined feature set, the model became more interpretable and easier to implement in practice, thereby supporting efficient decision-making in healthcare settings. Furthermore, nested cross-validation safeguarded against data leakage and ensured the stability of model performance, while SHAP summary bar plots highlighted the top five influential features, further strengthening transparency and interpretability. Collectively, these strategies increased the model’s adaptability to real-world scenarios and its value as a decision-support tool in clinical practice.

Environmental determinants of STEMI

This study, leveraging machine learning models integrated with SHAP analysis, elucidates the significant impact of meteorological factors and air pollutants on STEMI onset. The findings are largely consistent with prior research. Specifically, PM\(_{2.5}\) and PM\(_{10}\) are recognized for inducing systemic oxidative stress and inflammatory responses, which contribute to arterial plaque instability and endothelial dysfunction. Furthermore, NO\(_{2}\) and SO\(_{2}\) are implicated in promoting thrombosis and platelet aggregation by enhancing sympathetic nerve activity, vasoconstriction, and arrhythmogenic risk, ultimately triggering STEMI8,40. In addition, air pollutants have been associated with reduced heart rate variability, a precursor to myocardial ischemia18. Short-term ozone exposure may further exacerbate cardiovascular risk by impairing vascular endothelial function, inducing coronary vasoconstriction, triggering systemic inflammation, and destabilizing arterial plaques41.

Meteorological factors also play a pivotal role. For instance, ultraviolet (UV) exposure promotes the release of stored nitric oxide (NO) from the skin into the bloodstream, leading to vasodilation, reductions in blood pressure, and improvements in cardiovascular health. Additionally, vitamin D synthesis induced by UV exposure may confer cardioprotective effects by modulating inflammatory responses, maintaining calcium homeostasis, and enhancing endothelial function42. Other extreme weather-related variables, including temperature, humidity, precipitation, and thunderstorms, have been shown to influence STEMI onset, potentially through mechanisms such as autonomic nervous system dysregulation, heightened vasoconstriction, increased platelet adhesiveness, and inflammatory activation43.

Analysis of lag effects on STEMI

The feature design in this study emphasized the lag effects of meteorological factors and air pollutants, adopting a dynamic analytical approach that overcomes the limitations of traditional statistical models. This approach enables machine learning models to more effectively capture the temporal variations of environmental factors and their influence on disease incidence. Significant lag effects of air pollutants (e.g., PM\(_{2.5}\), SO\(_{2}\)) and meteorological factors were observed, potentially linked to prolonged endothelial dysfunction caused by sustained inflammation and oxidative stress44. These effects may accumulate over days or weeks, increasing the likelihood of STEMI. Interactions between meteorological conditions and pollutants, such as high humidity amplifying particulate toxicity, further highlight the complexity of these relationships.Studies have demonstrated that humid heat significantly increases the risk of cardiovascular and respiratory diseases during the first two days (lag 0-2 days) and remains a notable risk factor from lag 4 to 8 days. Notably, the relative risk associated with humid heat for cardiovascular diseases exceeds that observed when considering temperature effects alone45. Furthermore, short-term exposure to PM\(_{2.5}\) (lag 0-5 days) has been shown to significantly increase the risk of rehospitalization among STEMI survivors46. Similarly, short-term exposure to PM\(_{10}\), NO\(_{2}\), SO\(_{2}\), and CO exerts significant effects on AMI hospitalizations within two days, whereas O\(_{3}\) demonstrates a more pronounced impact at lag 4 days. Among these pollutants, the effects of NO\(_{2}\) and SO\(_{2}\) are particularly notable, suggesting that these pollutants may contribute to adverse cardiovascular outcomes via oxidative stress and inflammatory pathways47.

In contrast to previous studies, our findings indicate that the influence of most meteorological factors and air pollutants on STEMI onset is primarily concentrated after a two-week lag period. This discrepancy may stem from our utilization of machine learning methods, which uncovered the long-term and persistent effects of air pollutants on STEMI onset. There are also related studies emphasizing the cumulative long-term effects of multi-year average PM\(_{2.5}\) concentrations on cardiovascular mortality, rather than short-term exposure effects (e.g., over days or weeks)48. This is consistent with our findings. Previous research has identified temperature as a major risk factor for STEMI, with extreme temperatures significantly contributing to STEMI onset over extended lag periods31,49. However, in our study, recursive feature elimination revealed that temperature was excluded as a significant predictor. This finding underscores the complexity of temperature’s influence on STEMI and suggests that its impact cannot be evaluated in isolation. Instead, it is necessary to consider multiple factors, including regional variability and potential interactions. Previous studies have also reported that short-term (lag 0-7 days) exposure to reduced sunshine duration increases the risk of AMI hospitalization, while prolonged sunshine duration further amplifies this risk. This may be attributed to the biological effects of sunlight exposure, such as the protective effects of ultraviolet (UV) radiation on blood vessels42. This observation aligns with our SHAP analysis results, which suggest that cloud cover and solar radiation collectively influence STEMI onset. Prolonged exposure to excessive solar radiation (e.g., overexposure to UV) may elevate oxidative stress levels in the skin and body, thereby contributing to STEMI risk.

In addition, several other meteorological factors, such as precipitation, wind speed, atmospheric pressure, thunderstorm probability, and the K-index, were found to influence STEMI onset in our study. For instance, precipitation may temporarily reduce particulate matter concentrations, but it can also exacerbate local pollution exposure through resuspension. The lagged effects of precipitation may indirectly increase STEMI risk by influencing particulate matter resuspension and causing short-term fluctuations in air quality. For example, heavy rainfall may lead to a temporary increase in local air pollution due to particulate matter resuspension. Increased wind speed may accelerate heat dissipation, causing body temperature fluctuations and sympathetic nerve activation. The delayed effects of high wind speeds could also be associated with alternating cold and heat, enhanced air circulation, and redistribution of pollutants. Atmospheric pressure fluctuations may affect coronary hemodynamics, thereby delaying the onset of acute cardiovascular events. Indicators of unstable weather, such as thunderstorm probability and the K-index, also demonstrated significant effects, highlighting the potential impact of extreme weather on disease risk. Environmental stressors may trigger innate and adaptive immune responses, leading to the release of pro-inflammatory mediators (e.g., cytokines, chemokines), which exacerbate systemic inflammation50.

Limitations

This study has several limitations that should be acknowledged. First, the analysis was based on data from Harbin, a cold-region city in China, which may limit the generalizability of the findings to other geographic regions with different climates, pollution profiles, and healthcare systems. External validation using datasets from multiple regions would be necessary to confirm the robustness and applicability of the models. Second, while the models incorporated a wide range of meteorological and environmental variables, individual-level health information and socioeconomic factors (e.g., comorbidities, income, lifestyle) were not available in the database. These variables are known to influence cardiovascular risk and, if integrated in future work, could substantially improve model precision and clinical relevance. Third, temporal drift should be considered: changes in air pollution control policies, urbanization, and climate patterns over the 2014–2023 study period may have influenced exposure–outcome relationships. Although temporal indicators (e.g., year, seasonality) were included to adjust for long-term trends, the models primarily capture average associations across the study window, without explicitly testing for policy-era or climate-related shifts. Finally, as an observational study, causal inferences cannot be definitively established despite the interpretability provided by SHAP analysis. Addressing these limitations through external validation, integration of individual-level data, and explicit modeling of temporal heterogeneity would enhance the generalizability and robustness of future research.

Data Availability

The Cold Region Climate and Disease Database is not a publicly accessible database. It is an internal database of the First Affiliated Hospital of Harbin Medical University and is not open to the public. Access to this database has been authorized by the First Affiliated Hospital of Harbin Medical University. If needed, you may contact the first author or corresponding author to request the original data from the database.

References

Reed, G. W., Rossi, J. E. & Cannon, C. P. Acute myocardial infarction. The Lancet 389, 197–210. https://doi.org/10.1016/S0140-6736(17)30007-7 (2017).

Bhatt, D. L., Lopes, R. D. & Harrington, R. A. Diagnosis and treatment of acute coronary syndromes: A review. JAMA 327, 662–675. https://doi.org/10.1001/jama.2022.6185 (2022).

Virani, S. S. et al. Heart disease and stroke statistics-2020 update: A report from the American Heart Association. Circulation 141, e139–e596. https://doi.org/10.1161/CIR.0000000000000757 (2020).

Windecker, S., Bax, J. J., Myat, A., Stone, G. W. & Marber, M. S. Future treatment strategies in st-segment elevation myocardial infarction. The Lancet 382, 644–657. https://doi.org/10.1016/S0140-6736(13)61452-X (2013).

Wienbergen, H. et al. Lifestyle and metabolic risk factors in patients with early-onset myocardial infarction: A case-control study. Eur. J. Prev. Cardiol. 29, 2076–2087. https://doi.org/10.1093/eurjpc/zwac132 (2022).

De Luca, G. et al. Impact of covid-19 pandemic and diabetes on mechanical reperfusion in patients with stemi: Insights from the isacs stemi covid 19 registry. Cardiovasc. Diabetol. 19, 1–13. https://doi.org/10.1186/s12933-020-01196-0 (2020).

Newby, D. E. et al. Expert position paper on air pollution and cardiovascular disease. Eur. Heart J. 36, 83–93. https://doi.org/10.1093/eurheartj/ehu458 (2015).

Claeys, M. J., Rajagopalan, S., Nawrot, T. S. & Brook, R. D. Climate and environmental triggers of acute myocardial infarction. Eur. Heart J. 38, 955–960. https://doi.org/10.1093/eurheartj/ehad105 (2017).

Brook, R. D. et al. Particulate matter air pollution and cardiovascular disease: An update to the scientific statement from the American Heart Association. Circulation 121, 2331–2378. https://doi.org/10.1161/CIR.0b013e3181dbece1 (2010).

Yu, G. et al. Extreme temperature exposure and risks of preterm birth subtypes based on a nationwide survey in China. Environ. Health Perspect. 131, 087009. https://doi.org/10.1289/EHP10831 (2023).

Biondi-Zoccai, G. et al. Impact of environmental pollution and weather changes on the incidence of st-elevation myocardial infarction. Eur. J. Prev. Cardiol. 28, 1501–1507. https://doi.org/10.1177/2047487320928450 (2021).

Hong, Y., Graham, M. M., Rosychuk, R. J., Southern, D. & McMurtry, M. S. The effects of acute atmospheric pressure changes on the occurrence of st-elevation myocardial infarction: A case-crossover study. Can. J. Cardiol. 35, 753–760. https://doi.org/10.1016/j.cjca.2019.02.015 (2019).

Ni, W. et al. Short-term effects of lower air temperature and cold spells on myocardial infarction hospitalizations in Sweden. J. Am. Coll. Cardiol. 84, 1149–1159. https://doi.org/10.1016/j.jacc.2024.07.006 (2024).

Jiang, Y. et al. Cold spells and the onset of acute myocardial infarction: A nationwide case-crossover study in 323 Chinese cities. Environ. Health Perspect. 131, 087016. https://doi.org/10.1289/EHP11841 (2023).

Akbarzadeh, M. A. et al. The association between exposure to air pollutants including pm10, pm2.5, ozone, carbon monoxide, sulfur dioxide, and nitrogen dioxide concentration and the relative risk of developing stemi: A case-crossover design. Environ. Res. 161, 299–303. https://doi.org/10.1016/j.envres.2017.11.020 (2018).

Jiang, Z. et al. Co-exposure to multiple air pollutants, genetic susceptibility, and the risk of myocardial infarction onset: A cohort analysis of the uk biobank participants. Eur. J. Prev. Cardiol. 31, 698–706. https://doi.org/10.1093/eurjpc/zwad384 (2024).

Hopke, P. K. et al. Triggering of myocardial infarction by increased ambient fine particle concentration: Effect modification by source direction. Environ. Res. 142, 374–379. https://doi.org/10.1016/j.envres.2015.06.037 (2015).

Chen, R. et al. Hourly air pollutants and acute coronary syndrome onset in 1.29 million patients. Circulation 145, 1749–1760. https://doi.org/10.1161/CIRCULATIONAHA.121.057179 (2022).

Kuźma, Ł et al. Effect of air pollution exposure on risk of acute coronary syndromes in Poland: A nationwide population-based study (ep-particles study). Lancet Region. Health-Europe https://doi.org/10.1016/j.lanepe.2024.100910 (2024).

Esteva, A. et al. Dermatologist-level classification of skin cancer with deep neural networks. Nature 542, 115–118. https://doi.org/10.1038/nature22985 (2017).

Qiu, H. et al. Electronic health record driven prediction for gestational diabetes mellitus in early pregnancy. Sci. Rep. 7, 16417. https://doi.org/10.1038/s41598-017-16665-y (2017).

Gunčar, G. et al. An application of machine learning to haematological diagnosis. Sci. Rep. 8, 411. https://doi.org/10.1038/s41598-017-18564-8 (2018).

Yan, J. et al. Lightgbm: Accelerated genomically designed crop breeding through ensemble learning. Genome Biol. 22, 1–24. https://doi.org/10.1186/s13059-021-02492-y (2021).

Ke, G. et al. Lightgbm: A highly efficient gradient boosting decision tree. Adv. Neural Inf. Process. Syst. 30, 1–9 (2017).

Bian, Z., Vong, C. M., Wong, P. K. & Wang, S. Fuzzy knn method with adaptive nearest neighbors. IEEE Trans. Cybernet. 52, 5380–5393. https://doi.org/10.1109/TCYB.2020.3031610 (2020).

Ye, Z., Song, P., Zheng, D., Zhang, X. & Wu, J. A naive bayes model on lung adenocarcinoma projection based on tumor microenvironment and weighted gene co-expression network analysis. Infect. Dis. Model. 7, 498–509. https://doi.org/10.1016/j.idm.2022.07.009 (2022).

Zhang, P.-B. & Yang, Z.-X. A novel adaboost framework with robust threshold and structural optimization. IEEE Trans. Cybernet. 48, 64–76. https://doi.org/10.1109/TCYB.2016.2623900 (2016).

Hou, N. et al. Predicting 30-days mortality for mimic-iii patients with sepsis-3: A machine learning approach using xgboost. J. Transl. Med. 18, 1–14. https://doi.org/10.1186/s12967-020-02620-5 (2020).

LaValley, M. P. Logistic regression. Circulation 117, 2395–2399. https://doi.org/10.1161/CIRCULATIONAHA.106.682658 (2008).

Chen, K. et al. Temporal variations in the triggering of myocardial infarction by air temperature in Augsburg, Germany, 1987–2014. Eur. Heart J. 40, 1600–1608. https://doi.org/10.1093/eurheartj/ehz116 (2019).

Ban, J. et al. The effect of high temperature on cause-specific mortality: A multi-county analysis in China. Environ. Int. 106, 19–26. https://doi.org/10.1016/j.envint.2017.05.019 (2017).

Chen, T.-H., Li, X., Zhao, J. & Zhang, K. Impacts of cold weather on all-cause and cause-specific mortality in Texas, 1990–2011. Environ. Pollut. 225, 244–251. https://doi.org/10.1016/j.envpol.2017.03.022 (2017).

Zhu, X. et al. Risks of hospital admissions from a spectrum of causes associated with particulate matter pollution. Sci. Total Environ. 656, 90–100. https://doi.org/10.1016/j.scitotenv.2018.11.240 (2019).

Qiu, H. et al. Attributable risk of hospital admissions for overall and specific mental disorders due to particulate matter pollution: A time-series study in Chengdu, China. Environ. Res. 170, 230–237. https://doi.org/10.1016/j.envres.2018.12.019 (2019).

Qiu, H. et al. Machine learning approaches to predict peak demand days of cardiovascular admissions considering environmental exposure. BMC Med. Inform. Decis. Mak. 20, 1–11. https://doi.org/10.1186/s12911-020-1101-8 (2020).

Chen, G. et al. Attributable risks of emergency hospital visits due to air pollutants in China: A multi-city study. Environ. Pollut. 228, 43–49. https://doi.org/10.1016/j.envpol.2017.05.026 (2017).

Deng, F., Zhao, L., Yu, N., Lin, Y. & Zhang, L. Union with recursive feature elimination: A feature selection framework to improve the classification performance of multicategory causes of death in colorectal cancer. Lab. Invest. 104, 100320. https://doi.org/10.1016/j.labinv.2023.100320 (2024).

DeLong, E. R., DeLong, D. M. & Clarke-Pearson, D. L. Comparing the areas under two or more correlated receiver operating characteristic curves: A nonparametric approach. Biometrics 44, 837–845 (1988).

Wang, K. et al. Interpretable prediction of 3-year all-cause mortality in patients with heart failure caused by coronary heart disease based on machine learning and shap. Comput. Biol. Med. 137, 104813. https://doi.org/10.1016/j.compbiomed.2021.104813 (2021).

Tian, F. et al. Differentiating the effects of air pollution on daily mortality counts and years of life lost in six Chinese megacities. Sci. Total Environ. 827, 154037. https://doi.org/10.1016/j.scitotenv.2022.154037 (2022).

Ruidavets, J.-B. et al. Ozone air pollution is associated with acute myocardial infarction. Circulation 111, 563–569. https://doi.org/10.1161/01.CIR.0000154546.32135.6E (2005).

Chen, Y. et al. Association of sunshine duration with acute myocardial infarction hospital admissions in Beijing, China: A time-series analysis within-summer. Sci. Total Environ. 828, 154528. https://doi.org/10.1016/j.scitotenv.2022.154528 (2022).

Han, Y. et al. Association between synoptic types in Beijing and acute myocardial infarction hospitalizations: A comprehensive analysis of environmental factors. Sci. Total Environ. 934, 173278. https://doi.org/10.1016/j.scitotenv.2024.173278 (2024).

Münzel, T. et al. Effects of gaseous and solid constituents of air pollution on endothelial function. Eur. Heart J. 39, 3543–3550. https://doi.org/10.1093/eurheartj/ehy481 (2018).

Liang, C. et al. The influence of humid heat on morbidity of megacity Shanghai in China. Environ. Int. 183, 108424. https://doi.org/10.1016/j.envint.2024.108424 (2024).

Liu, H. et al. Fine particulate air pollution and hospital admissions and readmissions for acute myocardial infarction in 26 Chinese cities. Chemosphere 192, 282–288. https://doi.org/10.1016/j.chemosphere.2017.10.123 (2018).

Liu, H. et al. Air pollution and hospitalization for acute myocardial infarction in China. Am. J. Cardiol. 120, 753–758. https://doi.org/10.1016/j.amjcard.2017.06.004 (2017).

Chen, H. et al. Ambient fine particulate matter and mortality among survivors of myocardial infarction: Population-based cohort study. Environ. Health Perspect. 124, 1421–1428. https://doi.org/10.1289/EHP185 (2016).

Bai, L. et al. Temperature, hospital admissions and emergency room visits in Lhasa, Tibet: A time-series analysis. Sci. Total Environ. 490, 838–848. https://doi.org/10.1016/j.scitotenv.2014.05.024 (2014).

Bai, L. et al. Exposure to ambient air pollution and the incidence of congestive heart failure and acute myocardial infarction: A population-based study of 5.1 million Canadian adults living in Ontario. Environ. Int. 132, 105004. https://doi.org/10.1016/j.envint.2019.105004 (2019).

Acknowledgements

The authors acknowledge the contribution and collaboration of all those who participated in this study.

Funding

This work was supported by the Key Project of Natural Science Foundation of Heilongjiang Province (ZL2024H002)

Key research and development program of Heilongjiang Province (2023ZX02C10)

Heilongjiang Postdoctoral Research Foundation (LBH-Q20110).

Author information

Authors and Affiliations

Contributions

HX proposed and designed the study. HX and HG performed the experiments and analyzed the data. HX,TS and HG collected the data and performed the statistical analyses. HX and TS wrote the manuscript. TS revised the manuscript. All authors have read and approved the final manuscript.

Corresponding author

Ethics declarations

Ethical Statement

Informed consent was obtained from all subjects. All methods were carried out in accordance with relevant guidelines and regulations.

Ethics approval and consent to participate

This study is approved by Ethics Committee of the First Affiliated Hospital of Harbin Medical University (No.2023138).

Consent for Publication

All authors agree to publication, and there are no permissions needed.

Declaration of conflicting interests

No potential conflicts of interest were disclosed by the author(s) with regard to the research, writing, or publication of this paper.

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Supplementary Information

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Xu, H., Guan, H., Zhang, Y. et al. Machine learning prediction of STEMI incidence with SHAP interpretation of environmental determinants. Sci Rep 15, 40245 (2025). https://doi.org/10.1038/s41598-025-24045-0

Received:

Accepted:

Published:

Version of record:

DOI: https://doi.org/10.1038/s41598-025-24045-0