Abstract

Real-time monitoring of rock stability and effective control of pressure concentration areas are crucial for ensuring the safety of personnel and equipment during mineral resource extraction. Microseismic and blasting signals, as early indicators of rock rupture, can effectively predict potential disasters. This study proposes a binary Harris hawks optimization algorithm with kernel search (bKSHHO) for the recognition of microseismic and blasting signal data. By integrating bKSHHO with a kernel extreme learning machine (KELM), we construct a prediction model, termed bKSHHO-KELM, which predicts microseismic and blasting signals, thereby enabling early warning of rock hazards. Experimental studies validate the optimization capability of the proposed KSHHO algorithm by comparing it with ten peer algorithms using the IEEE CEC 2022 benchmark functions. The bKSHHO-KELM method was then applied to predict microseismic and blasting signals, achieving an accuracy of 95.625%, a recall of 93.964%, a precision of 92.632%, and an F1 score of 0.931. This provides an efficient and accurate early warning solution for microseismic hazards in mine safety management.

Introduction

As underground mineral resources are developed at greater depths, mining operations face increasingly severe challenges. High ground stress, high ground temperature, and complex geological conditions increase both the technical difficulty of mining and the risk of safety hazards1. As a result, dynamic disasters in mines, especially rock bursts, occur frequently and seriously threaten the lives of operators and the normal operation of mining equipment. To address these safety issues, microseismic monitoring has become an indispensable means of underground mine safety monitoring2. Microseismic monitoring enables real-time assessment of rock stability by using precision instruments to collect the elastic wave signals released by a rock mass during fracture or rupture3. Analyzing the characteristics of microseismic signals, in particular waveform, frequency, and energy, allows early warnings to be issued before disasters occur, which supports the safety management of mining operations. However, the application of microseismic monitoring still faces challenges. In particular, microseismic signals closely resemble other geological disturbance signals in waveform and spectral characteristics, which makes their accurate identification and classification a major bottleneck4. Therefore, improving the recognition accuracy of microseismic and blasting vibration signals and developing more accurate signal processing algorithms are important for enhancing the effectiveness and reliability of microseismic monitoring in deep mining.

In recent years, the rise of machine learning (ML) and deep learning has provided new approaches to the classification and prediction of microseismic and blasting signals. For example, Di et al.5 constructed a prediction model for microseismic, acoustic emission, and electromagnetic radiation data based on multiple neural networks. Field verification showed that the model can provide quantitative prediction data for rockburst monitoring. Luo et al.6 proposed the combined hierarchical information model (CHIM-Net) to improve the prediction accuracy of microseismic events in high-energy mining through deep learning. The model improves the accuracy of time, space, and intensity prediction by combining two prediction branch modules through data decomposition and partitioning. Experimental results show that CHIM-Net outperforms other models on multiple evaluation indicators. Basnet et al.7 compared parametric and non-parametric ML models for short-term rockburst risk prediction, using logistic regression and support vector machine (SVM) to evaluate data sets. The results show that the parametric model performs better on normally distributed data and can effectively predict short-term rockburst risk, especially when the amount of data is limited. Shu et al.8 combined the histogram of oriented gradients (HOG) with ML techniques for microseismic waveform recognition. They extracted HOG features from images of event waveforms and evaluated five classifiers, among which SVM achieved the highest accuracy of 97.1%. Goulet et al.9 developed a random forest model to predict seismic response intensity using data from 379 mining development blasts and verified the model on an additional 100 cases. Otaíza et al.10 used an SVM model to classify earthquakes and blasting events recorded by the seismic network of an open-pit copper mine. The experimental results showed that the method achieved very high overall accuracy.

The above studies show that existing models have achieved high performance in microseismic and blasting prediction. However, their prediction accuracy still has room for improvement. A microseismic monitoring system collects a large volume of signals with a wide variety of complex features. Redundant features may cause the prediction model to overfit, while irrelevant features can interfere with the model’s learning process and ultimately reduce its prediction accuracy. It is therefore necessary to perform feature selection (FS) on the collected microseismic and blasting data to extract the key features and improve prediction performance.

Metaheuristic algorithms have been applied to FS tasks in recent years because of their good global search capabilities and their ability to find near-optimal solutions in limited time. These algorithms, such as genetic algorithms (GA)11, differential evolution (DE)12, sine cosine algorithm (SCA)13, particle swarm optimization (PSO)14, Harris hawks optimization (HHO)15, moss growth optimization (MGO)16, artemisinin optimization (AO)17, and polar lights optimization (PLO)18, can efficiently explore complex search spaces to identify the most discriminative feature subsets for prediction tasks. Kwakye et al.19 combined PSO with bald eagle search to improve global search performance and verified the FS performance on 27 classification data sets. Lee et al.20 studied the effectiveness of FS based on harmony search and GA in improving the diagnosis of sarcopenia. Their results show the potential of metaheuristic FS for advancing sarcopenia diagnosis. Beheshti et al.21 proposed a fuzzy transfer function and applied it to binary PSO (FBPSO). They compared FBPSO with binary PSO variants using atomic and composite transfer functions, as well as with several other binary metaheuristic algorithms. The experimental outcomes demonstrated that FBPSO outperformed the other algorithms, achieving the lowest error rates and the highest FS rates. Ali et al.22 combined evolutionary algorithms and local search techniques to propose a machine learning model that can identify network intrusions. The synthetic minority over-sampling technique was used to address data imbalance and improve model accuracy. The proposed method performs well on the CSE-CIC-IDS2018 and KDD CUP 99 datasets and effectively improves intrusion detection. Al-Thanoon et al.23 proposed using the Kruskal-Wallis test and one-way ANOVA to initialize binary metaheuristic algorithms. Experimental results show that these methods outperform the comparison methods in classification accuracy, number of selected features, and running time on high-dimensional datasets. Bagadi et al.24 introduced a robust hybrid metaheuristic approach for FS aimed at enhancing the accuracy of speech emotion recognition (SER). Experimental results on SER datasets show that their approach achieved emotion recognition rates of 94.35% and 96.78%, respectively. Armaghani et al.25 combined three metaheuristic algorithms with SVM to propose efficient hybrid models for rockburst trend prediction. Experimental results on 259 rockburst examples show that these hybrid models are superior to traditional SVM models in both prediction accuracy and generalization ability.

The above studies show that combining metaheuristic algorithms with ML models can effectively improve prediction accuracy. However, in the field of microseismic and blasting signal recognition, few studies have applied metaheuristic algorithms to FS to improve the performance of prediction models. To this end, this study proposes an FS method based on an improved HHO15 for predicting microseismic and blasting events. HHO is a metaheuristic algorithm with robust global search capability and rapid convergence. However, the optimization process of the original HHO is guided mainly by the current best individual and is therefore prone to becoming trapped in local optima. This study introduces a kernel search-based HHO variant (KSHHO), which incorporates kernel functions to enhance the algorithm’s search capability and reduce the likelihood of getting stuck in local optima. The CEC 2022 benchmark functions are used to qualitatively analyze KSHHO, and its performance is compared with 10 state-of-the-art (SOTA) algorithms. A binary version of KSHHO (bKSHHO) is then derived and combined with KELM to build the bKSHHO-KELM model for microseismic and blasting prediction tasks. Experiments were conducted on the microseismic and blasting dataset against 7 classic classifiers, and the results were compared using four evaluation metrics. The main contributions of this study are as follows:

(1) An HHO variant based on a kernel search strategy is proposed. This strategy effectively improves the algorithm’s search capability and helps it avoid local optima.

(2) The bKSHHO-KELM model for microseismic and blasting prediction is proposed to improve the recognition accuracy of microseismic and blasting events.

(3) KSHHO’s optimization performance is evaluated using the CEC 2022 benchmark functions.

(4) Based on the features extracted from microseismic and blasting images, the bKSHHO-KELM model is employed to identify the optimal feature subset, thereby achieving high-precision recognition of microseismic and blasting events.

The subsequent sections are structured as follows: section “Materials and methods” details the microseismic blasting dataset utilized in this study and also introduces the specific implementation details of the original HHO, KSHHO and bKSHHO-KELM in detail. Section “Experiment and results” analyzes the experimental results of KSHHO in optimization experiments and bKSHHO-KELM in prediction tasks. In section “Discussion”, a detailed discussion regarding the limitations of the present study is provided, as well as suggestions for future improvements. Section “Conclusion and future works” summarizes the whole study and gives future prospects.

Materials and methods

Description of microseismic and blasting data

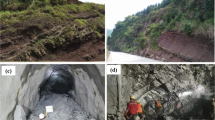

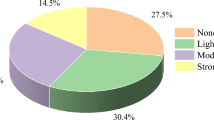

The data used in this study were collected from the online microseismic monitoring system deployed in the Daikoutou mining section of the Jiuqu mining area at the Linglong Gold Mine. This system was jointly developed by Linglong Gold Mine and Central South University and employs IMS microseismic monitoring technology to conduct long-term monitoring of deep ground stress conditions. As mining depth has exceeded 900 m, ground stress has increased significantly, leading to a higher risk of geological hazards such as rock bursts. To obtain reliable microseismic data to support the construction of disaster prediction models, the research team collected a large volume of representative waveform data at several critical levels, including −620 m, −670 m, and −694 m. The raw data are in the form of waveform images captured by the microseismic monitoring system. Each time a microseismic or blasting event occurs, vibration signals are recorded by multiple triaxial sensors and converted into images using the system’s built-in software, TRACE, which also logs the arrival times of P-waves and S-waves, sensor trigger times, and trigger sequences.

Due to the fundamental differences in the generation mechanisms of blasting and microseismic events, their waveform representations exhibit distinct characteristics. Figures 1 and 2 show representative waveform images of a blasting event and a microseismic event, respectively. As shown in Fig. 1, the waveform of the blasting event demonstrates a sharp rise in amplitude concentrated at the front end, followed by rapid attenuation, with no clear S-wave phase, resulting in a waveform that is short and intense. In contrast, the waveform image of the microseismic event, shown in Fig. 2, exhibits a longer duration, more evenly distributed energy, clear transitions between P-wave and S-wave phases, and significantly lower amplitude compared to the blasting event. In this study, by manually screening and interpreting waveform images, the events were accurately classified, and a clearly labeled image dataset of microseismic and blasting events was constructed. Subsequently, color moments (CM)32, gray-level co-occurrence matrix (GLCM)33, and local binary patterns (LBP)34 were employed to extract image features from multiple perspectives, including global statistical properties, spatial structural relationships, and local texture details. These features formed the basis for the training and testing of subsequent machine learning models.

Fig. 1 Waveform image of a blasting event.

Fig. 2 Waveform image of a microseismic event.

Harris hawks optimization

HHO is a population-based optimization algorithm inspired by the cooperative hunting behavior of Harris hawks when pursuing prey, such as rabbits15. By mimicking the natural predation mechanisms of Harris hawks, the algorithm converts complex optimization problems into a dynamic problem-solving framework. At its core, HHO models candidate solutions as Harris hawks that iteratively approach the global optimal solution through cooperative and adaptive search strategies. The HHO process consists of two phases, exploration and exploitation, corresponding to global search and local search. These phases alternate to balance the breadth and depth of the search process. The specific formulas of HHO are listed in Table 1.

In the exploration phase (Eqs. (1)–(3)), the algorithm enhances diversity by deploying a randomly distributed population of Harris hawks to widen the search range. This phase simulates hawks scouting for prey, including guarding random locations or moving to new ones. The main goal of this phase is to improve population diversity and avoid premature convergence to local optima. In the exploitation phase, the algorithm refines the search space to pinpoint the optimal solution with precision. Strategies in this phase mimic various scenarios of Harris hawks attacking prey, including soft besiege (Eqs. (10)–(13)) and hard besiege (Eqs. (4)–(9)), targeting prey with strong or weak escape capabilities, respectively. HHO has been widely applied to various optimization problems, showing excellent performance in benchmark tests26,27 and practical engineering scenarios28,29. Detailed algorithm implementation, including mathematical formulations and performance analysis, is provided in reference15.

Harris hawks optimizer with kernel search

Addressing the challenges of nonlinear function optimization has long been a significant difficulty in modern optimization. Kernel methods, originally developed in machine learning, map data into high-dimensional feature spaces, transforming nonlinear problems—difficult in lower dimensions—into linearly separable problems in higher dimensions.

The kernel search optimization (KSO) algorithm uses a kernel mapping function to transform a multidimensional nonlinear function into a linear form, simplifying the optimization process30,31. Specifically, given an objective function \(y=f\left( x \right)\), \(x=\left( {{x_1},~{x_2},~ \cdots ,~{x_n}} \right)\), KSO applies the kernel mapping function \(u=\varphi \left( x \right)\), \(u=\left( {{u_1},~{u_2},~ \cdots ,~{u_n}} \right)\), to convert it into a linear form.

where w denotes the mapping from the original space vector \(a=\left( {{a_1},~{a_2},~ \cdots ,~{a_n}} \right)\) to a high-dimensional space vector, while \(\varphi \left( a \right)\) represents the normal vector of the hyperplane in the high-dimensional space. The kernel function \(K\left( {a,~x} \right)\) implicitly computes the similarity in the high-dimensional space through the inner product.

To address nonlinear problems more effectively, the KSO algorithm utilizes the radial basis function (RBF) as its kernel. The RBF kernel is widely used due to its strong generalization ability and adaptability to nonlinear data. Its form is given by:
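For reference, the textbook form of the RBF kernel, written with the center \(a\) and width \(\sigma\) used in the surrounding text, is shown below. This is the standard definition rather than a recovery of the authors' own equation, whose exact parameterization (for example, per-dimension widths) may differ.

\[
K\left( a,~x \right)=\exp \left( -\frac{{\left\| x-a \right\|}^{2}}{2{\sigma ^2}} \right)
\]

In this form the kernel value equals 1 when \(x=a\) and decays toward 0 as \(x\) moves away from \(a\), with \(\sigma\) controlling how quickly it decays.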

The KSO algorithm introduces two sets of random vectors \(\left( {x_{1}^{\prime },~x_{2}^{\prime },~ \cdots ,~x_{n}^{\prime }} \right)\) and \(\left( {x_{1}^{{''}},~x_{2}^{{''}},~ \cdots ,~x_{n}^{{''}}} \right)\) to estimate the parameters \(a\) and \(\sigma\) of the kernel function. The algorithm replaces the \(j\)th component of the vector \(\left( {{x_1},~{x_2},~ \cdots ,~{x_n}} \right)\) with the \(j\)th components of the random vectors, yielding the corresponding target values \(y_{j}^{\prime }\) and \(y_{j}^{{''}}\). By comparing the objective function values at different points, the algorithm iteratively adjusts \(a\) and \(\sigma\). The specific computation formula is as follows:

Based on the above formula, the candidate optimal solution \({x_{candi}}\) for minimizing the objective function is determined by the following condition:

where \(UB\) and \(LB\) represent the upper and lower bounds of the search space, respectively.

By employing the concept of kernel mapping to address the inherent limitations of HHO, such as slow convergence and susceptibility to local optima, the position of the current candidate solution is updated after determining the kernel function parameters as follows:

where \(MaxFEs\) denotes the maximum number of evaluations, \(FEs\) represents the current evaluation number, \({X_{candi}}\) denotes the candidate solution obtained through kernel mapping, and \({X_{rabbit}}\left( t \right)\) represents the current optimal solution.

In summary, this study introduces a kernel-search HHO algorithm (KSHHO), with the algorithm’s specific steps outlined in Appendix A.1. The detailed process is as follows:

Step 1: Initialize two key parameters: population size and the maximum number of evaluations. Then, generate random initial position vectors for \(N\) hawks.

Step 2: In each optimization round, calculate the fitness value of each hawk in the current population.

Step 3: Set the position of the hawk with the best fitness value in the current population as the position of the rabbit.

Step 4: Update the energy value \(E\) of the rabbit according to Eq. (3) and randomly generate the jump intensity \(J\). The energy value \(E\) decreases as the number of evaluations increases, simulating the energy expenditure of the prey during its escape.

Step 5: Determine whether the algorithm should enter the exploration or exploitation phase based on the energy value \(E\). When \(\left|E\right|\ge 1\), the algorithm enters the exploration phase, during which the hawks perform wide-range random searches. When \(\left|E\right|<1\), the algorithm enters the exploitation phase, where the hawks focus on searching around the known optimal solution.

Step 6: In the exploration phase, hawks update their positions based on Eq. (1) to expand the search range and explore new regions of the solution space. In the exploitation phase, hawks update their positions based on the encircling strategies in Eqs. (4) to (13), gradually approaching the optimal solution.

Step 7: To prevent convergence to local optima and improve the diversity of candidate solutions, the kernel search method is applied to update the hawk’s position according to Eq. (19).

Step 8: After each iteration, verify whether the stopping criteria are met. If not, repeat Steps 2 to 7.

Step 9: Return the position of the hawk with the best fitness in the current population as the global optimal solution of the algorithm.
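Steps 4 and 5 can be illustrated with a short sketch. It assumes the escaping-energy form of the original HHO paper, \(E=2{E_0}\left( 1-t/T \right)\), with the iteration counter replaced by function evaluations; whether Eq. (3) in Table 1 is parameterized exactly this way is an assumption. The function names and the 0.5 threshold separating soft and hard besiege (also taken from the original HHO) are illustrative, and the kernel search update of Step 7 is not reproduced here.

```python
import random


def escaping_energy(fes, max_fes, e0):
    """Escaping energy E = 2 * E0 * (1 - FEs / MaxFEs).

    Form taken from the original HHO paper, with iterations replaced by
    function evaluations (assumed to match Eq. (3) of the text).
    """
    return 2.0 * e0 * (1.0 - fes / max_fes)


def select_phase(energy):
    """Map |E| to a search phase (Step 5).

    The 0.5 threshold between soft and hard besiege follows the original HHO.
    """
    if abs(energy) >= 1.0:
        return "exploration"
    if abs(energy) >= 0.5:
        return "soft_besiege"
    return "hard_besiege"


rng = random.Random(0)
max_fes = 1000
for fes in (0, 500, 999):
    e0 = rng.uniform(-1.0, 1.0)        # initial energy, redrawn at every update
    jump = 2.0 * (1.0 - rng.random())  # jump intensity J in (0, 2]
    energy = escaping_energy(fes, max_fes, e0)
    print(fes, round(energy, 3), round(jump, 3), select_phase(energy))
```

Because the factor \(1-FEs/MaxFEs\) shrinks toward zero, \(\left|E\right|\) can exceed 1 only early in the run, so exploration becomes progressively less likely as the evaluation budget is consumed.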

Binary Harris hawks optimizer with kernel search

In the preceding section, KSO was used to improve HHO, yielding KSHHO. In this section, an FS model based on KSHHO and KELM (bKSHHO-KELM) is proposed. In the FS problem, 1 or 0 indicates whether a feature is selected or not, so FS is a binary problem. The KSHHO described above, which was designed for continuous problems, must therefore be adapted to discrete problems. To do so, a transfer function (TF) is used to map the updated position of each search individual into the binary search space. The TF and the position correction function are defined as follows:

where \(erf\left( \cdot \right)\) represents the error function and r is a random number in the range [0,1]. Equation (20) computes the transfer value V from the continuous position of a search individual using the error function. Subsequently, V is compared with the random number r to determine the corresponding position in the discrete space.
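A minimal sketch of such a binarization step is given below. It assumes a commonly used V-shaped transfer function, \(V=\left| erf\left( \frac{\sqrt{\pi }}{2}x \right) \right|\), and the rule "set the bit to 1 when r < V"; both the scaling factor and the comparison rule are assumptions, since the exact forms of Eqs. (20) and (21) are not recoverable here. The function names are illustrative.

```python
import math
import random


def transfer(x):
    """V-shaped transfer value in [0, 1] built from the error function.

    The sqrt(pi)/2 scaling is a common choice and an assumption here.
    """
    return abs(math.erf(math.sqrt(math.pi) / 2.0 * x))


def binarize(position, rng):
    """Map a continuous position vector to a 0/1 feature mask.

    Assumed rule: a feature is selected (1) when a uniform random number r
    falls below the transfer value V, otherwise it is dropped (0).
    """
    bits = []
    for x in position:
        v = transfer(x)
        r = rng.random()
        bits.append(1 if r < v else 0)
    return bits


rng = random.Random(0)
bits = binarize([0.0, 10.0, -0.3], rng)
print(bits)
```

With this rule, a component at 0 is never selected and a component far from 0 is almost always selected, so the magnitude of the continuous position acts as a selection probability.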

In the search for the optimal feature subset, a fitness function is used to assess the quality of the current subset. The fitness function is defined in Eq. (22). The objective of FS is to identify a subset of features that best captures the data’s characteristics and exhibits high predictive performance. To achieve this goal, the fitness function consists of two parts: the classification error rate and the number of selected features. Two weight coefficients \({w_1}\) and \({w_2}\) are used to weight these two parts. The search for feature subsets therefore aims to minimize the fitness function.

where \({w_1}\) and \({w_2}\) are 0.99 and 0.01, respectively.
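The weighted fitness described above can be sketched as follows. The sketch assumes the widely used form in which the feature term is the ratio of selected features to total features; Eq. (22) may normalize this term differently. The function name and the penalty for an empty subset are illustrative assumptions.

```python
def fs_fitness(error_rate, mask, w1=0.99, w2=0.01):
    """Weighted FS fitness (lower is better).

    error_rate: classification error of the classifier on the selected features.
    mask: list of 0/1 flags, one per feature.
    Assumed form: w1 * error_rate + w2 * (selected / total).
    """
    n_total = len(mask)
    n_selected = sum(mask)
    if n_selected == 0:
        # Assumption: an empty subset cannot be classified, so penalize it.
        return float("inf")
    return w1 * error_rate + w2 * n_selected / n_total


# Same error rate, fewer features -> lower (better) fitness.
print(fs_fitness(0.10, [1, 0, 1, 0]))
print(fs_fitness(0.10, [1, 1, 1, 1]))
```

With \({w_1}=0.99\) and \({w_2}=0.01\), the error rate dominates the fitness, and the feature count mainly breaks ties between subsets of similar accuracy.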

Appendix A.2 shows the flowchart of bKSHHO-KELM. First, feature extraction methods are applied to the waveform images. This study uses three feature extraction methods, CM32, GLCM33, and LBP34, to extract texture, color, shape, and other features of microseismic and blasting images. Specifically, CM extracts the color features of an image by calculating the statistical moments of its color distribution. GLCM constructs a statistical representation of how often pairs of pixels with given gray levels occur at specified orientations and separations, thereby capturing an image’s texture features. LBP generates a binary code for each pixel by comparing the grayscale values of its neighboring pixels with that of the central pixel. The resulting encoding identifies local features within the original image, including edges, corners, flat regions, and feature points.
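To make the LBP description concrete, the sketch below computes the basic 8-neighbor LBP code for the center of a 3×3 grayscale patch. The clockwise bit ordering starting at the top-left neighbor and the "greater than or equal" comparison are common conventions assumed here; the text does not state which LBP variant was used.

```python
def lbp_code(patch):
    """Return the 8-bit LBP code of the center pixel of a 3x3 patch.

    patch: 3x3 nested list of gray values.
    Each neighbor contributes a 1 bit when its value is >= the center value.
    Bit order (assumption): clockwise, starting at the top-left neighbor.
    """
    center = patch[1][1]
    neighbors = [
        patch[0][0], patch[0][1], patch[0][2],
        patch[1][2],
        patch[2][2], patch[2][1], patch[2][0],
        patch[1][0],
    ]
    code = 0
    for bit, value in enumerate(neighbors):
        if value >= center:
            code |= 1 << bit
    return code


flat = [[5, 5, 5], [5, 5, 5], [5, 5, 5]]   # flat region: every bit is set
peak = [[1, 1, 1], [1, 9, 1], [1, 1, 1]]   # bright spot: no bit is set
print(lbp_code(flat), lbp_code(peak))
```

A histogram of these codes over the whole image is what typically serves as the LBP feature vector.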

Then, the FS process and prediction model training are carried out. The data set is divided into a training set and a test set, and ten-fold cross validation (CV) is adopted to reduce the influence of randomness on model performance. During training, the population is first initialized and the parameters of the bKSHHO algorithm are set. The bKSHHO algorithm then searches for feature subsets by simulating the hunting behavior of Harris hawks together with the kernel search strategy, and evaluates the fitness value of each feature subset (individual). It updates the positions of the individuals based on their fitness values, thereby gradually optimizing the feature subset. The updating process continues until the maximum number of iterations is reached, at which point the current optimal feature subset is output. In the model training process, this study uses KELM35 as the classifier. KELM is a machine learning method based on the extreme learning machine that enhances its expressive power on nonlinear problems by introducing kernel functions.

Based on the selected optimal feature subset, the model is then evaluated using the test set and the corresponding evaluation indicators are calculated. After completing the evaluation of one fold, it is determined whether the training and verification process of all ten folds has been completed. If not all folds have been completed, the training and evaluation process of the next fold will be entered; if all folds have been completed, the average of the evaluation indicators of the 10 folds will be calculated as the final evaluation result of the model.
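The fold loop described above can be sketched in plain Python. The stratified split below is a simple round-robin assignment per class, and `evaluate` is a hypothetical callback standing in for "run FS and train the classifier on the training indices, then score on the test indices"; neither is the authors' implementation.

```python
import random
from collections import defaultdict


def stratified_folds(labels, k, seed=0):
    """Split sample indices into k folds with similar class proportions."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)
    folds = [[] for _ in range(k)]
    for indices in by_class.values():
        rng.shuffle(indices)
        for pos, idx in enumerate(indices):
            folds[pos % k].append(idx)  # deal each class out round-robin
    return folds


def cross_validate(labels, k, evaluate, seed=0):
    """Average a per-fold score; `evaluate(train_idx, test_idx)` is hypothetical."""
    folds = stratified_folds(labels, k, seed)
    scores = []
    for i, test_idx in enumerate(folds):
        train_idx = [idx for j, fold in enumerate(folds) if j != i for idx in fold]
        scores.append(evaluate(train_idx, test_idx))
    return sum(scores) / k, scores


labels = [0] * 30 + [1] * 20   # toy labels, not the study's data
folds = stratified_folds(labels, 10)
mean_score, scores = cross_validate(
    labels, 10, lambda train, test: len(test) / (len(train) + len(test))
)
print(mean_score)
```

Running the feature search inside the callback, on the training indices only, keeps the held-out fold unseen during both FS and classifier training.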

Experiment and results

Optimization capability validation via benchmark functions

Experimental setup

The CEC 2022 benchmark suite36 contains 12 test functions, including one unimodal function (F1), four basic functions (F2–F5), three hybrid functions (F6–F8), and four composition functions (F9–F12). All functions are evaluated in a 20-dimensional space. Detailed descriptions of these test functions are presented in Table 2.

To evaluate its performance, KSHHO is compared with ten SOTA algorithms, including six SOTA metaheuristic algorithm variants (HG_SMA37, ACWOA38, ALCPSO39, CGSCA40, ISNMWOA41, and SRWPSO42) and four other related original metaheuristic algorithms (HHO43, RIME44, FATA45, and PSO14). Table 3 summarizes the main parameter settings of all algorithms. All parameter values of the comparative algorithms were taken directly from their respective original articles.

For fairness of comparison, all algorithms were limited to a maximum of \(10000\times D\) function evaluations, where \(D\) is the problem dimension. Additionally, each algorithm was executed independently 30 times on each test function to reduce experimental errors stemming from the algorithms’ inherent randomness. Differences between algorithms were evaluated by analyzing the average and standard deviation of the best values obtained over the 30 independent runs. The significance of the differences between algorithms was assessed with the Wilcoxon signed-rank test (WSRT)46 and the Friedman test (FRT)47, with the significance level set at 0.05. The symbols “+/-/=” indicate that KSHHO is better than, worse than, or equal to the competing algorithm, respectively, and the corresponding numbers of test functions are reported as “b/w/s”. To visualize the convergence process, convergence curves were plotted. Additionally, box plots were used to represent the overall distribution of the best solutions obtained from the 30 runs.
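The average-rank comparison that underlies a Friedman-style ranking can be sketched as follows. For each test function the algorithms are ranked by their mean result (lower is better, ties share the average rank), and the ranks are then averaged across functions. The algorithm names and numbers below are made-up placeholders, not results from this study.

```python
def average_ranks(results):
    """Mean rank per algorithm across test functions.

    results: dict mapping algorithm name -> list of mean errors, one entry
    per test function (lower is better). Ties receive the average rank.
    """
    names = list(results)
    n_funcs = len(next(iter(results.values())))
    totals = {name: 0.0 for name in names}
    for f in range(n_funcs):
        ordered = sorted(names, key=lambda n: results[n][f])
        i = 0
        while i < len(ordered):
            j = i
            # Extend j over all algorithms tied with position i.
            while j + 1 < len(ordered) and results[ordered[j + 1]][f] == results[ordered[i]][f]:
                j += 1
            shared_rank = (i + j) / 2.0 + 1.0
            for name in ordered[i:j + 1]:
                totals[name] += shared_rank
            i = j + 1
    return {name: totals[name] / n_funcs for name in names}


# Placeholder values for three hypothetical algorithms on three functions.
toy = {
    "alg_a": [1.0, 2.0, 3.0],
    "alg_b": [2.0, 2.0, 1.0],
    "alg_c": [3.0, 5.0, 2.0],
}
ranks = average_ranks(toy)
print(ranks)
```

A lower average rank indicates better overall performance; the Friedman test then assesses whether the differences in these ranks are statistically significant.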

Qualitative analysis of KSHHO

This section will evaluate the optimization performance of KSHHO from three aspects: search history, trajectory result and fitness value. We selected four test functions to present the experimental results. These functions range from simple to complex and cover various optimization characteristics, providing a comprehensive demonstration of the search behavior and adaptability of the proposed algorithm across diverse problem structures. The results are shown in Fig. 3. The first column shows the three-dimensional visualization of the corresponding test function. The second column displays the search history based on x1 and x2, where red dots signify the optimal individual positions and black dots represent the search individuals. The third column illustrates the trajectory of the first search individual, while the fourth column displays the average fitness across all search individuals.

In the early stage of optimization, the trajectory of the search individual fluctuates strongly because the algorithm needs to explore as much of the search space as possible. Correspondingly, the search history plots show that the search individuals cover almost the entire search space. As the number of iterations increases, the trajectory gradually stabilizes, indicating that the algorithm transitions from global exploration to local exploitation, concentrates its search within a limited region, and approaches the global best individual. For the composition function, the average fitness of all search individuals levels off for a period of time and then drops sharply, which shows that KSHHO successfully escapes stagnation in a local optimum.

The above results show that the optimization behavior based on the kernel search strategy in KSHHO can avoid the search individual falling into the local minimum and converge around the global optimum in the final stage.

Fig. 3 Qualitative analysis results of KSHHO. Each row corresponds to one test function \({F}_{i}\). From left to right: (1) the 3D surface plot of \({F}_{i}\), illustrating the function landscape; (2) the search history, where black dots represent visited candidate solutions and the red dot marks the best solution found; (3) the trajectories of agents over iterations, showing convergence behavior in the decision space; and (4) the average fitness curve of all agents, reflecting convergence in the objective space.

Comparison results analysis

Appendix A.3 reports the average value (Avg) and standard deviation (Std) results of all algorithms. The results indicate that KSHHO performs relatively well on the basic and hybrid functions; in particular, the solutions it obtains on F4 and F8 are superior to those obtained by the other compared algorithms. Although KSHHO’s performance on the unimodal and composition functions is less outstanding, it remains competitive among the compared algorithms on these two types of functions. Figure 4 illustrates the final ranking of each algorithm. The proposed KSHHO attained the best average rank of 3.42, placing first, followed by RIME and SRWPSO.

Fig. 4 Average rank of KSHHO and the comparison algorithms based on Avg and Std.

Table 4 shows the WSRT results of KSHHO in comparison with the other algorithms. The results indicate that KSHHO significantly outperforms ACWOA, CGSCA, and FATA across all 10 test functions and is significantly better than HG_SMA, HHO, and PSO on 9 test functions. It is worth noting that, compared with RIME, KSHHO is significantly better on only 4 test functions and significantly worse on 6. Table 5 shows the FRT results. The proposed algorithm achieved an average FRT rank of 3.75, placing first, with RIME second and SRWPSO third. Overall, these results support the strong optimization performance of KSHHO.

Figure 5 plots the convergence curves of all algorithms on the test functions. The steeper the curve, the faster the convergence during the corresponding evaluation interval. Most algorithms, such as HHO, ALCPSO, and ISNMWOA, become trapped prematurely in local optima on most test functions. Taking F4 as an example, although ALCPSO and SRWPSO converge faster at the beginning of the evaluation, ALCPSO falls into a local optimum after about 1000 evaluations and its curve flattens. In contrast, the curve of the proposed algorithm continues to decline and eventually converges to the minimum value. While KSHHO does not achieve the highest convergence accuracy on every test function, its overall convergence performance surpasses that of the other algorithms.

Figure 6 presents box plots of the best values obtained by all algorithms across 30 independent runs. The boxes for KSHHO are more compact, with fewer outliers, which shows that the best values found by KSHHO over the 30 runs vary within a smaller range and are more stable. In contrast, the box lengths of the comparison algorithms vary considerably across test functions, indicating poorer stability. In summary, KSHHO has better optimization capability than the other algorithms.

Convergence curves of KSHHO and comparison algorithms in different test functions.

Box plot of KSHHO and comparison algorithms in different test functions.

Case study on microseismic and blasting phenomena

Experiment setup

This study employs techniques such as CM, GLCM, and LBP to extract the shape features from waveform image data obtained by each sensor in microseismic and blasting signals. To improve the convergence of the model and eliminate the influence of different dimensions, the extracted features were normalized. All feature values were mapped to the range [-1, 1] to ensure equal contribution of each feature during model training. To ensure fairness and objectivity of the results, we use a stratified 10-fold CV method on the entire dataset. The data are randomly partitioned into 10 folds of equal size while preserving the class proportions. In each CV iteration, one fold is held out as the test set, and the remaining nine folds form the training set. After cycling through all ten folds, we report the mean and standard deviation of the evaluation metrics across folds as the final result.
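The preprocessing and validation protocol described above can be sketched as follows. This is an illustrative, standard-library-only example under stated assumptions: the helper names `scale_to_unit_range` and `stratified_folds` are hypothetical, min-max scaling is assumed as the way features are mapped to [-1, 1], and the label counts are toy values rather than the study's data.

```python
import random

def scale_to_unit_range(column):
    """Min-max scale one feature column to [-1, 1] (assumed normalization)."""
    lo, hi = min(column), max(column)
    if hi == lo:  # constant feature: map every value to 0
        return [0.0 for _ in column]
    return [2.0 * (v - lo) / (hi - lo) - 1.0 for v in column]

def stratified_folds(labels, k=10, seed=0):
    """Return k lists of sample indices with near-equal class proportions."""
    rng = random.Random(seed)
    by_class = {}
    for idx, y in enumerate(labels):
        by_class.setdefault(y, []).append(idx)
    folds = [[] for _ in range(k)]
    offset = 0
    for y in sorted(by_class):
        idxs = by_class[y]
        rng.shuffle(idxs)
        # Deal the shuffled indices round-robin so each fold gets its share
        # of this class; the offset keeps the fold sizes balanced overall.
        for pos, idx in enumerate(idxs):
            folds[(offset + pos) % k].append(idx)
        offset += len(idxs)
    return folds

# Toy labels: 60 samples of one class and 40 of the other (hypothetical counts).
labels = [0] * 60 + [1] * 40
folds = stratified_folds(labels, k=10, seed=1)

for test_fold in range(len(folds)):
    test_idx = folds[test_fold]
    train_idx = [i for f, fold in enumerate(folds) if f != test_fold for i in fold]
    # A model would be trained on train_idx and evaluated on test_idx here.
    assert len(train_idx) + len(test_idx) == len(labels)
```

Each fold serves as the test set exactly once, and the per-fold metrics are then summarized by their mean and standard deviation.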

To evaluate the performance of the bKSHHO-KELM method in predicting microseismic and blasting signals, several commonly used classification metrics were applied, including accuracy, recall, precision, and F1 score. The detailed formulas for these metrics are provided in Table 6. The method was compared with several baseline models, including light gradient boosting machine (LightGBM)48, categorical boosting (CatBoost)49, random forest (RandomF)50, adaptive boosting (AdaBoost), fuzzy k-nearest neighbors (FKNN)51, extreme gradient boosting (XGBoost)52, and KELM35, as well as its counterparts, including binary specular reflection learning enhanced HHO with KELM (bHHOSRL-KELM)53, binary PSO with KELM (bPSO-KELM)54, binary moth-flame optimization with KELM (bMFO-KELM)55, binary ant colony optimization with KELM (bACO-KELM)56, bDE-KELM, binary artificial bee colony with KELM (bABC-KELM)57, and bHHO-KELM. Due to the FS technique, the algorithm’s dimensionality was adjusted to correspond to the number of features in the dataset, with a population size of 20. All implementations were executed in the same simulation environment.
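For reference, the four metrics can be computed from the confusion-matrix counts as in the sketch below. It assumes the standard definitions of accuracy, recall, precision, and F1 score and treats one class as positive; which class plays that role, the function name `binary_metrics`, and the toy labels are illustrative assumptions rather than details from the study.

```python
def binary_metrics(y_true, y_pred, positive=1):
    """Accuracy, recall, precision, and F1 for a two-class problem."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    tn = sum(1 for t, p in pairs if t != positive and p != positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    accuracy = (tp + tn) / len(pairs)
    # Guard against empty denominators when a class is never seen or predicted.
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if (precision + recall) else 0.0)
    return {"accuracy": accuracy, "recall": recall,
            "precision": precision, "f1": f1}

# Toy labels (hypothetical): 1 is the positive class, 0 the negative class.
y_true = [1, 1, 1, 0, 0, 0, 0, 1]
y_pred = [1, 1, 0, 0, 0, 1, 0, 1]
scores = binary_metrics(y_true, y_pred)
print(scores)
```

In a cross-validation setting, this function would be called once per fold and the resulting values averaged across folds.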

Simulation results and analysis

This section aims to perform classification prediction using the extracted microseismic and blasting data, evaluating the effectiveness of the proposed bKSHHO-KELM method in real-world seismic forecasting. A comparative experiment was conducted between the bKSHHO-KELM method and traditional classification techniques (e.g., LightGBM, XGBoost, CatBoost, RandomF, FKNN), evaluating its accuracy, recall, precision, and F1 score.

Figure 7 illustrates the performance of the bKSHHO-KELM method and seven other classification models in predicting microseismic and blasting phenomena, with box plots showing the distribution of each evaluation metric. It is evident that the bKSHHO-KELM method outperforms the other models across all metrics, particularly in accuracy and F1 score, demonstrating its effectiveness in predicting microseismic and blasting phenomena. Notably, the LightGBM and XGBoost models also exhibited relatively good performance in predicting microseismic and blasting phenomena. Furthermore, the FKNN model performed relatively poorly, particularly in terms of stability, with significant fluctuations in its predictions.

Table 7 presents the performance metrics of the bKSHHO-KELM method and other models in predicting microseismic and blasting phenomena. The bKSHHO-KELM method clearly outperforms the other models in predicting microseismic and blasting phenomena. Its accuracy (95.625%) and recall (93.964%) are higher than those of LightGBM (94.197% and 90.033%) and XGBoost (94.197% and 90.061%), while its F1 score (0.931) also surpasses both (0.910). Additionally, the bKSHHO-KELM method has a smaller standard deviation, indicating more stable prediction results. In contrast, the FKNN model performed the worst, with an accuracy of only 90.326% and poor stability, as reflected by its larger standard deviation, further highlighting the superiority of bKSHHO-KELM.

Box plots of the results of bKSHHO-KELM with other models predicting microseismic and blasting phenomena.

The above experiments demonstrate that the wrapper-based FS method, which combines the KELM classifier with the proposed bKSHHO algorithm, exhibits significant performance advantages in predicting microseismic and blasting phenomena compared to traditional classification methods, effectively addressing the limitations of individual classifiers. The next experiment compares bKSHHO-KELM with KELM models optimized by peer algorithms, namely bHHOSRL, bPSO, bMFO, bACO, bDE, bABC, and bHHO, to determine whether it also outperforms them in predicting microseismic and blasting phenomena.
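A wrapper-based FS evaluation of the kind discussed here can be sketched as follows: a binary mask selects a feature subset, a KELM is trained on the selected features, and the mask is scored by its classification error and subset size. This is a minimal illustration, not the authors' implementation. The KELM output weights follow the usual closed form (K + I/C)^(-1) T with an RBF kernel; the weighted fitness form, the weight 0.95, the values of `C` and `gamma`, all function names, and the toy data are assumptions made for the example.

```python
import math

def rbf(a, b, gamma):
    """RBF kernel between two feature vectors."""
    return math.exp(-gamma * sum((x - y) ** 2 for x, y in zip(a, b)))

def solve(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [b[i]] for i, row in enumerate(A)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            f = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= f * M[col][c]
    x = [0.0] * n
    for i in range(n - 1, -1, -1):
        s = sum(M[i][c] * x[c] for c in range(i + 1, n))
        x[i] = (M[i][n] - s) / M[i][i]
    return x

def apply_mask(X, mask):
    """Keep only the features whose mask bit is 1."""
    return [[v for v, keep in zip(row, mask) if keep] for row in X]

def kelm_fit(X, t, C=10.0, gamma=1.0):
    """Output weights alpha = (K + I/C)^(-1) t for targets t in {-1, +1}."""
    n = len(X)
    K = [[rbf(X[i], X[j], gamma) + (1.0 / C if i == j else 0.0)
          for j in range(n)] for i in range(n)]
    return solve(K, t)

def kelm_predict(X_train, alpha, x, gamma=1.0):
    score = sum(a * rbf(x, xi, gamma) for a, xi in zip(alpha, X_train))
    return 1 if score >= 0 else -1

def fitness(mask, X_tr, t_tr, X_te, t_te, weight=0.95):
    """Assumed wrapper fitness: weighted error plus relative subset size."""
    if not any(mask):
        return 1.0  # an empty subset cannot classify anything
    A, B = apply_mask(X_tr, mask), apply_mask(X_te, mask)
    alpha = kelm_fit(A, t_tr)
    errors = sum(kelm_predict(A, alpha, x) != t for x, t in zip(B, t_te))
    return weight * errors / len(t_te) + (1 - weight) * sum(mask) / len(mask)

# Toy data (hypothetical): feature 0 separates the classes, feature 1 is noise.
X_tr = [[-1.0, 0.3], [-0.8, -0.7], [-0.9, 0.9],
        [0.9, 0.2], [0.8, -0.6], [1.0, 0.8]]
t_tr = [-1, -1, -1, 1, 1, 1]
X_te = [[-0.7, 0.5], [0.7, -0.5]]
t_te = [-1, 1]

f_one = fitness([1, 0], X_tr, t_tr, X_te, t_te)
f_all = fitness([1, 1], X_tr, t_tr, X_te, t_te)
print(f_one, f_all)
```

In this toy setting both masks classify the held-out samples correctly, so the smaller subset receives the lower (better) fitness; a binary optimizer such as bKSHHO would search over such masks, with the mask length equal to the number of features.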

Figure 8 presents the performance of the bKSHHO-KELM method and various peer methods in predicting microseismic and blasting phenomena. Overall, all methods exhibited good predictive performance, but the bKSHHO-KELM method clearly outperformed the others across several performance metrics. According to the results in Table 8, bKSHHO-KELM outperforms other optimized KELM variants in terms of accuracy (95.625%) and recall (93.964%), leading bHHOSRL-KELM (accuracy 94.783%, recall 92.026%) and bPSO-KELM (accuracy 94.619%, recall 91.685%). Moreover, bKSHHO-KELM also outperforms all other methods in terms of precision (92.632%) and F1 score (0.931), particularly when compared to bABC-KELM (precision 90.526%, F1 score 0.902) and bDE-KELM (precision 90.526%, F1 score 0.908), demonstrating superior balance and overall performance.

Box plots of the results of bKSHHO-KELM with peer methods predicting microseismic and blasting phenomena.

Table 9 presents the detailed experimental results of the bKSHHO-KELM method in predicting microseismic and blasting phenomena. The first column represents the fold numbers of the 10-fold cross-validation, and the second column shows the size of the optimal feature subset obtained by the bKSHHO method. The remaining columns show the accuracy, recall, precision, and F1 score used in the analysis.

From the experimental results, the bKSHHO-KELM method shows stable performance in 10-fold cross-validation, with an average accuracy of 95.625%, recall of 93.964%, precision of 92.632%, and F1 score of 0.931. The fluctuations between folds are minimal, with standard deviations of 0.019, 0.052, 0.043, and 0.028, indicating high stability. Overall, the prediction performance is excellent, demonstrating the effectiveness and reliability of the method in predicting microseismic and blasting phenomena.

Discussion

The proposed bKSHHO-KELM model has shown promising performance in classifying microseismic events and blasting events in underground mining environments. While the experimental results are encouraging, several important aspects deserve further discussion to provide a more comprehensive understanding of the model’s strengths, limitations, and future research directions.

Although the bKSHHO-KELM model achieves high accuracy, recall, precision, and F1 scores while using a relatively small subset of features, several limitations need to be acknowledged. First, the feature selection process is based on bKSHHO, which is sensitive to the randomness of the initial population. This can lead to slight variability in selected feature subsets and classification performance across different runs. To address this, future studies could explore more robust initialization strategies, such as opposition-based learning or adaptive sampling techniques, to improve consistency and solution quality58,59. Second, the optimization performance of KSHHO is limited on unimodal and composition benchmark functions, as demonstrated in our evaluation using the CEC 2022 test suite. This indicates that the algorithm may lack sufficient global exploration or local exploitation in specific problem landscapes. Future research could consider hybridizing bKSHHO with local search techniques or incorporating adaptive parameter control mechanisms to enhance both global and local search capabilities. Moreover, the current model focuses only on classifying two types of events (microseismic and blasting), and its performance on detecting or identifying other rare or unknown seismic events remains unexplored. Expanding the classification framework to include multi-class capabilities60,61 or anomaly detection models62 could improve its applicability in more diverse mining scenarios.

In real underground mining environments, seismic monitoring systems inevitably capture a wide variety of signals beyond the intended microseismic and blasting events. These additional signals may originate from drilling operations, machinery movement, transportation of equipment, and other mechanical systems such as ventilation fans or conveyor belts63,64. Such signals often exhibit relatively stable frequency characteristics or low-frequency continuous vibrations, which can overlap with the time-frequency features of genuine seismic events, leading to potential misclassification by automated systems65. The presence of these non-target signals poses a significant challenge to the robustness and reliability of seismic event classification. Without careful preprocessing and discrimination, the model may incorrectly label operational noise or man-made disturbances as seismic phenomena, thereby reducing its practical effectiveness in safety monitoring. Addressing this issue requires the integration of advanced signal processing techniques capable of capturing subtle distinctions in frequency content and temporal patterns. Approaches such as time-frequency analysis66, wavelet transform67, empirical mode decomposition68, and even deep learning-based feature extraction69,70 could provide richer representations of the signals, enhancing the separability of different source types. Additionally, the adoption of unsupervised learning71 or clustering methods72 could enable the automatic detection of unknown or mixed signal types, further improving the adaptability of the classification system. To strengthen the model’s resilience in real-world deployment, future work should focus on constructing a more comprehensive and representative dataset that encompasses a broader spectrum of seismic and non-seismic sources encountered in mining environments. Such efforts would facilitate the development of more generalizable models capable of maintaining high classification accuracy even under complex and noisy conditions.

In recent years, deep learning methods such as convolutional neural networks, long short-term memory networks, and transformer-based models have been successfully applied to seismic signal classification, fault detection, and event localization73,74,75. These models are capable of learning complex spatial-temporal patterns from raw data, often achieving high performance in large-scale applications. However, deep learning models typically require large labeled datasets and substantial computational resources, and they may lack interpretability, which poses challenges in underground mining environments where real-time decision-making and limited data are common constraints. In contrast, our bKSHHO-KELM model offers the advantage of achieving high prediction performance using fewer features and lower computational cost, making it more suitable for practical deployment in resource-constrained settings. In future work, it may be beneficial to explore hybrid approaches that combine the interpretability and efficiency of bKSHHO-KELM with the powerful feature extraction capabilities of deep learning models.

The proposed bKSHHO-KELM model demonstrates strong potential for microseismic and blasting event classification using compact and efficient feature subsets. This makes it well-suited for deployment in underground mines where computational resources are limited, and timely decision-making is crucial. However, to fully validate the generalizability of the model, further testing is needed across multiple mining sites, geological conditions, and varying noise environments. Future studies could also apply this methodology to other geophysical applications, such as earthquake early warning, structural health monitoring, or landslide detection, where reliable event classification is critical. Additionally, incorporating online learning, adaptive signal separation, and anomaly detection into the current framework could further enhance the system’s robustness and broaden its application scope.

Conclusion and future works

This study introduces a prediction model for microseismic and blasting signals that integrates an HHO variant with KELM. First, a kernel search strategy is employed to strengthen the search efficiency of the original HHO, helping it avoid local optima and enhancing its convergence accuracy. The CEC 2022 benchmark functions are used to evaluate the performance of KSHHO against 10 state-of-the-art algorithms. The experimental results show that the overall optimization performance of the proposed KSHHO is better than that of the comparison algorithms. Subsequently, the bKSHHO-KELM prediction model is proposed, and experimental comparisons with classical classifiers are carried out on microseismic and blasting data. The experimental results show that the proposed bKSHHO-KELM performs well, with accuracy, recall, precision, and F1 score of 95.625%, 93.964%, 92.632%, and 0.931, respectively.

There are several potential directions for future research regarding our proposed method. First, the optimization experiments show that KSHHO is less effective at locating the optimum on unimodal and composition functions. Therefore, more effective strategies could be adopted in the future to improve the optimization ability of the algorithm on these two types of test functions. Second, the quality of the optimal solution produced by the algorithm is affected by the randomness of the initial solution generation. To address this, a novel initialization strategy could be developed to improve the quality of the optimal solutions. Finally, the proposed model can be applied to other application fields to further verify and expand its scope of application.

Data availability

The data involved in this study can be downloaded through https://github.com/Fighttttjay/microseismic_and_blasting.

References

An, H. & Mu, X. Contributions to rock fracture induced by high ground stress in deep mining: a review. Rock Mech. Rock Eng. (2024).

Shirani Faradonbeh, R., Ryoza, M. G. & Sepehri, M. Chap. 12 - Application of artificial intelligence in distinguishing genuine microseismic events from the noise signals in underground mines. In Applications of Artificial Intelligence in Mining and Geotechnical Engineering (eds Nguyen, H. et al.) 197–220 (Elsevier, 2024).

Liu, F. et al. Applications of microseismic monitoring technique in coal mines: a state-of-the-art review. Appl. Sci. 14, 2563. https://doi.org/10.3390/app14041509 (2024).

Liu, S. et al. Deep neural network for distinguishing microseismic signals and blasting vibration signals based on deep learning of spectrum features. J. Earthquake Eng. 28 (14), 3905–3924 (2024).

Di, Y. et al. Predicting microseismic, acoustic emission and electromagnetic radiation data using neural networks. J. Rock Mech. Geotech. Eng. 16 (2), 616–629 (2024).

Luo, H. et al. CHIM-Net: a combined hierarchical information model for predicting time, space and intensity of mining microseismic events. Rock Mech. Rock Eng. (2024).

Basnet, P. M. S., Jin, A. & Mahtab, S. Applying machine learning approach in predicting short-term rockburst risks using microseismic information: a comparison of parametric and non-parametric models. Nat. Hazards (2024).

Shu, H., Dawod, A. Y. & Dong, L. Recognition and classification of microseismic event waveforms based on histogram of oriented gradients and shallow machine learning approach. J. Appl. Geophys. 230, 105551 (2024).

Goulet, A. & Grenon, M. Managing seismic risk associated to development blasting using random forests predictive models based on geologic and structural Rockmass properties. Rock Mech. Rock Eng. 57 (11), 9805–9826 (2024).

Otaíza, J. R. et al. Machine Learning (ML) model for blasting and seismic event classification on Chilean copper mines. In 58th U.S. Rock Mechanics/Geomechanics Symposium (2024).

Goldberg, D. E. & Holland, J. H. Genetic algorithms and machine learning. Mach. Learn. 3 (2), 95–99 (1988).

Storn, R. & Price, K. Differential evolution – a simple and efficient heuristic for global optimization over continuous spaces. J. Global Optim. 11 (4), 341–359 (1997).

Mirjalili, S. A sine cosine algorithm for solving optimization problems. Knowl. Based Syst. 96, 120–133 (2016).

Kennedy, J. & Eberhart, R. Particle swarm optimization. In Proceedings of ICNN’95 - International Conference on Neural Networks (1995).

Heidari, A. A. et al. Harris Hawks optimization: algorithm and applications. Future Generation Comput. Syst. 97, 849–872 (2019).

Zheng, B. L. et al. The moss growth optimization (MGO): concepts and performance. J. Comput. Des. Eng. 11 (5), 184–221 (2024).

Yuan, C. et al. Artemisinin optimization based on malaria therapy: algorithm and applications to medical image segmentation. Displays 2024, 102740 (2024).

Yuan, C. et al. Polar lights optimizer: algorithm and applications in image segmentation and feature selection. Neurocomputing 607, 128427 (2024).

Kwakye, B. D. et al. Particle guided metaheuristic algorithm for global optimization and feature selection problems. Expert Syst. Appl. 248, 123362 (2024).

Lee, J. et al. Metaheuristic-Based feature selection methods for diagnosing sarcopenia with machine learning algorithms. Biomimetics 9, 256. https://doi.org/10.3390/biomimetics9030179 (2024).

Beheshti, Z. A fuzzy transfer function based on the behavior of meta-heuristic algorithm and its application for high-dimensional feature selection problems. Knowl. Based Syst. 284, 111191 (2024).

Ali, A. H. et al. Enhanced intrusion detection based hybrid Meta-heuristic feature selection. In Advances in Computational Collective Intelligence (Springer Nature, 2024).

Al-Thanoon, N. A. & Algamal, Z. Y. Improving Meta-Heuristic algorithms for feature selection in multiclass classification. In Recent Trends and Advances in Artificial Intelligence (Springer Nature Switzerland, 2024).

Bagadi, K. R. & Sivappagari, C. M. R. A robust feature selection method based on meta-heuristic optimization for speech emotion recognition. Evol. Intel. 17 (2), 993–1004 (2024).

Armaghani, D. J. et al. Toward precise long-term rockburst forecasting: a fusion of Svm and cutting-edge meta-heuristic algorithms. Nat. Resour. Res. 33 (5), 2037–2062 (2024).

Peng, L. et al. Hierarchical Harris Hawks optimizer for feature selection. J. Adv. Res. (2023).

Wang, X. et al. Crisscross Harris Hawks optimizer for global tasks and feature selection. J. Bionic Eng. 20 (3), 1153–1174 (2023).

Dev, K. et al. Energy optimization for green communication in IoT using Harris Hawks optimization. IEEE Trans. Green. Commun. Netw. 6 (2), 685–694 (2022).

Ali, A. et al. Harris Hawks optimization-based clustering algorithm for vehicular ad-hoc networks. IEEE Trans. Intell. Transp. Syst. 24 (6), 5822–5841 (2023).

Dong, R. et al. An advanced kernel search optimization for dynamic economic emission dispatch with new energy sources. Int. J. Electr. Power Energy Syst. 160, 110085 (2024).

Dong, R. et al. Boosted kernel search: framework, analysis and case studies on the economic emission dispatch problem. Knowl. Based Syst. 233, 107529 (2021).

Theoharatos, C. et al. Color-based image retrieval using vector quantization and multivariate graph matching. In IEEE International Conference on Image Processing (2005).

Haralick, R. M., Shanmugam, K. & Dinstein, I. Textural features for image classification. IEEE Trans. Syst. Man. Cybern. 30 (6), 610–621 (1973).

Mäenpää, T. & Pietikäinen, M. Texture analysis with local binary patterns. In Handbook of Pattern Recognition and Computer Vision 197–216 (World Scientific, 2005).

Huang, G. B. et al. Extreme learning machine for regression and multiclass classification. IEEE Trans. Syst. Man. Cybernetics Part. B (Cybernetics). 42 (2), 513–529 (2012).

Luo, W. et al. Benchmark Functions for CEC 2022 Competition on Seeking Multiple Optima in Dynamic Environments. Preprint at arXiv:2201.00523 (2022).

Wang, M. et al. Optimizing deep transfer networks with fruit fly optimization for accurate diagnosis of diabetic retinopathy. Appl. Soft Comput. 147, 110782 (2023).

Elhosseini, M. A. et al. Biped robot stability based on an A–C parametric Whale optimization algorithm. J. Comput. Sci. 31, 17–32 (2019).

Chen, W. et al. Particle swarm optimization with an aging leader and challengers. IEEE Trans. Evol. Comput. 17 (2), 241–258 (2013).

Kumar, N. et al. Single Sensor-Based MPPT of partially shaded PV system for battery charging by using cauchy and Gaussian sine cosine optimization. IEEE Trans. Energy Convers. 32 (3), 983–992 (2017).

Peng, L. et al. Information sharing search boosted Whale optimizer with Nelder-Mead simplex for parameter Estimation of photovoltaic models. Energy. Conv. Manag. 270, 116246 (2022).

Li, Y. et al. bSRWPSO-FKNN: a boosted PSO with fuzzy K-nearest neighbor classifier for predicting atopic dermatitis disease. Front. Neuroinform. 2023, 16 (2023).

Heidari, A. A. et al. Harris Hawks optimization: algorithm and applications. Future Gener. Comput. Syst. Int. J. Escience 97, 849–872 (2019).

Su, H. et al. A physics-based optimization. Neurocomputing 532, 183–214 (2023).

Qi, A. et al. An efficient optimization method based on geophysics. Neurocomputing 607, 128289 (2024).

Derrac, J. et al. A practical tutorial on the use of nonparametric statistical tests as a methodology for comparing evolutionary and swarm intelligence algorithms. Swarm Evol. Comput. 1 (1), 3–18 (2011).

García, S. et al. Advanced nonparametric tests for multiple comparisons in the design of experiments in computational intelligence and data mining: experimental analysis of power. Inf. Sci. 180 (10), 2044–2064 (2010).

Ke, G. et al. LightGBM: A Highly Efficient Gradient Boosting Decision Tree (ed. Guyon, I.) (Wiley, 2017).

Prokhorenkova, L. et al. CatBoost: unbiased boosting with categorical features. In Proceedings of the 32nd International Conference on Neural Information Processing Systems. Curran Associates Inc.: Montréal, Canada 6639–6649 (2018).

Breiman, L. Random forests. Mach. Learn. 45 (1), 5–32 (2001).

Keller, J. M., Gray, M. R. & Givens, J. A. A fuzzy K-nearest neighbor algorithm. IEEE Trans. Syst. Man Cybern. 15 (4), 580–585 (1985).

Chen, T. & Guestrin, C. XGBoost: a scalable tree boosting system. In Proceedings of the 22nd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining 785–794 (Association for Computing Machinery, San Francisco, California, USA, 2016).

Hu, J. et al. Detection of COVID-19 severity using blood gas analysis parameters and Harris Hawks optimized extreme learning machine. Comput. Biol. Med. 142, 105166 (2022).

Kennedy, J. & Eberhart, R. Particle swarm optimization. In ICNN’95 - International Conference on Neural Networks (1995).

Mirjalili, S. Moth-flame optimization algorithm: a novel nature-inspired heuristic paradigm. Knowl. Based Syst. 89, 228–249 (2015).

Dorigo, M., Maniezzo, V. & Colorni, A. Ant system: optimization by a colony of cooperating agents. IEEE transactions on systems, man, and cybernetics. Part. B Cybern. IEEE Syst. Man. Cybern. Soc. 26 (1), 29–41 (1996).

Akay, B. & Karaboga, D. A modified artificial bee colony algorithm for real-parameter optimization. Inf. Sci. 192, 120–142 (2012).

Zhao, Y. & Liu, H. Opposition-based learning Harris Hawks optimization with steepest convergence for engineering design problems. J. Supercomputing. 81 (1), 148 (2024).

Serbet, F. & Kaya, T. New comparative approach to multi-level thresholding: chaotically initialized adaptive meta-heuristic optimization methods. Neural Comput. Appl. 37 (14), 8371–8396 (2025).

Antonijevic, M. et al. Intrusion detection in metaverse environment internet of things systems by metaheuristics tuned two level framework. Sci. Rep. 15 (1), 3555 (2025).

Egala, R. & Sairam, M. V. S. Multi-layer stacked residual coordinate termite alate network for multi-class lung diseases detection from chest X-ray images. Appl. Soft Comput. 179, 113393 (2025).

Madhuridevi, L., Sree Rathna, N. V. S. & Lakshmi Metaheuristic assisted hybrid deep classifiers for intrusion detection: a bigdata perspective. Wireless Netw. 31 (2), 1205–1225 (2025).

Wang, J. et al. Spatiotemporal clustering of microseismic signals in mining areas: a case study of the Baoji lead–zinc mine in Shaanxi, China. Eng. Geol. 352, 108057 (2025).

Zhang, X. et al. Enhancing microseismic monitoring through controlled blasting: waveform Analysis, Location, and magnitude determination in Dongtan coal Mine, China. Seismol. Res. Lett. 96 (4), 2273–2284 (2025).

Ma, K. et al. Analysis on time-frequency characteristics and construction response of microseismic events on high and steep rock slopes: a case study of Dongzhuang water conservancy project. Tunn. Undergr. Space Technol. 159, 106499 (2025).

Peng, L. et al. TwoStream-EQT: a microseismic phase picking model combining time and frequency domain inputs. Comput. Geosci. 204, 105991 (2025).

Zhang, Y. et al. CNN-KA: a hybrid P-Phase picking method for microseismic source location in deep mine with complex geological conditions. IEEE Trans. Geosci. Remote Sens. 63, 1–12 (2025).

He, Y. & Kim, Y. S. Application of feature mode decomposition to microseismic signal detection. Artif. Intell. Geosci. 2025 (1), 1–5 (2025).

Lara-Cueva, R. et al. A Deep Learning Framework for the Automatic Classification of Microseisms at Cotopaxi Volcano. In Applications of Computational Intelligence (Springer Nature Switzerland, 2025).

Chen, K. et al. Enhancing microseismic event detection with transunet: a deep learning approach for simultaneous pickings of P-wave and S-wave first arrivals. Artif. Intell. Geosci. 6 (1), 100129 (2025).

Zhou, L. et al. Automated P-wave arrival picking in microseismic monitoring: integrating multi-feature clustering and enhanced AIC-STA/LTA. Measurement 256, 118143 (2025).

Qin, L., Huang, Z. L. & Zhang, J. Identification of weak microseismic signals in hydraulic fracturing using 3D-Unet with ASPP feature fusion. IEEE Trans. Geosci. Remote Sens. 63, 1–10 (2025).

Liu, Q., Chen, L. & Qu, L. Micro-seismic resolution in mining engineering by combining denoising processing and improved CNN algorithm. Appl. Earth Sci. 2025, 25726838251342677 (2025).

Wang, X. & Lv, M. Research on microseismic periodic noise suppression method based on long short-term memory network. Pure. appl. Geophys. 182(1), 107–123 (2025).

Song, D. et al. A multi-scale CNN-Transformer hybrid network for microseismic signal arrival picking: model analysis and engineering application. Eng. Geol. 353, 108109 (2025).

Acknowledgements

This study was funded by the Open Project of the Key Laboratory of Green Mining of Coal Resources of the Ministry of Education in Xinjiang (KLXGY-KA2401). This work was also supported in part by National Natural Science Foundation of China (52204263).

Author information

Contributions

Wei Zhu: Writing – Original Draft, Writing – Review & Editing, Software, Visualization, Investigation, Funding Acquisition. Yuting Bian: Writing – Original Draft, Writing – Review & Editing, Software, Visualization, Investigation, Conceptualization, Methodology, Formal Analysis. Duo Lin: Writing – Review & Editing, Software, Visualization, Investigation. Lei Liu: Writing – Original Draft, Writing – Review & Editing, Software, Visualization, Investigation. Huiling Chen: Conceptualization, Methodology, Formal Analysis, Investigation, Writing – Review & Editing, Supervision, Project administration. Guoxi Liang: Conceptualization, Methodology, Formal Analysis, Investigation, Writing – Review & Editing, Supervision, Project administration.

Ethics declarations

Competing interests

The authors declare no competing interests.

Ethical statement

The manuscript has not been submitted to more than one journal for simultaneous consideration and has not been published elsewhere in any form or language.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Zhu, W., Bian, Y., Lin, D. et al. A prediction model for microseismic signals based on kernel extreme learning machine optimized by Harris Hawks algorithm. Sci Rep 15, 40472 (2025). https://doi.org/10.1038/s41598-025-24211-4

DOI: https://doi.org/10.1038/s41598-025-24211-4