Abstract

The double-step-length procedure presents an inventive approach by incorporating two corrections during each iteration, which improves the robustness of the iterative process. The idea that if one correction fails, the other might still help guide the system in the right direction seems likely to reduce the chance of getting divergence, a common challenge in nonlinear solvers. This article presents a more effective approach to solving systems of nonlinear equations by combining the double-step length iterative method with the Picard-Mann hybrid technique. This combination improves performance. We streamline the process by employing a positive scalar to approximate the Jacobian matrix in Newton’s method, thereby eliminating the need to calculate derivatives. An inexact line search technique was used to determine the values of the two-step lengths. The proposed method converges globally under mild conditions. The numerical tests highlight its efficiency, proving that it is more effective than existing double-direction and length methods. Furthermore, we have effectively implemented this technique in motion control applications, showcasing its practicality and efficacy.

Similar content being viewed by others

Introduction

Nonlinear systems of equations (NSE) play a vital role in a broad range of domains, including physics, engineering, economics, and applied mathematics, especially in solving systems of nonlinear differential equations1,2,3 . These systems arise in scenarios where the relationship between variables cannot be accurately described using linear equations, making them more complex to solve4,5,6. Researchers are actively working to develop efficient and reliable iterative methods for finding approximate solutions to these equations . A typical nonlinear system of equations can be expressed in the following form:

where \(\mathfrak {F}: \mathbb {R}^n \rightarrow \mathbb {R}^n\) is a nonlinear mapping.

These problems can be solved using a variety of iterative strategies. These include methods that do not depend on derivatives7,8,9,10,11,12,13, as well as Newton’s method (NM)14 and quasi-Newton methods (QNM)15,16. Notably, Newton’s method is one of the most effective approaches because it is straightforward to implement and converges rapidly. However, it does require the calculation and storage of the Jacobian matrix (JM) at each iteration. These techniques employ a recursive formula to generate a sequence of points.

where \(\alpha _k\) is the step size, \(d_k\) is the search direction, and the current and the previous iterations are \(s_{k+1}\) and \(s_{k}\), respectively17,18. The direction \(d_k\) of the NM is determined by solving:

Here, \(\mathfrak {F}^{\prime }(s_k)\) is the Jacobian matrix of \(\mathfrak {F}(s_k)\) at \(s_k\). However, one limitation of Newton’s scheme is that it requires the derivative of JM to be computed at each iteration, which may not always be available or may not be precisely determined. In such cases, NM cannot be applied directly.

To solve this problem, researchers created QNM. These methods improve traditional optimisation techniques by replacing the JM or its inverse with a new approximation that updates during each step. This change makes the optimisation process more efficient. In QNM, the search direction is determined by:

The problem (1) may be derived from an unconstrained optimisation problem19. Let \(\mathfrak {h}\) be defined as a norm function represented by the equation:

Consequently, the nonlinear equations problem (1) can be equivalently expressed as the following global optimisation problem:

The NM and QNM are among the classical and efficient algorithms for solving optimization problems. However, their major limitation lies in the requirement for significant matrix storage due to the calculation or estimation of the Jacobian at each iteration step. This drawback makes Newton-type algorithms less suitable for large-scale problems. To address this issue, numerous studies have developed matrix-free methods. One effective matrix-free algorithm is the Double Direction Method (DDM)20, which generates sequences of iteration points via:

where \(a_{k}\) and \(b_{k}\) define the search directions. The main motivation behind the DDM algorithm is that it incorporates two corrections in the formula described by (5). correction If one of the correction schemes fails during the iterative process, the other serves as a backup mechanism to ensure convergence and stability of the system.

Recently, Petrovi\(\acute{\textrm{c}}\) and Stanimirovi\(\acute{\textrm{c}}\)20 introduced a new concept to the unconstrained optimization known as the accelerated double direction approach. This pioneering approach incorporates an acceleration parameter \(\delta _{k} > 0\) to formulate the Hessian matrix approximation, which is expressed as follows:

with \(I\) denoting the identity matrix and \(\bigtriangledown ^{2} \mathfrak {h}(s_{k})\) representing the Hessian of \(\mathfrak {h}(s_{k})\). To improve the robustness of this algorithm, Petrovi\(\acute{c}\) further refine the formula in21 by introducing a double-step length method (DSM) as follows:

where \(\alpha _{k}\) and \(\beta _{k}\) denotes the two independently chosen step lengths, allowing for a more adaptive and flexible approach to convergence. Preliminary results in21 show that this modified algorithm demonstrates superior performance compared to the initial DDM, attaining a reduction in the iteration count required in addition to decrease in processor time.

To further improve the convergence and robustness of the algorithm, Petrovi\(\acute{\textrm{c}}\) et al.22 consider hybridizing the DDM with the Picard-Mann Hybrid Iterative Process (PMHP), presented by Khan23. This hybridization utilizes the strengths of both schemes, which results in improved performance when addressing different benchmark problems. Incorporating the PMHP improves the complexity of the scheme, leading to improvements in both numerical efficiency and convergence of the scheme.

Definition 1.1

The PMHP is characterized by three relations:

with \(T: \Omega \longrightarrow \Omega\) denoting the mapping on a nonempty convex subset \(\Omega\) of a normed space \({\bf E}\). The sequences \(y_k\) and \(s_k\) are follow from the iterative procedure defined in (9) and (8), and \(\{\eta _k\}_{k\ge 0}\) is a sequence of positive values within the interval \((0,1)\).

Building on the hybridization rule introduced in23,24 proposed a double-step length scheme equipped with a convergence procedure. This innovative approach was designed to refine the DSM described in21, thereby overcoming its earlier limitations.

The current literature regarding derivative-free DDMs for solving NSE is notably scarce. This identified gap in research prompted Halilu and Waziri9 to develop a derivative-free methodology that employs a DDM to effectively address the equation presented in (1), as articulated in equation (5). Subsequently, in25, they developed a modified version of the algorithm in9. Their study establishes that the proposed method exhibits global convergence. The new scheme proposed by25 lays foundation for reliable solutions to nonlinear equations without requiring derivatives. Building upon the existing framework, Abdullahi et al.11 developed another modification DDM by revising the original formula in9 and incorporating a conjugate gradient scheme tailored for symmetric nonlinear equations. Their scheme not only retain the derivative-free property of the formula but also facilitated global convergence, which was achieved using the modified line search approach proposed by Li and Fukushima19. In another attempt, Halilu and Waziri26 further modified the DDM discussed in (5) and established global convergence of the new scheme under some mild assumptions. Extensive computational experiments demonstrated the efficiency and robustness of the method. In their insightful studies presented in12 and27, the authors built upon the foundational concepts in equation (6) by introducing the DSM. This innovative method enhances the efficacy of the DDM described in9. Their results are particularly encouraging, indicating that the DSM achieves significantly faster convergence than the previous method. These findings not only highlight the potential of their approach to optimize numerical algorithms but also pave the way for further advancements in this area of research.

Motivated by the concepts presented by Petrovi\(\acute{\textrm{c}}\) in24, this study aims to develop an advanced hybrid DSM, as outlined in12, for addressing the NSE using the PMHP proposed in23. The objective is to enhance both the efficiency and accuracy of identifying solutions to Problem (1).

The contributions from this study are as follows:

-

This paper introduces a new iterative algorithm for systems of nonlinear equations.

-

To develop a derivative-free approach, the Jacobian matrix is approximated using the acceleration parameter.

-

We propose a new algorithm that ensures global convergence.

-

The correction parameter is derived using Picard-Mann iterative schemes to enhance the numerical performance of the proposed method.

-

The proposed method is successfully applied to address issues related to motion control.

The organisation of this paper is outlined as follows: The subsequent section will detail the derivation of the proposed approach. A comprehensive convergence analysis of the suggested algorithm will be presented in “Convergence analysis”. “Numerical experiments” will illustrate various numerical tests and motion control applications of the proposed method. Finally, the article will be concluded in “Conclusion”, while the list of abbreviations is provided in “Abbreviations” section. In this paper, we refer to \(\mathbb {R}^n\) as the \(n\)-dimensional real space, with the notation \(\Vert \cdot \Vert\) representing the Euclidean norm.

Preliminaries and algorithms

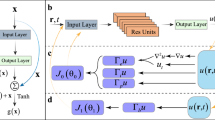

In this section, we present the motivation behind and the algorithm for the proposed method. First, we consider the accelerated DSM for the unconstrained optimisation problem defined in (4). Petrovi\(\acute{\textrm{c}}\)21 finds the minimiser of (4) using the DSM. By approximating the Hessian matrix through the acceleration parameter and employing a second-order Taylor expansion, the author derives the following acceleration parameter:

To enhance the convergence properties and the numerical results of the method in21, Petrovi\(\acute{c}\) in24 hybridised it with the PMHP by Khan23, resulting in the following acceleration parameter.

The enhancement not only improves the algorithm’s efficiency but also ensures greater accuracy in reaching solutions, thereby advancing the overall effectiveness of the method in practical applications. This improvement is made possible by the addition of the correction parameter \(\eta _k\) in the scheme.

Now, let’s refer to DSM in12. The approach created a derivative-free method for solving (1) via

where \(\delta _{k}>0\). The procedure in12 generate the iterates of \(\{s_k\}\) such that \(s_{k+1}=s_{k}+x_k\), where \(x_k=(\alpha _{k}+\beta _{k})a_{k}\) and the direction \(a_{k}\) is given as

The acceleration parameter is derived using a first-order Taylor expansion as follows:

where \(v_{k}=\mathfrak {F}(s_{k+1})-\mathfrak {F}(s_{k})\). The method presented in12 converged globally. However, its numerical performance is unsatisfactory when \(\delta _{k}\) approaches or equals 1. This constraint leads us to suggest a hybrid technique that produces better numerical results. Consequently, inspired by the approach in24, we are interested in hybridising it with the PMHP. Therefore, we define the mapping \(T\) in Definition 1.1 as

Therefore, from (8), (9), and (13), we have the following three relations:

where \(\eta _k\in (0,1)\) is a correction parameter. Consequently, we have the following lemma.

Lemma 2.1

Let the accelerated double-direction scheme be generated by the three term iterative process in (14) and (15), then the proposed scheme is given by

Proof

By substituting (15) into (14), we have

Taking \((\eta _k+1)=\rho _k\in (1,2)\), we have (16). \(\square\)

Now, we are finding the updated value of the acceleration parameter by employing the first-order Taylor expansion of the proposed iteration (16).

where \(\xi \in [s_{k}, s_{k+1}]\) can be expressed as \(\xi =s_{k}+\varrho (s_{k+1}-s_{k}), \quad 0\le \varrho \le 1\). By selecting \(\varrho =1\), we have

From (10) and (17), it not difficult to verify

\(x_k=s_{k+1}-s_{k}\).

By multiplying both sides of Eq. (18) by the transpose of \(v_k^T\), the proposed search direction and acceleration parameter are respectively defined as:

Remark 2.2

In this paper, we adopt a consistent parameterisation by setting \(\rho _k = \rho\) for all \(k \ge 0\). The parameter \(\rho\) is set to be in the interval \((1, 2)\). This selection is based on its influence on the algorithm’s convergence properties.

The proposed method algorithm is specified as follows:

Modified double step size approach (MIDSL).

Convergence analysis

We present how the proposed Algorithm 1 (MIDSL) converges globally in this section. To begin, let’s define the level set.

Assumption 3.1

The following assumptions have been stated:

-

1.

There exists \(s^*\in \mathbb {R}^n\) such that \(\mathfrak {F}(s^*)=0\).

-

2.

The function \(\mathfrak {F}(s)\) is smooth in some neighborhood say Q of \(s^*\) containing \(\Omega\).

-

3.

The Jacobian of \(\mathfrak {F}(s)\) is bounded symmetric and positive definite on Q, i.e., there exist positive constants \(H_1>H_2>0\) such that

$$\begin{aligned} \Vert \mathfrak {F}^\prime (s)\Vert \le H_1, \quad \forall s\in Q, \end{aligned}$$(24)and

$$\begin{aligned} H_2\Vert d\Vert ^{2}\le d^{T}\mathfrak {F}^\prime (s)d, \quad \forall s\in Q, d\in \mathbb {R}^n. \end{aligned}$$(25)

Remark 3.2

We make the following remark:

Assumption 3.1 implies that there exist constants \(H_1>H_2>0\) such that

Since \(\rho ^{-1}\delta _{k}I\) approximates \(\mathfrak {F}^\prime (s_{k})\) along \(x_{k}\), the following assumption can be made.

Assumption 3.3

\(\rho ^{-1}\delta _{k}I\) is a good approximation to \(\mathfrak {F}^\prime (s_{k})\), i.e.,

where, \(\varepsilon \in (0,1)\) is a small quantity.

Lemma 3.4

Let \(\{s_{k}\}\) be produced by the MIDSL algorithm, supposing Assumption 3.3is true. Then \(d_{k}\) is a sufficient descent direction for \(\mathfrak {h}(s_{k})\) at \({x_{k}}\) i.e.,

Proof

From (3), (19), and (28), we have

Applying the Cauchy–Schwarz inequality, we have,

Since \(\epsilon \in (0,1)\), taking \(c=1-\epsilon\), Lemma 3.4 is true.

We can conclude from Lemma 3.4 that the norm function \(\mathfrak {h}(s_{k})\) is a descent along \(d_{k}\), which means that \(\Vert \mathfrak {F}(s_{k+1})\Vert \le \Vert \mathfrak {F}(s_{k})\Vert\) is true. \(\square\)

Lemma 3.5

Let \(\{s_{k}\}\) be produced by the MIDSL algorithm, supposing Assumption 3.3is true. Then \(\{s_{k}\}\subset \Omega\).

Proof

From Lemma 3.4, we have \(\Vert \mathfrak {F}(s_{k+1})\Vert \le \Vert \mathfrak {F}(s_{k})\Vert\). Furthermore, for all k,

This means that \(\{s_{k}\}\subset \Omega\). \(\square\)

Lemma 3.6

Let \(\{s_{k}\}\) be produced by the MIDSL algorithm, supposing Assumption 3.3is true. Then there exists a constant \(H_2>0\) such that for all k,

where \(x_k=(\alpha _k+\beta _k)d_k\).

Proof

From mean-value theorem and (25),

\(v_{k}^Tx_{k}=x_{k}^T(\mathfrak {F}(s_{k+1})-\mathfrak {F}(s_{k}))=x_{k}^{T}F^\prime (\xi )x_{k}\ge H_2\Vert x_{k}\Vert ^{2}\).

Where \(\xi =s_{k}+\kappa (s_{k+1}-s_{k})\) , \(\kappa \in (0,1)\).

As shown in the related Lemma 3.6 and (27), the following inequality is true.

\(\square\)

Lemma 3.7

Let \(\{s_{k}\}\) be produced by the MIDSL algorithm, supposing Assumption 3.3is true. Then we have

and

Proof

From (21) for all \(k>0\)

By summing the above inequality, we have

Based on the level set and the fact that the sequence \(\{\chi _{k}\}\) meets the criterion in (22), it follows that the series \(\displaystyle \sum _{i=0}^{\infty }\Vert (\alpha _i+\beta _i)d_{i}\Vert ^{2}\) converges. This leads to the conclusion in (34). By applying the same reasoning as above, but now considering \(\omega _{1}\Vert (\alpha _k+\beta _k)\mathfrak {F}_{k}\Vert ^{2}\) on the left side, we arrive at (35). \(\square\)

Lemma 3.8

Let \(\{s_{k}\}\) be produced by the MIDSL algorithm, supposing Assumption 3.3is true. Then there exists a constant \(M_1>0\) such that for all\(k>0\),

Proof

From (19), (20), and (27), we have

Taking \(M_1=\frac{\rho \Vert \mathfrak {F}(s_{0})\Vert H_1}{H_2^{2}}\), we have (38). \(\square\)

Theorem 3.9

Let \(\{s_{k}\}\) be produced by the MIDSL algorithm, supposing Assumption 3.3is true. Assume further for all \(k>0\),

where \(\lambda\) is some positive constant. Then

Proof

From Lemma 3.8 we have (38). Also, from (34) and the boundedness of \(\{\Vert d_{k}\Vert \}\), we have

Also, from (19) we have,

Since

Then,

Therefore from (45) we have,

Consequently,

Hence,

The proof is complete. \(\square\)

Numerical experiments

In this section, the study discusses the computational efficiency of the methods on a system of nonlinear equations with application to motion control problems, illustrating the efficiency and relevance of the proposed method real-world situations.

Experiments on system of nonlinear equations

The robustness and efficiency of the new (MIDSL) scheme was assessed by comparing with other existing algorithms including:

-

1.

A double step length method (IDS) presented in12.

-

2.

A modified double-direction method (MDFDD) is presented in25.

The computer codes used in this study were developed using Matlab version 9.4.0 (R2018a) and executed on a PC that features an 8 GB of RAM and 1.80 GHz CPU processor. All three methods run under similar line search method, as detailed in equation (21). Throughout the experiments, the parameters for the IDS and MDFDD algorithms were set according to the settings outlined in12 and25, respectively. For the MIDSL method, the following parameter values were used: \(\omega _{1} = \omega _{2} = 10^{-4}\), \(\rho = 1.2\), \(q = 0.2\), \(r = 0.3\) and \(\chi _{k}=\displaystyle \frac{1}{(k+1)^{2}}\). The program execution terminates if the iteration count exceeds 1000 or if \(\Vert \mathfrak {F}(s_{k})\Vert \le 10^{-5}\). The symbol ’–’ denotes a failure when the number of iterations exceeds 1000 without discovering a point of \(s_k\) that meets the established stopping criterion. To demonstrate the comprehensive numerical experiments conducted with the three methods, we applied these techniques to six benchmark test problems, using various initial points and dimensions ranging from 1000 to 100,000.

The initial guesses presented in Table 1 were utilized in the experiments:

and the subsequent test problems were selected based on the SNE to illustrate the performance of the proposed algorithm.

Problem 17

\(\mathfrak {F}_{1}(s)=s_{1}-e^{\cos \left( \frac{s_{1}+s_{2}}{n+1}\right) }\),

\(\mathfrak {F}_{i}(s)=s_{i}-e^{\cos \left( \frac{s_{i-1}+s_{i}+s_{i+1}}{n+1}\right) }\),

\(\mathfrak {F}_{n}(s)=s_{n}-e^{\cos \left( \frac{s_{n-1}+s_{n}}{n+1}\right) }\), \(i=2,3,\ldots ,n-1\).

Problem 212

\(\mathfrak {F}_{i}(s)=(1-s_{i}^{2})+s_{i}(1+s_{i}s_{n-2}s_{n-1}s_{n})-2\), \(i=1,2,\ldots ,n\).

Problem 39

\(\mathfrak {F}_{i}(s)=s_i-3s_i\left( \frac{\sin s_i}{3}-\frac{33}{50}\right) +2\), \(i=1,2,\ldots ,n\).

Problem 427

\(\mathfrak {F}_{i}(s)=s_{i}- \left( 1- \frac{c}{2n} \sum\nolimits_{j=1}^{n}\frac{\mu _is_j}{\mu _i+\mu _j}\right) ^{-1}\), \(i=1,2,\ldots ,n, \quad j=1,2,\ldots ,n\) with \(c\in [0, 1)\) and \(\mu =\frac{i-0.5}{n}\). (In our experiment we take c =0.1).

Problem 525

\(\mathfrak {F}_{i}(s)=2s_{i}-\sin |s_{i}|\), \(i=1,2,\ldots ,n.\)

Problem 625

\(\mathfrak {F}_{1}(s)=(s_1^2+s_2^2)s_1-1,\)

\(\mathfrak {F}_{i}(s)=(s_{i-1}^2+2s_{i}^2+s_{i+1}^2)s_{i}-1,\)

\(\mathfrak {F}_{n}(s)=(s_{n-1}^2+s_{n}^2)s_n\), \(i=2,3,\cdots ,n-1\).

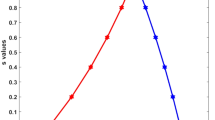

Performance profile based on the number of iterations within a factor \(\tau\) of the best time.

Performance profile based on the function evaluation within a factor \(\tau\) of the best time.

Performance profile with respect to CPU time (in seconds) within a factor \(\tau\) of the best time.

Detailed results of the numerical experiment are reported in Tables 2, 3 and 4. In evaluating these methods, we focused on three key metrics: the number of iterations (Iter), function evaluations (Fun), CPU time in seconds (Time(s)) required for each approach to attain a solution, and the residual norm \(\Vert \mathfrak {F}(s_{k})\Vert\). Below, we now analyse these metrics and their significance.

The number of iterations represents the cycles the algorithm undergoes to find a solution. Typically, a lower number of iterations indicates a more efficient algorithm. The tables show that the MIDSL method requires fewer iterations than both the IDS and MDFDD methods, demonstrating its effectiveness in solving the problem. Function evaluations denote the frequency with which the algorithm computes the value of the objective function during its operation. While the function evaluations for the proposed methods are considerably higher than those for the IDS method, the proposed methods still achieve a solution more rapidly than IDS. CPU time refers to the actual duration required by the algorithm to compute a solution, influenced by the complexity of each iteration and the overall number of iterations. In practical terms, a shorter CPU time signifies a faster and more efficient method. Our findings indicate that the MIDSL consistently outperforms the other methods in terms of CPU time, often arriving at a solution more quickly than both IDS and MDFDD. Moreover, the accuracy of the solution is evaluated through the residual norm, which indicates how well the computed solution meets the equations of the original problem. The tables reveal that all three methods achieve a sufficiently low residual norm, ensuring their effectiveness in solving problem (1). Upon analysing the results, it becomes evident that all three methods seek to address the issue defined by equation (1). Nevertheless, the proposed method not only converges to the answer more rapidly but also requires significantly fewer iterations and less CPU processing time than those used for comparison. In addition, the MIDSL method demonstrates its superiority by successfully solving all the problems that the MDFDD method was unable to tackle. A notable example is found in Problem 4, where the MDFDD method failed to arrive at a solution, while the proposed method delivered effective results. This highlights the reliability and efficiency of the proposed method in addressing the nonlinear problems.

Leveraging the performance profile established by Dolan and Mor\(\acute{\textrm{e}}\)28, we have generated Figures 1, 2 and 3 to provide a detailed overview of the performance and efficiency of three distinct methods. Each figure conveys essential information about the fraction \(P(\tau )\) of problems for which a particular method operates within a factor \(\tau\) of the optimal execution time. From Figs. 1 and 3 , it is clear that the curves representing the MIDSL method outperform those of both the IDS method and the MDFDD method. In most cases, the MIDSL method delivers superior performance. Nevertheless, Fig. 2 reveals instances where the IDS method significantly surpasses the MIDSL method for a specific set of problems, highlighting that while the MIDSL method is generally more effective, there are particular scenarios in which it may not be the optimal choice. Ultimately, these observations suggest that the proposed MIDSL method excels in efficiency, evidenced by a reduction in both the number of iterations required and the CPU time consumed (measured in seconds). The graphical representations in the figures demonstrate that our method is adept at solving large-scale nonlinear systems of equations, establishing its value in practical applications.

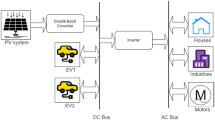

Application of the proposed method in motion control models

In this section, the problems arising from motion control involving three-joint planar robotic manipulators are considered. Since (1) is equivalent to the global optimisation problem (4), we can apply the MIDSL algorithm to solve this problem. The kinematics model provides a detailed framework for understanding the orientation and position of each component within a robotic system. This model represents the dynamics of the various components and their interactions, facilitating a comprehensive analysis of the robot’s overall functionality and performance. The model can be described as follows.

where the kinematics are mapped using the function \(\varphi (\cdot )\), \(a_1=l_1\cos (\theta _1)\), \(a_2=l_2\cos (\theta _1 + \theta _2)\), \(a_3=l_3\cos (\theta _1 + \theta _2+\theta _3)\), \(b_1=l_1\sin (\theta _1)\), \(b_2=l_2\sin (\theta _1 + \theta _2)\), and \(b_3=l_3\cos (\theta _1 + \theta _2+\theta _3)\). Additionally, the \(j^{th}\) rod’s lengths are \(l_1\), \(l_2\) and \(l_3\). The vectors \(\varphi (\theta )\in \mathbb {R}^3\) and \(\theta \in \mathbb {R}^3\) correspond to the end effector position vector and joint angle vector, respectively. Now, at any time interval \(t_k\in [0, t_f]\), the minimisation issue may be addressed as

where \(t_f\) is the end of task duration and \(u_{t_k}\in \mathbb {R}^2\) denotes the vector of the desired path at time \(t_k\). According to29, the rod lengths are chosen to be equal to 1, and a Lissajous curve is tracked to control the end effector as

We set the following parameters for this experiment: \(t_f=10\) seconds, \(\omega _{1} = \omega _{2} = 10^{-4}\), \(\rho = 1.2\), \(q = 0.2\), \(r = 0.3\), and the starting point \(\theta _0=[\theta _1,\theta _2,\theta _3]=[0,\frac{\pi }{3},\frac{\pi }{2}]^T.\) For fairness of comparison, the parameters of IDS and MDFDD are chosen precisely as specified in12 and25, respectively. Furthermore, the work duration [0, 10] is divided into 200 equal portions.

Figures 4, 5, 6 and 7 provide a comprehensive visualisation of the robot trajectories generated by the MIDSL algorithm. The patterns observed in these figures indicate that the MIDSL algorithm not only meets the given task objectives but does so with high efficiency. Specifically, Figures 6 and 7 highlight the precision of three algorithms by showing that the errors incurred in MIDSL and MDFDD are both approximately \(10^{-5}\), whereas one in IDS algorithm is around \(10^{-1}\). This minimal error margin emphasises the reliability and effectiveness of our proposed method in achieving accurate results in robotic trajectory planning. Overall, these findings support the practical applicability of the MIDSL algorithm in real-world problems.

Manipulator trajectories.

End effector trajectory and desired path.

Tracking errors on the horizontal x-axis.

Tracking errors on the vertical y-axis.

Conclusion

This study constructed a hybridized step size algorithm for solving systems of nonlinear equations, utilizing the Picard-Mann hybrid iterative scheme as articulated in23. The proposed scheme was derived by incorporating a correction parameter that improve convergence. An important feature of the proposed algorithm is its derivative-free scheme, which enhance the efficiency of the algorithm for large-scale problems. To evaluate the efficiency of the new scheme, the study conducted extensive numerical experiment on a set of large-scale test problems. Preliminary findings show that the proposed algorithm outperformed other existing algorithms by consistently requiring fewer iteration count and less CPU time. The study further extended the new scheme to solve motion control model with three degrees of freedom, demonstrating its practical applicability. Findings from the application problem demonstrate the method’s effectiveness in real-world situations. Future studies aim to explore the construction of a double-step size algorithm that does not rely on the Lipschitz assumption. This approach if successful will further improve the efficiency and robustness of the method.

Data availability

All data generated or analyzed during this study are contained within the manuscript.

Abbreviations

- NSE:

-

Nonlinear systems of equations

- JM:

-

Jacobian matrix

- NM:

-

Newton’s method

- QNM:

-

Quasi-Newton method

- DDM:

-

Double direction method

- DSM:

-

Double-step length method

- PMHP:

-

Picard–Mann hybrid iterative process

References

Sinha, V. K. & Maroju, P. New development of variational iteration method using quasilinearization method for solving nonlinear problems. Mathematics 11(4), 935. https://doi.org/10.3390/math11040935 (2023).

Sinha, V. K. & Maroju, P. A Numerical Approach to Variational Iteration Method for System of Nonlinear Ordinary Differential Equations. https://doi.org/10.1063/5.0224795 (2024).

Sinha, V. K. & Maroju, P. Numerical algorithm for solving real-life application problems of Lane–Emden type equation. J. Comput. Sci.75. https://doi.org/10.1016/j.jocs.2023.102185 (2024).

Sambas, A. et al. A new hyperjerk system with a half line equilibrium: Multistability, period doubling reversals, antimonotonocity, electronic circuit, FPGA design, and an application to image encryption. IEEE Access 12, 9177–9194 (2024).

Sambas, A., Vaidyanathan, S., Mamat, M., Ws, M. S. & Prastio, R. P. Design, analysis of the Genesio–Tesi chaotic system and its electronic experimental implementation. Int. J. Control Theory Appl. 9(1), 141–149 (2016).

Sambas, A. et al. A 3-D multi-stable system with a peanut-shaped equilibrium curve: Circuit design, FPGA realization, and an application to image encryption. IEEE Access 8, 137116–137132 (2020).

Halilu, A. S. & Waziri, M. Y. A transformed double step length method for solving large-scale systems of nonlinear equations. J. Numer. Math. Stoch. 9, 20–32 (2017).

Awwal, A. M. et al. Derivative-free method based on DFP updating formula for solving convex constrained nonlinear monotone equations and application. AIMS Math. 6(8), 8792–8814 (2021).

Halilu, A. S. & Waziri, M. Y. An improved derivative-free method via double direction approach for solving systems of nonlinear equations. J. Ramanujan Math. Soc. 33, 75–89 (2018).

Halilu, A. S. & Waziri, M. Y. Enhanced matrix-free method via double step length approach for solving systems of nonlinear equations. Int. J. Appl. Math. Res. 6, 147–156 (2017).

Abdullahi, H., Halilu, A. S. & Waziri, M. Y. A modified conjugate gradient method via a double direction approach for solving large-scale symmetric nonlinear systems. J. Num. Math. Stoch. 10(1), 32–44 (2018).

Halilu, A. S. & Waziri, M. Y. Inexact double step length method for solving systems of nonlinear equations. Stat. Optim. Inf. Comput. 8, 165–174 (2020).

Halilu, A. S., Majumder, A., Waziri, M. Y., Ahmed, K. & Awwal, A. M. Motion control of the two joint planar robotic manipulators through accelerated Dai-Liao method for solving system of nonlinear equations. Eng. Comput. https://doi.org/10.1108/EC-06-2021-0317 (2022).

Dennis J.E. & Schnabel R.B. Numerical Methods for Unconstrained Optimization and Nonlinear Equations. (Prentice Hall, 1983).

Waziri, M. Y., Leong, W. J. & Hassan, M. A. Jacobian-free diagonal Newton’s method for solving nonlinear systems with singular Jacobian. Malays. J. Math. Sci. 5, 241–255 (2011).

Yuan, G. & Lu, X. A new backtracking inexact BFGS method for symmetric nonlinear equations. Comput. Math. Appl. 55, 116–129 (2008).

Malik, M., Mamat, M., Abas, S. S. & Sulaiman, I. M. A new spectral conjugate gradient method with descent condition and global convergence property for unconstrained optimization. J. Math. Comput. Sci. 10(5), 2053–2069 (2020).

Mohammed, I. S. et al. A modified nonlinear conjugate gradient method for unconstrained optimization. Appl. Math. Sci. 9(54), 2671–2682 (2015).

Li, D. & Fukushima, M. A global and superlinear convergent Gauss-Newton based BFGS method for symmetric nonlinear equation. SIAM J. Numer. Anal. 37, 152–172 (1999).

Petrovic, M. J. & Stanimirovic, P. S. Accelerated double direction method for solving unconstrained optimization problems. Math. Probl. Eng. 2014, 1–8 (2014).

Petrovic, M. J. An accelerated double step size model in unconstrained optimization. Appl. Math. Comput. 250, 309–319 (2015).

Petrovi\(\acute{\rm c}\), M.J., Stanimirovi\(\acute{\rm c}\), P.S., Kontrec, N., & Mladenovi\(\acute{\rm c}\), J. Hybrid modification of accelerated double direction method. Math. Probl. Eng.8, 1523267. https://doi.org/10.1155/2018/1523267 (2018).

Safeer, H. K. A Picard-Mann hybrid iterative process. Fixed Point Theory Appl. 69, 10 (2013).

Petrović, M. J. Hybridization rule applied on accelerated double step size optimization scheme. Filomat 33(3), 655–665 (2019).

Kiri, A. I., Waziri, M. Y. & Halilu, A. S. Modification of the double direction approach for solving systems of nonlinear equations with application to Chandrasekhar’s integral equation. Iran. J. Numer. Anal. Optim. 12(2), 426–448 (2022).

Halilu, A. S. & Waziri, M. Y. Solving systems of nonlinear equations using improved double direction method. J. Nigerian Math. Soc. 32(2), 287–301 (2020).

Halilu, A. S., Majumder, A., Waziri, M. Y. & Abdullahi, H. Double direction and step length method for solving system of nonlinear equations. Eur. J. Mol. Clin. Med. 7(7), 3899–3913 (2020).

Dolan, E. & Moré, J. Benchmarking optimization software with performance profiles. J. Math. Prog. 91, 201–213 (2002).

Zhang, Y. et al. General four-step discrete-time zeroing and derivative dynamics applied to time-varying nonlinear optimization. J. Comput. Appl. Math. 347, 314–329 (2019).

Acknowledgements

The Researchers would like to thank the Deanship of Graduate Studies and Scientific Research at Qassim University for financial support (QU-APC-2025).

Author information

Authors and Affiliations

Contributions

Conceptualization; A.S.H. and K.A.; Methodology, A.S.H.; and K.A.; Software, M.A.S.; S.M.I.; and B.A.; Validation, M.A.M, A.M.; and A.Z.A.; Formal analysis, S.M.I.; B.A.; and A.Z.A.; Investigation, A.S.H.; K.A.; and A.M.; Resources, M.A.S.; A.Z.A; and B.A.; Data curation, A.S.H.; K.A.; and S.M.I.; Writing—original draft preparation, A.S.H.; M.A.M.; and K.A.; Writing—review and editing, A.M.; S.M.I.; M.A.S.; A.Z.A.; Visualization, B.A.; S.M.I.; and A.S.H.; Supervision, A.M; and M.A.M.; Funding acquisition, M.A.S.; A.Z.A; and B.A. The final version of the manuscript was read by all the authors and agreed to be published in its current form.

Corresponding author

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Halilu, A.S., Mohamed, M.A., Saleh, M.A. et al. Modified iterative double step length model for solving nonlinear systems with application to motion control. Sci Rep 15, 40764 (2025). https://doi.org/10.1038/s41598-025-24537-z

Received:

Accepted:

Published:

Version of record:

DOI: https://doi.org/10.1038/s41598-025-24537-z