Abstract

Understanding regional climate variability is essential for effective climate risk management, particularly in areas with complex terrain such as Yunnan Province, China. Traditional regional climate models (RCMs), such as RegCM, face limitations in predictive accuracy and computational efficiency due to their reliance on nonlinear physical simulations. To address these challenges, this study introduces a comprehensive framework for evaluating regional climate predictions made with artificial intelligence (AI) models. Specifically, we assess the performance of five mainstream AI models (CNN, LSTM, Transformer, CNN-LSTM, and LSTM-Transformer) in predicting three key climate variables: temperature, precipitation, and relative humidity. Daily meteorological observations from 25 national stations (2004–2018) were used, and dimensionality reduction and temporal feature encoding were applied to improve the sequence-based learning models. Model performance was evaluated using the root-mean-square error (RMSE), mean absolute error (MAE), and Pearson correlation coefficient (R). The results show that most AI models outperform RegCM, particularly for temperature and humidity. The LSTM-Transformer achieved the highest accuracy for temperature (RMSE = 0.7410, R = 0.9938) and humidity (RMSE = 3.7054, R = 0.9710), while CNN-LSTM was most effective for precipitation (RMSE = 4.7260, R = 0.8559). These findings highlight the potential of AI for advancing multivariate climate prediction in regions with significant spatial heterogeneity, providing a data-driven basis for more accurate climate risk assessment and early warning applications.


Introduction

Climate change is a major challenge to global sustainable development in the 21st century. Its impacts extend to agricultural production1, energy security2, water resource management3, public health4, and the ecological environment5, and it is increasing the frequency and intensity of extreme disasters such as droughts, floods, and wildfires6,7,8. Yunnan, China's southwestern ecological barrier, lies in the complex terrain of the Yungui Plateau, where diverse topography (mountains, hills, and basins) produces pronounced spatial heterogeneity in the regional climate. According to statistics from the Yunnan Provincial Department of Emergency Management, meteorological disasters such as forest fires, droughts, and floods caused cumulative direct economic losses exceeding 7 billion yuan in Yunnan between 2020 and 2024, exposing the inadequacy of current regional climate prediction capabilities for emergency response.

In the face of escalating climate risks, developing accurate and efficient climate prediction models has become a critical task. Traditional regional climate models (RCMs) are built on physical mechanisms such as the global water vapor cycle, external forcings, and ecosystem processes, and they solve nonlinear partial differential equations (PDEs) expressing energy conservation, thermodynamics, and fluid dynamics9 to simulate changes in atmospheric motion, temperature, humidity, and pressure10,11. However, because RCMs depend heavily on complex equations and physical processes and focus on atmospheric and oceanic physical mechanisms12,13,14, their prediction accuracy and efficiency are insufficient to meet emergency response requirements in complex terrain such as high mountains and high-altitude areas.

In contrast, artificial intelligence (AI) models use machine learning and data-driven methods15 to learn nonlinear relationships between inputs and outputs from historical data16 without relying on physical equations, which gives them the potential to overcome the limitations of traditional models. In recent years, AI models have achieved significant breakthroughs in fields such as finance17, healthcare18, and transportation19,20, and they are increasingly applied to climate prediction. Support vector regression models21, graph neural network models22, convolutional neural network models23, recurrent neural network models24, long short-term memory network models25, and CNN-LSTM models26,27, among others, have shown superior performance in predicting temperature28,29, precipitation30,31,32, and relative humidity33. Among these, CNNs excel at extracting local climate features; LSTMs capture long-term dependencies and periodic changes; Transformers use self-attention to model long sequences; and CNN-LSTM integrates spatial and temporal features, making it suitable for climates in complex terrain. In addition, the LSTM-Transformer model combines the long-term memory of LSTM with the global dependency modeling of the Transformer and has been applied successfully in hydrology34,35, natural language processing, and healthcare36, but it has received little in-depth study in climate prediction. This study therefore systematically evaluates the climate prediction performance of the LSTM-Transformer model using meteorological data from the complex terrain of Yunnan Province.

In summary, this study constructs and evaluates five AI models (CNN, LSTM, Transformer, CNN-LSTM, and LSTM-Transformer) using daily meteorological data from 25 national meteorological stations in Yunnan Province from 2004 to 2018. Using RMSE, MAE, and R, it systematically compares these models with RegCM37, the regional climate model reported to have the best prediction performance in this region. The aim is to reveal the advantages of AI models in capturing the nonlinear characteristics of the local climate and to explore effective pathways and methodological support for climate modeling in complex terrain.

Materials and methods

Research area

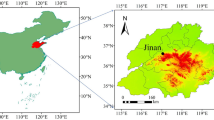

Yunnan Province is located in southwestern China on the Yungui Plateau, between 21°8′ and 29°15′ north latitude and 97°31′ and 106°11′ east longitude (Fig. 1). The terrain slopes from northwest to southeast, with elevations ranging from 76.4 m to 6,740 m. The province has a diverse topography, including mountains, plains, hills, and terraces. The northern part of the province forms the southern edge of the Qinghai-Tibet Plateau, featuring mountain ranges such as the Gaoligong Mountains, the Nujiang Mountains, and the Yunling Mountains. The Nujiang, Lancang, and Jinsha rivers flow southward in parallel, forming the unique "Three Parallel Rivers" landscape. The southern part belongs to the Hengduan Mountains, including the Ailao, Wuliang, and Bangma Mountains, where the terrain gradually descends southward and southwestward into open river valleys and broad valley basins. This geographical location and complex terrain produce pronounced vertical climate zonation and climatic diversity in Yunnan, with climate types ranging from tropical and subtropical to temperate and plateau climates. The hottest month of the year is July, with a mean monthly temperature of 20 °C to 24 °C; the coldest month is January, with a mean monthly temperature of 7 °C to 13 °C. Precipitation is unevenly distributed throughout the year: the wet season, from May to October, accounts for over 85% of annual precipitation, while the dry season, from November to April of the following year, accounts for approximately 15%.

Map of the study area and meteorological stations.

Research data

This study utilizes daily meteorological data from 25 national stations in Yunnan Province, China, obtained from the China National Meteorological Data Center (https://data.cma.cn), spanning the period from 2004 to 2018. The dataset comprises 12 core variables, including average, maximum, and minimum temperature; segmented precipitation (20:00–08:00 and 08:00–20:00) and total precipitation (20:00–20:00); average and minimum relative humidity; wind speed (average, maximum, and extreme); and sunshine duration. To enhance feature representation, additional variables were derived, including daily temperature range, sine and cosine encodings of month and day, and seasonal indicators (spring, summer, autumn, and winter), resulting in a total of 18 features.

After removing missing values and physically implausible outliers, variables measured in 0.1 °C or 0.1 mm units were converted to standard scales (°C and mm). Subsequently, to ensure feature comparability across variables, all data were standardized using Z-score normalization based on the training-set statistics, as shown in Eq. (1):

\[ z = \frac{x - \mu}{\sigma} \tag{1} \]

Where: \(x\) is the original variable, \(z\) is the standardized value, and \(\mu\) and \(\sigma\) are the mean and standard deviation of the training set, respectively.
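Eq. (1), applied with training-set statistics only, can be sketched as follows. This is a minimal illustration assuming the data are held in NumPy arrays with one column per variable and that the population standard deviation (ddof = 0) is used; the paper does not state either detail, and `zscore_fit_apply` is a hypothetical helper name.

```python
import numpy as np

def zscore_fit_apply(train, *others):
    """Standardize each column with the training-set mean and standard deviation.

    The statistics come from `train` only and are reused for the other splits,
    so no information from later periods leaks into preprocessing.
    """
    mu = train.mean(axis=0)
    sigma = train.std(axis=0)                 # population std (ddof=0), an assumption
    sigma = np.where(sigma == 0, 1.0, sigma)  # avoid division by zero for constant columns
    scaled = [(arr - mu) / sigma for arr in (train, *others)]
    return scaled, mu, sigma

# Toy data: three training days and one test day, two variables (e.g. °C and %)
train = np.array([[10.0, 80.0], [12.0, 70.0], [14.0, 90.0]])
test = np.array([[16.0, 85.0]])
(train_z, test_z), mu, sigma = zscore_fit_apply(train, test)
print("training mean:", mu)
print("standardized test day:", test_z)
```

The test day is scaled with the training mean and standard deviation, so its standardized values can fall outside the range seen in training, which is the intended behaviour.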

In this study, seasonal variations were intentionally preserved rather than removed, as they play a crucial role in regional climate variability and fire-weather dynamics in Yunnan. Instead of de-seasonalizing the series, cyclical encodings (month_sin, month_cos, day_sin, day_cos) and a categorical season indicator were introduced to represent intra-annual periodicity. This design enables the subsequent models to capture both the physical seasonality of meteorological variables and their temporal evolution across different fire seasons.
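The cyclical calendar encodings described above can be sketched as follows. The conventions are assumptions, since the paper does not specify them: the month angle uses (month − 1)/12, `day_sin`/`day_cos` are taken to encode the day of year over a 365.25-day cycle, and the season index follows meteorological seasons (spring = March–May, summer = June–August, autumn = September–November, winter = December–February). `calendar_features` is a hypothetical name.

```python
import math
from datetime import date

# Assumed season coding (meteorological seasons); the paper does not state its mapping.
SEASON_OF_MONTH = {12: 3, 1: 3, 2: 3,    # winter
                   3: 0, 4: 0, 5: 0,     # spring
                   6: 1, 7: 1, 8: 1,     # summer
                   9: 2, 10: 2, 11: 2}   # autumn

def calendar_features(d: date) -> dict:
    """Cyclical month/day encodings plus a categorical season index for one date."""
    month_angle = 2 * math.pi * (d.month - 1) / 12
    doy = d.timetuple().tm_yday                  # day of year, 1..366
    day_angle = 2 * math.pi * (doy - 1) / 365.25  # assumed 365.25-day cycle
    return {
        "month_sin": math.sin(month_angle),
        "month_cos": math.cos(month_angle),
        "day_sin": math.sin(day_angle),
        "day_cos": math.cos(day_angle),
        "season": SEASON_OF_MONTH[d.month],
    }

print(calendar_features(date(2010, 1, 1)))
print(calendar_features(date(2010, 12, 31)))
```

Because both the sine and the cosine are kept, 31 December and 1 January map to neighbouring points on the unit circle, which a raw day-of-year number would not do.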

To reduce dimensionality and feature redundancy, principal component analysis (PCA) was applied to the standardized variables. Principal components were retained until their cumulative explained variance reached at least 90%, reducing the feature space from 18 features to 9 components while preserving most of the total information. PCA was conducted via eigendecomposition of the covariance matrix Σ, as formulated in Eq. (2):

\[ \Sigma v_i = \lambda_i v_i \tag{2} \]

Where: \(\Sigma\) is the covariance matrix, \(v_i\) is the \(i\)-th eigenvector, and \(\lambda_i\) is the corresponding eigenvalue. The leading \(k\) principal components whose cumulative variance contribution exceeds 90% are selected to construct the model input subspace.

To better interpret the retained components from a meteorological perspective, we analyzed the feature loadings of the nine principal components. The cumulative variance threshold was set to 90%, which was already exceeded by the first eight components (cumulative variance 90.7%). Ultimately, nine components were retained (the ninth contributed only marginally, 2.5%, but was included to maintain completeness and avoid potential information loss), together explaining 93.2% of the total variance. As summarized in Attachment 2 Table A5, PC1 is dominated by relative humidity and temperature difference (minimum relative humidity, average relative humidity, temperature difference), PC2 primarily reflects wind speed variability (maximum wind speed, extreme wind speed, month cosine), and PC3 is associated with maximum and minimum temperature as well as seasonal effects (maximum temperature, minimum temperature, season). Together, the first three components account for approximately 59% of the total variance, indicating that key meteorological information—humidity, temperature, and wind—is well preserved after dimensionality reduction.
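The component-selection rule can be sketched with NumPy's symmetric eigensolver, solving Eq. (2) directly. This is an illustration on random data, not the authors' code; `pca_eig` is a hypothetical name, and the `n_components` override mimics the study's choice of keeping a ninth component even though eight already exceeded 90%.

```python
import numpy as np

def pca_eig(X, threshold=0.90, n_components=None):
    """PCA via eigendecomposition of the covariance matrix of standardized data X.

    By default keeps the smallest number of components whose cumulative
    explained variance reaches `threshold`; `n_components` overrides this
    (e.g. to keep one extra component, as done in the study).
    """
    cov = np.cov(X, rowvar=False)            # covariance matrix Σ (features x features)
    eigvals, eigvecs = np.linalg.eigh(cov)   # solves Σ v_i = λ_i v_i, ascending order
    order = np.argsort(eigvals)[::-1]        # reorder by decreasing eigenvalue
    eigvals, eigvecs = eigvals[order], eigvecs[:, order]
    ratio = eigvals / eigvals.sum()          # explained-variance ratio per component
    if n_components is None:
        # first index where the cumulative ratio reaches the threshold, plus one
        n_components = int(np.searchsorted(np.cumsum(ratio), threshold)) + 1
    loadings = eigvecs[:, :n_components]     # columns are the retained eigenvectors
    scores = (X - X.mean(axis=0)) @ loadings # projected model inputs
    return scores, loadings, ratio

rng = np.random.default_rng(0)
X = rng.normal(size=(500, 6))                # stand-in for the 18 standardized features
X = (X - X.mean(axis=0)) / X.std(axis=0)
scores, loadings, ratio = pca_eig(X)
print(scores.shape, np.round(np.cumsum(ratio), 3))
```

The columns of `loadings` are what a loading table such as Table A5 summarizes: large absolute entries identify the original variables that dominate each component.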

To explore their meteorological relevance, we further examined the dynamic relationships between the nine principal components and the main meteorological variables (temperature, humidity, and precipitation). Stationarity of all time series was confirmed using the Augmented Dickey–Fuller (ADF) test (p < 0.05), and bidirectional Granger causality tests were performed with a maximum lag of five days. The results (Attachment 2 Table A6) indicate significant bidirectional causality between temperature and several components (particularly PC1, PC2, PC5, and PC7), while humidity is mainly influenced by components associated with relative humidity and wind (PC2, PC3, and PC7). Precipitation shows unidirectional causality from the higher-order components PC4, PC6, and PC8, which capture precipitation accumulation and diurnal-cycle signals; these components complement the leading modes and preserve additional meteorological variability. These relationships support the physical interpretability of the PCA-derived components.
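A Granger causality test compares a restricted autoregression of the target with an unrestricted one that also includes lags of the candidate cause. The sketch below computes the corresponding F statistic with ordinary least squares on synthetic data; it is an illustration, not the authors' implementation, and `granger_f` is a hypothetical name. In practice, `statsmodels.tsa.stattools.grangercausalitytests` and `adfuller` provide this test and the ADF test with p-values.

```python
import numpy as np

def _lagged(series, maxlag):
    """Matrix whose column j-1 holds `series` lagged by j steps (j = 1..maxlag)."""
    n = len(series)
    return np.column_stack([series[maxlag - j:n - j] for j in range(1, maxlag + 1)])

def _rss(A, target):
    """Residual sum of squares of an OLS fit of target on the columns of A."""
    coef = np.linalg.lstsq(A, target, rcond=None)[0]
    return float(np.sum((target - A @ coef) ** 2))

def granger_f(y, x, maxlag=5):
    """F statistic for 'x Granger-causes y' with `maxlag` lags of each series.

    Restricted model:   y_t ~ const + y_{t-1..t-p}
    Unrestricted model: y_t ~ const + y_{t-1..t-p} + x_{t-1..t-p}
    """
    y = np.asarray(y, float)
    x = np.asarray(x, float)
    target = y[maxlag:]
    X_r = np.hstack([np.ones((len(target), 1)), _lagged(y, maxlag)])
    X_u = np.hstack([X_r, _lagged(x, maxlag)])
    rss_r, rss_u = _rss(X_r, target), _rss(X_u, target)
    df_num, df_den = maxlag, len(target) - X_u.shape[1]
    return ((rss_r - rss_u) / df_num) / (rss_u / df_den), df_num, df_den

# Synthetic example: x drives y with a one-day lag, but not the other way round
rng = np.random.default_rng(1)
n = 1000
x = rng.normal(size=n)
y = np.zeros(n)
for t in range(1, n):
    y[t] = 0.3 * y[t - 1] + 0.8 * x[t - 1] + 0.5 * rng.normal()
f_xy, d1, d2 = granger_f(y, x, maxlag=5)   # does x help predict y?
f_yx, _, _ = granger_f(x, y, maxlag=5)     # does y help predict x?
print(f_xy, f_yx, d1, d2)
```

Each F value is compared with the critical value of the F(5, df_den) distribution (about 2.2 at the 5% level for samples of this size); testing both directions gives the "bidirectional" result reported above.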

For sequence modeling, a sliding window with a fixed length of 120 days was used to capture temporal dependencies and seasonal patterns, and each window was used to predict the target variable on the following day. The 120-day window corresponds to the typical duration of a meteorological season in Yunnan, allowing the model to learn both intra- and inter-seasonal dynamics. Data augmentation was implemented using a window-offset strategy with shifts of 0–3 days to increase sample diversity and model robustness. In addition, temporal perturbation was introduced during training by adding small random noise (standard deviation = 0.05) to the time-related features, helping the model adapt to time-series irregularities and seasonal shifts. Finally, the dataset was divided chronologically into training (2004–2015, 80%), validation (2016, 10%), and testing (2017–2018, 10%) subsets, preserving temporal order and avoiding information leakage across time.
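The windowing, chronological split, and time-feature perturbation can be sketched as follows, using random stand-in data. Splitting on the year of the predicted day, and the helper names `sliding_windows`, `chronological_split`, and `jitter_time_features`, are assumptions for illustration; the 0–3-day window-offset augmentation is omitted because its exact scheme is not described.

```python
import numpy as np

def sliding_windows(features, target, window=120):
    """Pair each block of `window` consecutive days with the next day's target."""
    X = np.stack([features[t - window:t] for t in range(window, len(features))])
    y = target[window:]
    return X, y

def chronological_split(X, y, target_years):
    """Split samples by the calendar year of the day being predicted."""
    masks = {
        "train": target_years <= 2015,
        "val": target_years == 2016,
        "test": target_years >= 2017,
    }
    return {name: (X[m], y[m]) for name, m in masks.items()}

def jitter_time_features(X, time_cols, std=0.05, rng=None):
    """Add Gaussian noise to the calendar-encoding columns of a training batch."""
    rng = np.random.default_rng() if rng is None else rng
    X = X.copy()
    X[..., time_cols] += rng.normal(0.0, std, size=X[..., time_cols].shape)
    return X

dates = np.arange("2004-01-01", "2019-01-01", dtype="datetime64[D]")
years = dates.astype("datetime64[Y]").astype(int) + 1970
rng = np.random.default_rng(0)
features = rng.normal(size=(len(dates), 3))   # stand-in for the PCA components
target = rng.normal(size=len(dates))          # stand-in for one target variable
X, y = sliding_windows(features, target)
splits = chronological_split(X, y, years[120:])
print({name: arrs[0].shape for name, arrs in splits.items()})
```

Note that a validation or test window near the start of its period still reads input days from the preceding period; only the predicted days are kept disjoint.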

Methods

Model construction

CNN

Convolutional neural networks (CNNs) consist primarily of convolutional and pooling layers. Convolutional layers support one-dimensional, two-dimensional, or higher-dimensional convolution operations: one-dimensional convolution (1D CNN) has shown significant advantages in feature extraction and time-series analysis38, two-dimensional convolution (2D CNN) is widely used in image processing39, and higher-dimensional convolutions are suited to modeling high-dimensional complex data40. To predict temperature, precipitation, and relative humidity in Yunnan, the model employs a two-layer stacked 1D CNN (without pooling layers) to efficiently extract local temporal features. The number of input channels of the first convolutional layer equals the feature dimension after PCA, and each subsequent layer takes the output of the previous layer as input. All convolutional layers have 64 channels, and each is followed by a ReLU activation function and batch normalization (BN). The convolutional features are flattened and normalized before being fed into two output heads: a classifier and a regressor. The classifier uses a sigmoid activation to determine whether precipitation occurs, while the regressor uses a linear layer and ReLU activation to predict climate values. For precipitation, the model is trained with a joint classification and regression objective (weights of 0.3 and 0.7, respectively), whereas the other variables are trained with regression only. Training uses the AdamW optimizer (initial learning rate 0.001, weight decay 1e-4) with a CosineAnnealingLR learning-rate schedule, up to 200 epochs, a batch size of 64, and early stopping with a patience of 15 epochs; the best model and its metrics are saved. The CNN model structure is shown in Fig. 2.

CNN model structure diagram.

LSTM

LSTM is a special type of recurrent neural network41 that controls the flow of information between different time steps by introducing an input gate (which regulates the activation signals flowing into the memory cells, thereby determining which information is written), a forget gate (which scales the internal state of the memory cells, adaptively selecting whether to forget or retain memories), and an output gate (which regulates the information output from the memory cells to the rest of the network)42,43. This study’s model uses a stacked LSTM network to extract nonlinear dynamic features from time series. The structure consists of two layers of LSTM, each with 128 units, sigmoid and tanh activation functions, and a dropout ratio of 0.5 to suppress overfitting. The final hidden state of the LSTM undergoes flattening and layer normalization before entering the classification and regression branches, with subsequent parameters and settings the same as those of the CNN model. The specific structure is shown in Fig. 3.
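The gating described above can be illustrated with a single LSTM time step in NumPy. This is a generic sketch of the standard LSTM cell, not the trained model: the stacked weight layout (input, forget, candidate, output) is one common convention, and the random weights are placeholders.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def lstm_step(x_t, h_prev, c_prev, W, b):
    """One LSTM time step.

    W has shape (4*H, D+H) and stacks the input, forget, candidate and output
    weights (an assumed ordering); b has shape (4*H,).
    """
    H = h_prev.shape[0]
    z = W @ np.concatenate([x_t, h_prev]) + b
    i = sigmoid(z[0:H])          # input gate: how much new information is written
    f = sigmoid(z[H:2 * H])      # forget gate: how much of the old cell state is kept
    g = np.tanh(z[2 * H:3 * H])  # candidate values to write into the cell
    o = sigmoid(z[3 * H:4 * H])  # output gate: how much of the cell state is exposed
    c_t = f * c_prev + i * g     # updated cell (memory) state
    h_t = o * np.tanh(c_t)       # new hidden state
    return h_t, c_t

rng = np.random.default_rng(0)
D, H, T = 9, 128, 120            # 9 PCA inputs, 128 units, 120-day window
W = rng.normal(scale=0.1, size=(4 * H, D + H))
b = np.zeros(4 * H)
h, c = np.zeros(H), np.zeros(H)
for x_t in rng.normal(size=(T, D)):
    h, c = lstm_step(x_t, h, c, W, b)
print(h.shape, float(np.abs(h).max()))
```

Running the step over all 120 days and keeping the final `h` corresponds to the "final hidden state" passed to the output heads; a second stacked layer would consume the sequence of `h` values from the first.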

LSTM framework structure diagram (\(C_t\) is the cell state (memory state), \(X_t\) is the input information, and \(h_{t-1}\) is the hidden state from the previous time step, derived from the cell state).

Transformer

The Transformer is a deep learning model based on self-attention that consists of an encoder and a decoder44. It uses multi-head attention to weight the correlations between time steps dynamically and supports efficient parallel processing45,46, allowing it to capture complex dependencies in time series regardless of the distance between time steps. The Transformer model in this study adopts a 3-layer encoder and 1-layer decoder architecture, combined with positional encoding, to model long-term dependencies in time series. Each encoder layer has a feedforward network dimension of 128, 8 attention heads, a dropout ratio of 0.1, and a ReLU activation function. The decoder decodes the encoder features using query vectors, and the final features are normalized before entering the classification and regression branches. The subsequent parameters and settings are the same as those of the CNN model. The specific network structure is shown in Fig. 4.
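Sinusoidal positional encoding and multi-head self-attention, the two ingredients named above, can be sketched in NumPy as follows. This is a generic illustration rather than the study's network: the model width `d_model = 64` (8 heads of size 8) and the random projection matrices are hypothetical, since the paper reports only the feedforward size and the number of heads.

```python
import numpy as np

def sinusoidal_positions(seq_len, d_model):
    """Fixed sine/cosine positional encoding from the original Transformer (d_model even)."""
    pos = np.arange(seq_len)[:, None]
    i = np.arange(d_model // 2)[None, :]
    angles = pos / np.power(10000.0, 2 * i / d_model)
    pe = np.zeros((seq_len, d_model))
    pe[:, 0::2] = np.sin(angles)
    pe[:, 1::2] = np.cos(angles)
    return pe

def multi_head_self_attention(X, Wq, Wk, Wv, Wo, n_heads):
    """Scaled dot-product self-attention over a (T, d_model) sequence, split into heads."""
    T, d = X.shape
    dh = d // n_heads
    def split(M):                                     # (T, d) -> (heads, T, dh)
        return M.reshape(T, n_heads, dh).transpose(1, 0, 2)
    Q, K, V = split(X @ Wq), split(X @ Wk), split(X @ Wv)
    scores = Q @ K.transpose(0, 2, 1) / np.sqrt(dh)   # (heads, T, T) similarity of time steps
    scores -= scores.max(axis=-1, keepdims=True)      # numerical stability for softmax
    weights = np.exp(scores)
    weights /= weights.sum(axis=-1, keepdims=True)    # each row sums to 1
    out = (weights @ V).transpose(1, 0, 2).reshape(T, d)
    return out @ Wo, weights

rng = np.random.default_rng(0)
T, d_model, n_heads = 120, 64, 8                      # d_model is a hypothetical choice
X = rng.normal(size=(T, d_model)) + sinusoidal_positions(T, d_model)
Wq, Wk, Wv, Wo = (rng.normal(scale=d_model ** -0.5, size=(d_model, d_model)) for _ in range(4))
out, attn = multi_head_self_attention(X, Wq, Wk, Wv, Wo, n_heads)
print(out.shape, attn.shape)
```

Every row of `attn` is a weighting over all 120 days, so day 1 can influence day 120 in a single layer; this is the distance-independent dependency modeling described in the text.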

Transformer framework structure diagram.

CNN-LSTM

CNN-LSTM is a hybrid model that combines the spatial feature extraction capabilities of CNN38 with the temporal dependency modeling capabilities of LSTM41. It demonstrates outstanding performance in capturing complex climate patterns and improving prediction accuracy27,31. The CNN-LSTM model in this study first uses a two-layer one-dimensional convolutional structure to extract local features from climate time series, with 64 and 32 convolutional channels, a kernel size of 5, and a stride of 2, followed by ReLU activation and batch normalization layers. The extracted feature vectors are then fed into a bidirectional LSTM network, which consists of 128 units, with a Dropout rate of 0.2 and activation functions of sigmoid and tanh, thereby capturing bidirectional dependency features in the time series. Finally, the final time-step features from the LSTM enter the classification and regression branches, with subsequent parameter settings identical to those of the CNN model. The specific block structure is shown in Fig. 5.
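Because both convolutions use a kernel size of 5 and a stride of 2, they shorten the 120-day window before it reaches the BiLSTM. The arithmetic can be traced with the output-length formula documented for PyTorch's `Conv1d`. Padding is not reported in the text, so both no padding and padding of 2 are shown as assumptions; `cnn_lstm_shapes` is a hypothetical helper, and the 9 input channels are the retained PCA components.

```python
def conv1d_out_len(length, kernel_size, stride=1, padding=0, dilation=1):
    """Output length of a 1D convolution (the formula documented for PyTorch's Conv1d)."""
    return (length + 2 * padding - dilation * (kernel_size - 1) - 1) // stride + 1

def cnn_lstm_shapes(window=120, n_inputs=9, padding=0, lstm_units=128):
    """Trace (time steps, channels) through the CNN-LSTM described in the text."""
    shapes = [(window, n_inputs)]
    length = window
    for channels in (64, 32):                 # two Conv1d layers, kernel 5, stride 2
        length = conv1d_out_len(length, kernel_size=5, stride=2, padding=padding)
        shapes.append((length, channels))
    shapes.append((length, 2 * lstm_units))   # bidirectional LSTM concatenates both directions
    return shapes

print(cnn_lstm_shapes())            # no padding (assumed)
print(cnn_lstm_shapes(padding=2))   # padding of 2 (alternative assumption)
```

Without padding, the window shrinks from 120 to 58 and then to 27 steps, so the BiLSTM processes 27 steps of 32 channels and emits 256 features per step; its last step feeds the output heads.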

CNN-LSTM framework structure diagram.

LSTM-Transformer

The LSTM-Transformer model combines the long-term dependency modeling of LSTM42,43 with the global feature extraction of the Transformer, enabling it to capture nonlinear relationships in time series and dynamically adjust feature weights. The LSTM-Transformer model in this study first uses stacked bidirectional LSTM layers to extract bidirectional temporal dependencies from climate sequences; each layer contains 128 units with sigmoid and tanh activation functions. The extracted features are then fed into three Transformer encoder layers (feedforward size 128, 8 attention heads, dropout rate 0.2, and ReLU activation) to capture global dependencies across time scales. Finally, the LSTM output at the last time step and the global feature vector from the Transformer are fused and mapped to the target values via a classifier and a regressor. The subsequent parameter settings are the same as those of the CNN model. The specific block structure is shown in Fig. 6.
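The fusion step can be sketched as follows. The text states only that the last LSTM time step and the Transformer's global feature vector are fused; here, concatenation, mean pooling over the encoder outputs, an encoder width of 256 (matching a 2 × 128 BiLSTM), and the head sizes are all assumptions. `fuse_and_predict` and the entries of `params` are hypothetical.

```python
import numpy as np

def fuse_and_predict(lstm_seq, enc_seq, params):
    """Fuse the last BiLSTM step with a pooled Transformer representation and apply both heads.

    lstm_seq: (T, 2*units) BiLSTM outputs; enc_seq: (T, d_model) encoder outputs.
    Concatenation and mean pooling are assumptions, not the authors' stated design.
    """
    z = np.concatenate([lstm_seq[-1], enc_seq.mean(axis=0)])   # fused feature vector
    logit = params["w_cls"] @ z + params["b_cls"]
    p_rain = 1.0 / (1.0 + np.exp(-logit))                       # classification head (sigmoid)
    hidden = np.maximum(0.0, params["W1"] @ z + params["b1"])   # regression head: linear + ReLU
    value = params["w2"] @ hidden + params["b2"]                # final linear output
    return z, p_rain, value

rng = np.random.default_rng(0)
T, units, d_model, hid = 120, 128, 256, 64                      # d_model and hid are hypothetical
lstm_seq = rng.normal(size=(T, 2 * units))
enc_seq = rng.normal(size=(T, d_model))
dim = 2 * units + d_model
params = {
    "w_cls": rng.normal(scale=0.01, size=dim), "b_cls": 0.0,
    "W1": rng.normal(scale=0.05, size=(hid, dim)), "b1": np.zeros(hid),
    "w2": rng.normal(scale=0.05, size=hid), "b2": 0.0,
}
z, p_rain, value = fuse_and_predict(lstm_seq, enc_seq, params)
print(z.shape, float(p_rain), float(value))
```

Keeping the last LSTM step preserves the most recent local state, while the pooled encoder output summarizes the whole 120-day window; concatenating them lets both heads draw on each.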

LSTM-Transformer framework structure diagram.

RegCM

RegCM simulates the climate system through physically based mechanisms, and its configuration must integrate the geographical characteristics of the study area with multi-scale parameterization schemes. This study follows the CORDEX-EA Phase I experimental framework47,48, which has been extensively validated and widely applied in previous studies over Yunnan and Southwest China; using the Phase I configuration ensures comparability and methodological continuity with existing regional climate assessments. The model is tailored to the complex topography of Yunnan Province, and the configuration proceeds as follows. First, the boundary conditions and input data are specified, including ERA-Interim reanalysis data (initial and lateral boundary conditions), NOAA OISST sea surface temperature, and global baseline data such as USGS topography and MODIS land cover. Second, the simulation domain is defined, centered on the geographical center of Yunnan Province (25°30′N, 101°36′E), with a horizontal resolution of 10 km, a buffer zone of 10 grid cells, and 18 σ levels in the vertical. The dynamical and physical parameterization schemes include a non-hydrostatic dynamical core, MIT-Emanuel cumulus convection, BATS land surface processes, the Holtslag planetary boundary layer scheme, and the CCM3 radiation scheme. The domain uses the Lambert projection. The simulation period spans January 1, 2003, to December 31, 2018, with 2003 designated as the spin-up period. Model outputs include four types of files: ATM (atmospheric state), SRF (surface meteorological data), RAD (radiative fluxes), and CHE (model end state). Post-processing is implemented using the netCDF library, GrADS, the R language, and the NCAR Command Language.

To ensure comparability between RegCM and the AI-based prediction models, the gridded RegCM outputs were bilinearly interpolated to the locations of the 25 national meteorological stations in Yunnan Province. Consistent with reference37, no additional bias correction was applied, as the interpolated RegCM results effectively captured the major temporal patterns and seasonal variations across the province. Nevertheless, it is recognized that model biases may still exist due to local-scale processes and topographic heterogeneity. Future research will incorporate bias-correction techniques, such as quantile mapping or empirical-statistical adjustment, to further refine RegCM’s regional performance and enhance its comparability with data-driven models. The model framework is illustrated in Fig. 7.
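Bilinear interpolation from a gridded field to a station location can be sketched as follows. This assumes the field lies on a regular rectilinear grid with ascending axes; RegCM's native grid is regular in projected Lambert coordinates rather than in latitude/longitude, so in practice the station coordinates would first be projected the same way, or the output regridded. `bilinear_at_point` and the grid below are hypothetical.

```python
import numpy as np

def bilinear_at_point(xs, ys, field, x0, y0):
    """Bilinearly interpolate field[..., j, i] (ascending axes xs for i, ys for j) at (x0, y0).

    Leading dimensions (e.g. time) are carried through, so one call returns a station time series.
    Points outside the grid are linearly extrapolated from the edge cell.
    """
    i = int(np.clip(np.searchsorted(xs, x0) - 1, 0, len(xs) - 2))
    j = int(np.clip(np.searchsorted(ys, y0) - 1, 0, len(ys) - 2))
    tx = (x0 - xs[i]) / (xs[i + 1] - xs[i])    # fractional position inside the cell
    ty = (y0 - ys[j]) / (ys[j + 1] - ys[j])
    f00, f10 = field[..., j, i], field[..., j, i + 1]
    f01, f11 = field[..., j + 1, i], field[..., j + 1, i + 1]
    return ((1 - tx) * (1 - ty) * f00 + tx * (1 - ty) * f10
            + (1 - tx) * ty * f01 + tx * ty * f11)

xs = np.arange(97.0, 107.0, 0.5)                  # hypothetical regular grid axes
ys = np.arange(21.0, 30.0, 0.5)
field = 2 * xs[None, :] + 3 * ys[:, None] + 1     # linear test field, shape (ny, nx)
print(bilinear_at_point(xs, ys, field, 101.6, 25.5))
```

Bilinear interpolation reproduces any field that is linear in both coordinates exactly, which makes the linear test field a convenient check; for real RegCM output it smooths sub-grid topographic effects, one source of the residual biases noted above.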

RegCM framework structure diagram.

Evaluation methods

After training, the performance of each model is evaluated by calculating the RMSE, MAE, and R on the training, validation, and test sets. RMSE is the square root of the mean squared difference between predicted and actual values, with lower values indicating higher prediction accuracy; MAE is the mean of the absolute prediction errors and measures their average magnitude; and R quantifies the strength and direction of the linear relationship between two variables, ranging from −1 to 1. The formulas are given in Eqs. (3), (4), and (5).

\[ \mathrm{RMSE} = \sqrt{\frac{1}{N}\sum_{i=1}^{N}\left(a_i - b_i\right)^2} \tag{3} \]

Where: \(a_i\) is the actual data, \(b_i\) is the predicted data, and \(N\) is the data volume.

\[ \mathrm{MAE} = \frac{1}{n}\sum_{i=1}^{n}\left|Y_i - \widehat{Y}_i\right| \tag{4} \]

Where: \(n\) is the number of data points, \(Y_i\) is the \(i\)-th true value, and \(\widehat{Y}_i\) is the \(i\)-th predicted value.

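\[ R = \frac{\sum_{i=1}^{n}\left(a_i - \bar{a}\right)\left(b_i - \bar{b}\right)}{\sqrt{\sum_{i=1}^{n}\left(a_i - \bar{a}\right)^2}\,\sqrt{\sum_{i=1}^{n}\left(b_i - \bar{b}\right)^2}} \tag{5} \]

Where: \(a_i\) is the true value, \(b_i\) is the predicted value, \(\bar{a}\) is the true mean, \(\bar{b}\) is the predicted mean, and \(n\) is the data volume.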
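The three evaluation metrics translate directly into NumPy. The sketch below is a straightforward transcription of the RMSE, MAE, and Pearson R definitions, with a small hand-checkable example; the four observation/prediction pairs are invented for illustration.

```python
import numpy as np

def rmse(a, b):
    """Root-mean-square error between actual values a and predictions b."""
    a, b = np.asarray(a, float), np.asarray(b, float)
    return float(np.sqrt(np.mean((a - b) ** 2)))

def mae(a, b):
    """Mean absolute error between actual values a and predictions b."""
    a, b = np.asarray(a, float), np.asarray(b, float)
    return float(np.mean(np.abs(a - b)))

def pearson_r(a, b):
    """Pearson correlation coefficient between actual values a and predictions b."""
    a, b = np.asarray(a, float), np.asarray(b, float)
    da, db = a - a.mean(), b - b.mean()
    return float(np.sum(da * db) / np.sqrt(np.sum(da ** 2) * np.sum(db ** 2)))

obs = [20.1, 21.4, 19.8, 22.0]    # invented daily temperatures (°C)
pred = [20.0, 21.0, 20.3, 21.6]
print(rmse(obs, pred), mae(obs, pred), pearson_r(obs, pred))
```

Here the errors are 0.1, 0.4, −0.5, and 0.4 °C, so MAE = 1.4/4 = 0.35 °C and RMSE = √(0.58/4) ≈ 0.381 °C. RMSE is never smaller than MAE, and the gap widens when a few large errors dominate, which is why both are reported.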

Results

Temperature

Table 1 presents the performance of the six models in temperature prediction across the training, validation, and test sets. The traditional regional climate model, RegCM, performed worst, with a test RMSE of 3.5519 °C, MAE of 2.2880 °C, and R of 0.8475, indicating limited capability to capture nonlinear temperature variations. Both CNN and LSTM outperformed RegCM, with LSTM achieving a test RMSE of 1.0186 °C and an R of 0.9876, highlighting its strength in temporal modeling. The Transformer, despite its global attention mechanism, reached an R of 0.9613, below that of LSTM. CNN-LSTM further improved accuracy, achieving a test R of 0.9922 with lower error metrics. Among all models, LSTM-Transformer performed best, with a test RMSE of 0.7410 °C, MAE of 0.5760 °C, and R of 0.9938, indicating a superior capability to capture complex nonlinear temperature patterns.

Figure 8 illustrates the test set performance of the six models in temperature prediction (training and validation results are provided in Appendix 1). The traditional RegCM model exhibits a wide dispersion of points and a clear deviation from the 45° line, reflecting a systematic bias and a limited ability to capture nonlinear temperature variations. AI-based models substantially improve this pattern, with scatter points clustering more tightly around the diagonal. LSTM and CNN-LSTM display enhanced temporal and spatio-temporal representation, respectively, producing smoother and more consistent fits. The LSTM-Transformer model shows the most concentrated and symmetric distribution, with minimal deviation across the full range of values, indicating strong capability in reproducing temperature trends and short-term variability. Overall, the visual patterns confirm that hybrid AI architectures, particularly LSTM-Transformer, outperform the traditional RegCM in accuracy and structural stability.

Scatter plot of temperature forecasts.

Figure 9 presents the error distributions for temperature prediction on the test set (training and validation results are provided in Appendix 1). RegCM exhibits the largest errors (Std = 3.54), with numerous deviations beyond ± 6 °C, reflecting systematic bias and high variability. CNN and Transformer show moderate improvements, with errors generally within ± 6 °C. LSTM reduces variability, concentrating most errors within ± 4 °C, while the hybrid models, CNN-LSTM and LSTM-Transformer, further confine errors within ± 2 °C. Among all models, LSTM-Transformer achieves the smallest standard deviation (0.74), a highly symmetric distribution, and minimal residuals outside ± 2 °C, indicating strong capability for accurate nonlinear fitting and robust error control. Consequently, AI models generally outperform RegCM in both accuracy and stability, with hybrid models producing more consistent error distributions across the test set.
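The Mean, Std, and N reported in the error histograms, together with the share of errors inside a tolerance band such as ±2 °C, can be computed as sketched below. The sign convention (prediction minus observation) and the population standard deviation are assumptions, and `error_summary` is a hypothetical name.

```python
import numpy as np

def error_summary(obs, pred, tol):
    """Mean, standard deviation, count, and share of errors within ±tol (errors = pred - obs)."""
    err = np.asarray(pred, float) - np.asarray(obs, float)
    return {
        "Mean": float(err.mean()),
        "Std": float(err.std()),                       # population std, an assumption
        "N": int(err.size),
        "share_within_tol": float(np.mean(np.abs(err) <= tol)),
    }

obs = np.zeros(5)
pred = np.array([-3.0, -1.0, 0.0, 1.0, 3.0])           # toy errors
print(error_summary(obs, pred, tol=2.0))
```

With a population standard deviation, RMSE² = Mean² + Std², so when the mean error is close to zero the histogram Std is essentially the RMSE, as the temperature results illustrate.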

Histogram of temperature prediction error distribution (Mean is the mean value, Std is the standard deviation, and N is the sample size).

Figure 10 shows Taylor diagrams of temperature predictions for the training, validation, and test sets. RegCM points lie farthest from the reference, indicating limited capability to reconstruct spatiotemporal temperature structures. CNN and Transformer show moderate improvements, with better control of the standard deviation but weaker temporal consistency. LSTM aligns more closely with the reference, particularly in capturing temporal patterns. The hybrid models improve performance further: CNN-LSTM achieves a high correlation (R = 0.9922) and a reduced centered root-mean-square error (CRMSE), while LSTM-Transformer lies closest to the reference points across all datasets, with the highest correlation (R = 0.9938) and the smallest CRMSE. These findings indicate that hybrid AI architectures capture temperature patterns and variability better than RegCM and the single-architecture models.
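A Taylor diagram places each model using three linked statistics: the standard deviations of the observations (\(\sigma_o\)) and predictions (\(\sigma_p\)), their correlation \(R\), and the centered RMSE (CRMSE, the RMSE after removing each series' mean), which satisfy \(\mathrm{CRMSE}^2 = \sigma_o^2 + \sigma_p^2 - 2\sigma_o\sigma_p R\). The sketch below computes these quantities on synthetic data; `taylor_stats` is a hypothetical name and population standard deviations are assumed.

```python
import numpy as np

def taylor_stats(obs, pred):
    """Quantities plotted on a Taylor diagram, plus the overall RMSE and mean bias."""
    obs, pred = np.asarray(obs, float), np.asarray(pred, float)
    sigma_o, sigma_p = obs.std(), pred.std()          # population standard deviations
    r = np.corrcoef(obs, pred)[0, 1]
    anom_o, anom_p = obs - obs.mean(), pred - pred.mean()
    crmse = np.sqrt(np.mean((anom_p - anom_o) ** 2))  # centered RMSE (bias removed)
    bias = pred.mean() - obs.mean()
    rmse = np.sqrt(np.mean((pred - obs) ** 2))
    return {"sigma_o": sigma_o, "sigma_p": sigma_p, "R": r,
            "CRMSE": crmse, "bias": bias, "RMSE": rmse}

rng = np.random.default_rng(0)
obs = 18 + 4 * np.sin(np.linspace(0, 4 * np.pi, 730)) + rng.normal(0, 1, 730)  # synthetic temperature
pred = obs + 0.3 + rng.normal(0, 0.7, 730)                                      # synthetic biased forecast
s = taylor_stats(obs, pred)
print({k: round(float(v), 4) for k, v in s.items()})
```

Because CRMSE removes the mean bias (RMSE² = bias² + CRMSE²), a model can sit close to the reference point on a Taylor diagram while still carrying a systematic offset, which is why the diagrams are read alongside the RMSE and MAE tables.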

Temperature forecast Taylor chart.

Precipitation

Table 2 summarizes model performance in precipitation forecasting. RegCM showed the lowest correlation, with a test RMSE of 4.8871 mm and an R of 0.4585, reflecting the difficulty of modeling highly variable precipitation. Among the AI models, CNN-LSTM achieved the best overall accuracy, with a test RMSE of 4.7260 mm, MAE of 2.5422 mm, and R of 0.8559, effectively capturing spatiotemporal heterogeneity. CNN had a slightly lower RMSE than RegCM but exhibited unstable errors, and LSTM showed limited improvement. The Transformer performed poorly, with a test RMSE of 7.6654 mm and an R of 0.4645, likely because of its sensitivity to extreme events. LSTM-Transformer outperformed most other models, achieving an R of 0.8454, but was slightly below CNN-LSTM and still struggled with strong non-stationarity and abrupt local changes. Overall, CNN-LSTM provided the most consistent precipitation predictions on the evaluated datasets, suggesting good potential for accurate modeling and practical application in this context.

Figure 11 presents scatter plots of precipitation predictions over the 2004–2018 period, illustrating the pronounced variability and non-stationarity of precipitation compared with temperature. The RegCM points are widely dispersed and correlate weakly with observations, consistent with difficulties in representing rapid precipitation changes. CNN and LSTM reduce the dispersion only modestly, and substantial scatter persists during high-intensity rainfall events. The CNN-LSTM model aligns more closely with the diagonal, particularly for moderate and low precipitation, while the LSTM-Transformer achieves comparable consistency in most cases but diverges slightly under extreme conditions. The Transformer model produces a more irregular distribution and is less stable where variability is strong. Overall, CNN-LSTM and LSTM-Transformer show better consistency in precipitation prediction than the other models, although capturing extreme rainfall events remains challenging.

Scatter plot of precipitation forecasts.

Figure 12 presents the distributions of precipitation prediction errors across Yunnan (training and validation results are provided in Appendix 1). Except for the CNN model, most prediction errors fall within approximately ±15 mm. The CNN-LSTM model produces the smallest overall error (Std = 4.73), with more than 95% of errors within ±10 mm, while the LSTM-Transformer shows slightly greater variability (Std = 4.81), with most deviations between −15 and +10 mm. In contrast, the Transformer model displays a right-skewed error distribution (Std = 7.67) with long tails extending up to 50 mm, suggesting limited capability in capturing extreme precipitation events. Taken together, the hybrid AI models, particularly CNN-LSTM and LSTM-Transformer, provide better error control and stability than RegCM and the single deep-learning models, highlighting their potential for improving precipitation forecasts in complex terrain.

Histogram of precipitation forecast error distribution (Mean is the mean value, Std is the standard deviation, and N is the sample size).

Figure 13 shows Taylor diagrams of precipitation predictions for the test set. RegCM and Transformer exhibit low correlation (R < 0.5), large deviations in standard deviation, and high CRMSE, reflecting difficulty in capturing precipitation variability and extremes. CNN and LSTM improve the correlation (R > 0.77) and reduce errors, although extreme events remain difficult to fit. CNN-LSTM lies closest to the ideal reference (R = 0.8559), effectively reconstructing precipitation intensity and variability. LSTM-Transformer achieves a slightly lower correlation (R = 0.8454) but maintains a favorable standard deviation and CRMSE, particularly for moderate- to low-intensity precipitation. Overall, the hybrid models outperform the single deep-learning models and RegCM in stability and accuracy, although modeling extreme precipitation remains challenging.

Taylor diagram for precipitation prediction.

Relative humidity

Table 3 shows model performance in relative humidity prediction. RegCM performed relatively poorly, with a test RMSE of 4.8233, MAE of 3.0280, and R of 0.8443, demonstrating difficulty in capturing humidity trends. LSTM and its hybrid variants outperformed RegCM, with LSTM achieving a test R of 0.9459. CNN-LSTM further improved accuracy by integrating spatial and temporal features, with a test RMSE of 3.9061 and an R of 0.9649. LSTM-Transformer achieved the highest performance, with a test RMSE of 3.7054, MAE of 2.8825, and R of 0.9710, highlighting its strength in modeling nonlinear sequences. The Transformer underperformed, with a test RMSE of 7.8586 and MAE of 6.0399 (higher than those of RegCM), possibly reflecting limited suitability for relatively stable variables such as humidity. On the evaluated datasets, these results indicate that LSTM-Transformer generally outperforms the other models in both accuracy and correlation.

Figure 14 presents the scatter distributions of the six models for relative humidity prediction (training and validation results are provided in Appendix 1). The RegCM model shows dispersed points deviating from the diagonal, suggesting notable residuals and weak consistency with observations. CNN partially improves this pattern but still displays deviations at low-humidity levels. The LSTM model produces a more compact and uniform distribution, reflecting enhanced temporal representation. The CNN-LSTM model further refines this fit, exhibiting near-symmetric alignment around the diagonal and reduced scatter. The LSTM-Transformer model demonstrates the tightest clustering and minimal deviation, capturing humidity variations with greater stability and generalization. In contrast, the Transformer model remains less consistent, particularly under high-humidity conditions. Overall, hybrid AI models—especially LSTM-Transformer—yield more accurate and stable humidity predictions than both RegCM and single-architecture neural networks.

Scatter plot of relative humidity predictions.

Figure 15 shows the error distributions and kernel density estimation (KDE) curves for relative humidity prediction (training and validation results are provided in Appendix 1). The Transformer model exhibits the highest variability (Std = 7.86), with most errors within approximately ±30. In contrast, the other models show much narrower error ranges, generally confined within ±15. Among them, RegCM (Std = 4.82), CNN (Std = 4.63), and LSTM-Transformer (Std = 3.71) display compact distributions, with most deviations lying between −10 and +10. In summary, the hybrid AI models, particularly LSTM-Transformer, tend to yield more stable and symmetric error patterns than RegCM and single deep-learning models, suggesting their potential for improving relative humidity prediction.

Histogram of relative humidity prediction error distribution (Mean is the mean value, Std is the standard deviation, and N is the sample size).
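The histogram annotations (Mean, Std, N) and KDE curves can be reproduced from the residuals. This section does not state the KDE settings, so the following sketch rests on several assumptions. Errors are defined as prediction minus observation, the standard deviation is the sample value (ddof = 1), and the kernel is Gaussian with Silverman's rule-of-thumb bandwidth. The helper names `error_summary` and `kde_curve` are hypothetical.

```python
import numpy as np


def error_summary(obs, pred, band=10.0):
    """Mean, Std, N of residuals plus the share of residuals within +/- band."""
    err = np.asarray(pred, dtype=float) - np.asarray(obs, dtype=float)
    return {
        "Mean": float(err.mean()),
        "Std": float(err.std(ddof=1)),
        "N": int(err.size),
        "within_band": float(np.mean(np.abs(err) <= band)),
    }


def kde_curve(err, grid):
    """Gaussian kernel density estimate of residuals evaluated on `grid`."""
    err = np.asarray(err, dtype=float)
    grid = np.asarray(grid, dtype=float)
    n = err.size
    # Silverman's rule-of-thumb bandwidth (assumes roughly unimodal residuals).
    h = 1.06 * err.std(ddof=1) * n ** (-1 / 5)
    z = (grid[:, None] - err[None, :]) / h
    return np.exp(-0.5 * z ** 2).sum(axis=1) / (n * h * np.sqrt(2 * np.pi))


# Synthetic residuals (not the study's data).
rng = np.random.default_rng(1)
residuals = rng.normal(0.0, 4.0, size=500)
grid = np.linspace(-30.0, 30.0, 601)
density = kde_curve(residuals, grid)
```

When residual means are close to zero, the reported Std is close to the RMSE. This is consistent with the Transformer's Std of 7.86 matching its test RMSE of 7.8586.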

Figure 16. Taylor plots of relative humidity predictions for the test set. The RegCM point lies furthest from the reference, with low correlation (R < 0.85) and high CRMSE, indicating limited ability to capture humidity amplitude and trends. Transformer shows modest improvement but retains high variability. LSTM aligns more closely with observations (R = 0.9459), while CNN-LSTM further reduces CRMSE and achieves strong variance recovery (R = 0.9649). LSTM-Transformer nearly coincides with the reference point, showing the highest correlation (R = 0.9710), a closely matching standard deviation, and the lowest CRMSE. Overall, LSTM-Transformer demonstrates the best fitting accuracy, statistical consistency, and stability, followed by CNN-LSTM and LSTM, whereas RegCM and Transformer perform comparatively worse.

Taylor diagram for relative humidity prediction.

Comprehensive comparison of model performance

Table 4 summarizes the comprehensive and total scores of the six models across three meteorological variables: temperature, precipitation, and relative humidity. Scores are based on normalized, weighted combinations of RMSE, MAE, and the correlation coefficient (R) across the training, validation, and test sets, providing an integrated assessment of each model's overall performance in multi-variable climate prediction (see Appendix 2 for details). RegCM has a low overall score (0.4075), primarily due to limited accuracy in temperature and precipitation. Transformer achieves a moderate score for temperature (0.6245), but its weaker performance in precipitation and humidity results in the lowest total score (0.3636), below that of RegCM. CNN exhibits relatively balanced scores across all variables, with a total of 0.5546. LSTM performs well in temperature prediction (0.7878) but less effectively in precipitation and humidity, yielding a total score of 0.6191. CNN-LSTM achieves the highest score for precipitation (0.6081). LSTM-Transformer leads in temperature (0.8470) and relative humidity (0.6853) and attains the highest overall score (0.7043), marginally above CNN-LSTM (0.7022). These results suggest that LSTM-Transformer, by combining temporal modeling with attention mechanisms, offers strong adaptability and stability across multiple variables. This makes it a promising choice for multi-variable climate prediction, although its margin over CNN-LSTM is small.
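The exact normalization and weights behind Table 4 are defined in Appendix 2 and are not reproduced here. The following sketch is therefore a hypothetical illustration of one common scheme. It applies min-max normalization to each metric across models, inverts RMSE and MAE so that higher is always better, and combines the results in a weighted mean with equal weights by default. Its output is not expected to reproduce the published scores.

```python
import numpy as np


def composite_scores(rmse, mae, r, weights=(1 / 3, 1 / 3, 1 / 3)):
    """Per-model scores in [0, 1]; higher is better. Weights are hypothetical."""
    rmse, mae, r = (np.asarray(x, dtype=float) for x in (rmse, mae, r))

    def minmax(x):
        span = x.max() - x.min()
        # If all models tie on a metric, that metric cannot discriminate.
        return np.zeros_like(x) if span == 0 else (x - x.min()) / span

    s_rmse = 1.0 - minmax(rmse)   # lower error -> higher score
    s_mae = 1.0 - minmax(mae)
    s_r = minmax(r)               # higher correlation -> higher score
    w = np.asarray(weights, dtype=float)
    return (w[0] * s_rmse + w[1] * s_mae + w[2] * s_r) / w.sum()


# Three hypothetical models evaluated on one data split.
scores = composite_scores([1.0, 2.0, 3.0], [0.5, 1.0, 1.5], [0.9, 0.8, 0.7])
```

To combine the training, validation, and test sets, one option is to compute these scores per split and average them. Min-max scaling is relative to the models being compared, so adding or removing a model changes every score.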

The visualization results in Figs. 8, 9, 10, 11, 12, 13, 14, 15 and 16 corroborate these findings. Scatter plots show that AI models, particularly LSTM-Transformer and CNN-LSTM, generally produce predictions closely aligned with observed values, with minimal deviation in temperature and humidity. Error histograms highlight better residual control for the hybrid models, with LSTM-Transformer exhibiting the tightest and most symmetric distributions across all three variables. Taylor diagrams further confirm that LSTM-Transformer and CNN-LSTM achieve higher correlation, closer standard deviations, and lower CRMSE, reflecting strong spatio-temporal consistency and prediction stability. In contrast, RegCM shows dispersed errors and lower correlation, while Transformer shows moderate improvement but comparatively lower stability. Overall, these results indicate that AI models integrating convolutional, temporal, and attention mechanisms, particularly LSTM-Transformer, offer robust and stable performance for multi-variable climate prediction in complex terrain.

Discussion

Validity and limitations of experimental results

The results of this study indicate that the AI models maintain consistent predictive accuracy, with small differences in error across the training, validation, and testing phases, suggesting good generalization and stability. Taking the LSTM-Transformer model as an example, the temperature RMSE differed by only about 0.026 between the training and test sets (Train: 0.7151, Test: 0.7410), and the correlation coefficient remained above 0.9937, indicating consistent fitting performance. For relative humidity, its test-set R reached 0.9710, substantially higher than the 0.8443 obtained by the traditional RegCM model. CNN-LSTM also performed robustly in precipitation prediction, with a test-set R of 0.8559, a marked improvement over RegCM's 0.4585. Across the test set, the AI models achieved high correlation coefficients for temperature (R > 0.94) and relative humidity (R > 0.85). Precipitation correlations were lower and more variable (R ≈ 0.46–0.86). In comparison, RegCM showed lower correlations across all three variables (R = 0.46–0.85), indicating generally weaker predictive performance. The L2 regularization used in training helped control model complexity and improve generalization49. In addition, five-fold time-series cross-validation was conducted to further evaluate the models' temporal stability and transferability. The fluctuations in RMSE, MAE, and R among folds were within ±5%, supporting the robustness of the AI models across different time periods (Attachment 2, Tables A7–A9).
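Five-fold time-series cross-validation differs from ordinary k-fold validation because each test block must come after the data used for training. The following minimal sketch of an expanding-window splitter mirrors the default behavior of scikit-learn's `TimeSeriesSplit`. A helper checks whether a fold metric stays within ±5% of its mean. This section does not say whether the study used expanding or sliding windows, so the expanding-window choice is an assumption. The helper names are hypothetical.

```python
import numpy as np


def time_series_folds(n_samples, n_splits=5):
    """Yield (train_idx, test_idx) pairs; every test block follows its training data."""
    test_size = n_samples // (n_splits + 1)
    if test_size == 0:
        raise ValueError("too few samples for the requested number of splits")
    first_test = n_samples - n_splits * test_size
    for start in range(first_test, n_samples, test_size):
        train_idx = np.arange(start)                     # expanding window
        test_idx = np.arange(start, start + test_size)   # next contiguous block
        yield train_idx, test_idx


def max_relative_deviation(values):
    """Largest |fold value - mean| as a fraction of the mean (0.05 means +/-5%)."""
    v = np.asarray(values, dtype=float)
    return float(np.max(np.abs(v - v.mean())) / abs(v.mean()))


# A daily record spanning 2004-2018 has 15 * 365 + 4 leap days = 5479 days.
folds = list(time_series_folds(5479, n_splits=5))
```

In practice, each model would be refit on `train_idx` and scored with RMSE, MAE, and R on `test_idx`. `max_relative_deviation` would then be applied to the five fold values of each metric.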

Despite their promising performance, the models have notable limitations. The narrow input feature set—excluding factors such as wind speed, air pressure, and terrain elevation—constrains representation of physical processes, and the data-driven models’ lack of physical constraints limits interpretability under extreme conditions. For instance, the Transformer model underperformed in precipitation forecasting, likely due to sparse and noisy rainfall data affecting the self-attention mechanism. Furthermore, reliance on station-based datasets underscores the need for validation with independent observational and reanalysis data to evaluate model transferability across broader temporal and spatial contexts. Future work should integrate diverse data sources, including remote sensing, IoT, and reanalysis datasets, and employ physics-informed neural networks (PINNs) to embed physical laws into deep models, thereby enhancing physical plausibility, robustness, and spatio-temporal generalization in climate predictions.
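A full PINN adds residuals of governing physical equations to the training loss. As a much simpler, hypothetical illustration of the same idea, the following sketch augments a mean-squared-error loss with penalties for physically impossible outputs: negative precipitation, and relative humidity outside 0-100%. It also includes an L2 weight penalty of the kind used in training. The column layout, penalty weights, and function name are assumptions. This is a bound-constraint penalty, not a PINN.

```python
import numpy as np


def constrained_loss(y_true, y_pred, weights, lam_phys=1.0, lam_l2=1e-4):
    """MSE + physical-bound penalty + L2 weight penalty.

    Assumed column layout (hypothetical): 0 = precipitation (mm), 1 = RH (%).
    """
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    mse = np.mean((y_pred - y_true) ** 2)

    precip, rh = y_pred[:, 0], y_pred[:, 1]
    # Squared hinge penalties: zero whenever outputs are physically admissible.
    phys = (
        np.mean(np.maximum(-precip, 0.0) ** 2)        # rainfall cannot be negative
        + np.mean(np.maximum(rh - 100.0, 0.0) ** 2)   # RH cannot exceed 100 %
        + np.mean(np.maximum(-rh, 0.0) ** 2)          # RH cannot be negative
    )
    # L2 regularization over all weight arrays (empty list gives 0).
    l2 = float(sum(np.sum(np.asarray(w, dtype=float) ** 2) for w in weights))
    total = mse + lam_phys * phys + lam_l2 * l2
    return {"total": float(total), "mse": float(mse), "phys": float(phys), "l2": l2}


# Toy batch: one negative rainfall value and one RH value above 100 %.
out = constrained_loss([[0, 50], [2, 100]], [[-1, 50], [2, 102]], weights=[])
```

A genuine physics-informed model would replace the bound penalties with residuals of equations such as moisture conservation. Such constraints require spatial fields rather than station series alone.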

Recommendations

Although RegCM is currently regarded as one of the most effective regional climate models for climate prediction in Yunnan Province, its predictive accuracy remains limited because it has not been specifically customized for the region's complex terrain and climatic heterogeneity. This is not a flaw in the model itself but a consequence of its general-purpose framework, which does not fully reflect regional characteristics. Constructing an RCM typically involves the following steps: (1) building an integrated physical parameterization system on a three-dimensional set of governing equations (such as the fluid-dynamics and thermodynamic equations); (2) nesting a high-resolution regional domain, supplied with detailed geographic and climate data, within a global climate model (GCM) to mitigate scale biases caused by the GCM's coarse resolution; (3) setting boundary conditions, including lateral boundary conditions, according to the feedback mechanisms of representative regional climate systems (e.g., terrain-precipitation coupling, cloud microphysical processes); and (4) generating multi-domain output (e.g., atmospheric state, surface fluxes) through computationally intensive numerical simulations. This process relies on high-precision input data (e.g., terrain elevation, vegetation cover) and specialized computing facilities (e.g., supercomputer clusters). It also requires long-term tuning and validation by interdisciplinary expert teams, resulting in substantial human, time, and financial costs. Constructing a localized RCM for Yunnan would therefore require sustained, large-scale investment.

In contrast, AI models that learn complex nonlinear features from historical observational data can achieve competitive prediction accuracy with substantially lower computational requirements. They offer a flexible framework capable of capturing intricate spatio-temporal patterns that traditional statistical models may overlook. Future research can advance AI applications in two directions. The first is integrating diverse data sources such as remote sensing, IoT, and reanalysis datasets to enrich input features. The second is embedding physical constraints through physics-informed neural networks (PINNs) to improve interpretability, robustness, and generalization under extreme conditions. The current limitations do not reflect inherent flaws in the models themselves; rather, they indicate where additional data or methodological enhancements could yield further improvements. By addressing these aspects, AI-based approaches can complement traditional methods across a range of climate prediction tasks.

Conclusion

This study systematically evaluated the predictive performance of a traditional regional climate model (RegCM) and five advanced artificial intelligence (AI) models—CNN, LSTM, Transformer, CNN-LSTM, and LSTM-Transformer—for forecasting temperature, precipitation, and relative humidity across the complex terrain of Yunnan Province.

The results indicate that AI models generally achieve higher accuracy and greater stability than RegCM, particularly for temperature and relative humidity. Extreme precipitation, however, remains difficult for all models. Among the AI approaches, CNN-LSTM performed best in precipitation prediction (test R ≈ 0.86 vs. RegCM R ≈ 0.46). LSTM-Transformer showed the strongest predictive capability for temperature (R ≈ 0.994) and relative humidity (R ≈ 0.971), effectively capturing nonlinear and spatio-temporal dependencies.

Data availability

The meteorological data used in this study were originally obtained from the China National Meteorological Data Center (http://data.cma.cn). Due to policy changes implemented in 2022, these datasets are no longer directly available for public download. Interested researchers may apply for access directly from the China National Meteorological Data Center. During the review process, the author (Junfan Zhao, email: 2661608843@qq.com) can provide access to the dataset upon reasonable request, in compliance with data-sharing policies.

References

Kim, K. H. et al. Prospects for Enhancing Climate Services in Agriculture. Bull. Am. Meteorol. Soc. 104, E352–E358. https://doi.org/10.1175/BAMS-D-22-0123.1 (2023).

Li, X. C., Zhao, L., Qin, Y., Oleson, K. & Zhang, Y. Elevated urban energy risks due to climate-driven biophysical feedbacks. Nat. Clim. Change. 14, 1056–1063. https://doi.org/10.1038/s41558-024-02108-w (2024).

Kuang, X. et al. The changing nature of groundwater in the global water cycle. Science 383, eadf0630. https://doi.org/10.1126/science.adf0630 (2024).

Tsui, J. L. H. et al. Impacts of climate change-related human migration on infectious diseases. Nat. Clim. Change. 14, 793–802. https://doi.org/10.1038/s41558-024-02078-z (2024).

Bousfield, C. G., Morton, O. & Edwards, D. P. Climate change will exacerbate land conflict between agriculture and timber production. Nat. Clim. Change. 14, 1071–1077. https://doi.org/10.1038/s41558-024-02113-z (2024).

Yuan, X. et al. A global transition to flash droughts under climate change. Science 380, 187–191. https://doi.org/10.1126/science.abn6301 (2023).

Huang, X. & Swain, D. L. Climate change is increasing the risk of a California megaflood. Sci. Adv. 8, eabq0995. https://doi.org/10.1126/sciadv.abq0995 (2022).

Canadell, J. G. et al. Multi-decadal increase of forest burned area in Australia is linked to climate change. Nat. Commun. 12, 6921. https://doi.org/10.1038/s41467-021-27225-4 (2021).

O’Gorman, P. A. & Dwyer, J. G. Using machine learning to parameterize moist convection: potential for modeling of climate, climate change, and extreme events. J. Adv. Model. Earth Syst. 10, 2548–2563. https://doi.org/10.1029/2018MS001351 (2018).

Mendoza, V., Pazos, M., Garduño, R. & Mendoza, B. Thermodynamics of climate change between cloud cover, atmospheric temperature and humidity. Sci. Rep. 11, 21244. https://doi.org/10.1038/s41598-021-00555-5 (2021).

Kendon, E. J. et al. Do Convection-Permitting regional climate models improve projections of future precipitation change? Bull. Am. Meteorol. Soc. 98, 79–93. https://doi.org/10.1175/BAMS-D-15-0004.1 (2017).

van der Meer, M., de Roda Husman, S. & Lhermitte, S. Deep learning regional climate model emulators: A comparison of two downscaling training frameworks. J. Adv. Model. Earth Syst. 15, e2022MS003593. https://doi.org/10.1029/2022MS003593 (2023).

Gutowski, W. J. et al. The ongoing need for High-Resolution regional climate models: process understanding and stakeholder information. Bull. Am. Meteorol. Soc. 101, E664–E683. https://doi.org/10.1175/BAMS-D-19-0113.1 (2020).

Schär, C. et al. Kilometer-Scale climate models: prospects and challenges. Bull. Am. Meteorol. Soc. 101, E567–E587. https://doi.org/10.1175/BAMS-D-18-0167.1 (2020).

Slater, L. J. et al. Hybrid forecasting: blending climate predictions with AI models. Hydrol. Earth Syst. Sci. 27, 1865–1889. https://doi.org/10.5194/hess-27-1865-2023 (2023).

Materia, S. et al. Artificial intelligence for climate prediction of extremes: state of the art, challenges, and future perspectives. WIREs Clim. Change. 15, e914. https://doi.org/10.1002/wcc.914 (2024).

Petrone, D., Rodosthenous, N. & Latora, V. An AI approach for managing financial systemic risk via bank bailouts by taxpayers. Nat. Commun. 13, 6815. https://doi.org/10.1038/s41467-022-34102-1 (2022).

Yip, M. et al. Artificial intelligence meets medical robotics. Science 381, 141–146. https://doi.org/10.1126/science.adj3312 (2023).

Jiang, W. Cellular traffic prediction with machine learning: A survey. Expert Syst. Appl. 201, 117163. https://doi.org/10.1016/j.eswa.2022.117163 (2022).

Haupt, S. E. et al. The History and Practice of AI in the Environmental Sciences. Bull. Am. Meteorol. Soc. 103, E1351–E1370. https://doi.org/10.1175/BAMS-D-20-0234.1 (2022).

Salcedo-Sanz, S., Deo, R. C., Carro-Calvo, L. & Saavedra-Moreno, B. Monthly prediction of air temperature in Australia and New Zealand with machine learning algorithms. Theor. Appl. Climatol. 125, 13–25. https://doi.org/10.1007/s00704-015-1480-4 (2016).

Li, P., Yu, Y., Huang, D., Wang, Z. H. & Sharma, A. Regional Heatwave Prediction Using Graph Neural Network and Weather Station Data. Geophys. Res. Lett. 50, e2023GL103405. https://doi.org/10.1029/2023GL103405 (2023).

Asfaw, T. G. & Luo, J. J. Downscaling seasonal precipitation forecasts over East Africa with deep convolutional neural networks. Adv. Atmos. Sci. 41, 449–464. https://doi.org/10.1007/s00376-023-3029-2 (2024).

Raj, S., Tripathi, S. & Tripathi, K. C. ArDHO-deep RNN: autoregressive deer hunting optimization based deep recurrent neural network in investigating atmospheric and oceanic parameters. Multimed Tools Appl. 81, 7561–7588. https://doi.org/10.1007/s11042-021-11794-z (2022).

Suleman, M. A. R. & Shridevi, S. Short-Term weather forecasting using Spatial feature attention based LSTM model. IEEE Access. 10, 82456–82468. https://doi.org/10.1109/ACCESS.2022.3196381 (2022).

Guo, Q., He, Z. & Wang, Z. Prediction of monthly average and extreme atmospheric temperatures in Zhengzhou based on artificial neural network and deep learning models. Front. Glob Change. 6, 1249300. https://doi.org/10.3389/ffgc.2023.1249300 (2023).

Hou, J., Wang, Y., Zhou, J. & Tian, Q. Prediction of hourly air temperature based on CNN–LSTM. Geomatics Nat. Hazards Risk. 13, 1962–1986. https://doi.org/10.1080/19475705.2022.2102942 (2022).

Tran, T. T., Bateni, S. M., Ki, S. J. & Vosoughifar, H. A review of neural networks for air temperature forecasting. Water 13, 1294. https://doi.org/10.3390/w13091294 (2021).

An, H. et al. Forecasting daily extreme temperatures in Chinese representative cities using artificial intelligence models. Weather Clim. Extremes. 42, 100621. https://doi.org/10.1016/j.wace.2023.100621 (2023).

Wang, J. et al. STPF-Net: Short-Term precipitation forecast based on a recurrent neural network. Remote Sens. 16, 52. https://doi.org/10.3390/rs16010052 (2023).

Guo, Q., He, Z. & Wang, Z. Monthly climate prediction using deep convolutional neural network and long short-term memory. Sci. Rep. 14, 17748. https://doi.org/10.1038/s41598-024-68906-6 (2024).

Espeholt, L. et al. Deep learning for twelve hour precipitation forecasts. Nat. Commun. 13, 5145. https://doi.org/10.1038/s41467-022-32483-x (2022).

Qadeer, K., Ahmad, A., Qyyum, M. A., Nizami, A. S. & Lee, M. Developing machine learning models for relative humidity prediction in air-based energy systems and environmental management applications. J. Environ. Manage. 292, 112736. https://doi.org/10.1016/j.jenvman.2021.112736 (2021).

Shi, J., Wang, S., Qu, P. & Shao, J. Time series prediction model using LSTM-Transformer neural network for mine water inflow. Sci. Rep. 14, 18284. https://doi.org/10.1038/s41598-024-69418-z (2024).

Li, W. et al. An interpretable hybrid deep learning model for flood forecasting based on transformer and LSTM. J. Hydrol. Reg. Stud. 54, 101873. https://doi.org/10.1016/j.ejrh.2024.101873 (2024).

Xia, L. et al. Hybrid LSTM–Transformer model for the prediction of epileptic seizure using scalp EEG. IEEE Sens. J. 24, 21123–21131. https://doi.org/10.1109/JSEN.2024.3401771 (2024).

Deng, X., Zhang, Z., Zhao, F., Zhu, Z. & Wang, Q. Evaluation of the regional climate model for the forest area of Yunnan in China. Front. Glob Change. 5, 1073554. https://doi.org/10.3389/ffgc.2022.1073554 (2023).

Lara-Benítez, P., Carranza-García, M. & Riquelme, J. C. An experimental review on deep learning architectures for time series forecasting. Int. J. Neural Syst. 31, 2130001. https://doi.org/10.1142/S0129065721300011 (2020).

Alzubaidi, L. et al. Review of deep learning: concepts, CNN architectures, challenges, applications, future directions. J. Big Data. 8, 53. https://doi.org/10.1186/s40537-021-00444-8 (2021).

Li, Z. W., Liu, F., Yang, W. J., Peng, S. H. & Zhou, J. A. Survey of convolutional neural networks: Analysis, Applications, and prospects. IEEE Trans. Neural Netw. Learn. Syst. 33, 6999–7019. https://doi.org/10.1109/TNNLS.2021.3084827 (2022).

Hochreiter, S. & Schmidhuber, J. Long short-term memory. Neural Comput. 9, 1735–1780. https://doi.org/10.1162/neco.1997.9.8.1735 (1997).

Tran, T. T., Bateni, S. M., Ki, S. J. & Vosoughifar, H. A review of neural networks for air temperature forecasting. Water 13, 1294. https://doi.org/10.3390/w13091294 (2021).

Thi Kieu Tran, T., Lee, T., Shin, J. Y., Kim, J. S. & Kamruzzaman, M. Deep Learning-Based maximum temperature forecasting assisted with Meta-Learning for hyperparameter optimization. Atmosphere 11, 487. https://doi.org/10.3390/atmos11050487 (2020).

Vaswani, A. et al. Attention Is All You Need. Preprint at https://arxiv.org/abs/1706.03762 (2017).

Islam, S. et al. A comprehensive survey on applications of Transformers for deep learning tasks. Expert Syst. Appl. 241, 122666. https://doi.org/10.1016/j.eswa.2023.122666 (2024).

Sajun, A. R., Zualkernan, I. & Sankalpa, D. A. Historical survey of advances in transformer architectures. Appl. Sci. 14, 4316. https://doi.org/10.3390/app14104316 (2024).

Yu, K., Hui, P., Zhou, W. & Tang, J. Evaluation of multi-RCM high-resolution hindcast over the CORDEX East Asia phase II region: Mean, annual cycle and interannual variations. Int. J. Climatol. 40, 2134–2152. https://doi.org/10.1002/joc.6323 (2020).

Li, T., Liu, G., Fang, J. & Tang, J. Dynamical downscaling of inland and offshore near-surface wind speed over China in CORDEX East Asia phase I and II experiments. Int. J. Climatol. 42, 6996–7012. https://doi.org/10.1002/joc.7625 (2022).

Sejuti, Z. A. & Islam, M. S. A hybrid CNN–KNN approach for identification of COVID-19 with 5-fold cross validation. Sens. Int. 4, 100229. https://doi.org/10.1016/j.sintl.2023.100229 (2023).

Funding

This research was supported by the Yunnan Fundamental Research Projects, China (Grant No. 202301AT070223), the Yunnan Reserve Projects for Young and Middle-Aged Academic and Technological Leading Talent (Grant No. 202405AC350034), and the National Natural Science Foundation of China (Grant No. 32160374).

Author information


Contributions

Junfan Zhao: Data curation, Methodology, Software, Formal analysis, Investigation, Visualization, Writing – original draft, Writing – review & editing. Fan Zhao: Conceptualization, Supervision, Project administration, Funding acquisition, Writing – review & editing. Hang Deng: Methodology, Data curation, Validation.


Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Supplementary Information

Below is the link to the electronic supplementary material.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Zhao, J., Zhao, F. & Deng, H. Evaluation of climate prediction models in Yunnan, China: traditional methods and AI approaches. Sci Rep 15, 43347 (2025). https://doi.org/10.1038/s41598-025-27326-w


DOI: https://doi.org/10.1038/s41598-025-27326-w