Abstract

Air pollution datasets typically exhibit a right-skewed distribution. These conditions are caused by the presence of extreme events leading to imbalanced data distribution. This imbalanced regression poses a notable challenge in predictive modeling, as the models tend to be biased towards frequent normal events while underperforming extreme events. Therefore, to address this issue, the resampling approach is crucial in handling these extreme events to improve model performance. In this study, a modified resampling strategy, called Moving Block Bootstrapping with Relevance Weighting (MBB-RW), is proposed to address imbalanced regression problems. By integrating MBB with relevance weighting, the time-series dependence of air pollution data is preserved while placing greater emphasis on extreme events. The findings demonstrate that MBB-RW can mitigate data imbalance and enhance model prediction accuracy for extreme events. These enhancements are evident in the performance of the Extreme Gradient Boosting (XGBoost) model in predicting PM10, where the evaluation metrics showed significant reductions after applying MBB-RW: RMSE dropped from 108.3010 to 39.1846 (63.8188%) and MAE from 85.1041 to 27.1082 (68.14700%). The key contribution of this study is the development of MBB-RW resampling strategy designed to improve extreme values in an imbalanced regression dataset, with our focus on the air pollution dataset. Simultaneously, this method can be implemented to enhance the accuracy of PM10 concentration predictions, specifically during extreme air pollution events.

Similar content being viewed by others

Introduction

Air pollution has become a severe environmental and public health issue, particularly in urban and industrial regions. Major causes include vehicle emissions, industrial activity, open burning, and transboundary haze, which can sometimes be triggered by forest fires in neighbouring countries1. Primary pollutants that impact most countries include Particulate Matter (PM), Nitrogen Dioxide (NO2), Carbon Monoxide (CO), Ozone (O3), and Sulphur Dioxide2. However, Particulate Matter 10 (PM10) is a major pollutant in the air, and it has a larger impact on humans than other pollutants. In Malaysia, PM10 concentration is always higher than any other pollutant3. Therefore, the urge to predict the PM10 concentration, especially in extreme events, has become a crucial and tough task with growing motor and industrial developments4,5.

Air pollution poses a challenge in making accurate predictions, requiring robust modeling techniques that can capture both common and extreme events. One of the major challenges in modeling air pollution data is the imbalance in the target distribution6. An abnormally high PM10 concentration level can result in the existence of extreme values in air pollution data7. Extreme values in air pollution result in imbalance problems, described by the combination of two factors: (i) skewed distribution of the target variable, and ii) user preferences towards underrepresented cases8.

Concentrations of pollutants are often right-skewed. Most measurements are very modest; however, a small proportion of observations reach quite high levels during extreme events. According to9, extreme values are defined as events that occur significantly less often than common events. Extreme values or extreme events are normally generated by haze from forest fires, industrial hazards, thermal inversion, and sometimes caused by monitoring faults10. Neglecting extreme values can lead to inaccurate predictions when using the original data set directly due to its skewed distribution11. The effect of imbalance and skewness is evident. Models trained on imbalanced data typically follow normal cases, as minimizing overall error inherently skews the algorithm toward the majority class. Hence, predictions for extreme pollution events are often inaccurate, with models struggling to cater for rare but important new environmental and social events12,13. Therefore, it is fundamental to correctly deal with the problem of extreme values in imbalanced distribution to boost the performance of prediction models, which has become a crucial topic in current research.

Imbalanced regression

In real life, data imbalance is an inherent, typical, encountering challenge encountered across various research disciplines. There are two categories of imbalanced datasets: classification and regression. In classification problems, an imbalanced dataset occurs through the presence of an underrepresented class (minority class) compared to another one (majority class)14. Meanwhile, in regression problems, the target or dependent is continuous, and indicates a complex definition, because the target observation is not bound to a limited set of discrete values, unlike in classification problems, where the target value represents specific categories or classes14. The imbalance regression problem occurs when the frequency of some target values in the regression dataset is extremely low, and these target values are often ignored by the model, resulting in poor prediction performance of the model on these samples8,15. The imbalanced regression requires studying the distribution of the data more thoroughly to predict the value more accurately16.

Most of the existing strategies for dealing with imbalanced data are limited for classification problems, that is, the target value is a discrete index of different categories. Nonetheless, this issue also arises in numerical prediction tasks, for example, regression, when users are more interested in accurately predicting extreme (rare) values of the target variable16. In fields like finance, meteorology, or environmental sciences, the goal is often to predict uncommon events, also known as extreme cases8. According to 14, to better understand the different approaches for dealing with imbalance regression problems, the strategies are categorized into three main groups: (i) learning process modification, (ii) regression models, and (iii) evaluation metrics. Organizing these strategies into three distinct categories can yield a better understanding of the approaches and support the selection of the most effective strategy for handling imbalanced regression problems. One of the popular strategies that is often used by previous researchers in handling imbalanced regression is learning process modification or also known as data preprocessing, data preparation, or resampling8,17,18,19,20. The strategies operate by either removing samples from common cases or generating synthetic samples for extreme events. Data preprocessing techniques have the advantage of permitting the use of any learning algorithm simultaneously, without affecting the understandability of the model17. Data preprocessing or resampling procedures modify the original data distribution before implementing the learning algorithm21. The purpose of modifying the target variable distribution is to drive the learning algorithm to focus on the extreme value cases22. These solutions are popular because data preprocessing allows the use of any learning algorithm and are simple to implement because the modification is only on the original data set distribution. However,12 emphasized that the effectiveness of such methods depends on how the data distribution is adjusted, which remains a challenge for researchers.

Previous studies have investigated several resampling approaches to address imbalanced regression problems. These approaches include Synthetic Minority Oversampling Technique for regression (SMOTER)13, Synthetic Minority Oversampling Technique with Gaussian Noise (SMOGN)12, SMOTEBoost19, Resampled Bagging for Imbalanced Regression (REBAGG)18, Weighted Relevance-based Combination Strategy (WERCS)17, Geometric SMOTE23 and others. SMOTE for regression (SMOTER), SMOTE with Gaussian Noise (SMOGN) and Geometric SMOTE generate synthetic samples through interpolation or noise rejection. Even though these methods boost the depiction of extreme values, these methods may potentially generate noisy and unrealistic data. This may result in misleading patterns that lower the generalisation capacity of machine learning models. Weighted Relevance-based Combination Strategy (WERCS) operates probabilistic oversampling and undersampling based on a relevance function that allocates more weights to extreme values18. Though this approach is customizable and maintains the entire target range covered, the data’s temporal and spatial correlations are not incorporated. For time-dependent pollution data, this restriction can lead to an unrealistic training set that reduces model performance during sequence prediction.

The Resampled Bagging for Imbalanced Regression (REBAGG) algorithm has been proposed to address the issue of the imbalanced regression task by employing an ensemble method based on bagging and combined resampling techniques18. Although the ensemble method boosts robustness and often achieves outstanding performance, it is computationally costly and ignores temporal dependencies. By examining the association between the distribution of the target value and the test error of the prediction model24, introduced the concept of deep imbalanced regression. According to the properties of imbalanced regression data, they implemented label distribution smoothing (LDS) and feature distribution smoothing (FDS). However, this approach still generates minority regression samples using the data interpolation method, which is prone to overfitting. Introducing synthetic data that lacks real-world representativeness, along with the elimination of common examples that provide important aspects for predictive tasks, may negatively impact the prediction performance.

Therefore, to avoid the synthetic artificial data and preserve the temporal dependencies inherent in the dataset, Moving Block Bootstrapping (MBB) is proposed as a resampling method in handling the imbalanced air pollution dataset. MBB is a well-known resampling technique25. MBB is one of the popular methods that originates from Block Bootstrapping. Unlike traditional bootstrapping, which resamples individual observations, MBB resamples blocks of successive data points26. This strategy is practicable for time series or spatial data, where maintaining dependencies between observations is vital. MBB can upgrade data quality by preserving data dependency within blocks27. Resampling entire blocks of data helps control the relationships between variables, offering the model a more realistic learning context. Furthermore, MBB avoids the addition of artificial data by resampling only from observed values, ensuring data originality while improving the training set with extreme but authentic observations.

Moving block bootstrapping (MBB)

Moving Block Bootstrapping (MBB) was introduced as an adaptation of the ordinary bootstrap for serially correlated data2829, demonstrated that applying MBB helps to generate more reliable and robust parameter estimates for hydrological models by accounting that the calibration data represent a limited sample drawn from an unknown underlying distribution26. compare the standard optimisation model with the block bootstrap optimization models, and they conclude that block bootstrap provides more stable estimates of investment portfolios with a higher rate of diversification. Furthermore, when comparing the forecasts predicted by the ARMA model with the bootstrap forecasts and the actual price relations in the forecasted period, it was observed that each block bootstrap method forecasted the silver futures contract price closer to the actual execution than the ARMA model30. The MBB approach demonstrates its strength in preserving the temporal structure of time series data in its process and successfully boosts the estimates of missing rainfall data imputation by utilizing multiple imputation based on the MBB approach associated with normal ratio methods, compared to the conventional bootstrap approach27. suggested MBB for environmentalists as an alternative approach for better estimation of environmental datasets.

Although MBB has been demonstrated to be advantageous for time series data, it has its limitations.31, demonstrated that the MBB resampling strategy can significantly enhance the performance of baseline forecasting models, which yield accurate predictions on normal events but perform poorly on extreme events. This is because the MBB approach puts equal weights on the blocks under the assumption that the blocks have the same underlying process. However, this is not the case when the data contains extreme observations due to temporary events such as a forest fire.

To address this challenge, we propose to improve the MBB approach by acknowledging that the blocks do not necessarily have the same process. This is done by integrating the MBB approach with a relevance weighting threshold (denoted MBB-RW), which allocates higher importance to target the extreme values. Relevance weighting is inspired by the relevance concept from utility-based learning8,32. In this approach, the extreme events are better represented during model training, and the inherent temporal structure is simultaneously preserved, resulting in more robust and accurate predictions for extreme cases.

For the development of the PM10 prediction model, Extreme Gradient Boosting (XGBoost) will be utilized, as previous studies on air pollution modelling have revealed XGBoost as one of the best machine learning models used for air pollution prediction33,34,35. Specifically, in tree tree-based model,4 demonstrated that XGBoost is particularly well-suited for predicting extreme pollution values. Our results indicate that this novel approach (MBB-RW) gives a superior performance compared to the standard MBB approach and shows good operating characteristics.

Methodology

Research flow

The overall workflow of this article is shown in Fig. 1, which attempts to develop an effective resampling strategy for dealing with extreme events in imbalanced air pollution data in Shah Alam, Malaysia, using the modified Moving Block bootstrapping with relevance weighting (MBB-RW) approach. This study employs air quality data from 2013 to 2022 provided by the Department of Environment (DOE), Malaysia. The process begins with data retrieval, followed by extensive data preprocessing, with a particular focus on addressing imbalanced data treatment, MBB-RW. Next, a machine learning model called Extreme Gradient Boosting (XGBoost) is applied to compare the effectiveness of MBB-RW resampling approach against a baseline without resampling. The model performance is evaluated based on the accuracy of Root Mean Square Error (RMSE) and Mean Absolute Error (MAE). Finally, the MBB-RW approach is examined to determine whether it can serve as an effective resampling approach or vice versa.

Research Flowchart.

Data description

This study obtained secondary data from the Malaysian Department of Environment (DOE), Malaysia, from 2013 to 2022. The station is in Shah Alam, Selangor, and comprises 83,431 hourly air quality data points for 10 variables, air pollutants and meteorological parameters. The air pollutants featured: Particulate Matter with an aerodynamic diameter of less than or equal to 10 µg (PM10), Sulphur Dioxide (SO2), Nitric Oxide and Nitrogen Dioxide (NOx), Nitrogen Dioxide (NO2), Ozone (O3) and Carbon Monoxide (CO), whereas meteorological parameters involved: Wind Speed (WS), Wind Direction (WD), Relative Humidity (RH) and Temperature (T). These variables were the independent variables (IV) used to predict the PM10 concentrations for the next 24 h (PM10,t+24 h). PM10, t+24 h serves as a dependent variable.

The incorporation of both meteorological parameters and air pollutants as input variables gives a broader framework for prediction. Air pollutants such as SO₂ and NO₂ operate as trackers of burning sources that regularly contribute to particulate matter, enhancing the model’s ability to detect emission-associated variability in PM₁₀36,37,38. Meteorological variables, including wind speed, wind direction, relative humidity, and temperature, control the dispersion and accumulation processes that identify whether emissions contribute to normal or extreme PM₁₀ episodes39,40.

Therefore, including both air pollutant and meteorological parameters ensures that the model supports the influence of emission signals and atmospheric dynamics, at the same time improving predictive performance and robustness for extreme PM₁₀ events. Table 1 presents the variables in this study, along with their respective levels of measurement and roles.

Data preprocessing

Data preprocessing incorporates missing value imputation, data transformation, imbalanced data treatment, and data partition (random split). A substantial amount of missing data may introduce biases or weaken the statistical power of the analysis41. Missing values may derive from multiple sources, such as sensor failures, environmental influences, or data transmission issues41. Linear interpolation is widely used as an imputation method, particularly to treat small gaps of missing data42. As noted by43, the missing air pollution data in Malaysia is referred as Missing at Random (MAR), and the use of the linear interpolation method suggests that the pattern of missing values does not significantly alter the inherent trends in the data. Therefore, in handling missing values, missing data are imputed using the linear imputation method to ensure that these gaps do not affect the performance of the predictive models. For data transformation, the units of air pollutants SO₂, NO₂, NOₓ, O₃, and CO in ppm are transformed to ppb since the ppm unit is too diminutive, thus compromising the accuracy of the results. The temperature and relative humidity features went through the removal of invalid and abnormal values, where the observations that have the temperature more than 50°c, and the relative humidity below 40% were removed. According to the Malaysian Meteorological Department, the range for average daily minimum humidity can be as low as 40% during the dry months44. The WD variable, which is expressed in degrees, has been converted to wind direction index (dimensionless)45. According to46, the formula for conversion is

Where θ is the wind direction in radians.

In this study, data partition is performed by implementing standard splitting methods. The dataset is divided into two subsets, allocating 80% for training (model development) and 20% for testing (performance evaluation). 6 suggest that allocating 80% of the data for training and 20% for testing is empirically effective for generating optimal results. To ensure unbiased distribution, random sampling is applied to partition the data into train and test sections47,48. The training set (80%) is used to develop and fit machine learning models, giving the learning algorithms to understand patterns and relationships between PM10 concentrations and independent variables such as meteorological variables and gaseous pollutants. Meanwhile, the testing set (20%) is not revealed to the model during training. It is used for performance evaluation, yielding an unbiased assessment of the model’s ability to generalize to unseen data. This approach helps to minimize the risk of overfitting, where the model performs well on training data but poorly on new data.

Imbalanced regression

Imbalanced regression refers to problems where the target variable exhibits skewness.49 noted that most regression tasks tend to assume a uniform evaluation of target values’ relevance. In discrete imbalanced classification tasks, discovering which category is more relevant is relatively straightforward, specifically when one class is significantly underrepresented, making it as the main focus of prediction. However, in an imbalanced regression task, this becomes more difficult due to the continuous inherent of the target variable. For such scenarios, the researchers need to define which value ranges are considered crucial, as these conditions significantly influence the modelling approach.

To determine the important of target values, 32 propose the definition of relevance function

where, y represents the domain of the target variable Y, and a relevance value of 1 represents priority area.

A relevance threshold, tE is launched to represent a threshold or boundary for the definition of relevant values19. Its purpose is to identify the values that researcher considers most relevant in their domain environment. This approach enables the learning process to concentrate more on extreme events by defining a relevance threshold that distinguishes extreme and normal events8. Given this threshold, the set of extreme events, DE and the set of normal events, DN, as follows:

where, DE represents the set of extreme events, DN represents the set of normal events, y is the target variable and x is the independent variable.

This study deals with the challenge of imbalanced regression in predicting extreme PM10 air pollution concentrations. At this stage, it is crucial to provide a good definition of the relevance threshold. Table 2 shows the calculation of the breakpoint concentration for PM10 corresponding to each Air Pollution Index (API) category, which is categorised as good, moderate, unhealthy, very unhealthy, and hazardous, which can be an air quality management level for data interpretation processes. API is an effortless and encompassing technique for defining air quality conditions that is easily understood3. API with 101–200 µg/m³, indicating that the air quality status is unhealthy. This unhealthy API corresponds to the PM10 breakpoint concentration, which is 155 µg/m³. Therefore, in this study, a PM₁₀ concentration for extreme threshold, tE of 155 µg/m³ is marked as the relevance threshold, since the established breakpoint of PM10 concentration in Table 2 indicates that a concentration that is greater than or equal to 155 µg/m³ corresponds to an unhealthy API. For air pollution modelling, defining a relevance threshold based on air quality breakpoints ensures that the model emphasises predictions that are most critical for public health interventions. Defining an appropriate relevance threshold is important for the development of the moving block bootstrapping (MBB) stages. Integrating this threshold into the resampling strategy enables the MBB-RW procedure to concentrate more informative blocks, thus enhancing model performance.

Block bootstrapping

Given the situation of imbalanced regression above, the importance of this study is to produce methods that are efficient of developing the representation of the decision regions regarding extreme events. Resampling strategies are the most widely used method for addressing imbalanced datasets, as they change the data distribution to balance the targets50. In this study, one of the popular block bootstrapping methods, the Moving Block Bootstrapping (MBB) or also known as Overlapping Block Bootstrapping, is introduced to solve the problems of imbalance regression.

Moving block bootstrapping (MBB)

The MBB is a resampling method used to assess the accuracy of statistical estimates when dealing with observations that are in the form of a finite time series of correlated data51. The MBB performs the sampling procedure only within a row of formed blocks. The initial assumption is that the general block length will continue to increase with additional observations in the original sample. Unlike the traditional bootstrap method, the MBB resampled the data in contiguous blocks, rather than by individual values52. As a result of such a procedure, the time series structure of the original data remains unchanged within every single block of data53.

Table 3 shows the summary of the MBB process, which consists of 3 steps. Meanwhile, Fig. 2 shows the visualization of MBB process. According to 25, the MBB method splits the original sample (Y1,…,Yn) into overlapping blocks of size Ɩ, defined as:

For i = 1,…., n-Ɩ+1, the set of blocks are formed:

where Bi represents the ith block that was obtained from the original sample, Y is the target variable, n is the total number of observations, and l is the length of each block. The number of sampled blocks, B is given by:

Let \(\:{B}_{1}^{*}\),…,\(\:{B}_{b}^{*}\) be a random sample drawn with replacement from original blocks {B1,…,Bn−Ɩ+1},

b is the number of resampled blocks that will be pasted together to form a pseudo-time series. The ith resampled block, \(\:{B}_{i}^{*}\) consist of the following observations:

Then the full MBB sample then constructed by concatenating these resampled blocks, written as:

A more detailed illustration of MBB procedure is shown in Fig. 2. The process started with the original dataset, which is then divided into overlapping blocks of fixed length. The total number of blocks is determined by Eq. (6). The next process is the number of resampled blocks. A random sample of each selected block is then drawn with replacement. The number of resampled blocks is determined by the Eq. (7). Finally, all these resampled blocks are concatenated to form a new MBB dataset.

Moving Block Bootstrapping (MBB) visualization process.

Modified moving block bootstrapping with relevance weighting (MBB-RW)

Relevance weighting is a technique used to assign varying levels of importance (weights) to different target values in a regression problem based on their relevance to the study objective13. Relevance-weighted modifications of resampling approaches, inspired by the relevance concept from utility-based learning8,32 are proposed to handle both temporal dependencies and target imbalance. Values below the extreme threshold, tE (155 µg/m3) are considered as normal or moderate events. Meanwhile, values above the tE are considered extreme events. Once the PM10 has been set, the values which is below the threshold are assigned as 0, and the values that are above the threshold are assigned as 1. During the MBB-RW process, relevance-weighted sampling is implemented to address the rarity of these extreme events in the dataset. Each overlapping block of 24-hour observations is allocated a binary relevance score, where blocks comprising at least one extreme value are considered relevant. According to 18, sampling weights are introduced to provide a higher diversity in the modified block bootstrapping dataset, which will include samples that can be either balanced or more favourable to the rare or normal cases. In this study, sampling weights at which a higher weight of 5 is assigned to extreme blocks and a lower weight of 1 is allocated to normal blocks. The 5:1 weight ratio represents a compromise designed to increase exposure to extreme events and avoid bias. The selected ratio is chosen as the target percentage of extreme cases to include in the new training set, with the remaining cases drawn from the set of normal observations18. This increases a higher diversity in the adjusted training sets, which will include samples that are either balanced or more favourable to the extreme or normal cases. Previous research on relevance-based resampling provides empirical validation for this method, such as REBAGG18 and WERCS8. This step can effectively increase the chance of selecting rare but important events. These sampling weights are scaled to create a probability distribution to guide the resampling process, thus ensuring a balanced representation of extreme and normal pollution episodes in the bootstrapped dataset while preserving temporal dependencies. The probability weight for extreme events and normal events is derived as below:

Let:

Weight (extreme) (we) = 5 (weight assigned to extreme blocks).

Weight (normal) (wn) = 1 (weight assigned to normal blocks).

Ne: Number of extreme blocks.

Nn: Number of normal blocks.

Then,

Table 4 shows that the algorithm of MBB-RW, that integrated with relevance weight. First, the air pollution dataset with PM10 for the next day as a target variable, y is inputted. The block length of 24 h was chosen when this study aimed to predict PM10 concentrations after 24 h. Set the threshold tE=155 (for upgrading extreme relevance) and sampling weight, ws (Extreme block weight we=5, normal block weight wn=1). In the MBB-RW procedure, each observation is assigned a relevance weight, 1 if the PM10 concentration is more than tE=155, else assigned as 0. Next, the blocks are constructed, with the total number of blocks given by B = N-ɭ+1. After forming these blocks, each block is assigned a sampling weight, ws. Each block is assigned as we=5 if it contains at least one observation with relevance weight 1, otherwise, it is assigned as sampling weight wn=1. When dealing with weighted resampling, especially in a random sampling process, the algorithm requires sampling probabilities, not raw weights, to determine how likely each block is to be chosen. In the context of moving block bootstrapping with relevance-based weighting, converting sampling weights into probabilities is a crucial step to maintain the functionality of the random sampling process. Next, a set of indices {s1,s2,….sN} is sampled from the full list of possible blocks. Each index is selected based on the probability that corresponds to its assigned sampling weight, reflecting the associated importance of the corresponding block. Higher sampling probability may appear multiple times in the resampled dataset. Once all the sampled blocks have been extracted, they are combined sequentially to form a new MBB-RW dataset. This combined dataset improves the representation of rare but important events.

Figure 3 provides a clearer illustration of the MBB-RW procedure. The key differences between standard MBB and MBB-RW stem from the integration of relevance weights, sampling weights and sampling probabilities. Those elements are introduced in the MBB-RW to increase the representation of extreme events in the dataset. The procedure started with the original dataset, which was then divided into overlapping blocks of fixed length. The total number of blocks is determined by Eq. (6). Then, the relevance threshold, te is applied to each observation within the blocks. Next, a sampling weight of 5 is assigned to each block that contains at least one extreme observation, reflecting their importance. Afterwards, blocks are sampled with replacement from the original set, using probabilities corresponding to their assigned weights, prioritising blocks containing extreme events. Finally, all these resampled blocks are concatenated to form a new MBB-RW dataset.

MBB-RW illustration process.

Model development

Extreme gradient boosting (XGBoost)

In this study, XGBoost will be applied for model development of air pollution dataset. XGBoost is a decision tree ensemble based on a gradient boosting framework that is highly scalable, designed for supervised learning tasks, including both classification and regression problems54,55. Several studies utilise XGBoost for predicting air pollution in environmental modelling4,34,56,57,58.

Unlike traditional gradient boosting algorithms, XGBoost incorporates several novel enhancements. The objective function of XGBoost comprises a loss function and a regularisation term59. XGBoost build an additive expansion of the objective function by minimising a loss function59. XGBoost exclusively focuses on decision trees as its base classifiers, and a variation of the loss function is used to control the complexity of the trees54. It operates by sequentially building an ensemble of decision trees, where each new tree attempts to correct the prediction errors made by the previous ensemble to produce a single prediction60. Different from gradient boosting, the XGBoost objective function involves a regularisation term to avoid overfitting54.

Objective Function- Assume that a dataset is D= {(xi, yi): i = 1…n} where xi represents the input features and yi the corresponding target values. Let \(\:{\widehat{\text{y}}}_{\text{I}}\) denote the predicted output produced by an ensemble model, represented by the generalised model as follows61

here,fk is a regression the kth regression tree in the ensemble.

fk(xi) is the prediction (or score) given by the kth tree for the i-th observation in the data.

K is the total number of trees in the model.

To learn the functions fk, XGBoost aims to minimise the following regularised objective function.

where,

\(\:{\sum\:}_{\text{i}}\text{L}\left({\widehat{\text{y}}}_{\text{i}},{y}_{i}\right)\:\) is the custom loss function. Loss function, L is a differentiable function that measures the difference between the prediction \(\:{\widehat{\text{y}}}_{\text{I}}\) and the actual yi 59. This loss function can be embedded into the split criterion of decision trees, leading to a pre-pruning strategy.

\(\:{\Omega\:}{(\text{f}}_{\text{k}})\) is a regularisation term that limits the complexity of each tree fk to prevent overfitting61. The penalty term or regularisation term Ω is included as follows:

where,

λ and γ are the parameters controlling the penalty for the number of leaves T and the magnitude of leaf weights w, respectively.

Parameter setting for XGBoost

All models were developed using the default parameter settings provided by the respective libraries (scikit-learn) to evaluate the performance of XGBoost in predicting PM10 concentrations in Malaysia. Using general parameters allows for a fair comparison and provides a reasonable baseline to adjusted models, suggesting that general parameter settings are an appropriate starting point for model evaluation. The XGBoost regressor is an ensemble algorithm method based on boosting and employs relatively aggressive parameter settings. In this study, the parameter setting (max_depth = 6, learning_rate = 0.3, seed = 100 and n_estimator = 100) was obtained through empirical tuning to achieve the best adjustment between accuracy and generalization. These parameters were evaluated, and the selected configuration that produced the lowest RMSE effectively captured the nonlinear behaviour of PM10. These configurations tries to balance computational efficiency between speed and accuracy, with built-in regularisation capabilities to prevent overfitting.

Model performance

In the context of air pollution modelling, accurate prediction of PM10 concentrations is crucial. To evaluate the accuracy of prediction models, several performance indicators are commonly used. Among them are Root Mean Square Error (RMSE) and Mean Absolute Error (MAE). According to62, a comparison of the best statistical PM10 forecasting methods with the lowest values of RMSE was conducted to select the best fit prediction model. The difference between the estimated and observed values is obtained to investigate the performance of each estimation method. The most appropriate methods are selected based on the lowest value of each statistical evaluation. The criteria formulas are shown below:

where,

n is the total number of hourly measurements of a particular station.

\(\:{\widehat{\text{Y}}}_{\text{i}}\) is the estimated value of PM10,t+24 h.

\(\:{\text{Y}}_{\text{i}}\) is the observed value of PM10,t+24 h.

Result and discussion

Descriptive statistics summary for PM10 concentration before and after resampling MBB-RW

Table 5 shows the descriptive statistics for PM10 concentrations in Shah Alam from 2013 to 2022 without and with resampling using the modification of Moving Block Bootstrapping with relevance weighting (MBB-RW) approach. From the table, the number of observations is approximately the same, which is without resampling (N = 82431) and when resampling with MBB-RW (N = 82416). After the MBB-RW approach, the number of normal events decreases from 81,499 to 78,243, and the number of extreme events increases from 932 to 4173. The mean, median, and standard deviation show an increase following the application of the MBB-RW approach. Specifically, the mean value rose from 41.7078 µg/m3 to 54.35 µg/m3. The median increased from 35 µg/m3 to 38 µg/m3 and the standard deviation widened from 31.7368 µg/m3 to 55.394 µg/m3. These changes are due to the additional extreme PM10 values introduced from the MBB-RW strategy. The skewness of the PM10 concentrations was evaluated to understand the symmetry and presence of extreme values with and without the MBB-RW. Following the MBB-RW procedure, the skewness decreases from 4.574 to 3.6010. This reduction is attributed to the introduction of additional extreme PM10 values through the MBB-RW strategy, which increased the frequency of extreme values in the upper tail.

Boxplot of hourly PM10 concentration in Shah Alam.

Figure 4 demonstrates the boxplot of hourly PM10 concentrations in Shah Alam, Malaysia, from 2012 to 2022. During years 2013 to 2016, these years reveal higher median concentrations, larger variability, the broadest interquartile ranges (IQRs) (Upper quartile Q3 – Lower quartile Q1) and various extreme values, with some exceeding 500. This shows that regular and extreme pollution episodes frequently occur throughout these years. These frequent and extreme values in 2013–2016 are likely due to transboundary haze or industrial sources63,64,65. Meanwhile, from 2017 to 2019, the medians and interquartile ranges (IQRs) decreased substantially. Outliers are still present, but less extreme and frequent. Air quality is improving, but continues to fluctuate. The most marked improvement is observed in the year 2020 to 2022, which reveals the lowest median values, narrowest IQRs, and minimal extreme values. These PM10 concentration improvements may be linked to enhanced pollution control measures, changes in emission sources, and reduced industrial and vehicular activity during the COVID-19 pandemic66. All distributions are positively skewed, indicated by extended upper whiskers and high-value outliers. This means that while most hourly values are low and regular, there are occasional spikes in pollution.

The correlation heatmap of the air pollution dataset in Shah Alam.

Figure 5 shows the correlation heatmap in Shah Alam, representing the correlation coefficients between different air pollution variables. The colour gradient scales from blue (strong negative correlation, −1) to red (strong positive correlation, + 1), with pale shades indicating weaker correlations. This heatmap yields valuable discoveries into the relationship between meteorological and air pollutants parameters. Regarding our main variable of interest, PM10, we found that all variables related exhibit positive correlation with PM10, except for Relative Humidity. From the correlation heatmap, there was a negative relationship between PM10 and Relative Humidity (RH) (r=−0.03). These findings are supported by67 when their study shows a negative correlation between PM10 and RH. This suggests that as the humidity level rises, PM10 is prone to decrease slightly. Understanding these correlations helps in air quality analysis, especially when developing a model and predicting extreme pollution events.

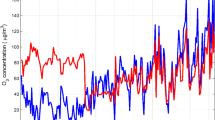

Distribution plot without resampling and with MBB-RW resampling strategy.

Two-Dimensional Kernel Density Estimation of PM10 concentrations.

Figure 6 and Fig. 7 illustrate the distribution of PM10 without resampling and after applying the MBB techniques. From Fig. 6, the original dataset (blue line) exhibits a sharp peak at lower concentrations and a thin right tail, reflecting a highly right-skewed distribution, consistent with a skewness of 4.574. After MBB-RW (red line), the distribution remains right-skewed. However, the peak flattens, indicating a moderate reduction in skewness to 3.6010. This visual change suggests that the MBB-RW strategy introduced an additional observation of extreme values, resulting in a distribution with reduced skewness in the air pollution dataset. As a result, the distribution appears more balanced with reduced skewness. Figure 7 shows a Two-Dimensional Kernel Density Estimation (2D KDE) of PM10 contour plot, demonstrating the joint distribution between the original PM10 concentrations and the PM10 values generated through MBB-RW approach. The density contours illustrate regions of differing concentration frequency, with the red contour indicating the top density and the blur regions indicating gradually lower densities.

A red contour showing that both the original (without resampling) and with MBB-RW of PM₁₀ values range approximately between 0 and 50 µg/m³. This high-density area implies that most of the PM10 observations in both datasets (with and without MBB-RW) reflect to low PM₁₀ concentrations, corresponding with normal air quality patterns in non-extreme conditions. However, a significant difference is seen in the MBB-RW values compared to the original data. The MBB-RW process generated a broader range of air pollution events, particularly skewed towards higher concentrations. This vertical extension of the density contours reveals that the MBB-RW approach effectively generates wider variability of extreme PM₁₀ values. This pattern shows that the MBB-RW strategy enhances the representation of extreme air pollution events in the dataset, providing a more balanced distribution for further analysis.

Performance of XGBoost without resampling

Table 6 presents the performance of machine learning model, Extreme Gradient Boosting (XGBoost) evaluated on the overall dataset before the imbalanced data treatment, Moving Block Bootstrapping with Relevance Weight (MBB-RW) for hourly PM10 concentration predictions in Shah Alam station from the period of 2013 to 2022. The performance indicator, Root Mean Squared Error (RMSE) and Mean Absolute Error (MAE) are provided for overall air pollution dataset, normal and extreme events, along with the total number of observations (N) in each category. Overall dataset referring to air pollution dataset, without distinguishing between normal and extreme events. Normal events represent observations below the threshold, te, typically corresponding to common air pollution levels. Meanwhile, extreme events denote observations exceeding the relevance threshold, te, reflecting rare and extreme episodes of air pollution events.

The results reveal a distinct discrepancy in model performance across different levels. Overall, the RMSE and MAE values for the original air pollution dataset are 19.8421 and 14.2396, respectively, reflecting the average difference between predicted and actual values. When split by event type, the model performs better during normal events, with a reduced RMSE of 18.2636 and an MAE of 13.4048. However, performance extensively worsens during extreme events, with the RMSE increasing to 108.3010 and the MAE rising to 85.1041. These sharp increases in error values suggest that the model struggles to accurately predict extreme pollution levels. These findings are strongly supported by 4, whose study shows that extreme values affect the model’s performance.

Furthermore, the distribution of events within the dataset is highly imbalanced. Out of 16,486 total observations for testing XGBoost model, only 192 observations (approximately 0.23%) correspond to extreme events. Conversely, normal events account for 16,295 instances. This major imbalance in distribution causes the model’s poor predictive performance in extreme cases, as it is more biased toward the more frequently occurring normal events during model’s training8,17,68.

The model indicates good performance in predicting normal air pollution concentrations but presents limitations in catering extreme pollution events. These results emphasize the necessity of utilizing appropriate resampling strategies to enhance model robustness and accuracy, especially for underrepresented extreme cases.

Performance of XGBoost with resampling – moving block bootstrapping with relevance weight (MBB-RW) resampling approach

Table 7 presents the model’s performance after applying the MBB-RW technique enhanced with relevance weighting. This table summarizes quantitatively the performance in terms of RMSE and MAE, measured separately for the full dataset, normal events, and extreme events. Additionally, the sample sizes (N) for each category are reported.

The after MBB-RW results demonstrate improvements in model performance, especially in the prediction of extreme events. The RMSE for extreme events reduced significantly from 108.3010 (without resampling) to 39.1846, constituting a reduction of approximately 63.8% in prediction error. Similarly, the MAE declines sharply from 85.1041 to 27.1082, aligning closely with the MAE observed for normal events. These results portray a notable improvement in the model’s ability to capture extreme pollution patterns. Simultaneous reduction in RMSE and MAE validates the conclusion that model accuracy for extreme events improved meaningfully.

The enhancements can be attributed to the increased representation of extreme events in the dataset after MBB-RW strategy. The number of extreme cases rose from 192 to 769, enhancing the model’s exposure to rare events during training. This more balanced distribution, enhanced by relevance weighting, allowed the model to effectively capture and generalize the underlying characteristics of both normal and extreme events. This is supported by 31 when the Block Bootstrapping approach can enhance the performance on extreme events prediction. Moreover, several studies have proven that block bootstrapping can serve as a powerful resampling method when it can generate robust estimates, producing stable estimation, especially in environmental analysis27,53.For normal events, performance also improved slightly. The RMSE decreased from 18.2636 to 16.8257, and the MAE decreased from 13.4048 to 12.3299. These enhancements suggest that the MBB-RW technique minimally affects the model’s performance on frequent cases while simultaneously addressing the underperformance in extreme scenarios. The modification of MBB with relevance weighting (MBB-RW) substantially mitigated the effects of data imbalance and enhanced the model’s predictive capability, particularly for extreme pollution events. This finding highlights the effectiveness of resampling strategies in improving model prediction in the context of imbalanced regression tasks.

Comparison of XGBoost Performance Metrics with and without MBB-RW Resampling.

Table 8 shows how the model’s performance compares with and without the modified MBB-RW method. The results are compared for the overall dataset, normal events, and extreme events using three error measures: RMSE (Root Mean Squared Error) and MAE (Mean Absolute Error). The number of data points (N) in each group is also presented.

After applying MBB-RW, the model’s performance improved overall. For the full dataset, the RMSE reduced from 19.84 to 18.44, and MAE dropped from 14.24 to 13.16. This shows that the model became more accurate overall. For normal events, the model also achieved slightly better. RMSE reduce from 18.26 to 16.83 and MAE from 13.40 to 12.33. This suggests a small but consistent improvement for predicting normal pollution events.

The most obvious improvement occurred in extreme events. without resampling approach, the model faced high error performance—RMSE was 108.30 and MAE was 85.10. After MBB-RW, these reduced sharply to 85.1041 and 27.1082, respectively. Moreover, the number of extreme cases increased from 192 to 769, giving the model more data to learn from. A clearer visualization of each performance evaluation with and without resampling is presented in Fig. 8, where the RMSE and MAE for extreme events dropped dramatically when the MBB-RW method was applied.

Actual vs. predicted of the XGBoost model before and after MBB-RW.

Figure 9 presents the actual vs. predicted plot for PM10 concentrations without and with data resampling using MBB-RW. (A) represents the actual vs. predicted of PM10,24 H using XGBoost model for extreme events without data resampling, and (B) represents the actual and predicted of PM10,24 H using XGBoost model for extreme events with data resampling using MBB-RW. The actual event observations are depicted in blue, while the predicted extreme observations are shown in orange. Referring to plot A, the predicted values mostly underestimate the actual PM10 concentrations for extreme events. The orange prediction line lies significantly below the blue line for most of the observations. This underestimation suggests that the model, trained on the original imbalanced air pollution dataset, is biased toward frequent events and lacks exposure to high extreme events. From plot B, model predictions after the training data were improved using the MBB-RW approach. The visual difference is outstanding. The predicted value demonstrates closer consistency with actual observations. There is a significant improvement in the model’s ability to produce the peaks of the true PM10 concentrations, indicating that MBB-RW helped mitigate the imbalance problems by increasing the extreme cases in the training set. This improvement suggests that modification of MBB-RW enabled the model to better analyse and respond to complex patterns associated with extreme pollution events. In summary, applying MBB-RW helped the model make better predictions, especially for extreme pollution events, while also slightly improving accuracy for normal events. This shows that MBB-RW is an effective method to handle imbalanced data in this study.

Conclusion and recommendations

This study highlights the challenges of predicting extreme air pollution events using XGBoost machine learning model trained on imbalanced datasets. Initial results before the MBB showed that the model consistently underpredicted extreme PM₁₀ concentrations. The modification of the Moving Block Bootstrap with relevance weighting (MBB-RW) resampling technique significantly improved the model’s performance by better representing the extreme events in the training data when the RMSE, and MAE shown by the reduction of error before and after MBB-RW from 108.3010 to 39.1846 and 85.1041 to 27.1082. Visual comparisons with and without resampling demonstrated significant improvements in peak alignment, variability, and magnitude accuracy of predictions. These findings emphasize the effectiveness of MBB-RW in addressing data imbalance and enhancing model prediction towards extreme events. Overall, integrating appropriate resampling methods such as MBB-RW is essential for improving the air quality forecasting models.

This study has several limitations that should be considered. First, the resampling method is designed specifically for datasets with similar statistical properties to air pollution data, such as positive, skewed distributions with extreme events. Second, the analysis was limited to PM10 data from a single monitoring station in Shah Alam, Malaysia, which may restrict the generalizability of the findings to other regions with different meteorological profiles.

Based on the findings of this study, several recommendations can be built for future work. Comparative studies using multi-station data may highlight robustness in imbalanced data studies and improve air quality forecasting frameworks. Future research could apply the proposed MBB-RW framework beyond PM₁₀ to include other air pollutants such as PM₂.₅, NO₂, SO₂, and O₃, together with different stations in Malaysia and other regions. Moreover, the method shows potential for adaptation to other imbalanced time-series problems beyond the air pollution area, including extreme rainfall prediction, energy demand forecasting, and financial time-series analysis. Such extensions would demonstrate the versatility of the MBB-RW approach and strengthen its contribution to modelling extreme events in diverse domains.

A specific comparison of MBB-RW against these established previous methods would further demonstrate whether weighting improves the handling of rare, extreme events, other than what traditional resampling strategies can achieve. Beyond XGBoost, the proposed resampling method, MBB-RW should be integrated with other machine learning models such as Random Forest, Artificial Neural Network and Light GBM. This would show the adaptability of the approach and help identify whether certain algorithms are more effective in handling extreme value prediction under different conditions.

Data availability

The air quality dataset analyzed in this study was obtained from the Department of Environment (DOE) Malaysia and is subject to confidentiality restrictions. As such, the data cannot be publicly shared. Researchers interested in accessing the data may submit a formal request to the Department of Environment Malaysia www.doe.gov.my. Approval is subject to DOE Malaysia’s data-sharing policies and compliance requirements. Further information about the dataset and how it was used in this study can be obtained from the corresponding author, Dr. Ahmad Zia Ul-Saufie (email: ahmadzia101@uitm.edu.my).

References

Aini, Q. et al. Factors that contribute to air pollution in Malaysia. Malaysian J. Bus. Econ. 8, 43–58 (2023).

Gulati, S. et al. Estimating PM2.5 utilizing multiple linear regression and ANN techniques. Sci Rep. 13, 22578 (2023).

Rani, N. L. A., Azid, A., Khalit, S. I., Juahir, H. & Samsudin, M. S. Air pollution index trend analysis in Malaysia, 2010-15. Pol. J. Environ. Stud. 27, 801–808 (2018).

Muhammad, M., Ul-Saufie, Z. & Radi, A. A. Evaluating the Performance of Tree-Based Model in Predicting Haze Events in Malaysia. International Journal of Advanced Computer Science and Applications. 16, 1127-1135 (2025).

He, Z., Guo, Q., Wang, Z. & Li, X. A. Hybrid Wavelet-Based Deep Learning Model for Accurate Prediction of Daily Surface PM2.5 Concentrations in Guangzhou City. Toxics. 13, (2025).

Gupta, N. S. et al. Prediction of air quality index using machine learning techniques: A comparative analysis. J. Environ. Public. Health. 2023, 1–26 (2023).

Martins, L. D. et al. Extreme value analysis of air pollution data and their comparison between two large urban regions of South America. Weather Clim. Extrem. 18, 44–54 (2017).

Ribeiro, R. P. & Moniz, N. Imbalanced regression and extreme value prediction. Mach. Learn. 109, 1803–1835 (2020).

Jafarigol, E. & Trafalis, T. B. A Review of Machine Learning Techniques in Imbalanced Data and Future Trends. (2023).

Jaffe, D. A. et al. Wildfire and prescribed burning impacts on air quality in the United States. Journal of the Air and Waste Management Association 70, 583–615 https://doi.org/10.1080/10962247.2020.1749731Preprint at (2020).

Noor, N. M., Deak, G., Ul-Saufie, A. Z., Mohd, Z. & Rozainy, R. Modeling of Particulate Matter (PM10) during High Particulate Event (HPE) in Klang Valley, Malaysia. www.ijcs.ro (2022).

Branco, P., Ribeiro, R. P., Torgo, L., Krawczyk, B. & Moniz, N. SMOGN: a Pre-processing Approach for Imbalanced Regression. in Proceedings of Machine Learning Research. 74, 36–50 (2017).

Torgo, L., Ribeiro, R. P., Pfahringer, B. & Branco, P. SMOTE for regression. in Lecture Notes in Computer Science (including subseries Lecture Notes in Artificial Intelligence and Lecture Notes in Bioinformatics) 8154 LNAI 378–389 (2013).

Avelino, J. G., Cavalcanti, G. D. C. & Cruz, R. M. O. Resampling strategies for imbalanced regression: a survey and empirical analysis. Artif Intell. Rev. 57, 1-42 (2024).

Wang, C., Deng, C., Wang, S. & Imbalance-XGBoost Leveraging Weighted and Focal Losses for Binary Label-Imbalanced Classification with XGBoost. http://arxiv.org/abs/1908.01672 (2019).

Liu, X. & Tian, H. Research on Imbalanced Data Regression Based on Confrontation. Processes. 12, (2024).

Branco, P., Torgo, L. & Ribeiro, R. P. Pre-processing approaches for imbalanced distributions in regression. Neurocomputing 343, 76–99 (2019).

Branco, P. et al. REBAGG: Resampled Bagging for Imbalanced Regression. in Proceedings of Machine Learning Research. 94 67–81 (2018).

Moniz, N., Ribeiro, R., Cerqueira, V. & Chawla, N. SMOTEBoost for regression: Improving the prediction of extreme values. in Proceedings – 2018 IEEE 5th International Conference on Data Science and Advanced Analytics, DSAA 150–159 (Institute of Electrical and Electronics Engineers Inc., 2018). 150–159 (Institute of Electrical and Electronics Engineers Inc., 2018) https://doi.org/10.1109/DSAA.2018.00025 (2018).

Silva, A., Ribeiro, R. P. & Moniz, N. Model Optimization in Imbalanced Regression. in International Conference on Discovery Science https://doi.org/10.48550/arXiv.2206.09991 (2022).

Felix, E. A. & Lee, S. P. Systematic literature review of preprocessing techniques for imbalanced data. IET Software 13, 479–496 https://doi.org/10.1049/iet-sen.2018.5193 Preprint at (2019).

Torgo, L. & Ribeiro, R. Predicting rare extreme values. in Lecture Notes in Computer Science (including subseries Lecture Notes in Artificial Intelligence and Lecture Notes in Bioinformatics) 3918 LNAI 816–820 (2006).

Fonseca, J. & Bacao, F. Geometric SMOTE for imbalanced datasets with nominal and continuous features. Expert Syst. Appl. 234, 121053 (2023).

Yang, Y., Zha, K., Chen, Y. C., Wang, H. & Katabi, D. Delving into Deep Imbalanced Regression. inInternational Conference on Machine Learning. https://doi.org/10.48550/arXiv.2102.09554 (2021).

Kuffner, T. A., Lee, S. M. S. & Young, G. A. Block bootstrap optimality and empirical block selection for sample quantiles with dependent data. Biometrika Trust. 103, 1–18 (2018).

Radovanov, B. & Marcikic, A. A comparison of four different block bootstrap methods. Croatian Oper. Res. Rev. 5, 189–202 (2014).

Burhanuddin, D. Shaadan. Controlled sampling approach in improving multiple imputation for missing seasonal rainfall data. Res. Sq. https://doi.org/10.21203/rs.3.rs-679692/v1 (2021).

Mader, M., Mader, W., Sommerlade, L., Timmer, J. & Schelter, B. Block-bootstrapping for noisy data. J. Neurosci. Methods. 219, 285–291 (2013).

Ebtehaj, M., Moradkhani, H. & Gupta, H. V. Improving robustness of hydrologic parameter Estimation by the use of moving block bootstrap resampling. Water Resour. Res 46, W07515 (2010).

Vogel, R. M. The Moving Blocks Bootstrap versus Parametric Time Series Models (1996).

Chen, X., Gupta, L. & Tragoudas, S. Improving the forecasting and classification of extreme events in imbalanced time series through block resampling in the joint Predictor-Forecast space. IEEE Access. 10, 121048–121079 (2022).

Torgo, L. & Ribeiro, R. LNAI 4702 - Utility-Based Regression (in (Springer, 2007). https://doi.org/10.1007/978-3-540-74976-9_63

Ayus, I., Natarajan, N. & Gupta, D. Comparison of machine learning and deep learning techniques for the prediction of air pollution: a case study from China. Asian J. Atmospheric Environment. 17, 48732–48745 (2023).

Dao, T. H. et al. PTI,. Analysis and Prediction for Air Quality Using Various Machine Learning Models. in Proceedings of the Seventh International Conference on Research in Intelligent and Computing in Engineering. 33 89–94 (2023).

Verma, A., Ranga, V. & Vishwakarma, D. K. Combating Respiratory Health Issues with Intelligent NO2 Level Prediction from Sentinel 5P Satellite. in IEEE 20th India Council International Conference, INDICON 2023 882–886 (Institute of Electrical and Electronics Engineers Inc., 2023). 882–886 (Institute of Electrical and Electronics Engineers Inc., 2023) https://doi.org/10.1109/INDICON59947.2023.10440910 (2023).

Azid, A. et al. Prediction of the level of air pollution using principal component analysis and artificial neural network techniques: A case study in Malaysia. Water Air Soil. Pollut. 225, 1-14 (2014).

Guo, Q., He, Z. & Wang, Z. The characteristics of air quality changes in Hohhot City in China and their relationship with meteorological and Socio-economic factors. Aerosol Air Qual. Res. 24, 230274 (2024).

Kumar, A. S. et al. wiley,. Recent Developments of Bioethanol Production. in Bioenergy Research: Evaluating Strategies for Commercialization and Sustainability 175–208 (2021). https://doi.org/10.1002/9781119772125.ch9

Syed, A. et al. Spatial and Temporal air quality pattern recognition using environmetric techniques: A case study in Malaysia. Environ. Sciences: Processes Impacts. 15, 1717–1728 (2013).

Latif, M. T. et al. Long term assessment of air quality from a background station on the Malaysian Peninsula. Sci. Total Environ. 482–483, 336–348 (2014).

Khadijah Arafin, S., Ul-Saufie, Z., Azura Md Ghani, A., Ibrahim, N. & Alam, S. N. Feature selection methods using RBFNN based on enhance air quality prediction: insights from Shah Alam. IJACSA) Int. J. Adv. Comput. Sci. Applications. 15, 509-514 (2024).

Noor, N. M., Bakri Abdullah, A., Yahaya, M. M. & Ramli, N. A. A. S.Trans Tech Publications Ltd,. Comparison of linear interpolation method and mean method to replace the missing values in environmental data set. in Materials Science Forum. 803, 278–281 (2015).

Libasin, Z., Ul-Saufie, Z. & Hasfazilah, A. A. identifying missing data mechanisms among incomplete air pollution datasets in Malaysia. https://doi.org/https://doi.org/10.1007/978-3-031-43922-3_18 doi:https://doi.org/10.1007/978-3-031-43922-3_18. (2024).

Malaysian Meteorological Department. Malaysia’s climate. Malaysian Meteorological Department (2025).

Srivastava, C., Singh, S. & Singh, A. P. Estimation of air pollution in Delhi using machine learning techniques. in. International Conference on Computing, Power and Communication Technologies, GUCON 2018 304–309 (Institute of Electrical and Electronics Engineers Inc., 2019) https://doi.org/10.1109/GUCON.2018.8675022 (2018).

Kumar, A. & Goyal, P. Forecasting of air quality index in Delhi using neural network based on principal component analysis. Pure Appl. Geophys. 170, 711–722 (2013).

Ditrich, J. Data representativeness problem in credit scoring. ACTA OECONOMICA PRAGENSIA. 23, 1-17 (2015).

Verma, A., Ranga, V. & Vishwakarma, D. K. Forecasting of Satellite Based Carbon-Monoxide Time-Series Data Using a Deep Learning Approach. in International Conference on Innovative Trends in Information Technology, ICITIIT 2023 (Institute of Electrical and Electronics Engineers Inc., 2023). (Institute of Electrical and Electronics Engineers Inc., 2023) https://doi.org/10.1109/ICITIIT57246.2023.10068609 2023).

Crone, S. F., Lessmann, S. & Stahlbock, R. Utility based data mining for time series analysis - Cost-sensitive learning for neural network predictors. in Proceedings of the 1st International Workshop on Utility-Based Data Mining, UBDM ’05 59–68 https://doi.org/10.1145/1089827.1089835 (2005).

Moniz, N., Branco, P., Torgo, L. & Krawczyk, B. Evaluation of Ensemble Methods in Imbalanced Regression Tasks. Proceedings of Machine Learning Research 74 http://www.kdd.org/kdd-cup (2017).

Mignani, S. & Rosa, R. The moving block bootstrap to assess the accuracy of statistical estimates in Ising model simulations. Computer Phys. Communications. 92, 203-213 (1995).

Sroka, Ł. Applying block bootstrap methods in silver prices forecasting. Econometrics 26, 15–29 (2022).

Radovanov, B. & Marcikic, A. Testing the performance of the investment portfolio using block bootstrap method. Economic Themes. 52, 166–183 (2014).

Martínez-Munoz, G., Bentejac, C. & Csorg, O. B. Gonzalo Martínez-Munoz, A. A Comparative Analysis of XGBoost. https://www.researchgate.net/publication/337048557 (2019).

Shahani, N. M., Zheng, X., Liu, C., Hassan, F. U. & Li, P. Developing an XGBoost regression model for predicting young’s modulus of intact sedimentary rocks for the stability of surface and subsurface structures. Front Earth Sci. (Lausanne) 9, 761990 (2021).

Jing, H. & Wang, Y. Research on Urban Air Quality Prediction Based on Ensemble Learning of XGBoost. in E3S Web of Conferences 165EDP Sciences, (2020).

Kumar, K. & Pande, B. P. Air pollution prediction with machine learning: a case study of Indian cities. Int. J. Environ. Sci. Technol. 20, 5333–5348 (2023).

Nguyen, A. T., Pham, D. H., Oo, B. L., Ahn, Y. & Lim, B. T. H. Predicting air quality index using attention hybrid deep learning and quantum-inspired particle swarm optimization. J Big Data. 11, 49-58(2024).

Chen, T., Guestrin, C. & XGBoost: A Scalable Tree Boosting System. https://doi.org/10.1145/2939672.2939785 doi:10.1145/2939672.2939785 (2016).

Mienye, I. D., Sun, Y. A. & Survey of Ensemble Learning: Concepts, Algorithms, Applications, and Prospects. IEEE Access 10, 99129–99149 https://doi.org/10.1109/ACCESS.2022.3207287 Preprint at (2022).

Pan, B. Institute of Physics Publishing,. Application of XGBoost algorithm in hourly PM2.5 concentration prediction. in IOP Conference Series: Earth and Environmental Science. 113 (2018).

Abdullah, S., Ismail, M., Ahmed, A. N. & Abdullah, A. M. Forecasting particulate matter concentration using linear and non-linear approaches for air quality decision support. Atmosphere (Basel). 10, 1-24 (2019).

Shaziayani, W. N., Ul-Saufie, Z., Ahmat, H. & Al-Jumeily, D. Ahmad, Coupling of quantile regression into boosted regression trees (BRT) technique in forecasting emission model of PM 10 concentration. https://doi.org/10.1007/s11869-021-01045-3/Published (2021).

DOE. Department of Environment:Malaysia Quality Report 2016. Kuala Lumpur: Ministry of Energy, Science, Technology, Environment and Climate Change, Malaysia. (2016).

Mohd Shafie, S. H. et al. Influence of urban air pollution on the population in the Klang Valley, malaysia: a Spatial approach. Ecol Process. 11, 1-16 (2022).

DOE. Department of Environment:Malaysia Environmental Quality Report. Kuala Lumpur: Ministry of Energy, Science, Technology, Environment and Climate Change, Malaysia. (2021). (2021).

Rahim, N. A. A. A. et al. Institute of Physics,. Predicting Particulate Matter (PM10) during High Particulate Event (HPE) using Quantile Regression in Klang Valley, Malaysia. in IOP Conference Series: Earth and Environmental Science. 1216 (2023).

Ren, J., Zhang, M., Yu, C. & Liu, Z. Balanced MSE for Imbalanced Visual Regression. in Proceedings of the IEEE Computer Society Conference on Computer Vision and Pattern Recognition vols -June 2022 7916–7925 (IEEE Computer Society, 2022).

Acknowledgements

We would like to express our sincere thanks to Faculty of Computer Science and Mathematics and Universiti Teknologi MARA (UiTM) for their unwavering support throughout this study. Additionally, we also extend our heartfelt appreciation to the Department of Environment (DOE) Malaysia for supplying air quality data that made this research possible.

Funding

This research was funded by the Malaysian Ministry of Higher Education, grant number UiTM.800-3/2FRGS (003/2025).

Author information

Authors and Affiliations

Contributions

M.M, A.Z.U.S performed the method development, data analysis, and writing the manuscript. N.F.A.R and A.G provided guidance and reviewed the manuscript N.M.N reviewed the manuscript. All authors reviewed and approved the final version of the manuscript.

Corresponding author

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Muhammad, M., Ul-Saufie, A.Z., Radi, N.F.A. et al. Modified resampling strategy for extreme values in imbalanced air pollution data using moving block bootstrapping approach with relevance weighting (MBB-RW). Sci Rep 16, 290 (2026). https://doi.org/10.1038/s41598-025-28416-5

Received:

Accepted:

Published:

Version of record:

DOI: https://doi.org/10.1038/s41598-025-28416-5