Abstract

Extracting structured data from clinical notes remains a key bottleneck in clinical research. We hypothesized that with minimal computational and annotation resources, open-source large language models (LLMs) could create high-quality research databases. We developed Strata, a low-code library for leveraging LLMs for data extraction from clinical reports. Trained researchers labeled four datasets from prostate MRI, breast pathology, kidney pathology, and bone marrow (MDS) pathology reports. Using Strata, we evaluated open-source LLMs, including instruction-tuned, medicine-specific, reasoning-based, and LoRA-finetuned LLMs. We compared these models to zero-shot GPT-4 and a second human annotator. Our primary evaluation metric was exact match accuracy, which assesses if all variables for a report were extracted correctly. LoRa-finetuned Llama-3.1 8B achieved non-inferior performance to the second human annotator across all four datasets, with an average exact match accuracy of 90.0 ± 1.7. Fine-tuned Llama-3.1 outperformed all other open-source models, including DeepSeekR1-Distill-Llama and Llama-3-8B-UltraMedical, which obtained average exact match accuracies of 56.8 ± 29.0 and 39.1 ± 24.4 respectively. GPT-4 was non-inferior to the second human annotator in all datasets except kidney pathology. Small, open-source LLMs offer an accessible solution for the curation of local research databases; they obtain human-level accuracy while only leveraging desktop-grade hardware and ≤ 100 training reports. Unlike commercial LLMs, these tools can be locally hosted and version-controlled. Strata enables automated human-level performance in extracting structured data from clinical notes using ≤ 100 training reports and a single desktop-grade GPU.

Similar content being viewed by others

Introduction

Artificial intelligence (AI) tools in medicine aim to improve patient care by learning patterns across massive, well-annotated datasets. These annotations, describing key patient characteristics, are often derived from clinical notes, such as pathology and radiology reports. Extracting ground-truth labels from clinical notes remains a critical research bottleneck. As a result, our study focuses on research database curation, namely the systematic process of turning massive collections of clinical notes into clean tabular datasets. The traditional approach to database curation is manual chart review, where researchers annotate thousands of reports. If the research team wishes to add a new variable to their database, a new round of exhaustive annotation is required. It is intractable to curate health-system scale research databases with retrospective manual annotation. There is an unmet need for scalable and human-level data extraction from clinical reports with minimal engineering overhead.

Automated tools, including deep learning1,2, statistical machine learning3,4,5,6, and rule-based7,8,9 methods, have been proposed to extract structured information from clinical reports. While these systems have achieved promising performance, they require substantial effort to develop and deploy. As a result, their adoption by the research community remains limited. Large language models (LLMs) have demonstrated exciting capabilities in general text understanding, detecting errors in radiology reports10, streamlining radiology report impressions11,12, and automatic synoptic reports13. Recent works14,15 present powerful methods for data-scarce few-shot learning, particularly in relation extraction and named entity recognition tasks. However, they require non-trivial model customization and pretraining strategies. Ideally, researchers could structure information extraction as a question-answering task using a shared, minimal-engineering setup. Commercial LLMs offer promising capabilities, yet they are ill-suited for health-system-scale research database curation due to reproducibility, cost, and privacy concerns. Commercial LLMs are continually updated, and if new versions of the LLM perform unexpectedly worse given the same inputs, it can be infeasible to reproduce prior extractions after model updates. Furthermore, costs associated with using commercial LLMs can pose significant barriers, and institutional agreements are often required to address privacy concerns. Ideally, researchers could locally host their LLMs (“self-hosting”) on cheap “desktop-grade” hardware; these local LLMs would enable reproducible research while minimizing costs and avoiding the transfer of sensitive data.

Open-source LLMs, such as Llama16,17,18 and Mistral19, offer a promising solution for efficient “self-hosted” information extraction. Small LLM variants (i.e., < 11 billion parameters) with instruction-tuning allow for efficient general text understanding and cheap customization. Recent medically fine-tuned LLMs, including PMC-Llama 13B and Lama-3-8B-UltraMedical, have demonstrated compelling performance on biomedical question-answering benchmarks20,21. Small versions of the DeepSeek-R1 model have demonstrated strong general reasoning performance while building on small-scale general LLM backbones22, Llama-8B and Qwen-2.5-7B. While these LLMs offer exciting performance across general benchmarks, it remains unclear how these tools perform in clinical information extraction.

In this study, we focus on empowering individual researchers to curate large-scale research databases at their institution, using minimal computing (i.e., a few hours on a single GPU) and annotation resources (i.e., < 100 reports annotated). Our strategy aligns with the practical constraints and foundational research workflows of academic medical centers. Following this strategy, we do not develop massive 400B + open-source LLMs that require multiple H100 servers to host or generic universal LLMs that can generalize across institutions. We hypothesized that with simple fine-tuning techniques, small-scale open-source LLMs could enable individual researchers to create high-quality datasets customized to their institution, empowering their downstream AI research. Our extraction quality goal is “human-level performance”; specifically, we test the ability of LLMs to achieve statistically non-inferior performance to a medical student extracting the same information from clinical notes. The comparison to medical students is not meant to suggest equivalence to specialist performance, but rather to assess whether LLMs can match the standard level of accuracy currently used to curate large-scale research databases. We benchmark a wide range of “self-hosted” LLM approaches on four diverse real-world datasets from ongoing AI research projects at our institutions. To facilitate the broader adoption of customized open-source LLMs, we release a low-code library, Strata, that supports the fine-tuning, evaluation, and deployment of LLMs for clinical information extraction (Fig. 1).

Dataset construction flowchart for our breast (a), kidney (b), prostate (c), and MDS (d) datasets. UCSF = University of California, San Francisco, VUMC = Vanderbilt University Medical Center, EHR = electronic health records, MDS = Myelodysplastic Syndrome.

Materials and methods

We collected four diverse clinical text datasets underlying active research projects at our institutions across breast, kidney, prostate, and bone marrow cancer (Myelodysplastic Syndrome, MDS). These real-world datasets represent our intended use cases, where LLM-based tools empower the automatic creation of large-scale structured datasets to support downstream research at a single medical center. Our breast, kidney, and prostate datasets were annotated by medical students, and our MDS dataset was annotated by a hematologist. The medical students were specifically trained for the task by attending physicians with 8 to 11 years of post-graduate clinical experience. We describe each dataset in detail, including its creation and annotation process, in Appendix A1 of our supplementary methods.

Extracting structured information using customized large Language models

State-of-the-art LLMs are trained to follow instructions specified in text prompts when generating their answers. This process, called instruction-tuning23, enables users to define tasks with instruction prompts. To leverage instruction-tuned LLMs for clinical information extraction, a user can write out the desired task (e.g., extract the cancer subtype from a pathology report) in a text prompt before inputting the full clinical report. Given the prompt and the clinical report, the LLM will generate an answer in the text format, which can be parsed into the desired structured format using a simple post-processing script. This process is illustrated in Fig. 2.

Process for leveraging open-source LLMs to extract structured information from clinical reports. The input to the LLM consists of a user prompt, which specifies the task (e.g., cancer subtyping), paired with the report text. An open-source LLM contains pre-trained weights (e.g., 8B parameters for Lamma-3.1 8B) learned across web-scale datasets; when fine-tuning an LLM, we introduce additional LoRA weights (~ 400 M parameters) to customize the model. The model generates an answer in text, parsed by a user-defined “parse function” into a tabular form.

In this work, we are interested in customizing local LLMs for clinical information extraction. To enable the full research community to benefit from this resource, we focus on LLMs that can be easily hosted on a single consumer desktop-grade GPU, such as the Nvidia RTX 3090 or 4090. As a result, we leverage three classes of small LLMs: (1) medically fine-tuned QA models, including PMC-LLaMA 13B and Llama-3-8B-UltraMedical, (2) accessible versions of leading reasoning models, including DeepSeek R1 distilled to Llama-3.1 8B and Qwen2.5-Math 7B, and (3) popular base instruction-tuned models, such as Llama-3.1 8B, Gemma-2-9B, and Mistral-v0.3 7B. These billion-parameter LLMs are orders of magnitude smaller than state-of-the-art LLMs such as Llama-3.1 405B and GPT-4. To enable smaller LLMs to achieve human-level performance in clinical information extraction, we fine-tune them. Specifically, we leverage simple preprocessing scripts to convert human-annotated structured labels (i.e., in a tabular format) to text answers for the LLM to generate (e.g., “My answer is: {“DCIS”: “yes”, “ILC”: “no”}”). Given a dataset of pairs of clinical reports and answer texts, we can train the LLM to correctly predict the intended answer from the user prompt and clinical report. This process is illustrated in Fig. 3. To fine-tune LLMs using desktop-grade hardware, we employ Low-Rank Adaption (LoRA)24. Instead of directly optimizing the billions of parameters in our LLMs, LoRA keeps the initial weights of the LLM frozen and adds a small number of new parameters (e.g., < 400 M parameters) across the LLM to customize the model’s behavior.

Process of using Strata to create research databases. The researcher labels a subset of the reports, defines prompts, and creates parsing functions to interpret the model’s response. Given this information, Strata supports LLM fine-tuning and deployment. In LoRA fine-tuning, the model’s LoRA weights are trained to generate the templated answers. Strata supports model evaluation and efficient bulk-inference. Bulk-inference is used to create a research database from all unlabeled reports at the institution.

To enable the broader clinical and research communities to fine-tune and deploy their LLMs on local hardware, we developed Strata, a lightweight library for clinical information extraction. As illustrated in Fig. 3, Strata is used in three phases. First, researchers annotate a small dataset of clinical reports with their desired structured labels, which are partitioned into a training, development, and testing set. In addition to these annotations, researchers define the task for the LLM by specifying a prompt, a parsing script, and a template function. As described above, the parsing script maps the LLM’s answer to the desired structured format, and the template function maps the structured annotation to a text-based answer. Given an annotated dataset, Strata supports LoRA-based fine-tuning and flexible hyperparameter optimization. Finally, Strata offers a rich automated evaluation suite for model selection. We leverage Strata across all our experiments.

LLM development and evaluation

Across all datasets, we developed prompts, parsing scripts, and template functions to maximize the performance of our open-source LLMs on the development sets. In these experiments, the models were used without fine-tuning (i.e. zero-shot). For the DeepSeek-R1 models, we modified the prompts to allow for additional reasoning preceding the final answer, as shown in Supplementary Table S1. For evaluation, we ensure that responses are complete in Supplementary Table S2, then explicitly excluded the reasoning content between the “Think:” and “Answer:” tags and used only the final output in our accuracy calculations. This ensured that DeepSeek was evaluated on the same basis as other models and avoided penalizing models for verbosity or non-standard formatting in intermediate steps. Given a final set of prompts and scripts, we used LoRA to fine-tune our best-performing non-reasoning model, Llama-3.1. We show detailed examples of templates and responses in Appendix A2.

LLM fine-tuning requires selecting multiple hyperparameters, which leads to a variety of design choices on model architecture, data augmentation, and training recipes. LoRA includes two hyperparameters, namely rank and alpha. LoRA rank determines the size of the trainable parameters in fine-tuning, and alpha scales the output of these trainable layers relative to the original model’s outputs. In the context of the MDS dataset, we also experimented with class balancing, where rare values are oversampled during training. Finally, we also tuned the number of training epochs and the learning rate of the optimizer. Using Strata, we performed a grid search over possible hyperparameters in mixed-dataset fine-tuning on the development set. We applied the optimal hyperparameters in all other settings.

We considered three possible variants of LLM fine-tuning. First, we trained a customized LLM for each task within each dataset separately (i.e., question-specific fine-tuning). Next, we explored dataset-specific fine-tuning, in which all tasks within a single dataset (e.g., specimen site, cancer presence, and subtyping) were included in fine-tuning a model. Finally, we also considered training a single fine-tuned LLM across all datasets and tasks (i.e., mixed-dataset fine-tuning).

We leverage the annotations of our first human annotator as our reference labels in all evaluations. Our primary evaluation metric is “exact-match accuracy,” where we evaluate the ability of the LLM to extract all components of user-specified queries correctly. For instance, if the reference (i.e., first human annotator) response for breast cancer subtyping were “{DCIS: Yes, ILC: No, IDC: Yes},” the LLM predictions would have to match across all fields to gain a point in our cancer subtyping exact-match accuracy calculation. For dataset-level exact match accuracies, the LLM must correctly extract the information across all user queries for that clinical report. In addition to our stringent exact match accuracies, we also evaluate average accuracies and macro-F1, precision, and recall scores in our Supplementary Tables S3, S4, and S5.

We compare our open-source LLM results to GPT-4 and a second human annotator. All LLMs and the second human annotator were given the same prompts, and their extractions were compared to the first human annotator extractions as reference labels, using the metrics described above. The accuracy of our second human annotator serves as our benchmark for “human-level performance”. We hypothesize that fine-tuned LLMs would be non-inferior to the second human annotator in clinical information extraction. Our goal is not to outperform the second human annotator in matching the first human annotator; we expect disagreements between human annotators to reflect ambiguity in clinical reports. The second human annotator for each test set was a medical student who received training on the relevant pathology classifications and the specific criteria for annotation for each dataset. Both annotators receive appropriate training, so each of their performance individually is on par with what we would expect in real-world database curation. We compare the labels of the two readers using Cohen’s kappa coefficient and inter-annotator agreement rates.

To understand the dependence of fine-tuned LLM performance on labeled data, we performed learning curve analyses. We randomly sampled subsets of each training set (consisting of 25% and 50% of the full training data) and fine-tuned using only the subsets at the dataset-specific level. We then evaluated the model performance with different subsets against the second human annotator to determine the number of labeled reports needed for human-level performance.

To understand the effect of hyper-parameters on our performance, we performed a sensitivity analysis. For each hyperparameter (i.e., LoRA rank, LoRA scaling, number of epochs, and learning rate), we varied one hyperparameter value while holding the others constant. For all fine-tuning experiments, we used a linear learning rate scheduler with a warmup of 5 steps. The learning rate increases during the warmup phase and then decays linearly over the remainder of training. We did not perform additional tuning of the learning rate schedule. This supports our broader aim of evaluating how well models perform under minimal configuration. In particular, this setup offers a robust and practical starting point for low-code workflows, where researchers can fine-tune models with minimal effort and domain-specific engineering. We evaluated the difference in exact match accuracy.

Statistical analysis

We used scikit-learn (version 1.5.1; scikit-learn.org) and SciPy (version 1.14.1; scipy.org) to compute all evaluation metrics, including F1-score, precision, and recall. We computed evaluation metrics across 5000 bootstrap samples (16) to obtain 95% confidence intervals (CIs). To compare the exact match accuracy of LLMs to the accuracy of a second human annotator, we utilized a non-inferiority t-test using the stats R package (version 4.4.1; cran.r-project.org). Given the size of each test set, 80% desired power, a 0.05 p-value, and the standard deviation of the second human annotator accuracy, we computed the margin of error measurable by each test set. This power calculation was performed using the pwr R package (version 1.3-0; cran.r-project.org), and we utilized dataset-specific margins in each non-inferiority calculation. Given 294, 130, 72, and 218 reports in the breast, prostate, kidney, and MDS test sets, this resulted in non-inferiority margins of 6.3%, 8.2%, 13.5%, and 5.9%, respectively.

Ethical compliance

This study was approved by the University of California, San Francisco (UCSF) Institutional Review Board. The requirement for written informed consent was waived by the UCSF Institutional Review Board. All experiments were conducted in accordance with the relevant guidelines and regulations.

Results

Report characteristics

Our breast, kidney, prostate, and MDS datasets contained 978, 238, 431, and 724 reports (Fig. 1). Of these reports, 294, 72, 130, and 218 were randomly selected for held-out testing for breast, kidney, prostate, and MDS extractions, and these reports contained an average of 766, 688, 423, and 785 words, respectively. Amongst breast, kidney, and prostate test sets, 65%, 94%, and 85% respectively contained a confirmation of cancer. 7% of the MDS test set confirmed untreated MDS. We report detailed text and label statistics for all four datasets in Table 1.

Inter-annotator analysis

We report our prompts for each dataset and task in Table 2 and a detailed model performance evaluation in Table 3. Our primary performance metric, exact match accuracy, evaluates the ability of a model to correctly extract all fields for a report, using the labels of the first human annotator as the reference. Our second human annotators obtained exact match accuracies of 75.5 (95% CI 70.1, 80.3), 83.3 (95% CI 73.6, 90.3), 83.1 (95% CI 76.2, 88.5), 85.8 (95% CI 80.7, 89.9) in the breast, kidney, prostate, and MDS test set, respectively. These correspond to average Cohen’s kappa coefficient scores of 0.87, 0.78, 0.88, and 0.54 for each dataset and inter-annotator agreement among 97.3%, 94.0%, 93.3%, and 92.4% of fields. This extraction-level error rate aligns with prior studies on medical record abstraction, which report error rates ranging from 0.7% to 20.8%25. The inter-annotator agreement sources of disagreement are reported in Supplementary Figure S1.

Our manual review revealed that on average across all datasets, 48.7% of disagreements stemmed from human error and 39.4% from inherent ambiguity in the pathology reports. In the breast dataset, many human errors were due to omission of the lymph node in the specimen source, which are often buried within long reports describing multiple breast samples. In the kidney dataset, left-right labeling errors were common. In the MDS dataset, many errors seemed to stem from the complexity of the reports. Annotators have to extract multiple pieces of information from long, detailed narratives. More detailed metrics are shown in Supplementary Fig. S2.

LLM performance

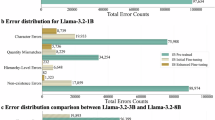

We compare model accuracies to the accuracies of independent second human annotators using non-inferiority tests. We consider a model to have obtained “human-level performance” if it meets the accuracy of our second human annotator. When leveraging dataset-specific fine-tuning, Llama-3.1 8B obtained exact-match accuracies of 91.5 (95% CI 88.1, 94.6), 87.5 (95% CI 77.8, 94.4), 91.5 (95% CI 86.2, 95.4), 89.4 (95% CI 84.9, 93.1) in the breast, kidney, prostate, and MDS test set, respectively. This model obtained higher accuracies than the second human annotator in all four datasets, and the result was statistically non-inferior in all test sets (p < 0.001). These results are summarized in Fig. 4, and we report additional detailed metrics by data field in Table 4. We report additional metrics, including average accuracies and precision, recall, and F1 scores, in Supplementary Tables S3, S4, and S5.

Summarized dataset-level results. Bar graph shows exact match accuracy for each dataset. Selected models include the second human annotator, zero-shot GPT-4, and fine-tuned and zero-shot Llama 3.1 8B. The human, snowflake, and flame icons represent the human, zero-shot, and fine-tuned models respectively.

Without fine-tuning (i.e. zero-shot), open-source LLMs obtain significantly worse performance than our second human annotator, who had an average exact match accuracy of 81.9%. DeepSeek-R1-Distill-Llama-8B obtains the highest overall performance, with an average exact match accuracy of 56.8% and 72.4 (95% CI 67.0, 77.6), 66.7 (95% CI 55.6, 76.4), 80.8 (95% CI 73.1, 86.9), and 7.3 (95% CI 4.1, 11.5) on breast, kidney, prostate and MDS, respectively. Llama-3.1 8B obtained similar zero-shot performance averaging 55.8 over the four datasets, and 42.9 (95% CI 37.1, 48.6), 75.0 (95% CI 63.9, 84.7), 28.5 (95% CI 20.8, 36.9), and 76.6 (95% CI 70.6, 82.1) for each dataset. Critically, Llama-3.1 8B obtained this performance without the use of any intermediate reasoning steps and, hence, was readily amenable to further fine-tuning. Gemma-2-9B and Mistral-v0.3 7B both obtained worse performance than Llama-3.1 8B, with average exact match accuracies of 52.1% and 48.6%, respectively. We found that LLama-3-8B-UltraMedical and PMC-LLaMA 13B both performed worse than Llama 3.1 8B, with 39.1 and 16.7 average exact match across datasets.

Llama-3.1 8B obtained qualitatively similar results across fine-tuning settings, obtaining average exact match accuracies ranging from 85.5% to 90.0% compared to 81.9% by second human annotators. These results suggest that researchers can obtain human-level results if they annotate data for a single task (i.e., question-specific finetuning); alternatively, institutions can train a single model to support all tasks (i.e., mixed-dataset finetuning) to obtain human-level results. Dataset-specific fine-tuned Llama-3.1 8B significantly outperformed its zero-shot counterparts across all datasets (p < 0.05), with high average precision and recall of 93.9 and 91.0. We visualize model predictions for clinical tasks in Supplementary Figure S2. GPT-4, which received no fine-tuning, obtained an average exact match accuracy of 86.1% across the four datasets. GPT-4 was non-inferior (p < 0.001) to the second human reader in breast, prostate, and MDS test sets. GPT-4 did not obtain non-inferior accuracy relative to the second human annotator (80.6% vs. 83.3%, p = 0.09) in the kidney test set.

Learning curve analysis and hyperparameter sensitivity

To understand the minimum number of training samples required to achieve human-level performance, we retrained our dataset-specific fine-tuned Llama-3.1 8B model with subsets of our training set (Fig. 5). Across each test set, we report the performance of our second human annotator on our dotted line. Our fine-tuned Llama-3.1 8B matched human performance when leveraging only 100, 95, 43, and 72 training reports for the breast, kidney, prostate, and MDS test sets, respectively. Each dataset-level model took 1–3 h to train using a single A40 GPU. At the time of writing, these experiments would cost between $0.80 to $2.40 on a private cloud. Next, we assessed the sensitivity of our models to the optimal choice of training hyper-parameters, including learning rate, number of training epochs, LoRa scaling (i.e., alpha), and LoRa rank (Fig. 6). We found that our fine-tuning results are sensitive to the choice of learning rate; while leveraging a learning rate of 1e-4 obtains a dataset-average exact-match accuracy of 89.3%, learning rates of 1e-3 and 1e-5 obtain degraded accuracies of 5.0% and 80.3%, respectively.

Learning curves analysis across all four datasets. Blue line graphs show the exact match accuracy for dataset-specific finetuned Llama 3.1 when leveraging 0%, 25%, 50%, and 100% of the training data. The dotted line displays the performance of the second human annotator. The stars show the number of reports used to match performance of the second human annotator.

Hyperparameter sensitivity analysis for mixed-dataset fine-tuned Llama 3.1 8B. Heat maps display the average dataset-level exact match accuracy across datasets, across a range of values for each hyper-parameter. Llama 3.1 is robust to the choice of training epochs, LoRA (low-rank adaptation) scaling, and LoRA rank, but the choice of learning rate can significantly alter performance.

Discussion

We developed Strata, a lightweight open-source library for LLM-based clinical information extraction. Strata supports a wide range of LLMs, prompt development, parameter-efficient fine-tuning, model evaluation, and deployment. Using Strata and minimal compute resources, namely a single commodity GPU (Nvidia A40) per experiment, we demonstrated that fine-tuned open-source LLMs achieve human-level performance in diverse, real-world clinical research applications across prostate MRI, breast pathology, kidney pathology, and myelodysplastic syndrome pathology reports. Our fine-tuned Llama-3.1 8B model was non-inferior to a second human annotator in all test sets. Moreover, Llama-3.1 8B demonstrated human performance leveraging only 100, 95, 43, and 72 training reports in our breast, kidney, prostate, and MDS test sets. Under the guidance of expert clinicians, Strata now supports multiple LLM-backed research databases at our institutions. This deployment reflects not just technical performance, but also clinician acceptance of the tool’s outputs in real research workflows. We demonstrate that locally hosted fine-tuned LLMs offer a practical solution for clinical information extraction, requiring limited computational and human effort; our “low-code” open-source library is designed to empower individual researchers to curate high-quality (i.e., human-level) databases at their instruction, supporting their downstream AI research.

Much of the prior work on using LLMs for clinical information extraction has focused on commercial models such as ChatGPT26. In our study, we found that GPT-4 demonstrated human-level zero-shot performance in three out of four test sets, namely breast, prostate, and MDS, and it was not non-inferior to our second annotator in the kidney dataset. Relying on commercial models to develop research databases poses several challenges. Using commercial models for protected health information requires institutional agreements to address privacy concerns, creating a significant access barrier. Moreover, rigorous research practices require reproducibility; as commercial LLMs are updated without user oversight, researchers cannot easily reproduce prior model queries or ensure consistent extraction quality. In contrast, our small open-source LLMs can be locally hosted, version-controlled, and easily customized for new applications.

While there has been rapid progress in adapting open-source LLMs to medical20,21 and general reasoning tasks22, we found that both biomedically fine-tuned LLMs and general reasoning models did not outperform the base instruction-tuned Llama-3.1 8B model in clinical information extraction. Specifically, we found that Llama-3-8B-UltraMedical and PMC-Llama 13B obtained substantially worse performance than Llama-3.1 8B. Leading reasoning LLMs, including deepseek-r1-distill-llama-8b, obtained similar performance to Llama-3.1 8B, despite the use of intermediate reasoning steps. In contrast, our fine-tuned Llama-3.1 significantly exceeded the zero-shot Llama-3.1 model, obtaining human-level performance.

We found that open-source LLMs address the limitations of traditional NLP techniques. Trivedi et al.27 previously evaluated the performance of conventional NLP techniques applied to breast pathology reports and found that performance was lower for cases over 1024 characters with an F-measure of 0.83. In contrast, we saw consistent performance across our datasets, where all reports across all test sets contained over 1024 characters. Yala et al.6 developed statistical machine-learning tools for parsing breast pathology reports; while the tool achieved an exact-match accuracy of 90%, it required over 6000 annotations to reach this performance. In contrast, we demonstrated that we could achieve human-level results leveraging only ≤ 100 training set reports, addressing the primary limitation of this prior work. As a flexible framework for low-code evaluation of new models, Strata complements recent advances in LLM adaptation for low-resource biomedical settings. Given Strata, valuable directions for future work include detailed investigations in targeted prompt tuning, output correction strategies, and alternative learning rate schedules.

Our study has limitations. We used datasets from two tertiary academic institutions, which may not account for variability in pathology report structures, terminology, and style across different institutions. Our clinical information extraction tasks only capture a fraction of the data elements in clinical reports. Additional work is needed to broaden our results to more tasks and institutions.

Conclusion

Our study shows that fine-tuned open-source LLMs can achieve human-level performance while leveraging modest annotation (i.e., ≤ 100 reports) and computational resources (i.e., <$3.00). Self-hosted open-source LLMs offer a compelling and high-performance alternative to commercial LLMs. We release Strata, our lightweight library, to broaden access to these tools.

Data availability

The institutional data used in this study are not publicly available due to compliance with patient privacy protection but are available from the corresponding author on reasonable request.

Code availability

The underlying code for this study is available at https://github.com/YalaLab/strata.

References

Gao, S. et al. Using case-level context to classify cancer pathology reports. PLOS ONE. 15, e0232840. https://doi.org/10.1371/journal.pone.0232840 (2020).

Alawad, M. et al. Multimodal data representation with deep learning for extracting cancer characteristics from clinical text n.d.

Oleynik, M., Patrão, D. F. C. & Finger, M. Automated Classification of Semi-Structured Pathology Reports into n.d.

Kavuluru, R. Automatic Extraction of ICD-O-3 Primary Sites from Cancer Pathology Reports n.d.

Wieneke, A. E. et al. Validation of natural Language processing to extract breast cancer pathology procedures and results. J. Pathol. Inf. 6, 38. https://doi.org/10.4103/2153-3539.159215 (2015).

Yala, A. et al. Using machine learning to parse breast pathology reports. Breast Cancer Res. Treat. 161, 203–211. https://doi.org/10.1007/s10549-016-4035-1 (2017).

Breischneider, C., Zillner, S., Hammon, M., Gass, P. & Sonntag, D. Automatic Extraction of Breast Cancer Information from Clinical Reports. IEEE 30th Int. Symp. Comput.-Based Med. Syst. CBMS, Thessaloniki: IEEE, 2017, pp. 213–8.https://doi.org/10.1109/CBMS.2017.138 (2017).

Schroeck, F. R. et al. Development of a Natural Language Processing Engine to Generate Bladder Cancer Pathology Data for Health Services Res. Urol. ;110:84–91. https://doi.org/10.1016/j.urology.2017.07.056. (2017).

Nguyen, A. N., Moore, J., O’Dwyer, J. & Philpot, S. Automated Cancer Registry Notifications: Validation of A Medical Text Analytics System for Identifying Patients with Cancer from a State-Wide Pathology Repository.

Gertz, R. J. et al. Potential of GPT-4 for detecting errors in radiology reports: implications for reporting accuracy. Radiology 311, e232714. https://doi.org/10.1148/radiol.232714 (2024).

Doshi, R. et al. Quantitative evaluation of large Language models to streamline radiology report impressions: A multimodal retrospective analysis. Radiology 310, e231593. https://doi.org/10.1148/radiol.231593 (2024).

Zhang, L. et al. Constructing a large Language model to generate impressions from findings in radiology reports. Radiology 312, e240885. https://doi.org/10.1148/radiol.240885 (2024).

Bhayana, R. et al. Large Language models for automated synoptic reports and resectability categorization in pancreatic cancer. Radiology 311, e233117. https://doi.org/10.1148/radiol.233117 (2024).

Guo, Q. et al. Bridging generative and discriminative learning: Few-Shot relation extraction via two-Stage Knowledge-Guided Pre-training 2025. https://doi.org/10.48550/arXiv.2505.12236

Guo, Q. et al. BANER: Boundary-Aware LLMs for Few-Shot named Entity Recognition 2024. https://doi.org/10.48550/arXiv.2412.02228

Dubey, A. et al. The Llama 3 Herd of Models. (2024).

Touvron, H. et al. LLaMA: Open and Efficient Foundation Language Models (2023).

Touvron, H. et al. Llama 2: Open Foundation and Fine-Tuned Chat Models. (2023).

Jiang, A. Q. et al. las, et al. Mistral 7B (2023).

Wu, C. et al. PMC-LLaMA: Towards Building Open-source Language Models for Medicine. https://doi.org/10.48550/ARXIV.2304.14454 (2023).

Zhang, K. et al. UltraMedical: Building Specialized Generalists in Biomedicine. https://doi.org/10.48550/ARXIV.2406.03949 (2024).

DeepSeek-AI, Guo, D. et al. DeepSeek-R1: incentivizing reasoning capability in LLMs via Reinforcement Learning. https://doi.org/10.48550/arXiv.2501.12948 (2025).

Ouyang, L. et al. Training language models to follow instructions with human feedback (2022).

Hu, E. J. et al. LoRA: Low-Rank Adaptation of Large Language Models (2021).

Garza, M. Y. et al. Error Rates of Data Processing Methods in Clinical Research: A Systematic Review and Meta-Analysis of Manuscripts Identified Through PubMed. https://doi.org/10.21203/rs.3.rs-2386986/v2 (2023).

Le Guellec, B. et al. Performance of an Open-Source large Language model in extracting information from Free-Text radiology reports. Radiol. Artif. Intell. 6, e230364. https://doi.org/10.1148/ryai.230364 (2024).

Trivedi, H. M. et al. Large scale Semi-Automated labeling of routine Free-Text clinical records for deep learning. J. Digit. Imaging. 32, 30–37. https://doi.org/10.1007/s10278-018-0105-8 (2019).

Acknowledgements

We want to thank Trevor Darrell for thoughtful feedback on this study and Li-Ching Chen and Natalia Harguindeguy for beta-testing Strata. We also want to thank the UCSF Facility of Advanced Computing team, including Hunter McCallum, Sandeep Giri, Rhett Hillary, Marissa Jules, Sean Locke, and John Gallias, for their invaluable work in supporting our computational environment.

Author information

Authors and Affiliations

Contributions

AY and MC conceived of the project, and determined the methodology together with L Liu, L Lian, and YH. L Liu, L Lian, YH, and L Lai worked on the investigation, formal analysis, validation, and software. EK, NH, AP, YP, and MC curated the data. L Liu, L Lian, AY, and MC wrote the original draft. All authors reviewed the manuscript.

Corresponding author

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Supplementary Information

Below is the link to the electronic supplementary material.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Liu, L., Lian, L., Hao, Y. et al. Human level information extraction from clinical reports with finetuned language models. Sci Rep 15, 45239 (2025). https://doi.org/10.1038/s41598-025-28767-z

Received:

Accepted:

Published:

Version of record:

DOI: https://doi.org/10.1038/s41598-025-28767-z