Abstract

Tracking of nightly oral parafunctional activities, such as grinding and clenching, is useful to monitor sleep bruxism. Although polysomnography remains the gold standard for overnight observations, home-based long-term recordings are desirable to obtain time-distributed data and to improve patient compliance. Technologies based on inertial measurement unit (IMU) sensors allow the production of compact, easy-to-use sensing instruments. This study aimed to develop a proof of concept using IMUs to capture mandibular motion for sleep bruxism assessment and to evaluate the accuracy of machine learning (ML) algorithms in classifying these motions. A mobile recording setup was developed, incorporating an IMU equipped with a tri-axial accelerometer, gyroscope, and magnetometer. A set of 21 in-vivo recordings from three individuals containing grinding and opening/closing mandibular movements was collected for ML algorithm training. The data were manually labeled and split into training and test sets (80/20). Several models were trained to classify the mandibular motion. Overall, the trained models correctly classified up to 96% of the test data. The best results were obtained when data from all three sensors were used simultaneously. The results indicate that IMU sensors are a valuable option for assessing mandibular motion with a small-scale wearable device.


Introduction

Bruxism is defined as a repetitive masticatory muscle activity characterized by excessive teeth grinding, bracing, or thrusting of the mandible. Depending on whether these parafunctional behaviors occur during sleep or while the person is awake, a distinction is made between Sleep Bruxism (SB) and Awake Bruxism (AB)1. Clinical signs include dental damage (wear, cracks, fractures), morning jaw stiffness, and grinding noises. Symptoms such as headache, muscle pain, or prosthodontic complications might be related to bruxism2. However, not all symptoms pose a significant burden on the patient’s health.

The reported prevalence of AB ranges from 22.1% to 31%, whereas SB affects 15% to 40% of children and 8% to 10% of adults3.

While the presence of AB is generally established subjectively via self-reporting, questionnaires, ecological momentary assessment (EMA), or oral examination4,5, SB can be recognized, with an increasing degree of certainty, by patients’ self-evaluation, standardized questionnaires, clinical oral examination, or objective instrumental assessment6,7. Furthermore, due to its categorization as a sleep-related movement disorder, SB has become a subject of research in the sleep medicine field. Therefore, instrumental assessment of SB has been the subject of many research studies in the past decades8. The current state of the art for the instrumental assessment of SB is polysomnography (PSG). The test is usually performed in a sleep laboratory under clinical supervision. Bruxism is known to be influenced by psychosocial factors such as stress and anxiety, and often presents itself in a fluctuating fashion9,10. Therefore, long-term field monitoring in the patients’ natural environment should be preferred to polysomnographic recordings, which are usually performed as a single-night measurement and carry a high risk of false negatives. Additionally, PSG recordings are costly for both patients and healthcare systems, due to their sophisticated technical requirements and the need for sleep specialists to analyze the results manually11.

The development of wearable, small-scale mobile devices that patients can operate at home would be an asset to clinicians for a definitive assessment of SB. Additionally, such devices would open up the possibility of long-term monitoring and could clarify the correlation between this behavior and stressors affecting the patients’ well-being. This would enable the identification of causes for increased bruxism and raise the patients’ awareness of their behavior. Furthermore, it might shed light on the still unclear correlation between muscle overuse and the development of orofacial pain. The current literature presents conflicting evidence regarding the association between bruxism and orofacial pain. Some studies report a positive correlation12,13, whereas others find no significant association14. A third line of research suggests that bruxism may co-occur with certain headache disorders, though the underlying mechanisms remain unclear15.

Such devices should possess specific characteristics to become attractive for clinical use. The recording element must be simple enough for the patient to operate at home. The number of applied sensors should be kept as low as possible, and the application itself should be simple. Finally, data analysis should be automated to reduce the cost of expert review. The ideal device setup should have the least impact on the patient’s sleep quality while providing sufficient data to detect SB.

Polysomnographic recordings as extensive as those performed in the laboratory are not realistic in the home setting. Portable PSG devices have been developed in recent years with a reduced acquisition setup compared to in-lab tests, resulting in improved sleep quality16,17 but also in less acquired data. Such a reduction requires selecting the most informative data for the specific aim. Many studies have focused on the use of electromyography (EMG) for the detection of bruxism. However, it has been shown that EMG-only acquisitions were insufficient for detecting SB compared to standard polysomnographic scoring18. Possible confounding factors are other facial muscle activities or motion artifacts that might mimic bruxism events. In other studies, audio or video recordings were coupled with EMG to improve the SB scoring rate19. Other wearable devices include smart instrumented splints equipped with force sensors to detect tooth contact during sleep20,21. However, these systems find very little clinical application because their invasive setup involves cables or batteries in the oral cavity, which makes them unattractive to patients.

Detection of mandibular motion is another method to help identify SB, as mandibular movements follow specific patterns during grinding and clenching. Recognizing mandibular motion from stand-alone EMG data is challenging. A previous study attempted to classify 30 different oral tasks based on EMG signals and the role of the involved muscles. Based on the relative contribution of these muscles, the authors grouped the tasks into six separate clusters22.

Inertial measurement units (IMUs) represent a valid solution for detecting mandibular movements in field recordings owing to their small size, ease of use, and low cost. Furthermore, they are already used in three-dimensional motion tracking systems and wearable devices and are widely accepted in the field of biomechanics23. Using machine learning (ML) to classify the data automatically can improve and speed up the analysis. It can also reduce human bias and increase the reproducibility of the results.

The aim of this study was to design and evaluate a proof of concept for the utilization of IMU sensors in the acquisition of mandibular motion data for the assessment of SB and to evaluate the accuracy of ML algorithms in the classification of motion.

Methods

The hardware and software design for this proof of concept was limited by the minimum requirements set for the project and each component. The details are explained in the sections dedicated to each part of the project.

Hardware

A high-level block diagram of the overall system architecture for the envisioned mobile recording device to detect SB with an IMU is shown in Fig. 1a. The system incorporates a 9-axis IMU to be placed on the chin (Fig. 1b) to capture the motion parameters of the jaw.

Figure 1. Overview of the IMU recorder system: (a) architecture diagram, (b) IMU placement, and (c) recorder placement.

The LSM9DS1 IMU (STMicroelectronics NV, Plan-les-Ouates, Switzerland) is mounted on an LSM9DS1 breakout board (Adafruit Industries LLC, New York City, NY, USA), which is connected via the Qwiic system to a data recorder over the I²C bus. An IoT DEV-22462 data logger (SparkFun Electronics Inc., Boulder, CO, USA) serves as the recorder and is mounted on a chest strap, as shown in Fig. 1c. It is equipped with a Bluetooth transceiver and a USB-C connector for data communication with a laptop or smartphone, LEDs as function indicators, and a recording button. The Li-ion polymer battery (Honcell Energy Company Ltd., Shenzhen, China) used for the power supply is rechargeable via the USB-C interface. All of the recorder’s electronic components are encased in a 3D-printed housing for protection. Table 1 summarizes the hardware requirements defined for this project.

Recording IMU-sensor data follows a series of defined steps, as shown in Fig. 2. After the hardware is turned on, all components are initialized, and the presence and function of an SD card are checked. Once recording starts, a continuous loop is initiated. Within this loop, the system tests whether a USB or Bluetooth connection is enabled. If such a connection exists, data are transmitted to an external computing device; otherwise, data are recorded to the local SD card. The loop keeps running as long as sleep mode is not activated. This process was programmed with the graphical integrated development environment Flowcode (Matrix Technology Solutions Ltd. (2024), Halifax, United Kingdom).
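The flowchart logic can be summarized in a short Python simulation. This is a sketch only: the actual firmware was built in Flowcode, and the class `RecorderState`, the function `run_recording_loop`, the fake `read_imu` callback, and the choice to raise an error when the SD card check fails are hypothetical illustrations rather than the device's implementation.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple

# One 9-axis IMU reading: (ax, ay, az, gx, gy, gz, mx, my, mz)
Sample = Tuple[float, ...]


@dataclass
class RecorderState:
    """Hypothetical stand-in for the recorder's runtime flags and storage targets."""
    sd_card_ok: bool = True
    usb_connected: bool = False
    bluetooth_connected: bool = False
    sleep_mode: bool = False
    transmitted: List[Sample] = field(default_factory=list)  # streamed to laptop/phone
    sd_card: List[Sample] = field(default_factory=list)      # written to local SD card


def run_recording_loop(state: RecorderState, read_imu: Callable[[], Sample],
                       max_iterations: int) -> None:
    """Follow the flowchart: check the SD card, then loop until sleep mode is set.

    max_iterations only bounds the loop so that this sketch terminates.
    """
    if not state.sd_card_ok:
        raise RuntimeError("SD card missing or not functional")
    for _ in range(max_iterations):
        if state.sleep_mode:
            break
        sample = read_imu()
        if state.usb_connected or state.bluetooth_connected:
            state.transmitted.append(sample)  # a connection exists: transmit
        else:
            state.sd_card.append(sample)      # no connection: record locally


offline = RecorderState()
run_recording_loop(offline, lambda: (0.0,) * 9, max_iterations=5)
print(len(offline.sd_card), len(offline.transmitted))  # 5 0
```

Keeping the routing decision inside the loop mirrors the flowchart, where the connection state is re-tested on every iteration rather than once at start-up.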

Figure 2. Flowchart of the IMU-sensor data recording process.

Hardware testing

Battery life was evaluated to establish whether the hardware is reliable enough for the intended overnight application. For this purpose, the recording device was charged and placed on a stepper motor. Throughout the test, the stepper motor performed two rotations per minute to simulate the movement of a patient during the night. A long-term recording was conducted, and the battery level was logged during this period. The recording lasted 12 hours, considerably longer than an individual’s average sleep period. The battery performance was then assessed from these recordings to determine whether it was sufficient for our use.

Movement acquisition and classification

As part of the proof of concept, a total of 21 sample recordings were collected from three individuals. All participants were healthy volunteers who provided informed consent for the use of their data in algorithm training and the dissemination of study findings. Ethics approval was not required, as the project did not fall within the scope of the Swiss Human Research Act; a formal waiver was granted by the Cantonal Ethics Committee of the State of Zurich (BASEC-ID: Req-2025-00836). All methods were carried out in accordance with the relevant guidelines and regulations. Data were recorded with the IMU at a sampling frequency of 20 Hz. The recordings featured two types of jaw movements, mouth opening/closing and teeth grinding, chosen to assess whether grinding can be distinguished from other non-grinding movements.

Each recording session started with a rest period, followed by specific movement sequences (grinding, open-close) separated by relaxation intervals. The final dataset consisted of 526 recorded sequences (10,520 data points in total), distributed as follows: 350 labeled others, 89 labeled open/close, and 87 labeled grinding. Additional sequences labeled others were excluded beforehand to reduce class imbalance. To increase the variability of the dataset, participants performed the movements in different body orientations: sitting or standing, lying on the left or right side, prone (face down), and supine (face up).
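As an arithmetic check on the class distribution, the snippet below recomputes the totals from the reported counts and the accuracy that a trivial classifier would reach by always predicting the majority class (others). This baseline is only a reference point derived from the reported counts; it assumes that the test split roughly preserves the overall class proportions.

```python
# Class counts reported for the final dataset (1-s sequences of 20 samples each)
CLASS_COUNTS = {"others": 350, "open/close": 89, "grinding": 87}
SAMPLES_PER_SEQUENCE = 20

total_sequences = sum(CLASS_COUNTS.values())
total_points = total_sequences * SAMPLES_PER_SEQUENCE
class_shares = {label: n / total_sequences for label, n in CLASS_COUNTS.items()}

# Accuracy of a classifier that always predicts the most frequent class
majority_baseline = max(class_shares.values())

for label, share in class_shares.items():
    print(f"{label:>10}: {share:.1%}")
print(total_sequences, total_points)  # 526 10520
print(f"majority-class baseline accuracy: {majority_baseline:.1%}")
```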

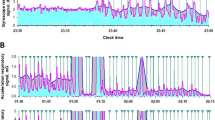

Signals along the X, Y, and Z axes were acquired from the built-in accelerometer, gyroscope, and magnetometer of the IMU, resulting in a total of nine signal axes.

First, the orientation of the sensor in space was determined with the ecompass function provided by MATLAB (The MathWorks Inc. (2024), version: 24.1.0 (R2024a), Natick, MA, USA), after preprocessing the data with a band-pass filter (1-15 Hz) and a third-order Savitzky-Golay smoothing filter with a window size of 5. Next, the dataset was prepared for classification with machine learning algorithms (classifiers). The nine raw IMU signals were divided into fixed-size segments of 20 data points, which at the 20 Hz sampling frequency corresponds to a 1-s time segment. These segments (snippets) served as training data for the classifiers. Based on the information from the preceding filtering and orientation recognition steps, each snippet was manually labeled as opening-closing, grinding, or no movement, following the recording protocol.
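The smoothing and segmentation steps can be sketched in plain Python. For a 5-point window, the third-order Savitzky-Golay smoothing weights coincide with the second-order ones, (-3, 12, 17, 12, -3)/35, so the filter reproduces any cubic exactly at interior points. The sketch is a simplified illustration, not the MATLAB pipeline used in the study: it leaves the two edge samples at each end unfiltered (a full implementation would fit them as well), omits the band-pass stage and the ecompass orientation step, and assumes non-overlapping windows. The function names `savgol_smooth`, `smooth_channels`, and `segment` are hypothetical.

```python
from typing import List, Sequence, Tuple

# 5-point Savitzky-Golay smoothing weights (shared by the 2nd- and 3rd-order fits)
SG_WEIGHTS = (-3, 12, 17, 12, -3)
SG_NORM = 35
HALF = len(SG_WEIGHTS) // 2


def savgol_smooth(x: Sequence[float]) -> List[float]:
    """Apply the 5-point SG filter; the first/last two samples are copied unchanged."""
    y = list(x)
    for i in range(HALF, len(x) - HALF):
        y[i] = sum(w * x[i + k - HALF] for k, w in enumerate(SG_WEIGHTS)) / SG_NORM
    return y


def smooth_channels(samples: Sequence[Tuple[float, ...]]) -> List[Tuple[float, ...]]:
    """Smooth every axis independently and return the samples in the original layout."""
    channels = zip(*samples)  # transpose: one sequence per axis
    return list(zip(*(savgol_smooth(list(c)) for c in channels)))


def segment(samples: Sequence[Tuple[float, ...]], window: int = 20) -> List[List[Tuple[float, ...]]]:
    """Split a multi-axis recording into consecutive, non-overlapping windows.

    At 20 Hz, window=20 gives 1-s snippets; an incomplete trailing window is dropped.
    """
    return [list(samples[i:i + window]) for i in range(0, len(samples) - window + 1, window)]
```

Usage: `segment(smooth_channels(recording))` turns a list of 9-value samples into a list of 1-s snippets ready for labeling.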

After the signals were split into labeled categories, the snippets were used to train various ML classifier models. The snippets carried no label identifying the participant they originated from; all data were treated equally and pooled into a single dataset. Here, a classifier model refers to the function learned by training an algorithm on a dataset, which is then applied to new data. For training, an 80/20 split was used: 80% of the data were used to train the classifier model and 20% were used for testing.
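A random 80/20 split at the snippet level can be sketched as follows. Whether the original split was stratified or seeded is not stated, so the plain shuffle, the fixed seed, and the function name `train_test_split_80_20` are assumptions. Because the snippets were pooled without participant identifiers, such a split does not keep participants separate between the training and test sets.

```python
import random
from typing import List, Sequence, Tuple, TypeVar

T = TypeVar("T")


def train_test_split_80_20(items: Sequence[T], seed: int = 0) -> Tuple[List[T], List[T]]:
    """Shuffle pooled snippets and return (train, test) with about 20% held out."""
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)  # seeded for a reproducible split
    n_test = round(0.2 * len(shuffled))
    return shuffled[n_test:], shuffled[:n_test]


train, test = train_test_split_80_20(range(526))
print(len(train), len(test))  # 421 105
```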

To evaluate the impact of data representation and processing, four approaches were defined: (i) raw data, (ii) feature extraction (Table 2b), (iii) preprocessing (Table 2c), and (iv) preprocessing followed by feature extraction. Within each approach, 16 distinct data constellations were constructed: the individual axes of each sensor (\(3 \times 3 = 9\) constellations), the combined axes per sensor (accelerometer, gyroscope, magnetometer = 3 constellations), pairwise sensor combinations (accelerometer–gyroscope, accelerometer–magnetometer, gyroscope–magnetometer = 3 constellations), and the full combination of all three sensors (1 constellation). For every constellation, 11 classifiers (Table 2a) were trained, resulting in a total of \(4 \times 16 \times 11 = 704\) models (Figure 3).
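The bookkeeping behind the 704 models can be checked by enumerating the constellations explicitly. The channel names (acc_x to mag_z), the approach labels, and the helper `data_constellations` are hypothetical labels chosen for this sketch.

```python
from itertools import combinations

SENSORS = ("acc", "gyr", "mag")
AXES = ("x", "y", "z")
APPROACHES = ("raw", "features", "preprocessing", "preprocessing+features")
N_CLASSIFIERS = 11


def data_constellations():
    """Return the 16 input configurations as tuples of channel names."""
    single_axes = [(f"{s}_{a}",) for s in SENSORS for a in AXES]          # 9
    per_sensor = [tuple(f"{s}_{a}" for a in AXES) for s in SENSORS]        # 3
    pairs = [tuple(f"{s}_{a}" for s in pair for a in AXES)
             for pair in combinations(SENSORS, 2)]                         # 3
    all_nine = [tuple(f"{s}_{a}" for s in SENSORS for a in AXES)]         # 1
    return single_axes + per_sensor + pairs + all_nine


constellations = data_constellations()
n_models = len(APPROACHES) * len(constellations) * N_CLASSIFIERS
print(len(constellations), n_models)  # 16 704
```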

Figure 3. Overview of the relevant steps followed to create the input data for the algorithms and their output.

Algorithm classification accuracy calculation

The accuracy of each algorithm was calculated as follows. The labels of the test dataset were predicted and compared with the ground truth labels, and the number of correctly predicted labels was determined for each label category. Accuracy was defined as the ratio of correctly predicted labels to the total number of labels in the test dataset, as shown in Eq. 1:

\[ \text{Accuracy} = \frac{1}{N} \sum_{c=1}^{K} n_c \qquad (1) \]

where \(n_c\) is the number of correctly predicted labels in category \(c\), \(K = 3\) is the number of label categories, and \(N\) is the total number of labels in the test dataset. To further characterize algorithm performance, Cohen’s \(\kappa\), the F1 score, and the Wilson score interval for the accuracy (treated as a binomial proportion) were computed.
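The four metrics can be computed from the predicted and true labels with a few lines of standard-library Python. This is a sketch under stated assumptions: the averaging used for the F1 score is not specified in the text, so macro-averaging is assumed here, and the Wilson interval defaults to the 95% level (z = 1.96), with the number of correct predictions as successes and the test-set size as trials.

```python
import math
from collections import Counter
from typing import Sequence, Tuple

Labels = Sequence[str]


def accuracy(y_true: Labels, y_pred: Labels) -> float:
    """Eq. 1: correctly predicted labels divided by the total number of test labels."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def cohens_kappa(y_true: Labels, y_pred: Labels) -> float:
    """Chance-corrected agreement (p_o - p_e) / (1 - p_e); undefined if p_e == 1."""
    n = len(y_true)
    p_o = accuracy(y_true, y_pred)
    true_counts, pred_counts = Counter(y_true), Counter(y_pred)
    p_e = sum(true_counts[c] * pred_counts[c] for c in true_counts) / n ** 2
    return (p_o - p_e) / (1 - p_e)


def macro_f1(y_true: Labels, y_pred: Labels) -> float:
    """Unweighted mean of the per-class F1 scores 2TP / (2TP + FP + FN)."""
    scores = []
    for c in sorted(set(y_true) | set(y_pred)):
        tp = sum(t == c and p == c for t, p in zip(y_true, y_pred))
        fp = sum(t != c and p == c for t, p in zip(y_true, y_pred))
        fn = sum(t == c and p != c for t, p in zip(y_true, y_pred))
        denom = 2 * tp + fp + fn
        scores.append(2 * tp / denom if denom else 0.0)
    return sum(scores) / len(scores)


def wilson_interval(successes: int, n: int, z: float = 1.96) -> Tuple[float, float]:
    """Wilson score interval for a binomial proportion such as accuracy."""
    p = successes / n
    denom = 1 + z ** 2 / n
    center = (p + z ** 2 / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z ** 2 / (4 * n ** 2)) / denom
    return center - half, center + half
```

Unlike the simple normal-approximation interval, the Wilson interval stays within [0, 1] and remains well behaved for accuracies close to 100%, which is why it suits small test sets with high accuracy.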

Results

Hardware

The battery life was tested against our requirements. Over 12 h of recording, the battery level dropped from 100% to 45%, which is acceptable for our application.
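A rough runtime projection follows from the two reported battery levels. It assumes a linear discharge, which Li-ion polymer cells do not strictly follow, so the result is an order-of-magnitude estimate rather than a specification; the function name is hypothetical.

```python
def linear_runtime_estimate(start_pct: float, end_pct: float, hours: float):
    """Return (drain rate in %/h, projected hours from 100% to 0%), assuming linear discharge."""
    rate = (start_pct - end_pct) / hours
    return rate, 100.0 / rate


rate, runtime = linear_runtime_estimate(100.0, 45.0, 12.0)
print(f"drain ≈ {rate:.2f} %/h, projected runtime ≈ {runtime:.1f} h")
# drain ≈ 4.58 %/h, projected runtime ≈ 21.8 h
```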

Movement acquisition and classification

The classifiers were trained on the training set and evaluated on the held-out test set; the resulting accuracies for the signal- and feature-based approaches are summarized in Table 3. Regardless of the approach, the highest classification accuracy was obtained by the model including all nine signals from the three IMU sensors simultaneously. Overall, the highest accuracy was 96%, reached by CatBoost with the preprocessing approach. The best classifications were reached with the preprocessing approach in 55% of the cases and with feature extraction in the remaining 45%. For feature-based prediction, the best results were achieved with CatBoost and Extra Trees. For preprocessing with feature extraction, the best classifiers were Extra Trees and LightGBM. Considering the different sensor combinations as input, for both signal-based approaches (raw signal and preprocessing), the maximum accuracy was reached with 9-axis sensor fusion in 82% of the cases. For the feature-based approach, the maximum accuracy was achieved with 9-axis sensor fusion in 64% of the cases and with the accelerometer data in 46%. For the preprocessing and feature extraction approach, the maximum accuracy was achieved with 9-axis sensor fusion in 73% of the cases, with the gyroscope and magnetometer combination in 18%, and with the gyroscope and accelerometer combination in the remaining 9%. Comparing the 9-axis sensor fusion across the four approaches, the highest accuracy was reached by CatBoost with the preprocessing approach (96%). CatBoost also outperformed the other algorithms for the raw-data and feature extraction approaches (87% and 90%, respectively), whereas for the preprocessing and feature extraction approach, the best accuracy was reached by Extra Trees (85%).
The Naive Bayes classifier yielded the lowest accuracy in all four approaches, with its lowest value (76%) for the preprocessing and feature extraction approach.

Figure 4. Box plots of the algorithm accuracies for the different sensor combinations.

Figure 4 compares the prediction accuracy of all algorithms for each sensor combination, excluding the combination of all sensors. As a standalone sensor, the magnetometer showed the lowest accuracy in all four training scenarios. The highest accuracies were achieved when at least two sensors were combined.

Figure 5. Comparison of algorithm accuracy for the sensor-fusion models across the four data preparation approaches.

Figure 5 displays the overall accuracy of all algorithms for each trained model. Generally, algorithms that performed well with one approach also tended to achieve relatively high accuracies with the other approaches. The highest classification accuracy (96%) was achieved by the CatBoost algorithm with signal preprocessing. The model demonstrated robust performance, with a Cohen’s \(\kappa\) of 0.93, an F1 score of 0.96, and a Wilson score confidence interval between 0.92 and 0.95, indicating a high degree of classification reliability.

Discussion

This study evaluated the suitability of IMU sensors combined with ML algorithms for detecting SB events in field recordings. To this end, a custom-built device was developed, and its performance was evaluated at the hardware, movement acquisition, and movement classification levels.

The development of a small, portable device for jaw movement recognition is challenging because it requires a balance between preserving sleep comfort and acquiring a sufficient amount of bio-signals of acceptable quality. Selecting the appropriate sensors is therefore a critical step. To the authors’ knowledge, ML-based movement recognition has not yet been applied to build a system that classifies mandibular motion using standalone IMU sensors.

To assess the feasibility of real-world deployment, the hardware setup and its impact on usability and data quality were evaluated. The prototype obtained in this work was assembled with off-the-shelf hardware components and custom-built parts. As a result, we were able to produce a device smaller and less complex than several previously used mobile devices for SB detection32,33. However, a direct comparison with other devices is not possible due to differences in use case and target context, as these systems were designed specifically for SB monitoring rather than for detecting jaw movement alone.

The performance tests focused on the system’s ability to record throughout the night with minimal impact on sleep comfort while ensuring reliable data acquisition. The battery test confirmed that 12-hour recordings are feasible without recharging the power source.

Our results showed that the in-vivo experimental setup produced a dataset of adequate quality to be classified by an ML algorithm.

In our experimental setup, the raw data approach (approach 1) yielded classification accuracies between 67% and 87%, suggesting that the sensor sensitivity and signal resolution were adequate for classifying mandibular motion.

The results were determined by a combination of several factors, including the positioning and stability of the sensor, the quality of the dataset, and the characteristics of the algorithms. Studies have shown that input data quality is highly relevant to achieving good performance in subsequent learning34 and that collecting a large dataset regardless of its quality is not the most effective way to train a robust model35.

The classification accuracy varied among classifiers and approaches; however, the relative ranking of the classifiers remained similar across approaches. Overall, the gradient boosting algorithms outperformed the others, likely owing to their iterative refinement process36, in which each new tree is fitted to the errors of the ensemble built so far. The four gradient boosting classifiers used (XGBoost, LightGBM, CatBoost, H2O GBM) all build ensembles of gradient-boosted decision trees but differ in speed, handling of categorical data, and susceptibility to overfitting. CatBoost was considered especially relevant for our task due to its native handling of categorical features, which allows direct input without label encoding. It preserves the structure of the input data and captures complex categorical interactions internally, which was beneficial for our classification.
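The residual-fitting idea behind gradient boosting can be shown with a deliberately minimal regression example: each round fits a one-split tree (a stump) to the current residuals, which for squared loss equal the negative gradient, and adds a shrunken copy of it to the ensemble. This is a didactic sketch of the generic technique under squared loss, not the algorithm of XGBoost, LightGBM, CatBoost, or H2O GBM, which use classification losses, regularization, and many further refinements; the learning rate of 0.3 and the toy data are arbitrary.

```python
from typing import List, Sequence, Tuple

Stump = Tuple[float, float, float]  # (threshold, value if x <= threshold, value otherwise)


def fit_stump(x: Sequence[float], r: Sequence[float]) -> Stump:
    """Least-squares regression stump: the split on x that best predicts residuals r."""
    best_sse, best_stump = float("inf"), None
    for thr in sorted(set(x))[:-1]:  # every split that leaves both sides non-empty
        left = [ri for xi, ri in zip(x, r) if xi <= thr]
        right = [ri for xi, ri in zip(x, r) if xi > thr]
        lv, rv = sum(left) / len(left), sum(right) / len(right)
        sse = sum((v - lv) ** 2 for v in left) + sum((v - rv) ** 2 for v in right)
        if sse < best_sse:
            best_sse, best_stump = sse, (thr, lv, rv)
    return best_stump


def predict_stump(s: Stump, xi: float) -> float:
    thr, lv, rv = s
    return lv if xi <= thr else rv


def gradient_boost(x, y, n_rounds: int = 20, lr: float = 0.3):
    """Start from the mean, then repeatedly fit a stump to the residuals."""
    base = sum(y) / len(y)
    pred = [base] * len(y)
    stumps: List[Stump] = []
    for _ in range(n_rounds):
        residuals = [yi - pi for yi, pi in zip(y, pred)]  # negative gradient of squared loss
        s = fit_stump(x, residuals)
        stumps.append(s)
        pred = [pi + lr * predict_stump(s, xi) for pi, xi in zip(pred, x)]
    return base, stumps, pred


def mse(y, p) -> float:
    return sum((a - b) ** 2 for a, b in zip(y, p)) / len(y)
```

Each round can only lower the training error (for learning rates between 0 and 2), which also explains why boosted ensembles need regularization to avoid overfitting small datasets.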

Due to the small, imbalanced training dataset, the models were at a higher risk of overfitting, especially algorithms such as LightGBM, which is best suited for large datasets because of its leaf-wise tree growth. XGBoost, H2O GBM, and CatBoost are less prone to overfitting. Among the tested algorithms, CatBoost was the only one suited to small-to-medium datasets that did not require additional fine-tuning of its parameters.

The Decision Tree, on the other hand, had difficulties handling the data. This could be due to the high variance of the dataset, which makes this model prone to overfitting. Only with preprocessing was this algorithm able to improve its classification accuracy, most likely because of reduced noise. The randomness in feature splits allowed the Extra Trees algorithm to handle the data better; nevertheless, it struggled with unprocessed signals. For algorithms sensitive to feature scaling, such as KNN, performance was expected to improve on preprocessed data with more balanced features. Similar considerations apply to SVM, whose kernel-based decision boundaries are sensitive to raw, noisy inputs; this algorithm would benefit from additional preprocessing steps, such as scaling, as well as kernel refinement. The Random Forest algorithm handles noise much better; because it is already robust to noise, however, preprocessing did not provide a significant improvement over raw data. Naive Bayes assumes that features are conditionally independent given the class, which leads to low performance when that assumption is violated; it is better suited for simple, separable, and clean data. The Neural Network performed well with preprocessed data, since it benefits from steps like normalization, although it is generally not well suited for small datasets. The LightGBM algorithm is designed for complex patterns and large datasets; its performance decreases when the data are noisy or unstructured, as seen with the raw data approach. Algorithms such as XGBoost use powerful boosting techniques that improve classification accuracy when paired with appropriate preprocessing, but they require careful regularization and precise parameter tuning. The CatBoost algorithm is well suited for categorical features and complex real-world data and therefore performed well across all four approaches.
The lower performance observed when preprocessing was combined with feature extraction can be explained by the loss of information, which causes the algorithm to lose some of its key advantages.
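The scaling argument for distance-based classifiers such as KNN can be illustrated with a toy example (hypothetical values, not study data). Without standardization, the Euclidean distance is dominated by the feature with the larger numeric range; after z-scoring each column, both features contribute comparably and the nearest neighbor changes. The helper names are hypothetical, and a one-nearest-neighbor lookup stands in for a full KNN classifier.

```python
import math
from typing import List, Sequence


def zscore_columns(rows: Sequence[Sequence[float]]) -> List[List[float]]:
    """Standardize each feature column to zero mean and unit (population) std."""
    cols = list(zip(*rows))
    means = [sum(c) / len(c) for c in cols]
    # "or 1.0" avoids division by zero for constant columns
    stds = [math.sqrt(sum((v - m) ** 2 for v in c) / len(c)) or 1.0
            for c, m in zip(cols, means)]
    return [[(v - m) / s for v, m, s in zip(r, means, stds)] for r in rows]


def nearest_neighbor(query_idx: int, rows: Sequence[Sequence[float]]) -> int:
    """Index of the closest other row by Euclidean distance (1-NN)."""
    q = rows[query_idx]
    others = [i for i in range(len(rows)) if i != query_idx]
    return min(others, key=lambda i: math.dist(q, rows[i]))


# Feature 0 has a small range, feature 1 a large one (hypothetical values)
rows = [[0.0, 0.0], [1.0, 0.5], [0.0, 3.0], [0.5, 30.0], [0.5, -30.0]]
print(nearest_neighbor(0, rows), nearest_neighbor(0, zscore_columns(rows)))  # 1 2
```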

In all four approaches, the highest classification accuracy was achieved using all nine signals simultaneously to train the model, although in one approach an alternative signal combination (accelerometer and gyroscope) reached an equally high accuracy. This suggests that training on all signals simultaneously is the most reliable way to achieve the highest accuracy. This improvement is likely attributable to the classifiers benefiting from the increased amount of sensor information, without evidence of performance degradation due to information redundancy.

The highest classification accuracy was achieved by a model that did not use feature extraction. In previous publications, feature extraction often combined time- and frequency-domain features37,38. This could suggest that our extracted features were not the most significant or relevant, or that the number of features was insufficient for the model. The lower accuracy of feature extraction after extensive preprocessing, compared with feature extraction after minimal preprocessing, was expected, since extensive preprocessing also eliminates information that might be crucial for extracting signal features.
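For reference, a window-level feature vector combining time- and frequency-domain descriptors might look as follows. The feature set (mean, standard deviation, RMS, and dominant frequency from a direct DFT of the mean-removed window) is an illustrative assumption and does not reproduce the features listed in Table 2b; `window_features` is a hypothetical name.

```python
import cmath
import math
from typing import Dict, Sequence

FS_HZ = 20.0  # sampling frequency used in the study


def window_features(x: Sequence[float], fs: float = FS_HZ) -> Dict[str, float]:
    """Illustrative time- and frequency-domain features for one single-axis window."""
    n = len(x)
    mean = sum(x) / n
    std = math.sqrt(sum((v - mean) ** 2 for v in x) / n)
    rms = math.sqrt(sum(v * v for v in x) / n)
    # One-sided DFT magnitudes of the mean-removed window, bins k = 1 .. n // 2
    centered = [v - mean for v in x]
    mags = [abs(sum(c * cmath.exp(-2j * math.pi * k * t / n) for t, c in enumerate(centered)))
            for k in range(1, n // 2 + 1)]
    k_peak = 1 + max(range(len(mags)), key=mags.__getitem__)
    return {"mean": mean, "std": std, "rms": rms, "dominant_freq_hz": k_peak * fs / n}


# A 1-s window (20 samples) of a 3 Hz oscillation
window = [math.sin(2 * math.pi * 3 * t / 20) for t in range(20)]
print(window_features(window)["dominant_freq_hz"])  # 3.0
```

With 1-s windows at 20 Hz, the frequency resolution is 1 Hz and the highest representable frequency is 10 Hz, which bounds how finely such spectral features can separate movement types.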

While the results provide valuable insights into the classification of mandibular motion, some limitations may affect the generalization and interpretation of the findings. Regarding the experimental setup, the sensing unit was attached directly to the skin with tape, which may have led to small deviations in the geometrical relationship of the sensor with respect to the head during the recordings. These shifts may have impacted signal quality and, consequently, classification performance. To address this drawback, a more stable and easy-to-attach solution is currently being developed to improve both reliability and repeatability of the measurements.

Due to the small sample size of three healthy volunteers, the study’s findings should be interpreted with caution. This limited dataset was deliberately chosen for proof-of-concept purposes to evaluate the initial feasibility of the sensor system. However, the restricted size and diversity of the dataset reduced the potential for broad generalization. Furthermore, the absence of participant identifiers limits the ability to assess inter-individual variability in classifier performance.

Another limitation arises from the nature of the data acquisition process. The data were collected from bruxism events simulated in a laboratory setting rather than from natural occurrences, which may affect the classification of real-world mandibular movements. Bruxism movements are driven by involuntary contraction of the masticatory muscles, resulting in clenching and grinding of the teeth. Although the specific sequences of muscle activation occurring in a real bruxism event cannot be reproduced in a laboratory setting, we assume that the final movement would not differ substantially from a simulated one. However, this clearly represents a limitation of the current study setup, and further analysis is planned to include data from bruxers and to validate the device against gold-standard measurement systems such as polysomnography. Additionally, the recorded movements did not cover all possible activities that might occur during sleep. Labeling additional movement data and adding it to the dataset would improve the robustness of the classification models and their ability to distinguish between different sleep-related activities.

A further issue concerns data preparation. During the recording protocols, the proportion of time without movement was substantially larger than that involving movement. This imbalance may have biased the classifiers toward non-movement detection. Balancing the datasets so that each class is represented more equally could enhance classification performance.

Finally, the presence of class imbalance posed a persistent challenge. Methods such as undersampling, oversampling, or weighting the loss function may have a positive effect on the classification accuracy39.
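Two of these remedies can be sketched in a few lines using the class counts reported for the final dataset. The weights follow the common "balanced" heuristic n_samples / (n_classes × n_c), the same formula scikit-learn uses for `class_weight="balanced"`; the random undersampling helper is a hypothetical illustration and not the procedure used in this study.

```python
import random
from collections import Counter
from typing import Dict, Hashable, List, Sequence, Tuple


def inverse_frequency_weights(labels: Sequence[Hashable]) -> Dict[Hashable, float]:
    """'Balanced' class weights n / (k * n_c), e.g. for a weighted loss function."""
    counts = Counter(labels)
    n, k = len(labels), len(counts)
    return {c: n / (k * m) for c, m in counts.items()}


def undersample(items: Sequence, labels: Sequence[Hashable],
                seed: int = 0) -> Tuple[List, List]:
    """Randomly keep as many samples per class as the rarest class has."""
    rng = random.Random(seed)
    by_class: Dict[Hashable, List] = {}
    for item, lab in zip(items, labels):
        by_class.setdefault(lab, []).append(item)
    n_min = min(len(group) for group in by_class.values())
    out_items, out_labels = [], []
    for lab, group in by_class.items():
        for item in rng.sample(group, n_min):
            out_items.append(item)
            out_labels.append(lab)
    return out_items, out_labels


labels = ["others"] * 350 + ["open/close"] * 89 + ["grinding"] * 87
print(inverse_frequency_weights(labels))
kept_items, kept_labels = undersample(list(range(len(labels))), labels)
print(Counter(kept_labels))
```

Weighting keeps all recorded data but makes errors on the rare classes costlier, whereas undersampling discards most of the others windows; with only 526 sequences, the weighted loss avoids shrinking an already small dataset.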

Future studies should include a larger and more diverse group of participants to enhance both the accuracy and generalization of the classification model. Alternatively, individual-specific models could be trained to better capture individual characteristics of mandibular movement. Expanding the training dataset to include a wider variety of oral behaviors would improve the model’s robustness and promote generalization.

Improvements in sensor design and placement are also planned. Refining the attachment and optimizing the location of the recorder unit (currently mounted on the chest) could address comfort and stability issues, thereby improving signal quality and user compliance.

Regarding data processing, all models in the current setup underwent identical preprocessing and/or feature extraction steps to allow a direct comparison. While useful for this purpose, individualizing these two steps for each algorithm could lead to better outcomes, as different classifiers tend to benefit from distinct data characteristics.

Classifier accuracy might be improved by reevaluating the role of the magnetometer signal. Since magnetometer-only models consistently yielded the lowest accuracy, future work could focus solely on classification based on accelerometer and gyroscope data. Finally, more adapted and sophisticated feature extraction methods could be explored to increase the overall classification performance.

Conclusion

The results from this proof-of-concept study suggest that combining IMU sensors with machine learning algorithms may provide a viable strategy for assessing and classifying mandibular movements, potentially enabling the detection of bruxism events. Further refinement of the hardware and classification protocols, together with an expanded training dataset, could improve accuracy and should be explored in future studies.

Data availability

The data that support the findings of this study were collected by the authors and are available from the corresponding author upon reasonable request.

References

Lobbezoo, F. et al. International consensus on the assessment of bruxism: Report of a work in progress. J. Oral Rehabil. 45, 837–844. https://doi.org/10.1111/joor.12663 (2018).

Matusz, K. et al. Common therapeutic approaches in sleep and awake bruxism - An overview. Neurol. Neurochir. Polska 56, 455–463. https://doi.org/10.5603/PJNNS.a2022.0073 (2022).

Lal, S. J., Sankari, A. & Weber, K. K. Bruxism management. In StatPearls [Internet] (StatPearls Publishing, 2025). Accessed 1 May 2024.

Koyano, K., Tsukiyama, Y., Ichiki, R. & Kuwata, T. Assessment of bruxism in the clinic. J. Oral Rehabil. 35, 495–508. https://doi.org/10.1111/j.1365-2842.2008.01880.x (2008).

Botelho, J. et al. Relationship between self-reported bruxism and periodontal status: Findings from a cross-sectional study. J. Periodontol. 91, 1049–1056. https://doi.org/10.1002/JPER.19-0364 (2020).

Raphael, K. G. et al. Validity of self-reported sleep bruxism among myofascial temporomandibular disorder patients and controls. J. Oral Rehabil. 42, 751–758. https://doi.org/10.1111/joor.12310 (2015).

Raphael, K. G., Santiago, V. & Lobbezoo, F. Is bruxism a disorder or a behaviour? Rethinking the international consensus on defining and grading of bruxism. J. Oral Rehabil. 43, 791–798. https://doi.org/10.1111/joor.12413 (2016).

Cid-Verdejo, R. et al. Instrumental assessment of sleep bruxism: A systematic review and meta-analysis. Sleep Med. Rev. 74, 101906. https://doi.org/10.1016/j.smrv.2024.101906 (2024).

Van Selms, M. K., Visscher, C. M., Naeije, M. & Lobbezoo, F. Bruxism and associated factors among Dutch adolescents. Community Dent. Oral Epidemiol. 41, 353–363. https://doi.org/10.1111/cdoe.12017 (2013).

Câmara-Souza, M. B. et al. Awake bruxism frequency and psychosocial factors in college preparatory students. Cranio J. Craniomandibul. Sleep Pract. 41, 178–184. https://doi.org/10.1080/08869634.2020.1829289 (2023).

Yoshida, Y. et al. Association between patterns of jaw motor activity during sleep and clinical signs and symptoms of sleep bruxism. J. Sleep Res. 26, 415–421. https://doi.org/10.1111/jsr.12481 (2017).

Carra, M. C., Huynh, N. & Lavigne, G. Sleep bruxism: A comprehensive overview for the dental clinician interested in sleep medicine. Dent. Clin. N. Am. 56, 387–413. https://doi.org/10.1016/j.cden.2012.01.003 (2012).

Khoury, S., Carra, M. C., Huynh, N., Montplaisir, J. & Lavigne, G. J. Sleep bruxism-tooth grinding prevalence, characteristics and familial aggregation: A large cross-sectional survey and polysomnographic validation. Sleep 39, 2049–2056. https://doi.org/10.5665/sleep.6242 (2016).

Bartolucci, M. L. et al. Sleep bruxism and orofacial pain in patients with sleep disorders: A controlled cohort study. J. Clin. Med. 12. https://doi.org/10.3390/jcm12082997 (2023).

Moreno-Hay, I. & Bender, S. D. Bruxism and oro-facial pain not related to temporomandibular disorder conditions: Comorbidities or risk factors? J. Oral Rehabil. 51, 196–201. https://doi.org/10.1111/joor.13581 (2024).

Matsuo, M. et al. Comparisons of portable sleep monitors of different modalities: Potential as naturalistic sleep recorders. Front. Neurol. 7. https://doi.org/10.3389/fneur.2016.00110 (2016).

Withers, A. et al. Comparison of home ambulatory type 2 polysomnography with a portable monitoring device and in-laboratory type 1 polysomnography for the diagnosis of obstructive sleep apnea in children. J. Clin. Sleep Med. 18, 393–402. https://doi.org/10.5664/jcsm.9576 (2022).

Miettinen, T. et al. Polysomnographic scoring of sleep bruxism events is accurate even in the absence of video recording but unreliable with EMG-only setups. Sleep Breath. Physiol. Disord. https://doi.org/10.1007/s11325-019-01915-2 (2019).

Lin, J. Y., Weibel, N., Griswold, B. & Patrick, K. Mobile health tracking of sleep bruxism for clinical, research, and personal reflection. In Technical Report (University of California, 2013).

Kim, J. H., McAuliffe, P., O’Connel, B., Diamond, D. & Lau, K. T. Development of bite guard for wireless monitoring of bruxism using pressure-sensitive polymer. In 2010 International Conference on Body Sensor Networks, BSN 2010. 109–116. https://doi.org/10.1109/BSN.2010.62 (2010).

Robin, O., Claude, A., Gehin, C., Massot, B. & McAdams, E. Recording of bruxism events in sleeping humans at home with a smart instrumented splint. Cranio J. Craniomandibul. Pract. 40, 1–9. https://doi.org/10.1080/08869634.2019.1708608 (2020).

Farella, M., Palla, S., Erni, S., Michelotti, A. & Gallo, L. M. Masticatory muscle activity during deliberately performed oral tasks. Physiol. Meas. 29, 1397–1410. https://doi.org/10.1088/0967-3334/29/12/004 (2008).

van der Kruk, E. & Reijne, M. M. Accuracy of human motion capture systems for sport applications; state-of-the-art review. Eur. J. Sport Sci. 18, 806–819. https://doi.org/10.1080/17461391.2018.1463397 (2018).

Prokhorenkova, L., Gusev, G., Vorobev, A., Dorogush, A. V. & Gulin, A. CatBoost: Unbiased boosting with categorical features (2019). arXiv: 1706.09516.

H2O.ai. h2o: Python Interface for H2O (2022). Python Package Version 3.42.0.2.

Pedregosa, F. et al. Scikit-learn: Machine learning in Python. J. Mach. Learn. Res. 12, 2825–2830 (2011).

Microsoft Corporation. LightGBM. https://github.com/microsoft/LightGBM (2016). Accessed 10 Mar 2025.

Chen, T. & Guestrin, C. XGBoost: A scalable tree boosting system. In Proceedings of the 22nd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining. 785–794. https://doi.org/10.1145/2939672.2939785 (ACM, 2016).

McKinney, W. Data structures for statistical computing in Python. In Proceedings of the 9th Python in Science Conference (van der Walt, S. & Millman, J. eds.) 56–61. https://doi.org/10.25080/Majora-92bf1922-00a (2010).

Harris, C. R. et al. Array programming with NumPy. Nature 585, 357–362. https://doi.org/10.1038/s41586-020-2649-2 (2020).

Virtanen, P. et al. SciPy 1.0: Fundamental algorithms for scientific computing in Python. Nat. Methods 17, 261–272. https://doi.org/10.1038/s41592-019-0686-2 (2020).

Martinot, J. B. et al. Artificial intelligence analysis of mandibular movements enables accurate detection of phasic sleep bruxism in OSA patients: A pilot study. Nat. Sci. Sleep 13, 1449–1459. https://doi.org/10.2147/NSS.S320664 (2021).

Doering, S., Boeckmann, J. A., Hugger, S. & Young, P. Ambulatory polysomnography for the assessment of sleep bruxism. J. Oral Rehabil. 35, 572–576. https://doi.org/10.1111/j.1365-2842.2008.01902.x (2008).

Morán-Fernández, L., Bolón-Canedo, V. & Alonso-Betanzos, A. How important is data quality? Best classifiers vs best features. Neurocomputing 470, 365–375. https://doi.org/10.1016/j.neucom.2021.05.107 (2022).

Nguyen, T., Ilharco, G., Wortsman, M., Oh, S. & Schmidt, L. Quality not quantity: On the interaction between dataset design and robustness of CLIP (2023). arXiv: 2208.05516.

Natekin, A. & Knoll, A. Gradient boosting machines, a tutorial. Front. Neurorobot. 7. https://doi.org/10.3389/fnbot.2013.00021 (2013).

Lara, Ó. D. & Labrador, M. A. A survey on human activity recognition using wearable sensors. IEEE Commun. Surv. Tutor. 15, 1192–1209. https://doi.org/10.1109/SURV.2012.110112.00192 (2013).

Barandas, M. et al. TSFEL: Time series feature extraction library. SoftwareX 11. https://doi.org/10.1016/j.softx.2020.100456 (2020).

Altalhan, M., Algarni, A. & Alouane, M. T.-H. Imbalanced data problem in machine learning: A review. IEEE Access 13, 13686–13699. https://doi.org/10.1109/ACCESS.2025.3531662 (2025).

Acknowledgements

This study was entirely supported by the standard financial plan of the University of Zurich.

Funding

This study was entirely supported by the standard financial plan of the University of Zurich.

Author information

Contributions

Barbara Schlaepfer: study conceptualization and methodology, software, data curation, formal analysis, investigation, visualization, writing - original draft, review & editing. Julian Langer: investigation, visualization, writing - original draft, review & editing. Stefan Erni: software, hardware development, validation, resources, writing - review & editing. Vera Colombo: study conceptualization and methodology, supervision, resources, project administration, writing - review & editing.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Schlaepfer, B., Langer, J., Erni, S. et al. A new approach for the field detection of sleep bruxism based on inertial sensor data and machine learning classification. Sci Rep 16, 443 (2026). https://doi.org/10.1038/s41598-025-29679-8

DOI: https://doi.org/10.1038/s41598-025-29679-8