Abstract

Considering the importance of oil as a major global energy source and the decreasing discovery of new reservoirs, enhanced oil recovery (EOR) methods have attracted considerable attention to maximize production from existing fields. Among these methods, polymer flooding, especially using Xanthan gum, is highly effective. Xanthan gum was chosen as the focus of this study due to its wide availability, low cost and environmental compatibility which make it one of the most practical biopolymer candidates for EOR applications. However, EOR processes are sensitive and require extensive investigations, while field and laboratory experiments are often costly and time-consuming. This study introduces a machine learning-based model which enables petroleum engineers to quickly assess whether Xanthan gum polymer flooding is feasible for a given reservoir, eliminating the need for extensive experiments. Ten parameters API gravity, initial oil saturation (%), polymer concentration (ppm), porosity (%), salinity (wt%), rock type, pore volume flooding, permeability (md), oil viscosity (cp.), and temperature (°C) were used as inputs to five machine learning models, including neural networks such as multilayer perceptron (MLP), convolutional neural network (CNN), radial basis function (RBF), gated recurrent units (GRUs), and support vector regression (SVR). Among these, MLP (R² = 0.9930) and GRUs (R² = 0.9933) achieved the best predictions. These models provide a fast and cost-effective tool for petroleum engineers to make timely decisions regarding the use of Xanthan gum in EOR operations.

Similar content being viewed by others

Introduction

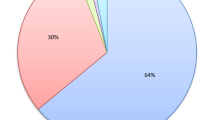

The oil industry relies on petroleum engineering breakthroughs to fulfill worldwide demand because only a tiny proportion of new fields remain for exploration, as most of the prospective petroleum-bearing formations have undergone extensive exploration1. Many unexplored basins are located in remote areas or environmentally significant regions (e.g., the Arctic)2. The production and supply of oil as the primary consumable for energy supply in global equations has many effects today3. Enhanced oil recovery (EOR) methods can cover a significant part of the world’s oil demand by increasing the oil production from the mature oil fields4. These methods become important because fossil fuels, especially oil, are still the main source of energy production in the world compared to renewable energy5. Effective planning in choosing the proper technique is very important for the success of EOR implementation6,7. Compared to secondary recovery, applying EOR technologies in emerging fields typically requires more rigorous planning to mitigate implementation challenges and cost inefficiencies8. Hydrocarbon production from an oil reservoir generally proceeds through three distinct phases, commencing with primary recovery driven by natural reservoir energy. This initial stage is primarily governed by the pressure differential between the reservoir and the production well9, and 30% of the oil harvest is obtained. The second stage is done using pressure maintenance techniques, including the injection of water and gas, which does not change the reservoir rock. The third stage, which is the tertiary recovery or EOR, is used when the secondary recovery is not affordable or unresponsive9,10,11. This progressive approach to oil recovery maximizes resource extraction efficiency and plays a critical role in extending the productive life of reservoirs, especially as conventional recovery methods reach their technical and economic limits12. EOR techniques are generally classified into three primary categories: chemical flooding, thermal flooding, and gas flooding. Among these, chemical flooding is a crucial method wherein various chemical agents such as polymer solutions are injected into the reservoir to improve the displacement efficiency and enhance the overall recovery factor. This method plays a significant role in maximizing oil extraction, especially in reservoirs where conventional recovery techniques have reached their limitations13,14. Polymer flooding has become a standard approach for maximizing recovery in reservoirs that have undergone primary and secondary recovery processes15. According to the investigations carried out in the EOR context, 11% of EOR projects around the world are chemical floods, of which more than 77% are polymer floods and 23% are combined polymer with surfactants9. The viscosity-enhancing properties of polymers modify fluid rheology, creating superior sweep efficiency compared to unmodified water injection. Additionally, by reducing water permeability in the porous medium, they enhance the mobility ratio and facilitate more efficient oil displacement, ultimately improving oil recovery16. This method has been used for more than 50 years due to its many advantages, including the consumption of less water compared to flooding with water and cost-effectiveness9.

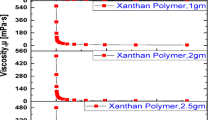

Xanthan gum is a microbial biopolymer synthesized by Xanthomonas campestris and is widely used as a viscosity-enhancing and stabilizing agent3. Due to its relatively thickening properties and resistance to high salinity and temperatures17, it is applied in EOR, oil drilling, and pipeline cleaning. Xanthan gum also offers favorable rheological properties, strong safety performance, and low environmental impact, making it suitable for EOR processes9. It is primarily used in polymer flooding operations to improve mobility control and increase oil recovery3. Unlike polyacrylamide (PAM), which requires low-hardness injection water and performs best under mild conditions, Xanthan gum maintains stability in relatively harsh environments, such as high temperature and salinity, where PAM fails. Additionally, Xanthan gum can modify oil wettability, improving oil displacement and recovery17.

In general, the application of EOR techniques without comprehensive testing and detailed analysis carries significant risk, and traditional experiments such as micromodel and core flooding are both costly and time-consuming, requiring sophisticated and maintenance-intensive equipment. Therefore, adopting artificial intelligence (AI) and machine learning (ML) in EOR studies has significantly increased in recent years. ML techniques provide fast, efficient, and reliable tools capable of accurately forecasting oil recovery performance, thereby reducing or even replacing the need for expensive and time-intensive laboratory tests such as micromodel and core-flooding experiments10.

Through comprehensive testing of various models, this paper examines five ML models, including a multilayer perceptron (MLP), Convolutional neural network (CNN), Support vector regression (SVR), Radial basis function (RBF), and Gated recurrent units (GRU). MLP creates a nonlinear functional model that enables the prediction of output data from a set of input parameters18. The MLP is one of the most widely used types of neural networks19, known for its ability to autonomously learn and extract complex nonlinear relationships from large datasets. CNN models can identify intricate spatial patterns, enhance data representation, and improve predictive accuracy, making them valuable for applications such as reservoir characterization and geophysical modeling20. SVR maps input data into a high-dimensional feature space using kernel functions, achieving high prediction accuracy in nonlinear problems21. RBF networks are popular in petroleum engineering due to their simplicity, fast learning, and capability in handling nonlinear functions22. GRU networks efficiently process sequential data and capture long-term dependencies while mitigating vanishing and exploding gradient issues in traditional recurrent neural networks (RNNs)23.

To develop a high-accuracy predictive model, it is essential to consider that the success of a polymer flooding project largely depends on crude oil characteristics and reservoir formation parameters. Establishing proper screening criteria during the early stages of an EOR project is critical, as such projects involve substantial financial investment and may result in significant setbacks if they fail16. Based on the evaluation of rock and fluid properties, ten key parameters were selected as input variables for the proposed model. Given the wide variability in reservoir and fluid characteristics, and the extensive dataset analyzed using various artificial neural network (ANN) architectures, the aim was to build a highly accurate and efficient predictive tool. This model is designed to reduce dependency on traditional EOR laboratory methods, such as micromodel experiments and core flooding tests, by reliably forecasting recovery performance. Ultimately, this approach can help minimize experimental and field-testing costs, thereby saving time and resources.

Methodology

Various EOR methods are currently employed across different geographical regions, utilizing specialized tools and techniques tailored to specific reservoir conditions and facility infrastructures. Understanding these variations provides valuable insights for future research and optimization strategies. In the present study, a comprehensive dataset was compiled from both field investigations and controlled laboratory experiments, focusing specifically on a biopolymer-based EOR technique. To analyze and predict reservoir performance, five ML models were developed and tested. The primary aim of applying multiple models was to identify a reliable, data-driven alternative to costly and time-consuming laboratory testing, thereby enabling practical field-scale evaluations without compromising scientific rigor.

Data collection

The essential dataset for Xanthan gum polymer flooding simulation was collected from five independent peer-reviewed research studies3,9,18,19,20. It comprises 234 data points obtained from laboratory experiments and field measurements. To ensure robust model training and evaluation, an 80:20 train-test split was applied with shuffling enabled, using a fixed random state to ensure reproducibility. All input features were standardized using the Standard Scaler method before model training. All analyses and model development were performed using Python in Google Colab. Each feature value x was transformed according to the formula\(\:\:{x}^{{\prime\:}}=\frac{x-\mu\:}{\sigma\:}\:\:\:\)where µ and σ represent the mean and standard deviation of the feature, respectively. This step ensured consistent scaling and improved the stability of the models. Based on an extensive review of the literature and analysis of EOR mechanisms, ten key parameters were selected as inputs to improve the prediction accuracy and optimize Xanthan gum polymer flooding performance. One of the input features, “rock type,” was a categorical variable with three classes: Artificial core (made by glass), sandstone, and carbonate. As neural networks require numerical inputs, this categorical variable was transformed into three binary columns using one-hot encoding, increasing the total number of input features from 10 to 12. The other numerical inputs are API gravity, initial oil saturation (%), polymer concentration (ppm), porosity (%), salinity (wt%), pore volume flooding, permeability (md), oil viscosity (cp.), and temperature (°C) (Table 1). The model’s output variable was the EOR performance (%), represented as a continuous numerical value indicating recovery efficiency.

Model architectures and hyperparameter configurations

In this study, five different models were implemented to predict the target variable. Each model was carefully designed and tuned to achieve optimal performance. Preprocessing steps, including feature scaling and one-hot encoding for categorical features, were consistently applied across all models.

Table 2 provides a detailed summary of each model’s architecture, hyperparameters, optimizer settings, and evaluation metrics, allowing for clear comparison and reproducibility of results.

Multilayer perceptron (MLP)

The MLP constitutes a neural network framework structured with sequential processing layers: input, hidden (for intermediate computations), and output18. Among various neural network topologies, this particular configuration has gained remarkable popularity and widespread adoption in both research and industry19. The signal transmission pathway in this neural network involves forward propagation through an input-hidden-output layer sequence, wherein a specific weight value characterizes every inter-neuronal linkage. That is updated step by step throughout the training process20. One factor affecting the network’s performance is the architectural depth (quantity of hidden layers) and layer-wise neuronal density (neurons per hidden layer)18. Additionally, the performance of the MLP model’s behavior is determined by the input variable types, the composition of the training data, and the specified number of learning cycles20. This neural network predicts output data from input data using predefined outputs and nonlinear functions through a supervised learning method20.

where \(\:{y}_{m}\) is the output of the model, \(\:{w}_{mj}\) is the magnitude of the weighted connections bridging the input and hidden layer neurons, \(\:{x}_{j}\) represents the inputs to the network, \(\:{b}_{m}\) is the bias vector which helps the model achieve the best result, and \(\:{F}_{m}\) is the nonlinear transformation function governing neuronal output activation levels.

Support vector regression (SVR)

SVR constitutes a ML technique employing labeled data to solve continuous-value prediction problems. Unlike traditional regression methods, SVR seeks to derive a predictive mapping function that transforms input variables into continuous-valued outputs while avoiding model complexity. In this method, a predictive regression model is developed to establish mathematical relationships between input predictors and corresponding observed outputs21. Regression is a generalization of the classification problem, where the constructed model estimates and returns a continuous output, as opposed to classification, which has discrete outputs. SVR is an extension of the support vector machine (SVM) classification algorithm21. In SVR, the support vectors represent the most informative data instances located exterior to the classification margin, and as the margin becomes less strict, more points fall outside it, increasing the number of support vectors22. SVR strikes a balance between model sophistication and generalization error, performing well with high-dimensional data and enabling the estimation of a function with absolute values. The linear kernel is the simplest type of SVR and is helpful regarding time efficiency. The polynomial kernel is widely used and applied in image processing. The RBF kernel is a versatile kernel that is primarily used when there is no prior knowledge about the data. The advantages of SVR include its high accuracy in predicting complex data, its capacity to process high-dimensional datasets while maintaining an optimal trade-off between model sophistication and generalization error.

where f(x): Prediction function, \(\:{a}_{i}:\) Lagrange multiplier, \(\:{a}_{i}^{*}\): Lagrange multiplier, \(\:k\left({x}_{i},x\right)\): Kernel function, \(\:{x}_{i}\): Support vector, \(\:x\): Input data point, b: Bias term.

Convolutional neural network (CNN)

CNN, as a cornerstone of modern deep learning, convolutional networks employ unique connectivity patterns where neurons interact with limited receptive fields rather than full layers. This localized connectivity provides dual benefits: (1) dramatic parameter compression that facilitates rapid model convergence, and (2) computational economy through shared-weight filters. The architecture incorporates inherent feature selection, automatically suppressing less discriminative patterns to streamline network complexity23. CNN is a type of feedforward neural network inspired by the natural visual perception mechanism of living organisms24. This network uses a convolutional structure, which differs from traditional methods of manually extracting features. The architecture incorporates three distinct layer categories: fully-connected layers for high-level reasoning, convolutional layers for feature representation learning, and pooling layers for spatial down-sampling. Specifically, convolutional layers autonomously extract hierarchical feature patterns from input data. Specifically, Neurons form weighted connections solely with proximal units in the immediately preceding layer24. The main advantage of CNN compared to traditional methods is its ability to learn local and complex features from the input data, making these networks very powerful and flexible. Additionally, the use of shared weights and dimensionality reduction increases processing speed and reduces memory requirements for storing models.

Radial basis function (RBF)

The RBF neural network has an advantage over the MLP architecture, owing to its exceptional nonlinear modeling precision. In this network, inputs are transferred from the hidden layer neurons to the output layer. Sparse data are commonly used in many engineering problems to address technical and non-technical issues. RBF approximation plays an effective role in handling unstructured data in higher dimensions. Applications of RBF can be seen in neural networks, fuzzy systems, and pattern recognition25. Two parameter groups govern the network’s connectivity: (1) input-to-hidden synaptic weights and (2) hidden-to-output projection weights13. This network enables us to handle arbitrary sparse data mapped to a multidimensional space with ease and high accuracy18. Three principal strategies exist for RBF center selection: random sampling from input distribution, cluster centroid identification via ML algorithms, and density-based spatial analysis, each offering distinct computational and accuracy trade-offs26.

\(\:{w}^{T}\) is the transposed vector of weights and \(\:\phi\:\left({x}_{i}\right)\) is the kernel function that maps the input features \(\:{(x}_{i})\) to a new feature space.

Gated recurrent unit (GRU)

This neural network utilizes stochastic gradient descent through backpropagation over time to minimize the loss function. This means that each parameter update includes information about the entire network’s state from the beginning up to the present. As a result, the influence of the evolving state representation intrinsically preserves both instantaneous input signals and accumulated hidden state information through sequential time steps27. GRU networks perform well in sequential learning tasks and handle long-term dependencies better than traditional RNNs, particularly in addressing the vanishing gradient problem more effectively28. This significantly mitigates the vanishing gradient issue in RNNs through the gating mechanism, simplifying the structure28. There is an input layer consisting of several neurons. The Input layer neuron population scales with feature dimensions, paralleled by output layer neurons aligning with prediction space requirements. The network’s hidden layers host memory cells that execute GRU’s essential gating mechanisms and state preservation functions28.

Model evaluation

Comprehensive performance analysis of predictive modeling approaches serves as a fundamental requirement in assessing their accuracy and reliability. Several statistical metrics are commonly used for this purpose, each offering a different perspective on model performance. Some of the most widely used metrics include the coefficient of determination (R²), mean squared error (MSE), and root mean squared error (RMSE). R² indicates how well the model explains the variance in the dependent variable based on the independent variables. A value closer to 1 suggests a better fit between the model and the data, whereas lower values indicate weaker predictive power and the potential omission of key influencing factors29. The MSE computes the mean absolute deviation between observed and forecasted values. A lower MSE value signifies higher model accuracy, implying that predictions are closer to actual observations. However, since this metric is expressed in squared units, it may not always be intuitive to interpret. The RMSE, the square root of MSE, provides a deviation quantification maintaining dimensional consistency with the target quantity. This makes it a more interpretable measure for understanding the deviation of predictions from actual values, making it one of the most commonly used accuracy indicators.

Results and discussion

Feature importance analysis

To quantify the relative contribution of each input parameter to the EOR prediction, we applied permutation importance on the trained neural network model. This method measures the decrease in model performance (R²) when each feature is randomly shuffled, allowing us to rank the relative importance of all input features. The results highlight that some features have a strong influence on model predictions, while others have minimal impact. Identifying these key features is essential for practical field decision-making, as it guides which parameters should be prioritized to improve EOR performance. The ranked importance of input features is summarized in Table 3, which clearly shows the contribution of each feature.

Optimum MLP structure

Findings from the sensitivity examination are presented in Table 4. As mentioned, the MLP network consists of three main components: input, hidden layer, and output. Table 4 examines the effect of the influence of architectural complexity (hidden layer count and neuron population) on predictive accuracy. Additionally, Table 5 illustrates the impact of the number of epochs on the model’s accuracy. In this study, 80% of the collected data was allocated for training, and the remaining 20% was used for testing. According to Table 4 the optimal number of neurons was determined. It was observed that as the number of neurons increased, the model’s accuracy improved. However, after reaching seven neurons, further increases in the number of neurons had little impact on the model’s performance. Therefore, seven neurons were considered the optimal number of neurons.

The MLP neural network, trained with 6 neurons and 200 epochs, provided the best result, which is shown in Fig. 1. This figure displays the comparison between the actual EOR values and the values predicted by the model. As can be seen, by adjusting the number of neurons and epochs, the aim was to achieve a high level of accuracy in matching the data, and the result was found to be satisfactory.

Comparative analysis of actual EOR and MLP values.

The network error after training and normalization is shown in Fig. 2 which demonstrates the high accuracy of the model and its logical error. As observed, significant accuracy has been achieved by using various optimization methods and adjusting multiple parameters of the neural network. Additionally, using Google’s Tesla Generation 4 (T4) Graphics Processing Unit (GPU) in the training process has greatly contributed to accelerating the process and improving the model’s performance.

MLP error chart.

A detailed comparison between the model’s predicted values and the actual observed data from the training phase used to evaluate the model’s generalization ability; Fig. 3, displays the R², which measures how well the model’s predictions align with the real data. As can be observed, the plotted lines exhibit minimal deviation from one another, suggesting a strong predictive performance and a close fit between the model’s output and the target values. Notably, at no point do the predicted training values surpass the actual data, indicating that overfitting did not occur. This outcome reflects not only the robustness of the model itself but also the reliability and correctness of the implemented training procedure and underlying code.

Comparison of R2 of actual and training data.

Optimum SVR structure

The developed predictive model is based on SVR, offering a structured and reliable framework for both implementation and evaluation. By appropriately tuning hyperparameters and selecting suitable performance metrics, the SVR model is trained using specific configurations, including the RBF kernel that is particularly advantageous in regression and classification tasks, as it effectively captures nonlinear relationships between input features and target values. For the SVR model with RBF kernel, several hyperparameters were tuned, including the penalty parameter (C), the error tolerance (ε), the kernel coefficient (γ), the tolerance (tol), and the coefficient term (coef0). The optimized values of these hyperparameters and the corresponding predictive performance metrics are summarized in Table 6, which highlights how appropriate tuning of the SVR–RBF model parameters led to improved accuracy and generalization ability. Its ability to model local interactions enables the algorithm to detect complex patterns within the data. In the implemented code, the model’s predictive performance is thoroughly assessed using both error-based metrics, such as MSE, and explanatory power metrics, such as the R². The predictive accuracy of the SVR model is influenced by several factors, including the choice of kernel, hyperparameter optimization, and the quality and preprocessing of the input data. Careful fine-tuning of these parameters leads to a significant improvement in the forecasting capabilities of the algorithm, thereby enhancing both its robustness and generalization performance.

A clear indication of the model’s predictive superiority and statistical reliability is illustrated through a comparative analysis between the predicted and experimental data (Fig. 4). This visualization highlights how well the model’s outputs align with real-world observations, underscoring the effectiveness of both the chosen kernel function and the hyperparameter tuning strategy. The overall shape of the prediction curve, in conjunction with supporting evaluation metrics such as MSE and the R², further validates the model’s precision. Notably, the application of the RBF kernel played a pivotal role in enhancing the model’s capacity to capture nonlinear dependencies, thereby significantly improving prediction accuracy.

Comparative analysis of actual EOR and SVR values.

The chart plotting target and output on the X and Y axes (Fig. 5) respectively, is one of the most essential tools for evaluating the performance of the SVR model. This chart demonstrates the relationship between actual values (target) and the model’s predictions (output), providing an empirical evaluation of model fidelity across performance metrics and efficiency of the model. By closely examining the distances between the target and output, a deeper understanding of the model’s predictive accuracy can be gained, and its strengths can be identified. As a visual tool, this chart provides valuable information about the model’s performance and helps readers better understand the results.

Correlative analysis of SVR network output and the EOR target data.

Optimum GRU structure

GRU models are widely used in forecasting and temporal pattern extraction applications, given their demonstrated efficacy in modeling both instantaneous variations and complex, extended-duration temporal correlations within sequential data structures. To achieve optimal performance, it is crucial to optimize the structure of these models. This section of the article explores some key strategies employed in the code to optimize the GRU architecture:

-

Adjusting the number of units: The number of units in the GRU layer can be increased or decreased (e.g., 64, 128, or 256) to identify the best configuration for the data, thereby enhancing the model’s accuracy.

-

Adding more layers: By stacking multiple GRU layers (e.g., two or three layers), the model is enabled to learn more complex patterns.

These strategies provide flexibility in tailoring the GRU structure to better suit the characteristics of the dataset, leading to improved predictive performance. Increasing or decreasing the number of units can markedly affect the model’s operational performance. Moreover, architectural expansion through additional GRU layers facilitates the learning of sophisticated hierarchical patterns. Dropout was also employed to prevent overfitting, with its rate carefully adjusted. The results of these actions are presented in Table 7, which demonstrates the effect of the number of layers and the size of sub-layers with a fixed 300 epochs. Similarly, it shows the impact of the number of epochs on the accuracy and efficiency of the model with a fixed 12-layer model. The analysis reveals a trend where accuracy increases with the number of epochs until reaching a maximum threshold, beyond which accuracy begins to decline.

A detailed comparison between actual and predicted EOR values derived from multiple literature sources is visually presented in a subsequent figure, namely Fig. 6. This chart contrasts empirical data with the model’s outputs, which were generated using a configuration of 300 training epochs, as shown in Table 8, and a neural network architecture comprising 11 hidden layers. The goal was to achieve a high level of predictive fidelity by systematically tuning key model parameters, particularly the number of neurons and the duration of training. As illustrated, the alignment between predicted and actual EOR values indicates that the model was able to reproduce complex patterns within the dataset with commendable accuracy. This level of agreement suggests that the selected architecture and training settings were effective in capturing the underlying relationships across diverse data sources. Such results reinforce the model’s generalization capacity and validate the applied optimization strategy.

Comparison between actual EOR and GRU values.

A practical means of assessing the model’s prediction error is illustrated through a residual plot, which is later shown in Fig. 7. In this figure, the Y-axis represents the difference between the model’s predicted values and the actual test data, where the test set was generated using a randomized split. The X-axis corresponds to the sequence of data points, allowing for a point-by-point analysis of the residuals. This visualization serves as a diagnostic tool for identifying any systematic deviation patterns or outliers that may indicate bias or variance-related issues in the model. The close clustering of residuals around zero further reflects the model’s ability to generalize effectively to unseen data.

GRU error chart.

A clear representation of the GRU model’s predictive performance is provided by a output vs. target chart, which is shown in Fig. 8. This figure serves as a fundamental visual tool for evaluating the model’s ability to approximate real values. The degree of alignment between these two sets of values offers insight into the model’s accuracy and overall reliability. As observed in the chart, the data points are densely clustered along the ideal target line, indicating a strong correlation between the predicted and actual values. The limited dispersion around this line further reinforces the model’s precision and the effectiveness of its internal structure in capturing complex temporal dependencies within the dataset.

Correlative analysis GRU network output and the EOR target data.

Optimum RBF structure

The RBF neural network represents a unique class of function approximators utilizing localized radial basis kernels as nonlinear activation operators in its hidden layer computation. This model is particularly effective for function approximation and modeling nonlinear relationships in data. Adding a white kernel to the model enabled it to account for noise effectively, distinguishing between signal and noise, a crucial feature for real-world data. Selecting hyperparameters such as length scale and noise level has had a significant impact on the model’s performance.

A comparative visualization of the model’s EOR predictions versus empirical data collected from published scientific sources is presented in Fig. 9. This figure includes both training and test data points, with specific emphasis on how well the model generalizes to previously unseen test samples. The close alignment observed between the predicted outputs and the experimental data highlights the consistency and accuracy of the designed model. Notably, the percentage of predicted recovery follows the same trend as the reported values in the literature, suggesting that the model captures key patterns across diverse datasets. This level of agreement not only validates the model’s predictive power but also reinforces its potential for reliable application in real-world scenarios involving enhanced recovery estimations.

Comparison between actual EOR and RBF values.

The distribution of the model’s predicted outputs relative to the target values is visualized through a scatter plot, as illustrated in Fig. 10, in which the fit line represents the ideal target, and the clustering of predicted data points around this line reflects the accuracy of the training process. The relatively tight dispersion indicates that the selected parameters and training configurations were effective in minimizing prediction error. Such consistency not only validates the model’s internal structure but also suggests its robustness and potential reliability when applied to new, unseen datasets. This visual confirmation reinforces the model’s generalizability and practical value in broader predictive tasks.

Correlative analysis of RBF network output and EOR target data.

Optimum CNN structure

In this section, the performance-tuned architectural configuration of the CNN is discussed, which includes several convolutional layers and dense layers. The model consists of multiple convolutional layers (Conv1D) designed using the rectified linear unit (ReLU) activation function. These convolutional filters adaptively distill essential features from the input data, and by increasing the number of filters in different layers, the model can learn more complex features. Flatten is used to convert the multi-dimensional output of the convolutional layers into a one-dimensional format so it can be connected to the dense layers. The dense layers are designed to learn the complex nonlinear mappings connecting input features to target outputs. Under these conditions, Adam is used as the optimization algorithm, one of the state-of-the-art algorithms demonstrating optimal computational efficiency in deep learning. Given the structure and settings provided, this CNN model is recognized as a suitable option for analyzing data and predicting outcomes in various problems.

Following the methodological framework of preceding models, the following analysis examines and evaluates the accuracy of the model against experimental data. The objective is to assess the alignment of the model’s output with the collected experimental data from various studies. Additionally, the impact of the number of hidden layers, neurons, and epochs on model accuracy is investigated. As shown in Table 9, a sensitivity analysis was performed by varying the number of hidden neurons to evaluate their effect on model performance. Furthermore, Table 10 illustrates how different numbers of training epochs influence the final accuracy of the CNN model.

The prediction error associated with EOR estimations is visualized through an error distribution plot, Fig. 11, which represents the discrepancies between observed values and those predicted by the model on the test dataset. A significant majority of the errors lie within the range of −5 to 5, indicating a high level of predictive accuracy. The narrow spread of error values suggests that the model has effectively captured and reproduced the complex nonlinear relationships inherent in the data. Furthermore, the distribution appears symmetrical and centered around zero, implying that the model exhibits no systematic bias toward overestimation or underestimation. This balanced performance reinforces the reliability and generalization capability of the model when applied to previously unseen data.

CNN error chart.

The fitting plot comparing the model’s predicted outputs to the actual target values is depicted in Fig. 12. In this figure, circular markers represent the predicted EOR values for each corresponding target, while the solid blue line indicates the ideal fit where predictions perfectly match the targets. The close clustering of the predicted points around this ideal line demonstrates the model’s high predictive accuracy. Minimal dispersion of output data around the fit line further confirms that the model has effectively captured the complex relationships between input features and target variables. Visual inspection of the plot also reveals a symmetrical distribution of points without noticeable bias or imbalance across the data range, underscoring the robustness and reliability of the proposed model architecture. The strong correlation between predicted and actual values validates the model’s overall successful performance in forecasting EOR outcomes.

Relationship between CNN network output and EOR target data.

The R² values reflecting the predictive performance of the model for both actual and trained data are illustrated in Fig. 13. This figure clearly demonstrates a consistent upward trend in R², with values converging toward 1, indicating the model’s strong capability to capture the underlying data patterns accurately. The minimal fluctuations observed in both training and validation curves suggest a stable and effective learning process. Moreover, the training data closely follows the actual data without exceeding it, which confirms that the model has generalized well without exhibiting overfitting. These results collectively underscore the reliability and robustness of the developed model.

Comparison of R2 of actual and training data.

Practical relevance and field implementation

Core-flooding experiments typically take one to two months and involve complex procedures such as core preparation, fluid saturation, rheology and stability testing, followed by waterflooding and proposed fluid injection. Conducting these experiments requires advanced laboratory equipment, skilled personnel, chemical and oil materials, and precise control of operational conditions. In addition, system-, operator-, and environment-related errors, as well as potential leakage or flow disturbances, increase the cost and risk of the project.

In contrast, the proposed model in this study was developed using Python, a lightweight and cost-effective tool that does not require high-end computing systems. It can also be run on Google Colab using Google’s GPU, making the computations feasible even on standard computers. The model’s accuracy, with R² above 0.9, demonstrates high reliability and precise prediction of EOR performance.

The results clearly indicate that a reservoir engineer can rapidly and accurately evaluate the feasibility of using Xanthan gum in a given reservoir without spending one to two months performing complex core-flood experiments and manually analyzing the results. This predictive model allows the assessment of potential EOR performance, identification of optimal injection scenarios, and prioritization of strategies in a significantly shorter time frame.

From an operational perspective, this capability enables engineers to make critical decisions regarding the design and implementation of EOR projects more quickly and confidently, without incurring the high costs of laboratory experiments, human errors, or issues related to high-pressure equipment and core leakage. Furthermore, using this model as a decision-support tool during the early stages of project design facilitates the identification of suitable polymer formulations and optimal operating conditions, significantly reducing the time and financial resources spent on laboratory and field studies.

Ultimately, this approach assists reservoir engineers and project teams in designing efficient and cost-effective hydrocarbon recovery strategies based on reliable predictive data, substantially increasing the likelihood of success for EOR projects.

Conclusions

EOR processes are inherently complex and associated with high costs, rendering traditional trial-and-error methods in reservoir studies largely impractical. Moreover, laboratory experiments for EOR evaluation demand significant time, advanced instrumentation, and considerable financial resources, posing further challenges. In response to these limitations, this study employed five different ML architectures to develop predictive models that closely approximate real field data, aiming to provide accurate and efficient alternatives to conventional methods.

After an extensive review of the literature and careful selection based on screening criteria relevant to Xanthan gum polymer flooding applications in EOR, key parameters were identified and incorporated as inputs to the models. Among the tested models, the MLP exhibited superior predictive performance, achieving an R² of 0.9930, MSE of 1.8391, and RMSE of 1.2987. GRU network also delivered comparable accuracy, with an R² of 0.9933, MSE of 2.0566, and RMSE of 1.4269, demonstrating the robustness of recurrent architectures for this application.

The developed approach not only provides a reliable predictive framework but also offers a cost-effective solution by significantly reducing the need for exhaustive laboratory testing and field trials. The high accuracy attained (R² ≈ 0.993) confirms the model’s capability to simulate Xanthan gum polymer flooding processes with precision, enabling stakeholders to conduct pre-implementation analyses that are both timely and economically viable.

In summary, the proposed ML models, especially the MLP, represent a valuable tool for reservoir engineers and researchers to optimize EOR strategies. This work contributes to advancing computational methodologies in the petroleum engineering domain and encourages the integration of ML techniques for more sustainable and efficient hydrocarbon recovery.

Data availability

The datasets used and/or analyzed during the current study available from the corresponding author on reasonable request.

References

Wang, X. et al. Recent Advancements in Petroleum and Gas Engineering. MDPI. p. 4664. (2024).

Løset, S. Science and technology for exploitation of oil and gas—an environmental challenge. in Proc Eur Networking Conf on Research in the North, Svalbard, Norway. (1995).

Hublik, G. et al. Investigating the potential of a transparent Xanthan polymer for enhanced oil recovery: A comprehensive study on properties and application efficacy. Energies 17 (5), 1266 (2024).

Ahmadi, M. A. & Bahadori, A. A simple approach for screening enhanced oil recovery methods: application of artificial intelligence. Pet. Sci. Technol. 34 (23), 1887–1893 (2016).

Khattab, H. et al. Assessment of a novel Xanthan gum-based composite for oil recovery improvement at reservoir conditions; assisted with simulation and economic studies. J. Polym. Environ., 1–29. (2024).

Manrique, E. et al. Effective EOR decision strategies with limited data: field cases demonstration. in SPE Improved Oil Recovery Conference? SPE. (2008).

Karović-Maričić, V., Leković, B. & Danilović, D. Factors influencing successful implementation of enhanced oil recovery projects. Podzemni radovi 2014(25), 41–50.

Zekri, A., Jerbi, K. Oil Economic Evaluation of Enhanced Oil Recovery. & Gas Science and Technology-revue De L Institut Francais Du Petrole - Oil Gas Sci Technol, 57: 259–267. (2002).

Said, M. et al. Modification of Xanthan gum for a high-temperature and high-salinity reservoir. Polymers 13 (23), 4212 (2021).

Gomaa, S. et al. Machine learning models for estimating the overall oil recovery of waterflooding operations in heterogenous reservoirs. Sci. Rep. 15 (1), 14619 (2025).

Anikin, O. V. et al. Gas Enhanced Oil Recovery Methods for Offshore Oilfields: Features, implementation, Operational Status (Petroleum Research, 2024).

Hashemizadeh, A. & Kyani, A. Successful case studies on the use of polymers to EOR by polymer flooding. (2022).

Shayan Nasr, M. et al. Application of artificial intelligence to predict enhanced oil recovery using silica nanofluids. Nat. Resour. Res. 30 (3), 2529–2542 (2021).

Seidy-Esfahlan, M., Tabatabaei-Nezhad, S. A. & Khodapanah, E. Comprehensive review of enhanced oil recovery strategies for heavy oil and bitumen reservoirs in various countries: global perspectives, challenges, and solutions. Heliyon 10 (18), e37826 (2024).

Ogunkunle, T. F. et al. Comparative analysis of the performance of hydrophobically associating polymers, Xanthan and Guar gum as mobility controlling agents in enhanced oil recovery application. J. King Saud University-Engineering Sci. 34 (7), 402–407 (2022).

Saleh, L. D., Wei, M. & Bai, B. Data analysis and updated screening criteria for polymer flooding based on oilfield data. SPE Reservoir Eval. Eng. 17 (01), 15–25 (2014).

Han, D. K. et al. Recent development of enhanced oil recovery in China. J. Petroleum Sci. Eng. - J. PET. SCI. Eng. 22, 181–188 (1999).

Saberi, H., Esmaeilnezhad, E. & Choi, H. J. Artificial neural network to forecast enhanced oil recovery using hydrolyzed polyacrylamide in sandstone and carbonate reservoirs. Polymers 13 (16), 2606 (2021).

Popescu, M. C. et al. Multilayer perceptron and neural networks. WSEAS Trans. Circuits Syst. 8 (7), 579–588 (2009).

Taud, H. & Mas, J. F. Multilayer perceptron (MLP). Geomatic approaches for modeling land change scenarios, pp. 451–455. (2018).

Zhang, F. & O’Donnell, L. J. Chap. 7 - Support vector regression, in Machine Learning, A. Mechelli and S. Vieira, Editors. Academic. 123–140. (2020).

Awad, M. et al. Support vector regression. Efficient learning machines: Theories, concepts, and applications for engineers and system designers, : pp. 67–80. (2015).

Li, Z. et al. A survey of convolutional neural networks: Analysis, Applications, and prospects. IEEE Trans. Neural Networks Learn. Syst. 33 (12), 6999–7019 (2022).

Gu, J. et al. Recent advances in convolutional neural networks. Pattern Recogn. 77, 354–377 (2018).

Wu, J. Introduction to convolutional neural networks. National Key Lab for Novel Software Technology. Nanjing Univ. China. 5 (23), 495 (2017).

Majdisova, Z. & Skala, V. Radial basis function approximations: comparison and applications. Appl. Math. Model. 51, 728–743 (2017).

Ghorbani, M. A. et al. A comparative study of artificial neural network (MLP, RBF) and support vector machine models for river flow prediction. Environ. Earth Sci. 75, 1–14 (2016).

Dey, R. & Salem, F. M. Gate-variants of gated recurrent unit (GRU) neural networks. in IEEE 60th international midwest symposium on circuits and systems (MWSCAS). 2017. IEEE. 2017. IEEE. (2017).

Mirshekari, S. et al. Enhancing Predictive Accuracy in Pharmaceutical Sales Through an Ensemble Kernel Gaussian Process Regression Approach. arXiv preprint arXiv:2404.19669, (2024).

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Author information

Authors and Affiliations

Contributions

Seyed Hossein Rezaei : Methodology, Investigation, Formal analysis, Data curation, Writing – original draft.James J. Sheng: Conceptualization; Supervision; Writing – review & editing.Ehsan Esmaeilnezhad: Conceptualization, Methodology, Investigation, Formal analysis, Data curation, Writing – review & editing, Supervision, Project administration. All authors have read and agreed to the published version of the manuscript.Conflicts of Interest: The authors declare no conflict of interest.

Corresponding author

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Rezaei, S.H., Sheng, J.J. & Esmaeilnezhad, E. Performance investigation of Xanthan gum polymer flooding for enhanced oil recovery using machine learning models. Sci Rep 15, 45420 (2025). https://doi.org/10.1038/s41598-025-30156-5

Received:

Accepted:

Published:

Version of record:

DOI: https://doi.org/10.1038/s41598-025-30156-5