Abstract

The research on 3D model reconstruction from a single image using deep learning technology has achieved remarkable progress. However, compared with images, sketches lack sufficient visual information, which challenges the reconstruction algorithm’s ability to correctly interpret sketches. Herein, we introduce a streamlined network architecture for sketch-to-3D mesh generation, designed to address the challenge of reconstructing high-fidelity 3D models from single-hand sketches. Our approach deploys the expressive PowerMLP architecture within an encoder-decoder framework, surpassing traditional MLP implementations in representation capability. By integrating 3D shape constraints instead of relying on conventional discriminators, we achieve geometric fidelity in a collaborative generation process. Experimental results demonstrate state-of-the-art (SOTA) performance on both synthetic stylized sketches and real-world handwritten inputs, validating the method’s robustness and adaptability.

Similar content being viewed by others

Introduction

Driven by the rapid evolution of computer graphics and VR/AR technologies, there has been a significant increase in the demand for 3D content creation across various domains. Sketch-based 3D reconstruction has emerged as an intuitive and efficient design paradigm, attracting considerable attention from both academic and industrial communities. This approach aims to rapidly generate 3D models from simple hand-drawn sketches, thereby reducing the technical complexity associated with traditional 3D modeling techniques and addressing the growing need for 3D content in fields such as film and television animation, game development, industrial design, and architectural design1,2,3. Current methods for sketch-based 3D modeling can be broadly categorized into three groups. The first group employs 3D model retrieval techniques, which match user-provided sketches against pre-existing 3D model libraries to generate the most similar 3D shapes3,4,5,6. The second group leverages multi-view 2D sketches and utilizes deep neural networks to reconstruct 3D shapes directly from these sketches7,8,9,10,11. More recently, cross-modal generation techniques have gained prominence by integrating sketch-based and text-to-3D generation methods, thereby expanding the scope and flexibility of 3D content creation12,13,14.

However, these existing methods suffer from several limitations that hinder their widespread adoption. For instance, methods relying on 3D model retrieval often lack the ability to customize the retrieved models, thereby limiting the creative freedom of users. Other approaches that require multi-view sketches demand users to provide input sketches from multiple perspectives, which can be challenging for those without professional drawing skills. Similarly, methods integrating text prompts and sketch images also pose significant difficulties for non-expert users, as they necessitate both textual and visual inputs, further complicating the modeling process.

Moreover, sketches are fundamentally different from real images. Composed primarily of simple lines, sketches lack effective visual information such as texture and lighting cues, which are crucial for algorithms to accurately interpret the intended 3D geometry15,16,17. The paucity of visual cues constitutes a formidable obstacle to reliable 3D-shape inference from sketches, necessitating algorithms that are both robust and contextually aware.

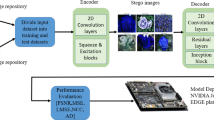

To address the aforementioned challenges, we propose a novel end-to-end neural network architecture, termed Single View Sketch-to-Mesh (SS2M), designed to provide an intuitive and efficient 3D model reconstruction framework for users. The SS2M network enables rapid and effective generation of customized, high-fidelity 3D mesh models from a single input sketch that captures the user’s conceptual intent. In the SS2M network, the input sketch is first encoded into viewpoint and shape representations within a latent space via an encoder module. Subsequently, these latent encodings are decoded into a 3D mesh model through a decoder module, as illustrated in Fig. 1. This streamlined architecture allows users to bypass the complexities associated with multi-view sketching or additional textual inputs, thereby significantly lowering the technical barriers for non-expert users while maintaining high reconstruction quality.

The architecture of SS2M network(The 3DViewer18 is used to render the 3D models displayed in the figure).

The SS2M network is designed as an encoder-decoder architecture with a streamlined structure. It incorporates PowerMLP19 neural network technology, which exhibits superior expressive capabilities compared to conventional MLP. Building upon the strengths of CNNs20, the SS2M encoder is capable of transforming sketch data from the input image space into a more compact and expressive low-dimensional feature representation. This enables the network to effectively capture complex shape patterns and spatial structures within sparse sketches. During the decoding process, the low-dimensional latent encoding is mapped into 3D shape data with depth information, facilitating the reconstruction of high-fidelity 3D models.

Given that traditional encoder-decoder generative networks often struggle to produce high-quality 3D shapes, and considering that discriminator-based methods15,16 are prone to convergence difficulties during training21, we introduce 3D shape constraints to replace the conventional discriminator network. This innovation serves a dual purpose: it simplifies the overall network architecture while enforcing the generation of more realistic 3D shapes by directly constraining the output of the generation network. This approach ensures that the generated 3D meshes are geometrically accurate and faithful to the input sketch, thereby enhancing the overall performance and robustness of the SS2M.

In summary, our approach has achieved superior 3D generation results through a more concise network architecture design.

Specifically, our primary contributions are summarized as follows:

-

1.

We propose an end-to-end network model based on an encoder-decoder architecture with a streamlined structure. This model allows users to input a single-view sketch that captures their conceptual intent and rapidly generate customized, high-fidelity 3D mesh models with high efficiency.

-

2.

To facilitate the encoding-decoding process from image space to geometric space, we introduce PowerMLP neural network technology, which exhibits stronger expressive capabilities compared to traditional MLP. This enhancement enables the SS2M network to achieve superior generation performance within a comparable training timeframe.

-

3.

During network training, we incorporate 3D shapes from real datasets to compute similarity with the generated 3D models, serving as shape constraints to guide the network’s output. This approach enforces the generation of realistic 3D shapes, thereby improving the accuracy and plausibility of the reconstructed models.

-

4.

Extensive experiments and evaluations conducted on Shapenet-synthetic and Shapenet-sketch datasets demonstrate that the SS2M can accurately interpret user input sketches and generate corresponding high-fidelity 3D mesh models with enhanced precision and fidelity.

Related works

3D reconstruction techniques

3D model reconstruction is a vital research direction within the field of computer vision. By leveraging computational methods to reconstruct the shape, structure, texture, and other attributes of objects in three dimensions, this technology enables multi-dimensional understanding and visualization of objects. It has garnered extensive attention and found wide application across various domains, including medical health, industrial production, cultural heritage preservation, game development, and virtual reality22,23.

Existing 3D reconstruction technologies can be broadly categorized into three types: (1) Manual geometric modeling using software tools, which involves the creation of 3D models through manual design and manipulation in specialized software environments. (2) 3D scanning-based reconstruction, where devices such as laser radar or structured-light cameras are employed to capture the geometry of objects, and the resulting data is processed to generate 3D models. (3) Image-based 3D reconstruction, which utilizes single or multiple images and employs computer vision techniques to infer and reconstruct the 3D structure of objects from these images.

Among these approaches, image-based 3D reconstruction has gained significant popularity in practical applications due to its low cost, ease of operation, and high fidelity in reconstruction outcomes22,23.

Single-view image-based 3D model reconstruction

Single-view image-based 3D object reconstruction aims to recover the 3D shape of an object from a single input image. Unlike multi-view 3D reconstruction, which leverages multiple perspectives to infer geometric information, single-view reconstruction is inherently challenging due to the limited geometric and semantic information available from a single viewpoint. The task of restoring a 3D shape from a single image is fundamentally ill-posed, as it involves significant ambiguity in depth and spatial relationships24,25.

Early approaches to single-view 3D reconstruction primarily relied on visual cues such as lines, shadows, and textures within the image to make geometric assumptions and reconstruct the 3D surface of the object24,25. However, these methods are often domain-specific and lack generalizability, resulting in poor scalability and limited applicability to diverse object classes and scenarios.

With the advent of large-scale 3D model datasets11,26,27 and the rapid advancement of deep learning techniques, the landscape of single-view 3D reconstruction has significantly evolved. Notably, the emergence of differentiable rendering technology in recent years28,29,30,31 has enabled the integration of rendering processes into end-to-end learning frameworks. This development, combined with the availability of extensive datasets, has facilitated the training of robust models capable of adapting to various complex variations in object shapes, appearances, and viewpoints15,22,23,28,31,32,33,34,35,36,37. Consequently, single-view 3D object reconstruction has become increasingly viable through large-scale data-driven approaches, paving the way for more accurate and generalizable solutions.

Single-view sketch-based 3D model reconstruction

Single-view sketch-based 3D modeling has long been a topic of active research in the field of computer graphics and vision. Existing methods can be broadly categorized into two types: end-to-end methods and interactive methods.

Interactive methods rely on the decomposition of sketch drawing into multiple steps or the use of specific gestures38,39. These approaches require users to possess a certain level of strategic knowledge and expertise, which may pose challenges for non-professional users. On the other hand, end-to-end methods based on templates or retrieval can produce satisfactory results but often lack the flexibility for customization, thereby limiting their applicability in scenarios where personalized designs are desired.

In recent years, there has been a growing interest in leveraging deep learning-based end-to-end methods to directly reconstruct 3D models from sketches5,8,12,15,16,17. This approach treats the task as a single-view 3D reconstruction problem, aiming to bridge the gap between sketch inputs and 3D outputs in a more seamless and automated manner. By harnessing the power of deep neural networks, these methods have shown promise in generating high-quality 3D models directly from sketches, thereby offering a more efficient and accessible solution for users.

Sketch-based modeling is intrinsically challenging compared to traditional single-view 3D reconstruction, due to the abstraction and sparsity of sketch information. Sketches are characterized by their simplicity and lack of detailed visual cues such as texture, lighting, and shadows, which are essential for inferring 3D geometry. This scarcity of information makes the task of generating high-quality 3D shapes from sketches particularly demanding.

In this paper, we address the problem of reconstructing single-view 3D objects from single-view sketches. Building on the work in15,28, we propose a streamlined end-to-end neural network architecture based on an encoder-decoder framework. Our method reconstructs a 3D mesh model from a single 2D sketch of the input object by leveraging PowerMLP technology and 3D shape constraints. This integrated approach effectively improves the accuracy and plausibility of the generated 3D models.

Method

We first establish a general baseline method for 3D model generation from a single-view sketch, as introduced in28. Building upon this baseline, we propose our SS2M network, which is specifically designed to produce 3D mesh model from a single-view sketch.

Baseline

The architecture of baseline method(The 3DViewer18 is used to render the 3D models displayed in the figure).

As illustrated in Fig. 2, the input single sketch, denoted as I, is encoded into a shape code \(Z=E(I)\) in the latent space via an encoder comprising the CNN20 and MLP. The shape code Z is then passed through a decoder, D, to generate a 3D mesh model, \(M=D{\text{(}}Z{\text{)}}\), that closely resembles the input sketch I. The generated mesh model M is composed of vertices and faces, and can be represented as \(M={\text{(}}{F_M},{V_M}{\text{)}}\), where \({V_M}\) denotes the vertex vector and \({F_M}\) represents the face vector in the mesh model.

During the training process, the generated mesh model M is rasterized by the rasterizer R28 under a specified view angle V to produce a rendered grayscale silhouette image \({S_r}\). This rendered image \({S_r}\) is then compared with the ground-truth rendered image \({S_{gt}}\) corresponding to the input sketch at the same view V to perform loss calculation. Finally, the parameters of the generator network are updated through error backpropagation to optimize the network performance.

The aforementioned process can be mathematically formulated as follows:

It is important to note that the shape decoder D does not directly decode Z into the 3D mesh model M, but rather into the biases of the vertex vector \({V_M}\). This is because the output mesh M is constructed by deforming an isotropic sphere with a predefined set of K-vertices. Assuming that the vertex vector of the original K-vertex sphere is denoted as \(B{\text{(}}{F_{b}}{V_b})\), the generated mesh M also comprises K vertices. The \({F_M}\) remains as the \({F_{b}}\), but the \({V_M}\) is calculated as follows:

The SS2M network architecture

The SS2M builds upon the baseline model described in Sect. 3.1 by incorporating a viewpoint prediction branch as in Sketch2Model15. This branch predicts the corresponding perspective information while generating the 3D model, thereby guiding the sketch generation process to determine the shape under viewpoint constraints. However, unlike Sketch2Model, we have streamlined the network architecture, eschewing additional complex branch designs in favor of a more concise structure. Furthermore, we have integrated PowerMLP19 technology into the encoder-decoder framework to enhance the network’s expressive capabilities.

Regarding the design of loss functions, conventional methods such as those in15,16,37 typically focus solely on the projection error loss between the 2D silhouette image of the generated 3D model and the input sketch. However, it is widely recognized that single-view sketches lack substantial 3D information, making it challenging to produce high-quality 3D shapes using only silhouette information for constraint and supervision. To address this limitation, we introduce a 3D shape constraint mechanism in SS2M, which directly calculates the 3D difference between the predicted shape and the ground-truth shape. This difference is incorporated as an additional term in the loss function, thereby ensuring that the generative network produces high-fidelity 3D results.

In the following section, we will elaborate on the working principle of the SS2M network in conjunction with Fig. 1.

Overall architecture

Assuming the input single sketch is denoted as I, and its corresponding output mesh is represented as \(M=({F_M},{V_M})\), where \({F_M}\) and \({V_M}\) denote the face and vertex vectors of the mesh, respectively. Initially, we extract image features from the sketch I using the encoder E and map them into two separate latent spaces: the viewpoint latent space and the shape latent space. This process yields the viewpoint encoding \({Z_v}\) and the shape encoding \({Z_s}\). Mathematically, this can be expressed as:

The viewpoint decoder \({D_v}\) decodes \({Z_v}\) into a predicted viewpoint vector \({V_p}={D_v}({Z_v})\). The loss between \({V_p}\) and the ground-truth viewpoint V in the sketch is then calculated. Meanwhile, the shape decoder \({D_s}\) decodes \({Z_s}\) into a predicted 3D mesh model M. The vertex vector \({V_M}\) and face vector \({F_M}\) in M are computed as the baseline mentioned in Sect. 3.1.

The generated mesh M is subsequently compared with the ground-truth 3D model \({M_{gt}}\) corresponding to the sketch using a 3D shape similarity function, thereby achieving constraint control over the 3D shape fidelity. Meanwhile, under the constraint of the viewpoint V, the mesh M is rendered into a silhouette image \({S_r}=R(M,V)\) via a differentiable renderer R. The rendered silhouette image \({S_r}\) is then compared with the ground-truth rendered silhouette image \({S_{gt}}\) corresponding to the viewpoint V to compute the loss. A detailed introduction to the loss function will be provided in Sect. 3.4.

Encoder and decoder

The architecture of the encoder E is a hybrid of CNN20 and PowerMLP19, leveraging the strengths of both components. Specifically, the CNN is employed to extract salient feature information from the input sketch, focusing on essential stroke shape features while minimizing the influence of non-essential information such as painting style. Building on this foundation, two independent PowerMLP branches are utilized to map the extracted image spatial features into the viewpoint space and shape space, respectively, thereby facilitating the encoding of \({Z_v}\) and \({Z_s}\). For the decoding process, two separate PowerMLP branches are employed to decode \({Z_v}\) and \({Z_s}\) independently. Specifically, the viewpoint latent space encoding \({Z_v}\) is decoded into the viewpoint vector \({V_p}\), while the shape latent space encoding \({Z_s}\) is decoded into the vertex vector \({V_M}\) of the generated mesh M.

PowerMLP

Recently, a novel neural network architecture known as the Kolmogorov Arnold Network (KAN)40 has garnered significant attention from researchers. Grounded in the Kolmogorov-Arnold representation theorem, KAN aims to enhance the approximation capabilities and interpretability of neural networks through an innovative mathematical design. Unlike traditional MLP, KAN introduces a unique architectural feature by placing activation functions on the edges (i.e., weights) of the network rather than on the nodes. This distinctive design enables KAN to approximate complex functions with a reduced number of parameters, which are typically parameterized using B-spline functions, thereby offering greater flexibility.

KAN has demonstrated remarkable performance in various tasks, including function fitting and solving partial differential equations. Recently, an increasing number of scholars have applied KAN or its variants to the field of computer vision, such as image and graphic classification and segmentation19,41,42,43,44. These applications have effectively validated the efficacy of KAN’s architecture, highlighting its potential for broader adoption in related domains.

However, the training process of KAN is significantly more complex and considerably slower than that of the traditional MLP19,45,46. The primary bottleneck stems from the recursive iteration process involved in calculating spline functions. In response to this challenge, PowerMLP, as a novel neural network architecture, employs a simplified representation of spline functions. By introducing a non-iterative spline function representation based on ReLU power, PowerMLP circumvents the recursive calculations required in KAN and completes the process through direct matrix operations. This approach not only retains the expressive advantages of KAN but also substantially reduces computational complexity. The k-th power of ReLU is defined as follows:

PowerMLP is an L-layer neural network defined as Eq. (6):

In this context, \(\alpha\), \(\omega\), and \(\gamma\) are all trainable arrays, \({\sigma _k}\) denotes the activation function, which is the k-th power of ReLU, and \(b(x)\) represents the basis function, defined in the same manner as in KAN. Figure 3 illustrates a structural diagram of a PowerMLP with three layers.

Structure of PowerMLP (3 layers).

Under the same parameter quantity, PowerMLP exhibits similar computational speed to traditional MLP, yet both are significantly faster than KAN. Moreover, PowerMLP demonstrates certain advantages in tasks such as machine learning. In this work, we adopt PowerMLP as the fundamental building block for the encoder and decoder in our network, replacing the conventional MLP. This choice enables more effective handling of the complex transformation from sketch image space to viewpoint and shape spaces. For detailed information regarding PowerMLP, please refer to Reference19.

Shape constraint

In conventional 3D generative network models15,16,37, two primary approaches are typically employed for shape constraints on the generated models: (1) utilizing sketches and projected silhouettes of the generated 3D models for loss calculation to enforce shape constraints, and (2) implementing shape constraints through a GAN-based discriminator network. However, we argue that 2D projected silhouette images lack essential depth information and necessary shape and line details, thereby failing to fully represent the 3D model’s geometry. Additionally, the GAN-based discriminator network introduces unnecessary complexity to the overall network architecture.

In this work, we propose an alternative approach by employing 3D shape similarity measures as constraints. Specifically, we calculate the similarity between the generated 3D model and the ground-truth model to enforce shape constraints on the generated model. This method directly leverages 3D information, ensuring a more accurate and comprehensive representation of the model’s geometry.

In practice, common metrics for measuring the similarity between two 3D models include Chamfer Distance, Voxel IoU, Hausdorff distance, and One-sided Hausdorff distance47,48, among others. These metrics can be individually or jointly employed as the shape-constrained loss function \({L_{sc}}\). During training, the feedback from \({L_{sc}}\) is utilized to optimize the generative network, thereby compelling the generated meshes to exhibit realistic 3D shapes while preserving their correctness and plausibility. In this paper, the selection and definition of \({L_{sc}}\)will be detailed in Sect. 3.5.

Loss function

We have meticulously designed the loss function for training our network, which comprises several components: the progressive silhouette Intersection over Union (IoU) loss \({L_{ps}}\) between the projected silhouettes of the generated model and the ground-truth model; the shape geometric regularization loss15,28,31 of the generated model, which includes the Laplacian loss \({L_{lap}}\) and smoothing loss \({L_{fl}}\), the viewpoint loss \({L_v}\) between the predicted viewpoint and the ground-truth viewpoint, and the 3D shape constraint loss \({L_{sc}}\).

We first define the IoU loss \({L_{iou}}\), which quantifies the Intersection over Union between the projected silhouette \({S_r}\) of the generated model and the projected silhouette \({S_{gt}}\)of the ground-truth model. The definition of \({L_{iou}}\) is as follows:

During the training process, we employed a multi-scale, i-layer pyramid to render silhouette images to achieve finer rendering silhouettes. The IoU loss for the i-th layer silhouette within the pyramid is denoted as \(L_{{iou}}^{i}\). The progressive IoU loss \({L_{ps}}\) is then defined as follows:

In this context, N denotes the number of layers in the pyramid, and \({\lambda _{si}}\) is the hyperparameter associated with the IoU loss of the i-th layer silhouette.

The Laplacian Loss is employed to enhance the smoothness of the generated mesh. Let \({v_i}\) denote an arbitrary point within mesh M, and \(v{'_i}\) represent the centroid of the neighboring vertices of \({v_i}\), and \({L_l}_{{ap}}\) is mathematically defined as follows:

The Smoothing Loss minimizes the discrepancy in normal directions between adjacent triangular faces, thereby promoting the overall smoothness of the mesh surface. Specifically, the loss is formulated to penalize deviations in the normal angles between neighboring faces, where \({\theta _i}\) denotes the angle between the normal vectors of any two adjacent faces. The loss is calculated as follows:

\({L_v}\) calculates the loss between the predicted viewpoint and the ground-truth viewpoint. Assuming that the distance between the viewpoint and the camera is fixed, the viewpoint is represented using Euler angles. The definition is as follows:

In this work, for simplicity, we employ the Chamfer Distance as a sole measure of similarity between the generated 3D model and the ground-truth model. A smaller Chamfer Distance indicates a higher degree of similarity between the two models. We define a loss function \({L_{sc}}\) based on the Chamfer Distance to impose shape constraints on the generative model. During training, \({L_{sc}}\) leverages 3D shapes from the real dataset to compute the similarity between the generated mesh model M and the ground-truth 3D model \({M_{gt}}\) using the Chamfer Distance. The feedback from the \({L_{sc}}\) loss is then utilized to optimize the generative network.

The \({L_{sc}}\) loss function, defined using the Chamfer Distance, is formulated as follows:

In this context, \({V_M}\) and \({V_{M\_gt}}\) correspond to the vertex vectors of M and \({M_{gt}}\), respectively, and n denotes the number of vertices in the 3D mesh model.

In summary, the total loss function \(Loss\) of the training network is composed of the weighted sum of the aforementioned components:

Experiments

We trained and evaluated the SS2M network on the Shapenet-synthetic dataset11 and further conducted hand-drawn sketch tests using the Shapenet-sketch dataset15 to demonstrate the effectiveness of our method. Additionally, we compared our approach with baseline methods and the state-of-the-art Sketch2Model15, highlighting the advantages of our proposed method through comprehensive experiments.

Datasets

The Shapenet-synthetic dataset comprises 13 sub-datasets, each corresponding to a specific category of 3D model data, such as airplanes, cars, and tables, spanning a total of 13 categories. Each sub-dataset contains thousands of distinct instances, with each instance comprising 3D model data, 20 viewpoint data, and 2D edge (sketch) silhouette images corresponding to the rendered 3D models from these viewpoints. The Shapenet-synthetic dataset is primarily utilized for both training and testing processes.

The Shapenet-sketch dataset also consists of 13 sub-datasets corresponding to the categories in Shapenet-synthetic. Each sub-dataset contains 100 instances, with each instance comprising 3D model data, a single specified viewpoint, and the rendered image of the 3D model from that viewpoint. Moreover, for each rendered image, there is a corresponding hand - drawn sketch created by a human volunteer. This situation unavoidably leads to a domain gap, which may create a significant distinction between the rendered images and real - world visual scenes. Consequently, any 3D shape recovered from such sketches cannot be rigorously quantified against the corresponding ground-truth model; That is, if there is a significant difference between the manual sketch and the actual image, the closer the reconstruction adheres to the sketch, the larger its discrepancy with the actual 3D model becomes. The Shapenet-sketch dataset is primarily used for testing purposes.

The partitioning of the training, validation, and test sets in both the Shapenet-synthetic and Shapenet-sketch datasets is consistent with that described in15.

Experimental details

The configuration of the experimental environment

The configuration of the experimental environment is shown in Table 1.

Network model configuration

For both the baseline method and SS2M, the encoder employs ResNet-1815,20 as the CNN to extract image features. The batch size is set to 64 and the layers of PowerMLP are 3. The Adam optimizer is utilized with a learning rate of \({l_r}=1 \times {10^{ - 4}}\), which is multiplied by 0.3 every 800 epochs. \({\beta _1}=0.9\), \({\beta _2}=0.999\). Training is conducted separately for each subclass, with a training duration of 2000 epochs. Additionally, the hyperparameters are configured as\({\lambda _{ps}}=1\), \({\lambda _{lap}}=5 \times {10^{ - 3}}\), \({\lambda _{fl}}=5 \times {10^{ - 4}}\),\({\lambda _v}=1\)and\({\lambda _{sc}}=1 \times {10^{ - 3}}\).

Results

We evaluated the performance of SS2M by comparing it with baseline models and state-of-the-art (SOTA) models15 through a series of experiments.

Experiments with the Shapenet-synthetic dataset

We first evaluated the performance of our model using the voxel Intersection over Union metric (\(Voxel\_IoU\)), the calculation of which is detailed in Eq. (14)48.

Here, \(M'\) and \(M{'_{gt}}\) correspond to the voxelized representation of the generated mesh model M and the ground-truth 3D model \({M_{gt}}\), respectively.

The results are presented in Table 2.

As shown in Table 2, our method outperformed both the baseline and the latest existing methods, achieving the highest results in 10 out of 13 subclasses. It is important to note that Sketch2Model (pred) takes a sketch as input and predicts the viewpoint to generate the model, whereas Sketch2Model (GT) requires both the sketch and a specified viewpoint as inputs to produce the generated model. Clearly, the latter has a more complex structure (unless otherwise specified in this article, all references to Sketch2Model models refer to Sketch2Model (pred) models). Our network, by contrast, takes only a sketch as input and, overall, achieves the best average performance among the methods compared.

Additionally, we calculated the Chamfer Distance, as shown in Table 3.

As shown in Table 3, our method also achieved the best average performance overall.

Moreover, we quantified the standard deviation(SD) across all samples within each category (Tables 4 and 5), thereby corroborating the robustness of SS2M.

As shown in Table 4, SS2M also achieves the highest overall mean SD performance in terms of voxel IoU.

As shown in Table 5, SS2M likewise attains the highest overall mean SD performance on Chamfer Distance. As evidenced by the SD metrics reported in Tables 4 and 5, the proposed model exhibits superior generalization capacity and robustness compared with the baselines.

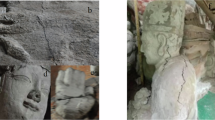

The visual results obtained on the Shapenet-synthetic dataset

We further selected representative experimental results illustrated in Figs. 3, 4 and compared them with the latest existing technologies. Overall, the models generated by our method are visually closer to the shapes of real 3D objects, thereby further demonstrating the effectiveness of our approach in generating models with higher structural quality and fidelity.

Selected representative results on the Shapenet-synthetic Dataset(The 3DViewer18 is used to render the 3D models displayed in the figure).

The visual results obtained on the Shapenet-sketch dataset

Additionally, we sampled a representative subset of hand-drawn sketches from the Shapenet-sketch corpus and contrasted the reconstructions generated by our framework with those of Sketch2Model. As evidenced in Fig. 5, our SS2M consistently produces silhouettes that align more closely with the input sketches and recovers overall geometries that more faithfully encode the draftsperson’s intent, furnishing further empirical support for the superiority of the proposed method.

Selected representative results on the Shapenet-sketch Dataset(The 3DViewer18 is used to render the 3D models displayed in the figure).

Assessing the runtime performance of 3D modeling

As previously described, our SS2M network adopts a structurally simplified encoder-decoder architecture. We evaluated the inference speed of the network on a PC equipped with an NVIDIA GeForce RTX 4080 S GPU. Our method achieved an average generation speed of 0.021 s, which is 33.3% faster than Sketch2Model (0.028 s). This result further demonstrates the advantages of our method in terms of network structure and computational efficiency.

Ablation study

We conducted ablation studies to validate the effectiveness of the SS2M network structure by calculating the Voxel Intersection over Union (Voxel-IoU) index, with the results presented in Table 6. The findings demonstrate that, compared to the baseline method, the incorporation of PowerMLP (PM) and shape constraints (SC) significantly enhanced the performance of the generative network, as shown in Table 6.

We further illustrate that, compared to the absence of shape constraints (SC), our method, which incorporates shape constraints, can generate models with higher fidelity, as demonstrated in Fig. 6. For instance, in Fig. 6, the tail wing and engine components of the aircraft, as well as the rear area of the car, have been accurately reconstructed under the guidance of shape constraints.

Experimental results of shape constraint effectiveness on Shapenet-sketch dataset(The 3DViewer18 is used to render the 3D models displayed in the figure).

Conclusion

We introduce SS2M, a streamlined sketch-based 3D mesh generation network that requires only a single sketch as input to produce high-fidelity 3D mesh models. By integrating PowerMLP into the encoder-decoder architecture, our model capitalizes on its superior expressive capabilities to achieve more accurate reconstructions. Additionally, we replace traditional discriminator networks with 3D shape constraints to directly control the shape of the generated 3D models, thereby enhancing the fidelity of the reconstructed meshes. Extensive experiments on both the Shapenet-synthetic and Shapenet-sketch datasets demonstrate that our method achieves state-of-the-art (SOTA) performance for both synthesized and hand-drawn sketches. Moreover, ablation studies validate the effectiveness of the key components in our proposed approach.

In this study, we employed an early ResNet architecture for feature extraction of the sketches. Future research may benefit from incorporating state-of-the-art image feature extractors, such as the recently introduced Mamba framework49,50, to further enhance the fidelity of the synthesized models. Moreover, the recent emergence of generative AI, most notably diffusion models51,52, has advanced to the point where their integration into sketch-to-3D pipelines for stochastic 3D variant generation is now a viable and promising research avenue. Furthermore, given our emphasis on sketch-based 3D reconstruction within known categories, we note that existing 3D benchmarks derived from Shapenet remain limited in both sample size and categorical coverage. Future work will therefore investigate category-agnostic 3D object generation networks with enhanced generalization capabilities. Concurrent advances in federated learning frameworks53,54 and industrial metaverse infrastructures55,56 offer a privacy-preserving, regulation-compliant mechanism for multi-party collaboration. By orchestrating distributed training, inference and optimization within these secure environments, we can (i) expand the semantic and stylistic expressiveness of generative models across heterogeneous sketch domains and (ii) systematically enhance the geometric fidelity and plausibility of sketch-driven 3D reconstruction.

Data availability

The Shapenet Datasets are available at: https://shapenet.org. Further data will be made available from the corresponding author on reasonable request.

References

Wang, M., Lyu, X., Li, Y. & Zhang, F. VR content creation and exploration with deep learning: A survey. Comput. Visual Media. 6, 3–28. https://doi.org/10.1007/s41095-020-0162-z (2020).

He, Y., Xie, H. & Miyata, K. Sketch2Cloth: Sketch-Based 3D Garment Generation With Unsigned Distance Fields. In Proceedings of the Nicograph International (NicoInt), Hokkaido, Japan (2023).

Qi, A. et al. Toward fine-grained sketch-based 3d shape retrieval. IEEE Trans. Image Process. 30, 8595–8606. https://doi.org/10.1109/TIP.2021.3118975 (2021).

Yuan, S., Wen, C., Liu, Y. & Fang, Y. Retrieval-specific view learning for sketch-to-shape retrieval. IEEE Trans. Multimedia. 19, 1–12. https://doi.org/10.1109/TMM.2023.3287332 (2023).

Zhu, C. et al. Sketch-Based 3D Shape Retrieval With Multi-View Fusion Transformer. In Proceedings of the 2024 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), Seoul, Korea, 14–19 April 2024 (2024).

Yang, H. et al. Sequential learning for sketch-based 3D model retrieval. Multimedia Syst. 28, 761–778. https://doi.org/10.1007/s00530-021-00871-w (2022).

Colom, J. & Saito, H. Reconstruction from Multi-view sketches: an inverse rendering approach. J. WSCG. 3. https://doi.org/10.24132/JWSCG.2023.5 (2023).

Delanoy, J., Aubry, M., Isola, P., Efros, A. A. & Bousseau, A. 3D Sketching using Multi-View Deep Volumetric Prediction. Proceedings of the ACM on Computer Graphics and Interactive Techniques 1, 1–22, (2018). https://doi.org/10.1145/3203197

Lun, Z., Gadelha, M., Kalogerakis, E., Maji, S. & Wang, R. 3D Shape Reconstruction from Sketches via Multi-view Convolutional Networks. In Proceedings of the 2017 International Conference on 3D Vision (3DV), Qingdao, China; 67–77. (2017).

Zhou, J., Luo, Z., Yu, Q., Han, X. & Fu, H. GA-Sketching: shape modeling from multi-view sketching with geometry-aligned deep implicit functions. Comput. Graphics Forum. 42. https://doi.org/10.1111/cgf.14948 (2023).

Kar, A., Häne, C. & Malik, J. Learning a multi-view stereo machine. In Proceedings of the NIPS’17: Proceedings of the 31st International Conference on Neural Information Processing Systems, Long Beach, USA; 364–375. (2017).

Liu, F., Fu, H., Lai, Y. K., GaoAuthors, L. & SketchDream Sketch-based Text-To-3D generation and editing. ACM Trans. Graphics (TOG). 43, 1–13. https://doi.org/10.1145/3658120 (2024).

Zheng, W. et al. October. Sketch3D: Style-Consistent Guidance for Sketch-to-3D Generation. In Proceedings of the Proceedings of the 32nd ACM International Conference on Multimedia, New York, USA, 28 2024; 3617–3626. (2024).

Chen, M. et al. Sketch2NeRF: Multi-view Sketch-guided Text-to-3D generation. arXiv https://doi.org/10.48550/arXiv.2401.14257 (2024).

Zhang, S., Guo, Y. & Gu, Q. Sketch2Model: View-Aware 3D Modeling from Single Free-Hand Sketches. In Proceedings of the In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition(CVPR), Nashville, USA; 6012–6021. (2021).

Chen, T. et al. Deep3DSketch+: Rapid 3D Modeling from Single Free-hand Sketches. In Proceedings of the International Conference on Multimedia Modeling, Bergen, Norway, 31 March; 16–28. (2023).

Wang, F. & Sketch2Vox Learning 3D Reconstruction from a Single Monocular Sketch. In Proceedings of the In European Conference on Computer Vision(ECCV), MiCo, Milano; 57–73. (2024).

Microsoft. 3D Viewer(7.2506.10022.0). Available online: https://apps.microsoft.com/detail/9NBLGGH42THS?hl=zh-cn&=CN&ocid=pdpshare

Qiu R, Miao Y, Wang S, et al. PowerMLP: An efficient version of KAN[C]//Proceedings of the AAAI Conference on Artificial Intelligence. 2025, 39(19), 20069-20076.

He, K., Zhang, X., Ren, S. & Sun, J. Deep Residual Learning for Image Recognition. In Proceedings of the In Proceedings of the IEEE conference on computer vision and pattern recognition, Las Vegas, USA, 2016; 770–778. (2016).

Saad, M. M., O’Reilly, R. & Rehmani, M. H. A survey on training challenges in generative adversarial networks for biomedical image analysis. Artif. Intell. Rev. 57. https://doi.org/10.1007/s10462-023-10624-y (2024).

Cao, L. et al. Single-view 3D object reconstruction based on deep learning:a survey. J. Image Graphics. 1–34. https://doi.org/10.11834/jig.240389 (2024).

Hang, Y., Rui, C., Shipeng, A., Hao, W. & Heng, Z. The growth of image-related three dimensional reconstruction techniquesin deep learning-driven era: a critical summary. J. Image Graphics. 28, 2396–2409. https://doi.org/10.11834/jig.220376 (2023).

Zhang, R., Tsai, P., Cryer, J. E. & Shah, M. Shape-from-shading: a survey. IEEE Trans. Pattern Anal. Mach. Intell. 21, 690–706. https://doi.org/10.1109/34.784284 (1999).

Witkin, A. P. Recovering surface shape and orientation from texture. Artif. Intell. 17, 17–45. https://doi.org/10.1016/0004-3702(81)90019-9 (1981).

Chang, A. X. et al. Shapenet: an Information-rich 3d model repository. arXiv https://doi.org/10.48550/arXiv.1512.03012 (2015).

Mo, K. et al. A Large-Scale Benchmark for Fine-Grained and Hierarchical Part-Level 3D Object Understanding. In Proceedings of the Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Long Beach, USA; 909–918. (2019).

Liu, S., Li, T., Chen, W. & Li, H. Soft rasterizer: A differentiable renderer for image-based 3d reasoning. In Proceedings of the In Proceedings of the IEEE/CVF International Conference on Computer Vision, Seoul, Korea; 7708–7717. (2019).

Loper, M., Black, M. & OpenDR, M. J. An approximate differentiable renderer. In Proceedings of the Computer Vision–ECCV 2014: 13th European Conference, Zurich, Switzerland, September 6–12 (2014).

Niemeyer, M., Mescheder, L., Oechsle, M. & Geiger, A. Differentiable Volumetric Rendering: Learning Implicit 3D Representations Without 3D Supervision In Proceedings of the Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Seattle, USA; 3501–3512. (2020).

Kato, H., Ushiku, Y. & Harada, T. Neural 3D Mesh Renderer. In Proceedings of the In Proceedings of the IEEE conference on computer vision and pattern recognition(CVPR), Salt Lake, USA; 3907–3916. (2018).

Liu, R. et al. Zero-1-to-3: Zero-Shot One Image to 3D Object. In Proceedings of the Proceedings of the IEEE/CVF International Conference on Computer Vision, Paris, France; 9298–9309. (2023).

Wang, Z. et al. Single Image to 3D Textured Mesh with Convolutional Reconstruction Model. In Proceedings of the In European Conference on Computer Vision (ECCV), Milan, Italy, (2024).

Tochilkin, D. et al. TripoSR: Fast 3D object reconstruction from a single image. arXiv https://doi.org/10.48550/arXiv.2403.02151 (2024).

Zhou, W., Shi, X., She, Y., Liu, K. & Zhang, Y. Semi-supervised Single-view 3D reconstruction via multi shape prior fusion strategy and Self-Attention. Computers Graphics. 126. https://doi.org/10.1016/j.cag.2024.104142 (2025).

Wang, Y., Lira, W., Wang, W., Mahdavi-Amiri, A. & Zhang, H. Slice3D: Multi-Slice Occlusion-Revealing Single View 3D Reconstruction. In Proceedings of the Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, Seattle, USA; 9881–9891. (2024).

Xu, J., Yang, B. & Chen, X. Unified Multi-Category 3D object generation from sketches. J. Computer-Aided Des. Comput. Graphics. 36, 1171–1180. https://doi.org/10.3724/SP.J.1089.2024.19914 (2024).

Li, C., Pan, H., Bousseau, A. & Mitra, N. J. Sketch2CAD: sequential CAD modeling by sketching in context. ACM Trans. Graphics (TOG). 39, 1–14. https://doi.org/10.1145/3414685.341780 (2020).

Olsen, L., Samavati, F. F., Sousa, M. C. & Jorge, J. A. Sketch-Based modeling: A survey. Computers Graphics. 33, 85–103. https://doi.org/10.1016/j.cag.2008.09.013 (2009).

Liu, Z. et al. KAN: Kolmogorov-Arnold networks. arXiv https://doi.org/10.48550/arXiv.2404.19756 (2024).

Kashefi, A. PointNet with KAN versus pointNet with MLP for 3D classification and segmentation of point sets. arXiv https://doi.org/10.48550/arXiv.2410.10084 (2024).

Mohan, K., Wang, H. & Zhu, X. KANs for computer vision: an experimental study. arXiv https://doi.org/10.48550/arXiv.2411.18224 (2024).

Eric, R., Ramakrishnan, D. & Gleyzer, S. SineKAN: Kolmogorov-Arnold networks using sinusoidal activation functions. Front. Artif. Intell. 7, 1462952 (2025).

Ali, K. PointNet with KAN versus pointNet with MLP for 3D classification and segmentation of point sets. arXiv https://doi.org/10.48550/arXiv.2410.10084 (2024).

Chen, Z. & Zhang, X. L. S. S. S. K. A. N. Efficient Kolmogorov-Arnold Networks based on Single-Parameterized Function. arXiv https://doi.org/10.48550/arXiv.2410.14951 (2024).

Chen, Z., Zhang, X. & LArctan-SKAN Simple and Efficient Single-Parameterized Kolmogorov–Arnold Networks using earnable Trigonometric Function. arXiv https://doi.org/10.48550/arXiv.2410.19360 (2024).

Williams, F. point-cloud-utils. Available online: https://github.com/fwilliams/point-cloud-utils.

Liu, H. et al. Fully Sparse 3D Occupancy Prediction. In Proceedings of the In European Conference on Computer Vision, Milan, Italy; 54–71. (2024).

Xu, R. Y., Wang, S., Yu, Y., Cai, B. D. & Chen, H. Visual Mamba: A Survey and New Outlooks. arXiv https://doi.org/10.48550/arXiv.2404.18861 (2024).

Zhu, L. L., Zhang, B., Wang, Q., Wenyu, X. & Wang, L. X. Vision Mamba: Efficient Visual Representation Learning with Bidirectional State Space Model. arXiv https://doi.org/10.48550/arXiv.2401.09417 (2024).

Anciukevičius, T. X. et al. P. RenderDiffusion: Image Diffusion for 3D Reconstruction, Inpainting and Generation. In Proceedings of the Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Vancouver, Canada, 06.18; 12608–12618. (2023).

Wang, C. P., Liu, H., Gu, Y. & Hu, J. Diffusion models for 3D generation: A survey. Comput. Visual Media. 11, 1–28. https://doi.org/10.26599/CVM.2025.9450452 (2025).

Suzuki, T. & Fed 3DGS: Scalable 3D Gaussian Splatting with Federated Learning. arXiv https://doi.org/10.48550/arXiv.2403.11460 (2024).

Wu, M. J., Li, J., Deng, X. & Wu, H. D. FedGAI: Federated style learning with cloud-edge collaboration for generative AI in fashion design. arXiv https://doi.org/10.48550/arXiv.2503.12389 (2025).

Yao, X. M. & Zhang, N. Enhancing wisdom manufacturing as industrial metaverse for industry and society 5.0. J. Intell. Manuf. 35, 235–255. https://doi.org/10.1007/s10845-022-02027-7 (2024).

Smith, H. The metaverse-industrial complex. Inform. Communication & Society. 28, 797–814. https://doi.org/10.1080/1369118X.2024.2423346 (2024).

Acknowledgements

Acknowledgments: We sincerely thank all the authors of the Shapenet-Sketch and Shapenet-Synthetic datasets for providing the free datasets.

Funding

This work was partially supported by the National Nature Science Foundation of China (No. 62462055, No. 62262056), and the Natural Science Youth Foundation of Qinghai Province(No.2023-ZJ-947Q).

Author information

Authors and Affiliations

Contributions

Conceptualization, Yingbin Wu and Dan Zhang; methodology, Yingbin Wu; software, Yingbin Wu; validation, Yingbin Wu, Fubo Wang and Peng Zhao; formal analysis, Yingbin Wu, Fubo Wang and Mingquan Zhou; investigation, Yingbin Wu and Peng Zhao; resources, Yingbin Wu, Fubo Wang and Peng Zhao; data curation, Fubo Wang and Peng Zhao; writing—original draft preparation, Yingbin Wu and Fubo Wang; writing—review and editing, Yingbin Wu, Fubo Wang, Peng Zhao, Mingquan Zhou, Shengling Geng, Dan Zhang; visualization, Yingbin Wu, Fubo Wang and Peng Zhao; supervision, Mingquan Zhou, Shengling Geng and Dan Zhang; project administration Shengling Geng and Dan Zhang; funding acquisition, Shengling Geng and Dan Zhang. All authors have read and agreed to the published version of the manuscript.

Corresponding author

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Wu, Y., Wang, F., Zhao, P. et al. High-fidelity 3D mesh generation from a single sketch using shape constraints. Sci Rep 16, 1127 (2026). https://doi.org/10.1038/s41598-025-30843-3

Received:

Accepted:

Published:

Version of record:

DOI: https://doi.org/10.1038/s41598-025-30843-3