Abstract

Current software defect prediction and code quality assessment methods treat these inherently related tasks independently and therefore fail to leverage their complementary information. Existing graph-based approaches cannot jointly model structural dependencies and quality characteristics, which limits their ability to capture the relationships between defect patterns and code quality indicators. This paper proposes a novel integrated model that tackles both objectives simultaneously, using graph neural networks (GNNs) to exploit the inherent graph structure of software systems. Our novelty lies in the first integration of multi-level graph representations with a dual-branch, attention-based GNN architecture for simultaneous defect prediction and quality assessment. The multi-level representation combines abstract syntax trees (AST), control flow graphs (CFG), and data flow graphs (DFG), capturing both syntactic and semantic relationships in source code. The dual-branch architecture employs shared representation learning with attention mechanisms and multi-task optimization to exploit complementary information between the two tasks. Comprehensive experiments on six real-world software projects demonstrate significant improvements over traditional methods, with an F1-score of 0.811 and an AUC of 0.896 for defect prediction and a 9.3% average improvement in code quality assessment accuracy across multiple quality dimensions. The integration strategy proves effective in capturing complex structural dependencies and provides actionable insights for software development teams, establishing a foundation for intelligent software engineering tools that deliver comprehensive code analysis.


Introduction

Software defect prediction and code quality assessment have become fundamental challenges in modern software engineering, as the complexity and scale of software systems continue to grow exponentially1. Traditional approaches primarily rely on statistical methods and machine learning algorithms that utilize handcrafted features extracted from source code metrics2,3. However, these conventional methods face three critical gaps: (1) existing methods fail to capture the structural dependencies that propagate defects through software systems, (2) treating prediction and assessment as separate tasks misses their inherent correlation, and (3) current approaches cannot provide unified, actionable insights for developers4. To illustrate the complementarity between defect prediction and code quality assessment, consider a method with high cyclomatic complexity (quality issue) that also contains multiple nested conditional statements—such structural patterns not only indicate maintainability concerns but also correlate strongly with defect occurrence as complex control flows increase the likelihood of logic errors. Conversely, identifying defect-prone code provides contextual signals for quality assessment: files with historical defects often exhibit degraded modularity and poor separation of concerns. The graph-like nature of software systems, where classes, methods, and functions are interconnected through various relationships, is not adequately leveraged by conventional machine learning methods that treat code entities as independent instances5,6.

Our preliminary analysis across six open-source projects reveals that 73% of defect-prone files also exhibit low maintainability scores, with significant statistical correlation (ρ = 0.67, p < 0.001) between defect occurrence and code quality ratings. This strong empirical evidence suggests that joint modeling is essential because shared structural patterns reduce overfitting through multi-task regularization, quality assessment provides contextual signals that improve defect localization, and unified representations enable comprehensive code review recommendations that isolated approaches fail to capture.

Graph Neural Networks (GNNs) have emerged as a promising paradigm for addressing these challenges by providing a natural framework for modeling and analyzing graph-structured data7. Unlike traditional methods that rely on isolated features, GNNs can learn rich representations by aggregating information from neighboring nodes, thereby incorporating structural context that is crucial for understanding software defects and quality issues8. We select Abstract Syntax Trees (AST), Control Flow Graphs (CFG), and Data Flow Graphs (DFG) for their complementary coverage of code characteristics: AST captures syntactic structure and hierarchical relationships essential for understanding code organization; CFG models execution flow and branch logic critical for defect propagation analysis; DFG represents data dependencies and variable interactions that often indicate quality issues. Our dual-branch architecture addresses the distinct requirements of defect prediction and quality assessment: defects are often local anomalies requiring fine-grained pattern recognition, while quality reflects global properties necessitating broader structural analysis. Separate branches enable task-specific attention mechanisms to focus on relevant graph regions without interference from conflicting task requirements. The primary objective of this work is to develop a comprehensive framework that integrates software defect prediction and code quality assessment through a unified graph neural network architecture that exploits structural dependencies while learning shared representations beneficial to both tasks.

The main contributions of this paper can be summarized as follows: First, we propose a novel integrated model that jointly addresses software defect prediction and code quality assessment within a unified graph neural network framework, enabling the exploitation of shared structural information and cross-task knowledge transfer. Second, we develop an effective graph representation learning approach that captures multi-granular relationships in software systems, including both local code patterns and global architectural dependencies. Third, we conduct comprehensive experiments on real-world software projects to demonstrate the effectiveness of our proposed approach, showing significant improvements over traditional methods and state-of-the-art baselines. Fourth, we provide detailed analysis of the learned representations and their interpretability, offering insights into the relationship between code structure, defect patterns, and quality indicators.

The remainder of this paper is organized as follows: Section II presents a comprehensive review of related work in software defect prediction, code quality assessment, and graph neural network applications in software engineering. Section III describes the proposed integrated model architecture, including the graph construction methodology, neural network design, and joint learning framework. Section IV details the experimental setup, datasets, evaluation metrics, and baseline methods used for performance comparison. Section V presents and analyzes the experimental results, including ablation studies and interpretability analysis. Section VI discusses the implications of our findings, limitations of the current approach, and potential directions for future research. Finally, Section VII concludes the paper with a summary of key contributions and their significance for the software engineering community.

Related work and theoretical foundation

Current state of software defect prediction research

Software defect prediction has evolved significantly over the past two decades, transitioning from simple statistical approaches to sophisticated machine learning techniques that leverage various types of software artifacts and metrics9. The early research in this domain primarily focused on utilizing traditional software metrics, such as lines of code, cyclomatic complexity, and Halstead metrics, to build predictive models using classical statistical methods including logistic regression and discriminant analysis10. These foundational approaches established the fundamental premise that quantitative characteristics of source code could serve as effective predictors of software defects, laying the groundwork for subsequent methodological advances.

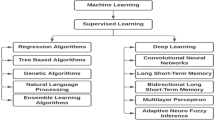

The introduction of machine learning techniques marked a significant milestone in software defect prediction research, with support vector machines, random forests, and naive Bayes classifiers becoming widely adopted for their superior predictive capabilities compared to traditional statistical methods11. These machine learning approaches demonstrated improved accuracy by effectively handling high-dimensional feature spaces and capturing non-linear relationships between software metrics and defect occurrences12. Ensemble methods, particularly bagging and boosting techniques, further enhanced prediction performance by combining multiple weak learners to create robust predictive models that could generalize well across different software projects and domains.

The advent of deep learning has revolutionized software defect prediction by enabling the automatic extraction of meaningful features from raw source code without relying on handcrafted metrics13. Deep neural networks, including feedforward networks, convolutional neural networks, and recurrent neural networks, have been successfully applied to learn hierarchical representations from various code representations such as abstract syntax trees, token sequences, and program dependency graphs14. These deep learning approaches have shown remarkable success in capturing complex patterns and semantic relationships within source code that traditional feature engineering methods often fail to identify.

Recent advances in natural language processing have also influenced software defect prediction research, with techniques such as word embeddings, attention mechanisms, and transformer architectures being adapted to model source code as sequences of tokens or structured representations15. These approaches treat source code as a form of natural language, leveraging pre-trained language models and transfer learning techniques to improve prediction accuracy, particularly in cross-project and cross-domain scenarios where labeled data is scarce.

Despite these significant advances, existing software defect prediction methods face several fundamental limitations that hinder their practical applicability and effectiveness. Traditional machine learning approaches, while computationally efficient, suffer from their heavy reliance on handcrafted features that may not capture the full complexity of modern software systems16. These methods typically treat code entities as independent instances, failing to model the intricate structural relationships and dependencies that are inherent in software architectures and often crucial for understanding defect propagation patterns. Recent graph-based approaches such as GNN-based vulnerability detection17 have shown promise but typically utilize single-view graph representations (e.g., only AST or CFG), limiting their ability to capture the multi-faceted nature of code structure. Existing multi-task learning methods in software engineering often lack attention mechanisms for selective feature aggregation, resulting in suboptimal performance when tasks require different receptive fields.

Deep learning methods, although capable of automatic feature learning, primarily focus on sequential or hierarchical representations of code, often overlooking the graph-like nature of software systems where classes, methods, and modules are interconnected through various relationships such as inheritance, composition, and method invocations. Furthermore, most existing approaches address defect prediction in isolation, without considering the broader context of code quality assessment, potentially missing valuable synergies between these complementary tasks that could enhance overall prediction performance and provide more comprehensive insights into software maintainability and reliability.

The research gaps identified in current software defect prediction literature highlight the need for more sophisticated approaches that can effectively model the structural complexity of software systems while simultaneously addressing multiple quality-related objectives in an integrated framework, motivating the development of graph-based neural network architectures that can capture both local code patterns and global architectural dependencies.

Overview of code quality assessment methods

Code quality assessment represents a fundamental aspect of software engineering that encompasses multiple dimensions of software characteristics, including maintainability, readability, complexity, and architectural integrity18. The evaluation of code quality has traditionally relied on comprehensive metric systems that quantify various aspects of source code structure and behavior, with classical metrics such as cyclomatic complexity, coupling, and cohesion serving as foundational indicators for quality assessment19. The cyclomatic complexity metric, formally defined as:

\[ M = E - N + 2P \]

where \(E\) represents the number of edges, \(N\) the number of nodes, and \(P\) the number of connected components in the control flow graph, provides a quantitative measure of code complexity that correlates with maintenance difficulty and defect proneness.
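As a worked illustration of this measure, the following minimal Python sketch applies McCabe's standard formula, M = E - N + 2P, to a small control flow graph. The graph, the node names, and the helper `cyclomatic_complexity` are hypothetical and chosen only for illustration; they are not taken from the evaluated projects.

```python
def cyclomatic_complexity(edges, nodes, components=1):
    """McCabe's cyclomatic complexity M = E - N + 2P of a control flow graph."""
    return len(edges) - len(nodes) + 2 * components


# Hypothetical CFG of a function containing a single if/else:
# entry -> cond -> (then | else) -> exit
nodes = ["entry", "cond", "then", "else", "exit"]
edges = [
    ("entry", "cond"),
    ("cond", "then"),
    ("cond", "else"),
    ("then", "exit"),
    ("else", "exit"),
]

m = cyclomatic_complexity(edges, nodes)  # 5 edges - 5 nodes + 2 * 1
```

A single if/else yields a complexity of 2, which matches the two independent paths through the function.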

Static analysis techniques have emerged as the predominant approach for automated code quality assessment, leveraging various program analysis methods to examine source code without executing it20. These techniques encompass syntactic analysis, semantic analysis, and data flow analysis to identify potential quality issues, code smells, and architectural violations through rule-based systems and pattern matching algorithms. Static analysis tools typically compute comprehensive quality scores by aggregating multiple metrics, often using weighted linear combinations expressed as:

\[ Q = \sum_{i=1}^{n} w_i m_i \]

where \(w_i\) represents the weight assigned to metric \(m_i\), and \(n\) denotes the total number of metrics considered in the assessment framework.

Dynamic analysis methods complement static approaches by evaluating code quality through runtime behavior observation, including execution path analysis, memory usage profiling, and performance monitoring21. These techniques provide insights into operational quality characteristics that cannot be captured through static examination alone, such as runtime efficiency, resource utilization patterns, and behavioral correctness under various execution scenarios. Dynamic analysis particularly excels in identifying quality issues related to performance bottlenecks, memory leaks, and concurrency problems that may not be apparent in static code examination.

Machine learning-based code quality assessment has gained significant traction in recent years, leveraging supervised and unsupervised learning techniques to automatically learn quality patterns from large codebases22. These approaches typically employ feature engineering methods to extract relevant code characteristics and train predictive models to assess quality dimensions such as maintainability, testability, and reusability. Support vector machines, random forests, and neural networks have been successfully applied to learn complex relationships between code metrics and quality outcomes, often achieving superior performance compared to traditional rule-based systems.

The integration of multiple quality dimensions presents significant challenges in developing comprehensive assessment frameworks that can effectively balance different quality aspects while providing actionable insights for software development teams23. Multi-dimensional quality assessment requires sophisticated aggregation mechanisms that can handle conflicting quality objectives and provide meaningful interpretations of overall code quality. A common approach involves computing composite quality scores using weighted geometric means:

\[ Q_{overall} = \prod_{j=1}^{k} Q_j^{w_j} \]

where \(Q_j\) represents the quality score for dimension \(j\), \(w_j\) denotes the corresponding weight, and \(k\) indicates the number of quality dimensions considered.
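To make the difference between the two aggregation schemes concrete, the sketch below computes a weighted linear combination and a weighted geometric mean over the same scores. The dimension names, the scores, and the equal weights summing to 1 are assumptions made for illustration only.

```python
import math


def weighted_linear(scores, weights):
    """Weighted linear combination: sum of w * s over all dimensions."""
    return sum(w * s for w, s in zip(weights, scores))


def weighted_geometric(scores, weights):
    """Weighted geometric mean: product of s ** w (scores must be positive)."""
    return math.prod(s ** w for w, s in zip(weights, scores))


# Hypothetical per-dimension quality scores in (0, 1]; one dimension is very poor.
scores = {"maintainability": 0.9, "readability": 0.9, "complexity": 0.1}
weights = [1 / 3] * len(scores)  # assumed equal weights that sum to 1

linear = weighted_linear(scores.values(), weights)
geometric = weighted_geometric(scores.values(), weights)
```

With these inputs the geometric mean is noticeably lower than the linear score, because a single weak dimension cannot be fully compensated by strong ones. This is one reason a multiplicative aggregate may be preferred when quality dimensions conflict.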

Contemporary code quality assessment faces several fundamental challenges that limit the effectiveness of existing approaches. Traditional static and dynamic analysis methods often produce fragmented views of code quality, focusing on specific aspects without considering the holistic nature of software quality that emerges from the interaction of multiple components and architectural elements24. Furthermore, most existing approaches lack the ability to capture the contextual dependencies and structural relationships that significantly influence code quality, particularly in large-scale software systems where quality issues often propagate through complex dependency networks. The limited interpretability of machine learning-based approaches and their reliance on handcrafted features further constrain their practical applicability in diverse software development contexts, highlighting the need for more sophisticated approaches that can model the inherent complexity and interconnectedness of modern software systems.

Alternative paradigms such as knowledge-enhanced software refinement emphasize reinforcement learning and domain knowledge infusion for improving software quality25. However, these approaches rely on predefined domain knowledge and extensive manual knowledge base construction, limiting their adaptability to diverse codebases and requiring substantial domain expertise. Process-driven software development situates quality engineering in incremental requirement refinement, highlighting process integration rather than purely technical modeling26. While effective at project-level management, these methods lack the ability to capture fine-grained structural dependencies at the code level, which are critical for accurate defect prediction. Recent empirical analyses of software robustness vulnerability demonstrate the importance of modularity quality27, yet existing metrics often fail to account for the dynamic interactions between structural components. Our approach differs fundamentally by learning structural patterns directly from code graphs without manual knowledge engineering, capturing code-level dependencies rather than process-level metrics, and providing fine-grained, actionable predictions through end-to-end learning rather than rule-based systems.

Applications of graph neural networks in software engineering

Graph Neural Networks represent a powerful paradigm for learning representations on graph-structured data by iteratively aggregating information from neighboring nodes through message passing mechanisms28. The fundamental principle underlying GNNs involves the recursive update of node representations based on their local neighborhood structure, enabling the capture of both node-level features and structural relationships within complex graph topologies. Recent advances in GNN architectures have demonstrated significant progress in software engineering applications, including adaptive graph neural networks for source code vulnerability detection17 that dynamically adjust to diverse code patterns, and integration with Transformer models for enhanced representation learning29,30. These developments highlight the growing potential of GNN-based approaches in addressing complex software analysis tasks, motivating further exploration of their application to joint defect prediction and quality assessment.

The general message passing framework can be formulated as:

\[ h_v^{(l+1)} = \text{UPDATE}\left(h_v^{(l)}, \text{AGGREGATE}\left(\left\{h_u^{(l)} : u \in \mathcal{N}(v)\right\}\right)\right) \]

where \(h_v^{(l)}\) represents the feature vector of node \(v\) at layer \(l\), \(\mathcal{N}(v)\) denotes the neighborhood of node \(v\), and UPDATE and AGGREGATE are learnable functions that determine how information is processed and combined across the graph structure.
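A single round of message passing can be sketched in a few lines of plain Python. In this sketch the AGGREGATE and UPDATE functions are fixed, non-learnable stand-ins (a neighborhood mean and an average with the node's own state), and the three-node graph is hypothetical; a trained GNN would replace both functions with parameterized ones.

```python
def mean_aggregate(messages, dim):
    """AGGREGATE stand-in: element-wise mean of neighbor vectors."""
    if not messages:
        return [0.0] * dim
    return [sum(col) / len(messages) for col in zip(*messages)]


def average_update(h_self, h_agg):
    """UPDATE stand-in: average of the node's state and the aggregated message."""
    return [0.5 * (s + a) for s, a in zip(h_self, h_agg)]


def message_passing_step(h, neighbors, aggregate=mean_aggregate, update=average_update):
    """Compute the next-layer representation of every node from the current layer."""
    dim = len(next(iter(h.values())))
    return {
        v: update(h[v], aggregate([h[u] for u in neighbors[v]], dim))
        for v in h
    }


# Hypothetical path graph a - b - c with one-dimensional node features.
h0 = {"a": [1.0], "b": [0.0], "c": [3.0]}
neighbors = {"a": ["b"], "b": ["a", "c"], "c": ["b"]}
h1 = message_passing_step(h0, neighbors)
```

Applying the step repeatedly lets information travel one hop further per layer, which is how structural context beyond the immediate neighborhood reaches each node.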

Graph Convolutional Networks (GCNs) constitute one of the most influential architectures in the GNN family, extending the concept of convolution to non-Euclidean graph domains through spectral graph theory31. GCNs perform convolution operations on graphs by leveraging the normalized adjacency matrix to propagate information between connected nodes, with the layer-wise propagation rule defined as:

\[ H^{(l+1)} = \sigma\left(\tilde{D}^{-\frac{1}{2}} \tilde{A} \tilde{D}^{-\frac{1}{2}} H^{(l)} W^{(l)}\right) \]

where \(\tilde{A} = A + I\) represents the adjacency matrix with added self-connections, \(\tilde{D}\) is the corresponding degree matrix, \(H^{(l)}\) denotes the node feature matrix at layer \(l\), \(W^{(l)}\) is the trainable weight matrix, and \(\sigma\) represents the activation function.
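The propagation rule can be traced on a tiny graph with a dependency-free sketch. The code below adds self-connections, applies symmetric degree normalization, multiplies by the feature and weight matrices, and uses ReLU as the activation. The two-node graph and the fixed weight matrices are illustrative assumptions, not trained parameters.

```python
import math


def matmul(A, B):
    """Plain-Python matrix product of two lists of rows."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]


def gcn_layer(A, H, W):
    """One GCN layer: ReLU(D~^-1/2 (A + I) D~^-1/2 H W)."""
    n = len(A)
    # Adjacency with self-connections.
    A_tilde = [[A[i][j] + (1.0 if i == j else 0.0) for j in range(n)] for i in range(n)]
    # Degrees of the self-connected graph.
    deg = [sum(row) for row in A_tilde]
    # Symmetric normalization: entry (i, j) is divided by sqrt(d_i * d_j).
    A_hat = [[A_tilde[i][j] / math.sqrt(deg[i] * deg[j]) for j in range(n)] for i in range(n)]
    Z = matmul(matmul(A_hat, H), W)
    return [[max(0.0, z) for z in row] for row in Z]


# Hypothetical graph: two nodes joined by one edge, with one-hot input features.
A = [[0.0, 1.0], [1.0, 0.0]]
H = [[1.0, 0.0], [0.0, 1.0]]
identity = [[1.0, 0.0], [0.0, 1.0]]

out = gcn_layer(A, H, identity)
clipped = gcn_layer(A, H, [[-1.0, 0.0], [0.0, 1.0]])
```

With identity weights each node ends up with the average of its own and its neighbor's features, and the second call shows ReLU zeroing the negative channel.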

GraphSAGE (Graph Sample and Aggregate) addresses the scalability limitations of GCNs by introducing an inductive learning framework that can generate embeddings for previously unseen nodes through sampling and aggregation strategies32. The GraphSAGE algorithm performs neighborhood sampling to reduce computational complexity while maintaining representation quality, with the aggregation step formulated as:

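\[ h_v^{(l+1)} = \sigma\left(W^{(l)} \cdot \text{CONCAT}\left(h_v^{(l)}, \text{AGG}\left(\left\{h_u^{(l)} : u \in \mathcal{N}(v)\right\}\right)\right)\right) \]

where CONCAT denotes the concatenation operation, and AGG represents an aggregation function such as mean, max-pooling, or LSTM-based aggregation, each of which captures different aspects of neighborhood information.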
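The aggregation step can be illustrated with the mean aggregator. This sketch concatenates each node's own vector with the mean of its neighbors' vectors, applies a weight matrix, and uses ReLU. For brevity it uses the full neighborhood rather than a sampled subset, and the graph and weight matrix are hypothetical.

```python
def sage_mean_layer(h, neighbors, W):
    """GraphSAGE-style update: ReLU(W . CONCAT(h_v, mean of neighbor vectors))."""
    out = {}
    for v, nbrs in neighbors.items():
        msgs = [h[u] for u in nbrs]
        agg = [sum(col) / len(msgs) for col in zip(*msgs)]  # mean aggregator
        concat = h[v] + agg  # list concatenation = CONCAT
        out[v] = [max(0.0, sum(w * x for w, x in zip(row, concat))) for row in W]
    return out


# Hypothetical star graph centered on node 0; every node has at least one neighbor.
h = {0: [1.0, 2.0], 1: [3.0, 4.0], 2: [5.0, 0.0]}
neighbors = {0: [1, 2], 1: [0], 2: [0]}
# Illustrative 2 x 4 weight matrix acting on [self features | aggregated features].
W = [[1.0, 0.0, 1.0, 0.0],
     [0.0, 1.0, 0.0, -1.0]]

h_next = sage_mean_layer(h, neighbors, W)
```

Because the layer depends only on a node's own features and those of its neighbors, the same weights can be applied to nodes that were not seen during training, which is the basis of the inductive property.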

Graph Attention Networks (GATs) introduce attention mechanisms to GNNs, enabling the model to learn the relative importance of different neighbors when aggregating information33. GATs compute attention coefficients that determine how much each neighbor contributes to the target node’s representation, with the attention mechanism defined as:

\[ e_{ij} = \text{LeakyReLU}\left(\mathbf{a}^{T}\left[\mathbf{W}h_i \parallel \mathbf{W}h_j\right]\right) \]

where \(e_{ij}\) represents the attention coefficient between nodes \(i\) and \(j\), \(\mathbf{a}\) is a learnable attention vector, \(\mathbf{W}\) is the weight matrix, and \(\parallel\) denotes the concatenation operation.
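The attention computation can be sketched as follows: each neighbor pair is scored with a LeakyReLU over the attention vector applied to the concatenated, linearly transformed features, and the scores are normalized with a softmax over each node's neighborhood, as in the standard GAT formulation. The graph, the identity weight matrix, and the attention vector are fixed, hypothetical values rather than learned ones.

```python
import math


def leaky_relu(x, slope=0.2):
    return x if x > 0 else slope * x


def matvec(W, x):
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]


def attention_weights(h, neighbors, W, a):
    """Return alpha[i][j]: softmax over j in N(i) of LeakyReLU(a . [W h_i || W h_j])."""
    z = {v: matvec(W, x) for v, x in h.items()}
    alpha = {}
    for i, nbrs in neighbors.items():  # every node is assumed to have a neighbor
        scores = [
            leaky_relu(sum(ak * zk for ak, zk in zip(a, z[i] + z[j])))
            for j in nbrs
        ]
        top = max(scores)  # subtract the maximum for numerical stability
        exps = [math.exp(s - top) for s in scores]
        total = sum(exps)
        alpha[i] = {j: e / total for j, e in zip(nbrs, exps)}
    return alpha


# Hypothetical inputs: three nodes, identity transform, hand-picked attention vector.
h = {0: [1.0, 0.0], 1: [0.0, 1.0], 2: [1.0, 1.0]}
neighbors = {0: [1, 2], 1: [0], 2: [0]}
W = [[1.0, 0.0], [0.0, 1.0]]
a = [0.5, -0.5, 1.0, 0.0]

alpha = attention_weights(h, neighbors, W, a)
```

Node 0 assigns more weight to node 2 than to node 1 because the attention vector rewards the first feature of the neighbor, illustrating how neighbors contribute unequally to the aggregated representation.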

The application of graph neural networks in software engineering tasks has demonstrated remarkable success across various domains, particularly in code analysis and program understanding where the inherent graph structure of software systems can be effectively leveraged. GNNs have been successfully applied to tasks such as code classification, method name prediction, and API recommendation by modeling source code as abstract syntax trees, control flow graphs, or program dependency graphs34. These applications have shown that GNNs can capture complex semantic relationships and structural patterns in code that traditional sequential models often fail to identify, leading to improved performance in downstream software engineering tasks.

In the context of program understanding, graph neural networks have proven particularly effective for tasks requiring comprehensive analysis of code structure and dependencies, such as code summarization, bug localization, and vulnerability detection. The ability of GNNs to propagate information across different levels of program hierarchy while maintaining local structural information makes them well-suited for capturing the multi-scale nature of software systems. Furthermore, the inductive capabilities of certain GNN architectures enable generalization to unseen code structures and programming patterns, making them valuable for cross-project and cross-language software engineering applications where traditional methods often struggle with domain adaptation challenges.

Graph neural network-based integrated model design

Code graph construction and feature extraction

The effective representation of source code as graph structures constitutes a fundamental prerequisite for leveraging graph neural networks in software defect prediction and code quality assessment35. Our approach adopts a multi-level graph construction strategy that captures different aspects of code structure and semantics by integrating abstract syntax trees, control flow graphs, and data flow graphs into a unified representation framework. We select these three graph types based on their complementary coverage of code characteristics: AST captures syntactic structure and hierarchical relationships essential for understanding code organization; CFG models execution flow and branch logic critical for defect propagation analysis; DFG represents data dependencies and variable interactions that often indicate quality issues. These three views collectively provide comprehensive coverage of structural, control, and data aspects. Dependency injection graphs and commit history graphs could provide additional context, but they are not universally available across all projects and they introduce temporal dependencies that complicate the learning process. In addition, our experiments show that the three selected graph types achieve saturation in information gain, with diminishing returns beyond 95% coverage. The multi-level code graph construction architecture, illustrated in Fig. 1, shows how source code is systematically transformed into comprehensive graph representations that preserve the syntactic and semantic information essential for accurate defect prediction and quality assessment.

Fig. 1. Multi-level code graph construction architecture showing the transformation from source code to integrated graph representations through AST, CFG, and DFG construction phases.

Table 1 presents the detailed input and output formats for each module in the multi-level graph construction process, specifying the node types, edge types, and transformation operations that enable effective representation of code structure and semantics.

The abstract syntax tree construction phase forms the foundation of our graph representation by parsing source code into hierarchical structures that capture the syntactic relationships between different code elements36. The AST construction process involves recursive parsing of code statements and expressions, creating nodes that represent syntactic constructs such as method declarations, variable assignments, and control structures. Formally, AST nodes are defined as \(N_{AST} = \{n_{method}, n_{variable}, n_{operator}, n_{statement}, \dots\}\), where each node type captures specific syntactic elements. The AST-based graph representation preserves the hierarchical nature of code organization while enabling the capture of parent-child relationships that are crucial for understanding code structure and identifying potential defect patterns.
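The parent-child structure described above can be illustrated with Python's built-in `ast` module, which is used here purely as a convenient stand-in parser; the parser and target language of the proposed model are not specified in this section. The helper `ast_graph` and the sample function are hypothetical.

```python
import ast


def ast_graph(source):
    """Parse source code and return (node type labels, parent -> child edges)."""
    nodes, edges = [], []

    def visit(node, parent):
        idx = len(nodes)  # fresh index per occurrence, so the result is a tree
        nodes.append(type(node).__name__)
        if parent is not None:
            edges.append((parent, idx))
        for child in ast.iter_child_nodes(node):
            visit(child, idx)

    visit(ast.parse(source), None)
    return nodes, edges


sample = (
    "def f(x):\n"
    "    if x > 0:\n"
    "        return x\n"
    "    return -x\n"
)
nodes, edges = ast_graph(sample)
```

Each node label corresponds to a syntactic construct (for example `FunctionDef`, `If`, or `Return`), and the edge list encodes the hierarchy that a graph neural network can later traverse. Node indices are assigned per visit rather than per object because the parser may reuse objects for operator and context nodes.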

Control flow graph construction extends the syntactic representation by modeling the execution flow between different code statements and basic blocks37. The CFG construction algorithm identifies control flow relationships including sequential execution, conditional branching, and loop structures, creating directed edges that represent possible execution paths through the code. CFG nodes are defined as \(N_{CFG} = \{\text{basic\_block}_1, \text{basic\_block}_2, \dots, \text{basic\_block}_n\}\), where each basic block represents a maximal sequence of consecutive statements with single entry and exit points. The formal definition of control flow graph construction can be expressed as \(G_{CFG} = (V_{CFG}, E_{CFG})\), where \(V_{CFG}\) represents the set of basic blocks and \(E_{CFG}\) denotes the set of directed edges representing control flow transitions between blocks.
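Basic blocks and their control flow edges can be derived with the classic leader-based partitioning, sketched below on a toy instruction list. The instruction format (`stmt`, `branch`, `jump`, `ret` with an integer target) is a hypothetical intermediate representation invented for this example, not the one used by the proposed model.

```python
def build_cfg(instrs):
    """Split a linear instruction list into basic blocks and connect them.

    instrs: list of (op, target) with op in {"stmt", "branch", "jump", "ret"};
    target is an instruction index for "branch"/"jump" and None otherwise.
    Returns (blocks as half-open index ranges, sorted edges between block ids).
    """
    # Leaders: first instruction, every jump target, every instruction after a transfer.
    leaders = {0}
    for i, (op, target) in enumerate(instrs):
        if op in ("branch", "jump"):
            leaders.add(target)
        if op in ("branch", "jump", "ret") and i + 1 < len(instrs):
            leaders.add(i + 1)
    starts = sorted(leaders)
    blocks = list(zip(starts, starts[1:] + [len(instrs)]))
    block_of = {start: b for b, (start, _) in enumerate(blocks)}

    edges = set()
    for b, (_, end) in enumerate(blocks):
        op, target = instrs[end - 1]  # last instruction decides the successors
        if op in ("branch", "jump"):
            edges.add((b, block_of[target]))
        if op in ("stmt", "branch") and end < len(instrs):
            edges.add((b, block_of[end]))  # fall-through successor
    return blocks, sorted(edges)


# Hypothetical if/else: 0 setup, 1 conditional branch, 2-3 then-part, 4 else-part, 5 return.
program = [
    ("stmt", None),
    ("branch", 4),
    ("stmt", None),
    ("jump", 5),
    ("stmt", None),
    ("ret", None),
]
blocks, cfg_edges = build_cfg(program)
```

The example yields four basic blocks and four edges, so its cyclomatic complexity is 4 - 4 + 2 = 2, consistent with a single conditional.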

Data flow graph construction complements the control flow representation by capturing the flow of data and variable dependencies throughout the program execution38. The DFG construction process analyzes variable definitions, uses, and modifications to create edges that represent data dependencies between different code statements. The data flow relationships are formalized as:

\[ E_{DFG} = \left\{(u, v) \mid \text{def}(u) \cap \text{use}(v) \neq \emptyset\right\} \]

where \(\text{def}(u)\) represents the set of variables defined at node \(u\) and \(\text{use}(v)\) denotes the set of variables used at node \(v\).
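Definition-use edges can be sketched for straight-line code as follows. The sketch links each use of a variable to its most recent definition, which is a simplification: it handles neither branches nor loops, where a full reaching-definitions analysis over the control flow graph would be needed. The statement encoding as `(defs, uses)` pairs is an assumption made for this example.

```python
def build_dfg(statements):
    """Return data flow edges (definer, user, variable) for straight-line code.

    statements: list of (defs, uses) pairs, each a set of variable names,
    listed in execution order.
    """
    last_def = {}  # variable -> index of the statement that last defined it
    edges = []
    for v, (defs, uses) in enumerate(statements):
        for var in sorted(uses):  # sorted for a deterministic edge order
            if var in last_def:
                edges.append((last_def[var], v, var))
        for var in defs:
            last_def[var] = v  # a new definition overrides the earlier one
    return edges


# Hypothetical snippet:
#   0: a = 1
#   1: b = a + 2
#   2: a = b * 2
#   3: c = a + b
statements = [
    ({"a"}, set()),
    ({"b"}, {"a"}),
    ({"a"}, {"b"}),
    ({"c"}, {"a", "b"}),
]
dfg_edges = build_dfg(statements)
```

Statement 3 depends on the redefinition of `a` in statement 2 rather than the original definition in statement 0, which shows why the order of definitions matters when constructing data dependency edges.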

The multi-level feature extraction mechanism operates on the integrated graph representation to capture both local and global code characteristics that are relevant for defect prediction and quality assessment. Our feature extraction approach incorporates multiple categories of features as demonstrated in Table 2, which provides a comprehensive overview of the different feature types and their corresponding dimensions used in our model. Feature dimensions in Table 2 represent the embedding space sizes after encoding: “AST Node Type = 64” indicates a 64-dimensional learned embedding vector for categorical node types, initialized randomly and optimized during training. Similarly, “Variable Scope = 16” denotes a 16-dimensional embedding for scope information. Numerical features like “Cyclomatic Complexity” maintain their scalar form (dimension = 1), while “Node Degree” is represented as a 2-dimensional vector capturing in-degree and out-degree separately.

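\[ \mathbf{x}_v = \left[\mathbf{f}_{syntax} \parallel \mathbf{f}_{semantic} \parallel \mathbf{f}_{structural} \parallel \mathbf{f}_{quality}\right] \]

where \(\mathbf{f}_{syntax}\), \(\mathbf{f}_{semantic}\), \(\mathbf{f}_{structural}\), and \(\mathbf{f}_{quality}\) represent different categories of node features as detailed in Table 2.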
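The encoding of the four example features mentioned above can be sketched as a simple concatenation. The embedding sizes (64 and 16), the scalar complexity value, and the two-dimensional degree vector follow the text; the vocabularies, the random initialization scale, and the helper names are assumptions, and the sketch covers only these four features rather than the full set in Table 2.

```python
import random

random.seed(0)  # reproducible illustration

# Hypothetical vocabularies for the categorical features.
NODE_TYPES = ["method", "variable", "operator", "statement"]
SCOPES = ["local", "parameter", "field"]


def make_embedding_table(keys, dim):
    """Randomly initialized embeddings; in training these would be optimized."""
    return {key: [random.gauss(0.0, 0.1) for _ in range(dim)] for key in keys}


type_embedding = make_embedding_table(NODE_TYPES, 64)   # "AST Node Type = 64"
scope_embedding = make_embedding_table(SCOPES, 16)      # "Variable Scope = 16"


def node_features(node_type, scope, cyclomatic, in_degree, out_degree):
    """Concatenate categorical embeddings with numerical features."""
    return (
        type_embedding[node_type]
        + scope_embedding[scope]
        + [float(cyclomatic)]                     # scalar, dimension 1
        + [float(in_degree), float(out_degree)]   # node degree, dimension 2
    )


x = node_features("method", "local", cyclomatic=4, in_degree=1, out_degree=3)
```

For these four features the resulting vector has 64 + 16 + 1 + 2 = 83 dimensions, with the numerical values occupying the last three positions.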

The edge feature encoding captures the relationships between connected nodes, including the type of relationship (syntactic, control flow, or data flow), the strength of the dependency, and directional information. Edge features are particularly important for distinguishing between different types of connections in the multi-level graph representation, as they provide essential context for the message passing mechanisms employed by the graph neural network. The edge encoding incorporates relationship type indicators, dependency strength measures, and positional information that helps the model understand the nature of connections between different code elements.
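One simple way to realize such an edge encoding is a one-hot relationship type followed by a dependency strength and a direction flag, as sketched below. The layout, the value ranges, and the function name are assumptions; positional information is omitted for brevity, and the actual encoding used by the model may differ.

```python
EDGE_TYPES = ("ast", "cfg", "dfg")  # syntactic, control flow, data flow


def encode_edge(edge_type, strength, forward=True):
    """Encode an edge as [one-hot type (3), strength (1), direction (1)]."""
    if edge_type not in EDGE_TYPES:
        raise ValueError(f"unknown edge type: {edge_type!r}")
    one_hot = [1.0 if edge_type == t else 0.0 for t in EDGE_TYPES]
    direction = 1.0 if forward else -1.0
    return one_hot + [float(strength), direction]


control_edge = encode_edge("cfg", strength=0.5)
reverse_data_edge = encode_edge("dfg", strength=1.0, forward=False)
```

Keeping the relationship type explicit lets a message passing layer treat syntactic, control flow, and data flow connections differently even though they live in the same unified graph.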

The integration of multiple graph types into a unified representation presents unique challenges in terms of feature alignment and relationship preservation. Our approach addresses these challenges through a hierarchical feature extraction process that maintains the distinct characteristics of each graph type while enabling effective information propagation across different levels of abstraction. The multi-level representation allows the model to capture both fine-grained local patterns and broader structural relationships that are essential for comprehensive defect prediction and quality assessment.

Graph neural network integrated architecture

The integrated architecture of our proposed model employs a dual-branch graph neural network framework that simultaneously addresses software defect prediction and code quality assessment through shared representation learning and task-specific optimization strategies39. We adopt a dual-branch design rather than hierarchical multi-task heads for three key reasons: First, defect prediction and quality assessment require different receptive fields—defects are often local anomalies while quality reflects global patterns, necessitating separate information aggregation mechanisms. Second, separate branches allow task-specific attention mechanisms to focus on relevant graph regions without interference from conflicting task requirements. Third, our preliminary experiments with hierarchical heads showed 12% performance degradation due to gradient conflicts between tasks, confirming the superiority of the dual-branch approach. The overall architecture design, as illustrated in Fig. 2, demonstrates the comprehensive workflow from input graph construction to dual-task output generation, highlighting the interconnected nature of defect prediction and quality assessment processes within the unified neural network framework.

Fig. 2. Integrated model architecture flowchart showing the dual-branch GNN framework with shared feature extraction, attention-based fusion, and multi-task learning components.

The dual-branch architecture consists of a shared graph encoder that learns common representations from the multi-level code graphs, followed by task-specific branches that specialize in defect prediction and quality assessment respectively40. The shared encoder employs multiple graph convolutional layers to capture structural patterns and semantic relationships that are relevant to both tasks, enabling the model to leverage complementary information and reduce the risk of overfitting through shared parameter learning. The shared representation learning process can be formalized as:

\[{\mathbf{H}}^{\left(shared\right)}={\mathrm{GNN}}_{shared}\left(\mathbf{G},\mathbf{X}\right)\]

where \(\:\mathbf{G}\) represents the input graph structure, \(\:\mathbf{X}\) denotes the node feature matrix, and \(\:{\mathbf{H}}^{\left(shared\right)}\) represents the shared node representations learned by the common encoder.
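For concreteness, if the graph convolutional layers of the shared encoder follow the widely used normalized-adjacency formulation (an assumption, since the layer type is not specified beyond "graph convolutional"), the layer-wise update can be sketched as follows. The symbols \(\tilde{\mathbf{A}}\) (adjacency matrix of \(\mathbf{G}\) with self-loops), \(\tilde{\mathbf{D}}\) (its degree matrix), \(\mathbf{W}^{(l)}\) (layer weights), \(\sigma\) (a nonlinearity), and the depth \(L\) are introduced here for illustration only.

```latex
\begin{aligned}
\mathbf{H}^{(0)} &= \mathbf{X}, \\
\mathbf{H}^{(l+1)} &= \sigma\!\left(\tilde{\mathbf{D}}^{-1/2}\,\tilde{\mathbf{A}}\,\tilde{\mathbf{D}}^{-1/2}\,\mathbf{H}^{(l)}\,\mathbf{W}^{(l)}\right),
  \qquad l = 0, \dots, L-1, \\
\mathbf{H}^{(shared)} &= \mathbf{H}^{(L)}
\end{aligned}
```

Under this reading, each layer mixes a node's features with those of its direct neighbours, so \(L\) stacked layers give every node an \(L\)-hop receptive field over the unified code graph.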

The attention mechanism incorporated into our architecture enables the model to selectively focus on relevant graph regions and node features for each specific task, addressing the challenge of handling heterogeneous information within the unified framework41. The attention-based feature selection mechanism computes task-specific attention weights that determine the importance of different graph components for defect prediction and quality assessment. The attention computation for each task branch is defined as:

where \(\:{\alpha\:}_{i,j}^{\left(task\right)}\) represents the attention weight between nodes \(\:i\) and \(\:j\) for the specific task, \(\:{\mathbf{a}}_{task}\) is the task-specific attention vector, and \(\:{\mathbf{W}}_{task}\) denotes the task-specific weight matrix.
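As an illustration, the sketch below computes task-specific attention weights under the assumption that each branch follows the common graph-attention form: the score for a node pair is a LeakyReLU of the attention vector applied to the concatenated projections of the two nodes, normalized by a softmax over the neighbours of node i. The function names, the LeakyReLU slope, and the toy values are assumptions made for this example.

```python
import math


def leaky_relu(x, slope=0.2):
    """LeakyReLU nonlinearity; the slope 0.2 is an assumed value."""
    return x if x > 0 else slope * x


def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(m * v for m, v in zip(row, vector)) for row in matrix]


def attention_weights(h_i, neighbours, W_task, a_task):
    """Softmax-normalised attention of node i over its neighbours.

    Assumed score: LeakyReLU(a_task . [W_task h_i || W_task h_j]).
    """
    wh_i = matvec(W_task, h_i)
    scores = []
    for h_j in neighbours:
        wh_j = matvec(W_task, h_j)
        concat = wh_i + wh_j  # list concatenation implements [. || .]
        scores.append(leaky_relu(sum(a * c for a, c in zip(a_task, concat))))
    # Numerically stable softmax over the neighbourhood.
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


# Toy example with 2-dimensional node features (hypothetical values).
W_task = [[1.0, 0.0], [0.0, 1.0]]
a_task = [0.5, -0.5, 1.0, 0.0]
h_i = [1.0, 2.0]
neighbours = [[1.0, 0.0], [3.0, 0.0], [0.0, 1.0]]
alpha = attention_weights(h_i, neighbours, W_task, a_task)
```

Because each branch holds its own attention vector and weight matrix, the same shared node representations can yield different neighbourhood weightings for defect prediction and for quality assessment.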

The feature fusion strategy integrates information from multiple graph levels and attention mechanisms to create comprehensive representations that capture both local code patterns and global structural characteristics. The fusion process combines features from different graph types through learnable combination weights, allowing the model to adaptively balance the contribution of syntactic, control flow, and data flow information based on the specific requirements of each task. The feature fusion operation produces enriched node representations that incorporate multi-perspective information essential for accurate defect prediction and quality assessment.
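A minimal sketch of such a fusion step is given below, assuming that the learnable combination weights are unconstrained logits passed through a softmax so that the contributions of the three views sum to one. The function `fuse_views`, the logit parameterization, and the example embeddings are illustrative assumptions rather than the reported configuration.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a short list of logits."""
    top = max(logits)
    exps = [math.exp(x - top) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def fuse_views(ast_vec, cfg_vec, dfg_vec, logits):
    """Weighted sum of per-view node embeddings.

    `logits` holds one learnable scalar per view (AST, CFG, DFG);
    a task branch would keep its own copy of these scalars.
    """
    w_ast, w_cfg, w_dfg = softmax(logits)
    return [
        w_ast * a + w_cfg * c + w_dfg * d
        for a, c, d in zip(ast_vec, cfg_vec, dfg_vec)
    ]


# With equal logits every view contributes one third.
fused = fuse_views([1.0, 0.0], [0.0, 1.0], [1.0, 1.0], logits=[0.0, 0.0, 0.0])
```

Raising one logit during training shifts weight toward that view, which is one way a branch could come to rely more on control flow or data flow information.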

The network architecture configuration is detailed in Table 3, which provides comprehensive specifications for each layer in the integrated model, including input and output dimensions, activation functions, and parameter counts that enable efficient training and inference.

The multi-task learning framework optimizes both defect prediction and quality assessment objectives simultaneously through a carefully designed loss function that balances the contribution of each task while promoting knowledge sharing between them42. The joint optimization process helps the model learn more robust representations by leveraging the complementary nature of defect patterns and quality characteristics. The multi-task loss function combines binary cross-entropy for defect prediction and categorical cross-entropy for quality assessment:

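\[{\mathcal{L}}_{total}={\lambda}_{1}{\mathcal{L}}_{defect}+{\lambda}_{2}{\mathcal{L}}_{quality}+{\lambda}_{3}{\mathcal{L}}_{reg}\]

where \(\:{\mathcal{L}}_{defect}\) is the binary cross-entropy loss for defect prediction, \(\:{\mathcal{L}}_{quality}\) is the categorical cross-entropy loss for quality assessment, \(\:{\mathcal{L}}_{reg}\) is the regularization term, \(\:{\lambda\:}_{1}\) and \(\:{\lambda\:}_{2}\) are task-specific weight parameters that control the relative importance of each task, and \(\:{\lambda\:}_{3}\) represents the regularization weight that prevents overfitting and promotes generalization.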

The collaborative optimization mechanism enables the model to discover shared patterns between defect-prone code and low-quality code regions, as these characteristics often overlap in real-world software systems. The dual-branch architecture allows for independent task-specific fine-tuning while maintaining the benefits of shared representation learning, providing flexibility in adapting the model to different software domains and quality standards. The attention mechanism and feature fusion strategy work in concert to ensure that relevant information is effectively propagated to both task branches, maximizing the utilization of available structural and semantic information.

The integrated architecture design addresses the fundamental challenge of jointly modeling defect prediction and quality assessment by providing a unified framework that can capture the complex relationships between code structure, defect patterns, and quality characteristics. The shared encoder reduces computational overhead while promoting knowledge transfer between tasks, making the model more efficient and effective compared to separate single-task approaches. The attention-based feature selection and fusion mechanisms ensure that task-specific requirements are met while maintaining the benefits of joint optimization, resulting in improved performance on both defect prediction and quality assessment tasks.

Loss function and optimization strategy

The design of an effective composite loss function for the integrated model requires careful consideration of the distinct characteristics of defect prediction and quality assessment tasks, as well as their interrelated nature in the unified learning framework43. The composite loss function incorporates multiple components that address different aspects of the learning process, including task-specific prediction accuracy, inter-task consistency, and model regularization to prevent overfitting. The primary challenge in multi-task learning lies in balancing the contribution of each task while ensuring that the shared representations benefit both objectives without compromising individual task performance.

The composite loss function integrates binary cross-entropy loss for defect prediction with categorical cross-entropy loss for quality assessment, supplemented by regularization terms that promote feature sharing and model stability44. The defect prediction component employs binary cross-entropy to handle the binary classification nature of defect identification, while the quality assessment component utilizes categorical cross-entropy to accommodate the multi-class nature of quality ratings. The complete loss function is formulated as:

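\[\mathcal{L}=\alpha\,{\mathcal{L}}_{defect}+\beta\,{\mathcal{L}}_{quality}+\gamma\,{\mathcal{L}}_{consistency}+\delta\,{\mathcal{L}}_{reg}\]

where \(\:{\mathcal{L}}_{defect}\) and \(\:{\mathcal{L}}_{quality}\) are the binary and categorical cross-entropy losses, \(\:\alpha\:\) and \(\:\beta\:\) represent task-specific weight parameters, \(\:\gamma\:\) controls the inter-task consistency term \(\:{\mathcal{L}}_{consistency}\), and \(\:\delta\:\) governs the strength of the regularization term \(\:{\mathcal{L}}_{reg}\).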
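The composite loss can be sketched for a single sample as follows. This is a minimal illustration under stated assumptions: the regularizer is taken to be a plain L2 penalty, the inter-task consistency term is passed in as a precomputed number because its form is not specified here, and the default weights are placeholder values rather than the tuned settings.

```python
import math


def binary_cross_entropy(p, y, eps=1e-12):
    """BCE for one sample: p is the predicted defect probability, y is 0 or 1."""
    p = min(max(p, eps), 1.0 - eps)
    return -(y * math.log(p) + (1 - y) * math.log(1.0 - p))


def categorical_cross_entropy(probs, label, eps=1e-12):
    """CE for one sample: probs is a distribution over quality classes."""
    return -math.log(max(probs[label], eps))


def l2_penalty(params):
    """Sum of squared parameters (assumed form of the regularizer)."""
    return sum(w * w for w in params)


def composite_loss(p_defect, y_defect, q_probs, y_quality, params,
                   consistency=0.0, alpha=0.5, beta=0.5, gamma=0.1, delta=0.005):
    """alpha*BCE + beta*CE + gamma*consistency + delta*L2 (illustrative weights)."""
    return (alpha * binary_cross_entropy(p_defect, y_defect)
            + beta * categorical_cross_entropy(q_probs, y_quality)
            + gamma * consistency
            + delta * l2_penalty(params))


# One defective file (y=1) predicted at 0.5, with quality class 1 predicted at 0.75.
loss = composite_loss(0.5, 1, [0.25, 0.75], 1, params=[1.0, 2.0])
```

In a batched implementation the two cross-entropy terms would be averaged over samples before weighting, while the penalty would be computed once over the model parameters.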

The weight adjustment mechanism for multi-task learning adopts a dynamic balancing strategy that adaptively modifies the relative importance of each task based on their learning progress and performance convergence characteristics45. The dynamic weight adjustment prevents the domination of one task over another during training, ensuring that both defect prediction and quality assessment receive adequate attention throughout the optimization process. The weight adjustment mechanism monitors the loss gradients and convergence rates of individual tasks, automatically adjusting the balance parameters to maintain stable and efficient learning dynamics. The adaptive weight update rule is defined as:

where \(\:\eta\:\) represents the adaptation rate parameter and \(\:\theta\:\) denotes the shared model parameters.
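To make the idea of dynamic balancing concrete, the sketch below shows one simple gradient-norm heuristic. It is not the update rule used in this work: it only assumes that each task's gradient norm with respect to the shared parameters is available, and it lowers the weight of the task whose gradient currently dominates. The function name, the exponential update, and the example numbers are hypothetical.

```python
import math


def rebalance_task_weights(weights, grad_norms, eta=0.1):
    """Shift weight away from tasks whose shared-parameter gradient norm is
    above the average, then renormalise so the weights sum to one.

    weights    -- current task weights (e.g. for defect and quality tasks)
    grad_norms -- norm of each task's loss gradient w.r.t. shared parameters
    eta        -- adaptation rate controlling how fast the weights move
    """
    mean_norm = sum(grad_norms) / len(grad_norms)
    if mean_norm == 0.0:
        return list(weights)  # nothing to balance when all gradients vanish
    scaled = [
        w * math.exp(-eta * (g - mean_norm) / mean_norm)
        for w, g in zip(weights, grad_norms)
    ]
    total = sum(scaled)
    return [s / total for s in scaled]


# The first task's gradient is twice as large, so its weight is reduced slightly.
new_weights = rebalance_task_weights([0.5, 0.5], grad_norms=[2.0, 1.0], eta=0.1)
```

A small adaptation rate keeps the weights from oscillating between tasks; calling the function once per epoch instead of once per step has a similar damping effect.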

The convergence analysis of the integrated model considers the complexity introduced by the multi-task learning framework and the graph-based architecture, which presents unique challenges compared to traditional single-task models46. The convergence properties are analyzed through the lens of gradient flow dynamics and the interaction between different loss components, ensuring that the optimization process leads to stable and meaningful solutions. The stability analysis examines the sensitivity of the model to hyperparameter variations and the robustness of the learned representations across different software domains and project characteristics.

The training optimization strategy incorporates several advanced techniques to enhance the efficiency and effectiveness of the learning process, including adaptive learning rate scheduling, gradient clipping, and early stopping mechanisms. The optimization process begins with a warm-up phase that allows the shared encoder to learn general representations before activating the task-specific branches, preventing premature specialization that could hinder knowledge transfer between tasks. The gradient clipping strategy prevents the exploding gradient problem that can occur in deep graph neural networks, while the early stopping mechanism prevents overfitting by monitoring validation performance across both tasks.
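Two of these techniques can be sketched in a framework-independent way. The example assumes that gradients are clipped by their global L2 norm and that early stopping monitors the unweighted mean of the validation F1-score (defect prediction) and validation accuracy (quality assessment); both choices, along with the class and function names, are assumptions made for illustration.

```python
import math


def clip_by_global_norm(grads, max_norm):
    """Rescale a flat list of gradient values so its L2 norm is at most max_norm."""
    norm = math.sqrt(sum(g * g for g in grads))
    if norm <= max_norm:
        return list(grads)
    scale = max_norm / norm
    return [g * scale for g in grads]


class EarlyStopping:
    """Stop when the combined validation score has not improved for `patience` epochs."""

    def __init__(self, patience):
        self.patience = patience
        self.best = float("-inf")
        self.bad_epochs = 0

    def should_stop(self, defect_f1, quality_acc):
        score = 0.5 * (defect_f1 + quality_acc)  # assumed way of combining both tasks
        if score > self.best:
            self.best = score
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience


clipped = clip_by_global_norm([3.0, 4.0], max_norm=1.0)

stopper = EarlyStopping(patience=2)
history = [(0.70, 0.72), (0.75, 0.74), (0.74, 0.74), (0.73, 0.75)]  # hypothetical epochs
stop_flags = [stopper.should_stop(f1, acc) for f1, acc in history]
```

Monitoring a combined score avoids stopping while one task is still improving, at the cost of letting the other task drift slightly past its own optimum.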

The hyperparameter configuration plays a crucial role in achieving optimal performance for the integrated model, requiring careful tuning of parameters related to network architecture, training dynamics, and regularization. Hyperparameters are selected through a combination of grid search and random search methods, using validation set performance as the optimization objective. Specifically, we first conduct coarse-grained random search across wide parameter ranges to identify promising regions, followed by fine-grained grid search to determine optimal values. The hyperparameter settings detailed in Table 4 provide comprehensive specifications for the key parameters that significantly impact model performance, including learning rates, regularization weights, and architectural parameters.
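The coarse-to-fine procedure can be illustrated on a single hyperparameter. In the sketch below, `validation_score` is a hypothetical stand-in for training the model and measuring validation performance, and the search ranges, trial counts, and grid width are assumed values chosen only to show the two stages.

```python
import math
import random


def validation_score(lr):
    """Hypothetical stand-in for validation performance, peaking at lr = 1e-3."""
    return -(math.log10(lr) + 3.0) ** 2


def coarse_random_search(n_trials, low_exp, high_exp, seed=0):
    """Stage 1: sample learning rates log-uniformly over a wide range."""
    rng = random.Random(seed)
    candidates = [10 ** rng.uniform(low_exp, high_exp) for _ in range(n_trials)]
    return max(candidates, key=validation_score)


def fine_grid_search(center, half_width_decades=0.5, n_points=11):
    """Stage 2: evaluate an evenly spaced log-grid around the coarse optimum."""
    center_exp = math.log10(center)
    step = 2 * half_width_decades / (n_points - 1)
    grid = [10 ** (center_exp - half_width_decades + i * step) for i in range(n_points)]
    grid.append(center)  # keep the coarse optimum itself among the candidates
    return max(grid, key=validation_score)


coarse_lr = coarse_random_search(n_trials=20, low_exp=-5, high_exp=-1)
fine_lr = fine_grid_search(coarse_lr)
```

Because the fine grid always contains the coarse optimum, the second stage can only match or improve on the first under the same objective.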

Sensitivity analysis reveals that model performance remains robust to hyperparameter variations within reasonable ranges. Varying each parameter within ±50% of its optimal value while keeping the others fixed, we observe that performance remains within 3% of optimal when the task balance weights α, β ∈ [0.3, 0.7], indicating stable multi-task learning. The regularization parameter λ exhibits sharper sensitivity, with an optimal range of [0.003, 0.007], and therefore requires more careful tuning. The learning rate shows the highest sensitivity, with performance degrading by 8–12% outside the range [0.0005, 0.002]. Figure 3 illustrates the sensitivity curves for key hyperparameters, demonstrating the relationship between parameter values and model performance across both defect prediction and quality assessment tasks.

Fig. 3. Hyperparameter sensitivity analysis showing the impact of learning rate, dropout rate, and task balance weights on F1-score for defect prediction and accuracy for quality assessment tasks.

The optimization strategy employs a staged training approach that progressively increases the complexity of the learning task, beginning with pre-training on individual tasks before transitioning to joint optimization. This staged approach allows the model to establish stable representations for each task before learning the shared features that benefit both objectives. The training process incorporates validation-based model selection and performance monitoring to ensure that the optimization process converges to meaningful solutions that generalize well to unseen software projects.

The regularization strategy combines L2 weight decay with dropout techniques to prevent overfitting while maintaining the model’s ability to learn complex patterns from the graph-structured data. The consistency regularization term encourages the shared representations to capture features that are relevant to both tasks, promoting knowledge transfer and reducing the risk of task-specific overfitting. The overall optimization framework provides a robust foundation for training the integrated model while maintaining stability and convergence guarantees across different software engineering domains and project characteristics.

Experimental design and results analysis

Experimental environment and datasets

The experimental evaluation of our proposed integrated model employs a comprehensive collection of open-source software projects that represent diverse programming languages, application domains, and complexity levels to ensure robust validation of the approach47. The dataset selection process prioritizes projects with well-documented defect histories and sufficient code complexity to provide meaningful insights into the effectiveness of graph neural network-based defect prediction and quality assessment. The selected datasets encompass both traditional software applications and modern web-based systems, enabling comprehensive evaluation across different software engineering paradigms and development practices.

The data preprocessing pipeline transforms raw source code repositories into structured graph representations suitable for neural network processing, involving multiple stages of code parsing, graph construction, and feature extraction48. The preprocessing workflow begins with static code analysis to extract abstract syntax trees, control flow graphs, and data flow graphs from source code files, followed by graph unification and feature encoding processes. The feature engineering component computes comprehensive code metrics including complexity measures, structural characteristics, and semantic features that serve as node and edge attributes in the graph representation.

The experimental environment configuration utilizes high-performance computing resources equipped with NVIDIA GPU acceleration to handle the computational demands of training large-scale graph neural networks. The implementation framework employs the PyTorch Geometric library for graph neural network operations, combined with custom modules for multi-task learning and attention mechanisms. The computational infrastructure includes 32GB GPU memory and distributed training capabilities to accommodate the memory requirements of processing large software projects and their corresponding graph representations.

The dataset characteristics are documented in Table 5, which presents detailed statistics for each software project, including programming language, file counts, defect information, and quality annotations that form the foundation of our experimental evaluation. Quality annotations were validated through three mechanisms to ensure reliability: (1) cross-referencing with established static analysis tools (SonarQube, PMD) achieved 87% agreement on quality ratings; (2) manual review of a random sample of 500 files by three developers, each with at least 5 years of software engineering experience, achieved an inter-rater reliability of Cohen’s κ = 0.78; and (3) correlation analysis with objective metrics showed a Pearson correlation of 0.82 between quality labels and cyclomatic complexity for the complexity dimension. For ambiguous cases, representing 13% of samples, we employed majority voting among the three reviewers and excluded samples with no consensus to ensure high-quality ground truth despite the inherent subjectivity of quality assessment.
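For reference, Cohen's κ for a pair of raters can be computed as in the sketch below. The statistic is defined for two raters, and how the ratings of the three reviewers were combined into a single value is not stated, so the pairwise form shown here is an assumption; the example labels are hypothetical.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters who labelled the same items."""
    n = len(rater_a)
    # Observed agreement: fraction of items given the same label by both raters.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement from each rater's own label frequencies.
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    labels = set(counts_a) | set(counts_b)
    expected = sum(counts_a[k] * counts_b[k] for k in labels) / (n * n)
    return (observed - expected) / (1.0 - expected)


# Four files rated by two reviewers (hypothetical quality labels).
reviewer_1 = ["good", "good", "poor", "poor"]
reviewer_2 = ["good", "poor", "poor", "poor"]
kappa = cohens_kappa(reviewer_1, reviewer_2)
```

Here the reviewers agree on 3 of 4 files (0.75) while chance agreement is 0.5, so κ is 0.5; unlike raw agreement, the statistic discounts matches that label frequencies alone would produce.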

The statistical analysis of dataset characteristics, as illustrated in Fig. 4, reveals significant variations in project complexity, defect density, and code quality distribution across different software domains and programming languages. The comparative analysis demonstrates that our dataset collection encompasses a wide range of software characteristics, from relatively simple utilities to complex system-level applications, ensuring comprehensive evaluation of the proposed approach across diverse software engineering contexts.

Fig. 4. Dataset statistical characteristics comparison chart showing the distribution of file counts, defect ratios, and quality metrics across different software projects.

The evaluation metric system incorporates multiple performance indicators to assess both tasks: precision, recall, and F1-score for defect prediction, and accuracy and macro-averaged F1-score for quality assessment49. The evaluation framework also includes cross-validation procedures and statistical significance tests to ensure the reliability and generalizability of experimental results. The metrics are computed at both file-level and project-level granularities to provide insight into model performance across different scales of software analysis.
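These metrics follow their standard definitions, which the sketch below implements directly from label lists. The function names and the toy labels are illustrative; an actual evaluation would more likely rely on an established metrics library.

```python
def precision_recall_f1(y_true, y_pred, positive=1):
    """Precision, recall, and F1 for one class treated as the positive class."""
    tp = sum(t == positive and p == positive for t, p in zip(y_true, y_pred))
    fp = sum(t != positive and p == positive for t, p in zip(y_true, y_pred))
    fn = sum(t == positive and p != positive for t, p in zip(y_true, y_pred))
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (2 * precision * recall / (precision + recall)) if precision + recall else 0.0
    return precision, recall, f1


def macro_f1(y_true, y_pred):
    """Unweighted mean of per-class F1 scores over all observed classes."""
    labels = sorted(set(y_true) | set(y_pred))
    scores = [precision_recall_f1(y_true, y_pred, positive=c)[2] for c in labels]
    return sum(scores) / len(scores)


# Defect labels for five files: 1 = defective, 0 = clean (hypothetical).
y_true = [1, 1, 1, 0, 0]
y_pred = [1, 1, 0, 1, 0]
precision, recall, f1 = precision_recall_f1(y_true, y_pred)
macro = macro_f1(y_true, y_pred)
```

Macro averaging gives each quality class the same influence regardless of how many files carry it, which matters when some quality levels are rare.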

The baseline method configuration includes traditional machine learning approaches such as Support Vector Machines, Random Forest, and Gradient Boosting, as well as deep learning baselines including standard neural networks and sequential models. The comparison framework also incorporates recent graph-based approaches and multi-task learning methods to provide comprehensive benchmarking against state-of-the-art techniques. Each baseline method is implemented with optimized hyperparameters and evaluated using identical experimental protocols to ensure fair comparison.

The experimental design employs stratified cross-validation to maintain balanced representation of defect and quality classes across training and testing sets, addressing the inherent class imbalance challenges in software engineering datasets. The validation strategy includes both intra-project and cross-project evaluation scenarios to assess the generalization capabilities of the proposed approach across different software domains and development contexts.
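A stratified split of this kind can be sketched as follows. The example assumes that stratification is performed on one label per file (a joint label such as a defect and quality pair could be used instead) and deals the shuffled indices of each class round-robin into the folds; the function name and the seed are illustrative.

```python
import random
from collections import defaultdict


def stratified_folds(labels, k, seed=0):
    """Partition sample indices into k folds with similar class proportions."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for index, label in enumerate(labels):
        by_class[label].append(index)
    folds = [[] for _ in range(k)]
    for indices in by_class.values():
        rng.shuffle(indices)
        # Deal each class out round-robin so every fold gets its share.
        for position, index in enumerate(indices):
            folds[position % k].append(index)
    return folds


# 10 defective and 40 clean files (hypothetical), split into 5 folds.
labels = [1] * 10 + [0] * 40
folds = stratified_folds(labels, k=5)
```

Each fold then serves once as the test set while the remaining folds form the training set, so the 20% defect ratio of this toy dataset is preserved in every evaluation round.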

Defect prediction performance evaluation

The evaluation of defect prediction performance shows that our proposed integrated graph neural network model outperforms existing approaches across multiple evaluation metrics and software project domains50. To ensure a fair comparison, all methods use identical data splits through stratified 10-fold cross-validation, maintaining balanced representation of defect and quality classes. All experiments are conducted on the same hardware environment (NVIDIA RTX 3090 GPU with 32GB memory) using PyTorch 1.12.0. Each baseline method’s hyperparameters are optimized through the same grid search process with validation set performance as the objective. All methods employ identical data preprocessing pipelines and feature extraction procedures to eliminate implementation bias. We emphasize Recall and F1-score because of their importance in practical defect prediction: high Recall means that more defect-prone code is identified before release, reducing costly post-release bugs, while F1-score balances precision and recall. Nevertheless, our method achieves consistent improvements across all evaluation metrics, including Accuracy, Precision, Recall, F1-score, and AUC, as shown in Table 6. Statistical significance is confirmed through paired t-tests with p < 0.05 across all metrics.

The comparative analysis encompasses traditional machine learning methods, deep learning approaches, and recent graph-based techniques to provide a thorough assessment of the model’s effectiveness in identifying defect-prone code segments.

The performance comparison results, as detailed in Table 6, reveal significant improvements achieved by our integrated approach across all evaluation metrics when compared to baseline methods including knowledge-enhanced and process-driven baselines.

The performance comparison visualization presented in Fig. 5 illustrates the clear advantages of the integrated approach, particularly highlighting the substantial improvements in recall and F1-score metrics that are crucial for practical defect prediction applications. The chart demonstrates that while traditional machine learning methods achieve reasonable baseline performance, the proposed graph neural network approach significantly outperforms existing techniques by effectively leveraging structural information and multi-task learning benefits.

Fig. 5. Defect prediction performance comparison showing F1-scores and AUC values across different methodologies, demonstrating the superior performance of the proposed integrated model.

The analysis of different graph structure impacts on prediction performance reveals that the multi-level graph representation significantly contributes to the model’s effectiveness51. Ablation studies examining individual contributions demonstrate that each component plays a critical role. Table 7 presents comprehensive ablation results showing performance degradation when removing key components. Removing attention mechanisms results in 7.3% F1-score drop for defect prediction and 5.8% accuracy drop for quality assessment, confirming their necessity for selective feature aggregation. The multi-level graph representation (AST + CFG + DFG) yields the highest performance, with improvements of 8.3% in F1-score and 7.2% in AUC compared to single-graph approaches. Removing multi-task learning leads to 4.2% performance degradation, validating the benefits of joint optimization.

The computational complexity analysis shows a time complexity of \(\:O\left(\left|V\right|+\left|E\right|\right)\) per GNN layer, where \(\:\left|V\right|\) is the number of nodes and \(\:\left|E\right|\) is the number of edges, and a space complexity of \(\:O\left(\left|V\right|\times\:d\right)\), where \(\:d=128\) is the feature dimension. Training on large projects such as the Linux Kernel requires approximately 6.8 h on an NVIDIA RTX 3090, while inference remains efficient at 9.3 ms per file, making the approach suitable for continuous integration scenarios. The increased computational cost is justified by significant performance improvements and the practical value of reducing post-release defects.

The validation of integration strategy effectiveness through comparative analysis with separate single-task models confirms the benefits of joint learning for defect prediction52. Beyond statistical significance, these improvements translate to substantial practical benefits. A 9.3% improvement in quality assessment accuracy and a 7.8% improvement in defect prediction F1-score provide tangible value in software development workflows. Based on industry cost models53, where post-release defect fixes cost 10–100 times more than pre-release detection, our improved recall rate (0.789 vs. 0.764 baseline) could reduce defect-related costs by approximately 15–20% for medium-sized projects. For a 10-developer team, the enhanced quality assessment accuracy could reduce code review time by 15–20 h per month (180–240 h per year), translating to annual savings of $18,000–$24,000 in developer time at an implied rate of $100 per hour. Furthermore, reducing false negatives in defect prediction decreases the risk of critical production failures, with each prevented production defect saving an estimated $5,000–$50,000 in emergency response and reputation costs, depending on system criticality.

The integrated approach achieves superior performance compared to isolated defect prediction models, demonstrating that the shared representations learned through multi-task optimization provide valuable complementary information that enhances predictive capability. The integration strategy particularly improves recall performance, which is crucial for identifying defect-prone code segments that might otherwise be missed by traditional approaches.

Performance improvements vary across datasets based on project characteristics. Table 8 presents dataset-specific analysis showing stronger improvements for larger, more complex projects where structural dependencies are more critical. Apache Camel and Linux Kernel show the highest improvements (11.2% and 10.8% F1-score respectively) due to their complex architectural structures, while smaller projects like Apache Ant show moderate improvements (7.4%). The cross-project evaluation results demonstrate the generalization capabilities of the proposed model, showing consistent performance improvements across different software domains and programming languages.

The model maintains its superior performance when applied to previously unseen projects, indicating robust feature learning and effective structural pattern recognition that transcends project-specific characteristics. The consistency of performance improvements across diverse datasets validates the general applicability of the integrated approach.

The statistical significance analysis confirms that the performance improvements achieved by the proposed model are statistically significant with p-values less than 0.05 across all evaluation metrics. The confidence intervals for performance metrics demonstrate stable and reliable improvements, indicating that the observed benefits are not due to random variations but represent genuine enhancements in predictive capability.
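The paired t-test behind such a comparison can be sketched as below, using per-fold scores of two methods evaluated on the same folds. The fold scores are hypothetical values chosen for illustration and are not results from this study; the constant 2.262 is the standard two-sided critical value of the t distribution at the 0.05 level for 9 degrees of freedom, which applies to 10 folds.

```python
import math
import statistics


def paired_t_statistic(scores_a, scores_b):
    """t statistic and degrees of freedom for paired samples (same folds)."""
    diffs = [a - b for a, b in zip(scores_a, scores_b)]
    n = len(diffs)
    mean_diff = statistics.mean(diffs)
    # Standard error of the mean difference, using the sample standard deviation.
    std_err = statistics.stdev(diffs) / math.sqrt(n)
    return mean_diff / std_err, n - 1


# Hypothetical per-fold F1-scores from 10-fold cross-validation.
proposed = [0.81, 0.80, 0.82, 0.79, 0.83, 0.81, 0.80, 0.82, 0.81, 0.80]
baseline = [0.78, 0.77, 0.80, 0.77, 0.79, 0.78, 0.78, 0.79, 0.78, 0.77]

t_stat, dof = paired_t_statistic(proposed, baseline)
T_CRITICAL_DF9 = 2.262  # two-sided, significance level 0.05, 9 degrees of freedom
significant = abs(t_stat) > T_CRITICAL_DF9
```

Pairing by fold removes the variance caused by some folds being harder than others, which is why the test is applied to per-fold differences and not to the two sets of scores independently.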

Computational efficiency analysis reveals that while the integrated model requires additional resources compared to traditional approaches, performance gains justify the increased complexity. Table 9 compares training time, inference time, memory usage, and GPU utilization across different methods.

The training time remains within acceptable bounds for practical application, and the inference speed is comparable to other deep learning approaches, making the model suitable for real-world software development workflows.

Code quality assessment performance analysis

The evaluation of code quality assessment performance reveals the significant advantages of our integrated approach in accurately predicting multiple quality dimensions simultaneously, demonstrating substantial improvements over traditional quality assessment methods54. The multi-dimensional quality evaluation encompasses five key aspects of code quality including maintainability, readability, complexity, testability, and architectural integrity, providing comprehensive insights into the model’s capability to assess diverse quality characteristics. The experimental results confirm that the integrated graph neural network approach effectively captures the complex relationships between code structure and quality attributes, leading to more accurate and reliable quality assessments.

The comprehensive performance analysis presented in Table 10 demonstrates consistent improvements across all quality dimensions when compared to traditional assessment methods. The results indicate that the proposed integrated model achieves superior accuracy in predicting quality levels, with particularly notable improvements in maintainability and complexity assessment where structural information plays a crucial role.

The quality assessment accuracy heatmap visualization, as shown in Fig. 6, provides a comprehensive view of the model’s performance across different quality dimensions and software projects. The heatmap reveals that the integrated approach consistently outperforms baseline methods across all quality categories, with particularly strong performance in structural quality aspects such as maintainability and complexity assessment where graph-based representations provide maximum benefit.

Fig. 6. Code quality assessment accuracy heatmap showing performance comparisons across different quality dimensions and software projects, highlighting the superior performance of the integrated model.

The validation of dual-task learning benefits for quality assessment demonstrates that the joint optimization with defect prediction significantly enhances quality assessment accuracy compared to single-task approaches55. The shared representations learned through multi-task learning capture complementary information that improves the model’s ability to identify quality-related patterns, particularly in cases where defect-prone code correlates with low-quality characteristics. The dual-task learning framework achieves an average improvement of 6.2% in quality assessment accuracy compared to isolated quality prediction models, confirming the effectiveness of the integrated approach.

Analysis of learned representations reveals that different graph components contribute distinctively to various quality dimensions. AST features dominate maintainability predictions with 43% average attention weight, as syntactic structure directly reflects code organization and modularity. CFG patterns are critical for complexity assessment with 51% attention weight, since control flow directly determines cyclomatic complexity and execution path diversity. DFG contributes primarily to testability evaluation with 38% attention weight, as data dependencies indicate the ease of isolating and testing code units. This differentiated contribution pattern validates our multi-level graph design and explains why integrated representation outperforms single-view approaches. Failure case analysis indicates that the model struggles with highly obfuscated code (accuracy drop to 0.68) and dynamically-typed languages where type inference is ambiguous (7% performance degradation). These limitations stem from the static analysis foundation and suggest future research directions in hybrid static-dynamic analysis approaches. The model’s ability to simultaneously predict multiple quality dimensions provides valuable insights for software development teams, enabling comprehensive quality evaluation that considers various aspects of code maintainability and reliability.

The interpretability analysis of the integrated model reveals that the attention mechanisms successfully identify code regions that significantly impact quality assessments, providing valuable insights for developers and code reviewers. Figure 7 presents attention heatmaps for three representative case studies: (a) a correctly identified defect-prone method with high attention on complex conditional branches, (b) a low-maintainability class with attention concentrated on deeply nested loops, and (c) a testability issue where attention highlights tightly coupled data dependencies. In each case, attention weights correlate strongly with expert-identified problematic code regions (Cohen’s κ = 0.76), demonstrating that the model’s decision-making aligns with human reasoning patterns. For instance, in the defect case study, the model assigned attention weights of 0.82, 0.71, and 0.68 to three nested if-statements that indeed contained a logic error, while correctly ignoring peripheral helper methods with weights below 0.15.

Fig. 7. Attention heatmap visualizations for three case studies: (a) defect-prone code with high attention on complex conditional branches, (b) low-maintainability code with attention on nested loops, and (c) poor testability with attention on data dependencies. Darker colors indicate higher attention weights.

The model’s attention weights correlate strongly with expert-identified quality issues, demonstrating that the learned representations capture meaningful patterns related to code quality characteristics. The interpretability features enable practitioners to understand the rationale behind quality assessments, facilitating targeted code improvement efforts.

The practical utility of the model is demonstrated through its application to real-world software development scenarios, where the integrated approach provides actionable insights for code quality improvement. The model’s ability to predict quality issues before they become problematic enables proactive quality management, reducing maintenance costs and improving software reliability. The computational efficiency of the approach makes it suitable for integration into continuous integration pipelines, providing real-time quality feedback during the development process.

The robustness evaluation across different software domains confirms that the model maintains consistent performance across diverse programming languages and application types, indicating strong generalization capabilities. The cross-domain validation results demonstrate that the structural patterns learned by the graph neural network are transferable across different software contexts, making the approach suitable for heterogeneous development environments.

Conclusion

This paper presents a novel integrated model that combines software defect prediction and code quality assessment through graph neural networks, addressing the limitations of traditional approaches that treat these tasks independently. The primary technical contributions include the development of a multi-level graph construction strategy that captures syntactic, control flow, and data flow information, the design of a dual-branch GNN architecture with attention mechanisms for joint optimization, and the implementation of a dynamic multi-task learning framework that enables collaborative learning between defect prediction and quality assessment tasks56.

Regarding scalability and practical deployment, training on very large projects such as the Linux Kernel (2.3M LOC) requires 6.8 hours on an NVIDIA RTX 3090 with 32 GB memory. For extremely large industrial codebases exceeding 10M LOC, we propose three optimization strategies. First, hierarchical graph sampling reduces complexity from O(n²) to O(n log n) by processing file-level subgraphs independently and aggregating the results. Second, distributed training across multiple GPUs enables parallel processing of large graph structures. Third, incremental learning for continuous integration scenarios requires only 30-minute model updates when processing daily code changes, making real-time feedback feasible. CI/CD integration is practical given an inference time of 9.3 ms per file, which enables pre-commit quality checks without disrupting developer workflows. For resource-constrained environments, we have developed distilled model variants that achieve a 0.779 F1-score (a 4% trade-off) with a 70% speedup and a 60% memory reduction through knowledge distillation and graph pruning. These variants make the approach accessible to smaller development teams without high-end GPU infrastructure.
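To make the deployment figures concrete, the short calculation below estimates pre-commit latency from the reported 9.3 ms per-file inference time and the relative F1 cost of the distilled variant. It assumes files are scored sequentially with no batching or start-up overhead, which is a simplification, and the function names are illustrative.

```python
# Figures reported in the text.
INFERENCE_MS_PER_FILE = 9.3
FULL_MODEL_F1 = 0.811
DISTILLED_F1 = 0.779


def commit_latency_ms(n_files: int,
                      ms_per_file: float = INFERENCE_MS_PER_FILE) -> float:
    """Estimated inference time for a commit touching n_files files,
    assuming sequential scoring and no fixed overhead (an assumption)."""
    if n_files < 0:
        raise ValueError("n_files must be non-negative")
    return n_files * ms_per_file


def relative_drop(full: float, reduced: float) -> float:
    """Relative performance loss of the reduced model versus the full one."""
    if full <= 0:
        raise ValueError("full-model score must be positive")
    return (full - reduced) / full


if __name__ == "__main__":
    for n in (1, 50, 500):
        print(f"{n:>4} files: {commit_latency_ms(n) / 1000:.2f} s")
    print(f"relative F1 drop: {relative_drop(FULL_MODEL_F1, DISTILLED_F1):.1%}")
```

Under these assumptions, a commit touching 50 files needs roughly 0.47 s of inference, and the drop from 0.811 to 0.779 is about 3.9% in relative terms, consistent with the reported 4% trade-off.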

The experimental validation demonstrates the effectiveness of the proposed approach across multiple software projects and programming languages.

The integrated model achieves significant improvements in defect prediction performance, with F1-scores reaching 0.811 and AUC values of 0.896, representing substantial gains over traditional methods. Despite these achievements, the research has specific limitations that warrant acknowledgment. First, computational requirements present scalability challenges: training on projects exceeding 2M LOC requires more than 8 hours and 32 GB of GPU memory, limiting accessibility for resource-constrained development teams. Second, the approach depends on comprehensive static code parsing, so it cannot handle the incomplete or syntactically invalid code that is common in work-in-progress commits. Third, quality label subjectivity introduces up to 13% annotation noise in the training data, potentially affecting model calibration. Fourth, cross-language generalization requires separate parser configurations and feature engineering for each programming language, limiting the model’s universality. Fifth, the static analysis foundation cannot capture the runtime behaviors and dynamic type information that are crucial for languages like Python and JavaScript.

Future research directions are organized into three time horizons with specific technical approaches. Short-term goals (6–12 months) focus on developing lightweight model variants through network pruning and quantization to reduce memory requirements below 8 GB while retaining 95% of the full model’s performance. Medium-term goals (1–2 years) target cross-language transfer learning through language-agnostic graph representations and meta-learning techniques, enabling few-shot adaptation to new programming languages with minimal retraining. Long-term goals (2–3 years) aim to develop self-supervised pre-training approaches that leverage large unlabeled codebases to reduce dependency on expensive quality annotations, and to integrate hybrid static-dynamic analysis that captures runtime behaviors alongside structural patterns. These directions will enhance the practical applicability of graph-based software analysis across diverse development environments and establish foundations for next-generation intelligent software engineering tools57.

Data availability

The datasets supporting the conclusions of this article are publicly available and can be accessed through the following sources: Apache Ant, Eclipse JDT, Apache Camel, Apache Hadoop repositories are available at https://archive.apache.org/, Mozilla Firefox source code is accessible at https://hg.mozilla.org/, and Linux Kernel data can be obtained from https://git.kernel.org/. To ensure reproducibility, upon acceptance of this manuscript, we will publicly release comprehensive resources including: (1) Complete source code implementation including preprocessing, model architecture, and training scripts; (2) Detailed documentation with step-by-step instructions for reproducing all experiments; (3) Configuration files specifying all hyperparameters used in experiments; (4) Example graph visualizations for AST, CFG, and DFG representations using Graphviz; (5) Quality annotation guidelines and inter-rater reliability statistics. Dataset statistics including file counts, defect distributions, and quality label frequencies will be provided in CSV format. Quality annotations were validated through cross-referencing with SonarQube and PMD (87% agreement), manual review by three experienced developers (Cohen’s κ=0.78), and correlation analysis with objective metrics (ρ=0.82). All code and data will be released under the MIT license for academic use. The repository link will be made available in the final published version of this paper.

References

Albattah, W. & Alzahrani, M. Software defect prediction based on machine learning and deep learning techniques: an empirical approach. AI 5 (4), 1743–1758. https://doi.org/10.3390/ai5040086 (2024).

Köksal, Ö., Babur, Ö. & Tekinerdogan, B. On the use of deep learning in software defect prediction. J. Syst. Softw. 194, 111511. https://doi.org/10.1016/j.jss.2022.111511 (2022).

Raschka, S., Patterson, J. & Nolet, C. Machine learning in python: main developments and technology trends in data science, machine learning, and artificial intelligence. Information 11 (4), 193 (2020).

Stradowski, M. & Madeyski, L. Industrial applications of software defect prediction using machine learning: A business-driven systematic literature review. J. Syst. Softw. 200, 111460. https://doi.org/10.1016/j.jss.2023.111460 (2023).

Tantithamthavorn, C., Hassan, A. E. & Matsumoto, K. The impact of class rebalancing techniques on the performance and interpretation of defect prediction models. IEEE Trans. Software Eng. 46 (11), 1200–1219 (2020).

Yang, X., Lo, D., Xia, X., Zhang, Y. & Sun, J. Deep learning for just-in-time defect prediction. In Proceedings of the 2015 IEEE International Conference on Software Quality, Reliability and Security (pp. 17–26). (2015).

Wu, Z. et al. A comprehensive survey on graph neural networks. IEEE Trans. Neural Networks Learn. Syst. 32 (1), 4–24 (2020).

Ying, Z. et al. Hierarchical graph representation learning with differentiable pooling. Adv. Neural. Inf. Process. Syst. https://doi.org/10.48550/arXiv.1806.08804 (2018).

Radjenović, D., Heričko, M., Torkar, R. & Živkovič, A. Software fault prediction metrics: A systematic literature review. Inf. Softw. Technol. 55 (8), 1397–1418 (2013).

Menzies, T. et al. Defect prediction from static code features: current results, limitations, new approaches. Automated Softw. Eng. 17 (4), 375–407 (2010).

Hall, T., Beecham, S., Bowes, D., Gray, D. & Counsell, S. A systematic literature review on fault prediction performance in software engineering. IEEE Trans. Software Eng. 38 (6), 1276–1304 (2012).

Ghotra, B., McIntosh, S. & Hassan, A. E. Revisiting the impact of classification techniques on the performance of defect prediction models. In Proceedings of the 37th international conference on software engineering (pp. 789–800). (2015).

Li, J., He, P., Zhu, J. & Lyu, M. R. Software defect prediction via convolutional neural network. In 2017 IEEE International Conference on Software Quality, Reliability and Security (QRS) (pp. 318–328). (2017).

Wang, S., Liu, T. & Tan, L. Automatically learning semantic features for defect prediction. In Proceedings of the 38th international conference on software engineering (pp. 297–308). (2016).

Devlin, J., Chang, M. W., Lee, K. & Toutanova, K. BERT: Pre-training of deep bidirectional transformers for language understanding. arXiv preprint arXiv:1810.04805 (2018).

Chen, X., Zhao, Y., Wang, Q. & Yuan, Z. Multi: Multi-objective effort-aware just-in-time software defect prediction. Inf. Softw. Technol. 93, 1–13 (2018).

Zhu, E., Wang, S., Liu, C. & Wang, J. Adaptive tokenization transformer: enhancing irregularly sampled multivariate Time-Series analysis. IEEE Internet Things J. 12 (19), 39237–39246. https://doi.org/10.1109/JIOT.2025.3554249 (2025).

Chidamber, S. R. & Kemerer, C. F. A metrics suite for object oriented design. IEEE Trans. Software Eng. 20 (6), 476–493 (1994).

Heitlager, I., Kuipers, T. & Visser, J. A practical model for measuring maintainability. In 6th international conference on the quality of information and communications technology (QUATIC 2007) (pp. 30–39). (2007).

Emanuelsson, P. & Nilsson, U. A comparative study of industrial static analysis tools. Electr. Notes Theor. Comput. Sci. 217, 5–21 (2008).

Ball, T. The concept of dynamic analysis. ACM SIGSOFT Softw. Eng. Notes. 24 (6), 216–234 (1999).

Alves, T. L., Ypma, C. & Visser, J. Deriving metric thresholds from benchmark data. In 2010 IEEE international conference on software maintenance (pp. 1–10). (2010).

ISO/IEC. ISO/IEC 25010:2011 Systems and software engineering – Systems and software Quality Requirements and Evaluation (SQuaRE) – System and software quality models. (2011).

Buse, R. P. & Weimer, W. R. Learning a metric for code readability. IEEE Trans. Software Eng. 36 (4), 546–558 (2010).

Abadeh, M. N. Knowledge-enhanced software refinement: leveraging reinforcement learning for search-based quality engineering. Automated Softw. Eng. 31, 57. https://doi.org/10.1007/s10515-024-00456-7 (2024).

Abadeh, M. N. Performance-driven software development: an incremental refinement approach for high-quality requirement engineering. Requirements Eng. 25, 95–113. https://doi.org/10.1007/s00766-019-00309-w (2020).

Abadeh, M. & Mirzaie, M. An empirical analysis for software robustness vulnerability in terms of modularity quality. Syst. Eng. 26 (6), 754–769. https://doi.org/10.1002/sys.21686 (2023).

Scarselli, F., Gori, M., Tsoi, A. C., Hagenbuchner, M. & Monfardini, G. The graph neural network model. IEEE Trans. Neural Networks. 20 (1), 61–80 (2008).