Abstract

The agricultural sector in Tamil Nadu’s Cauvery Delta Region, a crucial contributor to the state’s economy and a primary source of livelihood, is experiencing declining productivity due to shifts in weather patterns and soil conditions. Although Indigenous Technical Knowledge (ITK) systems in agriculture are context-specific and resource-based, the evolving climate and soil characteristics necessitate the integration of modern technologies with traditional practices to promote climate resilience and sustainable crop production. Addressing this, the manuscript explores the use of statistical models, Machine Learning (ML), and Deep Learning (DL) for region-specific weather forecasting and crop recommendation. However, predicting future Weather dynamically and offering adaptive crop suggestions remains a significant challenge. This article introduces the Dynamic SG-SKRDX model, a hybrid approach that integrates crop recommendations with futuristic weather forecasts. The model predicts current and future weather conditions in the Cauvery Delta Region using a Support Vector Regressor (SVR)-Gated Recurrent Unit (GRU) (SG) model, which has been enhanced through hyperparameter tuning and cross-validation. This SG model forecasts temperature, humidity, and precipitation for the delta regions using ten years of historical meteorological data. For crop recommendation based on these forecasts, the study employs a dynamic ensemble of ML models – Support Vector Machine (SVM), K Nearest Neighbour (KNN), Random Forest (RF), Decision Tree (DT), and eXtreme Gradient Boosting (XGBoost) – termed Dynamic SKRDX. This dynamic ensemble intelligently selects the best-performing ML models based on changes in the predicted weather variables. Evaluation of the Dynamic SG-SKRDX model reveals strong performance, with the SG weather model achieving 0.65% MSE, 8.07% RMSE, 4.69% MAE, and 65.49% R-Squared. The dynamic SKRDX crop recommendation model attained 93.41% accuracy, 93.72% precision, 93.41% recall, and a 93.33% F1-Score. The proposed Dynamic SG-SKRDX model demonstrates superior capabilities in forecasting future Weather and recommending region-specific crops in the Cauvery Delta Region, offering a pathway towards technology-driven, sustainable agriculture and improved economic stability for the region.

Similar content being viewed by others

Introduction

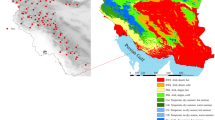

Tamil Nadu’s eastern region is home to the Cauvery Delta Zone (CDZ). Its borders are as follows: the Palk Straight to the south, the Bay of Bengal to the east, the districts of Trichy to the west, Perambalur and Ariyalur to the north-west, Cuddalore to the north, and Puddukkottai to the south-west. The entire geographic land area of CDZ is 14.47 lakh hectares. At 57% of the CDZ, the former Thanjavur district (which included Thanjavur, Thiruvarur, and Nagapattinam) is followed by Trichy, Ariyalur, Cuddalore, and Pudukkottai districts. The primary and most important source of water for the Cauvery Delta Region is the Cauvery River. Rice is a major crop cultivated in CDZ. Other primary crops include pulses, gingelly, groundnut, maize, banana, sugarcane, mango, cashew, coconut, bamboo, jasmine, rose, urad and more1,2,3.

Weather fluctuations and technological advancements have impacted crop productivity over time. Technological factors, such as the introduction of high-yielding cultivars and improved farming techniques, steadily raise yields over time. In contrast, uncontrollable sources of yield variability include weather fluctuations within and between seasons4.

Farmers often find it challenging to select a crop that is suitable for their area due to variations in meteorological conditions, such as temperature, humidity, and rainfall. A farmer with the environment in mind must always choose the best crop. For crop management and selection, farmers mostly rely on historical weather data. Computers can be trained without preset programming, thanks to the field of machine learning. These methods have remarkable predictive capabilities and solve both linear and non-linear agricultural settings. Computer algorithms are therefore trained using labelled data, supervised machine learning techniques, and statistical methodologies to forecast or make judgments on agricultural processes5. ML algorithms have demonstrated relatively more promising results than traditional statistical methods. An ML-based crop recommendation system can assist farmers in selecting the right crops for a given area and optimal weather conditions, thereby reducing the likelihood of crop failure and enhancing overall farming operations management6.

Deep Learning-based Weather Prediction (DLWP) models demonstrated superior accuracy in weather forecasting compared to Numerical Weather Prediction (NWP) models. An automated weather forecasting system was used, utilising GRU, to forecast temperature and soil moisture for the forthcoming years. The GRU-based forecasting proved to be promising, with 94% accuracy, 94% precision, 93% recall, and 93% specificity. Deep Learning Models, namely Convolutional Neural Network (CNN), CNN-GRU, Long Short-Term Memory (LSTM), and CNN-LSTM, as well as Artificial Neural Network (ANN), have been employed to forecast the periodic mean temperature in Zhengzhou city. When compared to the ANN, the deep learning-based model, CNN-LSTM, proved to exhibit enhanced efficiency for both current and future atmospheric temperatures7,8,9.

A short-term runoff forecasting approach is addressed without the need for time-step optimisation. ANN, Recurrent Neural Network (RNN), LSTM and GRU were employed for flood forecasting. From the experimental results, it was found that the GRU performed better than the ANN and LSTM models, with reduced structural complexity and fewer parameters. An explainable deep learning approach, comprising a GRU-based encoder module, an attention mechanism module, a GRU-based decoder module, and an expected-gradient-based explanation module, is proposed for forecasting monthly rainfall. The proposed method demonstrated enhanced forecasting accuracy for future rainfall in the next consecutive months10,11.

According to the study, DL models demonstrated robust and enhanced weather forecasting metrics compared to other methods. The GRU model was able to capture both short-term and long-term weather variables, which are crucial factors in deciding on the type of crops to be cultivated. For a non-linear relationship between crop and weather variables and to recommend region-specific crops for forecasted weather parameters, ANN and Support Vector Regressor (SVR) were employed in the manuscript. The ANN and SVR are found to exhibit improved performance compared to linear regression models, such as Ridge regression, LASSO, Multiple Linear Regression, and Elastic Net models12.

ML models, namely XGBoost, KNN, SVM, DT, and RF, were employed to recommend agricultural and horticultural crops based on soil and fluctuating climatic parameters. When compared to other models, XGBoost showed enhanced performance metrics of 99.0% for recommending crops and 99.3% for horticulture crops. An ensemble of majority voting methodology consisting of Naïve Bayes (NB), RF, KNN, SVM and CNN was employed for recommending crops, which aided farmers in choosing the right kind of crop and also offers fertiliser and pesticide recommendation13,14.

An AutoML model named AutoDES was developed, which dynamically selected base ML pipeline model combinations for different unseen instance predictions. A weighted integration scheme was employed for combining ML pipelines. The proposed model was evaluated against 39 benchmark datasets, which exhibited robust and enhanced performance metrics. An innovative Multi-Label Classification with Dynamic Ensemble Learning (MLDE) selects the most competent base ML model classifiers to predict unseen data for multi-labels. Unlike other dynamic ensemble techniques, the proposed approach integrates classification accuracy and ranking loss as the selection criterion for choosing the competent base classifiers. These selection criteria were adopted to enhance the performance of multi-label classification15,16. Based on the above insights, the research proposes the Dynamic SG-SKRDX model, which employs SG for forecasting weather parameters and Dynamic SKRDX for weather-specific crop recommendations. The primary objective of a dynamic ensemble is to adapt to changing weather conditions and improve prediction accuracy over time by continuously updating model weights and retraining on new, unseen data. The final recommended model is selected based on the prediction from the best-performing model.

Related works

Šuljug, Jelena et al.17 conducted a comparative study on ML models in predicting weather-related parameters for various agricultural applications. The study was conducted to monitor the effects of drought on maize crops in the Republic of Croatia. The weather dataset, comprising 136,965 weather data points collected from various regions using 18 different sensor nodes, was utilised in the study. The weather parameters include temperature, humidity, air pressure and solar radiation. The regions were divided into urban, suburban, and rural areas. Among 19 regression models, four models — namely, Fine Tree, Fine Gaussian SVM, Bagged Tree, and Related Quadratic GPR (Gaussian Process Regression) — were selected as the best models for weather parameter prediction based on Pearson’s Correlation Coefficient and R-Squared values.

Banerjee et al.18 proposed a novel method for region-specific weather prediction and crop recommendation. A linear regression model is employed to predict crop yields based on a crop production dataset. Weather prediction is performed using 12 years of historical weather data with an LSTM (Long Short-Term Memory) model. For the predicted Weather, the crop recommended for the specific region was determined using a Multiple Linear Regression model. When compared to the existing methodology, the proposed approach demonstrated enhanced performance metrics, achieving 92% accuracy.

Sakthipriya et al.19 proposed machine learning models, namely Random Forest Regressor (RFR), Cat Boost Regression (CBR), Linear Regression (LR), Support Vector Regressor (SVR) and hybrid machine learning models with Variation Inflation factor (VIF), such as RFR-VIF, CBR-VIF, LR-VIF and SVR-VIF for paddy yield prediction based on weather conditions in Madurai district, Tamil Nadu. From the experimental results, it was proved that CBR-VIF showed enhanced performance metrics of 1.23 to 1.40% nRMSE for South Madurai, 0.56 to 1.40% nRMSE for Melu, 1.10 to 1.25% nRMSE for Usilampatti, and 0.75 to 1.10% nRMSE for Thirumangalam. Following CBR-VIF, CBR showed the lowest nRMSE for the Thirumangalam district, using the predicted weather variables of normal rainfall, actual rainfall, maximum temperature, and minimum temperature.

Li, Hongli et al.20 implemented a rainfall prediction model, based on weather variables from Indonesia, using three DL models, namely Deep Gated Recurrent Unit (DGRU), Deep Long-Short Term Memory (DLSTM) and Deep Recurrent Neural Network (DRNN). From the experimental results, it was demonstrated that DLSTM performed well in capturing complex weather parameters, with an RMSE of 1.1289 and an R² of 0.9995. The article also discovered that extreme temperatures and solar radiation have a subsidiary effect on precipitation. In contrast, the lowest temperatures, relative humidity, and wind speed have a direct correlation with rainfall.

Huynh Vuong Thu Minh et al.21 employed time-series-based statistical models, such as the Autoregressive Integrated Moving Average (ARIMA) model, Seasonal ARIMA (SARIMA), and SARIMA with exogenous variables (SARIMAX), for predicting twelve-monthly precipitation over the Vietnamese Mekong Delta (VMD). The performance of the models was evaluated using Nash–Sutcliffe coefficient (Nash) and the Root Mean Squared Error (RMSE). Based on the Auto Correlation Function (ACF) and the Partial Auto Correlation Function (PACF), the minimum values of the Akaike Information Criterion (AIC) and the Schwarz Bayesian Information (SBC), the best SARIMA models of the form (p,1, q) (P,1, Q) are calculated. From the experimental results it was found that SARIMA (1, 1, 1) (2, 1, 1)11 and SARIMA (1, 1, 1) (2, 1, 1)12 exhibited accurate future rainfall prediction in VMD, with approximately 0.67 to 0.87 Nash and 0.06 to 0.09 RMSE in different weather stations of VMD.

Zenkner et al.22 implemented two different Bi-LSTMs (Bi-Lateral Long Short-Term Memory) for forecasting Weather from two London-based locations. Model A(Bi-LSTM1) does Weather forecasting for the next 24 h, and Model B (Bi-LSTM2) does the weather forecasting for the next 72 h based on 120 h of historical weather data. Model A predicted 24 h air temperature with ± 2 °C forecasting accuracy and 1.45 °C RMSE. Model B predicted 24-hour air temperature and relative humidity with an RMSE of 2.26 °C and 14%.

Chanti et al.23 presented a dynamic ensemble-based crop yield forecasting using different ML regressor models. Initially, the crop yield dataset was trained using Random Forest Regression (RFR), Decision Tree Regression (DTR), Multiple Linear Regression (MLR), and XGBoost. To improve accuracy, the study employed dynamic ensemble techniques, including stacking ensemble and voting regressor, for dynamically selecting the best-performing regressor models. The proposed approach showed enhanced performance metrics of 0.99 R-Squared and 15,690 RMSE.

Umamaheswari, P., et al.24 proposed a novel approach named SHEM (Stacked Heterogeneous Ensemble Model) for forecasting rainfall in the Cuddalore district of Tamil Nadu. As a pre-processing step, a novel missing value imputation method, Variable-Specific Hot Deck (VSHD), was employed in the study. Recursive Feature Elimination with Cross-Validation (RFECV) was used to select relevant features. The dataset with selected features was trained using the SHEM model, which comprises different base learners, including Random Forest, Decision Tree, Light GBM, and SVM. The predictions from the base learners were fed to meta-learners to obtain the final prediction. The study employed XGBoost as the meta-learner. The proposed SHEM model demonstrated enhanced performance metrics, including 96% accuracy, a Kappa Coefficient of 0.97, and a high F1-Score of 0.94.

Hachimi et al.25 had made a comparative analysis of three data-driven models, namely statistical, ML, and DL models, for weather forecasting. From the experimental results, it was found that the Temporal Convolutional Neural Network (TCNN) showed a superior ability in forecasting weather for 1-day, 3-day, and 1-week periods, with the lowest RMSE and high R-squared when compared to other models. TCNN also surpasses the forecasting capabilities of the Global Forecast System (GFS).

Rani et al.26 proposed an ML-based crop selection based on weather environments and soil-specific features. The proposed approach employs LSTM for weather forecasting and a Random Forest Classifier for crop selection. The LSTM-based weather prediction model showed enhanced and accurate predictions compared to the ANN. The LSTM achieved RMSE of 5.023% for minimum temperature, 7.28% for maximum temperature and 8.24% for rainfall prediction. The Random Forest Classifier, on the other hand, showed 97.647% crop selection accuracy, 96.437% resource dependency prediction accuracy, and a crop sowing time accuracy of 97.647%.

Abdelwahab et al.27 proposed a non-complex and powerful encoder-decoder DL model with a seasonal attention mechanism. This model is specifically employed for remotely located olive grove weather forecasting. The proposed approach showed higher prediction accuracy than the existing models. The approach also achieved a mean absolute error of 2.13 °C and a root mean squared error of 2.64 °C. Storing the model’s parameters only consumed 37.7 KB and 50.1 KB of total memory requirement. The approach was implemented on the Raspberry Pi Pico platform due to its low cost and reduced power consumption.

Anand et al.28 presented a deep-learning approach for selecting the crop category based on weather conditions in Tarakeshwar Village of Hooghly District, WB. Weather forecasting was implemented using the Recalling Enhanced Sigmoid Recurrent Neural Network (RES-RNN) with the Manta Ray Optimisation Algorithm (MROA). Determined by the forecasted Weather and soil conditions, a suitable crop was recommended for Tarakeshwar Village using a Cycle Consistent Generative Adversarial Network (CCGAN), which is further optimised with the Colour Harmony Algorithm (CHA).

Chetan Raju et al.29 presented Interfused Machine Learning (IML) with Advanced Stacking Ensemble (ASE) for enhancing crop prediction accuracy, which utilises several agricultural factors, including climate, soil, and crop types. The IML-ASE approach employs SVM, DT, RF, XGBoost Regression (XGBR), AdaBoost Regression (ABR) and NB as base learners. The Advanced Stacking ensemble functions as a meta-model comprising meta learners and fine learners. The meta-learners, composed of XGBR, ABR, and NB, and the fine learners are trained with XGBR. The stacking ensemble multiplies the coefficients of the weights of the concerned classifiers by the dataset features to enhance prediction accuracy. Investigations from the manuscript indicated that the planned IML-ASE model achieved the lowest MAE and RMSE scores of 0.23% and 1.65%, respectively, with a prediction accuracy of 97.1%.

Elbasi et al.30 conducted a detailed analysis of employing different types of ML algorithms, projecting how different ML models showed varying accuracy in predicting crops based on features, dataset size, and feature selection methods. The ML models comprise Bayes Net, NB, Logistic Regression, Multilayer Perceptron (MLP), RF, RT, and many more. The results of the experiments demonstrated that crop prediction accuracy across models was critically influenced by the features chosen through feature selection techniques. It was found that Bayes Net achieved a high prediction accuracy of 97.05%, followed by RF with 97.32% accuracy, after training the model with the selected features: Humidity, Precipitation, Temperature, and pH.

To overcome limitations like Short-Term weather prediction18,22, VIF with ML models detects only for linear relationships between the weather and crop parameters19, Works for only weather dataset with strong seasonal patterns21 and Dynamic ensemble methods to be adapted to improve the model performance24, the Dynamic SG-SKRDX hybrid model is proposed in the study, which the integration of SG (Weather) model and Dynamic SKRDX (Crop) model.

Problem statement

Crop recommendations based on weather conditions specific to a region are challenging due to the fluctuating nature of weather parameters presented in Table 1. When compared to ML models, Statistical ML Models and Numerical Models, DL models are widely employed for weather forecasting. Many research works have been done on forecasting weather variables for crop selection based on DL models. The major drawback of these models is their short-term weather forecasting capabilities. These models perform well in terms of high prediction accuracy and low error rates, but they struggle to adapt to long-term forecasting.

Additionally, existing models yield the best results for weather data that exhibits strong seasonality. Weather and crop are generally non-linear. However, most existing models are primarily trained to forecast weather variables that have a linear relationship with crop parameters. Many crop prediction models currently rely on machine learning (ML) models or static ensemble techniques for recommending crops. However, the weather variables are not always the same, leading to inaccurate crop recommendations. Due to fluctuating weather parameters, the accuracy of region-specific and weather-specific crop predictions is decreased. Therefore, the discrepancy between forecasts and real values is also increased. To address these challenges, the study proposed an innovative hybrid approach, termed the Dynamic SG-SKRDX model, for weather forecasting and crop selection in the Cauvery Delta Region.

Proposed system

The workflow of the suggested hybrid framework is depicted in Fig. 1. The meteorological data for the delta region, spanning 2012 to 2024, are used to prepare the weather model. The raw data is pre-processed, as the weather dataset shows variations in scale and magnitude. Hence, the Min-Max scaling method, one of the effective normalisation methods, is employed in the study. This process helps to normalise the weather predictions, keeping them within a specified minimum and maximum range. The Min-Max scaling equation is expressed as:

Where:

-

S is the scaled value.

-

is the original value.

-

Omin is the lowest original value.

-

Omax is the extreme original value.

A sliding Window approach is applied to generate input-output pairs on the normalised data, representing both past and future observations. The combination of scaled data values and input-output pairs enhances prediction accuracy and results in a robust weather forecasting system. The sliding window approach is mathematically represented as follows:

Where:

-

T= [t1, t2, t3…tn] is a given time-series.

-

w is the window size.

-

X_j is the input.

-

y_j is the output.

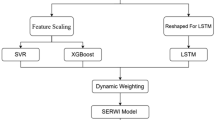

Following data pre-processing, the weather dataset is trained using the SG weather prediction model, which forecasts both current and future weather parameters. The crop model utilises a crop recommendation dataset to suggest suitable crops based on forecasted weather parameters for a specific region. The dataset is pre-processed using StandardScalar, which performs the transformation of crop features to have a mean of zero and a standard deviation of 1. The standard scalar technique is used because it helps generalise the model to data that hasn’t been seen and to models that are sensitive to feature scale. The crop dataset is fed to the Base Learners, BL-1, BL-2, BL-3, BL-4 and BL-5. The Base Learners employed in the study include SVM (S), KNN (K), Random Forest (R), Decision Tree (D), and XGBoost (X) ML models, collectively named the SKRDX model. By adjusting weights for each new data point in the forecasted weather parameters, the Hard Voting Classifier Meta Learner model utilises the Base Learner Predictions (BPs) of the different models—BPs 1, 2, 3, 4, and 5—that dynamically select the top-performing model to recommend appropriate crops. The proposed Dynamic SG-SKRDX is a hybrid model that combines forecasts from crop and weather models to suggest crops depending on region-specific weather characteristics. Farmers will be able to make better decisions regarding the Weather and the crop to cultivate in their fields by using the suggested method, which will boost output and profitability.

Workflow diagram for the proposed dynamic SG-SKRDX hybrid model.

Proposed SG for weather prediction

The proposed SG model is the combination of GRU and SVR models. The GRU and SVR framework is integrated using a simple averaging technique, and the detailed explanations of the models are as follows.

GRU model

GRU is a type of DL model that is a descendant of RNN and LSTM. GRU’s most distinguishing characteristics are its capacity to manage prolonged temporal data and its streamlined gating mechanism. This mechanism effectively mitigates the problems of gradient vanishing and gradient explosion. The input and forget gates in LSTM are united into update gates in GRU to create the GRU model structure, which simplifies the LSTM structure. This eliminates overfitting and reduces the computational time. The GRU is composed of three gates: the Reset gate, the Update gate, and the state candidate gate31,32. The mathematical calculation for the three gates is expressed as follows:

where: r(t), u(t) and s(t) represent reset gate, update gate and state candidate hidden state. \(\:\sigma\:\:\text{r}\text{e}\text{p}\text{r}\text{e}\text{s}\text{e}\text{n}\text{t}\text{s}\) sigmoid activation function. The subscripts r, u and s represent which gates the weight matrix belongs to. \(\:{W}_{r}g\left(t\right)\), \(\:{W}_{u}g\left(t\right)\) and \(\:{W}_{s}g\left(t\right)\) represents the weight matrix for the input sequence g(t) applied to reset, update and candidate hidden state gates. \(\:\left({M}_{r}\right)i\left(t-1\right)\), \(\:\left({M}_{u}\right)i\left(t-1\right)\) and \(\:\left({M}_{s}\right)i\left(t-1\right)\) are the weights for the hidden state (t-1) for reset, update, and candidate hidden state. \(\:{B}_{r}\), \(\:{B}_{u}\) and \(\:{B}_{s}\) This is the Bias vector for the recent update and candidate hidden state. The GRU network converts the input sequence into a high-dimensional vector with complete temporal data, as shown in Eq. 733.

where Temp represents temperature, H represents Humidity and P represents Precipitation. In the proposed approach, the GRU model is built using Keras Sequential Model. The GRU employed in the proposed approach consists of 2 GRU layers, in which the first layer is composed of 64 units, and the input sequence (30,9) represents 30 time steps and 9 features. The second layer consists of 32 units and a fully connected layer (also known as a dense layer or output layer) with 9 units. The approach employs Adam as the optimising function and Mean Squared Error as the loss function. As a whole, the entire model can be represented as:

Where:

-

O represents the final output from dense layer.

-

I is the input sequence (window_size x 9).

-

\(\ominus\) represents the learnable parameters like \(\:{\:\{W}_{r}g\left(t\right)\), \(\:{W}_{u}g\left(t\right)\), \(\:{W}_{s}g\left(t\right),\:\left({M}_{r}\right)i\left(t-1\right),\:\left({M}_{u}\right)i\left(t-1\right)\), \(\:\left({M}_{s}\right)i\left(t-1\right),\:{B}_{r}\), \(\:{B}_{u}\) and \(\:{B}_{s}\)}.

SVR model

The extended version of SVM that works on regression tasks is termed SVR. The ML model’s ultimate aim is to find a perfect regression hyperplane, where the spacing between the hyperplane and the data points are minimal. To capture complex relationships, SVR employs kernel functions to transform the data into a higher-dimensional space. The SVR is mathematically34,35 represented as:

where:\(\:\mathfrak{W}\) represents the weights, \(\:\lambda\:\) is the regularisation parameter, \(\:{\eta\:}_{j}\) and \(\:{\eta\:}_{j\:}^{*}\)are the slack variables. The slack variables introduce a relaxation mechanism, which allows certain data points to violate the margin or tolerance threshold, leading to enhanced generalisation36.

In the proposed approach, the GRU model is fed a weather dataset, with all weather parameters pre-processed to a 0–1 scale. This data is converted into a time series with a 30-day window, predicting the next step based on the previous 30 days, and the window size is adjustable. The GRU architecture consists of two layers, configured with 64 and 32 units followed by dense output layer with nine units corresponding to 9 target variables. SVR is employed in the research to address the non-linear relationship between the crop and weather parameters. The input weather parameters from GRU are mapped to a high-dimensional space using kernel functions, for capturing the complex and non-linear patterns. The model is built using the mean squared error loss function and the Adam Optimiser, and it’s trained for 30 epochs with a batch size of 32. For comparison, Scikit-learn is used to implement a Support Vector Regressor (SVR). GridSearchCV is employed to find the optimal hyperparameters for the SVR model, which are detailed in Table 2.

Neg_mean_squared_error, or negative mean squared error, is the evaluation metric used by GridSearchCV to identify the best hyperparameter. GridSearchCV optimises hyperparameters by minimising negative Mean Squared Error (MSE). The dataset is split into Training (70%), testing (20%), and validation (10%) sets. Both SVR and GRU models employ 8-fold cross-validation. This indicates that there are eight folds in the training data. Seven-fold cross-validation is utilised for Training, and one-fold is used for validation in each iteration. After K-Fold cross-validation, the predictions from GRU and SVR are combined using a simple averaging ensemble technique. Since both GRU and SVR predictions have a vector size of 9, this study sums each corresponding element from both sets of forecasts and divides by 2 to get the combined predictions. The simple averaging is mathematically represented as:

The algorithm of the proposed approach is shown in Table 3.

Simple averaging is chosen for its effectiveness in reducing errors and boosting predictive performance. GRU model excels at capturing long-term dependencies, while SVR handles non-linear relationships between Weather and crop parameters. This combination makes the proposed SG weather prediction model more robust and accurate than current alternatives.

Proposed dynamic SKRDX model for crop recommendation

Based on the Weather predicted by the SG model, the Dynamic SKRDX model dynamically selects the crop type using a dynamic ensemble of SVM, KNN, Random Forest, Decision Tree, and XGBoost. Due to its dynamic nature, the model can enhance its prediction accuracy by adjusting its weights in response to new data and retraining on it. The ML models used in the proposed model will be briefly discussed, followed by a dynamic ensemble method technique in the subsequent sections.

SVM

SVM is a supervised machine learning algorithm employed for both classification and regression tasks. One of the ultimate goals of SVM is to find the best hyperplane that accurately separates classes. The SVM hyperplane- H is a straight line that classifies between the two classes A and B. H1 and H2 are the two straight lines parallel to H, through which the samples from A and B cross. The three straight lines that perfectly classify the data points into sample A and sample B are termed as the hyperplane. The separation interval between H1 and H2 is called the margin, which is expressed as37.

where r represents the vector of the hyperplane.

The separation hyperplane is represented as:

The equation rs + b = 0 is a hyperplane that separates the data points between classes. Here ‘r’ and ‘s’ represents the weights associated with the features and ‘b’ represents the bias.

Decision tree

A supervised machine learning method that takes the form of a tree is called a decision tree. The root node selects the feature with the highest information gain and the lowest impurity after dividing the dataset’s features according to a few test criteria. The branches represent the test condition, while the target values are represented by the leaf nodes. The dataset is split recursively according to the expected values until no more data can be separated. For making predictions, few equations are necessary to calculate errors38.

Random forest

The Radom Forest is developed by building numerous decision trees, by bootstrapping the trials of the original data. Each subtree is trained using a randomly chosen subset of data. The features for each tree are chosen as the root node based on selection criteria such as high information gain and low Gini Index. The final predictions are concluded by averaging the predictions of all subtrees38.

K-nearest neighbour

The training data for the supervised machine learning algorithm KNN consists of both the input features and the class value. The model computes the proximity between each new data point and every training data point after storing the whole training set. The approach allocates the new data point to the class where the k nearest neighbours are found to be the majority after determining the k closest input data points to the new data. The distance between the data points is calculated using Euclidean distance, Manhattan distance or Minkowski distance39.

eXtreme gradient boost

XGBoost constructs an ensemble of decision trees and predicts the outcome using a boosting approach. In the boosting approach, the decision trees are built to run sequentially, where each model corrects the errors made by the previous model. The training data points with high errors are assigned higher weights and combined through a weighted sum40.

Dynamic ensemble selection using voting classifier

A new method called dynamic ensemble selection selects the top-performing classifiers from the pool of training models dynamically. Every test sample is used to train the model, which then selects the top-performing classifiers in the local area depending on the input sequence. The dynamic selection process ensures that only the competing classifiers’ predictions are taken into account. Furthermore, the ensemble is made more accurate and robust by dynamically updating the classifier’s weights in response to changes in the data distribution. The dynamic ensemble selection follows the three steps15,16.

-

Classifier selection.

-

Weight update.

-

Voting mechanism.

Classifier selection

For each test sample, ti, the Dynamic ensemble technique employs Euclidean Distance to find k-Nearest Neighbours (\(\:\varkappa\) (ti)) from the validation set. The performance of each classifier \(\:\mathcal{C}_i\) on \(\:\varkappa\:(t_i)\) is evaluated using the Accuracy metric as follows:

The top-performing classifiers are selected so that the dynamic ensemble consists of classifiers with an accuracy higher than the threshold value T. The dynamic ensemble subset \(\:\mathcal{D}\)E(ti) is represented as:

Dynamic weight updates

Each classifier’s weight \(\:\mathcal{w}\)i is dynamically modified in response to data or instance drifts. The G-mean score, which can be stated mathematically as follows, is used to update the classifiers’ weights:

Where Di is the current data instance, the low-performing classifiers below the Threshold T are removed from the pool of dynamic ensembles.

Voting classifier

Through weighted voting, the top-performing classifier is selected based on \(\:\mathcal{D}E\) (ti), which adds to the final prediction. In this research, a Hard voting classifier is employed for weighted voting.

The final prediction using a hard voting classifier is expressed as:

where F (\({\mathcal{C}}i\), \(\:\varkappa\:(\) ti) = u) is an indicator function that yields the value of 0 or 1.

For a given input x(ti), the ith classifier outputs 1 if it predicts target ‘u’, and 0 otherwise. The final prediction from the hard voting ensemble is determined by feeding x(ti) to all classifiers. We multiply each classifier’s prediction probability for a class’ u’ by its weight. The sum of these weighted probabilities across all classifiers determines the final prediction, with the class having the highest sum being selected as the prediction. This dynamic ensemble technique ensures accurate and robust forecasts by adapting to both local input changes and global shifts in data distribution.

The dynamic SKRDX model for crop recommendation uses SVM, KNN, RF, DT, and XGBoost as base classifiers, all trained on a crop-recommendation dataset. Each base model undergoes hyperparameter tuning with GridSearchCV to ensure optimal performance. Initially, these base models are combined using a stacking ensemble, with Logistic Regression as the final predictor. This stacking process utilises both training and validation datasets. When new, unseen data arrives, the voting classifier is retrained using the combined Training, validation, and new data. Hard voting is applied, and classifier weights are dynamically updated based on the efficiency of each base model with this new data. This retraining and dynamic weight adjustment happen whenever new data is encountered. If no new data is present, the model reverts to the initial stacking ensemble, training on existing data without weight updates. This dynamic adaptation to changing data, facilitated by the voting classifier’s continuous retraining and weight adjustments, significantly enhances the base class prediction accuracy. The algorithm for the Dynamic SKRDX model is presented in Table 4.

The stacking ensemble is employed as an initial model, and Logistic Regression is used as the meta-model, as it combines the best-performing model predictions at the first level, which is crucial for improving model performance. The dynamic ensemble using a voting classifier is employed, as the base classifier’s weights are updated and retained based on new data patterns. The dynamic nature of the proposed approach has a significant impact on model prediction performance, resulting in a robust and accurate model for recommending crops based on environmental parameters.

Proposed dynamic SG-SKRDX hybrid model

The proposed hybrid model combines the weather model and crop model to recommend crops based on the predicted weather parameters for the Cauvery Delta Region. The weather dataset is split into training and test sets. The pre-trained SG weather model is loaded with the test data of up to a specific date. The data is pre-processed using the MinMaxScalar. The SG model forecasts futuristic weather parameters for a certain period of months. From the forecasted weather data, relevant weather parameters, namely temperature, humidity, and precipitation, are extracted. The pre-trained crop model, the dynamic SKRDX model, is trained using the extracted weather parameters from the SG model. For the specified futuristic dates and their associated weather forecasts from the SG model, the crop recommendation model predicts the appropriate crop specific to the delta regions. The algorithm for the proposed Dynamic SG-SKRDX Hybrid Model is shown in Table 4.

Thus, the proposed hybrid model will be robust and efficient, providing farmers with informed decisions on the type of crop to cultivate based on current and future weather conditions. This aids in enhanced productivity and profits for the farmers and agricultural planners.

Dataset description

Weather dataset and its analysis

The Public Works Department (PWD) Thanjavur provided the meteorological data for the delta regions for the years 2012–2024. The weather dataset comprises 10 weather features, including datetime, temperature, dew point, humidity, precipitation, wind speed, wind direction, sea level pressure, solar radiation, and UV index. The weather data is collected daily for 12 years.

Crop dataset and its parameters

The crop recommendation dataset employed in the study is sourced from Kaggle, a renowned benchmark dataset41. The dataset comprises seven different features: N (Nitrogen), P (Phosphorus), K (Potassium), temperature, humidity, pH, and rainfall. The data is collected from Indian regions, comprising both soil parameters (N, P, K, pH) and environmental parameters (temperature, humidity, and rainfall). As previously mentioned, the following sections relate temperature, humidity, and precipitation to various crop types because these meteorological characteristics are relevant to us. There are 22 distinct crops in the collection, including rice, maize, wheat, green mung, black urad, bananas, apples, coconuts, and many more.

Experimental results and discussions

The experimental results for the proposed Weather and crop model are explained in the following sections. The section employs different DL and ML models and compares the results with the proposed Dynamic SG-SKRDX model, using appropriate evaluation metrics.

Proposed weather model evaluation

The study explored several weather prediction models, such as GRU, GRU and SVR, and LSTM, to compare how well the proposed model is superior to the existing Weather forecasting DL models. The performance of these models is compared with the proposed SG model using regression-based performance metrics, such as Mean Squared Error (MSE), Root Mean Squared Error (RMSE), Mean Absolute Error (MAE), and R-squared (R²)42. Table 6 presents a comparison of metrics for GRU, GRU + Cross_Validation, LSTM + Cross_Validation, RNN + Cross_Validation, and the proposed SG model across the Training, validation, and test datasets.

The study applied k-fold cross-validation and trained the models for 10 iterations. The models GRU + Cross_Validation, LSTM + Cross_Validation, RNN + Cross_Validation, and the proposed SG model were applied with 8-fold cross-validation and trained for 10 iterations. For 8-fold cross-validation, the training dataset is divided into eight folds. Across eight iterations, a different fold serves as the test set in each round. This 8-fold cross-validation process is repeated for 10 repetitions for all models to enhance the proposed SG model’s weather forecasting accuracy. From the table, it is clear that the proposed SG model exhibited lower MSE, RMSE, and MAE, and higher R-squared for the Training, testing, and validation datasets, when compared to other weather models. The proposed SG weather model demonstrated strong performance on the test dataset, achieving an MSE of 0.0069, an RMSE of 0.0835, an MAE of 0.0481, and an R-squared of 0.6408. These results indicate that the SG weather model offers more robust and reliable performance compared to other existing weather models. The comparison of metrics between models are depicted as a chart in Fig. 2. Although LSTM and RNN have also reduced error rates, there is a minute difference between the proposed and existing models in terms of system efficiency, thereby making the SG model achieve enhanced weather prediction accuracy and robustness.

SG model performance comparison chart.

Actual vs predicted temperature. (a) GRU, (b) GRU+Cross_Validation, (c) SVR+GRU, (d) LSTM+Cross_Validation, (e) RNN+Cross_Validation, (f) SG (proposed).

shows six actual vs. predicted temperature comparison graphs for models (a−f). The figure lists the model names from (a−f), which will be used to compare the performance of humidity and rainfall as well as for loss comparison. The blue line represents the actual temperature, and the orange line represents the predicted temperature. From the figure, it is evident that models (a−c) showed deviations between the predicted and actual temperatures. Models (d,e,f) showed better predictive performance. The proposed model (f) is found to have reduced error rates and generalised well with unseen data, making the model to exhibit superior predictive power.

Actual vs predicted humidity.

Actual vs predicted rainfall.

Figure 4 shows the actual versus predicted values for humidity. The blue line represents the actual humidity, and the orange line represents the expected humidity. From the plots, it is seen that models (a-c) showed better predictive performance for humidity than models (d–f). But when compared to metrics-based model performance, the proposed (f) model showed enhanced predictive power, with reduced error rates. Figure 5 shows the actual versus predicted rainfall by the models (a–f), where (f) is the proposed model. The blue line represents the actual rainfall, and the orange line represents the predicted rainfall. From the figure, it is evident that the proposed model (f) exhibits enhanced prediction accuracy in capturing the overall trends of the rainfall pattern. With accurate rainfall pattern prediction in the Cauvery Delta Region, suitable crops can be recommended effectively. Figure 6 depicts the comparison graph for Training versus validation loss for the models (a–f). The x-axis represents the number of epochs, and the y-axis depicts the loss, which is the difference between the actual and predicted values. The lower the loss, the better the model efficiency will be. The blue line represents training loss, and the orange line represents validation loss. The proposed model (f) starts with a 0.05 training loss and rapidly decreases in a few epochs, reaching a steady loss of 0.007, which is very low. Similarly, the validation loss starts around 0.012 and after a few epochs, it rapidly decreases to 0.007. This exhibits that the proposed model has good learning and generalisation, leading to enhanced model performance.

From the metrics comparison table, the graphical representation of temperature, humidity, and rainfall trends, as well as validation versus training loss plots, it is evident that the proposed SG weather model shows superior prediction accuracy with reduced error rates, higher R-squared values, and a significantly lower validation loss. This shows that the SG model has good learning ability and generalises well for unseen data, leading to an accurate and robust weather forecasting system. Table 7 shows the performance metrics across different window sizes. The proposed weather model is explored with different window sizes of 15, 45, and 60, other than the 30-day window size.

From the table, it is observed that the 45-day window showed reduced error and higher R2 when compared to the 30-day window, but the improvement is marginal. Agricultural and meteorological monitoring is generally organised around monthly cycles, and a 30-day window can effectively capture weather variations that strongly influence crop development. In contrast, a shorter window (e.g., 15 days) may overlook critical seasonal fluctuations, while a longer window (e.g., 45 or 60 days) could reduce the model’s sensitivity to recent changes and increase the risk of overfitting. Thus, the 30-day window represents the optimal configuration, providing a balance between prediction accuracy, real-world applicability, and model simplicity.

Training vs validation loss.

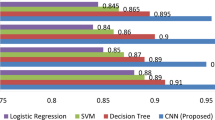

Proposed crop model evaluation

The Proposed Dynamic SKRDX Crop Model employed in the study was compared with other existing ML models used for Crop Recommendation, such as DT, RF, KNN, SVM and XGBoost. The capability of the models is assessed using a classification-based performance system of measurement, namely Accuracy, Precision, Recall, and F1-Score43. Using the classification metrics, the proposed crop model is evaluated against the existing crop model, and a comparison of the metrics is discussed in the following section.

The research analysed and compared the performance of several Crop models with the proposed Dynamic SKRDX model through classification evaluation metrics. Table 8 presents a performance comparison of DT, RF, KNN, SVM, XGBoost, and the proposed Dynamic SKRDX model. The table clearly shows the proposed model outperforms other crop models, achieving an impressive 93% accuracy, 94% precision, 94% recall, and a 94% F1-Score. Therefore, this demonstrates that the proposed dynamic ensemble-based crop model can recommend suitable crops for the predicted weather parameters with greater accuracy and robustness. The Crop Model comparison chart is depicted in Fig. 7. The X-axis represents the Metrics in (%) and Y-axis represents the Crop Models.

Performance comparison chart.

Figure 8 depicts the classification report and Fig. 9 shows confusion matrix for the proposed Crop Model. The classification report depicts the performance of the proposed model in classifying different crops. From the classification report it is clear that the proposed crop model showed high accuracy and high performance in classifying the crop classes.

Classification report.

Confusion matrix.

The confusion matrix projects the model’s performance, including the number of correctly and incorrectly classified samples. The X-axis denotes predicted labels, and Y-axis denotes actual labels. The diagonal represents the number of correctly classified samples. The numbers off the diagonals represent the number of misclassified trials, which is very few. For example, 3 samples of pomegranate are misclassified as apples, 3 orange samples are misclassified as apples, 1 papaya sample is misclassified as a coconut, and so on. Overall, the misclassifications are very few, allowing the model to predict with high performance and classification accuracy. From the metrics comparison table, visual plots, classification reports, and confusion matrix, it is evident that the proposed Dynamic SKRDX model recommends an appropriate crop based on the forecasted temperature, humidity, and rainfall with enhanced accuracy. For every different unseen weather parameter, the models are trained and chosen dynamically, making the model more efficient and robust.

Proposed dynamic SG-SKRDX hybrid model

The SG weather model forecasts future Weather based on specified future steps and window size. This forecast is then fed into the suggested crop model, which recommends the appropriate crop for that month. By providing futuristic weather predictions, this hybrid model enables farmers to make informed decisions about their monthly crop selections. For example, Table 9 shows the hybrid model’s forecasted Weather for January 2025, using data from 2012 to 2024 for Training and 2025 for testing. As a result, the model recommended blackgram cultivation for the season, given the predicted conditions: temperatures between 70 °F and 72 °F, humidity ranging from 61% to 66%, and rainfall from 0.36 to 0.55 mm. These conditions—warm temperatures, high humidity, and minimal rain—are optimal for blackgram in the delta region. Table 8 further details the recommended crops.

Table 10 presents the weather forecast for July 26th to August 2025, recommending maize as the ideal crop. The forecast predicts temperatures between 68 °F and 69 °F, humidity ranging from 61% to 66%, and rainfall between 0.34 mm and 0.36 mm. These conditions—warmer temperatures, moderate humidity, and minimal rainfall—highlight late July to August as the optimal, dry season for maize cultivation in delta regions. The hybrid model provides reliable and precise weather predictions, as well as dynamic crop recommendations for the Cauvery Delta Regions, which are validated by various evaluation metrics discussed earlier. This capability to provide futuristic weather forecasts and crop recommendations empowers farmers to make informed decisions about monthly harvests, ultimately boosting their crop production, yield, and profit.

Table 10 shows that the proposed model recommends maize for July–August, even though rice has traditionally been the dominant crop during the Kharif season. This recommendation is mainly influenced by prevailing weather conditions, including low precipitation and limited irrigation availability in the Thanjavur region during this period. The Southwest monsoon typically brings only moderate rainfall to the area, making rice—a crop that requires substantial irrigation and field puddling—less suitable. Continuous submergence of fields is not feasible under these conditions, especially when irrigation resources are constrained. Additionally, reduced water release from the Mettur Dam further heightens the risk of crop failure during the early stages of paddy establishment. In contrast, maize is more tolerant to low rainfall and restricted irrigation, which supports its selection by our model.

Conclusion and future work

A Dynamic SG-SKRDX hybrid model for a region-centric, weather-specific, and futuristic crop recommendation framework is presented in the research work, addressing the challenges of fluctuating weather forecasting and its impact on crop recommendations. The dynamic SG-SKRDX hybrid model forecasts both current and future weather parameters, and based on the forecasted Weather, suitable crops are recommended. SVR (S) helps in capturing the non-linear relationship between Weather and crop variables. The GRU (G) model helps in forecasting both long-term and short-term weather parameters. By combining the predictions from SG using a simple averaging technique, the forecasting of weather parameters is done with enhanced performance metrics. SG weather model undergoes hyperparameter tuning and cross-validation to improve the weather forecasting accuracy. For region-centric weather-based crop recommendations, the paper proposes the Dynamic SKRDX crop model, which recommends crops based on weather forecasts provided by the SG weather model. Dynamic SKRDX models perform a dynamic ensemble of SVM, KNN, RF, DT, and XGBoost models for recommending crops based on predicted weather variables. The main goal of a dynamic ensemble is to adapt to varying weather parameters and enhance predictions over time by updating model weights and retraining on unseen data. The proposed weather model is subjected to hyperparameter tuning and cross-validation to strengthen weather forecasting accuracy. From the experimental results, it was found that the weather model showed 0.65% of MSE, 8.07% of RMSE, 4.69% of MAE, and 65.49% of R-Squared. The hard voting classifier updates the weights of the classifier based on changes in the forecasted Weather and dynamically chooses the best-performing model for recommending crops. From the experimental results, it was found that the proposed dynamic SKRDX model showed 93.41% accuracy, 93.72% precision, 93.41% recall, and 93.33% F1-Score. The presented approach offers a futuristic, region-centric weather forecasting and crop recommendation system that helps farmers make informed decisions on crop cultivation based on forecasted weather parameters. After suggesting futuristic crops to be sown in a specific month, there is a chance of a sudden, unexpected, and drastic change in weather parameters due to cyclones, floods, and summer storms. To effectively manage these challenges, the proposed model must connect with the meteorological department to issue timely warnings about unexpected events. Additionally, during the summer, crops rely solely on groundwater. Hence, groundwater-based crop recommendations and integrating the proposed model with the meteorological department can be extended as a future scope of the research.

Data availability

All data generated or analysed during this study are included in this published article. https:/agritech.tnau.ac.in/agriculture/agri_agrometeorology_croppingpattern_cauvery.html; https:/www.kaggle.com/datasets/atharvaingle/crop-recommendation-dataset.

References

Aishwarya, B., Geethapriya, S. & Suribabu, C. R. Cauvery water policy plan for sustainable agricultural and domestic usage. Internafional J. Eng. Res. Technol. (IJERT). 10 (4), 671–695 (2021).

https://agritech.tnau.ac.in/agriculture/agri_agrometeorology_croppingpattern_cauvery.html.

Geetha, M. et al. A time-series based yield forecasting model using stacked lstm to predict the yield of paddy in cauvery delta zone in tamilnadu. In: 2022 First International Conference on Electrical, Electronics, Information and Communication Technologies (ICEEICT). 1–6 (IEEE, 2022).

Debnath, M. K. Statistical and machine learning models for location-specific crop yield prediction using weather indices. International J. Biometeorology 68, 1–23 (2024).

Mahale, Y. et al. Crop recommendation and forecasting system for Maharashtra using machine learning with LSTM: a novel expectation-maximisation technique. Discover Sustainability 5(1), 134 (2024).

Surendran, A., Ozkan, B. & Kumar, S. S. In Tamil nadu, India.

Ren, X. et al. Deep learning-based weather prediction: a survey. Big Data Res. 23, 100178 (2021).

Akilan, T. & Baalamurugan, K. M. Automated weather forecasting and field monitoring using GRU-CNN model along with IoT to support precision agriculture. Expert Syst. Appl. 249, 123468 (2024).

Guo, Q., He, Z. & Wang, Z. Prediction of monthly average and extreme atmospheric temperatures in Zhengzhou based on artificial neural network and deep learning models. Front. Forests Global Change. 6, 1249300 (2023).

Gao, S. et al. Short-term runoff prediction with GRU and LSTM networks without requiring time step optimisation during sample generation. J. Hydrol. 589, 125188 (2020).

He, R., Zhang, L. & Chew, A. W. Z. Data-driven multi-step prediction and analysis of monthly rainfall using explainable deep learning. Expert Syst. Appl. 235, 121160 (2024).

Wickramasinghe, L., Weliwatta, R., Ekanayake, P. & Jayasinghe, J. Modeling the relationship between rice yield and climate variables using statistical and machine learning techniques. J. Math. 2021 (1), 6646126 (2021).

Dey, B., Ferdous, J. & Ahmed, R. Machine learning based recommendation of agricultural and horticultural crop farming in India under the regime of NPK, soil pH and three climatic variables. Heliyon 10(3), e25112 (2024).

Kulkarni, N. H., Srinivasan, G. N., Sagar, B. M. & Cauvery, N. K. Improving crop productivity through a crop recommendation system using ensembling technique. In: 2018 3rd international conference on computational systems and information technology for sustainable solutions (CSITSS). 114–119 (IEEE, 2018).

Zhu, X., Ren, J., Wang, J. & Li, J. Automated machine learning with dynamic ensemble selection. Appl. Intell. 53 (20), 23596–23612 (2023).

Zhu, X., Li, J., Ren, J., Wang, J. & Wang, G. Dynamic ensemble learning for multi-label classification. Inf. Sci. 623, 94–111 (2023).

Šuljug, J., Spišić, J., Grgić, K. & Žagar, D. A comparative study of machine learning models for predicting meteorological data in agricultural applications. Electronics 13 (16), 3284 (2024).

Banerjee, S., Chakraborty, S. & Mondal, A. C. Machine learning based crop prediction on region wise weather data. Int. J. Recent. Innov. Trends Comput. Communication. 11 (1), 145–153 (2023).

Sakthipriya, D. & Chandrakumar, T. Weather based paddy yield prediction using machine learning regression algorithms. J. Agrometeorology. 26 (3), 344–348 (2024).

Li, H., Li, S. & Ghorbani, H. Data-driven novel deep learning applications for the prediction of rainfall using meteorological data. Front. Environ. Sci. 12, 1445967 (2024).

Minh, H. V. T. et al. Modelling and predicting annual rainfall over the Vietnamese Mekong Delta (VMD) using SARIMA. Disc. Geosci. 2(1), 19 (2024).

Zenkner, G. & Navarro-Martinez, S. A flexible and lightweight deep learning weather forecasting model. Appl. Intell. 53 (21), 24991–25002 (2023).

Chanti, S. et al. Crop yield forecasting through novel machine learning dynamic ensemble selection techniques. In: 2024 15th International Conference on Computing Communication and Networking Technologies. (ICCCNT) 1–6 (IEEE, 2024).

Umamaheswari, P. & Ramaswamy, V. An integrated framework for rainfall prediction and analysis using a stacked heterogeneous ensemble model (SHEM). Expert Syst. Appl. 256, 124831 (2024).

Hachimi, C. E. et al. Advancements in weather forecasting for precision agriculture: from statistical modeling to transformer-based architectures. Stoch. Env. Res. Risk Assess. 38 (9), 3695–3717 (2024).

Rani, S., Mishra, A. K., Kataria, A., Mallik, S. & Qin, H. Machine learning-based optimal crop selection system in smart agriculture. Sci. Rep. 13 (1), 15997 (2023).

Abdelwahab, M. H., Mostafa, H. & Khattab, A. A low footprint Olive grove weather forecasting using a single-layered seasonal attention encoder-decoder model. Ecol. Inf. 75, 102113 (2023).

Anand, M., Jain, A. & Shukla, M. K. Deep learning: crop selection based on weather conditions in Tarakeswar village of Hooghly district in West Bengal. Multimedia Tools Appl. 83 (10), 29715–29740 (2024).

Raju, C., Ashoka, D. V. & BV, A. P. CropCast: harvesting the future with interfused machine learning and advanced stacking ensemble for precise crop prediction. Kuwait J. Sci. 51 (1), 100160 (2024).

Elbasi, E. et al. Crop prediction model using machine learning algorithms. Appl. Sci. 13 (16), 9288 (2023).

Xu, X., Guan, L., Wang, Z., Yao, R. & Guan, X. Short-term photovoltaic power intelligent forecasting based on XGBoost-GRU-Informer-SVR model. Applied Soft Computing 172, 112768 (2025).

Ławryńczuk, M. & Zarzycki, K. LSTM and GRU type recurrent neural networks in model predictive control: A Review. Neurocomputing 632, 129712 (2025).

He, X. et al. Short-term load forecasting by GRU neural network and DDPG algorithm for adaptive optimisation of hyperparameters. Electr. Power Syst. Res. 238, 111119 (2025).

Sánchez, J. C. M., Mesa, H. G. A., Espinosa, A. T., Castilla, S. R. & Lamont, F. G. Improving wheat yield prediction through variable selection using Support Vector Regression, Random Forest, and Extreme Gradient Boosting. Smart Agricultural Technology https://doi.org/10.2139/ssrn.5021080 (2025).

Ye, Y. F., Wang, J. & Chen, W. J. Non-linear feature selection for support vector quantile regression. Neural Netw. 185, 107136 (2025).

Turnip, A., Taufik, M. & Kusumandari, D. E. Precision blood pressure prediction leveraging photoplethysmograph signals using support vector regression. Egypt. Inf. J. 29, 100599 (2025).

Shih, C. Y., Lin, Y. T., Chen, W. & Huang, J. C. SVM-Adaboost based badminton offensive movement parsing technique. Signal. Image Video Process. 19 (4), 280 (2025).

Pande, C. B. et al. Forecasting of monthly air quality index and Understanding the air pollution in the urban city, India based on machine learning models and cross-validation. J. Atmos. Chem. 82 (1), 1 (2025).

Xing, W. & Bei, Y. Medical health big data classification based on KNN classification algorithm. Ieee Access. 8, 28808–28819 (2019).

Noa-Yarasca, E., Babbar-Sebens, M. & Jordan, C. E. Machine learning models for prediction of Shade-Affected stream temperatures. J. Hydrol. Eng. 30 (1), 04024058 (2025).

https://www.kaggle.com/datasets/atharvaingle/crop-recommendation-dataset.

Tatachar, A. V. Comparative assessment of regression models based on model evaluation metrics. Int. Res. J. Eng. Technol. (IRJET). 8 (09), 2395–0056 (2021).

Obi, J. C. A comparative study of several classification metrics and their performances on data. World J. Adv. Eng. Technol. Sci. 8 (1), 308–314 (2023).

Acknowledgements

The authors thank SASTRA Deemed University for providing infrastructural support to conduct this research work. We would like to express our sincere gratitude to the Public Works Department (PWD) for providing weather data, which supported the gathering of a real-time and robust historical weather dataset for the Thanjavur region.

Author information

Authors and Affiliations

Contributions

Janani. K, and R. Alageswaran designed the study, conducted the experiments, and analysed the data. R. Alageswaran and Rengarajan Amirtharajan provided critical feedback, assisted in writing and editing the manuscript, and guided the project. All authors reviewed and approved the final manuscript.

Corresponding author

Ethics declarations

Competing interests

The authors declare no competing interests.

Ethics approval

All authors have read, understood, and have complied as applicable with the statement on “Ethical responsibilities of Authors” as found in the Instructions for Authors.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Janani, K., Alageswaran, R. & Amirtharajan, R. Dynamic SG-SKRDX hybrid framework for precision weather forecasting and crop suitability in the Cauvery Delta. Sci Rep 16, 2042 (2026). https://doi.org/10.1038/s41598-025-31717-4

Received:

Accepted:

Published:

Version of record:

DOI: https://doi.org/10.1038/s41598-025-31717-4