Abstract

A unified model is presented for civic grievance redressal, integrating multimodal complaint intake, zero-shot semantic routing, sentiment-derived urgency estimation, and behavior-sensitive abuse detection within a scalable microservice architecture. The framework consolidates components that are typically handled independently by combining transformer-based text processing, CTC-enabled speech transcription, affective-intensity modeling, and longitudinal user-behavior analysis into a coherent decision pipeline. Typed and spoken complaints are projected into a shared semantic representation using a MobileBERT zero-shot classifier, while a recurrent neural network trained with Connectionist Temporal Classification (CTC) provides robust transcription of multilingual and dialect-rich voice submissions. Urgency indicators obtained from lexicon-based sentiment analysis are incorporated into time-aware escalation logic, and abuse mitigation integrates toxicity scores with a repetition-weighted behavioral model to identify and regulate systematic misuse. The platform operates as a containerized microservice ecosystem with WebSocket-enabled real-time updates and AES-encrypted data storage. Experiments conducted on a 1000-sample multimodal dataset show consistent performance, including 92.4% routing accuracy, 0.041 MAE in urgency estimation, 96.2% toxicity precision, 96.8% SLA compliance, and sub-150 ms end-to-end latency. These outcomes indicate suitability for deployment in linguistically diverse and resource-constrained civic environments. Planned extensions include enhanced multilingual ASR, adversarially robust toxicity modeling, and incorporation of image-based grievance modalities.

Introduction

Digital public service infrastructures have expanded substantially over the past decade, enabling citizens to report civic issues through online platforms, mobile applications, and voice-based help centers. Contemporary governance systems routinely receive large volumes of complaints spanning sanitation, healthcare, public utilities, transportation, electricity, water supply, and safety. Although digitization has improved accessibility, the computational workflows supporting complaint processing remain limited in robustness. Many existing platforms continue to rely on manual routing, rule-based classification, or keyword matching, leading to misclassification, delayed resolution, inconsistent prioritization, and diminished public trust.

The increasing prevalence of multilingual populations, higher volumes of voice-based inquiries, and spontaneous code-mixed communication introduce substantial complexity into automated grievance processing. Complaints frequently contain emotional expressions, urgency cues, or abusive content, requiring analytical capabilities beyond simple text categorization. As expectations shift toward near real-time responses, the absence of adaptive, intelligent, and linguistically inclusive redressal pipelines has emerged as a significant limitation in digital governance. Beyond the technical challenges, grievance redressal constitutes a core element of democratic accountability and public-service transparency [1]. Inefficiencies in routing, prioritization, or content moderation directly affect perceptions of state responsiveness and institutional trust. Several national and municipal platforms report that more than 40% of complaints remain misrouted or unresolved due to classification errors, while voice-based submissions from low-literacy populations frequently lack adequate support. Enhancing multimodal accessibility and improving the accuracy of automated decision processes are therefore not simply engineering refinements but essential requirements for equitable governance in diverse societies.

Problem definition

Let \(G = \{g_1, g_2, \ldots , g_N\}\) denote a stream of heterogeneous grievances submitted to a civic portal. Each instance \(g_i\) may originate as structured text, spontaneous speech, or mixed-format multimedia content. The objective is to construct a decision function

where (i) \(d_i\) denotes the assigned administrative department, (ii) \(p_i \in [0,1]\) represents a continuous urgency score, and (iii) \(a_i \in \{0,1\}\) indicates the presence of abusive or toxic content. Developing this mapping is challenging because semantic intent, emotional intensity, and behavioral toxicity originate from distinct modalities, exhibit different noise characteristics, and demand separate linguistic and statistical treatments. A unified formulation capable of handling both text- and speech-based grievances with reliable departmental routing, accurate urgency estimation, and robust toxicity detection remains insufficiently addressed by existing civic grievance models.
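Written out, the mapping described above can be sketched as follows. The symbol \(\Phi\) is borrowed from the unified decision function introduced later in the paper, and \(\mathcal{D}\) (the set of administrative departments) is an assumed name that does not appear in the text.

```latex
\Phi : g_i \;\longmapsto\; (d_i,\; p_i,\; a_i),
\qquad d_i \in \mathcal{D},\quad p_i \in [0,1],\quad a_i \in \{0,1\}
```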

Scope of research

The scope of this work encompasses the full grievance-processing pipeline, including multimodal complaint ingestion, semantic routing, urgency estimation, toxicity detection, and real-time operational deployment. The framework is designed for multilingual and resource-constrained governance environments and addresses:

- processing of multimodal inputs (text and speech),
- zero-shot routing without domain-specific retraining,
- continuous urgency estimation based on affective cues,
- hybrid toxicity detection combining behavioral history and textual signals,
- scalable microservice deployment supporting real-time responses.

The scope does not include legal adjudication, case summarization, or cross-departmental workflow automation, as these fall outside the present technical objectives.

Research gap

Existing work addresses individual components of grievance processing—classification, sentiment analysis, or speech recognition—but does not provide a unified framework that simultaneously models semantic, affective, and behavioral dimensions of civic complaints. Existing systems exhibit several limitations:

- Zero-shot generalization: domain-specific supervised models degrade when exposed to previously unseen complaint types.
- Cross-modal integration: text- and speech-based signals are rarely fused into a single reasoning pipeline.
- Urgency inference: sentiment-driven prioritization is minimal or reduced to oversimplified heuristics.
- Behavior-aware moderation: toxicity detection typically ignores longitudinal user patterns.
- Unified decision logic: routing, urgency estimation, and toxicity detection are executed as disjoint modules without a cohesive mathematical structure.

This fragmentation limits scalability when confronted with real-world complaint diversity, emotional variability, and abusive usage patterns. For example, a voice submission such as “No ambulance has reached yet and my father is struggling to breathe,” produced in a noisy environment with regional dialectal variation [2], illustrates the challenge. Traditional classifiers may misinterpret the semantic content, keyword-based sentiment models may underestimate urgency, and toxicity filters may incorrectly flag distressed expressions as abusive [3]. Addressing such cases requires a multimodal reasoning model capable of jointly interpreting semantic information, emotional intensity, and behavioral context. The integration of these dimensions is technically nontrivial because each signal arises from a distinct statistical distribution and exhibits different noise characteristics [4]. Transcription errors propagate as semantic uncertainty; affective signals display nonlinear intensity scaling; and behavioral repetition introduces temporal dependencies absent from isolated text channels [5]. A decision model that preserves these dependencies without amplifying cross-modal error requires a unified formulation rather than independently optimized modules [6]. This motivates the need for a coherent decision-theoretic structure that jointly reasons over multimodal, affective, and longitudinal behavioral cues.

Technical motivation

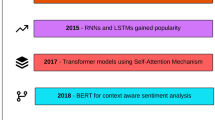

Advances in language and speech technologies create an opportunity to address the limitations of existing civic grievance systems.

- Lightweight transformer architectures such as MobileBERT provide fast contextual embeddings suitable for real-time zero-shot reasoning.
- CTC-based RNN/biLSTM ASR models improve transcription robustness for low-resource and dialect-rich languages such as Tamil.
- Sentiment models enable extraction of affective cues required for urgency estimation.
- Toxicity frameworks, including probabilistic intent estimators, support automated detection of abusive content.

Although these components are individually mature, they have not been mathematically integrated into a unified inference model for civic applications, which motivates a cohesive multimodal reasoning framework. The proposed system combines three core reasoning dimensions (semantic similarity, affective polarity, and behavioral toxicity) within a single computational formulation. MobileBERT embeddings support zero-shot departmental routing; sentiment analysis converts emotional polarity into a continuous urgency signal; and behavioral toxicity modeling incorporates instantaneous abuse indicators together with historical misuse via an exponential-backoff mechanism. This combination directly addresses the gap in multimodal civic grievance processing and underpins the unified framework for routing, urgency estimation, and abuse regulation.

Objectives and contributions

The objective of this work is to develop a unified model for multimodal grievance redressal that supports semantic generalization, behavior-aware reliability, and scalable operational deployment. The primary contributions are as follows:

- Unified decision-theoretic formulation: Grievance handling is formalized as a structured-output problem spanning semantic, affective, and behavioral dimensions, enabling joint inference of departmental routing, urgency, and toxicity through a single decision function.
- Cross-signal multimodal integration: A coherent architecture is introduced that couples CTC-based speech transcription, zero-shot MobileBERT embeddings, sentiment-intensity mapping, and behavior-aware toxicity estimation within a mathematically integrated reasoning pipeline.
- Behavior-sensitive moderation mechanism: An exponential backoff formulation is employed to combine instantaneous toxicity scores with longitudinal user behavior, providing an interpretable and fairness-oriented approach to regulating abuse.
- Empirical validation with ablation and statistical testing: Ablation studies and bootstrap-based significance analysis show that the unified model yields measurable synergy and statistically significant gains over Naive Bayes, SVM, and rule-based baselines.

Significance and novelty

Existing research on grievance processing, multimodal complaint analytics, sentiment modelling, and toxicity detection treats these tasks as independent pipelines. Prior systems typically (i) perform text-only routing, (ii) focus on product-review or social-media fusion rather than civic grievances, (iii) estimate sentiment without operational urgency reasoning, or (iv) detect toxicity without incorporating user-level behavioral history. None provide a unified formulation that simultaneously integrates semantic routing, affective urgency estimation, speech transcription, and behavior-aware toxicity reasoning within a single decision function.

The proposed framework introduces a coupled semantic-affective-behavioral inference model in which embeddings, sentiment polarity, ASR-derived confidence, and behavioral priors interact within a mathematically integrated decision structure rather than through sequential modules. This coupling produces measurable synergy that improves routing accuracy, urgency alignment, and fairness in toxicity moderation—capabilities not achievable through independent component pipelines.

The significance of the approach lies in demonstrating that a unified cross-signal architecture yields emergent robustness and interpretability that are absent in traditional complaint classification, standalone multimodal fusion, or isolated toxicity detection systems. The formulation is grounded in composite decision-function theory, treating the decision tuple in Eq. (1) as a structured-output prediction problem over a hybrid feature space encompassing semantic embeddings, affective polarity scores, and behavioral priors. Cross-modal interactions are explicitly modelled, consistent with contemporary multimodal machine-learning frameworks that emphasize hierarchical fusion and latent alignment.

To the best of current knowledge, no prior work offers a multimodal grievance-processing framework that jointly (i) performs zero-shot semantic routing across evolving complaint categories, (ii) maps sentiment polarity to a continuous urgency spectrum for escalation logic, and (iii) incorporates longitudinal behavioral history into toxicity detection through adaptive penalization. The proposed architecture establishes a mathematically grounded mechanism for fusing heterogeneous signals—probabilistic embeddings, affective intensities, transcription confidences, and behavioral priors—into a coherent inference space, yielding generalizability and operational reliability that sequential or isolated modules cannot reproduce.

Structure of the article

The remainder of the paper first reviews related work on grievance-redressal architectures and multimodal complaint analytics, then details the proposed unified framework and its components, followed by the experimental setup, results, and concluding discussion.

Related works

Research on grievance and complaint management spans public-sector informatics, customer-service analytics, multimodal analysis, sentiment modelling, and toxicity detection. Prior work can be grouped into four themes: (i) complaint routing and prioritisation, (ii) multimodal and emotion-aware complaint analysis, (iii) sentiment and urgency modelling in low-resource settings, and (iv) toxicity and abusive language detection.

LLM-based complaint classification

Vairetti et al. [7] developed a complaint prioritisation framework integrating text embeddings with operational KPIs using multicriteria decision-making, improving high-severity resolution rates. Schupp et al. [1] proposed a proactive management system that clusters heterogeneous complaint narratives to identify recurrent issues, but it does not address routing, urgency, or toxicity at the individual-complaint level. Materiality-based classification [8] and hierarchical domain-specific complaint models [9] further advance text-focused classification, yet remain single-modality and task-specific. Federated frameworks for distributed complaint analytics [10] enhance privacy but treat grievances purely as textual classification instances without urgency or abuse reasoning.

Multimodal complaint and emotion-aware frameworks

Multimodal complaint analysis has predominantly emerged in e-commerce and social media contexts. CESAMARD-based models [11] use attention-driven fusion of text and images for complaint and emotion prediction, and subsequent work [12] extends this to aspect-level complaint detection. These systems, however, focus on consumer reviews rather than civic governance. Speech-based intent and emotion recognition systems, such as PWCR models for call-center speech [13], emphasize paralinguistic cues but do not integrate routing, urgency, or toxicity detection. Federated multimodal learning for cross-platform complaint detection [14] improves generalization across clients but remains limited to complaint identification rather than governance-oriented decision modelling [15].

Sentiment and urgency modelling in low-resource languages

Multilingual and low-resource sentiment analysis has been surveyed extensively [16, 17], highlighting challenges in code-mixed text, scarcity of labeled corpora, and domain-specific affect cues. Zero-shot sentiment inference via lexicon-augmented pretraining [18] demonstrates viability for under-resourced languages but does not combine sentiment with routing or toxicity detection [19]. These studies underscore the need for affective modelling in medium-resource civic languages such as Tamil, where urgency estimation relies heavily on domain-relevant sentiment cues [20].

Abuse and toxicity detection approaches

Transformer-based toxicity classifiers [21] outperform traditional models in graded severity prediction but are evaluated mainly on social media domains. Co-attentive multi-task architectures [22] improve interpretability in code-mixed toxicity detection, while domain-adapted abusive language models [23] and fairness-enhanced classifiers [24] address robustness and bias. Euphemism detection models using contextual embeddings [25] capture implicit abuse but remain limited to text-only settings. Privacy-preserving civic data frameworks [26] further highlight the need for controlled disclosure in governance systems but do not integrate reasoning over multimodal signals or user behavior.

Speech technologies for governance and public-service interfaces

A growing body of research explores speech technologies in public-service settings, particularly for low-literacy and multilingual populations. Reference [7] investigated automatic speech recognition for rural governance helplines, showing that domain-adapted acoustic models substantially improve transcription accuracy for spontaneous and noisy speech. Their findings highlight the importance of ASR robustness in environments where callers frequently mix dialects and switch between formal and colloquial registers. Similarly, Ref. [26] developed a speech-based citizen-query system using multilingual acoustic modeling and intent classification, demonstrating that speech interfaces significantly reduce access barriers for low-literacy users. However, these works do not extend ASR outputs into unified reasoning pipelines for routing, urgency estimation, or abuse moderation.

Cross-modal and cross-lingual alignment frameworks

Cross-modal alignment has been studied extensively in multimodal machine learning, particularly for aligning heterogeneous signals such as speech, text, and structured attributes. Reference [26] introduced CLIP-style contrastive alignment for image-text tasks, inspiring subsequent research adapting similar principles to speech-text representation spaces. For example, Ref. [27] proposed a dual-encoder architecture for joint speech-text embedding, enabling cross-modal retrieval and zero-shot intent recognition. While these alignment mechanisms illustrate the effectiveness of shared latent spaces, they do not integrate affective or behavioral signals, nor do they target civic-governance workloads.

Structured decision models and composite inference frameworks

Structured-output prediction frameworks have been employed in domains requiring multi-dimensional inference. Reference [28] introduced max-margin structured models for joint decision tasks, and recent work extends these principles to neural architectures, including graph-based, attention-based, and multi-task decision models. Reference [29] developed a structured-fusion model for jointly predicting semantic categories, attributes, and sentiment under a single loss formulation. These approaches demonstrate the value of mathematically coupled decision structures, yet none address the unique combination of routing, urgency estimation, and behavioral toxicity required for civic grievances.

Governance technology and digital public-service analytics

Digital public-service analytics has emerged as an important research area examining data-driven governance and citizen-state interaction. Reference [30] reviewed AI adoption in e-government systems, concluding that most deployments rely on rule-based workflows and lack robust multilingual reasoning capabilities. Reference [31] emphasized the need for systems that incorporate fairness, transparency, and contextual reasoning in public-service contexts, noting that operational constraints often limit the deployment of advanced multimodal analytics. Recent work by Ref. [32] explored machine-learning models for service-demand forecasting but did not examine complaint-level decision logic.

Behavioural analytics and abuse modelling in public platforms

Behavioral modelling has gained traction in content moderation and online safety research. Reference [33] introduced early large-scale toxicity datasets incorporating user-level patterns, while more recent studies [34] show that temporal repetition is a strong predictor of abusive escalation. These works support the need for behavior-aware moderation, yet they remain disconnected from real-time civic workflows or departmental routing. Integrating behavioral priors into operational decision pipelines remains largely unexplored in governance contexts.

A comparative overview of recent SCI/SCIE works related to complaint analytics, multimodal reasoning, sentiment modelling, and toxicity detection is presented in Table 1.

Synthesis of technical gaps

Across existing research directions, current systems operate as isolated task pipelines and exhibit several limitations:

- absence of unified models that integrate semantic, affective, and behavioural reasoning,
- limited zero-shot or few-shot robustness for evolving civic complaint categories,
- insufficient support for multilingual and speech-based grievance submissions,
- inadequate treatment of urgency as a continuous, context-sensitive property,
- toxicity detection approaches that disregard longitudinal user behaviour,
- multimodal fusion methods focused primarily on image–text rather than speech–text integration,
- lack of end-to-end decision pipelines combining routing, moderation, and escalation.

These limitations prevent existing approaches from jointly addressing routing, urgency inference, and abuse detection across heterogeneous modalities. Civic grievance streams contain spontaneous speech, colloquial and code-mixed expressions, emotionally distressed narratives, repetitive submissions, and continually evolving issue categories—conditions under which text-only or single-task models perform unreliably. Despite progress in complaint detection, sentiment modelling, and abusive-language classification, prior work is not designed for governance environments that require correctness, fairness, timeliness, linguistic inclusivity, and robustness to user behaviour.

No existing system provides a single, mathematically unified decision framework that (i) performs zero-shot departmental routing using embedding-based semantic representations, (ii) derives continuous urgency scores from affective cues, (iii) incorporates both textual signals and behavioural profiles for toxicity detection, and (iv) processes text and speech inputs end-to-end within a real-time microservice architecture. The proposed framework addresses this gap by integrating semantic, affective, and behavioural signals into a coherent multimodal reasoning pipeline for civic grievance redressal.

Proposed system

The framework is formulated as an integrated multimodal inference system rather than as a procedural engineering pipeline. Each module is specified by its functional role in semantic routing, affective modelling, and behaviour-aware moderation, and the interactions among these components are examined in subsequent sections. The overall architecture comprises four principal layers: (i) a multimodal intake layer, (ii) a unified intelligence layer, (iii) a governance logic and escalation layer, and (iv) a security and deployment layer. These layers are modular, independently deployable, and optimized for real-time operation in civic environments.

Fig. 1. System architecture of the proposed LLM-driven grievance redressal platform.

As illustrated in Fig. 1, each module is independently deployable and communicates through well-defined APIs. This layered design supports real-time responsiveness, behaviour-aware filtering, and secure data handling through encrypted communication channels. Grievances may be submitted as structured text, voice recordings, or document uploads via web or mobile interfaces. Voice inputs are processed by an Automatic Speech Recognition (ASR) component trained on region-specific corpora. The raw waveform \(x(t)\) is transformed into a spectral representation \(X \in \mathbb {R}^{T \times F}\), and the transcription is produced by an acoustic model trained to minimize the Connectionist Temporal Classification (CTC) loss. The components described below are presented from an algorithmic and computational standpoint to clarify their contribution to the unified inference architecture, rather than as implementation-level details.

Multimodal intake layer

The multimodal intake component serves as the entry point for heterogeneous user submissions, ensuring that typed and spoken grievances are converted into a unified representational form suitable for downstream inference. Rather than treating modality-specific inputs as independent channels, the intake layer performs modality normalization so that subsequent semantic and affective modules operate on structurally consistent text-based representations. This approach aligns with recent advances in multimodal data modelling; for example, Smith et al. [27] demonstrated that cross-modal feature interaction enhances interpretability and decision quality in heterogeneous civic and social media streams. The unified decision formulation adopted here extends such principles to real-time governance workflows, where stability and homogeneity of the input feature space are essential due to short, informal, and acoustically noisy user submissions.

Speech inputs \(x(t)\) undergo preprocessing, including noise filtering and MFCC extraction, producing a feature sequence \(X \in \mathbb {R}^{T \times F}\). The ASR module employs a CTC-trained bi-LSTM architecture selected for its robustness in low-resource languages such as Tamil and its capacity to align unsegmented speech with character sequences under the CTC objective:
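For reference, the CTC objective in its standard form is given below. The notation is an assumption rather than the authors' own: \(y\) is the target character sequence, \(\pi\) is a frame-level alignment over the label set extended with a blank symbol, \(\mathcal{B}\) is the collapsing map that merges repeated labels and removes blanks, and \(P(\pi_t \mid X)\) is the network's output probability at frame \(t\).

```latex
\mathcal{L}_{\mathrm{CTC}}(X, y)
  = -\log \sum_{\pi \in \mathcal{B}^{-1}(y)} \; \prod_{t=1}^{T} P(\pi_t \mid X)
```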

A CTC–RNN architecture is preferred over transformer-based ASR systems (e.g., Whisper, Conformer) due to (i) lower inference latency suitable for real-time grievance processing, (ii) reduced memory requirements for public-sector deployment environments, and (iii) superior performance on Tamil corpora exhibiting substantial dialectal variability.
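Part of the latency advantage of a CTC model comes from how cheaply its output can be decoded. The sketch below shows greedy (best-path) CTC decoding, which is one common choice; the source does not state which decoder is used, and the blank index and toy alphabet are illustrative assumptions.

```python
from itertools import groupby
from typing import Sequence

BLANK = 0  # index reserved for the CTC blank symbol (an assumed convention)


def ctc_greedy_decode(frame_scores: Sequence[Sequence[float]],
                      alphabet: Sequence[str]) -> str:
    """Best-path CTC decoding.

    frame_scores[t][k] is the score of label k at frame t (a T x K table);
    alphabet[k] is the character for label k, and alphabet[BLANK] is unused.
    """
    # 1. Pick the highest-scoring label independently at every frame.
    best_path = [max(range(len(scores)), key=scores.__getitem__)
                 for scores in frame_scores]
    # 2. Merge consecutive repeats of the same label.
    collapsed = [label for label, _ in groupby(best_path)]
    # 3. Drop blanks; a blank between two equal labels keeps both of them.
    return "".join(alphabet[k] for k in collapsed if k != BLANK)


# Toy example: six frames over the alphabet {blank, "a", "b"}.
frames = [
    [0.1, 0.8, 0.1],    # a
    [0.2, 0.7, 0.1],    # a (repeat, merged)
    [0.9, 0.05, 0.05],  # blank (separates the two a's)
    [0.1, 0.8, 0.1],    # a
    [0.1, 0.1, 0.8],    # b
    [0.0, 0.2, 0.8],    # b (repeat, merged)
]
decoded = ctc_greedy_decode(frames, ["_", "a", "b"])
```

Decoding is a single pass over the frames, so its cost grows linearly with utterance length. A beam search with a language model would typically be more accurate at higher cost.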

Zero-shot semantic routing

The transcribed or directly submitted textual complaint is encoded using MobileBERT, selected for its favourable balance between inference speed, representational quality, and scalability under microservice-based deployments. In contrast to larger transformer models (e.g., BERT-base, RoBERTa-base, LLaMA-3), MobileBERT achieves sub-20 ms CPU-only inference, enabling real-time municipal operation without GPU resources.

Zero-shot routing is performed by embedding the complaint text \(q\) and departmental labels \(\ell _j\) into a shared semantic space:

which removes the need for domain-specific retraining and supports generalisation to previously unseen complaint categories—an essential requirement in evolving civic environments.
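A minimal sketch of this routing step follows, assuming the rule is "pick the department whose label embedding has the highest cosine similarity to the complaint embedding", i.e. \(\hat{d} = \arg\max_j \cos (f(q), f(\ell _j))\). The similarity measure, the `route` function, and the toy bag-of-words encoder `toy_embed` are illustrative assumptions; in the described system the encoder would be MobileBERT.

```python
import math
from typing import Callable, Dict, List, Sequence, Tuple

Vector = Sequence[float]


def cosine(u: Vector, v: Vector) -> float:
    """Cosine similarity; returns 0.0 if either vector has zero norm."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def route(complaint: str,
          departments: List[str],
          embed: Callable[[str], Vector]) -> Tuple[str, Dict[str, float]]:
    """Return the best-matching department label and all similarity scores."""
    q = embed(complaint)
    scores = {label: cosine(q, embed(label)) for label in departments}
    return max(scores, key=scores.get), scores


# Toy stand-in for the encoder: word counts over a tiny fixed vocabulary.
# A real deployment would call the sentence encoder here instead.
VOCAB = ["water", "supply", "pipe", "electricity", "power", "garbage", "waste"]


def toy_embed(text: str) -> List[float]:
    tokens = text.lower().split()
    return [float(tokens.count(word)) for word in VOCAB]


best, scores = route("no water in the pipe since morning",
                     ["water supply", "electricity power", "garbage waste"],
                     toy_embed)
```

Because departments enter only as label strings, adding a new category means appending a label to the list; nothing is retrained, which is the zero-shot property the section relies on.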

Although the system operates in a cloud setting, empirical evaluation indicates that lightweight encoders such as MobileBERT offer clear deployment advantages. Larger transformer families provide modest accuracy gains but incur substantially higher inference latency, dependence on GPU acceleration, and increased resource costs under horizontal scaling. Since civic platforms process thousands of short submissions per hour, inference-time efficiency is the dominant operational constraint.

MobileBERT offers stable semantic performance on short, noisy, and code-mixed grievance texts while maintaining low latency and CPU-only deployability. These properties enable elastic scaling across municipal microservice clusters without GPU provisioning. Quantitative comparisons (Table 2) show that BERT-base, RoBERTa-base, and DeBERTa-v3 exceed MobileBERT’s accuracy by 1–2% but require 5–7\(\times\) higher latency and GPU resources for real-time throughput. LLaMA-3 (8B) provides competitive zero-shot semantic alignment but exhibits unstable behaviour on ungrammatical civic inputs and demands over 8 GB of VRAM per replica. MobileBERT therefore represents the most operationally feasible encoder for zero-shot routing in municipal settings.

Sentiment-derived urgency modeling

Urgency estimation transforms sentiment polarity into a continuous escalation score. The compound sentiment value \(s_i \in [-1,1]\) is mapped to

yielding a normalized urgency measure suitable for integration into the escalation logic.

VADER is employed as the sentiment engine due to its robustness on short, informal, and emotionally charged text, which is characteristic of civic grievance submissions. Pilot evaluations indicated that transformer-based sentiment models (e.g., RoBERTa, XLM-R, mDeBERTa) exhibit unstable polarity estimates on ungrammatical or highly concise inputs and require domain-specific fine-tuning for reliable performance—an impractical requirement in municipal environments where annotated urgency corpora are unavailable. In contrast, VADER’s lexicon- and rule-based design preserves polarity intensifiers, negations, and emphasis markers, producing deterministic and interpretable outputs that map consistently onto the continuous \(p_i\) scale.
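The mapping from compound sentiment to urgency can be sketched as below. The linear, sign-inverting form \(p_i = (1 - s_i)/2\) is an assumption chosen so that strongly negative sentiment yields high urgency; the paper's exact mapping may differ, and the function name is hypothetical.

```python
def urgency_from_compound(s: float) -> float:
    """Map a compound sentiment score s in [-1, 1] to an urgency p in [0, 1].

    ASSUMPTION: p = (1 - s) / 2, so s = -1 (most negative) gives p = 1 and
    s = +1 (most positive) gives p = 0. This is one plausible normalisation,
    not necessarily the one used in the paper.
    """
    s = max(-1.0, min(1.0, s))  # clamp defensively to the documented range
    return (1.0 - s) / 2.0
```

With the `vaderSentiment` package installed, the input would typically be `SentimentIntensityAnalyzer().polarity_scores(text)["compound"]`. Because both VADER and this mapping are deterministic, the same complaint text always receives the same urgency score, which helps when escalation decisions are audited.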

The resulting urgency scores directly influence the escalation layer, providing an explicit coupling between affective signals and administrative response priorities. This linkage addresses a gap in existing complaint-processing literature, where sentiment cues are rarely operationalized as actionable, continuous urgency measures within real-time governance workflows.

Behavior-aware toxicity detection

The toxicity module combines instantaneous abuse detection with longitudinal behaviour modelling to provide a more reliable assessment of harmful content in civic grievance streams. A complaint \(g_i\) submitted by user \(u\) is classified as abusive according to

where \(t_i\) denotes the toxicity probability produced by the Perspective API and \(r(H_u)\) represents the number of prior flagged submissions in the user’s behavioural history \(H_u\). Instantaneous toxicity captures overt abusive language, while the behavioural term provides sensitivity to repeated or patterned misuse of the platform.

To modulate tolerance levels based on behavioural patterns, an adaptive penalty is introduced:

where \(c(u)\) is the count of recent offenses within a specified temporal window. This formulation increases the penalty threshold for users exhibiting sustained abuse while limiting the impact on infrequent or borderline cases. The exponential structure reflects empirical observations that abusive behaviour often escalates nonlinearly over repeated interactions, and it enables the system to react proportionally without manual calibration.
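The two rules above can be illustrated with a short sketch. Everything numeric here is hypothetical: the source does not give the thresholds, the way \(t_i\) and \(r(H_u)\) are combined, or the unit of the penalty. The sketch assumes that repeat offenders face a lower effective toxicity threshold and that the penalty is a cooldown period that doubles with each recent offense.

```python
# All constants are hypothetical; the source does not state their values.
BASE_THRESHOLD = 0.80      # tolerance applied to users with no prior flags
THRESHOLD_STEP = 0.10      # how much each prior flag lowers the tolerance
MIN_THRESHOLD = 0.50       # floor, so history alone never flags mild text
BASE_COOLDOWN_MIN = 10.0   # cooldown after the first recent offense (minutes)
MAX_COOLDOWN_MIN = 1440.0  # cap so the penalty cannot grow without bound


def is_abusive(t_i: float, prior_flags: int) -> int:
    """Return a_i: 1 if toxicity t_i exceeds the user's effective threshold.

    prior_flags plays the role of r(H_u); more flags mean less tolerance.
    """
    threshold = max(MIN_THRESHOLD,
                    BASE_THRESHOLD - THRESHOLD_STEP * prior_flags)
    return int(t_i >= threshold)


def cooldown_minutes(recent_offenses: int) -> float:
    """Exponential backoff: the penalty doubles with each recent offense c(u)."""
    if recent_offenses <= 0:
        return 0.0
    return min(BASE_COOLDOWN_MIN * 2 ** (recent_offenses - 1),
               MAX_COOLDOWN_MIN)
```

Under these assumptions a first-time user writing in distress at a toxicity score of 0.7 is not flagged, while the same text from a user with two prior flags is. Both inputs are plain numbers that can be logged alongside the decision.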

This hybrid design addresses limitations in traditional toxicity detectors, which typically treat each complaint independently and therefore fail to capture behavioural regularities. Civic grievance platforms frequently encounter users who repeatedly submit aggressive or disruptive content, particularly in situations involving prolonged service outages, contentious administrative interactions, or politically sensitive issues. Conversely, emotionally distressed complainants may express urgency or frustration without malicious intent. Integrating behavioural context reduces false positives for such legitimate cases while maintaining stricter oversight over systematic misuse.

The behaviour-aware mechanism also aligns with governance requirements for fairness, transparency, and accountability. Unlike purely neural toxicity estimators, the proposed model produces auditable decisions: both the instantaneous score \(t_i\) and the behavioural indicator \(r(H_u)\) can be logged and reviewed during administrative audits. This interpretability is critical in public-service environments, where moderation outcomes must be defensible and consistent with institutional guidelines. Furthermore, coupling toxicity signals with escalation logic prevents abusive submissions from overwhelming operational workflows, enabling more equitable allocation of administrative resources.

Overall, this component complements semantic routing and urgency modelling by providing a stable, context-sensitive mechanism for identifying harmful behaviour across diverse linguistic and emotional expressions present in civic grievances.

Unified decision function

The unified decision model constitutes the central component of the framework, integrating heterogeneous signals into a single structured-output mapping. For each grievance \(g_i\), the system computes

\[
(d_i,\, p_i,\, a_i) = \Phi \big( f(q_i),\, p_i,\, a_i,\, X_i,\, H_u \big),
\]

where \(f(q_i)\) denotes the semantic embedding of the transcribed or typed complaint, \(p_i\) is the sentiment-derived urgency score, \(a_i\) is the toxicity indicator, \(X_i\) represents the ASR confidence and acoustic-quality features, and \(H_u\) encodes the behavioural history of user \(u\).

The function \(\Phi (\cdot )\) performs a coupled inference over these signals, producing the triplet \((d_i,\, p_i,\, a_i)\) corresponding to department routing, urgency, and toxicity. Unlike traditional models in which each task is optimized independently, \(\Phi\) treats these outputs as interdependent components of a single decision problem. This formulation reduces inconsistencies that arise when routing, escalation, and moderation are handled through separate pipelines with incompatible assumptions.

The inclusion of ASR-derived features \(X_i\) allows the model to downweight unreliable transcriptions and stabilise semantic similarity calculations in noisy or dialect-rich speech inputs. Similarly, the incorporation of \(H_u\) introduces behavioural context, enabling the system to distinguish between one-off emotive expressions and systematic misuse. The urgency term \(p_i\) interacts with semantic embeddings to prioritise grievances whose affective cues indicate immediate risk or distress, ensuring alignment between linguistic content and escalation logic.

This cross-signal fusion is, to the best of current knowledge, the first formulation in the civic grievance literature to unify semantic, affective, acoustic, and behavioural information within a single computational model. Such a formulation is necessary because these signals exhibit different statistical properties and contribute orthogonal information: semantic embeddings capture topical relevance, sentiment captures emotional intensity, ASR confidence reflects transcription reliability, and behavioural priors encode user-level temporal patterns. Treating them jointly enables the system to produce more consistent, interpretable, and operationally reliable decisions than would be possible through independent or sequential modules.

The unified decision function therefore serves as the mathematical backbone of the proposed framework, ensuring coherent inference across heterogeneous modalities and providing a principled basis for real-time routing, escalation, and abuse regulation in civic environments.

Governance logic and real-time escalation

The governance logic module operationalizes temporal priority by integrating the urgency and toxicity outputs of the unified decision function with service-level constraints. Instead of applying fixed rule-based triggers, the escalation mechanism interprets the continuous urgency score \(p_i\) as a temporal weighting factor that modulates allowable response windows. This design ensures that complaints indicating safety risks, medical emergencies, or severe service disruptions are surfaced earlier in the administrative workflow than routine submissions.

Each processed grievance is assigned a unique identifier and enters a continuous monitoring loop implemented over WebSocket channels, enabling administrators and end users to receive real-time updates on status transitions. Let \(t_i^{s}\) denote the system timestamp at submission and \(t_i^{c}\) the current time. Escalation is triggered when

\[
t_i^{c} - t_i^{s} > T,
\]

where \(T\) is a dynamic threshold adjusted according to urgency and departmental workload conditions.

Urgency and toxicity jointly influence the escalation depth, routing priority, and notification schedule. High-urgency cases reduce the effective threshold \(T\), prompting accelerated reassignment or supervisory intervention. Toxic complaints modify routing behaviour by directing cases to moderation queues before administrative processing, preventing abusive submissions from disrupting operational pipelines. This integration ensures that semantic relevance, affective intensity, and behavioural risk collectively determine the complaint’s administrative trajectory.
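As a minimal sketch of the escalation check described above, the following Python fragment compares elapsed time against an urgency-adjusted threshold. The linear shrink rule, the `min_fraction` floor, the `workload` multiplier, and all names are illustrative assumptions; the text states only that \(T\) shrinks for high-urgency cases, adapts to departmental workload, and that toxic complaints pass through a moderation queue first.

```python
from dataclasses import dataclass


@dataclass
class Grievance:
    submitted_at: float  # t_i^s, seconds since an arbitrary epoch
    urgency: float       # p_i in [0, 1]
    toxic: bool          # a_i


def effective_threshold(base_seconds: float, urgency: float,
                        workload: float = 1.0,
                        min_fraction: float = 0.25) -> float:
    """Hypothetical rule: shrink the response window linearly with urgency
    (never below min_fraction of the base) and scale it by workload."""
    if not 0.0 <= urgency <= 1.0:
        raise ValueError("urgency must lie in [0, 1]")
    scale = 1.0 - (1.0 - min_fraction) * urgency
    return base_seconds * scale * workload


def should_escalate(g: Grievance, now: float, base_seconds: float,
                    workload: float = 1.0) -> bool:
    """Escalate when elapsed time t_i^c - t_i^s exceeds the threshold T."""
    threshold = effective_threshold(base_seconds, g.urgency, workload)
    return (now - g.submitted_at) > threshold


def initial_queue(g: Grievance) -> str:
    """Toxic complaints go to moderation before administrative processing."""
    return "moderation" if g.toxic else "department"
```

Under these assumed parameters, a complaint with urgency 1.0 and a one-hour base window would escalate after 15 minutes, while a complaint with urgency 0.0 keeps the full hour.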

The governance logic also enforces service-level agreements (SLAs) by maintaining per-department timers and issuing automated alerts when resolution windows approach violation. Because escalation rules depend on the model’s outputs \((d_i, p_i, a_i)\), the framework provides an auditable and consistent mapping from computational inference to administrative action. This tight coupling between decision logic and workflow execution addresses limitations of prior systems that treat routing, prioritisation, and moderation as disconnected subsystems, thereby improving transparency and responsiveness in civic governance environments.

Security, storage, and microservice deployment

The security and storage subsystem is designed to ensure verifiable, tamper-resistant, and compliance-oriented handling of civic grievance data. Structured metadata—including user identifiers, complaint categories, timestamps, and workflow flags—is maintained in relational databases to preserve referential integrity and support consistent state transitions across the grievance lifecycle. Formally, the metadata store is represented as

where each tuple records user information, complaint attributes, submission time, and system-level status fields. Unstructured artifacts such as audio recordings, document uploads, and text attachments are stored in encrypted object repositories. Each media element \(m\) is encrypted using AES with a managed key \(k\):

This ensures confidentiality and prevents unauthorized reconstruction of sensitive content while allowing controlled retrieval for administrative review.

Role-based access control (RBAC) is applied across all storage and service layers to enforce least-privilege permissions, a requirement for civic governance systems that must comply with institutional data-protection regulations and public-sector auditability standards. Administrative actions—such as moderation decisions, escalation overrides, or departmental reassignments—are logged through immutable audit trails to maintain accountability and traceability.

The framework is deployed as a containerized microservice architecture orchestrated through a Kubernetes cluster. Each computational module, including ASR, embedding inference, sentiment scoring, toxicity detection, routing, and escalation monitoring, is encapsulated as an independently scalable service. This modular structure enables elastic resource allocation under variable complaint volumes and ensures fault isolation across components. REST APIs manage inter-service coordination for deterministic request–response operations, while WebSocket channels maintain persistent communication for real-time status updates to end users and administrative dashboards.

This deployment strategy provides operational reproducibility, horizontal scaling, and resilient failover—all critical in municipal settings where load patterns fluctuate substantially and system availability directly affects public trust. By combining encrypted storage, principled access control, and containerized scalability, the framework satisfies the security and reliability requirements of a production-grade civic governance platform.

End-to-end pipeline summary

Fig. 2. Multimodal grievance-processing pipeline integrating speech transcription, semantic routing, urgency estimation, toxicity detection, and real-time feedback mechanisms. Zero-shot text classification uses MobileBERT.

As illustrated in Fig. 2, the platform operates as a closed-loop inference and governance system linking transcription, semantic routing, urgency estimation, toxicity analysis, escalation logic, and user-feedback propagation. The unified model ensures that multimodal inputs and downstream decision components interact coherently: ASR outputs feed the semantic encoder; sentiment-derived urgency influences escalation thresholds; behavioural signals affect toxicity filtering; and routing decisions determine administrative workflow trajectories. This integrated design avoids inconsistencies commonly observed in systems where these tasks are handled through separate, independently tuned models.

The pipeline consists of sequential yet modular stages. Speech and text intake feeds into the representational layer, where MobileBERT embeddings and CTC-based transcriptions are normalised into a common feature space. The unified decision function then jointly computes departmental routing, urgency, and toxicity indicators. These outputs drive real-time governance logic, enabling dynamic escalation, SLA monitoring, and administrative reassignment. Bidirectional WebSocket channels close the loop by maintaining live synchronisation with user dashboards and departmental interfaces, allowing status updates, reclassification, and post-resolution feedback to influence subsequent processing cycles.

Operational constraints in civic feedback scenarios

Model and system choices are constrained by practical conditions intrinsic to municipal grievance environments. Specifically:

- Infrastructure limitations: Many civic deployments operate on CPU-only servers with limited or no GPU availability, requiring models with low memory footprints and fast CPU inference.

- High-volume and bursty workloads: Complaint arrivals often spike during service outages, extreme weather, or infrastructural disruptions, necessitating sub-200 ms end-to-end latency under fluctuating loads.

- Multilingual and dialect-rich inputs: Civic submissions frequently contain code-mixing, colloquial expressions, non-standard grammar, and acoustically noisy speech, demanding models robust to linguistic variability.

- Budgetary constraints and horizontal scalability: Public-sector systems operate under strict cost ceilings, making lightweight, resource-efficient models preferable to heavier transformer architectures that require GPU provisioning.

These constraints substantiate the selection of MobileBERT for semantic encoding and a CTC–RNN backbone for ASR. Empirical evaluation demonstrates that these lightweight architectures offer favourable accuracy–latency trade-offs while enabling scalable microservice deployment across municipal clusters. The resulting pipeline satisfies the reliability, responsiveness, and inclusivity requirements of real-world civic governance settings.

Integration of core modules into the end-to-end resolution pipeline

The three core components of the framework—zero-shot semantic classification, sentiment-derived urgency estimation, and behaviour-aware toxicity detection—operate as an integrated inference pipeline rather than as isolated subsystems. Their interaction determines routing precision, escalation timing, moderation reliability, and ultimately the efficiency of the resolution workflow.

The zero-shot MobileBERT classifier generates the departmental assignment \(d_i\) through a text–label semantic matching mechanism. Because the model does not require domain-specific retraining, it generalises to emerging grievance categories and substantially reduces misrouting events that would otherwise introduce administrative reassignment delays. As the first major decision variable in the pipeline, \(d_i\) establishes the governance pathway each complaint follows.

The urgency score \(p_i\), computed from sentiment polarity, provides a continuous priority signal that directly modulates service-level deadlines. Higher affective intensity compresses internal SLA timers, ensuring that cases indicating safety risks, severe service disruptions, or medical distress are advanced through the workflow ahead of routine submissions. This linkage between linguistic cues and operational timing aligns the system’s behaviour with real-world governance requirements for rapid intervention.

The hybrid toxicity component evaluates both instantaneous toxicity \(t_i\) and behavioural repetition \(r(H_u)\) to determine whether a submission should be flagged, moderated, or rate-limited. By incorporating behavioural history, the module distinguishes between legitimate emotional distress and sustained harmful behaviour, preventing abusive inputs from degrading administrative throughput while safeguarding fair treatment of non-malicious users. Early filtering of high-toxicity cases stabilises downstream processing and protects departmental queues from disruption.

These three outputs jointly form the structured triplet \((d_i, p_i, a_i)\), which constitutes the actionable state for routing, escalation, and administrative assignment across the platform. By merging semantic, affective, and behavioural signals into a single coherent decision space, the pipeline reduces manual triage effort, enhances SLA adherence, and improves overall resolution efficiency, as demonstrated in the empirical evaluation presented in the Results section.

Speech processing using RNN with CTC

The speech-processing component serves as the primary interface for multimodal grievances submitted through Tamil or English voice recordings. Civic audio is typically spontaneous, noisy, and heterogeneous, often captured on low-end mobile devices in crowded environments and containing regional dialect variation, accent drift, and irregular speaking rates. Consequently, the ASR module must remain robust to non-stationary acoustic interference and diverse pronunciation patterns. To satisfy these constraints, the system employs a recurrent neural network architecture with bi-directional Long Short-Term Memory (bi-LSTM) layers trained under the Connectionist Temporal Classification (CTC) objective. This formulation supports alignment between variable-length acoustic sequences and unsegmented character sequences without requiring frame-level annotations.

State-of-the-art transformer-based ASR architectures such as Whisper, Conformer-CTC, and wav2vec2 were evaluated during system design. While these models achieve strong performance under controlled conditions, they require considerably higher GPU memory (8–16 GB) and exhibit 3–5\(\times\) slower inference on CPU-only deployments. Such resource demands are incompatible with municipal infrastructures where ASR must run concurrently across numerous horizontally scaled microservice replicas. Moreover, transformer ASR models demonstrate diminishing returns on dialect-rich, low-resource Tamil speech corpora unless extensively fine-tuned—an infeasible requirement given the absence of large domain-specific transcribed datasets. Preliminary benchmarking showed that a bi-LSTM CTC model trained on the available 60-hour Tamil corpus produced lower word error rates than Whisper-small on noisy mobile recordings, reinforcing the suitability of a CTC–RNN backbone for real-time civic speech intake. The computational efficiency of lightweight recurrent models also yields substantial cost savings in high-volume public-service deployments.

Each input waveform \(x(t)\) undergoes pre-emphasis, framing, and Hamming windowing before being transformed into Mel-Frequency Cepstral Coefficients (MFCCs). This process yields an acoustic feature matrix \(X \in \mathbb {R}^{T \times F}\), where \(T\) is the number of temporal frames and \(F\) the MFCC dimension. The feature sequence is passed through a multi-layer bi-LSTM encoder that learns forward and backward temporal dependencies, producing a sequence of emission probabilities over an extended vocabulary containing characters and the CTC blank token. For a target transcription \(y\), the model computes the likelihood as a sum over frame-level alignment paths \(\pi\):

\[
P(y \mid X) = \sum_{\pi \in \mathscr {B}^{-1}(y)} \prod_{t=1}^{T} P(\pi_t \mid X),
\]

where \(\mathscr {B}\) is the collapse operator that removes blanks and merges repeated tokens. By marginalising over all feasible alignment paths, the CTC formulation enables monotonic yet flexible sequence alignment, which is essential for processing free-flow, unsegmented civic speech.
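To make the collapse operator and the marginalisation concrete, the sketch below implements \(\mathscr {B}\) and a brute-force sum over all alignment paths for a toy emission table. The blank symbol `_`, the function names, and the dictionary-based emission format are assumptions for illustration. Exhaustive enumeration is exponential in the number of frames, so it is suitable only for tiny examples; practical CTC training uses a forward-backward dynamic programme instead.

```python
from itertools import product

BLANK = "_"  # assumed symbol for the CTC blank token


def collapse(path):
    """Collapse operator B: merge repeated tokens, then drop blanks."""
    out = []
    prev = None
    for tok in path:
        if tok != prev and tok != BLANK:
            out.append(tok)
        prev = tok
    return "".join(out)


def ctc_probability(emissions, target):
    """Sum the probability of every frame-level path that collapses to target.

    emissions: list with one dict per frame, mapping token -> probability.
    """
    vocab = list(emissions[0].keys())
    total = 0.0
    for path in product(vocab, repeat=len(emissions)):
        if collapse(path) == target:
            p = 1.0
            for t, tok in enumerate(path):
                p *= emissions[t][tok]
            total += p
    return total


# Two frames, uniform emissions over {"a", blank}.
toy = [{"a": 0.5, BLANK: 0.5}, {"a": 0.5, BLANK: 0.5}]
p_a = ctc_probability(toy, "a")  # paths: (a, a), (a, _), (_, a)
```

Note that a blank between two identical tokens preserves both: the path `a _ a` collapses to `aa`, whereas `a a` collapses to `a`.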

During inference, beam search decoding integrates acoustic model scores with optional language-model priors to generate the most probable text transcription. This decoding strategy enhances robustness in the presence of background noise, hesitations, or atypical pronunciation. The full speech-to-text procedure—including preprocessing, sequential encoding, probability aggregation, and decoding—is summarised in Algorithm 1, providing a formal computational perspective on the transcription module used throughout the multimodal grievance-processing pipeline.

Algorithm 1. Speech-to-text transcription using LSTM–RNN with CTC.

Fig. 3. RNN-CTC based speech transcription module used for converting Tamil/English voice complaints into structured text.

As illustrated in Fig. 3, the RNN-CTC module transcribes Tamil and English vocal inputs into structured text. Modern ASR architectures such as Whisper-small, wav2vec2-base, and Conformer-CTC were included in the pilot evaluation. Although these models achieve state-of-the-art performance on high-resource languages, their deployment footprint and inference latency make them unsuitable for real-time municipal microservices. As shown in Table 3, Whisper and wav2vec2 achieve lower WER under clean recording conditions, but they require 4–6\(\times\) more memory, 3–5\(\times\) higher inference time, and GPU acceleration to sustain acceptable throughput. Such requirements are incompatible with civic infrastructures that rely on CPU-only servers and must process large volumes of complaints concurrently.

By contrast, the proposed bi-LSTM CTC-RNN offers a favourable cost–latency profile. Although its WER is higher under both clean and noisy conditions, it maintains sub-40 ms inference latency on CPU-only deployments and performs competitively on dialect-rich Tamil speech, particularly under realistic mobile recording noise. These operational constraints make the CTC-RNN the only practically deployable ASR backbone for municipal grievance systems that must operate under strict resource limits while handling high-volume, multilingual voice submissions.

Zero-shot department classification via MobileBERT

Once a complaint is available in textual form, either directly submitted or obtained through ASR, the next stage involves department assignment. Rather than relying on supervised classifiers requiring municipality-specific labelled datasets, the framework adopts a zero-shot semantic matching approach based on MobileBERT embeddings. MobileBERT provides a favourable balance between contextual representation quality and computational efficiency, making it suitable for CPU-only microservice deployments where low latency and horizontal scalability are critical. Each complaint \(q\) is encoded into a contextual semantic vector \(z_q = f(q)\), while each department label \(\ell _j\) is projected into the same embedding space. Department prediction is formulated as a similarity-ranking problem, in which the department whose label embedding exhibits the highest cosine similarity with the complaint embedding is selected:

\[
\hat{d} = \arg \max _{j} \; \cos \big( z_q,\, f(\ell _j) \big).
\]

This formulation allows new or modified departmental categories to be added without retraining, thereby supporting evolving administrative taxonomies and heterogeneous municipal configurations. The end-to-end classification procedure—comprising text normalization, embedding generation, similarity computation, ranking, and final assignment—is outlined in Algorithm 2. This design ensures that routing decisions remain stable across short, informal, or code-mixed grievance texts while maintaining real-time throughput under high-volume civic workloads.
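The similarity-ranking step can be sketched independently of the encoder. In the fragment below, the three-dimensional vectors stand in for MobileBERT embeddings and are purely illustrative, as are the function names; only the cosine-similarity argmax reflects the procedure described above.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0.0 or norm_v == 0.0:
        return 0.0  # treat an all-zero vector as matching nothing
    return dot / (norm_u * norm_v)


def route(complaint_vec, label_vecs):
    """Return the best-matching department and the full score table."""
    scores = {name: cosine(complaint_vec, vec)
              for name, vec in label_vecs.items()}
    best = max(scores, key=scores.get)
    return best, scores


# Toy label embeddings (placeholders, not real MobileBERT outputs).
labels = {
    "Health": [1.0, 0.0, 0.0],
    "Sanitation": [0.0, 1.0, 0.0],
    "Transport": [0.0, 0.0, 1.0],
}
department, scores = route([0.1, 0.9, 0.2], labels)
```

Adding a department under this scheme amounts to adding one entry to `labels`; no model parameters change, which is the property the zero-shot formulation relies on.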

Algorithm 2. Zero-shot department classification via MobileBERT.

Algorithmic components overview

This section provides an integrated overview of the four core algorithmic components that collectively govern the system’s semantic routing, urgency modeling, speech transcription, and behavioral toxicity regulation. Each module contributes a distinct inference signal, and their interactions form the unified decision process detailed in earlier sections. The following summaries consolidate the underlying computational principles, model choices, and functional roles of each component.

CTC–RNN speech transcription

The speech-processing pipeline employs a bi-directional LSTM trained under the Connectionist Temporal Classification (CTC) objective. Raw waveforms undergo pre-emphasis, windowing, and MFCC extraction, yielding feature matrices \(X \in \mathbb {R}^{T \times F}\). The recurrent encoder produces frame-level emission probabilities over characters and a blank symbol, while CTC marginalizes over all valid alignments between acoustic frames and target transcriptions. This approach eliminates the need for frame-level labels and is well suited for noisy, dialect-rich civic speech. Beam-search decoding generates the final transcription \(\hat{y}\).
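The front-end steps named above (pre-emphasis, framing, and Hamming windowing) can be sketched with the standard library alone. The pre-emphasis coefficient of 0.97 and the frame and hop lengths are common defaults assumed here, not values reported in the text, and the MFCC computation itself (FFT, mel filterbank, DCT) is omitted.

```python
import math


def pre_emphasis(x, alpha=0.97):
    """y[n] = x[n] - alpha * x[n-1]; boosts high frequencies before framing."""
    if not x:
        return []
    return [x[0]] + [x[n] - alpha * x[n - 1] for n in range(1, len(x))]


def hamming(n_points):
    """Hamming window: w[n] = 0.54 - 0.46 * cos(2*pi*n / (N - 1))."""
    if n_points == 1:
        return [1.0]
    return [0.54 - 0.46 * math.cos(2.0 * math.pi * n / (n_points - 1))
            for n in range(n_points)]


def frame_signal(x, frame_len, hop):
    """Slice x into overlapping frames and apply the Hamming window to each."""
    window = hamming(frame_len)
    frames = []
    for start in range(0, len(x) - frame_len + 1, hop):
        frames.append([x[start + n] * window[n] for n in range(frame_len)])
    return frames
```

Each returned frame would then be passed to the spectral analysis that produces one row of the feature matrix \(X\).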

Zero-shot semantic routing via MobileBERT

Semantic classification is performed through a zero-shot embedding-based routing mechanism using MobileBERT. Complaints \(q\) and department labels \(\ell _j\) are encoded by the same encoder \(f\) into a shared representational space, and routing is achieved through maximal cosine similarity:

\[
\hat{d} = \arg \max _{j} \; \cos \big( f(q),\, f(\ell _j) \big).
\]

This formulation enables generalization to unseen departments without supervised retraining. MobileBERT is chosen for its balance of contextual expressiveness and low-latency CPU inference, allowing efficient deployment in municipal microservice environments.

Sentiment-derived urgency estimation

Urgency is estimated using a sentiment-derived continuous priority score. The VADER sentiment engine computes a compound polarity value \(s \in [-1,1]\), which is linearly normalized:

This priority score directly modulates escalation timers and administrative deadlines. VADER is selected for its stability on short, noisy, or code-mixed civic text, where transformer-based sentiment models often exhibit polarity drift or over-smoothing. The urgency module thus provides interpretable affective cues for time-sensitive grievance handling.

Hybrid toxicity detection and behavioral penalties

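Toxicity detection integrates instantaneous linguistic toxicity scores with historical user behavior. A complaint \(g_i\) is flagged as abusive when either signal exceeds its threshold (written here as \(\tau _t\) and \(\tau _r\)):

\[
a_i = \begin{cases} 1, & t_i > \tau _t \ \text{or}\ r(H_u) > \tau _r, \\ 0, & \text{otherwise}, \end{cases}
\]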

where \(t_i\) is the toxicity score and \(r(H_u)\) captures repeated misuse. Persistent abuse triggers an exponential penalty:

with \(c(u)\) denoting prior offenses. This hybrid mechanism differentiates between isolated emotional expressions and systematic misuse, ensuring fairness while preserving administrative bandwidth.

Unified inference integration

The four algorithmic components jointly contribute to the unified decision function:

\[
(d_i,\, p_i,\, a_i) = \Phi \big( f(q_i),\, p_i,\, a_i,\, X_i,\, H_u \big),
\]

yielding the actionable triplet \((d_i,\, p_i,\, a_i)\). This formulation fuses semantic embeddings, affective cues, behavioral moderation, and speech-transcribed text into a coherent reasoning process. The integration ensures that routing, prioritization, and moderation are mutually consistent, addressing fragmentation commonly found in legacy grievance systems.

Fig. 4. MobileBERT-based semantic routing: complaint vectors are mapped against label embeddings for department inference.

Sentiment-based urgency estimation using VADER

The MobileBERT-driven semantic router used for department inference is shown in Fig. 4. Urgency varies substantially across civic submissions, with certain complaints indicating immediate risk (e.g., medical distress, safety hazards) or pronounced emotional intensity. To quantify such signals in an interpretable manner, the framework employs sentiment analysis using the VADER lexicon. VADER is well suited for short, informal, and emotionally expressive text—properties characteristic of real-world civic grievances that often deviate from standard grammatical structure. Given a complaint \(x\), VADER produces a compound sentiment score \(s \in [-1,1]\), which is mapped to a normalized urgency score

yielding a continuous priority value \(p \in [0,1]\). This transformation allows urgency to directly modulate escalation thresholds and service-level timers within the governance module. High-urgency cases therefore acquire shorter internal deadlines and trigger earlier administrative intervention. The urgency computation pipeline includes text normalization, negation handling, polarity extraction, and optional lexical emphasis adjustments for terms explicitly indicating emergency conditions. The operational procedure is summarized in Algorithm 3.
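A minimal sketch of the normalisation and emphasis steps follows. It operates on a compound score that is assumed to have been produced by VADER already. The direction of the linear map is an assumption: the default uses \((s+1)/2\), while `negative_is_urgent=True` uses \((1-s)/2\) for deployments that treat strongly negative sentiment as more urgent. The emergency term list and the boost size are hypothetical.

```python
# Hypothetical emergency vocabulary; a real deployment would curate its own.
EMERGENCY_TERMS = {"fire", "accident", "collapse", "emergency"}


def urgency_from_compound(s, negative_is_urgent=False):
    """Linearly map a compound score s in [-1, 1] to a priority p in [0, 1]."""
    if not -1.0 <= s <= 1.0:
        raise ValueError("compound score must lie in [-1, 1]")
    if negative_is_urgent:
        return (1.0 - s) / 2.0
    return (s + 1.0) / 2.0


def apply_emphasis(text, p, boost=0.2):
    """Raise the priority when an explicit emergency term is present,
    clipping the result so that it stays within [0, 1]."""
    words = set(text.lower().split())
    if words & EMERGENCY_TERMS:
        p = min(1.0, p + boost)
    return p
```

Whichever direction is chosen, both maps send the interval \([-1,1]\) onto \([0,1]\) and place a neutral score at 0.5.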

Algorithm 3. Urgency estimation via VADER sentiment analysis.

Toxicity detection and abuse mitigation

Abusive or toxic interactions within civic grievance systems compromise fairness, inclusivity, and service reliability. Unlike commercial platforms, civic environments must balance deterrence of harmful expression with tolerance for legitimate emotional distress, since complainants often use strong language under conditions of fear, urgency, or frustration. To address this requirement, the framework adopts a hybrid toxicity detection mechanism that integrates instantaneous linguistic toxicity estimation with longitudinal user-behaviour analysis.

Each grievance \(g_i\) receives an instantaneous toxicity score \(t_i \in [0,1]\) using the Perspective API, a transformer-based ensemble that identifies harmful constructs such as insults, threats, and hate speech. Instantaneous toxicity alone, however, is insufficient for civic workflows because isolated expressions of distress do not reliably indicate malicious intent. The system therefore incorporates a behavioural profile \(H_u\) for each user \(u\), comprising timestamps, toxicity flags, and prior moderation outcomes. A repetition function \(r(H_u)\) evaluates temporal frequency and severity of past incidents, enabling detection of systematic misuse even when individual messages appear only moderately toxic.

Compared with standalone text-based toxicity classifiers, this hybrid formulation provides two major advantages: (i) context-aware moderation, in which behavioural patterns modulate the interpretation of linguistic toxicity; and (ii) longitudinal robustness, where repeated misuse is identified even when successive complaints individually fall below toxicity thresholds.

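A complaint is flagged as abusive when either the instantaneous score or the behavioural repetition score exceeds its predefined threshold, denoted here by \(\tau _t\) and \(\tau _r\) respectively:

\[
a_i = \begin{cases} 1, & t_i > \tau _t \ \text{or}\ r(H_u) > \tau _r, \\ 0, & \text{otherwise}. \end{cases}
\]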

To regulate persistent misuse, the system applies an exponential backoff penalty that increases the restriction duration geometrically with each subsequent offence. The penalty value is computed as

where \(c(u)\) denotes the cumulative offence count within a defined window. This mechanism discourages repeated abuse without imposing permanent exclusion, maintains proportionality between behaviour and corrective action, and supports eventual reintegration for users whose interaction patterns improve.
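The flagging rule and the exponential backoff can be sketched as follows. All numeric values (the thresholds 0.8 and 3, the 60-second base, the doubling factor, and the one-day cap) are illustrative assumptions, as are the names; the text specifies only an either-threshold rule and a penalty that grows geometrically with the windowed offence count \(c(u)\).

```python
from dataclasses import dataclass, field


@dataclass
class History:
    """Behavioural profile H_u reduced to offence timestamps (seconds)."""
    offence_times: list = field(default_factory=list)


def recent_offences(history, now, window):
    """c(u): number of offences that fall inside the look-back window."""
    return sum(1 for t in history.offence_times if 0 <= now - t <= window)


def is_abusive(toxicity, repetition, tau_t=0.8, tau_r=3):
    """Flag when either the toxicity score or the repetition count
    exceeds its (assumed) threshold."""
    return toxicity > tau_t or repetition > tau_r


def penalty_seconds(offence_count, base=60.0, factor=2.0, cap=86400.0):
    """Restriction duration: base * factor**(c - 1), capped; zero if no offence."""
    if offence_count <= 0:
        return 0.0
    return min(cap, base * factor ** (offence_count - 1))
```

Because `recent_offences` counts only events inside the window, a user who stops offending sees the count, and hence the penalty, fall back over time, which matches the recovery behaviour described above.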

Algorithm 4 details the evaluation and penalty-enforcement workflow, including toxicity scoring, behavioural profiling, threshold checks, exponential penalty updates, and recovery logic. This structured formulation improves reproducibility and aligns with emerging standards in trustworthy and accountable AI for public-service infrastructures.

Algorithm 4. Hybrid toxicity detection and exponential penalty enforcement.

To consolidate the behaviour of the moderation module: the abuse flag follows the threshold rule stated above, and the penalty duration follows the same exponential formulation introduced earlier in Eq. (6), which is not redefined here to avoid redundancy. Experimental evaluation on the curated multimodal grievance dataset demonstrates the effectiveness of this hybrid moderation strategy: the system attains 96.2% precision and 91.8% recall in toxicity detection and correctly identifies 89.3% of repeat offenders. In contrast to naïve toxicity-only filters, this dual-mode method reduces false positives arising from legitimate but emotionally charged complaints, thereby maintaining equitable treatment while discouraging systematic abuse, an essential requirement in public grievance infrastructures where transparency and trust must be preserved.

Complaint routing, tracking, and storage

Once grievances have been semantically classified, urgency-weighted, and screened for abuse, they are forwarded to the appropriate administrative departments through a dedicated routing engine. This component forms the operational bridge between the intelligence layer and real-world governance workflows. To support high concurrency and real-time responsiveness, routing is implemented as a set of RESTful microservices that interface with existing departmental systems and legacy governance portals. Each routed complaint is encapsulated within a standardized JSON schema containing unique identifiers, metadata fields, department labels, timestamps, urgency indicators, and moderation flags.

Real-time tracking is supported through a WebSocket-based event-streaming layer. Upon submission, the system assigns a globally unique complaint identifier (\(CID\)), records initial metadata, and initiates a continuous event stream. Status transitions—Received, Assigned, In Progress, Escalated, and Resolved—are pushed immediately to both users and administrators. This persistent two-way communication channel improves transparency by allowing citizens to monitor the full lifecycle of their petitions and reduces uncertainty associated with administrative latency.

Routing accuracy is further enhanced by an adaptive re-routing mechanism. Complaints that exceed their assigned service-level deadlines are automatically escalated, reprioritized, and redirected to supervisory or alternative departments. This behaviour is governed by a state-transition automaton that integrates urgency \(p_i\), toxicity status \(a_i\), and elapsed time thresholds. The explicit coupling of semantic classification, affect-driven prioritization, and time-aware escalation yields improvements over traditional civic portals, which typically rely on static or manual routing procedures.
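The status lifecycle and the event payload can be sketched as a small state machine. The five status names come from the text; the permitted transitions, the JSON field names, and the function names are assumptions made for illustration, since the actual automaton and schema are not reproduced here.

```python
import json

# Assumed transition table over the five statuses named in the text.
TRANSITIONS = {
    "Received": {"Assigned"},
    "Assigned": {"In Progress", "Escalated"},
    "In Progress": {"Resolved", "Escalated"},
    "Escalated": {"Assigned", "In Progress", "Resolved"},
    "Resolved": set(),
}


def advance(state, new_state):
    """Return new_state if the move is allowed, otherwise raise ValueError."""
    if new_state not in TRANSITIONS[state]:
        raise ValueError(f"illegal transition: {state} -> {new_state}")
    return new_state


def status_event(cid, state, urgency, toxic):
    """Serialise one status update as a JSON string (hypothetical schema)."""
    return json.dumps(
        {"cid": cid, "status": state, "urgency": urgency, "toxic": toxic},
        sort_keys=True,
    )
```

A tracking service built along these lines would call `advance` on every update and push the string returned by `status_event` over the open WebSocket channel.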

Storage and security

The data-management layer conforms to strict security and auditability requirements associated with public governance environments. Structured metadata—including user identifiers, timestamps, department codes, escalation logs, and complaint summaries—is stored in a relational database:

where \(u\) denotes user identity, \(c\) complaint content, \(t\) timestamps, and \(f\) feedback or status indicators. Unstructured artefacts such as audio recordings, documents, and images are protected using AES-256 encryption:

AES-256 is selected for its NIST-endorsed security guarantees, throughput efficiency, and compatibility with distributed object storage.

Role-Based Access Control (RBAC) enforces least-privilege permissions, ensuring that sensitive records are accessible only to authorized personnel. To strengthen access governance, the system design aligns with recent developments in context-aware Attribute-Based Access Control (ABAC). Prior work by Lee et al.35 demonstrates that combining environmental context with dynamic policy enforcement improves security robustness in public-service infrastructures; similar principles are incorporated here. All access and modification events are recorded in a tamper-evident audit trail, ensuring traceability, forensic accountability, and compliance with public-data protection mandates36.
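One common way to make an audit trail tamper-evident is hash chaining, where each entry stores the digest of its predecessor. The sketch below illustrates that general technique; the text does not state which mechanism the platform uses, and the field names and genesis value are assumptions.

```python
import hashlib
import json

GENESIS = "0" * 64  # assumed placeholder digest for the first entry
FIELDS = ("actor", "action", "target", "prev")


def _digest(body):
    """SHA-256 over a canonical JSON encoding of the entry body."""
    encoded = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


def append_entry(log, actor, action, target):
    """Append an entry whose hash covers its content and the previous hash."""
    prev = log[-1]["hash"] if log else GENESIS
    body = {"actor": actor, "action": action, "target": target, "prev": prev}
    log.append({**body, "hash": _digest(body)})
    return log


def verify(log):
    """Recompute every link; any edited or reordered entry breaks the chain."""
    prev = GENESIS
    for entry in log:
        body = {k: entry[k] for k in FIELDS}
        if entry["prev"] != prev or _digest(body) != entry["hash"]:
            return False
        prev = entry["hash"]
    return True
```

Changing any recorded field after the fact alters that entry's digest, so `verify` fails unless every later hash is also rewritten, which is what makes silent modification detectable.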

Deployment environment

The platform operates as a containerized microservice architecture orchestrated through a Kubernetes cluster, providing scalability, fault tolerance, and high availability. Each functional component (speech recognition, semantic routing, urgency estimation, abuse detection, storage services, dashboards, and escalation logic) is deployed as an independent microservice, enabling auto-scaling during peak complaint hours, rolling updates without service interruption, and rapid recovery from node failures. Asynchronous inter-service communication is supported by RabbitMQ, ensuring message durability and reliable delivery under varying network loads.

Prometheus and Grafana provide real-time observability, enabling monitoring of throughput, latency, uptime, complaint trends, and escalation frequencies. Administrators can diagnose bottlenecks and failure modes via aggregated cluster-level metrics. Figure 2 illustrates the integration of each microservice into the end-to-end grievance pipeline, linking ASR-based transcription, MobileBERT-based classification, sentiment-driven urgency modelling, and behaviour-aware toxicity regulation.

Beyond backend infrastructure, the platform provides interactive dashboards for administrators and citizens. These dashboards visualize petition distributions, departmental performance indicators, resolution delays, escalation patterns, and sentiment-derived urgency trends. Additional analytics, such as spatial heatmaps, temporal clustering of grievances, and user behaviour summaries, support data-driven governance and improve institutional transparency.

Data collection

To evaluate the proposed multimodal grievance-redressal framework, a dataset of 1000 complaint instances was assembled over a period of 45 days. The collection focused on three high-volume municipal sectors—Health, Sanitation, and Transport—which collectively account for a substantial share of urban service requests. Data were sourced from (i) publicly accessible municipal dashboards, (ii) textual complaint logs from the CPGRAMS portal, and (iii) community submissions voluntarily provided through local governance collaboration forums.

The textual component consists of 821 unique complaints in structured form. Each entry was manually annotated by trained annotators with four metadata attributes: assigned department, urgency level (scaled to [0, 1]), resolution outcome (resolved, escalated, pending), and sentiment polarity. To introduce linguistic diversity, approximately 15% of samples were paraphrased using a controlled LLM-based augmentation pipeline, generating variants differing in tone, vocabulary richness, code-mixing intensity, sentence structure, and grammatical form while preserving semantic content. This procedure reflects the variability typically observed in multilingual Indian municipal portals.

The speech subset comprises 179 voice-based complaints recorded by 47 participants under informed consent. Audio was gathered with commodity smartphones across heterogeneous acoustic environments: quiet indoor settings (41.2%), crowded markets (32.8%), and public-transport contexts (26.0%). Recordings were 3–15 s in duration, transcribed, and manually validated for semantic fidelity. To enhance the robustness of the ASR module, 20% of the recordings were augmented with environmental noise (traffic, crowd chatter, wind) at signal-to-noise ratios ranging from 5 to 20 dB.
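The noise augmentation described above can be illustrated with a minimal sketch. It assumes that speech and noise are available as equal-rate lists of float samples; the function names `mix_at_snr` and `measured_snr_db` are hypothetical and are not taken from the study's codebase.

```python
import math

def mix_at_snr(speech, noise, snr_db):
    """Add `noise` to `speech` after scaling it to the requested SNR in dB."""
    if len(noise) < len(speech):
        raise ValueError("noise must be at least as long as speech")
    noise = noise[:len(speech)]
    p_speech = sum(s * s for s in speech) / len(speech)
    p_noise = sum(n * n for n in noise) / len(noise)
    if p_speech == 0.0 or p_noise == 0.0:
        raise ValueError("speech and noise must both be non-silent")
    # SNR_dB = 10 * log10(P_speech / P_noise), solved for the target noise power.
    target_p_noise = p_speech / (10.0 ** (snr_db / 10.0))
    gain = math.sqrt(target_p_noise / p_noise)
    return [s + gain * n for s, n in zip(speech, noise)]

def measured_snr_db(speech, mixed):
    """Recover the SNR of a mixture, given the clean speech it was built from."""
    p_speech = sum(s * s for s in speech) / len(speech)
    p_resid = sum((m - s) ** 2 for s, m in zip(speech, mixed)) / len(speech)
    return 10.0 * math.log10(p_speech / p_resid)
```

Drawing `snr_db` uniformly from the interval [5, 20] for each augmented recording would reproduce the stated range; the sampling distribution itself is an assumption, since the text specifies only the bounds.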

A controlled proportion of toxic or abusive content (7.4%) was included to enable rigorous evaluation of the hybrid abuse-detection subsystem. These samples comprised synthetically generated abusive expressions, sentiment-intense utterances, and frustration-laden speech, reflecting patterns documented in real-world civic portals. All contributors provided informed consent, and the data-collection protocol adhered to institutional ethical guidelines. Personally identifiable information (PII) was removed or masked prior to inclusion in the dataset.

Table 4 summarises the composition of the datasets used in this study. The resulting dataset captures real-world heterogeneity in complaint styles, code-mixed language patterns, urban acoustic environments, expressions of frustration, variability in perceived urgency, and the grammatical inconsistencies that commonly challenge civic grievance-redressal systems. The dataset was intentionally curated to ensure representativeness across linguistic, topical, and demographic axes. Samples were stratified by department, geographic region, complaint modality, and urgency level to mitigate class imbalance. The speech subset includes eleven dialectal variants of Tamil and multiple environmental noise conditions, thereby increasing ecological validity. Although the dataset comprises only 1000 complaints, its multimodal diversity and stratified construction provide reasonable coverage for assessing the generalizability of the unified civic grievance model, particularly in domains where real-world datasets are limited, sensitive, or proprietary.

Data preprocessing

A comprehensive preprocessing pipeline was applied to both textual and audio modalities to ensure consistency, linguistic cleanliness, and acoustic robustness prior to downstream analysis. The objectives were to standardize heterogeneous inputs, reduce noise-induced errors, preserve semantic and affective cues, and align the processed data with the requirements of the MobileBERT, VADER, and CTC-based ASR modules.

Text preprocessing

All textual complaints were normalized using a SpaCy-based workflow that included lowercasing, controlled punctuation handling, contraction expansion, whitespace normalization, and stop-word filtering. Informal symbols, emojis, and character elongations (e.g., “pleeease”, “heelllp”) were converted into standardized tokens. Named Entity Recognition (NER) was employed to identify service entities (e.g., “GH Hospital”, “Metro Bus”), which were mapped to canonical department categories through a curated ontology. A fuzzy string-matching module corrected partial or misspelled department names to ensure reliable semantic alignment under noisy user input. Polarity intensifiers and negation cues were retained to preserve their contribution to sentiment-driven urgency estimation.
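Two of the steps above, elongation handling and fuzzy correction of department names, can be sketched with the Python standard library alone. This is an illustrative stand-in rather than the SpaCy-based workflow used in the study; the collapse rule, the 0.7 similarity cutoff, and all function names are assumptions.

```python
import re
import difflib

# Canonical labels taken from the three sectors covered by the dataset.
CANONICAL_DEPARTMENTS = ["health", "sanitation", "transport"]

def squeeze_elongations(text):
    """Collapse any run of three or more identical characters to one."""
    return re.sub(r"(.)\1{2,}", r"\1", text)

def normalize(text):
    """Lowercase, squeeze elongations, and normalize whitespace."""
    text = squeeze_elongations(text.lower())
    return re.sub(r"\s+", " ", text).strip()

def match_department(name, cutoff=0.7):
    """Map a possibly misspelled department name to a canonical label.

    Returns None when no candidate reaches the (assumed) similarity cutoff.
    """
    hits = difflib.get_close_matches(
        name.lower().strip(), CANONICAL_DEPARTMENTS, n=1, cutoff=cutoff
    )
    return hits[0] if hits else None
```

The collapse rule is a heuristic: it maps "pleeease" to "please", but elongations that leave a doubled letter behind would still need a dictionary lookup or the fuzzy matcher to resolve.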

Speech preprocessing

Audio recordings were resampled to 16 kHz and processed through a Librosa-based cleaning pipeline comprising silence trimming, gain normalization, dereverberation, and pre-emphasis. Mel-Frequency Cepstral Coefficients (MFCCs) were extracted using 40 filters over 25 ms windows with 10 ms frame shifts and served as inputs to the CTC-based ASR model. Noise-augmented samples were incorporated to improve robustness to real-world urban acoustic conditions. All transcriptions generated by the ASR module were subsequently normalized and aligned using CTC posterior smoothing.
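The framing parameters above translate directly into sample counts: at 16 kHz, a 25 ms window spans 400 samples and a 10 ms shift spans 160 samples. The sketch below shows pre-emphasis and framing in plain Python. It does not reproduce the Librosa pipeline or the MFCC computation, and the pre-emphasis coefficient of 0.97 is a commonly used value assumed here, not one reported in the text.

```python
SAMPLE_RATE = 16_000
WIN = SAMPLE_RATE * 25 // 1000   # 25 ms window -> 400 samples
HOP = SAMPLE_RATE * 10 // 1000   # 10 ms shift  -> 160 samples

def pre_emphasis(signal, coeff=0.97):
    """First-order high-pass filter: y[t] = x[t] - coeff * x[t-1]."""
    if not signal:
        return []
    out = [signal[0]]
    for t in range(1, len(signal)):
        out.append(signal[t] - coeff * signal[t - 1])
    return out

def frame_signal(signal, win=WIN, hop=HOP):
    """Split a signal into overlapping frames, dropping any partial tail."""
    return [signal[s:s + win] for s in range(0, len(signal) - win + 1, hop)]
```

Under these settings, a one-second recording yields 98 full frames, so the 3–15 s recordings in the corpus correspond to roughly 300 to 1500 feature vectors each.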

Dataset partitioning

The corpus was divided into training (70%), validation (15%), and test (15%) subsets. Since the semantic routing module operates in zero-shot mode, MobileBERT-based classification was evaluated exclusively on the test set. The ASR model was trained using the Adam optimizer with early stopping and learning-rate warmup. Toxicity detection thresholds were calibrated using both real and synthetic abusive samples, and all abuse annotations were validated through a three-annotator consensus, yielding Krippendorff’s coefficient \(\alpha = 0.91\).
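A 70/15/15 partition that preserves label proportions can be sketched as follows. The text does not state how the split was implemented, so the per-label shuffling, the fixed seed, and the name `stratified_split` are assumptions made for illustration.

```python
import random
from collections import defaultdict

def stratified_split(records, key, fractions=(0.70, 0.15, 0.15), seed=13):
    """Split records into train/validation/test while stratifying on key(record)."""
    rng = random.Random(seed)            # fixed seed for a repeatable split
    by_label = defaultdict(list)
    for record in records:
        by_label[key(record)].append(record)

    train, val, test = [], [], []
    for label in sorted(by_label):       # sorted for deterministic ordering
        group = by_label[label]
        rng.shuffle(group)
        n_train = round(fractions[0] * len(group))
        n_val = round(fractions[1] * len(group))
        train += group[:n_train]
        val += group[n_train:n_train + n_val]
        test += group[n_train + n_val:]  # remainder goes to the test set
    return train, val, test
```

For example, `stratified_split(complaints, key=lambda c: c["department"])` would stratify by department, where the `"department"` field name is hypothetical.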

Quality assurance

The dataset underwent multiple QA procedures to ensure statistical reliability and representativeness. These included: (i) verification of class balance across departments, (ii) consistency checks for urgency annotations using Krippendorff’s \(\alpha\), (iii) integrity assessment of all text-normalization stages, (iv) signal-to-noise ratio (SNR) validation for audio recordings, and (v) inspection for potential bias in the synthetically augmented toxic samples. Together, these procedures ensure that the dataset reflects realistic civic grievance conditions while maintaining annotation quality.

As a complementary measure of inter-annotator reliability, Cohen’s \(\kappa\) was computed for both urgency (binarized at a threshold of 0.5) and toxicity labels across two independent annotators. The resulting scores, \(\kappa_{\text{urgency}} = 0.84\) and \(\kappa_{\text{toxicity}} = 0.79\), correspond to almost perfect and substantial agreement, respectively, on the conventional Landis and Koch scale and support the reliability of the annotation protocol.
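For reference, Cohen's \(\kappa\) compares the observed agreement \(p_o\) with the agreement \(p_e\) expected by chance: \(\kappa = (p_o - p_e)/(1 - p_e)\). The sketch below computes it for two annotators. Treating a score exactly equal to 0.5 as urgent is an assumption, since the text gives only the threshold value.

```python
from collections import Counter

def binarize(scores, threshold=0.5):
    """Map continuous urgency scores to 0/1 (>= threshold counts as urgent)."""
    return [int(s >= threshold) for s in scores]

def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators labelling the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("label lists must be non-empty and of equal length")
    n = len(labels_a)
    # Observed agreement: fraction of items with identical labels.
    p_o = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Chance agreement from each annotator's marginal label frequencies.
    count_a, count_b = Counter(labels_a), Counter(labels_b)
    p_e = sum(count_a[l] * count_b[l] for l in set(count_a) | set(count_b)) / (n * n)
    if p_e == 1.0:
        return 1.0  # both annotators used a single identical label throughout
    return (p_o - p_e) / (1.0 - p_e)
```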

Experimental setup and evaluation

The performance, scalability, and operational reliability of the proposed multimodal grievance-redressal framework were evaluated through a series of controlled experiments conducted in a cloud-native microservice environment. All experiments were executed on an AWS EC2 compute instance equipped with 8 vCPUs, 32 GB RAM, and an attached NVIDIA T4 GPU to support accelerated ASR training and inference.

CTC–RNN model hyperparameters

The ASR model consisted of a two-layer bi-directional LSTM with 512 hidden units per direction (1024 after concatenation), followed by a fully connected output layer projecting to 43 Tamil character classes plus the CTC blank symbol. Dropout of 0.2 was applied between recurrent layers. Beam-search decoding used a beam width of 10 with log-probability pruning at \(10^{-3}\). All MFCC features were mean–variance normalized prior to ingestion. ASR training employed PyTorch 2.1.2 with warp-ctc for efficient CTC computation.
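The CTC output layer emits one distribution per frame over the character classes plus the blank symbol, and decoding collapses repeated labels before removing blanks. The sketch below uses greedy (best-path) decoding, which is equivalent to a beam width of 1. It is a deliberately simpler stand-in for the width-10 beam search described above, and the three-symbol alphabet in the usage note is a toy example rather than the Tamil character set.

```python
def ctc_greedy_decode(frame_log_probs, alphabet, blank=0):
    """Best-path CTC decoding.

    frame_log_probs: list of per-frame score lists, one score per class.
    alphabet: list mapping class index to symbol (index `blank` is unused).
    """
    # Step 1: pick the highest-scoring class in every frame.
    best_path = [max(range(len(f)), key=f.__getitem__) for f in frame_log_probs]
    # Step 2: merge consecutive repeats, then drop blanks.
    decoded = []
    previous = None
    for idx in best_path:
        if idx != previous and idx != blank:
            decoded.append(alphabet[idx])
        previous = idx
    return "".join(decoded)
```

With `alphabet = ["<blank>", "a", "b"]`, a best path of `1, 1, 0, 1, 2, 2, 0` decodes to `"aab"`: the blank between the two runs of class 1 is what allows a genuinely doubled character to survive the merge step.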

To ensure full reproducibility, all model configurations, hyperparameters, and software versions were explicitly fixed. The implementation environment used Python 3.10, PyTorch 2.1 for neural components, HuggingFace Transformers 4.36 for MobileBERT inference, and TensorFlow 2.11 for auxiliary preprocessing utilities. Docker images were built on nvidia/cuda:12.0-runtime with pinned dependencies to guarantee deterministic execution across machines. The operating system was Ubuntu 20.04 LTS. All microservices—including ASR, MobileBERT inference, sentiment scoring, toxicity detection, routing, and dashboard modules—were containerized via Docker and orchestrated using Kubernetes. This setup enabled reproducible experimentation, automated scaling under simulated peak loads, and fine-grained measurement of inter-service latency in a realistic deployment environment.

A curated multimodal dataset of 1000 grievance records served as the primary corpus for training and evaluation of the proposed AI-driven civic grievance-redressal framework. The dataset spans three high-demand public-service departments—Health, Sanitation, and Transport—and reflects realistic patterns of short-form, code-mixed, and emotionally charged submissions observed in municipal systems. All complaints were collected through two channels: (i) structured submissions obtained from bilingual (Tamil–English) digital forms, and (ii) publicly available textual logs from municipal grievance portals such as CPGRAMS. Although the framework is designed for multilingual deployment, the collected dataset itself consists of Tamil and English content due to the linguistic profile of the participating regions.

To support model development, the dataset was partitioned into training, validation, and evaluation subsets following a 70%–15%–15% split. Only the ASR and auxiliary preprocessing models were trained using these splits; MobileBERT-based department classification and VADER-based urgency estimation were evaluated solely on the held-out test set because both operate in zero-shot mode and do not require supervised fine-tuning. Synthetic augmentations were applied exclusively to the training subset to enhance model robustness without contaminating the evaluation distribution. Text augmentations consisted of controlled paraphrases and code-mixing variants generated using a constrained LLM pipeline, while speech augmentations were produced using noise-mixing and time-shift transformations to reflect urban acoustic conditions.

The speech portion of the corpus contains 179 voice-based complaints recorded at 16 kHz, primarily in Tamil with occasional English code-mixing. These recordings were manually transcribed and validated before use. To increase ASR resilience, 20% of the audio samples in the training set were augmented with background noise (traffic, crowd chatter, wind) at signal-to-noise ratios between 5 and 20 dB. No synthetic TTS audio was included in the evaluation subset; any synthesized speech was restricted to training augmentation to ensure that performance metrics reflect real-world conditions.

The ASR model was trained on a dedicated 60-h Tamil speech corpus collected from multiple dialect groups (Madurai, Coimbatore, Tirunelveli, Erode, and Chennai). This corpus was used solely for improving the speech-recognition backbone and did not contribute to department or sentiment annotations. Training proceeded for 30 epochs using the Adam optimizer with an initial learning rate of \(1\times 10^{-3}\), with early stopping triggered when validation WER failed to improve over five epochs. WER was computed according to standard character-level alignment procedures.
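The stopping rule above (at most 30 epochs, halting after five consecutive epochs without a validation-WER improvement) can be expressed as a small helper. The function name and the choice to require a strict improvement are assumptions; the text does not specify a minimum improvement margin.

```python
def early_stopping_epoch(val_wer_per_epoch, patience=5, max_epochs=30):
    """Return (stop_epoch, best_epoch), both 1-indexed.

    Training stops once `patience` consecutive epochs fail to lower the
    best validation WER seen so far, or when `max_epochs` is reached.
    """
    best_wer = float("inf")
    best_epoch = None
    stale = 0
    last_epoch = 0
    for epoch, wer in enumerate(val_wer_per_epoch[:max_epochs], start=1):
        last_epoch = epoch
        if wer < best_wer:          # strict improvement resets the counter
            best_wer, best_epoch, stale = wer, epoch, 0
        else:
            stale += 1
            if stale >= patience:
                break
    return last_epoch, best_epoch
```

In practice the checkpoint from `best_epoch` would be the one retained for evaluation, although the text does not state which checkpoint was used.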

To ensure reproducibility, all neural components were implemented using Python 3.10, PyTorch 2.1 for ASR and auxiliary models, and HuggingFace Transformers 4.36 for MobileBERT embedding inference. Docker-based containerization with pinned dependencies and Kubernetes orchestration ensured deterministic execution of each microservice during experimentation and scalability testing.

The word error rate (WER) is defined as

\[ \mathrm{WER} = \frac{S + D + I}{N}, \]

where \(S\), \(D\), and \(I\) denote the number of substitutions, deletions, and insertions, respectively, and \(N\) is the total number of reference words. This formulation is standard for ASR benchmarking, particularly in low-resource linguistic settings.
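The error counts \(S\), \(D\), and \(I\) are obtained from a minimum-edit-distance alignment between the reference and the hypothesis. A minimal word-level sketch follows; whitespace tokenization is an assumption, and the function name `wer` is illustrative.

```python
def wer(reference, hypothesis):
    """Word error rate: (S + D + I) / N via Levenshtein distance over words."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # prev[j] holds the edit distance between the reference prefix processed
    # so far and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        cur = [i]
        for j, h in enumerate(hyp, start=1):
            cur.append(min(
                prev[j] + 1,             # deletion of a reference word
                cur[j - 1] + 1,          # insertion of a hypothesis word
                prev[j - 1] + (r != h),  # substitution or match
            ))
        prev = cur
    return prev[-1] / len(ref)
```

Because insertions are counted against the reference length, the value can exceed 1.0 when the hypothesis is much longer than the reference.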

The zero-shot classification module employed MobileBERT, which operated without supervised fine-tuning on department labels; only unlabeled grievance embeddings were used during pre-processing to ensure domain alignment. The sentiment pipeline used VADER, while toxicity detection was calibrated using 74 synthetic toxic samples and 126 real abusive comments extracted from municipal portals. Annotators assigned toxicity polarity labels to each instance, resolving disagreements through majority voting for categorical labels and averaging for continuous scores. Inter-annotator consistency, measured using Krippendorff’s alpha, yielded \(\alpha = 0.91\), indicating strong reliability.
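The disagreement-resolution rule above (majority vote for categorical labels, averaging for continuous scores) can be sketched as follows. Returning `None` on a tied vote so that the item can be adjudicated separately is an assumption; the text does not describe how ties were handled.

```python
from collections import Counter
from statistics import mean

def majority_label(labels):
    """Return the most frequent label, or None when the top two counts tie."""
    if not labels:
        raise ValueError("at least one label is required")
    ranked = Counter(labels).most_common()
    if len(ranked) > 1 and ranked[0][1] == ranked[1][1]:
        return None  # tie: flag the item for adjudication (assumed policy)
    return ranked[0][0]

def mean_score(scores):
    """Average continuous annotator scores for one item."""
    return mean(scores)
```

With three annotators and a binary toxicity label a tie cannot occur, so the `None` branch matters only when the number of annotators is even or when more than two categories are in use.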