Abstract

Rapid and accurate prediction of thickness distribution remains a critical challenge for real-time process optimization in superplastic forming (SPF). Conventional prediction methods based on finite element analysis (FEA) are limited by their computational cost and their complete dependence on precisely defined boundary conditions, rendering them unsuitable for real-time control systems. This study proposes a mesh-informed neural network surrogate model based on the particle swarm optimized back propagation (PSO-BP) algorithm to predict the thickness distribution of SPF parts with different geometric feature parameters. A geometric fitness function based on SPF part features is proposed to reduce the large local errors in round-corner areas. Compared with the standard PSO-BP neural network, the improved algorithm reduces the mean absolute percentage error of the predictions from 1.3% to 0.8%, a reduction of approximately 38.5%. The thickness nephogram of Ti-6Al-4V box-shaped parts is predicted within 0.5 s, with an average deviation from the finite element simulation results of less than 1%. This computational advancement enables closed-loop process control by bridging the temporal gap between simulation-based optimization and actual manufacturing cycle times. The developed system shows significant potential for in-process quality monitoring and dynamic parameter adjustment in industrial SPF applications.


Introduction

Superplastic forming (SPF) is a material forming technology that exploits the extremely large elongations of polycrystalline materials (e.g., 1200% in titanium alloys1) under specific conditions of high temperature and low strain rate (typically 10⁻⁵ to 10⁻³ s⁻¹)2. It has been widely used in the aviation and aerospace3, automotive4 and rail transportation5 fields because of its ability to form complex structures with high precision at low cost6. In the SPF process, the metal sheet is clamped by the blank holder and bulged under pressurized gas. Because the clamped sheet is not drawn into the die, the thickness of SPF parts is unevenly distributed, and the thinning effect depends on the part structure, the mold design, and the stress and strain fields during processing7. Rapid and accurate prediction of the thickness distribution of SPF parts is therefore important for on-site process optimization and intelligent equipment control.

Finite element analysis (FEA) has been widely used in the development and optimization of SPF processes8,9 because of its high prediction accuracy for titanium alloys10, aluminum alloys11, magnesium alloys12 and other material systems13. However, FEA relies on the numerical solution of partial differential equations, which is time-consuming and computationally expensive, making it almost impossible to couple the prediction results with the control system for in-process regulation. Moreover, the prediction accuracy of FEA depends on accurate physical models and boundary conditions, which makes it difficult to transfer predictions to other conditions, even when the differences are small.

Data-driven machine learning (ML)14,15 methods, by contrast, do not rely on accurate physical models and are well suited to rapidly predicting part performance from unknown and variable input data16. Such models are often referred to as surrogate models or metamodels. Recently, progress has been made in applying FEA-based ML algorithms, including artificial neural networks (ANN)17, support vector machines (SVM)18, regression trees19 and random forests20, to prediction in metal forming. These ML methods provide rapid results in sheet metal constitutive modelling21, prediction of forming results22, process parameter optimization23, process energy estimation24 and defect prediction25.

Among ML algorithms, neural networks show better performance on problems with strong nonlinearities and multiple parameters26, such as hot forming and cold-die quenching (HFQ)27, incremental sheet metal forming28, air-bending of sheet metal29 and cold roll-forming30. In our group's preliminary study31, a particle swarm optimized back propagation (PSO-BP) algorithm was developed to predict the thickness distribution of SPF parts, and the PSO-BP neural network was more accurate than the conventional BP neural network. Although these works achieved relatively low mean absolute errors, significant local deviations remained in the predicted results. These deviations usually occurred in areas with large curvature gradients, which limits their engineering application.

The purpose of this study is to construct a surrogate model that predicts the thickness distribution of SPF parts with high accuracy and short prediction time and that can be transferred to different geometric features. The surrogate model is realized by a mesh-informed PSO-BP neural network based on the geometric features of the parts and trained on a dataset generated by batch FEA simulations. Based on an analysis of the error distribution, a geometry fitness function was added to the PSO algorithm to reduce the large local prediction errors. As a result, the accuracy of thickness prediction in round-corner areas has been significantly improved. The surrogate model predicts in less than 0.5 s on a commercial PC, showing strong potential for in-process quality monitoring and dynamic parameter adjustment in industrial SPF applications.

Prediction modeling

Problem statement

SPF is a high-temperature bulging process driven by gas pressure, in which the part acquires its geometry through mold constraints. During the SPF process (Fig. 1), the metal sheet thins, and the thickness distribution is predominantly influenced by the depth-to-diameter ratio of the deformed area together with various geometric features, including round-corner radii and local curvature distributions.

Schematic diagram of SPF process and its thinning effect.

Box-shaped components are a class of SPF parts that are commonly encountered and widely used in engineering applications because of their geometric representativeness. The geometric parameters, including length, width, depth and corner radius, are the critical factors affecting the thickness distribution of SPF parts. In this paper, a Ti-6Al-4V box-shaped part with side length l = 100 mm and initial sheet thickness t = 1.5 mm was selected as the study object for thickness distribution prediction.

Based on the characteristics of box-shaped parts, the geometric parameters height (H), entrance radius (R1), bottom radius (R2) and corner radius (R3) are taken as the input variables. According to the design requirements and forming limits of Ti-6Al-4V SPF parts32, the ranges of R1, R2, R3 and H are listed in Table 1.

Dataset and simulation model

Latin hypercube sampling over the parameter space in Table 1 was used to select 1,000 different box-shaped geometries. For each geometry, the SPF process was simulated with the FEA software MSC.MARC/Mentat. The Ti-6Al-4V sheet was discretized with 4-node quadrilateral bilinear thick-shell elements and deformed at a fixed strain rate under face loads acting perpendicular to the surface. A Coulomb friction model with a friction coefficient of 0.2 was used to describe the contact between the part and the mold33. Because the SPF process takes place at high temperatures and low strain rates with large deformations and almost no springback, a rigid-plastic model was used to describe the SPF characteristics of the Ti-6Al-4V alloy. The stress-strain rate relationship was described by the Backofen constitutive equation, Eq. (1), based on the mechanism of grain boundary sliding (GBS)34,35:

\(\sigma =K{\dot{\epsilon}}^{m}\)   (1)

where \(K\), \(\dot{\epsilon}\) and \(m\) are the material coefficient, the strain rate and the strain rate sensitivity coefficient, respectively, and \(\sigma\) is the flow stress.

Based on our group's preliminary experimental results36, the Ti-6Al-4V alloy exhibited its optimal superplastic performance, an elongation of about 900%, at a temperature of 875 °C and a strain rate of 0.0003 s⁻¹. Fitting Eq. (1) to these results gives K = 497.24 and m = 0.715, which are used in this paper.
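Eq. (1) is linear in log-log coordinates (ln σ = ln K + m ln ε̇), so K and m can be estimated by ordinary least squares from flow-stress measurements taken at several strain rates. The sketch below assumes such (strain rate, stress) pairs are available; the exact fitting procedure behind K = 497.24 and m = 0.715 is not described here, and the demo uses synthetic, noise-free data only to check that the constants are recovered.

```python
import numpy as np

def backofen_stress(strain_rate, K, m):
    """Backofen law, Eq. (1): flow stress sigma = K * strain_rate**m."""
    return K * np.asarray(strain_rate, dtype=float) ** m

def fit_backofen(strain_rate, stress):
    """Estimate K and m by linear least squares in log-log space,
    using ln(sigma) = ln(K) + m * ln(strain_rate)."""
    log_rate = np.log(np.asarray(strain_rate, dtype=float))
    log_stress = np.log(np.asarray(stress, dtype=float))
    m, log_K = np.polyfit(log_rate, log_stress, deg=1)  # slope, intercept
    return float(np.exp(log_K)), float(m)

# Self-check on synthetic, noise-free data generated from the reported constants.
rates = np.array([1e-4, 3e-4, 1e-3])
K_fit, m_fit = fit_backofen(rates, backofen_stress(rates, K=497.24, m=0.715))
print(f"K = {K_fit:.2f}, m = {m_fit:.3f}")  # K = 497.24, m = 0.715
```

At least two distinct strain rates are needed to separate K from m; with noisy experimental data, more rates give a more stable slope.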

The positions and thicknesses of all nodes in each simulation result file were exported with an in-house Python script. A dataset was then built with the geometric parameters and node positions as inputs and the corresponding node thicknesses as outputs.
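A minimal sketch of the dataset pipeline, under several assumptions: a plain NumPy Latin hypercube sampler (SciPy's `scipy.stats.qmc.LatinHypercube` is a library alternative), HYPOTHETICAL parameter bounds standing in for Table 1, 3D node coordinates (x, y, z), and a 90/10 train/test split made at the geometry level so that all nodes of one part stay on the same side. The function names are illustrative, not the authors' script.

```python
import numpy as np

def latin_hypercube(n_samples, bounds, rng):
    """Basic Latin hypercube sampling: each dimension is split into n_samples
    equal strata, one point is drawn per stratum, and the strata are permuted
    independently per dimension."""
    bounds = np.asarray(bounds, dtype=float)          # shape (n_dims, 2): [low, high]
    n_dims = bounds.shape[0]
    u = (np.arange(n_samples)[:, None] + rng.random((n_samples, n_dims))) / n_samples
    for d in range(n_dims):
        u[:, d] = rng.permutation(u[:, d])
    low, high = bounds[:, 0], bounds[:, 1]
    return low + u * (high - low)

def build_rows(geom_params, node_xyz, node_thickness):
    """One row per mesh node: inputs [H, R1, R2, R3, x, y, z] (assumed layout),
    output is that node's thickness."""
    n_nodes = node_xyz.shape[0]
    X = np.hstack([np.tile(geom_params, (n_nodes, 1)), node_xyz])
    return X, np.asarray(node_thickness, dtype=float).reshape(-1, 1)

def split_by_geometry(n_geometries, train_fraction, rng):
    """Random split of geometry indices (not of individual nodes)."""
    ids = rng.permutation(n_geometries)
    n_train = int(round(train_fraction * n_geometries))
    return ids[:n_train], ids[n_train:]

rng = np.random.default_rng(0)
# HYPOTHETICAL bounds for [H, R1, R2, R3] in mm; the real ranges are in Table 1.
bounds = [[20.0, 50.0], [2.0, 15.0], [2.0, 15.0], [5.0, 25.0]]
geoms = latin_hypercube(1000, bounds, rng)
train_ids, test_ids = split_by_geometry(len(geoms), 0.9, rng)
```

Splitting by geometry rather than by node keeps the test set made of genuinely unseen parts, which matches evaluating on held-out geometries.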

Neural network architecture and training

Neural network architecture

A back propagation neural network (BPNN)37,38 is a multi-layer feed-forward neural network trained with the error back propagation algorithm and has strong nonlinear mapping capability. A mesh-informed BPNN with two hidden layers was constructed as the thickness prediction surrogate model. The number of neurons in each hidden layer was varied from 1 to 16, each configuration was trained individually, and the mean squared error was used as the evaluation metric31. The architecture with the highest prediction accuracy is shown in Fig. 2. The hidden layers use the hyperbolic tangent activation function, and the output layer uses a linear activation function.

The input layer neurons represent the geometric parameters of the SPF part and the position of mesh nodes, while the output layer neurons represent the thicknesses of the mesh nodes.

The input x and output y of each neuron can be described as:

where \({x}_{i}\) is the input signal to the neuron, \({w}_{ij}\) is the weight, \({b}_{i}\) is the bias, \({n}_{p}\) is the number of neurons in the previous layer of the neural network and f is the hyperbolic tangent activation function.

The structure of mesh-informed BPNN.
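To make the mesh-informed mapping concrete, the sketch below evaluates a two-hidden-layer network of the kind described above on a batch of mesh nodes. It assumes a 7-value input per node ([H, R1, R2, R3] plus x, y, z node coordinates) and HYPOTHETICAL hidden sizes of 12 and 10; the actual input layout and selected layer sizes are those in Fig. 2. All weights and biases live in one flat vector, the form a PSO particle position takes.

```python
import numpy as np

# Assumed layout: 7 inputs, two tanh hidden layers (sizes are placeholders),
# one linear output neuron for node thickness.
LAYER_SIZES = (7, 12, 10, 1)

def n_params(sizes=LAYER_SIZES):
    """Total number of weights and biases, i.e. the dimension of one particle."""
    return sum(a * b + b for a, b in zip(sizes[:-1], sizes[1:]))

def unpack(theta, sizes=LAYER_SIZES):
    """Split a flat parameter vector into per-layer (W, b) pairs."""
    theta = np.asarray(theta, dtype=float)
    if theta.size != n_params(sizes):
        raise ValueError("parameter vector has the wrong length")
    layers, start = [], 0
    for a, b in zip(sizes[:-1], sizes[1:]):
        W = theta[start:start + a * b].reshape(a, b)
        start += a * b
        bias = theta[start:start + b]
        start += b
        layers.append((W, bias))
    return layers

def forward(theta, X, sizes=LAYER_SIZES):
    """Each neuron computes f(sum_i w_ij * x_i + b_j): tanh in the hidden
    layers, identity (linear) in the output layer."""
    layers = unpack(theta, sizes)
    h = np.asarray(X, dtype=float)
    for W, bias in layers[:-1]:
        h = np.tanh(h @ W + bias)
    W, bias = layers[-1]
    return h @ W + bias  # predicted thickness, one row per node

rng = np.random.default_rng(0)
theta = rng.normal(scale=0.1, size=n_params())  # one candidate parameter set
X_nodes = rng.uniform(size=(1000, 7))           # 1,000 nodes of one (dummy) part
thickness = forward(theta, X_nodes)             # shape (1000, 1)
```

Because every node is an independent input row, the same trained network can be evaluated on meshes of any size; only the batch length changes.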

Neural network training

To overcome the slow convergence and local minima of gradient-descent training, the PSO algorithm39,40 was adopted to search for the optimal weights and biases, improving the prediction accuracy and generalization ability.

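In the PSO algorithm, candidate configurations of the above BPNN are treated as particles, and the position of each particle encodes the weights and biases of the BPNN. The position and velocity of each particle are updated according to its individual optimal value and the global optimal value in the search space, which yields the optimized weights and biases of the BPNN. The particle update formulas are:

\({\varvec{v}}_{id}^{k+1}=\omega {\varvec{v}}_{id}^{k}+{c}_{1}{r}_{1}\left({\varvec{p}}_{id}^{k}-{\varvec{x}}_{id}^{k}\right)+{c}_{2}{r}_{2}\left({\varvec{p}}_{gd}^{k}-{\varvec{x}}_{id}^{k}\right)\)

\({\varvec{x}}_{id}^{k+1}={\varvec{x}}_{id}^{k}+{\varvec{v}}_{id}^{k+1}\)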

where \({\varvec{v}}_{id}^{k}\) and \({\varvec{x}}_{id}^{k}\) are the velocity and position of the i-th particle at iteration step k; \({c}_{1}\) and \({c}_{2}\) are the individual and social learning factors; \(\omega\) is the inertia weight, which balances the global and local search capabilities; \({r}_{1}\) and \({r}_{2}\) are random numbers between 0 and 1; and \({\varvec{p}}_{id}^{k}\) and \({\varvec{p}}_{gd}^{k}\) are the individual optimal value of the i-th particle and the global optimal value at iteration step k, which are determined by evaluating the fitness function E. The fitness function therefore determines the training route of the particles.
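The velocity and position update can be written for the whole swarm at once. This is a minimal sketch of standard inertia-weight PSO, assuming r1 and r2 are drawn independently for every particle and every dimension (a common convention that the text does not specify) and omitting velocity clamping. The dimension D = 10 in the demo is arbitrary.

```python
import numpy as np

def pso_step(x, v, p_best, g_best, w, c1, c2, rng):
    """One inertia-weight PSO update for the whole swarm.

    x, v, p_best : arrays of shape (N, D), one row per particle
    g_best       : array of shape (D,), broadcast over all particles
    """
    r1 = rng.random(x.shape)  # fresh random numbers in [0, 1)
    r2 = rng.random(x.shape)
    v_new = w * v + c1 * r1 * (p_best - x) + c2 * r2 * (g_best - x)
    x_new = x + v_new
    return x_new, v_new

rng = np.random.default_rng(42)
x = rng.uniform(-1.0, 1.0, size=(40, 10))  # N = 40 particles, D = 10 parameters
v = np.zeros_like(x)
x, v = pso_step(x, v, p_best=x.copy(), g_best=x[0].copy(),
                w=0.9, c1=2.0, c2=2.0, rng=rng)
```

When a particle already sits on both its individual and the global optimum, only the inertia term remains, so its velocity simply decays by the factor ω.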

This paper employs an adaptive inertia weight that adjusts \(\omega\) according to the iteration number, with the formula defined as:

where \({\omega}_{max}\) is the maximum inertia weight, set to 0.9 in this paper; \({\omega}_{min}\) is the minimum inertia weight, set to 0.4; \(q\) is the current iteration count; and \({q}_{max}\) is the maximum iteration count.
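The exact form of the adaptive schedule is not reproduced here. A common choice that uses exactly these symbols is the linearly decreasing inertia weight, sketched below as an ASSUMPTION rather than the paper's confirmed formula: ω starts at ω_max = 0.9 to favour global exploration and falls to ω_min = 0.4 at q = q_max to favour local refinement.

```python
def inertia_weight(q, q_max, w_max=0.9, w_min=0.4):
    """ASSUMED linearly decreasing schedule:
    w = w_max - (w_max - w_min) * q / q_max."""
    if not 0 <= q <= q_max:
        raise ValueError("q must lie in [0, q_max]")
    return w_max - (w_max - w_min) * q / q_max

schedule = [inertia_weight(q, 100) for q in (0, 50, 100)]
print([round(w, 2) for w in schedule])  # [0.9, 0.65, 0.4]
```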

The formulas of \(\:{\varvec{p}}_{id}^{k}\) and \(\:{\varvec{p}}_{gd}^{k}\) are:

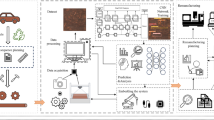

The training workflow is shown in Fig. 3. Of the geometries in the database, 90% were randomly selected as training data and the remaining 10% as test data. The network is trained on the training data, and the training results are used to update the particle parameters. Training was performed on a computer with 192 CPU cores, 512 GB RAM and 2.4 GHz memory frequency. The training process iterates until the termination condition is reached.

PSO based neural network training workflow.

The main hyperparameters of the PSO algorithm are the population size N and the learning factors \({c}_{1}\) and \({c}_{2}\). The population size N is the number of particles in the PSO algorithm and directly affects the coverage of the search space and the computational cost. In this paper, \(N=40\), \({c}_{1}=2.0\) and \({c}_{2}=2.0\).
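The workflow can be condensed into a small end-to-end sketch that trains a tanh/linear network with PSO instead of gradient descent. It uses the stated N = 40 and c1 = c2 = 2.0; everything else is an ASSUMPTION for illustration: a toy regression dataset in place of the FEA data, training MSE as the fitness E, a linearly decreasing inertia weight, a uniform initialization range, a velocity clamp, and a fixed iteration count as the termination condition.

```python
import numpy as np

def mlp_forward(theta, X, sizes):
    """Tanh hidden layers and a linear output; theta stores each layer's
    weight matrix followed by its bias vector."""
    h, start = X, 0
    n_layers = len(sizes) - 1
    for k, (n_in, n_out) in enumerate(zip(sizes[:-1], sizes[1:])):
        W = theta[start:start + n_in * n_out].reshape(n_in, n_out)
        start += n_in * n_out
        b = theta[start:start + n_out]
        start += n_out
        h = h @ W + b
        if k < n_layers - 1:  # hidden layers use tanh; output stays linear
            h = np.tanh(h)
    return h

def pso_train(fitness, dim, n_particles=40, c1=2.0, c2=2.0, q_max=100,
              w_max=0.9, w_min=0.4, v_max=0.5, seed=0):
    """Minimise fitness(theta) with inertia-weight PSO. Returns the global
    best vector and the global-best fitness after each iteration."""
    rng = np.random.default_rng(seed)
    x = rng.uniform(-1.0, 1.0, size=(n_particles, dim))  # assumed init range
    v = np.zeros_like(x)
    p_best = x.copy()
    p_fit = np.array([fitness(p) for p in x])
    g = int(np.argmin(p_fit))
    g_best = p_best[g].copy()
    history = [p_fit[g]]
    for q in range(q_max):
        w = w_max - (w_max - w_min) * q / q_max          # assumed schedule
        r1 = rng.random(x.shape)
        r2 = rng.random(x.shape)
        v = w * v + c1 * r1 * (p_best - x) + c2 * r2 * (g_best - x)
        v = np.clip(v, -v_max, v_max)                    # assumed clamp
        x = x + v
        fit = np.array([fitness(p) for p in x])
        better = fit < p_fit                             # individual optimum
        p_best[better] = x[better]
        p_fit[better] = fit[better]
        g = int(np.argmin(p_fit))                        # global optimum
        g_best = p_best[g].copy()
        history.append(p_fit[g])
    return g_best, history

# Toy data standing in for (geometry, node position) -> thickness rows.
rng = np.random.default_rng(1)
X = rng.uniform(-1.0, 1.0, size=(200, 3))
y = 0.5 * X[:, :1] - 0.3 * X[:, 1:2] ** 2
sizes = (3, 6, 4, 1)
dim = sum(a * b + b for a, b in zip(sizes[:-1], sizes[1:]))

def mse(theta):
    return float(np.mean((mlp_forward(theta, X, sizes) - y) ** 2))

theta_best, history = pso_train(mse, dim)
print(f"training MSE: {history[0]:.4f} -> {history[-1]:.4f}")
```

Because the individual and global optima are only replaced by strictly better positions, the recorded global-best fitness never increases. Replacing `mse` with a region-weighted error turns this into a geometry-aware variant without touching the optimizer.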

Results and discussion

Thickness distribution prediction

After training, geometries from the test set were used to validate and evaluate the performance of the neural network surrogate model. Figure 4 shows the thickness distribution nephograms of six randomly selected test geometries. The surrogate model agrees well with the FEA simulation results: the absolute error in most areas remains below 0.02 mm, and the maximum error in localized areas is below 0.1 mm.

Comparison of thickness distributions between FEA simulation and the surrogate model for six random test cases.

In this paper, the prediction accuracy of the surrogate model was evaluated using the correlation coefficient (R), mean squared error (MSE), mean absolute error (MAE) and mean absolute percentage error (MAPE), with the formulas:

where n is the number of samples, \({\widehat{y}}_{i}\) is the predicted value for the i-th sample and \({y}_{i}\) is the FEA simulation value for the i-th sample.
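The four metrics can be computed directly from the FEA and predicted node thicknesses. This sketch ASSUMES R is the Pearson correlation coefficient between predictions and FEA values (the formulas are not reproduced here) and reports MAPE in percent; MAPE is well defined because sheet thickness is always positive.

```python
import numpy as np

def regression_metrics(y_true, y_pred):
    """R (Pearson, assumed), MSE, MAE and MAPE (%) between FEA thickness
    values y_true and surrogate predictions y_pred."""
    y_true = np.asarray(y_true, dtype=float).ravel()
    y_pred = np.asarray(y_pred, dtype=float).ravel()
    err = y_pred - y_true
    return {
        "R": float(np.corrcoef(y_true, y_pred)[0, 1]),
        "MSE": float(np.mean(err ** 2)),
        "MAE": float(np.mean(np.abs(err))),
        "MAPE": float(100.0 * np.mean(np.abs(err) / np.abs(y_true))),
    }

metrics = regression_metrics([1.0, 2.0, 4.0], [1.1, 1.8, 4.0])
```

For this toy input, the errors are 0.1, -0.2 and 0 mm, giving MAE = 0.1 mm, MSE ≈ 0.0167 mm² and MAPE ≈ 6.67%.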

Over the full test set, the R, MSE, MAE and MAPE values are 0.9982, 0.000278 mm², 0.0126 mm and 1.3%, respectively, indicating relatively high prediction accuracy of the surrogate model.

Error mechanism analysis

The above results show that the surrogate model achieves a low mean error in thickness distribution prediction. However, some large-error areas remain, as can be observed in the error nephograms in Fig. 4. These areas usually appear at the round corners of the SPF parts.

Cutting the box-shaped part along its symmetrical cross-section edges and plotting the thickness curve (Fig. 5) shows that the slope of the thickness curve changes markedly several times between points A and E. Section AB lies on the blank holder of the mold, where thinning is less pronounced than in the other sections. Section BC is the round corner at the mold entrance, where the thickness changes more markedly. The region near point D lies close to the bottom round corner, where thinning is most pronounced; compared with the FEA simulation results, the prediction error occurs mainly around this region. As the thickness increases along segment DE, the prediction becomes more accurate.

The comparison of the thickness curve between FEA simulation results and surrogate model predictions of the symmetrical cross-section edges of a box-shaped part.

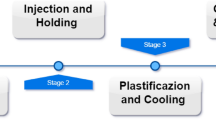

In SPF, the uneven stress and strain fields during processing result in significant variations in the wall thickness of the parts. As shown in Fig. 6, the SPF process can be divided into four main stages: free bulging, bottom contact, side contact and corner contact. Uneven deformation caused by the mold constraints and mold-part friction leads to a nonuniform wall thickness distribution. Once the part contacts the mold, friction restricts metal flow: the metal in the contacted areas flows slowly, while the uncontacted areas continue to bulge freely at a relatively high rate. The sequence of mold-part contact therefore determines the thickness trend in each section of Fig. 5.

Four main stages of a box-shaped part SPF process.

Figure 7 shows the thickness and its first- and second-order gradients along the symmetrical cross-section edge. Large errors appear at the round corners of the entrance and the bottom surface, where the second-order thickness gradient is large. The underlying reason is the high nonlinearity in these areas. The nonlinearity induces large differences in the gradient amplitudes of the corresponding mesh nodes, and the fitness function has many local minima or saddle points there. As a result, the data in these areas are prone to suboptimal solutions or slow convergence, whereas other areas train faster. Consequently, training terminates prematurely once the global accuracy metric satisfies the predefined thresholds, even though convergence in the critical nonlinear zones is still insufficient. This results in excessive deviation in the thickness distribution of the round-corner areas.

The thickness curve, thickness second-order gradient and surrogate model prediction error of the symmetrical cross-section edges, for three random test set cases.
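The first- and second-order thickness gradients along a cross-section path can be estimated by finite differences with respect to arc length. The sketch below is one possible way to do this (the paper does not state its differencing scheme) and adds a HYPOTHETICAL quantile threshold for flagging high-curvature nodes such as those near the entrance and bottom round corners.

```python
import numpy as np

def thickness_gradients(s, t):
    """First and second derivatives of thickness t with respect to arc
    length s along a section path; second-order accurate differences are
    used both in the interior and at the two end points."""
    s = np.asarray(s, dtype=float)
    t = np.asarray(t, dtype=float)
    dt = np.gradient(t, s, edge_order=2)
    d2t = np.gradient(dt, s, edge_order=2)
    return dt, d2t

def flag_high_curvature(d2t, quantile=0.9):
    """HYPOTHETICAL criterion: mark nodes whose |d2t/ds2| lies in the top
    (1 - quantile) fraction of the section."""
    threshold = np.quantile(np.abs(d2t), quantile)
    return np.abs(d2t) >= threshold

# Sanity check on a quadratic profile t = s**2, whose second derivative is 2.
s = np.linspace(0.0, 1.0, 11)
dt, d2t = thickness_gradients(s, s ** 2)
```

Passing the arc-length coordinates `s` explicitly lets `np.gradient` handle unevenly spaced mesh nodes along the section.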

An examination of the small number of samples with large errors showed that most of them have a small entrance radius R1 and bottom radius R2. To verify this trend, two new groups of geometries were designed (Table 2): in Group 1 only R1 varies and in Group 2 only R2 varies, with all other geometric parameters held constant. The influence of R1 and R2 on the prediction error of the trained surrogate model is shown in Fig. 8: the prediction error tends to decrease as R1 and R2 increase. In SPF, thinning near a round corner is more pronounced when the corner radius is smaller, which supports the influence of the second-order gradient on the prediction accuracy of the surrogate model. In addition, to address discrepancies between simulation and experiment, a dynamic database update feature could be developed that collects real-time data during actual production and updates the surrogate model accordingly.

The MAE between the simulated and predicted values of the two groups of samples and the thickness second-order gradient.

Algorithm improvement

To address the low prediction accuracy caused by high nonlinearity in round-corner areas, the training route is adjusted locally based on the geometric features. In Eqs. (5) and (6), the PSO fitness function E is used to determine the individual optimal value \({\varvec{p}}_{id}^{k}\) and the global optimal value \({\varvec{p}}_{gd}^{k}\) of the particles, which guides the training route of the neural network.

Because the large-error areas usually appear at round corners, a geometry fitness function \({E}_{g}\) that takes the curvature information of the mesh nodes into account is defined as:

\({E}_{g}={k}_{r}{MAE}_{r}+{k}_{w}{MAE}_{w}\)

where \({MAE}_{r}\) is the MAE of the nodes in the round corners, \({MAE}_{w}\) is the MAE of the remaining nodes, and \({k}_{r}\) and \({k}_{w}\) are the weights of the two regions, with \({k}_{r}+{k}_{w}=1\).

By adjusting the weights of the round-corner nodes and the remaining nodes in the fitness function, the particle search is steered toward directions that reduce the error in round-corner areas. The BPNN connection weights that affect the round-corner areas are thus optimized more intensively, which weakens the influence of the high nonlinearity in these areas, such as local minima or saddle points, and improves the prediction accuracy there.
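The geometry fitness function is a weighted sum of two region-wise MAEs and can replace a plain error metric as the PSO fitness. In this sketch, k_r = 0.7 is a HYPOTHETICAL value (any k_r > 0.5 emphasises the corners), and `corner_mask` is assumed to be given; how round-corner nodes are identified, for example from the R1/R2/R3 fillet geometry or a curvature threshold, is left open here.

```python
import numpy as np

def geometry_fitness(y_true, y_pred, corner_mask, k_r=0.7):
    """E_g = k_r * MAE_r + k_w * MAE_w with k_w = 1 - k_r.
    corner_mask is True for round-corner nodes; k_r = 0.7 is hypothetical."""
    abs_err = np.abs(np.asarray(y_pred, dtype=float) - np.asarray(y_true, dtype=float))
    corner_mask = np.asarray(corner_mask, dtype=bool)
    mae_r = abs_err[corner_mask].mean()    # round-corner nodes
    mae_w = abs_err[~corner_mask].mean()   # all remaining nodes
    return k_r * mae_r + (1.0 - k_r) * mae_w

E_g = geometry_fitness([1.0, 1.0, 1.0, 1.0], [1.2, 1.0, 1.1, 1.0],
                       [True, False, False, False])
```

Here MAE_r = 0.2 and MAE_w ≈ 0.033, so E_g = 0.7 × 0.2 + 0.3 × 0.033 ≈ 0.15; a plain node-wise MAE of the same predictions would be 0.075, which shows how the corner error is amplified in the fitness.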

Table 3 compares R, MSE, MAE and MAPE of the surrogate models with and without the geometry fitness function \({E}_{g}\). Compared with the initial PSO-BPNN model, the model with the geometry fitness function shows a significant improvement in overall prediction accuracy: MSE, MAE and MAPE decreased by 64.1%, 41.3% and 38.5%, respectively.
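As a consistency check, the MAPE reduction follows from the values given in the Abstract (1.3% for the initial PSO-BP model and 0.8% with \({E}_{g}\)); since both inputs are rounded, the result is approximate:

\[
\frac{{\mathrm{MAPE}}_{\text{PSO-BP}}-{\mathrm{MAPE}}_{\text{PSO-BP}+{E}_{g}}}{{\mathrm{MAPE}}_{\text{PSO-BP}}}=\frac{1.3\%-0.8\%}{1.3\%}=\frac{0.5}{1.3}\approx 0.385=38.5\%
\]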

Five geometries were randomly selected from the test set. The predicted thickness nephograms of the two networks and the corresponding error nephograms, plotted on the same scale, are shown in Fig. 9. The prediction accuracy in the round-corner areas is clearly improved.

Comparison of thickness distributions between FEA simulation results and two surrogate models in five random test cases.

The MAE between the predictions of the two surrogate models and the FEA simulation results was calculated for all geometries in the test set, and the resulting error distributions are plotted in Fig. 10. For the initial PSO-BPNN model, most geometries have a prediction error between 0.01 mm and 0.02 mm, whereas for the PSO-BPNN model with \({E}_{g}\), most geometries have a prediction error between 0.004 mm and 0.012 mm. This indicates that local optimization in round-corner areas also improves the overall accuracy.

The MAE between the prediction results and FEA simulation results of the two surrogate models for all the geometries in the test set.
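A per-geometry error distribution of this kind can be obtained by grouping node-level errors by the geometry they belong to and then binning the resulting MAEs. The sketch assumes each node carries an integer geometry index (0 to G-1, each index owning at least one node); the data and the bin edges in mm are illustrative only.

```python
import numpy as np

def per_geometry_mae(geom_ids, y_true, y_pred):
    """Node-thickness MAE for each geometry; geom_ids[i] is the integer
    index (0..G-1) of the geometry that owns node i."""
    geom_ids = np.asarray(geom_ids, dtype=int)
    abs_err = np.abs(np.asarray(y_pred, dtype=float) - np.asarray(y_true, dtype=float))
    err_sum = np.bincount(geom_ids, weights=abs_err)  # summed error per geometry
    n_nodes = np.bincount(geom_ids)                   # node count per geometry
    return err_sum / n_nodes

# Illustrative data: three geometries with 2, 1 and 2 nodes.
mae = per_geometry_mae([0, 0, 1, 2, 2],
                       [1.50, 1.40, 1.20, 1.30, 1.10],
                       [1.51, 1.39, 1.21, 1.30, 1.12])
counts, edges = np.histogram(mae, bins=[0.0, 0.004, 0.012, 0.02, 0.03])
```

`np.bincount` with `weights` aggregates all nodes in one vectorized pass, which matters when a test set holds hundreds of parts with thousands of nodes each.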

Figure 11 compares the thickness distributions calculated by FEA and predicted by the surrogate model for a set of parts with the same geometric parameters but different numbers of mesh elements: 3,455, 6,091 and 9,470. The surrogate model predictions for the three parts are consistent, and the prediction accuracy is unaffected by the number of mesh elements.

Comparison of thickness distributions among three parts with different mesh number and the same geometric parameters: H = 35 mm, R1 = 7 mm, R2 = 4 mm, R3 = 15 mm.

Table 4 lists R, MSE, MAE and MAPE of the surrogate model for the three parts with different numbers of mesh elements. As the number of mesh elements increased from 3,455 to 9,470, none of the error metrics showed a systematic upward or downward trend. The R values for all three cases remained above 0.999, indicating extremely high prediction consistency. MSE, MAE and MAPE remained at very low levels in all cases: MSE was between 10⁻⁶ and 10⁻⁵ mm², MAE was approximately 0.0055 mm, and MAPE was below 0.62%. These results support the mesh independence of the surrogate model's prediction accuracy: regardless of the number of mesh elements, the model maintains stable, high-precision predictions.

Comparison of computational efficiency

Figure 12 compares the prediction times of FEA simulation and the surrogate model on a computer with 16 GB RAM and a 2.60 GHz CPU. The FEA simulation time increases from 100 s to 1,000 s as the number of nodes increases from 1,000 to 14,000, whereas the prediction time of the surrogate model remains below 0.5 s regardless of the number of nodes. The surrogate model therefore significantly improves the efficiency of thickness distribution prediction for SPF parts.

Comparison in the running time of FEA simulation and surrogate model with different number of nodes.

FEA is a mathematical approximation of real physical systems based on the solution of partial differential equations. The continuum is divided into a set of finite-sized elements, converting a continuous problem with infinitely many degrees of freedom into a discrete problem with finitely many degrees of freedom. Consequently, for given computing resources, the FEA prediction time increases significantly as the number of mesh elements increases. Since the forming time of SPF equipment is about 30 min to 1 h, FEA predictions are almost impossible to use in the on-site control systems of SPF equipment, which leads to high costs in trial-and-error process development and mold manufacturing. In contrast, the 0.5 s prediction time of the surrogate model is short enough to support decision-making in the on-site SPF control system. In addition, the prediction time of the neural network surrogate model remains nearly constant as the number of mesh elements increases over the tested range, which is a further advantage for predicting the thickness of complex structures.

Conclusions

This study establishes a surrogate model based on a mesh-informed neural network to predict the thickness distribution of superplastic forming parts with different geometric feature parameters. The PSO algorithm is employed to train the neural network weights and biases in a controllable way, enabling targeted parameter optimization in localized areas according to the geometric characteristics of the SPF parts. A geometric fitness function based on SPF part features is proposed to reduce the large local errors in round-corner areas. Applying this function in PSO training reduces the mean absolute percentage error of the surrogate model by 38.5%. The thickness nephogram of Ti-6Al-4V box-shaped parts is predicted within 0.5 s, with an average deviation from the finite element simulation results of less than 1%.

This work provides an advanced prediction method that enables closed-loop process control by bridging the temporal gap between simulation-based optimization and actual manufacturing cycle times. The developed system shows significant potential for in-process quality monitoring and dynamic parameter adjustment in on-site SPF control systems. Future work will address thickness distribution prediction for SPF parts with arbitrary surfaces to extend the generalization ability of the surrogate model to broader industrial applications.

Data availability

The datasets generated and analyzed during the current study are available from the corresponding author on reasonable request.

Abbreviations

- l: side length of the box-shaped parts
- t: initial sheet thickness of the box-shaped parts
- H: design height of the box-shaped parts
- R1: entrance radius of the box-shaped parts
- R2: bottom radius of the box-shaped parts
- R3: corner radius of the box-shaped parts
- \(\sigma\): stress
- K: material coefficient
- \(\dot{\epsilon}\): strain rate
- m: material strain rate sensitivity coefficient
- \({x}_{ni}\): input signal to the i-th neuron
- \({y}_{ni}\): output signal of the i-th neuron
- \({w}_{ij}\): weight value
- \({b}_{i}\): bias value
- \({n}_{p}\): number of neurons in the previous layer of the neural network
- f: hyperbolic tangent activation function
- \({\varvec{v}}_{id}^{k}\): velocity of the i-th particle in iteration step k
- \({\varvec{x}}_{id}^{k}\): position of the i-th particle in iteration step k
- \({c}_{1}\): individual learning factor
- \({c}_{2}\): social learning factor
- \(\omega\): inertia weight
- \({\varvec{p}}_{id}^{k}\): individual optimal value of the i-th particle in iteration step k
- \({\varvec{p}}_{gd}^{k}\): global optimal value in iteration step k
- \({\omega}_{max}\): maximum inertia weight
- \({\omega}_{min}\): minimum inertia weight
- \(q\): current iteration count
- \({q}_{max}\): maximum iteration count
- E: fitness function
- N: population size
- n: number of samples
- \({\widehat{y}}_{i}\): predicted value for the i-th sample
- \({y}_{i}\): FEA simulation value for the i-th sample
- \({E}_{g}\): geometry fitness function
- \({MAE}_{r}\): MAE of the nodes in the round corners
- \({MAE}_{w}\): MAE of the remaining nodes
- \({k}_{r}\): weight of \({MAE}_{r}\)
- \({k}_{w}\): weight of \({MAE}_{w}\)

References

Akula, S. P., Ojha, M., Rao, K. L. & Gupta, A. K. A review on superplastic forming of Ti-6Al-4V and other titanium alloys. Mater. Today Commun. 34, 105343 (2023).

Kotkunde, N., Deole, A. D., Gupta, A. K. & Singh, S. K. Comparative study of constitutive modeling for Ti-6Al-4V alloy at low strain rates and elevated temperatures. Mater. Des. 55, 999–1005 (2014).

Tang, J. S., Fuh, Y. K. & Lee, S. Superplastic forming process applied to aero-industrial strakelet: wrinkling, thickness, and microstructure analysis. Int. J. Adv. Manuf. Technol. 77, 1513–1523 (2015).

Ng, J. C., Luckey, S. G., Kridli, G. T. & Friedman, P. A. Validation of a modified material model for use with shell elements to improve the predictive accuracy of the thickness distribution in superplastic forming of sheet metals. J. Mater. Process. Technol. 211 (8), 1386–1394 (2011).

Liu, Z. et al. Dynamic experimental studies of A6N01S-T5 aluminum alloy material and structure for high-speed trains. Acta Mech. Sin. 35 (4), 763–772 (2019).

Wongsa-Ngam, J. & Langdon, T. G. Advances in superplasticity from a laboratory curiosity to the development of a superplastic forming industry. Metals 12, 1921 (2022).

Piccininni, A., Sorgente, D. & Palumbo, G. GA-based optimization to control the thickness distribution in components manufactured via superplastic forming. J. Manuf. Process. 86, 126–135 (2023).

Du, Z. et al. Structural optimization and direct reverse superplastic forming process for aluminum alloy multi cavities pallet. Sci. Rep. 15 (1), 15508 (2025).

Fuerth, Z. et al. Enhancing the superplastic deformation capabilities of the Ti-6Al-4V alloy using superimposed oscillations. J. Manuf. Process. 132, 863–877 (2024).

Chen, K. & Dong, J. High-temperature bulging technique for manufacturing titanium alloy spherical pressure vessels using single-curvature polyhedron. Int. J. Press. Vessels Pip. 214, 105405 (2025).

Wang, M. et al. Texture and bendability evolution mechanism of 6063 aluminum alloy tube formed by free-bending technology employing cross-scale numerical modeling. J. Mater. Process. Technol. 332, 118568 (2024).

Xia, Q. et al. Deformation mechanism of ZK61 magnesium alloy cylindrical parts with longitudinal inner ribs during hot backward flow forming. J. Mater. Process. Technol. 296, 117197 (2021).

Tian, Y. et al. A new finite element model for Mn-Si-Cr bainitic/martensitic product quenching process: simulation and experimental validation. J. Mater. Process. Technol. 294, 117137 (2021).

Zheng, Y. Artificial intelligence and machine learning for materials. Curr. Opin. Solid State Mater. Sci. 34, 101202 (2025).

Wolter, B. et al. Nondestructive material characterization and component identification in sheet metal processing with electromagnetic methods. Sci. Rep. 14 (1), 6274 (2024).

Petrik, J., Ali, S. I., Feistle, M. & Bambach, M. CrystalMind: A surrogate model for predicting 3D models with recrystallization in open-die hot forging including an optimization framework. Mech. Mater. 189, 104875 (2024).

Esim, E. & Demirel, M. Determination of vibration characteristic in automatic tapping operations based on artificial neural networks. Sci. Rep. 15 (1), 16386 (2025).

Romero, P. E., Rodriguez-Alabanda, O., Molero, E. & Guerrero-Vaca, G. Use of the support vector machine (SVM) algorithm to predict geometrical accuracy in the manufacture of molds via single point incremental forming (SPIF) using aluminized steel sheets. J. Mater. Res. Technol. 15, 1562–1571 (2021).

Najm, S. M. et al. Application of the gradient-boosting with regression trees to predict the coefficient of friction on drawbead in sheet metal forming. Materials 17 (18), 4540 (2024).

Sevšek, L., Baressi Šegota, S., Car, Z. & Pepelnjak, T. Determining the influence and correlation for parameters of flexible forming using the random forest method. Appl. Soft Comput. 144, 110497 (2023).

Fazily, P. & Yoon, J. W. Machine learning-driven stress integration method for anisotropic plasticity in sheet metal forming. Int. J. Plast. 166, 103642 (2023).

Stefanovska, E. & Pepelnjak, T. Optimising predictive accuracy in sheet metal stamping with advanced machine learning: A LightGBM and neural network ensemble approach. Adv. Eng. Inform. 65, 103103 (2025).

Zhang, Z. et al. A forming method of variable-diameter tubes straightforward from sheet metals. J. Mater. Process. Technol. 333, 118613 (2024).

Mirandola, I. et al. Machine learning-based models for the estimation of the energy consumption in metal forming processes. Metals 11 (5), 833 (2021).

Dib, M. A. S., Prates, P. & Ribeiro, B. SecFL – Secure federated learning framework for predicting defects in sheet metal forming under variability. Expert Syst. Appl. 235, 121139 (2023).

Cruz, D. J. et al. Application of machine learning to bending processes and material identification. Metals 11 (9), 1418 (2021).

Attar, H. R., Zhou, H., Foster, A. D. & Li, N. Rapid feasibility assessment of components to be formed through hot stamping: A deep learning approach. J. Manuf. Process. 68, 1650–1671 (2021).

Low, D. W. W., Chaudhari, A., Kumar, D. & Kumar, A. S. Convolutional neural networks for prediction of geometrical errors in incremental sheet metal forming. J. Intell. Manuf. 34 (5), 2373–2386 (2023).

Fu, Z., Mo, J., Chen, L. & Chen, W. Using genetic algorithm-back propagation neural network prediction and finite-element model simulation to optimize the process of multiple-step incremental air-bending forming of sheet metal. Mater. Des. 31 (1), 267–277 (2010).

Downes, A. & Hartley, P. Using an artificial neural network to assist roll design in cold roll-forming processes. J. Mater. Process. Technol. 177 (1), 319–322 (2007).

Sun, M. et al. Thickness Distribution Prediction of Superplastic Forming Parts Based on Machine Learning and Computer Aided Engineering. 4th International Symposium on Artificial Intelligence and Intelligent Manufacturing 827–830 (2024).

Krohn, M. A., Leen, S. B. & Hyde, T. H. A superplastic forming limit diagram concept for Ti-6Al-4V. Proc. Inst. Mech. Eng. Part L J. Mater. Des. Appl. 221 (4), 251–264 (2007).

Zhang, T., Sha, H., Li, L. & Gong, H. Study of macroscopic defects of four-layer structure of Ti–6Al–4V during superplastic forming/diffusion bonding. Int. J. Precis. Eng. Manuf. 22 (1), 27–39 (2021).

Motallebi, R., Savaedi, Z. & Mirzadeh, H. Additive manufacturing – A review of hot deformation behavior and constitutive modeling of flow stress. Curr. Opin. Solid State Mater. Sci. 26 (3), 100992 (2022).

Mikhaylovskaya, A. V. et al. Influence of minor Zn additions on grain boundary anelasticity, grain boundary sliding, and superplasticity of Al-Mg-based alloys. J. Alloys Compd. 926, 166785 (2022).

Zhang, C. Research on process of superplastic forming for four-sheet rudder structure of TC4 titanium alloy (Master’s thesis). China Academy of Machinery Science & Technology (2017).

Tian, Y., Fu, D. & Li, G. Research on the generalization problem of BP neural network. IEEE Access. 12, 125416–125426 (2024).

Gu, L. & Zheng, T. Study on the generalized holo-factors mathematical model of dimension-error and shape-error for sheet metal in stamping based on the back propagation (BP) neural network. J. Manuf. Sci. Eng. 138 (6), 1–3 (2016).

Zhang, J. R., Zhang, J., Lok, T. M. & Lyu, M. R. A hybrid particle swarm optimization-back-propagation algorithm for feedforward neural network training. Appl. Math. Comput. 185 (2), 1026–1037 (2007).

Wan, Q., Chen, J., Zhu, R. & Chen, W. Tooth root stress prediction of helical gear pair with misalignment errors based on PSO-BP neural network. IEEE Sens. J. 24 (10), 15847–15856 (2024).

Funding

This work was supported by the National Science and Technology Major Project (2024ZDZX33-01).

Author information

Contributions

Muqi Sun: Writing – original draft, Software, Data curation, Visualization, Formal analysis. ChengYue Xiong: Writing – review & editing, Conceptualization, Supervision, Funding acquisition. Yuwei Zhou: Data curation, Resources, Software. Xicheng Zhang: Software, Investigation. Aoming Yuan: Data curation, Resources. Yan Sun: Data curation, Resources. Yongbing Li: Supervision, Funding acquisition.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Sun, M., Xiong, C., Zhou, Y. et al. Rapid thickness distribution prediction of superplastic formed parts based on geometry adapted PSO-BP neural network surrogate model. Sci Rep 16, 3587 (2026). https://doi.org/10.1038/s41598-025-33493-7
