Abstract

Automatic modulation recognition (AMR) is vital for 5G/6G, yet existing methods often overlook the specific spectral structures of dominant OFDM systems. To address this, we propose the Fourier Adaptive Filter with Attention (FAFT), a parameter-efficient framework that explicitly models OFDM spectra. FAFT integrates a learnable FFT-based adaptive filter branch with a lightweight time-domain convolutional branch, fused via channel attention. Additionally, a novel frequency-domain regularizer is introduced to enhance spectral feature learning. Experiments on RML2016.10a, RML2016.10b, and the practical EVAS OFDM dataset demonstrate that FAFT achieves competitive accuracy with remarkable efficiency (0.13M parameters, 39.3M FLOPs). Its robustness under low SNR and multipath conditions highlights its strong potential for practical 5G/6G deployment.

Similar content being viewed by others

Introduction

With the rapid development of wireless communication, long-term evolution (LTE) systems have been widely deployed to support daily services. As a further evolution, 5G and 6G communication systems have emerged, characterized by high data rates, massive connectivity, and low latency1,2. These systems enable the Internet of Things (IoT), smart cities, and autonomous driving3,4.

In such scenarios, automatic modulation recognition (AMR) plays a key role in cognitive radio (CR)5,6, physical-layer security5, and spectrum monitoring7. As a typical application, AMR contributes to safe and efficient operation of wireless communication systems by providing high-throughput, low-latency signal identification in dense and dynamic spectrum environments8.

Existing AMR approaches can be broadly categorized into two families: likelihood-based (LB) methods and feature-based (FB) methods. LB methods formulate AMR as a hypothesis testing problem, leveraging Bayesian estimation and likelihood ratio tests to determine the most probable modulation type9,10. Traditional FB algorithms rely on hand-crafted features derived from higher-order statistics and expert knowledge of communication signals11,12. Typical machine learning classifiers such as support vector machines and decision trees are then trained on these features13,14. Although these methods are interpretable and effective in controlled conditions, they depend heavily on prior expert knowledge and lack flexibility when facing complex, time-varying electromagnetic environments with strong noise, multipath effects, and Doppler frequency offsets15.

In recent years, fueled by advances in artificial intelligence (AI), deep learning (DL) has achieved remarkable success in computer vision16, natural language processing17, and image processing18. Naturally, DL-based FB AMR methods have attracted extensive research interest. Unlike traditional FB methods, DL-based AMR can automatically learn discriminative representations from large amounts of labeled modulation data without relying on manually designed features19. These approaches demonstrate strong robustness and generalization ability in complex environments.

In 2018, a convolutional neural network (CNN) with truncated transfer and multi-task learning was proposed to improve the discrimination of easily confused modulation types, achieving higher accuracy and efficiency than conventional CNNs20. Some researchers have also proposed exploiting constellation diagrams as images and applying CNNs to perform AMR21. However, in practical systems, constellation diagrams can only be obtained after demodulation and equalization, which limits the applicability of such methods in blind sensing scenarios. In 2020, MCNet was proposed with specially designed convolutional blocks to jointly capture spatio-temporal correlations using asymmetric convolution kernels22.

Beyond standard CNNs, recurrent architectures such as RNNs and LSTMs have been explored to exploit temporal dependencies in received signal sequences. For example23, used recurrent neural networks to model temporal relationships, while in 2019 an LSTM-based architecture was proposed to recognize noisy digital modulation signals from time-series data24. In 2022, a Transformer with multi-head attention was introduced for modulation classification25, achieving improved accuracy in low-SNR scenarios compared to conventional CNNs by leveraging long-range dependencies and attention mechanisms.

To further improve performance, hybrid architectures combining CNNs and RNNs have been proposed. A three-stream multi-channel framework was designed in26 to extract features from individual and combined in-phase/quadrature (I/Q) components using 1D convolution, 2D convolution, and LSTM, simultaneously capturing temporal and spatial features. CLDNN, which integrates CNN and LSTM layers, was applied to AMR in27 to study the relationship between network depth and modulation recognition performance. In 2023, IQCLNet was proposed28, using directional convolutional filters to extract phase and short-term features, followed by LSTMs to capture long-term dependencies. By combining CNNs, filters, and LSTMs, IQCLNet achieves a favorable trade-off between performance and resource consumption. Recent works have also explored data-limited and cross-domain AMC scenarios, including few-shot or meta-learning based methods and domain-adaptation approaches. Deng29, propose a multimodal progressive unsupervised domain adaptation network to handle cross-domain modulation classification.

Most of the aforementioned works focus on single-carrier communication signals. However, modern wireless systems (e.g., 5G and 6G) predominantly employ multi-carrier modulation schemes such as OFDM, which are fundamentally different from single-carrier systems in terms of spectral structure, sensitivity to carrier frequency offset (CFO), and multipath effects. Hence, there has been a growing interest in AMR for OFDM communication systems.

For instance30, proposed a CNN-based AMR method to classify four OFDM modulation formats (BPSK, QPSK, 8PSK, 16QAM) and analyzed its performance. A residual CNN was developed in31 to recognize eight OFDM modulation formats (BPSK, QPSK, OQPSK, 16QAM, \(\pi /4\)QPSK, MSK, 8PSK, 64QAM). To enhance robustness to CFO, a Cross-SKNet architecture was introduced in32 with a compensation mechanism to mitigate CFO-induced distortion for OFDM signals (BPSK, QPSK, 16QAM, 64QAM). In 5G and 6G systems, higher-order PSK and QAM schemes are widely adopted to increase spectral efficiency and symbol rate32,33, such as 128QAM, 256QAM, and even 512QAM, where each symbol carries more bits34. However, directly deploying very deep and complex networks tailored for single-carrier or low-order modulation onto base stations is impractical due to stringent latency and computational constraints35.

Motivated by these challenges, this paper focuses on designing a Parameter-Efficient yet effective AMR model that combines traditional signal processing with deep learning for OFDM-based 5G/6G systems. Specifically, we propose a Fourier Adaptive Filter with Attention (FAFT) module that combines an FFT-based adaptive filter with a carefully designed frequency-domain regularization term.

The main contributions of this work are summarized as follows:

-

We propose an FAFT-based AMR framework that jointly extracts frequency-domain features via an FFT-based adaptive filter with spectral enhancement, and time-domain features via a two-layer convolutional network. An attention mechanism adaptively fuses these heterogeneous features, leading to a compact time–frequency representation.

-

We introduce a learnable spectral enhancement module in the FAFT branch. This module acts directly on the amplitude spectrum to emphasize modulation-discriminative spectral components and suppress noise, thus clarifying the role of spectral enhancement within FAFT and making the OFDM-specific prior knowledge explicit.

-

We design a simple frequency-domain regularization term defined on the approximate and detail components of the filtered spectrum. Although it plays a contrast-like role by balancing coarse spectral envelopes and fine spectral details, it is implemented as a regularizer added to the cross-entropy loss rather than as a separate contrastive-loss function (e.g., InfoNCE or triplet). This term stabilizes training and drives the adaptive FFT filter to learn non-trivial, discriminative frequency responses in an end-to-end manner.

-

Extensive experiments on RML2016.10a, RML2016.10b, and a practical OFDM dataset (EVAS) generated by USRP show that the proposed FAFT model achieves competitive or superior accuracy compared with state-of-the-art methods, while significantly reducing computational complexity and model size.

Novelty relative to closely related AMR models: Compared with CLDNN27 and Transformer-based AMR25, which operate purely on time-domain I/Q sequences and rely on deep CNN–LSTM stacks or self-attention blocks to implicitly learn spectral structure, FAFT adds an explicit FFT-based adaptive filter branch. This branch works directly on the OFDM spectrum and is regularized in the frequency domain, making the use of OFDM priors transparent while keeping the model shallow and parameter-efficient. MAMC36 and Cross-SKNet31 also employ multi-branch or multi-scale CNNs with attention, but their branches consist of fixed convolutional kernels; they do not contain a learnable Fourier filter bank nor a frequency-domain regularizer on approximate and detail spectral components. In contrast, FAFT learns a small number of frequency responses in the Fourier domain, regularizes their coarse and fine parts, and then fuses them with a lightweight time-domain CNN through channel attention. This design allows FAFT to match or outperform MAMC, CLDNN, Cross-SKNet, and Transformer-based AMR in terms of recognition accuracy, while using substantially fewer parameters (e.g., 0.13M for FAFT versus 1.58M for MAMC) and comparable FLOPs, thus offering a more favorable accuracy–complexity trade-off.

System model

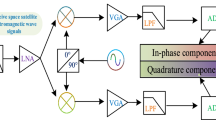

In a digital communication system, the modulation process shifts the spectrum of the baseband signal to a higher carrier frequency, enabling reliable transmission over the channel and improving robustness to channel distortions. In this paper, we consider a typical single-input single-output (SISO) communication model with one transmit and one receive antenna, as illustrated in Fig. 1.

Single-input single-output OFDM system diagram.

The received signal \(r_r(t)\) of the communication system can be written as

where c(t) denotes the channel impulse response, n(t) represents the additive noise in the transmission process (e.g., additive white Gaussian noise, AWGN), and \(*\) denotes convolution. The baseband-equivalent transmitted modulated signal \(r_s(t)\) can be expressed as

where \(r_b(t)\) is the complex baseband signal, \(\Re (\cdot )\) denotes the real part, and \(f_c\) is the carrier frequency. Phase variations induced by multipath propagation can be represented by a time-varying phase term absorbed into \(r_b(t)\).

In general, common modulation schemes can be divided into analog and digital modulation. Examples of analog modulation include amplitude modulation (AM), double-sideband modulation (DSB), and frequency modulation (FM). With the development of digital signal processing and modern communication systems, digital modulation schemes such as frequency-shift keying (FSK), amplitude-shift keying (ASK), phase-shift keying (PSK), and quadrature amplitude modulation (QAM) have become mainstream. A general expression for these modulation schemes can be written as

where \(a_n\) and \(b_n\) are symbol sequences, \(\delta (t)\) is the Dirac pulse, and \(T_s\) is the symbol period. The variables \(\varphi _n\) and \(\omega _n\) denote the modulation phase and modulation frequency, respectively; \(\omega _c\) is the carrier angular frequency and \(\theta _0\) is the initial phase. The term \(\sqrt{s}\) represents the signal energy.

At the receiver, the complex baseband signal \(r_r(t)\) can be represented by its in-phase and quadrature components as

where \(r_I(t)\) and \(r_Q(t)\) are the in-phase and quadrature-phase samples, respectively. A(t) denotes the instantaneous amplitude and P(t) denotes the instantaneous phase.

Proposed model

The overall architecture and hyperparameters of the proposed FAFT model are summarized in Table 1.

In this section, the proposed FAFT-based AMR network is presented in a unified framework. The proposed architecture processes IQ samples by initially applying a Fast Fourier Transform (FFT). The signal subsequently undergoes spectral enhancement and adaptive filtering for noise reduction and feature extraction. These processed features are concatenated and refined via an attention layer, which weights the most relevant features prior to the final classification stage. The description starts from the overall architecture and design motivation, and then explains in detail the forward and backward propagation of each component, including the frequency-domain FAFT branch, the time-domain convolutional branch, the attention-based fusion module, and the global training objective.

The received complex baseband signal in one observation window is denoted by

where \(r_I[n]\) and \(r_Q[n]\) are the in-phase and quadrature components, respectively. As shown in Fig. 2,

Overview of the proposed FAFT-based AMR network, including the frequency-domain FAFT (FCC) branch, the time-domain TSCC branch, and the attention-based fusion head.

the network consists of two parallel feature extraction branches and one fusion-and-classification head. The first branch operates in the frequency domain and constitutes the core Fourier Adaptive Filter with spectral enhancement (FAFT), which corresponds to the Frequency-Character Capture (FCC) module. It explicitly exploits OFDM spectral structures through FFT, a learnable spectral enhancement module, and an adaptive filter bank. The second branch operates in the time domain and corresponds to the Time-Series Character Capture (TSCC) module, where two Parameter-Efficient one-dimensional convolutions act directly on \(r_r[n]\) to extract local temporal features. The outputs of these two branches are concatenated and passed through a channel attention mechanism, which automatically adjusts the relative importance of different feature channels before the final classification layer. In this way, the model combines explicit OFDM-aware spectral analysis with generic temporal pattern extraction, while keeping the number of parameters and FLOPs low.

Frequency-domain FAFT branch

The FAFT branch is designed around the observation that OFDM signals are inherently characterized by their spectral structure, such as the distribution of energy over subcarriers and the way different modulation formats shape the power spectrum. Instead of relying solely on generic convolutions in the time domain, FAFT makes this structure explicit. The received sequence is first transformed into the frequency domain, then its amplitude spectrum is enhanced by a learnable spectral operator that suppresses noise and emphasizes modulation-discriminative regions, and finally an adaptive filter bank learns a small set of frequency responses that are shaped by training into modulation-aware band-pass or band-stop patterns. In this sense, FAFT can be regarded as a data-driven, OFDM-aware filter bank that is jointly optimized with the rest of the network.

Forward computation in the FAFT branch

The forward pass in the FAFT branch proceeds through several clearly defined stages. First, the complex sequence \(r_r[n]\) is converted into the frequency domain by a discrete Fourier transform, implemented in practice by an FFT:

The complex quantity \(R_o[k]\) can be written in polar form as \(R_o[k]=|R_o[k]|e^{\textrm{j}\theta [k]}\), where \(|R_o[k]|\) is the amplitude spectrum and \(\theta [k]\) is the phase.

Directly using \(|R_o[k]|\) as input to the learning system is often suboptimal, especially at low SNR or in multipath channels, because noise and channel distortions introduce rapid and irregular spectral fluctuations. To mitigate this effect, a learnable spectral enhancement module is introduced. The amplitude spectrum is first compressed by a logarithmic operation,

which stabilizes large dynamic ranges. Then \(\tilde{A}[k]\) is passed through two one-dimensional convolutions along the frequency axis with a ReLU nonlinearity in between, followed by a sigmoid activation,

The output M[k] is a soft mask with values in (0, 1), which is applied element-wise to the original magnitude spectrum,

In this way, frequency bins that are dominated by noise or are less informative for distinguishing modulation types tend to receive smaller mask values, whereas bins whose patterns correlate with specific modulations are enhanced. The spectral enhancement module therefore acts as a learnable, data-driven spectral equalizer that prepares a cleaner and more discriminative input for the subsequent adaptive filter bank.

On top of the enhanced spectrum \(R_E[k]\), an F-channel adaptive filter bank is applied. The f-th filter is modeled as a finite impulse response (FIR) filter with complex coefficients \(h_f[m]\) of length L, where \(f=1,\dots ,F\) and \(m=0,\dots ,L-1\). Its frequency response is given by

The output of this filter in the frequency domain is obtained by pointwise multiplication,

Stacking the F amplitude spectra \(A_f^{(f)}[k]\) along the filter index produces a two-dimensional representation indexed by frequency and filter channel. During training, the complex coefficients \(h_f[m]\) are adjusted so that the corresponding frequency responses \(H_f[k]\) emphasize or suppress certain spectral regions in a way that is discriminative for different modulation formats.

In order to structure these spectral outputs and to define a regularization term, each amplitude spectrum \(A_f^{(f)}[k]\) is decomposed into a coarse (approximate) component and a fine (detail) component. The approximate component of the f-th filter is defined as the average value over all frequency bins,

and the corresponding detail component measures the deviation of each bin from this mean,

The scalar \(c_A^{(f)}\) captures the overall spectral energy level associated with filter f, whereas \(c_D^{(f)}[k]\) encodes fine-grained fluctuations across frequency. Both types of components are later used in the attention-based fusion and in the frequency-domain regularization.

Backward propagation in the FAFT branch

The backward pass through the FAFT branch follows the principle of standard gradient backpropagation, but it is helpful to describe the flow of gradients explicitly in terms of the main operations. The total loss used for training is denoted by

where \(L_{rec}\) is the classification loss and \(L_{reg}\) is the frequency-domain regularization term. After the forward pass, the classifier produces predicted probabilities, and \(L_{rec}\) is computed from these probabilities and the ground-truth labels. At the same time, \(L_{reg}\) is computed from the approximate and detail components \(\{c_A^{(f)},c_D^{(f)}[k]\}\).

The gradient of L with respect to the concatenated feature vector at the input of the classifier, which contains the FAFT features and the TSCC features after attention, is first obtained by differentiating the classifier and the attention module. The attention operation is linear in its input for fixed attention weights, so the gradient with respect to the FAFT feature channels can be computed in closed form and then propagated backward to the approximate and detail components. Since \(c_A^{(f)}\) and \(c_D^{(f)}[k]\) are linear functions of \(A_f^{(f)}[k]\), the gradient with respect to \(A_f^{(f)}[k]\) is the sum of the gradients coming from the classifier path and those coming directly from \(L_{reg}\).

Once \(\partial L/\partial A_f^{(f)}[k]\) is known, it can be converted into gradients with respect to the complex filtered spectrum \(R_f^{(f)}[k]\). Using the relation \(R_f^{(f)}[k]=R_E[k]H_f[k]\) and the definition of \(H_f[k]\), the gradient with respect to the filter coefficient \(h_f[m]\) can be written as

This expression shows that the update of each filter tap \(h_f[m]\) depends on a weighted sum of the enhanced spectrum \(R_E[k]\), where the weights are the backpropagated gradients and the complex exponential factors. In parallel, the gradient with respect to \(R_E[k]\) is obtained by summing the contributions from all filters, which then propagates through the spectral enhancement module. Because the enhancement module is implemented by standard convolution and element-wise non-linearities, the gradients with respect to its convolution kernels and intermediate feature maps are computed automatically by the deep learning framework. Finally, the gradient with respect to \(R_o[k]\) is propagated back through the FFT to the time-domain samples \(r_r[n]\). This chain of differentiable operations ensures that the spectral enhancement parameters and the adaptive filter coefficients are all updated by gradient descent in a fully end-to-end manner, so that FAFT learns frequency responses that are jointly optimal for the AMR task.

Time-domain convolutional branch (TSCC)

The TSCC branch complements the FAFT branch by extracting features directly in the time domain. Although OFDM signals have a clear spectral interpretation, several modulation-discriminative cues, such as pulse shapes, transitions between symbols, and certain types of nonlinear distortion, can be more naturally captured in the temporal domain. Furthermore, shallow one-dimensional convolutions on short I/Q sequences are computationally inexpensive and easy to implement on existing hardware, making them well suited for latency-sensitive applications.

In the TSCC branch, the complex sequence \(r_r[n]\) is viewed as a two-channel real-valued signal \([r_I[n], r_Q[n]]\) of length N. The forward pass consists of two successive one-dimensional convolutional layers. The first layer applies convolution kernels with a small temporal receptive field (for example, length three or five) across the two input channels, followed by batch normalization and a ReLU activation. This operation extracts local correlations among neighboring samples and between the in-phase and quadrature components. The second layer applies another set of one-dimensional convolutions, possibly with a larger number of channels or a slightly wider receptive field, again followed by batch normalization and ReLU. This second layer forms higher-level temporal representations that summarize short-term dependencies over several samples, such as the shape of symbol transitions and short bursts of interference. After these two layers, the resulting feature maps are aggregated along the time dimension, for example by global pooling or by flattening, to produce a compact time-domain feature vector for each input sample. This vector will later be concatenated with the FAFT features in the fusion stage.

The backward propagation in the TSCC branch follows the usual pattern for convolutional neural networks. Gradients of the loss with respect to the concatenated feature vector are split into FAFT-related and TSCC-related parts. The TSCC-related gradients are propagated through the pooling or flattening operation back to the second convolutional layer, then through its batch normalization and ReLU activation to the first convolutional layer, and finally to the raw I/Q inputs. The convolution kernels and batch normalization parameters are updated by the optimizer, together with all other network parameters. In this way, the TSCC branch learns temporal filters that complement the spectral filters learned in the FAFT branch.

Attention-based feature fusion and classifier

After the FAFT and TSCC branches have produced their respective feature representations, these features must be fused in a way that adapts to different SNRs, channels, and modulation types. The FAFT branch yields, for each of the m frequency groups or filters, an approximate component \(a_j\) and a detail component \(d_j\), while the TSCC branch provides a time-domain feature vector. To stabilize the fusion of the approximate components, their average is computed as

The detail components, the averaged approximate component, and the TSCC feature vector are concatenated along the channel dimension to form a joint feature vector

This joint representation contains heterogeneous information: fine spectral details, coarse spectral envelopes, and temporal patterns.

To let the network automatically determine which parts of X are most informative under given channel conditions, a channel attention mechanism is employed. The vector X is first passed through a small multi-layer perceptron, which performs a nonlinear transformation and then projects back to the original channel dimension. A sigmoid activation is applied to obtain attention weights between zero and one for each channel. Denoting the weights by \(\textrm{Att}\), the attention operation can be written as

where \(W_1\) and \(W_2\) are the trainable weight matrices of the multi-layer perceptron, \(\delta (\cdot )\) is the ReLU function, \(\sigma (\cdot )\) is the sigmoid function, and \(\odot\) denotes channel-wise multiplication. The attended feature vector \(\tilde{X}\) emphasizes channels whose patterns are strongly correlated with the target modulation class and suppresses less informative or redundant channels.

Finally, \(\tilde{X}\) is fed into a fully connected classification layer followed by a softmax function to produce the predicted probabilities \(\{p_i\}_{i=1}^{C}\) for the C modulation categories. The gradient of the loss with respect to \(\tilde{X}\) is obtained by differentiating the softmax layer and the fully connected layer, and then propagated back through the attention mechanism, splitting naturally into contributions for the FAFT and TSCC branches.

Spectral enhancement module

Let \(\textbf{X}\in \mathbb {R}^{C\times T}\) denote the feature map produced by the temporal CNN front-end, where \(C=64\) is the number of channels and T is the sequence length. Our spectral enhancement module consists of a fixed 2-D FFT based multi-band decomposition and a lightweight channel attention.

FFT-based multi-band decomposition: We first flatten the temporal dimension and reshape \(\textbf{X}\) into the smallest square of size \(H\times W\) such that \(HW\ge T\), padding zeros if necessary. A 2-D FFT followed by a spectrum shift is applied:

Let \(r_{\max }\) be the distance from the spectrum center to a corner. We set a cutoff factor \(\alpha =0.3\) and define \(r_{\text {low}} = \alpha r_{\max }\) and \(r_{\text {high}} = (1-\alpha ) r_{\max }\). Three fixed radial masks \(\textbf{M}^{\text {low}}\), \(\textbf{M}^{\text {mid}}\), \(\textbf{M}^{\text {high}}\in \{0,1\}^{H\times W}\) are constructed to keep, respectively, the low-frequency disk (\(r\le r_{\text {low}}\)), the mid-frequency ring (\(r_{\text {low}}<r<r_{\text {high}}\)), and the high-frequency ring (\(r\ge r_{\text {high}}\)). Element-wise multiplication in the frequency domain and inverse FFT yield three real-valued feature maps, which are reshaped back to \(C\times T\):

Channel aggregation and attention: For each level l, we summarize the high-frequency response by temporal global average pooling, \(\bar{\textbf{x}}^{\text {high}}_l = \text {GAP}_t(\textbf{X}^{\text {high}}_l)\in \mathbb {R}^{C}\), and at the final level we also pool the low-frequency response, \(\bar{\textbf{x}}^{\text {low}} = \text {GAP}_t(\textbf{X}^{\text {low}}_L)\in \mathbb {R}^{C}\). Concatenating all pooled vectors over L levels gives a \((L+1)C\)-dimensional descriptor \(\bar{\textbf{x}}\in \mathbb {R}^{(L+1)C}\). In all experiments we use \(L=1\) and \(C=64\), so \((L+1)C=128\).

This descriptor is passed through a squeeze-and-excitation style MLP

where \(\textbf{W}_1\in \mathbb {R}^{16\times 128}\), \(\textbf{W}_2\in \mathbb {R}^{128\times 16}\), \(\phi\) denotes ReLU followed by dropout with rate 0.5, and \(\sigma\) is the element-wise sigmoid. The resulting attention vector \(\textbf{a}\in (0,1)^{128}\) is applied to rescale the concatenated spectral features before feeding them into the classifier.

Loss function and training procedure

The training objective combines a conventional classification loss with a frequency-domain regularization term that explicitly guides the learning of the FAFT filters. In earlier drafts we loosely referred to this term as a “frequency-domain contrastive regularization”, because it encourages a desirable tension between the coarse (approximate) and fine (detail) spectral components of the adaptive filters. However, it is not a separate contrastive-learning objective in the sense of InfoNCE or triplet losses. Throughout this paper we therefore treat it explicitly as a frequency-domain regularizer that is added to the cross-entropy loss. The classification loss is the standard cross-entropy,

where \(y_i\) is the one-hot encoded ground-truth label and \(p_i\) is the predicted probability for the i-th modulation class.

The regularization term \(L_{reg}\) is defined directly on the approximate and detail components of the FAFT branch. For the F filters in the adaptive bank, the quantities \(c_A^{(f)}\) and \(c_D^{(f)}[k]\) describe the coarse spectral level and the frequency-dependent deviations for filter f. To summarize their magnitudes, the average absolute detail magnitude is computed as

and the average absolute approximate magnitude is computed as

The regularization term is then defined as a weighted sum of these two quantities,

where \(\lambda _a\) and \(\lambda _d\) are non-negative hyperparameters. In the experiments, the values \(\lambda _d = 1\times 10^{-2}\) and \(\lambda _a = 2\times 10^{-2}\) are used. The term involving a prevents the filters from collapsing to trivial solutions with vanishing spectral energy, while the term involving d discourages extremely oscillatory responses that may fit noise rather than signal structure. By balancing these two effects, the regularization encourages the FAFT filters to capture meaningful spectral envelopes with moderate, discriminative fine details.

Combining the classification and regularization losses yields the total loss function

During training, this loss is minimized over the network parameters using the Adam optimizer with a fixed learning rate of 0.001. For each mini-batch, the forward pass starts by feeding the I/Q sequences \(r_r[n]\) into the FAFT and TSCC branches, computing their respective feature representations, fusing them through the attention module, and obtaining the predicted probabilities through the classifier. The loss L is then evaluated from the predicted probabilities and the FAFT components. In the backward pass, gradients of L are propagated through the classifier, the attention mechanism, and the two feature extraction branches down to every trainable parameter, including the spectral enhancement convolutions, the adaptive filter coefficients \(h_f[m]\), the TSCC convolution kernels, and the attention and classifier weights. The optimizer updates all these parameters simultaneously. Over many iterations, this end-to-end gradient-based procedure turns the FAFT branch into a learned adaptive filter bank whose frequency responses, together with the time-domain filters of the TSCC branch and the attention weights, are jointly optimized for accurate and robust modulation recognition.

Experimental results and discussion

Datasets and experimental settings

We evaluate the proposed FAFT model on three datasets: RML2016.10a, RML2016.10b, and a practical OFDM dataset named EVAS. The RML datasets are publicly available and generated using GNU Radio, while the EVAS dataset is collected using USRP hardware and a channel emulator.

RML datasets

The RML2016.10a and RML2016.10b datasets were generated by O’Shea37 using GNU Radio. RML2016.10a contains I/Q samples corresponding to 11 modulation types (8PSK, BPSK, CPFSK, GFSK, PAM4, QAM16, QAM64, QPSK, AM-SSB, AM-DSB, and WBFM), with SNR levels from − 20 to 18 dB in steps of 2 dB (20 SNR levels in total). Each modulation class contains 220000 samples, and each sample consists of 128 complex samples (I/Q pairs).

RML2016.10b includes 10 modulation types (8PSK, BPSK, CPFSK, GFSK, PAM4, QAM16, QAM64, QPSK, AM-DSB, and WBFM), with 1200000 samples for each class. Each sample again contains 128 complex samples. Both datasets include labels for the modulation type and SNR. For our experiments, we randomly split each dataset into 70% training and 30% testing.

EVAS dataset

Unlike the RML datasets generated by GNU Radio, the EVAS dataset is collected using real RF hardware and a channel emulator. A National Instruments (NI) USRP RF transmitter and receiver are used to generate and capture complex baseband I/Q samples. The baseband bit streams are first mapped to different modulation formats (BPSK, QPSK, 8PSK, 16PSK, 4PAM, 16QAM, 32QAM, 64QAM, 128QAM, 256QAM, and 512QAM) and then assembled into OFDM frames with K = 256 data subcarriers and a cyclic prefix. The OFDM waveform is upconverted to a carrier frequency of 4 GHz by the USRP transmitter, passed through a channel emulator, and then downconverted and sampled at the receiver. The sampling rate and frame structure are configured such that each captured example contains 1024 complex sampling points, obtained by sliding a fixed-length window over the continuous receive stream after coarse timing synchronization.

During collection, the propagation channel between the USRP transmitter and receiver is emulated by a 3GPP Extended Vehicular A (EVA) model. Specifically, the channel emulator is configured according to the EVA profile defined in the 3GPP specification, which consists of nine independent Rayleigh fading taps with predefined relative delays and average powers. The carrier frequency is set to 4 GHz and the maximum Doppler shift is set to 800 Hz, corresponding to a high-speed vehicular scenario. Additive white Gaussian noise is added at the emulator output to obtain SNR values ranging from − 10 to 20 dB in steps of 2 dB. Under these settings, each receive example simultaneously experiences frequency-selective multipath fading, time-selective fading due to Doppler, and additive noise, together with hardware impairments such as phase noise, quantization noise, and I/Q imbalance introduced by the USRPs themselves.

For each modulation type and each SNR level, 3000 OFDM frames are generated and captured, and a total of 1800 examples per modulation and SNR are reserved for testing. The main physical-layer parameters of the OFDM signal are summarized in Table 2. Compared with the RML2016.10a and RML2016.10b datasets, which are fully generated in software using GNU Radio under relatively idealized channel models, EVAS differs in three important aspects. First, it is based on a practical OFDM physical layer with a realistic frame structure and high-order QAM/PSK constellations, whereas RML mainly contains single-carrier signals with lower-order modulations. Second, the channel conditions in EVAS include standardized 3GPP EVA multipath with Doppler and hardware non-idealities from the USRP platform, making the task more representative of real 5G/6G deployments than the synthetic AWGN-dominated channels in RML. Third, the SNR range and sample length in EVAS are chosen to reflect typical link budgets and symbol durations in vehicular scenarios, so that the resulting AMR problem is closer to practical cognitive radio applications on commercial base stations.

For each modulation type, OFDM signals are generated with:

-

Data subcarriers \(K = 256\),

-

SNR values ranging from − 10 to 20 dB with a 2 dB step,

-

3000 examples per modulation and SNR value.

The main parameters of the OFDM signal are summarized in Table 2.

In our experiments, OFDM signals are transmitted through the Extended Vehicular A (EVA) channel model defined in the 3GPP standard38, which consists of nine Rayleigh fading taps with specified relative power and delay. This setup simulates realistic vehicular scenarios with strong multipath and Doppler effects, as illustrated in Fig. 3, matching the requirements of high-efficiency, low-latency communication8.

Simulated vehicular communication scenario for EVAS dataset generation.

The EVAS dataset is split similarly: 70% of the data is used for training and the remaining 30% for validation/testing. The test set contains 1800 examples per modulation type and SNR.

Training configuration

We implement the proposed FAFT model in PyTorch 2.0.1 within the PyCharm development environment. All experiments are conducted on NVIDIA Tesla V100 GPUs.

For the RML datasets, we use samples with SNR from − 20 to 18 dB, and for the EVAS dataset, we use SNR from − 10 to 20 dB. The proposed model is trained for 50 epochs using the Adam optimizer with a fixed learning rate of 0.001. The batch size is set to 512. The network architecture is designed to be independent of the number of samples in the input signal, and in this work we focus on signals of length 128 complex samples, consistent with the RML datasets.

To provide fair comparisons, we re-implement or use existing implementations of several representative AMR models as baselines, including ResNet39, Transformer25, CLDNN27, MAMC36, and MCNET22. These methods are chosen because they represent the main architectural paradigms in recent AMC literature: ResNet and MCNET are deep convolutional or residual architectures, CLDNN combines convolutional and recurrent layers, Transformer is a self-attention-based model, and MAMC is a strong multi-branch or multi-scale CNN baseline specifically designed for modulation recognition. All of them have demonstrated competitive performance on standard benchmarks such as RML2016.10a/10b, making them suitable and representative baselines for our study.

For ResNet, Transformer, CLDNN, and MCNET, we re-implemented the architectures in PyTorch strictly following the descriptions and hyperparameters in the original papers, only adapting the input length and the number of output classes to our datasets. For MAMC, we directly used the official implementation released by the authors and retrained it on our datasets. All baseline models are trained from scratch on the same training/validation/test splits, SNR ranges, and input preprocessing as the proposed method, using the Adam optimizer, an initial learning rate of 0.001, batch size 256, learning-rate decay on validation plateau, and early stopping. This unified training protocol ensures that the reported results are obtained under comparable and fair conditions rather than copied from heterogeneous settings in the literature.

Results and comparison

To comprehensively evaluate the proposed FAFT model, we consider the following metrics:

-

(a)

Maximum accuracy: the highest recognition accuracy achieved across all SNR levels.

-

(b)

Average accuracy: the average recognition accuracy over the entire SNR range (\(-20\) dB to 18 dB for RML2016.10a/b, − 10 to 20 dB for EVAS).

-

(c)

FLOPs: the number of floating-point operations, indicating computational complexity.

-

(d)

Number of parameters: indicating model size and storage requirements.

Table 3 compares model complexity and performance on RML2016.10a and RML2016.10b. The proposed FAFT model achieves the highest average accuracy on both datasets, i.e., 60.88% on RML2016.10a and 61.99% on RML2016.10b, slightly outperforming MAMC while using only 0.13M parameters and \(3.93\times 10^{1}\)M FLOPs. Although MCNET has a slightly smaller parameter count (0.11M), FAFT improves the average accuracy by 4.18 percentage points on RML2016.10a and 1.24 percentage points on RML2016.10b. Compared with larger models such as Transformer and ResNet, FAFT achieves better accuracy with significantly lower complexity, demonstrating its parameter-efficient nature.

It should be noted that the FLOPs of FAFT (\(3.93\times 10^{1}\)M) are of the same order as those of CLDNN (\(4.08\times 10^{1}\)M) and MCNET (\(3.78\times 10^{1}\)M), so FAFT does not have the absolutely lowest arithmetic cost among all baselines. The main source of its lightweightness lies in the small number of trainable parameters and the compact model size: compared with MAMC, ResNet, and Transformer, FAFT uses about 92%, 77%, and 97% fewer parameters, respectively. In many practical AMR deployment scenarios, such as embedded software-defined radios and edge base stations, on-chip memory and model storage are often more constrained than peak compute capability. Therefore, reducing the number of parameters directly lowers memory footprint and storage requirements, which is more critical than minimizing FLOPs alone. Throughout this paper, when we describe FAFT as a lightweight or parameter-efficient model, we specifically refer to its substantially reduced parameter count and model size, together with a more favorable accuracy–complexity trade-off under comparable FLOPs, rather than an absolute minimum FLOP count. Unless otherwise stated, all models are evaluated with the same input size, i.e., complex baseband sequences of length \(L = 1024\) for a single receive antenna. For a fair comparison, the reported FLOPs and parameter counts are computed analytically under this common input size and number of channels for all methods. Note that FLOPs and parameter counts are architecture-level metrics and therefore independent of the specific hardware platform; when reporting inference latency, all models are measured on the same machine. Compared with recent CNN- or ResNet-based AMC models that typically contain 1–3M parameters, FAFT uses only 0.13M parameters and substantially fewer FLOPs while achieving comparable or higher accuracy, especially at low SNR. This indicates a favorable trade-off between recognition performance and computational cost. Although we do not provide a full hardware implementation in this work, the small model size and low FLOP count suggest that FAFT is suitable for real-time deployment on embedded GPUs or FPGAs in base-station or SDR platforms. A detailed latency and energy evaluation on specific hardware is left for future work.

Classification accuracy (%) versus SNR (dB) of the proposed FAFT method and baseline models on different datasets. Legends indicate different AMR models.

Figure 4a and b show the AMR accuracy versus SNR on RML2016.10a and RML2016.10b, respectively. For all models, accuracy improves as SNR increases, and FAFT consistently achieves the best or near-best performance across most SNR levels. On RML2016.10a, FAFT attains the highest maximum accuracy of 91.8% at SNR = 14 dB, while MAMC, ResNet, Transformer, CLDNN, and MCNET reach 90.6%, 89.2%, 88.3%, 89.4%, and 83.2%, respectively. On RML2016.10b, MAMC achieves a slightly higher peak accuracy (92.7%) at 14 dB; however, FAFT also reaches 92.6% at 14 dB and achieves higher average accuracy across all SNRs.

Furthermore, FAFT shows clear advantages in the low-SNR region. For RML2016.10a, the average accuracy gain between − 2 and 0 dB reaches about 13% compared with traditional networks, and for RML2016.10b the gain is about 6.1%. We attribute this improvement mainly to the spectral enhancement module, which strengthens the amplitude of dominant spectral components and suppresses noise, enabling more reliable feature extraction in noisy conditions. In RML2016.10b, the larger number of training samples per SNR level also benefits other models, so the relative advantage of FAFT is slightly less pronounced than on RML2016.10a.

We also observe that, for some models, the accuracy curves exhibit a slight drop at the highest SNR points (e.g., from 16 to 18 dB). This non-monotonic behavior has also been reported in previous studies on RML2016.10a/b and is mainly caused by two factors. First, each SNR level contains a finite number of test samples, so a change of only a few misclassified examples at high SNR can lead to fluctuations of about 1–2 percentage points in the reported accuracy. Second, at high SNR the residual errors are dominated by a few inherently confusable modulation pairs (e.g., 16QAM vs. 64QAM, 8PSK vs. QPSK), whose confusion is largely independent of the noise level and is instead related to the short observation length and the similarity of their constellations and spectra. As a result, the curves saturate and may slightly oscillate around the peak value, rather than increasing strictly monotonically with SNR. Importantly, these small high-SNR fluctuations do not affect the overall trend: FAFT consistently outperforms the baselines over almost all SNR levels and achieves the highest average accuracy. A similar saturation with small fluctuations is also observed on EVAS. In this case, when the SNR is already high, the dominant impairment is the EVA fading channel (multipath and Doppler), which is not removed by further increasing SNR, so the performance no longer improves monotonically with SNR.

Confusion matrices of FAFT and MAMC models on different datasets at SNR = 14 dB (rows: true modulation labels; columns: predicted labels).

Figure 5a and b compare the confusion matrices of FAFT and MAMC on RML2016.10a and RML2016.10b at SNR = 14 dB. The FAFT model exhibits better discrimination among different modulation types, with fewer misclassifications between closely related modulation schemes, indicating that the fused time-frequency features and attention mechanism effectively enhance class separability.

Figures 4c and 5c and f present the results on the EVAS dataset. FAFT significantly outperforms conventional models such as Transformer and ResNet at low SNRs (\(-10\) to \(-8\) dB) and mid-to-high SNRs (6–12 dB). At very high SNRs (above 14 dB), FAFT and MAMC achieve similar performance. The improvement in low-SNR regimes again benefits from the spectral enhancement module, which suppresses noise and emphasizes multiple carriers in OFDM signals. At high SNRs, we believe the adaptive filter and regularization help mitigate multipath effects, enabling FAFT to remain robust in realistic EVA channel conditions.

Conclusion and future work

In this paper, we proposed a FAFT-based neural network for automatic modulation recognition of OFDM signals in 5G/6G scenarios. The frequency-domain branch combines FFT, a learnable spectral-enhancement module and an adaptive filter bank, while the time-domain branch uses lightweight 1-D convolutions to capture local temporal structures. A channel-attention block fuses both representations. A simple frequency-domain regularizer on approximate and detail spectrum components, added to the cross-entropy loss, guides the filters towards informative, non-trivial frequency responses. Experiments on RML2016.10a/10b and the practical EVAS dataset show that FAFT attains state-of-the-art accuracy with only 0.13M parameters and low FLOPs. Beyond numerical performance, FAFT’s design explains its robustness at low SNR. The spectral-enhancement module and the frequency-domain regularizer bias the adaptive FFT filters toward smooth, large-scale spectral envelopes and penalize highly oscillatory, noise-dominated patterns. Because additive noise and fast channel ripples mainly appear as wideband, rapidly varying spectral components, FAFT is encouraged to rely on stable spectral structures and complementary short-term temporal cues from the TSCC branch, yielding consistent gains in low- and medium-SNR regimes. Several limitations remain and motivate future work. The current model is evaluated only in single-antenna (SISO) settings and on a limited set of channels and hardware platforms, and our complexity analysis is purely algorithmic, without real-time implementation. Future work will extend FAFT to multi-antenna (MIMO) receivers and wideband sensing, and explore low-precision quantization, model compression, FPGA-based acceleration and online or semi-supervised adaptation so that FAFT can operate efficiently under changing channels and waveform types.

Data availability

The RML2016.10a and RML2016.10b datasets analysed in this study are publicly available as described by O’Shea et al.37. The EVAS dataset (raw complex I/Q captures acquired with a USRP and a channel emulator under proprietary channel profiles) involves third-party licensing constraints and is very large in size; it is therefore not posted publicly. The full EVAS datasets are available from the corresponding author upon reasonable request for academic, non-commercial use under a data-use agreement and, where applicable, subject to permission from the vendor. Requests will be answered within two weeks.

References

Mishra, P. & Singh, G. 6G-IoT framework for sustainable smart city: Vision and challenges. IEEE Consum. Electron. Mag. 13, 93–103. https://doi.org/10.1109/MCE.2023.3307225 (2024).

Wang, C.-X. et al. On the road to 6G: Visions, requirements, key technologies, and testbeds. IEEE Commun. Surv. Tutor. 25, 905–974. https://doi.org/10.1109/COMST.2023.3249835 (2023).

Bhuiyan, M. N., Rahman, M. M., Billah, M. M. & Saha, D. Internet of things (IoT): A review of its enabling technologies in healthcare applications, standards protocols, security, and market opportunities. IEEE Internet Things J. 8, 10474–10498. https://doi.org/10.1109/JIOT.2021.3062630 (2021).

Ara, I. & Kelley, B. Physical Layer Security for 6G: Toward achieving intelligent native security at Layer-1. IEEE Access 12, 82800–82824. https://doi.org/10.1109/ACCESS.2024.3413047 (2024).

Yang, C. et al. Open-Set Radar Emitter Recognition via Deep Metric Autoencoder. IEEE Internet Things J. 11, 18281–18291. https://doi.org/10.1109/JIOT.2024.3361899 (2024).

Zining, W. et al. Multi-objective robust secure beamforming for cognitive satellite and UAV networks. J. Syst. Eng. Electron. 32, 789–798. https://doi.org/10.23919/JSEE.2021.000068 (2021).

Hao, X. et al. Contrastive Self-Supervised Clustering for Specific Emitter Identification. IEEE Internet Things J. 10, 20803–20818. https://doi.org/10.1109/JIOT.2023.3284428 (2023).

Satapathy, J. R., Bodade, R. M. & Ayeelyan, J. CNN based modulation classifier and radio fingerprinting for electronic warfare systems. In2024 4th International Conference on Advance Computing and Innovative Technologies in Engineering (ICACITE) 1880–1885, https://doi.org/10.1109/ICACITE60783.2024.10617103 (2024).

Xu, J. L., Su, W. & Zhou, M. Software-Defined Radio Equipped With Rapid Modulation Recognition. IEEE Trans. Veh. Technol. 59, 1659–1667. https://doi.org/10.1109/TVT.2010.2041805 (2010).

Hameed, F., Dobre, O. A. & Popescu, D. C. On the likelihood-based approach to modulation classification. IEEE Trans. Wirel. Commun. 8, 5884–5892. https://doi.org/10.1109/TWC.2009.12.080883 (2009).

Kharbech, S., Simon, E. P., Belazi, A. & Xiang, W. Denoising higher-order moments for blind digital modulation Identification in multiple-antenna systems. IEEE Wirel. Commun. Lett. 9, 765–769. https://doi.org/10.1109/LWC.2020.2969157 (2020).

Ara, H. A., Zahabi, M. R. & Ebrahimzadeh, A. Blind digital modulation identification using an efficient method-of-moments estimator. Wirel. Pers. Commun. 116, 301–310. https://doi.org/10.1007/s11277-020-07715-2 (2021).

Li, J. et al. Automatic modulation classification using support vector machines and error correcting output codes. In Xu, B. (ed.) Proceedings of 2017 IEEE 2nd information technology, networking, electronic and automation control conference (ITNEC), 60–63 (IEEE PRESS, 2017). IEEE 2nd information technology, networking, electronic and automation control conference (ITNEC), Chengdu, PEOPLES R CHINA, DEC 15-17, 2017.

Luan, S., Gao, Y., Chen, W., Yu, N. & Zhang, Z. Automatic Modulation Classification: Decision Tree Based on Error Entropy and Global-Local Feature-Coupling Network Under Mixed Noise and Fading Channels. IEEE Wirel. Commun. Lett.11, 1703–1707. https://doi.org/10.1109/LWC.2022.3175531 (2022).

Dobre, O. A., Abdi, A., Bar-Ness, Y. & Su, W. Survey of automatic modulation classification techniques: classical approaches and new trends. IET Commun. 1, 137–156. https://doi.org/10.1049/iet-com:20050176 (2007).

He, S., Jiang, X., Jiang, W. & Ding, H. Prototype Adaption and Projection for Few- and Zero-Shot 3D Point Cloud Semantic Segmentation. IEEE Trans. Image Process. 32, 3199–3211. https://doi.org/10.1109/TIP.2023.3279660 (2023).

Min, B. et al. Recent Advances in Natural Language Processing via Large Pre-trained Language Models: A Survey. ACM Comput. Surv. https://doi.org/10.1145/3605943 (2024).

Ma, J. et al. Segment anything in medical images. Nat. Commun. https://doi.org/10.1038/s41467-024-44824-z (2024).

Deng, W., Li, S., Wang, X. & Huang, Z. Cross-Domain Automatic Modulation Classification: A Multimodal-Information-Based Progressive Unsupervised Domain Adaptation Network. IEEE Internet Things J. 12, 5544–5558. https://doi.org/10.1109/JIOT.2024.3488118 (2025).

Li, R., Li, L., Yang, S. & Li, S. Robust Automated VHF Modulation Recognition Based on Deep Convolutional Neural Networks. IEEE Commun. Lett. 22, 946–949. https://doi.org/10.1109/LCOMM.2018.2809732 (2018).

Peng, S. et al. Modulation Classification Based on Signal Constellation Diagrams and Deep Learning. IEEE Trans. Neural Netw. Learn. Syst. 30, 718–727. https://doi.org/10.1109/TNNLS.2018.2850703 (2019).

Zhang, Y., Zhou, Z., Cao, Y., Li, G. & Li, X. MAMC-Optimal on Accuracy and Efficiency for Automatic Modulation Classification With Extended Signal Length. IEEE Commun. Lett. 28, 2864–2868. https://doi.org/10.1109/LCOMM.2024.3474519 (2024).

Yang, C., He, Z., Peng, Y., Wang, Y. & Yang, J. Deep Learning Aided Method for Automatic Modulation Recognition. IEEE Access 7, 109063–109068. https://doi.org/10.1109/ACCESS.2019.2933448 (2019).

Daldal, N., Yildirim, O. & Polat, K. Deep long short-term memory networks-based automatic recognition of six different digital modulation types under varying noise conditions. Neural Comput. Appl. 31, 1967–1981. https://doi.org/10.1007/s00521-019-04261-2 (2019).

Cai, J., Gan, F., Cao, X. & Liu, W. Signal Modulation Classification Based on the Transformer Network. IEEE Trans Cognit. Commun. Netw. 8, 1348–1357. https://doi.org/10.1109/TCCN.2022.3176640 (2022).

Xu, J., Luo, C., Parr, G. & Luo, Y. A Spatiotemporal Multi-Channel Learning Framework for Automatic Modulation Recognition. IEEE Wirel. Commun. Lett. 9, 1629–1632. https://doi.org/10.1109/LWC.2020.2999453 (2020).

Tu, a., Lin, Y., Hou, C. & Mao, S. Complex-valued networks for automatic modulation classification. IEEE Trans. Veh. Technol. 69, 10085–10089, https://doi.org/10.1109/TVT.2020.3005707 (2020).

Cui, T., Wang, D., Ji, L., Han, J. & Huang, Z. Time and phase features network model for automatic modulation classification. Comput. Electr. Eng. 111, https://doi.org/10.1016/j.compeleceng.2023.108948 (2023).

Deng, W., Li, S., Wang, X. & Huang, Z. Cross-domain automatic modulation classification: A multimodal-information-based progressive unsupervised domain adaptation network. IEEE Internet Things J. 12, 5544–5558. https://doi.org/10.1109/JIOT.2024.3488118 (2025).

Song, G., Jang, M. & Yoon, D. CNN-Based Modulation Classification for OFDM Signal. In 12th International Conference on ICT Convergence (ICTC 2021): Beyond the Pandemic Era with ICT Convergence Innovation, International Conference on Information and Communication Technology Convergence, 1326–1328, https://doi.org/10.1109/ICTC52510.2021.9620896 (IEEE Commun Soc; IEICE Commun Soc; Korean Inst Commun & Informat Sci; Minist Sci & ICT; Elect & Telecommunicat Res Inst; KOFST; Jeju Convent & Visitiors Bur; Korea Tourism Org; Samsung; LG Elect; SK Telecom; KT; LG U+; Youngwoo Cloud; Netvis Telecom; Innox; Huawei; LG, Ericsson; Fiber Radio Technologies; ICT Convergence Korea Forum; Soc Safety Syst Forum; 5G Based Smart Factory Standardizat Forum, 2021). 12th International Conference on ICT Convergence (ICTC) - Beyond the Pandemic Era with ICT Convergence Innovation, SOUTH KOREA, OCT 20-22, 2021.

Kumar, A., Srinivas, K. K. & Majhi, S. Automatic Modulation Classification for Adaptive OFDM Systems Using Convolutional Neural Networks With Residual Learning. IEEE Access 11, 61013–61024. https://doi.org/10.1109/ACCESS.2023.3286939 (2023).

Ren, B., Teh, K. C., An, H. & Gunawan, E. OFDM Modulation Classification Using Cross-SKNet With Blind IQ Imbalance and Carrier Frequency Offset Compensation. IEEE Trans. Veh. Technol. 73, 8389–8403. https://doi.org/10.1109/TVT.2024.3356606 (2024).

Fayad, A., Cinkler, T. & Rak, J. Toward 6G Optical Fronthaul: A survey on enabling technologies and research perspectives. IEEE Commun. Surv. Tutor. 27, 629–666. https://doi.org/10.1109/COMST.2024.3408090 (2025).

Chen, R., Yan, B. & Chang, M.-C. F. A review of circuits and systems for advanced Sub-THz transceivers in wireless communication. Electronics https://doi.org/10.3390/electronics14050861 (2025).

Halamandaris, A. et al. IEEE Latin-American Conference on Communications, Latincom, IEEE Latin American Conference on. Communications2023, https://doi.org/10.1109/LATINCOM59467.2023.10361876 (IEEE, 2023). IEEE Latin-American Conference on Communications (LATINCOM), Panama City, PANAMA, NOV 15-17 (2023).

Lu, X. Lightweight. et al. IEEE 94th Vehicular Technology Conference (VTC2021-FALL). IEEE Vehicular Technology Conference Proceedings 2021, https://doi.org/10.1109/VTC2021-FALL52928.2021.9625558 (IEEE; IEEE VTS, 2021). 94th IEEE Vehicular Technology Conference (VTC-Fall), Electr Network, Sep 27-30 (2021).

Anis, M. A., Elgamel, S. A. & Aboelazm, M. A. Robust modulation classification in the presence of realistic propagation in rural environment. 2024 International Telecommunications Conference (ITC-Egypt) 257–262, https://doi.org/10.1109/ITC-Egypt61547.2024.10620527 (2024).

O’Shea, T. J., Johnathan, C. & Clancy, T. C. Convolutional radio modulation recognition networks. In Proc. 17th Int. Conf. Eng. Appl. Neural Netw. Cham 213–226 (2016).

Luo, C., Tang, A., Gao, F., Liu, J. & Wang, X. Channel modeling framework for both communications and bistatic sensing under 3GPP standard. IEEE J. Sel. Areas Sens. 1, 166–176. https://doi.org/10.1109/JSAS.2024.3451411 (2024).

Funding

This work was supported by the National Natural Science Foundation of China (Grant No. 62027801).

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Li, Y., Tang, X., Wang, L. et al. A novel automatic modulation recognition algorithm for OFDM signals based on FAFT. Sci Rep 16, 9614 (2026). https://doi.org/10.1038/s41598-025-33752-7

Received:

Accepted:

Published:

Version of record:

DOI: https://doi.org/10.1038/s41598-025-33752-7