Abstract

This study presents a data-driven framework for predicting key thermophysical properties (dynamic viscosity, speed of sound, and density) of aqueous solutions containing aliphatic biogenic polyamines (ABPs), which are of growing importance in biochemical and industrial applications. A total of 198 experimentally measured data points were used to train and validate five machine learning models: K-Nearest Neighbors (KNN), Ensemble Learning (EL), Convolutional Neural Networks (CNN), Adaptive Boosting (AdaBoost), and Multi-Layer Perceptron Artificial Neural Networks (MLP-ANN). Hyperparameter optimization was conducted using the Coupled Simulated Annealing (CSA) algorithm. The study focused on three ABPs, Putrescine dihydrochloride (PUT), Cadaverine dihydrochloride (CAD), and Spermine tetrahydrochloride (SPER), across a range of molar masses, concentrations, and temperatures. Sensitivity analysis using the Monte Carlo method identified ABP type and molar mass as the dominant factors influencing the target properties. Among the models, MLP-ANN demonstrated the highest predictive accuracy for density and viscosity, while EL performed best for speed of sound. The results confirm the potential of machine learning as a reliable, efficient, and cost-effective alternative to conventional experimental approaches for modeling complex solution behavior.


Introduction

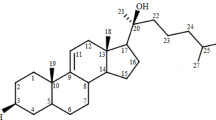

Naturally occurring aliphatic compounds known as polyamines are small, water-soluble, multiply charged molecules with crucial roles in physiological processes. Among them, putrescine, cadaverine, and spermidine are the most prominent variants. These cationic molecules exhibit strong affinities for negatively charged biomolecules such as nucleic acids and phospholipid membranes, influencing critical biological functions including cell proliferation, gene regulation, and molecular transport across systems such as the digestive, immune, and reproductive networks1. Their involvement in cell cycle regulation and neural development has attracted attention to their potential links with diseases like cancer, psoriasis, and sickle cell anemia2.

Despite their biological significance, the physical and thermodynamic characterization of polyamines in aqueous environments remains limited. Most existing studies have focused on their chemical behavior, pharmacological activities, and cytotoxic potential, particularly with regard to their anticancer effects3,4. Experimental investigations into thermophysical properties such as density, viscosity, and speed of sound are scarce, especially for biologically relevant ABPs like putrescine, cadaverine, and spermidine. Previous efforts have largely concentrated on simpler diamines such as ethylenediamine and 1,3-diaminopropane5,6,7,8.

Alatefi et al. developed explainable AI models for predicting water activity in ionic liquid–based ternary aqueous systems, employing CatBoost combined with Grey Wolf, Whale, and Gravity Search optimization algorithms9. Their hybrid machine-learning framework achieved high predictive accuracy (R² = 0.993) and provided physical interpretability through SHAP analysis. That study demonstrated the effectiveness of data-driven and explainable AI models for capturing thermodynamic properties in multicomponent aqueous solutions, an approach conceptually aligned with the present work, which also uses machine-learning methods to predict the thermophysical behavior of complex liquid systems.

Similarly, Alatefi et al. applied Convolutional Neural Networks (CNN), Extreme Learning Machine (ELM), and Long Short-Term Memory (LSTM) architectures to model the heat capacity of deep eutectic solvents. Their results confirmed the high accuracy and practical applicability of deep-learning frameworks in predicting key physical properties of green solvents. The CNN model, in particular, provided superior performance and interpretability through SHAP analysis, emphasizing how explainable AI can facilitate the design and optimization of environmentally friendly solvent systems. This approach shares conceptual parallels with the current study’s focus on employing advanced machine-learning models to predict density, viscosity, and speed of sound in biogenic polyamine aqueous systems.

In this context, a reliable understanding of the behavior of ABP aqueous solutions is vital for process optimization and biochemical modeling. Experimental measurement of density, viscosity, and speed of sound under varying thermodynamic conditions is not only time-consuming but also constrained by cost and labor intensity. Although some physical data exist, they are often fragmented and insufficient in terms of coverage across multiple ABP types, concentrations, and temperature ranges.

To address this gap, the present work compiles an experimental dataset (198 data points) covering the thermophysical behavior of aqueous solutions of three ABPs: Putrescine dihydrochloride (PUT), Cadaverine dihydrochloride (CAD), and Spermine tetrahydrochloride (SPER). This dataset provides the foundation for developing predictive models using a suite of machine learning algorithms: K-Nearest Neighbors (KNN), Ensemble Learning (EL), Convolutional Neural Networks (CNN), Adaptive Boosting (AdaBoost), and Multi-Layer Perceptron Artificial Neural Networks (MLP-ANN). The Coupled Simulated Annealing (CSA) algorithm was employed for hyperparameter optimization. To date, no comprehensive models have been developed for predicting density, viscosity, and speed of sound in aqueous solutions of aliphatic biogenic polyamines, and this is the first study to apply hybrid machine learning models to these properties. To ensure model reliability, the dataset was divided into training (158 points) and testing (40 points) subsets. The effects of the input parameters were examined with a Monte Carlo sensitivity study, and model performance was assessed using quantitative indicators and graphical comparisons. An overview of the full approach appears in Fig. 1.

Method to Identify the Leading Data-Based Predictive Model.

To highlight the novelty and contribution of the present study, a comparative summary of relevant literature is provided in Table 1. This table outlines prior efforts on the investigation of biogenic amines and related compounds, including their thermodynamic properties, DNA-binding behavior, and quantitative profiling. While these studies offer valuable insights, none have explored the simultaneous prediction of density, viscosity, and speed of sound for aqueous solutions of aliphatic biogenic polyamines using machine learning approaches. The current study addresses this gap by applying hybrid models to accurately forecast these key thermophysical properties.

Survey of thermodynamic methods and machine learning approaches

Methods of machine learning

In biochemistry, data-driven predictive approaches based on machine learning play an important role, especially for complex systems with many interdependent factors, such as estimating the physical properties of aliphatic biogenic polyamine (ABP) solutions13,14,15. This study employs five machine learning algorithms, namely K-Nearest Neighbors (KNN), Ensemble Learning (EL), Convolutional Neural Networks (CNN), Adaptive Boosting (AdaBoost), and Multi-Layer Perceptron Artificial Neural Networks (MLP-ANN), to model the density, speed of sound, and dynamic viscosity of aqueous ABP solutions. Such approaches can capture intricate, non-linear relationships in laboratory data and offer a fast, flexible, and accurate complement to conventional experimental protocols.

Convolutional neural networks (CNNs)

Convolutional Neural Networks (CNNs) are deep learning architectures designed for processing structured data such as images. Using convolutional layers, these models identify local features such as edges, patterns, and shapes in the input, which enables robust detection of complex structures and relationships16. Mathematically, the convolution operation in a CNN is written as:
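A standard form of the discrete two-dimensional convolution, consistent with the symbols f, g, and (x, y) defined in the next paragraph, is sketched below. The summation indices i and j are notation assumed here, and the authors' exact expression may differ (many CNN libraries actually compute the closely related cross-correlation).

```latex
(f * g)(x, y) = \sum_{i} \sum_{j} f(i, j)\, g(x - i,\; y - j)
```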

In this formulation, f is the input feature map, g is the convolutional kernel, and (x, y) denotes a position in the output feature map.

CNNs stack multiple convolutional layers that successively extract more detailed and more abstract patterns17. A major strength of CNNs is their ability to handle large, high-dimensional datasets, which makes them well suited to applications such as image classification and object detection. CNN architectures commonly include pooling layers to reduce dimensionality and mitigate the risk of overfitting18.

Ensemble learning (EL)

Ensemble learning represents an advanced methodology in machine learning wherein several individual models are strategically integrated to improve overall predictive accuracy. For example, in a framework of weighted voting, ultimate estimation ŷ is established as:
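In its usual form, and using the symbols defined in the paragraph that follows, weighted voting can be written as shown below. The second line is an assumption added here for illustration: for continuous targets such as density or viscosity, the analogous combination is typically a weighted average of the member predictions, although the text does not state which form was used.

```latex
\hat{y} = \arg\max_{c} \sum_{t=1}^{T} w_t \, I\big( h_t(x) = c \big)
\qquad\text{(classification)}

\hat{y} = \frac{\sum_{t=1}^{T} w_t \, h_t(x)}{\sum_{t=1}^{T} w_t}
\qquad\text{(regression, assumed form)}
```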

Here, the final prediction ŷ is obtained by combining the outputs of the constituent models. T denotes the total number of ensemble members, wt is the weight assigned to the t-th member, ht(x) is the t-th member’s output for input x, I(⋅) is an indicator function that returns 1 if its condition holds and 0 otherwise, and c ranges over the set of possible classes19.

Multilayer perceptron artificial neural network (MLP-ANN)

The Multi-Layer Perceptron, often abbreviated as MLP, is a foundational model within the broader family of Artificial Neural Networks and is frequently utilized in numerous machine learning applications20,21. When data is introduced to an MLP, it first passes through the input units. Subsequently, successive intermediate layers handle incoming inputs, implement activation mechanisms, and relay the altered data to the following layer. This sequential flow of operations persists until the information arrives at the concluding layer, which generates the system’s ultimate output or forecast22. The output of a neuron in MLP-ANN is expressed as:
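The usual expression for a single neuron, consistent with the symbols n, xi, wi, and b defined in the next paragraph, is given below. The activation function symbol φ is notation assumed here (for example tansig or purelin, both of which are mentioned later in the text).

```latex
y = \varphi\!\left( \sum_{i=1}^{n} w_i \, x_i + b \right)
```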

Here, n denotes the total number of input variables, while xi represents the i-th input and wi is the weight assigned to that input. The parameter b is an additional constant (bias) that shifts the neuron’s output independently of the input values.

Adaptive boosting

AdaBoost, short for Adaptive Boosting, is an ensemble method that improves predictive performance by sequentially combining weak learners, commonly shallow decision trees, into a single predictive model. By combining the outputs of these weighted learners, AdaBoost handles both binary classification and regression tasks well, particularly when data complexity or noise is a concern23,24.

The mechanism by which AdaBoost updates the importance of each training example is formalized through a mathematical expression. The parameter αₜ itself, which shapes how much each weak learner influences the final prediction, is derived from a formula that factors in the model’s classification error during iteration t.
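For orientation, the classical binary-classification form of these two expressions is sketched below. This is the textbook AdaBoost formulation with labels y_i in {−1, +1}, not necessarily the variant used by the authors; regression variants such as AdaBoost.R2 use a different loss-based update. The symbols D_t(i) (weight of sample i at iteration t), ε_t (weighted error of the t-th weak learner), and Z_t (normalization constant) are notation assumed here.

```latex
\alpha_t = \frac{1}{2} \ln\!\left( \frac{1 - \varepsilon_t}{\varepsilon_t} \right),
\qquad
D_{t+1}(i) = \frac{D_t(i)\, \exp\!\big( -\alpha_t \, y_i \, h_t(x_i) \big)}{Z_t}
```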

In the context of this research, AdaBoost was deployed to refine prediction accuracy through a progressive training process that emphasizes harder-to-predict cases.

K-nearest neighbors (KNN)

K-Nearest Neighbors, often abbreviated as KNN, is a memory-based approach in machine learning that does not build an explicit model during training and instead relies on the structure of the data itself to make predictions. For a new input sample, the prediction is derived from the k training points closest to it. To identify these neighboring points, the algorithm computes the distance between the input sample and every example in the training set, frequently using Euclidean distance as the similarity measure.
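The Euclidean distance between two samples x and y with n features, using the symbols explained in the next paragraph, is:

```latex
d(x, y) = \sqrt{ \sum_{i=1}^{n} \left( x_i - y_i \right)^2 }
```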

This distance measure is obtained by evaluating the differences between matching attributes of the two samples, x and y, across all n feature dimensions. In this context, xi and yi represent the i-th feature values of the respective data points25,26.
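The neighbor search and prediction step can be illustrated with a minimal Python sketch. It assumes an unweighted average of the k nearest targets for regression, which the text does not specify; the function name `knn_predict` and the toy data are hypothetical and unrelated to the study's measurements or its MATLAB implementation.

```python
import numpy as np

def knn_predict(X_train, y_train, x_query, k=3):
    """Predict a continuous target as the mean of the k nearest training targets."""
    X_train = np.asarray(X_train, dtype=float)
    y_train = np.asarray(y_train, dtype=float)
    x_query = np.asarray(x_query, dtype=float)
    # Euclidean distance from the query point to every training sample
    dists = np.sqrt(((X_train - x_query) ** 2).sum(axis=1))
    # Indices of the k smallest distances
    nearest = np.argsort(dists)[:k]
    return y_train[nearest].mean()

# Toy, made-up data (not from the study)
X_toy = [[0.0, 0.0], [1.0, 0.0], [0.0, 1.0], [5.0, 5.0]]
y_toy = [1.0, 2.0, 3.0, 10.0]
pred = knn_predict(X_toy, y_toy, [0.1, 0.1], k=3)
```

A small k follows local fluctuations closely, while a large k smooths them out, which is why k is treated as a tunable hyperparameter.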

CSA optimization algorithm

CSA is a refinement of the traditional Simulated Annealing (SA) algorithm that offers improvements in both efficiency and robustness. The classical SA method explores the search landscape along a single path and relies on probabilistic rules to occasionally accept inferior solutions, which helps it avoid entrapment in local optima. CSA modifies this scheme by running several searches in parallel and coupling them27,28.

The core strength of CSA lies in its strategic coordination between exploring new regions of the solution space and refining promising candidates. Although each search unit independently proposes candidates, their acceptance is collectively regulated through a system rooted in entropy, which governs the overall randomness, often referred to as “temperature,” during the search. This global synchronization ensures that the system maintains a dynamic equilibrium between randomness and direction. Its versatility has made it a valuable tool in diverse areas such as optimizing machine learning parameters, designing engineering systems, and modeling multifaceted real-world phenomena29.

Collection of data and assessment metrics

Data bank description

The machine learning models in this study were built on laboratory measurements of key physical properties (speed of sound, density, and viscosity) of aqueous aliphatic biogenic polyamine (ABP) solutions under a range of conditions. The dataset comprises 198 experimental observations and includes ABP type, molar mass, molal concentration, and temperature as input parameters. The ABPs investigated were Putrescine dihydrochloride (PUT), Cadaverine dihydrochloride (CAD), and Spermine tetrahydrochloride (SPER), all in aqueous solution. This dataset provides a sound basis for developing and assessing predictive models of speed of sound, density, and viscosity in diverse biological and environmental contexts30. All measurements were conducted under controlled conditions to ensure consistency and accuracy.

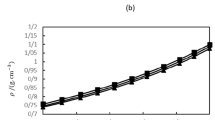

Table 2 summarizes the experimental systems analyzed here, listing the input variables and measurement conditions used to predict the physical properties of aliphatic biogenic polyamine (ABP) solutions. The dataset, comprising 198 observations, includes molar masses ranging from 161.07 to 348.18 g/mol, molal concentrations from 0 to 0.20094 mol/kg, temperatures between 288.15 and 313.15 K, density values from 992.21 to 1017.07 kg/m³, speed of sound values from 1465.96 to 1576.52 m/s, and dynamic viscosity values from 0.6531 to 1.3838 mPa·s. Covering several ABP types, molar masses, concentrations, and temperatures, this dataset provides a reliable basis for developing machine learning models that predict the physical properties of ABP solutions within these experimental ranges.

Pre-processing details

Prior to model development, all data underwent the following preprocessing steps:

a) Outlier Detection: Outliers were identified using the leverage approach with standardized residual analysis and a Williams plot. Observations exceeding the threshold h* = 3(m + 1)/N or having standardized residuals |SDi| > 3 were flagged for review.

b) Normalization: To mitigate scale discrepancies among the variables, an adapted form of min–max scaling was employed, transforming all input and target values into the interval [−1, 1]. This scaling enhanced numerical stability during training and supported more consistent convergence across the different models.

c) Data Splitting: The complete dataset, consisting of 198 observations, was randomly partitioned into 158 samples for training (80%) and 40 samples for testing (20%), enabling an unbiased assessment of each model’s ability to generalize beyond the data used for learning.
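Steps (b) and (c) can be illustrated with a short Python sketch. It is a minimal illustration under stated assumptions rather than the authors' MATLAB code: the helper names, the random seed, and the use of the temperature bounds 288.15 K and 313.15 K as the scaling limits are choices made here for the example.

```python
import numpy as np

def scale_to_unit_interval(x, x_min, x_max):
    """Linearly map values from [x_min, x_max] onto [-1, 1]."""
    return 2.0 * (x - x_min) / (x_max - x_min) - 1.0

def train_test_split_indices(n_samples, train_fraction=0.8, seed=0):
    """Randomly partition sample indices into training and testing subsets."""
    rng = np.random.default_rng(seed)  # seed is an arbitrary choice for reproducibility
    order = rng.permutation(n_samples)
    n_train = int(train_fraction * n_samples)  # 198 samples -> 158 for training
    return order[:n_train], order[n_train:]

# 198 observations -> 158 training and 40 testing samples
train_idx, test_idx = train_test_split_indices(198)

# Example: scale three temperatures using the dataset's reported range
temps = np.array([288.15, 300.65, 313.15])
scaled = scale_to_unit_interval(temps, 288.15, 313.15)
```

Scaling with bounds taken from the full dataset matches the description in the text; taking the bounds from the training subset only is a common alternative that avoids leaking test-set information.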

Indices of model evaluation

Several evaluation indicators were calculated for every machine learning technique in order to assess and compare the predictive performance of the models. The models assessed in this study are K-Nearest Neighbors (KNN), Ensemble Learning (EL), Convolutional Neural Networks (CNN), Adaptive Boosting (AdaBoost), and Multi-Layer Perceptron Artificial Neural Networks (MLP-ANN). These algorithms were used to predict the physical properties (speed of sound, density, and dynamic viscosity) of aqueous solutions of aliphatic biogenic polyamines (ABPs) from four input variables: ABP type, molar mass, molal concentration, and temperature. To measure the accuracy and stability of each model during training and testing, the core indicators R², RMSE, and AARE% were determined31,32,33,34,35:
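The standard definitions of these three indicators are given below, written with the ‘pred’ and ‘exp’ labels, the index i, and the sample count N explained in the next paragraph. The mean symbol with an overbar is notation assumed here, and the authors' exact typesetting may differ.

```latex
\begin{aligned}
R^2 &= 1 - \frac{\sum_{i=1}^{N} \left( X_{\mathrm{exp},i} - X_{\mathrm{pred},i} \right)^2}
                {\sum_{i=1}^{N} \left( X_{\mathrm{exp},i} - \overline{X}_{\mathrm{exp}} \right)^2} \\[4pt]
\mathrm{RMSE} &= \sqrt{ \frac{1}{N} \sum_{i=1}^{N} \left( X_{\mathrm{pred},i} - X_{\mathrm{exp},i} \right)^2 } \\[4pt]
\mathrm{AARE\%} &= \frac{100}{N} \sum_{i=1}^{N} \left| \frac{X_{\mathrm{pred},i} - X_{\mathrm{exp},i}}{X_{\mathrm{exp},i}} \right|
\end{aligned}
```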

In these formulas, the subscript ‘i’ identifies each entry in the dataset. Here, ‘pred’ refers to the predicted values and ‘exp’ to the experimentally measured values of the target properties, namely speed of sound, density, and viscosity of aqueous aliphatic biogenic polyamine (ABP) solutions36,37. ‘N’ is the total number of entries used in the analysis, which comprises 198 laboratory measurements of these properties38,39.
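A minimal Python sketch of the three indicators follows, assuming the standard definitions of R², RMSE, and AARE%. The function names and the three toy density-like values are hypothetical and are not taken from the study.

```python
import numpy as np

def r_squared(exp, pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    exp, pred = np.asarray(exp, dtype=float), np.asarray(pred, dtype=float)
    ss_res = np.sum((exp - pred) ** 2)
    ss_tot = np.sum((exp - exp.mean()) ** 2)
    return 1.0 - ss_res / ss_tot

def rmse(exp, pred):
    """Root mean square error, in the units of the target property."""
    exp, pred = np.asarray(exp, dtype=float), np.asarray(pred, dtype=float)
    return np.sqrt(np.mean((pred - exp) ** 2))

def aare_percent(exp, pred):
    """Average absolute relative error, expressed as a percentage."""
    exp, pred = np.asarray(exp, dtype=float), np.asarray(pred, dtype=float)
    return 100.0 * np.mean(np.abs((pred - exp) / exp))

# Toy, made-up values (not from the study)
exp_vals = [1000.0, 1010.0, 1020.0]
pred_vals = [1001.0, 1009.0, 1020.0]
r2 = r_squared(exp_vals, pred_vals)
err = rmse(exp_vals, pred_vals)
aare = aare_percent(exp_vals, pred_vals)
```

RMSE carries the units of the property being predicted, so values for density, speed of sound, and viscosity are not directly comparable with each other, whereas R² and AARE% are dimensionless.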

The predictive models were developed using ABP type, molar mass, molal concentration, and temperature as inputs, with density, speed of sound, and viscosity as the target outputs. To support effective training and reliable validation, the full set of observations was randomly partitioned into training and testing subsets, with 80% (158 samples) used for model fitting and the remaining 20% (40 samples) reserved for independent evaluation.

To bring the input and output variables onto a common scale, a modified min–max scaling approach was applied before model training, rescaling each variable to the interval [−1, 1]. By preventing variables with large magnitudes from dominating the others, this preprocessing step supports more stable training and improves the reliability of the predictions across the machine learning models. The normalization is defined as:
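A min–max mapping onto [−1, 1] that is consistent with the symbols xN, x, Xmax, and Xmin defined in the following paragraph takes the form below. This is the standard expression for such a mapping and is given here as a reconstruction, not a quotation of the authors' equation.

```latex
x_N = 2 \, \frac{x - X_{\min}}{X_{\max} - X_{\min}} - 1
```

With this form, x = Xmin maps to −1 and x = Xmax maps to +1.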

In this formula, xN denotes the normalized value, x the raw input, and Xmax and Xmin the maximum and minimum values in the dataset, respectively. Rescaling every variable to the common interval [−1, 1] across all 198 measurements of density, speed of sound, and viscosity keeps the inputs on a consistent scale, which supports the reliability and accuracy of the predictive models developed here.

Results and analysis

Outlier detection

The leverage approach is employed to identify outliers by combining the assessment of standardized residuals and leverage scores for each data point. The primary equation defines the discrepancy Di as follows40,41:
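Following the sign convention described in the next paragraph (predicted value minus experimental value), the discrepancy can be written as:

```latex
D_i = X_{\mathrm{Pred},i} - X_{\mathrm{Exp},i}
```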

Here, the residual Di of the i-th observation is obtained by subtracting the experimental value (XExp, i) from the predicted value (XPred, i) of the property in question: density, speed of sound, or viscosity of the aqueous aliphatic biogenic polyamine (ABP) solution. This discrepancy represents the prediction error for each record in the dataset. The standardized residual (SDi) takes the form given in the equation below:
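One commonly used form of the standardized residual, consistent with the description that follows (the discrepancy divided by the residual standard deviation, adjusted by the leverage hi), is sketched below. The exact expression used by the authors is not recoverable from the text, so this should be read as an assumed form; the summation index j is notation introduced here.

```latex
SD_i = \frac{D_i}{\sqrt{ \dfrac{1}{N} \sum_{j=1}^{N} D_j^{2} } \; \sqrt{ 1 - h_i }}
```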

In this formulation, the standardized residual SDi represents the normalized deviation of the i-th observation. It is computed from the previously defined discrepancy Di, the total number of data points N (198 in this study), and the leverage value hi, which quantifies the influence of that observation based on the hat matrix. The denominator scales the discrepancy by the standard deviation of the residuals, adjusted by the leverage value hi, a metric that gauges each observation’s influence on the model fit. The critical leverage limit h* is given by h* = 3(m + 1)/N, where m is the number of input variables (4 here: ABP type, molar mass, molal concentration, and temperature) and N is the total number of data points. For the current dataset, h* = 3(4 + 1)/198 = 0.0758. Observations with hi greater than h* are treated as high-leverage (influential) points, while observations whose standardized residuals fall outside the usual bounds (typically |SDi| > 3) are treated as outliers. Figure 2 shows the resulting Williams plots for the density, speed of sound, and dynamic viscosity data of aqueous ABP solutions. Overall, this evaluation supports the suitability of the dataset for predicting the key properties of ABP solutions and for examining the factors that govern density, speed of sound, and viscosity in these systems.
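The leverage calculation can be sketched in Python as follows. This is a minimal illustration, not the authors' MATLAB code: it assumes the hat matrix is built directly from a numeric input matrix (so ABP type would need a numeric encoding), and it uses synthetic random inputs in place of the real dataset.

```python
import numpy as np

def leverage_values(X):
    """Diagonal of the hat matrix H = X (X^T X)^(-1) X^T."""
    X = np.asarray(X, dtype=float)
    H = X @ np.linalg.pinv(X.T @ X) @ X.T
    return np.diag(H)

def critical_leverage(n_inputs, n_samples):
    """Warning leverage h* = 3 (m + 1) / N."""
    return 3.0 * (n_inputs + 1) / n_samples

# Threshold for m = 4 inputs and N = 198 observations (about 0.0758)
h_star = critical_leverage(4, 198)

# Synthetic stand-in for the scaled inputs (not the study's data)
rng = np.random.default_rng(0)
X_demo = rng.uniform(-1.0, 1.0, size=(198, 4))
h = leverage_values(X_demo)

# Indices of observations whose leverage exceeds the threshold
high_leverage = np.where(h > h_star)[0]
```

In a Williams plot, each observation is drawn at (hi, SDi); points to the right of h* or outside the band |SDi| ≤ 3 are the ones flagged for review.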

Detection of outliers using the leverage method for (a) density, (b) speed of sound, and (c) viscosity data.

Sensitivity analysis

This part of the study examines how the four input variables, namely ABP type, molar mass, molal concentration, and temperature, affect the physical properties of aqueous aliphatic biogenic polyamine (ABP) solutions, and it quantifies the relative importance of each. The relevance of these inputs is assessed using correlation measures, which provides insight into their influence within the predictive models built on the 198 laboratory measurements42.

The Monte Carlo simulation technique, valued for its simplicity and transparency, is applied in the present study to gauge the relative effects of the input variables on the density, speed of sound, and viscosity of aliphatic biogenic polyamine (ABP) solutions. By systematically sampling from a wide range of possible input values, this approach accounts for uncertainty and allows the variation in the outputs to be examined directly, without requiring a separate surrogate model. In this context, the predictive model takes several input variables, as detailed below42:

For every predictor variable (x), the interval and probabilistic traits are specified and applied to create a thorough collection of randomized samples:
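Using k for the number of samples and n for the number of input variables, as defined in the next paragraph, the sample set can be arranged as a k × n matrix. The layout below is an assumed but conventional way of writing it, with x_{ij} denoting the value of the j-th input in the i-th sample.

```latex
X =
\begin{bmatrix}
x_{11} & x_{12} & \cdots & x_{1n} \\
x_{21} & x_{22} & \cdots & x_{2n} \\
\vdots & \vdots & \ddots & \vdots \\
x_{k1} & x_{k2} & \cdots & x_{kn}
\end{bmatrix}
```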

In this formula, k is the total number of generated samples and n is the number of input variables. At this stage, a range of sampling techniques, including simple random sampling, importance sampling, and Latin hypercube sampling (LHS), can be used to assemble the input dataset. Next, the predictive model processes each set of sampled inputs to yield the corresponding outputs:

From the k output values obtained in this way, the expected value and variance of the response are then estimated:

In the given setup, the symbols E and V denote expected value and variance, in that order. The evaluation of sensitivity then proceeds, leveraging the linkage between inputs and outputs as specified in Eq. (19). For illustrating such linkages, among the array of available techniques, the creation of scatter plots stands out as a notably powerful and accessible choice:
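The sampling-and-evaluation loop described in this section can be sketched in Python as follows. The sketch assumes uniform random sampling and uses the Pearson correlation between each input and the output as the association measure; both are choices made here for illustration, since the text does not fix the sampling scheme or the exact sensitivity index. The function `toy_model` is a hypothetical stand-in for a trained predictor.

```python
import numpy as np

def monte_carlo_sensitivity(model, lower, upper, k=2000, seed=0):
    """Sample k input vectors, evaluate the model, and summarize the outputs."""
    rng = np.random.default_rng(seed)
    lower = np.asarray(lower, dtype=float)
    upper = np.asarray(upper, dtype=float)
    # k x n matrix of inputs drawn uniformly between the given bounds
    X = rng.uniform(lower, upper, size=(k, lower.size))
    # One model evaluation per sampled input vector
    y = np.array([model(row) for row in X])
    mean_y = y.mean()        # estimate of the expected value E
    var_y = y.var(ddof=1)    # sample estimate of the variance V
    # Pearson correlation between each input column and the output
    corr = np.array([np.corrcoef(X[:, j], y)[0, 1] for j in range(X.shape[1])])
    return mean_y, var_y, corr

def toy_model(x):
    # Hypothetical response: strongly driven by x[0], weakly by x[1]
    return 3.0 * x[0] + 0.5 * x[1]

mean_y, var_y, corr = monte_carlo_sensitivity(toy_model, [-1.0, -1.0], [1.0, 1.0])
```

In this toy case the first input dominates the output, so its correlation with the response is much larger than that of the second input; ranking inputs by the magnitude of such a measure is the basic idea behind the sensitivity comparison.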

Figure 3 presents a sensitivity assessment that gauges the relative effects of the four input variables, namely ABP type, molar mass, molal concentration, and temperature, on the speed of sound, density, and viscosity of aqueous aliphatic biogenic polyamine (ABP) solutions, obtained by combining Monte Carlo simulations with the predictive models built in this study. The Monte Carlo technique, which is well suited to handling uncertainty, systematically samples the input ranges to measure their influence on the predicted properties. The outcomes are illustrated using a correlation matrix or an equivalent graphical tool, which highlights both the magnitude and the direction of the association between each input variable and the corresponding predicted property.

For density, the analysis identifies ABP type as the most influential input, with a correlation coefficient of 3.8476, followed by molar mass (3.7618), molal concentration (3.05843), and temperature (2.99643). For speed of sound, molar mass is the most significant, with a correlation coefficient of 4.0963, followed by ABP type (3.9514), molal concentration (3.37512), and temperature (3.1459). For dynamic viscosity, ABP type exerts the greatest influence, with a correlation coefficient of 4.2576, followed by molar mass (4.0029), temperature (3.6714), and molal concentration (3.5783). These results, based on 198 laboratory measurements, highlight the interplay among the input variables and position ABP type and molar mass as the dominant factors shaping the physical properties of aliphatic biogenic polyamine (ABP) solutions. The Monte Carlo method strengthens the credibility of this evaluation by systematically accounting for variation in the inputs. As a practical aid, Fig. 3 can help guide work in settings where precise control of density, speed of sound, and viscosity is important for biological and industrial applications.

Assessment of the factors affecting (a) density, (b) speed of sound, and (c) viscosity variations utilizing the created predictive algorithms and Monte Carlo simulation.

Model construction

All machine learning models and optimization algorithms were implemented using MATLAB R2021a. While most of the code was developed in-house, certain built-in functions from MATLAB Toolboxes (e.g., Statistics and Machine Learning Toolbox, Optimization Toolbox) were utilized. Figure 4 illustrates the optimization procedure for determining the optimal number of base estimators, a critical hyperparameter in the Adaptive Boosting (AdaBoost) model that significantly affects the ensemble’s learning capacity and predictive performance. The figure shows how key evaluation metrics, such as the coefficient of determination (R²) and error-based indicators like RMSE and AARE%, vary as the number of base estimators changes. These assessments were performed using the normalized dataset of 198 observations describing density, speed of sound, and viscosity for aqueous aliphatic biogenic polyamine (ABP) solutions. From this optimization procedure, the most effective numbers of estimators were determined to be 18 for density, 11 for speed of sound, and 10 for viscosity. These selections provide a balanced trade-off between model capacity and the ability to generalize, allowing AdaBoost to strengthen the predictive contribution of its weak learners while reducing the risk of overfitting.

Optimal hyperparameter settings for the AdaBoost model, showing the selected number of base estimators for predicting (a) density, (b) speed of sound, and (c) viscosity.

Figure 5 illustrates the optimization of the number of neighbors (K), a pivotal hyperparameter in the K-Nearest Neighbors (KNN) model that significantly influences predictive accuracy and model complexity. The figure presents performance curves relating the coefficient of determination (R²) to the K values evaluated during the training and testing phases on the 198-point dataset of speed of sound, density, and dynamic viscosity for aqueous solutions of aliphatic biogenic polyamines (ABPs). The optimal K values were determined as 13 for density, 18 for speed of sound, and 16 for viscosity. These settings provide a practical compromise between capturing subtle, non-linear trends in the dataset, such as the influence of molal concentration within the 0–0.20094 mol/kg range, and preventing the model from fitting random fluctuations in the data. With this configuration, the KNN algorithm can use its distance-based structure to deliver accurate predictions of the thermophysical properties of ABP solutions while maintaining good generalization across the experimental conditions.

Optimal K values determined for the KNN model in predicting (a) density, (b) speed of sound, and (c) viscosity.

Figure 6 illustrates the tuning process used to identify the optimal number of neurons in the second hidden layer of the MLP-ANN architecture, an essential hyperparameter that directly shapes the model’s ability to learn complex relationships and deliver accurate predictions. In this study, the neural network is composed of an input layer followed by a first hidden layer with 20 neurons that uses a tansig activation function to capture nonlinear patterns. The second hidden layer, whose size was systematically adjusted during optimization, also uses the tansig transfer function and extracts more refined features from the data. The network is completed with an output layer consisting of a single neuron with a purelin activation function to generate continuous-valued predictions. Through hyperparameter optimization, the most effective number of neurons in the second hidden layer was determined to be 15 for density, 11 for speed of sound, and 9 for viscosity. These configurations yielded the smallest prediction errors when evaluated on the scaled dataset of 198 measurements of density, speed of sound, and viscosity for aqueous solutions of aliphatic biogenic polyamines. This optimized configuration improves the MLP-ANN model’s ability to predict the physical properties of ABP solutions while maintaining good generalization across the experimental conditions.

Optimal number of neurons in the hidden layer of the MLP-ANN model for predicting (a) density, (b) speed of sound, and (c) viscosity.
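The layer structure described above (inputs, 20 tansig neurons, a tunable tansig layer, one purelin output) can be sketched as a forward pass. The weights here are random placeholders rather than trained values, the four-element input (a numeric ABP code, molar mass, molal concentration, temperature, all scaled) is an assumption about the encoding, and the function names are hypothetical.

```python
import math
import random

def tansig(x):
    """Hyperbolic tangent sigmoid; mathematically identical to tanh(x)."""
    return 2.0 / (1.0 + math.exp(-2.0 * x)) - 1.0

def purelin(x):
    """Linear (identity) transfer function for the output neuron."""
    return x

def dense(inputs, weights, biases, activation):
    """One fully connected layer: activation(W @ inputs + b)."""
    return [activation(sum(w * v for w, v in zip(row, inputs)) + b)
            for row, b in zip(weights, biases)]

def init_layer(n_out, n_in, rng):
    """Random placeholder weights and biases (untrained)."""
    weights = [[rng.uniform(-0.5, 0.5) for _ in range(n_in)] for _ in range(n_out)]
    biases = [rng.uniform(-0.5, 0.5) for _ in range(n_out)]
    return weights, biases

def build_network(n_inputs, n_hidden2, seed=0):
    """Layers: n_inputs -> 20 (tansig) -> n_hidden2 (tansig) -> 1 (purelin)."""
    rng = random.Random(seed)
    return [
        (*init_layer(20, n_inputs, rng), tansig),
        (*init_layer(n_hidden2, 20, rng), tansig),
        (*init_layer(1, n_hidden2, rng), purelin),
    ]

def forward(network, x):
    """Propagate one scaled input vector through all layers."""
    out = list(x)
    for weights, biases, activation in network:
        out = dense(out, weights, biases, activation)
    return out[0]

# Second hidden layer sized as reported for density (15 neurons).
density_net = build_network(n_inputs=4, n_hidden2=15)
prediction_scaled = forward(density_net, [0.0, -1.0, 0.5, 1.0])
```

Changing `n_hidden2` to 11 or 9 gives the layer sizes reported for speed of sound and viscosity; only that one dimension differs between the three networks.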

The EL approach adopted in this work combines three complementary algorithms, SVM, Decision Tree, and KNN, operating in parallel to improve the predictive performance for the thermophysical characteristics of aqueous ABP solutions. In this setup, the KNN component uses the Euclidean distance measure, with its optimal number of neighbors determined as six for density, five for speed of sound, and seven for viscosity. The SVM component uses an RBF kernel, likewise based on Euclidean distance, and its key hyperparameters were tuned to balance model expressiveness and generalization. The optimal settings were a regularization constant of C = 120, an epsilon of 0.001, and a gamma value of 0.02, enabling the SVM to model non-linear relationships without overfitting. Separately, the convolutional neural network (CNN) was configured with property-specific architectures: for density, it comprised two convolutional layers, one pooling layer, and a fully connected neural network; for speed of sound and viscosity, it included three convolutional layers, one pooling layer, and a fully connected neural network. These CNN structures were designed to capture and represent complex patterns within the 198-point dataset, enhancing the prediction of ABP solution properties.
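One way to read the parallel combination above is as a weighted sum of the base-learner predictions, using the w_t and h_t(x) notation from the abbreviations list. The sketch below assumes normalized weights and uses constant stand-in learners; how the study actually weighted SVM, Decision Tree, and KNN is not stated in this section, so the inverse-RMSE weighting shown is only one plausible choice.

```python
def ensemble_predict(models, weights, x):
    """Weighted average of base-learner predictions: sum(w_t * h_t(x)) / sum(w_t)."""
    total = sum(weights)
    return sum(w * model(x) for model, w in zip(models, weights)) / total

def inverse_error_weights(rmses):
    """Assumed weighting scheme: weight each learner by 1/RMSE, normalized to sum to 1."""
    inverse = [1.0 / r for r in rmses]
    total = sum(inverse)
    return [v / total for v in inverse]

# Hypothetical stand-ins for trained SVM, Decision Tree, and KNN regressors.
svm_like = lambda x: 1.0
tree_like = lambda x: 2.0
knn_like = lambda x: 4.0

combined = ensemble_predict([svm_like, tree_like, knn_like], [1, 1, 2], x=None)
weights = inverse_error_weights([1.0, 2.0, 4.0])
```

Because the learners run independently, each one can be trained and tuned on its own before the combination step is applied.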

Tables 2, 3 and 4 present a detailed comparison of the predictive performance of the five machine learning models used to estimate density, speed of sound, and dynamic viscosity in aqueous aliphatic biogenic polyamine (ABP) systems. Model accuracy was quantified using three indicators: R², RMSE, and AARE%. These metrics were computed separately for the training subset of 158 samples, the testing subset of 40 samples, and the full dataset of 198 experimental observations. All model hyperparameters were tuned with the CSA technique to improve accuracy and limit overfitting. The tables show a clear ranking of the models for each property. For density, the MLP-ANN model achieved the highest accuracy, reaching an R² of 0.997800542 over the full dataset together with the smallest RMSE (0.344613659) and AARE% (0.025414597). These results highlight its capacity to represent the complex relationships among the key predictors, namely ABP type, molar mass (161.07–348.18 g/mol), molal concentration (0–0.20094 mol/kg), and temperature (288.15–313.15 K). The KNN model followed closely, with an R² of 0.997755089, while CNN and EL also showed strong performance, maintaining R² values above 0.992. For speed of sound, the EL model outperformed the others, with an R² of 0.99825565, RMSE of 1.451654798, and AARE% of 0.072083185, reflecting its robustness in modeling acoustic properties. For dynamic viscosity, the MLP-ANN model again led, achieving an R² of 0.999540903, RMSE of 0.005566581, and AARE% of 0.479200074, with AdaBoost and CNN also performing very well, with R² values above 0.998. Although the AdaBoost model delivers solid results, its accuracy for some properties trailed marginally behind MLP-ANN and EL, while remaining within dependable limits.
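For reference, the three indicators can be computed as follows. The definitions are the standard ones (with AARE% taken as the mean of absolute relative deviations, in percent); the sample values are made up for illustration and are not taken from Tables 2, 3 and 4.

```python
import math

def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_y = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_y) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot

def rmse(y_true, y_pred):
    """Root mean square error, in the units of the target property."""
    n = len(y_true)
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / n)

def aare_percent(y_true, y_pred):
    """Average absolute relative error, in percent of the experimental value."""
    n = len(y_true)
    return 100.0 / n * sum(abs((p - t) / t) for t, p in zip(y_true, y_pred))

# Illustrative values only (density-like magnitudes in kg/m³).
experimental = [1000.0, 1010.0, 990.0]
predicted = [1001.0, 1009.0, 990.0]

r2 = r_squared(experimental, predicted)
err = rmse(experimental, predicted)
aare = aare_percent(experimental, predicted)
```

RMSE depends on the magnitude of the property, which is why the reported RMSE for speed of sound is far larger than for viscosity even though both fits have R² above 0.998; AARE% removes that scale dependence.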

Figure 7a–c reinforce the observations in Tables 2, 3 and 4 with graphical comparisons of predictive performance in the testing phase, showing the R², RMSE, and AARE% values of each model for density (Fig. 7a), speed of sound (Fig. 7b), and viscosity (Fig. 7c). These plots underscore the high predictive accuracy of the MLP-ANN model for density and viscosity and of the EL model for speed of sound, each giving the closest agreement between predicted and experimental values among the 40 testing points. They also show slightly higher error levels for models such as AdaBoost and KNN in some cases when compared with MLP-ANN and EL, highlighting the advantages of neural-network and ensemble strategies for modeling complex property behavior.

Taken together, the data in Tables 2, 3 and 4 and Fig. 7a–c confirm that advanced machine learning approaches, most notably MLP-ANN and EL, deliver accurate predictions of the thermophysical properties of aliphatic biogenic polyamine (ABP) solutions. These results illustrate the promise of CSA-optimized predictive models as reliable and efficient alternatives to conventional laboratory measurements, providing practical tools for biochemical and industrial processes that require close control of density, speed of sound, and viscosity.

RMSE, R-squared and AARE% for all created models in this paper (testing phase).

Figure 8a–c display CDFs of the AARE% for each predictive model, offering a comprehensive view of how accurately the algorithms estimate the principal thermophysical properties (density, speed of sound, and dynamic viscosity) of aqueous solutions of aliphatic biogenic polyamines (ABPs). These CDF curves reveal which models deliver the highest accuracy for each physical property. Models whose curves reach near-complete cumulative frequency at very low AARE% values demonstrate strong predictive capability and an ability to represent the influences of variables such as ABP identity, molar mass (161.07–348.18 g/mol), molal concentration (0–0.20094 mol/kg), and temperature (288.15–313.15 K). For density (Fig. 8a), the MLP-ANN exhibits the best performance, achieving an AARE% of 0.025414597, with the KNN model ranking closely behind, indicating highly reliable estimation accuracy for this property. For speed of sound (Fig. 8b), the Ensemble Learning (EL) model performs best with an AARE% of 0.072083185, showing its robustness in modeling acoustic properties. For dynamic viscosity (Fig. 8c), MLP-ANN again leads with an AARE% of 0.479200074, with Adaptive Boosting (AdaBoost) and Convolutional Neural Network (CNN) also performing strongly. In contrast, models such as AdaBoost and KNN exhibit slightly broader error distributions for certain properties, indicating relatively lower precision in those cases. This visual assessment highlights the capability of advanced machine learning approaches, especially MLP-ANN and EL, to capture the intricate, nonlinear behavior of ABP solution properties. Their demonstrated accuracy supports their use in biochemical and industrial settings where reliable prediction of density, speed of sound, and viscosity plays a vital role in process design, monitoring, and optimization.

CDF of ARE% for artificial intelligence models predicting (a) density, (b) speed of sound, and (c) viscosity.
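A cumulative distribution of this kind can be built from the per-point absolute relative errors, as sketched below. The error values are illustrative only, and the helper names are hypothetical; the sketch assumes the plotted quantity is the per-point absolute relative error named in the caption.

```python
def absolute_relative_errors(y_true, y_pred):
    """Per-point absolute relative error, in percent."""
    return [100.0 * abs((p - t) / t) for t, p in zip(y_true, y_pred)]

def empirical_cdf(values):
    """Return (value, cumulative fraction) pairs, sorted by value."""
    ordered = sorted(values)
    n = len(ordered)
    return [(v, (i + 1) / n) for i, v in enumerate(ordered)]

def fraction_below(values, threshold):
    """Share of points whose error does not exceed the threshold."""
    return sum(v <= threshold for v in values) / len(values)

# Illustrative per-point errors in percent (not the paper's results).
errors = [0.1, 0.4, 0.2, 0.3]
cdf = empirical_cdf(errors)
share = fraction_below(errors, 0.25)
```

A model whose curve rises to 1.0 at a small error value has nearly all of its predictions within that error, which is the reading applied to Fig. 8a–c.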

Figures 9a–c, 10, 11, 12 and 13a–c display crossplots comparing predicted and experimental values of speed of sound, density, and dynamic viscosity for aqueous solutions of ABPs, obtained with five machine learning models: KNN, EL, CNN, AdaBoost, and MLP-ANN. Derived from 198 laboratory measurements, partitioned into 158 points for training and 40 for testing, these crossplots serve as graphical tools for assessing the predictive accuracy of each model, where ideal predictions appear as points lying on the 45-degree reference line. The dataset includes three ABPs, Putrescine dihydrochloride (PUT), Cadaverine dihydrochloride (CAD), and Spermine tetrahydrochloride (SPER), with molar masses ranging from 161.07 to 348.18 g/mol, molal concentrations from 0 to 0.20094 mol/kg, temperatures from 288.15 to 313.15 K, density values from 992.21 to 1017.07 kg/m³, speed of sound values from 1465.96 to 1576.52 m/s, and dynamic viscosity values from 0.6531 to 1.3838 mPa·s. Collectively, Figs. 9a–c, 10, 11, 12 and 13a–c provide a thorough visual evaluation of the models’ predictive capabilities. For density (Figs. 9a, 10, 11, 12 and 13a), the MLP-ANN and KNN models exhibit high accuracy, with data points tightly clustered along the 45-degree line, reflecting their high R² values (0.997800542 and 0.997755089, respectively). For speed of sound (Figs. 9b, 10, 11, 12 and 13b), the EL model performs best, achieving an R² of 0.99825565, with close alignment of predicted and experimental values. For dynamic viscosity (Figs. 9c, 10, 11, 12 and 13c), the MLP-ANN model again leads, with an R² of 0.999540903, followed closely by AdaBoost and CNN. Models such as AdaBoost and KNN show slightly wider scatter for certain properties, indicating marginally lower precision in those cases.
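The 158/40 partition mentioned above can be reproduced in form with a seeded shuffle of the point indices. Random assignment and the seed value are assumptions for illustration; this section does not state how the points were allocated.

```python
import random

def split_indices(n_total, n_train, seed=0):
    """Shuffle indices reproducibly and return (train, test) index lists."""
    indices = list(range(n_total))
    random.Random(seed).shuffle(indices)
    return sorted(indices[:n_train]), sorted(indices[n_train:])

# 198 measurements: 158 for training, 40 for testing (about an 80/20 split).
train_idx, test_idx = split_indices(198, 158)
```

Fixing the seed keeps the same points in each subset across runs, so the training and testing markers in the crossplots refer to a stable partition.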

The crossplots confirm the robustness of the 198-point dataset and the effectiveness of the min-max scaling step, which rescaled the input and output values uniformly to the [−1, 1] interval and thereby supported stable and accurate training. Overall, these plots emphasize the ability of the MLP-ANN and EL models to represent the complex, nonlinear relationships connecting the input variables (ABP type, molar mass, molal concentration, and temperature) to the thermophysical properties of aqueous aliphatic biogenic polyamine (ABP) solutions, indicating their promise for process optimization, product formulation, and biochemical research.
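The rescaling to [−1, 1] can be written as x_N = 2 (x − X_min) / (X_max − X_min) − 1, using the x_N, X_max, and X_min symbols from the abbreviations list. The sketch below assumes this common form of min-max scaling and shows its inverse, which is needed to convert scaled predictions back to physical units; the function names are hypothetical.

```python
def scale_to_unit_range(x, x_min, x_max):
    """Map x from [x_min, x_max] to [-1, 1]."""
    return 2.0 * (x - x_min) / (x_max - x_min) - 1.0

def unscale_from_unit_range(x_n, x_min, x_max):
    """Inverse mapping from [-1, 1] back to physical units."""
    return (x_n + 1.0) / 2.0 * (x_max - x_min) + x_min

# Temperature range reported for the dataset, in kelvin.
T_MIN, T_MAX = 288.15, 313.15

lowest = scale_to_unit_range(288.15, T_MIN, T_MAX)
highest = scale_to_unit_range(313.15, T_MIN, T_MAX)
roundtrip = unscale_from_unit_range(scale_to_unit_range(298.15, T_MIN, T_MAX), T_MIN, T_MAX)
```

Scaling with the minimum and maximum of the training data keeps every input on the same footing, which matters for distance-based models such as KNN and for the tansig layers of the MLP-ANN.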

Crossplots comparing predicted and experimental values for all data segments using the KNN model.

Crossplots showing predicted versus experimental values across all data segments for the Ensemble Learning model.

Crossplots illustrating the relationship between predicted and actual values for both training and testing datasets using the Adaptive Boosting model.

Crossplots depicting predicted versus experimental values for the training and testing sets generated by the CNN model.

Crossplots illustrating the comparison between predicted and experimental values for both the training and testing sets using the MLP-ANN model.

Figures 14a–c, 15, 16, 17 and 18a–c illustrate the percentage relative errors observed in the training and testing phases for the five models (KNN, EL, CNN, AdaBoost, and MLP-ANN) used to estimate the speed of sound, density, and viscosity of aqueous ABP solutions. Within the 198-point dataset (158 for training and 40 for testing), points clustering around the y = 0 line indicate higher predictive accuracy. For density (Figs. 14a, 15, 16, 17 and 18a), the MLP-ANN model shows the most compact error distribution (AARE% = 0.025414597, R² = 0.997800542), followed closely by KNN (AARE% = 0.026013582, R² = 0.997755089). For speed of sound (Figs. 14b, 15, 16, 17 and 18b), EL gives the tightest spread (AARE% = 0.072083185, R² = 0.99825565), with AdaBoost and CNN also performing well. For viscosity (Figs. 14c, 15, 16, 17 and 18c), MLP-ANN again leads (AARE% = 0.479200074, R² = 0.999540903), followed by AdaBoost and CNN. By comparison, models such as AdaBoost and KNN display wider error distributions for specific properties, suggesting marginally lower accuracy in those cases. These plots show the ability of MLP-ANN and EL to model the influence of the input variables (ABP type, molar mass from 161.07 to 348.18 g/mol, molal concentration from 0 to 0.20094 mol/kg, and temperature between 288.15 and 313.15 K) on the properties of ABP solutions, supporting their use in process optimization, product formulation, and biochemical engineering.

Percentage of relative error for the training and testing segments of the KNN model.

Percentage of relative error for the training and testing segments of the EL model.

Percent of relative error for CNN model in training and testing segments.

Percentage of relative error for the training and testing portions of the AdaBoost model.

Relative percentage of error for the training and testing segment of the MLP-ANN model.

Industrial applications

The machine learning algorithms created in this research offer immediate utility in biopharmaceutical, biochemical, and green chemical industries where aliphatic biogenic polyamines (ABPs) such as putrescine, cadaverine, and spermine serve as key intermediates or functional agents. Accurate prediction of density and dynamic viscosity is essential for the design and operation of unit processes including mixing, pumping, filtration, and heat exchange, where fluid behavior directly impacts energy consumption, equipment sizing, and product consistency. By replacing time-intensive experimental measurements with instant, high-accuracy predictions based on easily accessible inputs (ABP identity, concentration, temperature, and molar mass), these models enable rapid formulation screening, accelerate process development cycles, and support quality-by-design approaches in regulated manufacturing environments.

In biorefineries producing bio-based polyamides or specialty chemicals from renewable feedstocks, real-time knowledge of thermophysical properties is critical during fermentation, separation, and purification stages. For instance, the speed of sound, predicted with high fidelity by the developed models, can be leveraged for in-line ultrasonic monitoring of ABP concentration and solution homogeneity, enabling non-invasive process control. Similarly, viscosity predictions inform the design of downstream operations such as evaporation, crystallization, and membrane separation, where deviations in fluid rheology can lead to fouling or reduced throughput. Integration of these models into digital twin frameworks or process simulation platforms allows engineers to simulate and optimize entire production workflows under varying operational conditions without reliance on sparse empirical correlations.

From a deployment perspective, the models are highly compatible with industrial digital infrastructure. Trained MLP-ANN and EL models can be exported in lightweight, standardized formats and embedded into existing control systems, cloud-based analytics platforms, or edge devices for on-site inference. Their low computational demand and dependence only on measurable or design-specified parameters make them ideal for soft-sensing applications in resource-constrained or hazardous environments. Furthermore, the inclusion of sensitivity analysis via Monte Carlo methods provides insight into prediction reliability across input domains, supporting risk-aware decision-making. As industries increasingly adopt data-driven process intensification strategies, this modeling framework represents a scalable, cost-effective tool for advancing sustainable and efficient production of bio-based chemicals.

Conclusions

This research developed and validated a set of predictive models based on advanced machine learning techniques, namely KNN, EL, CNN, AdaBoost, and MLP-ANN, to predict the speed of sound, density, and dynamic viscosity of aqueous solutions of aliphatic biogenic polyamines (ABPs). An experimental dataset of 198 points was used, covering the key input variables ABP type, molar mass (161.07–348.18 g/mol), molal concentration (0–0.20094 mol/kg), and temperature (288.15–313.15 K). The main objective was to create reliable predictive tools for these properties across diverse biochemical conditions. Tuning the hyperparameters with the CSA method substantially improved predictive performance. Evaluations comprising correlation analyses, crossplots, cumulative error distributions, and relative error analyses identified the best-performing model for each property. For density, MLP-ANN performed best, attaining an R² of 0.997800542, RMSE of 0.344613659, and AARE% of 0.025414597, with KNN close behind (R² = 0.997755089, AARE% = 0.026013582). For speed of sound, EL performed best, with an R² of 0.99825565, RMSE of 1.451654798, and AARE% of 0.072083185. For viscosity, MLP-ANN again outperformed the other models, with an R² of 0.999540903, RMSE of 0.005566581, and AARE% of 0.479200074. These results demonstrate the models’ ability to capture complex relationships in ABP solution properties.

Sensitivity analysis conducted via Monte Carlo simulations revealed the relative influence of the input variables. For density, ABP type had the most significant influence (correlation coefficient = 3.8476), followed by molar mass (3.7618), molal concentration (3.05843), and temperature (2.99643). For speed of sound, molar mass was most influential (4.0963), followed by ABP type (3.9514), molal concentration (3.37512), and temperature (3.1459). For dynamic viscosity, ABP type led (4.2576), followed by molar mass (4.0029), temperature (3.6714), and molal concentration (3.5783). These findings provide insight into the key factors driving the physical properties of ABP solutions.

These outcomes underscore the benefits of data-driven predictive techniques compared with conventional laboratory measurements, offering a targeted, efficient, and economical framework for estimating the properties of biochemical solutions. Such methods hold considerable promise for process optimization, product formulation, and manufacturing based on ABP solutions. Future studies could improve predictive reliability by incorporating additional variables, such as solvent mixtures or pressure, thereby broadening applicability to varied biochemical systems.

Although the developed models predict the main thermophysical properties of aqueous ABP solutions with high accuracy, this work has limitations. The dataset is confined to three particular ABPs and to specified temperature and concentration ranges. Greater generality would require additional experimental data covering a wider range of polyamines, solvent environments, and operating conditions, including variations in pressure. Furthermore, although the CSA method tuned the hyperparameters effectively, hybrid optimization approaches or more advanced ensemble modeling could yield further gains. Researchers are encouraged to investigate the addition of molecular descriptors, solvent affinity measures, and pressure effects to extend the relevance of these models to a broader range of biochemical and industrial contexts.
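A Monte Carlo sensitivity study of this kind can be sketched by sampling the inputs over their reported ranges, evaluating a predictor, and relating each input to the output. Everything model-specific here is an assumption: `toy_density_model` is a hypothetical placeholder rather than any of the trained models, ABP type is omitted because its encoding is not given in this section, and the Pearson correlation used below is bounded by ±1, so it is not the measure behind the coefficients reported above and will not reproduce them.

```python
import math
import random

# Input ranges reported for the dataset.
RANGES = {
    "molar_mass": (161.07, 348.18),         # g/mol
    "molal_concentration": (0.0, 0.20094),  # mol/kg
    "temperature": (288.15, 313.15),        # K
}

def toy_density_model(molar_mass, molal_concentration, temperature):
    """Hypothetical placeholder predictor; NOT a model from the study."""
    return (1000.0 + 0.05 * molar_mass * molal_concentration
            - 0.3 * (temperature - 288.15))

def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sd_x = math.sqrt(sum((x - mean_x) ** 2 for x in xs))
    sd_y = math.sqrt(sum((y - mean_y) ** 2 for y in ys))
    return cov / (sd_x * sd_y)

def monte_carlo_sensitivity(model, ranges, n_samples=5000, seed=0):
    """Sample inputs uniformly, evaluate the model, and correlate each input with the output."""
    rng = random.Random(seed)
    names = list(ranges)
    samples = [{name: rng.uniform(*ranges[name]) for name in names}
               for _ in range(n_samples)]
    outputs = [model(**sample) for sample in samples]
    return {name: pearson([s[name] for s in samples], outputs) for name in names}

sensitivity = monte_carlo_sensitivity(toy_density_model, RANGES)
ranking = sorted(sensitivity, key=lambda name: abs(sensitivity[name]), reverse=True)
```

Ranking the inputs by the magnitude of their coefficient gives an ordering of influence comparable in spirit to the one reported for each property.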

Study limitations and future recommendations

This study successfully developed a high-accuracy, data-driven framework for predicting the thermophysical properties of aqueous aliphatic biogenic polyamines (ABPs). However, to guide the correct application and future development of this work, its limitations must be clearly acknowledged. The following points outline the current constraints and the corresponding pathways for advancement.

Limitations

- The models were trained and validated on a dataset encompassing three specific ABPs (putrescine, cadaverine, and spermine), concentrations up to 0.2 mol/kg, and a temperature range of 288.15 to 313.15 K. While this scope is highly relevant for many ambient-condition processes, the predictive performance for other polyamines, higher concentrations, more extreme temperatures, or different pressures cannot be guaranteed and represents a boundary of the current work.

- The framework relies on experimentally accessible variables (e.g., concentration, temperature). While this ensures practical utility, it does not explicitly incorporate fundamental molecular-level interactions, such as specific hydrogen-bonding networks or ion-solvent effects. This limits the model’s innate physical interpretability and its ability to generalize to more complex systems, such as polyamines with divergent structures or mixed-solvent environments, without retraining on new data.

- The model’s accuracy is inherently tied to the quality and scope of the underlying experimental data. As with any machine learning model, predictions in sparsely populated regions of the feature space or near the boundaries of the trained domain carry higher uncertainty.
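The domain boundaries listed above can be enforced in practice with a simple guard that flags queries outside the trained ranges before a prediction is used. This is a minimal sketch: the function name and the short ABP codes as inputs are assumptions, and the limits are the dataset ranges quoted in this paper.

```python
# Trained domain, taken from the dataset ranges reported in this work.
KNOWN_ABPS = {"PUT", "CAD", "SPER"}
CONCENTRATION_RANGE = (0.0, 0.20094)  # mol/kg
TEMPERATURE_RANGE = (288.15, 313.15)  # K

def domain_violations(abp, molal_concentration, temperature):
    """Return a list of reasons the query lies outside the trained domain (empty if inside)."""
    problems = []
    if abp not in KNOWN_ABPS:
        problems.append(f"ABP '{abp}' was not in the training data")
    low, high = CONCENTRATION_RANGE
    if not low <= molal_concentration <= high:
        problems.append(f"concentration {molal_concentration} mol/kg outside [{low}, {high}]")
    low, high = TEMPERATURE_RANGE
    if not low <= temperature <= high:
        problems.append(f"temperature {temperature} K outside [{low}, {high}]")
    return problems

inside = domain_violations("PUT", 0.1, 298.15)
outside = domain_violations("SPD", 0.3, 330.0)
```

A guard like this does not quantify uncertainty near the boundaries, but it prevents silent extrapolation to polyamines, concentrations, or temperatures the models never saw.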

Future recommendations

- A primary and essential direction for future work is to systematically expand the dataset to include a wider variety of ABPs, broader concentration and temperature ranges, the effects of pressure, and the presence of non-aqueous co-solvents. This will be the foundation for developing next-generation models with expanded industrial applicability.

- To enhance generalizability and physical realism, future models should integrate thermodynamic constraints and molecular descriptors. Techniques such as Physics-Informed Neural Networks (PINNs) could be employed to embed known physical laws directly into the learning process, making the predictions more robust and reliable even with limited data.

- Research should explore more sophisticated machine learning architectures, such as graph neural networks, which can naturally encode molecular structure, to handle structurally diverse polyamines without being limited to pre-defined input features.

- The ultimate validation and application of this framework lie in its integration into real-time process control and optimization systems. Future efforts should focus on deploying these models as digital twins or soft sensors within industrial workflows to actively guide bioprocessing and formulation design.

Data availability

Data is available on request from the corresponding author.

Abbreviations

- ABP: Aliphatic Biogenic Polyamines
- PUT: Putrescine dihydrochloride
- CAD: Cadaverine dihydrochloride
- SPER: Spermine tetrahydrochloride
- MLP-ANN: Multi-Layer Perceptron Artificial Neural Network
- CNN: Convolutional Neural Network
- KNN: K-Nearest Neighbors
- EL: Ensemble Learning
- AdaBoost: Adaptive Boosting
- CSA: Coupled Simulated Annealing
- R²: Coefficient of Determination
- RMSE: Root Mean Square Error
- AARE%: Average Absolute Relative Error Percentage
- Di: Residual for i-th data point (difference between predicted and experimental values)
- SDi: Standardized residual
- Hi: Leverage (hat) value for i-th observation
- h*: Leverage threshold
- ρ (rho): Density (kg/m³)
- µ (mu): Dynamic viscosity (mPa·s)
- u: Speed of sound (m/s)
- T: Temperature (K)
- M: Molal concentration (mol/kg)
- M: Molar mass (g/mol)
- xi, yi: Feature values for data point i
- wt: Weight assigned to t-th model in ensemble
- ht(x): Prediction by t-th model for input x
- k: Number of samples (in Monte Carlo / KNN context)
- xN: Normalized value
- Xmax, Xmin: Maximum and minimum values in dataset

Acknowledgements

We acknowledge the support provided by Zarqa University.

Contributions

All authors contributed to this paper.

Ethics declarations

Competing interests

The authors declare no competing interests.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Mohammad, S.I., Owida, H.A., Vasudevan, A. et al. Accurate prediction of density, viscosity, and speed of sound in aqueous aliphatic biogenic polyamine solutions using data-driven modeling. Sci Rep 16, 4635 (2026). https://doi.org/10.1038/s41598-025-34948-7
