Abstract

Although the Transformer architecture has established itself as the industry standard for jobs involving natural language processing, it still has few uses in computer vision. In vision, attention is used in conjunction with convolutional networks or to replace individual convolutional network elements while preserving the overall network design. Differences between the two domains, such as significant variations in the scale of visual things and the higher granularity of pixels in images compared to words in the text, make it difficult to transfer Transformer from language to vision. Masking autoencoding is a promising self-supervised learning approach that greatly advances computer vision and natural language processing. For robust 2D representations, pre-training with large image data has become standard practice. On the other hand, the low availability of 3D datasets significantly impedes learning high-quality 3D features because of the high data processing cost. We present a strong multi-scale MAE prior training architecture that uses a trained ViT and a 3D representation model from 2D images to let 3D point clouds learn on their own. We employ the adept 2D information to direct a 3D masking-based autoencoder, which uses an encoder-decoder architecture to rebuild the masked point tokens through self-supervised pre-training. To acquire the input point cloud’s multi-view visual characteristics, we first use pre-trained 2D models. Next, we present a two-dimensional masking method that preserves the visibility of semantically significant point tokens. Numerous tests demonstrate how effectively our method works with pre-trained models and how well it generalizes to a range of downstream tasks. In particular, our pre-trained model achieved 93.63% accuracy for linear SVM on ScanObjectNN and 91.31% accuracy on ModelNet40. Our approach demonstrates how a straightforward architecture solely based on conventional transformers may outperform specialized transformer models from supervised learning.

Similar content being viewed by others

Introduction

The transformer architecture is becoming increasingly popular for handling various data modalities1. The primary rationale for this is that they reduce the inductive biases inherent in constructing network topologies. Transformers heavily depend on attention mechanisms, particularly self-attention. It is a fundamental computational element that assists a network in comprehending the alignments and hierarchies present in the input data by measuring the interactions between paired entities1,2. The necessity for meticulously crafted inductive biases will be practically eradicated due to these desired qualities. A prominent example is the Transformers model, as introduced by1. The prevailing paradigm, as described in3’s work on BERT, entails using a sizable text corpus for pre-training and a smaller, purpose-built dataset for fine-tuning. The scalability and computational efficiency of transformers have enabled the training of models with more than 100 billion parameters, a size that has never been seen before4,5. Despite the continuous expansion of models and datasets, no evidence of performance reaching its maximum limit exists. Self-supervised learning, a method of learning to represent information from annotated but unlabeled data, has demonstrated significant efficacy in multi-modality learning6,7, CV8,9,10, and NLP11,12. Self-supervised learning utilizes unlabeled data to extract latent features instead of relying on human-defined labels to create representations. Unsupervised learning has greatly enhanced computer vision13,14,15,16 and natural language processing (NLP)12,17 by reducing the dependence on annotated data. 3D reconstruction holds great importance in computer vision and image processing18,19.

2D images have garnered significant attention in recent years due to their limited usage20. The profound influence of three-dimensional images in several domains, including security, robotics, and medicine, has captured the attention of professionals in image processing21. 3D images enable us to achieve actions that are unattainable with 2D images. Although 3D reconstruction22,23 has various applications, face recognition remains challenging due to its limited efficiency and accuracy in matching faces in the 3D domain24,25. CNN has been the predominant method for simulating CV for a significant period. Following the success of AlexNet26 in the ImageNet image-classification task, CNN has evolved to become increasingly powerful through the use of larger scales27,28, longer connections29, and more advanced convolutional forms30,31,32. Vision Transformers (ViTs) have been recently introduced as an alternative to CNN33. ViTs demonstrate exceptional performance in various image perception tasks, such as image classification33, object detection34, and semantic segmentation35, despite the absence of significant domain-specific assumptions, except for the image tokenization process33. These architectural developments have significantly improved the field since CNNs have been employed as backbone networks for various applications. Deep learning architectures have experienced a surge in growth, both in terms of their capacity and competence, as evidenced by the works of1,26. Due to rapid technological progress, models may now easily overfit one million images36 and begin to require hundreds of millions of tagged images, which are often not publicly accessible33. The large amount of image data accessible has attracted a lot of interest in the field of computer vision to pre-train 2D models37,38,39, which has several advantages for different 3D reconstruction tasks40,41,42,43.

Although 2D pre-trained models are widely used, extensive 3D images are still scarce within the 3D world. This can be due to the pricey nature of image collection and the laborious process of annotating. As demonstrated in the studies by10,14,16, Masked autoencoding shows great potential in the domains of both languages and images. An autoencoder is used to reconstruct either explicit characteristics (like pixels) or implicit features (like discrete tokens) associated with the original information that was randomly masked in a portion of the input data. This reconstruction assignment aims to enable the autoencoder to learn and extract complex latent characteristics from unmasked sections since the masked regions provide no relevant data information. As an illustration, to attain cutting-edge results, BERT3 in natural language processing and MAE10 in computer vision employ masked autoencoding. Given that point clouds and images share similar characteristics, masked autoencoding can also be extended to self-supervised learning for point clouds. The complete assemblage of elements constituting global features is merged with local features. Hence, the point cloud can undergo processing akin to languages and images by incorporating point subsets into tokens. Furthermore, masked autoencoding, a self-supervised learning technique44,45, can efficiently handle the substantial data requirements of the transformer architecture because of the comparatively modest size of the point cloud’s datasets, which serves as the central component of the autoencoder. Masked autoencoding, a method inspired by masked language modeling3,11, is employed for self-supervised learning on 2D images. This approach utilizes asymmetric encoder-decoder transformers33, as implemented in MAE10 and other related methods14,16. We are interested in using masked autoencoding to 2D pre-trained vision transformer models to reconstruct 3D representations. When comparing the number of points, ShapeNet has far fewer points than ImageNet and image-text pairs. ShapeNet has 14 million points, while ImageNet has 400 million points. Additionally, ShapeNet only includes 50k point clouds of 55 item types.

Despite efforts to derive self-supervisory signals for 3D pre-training46,47,48, the ability of pre-training to generalize is constrained due to the limited semantic richness and diversity provided by point clouds in raw form with little structural patterns, in comparison to colored images. Given the resemblances between point clouds and images, which both depict distinct visual characteristics of objects, with 2D to 3D spatial representation serving as their link, The authors inquire whether the process of learning 3D representations can be enhanced by transferring robust 2D knowledge into the 3D domain through the utilization of readily accessible pre-trained 2D models49,50. We offer a 3D reconstruction model that utilizes a pre-trained 2D vision transformer called ViT to generate multi-view 2D features and 2D attention maps for the point cloud. Additionally, we employ a Masked Autoencoding framework to transfer knowledge from images to points, enabling self-supervised pre-training of the 3D point cloud. The proposed approach utilizes 2D semantics obtained from a vast amount of visual input. The proposed paradigm produces exceptional 3D representations and exhibits a high degree of transferability to future 3D problems. Our primary design for 3D pre-training is an asymmetric encoder-decoder transformer based on the 3D MAE models mentioned in the works of33,51,52. This method uses the visible points to recreate the masked points using an input of a randomly masked point cloud. Our approach to 2D-guided masking prioritizes point-tokens with larger geometric meaning to remain appeared for the M.A.E Encoder, in contrast to other techniques51,52 that select visible tokens at random.

Comparison of different methods for Masking Technique

Problem statement

The difficulty stems from the limited availability of extensively labeled 3D datasets compared to their 2D equivalents. Training 3D models from the beginning is both costly and time-consuming. Thus, it is necessary to construct 3D models that can benefit from 2D models. Researchers seek to apply the knowledge gained by 2D transformers to 3D representation learning using a method called masked autoencoders. The approach involves reconstructing a three-dimensional model using a two-dimensional image while selectively masking some elements of the three-dimensional data. Essentially, this approach connects the disparity between ample 2D data and restricted 3D data, facilitating the efficient development of 3D models.

Contribution

The main contributions of this research in utilizing 2D pre-trained vision transformers for 3D model generation with masked autoencoders are summed up in the following way:

-

1.

We proposed the reconstruction of 3D models using masking and semantic strategies with a 2D pre-trained vision transformer model in this study. This research offers a more efficient method for producing 3D models, rather than typical transformer methods that rely solely on restricted 3D information.

-

2.

We propose a novel approach to 2D semantic reconstruction, which leverages pre-trained 2D vision transformer models to transfer the acquired 2D information to 3D environments efficiently.

-

3.

We successfully employed the transfer learning technique using 2D models to generate 3D models. Our strategy is efficient, accurate, and generally applicable, utilizing easily accessible 2D data.

Organization

The following outlines how this paper is organized: A brief Introduction is provided in the section. Detailed related work showing how the transformation of image representation is applied in computer vision in section. Training of the proposed model with mathematical modeling techniques is given in the section. Results and Discussion of the ViT3D model are presented in section. The study is concluded in a section with a discussion of future directions.

Related work

Transformer

Transformers1 approach has gained great success in NLP by using a self-attention mechanism to capture global dependencies of input, as evidenced by several studies3,12,17 and53,54. Following the introduction of ViT [33, transformer models are becoming more and more common in the CV sector.55,56,57,58. Nevertheless, the development of Transformers architectures for point cloud representation learning as backbones for masked autoencoders is not as advanced. PCT59 implements a specialized input embedding layer and alters the self-attention process in Transformer layers. Point Transformer57 modifies the layer and incorporates additional aggregating actions between Transformer blocks. Point-BERT60 utilizes a conventional Transformer architecture but relies on DGCNN61 for pre-training assistance. In contrast to earlier studies, our research introduces an architecture built using conventional transformers, along with additional features such as masking, transfer learning, and semantic reconstruction techniques.

CNN and computer vision

CNNs are widely used as the conventional network model in computer vision. Although CNN has been in existence for some decades62, it only gained popularity and became widespread with the introduction of AlexNet26. Subsequently, more advanced and efficient convolutional neural architectures have been suggested to further the development of DL in CV. Examples of these designs include VGG63, GoogleNet64, ResNet27, DenseNet29, HRNet65, and EfficientNet66. Although CNNs and their variations remain the main architectural frameworks for computer vision applications, we emphasize the significant potential of transformer-like designs for integrating vision and language modeling. We have achieved exceptional results in various fundamental visual recognition tasks.

Masked autoencoders

An encoder and a decoder are typically found in an autoencoder. The inputs must be transformed into latent, high-level properties by the encoder. The decoder then uses latent characteristics to reconstruct and decode the input. Reducing the difference between the original input and the reconstructed data is the aim of optimization; this is typically computed for images using the mean squared error loss in pixel space. To be more precise, our method falls within the category of denoising autoencoders. The main idea behind denoising autoencoders is to introduce input noise to increase the model’s resilience. Masked autoencoders employ a masking procedure to introduce input noise according to the same principle. For example, BERT3 uses masked language modeling in natural language processing (NLP). Tokens are chosen at random from the input, and an autoencoder is then used to forecast the vocabulary that matches the masked tokens. In computer vision, both MAE15 and SimMIM16 provide a comparable approach to modeling masked images, where parts of the input image are randomly masked. Next, autoencoders forecast the concealed patches in the pixel space. Building upon the concepts above, we aim to include masked autoencoders in point cloud data.

Self supervised learning and computer vision

Self-supervised learning (SSL) enables algorithms to anticipate unknown inputs by using observed inputs. In Natural Language Processing (NLP), SSL has undergone significant advancements. Pretext challenges that mask input tokens are created via generative SSL techniques, such as BERT3, which achieve notable performance by pre-training the model to anticipate the original vocabulary. In the field of computer vision, self-supervised learning techniques have drawn a lot of attention, with a focus on different pretext tasks for pre-training67,68,69. Contrastive self-supervised learning methods in CV for images focus on discerning the level of similarity between several augmented images. It involves modeling visual similarity and dissimilarity, or sometimes merely similarity, between two or more viewpoints. This approach has been widely used, with references to relevant studies8,15,70. Contrastive and related approaches heavily rely on data augmentation8,9,71.

SSL and point clouds

Conventional approaches employ pretext jobs to reconstitute the modified input point cloud using encoded latent vectors. These pretext tasks include rotation72, deformation73, rearranged sections74, and occlusion75. PointContrast76 employs contrastive learning to train discriminative 3D representations by comparing properties of the same points from various viewpoints. DepthContrast48 uses different augmentations to improve the contrast of depth maps. By comparing point clouds with the corresponding rendering images, CrossPoint77 uses cross-modality contrastive learning to acquire ample self-supervised signals. Point-MAE51 and Point-BERT60 have introduced transformer network-based BERT style3 and MAE style10 pre-training methods for 3D point-clouds. These techniques have demonstrated competitive performance across several tasks. Nevertheless, they ignore the local-global interactions among 3D objects and can only represent point clouds at a single resolution.

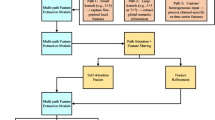

Pre-training using 3D point cloud

Significant advancements have been made in supervised learning for point clouds with the use of carefully crafted architectures59,78,79,80 and local operators81,82,83. Nevertheless, the techniques acquired from closed-set datasets84 have a constraint of generating only broad 3D representations. On the other hand, the use of unlabelled point clouds for self-supervised pre-training85 has shown potential for transferable skills, making it a useful method for initializing networks before fine-tuning. Conventional 3D self-supervised methods commonly use encoder and decoder structures to reconstruct the original point clouds from modified illustrations. These modifications include rearranging points, occluding parts, rotating, downsampling, and encoding using code words. Concurrent investigations additionally utilize contrasting pretext tasks between pairings of 3D data, such as local-global linkages86,87, temporal frames88, and enhanced views76. Our proposed model utilizes a masked autoencoder as the fundamental pre-training framework with a pre-trained vision transformer ViT algorithm, as shown in Figure 1. However, it is directed by 2D pre-trained models with the concept of transfer learning and through image2point learning techniques, that enhance 3D prior training by incorporating a wide range of 2D semantics.

ViT3D Framework and process flow for 3D object Reconstruction

Survey of pre-trained 2D-to-3D transformers

A major advance in the 3D field has been made in recent years. The authors of this work89 included a thorough assessment of current developments in the 3D sector, covering a wide range of issues such as downstream jobs, backbone designs, and various pre-training methodologies. This allowed readers to stay current on the most recent developments in the field. This survey is more thorough than the prior literature review on point clouds. The 3D pre-training techniques, several downstream activities, well-known benchmarks, assessment measures, and several exciting future approaches are all included in this survey89. The foundation of computer graphics is the generation of 3D models, which has been the subject of decades of research. The field of 3D content production is expanding quickly with the advent of sophisticated neural representations and generative models, making it possible to produce a growing variety and quality of 3D models. It’s challenging to keep up with all of the latest advancements in this industry because of its explosive expansion. The authors of this survey90 created an organized road map that included 3D representation, generation methods, datasets, and related applications in addition to introducing the basic methodologies of 3D generating methods. To be more precise, the authors of90 presented the 3D representations that form the foundation of 3D creation. In addition, the authors offered a thorough summary of the quickly expanding body of research on generation techniques, classifying it according to the different kinds of algorithmic paradigms for example, procedural, feed forward, optimization-based, and generative novel view synthesis90. This survey aims to methodically summarise and categorize 3D generation techniques, as well as the relevant datasets and applications.

Proposed hybrid model

We aim to develop a streamlined and effective system of generating 3D models from 2D ViT models using masked autoencoders for self-supervised learning of point clouds. Our initial step involves presenting the fundamental 3D structure of our suggested model for point cloud masked autoencoding not relying on the 2D-based direction. Figure 2 depicts the comprehensive framework of our approach. The initial point cloud is subjected to a masking and embedding module for processing. Subsequently, a conventional autoencoder utilizing a transformer architecture is employed, with a straightforward prediction component, to restore the concealed segments of the input point cloud. Next, we use pre-trained 2D model ViT to extract graphic illustrations from 3D point clouds. Here, we will demonstrate transferring knowledge from images to points to understand 3D representations.

Point cloud learning schemes

In addition to the pre-trained 2D representations for the point cloud, our proposed method incorporates a 2D masking technique to the Encoder and a 2D semantically-reconstruction technique following the Decoder. Additionally, we learn 2D semantics using transfer learning with the vision transformer ViT and apply that information to represent objects in 3D.

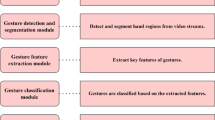

2D Attention Maps and Visual Features for 2D Semantic Target

Masking technique

The standard masking strategy employs a random sampling method that follows a uniform distribution for selecting masked tokens. This method, however, might interfere with the encoder’s ability to perceive important spatial characteristics and confuse the decoder with unimportant structures. Consequently, we use the 2D attention maps to direct the masking of point tokens, allowing us to select more semantically meaningful 3D regions for encoding. More precisely, we use the coordinates of point tokens \(PO^{Tk} \epsilon R^{MS \times 3}\) to index the multi-view attention maps \(({S_{j}^{2D}})^{3}_{i=1}\) and then project them back into 3D space as shown in Figure 3. These projected maps are combined to form a 3D attention cloud \(S^{3D} \epsilon R^{MS \times 1}\). To assign the semantic score to each point in the 3D space, We determine the multi-view attention maps’ average of the related 2D metrics in \(S^{3D}\) as given in Equation 1.

We utilize SoftMax to standardize the masked points inside \(S^{3D}\) and consider the magnitude of each element as the appeared probability for the correlating point token. By incorporating a 2D pre-directed, random masking is transformed into a non-uniform sampling method, where each token has a distinct probability of being kept. Tokens that represent crucial retention rates are higher for 3D constructions. It increases learning how to convey information in the encoder by emphasizing critical 3D shapes. It also gives the masked tokens in the decoder more valuable hints, resulting in improved reconstruction.

Semantic reconstruction technique

To reconstitute the extracted 2D semantics from various viewpoints, the authors additionally applied the visible point-tokens, \(TK^d_{visible}\). This successfully translates the 2D pre-trained knowledge into 3D pre-training. We use visible point token coordinates \(PO^{TK}_{visible} \epsilon R^{MS_{visible} \times 3}\) as indexes for combining related 2D characteristics from \({(F_{j}^{2D}})^3_{i=1}\) via channel-wise concatenation, formulated as follows, to get the 2D semantic target as given in Equation 2.

Next, we also use \(TK^{d}_{visible}\) as the rebuilding head for just one layer of linear projection, and we calculate the l2 loss as given in Equation 3.

where rebuilding head is indicated by \(H_{2D}\), which is parallel to \(H^{3D}\) for masked 3D coordinates. Next, we formulate eventual prior training loss of the suggested technique as follows, given in Equation 4.

Pretrained 2D models

To help with the 3D representation learning, the authors have been using 2D models of various frameworks (ResNet27, ViT33, SWIN91), as well as self-supervised6,92. We used the ViT as our pre-trained 2D model in this study. To create depth maps, we projected the input point cloud over several image planes, which are subsequently encoded into multi-view 2D representations to position the input for 2D models. The transformer encoder alternates between layers of multiheaded self-attention and MLP blocks1. Layernorm (LN) comes before each block, and residual connections come after each block. The MLP has two GELU non-linearity layers. The authors applied the notion of the transformer-based attention mechanism as described in33 as mentioned in Equations [5,6, 7, 8].

We prepend a learnable embedding to the sequence of embedded patches \(z^{0}_{0} = xclass\), whose state at the Transformer encoder’s output \(z_{il}\) acts as the image representation \(y_{j}\) as shown in Eq. 8, much like BERT’s [class] token. Pre-training and fine-tuning both involve attaching a classification head to \(z_{0l}\). During pre-training, an MLP with a single hidden layer, and during fine-tuning, a single linear layer implements the classification head. To preserve positional information, position embeddings are appended to the patch embeddings.

Optimal point cloud projection

We projected the input point cloud along the x, y, and z axes from three different views to guarantee the effectiveness of pre-training. To get the 2D position on the relevant map, we immediately subtract two of each point’s three coordinates and round down the remaining two. The estimated value of each pixel, which represents the relative depth relations of the points, is initially assigned as the missing coordinate to reproduce the three-channel RGB. And so this is repeated three times.

Multi view features of point cloud

Next, we extract multi-view properties of the point cloud with channels using a 2D pre-trained model (e.g., pre-trained ResNet or ViT). These features are expressed as \({(FM_{j}^{2D}})^3_{i=1}\), where the feature map size is indicated by \(F_{j} \epsilon R^ {K \times W \times CH}\). Sufficient high-level semantics acquired from extensive image data are present in these 2D characteristics. By encoding from many viewpoints, the spatial data loss during projections can also be lessened.

Semantic attention maps for 2D pre-trained models

Using the 2D pre-trained model, we obtain a semantic attention map for every view in addition to 2D features. The one-channel maps, which we represent as \(({S_{j}^{2D}})^{3}_ {i=1}\), show the semantic significance of various picture regions, where \(S_{j} \epsilon R^{H \times W \times 1}\). For ResNet, we apply pixel-wise max pooling on \({(F_{j}^{2D}})^3_{i=1}\) to reduce the feature dimension to one. We employ attention maps of the class token at the last transformer layer for ViT since the attention weights to the class token represent the degree to which the characteristics contribute to the final classification.

3D point cloud masked autoencoding framework

As the fundamental structure for image2point learning, our suggested method includes an encoder-decoder transformer, a token embedding module, and a head for rebuilding masked 3D coordinates. It builds on earlier work51,52,93,94.

Point cloud token embedding

We then use Furthest Point Sampling to downsample the point number from Ni to Mj, denoted as \(Pt \epsilon R^{Mj \times 3}\), given a raw point cloud \(Pt \epsilon R^{Ni \times 3}\). After that, we use kNN to identify each downsampled point’s k neighbors. We next use a micro PointNet78 to aggregate their features and generate \(M_{j}\) point tokens. Each token can interact with other tokens in the follow-up transformer that represent long-range attributes while also representing a local spatial region. We express them as \(TF \epsilon R^{Mj \times fd}\), where the feature dimension is indicated by \(f_{d}\). We replace each masked point patch that needs to be embedded with a mask token whose weight is learned through sharing. With C serving as the embedding dimension, the full set of mask tokens is expressed as \(T_{ms} \in R^{ms \times n \times C}\). To handle the unmasked point patches, one straightforward solution is to use a trainable linear projection to flatten and embed them, as outlined in33. Nevertheless, we contend that linear embedding does not adhere to the notion of permutation invariance. An alternative embedding method should be implemented, which is more rational and practical. To maintain cleanliness, we employ a streamlined PointNet architecture, mostly composed of Multilayer Perceptrons (MLPs) and max pooling layers. The point patches \(P_{v}\), which are visible and belong to \(R^{(1-m)n \times k \times 3}\), are embedded into visible tokens \(T_{vi}\) as follows in Equation 9:

Transformer-based encoder-decoder architecture

The decoder in our system is analogous to the encoder, except it incorporates a reduced number of Transformer blocks. The input consists of two components: encoded tokens \(T_{en}\) and masked tokens \(T_{ms}\). Each Transformer block is equipped with a complete set of positional embeddings, which furnish location information to all the tokens. Only the decoded mask tokens are produced by the decoder when processing is complete, and these are then passed to the following prediction head. The structure of an encoder-decoder is expressed as follows in Equations 10 and 11:

We use a ratio to conceal the point tokens to create the pre-text learning targets, such as 81%, and feed only the visible tokens into the encoder (\(T_{visible} \epsilon R^{Mj_{visible} \times C}\)), where \(Mj_{visible}\) shows the different visible tokens. Each encoder block incorporates pre-training and a self-attention layer to identify the global 3D shape among the other visible components. The encoded \(T^{en}_{visible}\) is then fed into a lightweight decoder, where \(Mj_{mask}\) denotes the masked token number, and is concatenated with a set of learnable shared masked tokens \(T_{mask} \epsilon R^{M^{mask \times C}}\) after encoding. MA is determined by the Equation 12:

Through the extraction of relevant spatial cues from visible ones, the masked tokens in the decoder are taught to reconstruct the masked 3D coordinates.

3D-coordinate reconstruction

To reassemble the masked tokens’ 3D coordinates and the k points that surround them, we use the \(T^d\) mask in addition to the decoded point tokens \(T^{d}_{visible}\) and \(T^{d}_{mask}\). The reconstruction head used by the model is made up of a single linear projection layer to provide predictions for \(P_{mask} \epsilon R^{{M}^{mask \times k \times 3}}\) represents the actual 3D coordinates of the points that have been masked. Next, we calculate the loss using the Chamfer Distance method proposed by95 and express it in Equation 13:

Results and discussion

First of all, we demonstrate our pre-training setup and the classification performance of a unified linear SVM. Next, we demonstrate the outcomes by optimizing our suggested approach for several 3D downstream tasks. Ultimately, we do experimental studies to examine the specific attributes of the proposed approach.

Self-supervised 3D pre-training

We utilize the widely-used ShapeNet85 dataset for self-supervised 3D pre-training. This dataset comprises 57,448 artificially generated point clouds representing 55 object types. We follow architecture for the MAE from the52. We chose input point number (N) as 4,096 initially and checked if it works with our datasets, the downsampled number (M) is set to 512 initially, the number of nearest neighbors (K) is set to 16, the number of features channels (C) is set to 448, and the mask ratio is set to 81%. We default use the ViT Base33 model pre-trained by CLIP6 for existing 2D models. During 3D pre-training, we keep the weights of this model frozen. The point cloud is transformed into three depth maps with dimensions of \(240 \times 240\). We extract a 2D feature size of \(16 \times 16\) from these maps, denoted as \(H_{e} \times W_{g}\). Our proposed approach has undergone pre-training for 250 epochs, batch size 64, and a lr \(1 \times 10^{-3}\). We utilize the AdamW97 optimizer with a weight decay of \(3 \times 10^{-2}\).

Assessment and comparison of model using linear SVM

According to Table 1, the suggested method performs better in classifying 3D shapes in both domains. The results of our SVM model, with an accuracy of 93.63% and 89.8%, outperform specific established approaches in Table 2. For example, PointCNN98 achieved an accuracy of 92.35% and 86.2%, Point-BERT60 achieved an accuracy of 92.85% and 88.43%, and Point-M2AE52 achieved an accuracy of 93.40% and 91.22%. Hence, the performance of the proposed technique in SVM showcases the excellent 3D representations it has acquired and highlights the importance of our point cloud learning strategies. After pre-training, we have optimized our proposed method for both artificial and real-world 3D categorization.

3D classification using real-world scenes

The ScanObjectNN dataset84 is a demanding dataset that contains 11,416 training and 2,882 test 3D forms. These shapes are obtained by scanning real-world scenes, including backdrops with noise. According to the data presented in Table 2, our suggested method demonstrates significant superiority compared to previous self-supervised methods. It outperforms the second-best method by +0.76%, +0.34, and +0.28% for three different splits, respectively. Similarly, it outperforms the second-best method on ModelNet40 dataset by +0.17%, and +0.31% for three different splits, respectively as given in Table 3.

Synthetic 3D classification

The widely used ModelNet4099 comprises 2,468 test and 9,843 training 3D point clouds. These point clouds are created from 40 distinct categories’ worth of synthetic CAD models. The classification accuracy of current approaches is presented in Tables 4 and 5. Our suggested method demonstrates exceptional performance in both contexts, achieving superior results with a mere 1k input point number. Furthermore, it surpasses most earlier efforts, indicating its strong adaptability. It also achieved an accuracy of 94% with an increase of +0.10% without voting and an accuracy of 94.63% with an increase of +0.43% with voting on ScanObjectNN Dataset as given in Table 4. It achieved an accuracy of 93.90% with an increase of +0.16% without voting and an accuracy of 93.73% with an increase of +0.33% with voting on ScanObjectNN Dataset as given in Table 5.

Experiments

This section presents the results of our experimentation and their comparison with other methods with various masking schemes for our proposed model ViT3D. We demonstrated the efficiency of using masked tokens to rebuild 2D semantics for 3D object reconstructions.

Pre-training using restricted 3D data

In Table 6, the pre-training dataset85 was randomly sampled with varying ratios. The effectiveness of our suggested approach was then evaluated under conditions where the availability of 3D data is further limited. With the assistance of 2D pre-trained models, the proposed method demonstrates competitive performance, particularly for 20%, 40%, and 60%. Significantly, the suggested technique ViT3D (91.85%)performs better than Point-BERT (90.50%), Point-MAE (91.05%), Point M2AE (91.20%), and I2P-MAE (91.60%) when trained with full training data despite only utilizing 80% of the pre-training data. Our ViT3D learning approach demonstrates its ability to reduce the requirement for extensive 3D training datasets effectively.

Experiments with 2D masking technique

Table 7 presents the results of our experimentation with various masking schemes for the suggested method. The last row depicts a 2D masking technique that retains tokens of greater semantic significance, ensuring their visibility to the encoder. The utilization of 2D attention maps results in an accuracy improvement of 0.23% and 1.1% on two datasets when compared to the third-row random masking. Next, the spatial attention cloud’s token scores are switched around, and the most significant tokens are subsequently concealed. The findings in the fourth row are significantly damaged, highlighting the need to encode crucial 3D structures. In conclusion, the masking ratio is adjusted by ±1.0, regulating the ratio of visible to masked tokens. The decline in performance suggests that a proper balance between 2D-semantic and 3D coordinate reconstruction is necessary for an appropriately hard pre-text job.

2D-semantic reconstruction

We look at the best token groups to learn about 2D semantic targets in Table 8. The efficiency of using visible tokens to rebuild 2D semantics is demonstrated by comparing the first three rows, with classification accuracy improvements of 0.30% and 0.40%, respectively. The significance of 3D-coordinate reconstruction in the suggested method is confirmed by exclusively utilizing 2D targets for visible or masked tokens. This method provides further insights into the high-level 2D semantics and makes it possible to acquire low-level geometric 3D patterns. However, when reconstructing the 3D and 2D targets with the masked tokens, the network can only learn 2D information about the masked trivial 3D geometries, leaving out the more distinctive elements. Additionally, assigning two targets to the same tokens may result in semantic conflicts between two dimensions. By reconstructing visible 2D semantics, the 3D network can acquire a more significant number of distinguishing traits.

In Table 9, we compare our model ViT3D to other approaches and find that it outperformed them using masking and semantic strategies for reconstructing 3D images. We also observe that most recent research papers [93-98] use transformer-based architectures to understand 2D images and that multi-views are also being used to capture every point of the image to precisely reconstruct 3D models. Finally, the papers that are proposed have yet to combine the concept of pre-trained 2D vision transformer models for image reconstruction to save time and processing of training the model from scratch. We have suggested using vision transformers in this study, and this will serve as the foundation for future research.

3D classification on modelNet40 with diverse input points

We conducted a comparative analysis of various 3D classification models on the ModelNet40 dataset, utilizing different input points and voting techniques to evaluate their performance as given in Table 10. Having previously tested with 1K input points, we have also evaluated our ViT3D model using a variety of input points. The input points for the various models range from 4K to 8K input points. Initial experiments with 4K input points revealed that, without voting, accuracy values ranged from 90.54% for the [ST] Transformer33 model to 94.05% for the proposed ViT3D model, demonstrating the superiority of our model. In our experiments with 4K input points for the With Voting method, accuracy results varied from 91.2% for the [ST] Transformer33 model to 94.25% for the proposed ViT3D model, demonstrating the higher accuracy of our model. We subsequently conducted experiments with 5K input points, and the findings indicate that for the Without Voting condition, we could not get accuracy values; however, using identical input points, our model ViT3D surpassed other models, achieving an accuracy of 94.25%. Subsequently, we conducted experiments using 5K input points, revealing that our model, ViT3D, surpassed other models in the With Voting category, with an accuracy of 94.15%. The KPConv model106 exhibits the accuracy of 92.9% in the With Voting category among the offered models. The [ST]Point-BERT60 model, with 8k input points, achieves the greatest accuracy of 93.8% in the With Voting scenario. The suggested ViT3D model, with 4K and 5K input points, achieves the maximum accuracy, with and without voting, at 94.05% and 94.15%, respectively. Our model, ViT3D, demonstrated superior performance with input points of 4K and 5K in comparison to alternative techniques. We note that without voting and using 4K input points, we achieved an accuracy of 94.05%, which surpasses that of 1K input points. However, our suggested model, ViT3D, demonstrated superior performance on 1K input points, with an accuracy of 94.63%, compared to its performance on 5K input points. The accuracy of Voting is 94.25%. Through experimentation with various input points, we observed that an increased number of input points enhances accuracy; nevertheless, this may necessitate greater memory and computational capability to obtain optimal results.

Role of two-dimensional masking method & its importance in reconstructing 3D point clouds

The two-dimensional masking technique is essential for maintaining semantically important point tokens by meticulously regulating information flow during processing. The main goal of 2D masking is to selectively keep important semantic and spatial information while eliminating less important ones. The 2D masking method preserves the spatial links among points by considering them within a two-dimensional framework instead of a mere linear sequence. This maintains the essential geometric links among adjacent points that frequently convey semantic significance. During the application of the mask, the approach assesses tokens according to their local importance and global significance. This two-way evaluation prevents the loss of tokens that may not seem important at the moment but are necessary for the overall meaning. Masking usually uses dynamic thresholding, which changes based on the number of points in different areas, how complex the features being shown are, and the overall context of the data. The method consistently keeps important structural elements that define important traits, boundary points that separate different semantic areas, and points with a lot of semantic entropy. The masking process considers contextual dependencies by keeping the right amount of points in areas that are semantically important. It maintains points that serve as connections between distinct semantic domains.

The two-dimensional masking technique is important for reconstructing a 3D point cloud for several main reasons:

-

Firstly, it’s important to keep the most semantically important points while keeping the computational load as low as possible during the reconstruction process.

-

In 3D reconstruction, specific traits are more critical than others for preserving object identification and structure.

-

3D point clouds frequently encompass information across several scales, ranging from intricate minutiae to substantial structural components. The 2D masking technique facilitates this by maintaining hierarchical relationships among features.

-

The masking technique enhances reconstruction quality by eliminating noisy points that may compromise the final output.

-

The method minimizes memory needs for processing extensive point clouds by retaining only the most semantically significant points; hence, it accelerates later reconstruction phases.

Multi-scale MAE and pre-trained ViT architecture for 3D model generation based on self-supervised learning

This multi-scale MAE prior training architecture significantly advances 3D point cloud understanding by efficiently integrating 2D and 3D representation learning, illustrating how cross-modal learning could significantly enhance machine perception learning. Our suggested ViT3D multi-scale MAE previous training architecture effectively tackles a significant barrier in 3D point cloud representation learning by effectively integrating 2D and 3D visual comprehension. This solution primarily utilizes pre-trained 2D Vision Transformers as a robust feature extractor and knowledge transfer mechanism for 3D point cloud representations. Conventional 3D learning techniques frequently encounter difficulties in accurately capturing intricate spatial and semantic characteristics, particularly in the context of sparse or irregularly sampled point clouds. We attempted to implement the concept of transfer learning to minimize computational demands and employed the Vision Transformer as a pre-trained model. Our proposed model architecture comprises several essential components, including

-

2D Vision Transformer pre-trained on extensive image datasets.

-

Multi-scale point cloud encoder

-

Masked Autoencoder Encoder

-

Masked Autoencoder Decoder

-

Cross-modal Feature Alignment Techniques

This design employs an innovative multi-scale methodology in which point clouds are analyzed at varying granularities; coarse-scale captures global geometric structures, while fine-scale retains local geometric features. Intermediate scales are employed to capture mid-level geometric relationships. Utilizing transfer learning, we employ the 2D Vision Transformer as an effective feature extractor. The 2D Vision Transformer facilitates semantic feature transfer and aids in comprehending geometric translations, which are essential for identifying features necessary for reconstructing various shapes. Utilizing 2D ViT facilitates the acquisition of contextual representations that enable the extraction of elements essential for the reconstruction process. We trained our ViT3D model via a Masked Autoencoder (MAE). We randomly masked segments of the input point cloud and utilized 2D Vision Transformer features as a transfer learning method. It assists the model in reconstructing the masked regions, so diminishing the memory and computational demands that would otherwise escalate. Utilizing this masking method enables the model to acquire robust and more generalized representations of the input point clouds.

The workflow of the proposed model is summarized as follows: we first input the point cloud and create multi-scale sampling, followed by the utilization of a 2D Vision Transformer for feature extraction, which facilitates the learning of significant features. Subsequently, we implemented a masking approach and acquired masked point cloud regions. Utilizing cross-modal priors, we implemented MAE Reconstruction, which improved 3D representation learning to reconstruct 3D shapes. This assists in recognizing 3D objects, perception in autonomous driving, spatial comprehension for robotics development, and X-ray reconstruction in the medical field. Through the development of the ViT3D model, we acquired various advantages:

-

Enhanced feature representation

-

Enhanced management of sparse point clouds

-

Augmented semantic comprehension

-

Decreased reliance on extensive 3D-specific datasets

-

Utilizes transfer learning from two-dimensional visual domains

Limitations and challenges of ViT3D

Although our model ViT3D is a promising method for 3D model production, there are a few restrictions and possible difficulties to be aware of to fully utilize its potential.

-

To project 3D data into 2D for training, we employed our model ViT3D, which makes use of 2D pre-trained Vision Transformers. But occasionally, this projection can result in a loss of 3D data, which could affect the precision and finer features of the 3D models that are produced.

-

ViT3D uses a lot of images, and because the Transformer design has a lot of parameters, training, and inference can be computationally expensive. It restricts its suitability for areas with limited resources.

-

A significant amount of high-quality 3D data is needed for deep learning models like ViT3D to be trained effectively. Obtaining such data can occasionally be difficult and serve as a barrier for numerous applications.

There are a few potential challenges that need to be considered in the reconstruction of 3D models.

-

It is difficult to produce reliable 3D models from 2D projections. One of the most important jobs in reconstructing 3D models was to make sure that the 3D models adequately represented the underlying 3D structure.

-

One more challenge was handling occlusions in 2D projections. It could be challenging for the model to faithfully recreate obscured areas of the three-dimensional object while maintaining the true semantics of the image data.

-

Generating precise 3D models from unknown perspectives posed an additional difficulty in the reconstruction process. It is a difficult undertaking to attain greater generalization performance. We shall make an effort to lessen the effects of the constraints and difficulties in the future. ViT3D has the potential to develop into an effective tool for 3D model production in a variety of applications by resolving these issues.

Conclusion

Although the Transformer architecture is now often used for jobs involving natural language processing, there are still few uses for it in computer vision. In computer vision, attention is used either in conjunction with convolutional networks or as a stand-in for specific convolutional network elements while preserving the overall structure of the network. The dissimilarities between the two domains, such as substantial disparities in the magnitude of visual elements and the finer level of detail in pixels inside images as compared to words in text, pose challenges in adapting the Transformer model from language processing to visual tasks. Masking autoencoding is an up-and-coming technique for self-supervised learning that shows remarkable capabilities in computer vision and natural language processing. Pre-training with a large amount of image data has become a standard approach for creating solid and reliable 2D representations. However, the limited availability of 3D datasets hinders the acquisition of high-quality 3D features due to the substantial expense of processing the data. The present study introduces a novel framework for masked point modeling using efficient image-to-point learning techniques. This study presents two efficient techniques. With the assistance of 2D guidance, the suggested methodology effectively acquires advanced 3D representations and mitigates the need for extensive 3D data. Numerous tests demonstrate how effectively our method works with pre-trained models and how well it generalizes to a range of downstream tasks. In particular, our pre-trained model is better than all existing self-supervised learning techniques; it achieved 93.63% accuracy for linear SVM on ScanObjectNN and 91.31% accuracy on ModelNet40. Our approach demonstrates how a straightforward architecture solely based on conventional transformers may outperform specialized transformer models from supervised learning. In future research, we will investigate additional image-to-point learning methods, such as point token sampling and 2D to 3D class-token contrast, as well as masking and reconstruction techniques.

Future work

Training data has intrinsic restrictions that make handling unexpected 2D objects in 3D model development difficult. Although a large dataset of images and associated 3D models is usually used to train models, real-world scenarios frequently contain objects or combinations that differ from the training distribution. We have, however, thought of a few possible strategies that we might employ in our future work to address the issue:

-

The model can develop a more resilient representation of 3D shapes by using Generative Adversarial Networks, which may enhance its capacity to handle unseen objects.

-

By using data augmentation techniques for images, the model can be made to generalize more effectively by producing a variety of synthetic images with different object combinations and occlusions.

-

Another tactic for generalizing our model is domain adaptation, which can be used to close the gap when training and target data diverge considerably.

-

To assist the system learn from errors and get better over time, developers must pay close attention to user feedback on models that are generated.

-

Another tactic to boost the probability of creating correct models is to generate 3D models in phases and refine them based on interim outcomes.

Data availability

All the data supporting this study is openly available on paper and online as open source, without restrictions. The Datasets can be found at the following URLs: https://www.kaggle.com/datasets/mitkir/shapenet/datahttps://www.kaggle.com/datasets/ssfailearning/scanobjectnn-xthttps://www.kaggle.com/datasets/balraj98/modelnet40-princeton-3d-object-dataset

References

Vaswani, A., Shazeer, N., Parmar, N., Uszkoreit, J., Jones, L., Gomez, A.N., Kaiser, Ł., Polosukhin, I.: Attention is all you need. Advances in neural information processing systems. 30 (2017).

Bahdanau, D., Cho, K., Bengio, Y.: Neural machine translation by jointly learning to align and translate. arXiv preprint arXiv:1409.0473 (2014).

Devlin, J., Chang, M.-W., Lee, K., Toutanova, K.: Bert: Pre-training of deep bidirectional transformers for language understanding. arXiv preprint arXiv:1810.04805 (2018).

Brown, T. et al. Language models are few-shot learners. Advances in neural information processing systems 33, 1877–1901 (2020).

Lepikhin, D., Lee, H., Xu, Y., Chen, D., Firat, O., Huang, Y., Krikun, M., Shazeer, N., Chen, Z.: Gshard: Scaling giant models with conditional computation and automatic sharding. arXiv preprint arXiv:2006.16668 (2020).

Radford, A., Kim, J.W., Hallacy, C., Ramesh, A., Goh, G., Agarwal, S., Sastry, G., Askell, A., Mishkin, P., Clark, J., et al. Learning transferable visual models from natural language supervision. In: International Conference on Machine Learning. 8748–8763 (2021).

Zhang, R., Guo, Z., Zhang, W., Li, K., Miao, X., Cui, B., Qiao, Y., Gao, P., Li, H.: Pointclip: Point cloud understanding by clip. In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pp. 8552–8562 (2022).

Chen, T., Kornblith, S., Norouzi, M., Hinton, G.: A simple framework for contrastive learning of visual representations. In: International Conference on Machine Learning, pp. 1597–1607 (2020).

Chen, X., He, K.: Exploring simple siamese representation learning. In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pp. 15750–15758 (2021.)

He, K., Chen, X., Xie, S., Li, Y., Dollár, P., Girshick, R.: Masked autoencoders are scalable vision learners. In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pp. 16000–16009 (2022).

Radford, A., Narasimhan, K., Salimans, T., Sutskever, I., et al.: Improving language understanding by generative pre-training. OpenAI blog (2018).

Radford, A., Wu, J., Child, R., Luan, D., Amodei, D., Sutskever, I., et al.: Language models are unsupervised multitask learners. OpenAI blog (8), 9 (2019).

Bao, H., Dong, L., Piao, S., Wei, F.: Beit: Bert pre-training of image transformers. arXiv preprint arXiv:2106.08254 (2021).

Baevski, A., Hsu, W.-N., Xu, Q., Babu, A., Gu, J., Auli, M.: Data2vec: A general framework for self-supervised learning in speech, vision and language. In: International Conference on Machine Learning, pp. 1298–1312 (2022). PMLR

He, K., Fan, H., Wu, Y., Xie, S., Girshick, R.: Momentum contrast for unsupervised visual representation learning. In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pp. 9729–9738 (2020).

Xie, Z., Zhang, Z., Cao, Y., Lin, Y., Bao, J., Yao, Z., Dai, Q., Hu, H.: Simmim: A simple framework for masked image modeling. In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pp. 9653–9663 (2022).

Raffel, C. et al. Exploring the limits of transfer learning with a unified text-to-text transformer. The Journal of Machine Learning Research 21, 5485–5551 (2020).

Jiang, L., Zhang, J., Deng, B., Li, H. & Liu, L. 3d face reconstruction with geometry details from a single image. IEEE Transactions on Image Processing 27, 4756–4770 (2018).

Xu, Y., Shi, Z., Yifan, W., Chen, H., Yang, C., Peng, S., Shen, Y., Wetzstein, G.: Grm: Large gaussian reconstruction model for efficient 3d reconstruction and generation. arXiv preprint arXiv:2403.14621 (2024).

Tu, X. et al. 3d face reconstruction from a single image assisted by 2d face images in the wild. IEEE Transactions on Multimedia 23, 1160–1172 (2020).

Ding, C. & Tao, D. A comprehensive survey on pose-invariant face recognition. ACM Transactions on intelligent systems and technology (TIST) 7, 1–42 (2016).

Wewer, C., Raj, K., Ilg, E., Schiele, B., Lenssen, J.E.: latentsplat: Autoencoding variational gaussians for fast generalizable 3d reconstruction. In: European Conference on Computer Vision, pp. 456–473 (2024). Springer.

Cai, Y., Wang, J., Yuille, A., Zhou, Z., Wang, A.: Structure-aware sparse-view x-ray 3d reconstruction. In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pp. 11174–11183 (2024).

Kakadiaris, I. A. et al. 3d–2d face recognition with pose and illumination normalization. Computer Vision and Image Understanding 154, 137–151 (2017).

Sanghi, A., Jayaraman, P.K., Rampini, A., Lambourne, J., Shayani, H., Atherton, E., Taghanaki, S.A.: Sketch-a-shape: Zero-shot sketch-to-3d shape generation. arXiv preprint arXiv:2307.03869 (2023).

Krizhevsky, A., Sutskever, I. & Hinton, G. E. Imagenet classification with deep convolutional neural networks. Communications of the ACM 60, 84–90 (2017).

He, K., Zhang, X., Ren, S., Sun, J.: Deep residual learning for image recognition. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pp. 770–778 (2016).

Zagoruyko, S., Komodakis, N.: Wide residual networks. arXiv preprint arXiv:1605.07146 (2016).

Huang, G., Liu, Z., Van Der Maaten, L., Weinberger, K.Q.: Densely connected convolutional networks. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pp. 4700–4708 (2017).

Dai, J., Qi, H., Xiong, Y., Li, Y., Zhang, G., Hu, H., Wei, Y.: Deformable convolutional networks. In: Proceedings of the IEEE International Conference on Computer Vision, pp. 764–773 (2017).

Xie, S., Girshick, R., Dollár, P., Tu, Z., He, K.: Aggregated residual transformations for deep neural networks. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pp. 1492–1500 (2017).

Zhu, X., Hu, H., Lin, S., Dai, J.: Deformable convnets v2: More deformable, better results. In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pp. 9308–9316 (2019).

Dosovitskiy, A., Beyer, L., Kolesnikov, A., Weissenborn, D., Zhai, X., Unterthiner, T., Dehghani, M., Minderer, M., Heigold, G., Gelly, S., et al. An image is worth 16x16 words: Transformers for image recognition at scale. arXiv preprint arXiv:2010.11929 (2020).

Carion, N., Massa, F., Synnaeve, G., Usunier, N., Kirillov, A., Zagoruyko, S.: End-to-end object detection with transformers. In: European Conference on Computer Vision, pp. 213–229 (2020). Springer.

Strudel, R., Garcia, R., Laptev, I., Schmid, C.: Segmenter: Transformer for semantic segmentation. In: Proceedings of the IEEE/CVF International Conference on Computer Vision, pp. 7262–7272 (2021).

Deng, J., Dong, W., Socher, R., Li, L.-J., Li, K., Fei-Fei, L.: Imagenet: A large-scale hierarchical image database. In: 2009 IEEE Conference on Computer Vision and Pattern Recognition, pp. 248–255 (2009). Ieee.

Lin, T.-Y., Maire, M., Belongie, S., Hays, J., Perona, P., Ramanan, D., Dollár, P., Zitnick, C.L.: Microsoft coco: Common objects in context. In: Computer Vision–ECCV 2014: 13th European Conference, Zurich, Switzerland, September 6-12, 2014, Proceedings, Part V 13, pp. 740–755 (2014). Springer.

Russakovsky, O. et al. Imagenet large scale visual recognition challenge. International journal of computer vision 115, 211–252 (2015).

Thomee, B. et al. Yfcc100m: The new data in multimedia research. Communications of the ACM 59, 64–73 (2016).

Chen, L.-C., Papandreou, G., Kokkinos, I., Murphy, K. & Yuille, A. L. Deeplab: Semantic image segmentation with deep convolutional nets, atrous convolution, and fully connected crfs. IEEE transactions on pattern analysis and machine intelligence 40, 834–848 (2017).

He, K., Gkioxari, G., Dollár, P., Girshick, R.: Mask r-cnn. In: Proceedings of the IEEE International Conference on Computer Vision, pp. 2961–2969 (2017).

Tian, Z., Shen, C., Chen, H., He, T.: Fcos: Fully convolutional one-stage object detection. In: Proceedings of the IEEE/CVF International Conference on Computer Vision, pp. 9627–9636 (2019).

Lin, T.-Y., Goyal, P., Girshick, R., He, K., Dollár, P.: Focal loss for dense object detection. In: Proceedings of the IEEE International Conference on Computer Vision, pp. 2980–2988 (2017).

Rani, V., Nabi, S. T., Kumar, M., Mittal, A. & Kumar, K. Self-supervised learning: A succinct review. Archives of Computational Methods in Engineering 30, 2761–2775 (2023).

Yu, J. et al. Self-supervised learning for recommender systems: A survey. IEEE Transactions on Knowledge and Data Engineering 36, 335–355 (2023).

Jiang, J., Lu, X., Zhao, L., Dazaley, R., Wang, M.: Masked autoencoders in 3d point cloud representation learning. IEEE Transactions on Multimedia (2023).

Liu, H., Cai, M., Lee, Y.J.: Masked discrimination for self-supervised learning on point clouds. In: European Conference on Computer Vision, pp. 657–675 (2022). Springer.

Zhang, Z., Girdhar, R., Joulin, A., Misra, I.: Self-supervised pretraining of 3d features on any point-cloud. In: Proceedings of the IEEE/CVF International Conference on Computer Vision, pp. 10252–10263 (2021).

Chung, H., Ryu, D., McCann, M.T., Klasky, M.L., Ye, J.C.: Solving 3d inverse problems using pre-trained 2d diffusion models. In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pp. 22542–22551 (2023).

Avram, O., Durmus, B., Rakocz, N., Corradetti, G., An, U., Nittala, M.G., Terway, P., Rudas, A., Chen, Z.J., Wakatsuki, Y., et al.: Accurate prediction of disease-risk factors from volumetric medical scans by a deep vision model pre-trained with 2d scans. Nature Biomedical Engineering, 1–14 (2024).

Pang, Y., Wang, W., Tay, F.E., Liu, W., Tian, Y., Yuan, L.: Masked autoencoders for point cloud self-supervised learning. In: European Conference on Computer Vision, pp. 604–621 (2022). Springer.

Zhang, R. et al. Point-m2ae: multi-scale masked autoencoders for hierarchical point cloud pre-training. Advances in neural information processing systems 35, 27061–27074 (2022).

Mann, B., Ryder, N., Subbiah, M., Kaplan, J., Dhariwal, P., Neelakantan, A., Shyam, P., Sastry, G., Askell, A., Agarwal, S., et al.: Language models are few-shot learners. arXiv preprint arXiv:2005.14165 (2020).

Liu, Y., Ott, M., Goyal, N., Du, J., Joshi, M., Chen, D., Levy, O., Lewis, M., Zettlemoyer, L., Stoyanov, V.: Roberta: A robustly optimized bert pretraining approach. arXiv preprint arXiv:1907.11692 (2019).

Yuan, L., Chen, Y., Wang, T., Yu, W., Shi, Y., Jiang, Z.-H., Tay, F.E., Feng, J., Yan, S.: Tokens-to-token vit: Training vision transformers from scratch on imagenet. In: Proceedings of the IEEE/CVF International Conference on Computer Vision, pp. 558–567 (2021).

Yuan, L., Hou, Q., Jiang, Z., Feng, J. & Yan, S. Volo: Vision outlooker for visual recognition. IEEE transactions on pattern analysis and machine intelligence 45, 6575–6586 (2022).

Guo, M.-H. et al. Attention mechanisms in computer vision: A survey. Computational visual media 8, 331–368 (2022).

Wang, W., Chen, W., Qiu, Q., Chen, L., Wu, B., Lin, B., He, X., Liu, W.: Crossformer++: A versatile vision transformer hinging on cross-scale attention. arXiv preprint arXiv:2303.06908 (2023).

Guo, M.-H. et al. Pct: Point cloud transformer. Computational Visual Media 7, 187–199 (2021).

Yu, X., Tang, L., Rao, Y., Huang, T., Zhou, J., Lu, J.: Point-bert: Pre-training 3d point cloud transformers with masked point modeling. In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pp. 19313–19322 (2022).

Wang, Y. et al. Dynamic graph cnn for learning on point clouds. ACM Transactions on Graphics (tog) 38, 1–12 (2019).

LeCun, Y., Bottou, L., Bengio, Y. & Haffner, P. Gradient-based learning applied to document recognition. Proceedings of the IEEE 86, 2278–2324 (1998).

Simonyan, K., Zisserman, A.: Very deep convolutional networks for large-scale image recognition. arXiv preprint arXiv:1409.1556 (2014).

Szegedy, C., Liu, W., Jia, Y., Sermanet, P., Reed, S., Anguelov, D., Erhan, D., Vanhoucke, V., Rabinovich, A.: Going deeper with convolutions. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pp. 1–9 (2015).

Wang, J. et al. Deep high-resolution representation learning for visual recognition. IEEE transactions on pattern analysis and machine intelligence 43, 3349–3364 (2020).

Tan, M., Le, Q.: Efficientnet: Rethinking model scaling for convolutional neural networks. In: International Conference on Machine Learning, pp. 6105–6114 (2019). PMLR.

Doersch, C., Gupta, A., Efros, A.A.: Unsupervised visual representation learning by context prediction. In: Proceedings of the IEEE International Conference on Computer Vision, pp. 1422–1430 (2015).

Gidaris, S., Singh, P., Komodakis, N.: Unsupervised representation learning by predicting image rotations. arXiv preprint arXiv:1803.07728 (2018).

Noroozi, M., Favaro, P.: Unsupervised learning of visual representations by solving jigsaw puzzles. In: European Conference on Computer Vision, pp. 69–84 (2016). Springer.

Becker, S. & Hinton, G. E. Self-organizing neural network that discovers surfaces in random-dot stereograms. Nature 355, 161–163 (1992).

Grill, J.-B. et al. Bootstrap your own latent-a new approach to self-supervised learning. Advances in neural information processing systems 33, 21271–21284 (2020).

Poursaeed, O., Jiang, T., Qiao, H., Xu, N., Kim, V.G.: Self-supervised learning of point clouds via orientation estimation. In: 2020 International Conference on 3D Vision (3DV), pp. 1018–1028 (2020). IEEE.

Achituve, I., Maron, H., Chechik, G.: Self-supervised learning for domain adaptation on point clouds. In: Proceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision, pp. 123–133 (2021).

Sauder, J., Sievers, B.: Self-supervised deep learning on point clouds by reconstructing space. Advances in Neural Information Processing Systems 32 (2019).

Wang, H., Liu, Q., Yue, X., Lasenby, J., Kusner, M.J.: Unsupervised point cloud pre-training via occlusion completion. In: Proceedings of the IEEE/CVF International Conference on Computer Vision, pp. 9782–9792 (2021).

Xie, S., Gu, J., Guo, D., Qi, C.R., Guibas, L., Litany, O.: Pointcontrast: Unsupervised pre-training for 3d point cloud understanding. In: Computer Vision–ECCV 2020: 16th European Conference, Glasgow, UK, August 23–28, 2020, Proceedings, Part III 16, pp. 574–591 (2020). Springer.

Afham, M., Dissanayake, I., Dissanayake, D., Dharmasiri, A., Thilakarathna, K., Rodrigo, R.: Crosspoint: Self-supervised cross-modal contrastive learning for 3d point cloud understanding. In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pp. 9902–9912 (2022).

Qi, C.R., Su, H., Mo, K., Guibas, L.J.: Pointnet: Deep learning on point sets for 3d classification and segmentation. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pp. 652–660 (2017).

Zhang, R., Wang, L., Guo, Z., Shi, J.: Nearest neighbors meet deep neural networks for point cloud analysis. In: Proceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision, pp. 1246–1255 (2023).

Zhang, R., Wang, L., Wang, Y., Gao, P., Li, H., Shi, J.: Parameter is not all you need: Starting from non-parametric networks for 3d point cloud analysis. arXiv preprint arXiv:2303.08134 (2023).

Zhang, R., Zeng, Z., Guo, Z., Gao, X., Fu, K., Shi, J.: Dspoint: Dual-scale point cloud recognition with high-frequency fusion. arXiv preprint arXiv:2111.10332 (2021).

Xu, M., Ding, R., Zhao, H., Qi, X.: Paconv: Position adaptive convolution with dynamic kernel assembling on point clouds. In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pp. 3173–3182 (2021).

Xiang, T., Zhang, C., Song, Y., Yu, J., Cai, W.: Walk in the cloud: Learning curves for point clouds shape analysis. In: Proceedings of the IEEE/CVF International Conference on Computer Vision, pp. 915–924 (2021).

Uy, M.A., Pham, Q.-H., Hua, B.-S., Nguyen, T., Yeung, S.-K.: Revisiting point cloud classification: A new benchmark dataset and classification model on real-world data. In: Proceedings of the IEEE/CVF International Conference on Computer Vision, pp. 1588–1597 (2019).

Chang, A.X., Funkhouser, T., Guibas, L., Hanrahan, P., Huang, Q., Li, Z., Savarese, S., Savva, M., Song, S., Su, H., et al.: Shapenet: An information-rich 3d model repository. arXiv preprint arXiv:1512.03012 (2015).

Fu, K., Gao, P., Zhang, R., Li, H., Qiao, Y., Wang, M.: Distillation with contrast is all you need for self-supervised point cloud representation learning. arXiv preprint arXiv:2202.04241 (2022).

Rao, Y., Lu, J., Zhou, J.: Global-local bidirectional reasoning for unsupervised representation learning of 3d point clouds. In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pp. 5376–5385 (2020).

Huang, S., Xie, Y., Zhu, S.-C., Zhu, Y.: Spatio-temporal self-supervised representation learning for 3d point clouds. In: Proceedings of the IEEE/CVF International Conference on Computer Vision, pp. 6535–6545 (2021).

Hou, Y., Huang, X., Tang, S., He, T. & Ouyang, W. Advances in 3d pre-training and downstream tasks: a survey. Vicinagearth 1, 6 (2024).

Li, X., Zhang, Q., Kang, D., Cheng, W., Gao, Y., Zhang, J., Liang, Z., Liao, J., Cao, Y.-P., Shan, Y.: Advances in 3d generation: A survey. arXiv preprint arXiv:2401.17807 (2024).

Liu, Z., Lin, Y., Cao, Y., Hu, H., Wei, Y., Zhang, Z., Lin, S., Guo, B.: Swin transformer: Hierarchical vision transformer using shifted windows. In: Proceedings of the IEEE/CVF International Conference on Computer Vision, pp. 10012–10022 (2021).

Caron, M., Touvron, H., Misra, I., Jégou, H., Mairal, J., Bojanowski, P., Joulin, A.: Emerging properties in self-supervised vision transformers. In: Proceedings of the IEEE/CVF International Conference on Computer Vision, pp. 9650–9660 (2021)

Zhang, R., Wang, L., Qiao, Y., Gao, P., Li, H.: Learning 3d representations from 2d pre-trained models via image-to-point masked autoencoders. In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pp. 21769–21780 (2023).

Chen, A., Zhang, K., Zhang, R., Wang, Z., Lu, Y., Guo, Y., Zhang, S.: Pimae: Point cloud and image interactive masked autoencoders for 3d object detection. In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pp. 5291–5301 (2023).

Fan, H., Su, H., Guibas, L.J.: A point set generation network for 3d object reconstruction from a single image. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pp. 605–613 (2017).

Han, Z., Shang, M., Liu, Y.-S., Zwicker, M.: View inter-prediction gan: Unsupervised representation learning for 3d shapes by learning global shape memories to support local view predictions. In: Proceedings of the AAAI Conference on Artificial Intelligence, vol. 33, pp. 8376–8384 (2019).

Kingma, D.P., Ba, J.: Adam: A method for stochastic optimization. arXiv preprint arXiv:1412.6980 (2014).

Li, Y., Bu, R., Sun, M., Wu, W., Di, X., Chen, B.: Pointcnn: Convolution on x-transformed points. Advances in neural information processing systems 31 (2018).

Wu, Z., Song, S., Khosla, A., Yu, F., Zhang, L., Tang, X., Xiao, J.: 3d shapenets: A deep representation for volumetric shapes. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pp. 1912–1920 (2015)

Srivastava, S., Sharma, G.: Omnivec: Learning robust representations with cross modal sharing. In: Proceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision, pp. 1236–1248 (2024)

Qi, Z., Dong, R., Zhang, S., Geng, H., Han, C., Ge, Z., Yi, L., Ma, K.: Shapellm: Universal 3d object understanding for embodied interaction. arXiv preprint arXiv:2402.17766 (2024).

Wang, C., Wu, M., Lam, S.-K., Ning, X., Yu, S., Wang, R., Li, W., Srikanthan, T.: Gpsformer: A global perception and local structure fitting-based transformer for point cloud understanding. arXiv preprint arXiv:2407.13519 (2024).

Tochilkin, D., Pankratz, D., Liu, Z., Huang, Z., Letts, A., Li, Y., Liang, D., Laforte, C., Jampani, V., Cao, Y.-P.: Triposr: Fast 3d object reconstruction from a single image. arXiv preprint arXiv:2403.02151 (2024).

Moliner, O., Huang, S., Åström, K.: Geometry-biased transformer for robust multi-view 3d human pose reconstruction. In: 2024 IEEE 18th International Conference on Automatic Face and Gesture Recognition (FG), pp. 1–8 (2024). IEEE.

Min, C., Xiao, L., Zhao, D., Nie, Y., Dai, B.: Multi-camera unified pre-training via 3d scene reconstruction. IEEE Robotics and Automation Letters (2024).

Thomas, H., Qi, C.R., Deschaud, J.-E., Marcotegui, B., Goulette, F., Guibas, L.J.: Kpconv: Flexible and deformable convolution for point clouds. In: Proceedings of the IEEE/CVF International Conference on Computer Vision, pp. 6411–6420 (2019).

Li, J., Chen, B.M., Lee, G.H.: So-net: Self-organizing network for point cloud analysis. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pp. 9397–9406 (2018).

Acknowledgements

The authors extend their appreciation to Taif University, Saudi Arabia, for supporting this work through project number (TU-DSPP-2024-253).

Funding

This research was funded by Taif University, Taif, Saudi Arabia, Project No. (TU-DSPP-2024-253).

Author information

Authors and Affiliations

Contributions

Conceptualization, M.S., T.S.M. and K.R.M.; Data Gathering, A.U.R. and T.S.M; For- mal analysis, M.A., A.H.K. and S.H.; Investigation, T.S.M and M.S.; Methodology, M.S.; A.U.R. and T.S.M.; Supervision, K.R.M., and T.S.M.; Writ- ing-original draft, T.S.M., and M.S.; Writing-review & editing, A.U.R., M.A. and S.H. All authors have read and agreed to the published version of the manuscript.

Corresponding authors

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Sajid, M., Razzaq Malik, K., Ur Rehman, A. et al. Leveraging two-dimensional pre-trained vision transformers for three-dimensional model generation via masked autoencoders. Sci Rep 15, 3164 (2025). https://doi.org/10.1038/s41598-025-87376-y

Received:

Accepted:

Published:

Version of record:

DOI: https://doi.org/10.1038/s41598-025-87376-y

Keywords

This article is cited by

-

A hybrid steganography framework using DCT and GAN for secure data communication in the big data era

Scientific Reports (2025)

-

TVAE-3D: Efficient multi-view 3D shape reconstruction with diffusion models and transformer based VAE

Cluster Computing (2025)