Abstract

Accurate runoff prediction is essential for flood control, water resource management, and basin ecological dispatch. Deep learning, especially long short-term memory (LSTM) neural networks, has excelled in runoff prediction, often outperforming traditional hydrological models. Recent studies suggest that deep learning models employing the self-attention mechanism, such as Transformer and Informer, can achieve even better results than LSTM. However, research exploring the multi-step runoff prediction capabilities of these novel models across diverse scenarios remains scarce. In this study, we introduce an Informer model enhanced with relative position encoding, termed Rel-Informer, and compare its performance in rainfall-runoff prediction against the standard Informer, Transformer, and LSTM models. The publicly available CAMELS dataset is used for training and validating the models, and four experiments are designed: (1) individual rainfall-runoff modeling (one model per catchment); (2) regional rainfall-runoff modeling (one model per region); (3) fine-tuned regional rainfall-runoff modeling (fine-tuned from Experiment 2); (4) large-scale rainfall-runoff modeling for ungauged catchments (one model for all catchments). The findings reveal that Rel-Informer consistently performs better than the other models, particularly in short-term runoff predictions (1–3 days ahead). Although regional modeling is less precise than individual modeling, it benefits significantly from fine-tuning. The large-scale regional Rel-Informer model effectively predicts runoff in ungauged catchments, showcasing its potential for widespread runoff prediction. The study also underscores the influence of hydrological characteristics, such as snowmelt and baseflow indices, on prediction accuracy. In conclusion, the Rel-Informer model, enhanced with relative position encoding, emerges as a promising tool for runoff forecasting, especially in data-rich catchments.

Introduction

Rainfall-runoff modeling plays a crucial role in hydrological research by forecasting future runoff based on available rainfall and other meteorological forcing observations1,2,3. Developing practical and applicable models can help in decision-making related to flood prevention, water resources planning, and mitigation of pollution4.

Currently, there are three main types of rainfall-runoff simulation models: physical modeling, conceptual modeling, and data-driven modeling5,6. Physical modeling is based on fluid dynamics and surface processes laws, providing a strong theoretical foundation for accurate predictions but requiring extensive input data7,8. Conceptual modeling simplifies data requirements and model complexity, capturing hydrologic processes with fewer parameters and data but may fail to account for the system complexity. Moreover, the applicability of conceptual models may be limited by geographic and climatic conditions9. Data-driven modeling utilizes statistical and machine learning techniques to build predictive models based on historical and observational data. Its main drawback lies in its heavy reliance on the quality and quantity of available data. If the data is lacking or biased, the accuracy of the model may be compromised. In addition, data-driven models primarily provide statistical relationships among phenomena. They cannot conduct scenario analysis (e.g., the impact of land use changes) nor simulate non-runoff hydrological states and fluxes (e.g., evapotranspiration, soil moisture, etc.).

Despite these limitations, data-driven models remain highly important in hydrologic runoff prediction research. They can be built from accessible observational data without assuming or parameterizing specific physical processes, which gives them adaptability and flexibility across different hydrologic scenarios and geographic regions10,11. Constructing a data-driven model is relatively straightforward and fast compared to physical and conceptual models. Once sufficient data is collected, models can be constructed using a variety of statistical and machine learning algorithms, without the need for detailed system knowledge and complex parameterization processes. By learning the patterns and correlations within the data, data-driven models excel at handling nonlinear relationships and can offer more precise runoff predictions12,13,14,15.

In recent years, numerous data-driven models, particularly those grounded in artificial intelligence, have been extensively used in rainfall-runoff modeling, often yielding results superior to those of physical and conceptual models. Kratzert et al. applied both the LSTM and the Sacramento soil moisture accounting (SAC-SMA) model to the catchment attributes and meteorology for large-sample studies (CAMELS) dataset, constructing daily runoff prediction models for each of 241 catchments8. Their findings revealed that the LSTM model, requiring only minimal parameter tuning, delivered comparable or even slightly superior performance to the well-established SAC-SMA model. Yin et al. evaluated the effectiveness of three models, namely LSTM, SAC-SMA, and the national water model (NWM), for rainfall-runoff modeling in ungauged catchments using the CAMELS dataset16. The results showed that the LSTM model outperformed the two process-driven models on most catchments. In addition, extensive studies in recent years have shown that the LSTM model, including its variants, excels at capturing high-dimensional time-dependent patterns in time-series data, thereby often achieving better performance in runoff forecasting17,18,19,20,21.

Initially, LSTM models were predominantly applied to natural language processing. As hydrologists began experimenting with LSTM for runoff prediction, its strong performance in this domain gradually became evident. More recently, the Transformer22, a deep learning model based on the self-attention mechanism, has emerged and rapidly gained recognition for its ability to capture long-range dependencies and contextual relationships in sequential data23. Leveraging the multi-head attention mechanism, the Transformer architecture has achieved state-of-the-art performance in various natural language processing (NLP) applications, demonstrating its power in encoding and decoding complex linguistic patterns3. In recent years, the application of the Transformer model has extended beyond NLP into other domains involving time series analysis, including hydrological modeling24,25. This expansion is attributed to the shared sequential nature of natural language and time series data, which allows the Transformer’s attention mechanism to be adapted for tasks such as rainfall-runoff forecasting26.

Compared to LSTM, the Transformer model can encode the entire input sequence simultaneously, eliminating the need for sequential, step-by-step processing. This capability enables the Transformer to effectively capture global information and contextual relationships within the sequence, thereby facilitating the extraction of richer feature representations27. Yin et al. proposed the rainfall-runoff Former (RR-Former), a Transformer-based model specifically tailored for rainfall-runoff modeling, which surpassed LSTM and other benchmarks on the CAMELS dataset3. Their findings highlight the potential of Transformer models in capturing intricate hydrological patterns and dynamics, echoing the success of Transformer in NLP. Because it does not process timesteps one at a time, as recurrent neural networks (RNNs) such as LSTM do, the Transformer can encode global context and long-term dependencies within hydrological time series, facilitating more accurate runoff predictions. Peng et al. further demonstrated the Transformer’s superiority in capturing long-term dependency by applying it to water quality prediction, achieving better performance compared to traditional models28.

However, the Transformer model was originally proposed for natural language processing. Although language and time series data share similarities, time series forecasting problems also differ from NLP tasks in notable ways. Consequently, Zhou et al. introduced Informer, a model built on the Transformer framework and tailored specifically for long-sequence time-series forecasting29. In their research, the Informer model was applied to four large publicly available datasets and benchmarked against six state-of-the-art models. The results showed that the Informer model performed best. Furthermore, it required less training time and achieved higher prediction accuracy than the Transformer model. Given its promising performance, some researchers have extended the application of the Informer model to hydrological forecasting. For instance, Du et al. applied the Informer model and an enhanced version, RF-Informer, for water level prediction in Hongze Lake, China30. Their findings indicated that the RF-Informer model outperformed both the ARIMA and LSTM models, exhibiting higher median and mean values of the NSE metric. Similarly, Bi et al. tested the efficacy of the Informer, Seq2seq, XG-Boost, and ANN models for forecasting the water quality indicator total nitrogen (TN)31. The results revealed that the Informer model had the smallest forecasting error and the highest accuracy.

However, Yan et al. demonstrated that the Transformer model loses relative position information when subjected to an unknown linear transformation32. The Informer employs the same position encoding method (absolute position encoding) as the Transformer. This implies that when either the Informer or Transformer is used for runoff forecasting, meteorological and runoff data input in chronological order will lose their relative positional information. To address this issue, Dai et al. proposed a relative position encoding method that effectively mitigates the loss of relative position information in the Transformer33. Therefore, our study introduces the relative position encoding method into the Informer (Rel-Informer) and applies it to rainfall-runoff modeling.

As previously mentioned, Rel-Informer (along with Informer and Transformer) represents a novel class of time series forecasting models. Existing studies primarily focus on comparing either the Transformer or Informer models to other deep learning models3,24,27,34. However, there is a notable lack of systematic comparisons regarding their runoff prediction performance. What are the respective advantages and disadvantages of Rel-Informer (Informer and Transformer) compared to LSTM? What hydrologic features affect the prediction accuracy in the runoff prediction studies of these models? Additionally, numerous studies have constructed regional rainfall-runoff models for large sample catchments and applied them to ungauged catchments8,17,35,36. Predicting runoff from ungauged catchments poses a significant challenge in hydrology. Therefore, what is the performance of the aforementioned models when utilized for regional rainfall-runoff modeling?

In this study, we propose the Rel-Informer model, which enhances the Informer model by incorporating relative position encoding. We evaluate the performance of four models, namely Rel-Informer, Informer, Transformer, and the classical LSTM model, for runoff prediction across U.S. catchments using the CAMELS dataset. Our specific objectives are as follows: (1) To assess the efficacy of the four models in both individual rainfall-runoff modeling (where separate models are constructed for each catchment) and regional rainfall-runoff modeling (where a single model is developed for each region encompassing multiple catchments), further identifying their respective strengths and limitations. (2) To evaluate the predictive capabilities of the distinct models across various catchments and examine the relationship between the actual hydrologic characteristics and prediction accuracy. (3) To apply the four models to large-scale rainfall-runoff modeling and extend their application to ungauged catchments, comparing the runoff prediction performances of different models in ungauged catchments.

Materials and methods

CAMELS dataset

We utilized the CAMELS dataset as the foundation of our research37,38, a comprehensive collection widely employed in hydrology machine learning studies due to its capacity for facilitating straightforward performance benchmarking. This dataset comprises 671 catchments across the contiguous United States, characterized by minimal human intervention. It contains meteorological forcing data and observed runoff data for each catchment, spanning from October 1, 1980, to December 31, 2014. The meteorological data, sourced from three distinct gridded platforms—Daymet, Maurer, and NLDAS—include day length, precipitation, surface solar radiation, maximum and minimum temperatures, snow-water equivalent, and near-surface daily average vapor pressure. In this study, we prioritized the Daymet data, which boasts the highest spatial resolution, as the foundation for calculating catchment averages and incorporating nearly all pertinent meteorological input variables (excluding snow-water equivalent and day length). Additionally, we leveraged the 30 static attributes data associated with each catchment within the CAMELS dataset to facilitate regionalized modeling endeavors. A concise summary of the model input data is presented in Table S1.

The 671 catchments are grouped into 18 hydrological units (HUCs), following the U.S. Geological Survey’s HUC map8. These units demarcate distinct geographical regions, each encompassing the drainage area of a major river or the combined drainage areas of several rivers.

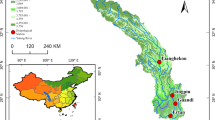

It is worth noting that a significant portion of catchments in the dataset lacks complete runoff data up to December 31, 2014. To ensure uniformity in the temporal scope of data across all catchments, we selected 596 catchments with recorded data until September 30, 2014, as illustrated in Fig. 1.

Location of catchments in the CAMELS dataset.

Methods

LSTM

LSTM is a variant of the RNN that learns from sequential data. It is currently one of the most prevalent deep learning models in hydrological modeling, with numerous studies demonstrating its effectiveness in runoff prediction using the CAMELS dataset8,9,16,17,34. Unlike a traditional RNN, LSTM incorporates three different “gates”: the forget gate, update gate, and output gate. The forget gate filters out irrelevant information from the previous timestep, the update gate selects crucial new information for storage in the cell state, and the output gate controls how much of the cell state is passed on as output. This architecture enables LSTM to capture both long-term and short-term dependencies within input time series data, which is vital for accurate runoff predictions, particularly in relation to snowmelt and groundwater storage dynamics.
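
The gating logic described above can be illustrated with a minimal sketch of one step of a scalar LSTM cell. It is not the network used in this study: the function name `lstm_step`, the weight dictionary layout, and the gate labels are hypothetical, and a real layer works with vectors and learned weight matrices.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def lstm_step(x, h_prev, c_prev, weights):
    """One step of a scalar LSTM cell (illustrative only).

    weights maps a gate name to a hypothetical (w_x, w_h, bias) triple.
    """
    def pre_activation(name):
        w_x, w_h, bias = weights[name]
        return w_x * x + w_h * h_prev + bias

    forget = sigmoid(pre_activation("forget"))        # share of old cell state kept
    update = sigmoid(pre_activation("update"))        # share of new candidate stored
    candidate = math.tanh(pre_activation("candidate"))  # proposed new information
    output = sigmoid(pre_activation("output"))        # share of cell state exposed

    c = forget * c_prev + update * candidate  # long-term memory
    h = output * math.tanh(c)                 # short-term (hidden) state
    return h, c
```

With all weights set to zero, every gate evaluates to 0.5, so the cell state is simply halved at each step; learned weights let the cell retain slowly varying stores such as snowpack or groundwater over many timesteps.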

Transformer

The Transformer is a model featuring an encoder-decoder structure22, as illustrated in Fig. 2. It encompasses an embedding layer, a positional encoding layer, an encoder, and a decoder. Positioned at the beginning of both the encoder and decoder, the embedding layer aligns the input dimension with the model dimension. Unlike RNNs, the Transformer lacks an explicit recurrent structure, thereby lacking the inherent ability to discern positional information. To address this limitation, the Transformer incorporates a positional encoding layer that provides positional information for each element in the input sequence, thereby enabling the model to comprehend sequential relationships. The encoder’s function is to encode historical meteorological and hydrological observations into a fixed-length vector representation, extracting temporal patterns and correlations. Using the encoder’s output vector as its initial state, the decoder progressively generates future runoff sequences. For each predicted timestep, the decoder integrates the previously predicted runoff data with information from the encoder to produce the runoff prediction for the subsequent timestep.

Furthermore, the encoder-decoder structure also integrates a multi-head attention mechanism, enabling the model to analyze the data from different perspectives to extract richer information. Each attention head processes queries, keys, and values through linear transformations, and the outputs of all heads are then combined. In the encoder, the multi-head attention mechanism helps the model learn temporal dependencies from historical meteorological and hydrological observation data. By performing self-attention calculations on the input sequence, the encoder can focus on different timesteps and effectively capture relevant patterns. In the decoder, the multi-head attention mechanism aligns the decoder’s current state with the encoder’s output, enhancing prediction accuracy by considering pertinent segments of the encoder’s output during the generation of runoff values. For a more comprehensive understanding of the Transformer and its applications in runoff prediction, readers may consult relevant literature3,27,28.
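
The attention computation described above can be sketched in a few lines of plain Python. This is a simplified illustration, not the implementation used in the study: the function names are hypothetical, and the learned linear projections of queries, keys, and values are omitted, so each head simply attends over its own slice of the feature dimension.

```python
import math

def softmax(scores):
    peak = max(scores)  # subtract the maximum for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(queries, keys, values):
    """Scaled dot-product attention over lists of equal-length vectors."""
    d = len(keys[0])
    outputs = []
    for q in queries:
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in keys]
        weights = softmax(scores)
        outputs.append([sum(w * v[c] for w, v in zip(weights, values))
                        for c in range(len(values[0]))])
    return outputs

def multi_head_self_attention(x, n_heads):
    """Split features into n_heads slices, attend per slice, then concatenate.

    Assumes the feature dimension is divisible by n_heads and skips the
    learned projections a real Transformer applies before and after.
    """
    step = len(x[0]) // n_heads
    heads = []
    for h in range(n_heads):
        part = [row[h * step:(h + 1) * step] for row in x]
        heads.append(attention(part, part, part))
    merged = []
    for t in range(len(x)):
        row = []
        for head in heads:
            row.extend(head[t])
        merged.append(row)
    return merged
```

A query of all zeros scores every key equally, so its output is the plain average of the values; informative queries shift the weights toward the timesteps whose keys they match.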

Structure of Transformer.

Informer

The Informer model, an improved version of the Transformer model, is tailored for time series forecasting and incorporates three pivotal enhancements29. Firstly, it refines the multi-head attention mechanism by introducing a probabilistic sparse (ProbSparse) self-attention mechanism. Unlike traditional self-attention, which integrates information across all positions, this approach filters out potentially distracting weak or irrelevant connections between positions. By combining global information with local relevance through a masking strategy, it enhances the precision of capturing crucial relationships within the sequence. Secondly, self-attention distilling in the Informer highlights dominant attention by halving the input of each cascaded layer, enabling it to effectively handle extremely long input sequences. Lastly, the integration of a generative decoder allows a single forward pass to make multi-step predictions of future values across long sequences, eliminating the need for sequential step-by-step predictions. This not only accelerates inference for long-sequence predictions but also mitigates cumulative prediction errors. As an optimized version of the Transformer, the Informer model broadens its applicability to time series forecasting. Researchers interested in exploring further examples of Informer applications in runoff prediction may consult relevant literature3,39,40.
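
To make the first enhancement more concrete, the sketch below ranks queries by the max-minus-mean sparsity measurement described by Zhou et al.29 and keeps only the top `u` "active" queries. It is a simplified illustration under stated assumptions: the function names are hypothetical, all keys are used (the original additionally samples keys to reduce cost), and the handling of the remaining queries is left out.

```python
import math

def sparsity_scores(queries, keys):
    """Max-minus-mean of scaled dot products for each query.

    A query whose scores are nearly uniform gets a value close to zero,
    meaning it contributes little beyond averaging the values.
    """
    d = len(keys[0])
    scores = []
    for q in queries:
        dots = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in keys]
        scores.append(max(dots) - sum(dots) / len(dots))
    return scores

def top_u_queries(queries, keys, u):
    """Indices of the u queries with the largest sparsity score."""
    measure = sparsity_scores(queries, keys)
    ranked = sorted(range(len(queries)), key=lambda i: measure[i], reverse=True)
    return ranked[:u]
```

Only the selected queries would then go through the full attention computation, which is what reduces the cost on long input windows.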

Rel-Informer

Both Transformer and Informer employ the same method of position encoding, known as absolute position encoding. The formula for expressing their specific encoding schemes is as follows:

Where \(pos\) is the position of the input data, \({d_{{\text{model}}}}\) is the dimension of the data, and i is in the range of \([0,\frac{{d_{{\text{model}}}}}{2}]\). However, this position encoding approach does not consider directionality, and the relative position information is lost when the position encoding is fed into the self-attention layer. A more detailed proof can be found in the literature32.
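
For reference, the sinusoidal form introduced with the original Transformer22 is sketched below; it is assumed here to be the scheme that the symbols above refer to:

\[
PE_{(pos,\,2i)} = \sin\!\left(\frac{pos}{10000^{2i/d_{\text{model}}}}\right), \qquad
PE_{(pos,\,2i+1)} = \cos\!\left(\frac{pos}{10000^{2i/d_{\text{model}}}}\right)
\]

Writing \(\omega_i = 10000^{-2i/d_{\text{model}}}\) (a shorthand introduced only for this note) and using \(\sin a \sin b + \cos a \cos b = \cos(a-b)\), the inner product of two such encodings is

\[
PE_{pos}^{T}\,PE_{pos+k} = \sum_{i} \cos(\omega_i k),
\]

which depends only on the offset \(k\) and is even in \(k\), so it cannot tell whether one timestep precedes or follows another. Once the encodings pass through the learned query and key projections, even this distance information is no longer guaranteed to be preserved32.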

Relative position encoding introduces positional information into the self-attention mechanism by encoding the positional relationship through relative positional offsets between query \({q_i}\) and key \({k_j}\). The specific expression formula for relative position encoding is as follows:

Where \({W_q}\) and \({W_k}\) are the query and key parameters added to each head by multi-head attention, \({E_{{x_i}}}\) and \({E_{{x_j}}}\) are the data embedding vectors of the inputs \({x_i}\) and \({x_j}\), and \({P_i}\) and \({P_j}\) are the position embeddings of the i-th and j-th positions. The factorization of (5) results in (6):

Equation (6) is further converted into the following equation:

\(P_{j}^{T}\) is replaced by \(R_{{i - j}}^{T}\), which represents the relative position. \({P_i}{W_q}\) is replaced by the trainable parameters \(u \in {R^d}\) in the third term and \(v \in {R^d}\) in the fourth term, reflecting the idea that the attentive bias should remain the same regardless of the query position. Finally, the two weight matrices \(W_{{k,E}}^{T}\) and \(W_{{k,R}}^{T}\) are separated to generate content-based and location-based key vectors, respectively. More information about relative position encoding can be found in the literature33,41.
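
The steps described above can be written out as follows. This is a sketch in the row-vector notation suggested by the symbols defined above, following the Transformer-XL formulation33; the exact layout of Eqs. (5) to (7) in the original may differ. With \(q_i = (E_{x_i} + P_i)W_q\) and \(k_j = (E_{x_j} + P_j)W_k\), the attention score under absolute position encoding is

\[
A_{i,j}^{\text{abs}} = q_i k_j^{T} = (E_{x_i} + P_i)\,W_q W_k^{T}\,(E_{x_j} + P_j)^{T} \tag{5}
\]

Expanding the product gives four terms:

\[
A_{i,j}^{\text{abs}} = E_{x_i} W_q W_k^{T} E_{x_j}^{T} + E_{x_i} W_q W_k^{T} P_j^{T} + P_i W_q W_k^{T} E_{x_j}^{T} + P_i W_q W_k^{T} P_j^{T} \tag{6}
\]

Applying the three substitutions term by term yields the relative form:

\[
A_{i,j}^{\text{rel}} = E_{x_i} W_q W_{k,E}^{T} E_{x_j}^{T} + E_{x_i} W_q W_{k,R}^{T} R_{i-j}^{T} + u\,W_{k,E}^{T} E_{x_j}^{T} + v\,W_{k,R}^{T} R_{i-j}^{T} \tag{7}
\]

The first term compares content with content, the second weights content by relative offset, and the last two act as global content and position biases. Because \(R_{i-j}\) is indexed by the signed offset \(i-j\), the score can distinguish a timestep that lies three days before the query from one that lies three days after it.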

In this study, we substituted the absolute position encoding employed by the Informer model with the aforementioned relative position encoding, referred to as Rel-Informer, as illustrated in Fig. 3. The entire model consists of the embedding layer (1-D convolutional neural network), position encoding layer, encoder, and decoder components. The historical meteorological and runoff observation data undergoes an embedding operation using a convolutional neural network, while simultaneously being subjected to relative position encoding. The results of these two processes are added together and serve as the input to the encoder. On the right side of the figure, the input includes historically known meteorological and runoff data, along with a sequence padded with zeros. Similarly, after performing embedding and relative position encoding operations, the two results are added together and serve as the input to the decoder. The output of the encoder is fed as input to the decoder’s multi-head attention mechanism for subsequent operations. Finally, the decoder’s output is passed through a fully connected layer to obtain the predicted runoff.

Structure of Rel-Informer.

Model settings

In this study, the hyper-parameter configurations for the Rel-Informer, Informer, and Transformer models are identical. The input to these models comprises the meteorological and runoff data of the preceding 60 days, and the output is the predicted runoff 1 to 7 days ahead. For individual rainfall-runoff modeling, the models take 6 input features, corresponding to six time series. For regionalized rainfall-runoff modeling, the models take 36 input features: the six time series and 30 static catchment attributes. Each static attribute is repeated at every timestep so that it aligns with the time series. The Rel-Informer, Informer, and Transformer models all adjust the learning rate adaptively during training. The learning rate is initially set relatively high (0.01 in this instance). When, after several iterations, the validation loss stops decreasing or begins to increase, the learning rate is reduced by a specified percentage; training then continues until the validation loss again stabilizes or increases, at which point the learning rate is reduced once more. The learning rate stops updating once it reaches a predefined threshold. Furthermore, an early stopping mechanism limits the number of training iterations, with a maximum of 200 epochs: after multiple adjustments to the learning rate, training terminates when the validation loss ceases to decrease or begins to increase. The hyper-parameters determined in this study are presented in Table 1 and correspond to the hyper-parameters defined in the source code of the Informer, available at https://github.com/zhouhaoyi/Informer2020.
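
The learning-rate and early-stopping logic described above can be sketched as a small simulation over a sequence of validation losses. Only the starting rate of 0.01 and the 200-epoch cap come from the text; the function name and the `factor`, `patience`, and `min_lr` values are hypothetical placeholders, since the reduction percentage and threshold are not stated here.

```python
def plateau_schedule(val_losses, lr=0.01, factor=0.5, patience=3,
                     min_lr=1e-5, max_epochs=200):
    """Return (epochs_run, learning rate used at each epoch).

    The rate is cut by `factor` whenever the validation loss has not improved
    for `patience` epochs. Once the rate sits at `min_lr`, a further plateau
    stops training early. All three values are assumptions for illustration.
    """
    best = float("inf")
    stale = 0
    lr_history = []
    for epoch, loss in enumerate(val_losses[:max_epochs], start=1):
        lr_history.append(lr)
        if loss < best:
            best, stale = loss, 0
            continue
        stale += 1
        if stale < patience:
            continue
        if lr <= min_lr:
            return epoch, lr_history  # early stop: rate already at its floor
        lr = max(lr * factor, min_lr)
        stale = 0
    return len(lr_history), lr_history

# Example: the loss stalls at 0.9, so the rate is halved after three stale epochs.
epochs_run, rates = plateau_schedule([1.0, 0.9, 0.9, 0.9, 0.9, 0.9])
```

In a deep learning framework the same behavior is usually obtained from a built-in plateau-based scheduler combined with an early-stopping callback rather than hand-written logic.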

To ensure a relatively impartial comparison, the LSTM model incorporates one LSTM layer and one dropout layer, with 512 neurons in the LSTM layer and a dropout ratio of 0.05. The input rules for this model are identical to those for the Rel-Informer, Informer, and Transformer models mentioned previously. The Adam optimizer is employed, with a batch size of 64. The model’s learning rate and epochs are determined using the same adaptive learning rate adjustment and early stopping mechanism methodologies.

Multi-step runoff forecasting

The Informer model incorporates a generative decoder design, enabling it to directly yield results for all prediction timesteps through a single forward operation, rather than making predictions step by step29. This method eliminates the need for recalculation at each step in rolling predictions, thereby significantly boosting inference speed for long sequence predictions. In our study, we have also applied this generative decoder to the Transformer and Rel-Informer models, facilitating direct multi-step runoff predictions. Traditionally, LSTM relies on rolling predictions for multi-step runoff forecasting, but this approach is prone to error accumulation. Furthermore, when dealing with multiple variables such as meteorological and runoff data, rolling predictions require forecasting both types of data at each timestep, introducing additional uncertainties and potentially compromising model performance. To ensure a fair comparison with Rel-Informer, Informer, and Transformer, our study specifically focuses on 1- to 7-day ahead runoff predictions. For the seven-step runoff prediction, we constructed seven individual LSTM models. For instance, in the 1-day ahead runoff prediction, LSTM takes \([x_1, x_2, \ldots, x_n]\) as input and predicts \(y_{n+1}\) as the runoff output, where \(x\) represents the input features, including meteorological and runoff data, and the subscripts indicate timesteps. Similarly, for the 2-day ahead runoff prediction, the input remains \([x_1, x_2, \ldots, x_n]\), while the output becomes \(y_{n+2}\). This pattern continues until the 7-day ahead runoff prediction, where the output is \(y_{n+7}\). Although this approach has certain limitations, it effectively mitigates error accumulation and tends to exhibit better performance for 1- to 3-day ahead runoff predictions in larger catchments, owing to their inherent storage and regulation capabilities.
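
The sample construction described above can be sketched as follows, assuming daily records held in plain Python lists. The function names are hypothetical; the 60-day lag, the 7-day horizon, and the 6 + 30 feature layout come from the model settings.

```python
def add_static(series_rows, static_attrs):
    """Repeat the same static catchment attributes on every daily row."""
    return [list(row) + list(static_attrs) for row in series_rows]

def make_windows(features, runoff, lag=60, horizon=7):
    """Pair each lag-day input window with the next `horizon` runoff values."""
    samples = []
    for end in range(lag, len(runoff) - horizon + 1):
        history = features[end - lag:end]     # x_1 ... x_n
        targets = runoff[end:end + horizon]   # y_{n+1} ... y_{n+horizon}
        samples.append((history, targets))
    return samples

def target_for_lead(samples, lead):
    """Keep a single lead time, as needed by one of the seven LSTM models."""
    return [(history, targets[lead - 1]) for history, targets in samples]

# Toy record of 70 days with 6 dynamic features per day.
features = [[float(day)] * 6 for day in range(70)]
runoff = [float(day) for day in range(70)]
samples = make_windows(add_static(features, [0.0] * 30), runoff)
two_day_ahead = target_for_lead(samples, 2)
```

The attention-based models would consume the full seven-value target of each sample in one pass, whereas each LSTM model is trained on the output of `target_for_lead` for its own lead time.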

Evaluation metrics

To evaluate model performance, we use the Nash-Sutcliffe efficiency (NSE) and root mean square error (RMSE) as evaluation metrics. We also introduce the absolute top 2% prediction error (ATPE) to better evaluate how well the models simulate peak flows.

The NSE and RMSE are defined as follows:

where \({y_i}\) is the observed value at time i, \({\hat {y}_i}\) is the prediction result of \({y_i}\), \(\bar {y}\) refers to the average of N observations.

The ATPE is defined as follows:

where \({y_{\left( 1 \right)}} \geqslant {y_{\left( 2 \right)}} \geqslant \cdots \geqslant {y_{\left( H \right)}}\), \({y_{(j)}}\) is the jth largest runoff observation, \({\hat {y}_j}\) is the prediction of \({y_{(j)}}\), and H denotes the number of the top 2% peaks. A smaller ATPE indicates a better model.
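
The three metrics can be sketched in plain Python as below. NSE and RMSE follow their standard definitions. For ATPE, the sketch assumes the summed absolute error over the top 2% of observed flows divided by the sum of those observations, which matches the symbols defined above but should be checked against the ATPE equation; the function names and the rounding used to obtain H are likewise assumptions.

```python
import math

def nse(obs, sim):
    """Nash-Sutcliffe efficiency: 1 is a perfect fit, 0 matches the observed mean."""
    mean_obs = sum(obs) / len(obs)
    residual = sum((s - o) ** 2 for o, s in zip(obs, sim))
    variance = sum((o - mean_obs) ** 2 for o in obs)
    return 1.0 - residual / variance

def rmse(obs, sim):
    """Root mean square error, in the units of the runoff data."""
    return math.sqrt(sum((s - o) ** 2 for o, s in zip(obs, sim)) / len(obs))

def atpe(obs, sim, fraction=0.02):
    """Absolute prediction error over the largest `fraction` of observations.

    Assumed form: sum |sim - obs| over the H largest observations divided by
    the sum of those observations, with H rounded from fraction * N.
    """
    n_peaks = max(1, int(round(fraction * len(obs))))
    peaks = sorted(range(len(obs)), key=lambda i: obs[i], reverse=True)[:n_peaks]
    return sum(abs(sim[i] - obs[i]) for i in peaks) / sum(obs[i] for i in peaks)
```

Because ATPE looks only at the highest flows, a model can score well on NSE and RMSE while still underestimating flood peaks, which is why the three metrics are reported together.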

Regarding the four deep learning models involved in this study, there may exist minimal differences in the performance evaluation metrics between the models. To quantify the percentage improvement (PI) in evaluation metrics of model A relative to model B, the calculation is shown in Eq. (11).

where A and B refer to any two of the four deep learning models involved in this study.
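
A common way to express such a percentage improvement, given here as an assumption because the exact form of Eq. (11) may differ, is

\[
PI = \frac{M_A - M_B}{\left| M_B \right|} \times 100\%
\]

where \(M_A\) and \(M_B\) (symbols introduced only for this sketch) denote the same evaluation metric computed for models A and B. Under this form, a positive PI favors model A for NSE, whereas a negative PI favors model A for the error metrics RMSE and ATPE.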

Experimental setup

In this study, we designed four experiments to assess the performance of the Rel-Informer model. Initially, we sought to verify the model’s forecasting proficiency in individual rainfall-runoff modeling across 596 catchments. Secondly, we explored Rel-Informer’s potential for regional rainfall-runoff modeling by training distinct models for each HUC to predict runoff within their respective catchments, followed by fine-tuning these models for enhanced accuracy. Finally, we examined the model’s transfer learning capabilities by training a hyper-large model on data from 17 HUC regions and applying it to forecast runoff in the catchments of the remaining HUC.

Experiment 1: individual rainfall-runoff modeling for each catchment

We trained a separate Rel-Informer model for each of the 596 catchments, with detailed parameters outlined in Table 1. Among various deep learning models, we selected LSTM as the benchmark due to its proven effectiveness in time series forecasting. For comparison, we also incorporated Transformer and Informer to validate the efficacy of our improvements. Despite introducing additional learnable parameters, Rel-Informer did not require extra hyper-parameter tuning beyond those of Informer, as these parameters were automatically optimized through backpropagation. Consequently, we modeled the 596 catchments using LSTM, Transformer, Informer, and Rel-Informer, achieving 1- to 7-day-ahead runoff predictions.

Experiment 2: regional rainfall-runoff modeling for each HUC

The strength of deep learning models lies in their ability to accurately model input-output relationships using extensive training samples. When provided with sufficient data, these models excel. However, hydrological studies are often constrained by limited observation sites and historical data. By constructing substantial training samples from existing hydrological data, we can significantly enhance the model’s forecasting performance. Specifically, a model trained on data from multiple catchments is more likely to understand the general rainfall-runoff process better. For instance, consider two similarly behaving catchments. Due to limited data, the training, validation, and testing sets may not have the same distribution. If one catchment experiences extreme events during testing but not training, the model may struggle to predict these events. However, if the other catchment’s training data includes such events, the model could learn from both and improve its predictions for the first catchment.

Based on this rationale, we propose an experimental design that builds a single regional Rel-Informer model for each HUC, utilizing data from all within-region catchments. This approach aims to improve the model’s forecasting of extreme events during testing for each catchment. We train and validate the model using within-HUC data and evaluate its forecasting effectiveness on testing data from all catchments within the same region. The results are then compared with those from Experiment 1 to assess whether this method further enhances the model’s ability to forecast extreme runoff events.

Experiment 3: fine-tuning for the regional rainfall-runoff modeling

In Experiment 2, we constructed the regional Rel-Informer model using training data from all within-HUC catchments. This model minimizes global errors across all catchments through iterative updates using the backpropagation algorithm. While this approach ensures relative optimality with a single model, it may overlook individual forecast performance for each catchment.

To address this, we fine-tune the completed regional model using specific catchment data. This involves further training the model with a small dataset designed to reduce errors between individual predictions and actual observations. The idea is to first learn general runoff generation processes from global data and then adapt the model to specific catchment behaviors. This process typically requires few epochs to balance errors. Ultimately, fine-tuning the regional model for each HUC leads to improved forecasts for individual catchments.

Experiment 4: Rel-Informer model applied to ungauged catchments

Extending from Experiment 3, we investigate whether a hyper-large Rel-Informer model trained on all observed catchment data can be applied to ungauged catchments. Specifically, we train the model using data from 17 out of 18 HUCs and apply it to the test period data of all catchments within the remaining HUC. This process is repeated 18 times, ensuring all HUCs are tested. The goal is to assess the model’s transferability. If the results of this experiment are satisfactory compared to those of Experiment 1, it suggests the feasibility of runoff forecasting in ungauged catchments without relying on observational data.
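
The leave-one-HUC-out procedure described above can be sketched as a generator of train/test splits. The function name, catchment identifiers, and region codes are hypothetical placeholders; with the 18 HUCs of this study the loop would produce 18 folds.

```python
def leave_one_huc_out(catchment_to_huc):
    """Yield (held_out_huc, training catchments, test catchments) per fold."""
    for held_out in sorted(set(catchment_to_huc.values())):
        train = [c for c, huc in catchment_to_huc.items() if huc != held_out]
        test = [c for c, huc in catchment_to_huc.items() if huc == held_out]
        yield held_out, train, test

# Toy mapping with three regions instead of eighteen.
mapping = {"c1": "01", "c2": "01", "c3": "02", "c4": "03"}
folds = list(leave_one_huc_out(mapping))
```

Each fold trains one large model on the catchments of all other regions and evaluates it on the test period of the held-out region, so no runoff observation from a test catchment is used for training.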

Results

In this section, we evaluate the performance of four models (Rel-Informer, Informer, Transformer, and LSTM) on the 596 catchments of the CAMELS dataset, using the four sets of experiments outlined in Sect. Experimental setup. To visually depict the overall distribution of multi-day-ahead predictions across different models and experiments, we present box plots of NSE, RMSE, and ATPE metrics for all models in Fig. 4.

The box plots of (a) NSE, (b) RMSE, and (c) ATPE metrics for four models in four experiments. The abscissa represents experiments 1, 2, 3, and 4, which are aligned with the experiments discussed in subsections Experiment 1: individual rainfall-runoff modeling for each catchment to Experiment 4: Rel-Informer model applied to ungauged catchments, respectively.
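For readers who wish to reproduce such summaries, a minimal sketch of the three metrics is given here. NSE and RMSE follow their standard definitions. The ATPE form is an assumption (absolute error over the top 2% of observed flows, normalised by the sum of those flows) and may differ in detail from the definition used in this study; the function names and the sample values are hypothetical.

```python
import numpy as np

def nse(obs, sim):
    """Nash-Sutcliffe efficiency: 1 is a perfect fit, higher is better."""
    obs, sim = np.asarray(obs, float), np.asarray(sim, float)
    return float(1.0 - np.sum((obs - sim) ** 2) / np.sum((obs - obs.mean()) ** 2))

def rmse(obs, sim):
    """Root mean squared error: lower is better."""
    obs, sim = np.asarray(obs, float), np.asarray(sim, float)
    return float(np.sqrt(np.mean((obs - sim) ** 2)))

def atpe(obs, sim, top=0.02):
    """Assumed form of ATPE: relative absolute error on the largest observed flows."""
    obs, sim = np.asarray(obs, float), np.asarray(sim, float)
    k = max(1, int(round(top * len(obs))))   # number of peak values considered
    idx = np.argsort(obs)[-k:]               # indices of the k largest observations
    return float(np.sum(np.abs(sim[idx] - obs[idx])) / np.sum(obs[idx]))

# Made-up series used only to exercise the functions.
obs = [1.0, 2.0, 3.0, 4.0, 10.0]
sim = [1.0, 2.0, 3.0, 4.0, 8.0]
print(nse(obs, sim), rmse(obs, sim), atpe(obs, sim))
```

Under these definitions a better model has a higher NSE and lower RMSE and ATPE, which matches how the box plots are read in the following subsections.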

Performances of individual rainfall-runoff modeling

Based on the experimental design outlined in Experiment 1, we constructed individual runoff prediction models for 596 catchments. These models were developed using Rel-Informer, Informer, Transformer, and LSTM for 1- to 7-day ahead runoff predictions. Figure 5 illustrates the median and mean values for the NSE, RMSE, and ATPE metrics across these four models, with detailed metric results provided in Table S2. Additionally, we calculated the PI values between each pair of the four models based on the metrics presented in Table S2, as shown in Fig. S1.
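A pairwise PI value can be computed along the following lines. The formula is an assumption: the percentage change of model A's metric relative to model B's, a sign convention consistent with the later description of positive PI for NSE and negative PI for RMSE when model A is better. The function name and the numbers are hypothetical and are not taken from Table S2.

```python
def percent_improvement(metric_a, metric_b):
    """Assumed PI: percentage change of model A's metric relative to model B's."""
    return 100.0 * (metric_a - metric_b) / abs(metric_b)

# Made-up metric values used only to show the sign convention.
pi_nse = percent_improvement(0.80, 0.76)    # positive: A has the higher NSE
pi_rmse = percent_improvement(1.9, 2.0)     # negative: A has the lower RMSE
print(pi_nse, pi_rmse)
```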

The results presented in Fig. 5 clearly indicate that the Rel-Informer consistently outperforms the Informer in 1- to 7-day ahead runoff predictions. This finding underscores the effectiveness of our enhancements to the Informer model and highlights the critical role of relative positional relationships between input features in prediction performance. The original Informer model, relying on absolute position encoding, fails to capture directional information regarding relative distances, leading to the loss of relative positional information within the self-attention mechanism. Conversely, our proposed Rel-Informer model seamlessly integrates relative positional information within the self-attention mechanism, enabling it to simultaneously consider both the magnitude and direction of the impact of relative positions on prediction outcomes. As evident from Figs. S1 (b) and (c), the Rel-Informer model demonstrates an ATPE 25th percentile that is 1.9% higher for 6-day ahead runoff prediction and an ATPE 75th percentile that is 2.0% higher for 7-day ahead runoff prediction compared to the Transformer model. When compared to LSTM, the RMSE 25th percentile for Rel-Informer increases by 4.9% and 8.7% for 4-day and 5-day runoff predictions, respectively. However, it is important to note that in most cases, the NSE, RMSE, and ATPE metrics of the Rel-Informer model surpass those of the Transformer and LSTM models. Specifically, Rel-Informer consistently outperforms the latter two models across all evaluation metrics for 1- to 3-day ahead runoff predictions, further supporting its superiority. The box plots in Fig. 4 provide an intuitive visualization of these conclusions for the NSE, RMSE, and ATPE metrics of Experiment 1.
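To make the idea of direction-aware relative positions concrete, the following is a minimal sketch of one common way to inject them into self-attention: a scalar bias looked up from the signed, clipped offset between key and query positions. This is an illustrative variant under stated assumptions and is not claimed to be the exact formulation used in Rel-Informer; `relative_bias`, `attention_with_relative_bias`, `table`, and `max_dist` are hypothetical names.

```python
import numpy as np

def relative_bias(seq_len, table, max_dist):
    """Bias for every (query i, key j) pair, indexed by the signed offset j - i."""
    pos = np.arange(seq_len)
    offset = np.clip(pos[None, :] - pos[:, None], -max_dist, max_dist)
    return table[offset + max_dist]               # shape (seq_len, seq_len)

def attention_with_relative_bias(Q, K, V, table, max_dist):
    """Scaled dot-product attention with an additive relative-position bias."""
    d = Q.shape[-1]
    scores = Q @ K.T / np.sqrt(d) + relative_bias(len(Q), table, max_dist)
    scores = scores - scores.max(axis=-1, keepdims=True)   # numerical stability
    weights = np.exp(scores)
    weights = weights / weights.sum(axis=-1, keepdims=True)
    return weights @ V, weights

rng = np.random.default_rng(0)
L, d, max_dist = 6, 4, 3
Q, K, V = (rng.normal(size=(L, d)) for _ in range(3))
table = rng.normal(size=2 * max_dist + 1)   # one scalar per offset; learned in practice
out, weights = attention_with_relative_bias(Q, K, V, table, max_dist)
bias = relative_bias(L, table, max_dist)
```

Because the offset is signed, a key one step after the query and a key one step before it receive different biases, so both the distance and the direction of the relationship can influence the attention weights.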

Regarding the comparison between the Informer, Transformer, and LSTM models, significant differences cannot be visually discerned based on the box plots in Fig. 4. However, Fig. 5 reveals that the Informer model generally performs better than the Transformer model in terms of NSE and RMSE metrics in a substantial number of cases. On the other hand, the Transformer model excels over the Informer model in simulating peak flows, as indicated by its superior ATPE metric performance in more instances. This can be attributed to the Informer model’s probabilistic sparse self-attention mechanism, which enhances operational efficiency and prediction robustness by pruning redundant input information. Additionally, the integration of a 1-D convolutional neural network within the Informer model aids in extracting hidden information from input features, facilitating pattern learning from the data. However, the removal of redundant information may inadvertently result in the loss of crucial features, leading to relatively weaker peak flow simulation. Fig. S1 (e) compares the PI values between the Informer and LSTM models, indicating that the Informer model outperforms LSTM for 1- to 3-day ahead predictions. For longer-term predictions, the performance gap between the two models narrows, suggesting comparable performance beyond three days. Furthermore, Fig. S1 (f) demonstrates that while LSTM performs better than the Transformer model in terms of NSE and RMSE metrics, the Transformer model consistently exhibits superior ATPE metric scores for 1- to 7-day ahead runoff predictions, confirming its strength in simulating peak flows.
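The pruning behaviour discussed above can be illustrated with a simplified sketch of probabilistic sparse attention. It assumes the max-minus-mean sparsity score computed on the full score matrix; the original Informer additionally samples keys to estimate this score cheaply, which is omitted here. The function name `probsparse_attention` and the parameter `u` (number of active queries) are hypothetical.

```python
import numpy as np

def probsparse_attention(Q, K, V, u):
    """Simplified ProbSparse attention: only the u most 'active' queries attend."""
    d = Q.shape[-1]
    scores = Q @ K.T / np.sqrt(d)
    # Queries whose score distribution is far from uniform are treated as informative.
    sparsity = scores.max(axis=1) - scores.mean(axis=1)
    top = np.argsort(sparsity)[-u:]
    # All remaining ("lazy") queries fall back to the mean of the values.
    out = np.tile(V.mean(axis=0), (len(Q), 1))
    s = scores[top]
    w = np.exp(s - s.max(axis=1, keepdims=True))
    w = w / w.sum(axis=1, keepdims=True)
    out[top] = w @ V
    return out, top

rng = np.random.default_rng(0)
Q, K, V = (rng.normal(size=(8, 4)) for _ in range(3))
out, top = probsparse_attention(Q, K, V, u=3)
print("active query positions:", sorted(top.tolist()))
```

The sketch makes the trade-off visible: the lazy queries all receive the same averaged output, which saves computation but can discard information that a full attention pass would have retained, for example around isolated peak flows.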

The median and mean values of the NSE, RMSE, and ATPE metrics for four models in Experiment 1.

Deficiencies of the regional rainfall-runoff modeling

The results obtained from Experiment 1 demonstrate the efficacy of our proposed Rel-Informer model in enhancing the performance of the Informer and Transformer models, surpassing the benchmark LSTM model. In Experiment 2, we aimed to develop a regional model based on catchment similarity to further improve model performance. We trained four regional runoff prediction models (Rel-Informer, Informer, Transformer, and LSTM) to provide 1- to 7-day ahead runoff predictions for a total of 596 catchments across 18 HUCs. Figure 6 presents the median and mean values for the NSE, RMSE, and ATPE metrics of these four models, with detailed metric results provided in Table S3. Additionally, we calculated the PI values between each pair of the four models based on the metrics presented in Table S3, as shown in Fig. S2.

Initially, we compared the box plots of NSE, RMSE, and ATPE metrics for the same models across both Experiment 1 and Experiment 2, as shown in Fig. 4. For the NSE metric, the box plot for Experiment 2 models is noticeably lower than that of Experiment 1 for the corresponding model. Similarly, for RMSE and ATPE metrics, the box plots for Experiment 2 models are significantly higher compared to Experiment 1. These findings suggest that the performance of regional rainfall-runoff models is considerably inferior to that of individual rainfall-runoff models. Even the Rel-Informer model, which exhibited the best performance in Experiment 1, shows diminished performance in Experiment 2. Specifically, for 1- to 7-day ahead runoff predictions, the mean and median NSE of the Rel-Informer model in Experiment 2 decrease by 3.9–5.8% and 4.4–6.6%, respectively, compared to Experiment 1. The mean and median ATPE of the Rel-Informer model in Experiment 2 increase by 2.0–12.4% and 3.0–15.4%, respectively. Comparable trends are observed for the other three models as well.

Next, we delved deeper into the performance evaluation of the four models in Experiment 2. As evident from Fig. 6, the Rel-Informer consistently outperforms the Informer, Transformer, and LSTM models in terms of both NSE and RMSE metrics. Specifically, as shown in Figs. S2 (a), (b), and (c), for 1- to 7-day ahead runoff predictions, the PI values for the mean, first quartile, median, and third quartile of NSE are all positive (in red), whereas those for RMSE are all negative (indicated in blue). The Rel-Informer model exhibits significantly superior performance in the majority of cases for 1- to 7-day ahead runoff predictions compared to the Informer, Transformer, and LSTM models. Additionally, Fig. 6 reveals that for 1- to 2-day ahead runoff predictions, the Informer model’s mean and median metrics surpass those of the Transformer, whereas for forecasts beyond 2 days, the performance of both models becomes comparable, a finding consistent with Experiment 1. The Informer and Transformer models notably outperform LSTM, particularly in terms of the NSE metric (Fig. 6). Furthermore, for the RMSE and ATPE metrics, the Informer and Transformer models generally surpass LSTM in most cases, albeit with some variations compared to the conclusions drawn in Experiment 1.

To address the aforementioned limitations, we offer the following explanations: Individual rainfall-runoff modeling utilizes training data from each catchment to generate specific forecasts for that catchment. Each model is trained to minimize the error between forecasted and observed values within its respective training set. On the other hand, regional rainfall-runoff modeling employs training data from all catchments within the HUCs to construct a unified model. This approach aims to minimize the overall forecasting error across all catchments. However, it is not feasible to simultaneously guarantee precise minimum errors for individual catchments while also ensuring the minimum error across all catchments. In other words, while regional rainfall-runoff modeling strives to minimize the overall forecasting error, it cannot guarantee minimal forecasting errors for specific catchments. Although regional rainfall-runoff modeling initially intended to capture inherent hydrological characteristics and similarities among multiple catchments, it cannot fully eliminate disparities in forecasting accuracy compared to individual rainfall-runoff modeling tailored to each catchment.
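The argument can be stated compactly. The notation is assumed for illustration: $L_c(\theta)$ is the training loss on catchment $c$ for model parameters $\theta$, $C$ is the number of catchments in the region, $\theta_R$ denotes the regional optimum, and $\theta_c^{*}$ the optimum of the individual model for catchment $c$, with the same model class in both cases.

```latex
\theta_R = \arg\min_{\theta} \sum_{c=1}^{C} L_c(\theta), \qquad
\theta_c^{*} = \arg\min_{\theta} L_c(\theta)
\;\Longrightarrow\;
L_c(\theta_R) \ge L_c(\theta_c^{*}) \quad \text{for every } c .
```

Equality holds for a given catchment only if the regional optimum also happens to minimise that catchment's own loss. The inequality concerns the training objective; performance in the testing period can deviate from it, which is why the comparison is made empirically.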

The median and mean values of the NSE, RMSE, and ATPE metrics for four models in Experiment 2.

Power of the fine-tuned regional rainfall-runoff modeling

The results from Experiment 2 show that the performance of the regional rainfall-runoff model is inferior to that of the individual rainfall-runoff model. This is primarily due to its limitation of focusing solely on minimizing overall prediction and observation errors. Therefore, we embarked on fine-tuning the regional rainfall-runoff model, with the aim of achieving high-accuracy predictions for each catchment by learning the hydrological characteristics and similarities among different catchments. Figure 7 displays the median and mean values of the NSE, RMSE, and ATPE metrics for these four models, while more detailed metric results are provided in Table S4. Additionally, based on these metrics in Table S4, we calculated the PI values between each pair of the four models, as illustrated in Fig. S3.

Firstly, a comparison of the evaluation metrics of the simulated runoff results from Experiments 3 and 2 is presented in Fig. 4. Overall, for the NSE metric, the box plots of the four models in Experiment 3 are significantly higher than those in Experiment 2. Conversely, for the RMSE and ATPE metrics, the box plots of the four models in Experiment 3 are noticeably lower than those in Experiment 2. This suggests that regional rainfall-runoff models have considerable potential in capturing general patterns within the catchment cluster. Although the results of Experiment 2 indicated that regional rainfall-runoff models were inferior to individual rainfall-runoff models, the performance of the former significantly improved after fine-tuning. This improvement can be attributed to the model first capturing general patterns shared by the catchments during the regional modeling process and then being refined on each catchment’s historical patterns. Furthermore, a comparison of the evaluation metrics of the runoff simulation results for the four models in Experiments 3 and 1, based on Fig. 4, reveals comparable results. In most cases, the evaluation metric results in Experiment 3 are even better. It is also worth noting that Experiment 3 requires less catchment-specific data for predicting a given catchment. The strategy employed in Experiment 3 involves initial training of a regional model using data from multiple catchments, followed by fine-tuning with a small amount of historical data specific to each catchment. This approach achieves comparable or even superior performance compared to models trained exclusively for each individual catchment.

Further analysis of Experiment 3 results yields similar conclusions to those of Experiments 1 and 2 (refer to Fig. S3). Specifically, in most scenarios, the Rel-Informer in Experiment 3 significantly outperforms the Informer, Transformer, and LSTM models. However, the performance gap between the Informer and Transformer models narrows in Experiment 3 compared to Experiments 1 and 2, where the Informer excelled in short-term runoff predictions. This implies that as the amount of trainable data increases, the performance disparity between the Informer and Transformer models gradually diminishes. Although the performance difference between the Informer and Transformer models is minimal, they generally surpass the LSTM model (refer to Figs. S3 (e) and (f)). For the NSE metric, both the Informer and Transformer models significantly outperform the LSTM (in red). For the RMSE and ATPE metrics, the Informer and Transformer models are generally superior to the LSTM (in blue) in a significant number of cases. This indicates that with increased trainable data, the Rel-Informer, Informer, and Transformer models significantly outperform the LSTM, owing to the complexity and superiority of their encoder-decoder structure.

The median and mean values of the NSE, RMSE, and ATPE metrics for four models in Experiment 3.

Rel-Informer enables better runoff forecasting for ungauged catchments

The outcomes of Experiments 2 and 3 underscore the substantial potential of regional rainfall-runoff modeling. Adhering to the design of Experiment 4, we trained hyper-large rainfall-runoff models using the four approaches to forecast runoff in ungauged catchments. Figure 8 showcases the median and mean values of the NSE, RMSE, and ATPE metrics for these models, with detailed metric results provided in Table S5. Additionally, based on these metrics in Table S5, we calculated the PI values between each pair of the four models, as depicted in Fig. S4.

Based on Fig. 4, we comprehensively compare the runoff simulation results from Experiment 4 with those obtained from the 596 catchments in Experiments 1, 2, and 3. Evidently, for the same model, the simulation results from Experiment 4 surpass those from Experiment 2 but are inferior to Experiments 1 and 3. Furthermore, a comparative analysis, based on Figs. S5 (a), (b), and (c), assesses the performance disparities between the Rel-Informer model and the Informer, Transformer, and LSTM. The performance gap between the Rel-Informer and Informer models is relatively narrow, with instances where the Informer model even outperforms the Rel-Informer in terms of the NSE metric, particularly for 2-day and 7-day ahead runoff predictions. Both the mean and median NSE values indicate the Informer’s superior performance. From the perspective of the RMSE and ATPE metrics, the Rel-Informer still exhibits significant advantages. In most cases, the Rel-Informer model surpasses the Informer, and in all instances, it outperforms both the Transformer and LSTM. Moreover, as evident from Figs. S5 (d), (e), and (f), when compared to the LSTM, the performance of the Informer and Transformer models significantly exceeds that of the LSTM. Regarding the Informer and Transformer, the Informer generally outperforms the Transformer in most cases when considering the NSE and RMSE metrics, whereas the Transformer tends to outperform the Informer in most instances when evaluating the ATPE metric.

We observe that in Experiment 1, the LSTM model’s performance attains a level comparable to that of the Informer and Transformer. However, in Experiments 2, 3, and 4, it becomes apparent that in most scenarios, the performance of the Informer and Transformer significantly surpasses that of the LSTM. In these experiments, the primary difference, with the same model structure, lies in the quantity of trainable data. This indicates that when only a limited amount of data is available, the performance disparity between the four models is narrow, and in certain cases, the LSTM’s performance may even prove superior to the Informer and Transformer. Conversely, as the accessible data increases, the predictive performance gap between the Rel-Informer, Informer, and Transformer models, in comparison to the LSTM model, gradually widens.

The median and mean values of the NSE, RMSE, and ATPE metrics for four models in Experiment 4.

Discussion

Relationship between prediction performance and hydrologic characteristics

The experimental results reveal significant variations in the predictive performance among the four models across the 596 catchments. Focusing specifically on Experiment 3, which demonstrates the best performance among the four sets of experiments, we delve into the details. Taking the Rel-Informer model as a representative case, Fig. 9(a) presents the NSE results for the model’s 1-day-ahead runoff prediction across the 596 catchments. In terms of spatial distribution, the overall runoff prediction is better in the eastern and western catchments, poorer in the central catchments, better in the northern catchments compared to the southern ones, and better in the coastal areas compared to the inland areas. Furthermore, Fig. 9(b), (c), and (d) show the plots for the mean daily precipitation, frac_snow_daily (fraction of precipitation falling as snow), and baseflow index of the 596 catchments, respectively. Fig. 9(e) displays scatter plots between NSE and the three hydrological features. These plots indicate that the prediction accuracy is correlated to some degree with these hydrological features.
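One simple way to quantify such a relationship is a rank correlation between a catchment attribute and the per-catchment NSE. The sketch below assumes a Spearman coefficient computed without tie correction; the function name `spearman` and the four value pairs are made-up placeholders, not CAMELS attributes or results from this study.

```python
import numpy as np

def spearman(x, y):
    """Spearman rank correlation (no tie correction): Pearson correlation of ranks."""
    rx = np.argsort(np.argsort(x))
    ry = np.argsort(np.argsort(y))
    return float(np.corrcoef(rx, ry)[0, 1])

# Placeholder values for four hypothetical catchments.
baseflow_index = np.array([0.1, 0.4, 0.5, 0.8])
nse_values = np.array([0.20, 0.50, 0.45, 0.90])

rho = spearman(baseflow_index, nse_values)
print(f"Spearman rho = {rho:.2f}")
```

A rank-based coefficient is used here because NSE is bounded above by 1 and often skewed, so a monotone association is easier to defend than a strictly linear one.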

In snow-rich catchments like the Rockies, effective streamflow prediction can be achieved with only a few measurements. This is primarily because snowmelt-dominated catchments are less affected by precipitation’s spatial and temporal variability compared to those driven by rainfall. The greater predictability in snowmelt-dominated catchments aligns with numerous existing studies that utilize both physical-based42,43,44 and data-driven models45. In such regions, flow conditions exhibit more consistent seasonal patterns. Similarly, catchments with higher baseflow indices demonstrate better flow prediction compared to those with lower baseflow indices. This may be attributed to the fact that baseflow-dominated catchments rely more on subsurface storage and experience greater precipitation variability46. Furthermore, flows in these baseflow-dominated catchments are less affected by precipitation variability. In summary, catchments characterized by temporal consistency and lower intermittency act as filters for rainfall variability, emphasizing storage processes. Consequently, streamflow in storage-dominated systems exhibits higher predictability compared to rainfall-dominated high-dimensional systems. However, streamflow in rainfall-dominated high-dimensional systems remains relatively predictable.

Some of the hydrological characteristics of the CAMELS dataset used in this study and some of the experimental results. Panel (a) shows the NSE results for the top-performing Rel-Informer model for 1-day-ahead runoff prediction in Experiment 3. Panel (b) shows the average daily precipitation for each catchment. Panel (c) shows the proportion of precipitation falling as snow in each catchment. Panel (d) shows the baseflow index for each catchment. Panel (e) shows scatter plots between each of the three hydrologic features and the NSE.

Advantages and limitations of Rel-Informer

The results of Experiment 3 highlight the remarkable performance of the Rel-Informer model in fine-tuned regional rainfall-runoff modeling. Compared to individual catchment modeling (Experiment 1) and basic regional modeling (Experiment 2), fine-tuning the regional Rel-Informer model with specific catchment data significantly enhanced its predictive accuracy. This finding suggests that Rel-Informer’s ability to capture general runoff patterns across a region can be further optimized through fine-tuning with catchment-specific data. One possible explanation for Rel-Informer’s outstanding performance in this context is its capacity to incorporate both global and local information. The encoder-decoder structure, combined with the probabilistic sparse self-attention mechanism and relative position encoding, enables Rel-Informer to learn general runoff patterns from extensive regional data while fine-tuning its predictions to individual catchments. This balance between generality and specificity appears to be particularly effective in regional runoff modeling.

The comparative analysis of the four models across the four experiments reveals several noteworthy observations. In individual catchment modeling (Experiment 1), Rel-Informer consistently outperforms the other models, with the performance gap being particularly evident for short-term predictions (1–3 days ahead). This finding suggests that when sufficient data is available for a specific catchment, Rel-Informer’s enhanced model structure and relative position encoding enable it to capture intricate runoff patterns more accurately. However, in regional modeling (Experiment 2), the performance of all models, including Rel-Informer, decreases compared to individual catchment modeling. This deterioration can be attributed to the complexity of modeling multiple catchments with diverse hydrological characteristics using a single model. Despite this, Rel-Informer still performs the best among the four models, indicating its robustness even in challenging regional contexts. The results of fine-tuned regional modeling (Experiment 3) indicate that Rel-Informer’s performance can be further improved by incorporating catchment-specific data. This finding highlights the importance of tailored model training for optimal predictive performance, especially in applications where high accuracy is crucial. Finally, in ungauged catchment modeling (Experiment 4), Rel-Informer again demonstrates its superiority, suggesting its potential for transfer learning applications. The ability to apply a hyper-large Rel-Informer model trained on observed catchments to ungauged catchments is a significant step forward in the field of runoff prediction, particularly in data-scarce regions.

The CAMELS dataset used in this study contains ample data to meet the requirements of large models such as the Transformer and its variants (Rel-Informer and Informer). Our final conclusions show that models employing the encoder-decoder structure outperform LSTM on the CAMELS dataset. Numerous research findings support this view as well3,24,27. However, we acknowledge that encoder-decoder models place high demands on data due to their extensive parameterization, and inadequate data can result in insufficient model training. Effective predictions in such cases may depend on the hydrological characteristics of the catchment area; as mentioned in Sect. Relationship between prediction performance and hydrologic characteristics, snow-rich mountainous regions require only a small amount of training data to achieve accurate predictions. For example, the study by Wei et al. showed that the GRU model (a variant of LSTM) was the most effective in runoff prediction at Datong Station on the Yangtze River, while the Transformer model, with a significantly larger number of parameters, was the least effective due to inadequate data24. The side-by-side comparison of Experiment 1 results with the other three experiments in this study further strengthens this conclusion. Currently, numerous regions worldwide still lack sufficient historical hydrological data or have missing data, significantly limiting the application of such models.

Lastly, we wish to emphasize that, despite the prevalence of utilizing static attributes available within the CAMELS dataset as inputs in regionalized modeling studies (including the present one), selecting all available static attribute features as inputs may lead to significant model uncertainty3,8,17,35,36. There may exist issues of multicollinearity among various input features, and furthermore, some features could be redundant. Whether these factors impact model performance warrants further investigation in future research.
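A common screening step for the multicollinearity mentioned above is the variance inflation factor (VIF). The sketch below is an illustration on synthetic columns, not an analysis of the CAMELS static attributes; the function name `vif` and the three generated features are hypothetical.

```python
import numpy as np

def vif(X):
    """Variance inflation factor of each column of X (rows are catchments)."""
    X = np.asarray(X, float)
    factors = []
    for j in range(X.shape[1]):
        y = X[:, j]
        # Regress column j on an intercept plus all remaining columns.
        A = np.column_stack([np.ones(len(X)), np.delete(X, j, axis=1)])
        coef, *_ = np.linalg.lstsq(A, y, rcond=None)
        resid = y - A @ coef
        r2 = 1.0 - resid @ resid / np.sum((y - y.mean()) ** 2)
        factors.append(1.0 / (1.0 - r2))
    return np.array(factors)

rng = np.random.default_rng(0)
a = rng.normal(size=500)
b = rng.normal(size=500)                   # independent of a
c = a + 0.05 * rng.normal(size=500)        # nearly a copy of a
scores = vif(np.column_stack([a, b, c]))
print(scores)
```

Columns with a large VIF are almost linear combinations of the others and are candidates for removal or merging before training; the threshold to apply is a modelling choice.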

Limitations of deep learning models and prospects for our future studies

Our study shows that deep learning models (Rel-Informer, Informer, Transformer, and LSTM) perform well on large datasets. However, these models still have several limitations for runoff prediction in different catchments47,48. Firstly, deep learning models generally require a substantial amount of data. Obtaining long-term continuous runoff observations for runoff prediction is particularly challenging, especially in areas with a lack of reliable observation stations. This may result in insufficient training samples for deep learning models, thereby affecting the accuracy and reliability of prediction results. Secondly, deep learning models face challenges in hydrological feature extraction. For complex nonlinear and non-smooth processes, these models struggle with feature extraction, leading to limited performance. Thirdly, interpretability is another issue with deep learning models. These models are often considered as black-box models, making it difficult to interpret and understand their prediction results due to their internal complex structures and parameters. In certain application scenarios such as water resource management, the lack of interpretability can limit the practical application and reliability assessment of deep learning models. Lastly, the generalization ability of deep learning models is a concern. Although these models may perform well on training data, their ability to generalize to new unseen samples is not necessarily reliable. Runoff patterns can significantly vary in different areas due to geographic, climatic, and geomorphological changes, which places higher demands on the model’s generalization ability.

This study analyzes the runoff prediction effectiveness of four deep learning models on 596 catchments from a macroscopic perspective. However, the tuning of deep learning model parameters remains challenging and significantly influences the final prediction accuracy49,50,51. While this study primarily refers to the parameter settings of other studies, we utilized the same parameters for constructing the model in each catchment. This approach saves time in training the model but introduces greater uncertainty in the final results. By tuning the parameters, we can improve poorly performing models. Therefore, future studies will involve a reduced number of catchments to explore suitable parameter values based on the hydrological characteristics of each catchment. Furthermore, research on interpretability of data-driven models is a current hotspot and challenging area. We aim to employ different techniques and methods in the future to interpret various deep learning models and integrate some aspects of physical models with data-driven models.

Conclusions

In this study, we introduced relative position encoding into the Informer, proposed the Rel-Informer model, and applied it to CAMELS data for rainfall-runoff modeling. We designed four experiments to evaluate the performance of Rel-Informer against the Informer, Transformer, and LSTM models. The first experiment is individual rainfall-runoff modeling, where separate models are constructed for each catchment. The second experiment involves regional rainfall-runoff modeling, with models constructed separately for each HUC. In the third experiment, we perform fine-tuned regional rainfall-runoff modeling by providing additional training for the catchments predicted in Experiment 2. Lastly, the fourth experiment constructs a hyper-large rainfall-runoff model for runoff prediction in ungauged catchments. Finally, we draw the following conclusions:

(1) In terms of overall model performance, fine-tuned regional rainfall-runoff modeling outperformed individual rainfall-runoff modeling, while individual rainfall-runoff modeling outperformed regional rainfall-runoff modeling. Rel-Informer has the best overall performance in individual and regional rainfall-runoff modeling, Informer and Transformer have comparable overall performance, and LSTM has the worst overall performance.

(2) When the amount of trainable data is small, the performance of the LSTM model is comparable to or even better than that of the encoder-decoder structural models (Rel-Informer, Informer, and Transformer). However, when there is sufficient trainable data, the encoder-decoder structural model usually outperforms LSTM.

(3) There are spatial variations in the predictive efficiency of the models. Snow-dominated catchments and those with high baseflow indices generally have better predictions compared to rainfall-dominated catchments. The snowmelt zone demonstrates better prediction efficiency, possibly due to the strong low-dimensional dynamic characteristics of the catchment and the seasonality of the flow regime.

Although this study tested the potential of Rel-Informer only on the CAMELS dataset, it can still be used as a valid deep learning model for studying rainfall-runoff modeling in other regions. It will help researchers to develop more appropriate and efficient Rel-Informer-based rainfall-runoff models for specific catchments.

Data availability

The CAMELS dataset used in this study is publicly available and can be downloaded at https://dx.doi.org/10.5065/D6MW2F4D.

References

Cui, H. et al. On method of regional non-stationary flood frequency analysis under the influence of large reservoir group and climate change. J. Hydrol. 618, 129255. https://doi.org/10.1016/j.jhydrol.2023.129255 (2023).

Jaiswal, R. K., Ali, S. & Bharti, B. Comparative evaluation of conceptual and physical rainfall-runoff models. Appl. Water Sci. 10. https://doi.org/10.1007/s13201-019-1122-6 (2020).

Yin, H. L., Guo, Z. L., Zhang, X. W., Chen, J. J. & Zhang, Y. N. RR-Former: rainfall-runoff modeling based on Transformer. J. Hydrol. 609. https://doi.org/10.1016/j.jhydrol.2022.127781 (2022).

Jin, J. et al. Benchmarking data-driven rainfall-runoff modeling across 54 catchments in the Yellow River Basin: overfitting, calibration length, dry frequency. J. Hydrol-Reg Stud. 42, 101119. https://doi.org/10.1016/j.ejrh.2022.101119 (2022).

Lees, T. et al. Benchmarking data-driven rainfall–runoff models in Great Britain: a comparison of long short-term memory (LSTM)-based models with four lumped conceptual models. Hydrol. Earth Syst. Sci. 25, 5517–5534. https://doi.org/10.5194/hess-25-5517-2021 (2021).

Moosavi, V., Gheisoori Fard, Z. & Vafakhah, M. Which one is more important in daily runoff forecasting using data driven models: Input data, model type, preprocessing or data length? J. Hydrol. 606, 127429. https://doi.org/10.1016/j.jhydrol.2022.127429 (2022).

Wood, E. F. et al. Hyperresolution global land surface modeling: Meeting a grand challenge for monitoring Earth’s terrestrial water. Water Resour. Res. 47. https://doi.org/10.1029/2010WR010090 (2011).

Kratzert, F., Klotz, D., Brenner, C., Schulz, K. & Herrnegger, M. Rainfall-runoff modelling using long short-term memory (LSTM) networks. Hydrol. Earth Syst. Sci. 22, 6005–6022. https://doi.org/10.5194/hess-22-6005-2018 (2018).

Xiang, Z. R., Yan, J. & Demir, I. A Rainfall-Runoff Model with LSTM-Based sequence-to-sequence learning. Water Resour. Res. 56. https://doi.org/10.1029/2019wr025326 (2020).

Kim, C. & Kim, C. S. Comparison of the performance of a hydrologic model and a deep learning technique for rainfall- runoff analysis. Trop. Cyclone Res. Rev. 10, 215–222. https://doi.org/10.1016/j.tcrr.2021.12.001 (2021).

Xu, T. F. & Liang, F. Machine learning for hydrologic sciences: an introductory overview. Wires Water. 8. https://doi.org/10.1002/wat2.1533 (2021).

Chen, X. et al. Comparison of deep learning models and a typical process-based model in glacio-hydrology simulation. J. Hydrol. 615, 128562. https://doi.org/10.1016/j.jhydrol.2022.128562 (2022).

Huang, S. et al. Coupling machine learning into Hydrodynamic models to improve river modeling with Complex Boundary conditions. Water Resour. Res. 58. https://doi.org/10.1029/2022wr032183 (2022).

Karimi, S. M., Mirzaei, M., Dehghani, A., Galavi, H. & Huang, Y. F. Hybrids of machine learning techniques and wavelet regression for estimation of daily solar radiation. Stoch. Env Res. Risk A. 36, 4255–4269. https://doi.org/10.1007/s00477-022-02261-8 (2022).

Toh, S. C. et al. Sequential Data Processing for IMERG Satellite Rainfall Comparison and Improvement Using LSTM and Appl. Sci-Basel 13. https://doi.org/10.3390/app13127237 (2023).

Yin, H., Guo, Z., Zhang, X., Chen, J. & Zhang, Y. Runoff predictions in ungauged basins using sequence-to-sequence models. J. Hydrol. 603. https://doi.org/10.1016/j.jhydrol.2021.126975 (2021).

He, M. et al. Streamflow prediction in ungauged catchments through use of catchment classification and deep learning. J. Hydrol. 639, 131638. https://doi.org/10.1016/j.jhydrol.2024.131638 (2024).

Mirzaei, M. et al. A Novel Stacked Long Short-Term Memory Approach of Deep Learning for Streamflow Simulation. Sustainability 13. https://doi.org/10.3390/su132313384 (2021).

Wang, W. et al. An enhanced monthly runoff time series prediction using extreme learning machine optimized by salp swarm algorithm based on time varying filtering based empirical mode decomposition. J. Hydrol. 620, 129460. https://doi.org/10.1016/j.jhydrol.2023.129460 (2023).

Wang, Y., Wang, W., Xu, D., Zhao, Y. & Zang, H.-F. A novel strategy for flood flow prediction: integrating spatio-temporal information through a two-dimensional hidden layer structure. J. Hydrol. 638, 131482. https://doi.org/10.1016/j.jhydrol.2024.131482 (2024).

Dehghani, A. et al. Comparative evaluation of LSTM, CNN, and ConvLSTM for hourly short-term streamflow forecasting using deep learning approaches. Ecol. Inf. 75. https://doi.org/10.1016/j.ecoinf.2023.102119 (2023).

Vaswani, A. et al. Attention Is All You Need. in 31st Annual Conference on Neural Information Processing Systems (NIPS). (2017).

He, S. T. et al. TransReID: Transformer-based Object Re-Identification. in 18th IEEE/CVF International Conference on Computer Vision (ICCV). https://doi.org/10.1109/iccv48922.2021.01474 (2021).

Wei, X., Wang, G., Schmalz, B., Hagan, D. F. T. & Duan, Z. Evaluation of Transformer model and self-attention mechanism in the Yangtze River basin runoff prediction. J. Hydrol-Reg Stud. 47, 101438. https://doi.org/10.1016/j.ejrh.2023.101438 (2023).

Li, B. et al. Hybrid hydrological modeling for large Alpine basins: a distributed Approach. Hydrol. Earth Syst. Sci. 2024, 1–26. https://doi.org/10.5194/hess-2024-54 (2024).

Xu, Y. et al. Deep transfer learning based on transformer for flood forecasting in data-sparse basins. J. Hydrol. 625, 129956. https://doi.org/10.1016/j.jhydrol.2023.129956 (2023).

Botterill, T. E. & McMillan, H. K. Using machine learning to identify hydrologic signatures with an encoder-decoder Framework. Water Resour. Res. 59. https://doi.org/10.1029/2022wr033091 (2023).

Peng, L. et al. Recurrent fine-tuning transfer learning for water quality long-term prediction. Water Res. 225. https://doi.org/10.1016/j.watres.2022.119171 (2022).

Zhou, H. Y. et al. Informer: Beyond Efficient Transformer for Long Sequence Time-Series Forecasting. In 35th AAAI Conference on Artificial Intelligence. (2021).

Du, N., Liang, X., Wang, C. & Jia, L. Multi-station Joint Long-term Water Level Prediction Model of Hongze Lake Based on RF-Informer. In: 2022 3rd International Conference on Information Science, Parallel and Distributed Systems (ISPDS). https://doi.org/10.1109/ISPDS56360.2022.9874178 (2022).

Bi, J., Zhang, C., Yuan, H., Guan, Z. & Qiao, J. Multi-indicator Water Quality Prediction with ProbSparse Self-attention and Generative Decoder. In: 2022 IEEE International Conference on Systems, Man, and Cybernetics (SMC). https://doi.org/10.1109/SMC53654.2022.9945230 (2022).

Yan, H., Deng, B., Li, X. & Qiu, X. TENER: adapting transformer encoder for named entity recognition. https://doi.org/10.48550/arXiv.1911.04474 (2019).

Dai, Z. et al. Transformer-xl: attentive language models beyond a fixed-length context. https://doi.org/10.48550/arXiv.1901.02860 (2019).

Kao, I. F., Zhou, Y. L., Chang, L. C. & Chang, F. J. Exploring a long short-term memory based Encoder-Decoder framework for multi-step-ahead flood forecasting. J. Hydrol. 583. https://doi.org/10.1016/j.jhydrol.2020.124631 (2020).

Kratzert, F. et al. Toward improved predictions in Ungauged basins: exploiting the power of machine learning. Water Resour. Res. 55, 11344–11354. https://doi.org/10.1029/2019wr026065 (2019).

Kratzert, F. et al. Towards learning universal, regional, and local hydrological behaviors via machine learning applied to large-sample datasets. Hydrol. Earth Syst. Sci. 23, 5089–5110. https://doi.org/10.5194/hess-23-5089-2019 (2019).

Addor, N., Newman, A. J., Mizukami, N. & Clark, M. P. The CAMELS data set: catchment attributes and meteorology for large-sample studies. Hydrol. Earth Syst. Sci. 21, 5293–5313. https://doi.org/10.5194/hess-21-5293-2017 (2017).

Newman, A. J. et al. Development of a large-sample watershed-scale hydrometeorological data set for the contiguous USA: data set characteristics and assessment of regional variability in hydrologic model performance. Hydrol. Earth Syst. Sci. 19, 209–223. https://doi.org/10.5194/hess-19-209-2015 (2015).

Zou, Y. S., Wang, J., Lei, P. & Li, Y. A novel multi-step ahead forecasting model for flood based on time residual LSTM. J. Hydrol. 620. https://doi.org/10.1016/j.jhydrol.2023.129521 (2023).

Shang, J. D. et al. Application of Informer Model Based on SPEI for Drought Forecasting. Atmosphere 14. https://doi.org/10.3390/atmos14060951 (2023).

Gong, M. J. et al. Load forecasting of district heating system based on informer. Energy 253. https://doi.org/10.1016/j.energy.2022.124179 (2022).

Knoben, W. J. M., Freer, J. E., Peel, M. C., Fowler, K. J. A. & Woods, R. A. A brief analysis of conceptual model structure uncertainty using 36 models and 559 catchments. Water Resour. Res. 56. https://doi.org/10.1029/2019wr025975 (2020).

Pool, S., Viviroli, D. & Seibert, J. Value of a limited number of discharge observations for improving regionalization: a large-sample study across the United States. Water Resour. Res. 55, 363–377. https://doi.org/10.1029/2018wr023855 (2019).

Pool, S. & Seibert, J. Gauging ungauged catchments - active learning for the timing of point discharge observations in combination with continuous water level measurements. J. Hydrol. 598. https://doi.org/10.1016/j.jhydrol.2021.126448 (2021).

Pham, L. T., Luo, L. F. & Finley, A. Evaluation of random forests for short-term daily streamflow forecasting in rainfall- and snowmelt-driven watersheds. Hydrol. Earth Syst. Sci. 25, 2997–3015. https://doi.org/10.5194/hess-25-2997-2021 (2021).

Tetzlaff, D. et al. How does landscape structure influence catchment transit time across different geomorphic provinces? Hydrol. Process. 23, 945–953. https://doi.org/10.1002/hyp.7240 (2009).

Feng, D. P., Lawson, K. & Shen, C. P. Mitigating prediction error of Deep Learning Streamflow models in large data-sparse regions with ensemble modeling and Soft Data. Geophys. Res. Lett. 48. https://doi.org/10.1029/2021gl092999 (2021).

Zhang, K., Luhar, M., Brunner, M. I. & Parolari, A. J. Streamflow Prediction in Poorly Gauged watersheds in the United States through Data-Driven Sparse sensing. Water Resour. Res. 59. https://doi.org/10.1029/2022wr034092 (2023).

Sharafati, A., Asadollah, S. & Hosseinzadeh, M. The potential of new ensemble machine learning models for effluent quality parameters prediction and related uncertainty. Process. Saf. Environ. 140, 68–78. https://doi.org/10.1016/j.psep.2020.04.045 (2020).

Yadav, A. K., Malik, H. & Chandel, S. S. Selection of most relevant input parameters using WEKA for artificial neural network based solar radiation prediction models. Renew. Sust Energ. Rev. 31, 509–519. https://doi.org/10.1016/j.rser.2013.12.008 (2014).

He, M. et al. Streamflow prediction in ungauged catchments through use of catchment classification and deep learning. J. Hydrol. 639, 131638. https://doi.org/10.1016/j.jhydrol.2024.131638 (2024).

Acknowledgements

This research was supported by the Jiangxi Provincial Natural Science Foundation (Grant No. 20232BAB214089) and the Science and Technology Project of Jiangxi Provincial Department of Education (Grant Nos. GJJ190943 and GJJ190973).

Author information

Contributions

H.M.: Conceptualization, Methodology, Software, Validation, and Editing. X.X.: Conceptualization, Methodology, Software, Validation, and Editing. W.S.: Funding, Conceptualization, Methodology, Reviewing, and Editing. K.C.: Funding, Conceptualization, Methodology, Reviewing, and Editing. B.H.: Funding, Conceptualization, Reviewing, and Editing.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

He, M., Xu, X., Wu, S. et al. Multi-step ahead forecasting of daily streamflow based on the transform-based deep learning model under different scenarios. Sci Rep 15, 5451 (2025). https://doi.org/10.1038/s41598-025-89837-w

DOI: https://doi.org/10.1038/s41598-025-89837-w