Abstract

Artificial intelligence (AI) has promoted the application and development of self-driving cars. However, when a self-driving car encounters an ethical dilemma, existing moral rules and mechanisms still struggle to produce a satisficing and clear decision, which makes people distrust self-driving cars in real life. A computational, multi-factor decision-making model for self-driving cars is therefore needed. ACWADOE (WADOE Based on Attribute Correlation) is proposed to compute the probabilities of going straight and swerving in an ethical dilemma from a larger set of influencing factors, so that decisions are as satisficing and clear as possible. To construct the ACWADOE model, the prior probability between each influencing factor and the decision is calculated from survey data in Moral Machine, which express human preferences and tendencies and align with the requirements of the majority. Then 116 dilemmas are designed and selected to compute the correlation coefficients between influencing factors. Moreover, 84 comparative dilemmas are designed to obtain the information gain ratio between influencing factors, from which the weight of each factor in decision-making is calculated by constructing a pairwise comparison matrix. Lastly, 40 dilemmas are used to test NB (Naive Bayes), ADOE (Averaged One-Dependence Estimators), WADOE (Weighted ADOE) and ACWADOE. The test results show that ACWADOE matches human requirements better than the other models, with an accuracy of 92.5%. Furthermore, ACWADOE not only provides a computational decision-making model for self-driving cars in ethical dilemmas, but also offers a reference for other AI systems that must make satisficing and clear decisions in ethical dilemmas.

Introduction

Self-driving technology can not only facilitate driving, but also reduce traffic accidents, improve traffic efficiency and reduce energy consumption [1,2,3]. However, because of hardware or software failures or execution delays, self-driving cars still cannot avoid every traffic accident and will encounter ethical dilemmas in real life, such as the one in Fig. 1, which was generated with the open design tools of Moral Machine (https://www.moralmachine.net/).

Fig. 1 An ethical dilemma.

Three old ladies are crossing the road in the straight lane against a red light, while a little girl is crossing in the swerving lane on a green light. If the self-driving car malfunctions or has no time to stop before colliding with pedestrians, what should it do? If it goes straight, the three old ladies are hit and killed; otherwise, the little girl is hit and killed. It is hard for a self-driving car to make a reasonable and clear decision with the present moral rules or theories.

Therefore, many scholars and research institutions are exploring decision-making mechanisms and rules for ethical dilemmas. For example, Menon et al. proposed a method that integrates decision-making principles into safety requirements, so that decisions are implemented by safety modules. Moreover, following the idea of risk balance, all participants in a traffic accident are treated as risk bearers, ranked by risk priority, and compensated for risk accordingly [4,5]. Wang et al. designed a decision-making platform (LO-MPC) for self-driving cars that assigns different priorities to road users and considers performance restrictions. The self-driving car decides according to these priorities and restrictions [6], so that it protects road users with high priority in ethical dilemmas. Pickering et al. used a simulation tool to predict harm to passengers and pedestrians in traffic accidents, and built an ethical decision-making model (EDM) from the simulation data to minimize harm in accidents [7]. Yueh-hua Wu and Shou-de Lin proposed integrating human strategy with machine decision-making, using reinforcement learning to prevent machines from making wrong decisions [8]. Katherine Evans et al. proposed Ethical Valence Theory (EVT), which provides a decision-making mechanism based on ethical valences and harms.
In general, when passengers are not seriously injured, priority should be given to protecting pedestrians with high ethical valences, while attention should also be paid to balancing the interests of all road users [9]. J. Christian Gerdes and Sarah M. Thornton proposed an implementable decision-making method for self-driving cars that balances goal and cost [10]. Furthermore, Wang Y designed a decision-making model based on a neural network, random forest and analytical IES for ethical dilemmas; it integrates public opinion and personal preferences to avoid unreasonable decisions [11]. In addition, a decision-making model (DDM) was constructed with a T-S neural network, which integrates ethical and legal factors into decision-making in conflicts caused by signal lights [12]. However, this method has two problems: (1) the opacity of the neural network makes it hard to explain the relationship between input and output; (2) a trained agent is used to imitate human decisions, so it is critical to choose reasonable and sufficiently many samples for the training set. Li F proposed that law should guide the construction and shaping of the ethics of self-driving cars, so that their own decision-making mechanisms do not replace legal justice [13]. Huang argued that self-driving cars, as AI devices, have a problem of autonomy and cannot be regarded as moral entities that bear responsibility, so they should make decisions in ethical dilemmas by combining moral philosophy and personal preferences [14]. Zhu held that the “trolley problem” is a thought experiment: decision-making cannot depend only on statistics and analysis, but can also be justified by philosophical theories. Moreover, the two approaches are not opposed; if philosophical theory is assisted by feature detection, it may offer the best solution to the problem [15].
Because decisions made by a single moral rule or method can be irrational and ambiguous, some scholars have proposed dynamic decision-making architectures and methods for self-driving cars. For example, Contissa et al. proposed a “moral knob” architecture, in which one end denotes altruism, the other denotes egoism, and the center corresponds to “complete neutrality” [16]; the user is free to choose a moral rule with the knob. Furthermore, the personal ethics setting (PES) transfers the right to choose from the designer and manufacturer of the self-driving car to the user, in order to reduce liability disputes. However, this customized decision-making strategy may bring uncertainty and opacity for pedestrians, and some people may ignore the interests of others or of the collective for their own benefit, which leads to a prisoner’s dilemma. Some experts therefore put forward the mandatory ethics setting (MES), which embeds a uniform rule in advance for making decisions in ethical dilemmas [17]. In addition, Maximilian Geisslinger et al. proposed a hybrid decision-making rule, the ethics of risk, which combines the Bayes principle, the Maximum and Minimum principle and the Equality principle to ensure rationality and fairness in decision-making [18,19]. The Bayes principle makes decisions based on collision probability. The Maximum and Minimum principle makes decisions based on maximum effect and minimum damage during a collision. The Equality principle keeps the balance of life rights and interests among road users.

In summary, because existing methods and rules cannot evaluate the weight and role of each factor and do not consider enough influencing factors, they cannot make satisficing and clear decisions in ethical dilemmas. It is therefore necessary to design, from survey data, a computational decision-making model for self-driving cars that considers multiple factors and assigns a different weight to each factor.

This paper has 7 parts. Part 1 presents Bayesian theories and algorithms, such as NB, Tree Augmented Naive Bayes (TAN), Selective Bayesian Classifier (SBC), k-Dependence Bayesian Network (KDB), ADOE and WADOE. Parts 2, 3 and 4 describe how to obtain the prior probability, the correlation coefficient and the weight of each factor in decision-making, respectively. Part 5 describes how to construct and apply ACWADOE. Part 6 presents the test results. Lastly, part 7 summarizes the constraints and limitations of decision-making by ACWADOE and its contributions for stakeholders.

Bayesian theory

Bayesian inference is an inference method proposed by Bayes, which uses a prior distribution to obtain the posterior distribution of the variable to be evaluated when the data distribution is known [20]. Moreover, the prior distribution is not easily disturbed by noisy data, so a model trained from data sets has good stability. In particular, when the data set is incomplete or the consequence is uncertain, a Bayesian network classifier can give more reasonable, sensible and explainable decisions or classifications. Naive Bayes (NB) is the earliest proposed network and has the simplest structure; it assumes conditional independence among all attributes and has achieved good classification results in many fields [21,22]. For example, Langarizadeh used NB to diagnose disease [23], and Rajeswari used NB for multi-text classification [24]. However, NB relies on the independence assumption, which is difficult to satisfy in real life, so NB may classify incorrectly. Therefore, many scholars have put forward improved algorithms for NB. For example, Langley proposed the Selective Bayesian Classifier (SBC), which selects favorable data sets by forward search [25]. Kononenko proposed a semi-naive Bayes classifier in 1991, which considers the interdependence between a few strongly dependent attributes to reduce complexity [26]. Friedman proposed Tree Augmented Naive Bayes (TAN), which allows first-order dependence between attributes and uses mutual information to build a spanning tree with maximum dependence [27]. Sahami proposed the k-Dependence Bayesian Network (KDB), which allows higher-order dependence between attributes and has good scalability [28]. However, both TAN and KDB must repeatedly choose an appropriate super-parent node to construct the network, which takes more time. In particular, as k increases in KDB, the complexity increases exponentially.
To reduce the complexity while allowing some dependencies between attributes, Geoffrey I. Webb et al. proposed ADOE, which adopts an averaging strategy and is composed of many SPODE (Super-Parent One-Dependence Estimator) models; in each SPODE, all other attributes depend on the same attribute, called the super-parent node. The posterior probability is calculated by Formula 1 [29,30,31].

Furthermore, many examples show that ADOE is better and more stable in classification and decision-making. In particular, when the data distribution in the training set is inconsistent, the advantage of ADOE is more prominent. However, ADOE does not consider that different factors have different influences on decision-making, which is not conducive to making reasonable decisions [31]. Therefore, WADOE was proposed to assign a different weight to each factor according to its importance and role, which strengthens the role of important factors and weakens the interference of minor factors [32,33,34,35]. The posterior probability in WADOE is calculated by Formula 2.
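For reference, the standard forms of these two estimators in the literature can be written as below. The notation (n attributes, attribute values $x_1,\dots,x_n$, class $c$, weights $w_i$) is assumed here, and the paper's Formulas 1 and 2 may differ in detail, for example in smoothing or in a minimum-frequency condition on super-parents.

```latex
% Averaged one-dependence form: every attribute x_i acts once as super-parent
P(c \mid x) \;\propto\; \frac{1}{n} \sum_{i=1}^{n} P(c, x_i) \prod_{j=1,\, j \neq i}^{n} P(x_j \mid c, x_i)

% Weighted form: each super-parent term is scaled by its weight w_i
P(c \mid x) \;\propto\; \frac{\sum_{i=1}^{n} w_i \, P(c, x_i) \prod_{j=1,\, j \neq i}^{n} P(x_j \mid c, x_i)}{\sum_{i=1}^{n} w_i}
```

Setting all $w_i$ equal in the weighted form recovers the averaged form, which is why the weighted estimator can be read as a direct generalization.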

Here wi represents the weight of the i-th factor in decision-making, which may be calculated by information gain ratio or mutual information. However, ADOE and WADOE impose the mandatory constraint that each attribute is taken as the super-parent node in turn, which amplifies the dependency between attributes but ignores the attribute correlation between the super-parent and the sub-node attributes [31,36,37]. Furthermore, the joint probability of multiple attributes P(xj|c,xi) is hard to estimate from a small training set. ACWADOE therefore uses attribute correlation to compute the posterior probability more easily when making decisions in ethical dilemmas for self-driving cars.
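The weighted one-dependence idea described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the paper's implementation: the function name `weighted_one_dependence_posterior`, the Laplace smoothing constant `alpha` and the toy training rows are all hypothetical, and the sketch does not include the attribute-correlation term that ACWADOE adds.

```python
def weighted_one_dependence_posterior(rows, labels, x, weights=None, alpha=1.0):
    """Posterior over classes from weighted super-parent one-dependence terms.

    rows    : list of tuples of discrete attribute values (training set)
    labels  : list of class labels, one per row
    x       : tuple of attribute values to classify
    weights : one weight per attribute; equal weights give the averaged form
    alpha   : Laplace smoothing constant (an assumption of this sketch)
    """
    n = len(x)
    classes = sorted(set(labels))
    weights = weights or [1.0] * n
    # Number of distinct values seen for each attribute, used for smoothing.
    domain = [len({r[i] for r in rows}) for i in range(n)]
    scores = {}
    for c in classes:
        total = 0.0
        for i in range(n):  # attribute i acts as the super-parent
            n_ci = sum(1 for r, y in zip(rows, labels) if y == c and r[i] == x[i])
            # Smoothed estimate of P(c, x_i)
            term = (n_ci + alpha) / (len(rows) + alpha * len(classes) * domain[i])
            for j in range(n):
                if j == i:
                    continue
                n_cij = sum(
                    1 for r, y in zip(rows, labels)
                    if y == c and r[i] == x[i] and r[j] == x[j]
                )
                # Smoothed estimate of P(x_j | c, x_i)
                term *= (n_cij + alpha) / (n_ci + alpha * domain[j])
            total += weights[i] * term
        scores[c] = total
    norm = sum(scores.values())
    return {c: s / norm for c, s in scores.items()}


# Hypothetical toy data: two binary attributes, two decisions.
rows = [(1, 1), (1, 1), (1, 0), (0, 0), (0, 0), (0, 1)]
labels = ["swerve", "swerve", "swerve", "straight", "straight", "straight"]
posterior = weighted_one_dependence_posterior(rows, labels, (1, 1), weights=[0.6, 0.4])
print(posterior)
```

The triple counting loop also shows why small training sets are a problem: the count behind each P(xj|c,xi) quickly drops to zero or one, so the estimate is dominated by the smoothing constant.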

Prior probability

To compute the posterior probability with multiple attributes, the prior probability must first be calculated for ethical dilemmas with a single attribute. The prior probability is the probability of a decision or classification under a single factor, and it is usually obtained from survey data in Moral Machine. These survey data express human preferences and decisions in ethical dilemmas and carry some emotional and moral sensibilities, but they reflect the choices of the majority. They may not always be rational and may even violate some moral theories, such as utilitarianism and deontology, yet they match the expectations and satisfaction of the majority, which reflects a people-centered philosophy. It is therefore crucial to consider human requirements adequately in policy-making, social governance and product design, so that human interests and development are protected. For example, the prior probability of the female attribute in Table 1 is 0.509: when an ethical dilemma differs only in gender, the majority chooses to protect the female with probability 0.509. The posterior probability is the probability of a decision or classification in an ethical dilemma with multiple attributes. Because the survey data in Moral Machine mainly record the choices made by respondents in ethical dilemmas with a single factor, they are used to calculate the prior probability of each attribute, as shown in Table 1.

In the table, “swerving” indicates that the self-driving car chooses to swerve, and “straight” indicates that it chooses to go straight. For species, humans are given priority for protection in most dilemmas, so the prior probability of species is not calculated in ACWADOE. Only gender, number, age, social status, traffic rule and harm are chosen as influencing factors in decision-making. Moral Machine was set up by the Massachusetts Institute of Technology (MIT) in 2016 to collect survey data on how people would like self-driving cars to make decisions in ethical dilemmas. To assess human preferences and the role of each factor in an unavoidable accident, it gathers as much data as possible from around the world, and more than one million survey responses have been collected [31].
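As a minimal sketch of how such a prior can be read off single-factor survey tallies, the snippet below divides the number of respondents who chose to protect a party by the total number of respondents. The function name and the vote counts are hypothetical, chosen only so that the result matches the 0.509 reported for the female attribute; they are not the Moral Machine figures.

```python
def prior_probability(protect_votes: int, total_votes: int) -> float:
    """Share of respondents who chose to protect the party with the attribute."""
    if total_votes <= 0:
        raise ValueError("total_votes must be positive")
    if not 0 <= protect_votes <= total_votes:
        raise ValueError("protect_votes must lie between 0 and total_votes")
    return protect_votes / total_votes


# Hypothetical tallies for a dilemma that differs only in gender.
p_female = prior_probability(509, 1000)
p_male = 1.0 - p_female  # the two options of a single-factor dilemma are complementary
print(round(p_female, 3), round(p_male, 3))
```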

Correlation coefficient

To compute the correlation coefficients between influencing factors, 116 dilemmas with two or more factors are designed and selected for an online survey. Each respondent answers 15 randomly chosen dilemmas. In total, 844 valid questionnaires are received and 12,660 data items are collected. All respondents are adults who understand traffic rules, and 321 of them are female. According to the majority principle, most respondents choose to swerve in 72 dilemmas and to go straight in 44 dilemmas. The decisions for the 116 dilemmas are then used to calculate the correlation coefficients between influencing factors by Formula 3. The correlation coefficient represents the dependence between two influencing factors: the larger the coefficient, the stronger their dependence in decision-making, which indicates that the probability of the two factors jointly contributing to the same decision is larger than the product of the prior probabilities of the single factors. It differs from the Pearson correlation coefficient commonly used in statistics, which reflects how two variables vary together. The correlation coefficients between influencing factors for swerving and going straight are shown in Tables 2 and 3.
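The majority principle used to label each dilemma can be sketched as follows. The function name, the dilemma identifiers and the responses are hypothetical, and the handling of ties (returning `None`) is an assumption, since the text does not say how ties were treated.

```python
from collections import Counter


def majority_decision(answers):
    """Return the option chosen by most respondents, or None on a tie."""
    if not answers:
        raise ValueError("answers must not be empty")
    ranked = Counter(answers).most_common()
    if len(ranked) > 1 and ranked[0][1] == ranked[1][1]:
        return None  # no strict majority between the top two options
    return ranked[0][0]


# Hypothetical responses for two dilemmas (not the survey data).
responses = {
    "dilemma_001": ["swerve", "swerve", "straight"],
    "dilemma_002": ["straight", "straight", "swerve", "straight"],
}
decisions = {name: majority_decision(votes) for name, votes in responses.items()}
print(decisions)
```

The reported totals are consistent with this procedure: 844 questionnaires with 15 dilemmas each give 12,660 data items, and the 72 swerving and 44 straight labels add up to the 116 dilemmas.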

Because the training data contain few harm-related dilemmas for going straight, the correlation coefficient between harm and the other factors cannot be calculated for that decision. Harm is therefore assumed to be independent of the other factors in this paper.

Weight solution

To evaluate the weight wi of each influencing factor in decision-making, 84 comparative dilemmas, each involving only two attributes, are designed and selected for an online survey. According to the majority principle, the decisions in these dilemmas are obtained and used to calculate the information gain of each influencing factor, as shown in Table 4.

Information gain is the difference between information entropy and conditional entropy [38] and is denoted g(D, A). The information gain under the conditional attribute A is calculated by Formula 4:

$$g(D, A) = H(D) - H(D \mid A) \tag{4}$$

where H(D) is the information entropy of the decision attribute D, and H(D|A) is the conditional entropy of D given the conditional attribute A.
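The definition of information gain as entropy minus conditional entropy can be illustrated with a short Python sketch. The function names and the toy attribute and decision lists are hypothetical and stand in for the comparative-dilemma data, which are not reproduced here; base-2 logarithms are an assumption.

```python
import math
from collections import Counter


def entropy(decisions):
    """Information entropy H(D) of a list of decisions, in bits."""
    n = len(decisions)
    return -sum((k / n) * math.log2(k / n) for k in Counter(decisions).values())


def conditional_entropy(attribute, decisions):
    """Conditional entropy H(D|A): entropy of D within each value of A, weighted by frequency."""
    n = len(decisions)
    groups = {}
    for a, d in zip(attribute, decisions):
        groups.setdefault(a, []).append(d)
    return sum(len(g) / n * entropy(g) for g in groups.values())


def information_gain(attribute, decisions):
    """g(D, A) = H(D) - H(D|A)."""
    return entropy(decisions) - conditional_entropy(attribute, decisions)


# Hypothetical toy data: one attribute that fully determines the decision, one that does not.
decisions = ["swerve", "swerve", "straight", "straight"]
informative = [1, 1, -1, -1]
uninformative = [1, -1, 1, -1]
print(information_gain(informative, decisions))
print(information_gain(uninformative, decisions))
```

An attribute that fully determines the decision removes all uncertainty, so its gain equals H(D), whereas an attribute that is unrelated to the decision has a gain of zero.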

From the information gain of each factor, the information gain ratio between every two factors is calculated to construct the pairwise comparison matrix A in Formula 5.

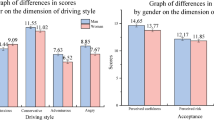

The eigenvalues and eigenvectors of the pairwise comparison matrix A can be computed with Python or Matlab, as shown in Fig. 2. The maximum eigenvalue of A is λ = 5.9607, and the corresponding eigenvector is V = (0.07, 0.4982, 0.2597, 0.1955, 0.5333, 0.5973)^T. Normalizing V gives the weight of each factor in ACWADOE, wi = (0.0325, 0.2313, 0.1206, 0.0908, 0.2476, 0.2773)^T. As a result, the weight of gender is the lowest and that of harm is the highest, which means that gender and age have comparatively little influence on decision-making. The weights of harm and traffic rule are higher than those of the other factors, so these two factors play a larger role, which helps to minimize harm and to comply with traffic laws. However, decisions based only on traffic laws may not be acceptable to the majority in real life. For example, when a self-driving car hits a pedestrian who violates traffic laws, the action may comply with the law, but it will not be accepted by people. Additionally, ensuring the safety of passengers inside the vehicle in ethical dilemmas is a necessary task for self-driving cars and a significant driving force for the application and deployment of self-driving technology. Nevertheless, prioritizing passenger safety at the expense of pedestrians’ rights is also ethically unacceptable. Therefore, ACWADOE considers multiple factors to avoid the absolute dominance of any single factor, which prevents self-driving cars from making decisions that the majority finds unsatisfying, and it keeps a balance between traffic laws, safety and human satisfaction in real life.

Fig. 2 Schematic diagram of solving the eigenvalue and eigenvector.
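The eigenvector step and the normalization can be sketched in plain Python. Two points are assumptions of this sketch: power iteration is used in place of a library eigen-solver, and the small demonstration matrix is built as a_ij = g_i / g_j from hypothetical gains, because the entries of the paper's matrix A (Formula 5) are not reproduced here. Only the vector V is taken from the text.

```python
def principal_eigenpair(matrix, iterations=1000, tol=1e-12):
    """Power iteration for the dominant eigenvalue and eigenvector of a positive matrix.

    The returned eigenvector is scaled so that its entries sum to 1.
    """
    n = len(matrix)
    v = [1.0 / n] * n
    lam = 0.0
    for _ in range(iterations):
        w = [sum(matrix[i][j] * v[j] for j in range(n)) for i in range(n)]
        lam = sum(w)  # v sums to 1, so sum(A v) estimates the eigenvalue
        v_next = [value / lam for value in w]
        converged = max(abs(a - b) for a, b in zip(v_next, v)) < tol
        v = v_next
        if converged:
            break
    return lam, v


def normalize(vector):
    """Scale a vector of positive entries so that it sums to 1."""
    total = sum(vector)
    return [value / total for value in vector]


# Hypothetical information gains for three factors (not the values in Table 4).
gains = [1.0, 2.0, 3.0]
demo_matrix = [[gi / gj for gj in gains] for gi in gains]  # assumed ratio form
lam_max, demo_weights = principal_eigenpair(demo_matrix)

# Eigenvector reported in the text, normalized to obtain the weights w_i.
V = [0.07, 0.4982, 0.2597, 0.1955, 0.5333, 0.5973]
w = [round(value, 4) for value in normalize(V)]
print(w)
```

Normalizing V in this way reproduces the reported weights to four decimal places, since the entries of V sum to 2.154.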

ACWADOE construction

Considering the existing problems in WADOE and ADOE, the correlation coefficient corr(xi,xj) between the super-parent node and each sub-node is added to construct ACWADOE. The detailed process is given in Table 5.

To illustrate the decision-making process of ACWADOE, the ethical dilemma in Fig. 1 is taken as an example. Compared with the little girl in the swerving lane, the three old ladies in the straight lane differ in three attribute values: more people (x2 = 1), older (x3 = −1) and violating traffic rules (x5 = −1); the other attributes are 0. An attribute value of 1 indicates that the party with the attribute should be preferentially protected, and a value of −1 indicates that it should not be preferentially protected. A value of 0 indicates that the attribute is similar or the same in the two lanes. When two or more attribute values are 1 or −1, the correlation coefficient between these attributes is added.
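The encoding of the Fig. 1 dilemma into attribute values can be sketched as a sign comparison between the two lanes. The per-attribute "protection scores" below (for example, 1 for a pedestrian who obeys the signal and 0 for one who violates it) are a hypothetical device of this sketch, as are the function and variable names; only the attribute order and the resulting values x2 = 1, x3 = −1, x5 = −1 come from the text.

```python
ATTRIBUTES = ["gender", "number", "age", "social_status", "traffic_rule", "harm"]


def sign(value):
    """Return 1, -1 or 0 according to the sign of value."""
    return (value > 0) - (value < 0)


def encode(straight, swerve):
    """Encode the straight-lane party relative to the swerving-lane party.

    1  : the straight-lane party should be preferentially protected on this attribute
    -1 : it should not be preferentially protected
    0  : the attribute is the same or similar in both lanes
    """
    return [sign(straight[name] - swerve[name]) for name in ATTRIBUTES]


# Hypothetical scores: a higher score means a stronger reason to protect.
three_old_ladies = {"gender": 1, "number": 3, "age": 0,
                    "social_status": 0, "traffic_rule": 0, "harm": 0}
little_girl = {"gender": 1, "number": 1, "age": 1,
               "social_status": 0, "traffic_rule": 1, "harm": 0}

x = encode(three_old_ladies, little_girl)
print(dict(zip(ATTRIBUTES, x)))
```

With these scores the encoder yields x = (0, 1, −1, 0, −1, 0), so number, age and traffic rule are the attributes whose pairwise correlation coefficients would enter the calculation for this dilemma.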

First, the posterior probability of choosing to swerve, p(c1|x), is computed for the dilemma by Formula 6.

Then the posterior probability of choosing to go straight, p(c2|x), is computed for the same dilemma by Formula 6.

Since p(c1|x) < p(c2|x), the car goes straight and the three old ladies in the straight lane are hit. This decision does not conform to utilitarianism, but it complies with traffic laws and rules.

Test

To test and verify ACWADOE, 40 dilemmas are chosen to test NB, ADOE, WADOE and ACWADOE. The test results are shown in Table 6.

In the table, accuracy is the proportion of dilemmas in which the model’s decision agrees with public opinion. The higher the accuracy, the better the decisions match public opinion and the more easily they are accepted in ethical dilemmas. The accuracy of ACWADOE is the highest among all models. In addition, these dilemmas are tested with WADOE and ACWADOE to calculate the probabilities that the self-driving car chooses to swerve or to go straight. The results show that the difference between the probabilities of swerving and going straight is larger for ACWADOE than for WADOE, and that ACWADOE has larger amplitude and fluctuation than WADOE, as shown in Fig. 3. ACWADOE thus separates the two options more clearly, which indicates that it is more robust than WADOE.

Fig. 3 Statistical diagram of probability deviation by WADOE and ACWADOE.
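The two quantities used in this test, accuracy as agreement with the majority decision and the deviation between the two posterior probabilities, can be sketched as follows. The function names and the label lists are hypothetical; the lists are constructed only to show that 37 agreements out of 40 dilemmas correspond to the reported 92.5%.

```python
def accuracy(predicted, majority):
    """Fraction of dilemmas where the model decision equals the majority decision."""
    if not majority or len(predicted) != len(majority):
        raise ValueError("both lists must be non-empty and of equal length")
    matches = sum(p == m for p, m in zip(predicted, majority))
    return matches / len(majority)


def probability_gap(p_swerve, p_straight):
    """Absolute difference between the two posterior probabilities of one dilemma."""
    return abs(p_swerve - p_straight)


# Hypothetical labels for 40 dilemmas: the model disagrees on the last three.
majority = ["swerve"] * 25 + ["straight"] * 15
predicted = majority[:37] + ["swerve"] * 3

print(accuracy(predicted, majority))
print(probability_gap(0.8, 0.2))
```

A larger gap means that the two options are further apart for a given dilemma, which is the sense in which the comparison between WADOE and ACWADOE is made above.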

Conclusions

Tests on 40 ethical dilemmas show that ACWADOE matches moral requirements better and is more robust than NB, ADOE and WADOE. However, because the training set contains a limited number of cases, its generalization is constrained. For example, the correlation coefficient between harm and the other attributes cannot be calculated for going straight, which affects the decisions made by ACWADOE in some dilemmas. Nevertheless, the paper proposes a computational, weight-based decision-making model that quantifies ethical dilemmas so that self-driving cars can make reasonable and clear decisions, which may offer car manufacturers a new way to handle such dilemmas. Furthermore, the weights of the influencing factors show that each attribute plays a different role in decision-making. Traffic management and legislative departments should therefore emphasize the most important factors when formulating traffic laws and regulations for self-driving technology, so that these laws and regulations better fit human requirements and morality. In addition, other AI systems also face ethical dilemmas in real life, and the model provides them with an approach for making decisions that are as reasonable and clear as possible.

Data availability

The data used to support the findings of this study are available from the corresponding author upon request.

References

1. Yuanyuan, Q. Moral deviations of “moral machines” and ethical approaches to autonomous vehicles. J. Northeastern Univ. 23(3), 8–17 (2021).

2. Yu, C. Study on the Subject of Accident Responsibility of Autonomous Vehicle Under Ethical Dilemma (Xiangtan University, 2020).

3. Qingling, D. Moral hazard and machine ethics of artificial intelligence. J. Yunmeng 39(5), 39–45 (2018).

4. Lin, T. et al. Feature pyramid networks for object detection. Comput. Vis. Pattern Recogn. 1, 936–944 (2017).

5. Menon, C. & Alexander, R. A safety-case approach to the ethics of autonomous vehicles. Saf. Reliab. 39(1), 33–58 (2020).

6. Wang, H. et al. Ethical decision-making platform in autonomous vehicles with lexicographic optimization based model predictive controller. IEEE Trans. Veh. Technol. 69(8), 8164–8175 (2020).

7. Pickering, J. E. A M2D approach for AV ethical dilemma: AV collision with a barrier/pedestrian. IFAC-PapersOnLine (2019).

8. Wu, Y.-H. & Lin, S.-D. A low-cost ethics shaping approach for designing reinforcement learning agents. ResearchGate 12, 1 (2017).

9. Evans, K., de Moura, N., Chauvier, S., Chatila, R. & Dogan, E. Ethical decision making in autonomous vehicles: The ethics project. Sci. Eng. Ethics 26, 3285–3312 (2020).

10. Gerdes, J. C. & Thornton, S. M. Implementable ethics for autonomous vehicles. Auton. Fahren 1, 87–102 (2015).

11. Yutian, W. Research on Ethical Decision Making of Autonomous Vehicles in Dilemmas (Hunan University, 2021).

12. Li, S. X., Zhang, J. Y., Wang, S. F., Li, P. C. & Liao, Y. P. Ethical and legal dilemma of autonomous vehicles: Study on driving decision-making mode under the emergency situations of red-light running behaviors. Electronic 10(7), 264–282 (2018).

13. Fei, L. Ethical position and legal governance of driverless collision algorithm. Legal Syst. Soc. Dev. 5, 167–188 (2019).

14. Shanshan, H. Research on implanting ethics into self-driving cars. Theory Mon. 05, 182–189 (2018).

15. Zhen, Z. The measure of life: How self-driving cars can solve the “trolley problem”. J. East China Univ. Polit. Sci. Law 6, 20–35 (2020).

16. Contissa, G., Lagioia, F. & Sartor, G. The ethical knob: Ethically customisable automated vehicles and the law. Artif. Intell. Law 25(6293), 365–378 (2017).

17. Gogoll, J. & Muller, J. F. Autonomous cars: In favor of a mandatory ethics setting. Sci. Eng. Ethics 23(3), 681–700 (2017).

18. Maximilian, G., Franziska, P., Johannes, B., Christoph, L. & Markus, L. Autonomous driving ethics: From trolley problem to ethics of risk. Philos. Technol. 4, 78–89 (2021).

19. Aletras, N., Tsarapatsanis, D., Preotiuc-Pietro, D. & Lampos, V. Predicting judicial decisions of the European court of human rights: A natural language processing perspective. Peer J. Comput. Sci. 02, 1–19 (2019).

20. Xiangyang, Z. & Ming, L. Review on Bayesian reasoning research. Adv. Psychol. Sci. 10(4), 388–394 (2002).

21. Song, X. M. Research on Chinese Information Classification Based on Improved Bayesian Algorithms (Beijing University of Posts and Telecommunications, 2019).

22. Zhou, Z. H. Machine Learning (Tsinghua University Press, 2016).

23. Langarizadeh, M. & Moghbeli, F. Applying Naive Bayesian networks to disease prediction: A systematic review. Acta Inf. Media 24(5), 364–369 (2016).

24. Rajeswari, R. P., Juliet, K. & Hana, A. Text classification for student data set using Naive Bayes classifier and KNN classifier. Int. J. Emerg. Trends Technol. Comput. Sci. 43, 8–12 (2017).

25. Blum, A. L. & Langley, P. Selection of relevant features and examples in machine learning. Artif. Intell. 97(1–2), 245–271 (1997).

26. Kononenko, I. Semi-Naive Bayesian classifier. Lect. Notes Comput. Sci. 482, 206–219 (1991).

27. Friedman, N., Geiger, D. & Goldszmidt, M. Bayesian network classifiers. Mach. Learn. 29(2–3), 131–163 (1997).

28. Sahami, M. Learning limited dependence Bayesian classifiers. In Proceedings of the Second International Conference on Knowledge Discovery and Data Mining 335–338 (1996).

29. Zheng, F. & Webb, G. I. Averaged One-Dependence Estimators 65–73 (University of Technology Sydney, 2017).

30. Webb, G. I., Boughton, J. R. & Wang, Z. Not so naive Bayes: Aggregating one-dependence estimators. Mach. Learn. 58, 5–24 (2005).

31. Liu, G., Luo, Y. & Sheng, J. Research on application of Naive Bayes algorithm based on attribute correlation to unmanned driving ethical dilemma. Math. Probl. Eng. 4163419, 1–9 (2022).

32. Jiang, L. & Zhang, H. Weightily averaged one-dependence estimators. In Pacific Rim International Conference on Artificial Intelligence 970–974 (Springer, 2006).

33. Jiang, L. X., Zhang, L. G., Yu, L. J. & Wang, D. H. Class-specific attribute weighted naive Bayes. Pattern Recogn. 88, 321–330 (2019).

34. Jiang, L. X., Zhang, H., Cai, Z. H. & Wang, D. H. Weighted average of one-dependence estimators. J. Exp. Theor. Artif. Intell. 24(2), 219–230 (2012).

35. Zhang, H., Jiang, L. X. & Yu, L. J. Class-specific attribute value weighting for naive Bayes. Inf. Sci. 508, 260–274 (2020).

36. Zhong, G. J. Research on the Weighted One-Dependence Bayesian Forest Based on AODE Model (Jilin University, 2021).

37. Jiang, L. X., Zhang, L. G., Li, C. Q. & Wu, J. A correlation-based feature weighting filter for Naive Bayes. IEEE Trans. Knowl. Data Eng. 31(2), 201–213 (2019).

38. Vanfretti, L. & Narasimham Arava, V. S. Decision tree-based classification of multiple operating conditions for power system voltage stability assessment. Int. J. Electric. Power Energy Syst. 123, 1 (2020).

Author information

Contributions

Guoman Liu is the corresponding author and was responsible for writing the manuscript and for communication. Jing Sheng was responsible for distributing and collecting the survey questionnaires online and offline. Zhen Tao was responsible for analysing the survey data. All authors have reviewed the manuscript.

Ethics declarations

Competing interests

The authors declare no competing interests.

Ethical approval

All methods were carried out in accordance with relevant guidelines and regulations. Informed consent was obtained from all subjects, and all subjects were informed in advance about the purpose and tasks of the study. All experimental protocols were approved by the China Association For Ethical Studies (CAES).

Informed consent

Written informed consent was obtained from all subjects.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Liu, G., Sheng, J. & Tao, Z. Application and design of a decision-making model in ethical dilemma for self-driving cars. Sci Rep 15, 8187 (2025). https://doi.org/10.1038/s41598-025-91921-0

DOI: https://doi.org/10.1038/s41598-025-91921-0