Abstract

Vessel recognition based on hydroacoustic signals is an important research area. The marine environment is complex and variable, which makes the transmission and reception process of the signals have some random cases. At the same time, there are various interference and noise sources in the water, such as waves, underwater equipment, marine organisms, etc., which bring difficulties to the identification and analysis of vessel targets. This paper proposed a model named Emphasized Dimension Attention and Future Fusion-Time Delay Neural Network (EDAFF-TDNN). The model adjusts the weights of the feature map dynamically by learning the correlation between dimensions through Squeeze and Excitation Block (SE-Block), which enables the model to capture the contextual information, thus the model performance is improved. The mechanism of feature fusion is also introduced to extract multi-layer features to improve the feature representation capability. The attention mechanism is added on top of TDNN. By considering the differences of each feature dimension, it enables the model to focus on the key information when learning feature representations. Which improves the model performance in complex scenarios. In addition, experiments of the model on the ShipsEar dataset show a recognition accuracy of 98.2%.

Similar content being viewed by others

Introduction

In recent years, with the development of science and technology, the successful application of deep learning methods has promoted the development of several fields. And the deep learning method has achieved good results in acoustic signal processing and other aspects1,2. Deep neural networks enable the learning of highly expressive features, leading to enhanced efficiency and accuracy in automatic recognition as the model undergoes continuous optimization. Target recognition technology based on hydroacoustic signals, as an important field of acoustic signal processing, has received more and more attention from international scholars. When vessels navigate in the ocean, they radiate noise to the surrounding environment inevitably. Ship radiated noise contains rich characteristic information of ship targets. Before the popularity of deep learning methods, traditional approaches often relied on shallow features, which are inadequate for capturing comprehensive target characteristics. Moreover, due to the complex and dynamic nature of marine environments, traditional methods often lacked sufficient adaptability and generalization capability. As a result, they are unable to address the target recognition demands in various environments effectively3,4. However, there are a variety of hydroacoustic signals in the underwater environment, such as marine life signals, communication signals, seismic signals, etc. Owing to the hydroacoustic signals have strong time-variation5, the target recognition classification based on the traditional features has some problems such as poor performance and limited generalization ability6. In addition, in the current hydroacoustic signal target recognition technology, it is difficult to collect enough high-quality hydroacoustic signals to be used as experimental data due to the complexity and variability of the marine environment, thus limiting the accuracy of the recognition. Under the constraints of existing hydroacoustic data, deep learning opens up a new development direction for hydroacoustic target recognition technology with its powerful feature extraction and optimization capabilities.

In this paper, a new method for vessel recognition of hydroacoustic signals based on EDAFF-TDNN is proposed, which mainly includes the following three contributions.

-

1.

Considering the influence of the Doppler effect, sampling segmentation is used in the preprocessing stage. It improves the amount of data and ensures that preprocessed hydroacoustic signal contains characteristic information for whole time period.

-

2.

Fusion of features from different layers expresses more comprehensive feature information of hydroacoustic signals and improves the characterization ability of features. The features from different layers are fused to improve the characterization ability of the features. And comprehensive feature is obtained to express the hydroacoustic signal of vessel.

-

3.

Introducing channel attention mechanism to calculate the weights between different feature dimensions automatically, so that the model can capture the subtle feature information of hydroacoustic signals of vessel.

The remaining sections of this paper are organized as follows: In section “Related work”, related work is introduced. In section “Data preprocessing”, we introduce the steps and processes of data preprocessing. Section “Feature extraction” presents the process of feature extraction. Section “Methodology” introduces the methods adopted in this paper. In section “Experiment”, experimental data are used to illustrate the performance of the proposed method. Finally, Section “Conclusion” draws the conclusion and the next work.

Related work

In recent years, there has been a rapid surge in the advancement of deep learning techniques applied to hydroacoustic signal recognition. Features can be extracted from raw data by deep learning methods automatically, which reduces the workload of feature engineering greatly and extracts more representative sample features for recognition and classification. In addition, due to the strong adaptive nature of deep learning algorithms, it can handle complex data structures and nonlinear problems. It is able to adapt to different scenarios and tasks by learning and adjusting the parameters continuously, and shows good performance in hydroacoustic target recognition.

When the vessel moves towards or away from the hydrophone, the spectrum will be changed due to the Doppler effect, resulting in some differences between the hydroacoustic signals collected by the hydrophone and the original hydroacoustic signals emitted by the target. Wang et al.7 introduced a Doppler frequency shift invariant feature extraction method for underwater acoustic target classification and pointed out the limitations of traditional feature extraction methods in dealing with such variations. They also proposed a new method based on time–frequency analysis and feature extraction, which is able to effectively capture the Doppler frequency shift features of an underwater target. The experimental results show that the method achieves better performance in the underwater acoustic target classification task, proving its superiority in handling Doppler frequency shift invariant features. Naderi et al.8 proposed a non-stationary time-continuous simulation model for broadband shallow water acoustic channels based on measured Doppler power spectrum to reduce the impact of Doppler effect on hydroacoustic target identification. Li et al.9 applied the square root untraceable Kalman filter (SRUKF) algorithm to reduce the impact of Doppler effect for the underwater non-maneuverable target tracking problem, and applied to underwater purely azimuthal and Doppler non-motorized target tracking problems.

Feature fusion in deep learning combines features extracted from different layers or multiple feature extraction methods to express more comprehensive information about the target and improve feature representation. Thus improve model performance and robustness. Wu et al.10 proposed a method called VFR for UATR. It replaces the CNN network with ResNet18 by fusing the 3D Fbank as the input feature to the model. The validation carried out on the ShipsEar dataset with two different divisions of data. The method of VFR achieves the accuracies of 98.5% and 93.8%, respectively. Liu et al.11 extracted six learning amplitude-time–frequency characteristics by using the sixth-order decomposition signal for highly unsteady and nonlinear noise signals. In the recognition of 12 specific noises in ShipsEar, the recognition rate reached 98.89%. However, the complexity of the model is high, which can not meet the real-time needs of ship identification. In order to describe underwater acoustic signals more comprehensively, Hong et al.12 proposed three-dimensional fusion features of underwater acoustic signals, extracted Log Mel as the first channel, MFCC as the second channel, and extracted chroma, contrast, tonality features Tonnetz and zero-crossing rate as the third channel. In the actual environment of vessel radiation noise data recognition experiment, the recognition accuracy is just 94.3%. Wang et al.13 addressed the problem of low recognition rate due to single feature inputs. The fusion features of Gammatone frequency cepstral coefficient (GFCC) and modified empirical mode decomposition (MEMD) were proposed to represent underwater acoustic signals. The recognition rate of 94.3% was obtained in the improved deep neural network model. Wang et al.14 employed a feature fusion strategy integrated with deep learning to enhance feature extraction capabilities. They input the fusion features obtained by splicing the improved MFCC features with the original signal into the CNN network. Their approach yielded a remarkable recognition accuracy of 98.6% on the ShipsEar dataset. Liu et al.15 combined the feature extraction method of deep learning and time-spectrogram to provide a more comprehensive representation of the distinctions among various targets. Three-dimensional features are constructed with Mel spectrogram and delta and delta-delta features. The system was evaluated by performing three tasks on the ShipsEar dataset, with recognition accuracy of 94.6%, 87.5%, and 72.6% in tasks 1, 2 and 3 respectively. Tang et al.16 proposed a new method to convert Mel spectrum to 3D data. TFSConv module was introduced to extract 3D time–frequency features by using two convolve operators of time and frequency dimensions, and the final identification accuracy of 93.5% was achieved in the ShipsEar dataset.

Attention mechanism in deep learning improves the robustness of models by focusing attention on the feature information that is most relevant to the task at hand, while suppressing irrelevant or useless feature information. Liu et al.17 proposed a neural network architecture with a dual-attention mechanism, which achieved a recognition accuracy of 95.6% on the ShipsEar dataset by fusing multiple features to form 3-D features and combining positional and channel attention. Wang et al.18 proposed an attention mechanism network (AMNet). It consists of a multi-branch backbone and a convolutional attention module. By extracting the STFT features of one-dimensional signals as input of the model. And different sizes of convolutional kernels are used to obtain multi-scale features. Channel and temporal attention are introduced in the backbone network to highlight key features. An accuracy of 99.4% is achieved on ShipsEar dataset and 98% on ShipMini. Lu et al.19 proposed a multi-scale space-spectral residual network based on three-dimensional (3-D) channels and spatial attention. The three-layer parallel residual network structure is used to enhance the expression of image features from channel domain and spatial domain by using different three-dimensional convolution kernels to enhance the accuracy of classification. Xue et al.2 used ResNet to extract the depth spectral features of underwater acoustic targets and introduced a channel attention mechanism into the camResNet network to enhance the stable spectral feature energy of residual convolution. The method achieved a recognition accuracy of 98.2%. Ji et al.20 proposed a Deep Residual Attention Convolutional Neural Network (DRACNN), where the original time-domain audio signal is used as the input to the model. The Squeeze Excitation (SE) module is introduced into the model to adaptively adjust the channel weights of the feature maps to emphasize key features. An accuracy of 97.1% and 89.2% is achieved on the ShipsEar and DeepShip datasets, respectively. Compared to the Resnet-18 model, the accuracy of DRACNN is 2.2% higher than Resnet-18 with a comparable number of parameters. Jin et al.21 proposed Channel-Temporal Attention Res-DenseNet (CTA-RDnet), which takes and one Res block and three Dense blocks as the backbone of the model. It introduces spatial and temporal attention mechanisms between each block to obtain features with more generalisation ability. According to the ablation experiments, CTA-RDnet has the accuracy of 96.79% on the self-collected dataset.

Data preprocessing

In this paper, the public dataset ShipsEar22 is used to verify and evaluate the proposed method. Since the data is collected in a real environment, the audio with back background noise and ambiguous information are eliminated from the dataset to ensure recognition accuracy. The dataset contains radiated noise from a variety of vessels, as shown in Table 1, 90 files of different durations were recorded, including four experimental classes and one background noise.

Considering the Doppler effect, when the vessel target is close to the hydrophone, the frequency received is higher than the original frequency, and the feature information collected is dense relatively. When the vessel target is far away from the hydrophone, the frequency received is lower than the original frequency, and the feature information collected is sparse relatively. Therefore, the following data preprocessing method was used to slice and combine the original hydroacoustic signal, as shown in Fig. 1.

-

(1)

The original hydroacoustic signal of T seconds is sliced into a number of 5 s equal length sample segments, and the last part of the signal less than 5 s is deleted.

-

(2)

Cut each 5 s sample segment into m equal-length sample segments, where m = ⌊T/5⌋.

-

(3)

The nth (n = 1, 2, …, m) fragment of each segmented fragment was combined to obtain m hydroacoustic signals as experimental data finally.

Data preprocessing.

The data is preprocessed to obtain a comprehensive feature coverage of the signals of vessels, which helps to extract representative features. Especially in the case of fast-changing data, it ensures that each hydroacoustic signal contains the feature of different time period.

After data preprocessing, the original 90 unequal length hydroacoustic signals were expanded into 2221 equal length hydroacoustic signals. Among them, the original 17 vessel signals of class A were sliced and combined into 369 5 s hydroacoustic signals, the original 19 vessel sound signals of class B were sliced and combined into 301 5 s hydroacoustic signals, the original 30 vessel signals of class C were sliced and combined into 842 5 s hydroacoustic signals, the original 12 vessel signals of class D were sliced and combined into 485 5 s hydroacoustic signals, and the original 12 background noise signals of class E were sliced and combined into 224 5 s hydroacoustic signals.

Feature extraction

The design of Mel Frequency Cepstral Coefficient (MFCC) features is based on human hearing and has become accepted and used in acoustic signal processing commonly23. However, MFCC features remove the correlation between the dimensional signals after the discrete cosine transform and require more computational resources. Mel spectrogram uses a nonlinear mapping method to convert the frequency axis of a signal into a mel scale, which improves resolution of the signal. This helps to analyze the spectral features of the hydroacoustic signal.

A pre-emphasized operation was used to enhance the high-frequency components to improve the clarity of the vessel signal. Framing is applied to the pre-emphasized signal to divide the signal into a series of short-time smooth segments. In addition, in order to make a smooth transition between frames, there is a certain overlap between frames, and we call the time of overlap between frames as frame shift. In the experiments, each frame is 25 ms, and the frame shift is 10 ms. A window function is added to each frame after framing to reduce the spectral leakage and overlap of vessel noise signals. We use the Hamming window, compared with the rectangular window and the Hanning window, the Hamming window can reflect the frequency characteristics of the short-time signal of each frame to a higher degree. The frequency distribution of the signal is transferred from time domain to frequency domain by fourier transform, and the spectrum information of each frame signal is obtained. By squaring the amplitude, the amplitude spectrum can be normalized so that the amplitudes of different frequency components can be compared on the same scale. The spectrogram is smoothed by a set of Mel filters, and the number of Mel filters in the experiment is set to 64. Finally, a 64-dimension feature vector is obtained to represent the characteristics of the vessel signal, as shown in Fig. 2.

Future extraction of hydroacoustic signal.

Methodology

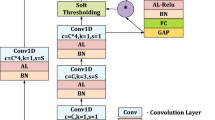

Deep learning promotes the development in terms of hydroacoustic signal classification and recognition tasks. Due to the complexity and variability of the hydroacoustic environment, traditional hydroacoustic signal processing methods are difficult to achieve the desired results. In this paper, a new hydroacoustic target recognition method based on EDAFF-TDNN is proposed, as shown in Fig. 3. It can capture the temporal relationship and spectral information in the sound signal effectively. The attentional mechanism allows the model to adjust the weights of different feature dimensions adaptively to distinguish different sound features better. Residual connections are employed in order to help solve the problem of gradient disappearance in neural networks. These connections allow the transfer of raw feature information across layers, improving the feature representation of the sound signal. The use of the dilated convolution operation allows the network to analyze the input features at different scales.

Structure of EDAFF-TDNN.

SE-ODDC block

There are two parts in SE-One Dimensional Dilated Convolution Block (SE-ODDC). One is Convolutional Block and the other is SE-Block24. The feature maps are summed with the original input features to form the residual connections via the Convolutional Block and SE-Block. In this way, the network is able to learn the residual features while maintaining the original feature information, as shown in Fig. 4.

Structure of SE-ODDC block.

In the convolution block, a convolution kernel of size 1 and step size 1 is used twice to perform a convolution operation on a feature map. The nonlinear activation function ReLU is back-connected to increase the nonlinear properties significantly, so the network can express more complex features. Subsequently, the feature map is divided into eight equal parts according to the dimension of the feature map, and eight feature maps of size 64*T are obtained for group convolution operation. The first feature map is kept without any operation. This is a reuse of the previous layer of features and also reduces the number of parameters and computation. Starting from the second feature map, the convolution result of the feature map is summed with the latter feature map and then as the input of the next set of convolutions. Finally, all the feature maps are connected in series according to the order of dimension to get the feature map consistent with the original input size. By grouping convolutions, more diverse feature representations can be learned and the generalization ability of the model is improved while reducing the number of parameters and complexity of the model, as is shown in Fig. 5.

Structure of convolutional block.

For the specific requirement of vessel target recognition, a larger reception field and richer features are beneficial to the final recognition effect. Therefore, the concept of dilated rate is also introduced in the convolution operation in this paper, which allows to skip some values at certain intervals during the convolution operation. By adjusting the dilated rate, the reception field of the model can be increased, which enhances the ability of the network to perceive contextual information. At the same time, Padding is introduced to make the output result consistent with the original input image size, as well as to avoid the loss of edge feature information in the convolution process. The relationship between input feature map size and output feature map size is shown in Eq. 1.

where p is the number of circles filled with 0. d stands for the size of the dilatation. k stands for the size of the convolution kernel. s stands for the step size of the convolution. As the number of network layers increases, p, k, and s are set to 2, 3, and 1 in three SE-ODDC Block modules, and d is set to 2, 3, 4 respectively.

The feature map of the output of the convolutional block is passed through an SE-Block, as shown in Fig. 6. The SE-Block uses the attention mechanism to learn the importance weight relationship between frames in each dimension. It adjusts the weight between different frames of the same dimension adaptively in the feature map, thus reducing the weight of the background noise and enhancing the weight of the vessel. The SE-Block compresses the feature map by global average pooling and obtains a 512-dimension weight matrix, representing the degree of contribution of each dimension to the overall features. The compressed feature is input into two fully connected layers. The ReLU and Sigmoid activation functions are applied to map the output of the weight matrix between 0 and 1, which represents the importance of each dimension relative to the whole feature map. After that, the learned weights are multiplied with the original feature map weights. A large weight will enhance the corresponding feature information in the feature map, and a small weight will weaken the corresponding feature information in the feature map. This enables the model to consider information from all frames more comprehensively.

Structure of SE-Block.

Attentive statistics pooling

Attentive Statistics Pooling25 is a statistical pooling layer with attention, which can process features in different time periods more accurately. The outputs of the three SE-ODDC Blocks in the model are fused according to the feature dimension, so that the feature maps input to the next layer not only have low dimensional features, but also contain high dimensional features. The fused 1536-dimension feature map is mapped to 128 dimensional by a linear layer. Then the feature map is restored to the original 1536 dimension by tanh activation function and a linear layer. And softmax activation is back-connected to make the output sum to 1 in the time dimension, which can be regarded as an attention score representing the importance of each frame. After that, the attention score matrix is multiplied with the original 1526-dimension feature map. This operation will enlarge the feature information of important frames. Finally, the weighted average and \(\tilde{\mu }_{{\text{c}}}\) weighted standard deviation \(\tilde{\sigma }_{{\text{c}}}\) of each dimension are obtained, and the two results are spliced together as the output of Attentive Statistics Pooling, as shown in Eqs. (2) and (3).

where \(\widetilde{{\mu_{{\text{c}}} }}\) represents the weighted statistical value of dimension c, \(\alpha_{{\text{t,c}}}\) represents the weight score of frame t in dimension c, and \(h_{t,c}\) represents the original value of frame t in dimension c in the feature map.

Experiment

In order to validate the superiority of the approach and the performance advantages of the model proposed in this paper, we conducted training and testing experiments on the ShipsEar dataset. The experimental platform configuration is shown in Table 2.

Training setup

A total of 30 epochs of training were carried out on the ShipsEar dataset, with batch size set to 16 and learning rate set to 0.001. The cross-entropy loss function was used in the experiment, as shown in Eq. (4),

where x is the predicted value, y is the true value, and c is the number of categories.

At the same time, the Adam optimizer is used to update the model parameters, with the weight decay rate set to 0.0001. During the training process, the model will approach the optimal solution gradually as the number of iterations increases. If continue to use a larger learning rate may cause the model to search near the optimal solution unnecessarily and reduce the training efficiency. Cosine Annealing can reduce the learning rate according to the progress of training gradually, it uses the cosine function to adjust the learning rate, and as the number of iterations increases, the learning rate is reduced gradually, thus helping the model to better converge to the optimal solution. The formula of Adam optimizer is shown in Eqs. (5–9).

where Eq. (5) is used to calculate the first order exponential sliding average of the gradient. Equation (6) is used to calculate the exponential sliding average of the second order term of the gradient. Equations (7) and (8) debias the calculated exponential sliding average. Equation (9) is the update formula for Adam.

Results and analyses

The learning rate curves for the training set of the EDAFF-TDNN model used for training and validation are shown in Fig. 7, which describes a trend of decreasing and stabilizing as the number of training rounds increases. The initial value of the learning rate is set to 0.001, and a larger learning rate is used for model optimization at the beginning of the training, and the learning rate decreases with the increase of the number of training epochs to ensure that the model slows down the rate of parameter updates and helps the model to converge to a more stable state.

Learning rate curve.

In the training set loss curve, the overall trend of the training set loss is slowly decreasing and accompanied by small fluctuations during the training process and finally gradually stabilizing, as shown in Fig. 8. In the test set loss curve, the test set loss fluctuates more in epoch 5 and 12, but the overall downward trend decreases, and finally the loss drops to 0.022, as shown in Fig. 9.

Training set loss curve.

Test set loss curve.

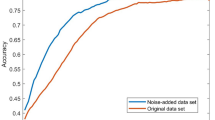

In the training set accuracy curve, the accuracy rate rises in the first few epochs rapidly, and then shows a trend of slow increase. With the gradual decrease of the learning rate and the increase of the number of training rounds, the accuracy rate shows a trend of gradual convergence, and finally converges to 98.6%, as shown in Fig. 10. In the test set accuracy curve, the accuracy rate also shows a gradually increasing trend and finally converges to 98.2%, as shown in Fig. 11.

Training set accuracy curve.

Test set accuracy curve.

We use four evaluation metrics, recognition accuracy, recall, precision and F1 score to describe the performance of the model. Each metric is shown in Eqs. (10–13).

TP is true positive, FP is false positive, TN is true negative, and FN is false negative. The recall, F1-score and precision of the test samples are shown in Table 3. The average precision, recall and F1-score of our method on the ShipsEar dataset are 0.980, 0.979 and 0.980, respectively. Among all the tested categories, category D achieves the best recognition results, with all of its evaluation metrics achieving 0.99. The average precision, recall and F1-score of all categories in the ShipsEar test set all exceeded 0.93, indicating that the model has a balanced effect on recognizing vessel sound signals of all categories with a certain degree of reliability.

The recognition result of the test set is shown in Fig. 12, according to the confusion matrix, the recognition results are correct for all the vessel signals of class A. Vessel signals of class D as well as a background noise signals of class E, both of them have only one test sample misrecognized. The recognition results for Class B and C vessel signals are slightly weaker, in which four of all Class B signals are recognized as Class C and two as Class E. Of all Class C vessel signals, two are recognized as Class A, one is recognized as Class B, and one as Class D. The results of Class A vessel signals and Class E background noise signals are better, with only one test sample being recognized as being in another category. As a result, we found the misidentified Class B vessel signal of number 130, its RMS energy diagram and Time domain diagram are shown in Fig. 13. In the training set of class C, there is a testing sample’s RMS energy diagram is shown in Fig. 14, which is similar to number 130. It can be seen that in all classes of vessel signal recognition, the vessel signals of classes B and C are confused easily.

Recognition result.

RMS energy diagram for the signal misidentified as Class C.

RMS energy diagram of Class C vessel signal.

In addition, we also take comparisons with other methods, as shown in Table 4. The baseline method2 uses a basic machine learning approach and achieves an accuracy of 0.754. Some of the methods in the table employ data augmentation techniques, such as Ref12 and Ref15, which both use the data augmentation method of specAugment and extract multiple features to construct three-channel features as inputs to the model, and achieve the accuracy of 0.946 and 0.943 respectively. In Ref26, the data augmentation method ResNet_cDCGAN was used to enhanced feature samples and improved the recognition accuracy. With the use of data augmentation, the accuracy of the above three methods is still 0.036, 0039 and 0.019 lower than our method. In Ref16, the accuracy of our method is 0.047 higher than that proposed. Given that the datasets of the different approaches of segmentation may be different, the method in this paper achieved the best performance in terms of recognition results.

In addition, the computational cost of the proposed method is analyzed, as shown in Table 5.

We can see that after the introduction of these structures, FLOPs of the model increased from 42.86 to 49.17 M, GPU usage increased from 1558 to 1802 MB, and parameter number increased from 5.35 to 6.14 M. Training time went from 26 to 28 s per epoch. Despite the increase in computational costs, the accuracy of the model increased from 96.3 to 98.2%, indicating that these modules have a significant effect in improving performance.

Conclusion

In this paper, we present a new hydroacoustic target recognition method based on EDAFF-TDNN, which has been used to classify vessel signals on the ShipsEar dataset successfully. This paper shows the experimental process of constructing the EDAFF-TDNN model, which achieves 98.2% accuracy on the ShipsEar dataset. This model uses SE-Block to learn the correlation between dimensions to adjust the weight of the feature map dynamically. At the same time, a feature fusion mechanism is introduced to form multi-layer features to improve the feature representation ability. In addition, an attention mechanism based on TDNN is added to the model. By considering the relationship between feature dimensions, the model can focus on key information when learning feature representation, thus improving the performance of the model.

Our next work is to focus on the further refinement and optimization of the existing hydroacoustic target recognition model, and attempt to apply the model to another water environment. In addition, experiments and evaluations will be carried out on another large scale underwater acoustic datasets to verify the performance and practicability of the proposed model.

Data availability

Data will be made available on reasonable request.

References

Chen, Z. et al. Model for underwater acoustic target recognition with attention mechanism based on residual concatenate. J. Mar. Sci. Eng. 12(1), 24 (2023).

Xue, L., Zeng, X. & Jin, A. A novel deep-learning method with channel attention mechanism for underwater target recognition. Sensors 22(15), 5492 (2022).

Kang, C., Zhang, X. & Zhang, A. et al. Underwater acoustic targets classification using welch spectrum estimation and neural networks. In International Symposium on Neural Networks 930–935 (Springer, Berlin, 2004).

Zhang, L. et al. Feature extraction of underwater target signal using mel frequency cepstrum coefficients based on acoustic vector sensor. J. Sens. 2016, 7864213 (2016).

Miao, Y. et al. Underwater acoustic signal classification based on sparse time–frequency representation and deep learning. IEEE J. Ocean. Eng. 46(3), 952–962 (2021).

Xie, Y., Ren, J. & Xu, J. Adaptive ship-radiated noise recognition with learnable fine-grained wavelet transform. Ocean Eng. 265, 112626 (2022).

Wang, L., Wang, Q. & Zhao, L. et al. Doppler-shift invariant feature extraction for underwater acoustic target classification. In 2017 International Conference on Wireless Communications, Signal Processing and Networking (WiSPNET) 1209–1212 (IEEE, 2017).

Naderi, M. & Patzold, M. Modelling the Doppler power spectrum of non-stationary underwater acoustic channels based on Doppler measurements. In OCEANS 2017-Aberdeen 1–6 (IEEE, 2017).

Li, X. et al. Underwater bearing-only and bearing-Doppler target tracking based on square root unscented Kalman filter. Entropy 21(8), 740 (2019).

Wu J, Li P, Wang Y, et al. VFR: The underwater acoustic target recognition using cross-domain pre-training with fbank fusion features[J]. J. Mar. Sci. Eng. 11(2), 263. (2023)

Liu, S. et al. A fine-grained ship-radiated noise recognition system using deep hybrid neural networks with multi-scale features. Remote Sens. 15, 2068 (2023).

Hong, F., Liu, C., Guo, L., Chen, F. & Feng, H. Underwater acoustic target recognition with a residual network and the optimized feature extraction method. Appl. Sci. 11, 1442 (2021).

Wang, X. et al. Underwater acoustic target recognition: A combination of multi-dimensional fusion features and modified deep neural network. Remote Sens. 11(16), 1888 (2019).

Wang, H., Xu, C. & Li, D. Underwater acoustic target recognition combining multi-scale features and attention mechanism. In 2023 IEEE 3rd International Conference on Electronic Technology, Communication and Information (ICETCI), Changchun, China 246–253 (2023).

Liu, F. et al. Underwater target recognition using convolutional recurrent neural networks with 3-D Mel-spectrogram and data augmentation. Appl. Acoust. 178, 107989 (2021).

Tang, N. et al. Differential treatment for time and frequency dimensions in mel-spectrograms: An efficient 3D Spectrogram network for underwater acoustic target classification. Ocean Eng. 287, 115863 (2023).

Liu, C., Hong, F. & Feng, H. et al. Underwater acoustic target recognition based on dual attention networks and multiresolution convolutional neural networks. In OCEANS 2021: San Diego-Porto 1–5 (IEEE, 2021).

Wang, B. et al. An underwater acoustic target recognition method based on AMNet. IEEE Geosci. Remote Sens. Lett. 20, 1–5 (2023).

Lu, Z. et al. 3-D channel and spatial attention based multiscale spatial–spectral residual network for hyperspectral image classification. IEEE J. Sel. Top. Appl. Earth Obs. Remote Sens. 13, 4311–4324 (2020).

Ji, F. et al. Underwater acoustic target recognition based on deep residual attention convolutional neural network. J. Mar. Sci. Eng. 11(8), 1626 (2023).

Jin, A. & Zeng, X. A novel deep learning method for underwater target recognition based on res-dense convolutional neural network with attention mechanism. J. Mar. Sci. Eng. 11(1), 69 (2023).

Santos-Domínguez, D. et al. ShipsEar: An underwater vessel noise database. Appl. Acoust. 113, 64–69 (2016).

Yao, Q., Wang, Y. & Yang, Y. Underwater acoustic target recognition based on data augmentation and residual CNN. Electronics 12(5), 1206 (2023).

Hu, J., Shen, L. & Sun, G. Squeeze-and-excitation networks. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition 7132–7141 (2018).

Okabe, K., Koshinaka, T. & Shinoda, K. Attentive statistics pooling for deep speaker embedding. arXiv:1803.10963 (2018).

Luo, X. et al. An underwater acoustic target recognition method based on spectrograms with different resolutions. J. Mar. Sci. Eng. 9(11), 1246 (2021).

Funding

This work is supported by General Project of Science and Technology Plan of Beijing Municipal Education Commission (No. KM202210017006), the Beijing Science and Technology Association 2021–2023 Young Talent Promotion Project (BYESS2021164), Beijing Digital Education Research Project (BDEC2022619048), Ningxia Natural Science Foundation General Project (2022AAC03757, 2023AAC03889), Beijing Higher Education Association Project (MS2022144), Ministry of Education Industry-School Cooperative Education Project (220607039172210, 22107153134955).

Author information

Authors and Affiliations

Contributions

Conceptualization, W.W. and J.L; methodology, W.W. and J.L; software, Y.H.; validation, Y.H., L.Z. and N.C.; formal analysis, X.Y.; investigation, K.Y.; resources, J.L.; data curation, P.Y.; writing original draft preparation, W.W.; writing review and editing, J.L.; visualization, H.T.; supervision, L.Z.; project administration, L.Z.; funding acquisition, W.W. All authors have read and agreed to the published version of the manuscript.

Corresponding authors

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License, which permits any non-commercial use, sharing, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if you modified the licensed material. You do not have permission under this licence to share adapted material derived from this article or parts of it. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc-nd/4.0/.

About this article

Cite this article

Wei, W., Li, J., Han, Y. et al. Underwater vessel sound recognition based on multi-layer feature and attention mechanism. Sci Rep 15, 11239 (2025). https://doi.org/10.1038/s41598-025-95562-1

Received:

Accepted:

Published:

Version of record:

DOI: https://doi.org/10.1038/s41598-025-95562-1